A Sparsification Approach for Temporal Graphical Model Decomposition

- Slides: 26

Ning Ruan, Kent State University

Joint work with Ruoming Jin (KSU), Victor Lee (KSU), and Kun Huang (OSU)

Motivation: Financial Markets

Motivation: Biological Systems

- Fluorescence counts
- Protein-protein interaction
- Microarray time series profile

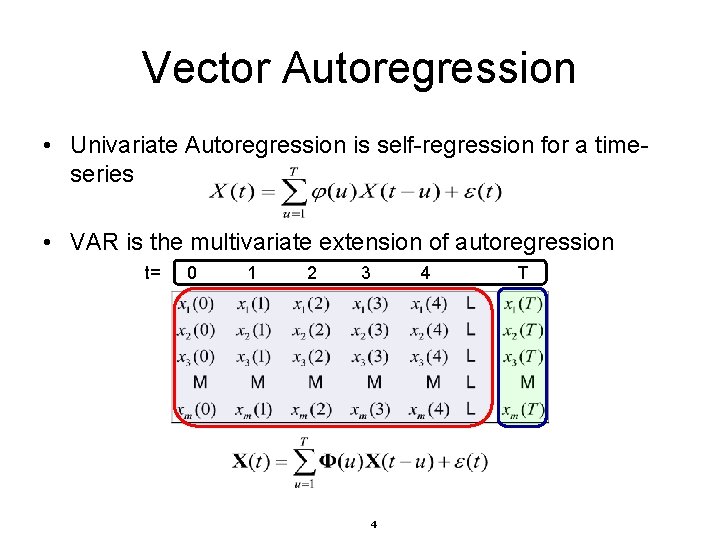

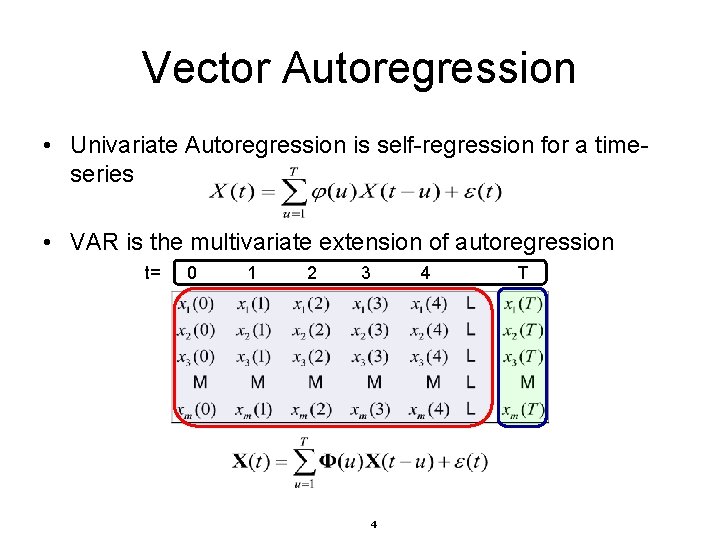

Vector Autoregression

- Univariate autoregression regresses a time series on its own past values
- VAR is the multivariate extension of autoregression
- Figure: time axis running from t = 0 to T

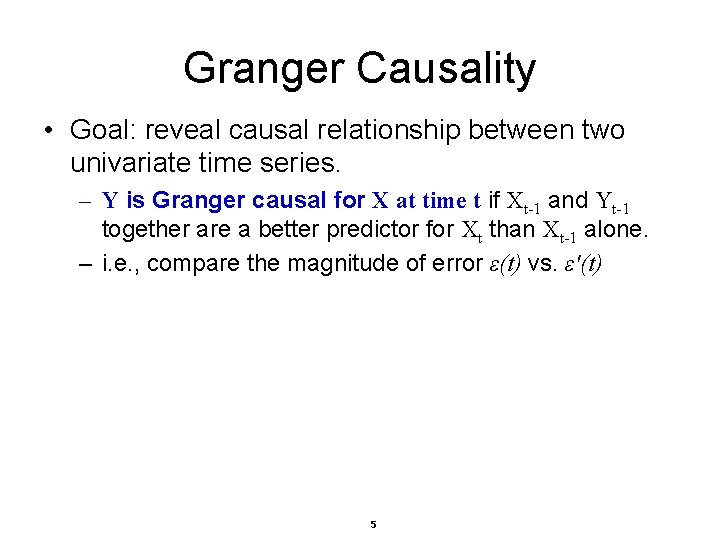

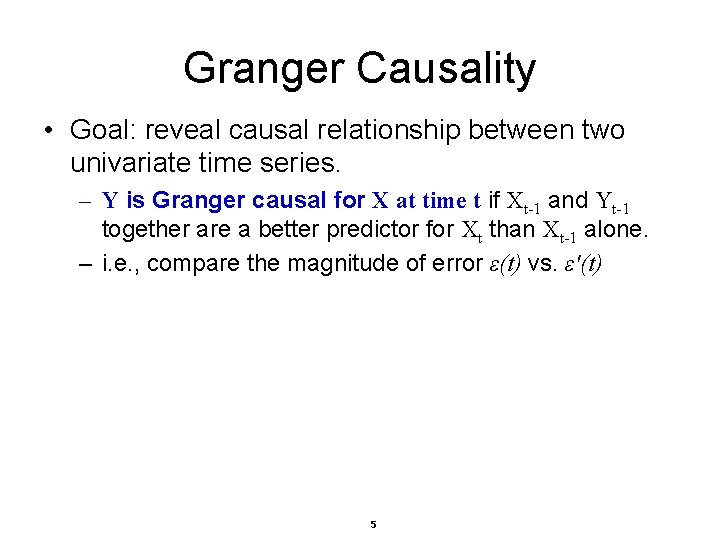
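To make the VAR idea concrete, here is a minimal sketch that simulates a lag-1 model and recovers its coefficient matrix by least squares. The lag order of 1, the noise level, and the names `simulate_var1` and `fit_var1` are assumptions chosen for illustration; they do not come from the slides.

```python
import numpy as np

def simulate_var1(phi, T, noise_std=0.1, seed=0):
    """Simulate x_t = phi @ x_{t-1} + eps_t for t = 1..T (lag order 1 is assumed)."""
    rng = np.random.default_rng(seed)
    n = phi.shape[0]
    x = np.zeros((T + 1, n))
    x[0] = rng.normal(size=n)
    for t in range(1, T + 1):
        x[t] = phi @ x[t - 1] + rng.normal(scale=noise_std, size=n)
    return x

def fit_var1(x):
    """Least-squares estimate of phi from consecutive observations."""
    past, future = x[:-1], x[1:]
    # Solve past @ B ~= future in the least-squares sense; then phi = B.T
    b, *_ = np.linalg.lstsq(past, future, rcond=None)
    return b.T

# Hypothetical 2-series example: series 1 depends on series 2, but not vice versa.
phi_true = np.array([[0.5, 0.3],
                     [0.0, 0.4]])
series = simulate_var1(phi_true, T=5000)
phi_hat = fit_var1(series)
```

With enough observations, `phi_hat` should be close to `phi_true`, including the near-zero entry that encodes the absence of an influence.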

Granger Causality

- Goal: reveal the causal relationship between two univariate time series.
  - Y is Granger causal for X at time t if $X_{t-1}$ and $Y_{t-1}$ together are a better predictor for $X_t$ than $X_{t-1}$ alone.
  - That is, compare the magnitude of the errors ε(t) and ε′(t).

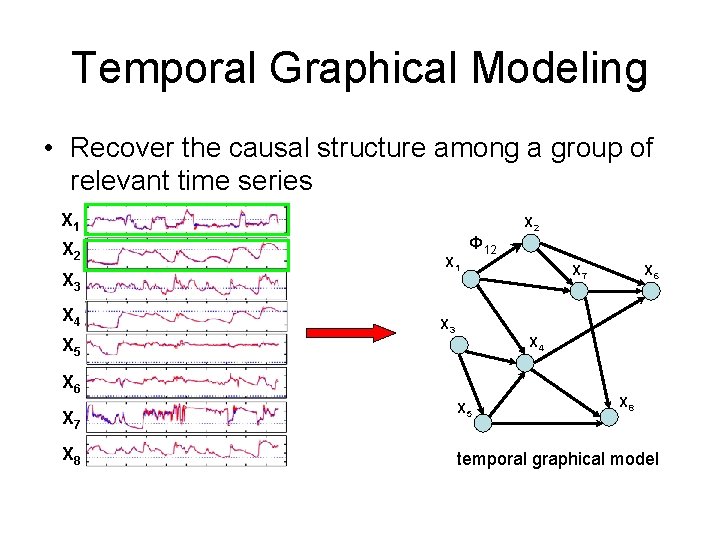

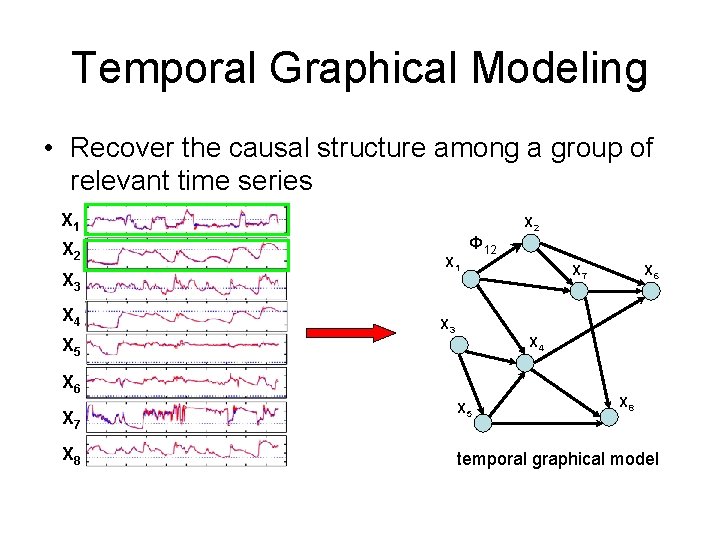
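The error comparison behind Granger causality can be sketched as follows. This assumes lag 1, zero-mean series, and ordinary least squares; the function names and the synthetic data are illustrative only, and a real test would add a significance threshold (for example an F-test) rather than reading off the raw ratio.

```python
import numpy as np

def residual_sum_of_squares(design, target):
    """Fit target ~ design by least squares and return the squared error."""
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    return float(resid @ resid)

def granger_error_ratio(x, y):
    """Return RSS(full) / RSS(restricted) for predicting x_t from lag-1 values.

    restricted model: x_t ~ x_{t-1}
    full model:       x_t ~ x_{t-1} + y_{t-1}
    A ratio well below 1 suggests that y helps predict x.
    """
    target = x[1:]
    restricted = x[:-1, None]
    full = np.column_stack([x[:-1], y[:-1]])
    return residual_sum_of_squares(full, target) / residual_sum_of_squares(restricted, target)

# Synthetic example (assumed): y drives x, but x does not drive y.
rng = np.random.default_rng(1)
T = 2000
y = rng.normal(size=T)
x = np.zeros(T)
for t in range(1, T):
    x[t] = 0.3 * x[t - 1] + 0.8 * y[t - 1] + 0.1 * rng.normal()

ratio_y_to_x = granger_error_ratio(x, y)  # expected to be small
ratio_x_to_y = granger_error_ratio(y, x)  # expected to stay near 1
```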

Temporal Graphical Modeling

- Recover the causal structure among a group of relevant time series
- Figure: time series X1 to X8 and the resulting temporal graphical model, with edge labels such as Φ12

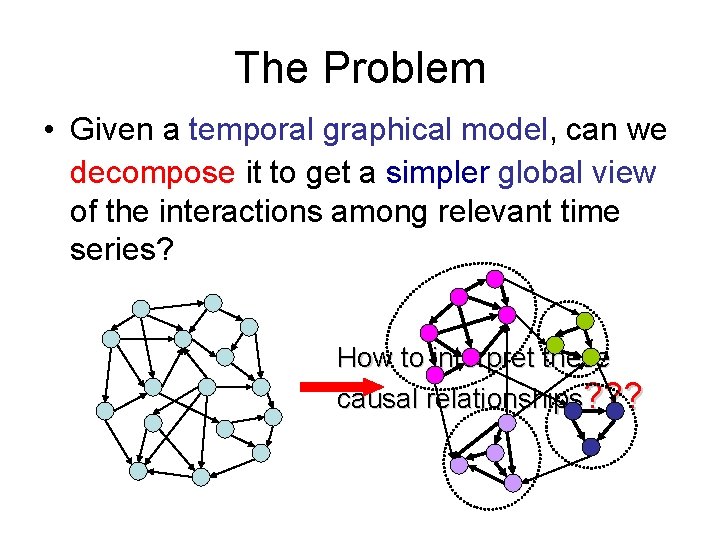

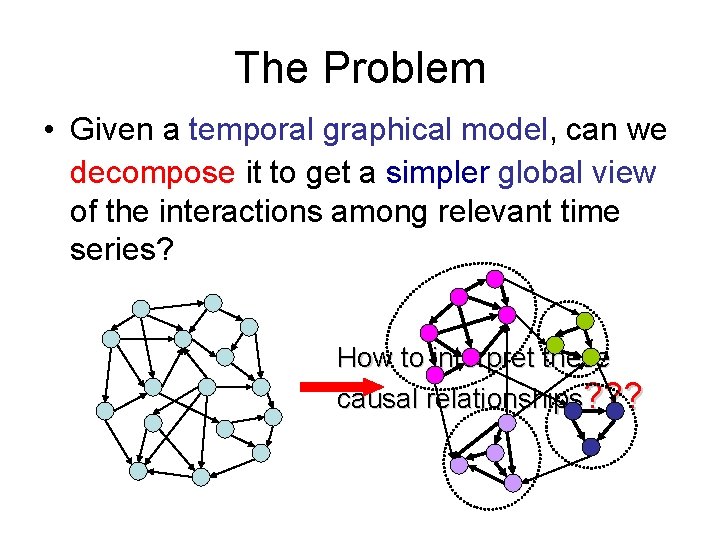

The Problem

- Given a temporal graphical model, can we decompose it to get a simpler global view of the interactions among relevant time series?
- How should these causal relationships be interpreted?

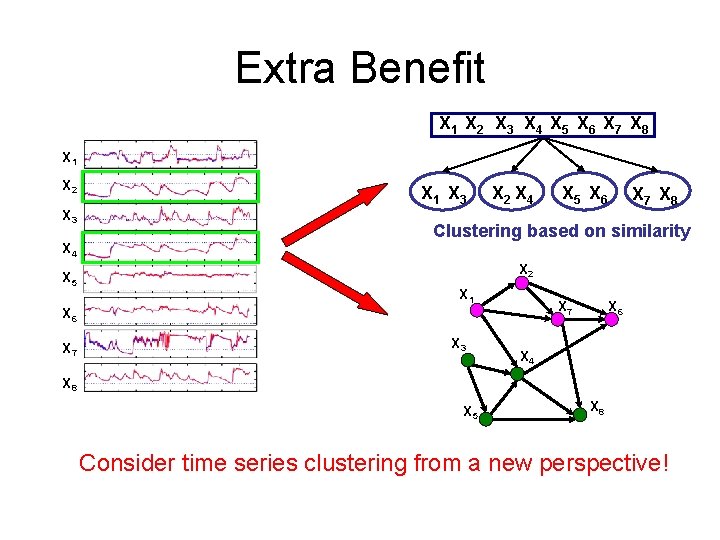

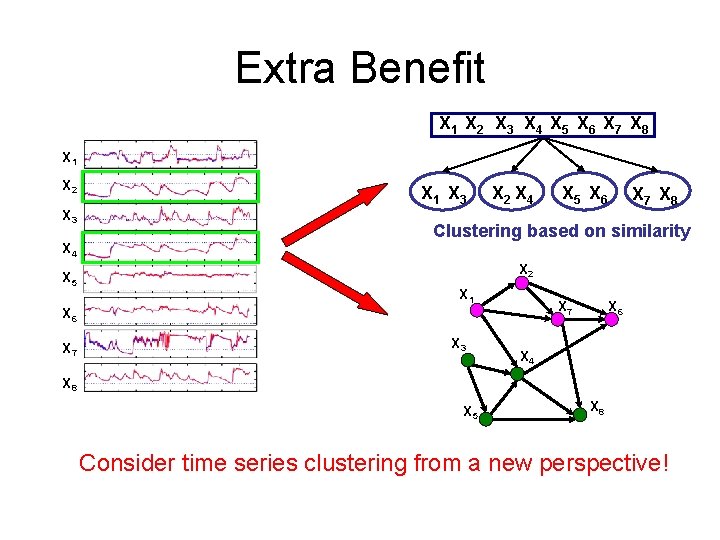

Extra Benefit

- Figure: time series X1 to X8, a clustering based on similarity, and a temporal graphical model over the same series
- Consider time series clustering from a new perspective!

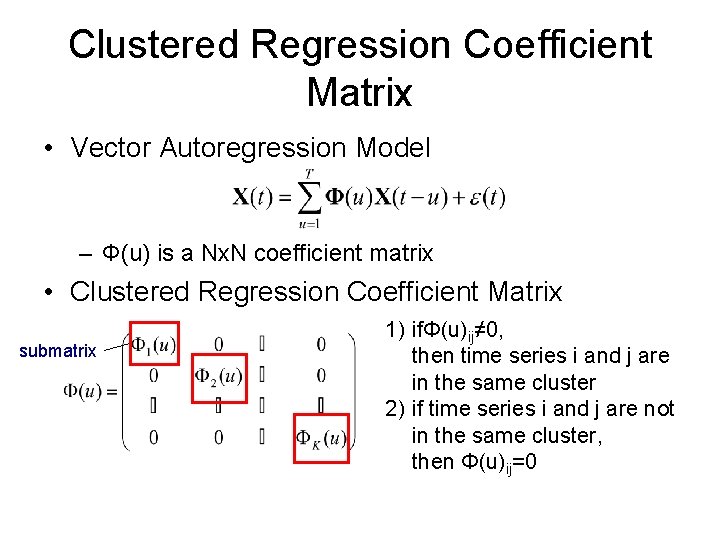

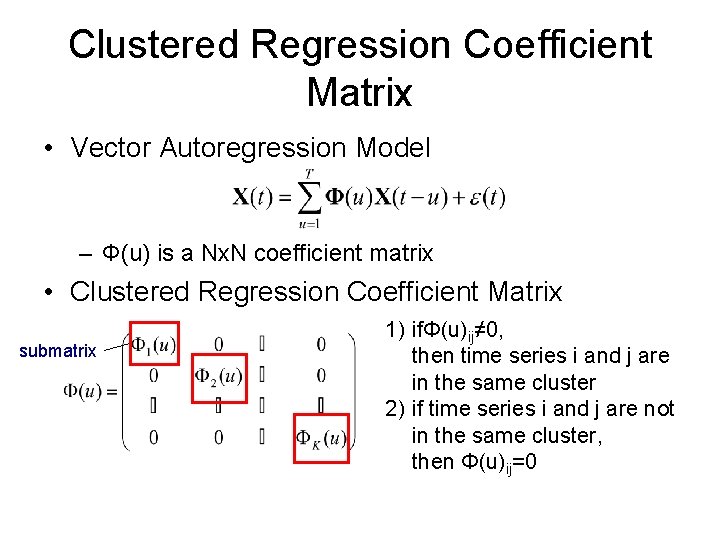

Clustered Regression Coefficient Matrix

- Vector autoregression model
  - $\Phi(u)$ is an N×N coefficient matrix
- Clustered regression coefficient matrix (figure label: submatrix)
  1. If $\Phi(u)_{ij} \neq 0$, then time series i and j are in the same cluster
  2. If time series i and j are not in the same cluster, then $\Phi(u)_{ij} = 0$

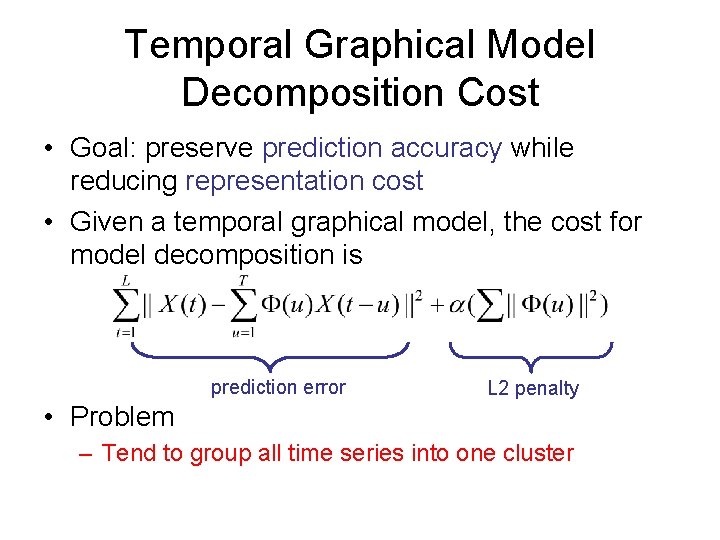

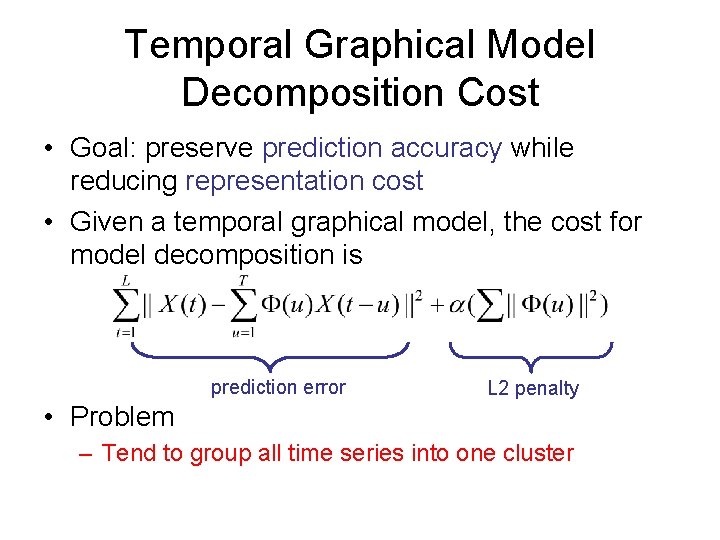
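The two conditions on a clustered coefficient matrix can be checked mechanically. The sketch below assumes a hard cluster label per time series and a single coefficient matrix; `same_cluster_mask` and `is_clustered` are hypothetical helper names.

```python
import numpy as np

def same_cluster_mask(labels):
    """Boolean N x N matrix: True where series i and j share a cluster."""
    labels = np.asarray(labels)
    return labels[:, None] == labels[None, :]

def is_clustered(phi, labels, tol=0.0):
    """True if every nonzero entry of phi links two series in the same cluster."""
    mask = same_cluster_mask(labels)
    return bool(np.all(np.abs(phi[~mask]) <= tol))

labels = [0, 0, 1, 1]
phi_block = np.array([[0.5, 0.2, 0.0, 0.0],
                      [0.1, 0.4, 0.0, 0.0],
                      [0.0, 0.0, 0.3, 0.6],
                      [0.0, 0.0, 0.2, 0.1]])
phi_leaky = phi_block.copy()
phi_leaky[0, 3] = 0.7  # a cross-cluster coefficient violates the definition

block_ok = is_clustered(phi_block, labels)
leaky_ok = is_clustered(phi_leaky, labels)
```

After reordering the series by cluster, a matrix that passes this check is block diagonal, which is where the submatrix view comes from.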

Temporal Graphical Model Decomposition Cost

- Goal: preserve prediction accuracy while reducing representation cost
- Given a temporal graphical model, the cost of model decomposition combines a prediction error term and an L2 penalty term
- Problem: this cost tends to group all time series into one cluster

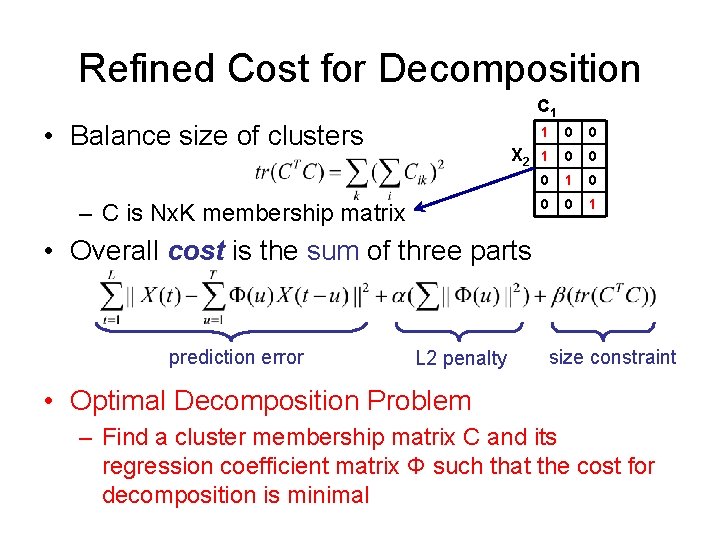

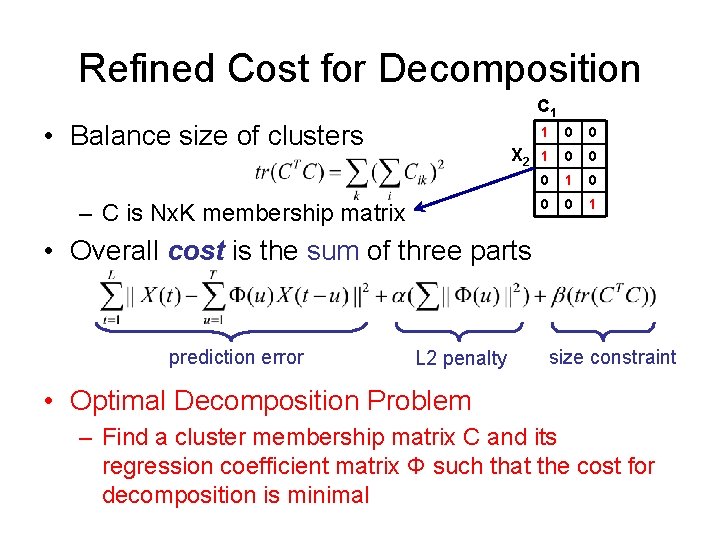

Refined Cost for Decomposition

- Balance the size of clusters
  - C is an N×K membership matrix with 0/1 entries
- Overall cost is the sum of three parts: prediction error, L2 penalty, and size constraint
- Optimal Decomposition Problem
  - Find a cluster membership matrix C and its regression coefficient matrix Φ such that the cost of decomposition is minimal

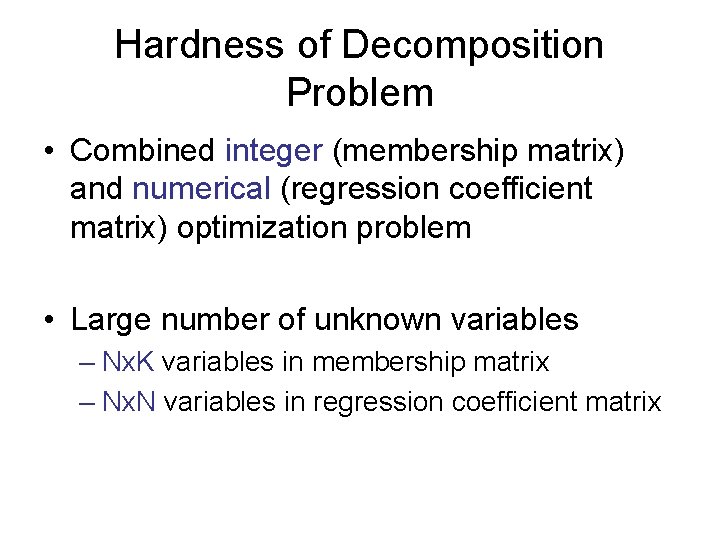
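The exact cost formula is in the slide image and is not reproduced in this text. As a rough illustration only, the sketch below assumes one plausible three-part form: squared one-step prediction error, a squared-norm penalty on the coefficients, and a size term equal to the sum of squared cluster sizes. The weights `lam` and `gamma` and the function name are hypothetical.

```python
import numpy as np

def decomposition_cost(x, phi, c, lam=0.1, gamma=0.01):
    """Illustrative three-part cost; the formula on the slide may differ."""
    mask = c @ c.T                      # 1 where two series share a cluster
    phi_clustered = phi * mask          # zero out cross-cluster coefficients
    pred = x[:-1] @ phi_clustered.T     # one-step-ahead predictions (lag 1 assumed)
    prediction_error = np.sum((x[1:] - pred) ** 2)
    l2_penalty = lam * np.sum(phi_clustered ** 2)
    # Sum of squared cluster sizes: smallest when clusters are balanced.
    size_term = gamma * np.sum(c.sum(axis=0) ** 2)
    return prediction_error + l2_penalty + size_term

x = np.random.default_rng(0).normal(size=(50, 4))
phi = np.zeros((4, 4))
c_balanced = np.array([[1, 0], [1, 0], [0, 1], [0, 1]])
c_single = np.array([[1, 0], [1, 0], [1, 0], [1, 0]])

cost_balanced = decomposition_cost(x, phi, c_balanced)
cost_single = decomposition_cost(x, phi, c_single)
```

With all coefficients set to zero the first two terms are identical for both memberships, so the difference isolates the assumed size term, which charges the single-cluster solution more.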

Hardness of Decomposition Problem

- Combined integer (membership matrix) and numerical (regression coefficient matrix) optimization problem
- Large number of unknown variables
  - N×K variables in the membership matrix
  - N×N variables in the regression coefficient matrix

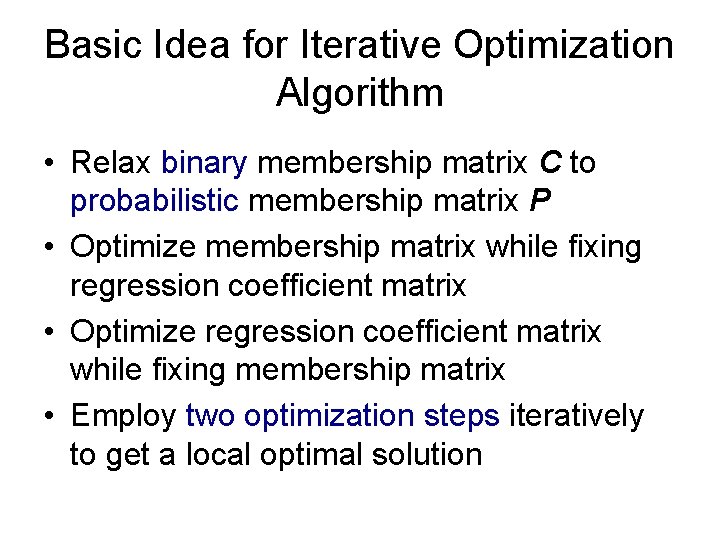

Basic Idea for Iterative Optimization Algorithm

- Relax the binary membership matrix C to a probabilistic membership matrix P
- Optimize the membership matrix while fixing the regression coefficient matrix
- Optimize the regression coefficient matrix while fixing the membership matrix
- Alternate the two optimization steps to reach a locally optimal solution

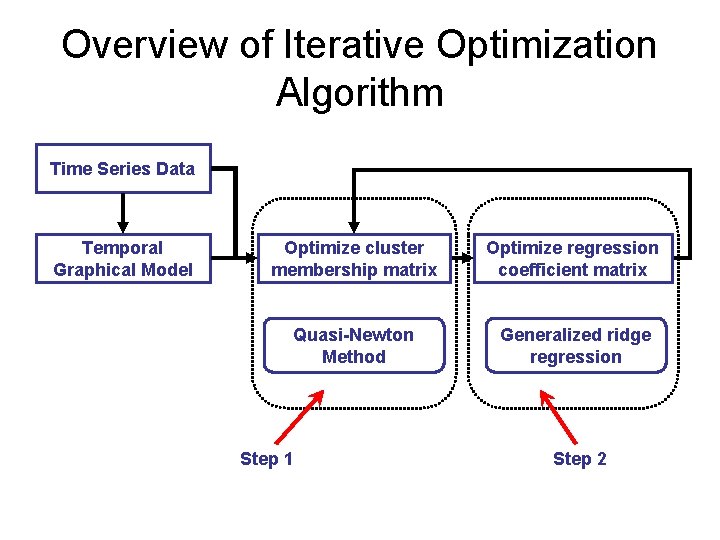

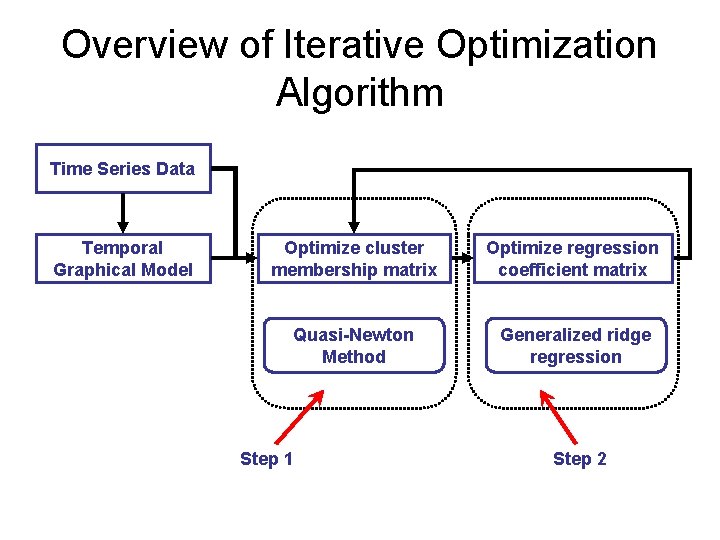

Overview of Iterative Optimization Algorithm

- Starting point: time series data and its temporal graphical model
- Step 1: optimize the cluster membership matrix (Quasi-Newton method)
- Step 2: optimize the regression coefficient matrix (generalized ridge regression)

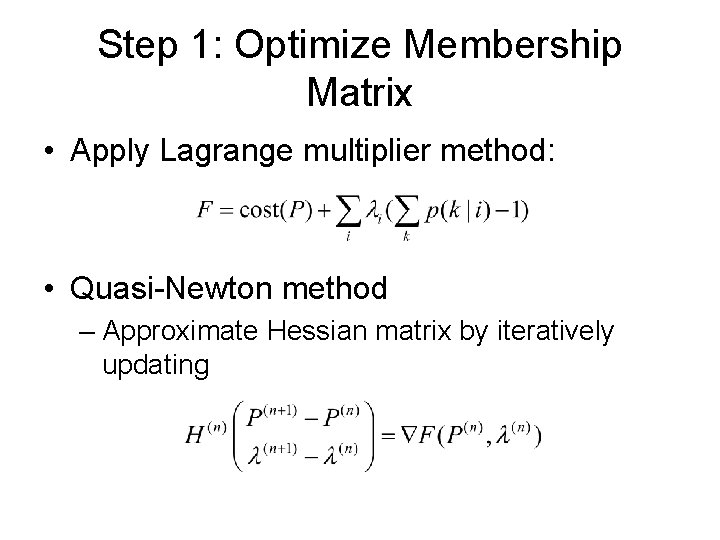

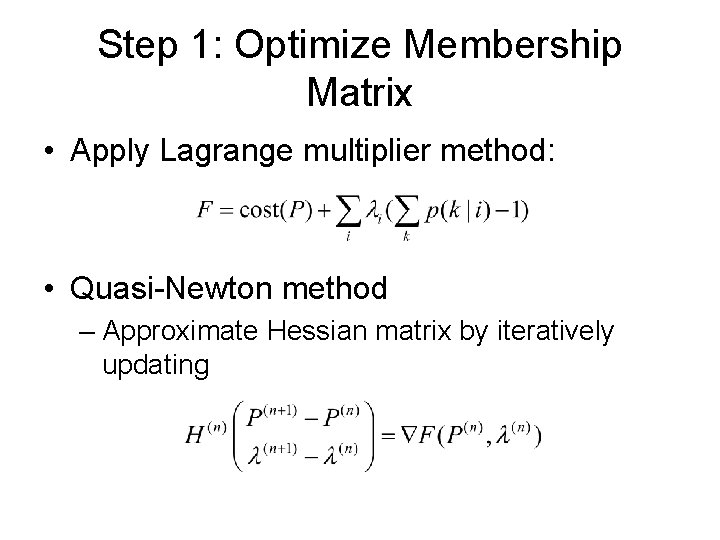

Step 1: Optimize Membership Matrix

- Apply the Lagrange multiplier method (equation in the slide image)
- Quasi-Newton method
  - Approximate the Hessian matrix by iterative updates

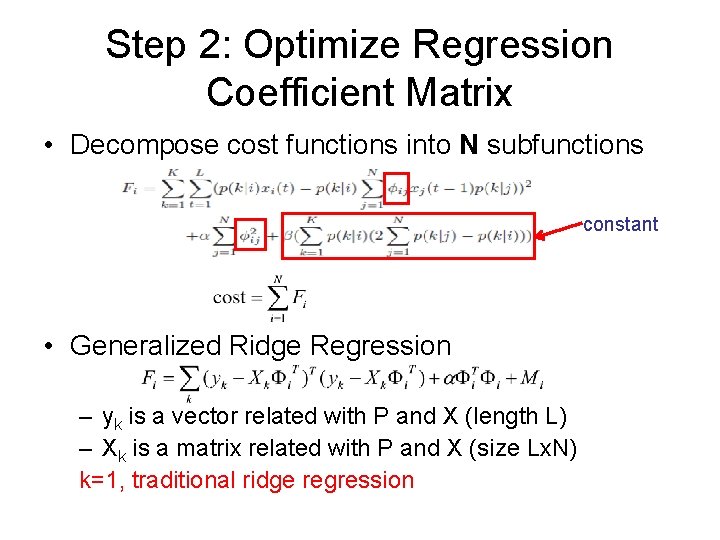

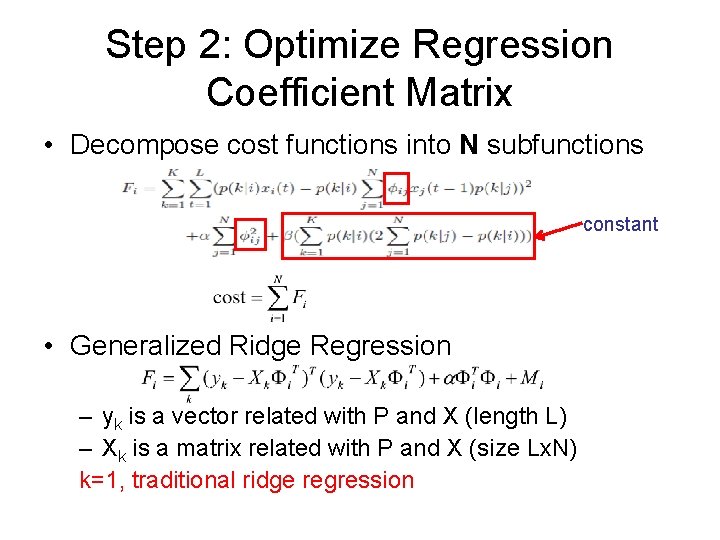
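The slides do not say which quasi-Newton update is used. As one common example, the sketch below shows the BFGS update, which refines a Hessian approximation from a step `s` and the corresponding change in gradient `y`. The toy quadratic objective and all names are assumptions for illustration, not the membership objective itself.

```python
import numpy as np

def bfgs_update(B, s, y):
    """One BFGS update of a symmetric Hessian approximation B.

    s: step taken in the variables, y: resulting change in the gradient.
    The updated matrix satisfies the secant condition B_new @ s == y.
    """
    Bs = B @ s
    return B - np.outer(Bs, Bs) / (s @ Bs) + np.outer(y, y) / (y @ s)

# Toy quadratic f(p) = 0.5 * p^T A p - b^T p, whose true Hessian is A.
A = np.array([[3.0, 1.0],
              [1.0, 2.0]])
b = np.array([1.0, 1.0])

def grad(p):
    return A @ p - b

p_old = np.zeros(2)
p_new = np.array([0.2, 0.1])
s = p_new - p_old
y = grad(p_new) - grad(p_old)

B0 = np.eye(2)              # initial guess for the Hessian
B1 = bfgs_update(B0, s, y)  # improved approximation after one step
```

Each update costs time quadratic in the number of variables, which is consistent with the Hessian update being the expensive part when the membership matrix is large.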

Step 2: Optimize Regression Coefficient Matrix

- Decompose the cost function into N subfunctions (the remaining term is constant)
- Generalized ridge regression
  - yk is a vector related to P and X (length L)
  - Xk is a matrix related to P and X (size L×N)
  - k = 1: traditional ridge regression

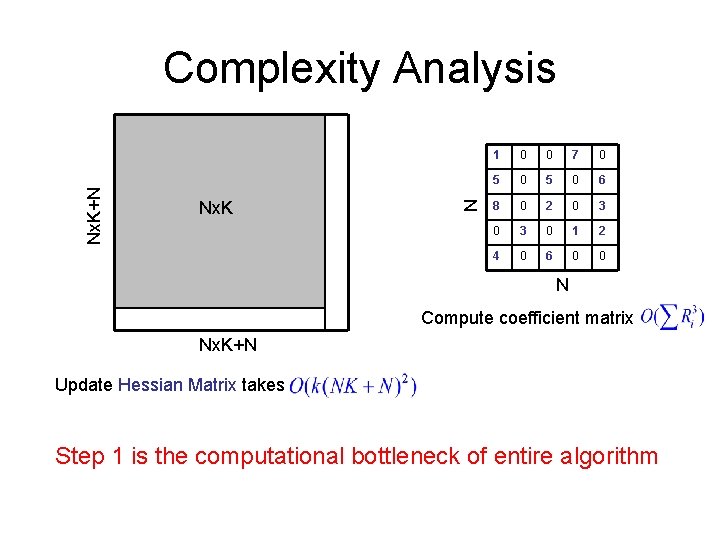

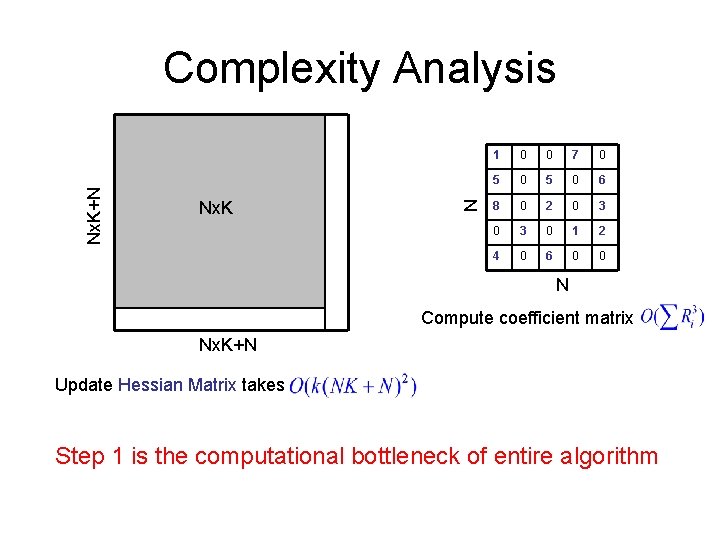
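Generalized ridge regression has a closed-form solution. The sketch below assumes the standard form with one penalty weight per coefficient, minimizing ||y - X b||^2 + b^T diag(penalty) b; how the slides build yk, Xk, and the penalty weights from P and X is not shown here, so the inputs are synthetic.

```python
import numpy as np

def generalized_ridge(X, y, penalty):
    """Solve (X^T X + diag(penalty)) b = X^T y for b."""
    penalty = np.asarray(penalty, dtype=float)
    return np.linalg.solve(X.T @ X + np.diag(penalty), X.T @ y)

# Synthetic regression problem (assumed for illustration).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + 0.1 * rng.normal(size=200)

# Equal penalties reduce to traditional ridge regression.
b_uniform = generalized_ridge(X, y, [1.0, 1.0, 1.0])

# A very large penalty on one coefficient pushes it toward zero,
# which is how a penalty can discourage a particular link.
b_heavy = generalized_ridge(X, y, [1.0, 1.0, 1e6])
```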

Complexity Analysis

- Figure: example matrix with dimension labels N×K, N, and N×K+N
- Compute the coefficient matrix
- Update the Hessian matrix
- Step 1 is the computational bottleneck of the entire algorithm

Basic Idea for Scalable Approach

- Use the dependence relationships among variables to optimize each variable (or a small number of variables) independently, assuming the other relationships are fixed
- Convert the problem to a Maximum Weight Independent Set (MWIS) problem

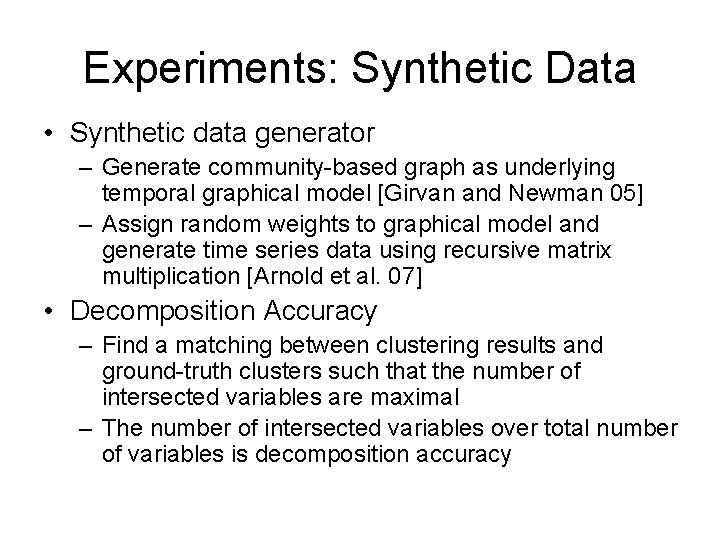
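For readers unfamiliar with MWIS: given weighted vertices and edges, the task is to pick a set of mutually non-adjacent vertices with the largest total weight. The sketch below is a generic greedy heuristic, not the method from the slides, and it does not guarantee an optimal set; the graph and weights are made up.

```python
def greedy_mwis(weights, edges):
    """Greedy heuristic: take vertices by descending weight, skipping neighbors.

    weights: dict mapping vertex -> weight
    edges:   iterable of (u, v) pairs
    Returns an independent set (no two chosen vertices share an edge).
    """
    neighbors = {v: set() for v in weights}
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    chosen, blocked = set(), set()
    # Ties are broken by vertex name so the result is deterministic.
    for v in sorted(weights, key=lambda v: (-weights[v], v)):
        if v not in blocked:
            chosen.add(v)
            blocked |= neighbors[v] | {v}
    return chosen

# Hypothetical path graph a - b - c - d.
weights = {"a": 5, "b": 4, "c": 3, "d": 2}
edges = [("a", "b"), ("b", "c"), ("c", "d")]
selected = greedy_mwis(weights, edges)
total_weight = sum(weights[v] for v in selected)
```

Vertices in an independent set do not interact directly, which matches the idea of updating such variables independently of one another.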

Experiments: Synthetic Data

- Synthetic data generator
  - Generate a community-based graph as the underlying temporal graphical model [Girvan and Newman 05]
  - Assign random weights to the graphical model and generate time series data using recursive matrix multiplication [Arnold et al. 07]
- Decomposition accuracy
  - Find a matching between the clustering results and the ground-truth clusters such that the number of intersected variables is maximal
  - Decomposition accuracy is the number of intersected variables divided by the total number of variables
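The decomposition accuracy defined above can be sketched as a best one-to-one matching between predicted and ground-truth clusters. This version enumerates all matchings, which is an assumption made for simplicity and is only practical for a handful of clusters; the function name and example labels are hypothetical.

```python
from itertools import permutations

def decomposition_accuracy(predicted, truth):
    """Fraction of variables covered by the best one-to-one cluster matching."""
    pred_ids = sorted(set(predicted))
    true_ids = sorted(set(truth))
    # overlap[(p, t)] = number of variables in predicted cluster p and true cluster t
    overlap = {(p, t): 0 for p in pred_ids for t in true_ids}
    for p, t in zip(predicted, truth):
        overlap[(p, t)] += 1
    # Enumerate one-to-one matchings of the smaller label set into the larger one.
    if len(pred_ids) <= len(true_ids):
        matchings = (list(zip(pred_ids, perm))
                     for perm in permutations(true_ids, len(pred_ids)))
    else:
        matchings = (list(zip(perm, true_ids))
                     for perm in permutations(pred_ids, len(true_ids)))
    best = max(sum(overlap[pair] for pair in m) for m in matchings)
    return best / len(truth)

predicted = [1, 1, 1, 0, 0, 0, 0, 0]
truth     = [0, 0, 0, 0, 1, 1, 1, 1]
accuracy = decomposition_accuracy(predicted, truth)   # 7 of 8 variables match
relabelled = decomposition_accuracy([1, 1, 0, 0], [0, 0, 1, 1])
```

Because the score is taken over the best matching, it does not depend on how cluster ids happen to be numbered.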

Experiments: Synthetic Data (cont.)

- Applied algorithms
  - Iterative optimization algorithm based on the Quasi-Newton method (newton)
  - Iterative optimization algorithm based on the MWIS method (mwis)
  - Benchmark 1: Pearson correlation test to generate the temporal graphical model, and Ncut [Shi 00] for clustering (Cor_Ncut)
  - Benchmark 2: directed spectral clustering [Zhou 05] on the ground-truth temporal graphical model (Dcut)

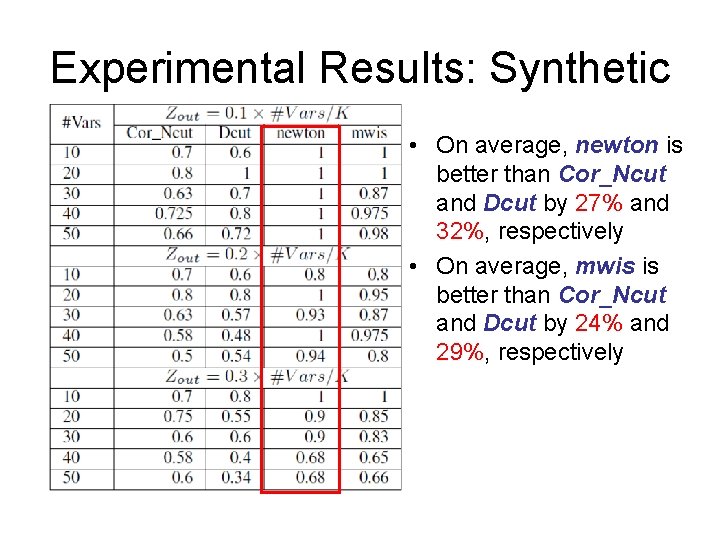

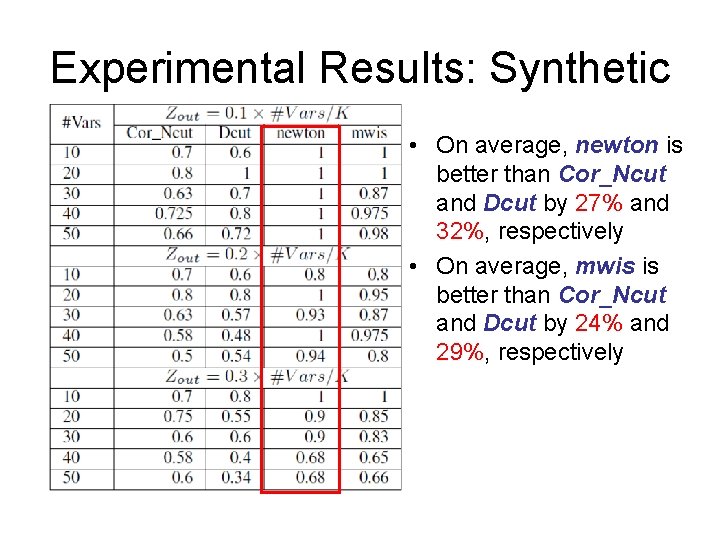

Experimental Results: Synthetic

- On average, newton is better than Cor_Ncut and Dcut by 27% and 32%, respectively
- On average, mwis is better than Cor_Ncut and Dcut by 24% and 29%, respectively

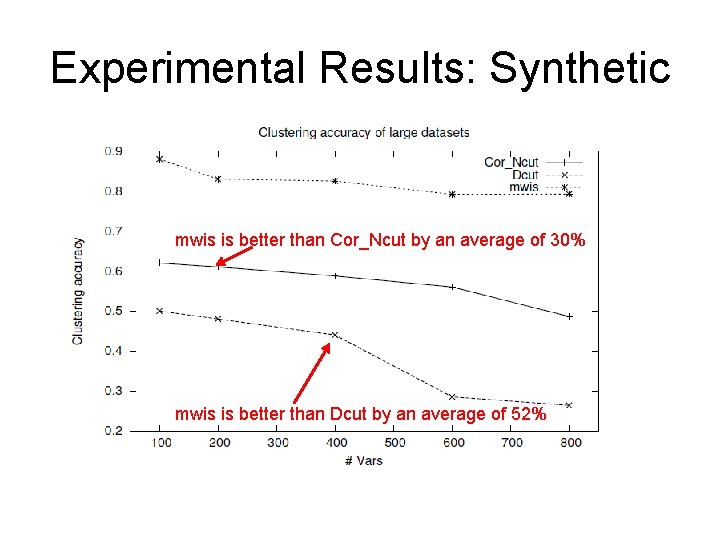

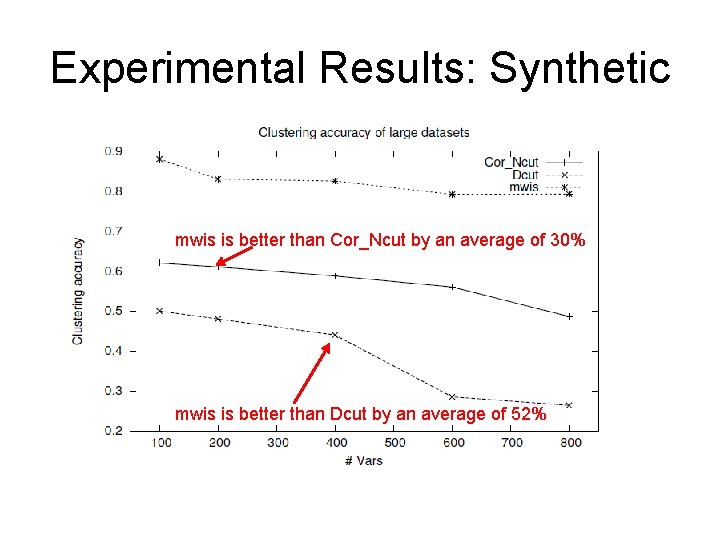

Experimental Results: Synthetic

- mwis is better than Cor_Ncut by an average of 30%
- mwis is better than Dcut by an average of 52%

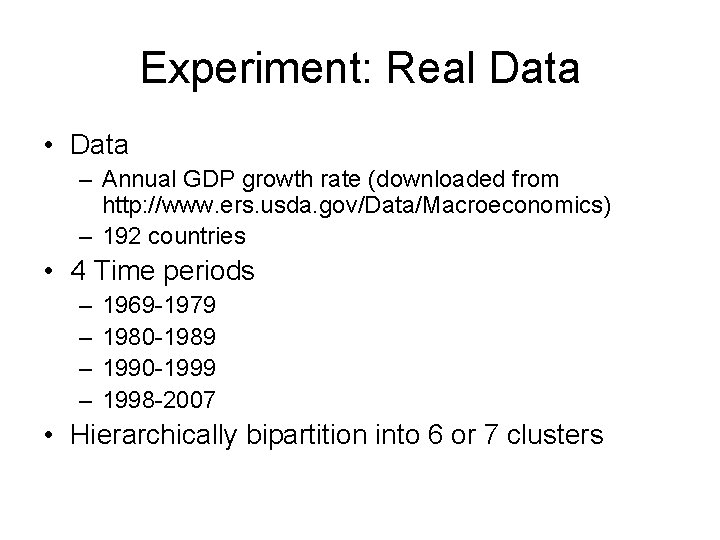

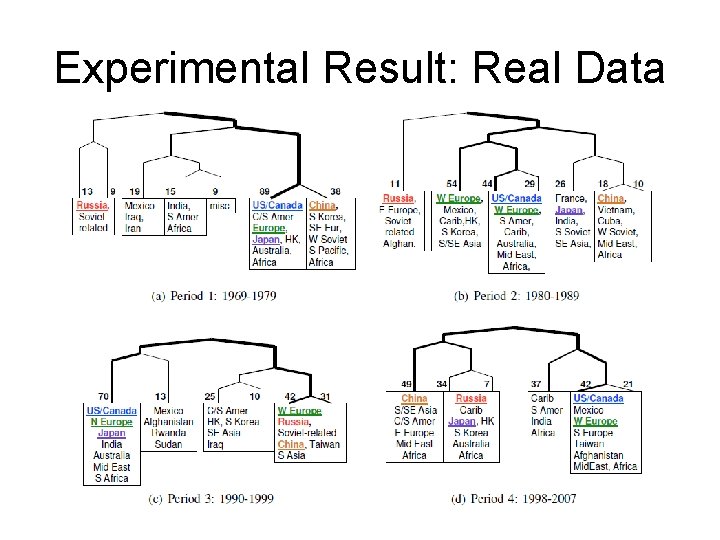

Experiment: Real Data

- Data
  - Annual GDP growth rate (downloaded from http://www.ers.usda.gov/Data/Macroeconomics)
  - 192 countries
- 4 time periods
  - 1969-1979
  - 1980-1989
  - 1990-1999
  - 1998-2007
- Hierarchically bipartition into 6 or 7 clusters

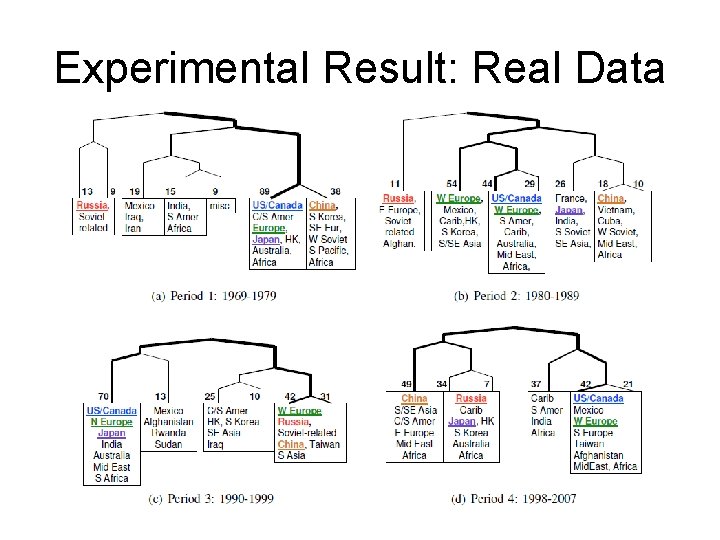

Experimental Result: Real Data

Summary

- We formulate a novel objective function for the decomposition problem in temporal graphical modeling.
- We introduce an iterative optimization approach that uses the Quasi-Newton method and generalized ridge regression.
- We employ a maximum weight independent set based approach to speed up the Quasi-Newton method.
- The experimental results demonstrate the effectiveness and efficiency of our approaches.

Thank you