A Robust Main-Memory Compression Scheme Magnus Ekman and Per Stenström Chalmers University of Technology Göteborg, Sweden

Motivation
• Memory resources are wasted to compensate for the increasing processor/memory/disk speed gap
  – >50% of die size is occupied by caches
  – >50% of the cost of a server is DRAM (and increasing)
• Lossless data compression techniques have the potential to free up more than 50% of memory resources
Unfortunately, compression introduces several challenging design and performance issues.

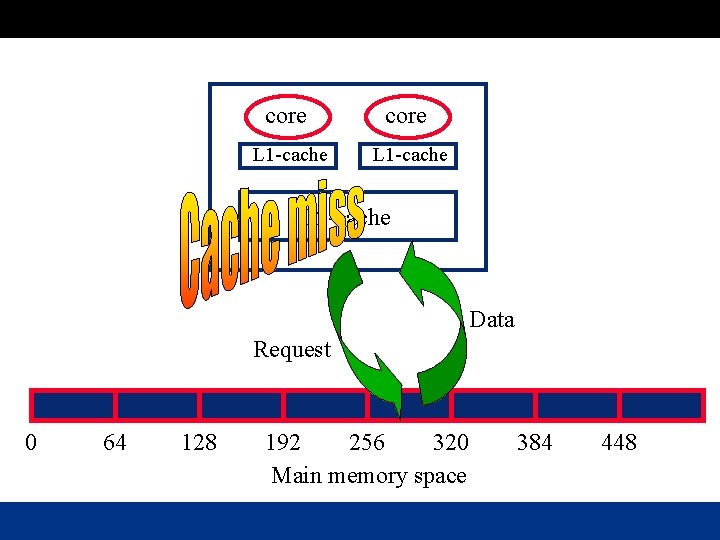

Figure labels: core, L1-cache, L2-cache, request, data; main memory space with blocks at addresses 0 to 448 in steps of 64.

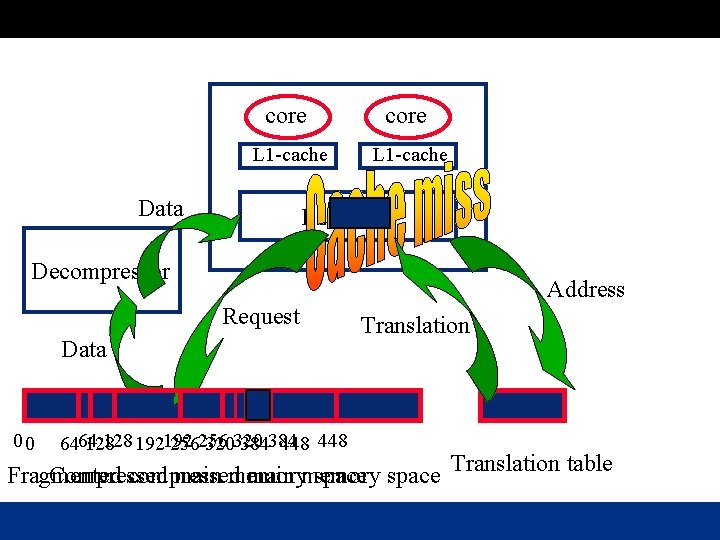

Figure labels: core, L1-cache, L2-cache, decompressor, translation table, address, request, data; the uncompressed main memory space (blocks at addresses 0 to 448 in steps of 64) is translated into a compressed, fragmented main memory space.

Contributions
A low-overhead main-memory compression scheme:
• Low decompression latency by using a simple and fast (zero-aware) algorithm
• Fast address translation through a proposed small translation structure that fits on the processor die
• Reduction of fragmentation through occasional relocation of data when compressibility varies
Overall, our compression scheme frees up 30% of the memory at a marginal performance loss of 0.2%!

Outline
• Motivation
• Issues
• Contributions
• Effectiveness of Zero-Aware Compressors
• Our Compression Scheme
• Performance Results
• Related Work
• Conclusions

Frequency of zero-valued locations
• 12% of all 8 KB pages only contain zeros
• 30% of all 64 B blocks only contain zeros
• 42% of all 4 B words only contain zeros
• 55% of all bytes are zero!
Zero-aware compression schemes have great potential!
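The granularities above (bytes, 4 B words, 64 B blocks) can be measured on any memory dump with a few lines of Python. This is a minimal sketch, not the authors' measurement tool: the function name `zero_fractions` and its parameters are hypothetical, and it only counts aligned, complete chunks.

```python
def zero_fractions(data: bytes, word_size: int = 4, block_size: int = 64) -> dict:
    """Return the fraction of all-zero bytes, words, and blocks in `data`.

    Only aligned, complete chunks are counted; a trailing partial chunk is ignored.
    """
    def frac_zero_chunks(size: int) -> float:
        chunks = [data[i:i + size] for i in range(0, len(data) - size + 1, size)]
        if not chunks:
            return 0.0
        # A chunk is "zero" when none of its bytes is non-zero.
        return sum(1 for c in chunks if not any(c)) / len(chunks)

    return {
        "bytes": frac_zero_chunks(1),
        "words": frac_zero_chunks(word_size),
        "blocks": frac_zero_chunks(block_size),
    }


# One all-zero 64 B block followed by a block of small 32-bit integers.
sample = bytes(64) + b"\x01\x00\x00\x00" * 16
stats = zero_fractions(sample)
```

In the sample, no word of the second block is zero, yet three of every four of its bytes are. That is the kind of gap between the word-level and byte-level figures that the slide reports.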

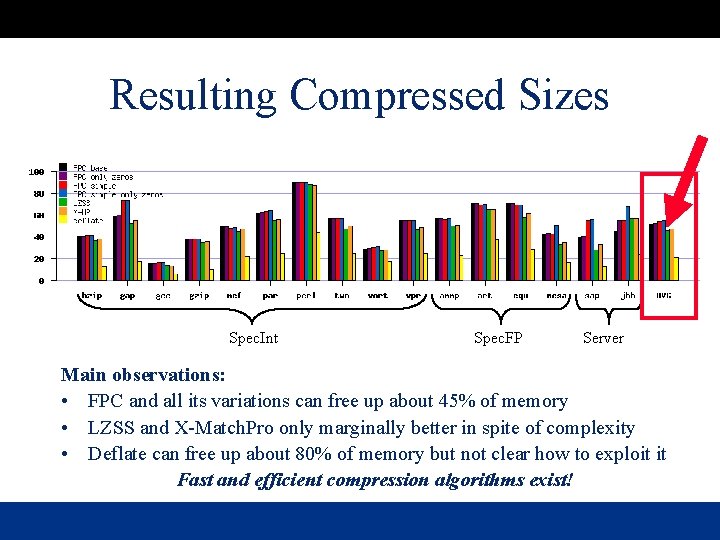

Evaluated Algorithms
Zero-aware algorithms:
• FPC (Alameldeen and Wood) + 3 simplified versions
For comparison, we also consider:
• X-Match Pro (efficient hardware implementations exist)
• LZSS (popular algorithm, previously used by IBM for memory compression)
• Deflate (upper bound on compressibility)

Resulting Compressed Sizes
Figure panels: SpecInt, SpecFP, Server
Main observations:
• FPC and all its variations can free up about 45% of memory
• LZSS and X-Match Pro are only marginally better in spite of their complexity
• Deflate can free up about 80% of memory, but it is not clear how to exploit it
Fast and efficient compression algorithms exist!

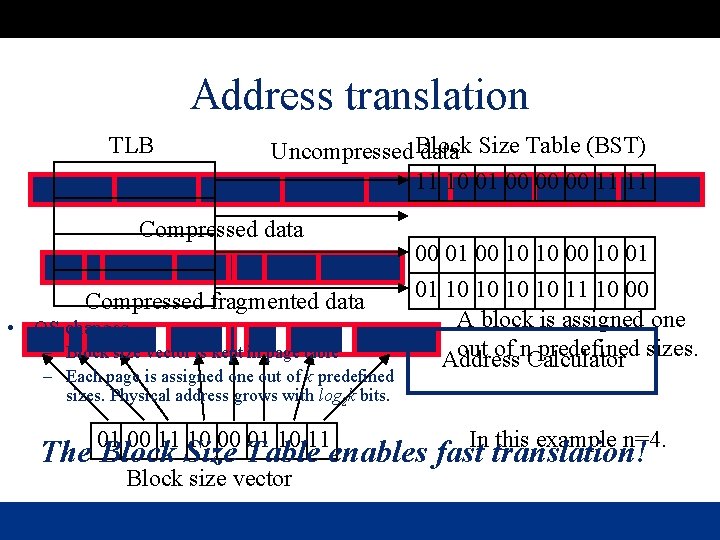

Address translation
Figure labels: TLB, Block Size Table (BST), address calculator, block size vector, uncompressed data, compressed data, compressed fragmented data.
• OS changes
  – The block size vector is kept in the page table
  – Each page is assigned one out of k predefined sizes. The physical address grows by log2 k bits.
• A block is assigned one out of n predefined sizes. In this example n=4, so each entry of the block size vector is a 2-bit code (11 10 01 00 ... in the figure).
The Block Size Table enables fast translation!
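The role of the address calculator can be sketched as a prefix sum over the block size vector: the location of a block is the page base plus the assigned sizes of all blocks before it. The sketch below is an illustration under stated assumptions, not the hardware described in the talk. The code-to-size mapping `BLOCK_SIZES` reuses the block sizes from the Architectural Parameters slide, but which 2-bit code maps to which size is assumed. The function name `block_address` is hypothetical, and sub-page slack is ignored.

```python
from typing import Sequence, Tuple

# Assumed mapping from 2-bit size code to block size in bytes (n = 4).
BLOCK_SIZES = (0, 22, 44, 64)


def block_address(page_base: int, size_codes: Sequence[int],
                  block_index: int) -> Tuple[int, int]:
    """Return (address, size) of a compressed block within its page.

    `size_codes` is the page's block size vector, one code per block.
    Blocks are assumed to be packed back to back, with no slack between them.
    """
    offset = sum(BLOCK_SIZES[code] for code in size_codes[:block_index])
    return page_base + offset, BLOCK_SIZES[size_codes[block_index]]


# Block 3 starts after blocks of 64, 0, and 22 bytes.
addr, size = block_address(0x1000, [3, 0, 1, 2], 3)
```

Because the sum depends only on the small per-page size vector, it can be computed from on-chip state without consulting a table in memory.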

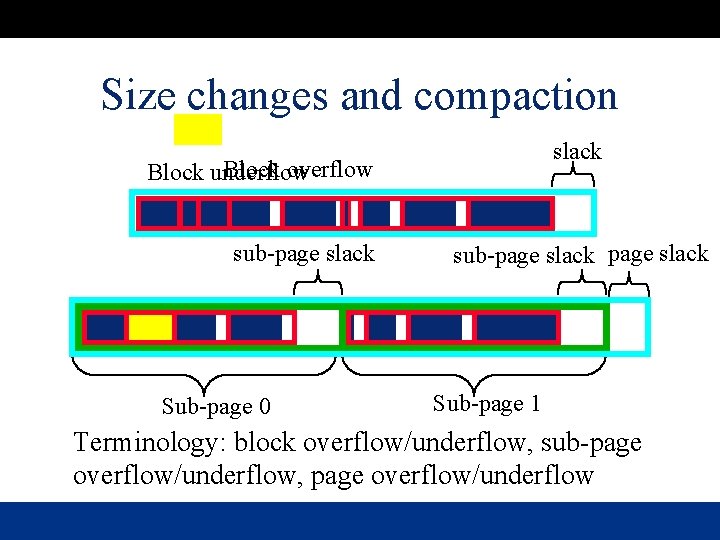

Size changes and compaction
Figure labels: block overflow, block underflow, sub-page slack, sub-page 0, sub-page 1.
Terminology: block overflow/underflow, sub-page overflow/underflow, page overflow/underflow

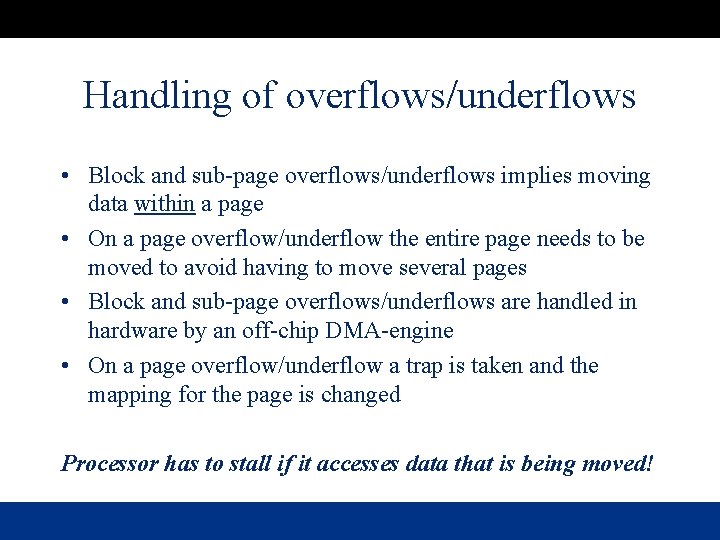

Handling of overflows/underflows
• Block and sub-page overflows/underflows imply moving data within a page
• On a page overflow/underflow, the entire page needs to be moved to avoid having to move several pages
• Block and sub-page overflows/underflows are handled in hardware by an off-chip DMA engine
• On a page overflow/underflow, a trap is taken and the mapping for the page is changed
The processor has to stall if it accesses data that is being moved!
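One way to read the bullets above is as a decision about which level of the hierarchy can absorb a block that has grown. The following sketch makes that decision explicit. It is an interpretation, not the authors' logic: the function `overflow_level` and its parameters are hypothetical, the sub-page sizes come from the Architectural Parameters slide, and underflows are not modeled.

```python
# Predefined sub-page sizes in bytes, from the Architectural Parameters slide.
SUBPAGE_SIZES = (256, 512, 768, 1024)


def smallest_fit(sizes, needed):
    """Smallest predefined size that holds `needed` bytes, or None."""
    for size in sizes:
        if needed <= size:
            return size
    return None


def overflow_level(block_growth, subpage_used, subpage_alloc,
                   page_used, page_alloc):
    """Classify a block growth of `block_growth` bytes.

    subpage_used / subpage_alloc: bytes occupied in / assigned to the sub-page.
    page_used / page_alloc: sum of assigned sub-page sizes / size assigned to the page.
    Returns "none", "block", "sub-page", or "page".
    """
    if block_growth <= 0:
        return "none"
    needed = subpage_used + block_growth
    if needed <= subpage_alloc:
        return "block"      # sub-page slack absorbs it; DMA engine moves data
    new_subpage = smallest_fit(SUBPAGE_SIZES, needed)
    if new_subpage is None:
        raise ValueError("sub-page would exceed its largest predefined size")
    if page_used + (new_subpage - subpage_alloc) <= page_alloc:
        return "sub-page"   # page slack absorbs it; DMA engine moves sub-pages
    return "page"           # trap: the page is moved and its mapping changed


levels = [
    overflow_level(22, 200, 256, 1024, 2048),
    overflow_level(22, 250, 256, 1024, 2048),
    overflow_level(22, 250, 256, 2048, 2048),
]
```

The three calls show the same 22-byte growth being absorbed at the block, sub-page, and page level as the available slack shrinks.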

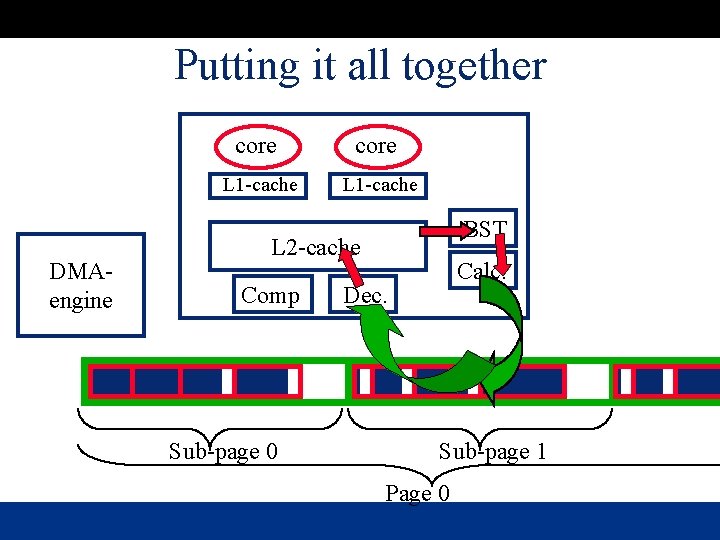

Putting it all together
Figure labels: core, L1-cache, L2-cache, BST, address calculator (Calc.), compressor (Comp.), decompressor (Dec.), DMA engine; main memory shows Page 0 with Sub-page 0 and Sub-page 1.

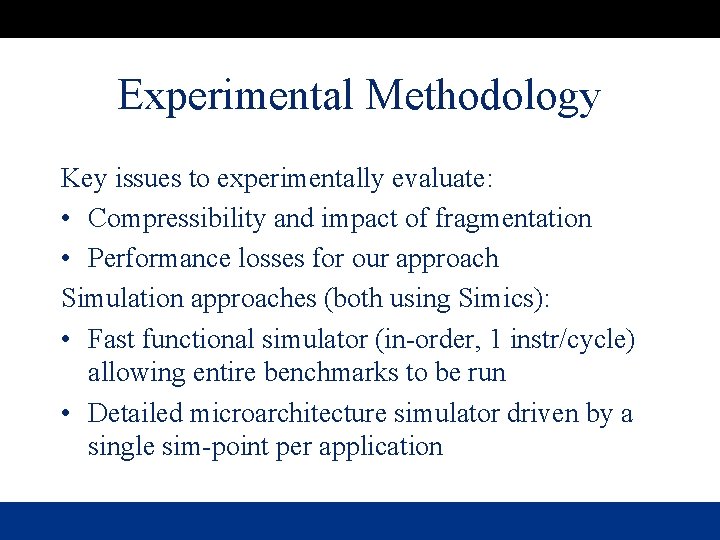

Experimental Methodology
Key issues to experimentally evaluate:
• Compressibility and impact of fragmentation
• Performance losses for our approach
Simulation approaches (both using Simics):
• Fast functional simulator (in-order, 1 instr/cycle) allowing entire benchmarks to be run
• Detailed microarchitecture simulator driven by a single simpoint per application

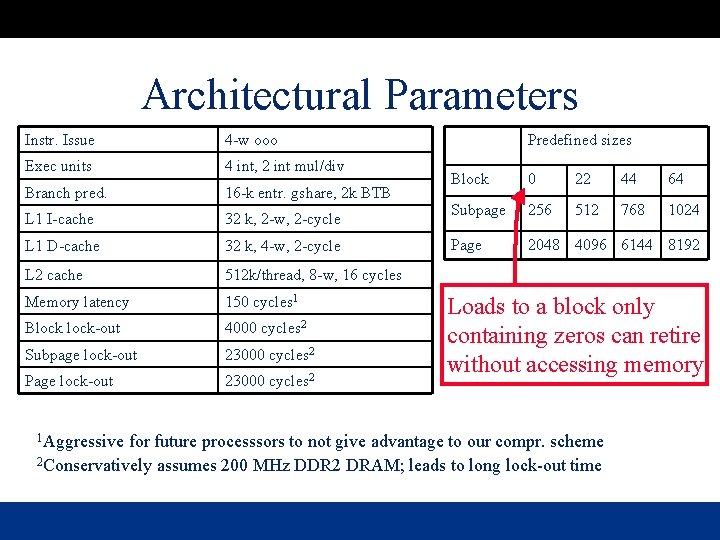

Architectural Parameters

| Parameter | Value |
|---|---|
| Instr. issue | 4-way out-of-order |
| Exec units | 4 int, 2 int mul/div |
| Branch pred. | 16k-entry gshare, 2k BTB |
| L1 I-cache | 32 k, 2-way, 2-cycle |
| L1 D-cache | 32 k, 4-way, 2-cycle |
| L2 cache | 512 k/thread, 8-way, 16 cycles |
| Memory latency | 150 cycles (1) |
| Block lock-out | 4000 cycles (2) |
| Subpage lock-out | 23000 cycles (2) |
| Page lock-out | 23000 cycles (2) |

(1) Aggressive for future processors, so as not to give an advantage to our compression scheme.
(2) Conservatively assumes 200 MHz DDR2 DRAM; leads to long lock-out times.

Predefined sizes (bytes)

| Level | Size 0 | Size 1 | Size 2 | Size 3 |
|---|---|---|---|---|
| Block | 0 | 22 | 44 | 64 |
| Subpage | 256 | 512 | 768 | 1024 |
| Page | 2048 | 4096 | 6144 | 8192 |

Loads to a block only containing zeros can retire without accessing memory!
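The predefined block sizes imply a simple rounding rule: a compressed block is stored in the smallest predefined size that holds it. The sketch below illustrates that rule and the resulting metadata cost. The helper names `assigned_size` and `size_code_bits` are hypothetical, and the 128-blocks-per-page figure assumes an 8 KB uncompressed page made of 64 B blocks, as in the zero-frequency slide.

```python
# Predefined block sizes in bytes, from the Architectural Parameters slide.
BLOCK_SIZES = (0, 22, 44, 64)


def assigned_size(compressed_bytes: int, sizes=BLOCK_SIZES) -> int:
    """Smallest predefined size that can hold `compressed_bytes` bytes."""
    for size in sizes:
        if compressed_bytes <= size:
            return size
    raise ValueError("compressed data exceeds the largest predefined size")


def size_code_bits(n_sizes: int) -> int:
    """Bits needed to name one of `n_sizes` predefined sizes (ceil(log2 n))."""
    return (n_sizes - 1).bit_length()


# Metadata per page: one size code per 64 B block of an 8 KB page (assumed).
blocks_per_page = 8192 // 64
vector_bits = blocks_per_page * size_code_bits(len(BLOCK_SIZES))
```

Under these assumptions the block size vector costs 256 bits (32 bytes) per page. An all-zero block gets size 0, which is consistent with the note that loads to such blocks need no memory access.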

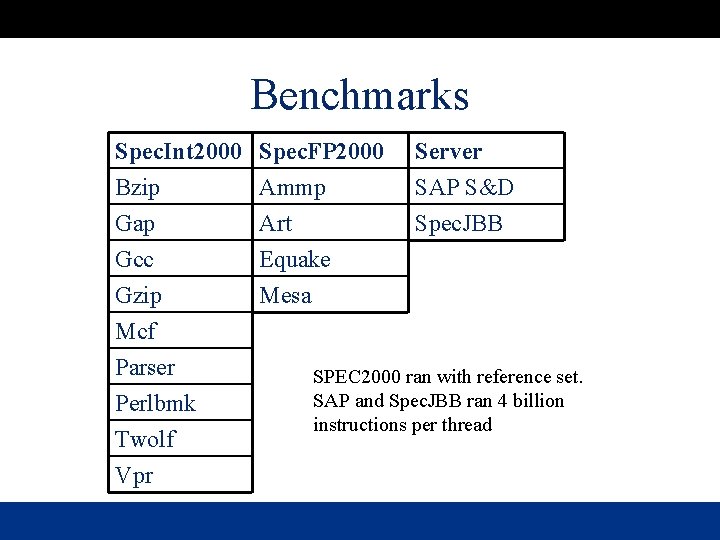

Benchmarks
• SpecInt 2000: Bzip, Gap, Gcc, Gzip, Mcf, Parser, Perlbmk, Twolf, Vpr
• SpecFP 2000: Ammp, Art, Equake, Mesa
• Server: SAP S&D, SpecJBB
SPEC 2000 ran with the reference set. SAP and SpecJBB ran 4 billion instructions per thread.

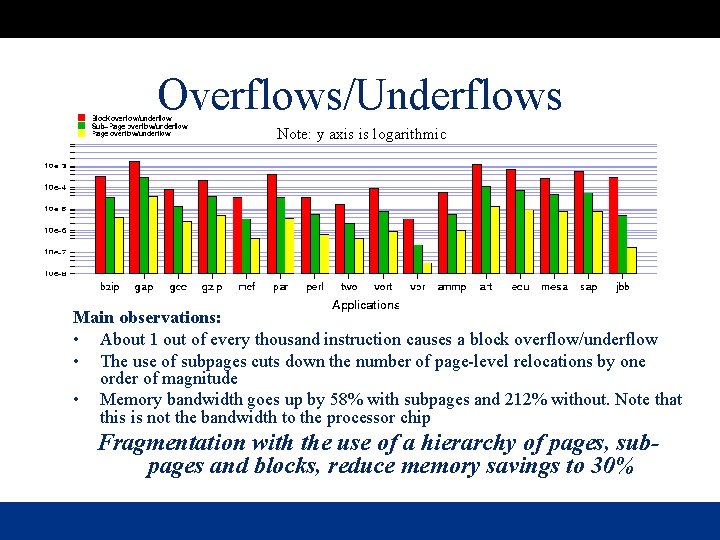

Overflows/Underflows
Note: the y axis is logarithmic
Main observations:
• About 1 out of every thousand instructions causes a block overflow/underflow
• The use of subpages cuts down the number of page-level relocations by one order of magnitude
• Memory bandwidth goes up by 58% with subpages and 212% without. Note that this is not the bandwidth to the processor chip
With the use of a hierarchy of pages, subpages, and blocks, fragmentation reduces memory savings to 30%.

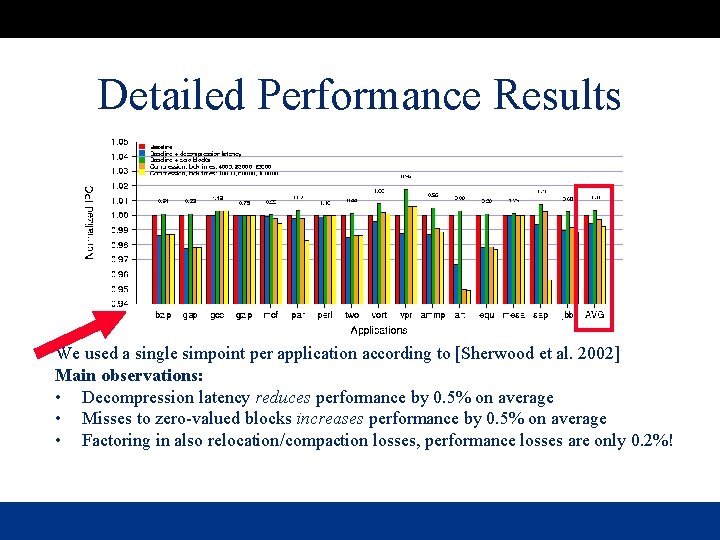

Detailed Performance Results
We used a single simpoint per application according to [Sherwood et al. 2002]
Main observations:
• Decompression latency reduces performance by 0.5% on average
• Misses to zero-valued blocks increase performance by 0.5% on average
• Also factoring in relocation/compaction losses, performance losses are only 0.2%!

![Related Work](http://slidetodoc.com/presentation_image_h2/8b2b5bc1d7702be8a0d7151d74b1aaca/image-20.jpg)

Related Work
Early work on main memory compression:
– Douglis [1993], Kjelso et al. [1999], and Wilson et al. [1999]
– These works aimed at reducing paging overhead, so the significant decompression and address translation latencies were offset by the wins
More recently:
– IBM MXT [Abali et al. 2001]
– Compresses entire memory with LZSS (64-cycle decompression latency)
– Translation through a memory-resident translation table
– Shields latency with a huge (at that point in time) 32 MB cache
– Sensitive to working set size
Compression algorithm:
– Inspired by frequent-value locality work by Zhang, Yang, and Gupta [2000]
– Compression algorithm from Alameldeen and Wood [2004]

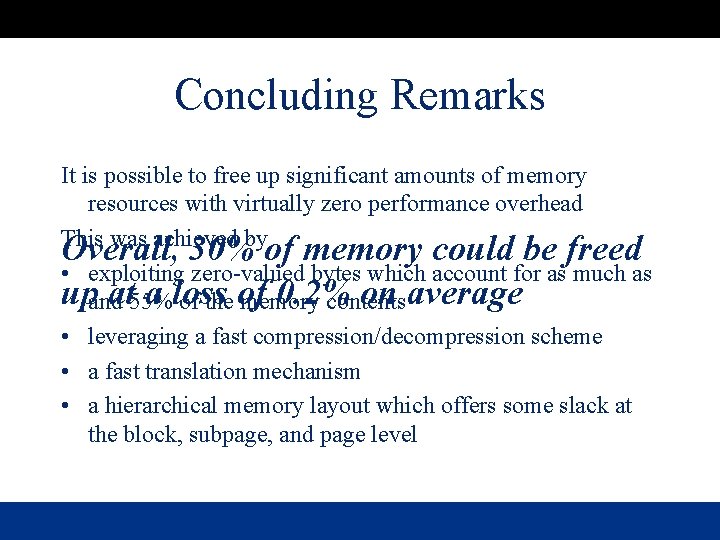

Concluding Remarks
It is possible to free up significant amounts of memory resources with virtually zero performance overhead.
This was achieved by
• exploiting zero-valued bytes, which account for as much as 55% of the memory contents
• leveraging a fast compression/decompression scheme
• a fast translation mechanism
• a hierarchical memory layout which offers some slack at the block, subpage, and page level
Overall, 30% of memory could be freed up at a loss of 0.2% on average.

Backup Slides

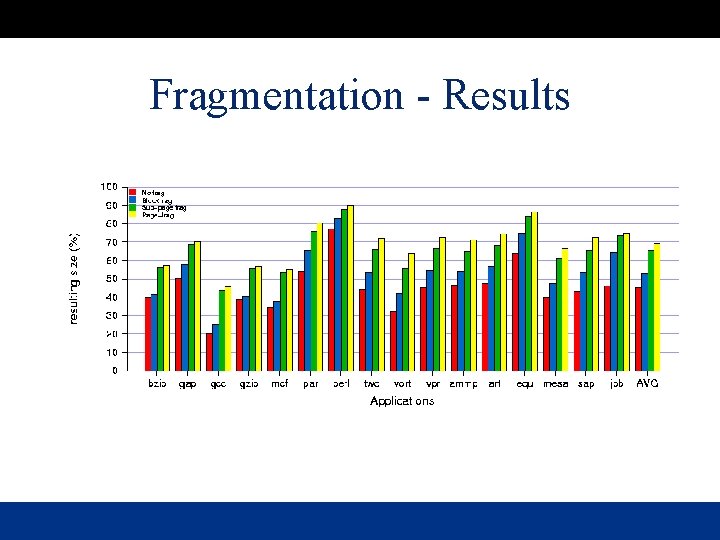

Fragmentation - Results

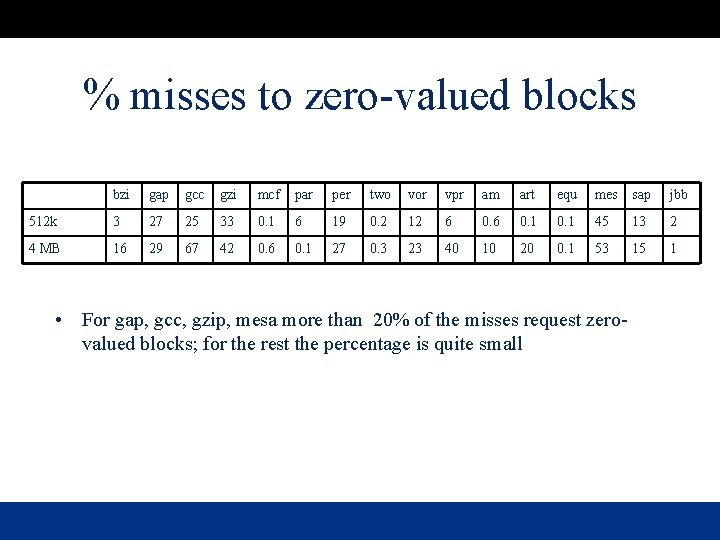

% misses to zero-valued blocks
Benchmarks: bzi gap gcc gzi mcf par per two vor vpr am art equ mes sap jbb
512 k: 3 27 25 33 0.1 6 19 0.2 12 6 0.1 45 13 2
4 MB: 16 29 67 42 0.6 0.1 27 0.3 23 40 10 20 0.1 53 15 1
• For gap, gcc, gzip, and mesa, more than 20% of the misses request zero-valued blocks; for the rest the percentage is quite small

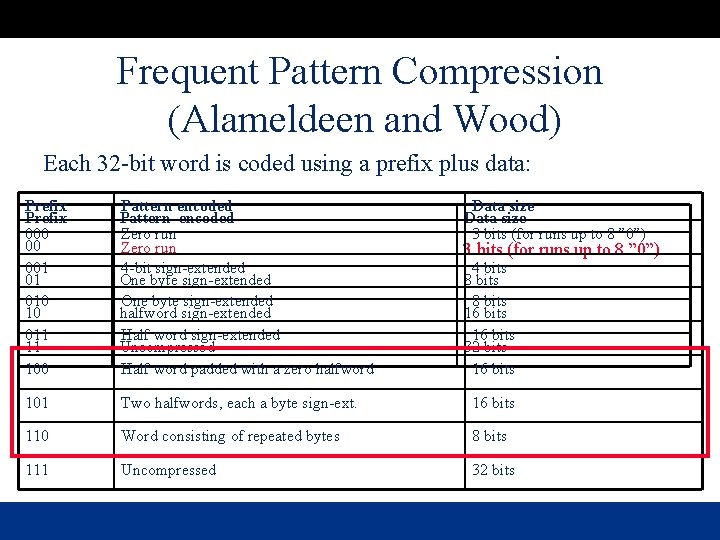

Frequent Pattern Compression (Alameldeen and Wood)
Each 32-bit word is coded using a prefix plus data:

| Prefix | Pattern encoded | Data size |
|---|---|---|
| 000 | Zero run | 3 bits (for runs up to 8 zeros) |
| 001 | 4-bit sign-extended | 4 bits |
| 010 | One byte sign-extended | 8 bits |
| 011 | Halfword sign-extended | 16 bits |
| 100 | Halfword padded with a zero halfword | 16 bits |
| 101 | Two halfwords, each a byte sign-extended | 16 bits |
| 110 | Word consisting of repeated bytes | 8 bits |
| 111 | Uncompressed | 32 bits |

The slide also shows a simplified variant that uses 2-bit prefixes (00, 01, 10, 11) for a subset of these patterns, including zero runs, sign-extended halfwords, and uncompressed words.
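The pattern table can be turned into a small classifier that picks the first matching prefix for a 32-bit word. This is a minimal sketch of the matching step only, not a full FPC encoder. The names `sign_extends` and `fpc_classify` are hypothetical, and a single zero word is reported as a zero run of length one (runs are not merged). For prefix 100, the code assumes the zero halfword is the lower one.

```python
def sign_extends(value: int, from_bits: int, to_bits: int) -> bool:
    """True if the `to_bits`-wide value equals its low `from_bits` sign-extended."""
    mask = (1 << to_bits) - 1
    low_mask = (1 << from_bits) - 1
    low = value & low_mask
    if low & (1 << (from_bits - 1)):   # sign bit set: fill the upper bits with ones
        low |= mask & ~low_mask
    return low == (value & mask)


def fpc_classify(word: int):
    """Return (prefix, data_bits) for one 32-bit word, first match wins."""
    word &= 0xFFFFFFFF
    if word == 0:
        return ("000", 3)              # zero run (length 1 here)
    if sign_extends(word, 4, 32):
        return ("001", 4)
    if sign_extends(word, 8, 32):
        return ("010", 8)
    if sign_extends(word, 16, 32):
        return ("011", 16)
    if word & 0xFFFF == 0:
        return ("100", 16)             # halfword padded with a zero halfword (assumed lower)
    hi, lo = word >> 16, word & 0xFFFF
    if sign_extends(hi, 8, 16) and sign_extends(lo, 8, 16):
        return ("101", 16)             # two halfwords, each a sign-extended byte
    if word == (word & 0xFF) * 0x01010101:
        return ("110", 8)              # repeated bytes
    return ("111", 32)                 # uncompressed


examples = [fpc_classify(w) for w in
            (0, 0xFFFFFFF8, 0x7F, 0x1234, 0x12340000,
             0x0012FFF0, 0xABABABAB, 0x12345678)]
```

Each example word hits a different row of the table, from the zero run through to the uncompressed fallback.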
