
A Prediction-based Real-time Scheduling Advisor
Peter A. Dinda
Prescience Lab
Department of Computer Science
Northwestern University
http://www.cs.northwestern.edu/~pdinda

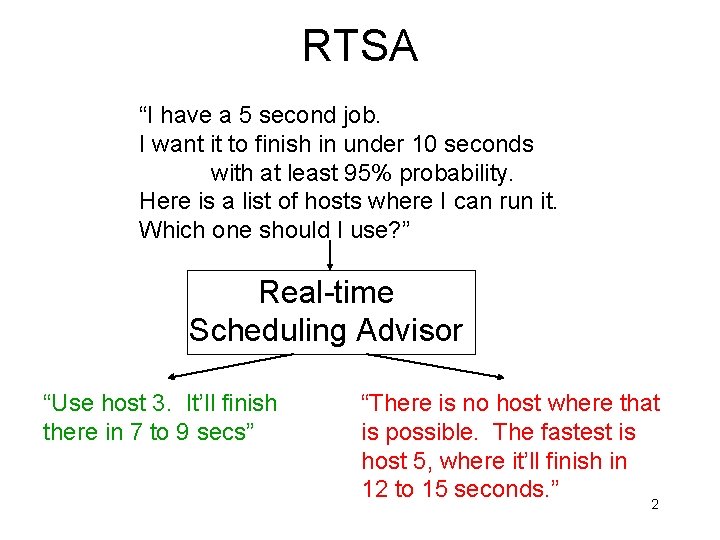

RTSA
“I have a 5 second job. I want it to finish in under 10 seconds with at least 95% probability. Here is a list of hosts where I can run it. Which one should I use?”
Real-time Scheduling Advisor
“Use host 3. It’ll finish there in 7 to 9 secs.”
“There is no host where that is possible. The fastest is host 5, where it’ll finish in 12 to 15 seconds.”

Core Results
• RTSA based on predictive signal processing
• Layered system architecture for scalable performance prediction
• Targets commodity shared, unreserved distributed environments
• All at user level
• Randomized trace-based evaluation giving evidence of its effectiveness
• Limitations
– Compute-bound tasks
– Evaluation on Digital Unix platform
Publicly available as part of RPS system

Outline
• Motivation: interactive applications
• Interface
• Implementation
• Performance evaluation
• Conclusions and future work

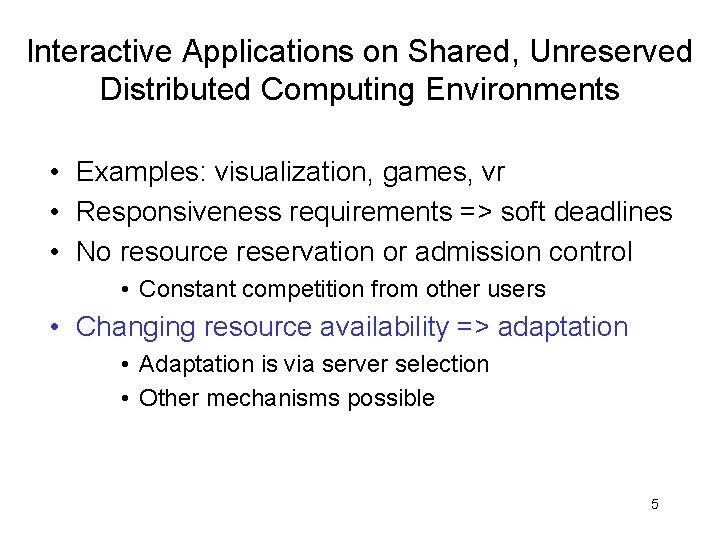

Interactive Applications on Shared, Unreserved Distributed Computing Environments
• Examples: visualization, games, VR
• Responsiveness requirements => soft deadlines
• No resource reservation or admission control
• Constant competition from other users
• Changing resource availability => adaptation
• Adaptation is via server selection
• Other mechanisms possible

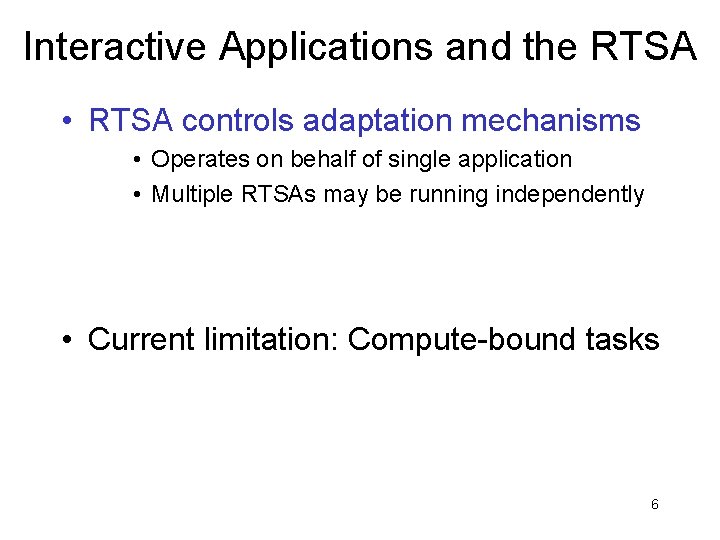

Interactive Applications and the RTSA
• RTSA controls adaptation mechanisms
• Operates on behalf of a single application
• Multiple RTSAs may be running independently
• Current limitation: compute-bound tasks

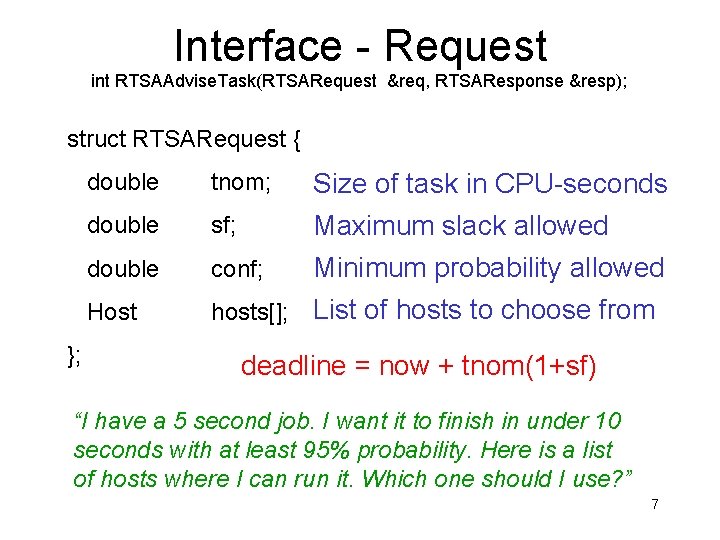

Interface - Request
int RTSAAdviseTask(RTSARequest &req, RTSAResponse &resp);
struct RTSARequest {
    double tnom;    // Size of task in CPU-seconds
    double sf;      // Maximum slack allowed
    double conf;    // Minimum probability allowed
    Host hosts[];   // List of hosts to choose from
};
deadline = now + tnom(1+sf)
“I have a 5 second job. I want it to finish in under 10 seconds with at least 95% probability. Here is a list of hosts where I can run it. Which one should I use?”
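The deadline formula on this slide can be checked against the quoted request with a small sketch. The `Host` alias, the `std::vector` member, and the helper names `Deadline` and `SlackFor` are assumptions made for illustration; they are not part of the RPS interface shown above.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical stand-in for the RPS Host type.
using Host = std::string;

// Mirrors the fields on the slide; the vector replaces the slide's Host hosts[].
struct RTSARequest {
    double tnom;              // Size of task in CPU-seconds
    double sf;                // Maximum slack allowed
    double conf;              // Minimum probability allowed
    std::vector<Host> hosts;  // List of hosts to choose from
};

// deadline = now + tnom * (1 + sf), as stated on the slide.
double Deadline(const RTSARequest &req, double now) {
    assert(req.tnom > 0.0 && req.sf >= 0.0);
    return now + req.tnom * (1.0 + req.sf);
}

// Hypothetical helper: the slack factor that allows `allowed` seconds
// in total for a task of nominal size `tnom`.
double SlackFor(double tnom, double allowed) {
    assert(tnom > 0.0 && allowed >= tnom);
    return allowed / tnom - 1.0;
}
```

Under these assumptions, the 5 second job that must finish in under 10 seconds corresponds to tnom = 5, sf = 1, conf = 0.95.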

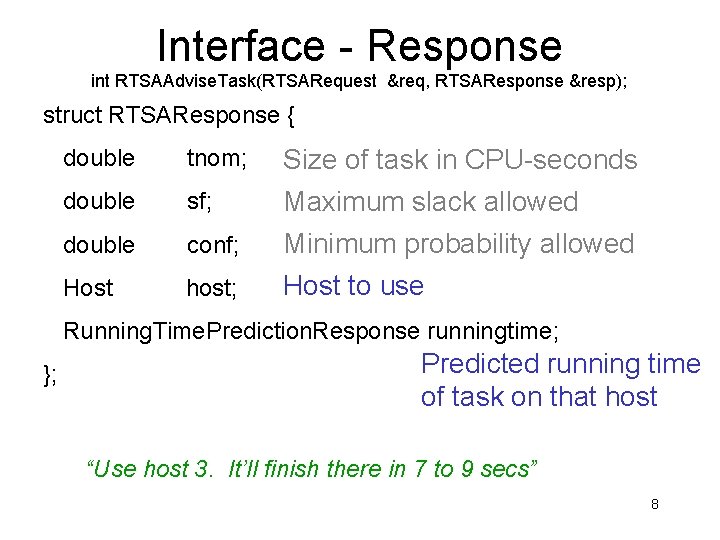

Interface - Response
int RTSAAdviseTask(RTSARequest &req, RTSAResponse &resp);
struct RTSAResponse {
    double tnom;    // Size of task in CPU-seconds
    double sf;      // Maximum slack allowed
    double conf;    // Minimum probability allowed
    Host host;      // Host to use
    RunningTimePredictionResponse runningtime;  // Predicted running time of task on that host
};
“Use host 3. It’ll finish there in 7 to 9 secs.”

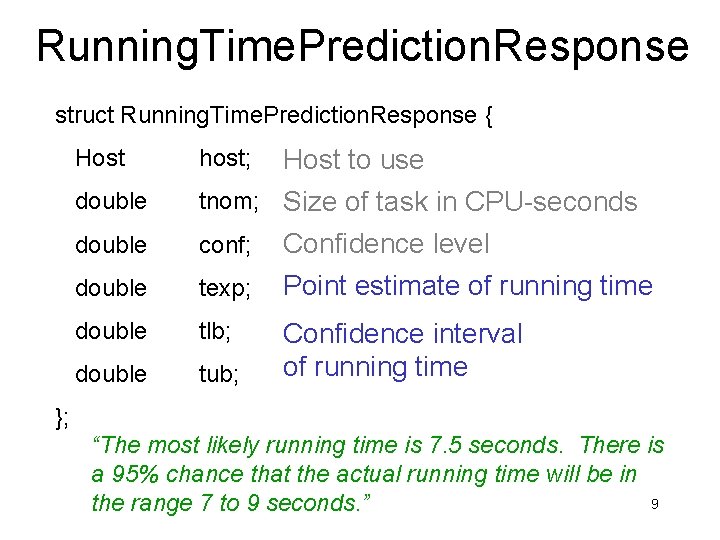

RunningTimePredictionResponse
struct RunningTimePredictionResponse {
    Host host;      // Host to use
    double tnom;    // Size of task in CPU-seconds
    double conf;    // Confidence level
    double texp;    // Point estimate of running time
    double tlb;     // Confidence interval of running time (lower bound)
    double tub;     // Confidence interval of running time (upper bound)
};
“The most likely running time is 7.5 seconds. There is a 95% chance that the actual running time will be in the range 7 to 9 seconds.”

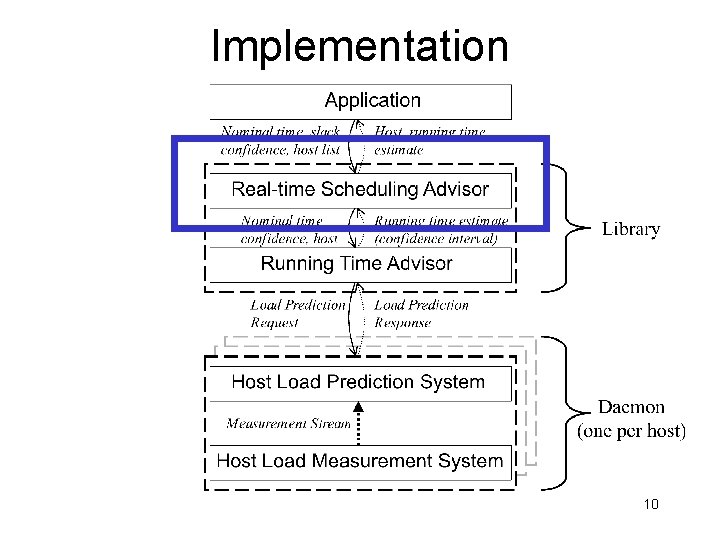

Implementation

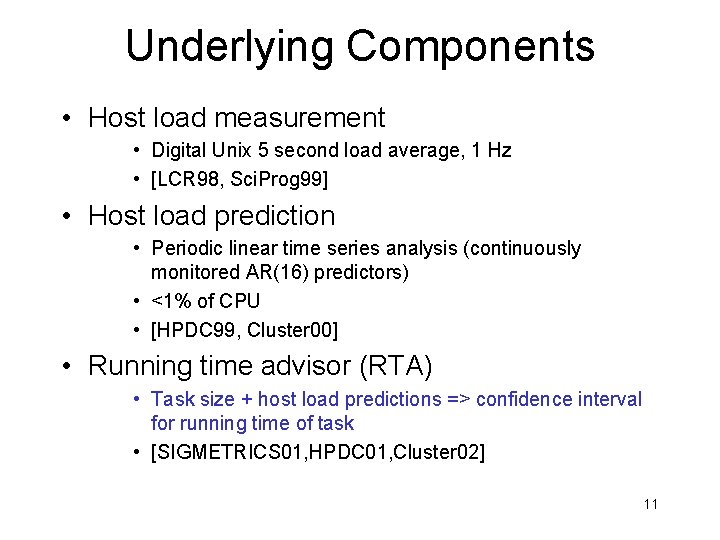

Underlying Components
• Host load measurement
– Digital Unix 5 second load average, 1 Hz
– [LCR 98, SciProg 99]
• Host load prediction
– Periodic linear time series analysis (continuously monitored AR(16) predictors)
– <1% of CPU
– [HPDC 99, Cluster 00]
• Running time advisor (RTA)
– Task size + host load predictions => confidence interval for running time of task
– [SIGMETRICS 01, HPDC 01, Cluster 02]
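As a rough illustration of the host load prediction layer, the sketch below computes a one-step-ahead prediction from a generic autoregressive model of order p (p = 16 for the AR(16) predictors named on the slide). It is the textbook AR form, not the RPS implementation; the function name, the coefficient values used in any call, and the mean-centering are assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One-step-ahead AR(p) prediction of host load (generic textbook form).
// history: load samples, oldest first, newest last.
// phi:     AR coefficients phi[0..p-1]; phi[0] weights the newest sample.
// mean:    long-run mean of the load signal.
double PredictNextLoad(const std::vector<double> &history,
                       const std::vector<double> &phi, double mean) {
    assert(history.size() >= phi.size());
    double pred = mean;
    for (std::size_t i = 0; i < phi.size(); ++i) {
        // Walk backwards from the newest sample.
        const double past = history[history.size() - 1 - i];
        pred += phi[i] * (past - mean);
    }
    return pred;
}
```

In a real system the coefficients would be fitted to measured load and refitted when the monitored prediction error grows; that part is omitted here.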

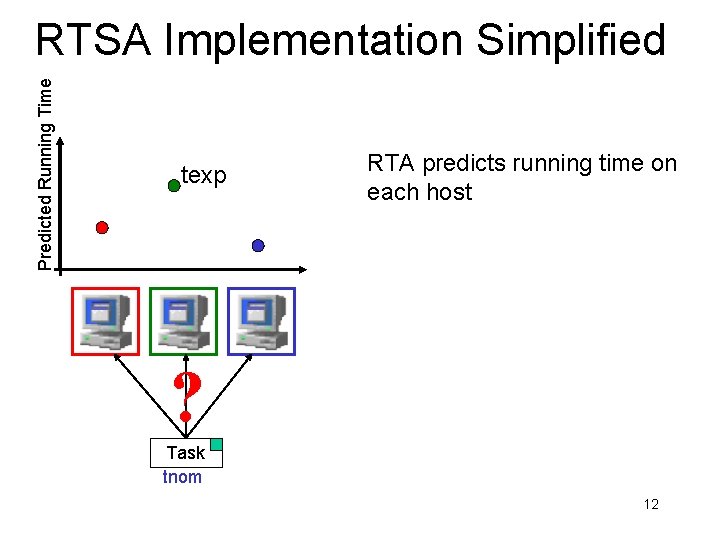

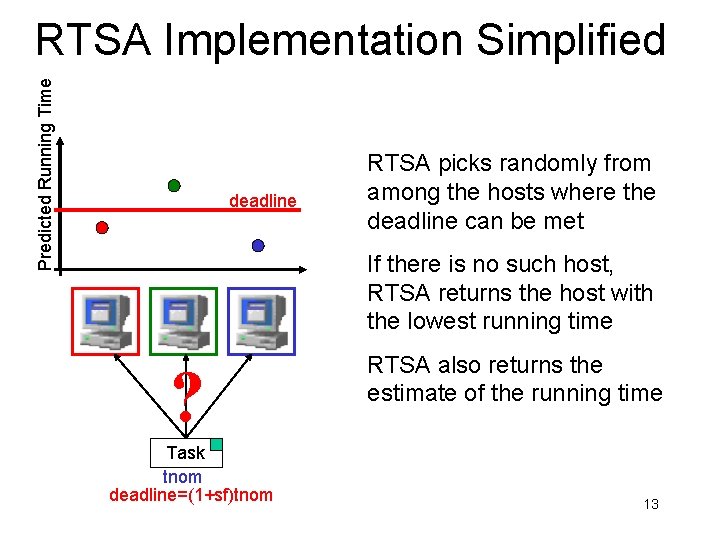

RTSA Implementation, Simplified
• RTA predicts running time on each host
Figure: predicted running time (texp) on each host for a task of size tnom

RTSA Implementation, Simplified
• deadline = (1+sf)tnom
• RTSA picks randomly from among the hosts where the deadline can be met
• If there is no such host, RTSA returns the host with the lowest running time
• RTSA also returns the estimate of the running time
Figure: predicted running time on each host compared against the deadline, for a task of size tnom
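The simplified selection rule on this slide can be sketched as follows. This assumes the per-host point estimates (texp) are already available as a vector indexed by host; the function name `PickHost` and the `feasible` out-parameter are illustrative, not the RPS API.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Pick a host index from per-host point estimates of running time (seconds).
// rel_deadline is (1 + sf) * tnom, measured from submission time.
// *feasible reports whether any host is predicted to meet the deadline.
std::size_t PickHost(const std::vector<double> &texp, double rel_deadline,
                     std::mt19937 &rng, bool *feasible) {
    assert(!texp.empty());
    std::vector<std::size_t> ok;   // hosts predicted to meet the deadline
    std::size_t fastest = 0;       // fallback: lowest predicted running time
    for (std::size_t i = 0; i < texp.size(); ++i) {
        if (texp[i] <= rel_deadline) ok.push_back(i);
        if (texp[i] < texp[fastest]) fastest = i;
    }
    *feasible = !ok.empty();
    if (ok.empty()) return fastest;
    // Choose uniformly at random among the feasible hosts.
    std::uniform_int_distribution<std::size_t> pick(0, ok.size() - 1);
    return ok[pick(rng)];
}
```

Choosing randomly among feasible hosts, rather than always taking the fastest, is what keeps independent advisors from piling onto the same machine.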

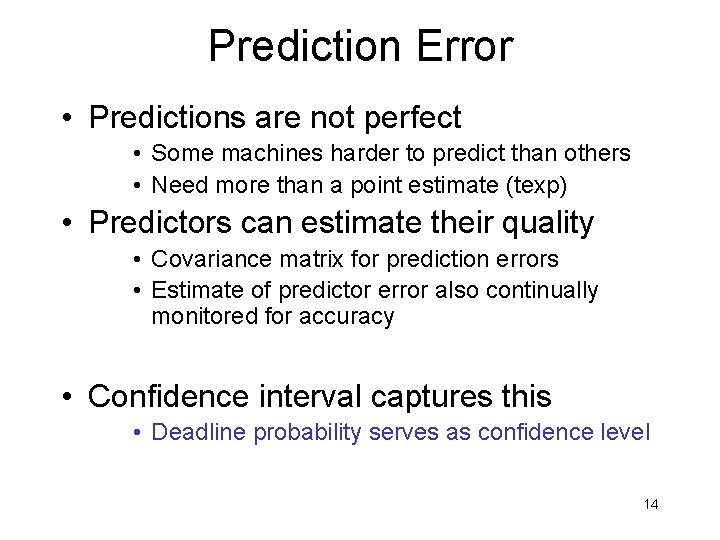

Prediction Error
• Predictions are not perfect
– Some machines harder to predict than others
• Need more than a point estimate (texp)
• Predictors can estimate their quality
– Covariance matrix for prediction errors
– Estimate of predictor error also continually monitored for accuracy
• Confidence interval captures this
– Deadline probability serves as confidence level

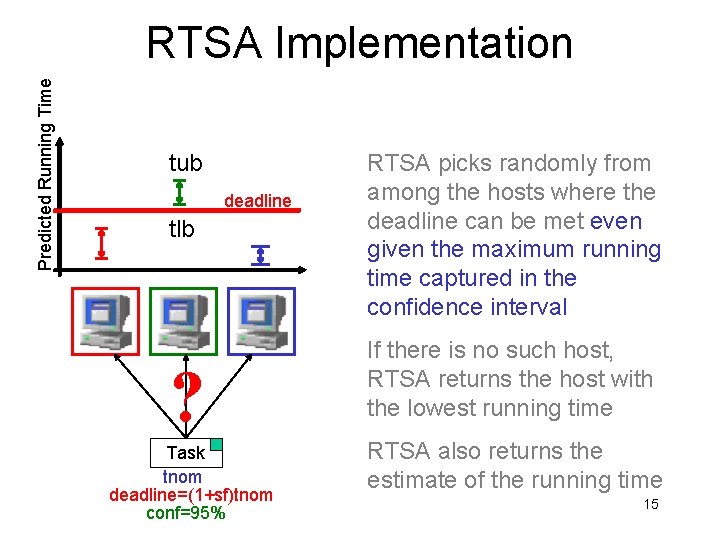

RTSA Implementation
• deadline = (1+sf)tnom, conf = 95%
• RTSA picks randomly from among the hosts where the deadline can be met even given the maximum running time captured in the confidence interval
• If there is no such host, RTSA returns the host with the lowest running time
• RTSA also returns the estimate of the running time
Figure: confidence interval (tlb to tub) of predicted running time on each host compared against the deadline, for a task of size tnom
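The difference from the simplified version is the feasibility test: a host qualifies only if the upper end of its confidence interval (tub) meets the deadline. A minimal sketch of that filter, with a hypothetical `Prediction` struct and function name:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-host prediction: point estimate and confidence interval,
// all in seconds, computed at the requested confidence level.
struct Prediction {
    double texp;
    double tlb;
    double tub;
};

// Indices of hosts whose worst-case running time within the confidence
// interval (tub) still meets the relative deadline (1 + sf) * tnom.
std::vector<std::size_t> FeasibleHosts(const std::vector<Prediction> &preds,
                                       double rel_deadline) {
    std::vector<std::size_t> ok;
    for (std::size_t i = 0; i < preds.size(); ++i) {
        assert(preds[i].tlb <= preds[i].texp && preds[i].texp <= preds[i].tub);
        if (preds[i].tub <= rel_deadline) ok.push_back(i);
    }
    return ok;
}
```

A host with texp = 8 s and tub = 11 s would pass a point-estimate test against a 10 s deadline but is rejected here, which is the intended conservatism.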

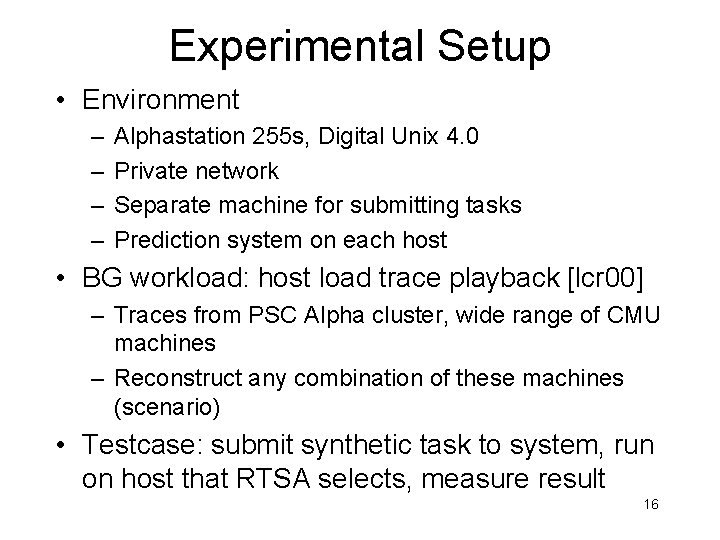

Experimental Setup
• Environment
– AlphaStation 255s, Digital Unix 4.0
– Private network
– Separate machine for submitting tasks
– Prediction system on each host
• Background (BG) workload: host load trace playback [LCR 00]
– Traces from PSC Alpha cluster, wide range of CMU machines
– Reconstruct any combination of these machines (scenario)
• Testcase: submit synthetic task to system, run on host that RTSA selects, measure result

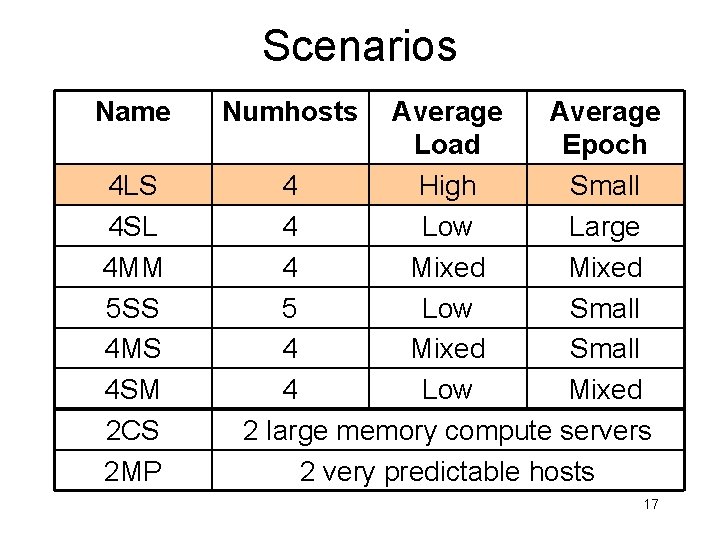

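Scenarios

| Name | Numhosts | Average Load | Epoch |
|------|----------|--------------|-------|
| 4LS | 4 | High | Small |
| 4SL | 4 | Low | Large |
| 4MM | 4 | Mixed | Mixed |
| 5SS | 5 | Low | Small |
| 4MS | 4 | Mixed | Small |
| 4SM | 4 | Low | Mixed |
| 2CS | 2 | large memory compute servers | |
| 2MP | 2 | very predictable hosts | |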

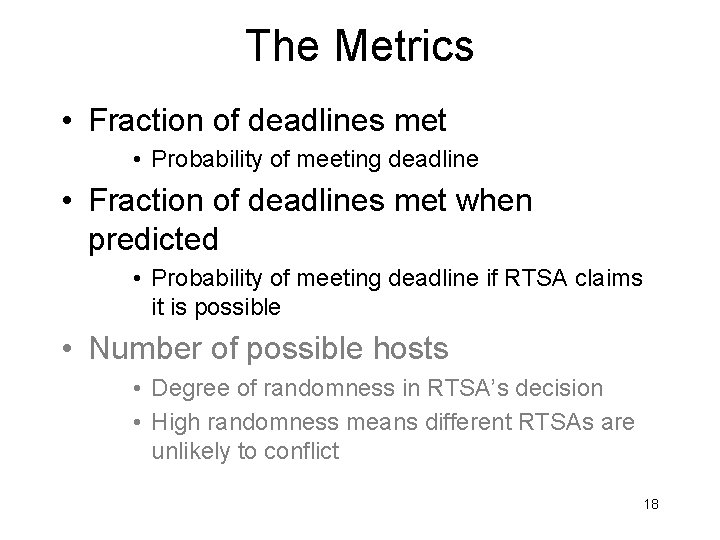

The Metrics
• Fraction of deadlines met
– Probability of meeting deadline
• Fraction of deadlines met when predicted
– Probability of meeting deadline if RTSA claims it is possible
• Number of possible hosts
– Degree of randomness in RTSA’s decision
– High randomness means different RTSAs are unlikely to conflict
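The three metrics can be computed from per-testcase outcomes roughly as follows. The `Outcome` record, the field names, and the choice to report the number of possible hosts as a mean over testcases are assumptions for illustration; the slides do not specify how results were logged or aggregated.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical record of one testcase.
struct Outcome {
    bool predicted_possible;  // RTSA claimed the deadline could be met
    bool deadline_met;        // the task actually finished by its deadline
    int possible_hosts;       // hosts RTSA considered feasible
};

struct Metrics {
    double frac_met;                 // fraction of deadlines met
    double frac_met_when_predicted;  // fraction met among "possible" claims
    double mean_possible_hosts;      // average number of possible hosts
};

Metrics Summarize(const std::vector<Outcome> &runs) {
    assert(!runs.empty());
    std::size_t met = 0, predicted = 0, met_and_predicted = 0;
    long hosts = 0;
    for (const Outcome &r : runs) {
        if (r.deadline_met) ++met;
        if (r.predicted_possible) {
            ++predicted;
            if (r.deadline_met) ++met_and_predicted;
        }
        hosts += r.possible_hosts;
    }
    const double n = static_cast<double>(runs.size());
    Metrics m;
    m.frac_met = met / n;
    // Reported as 0 when RTSA never claimed the deadline was possible.
    m.frac_met_when_predicted =
        predicted ? static_cast<double>(met_and_predicted) / predicted : 0.0;
    m.mean_possible_hosts = hosts / n;
    return m;
}
```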

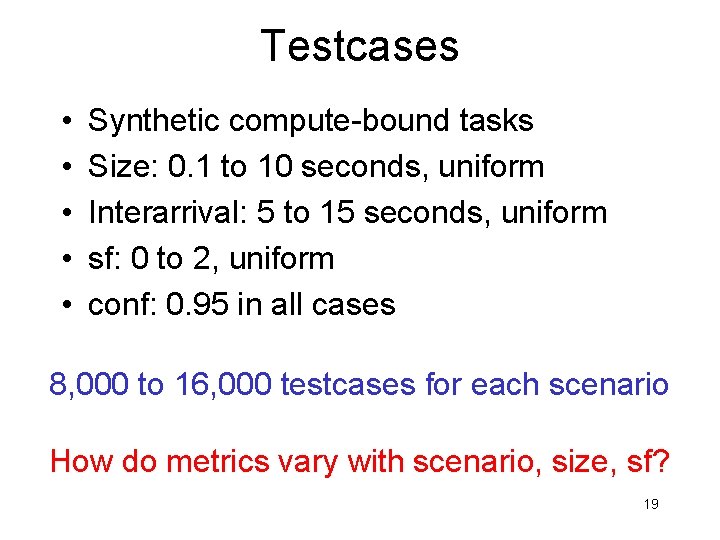

Testcases
• Synthetic compute-bound tasks
• Size: 0.1 to 10 seconds, uniform
• Interarrival: 5 to 15 seconds, uniform
• sf: 0 to 2, uniform
• conf: 0.95 in all cases
• 8,000 to 16,000 testcases for each scenario
How do metrics vary with scenario, size, sf?
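A testcase stream with these distributions could be generated along the following lines. The `Testcase` struct, the function name, and the use of `std::mt19937` are assumptions; the original harness is not described beyond the parameters listed on the slide.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical description of one synthetic compute-bound task.
struct Testcase {
    double arrival;  // submission time in seconds since the start of the run
    double tnom;     // task size in CPU-seconds
    double sf;       // slack factor
    double conf;     // required probability of meeting the deadline
};

std::vector<Testcase> GenerateTestcases(std::size_t n, unsigned seed) {
    std::mt19937 rng(seed);
    std::uniform_real_distribution<double> size(0.1, 10.0);  // seconds
    std::uniform_real_distribution<double> gap(5.0, 15.0);   // interarrival
    std::uniform_real_distribution<double> slack(0.0, 2.0);  // sf
    std::vector<Testcase> out;
    out.reserve(n);
    double t = 0.0;
    for (std::size_t i = 0; i < n; ++i) {
        t += gap(rng);  // next arrival follows the previous one
        out.push_back({t, size(rng), slack(rng), 0.95});
    }
    return out;
}
```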

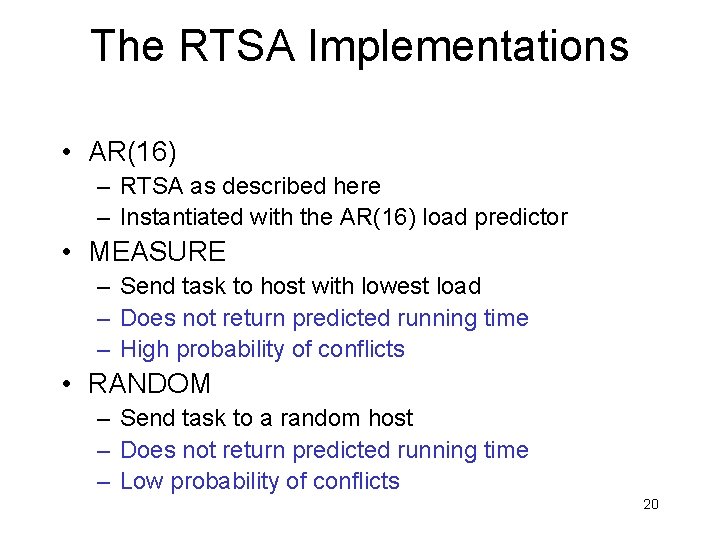

The RTSA Implementations
• AR(16)
– RTSA as described here
– Instantiated with the AR(16) load predictor
• MEASURE
– Send task to host with lowest load
– Does not return predicted running time
– High probability of conflicts
• RANDOM
– Send task to a random host
– Does not return predicted running time
– Low probability of conflicts
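The two baseline strategies are simple enough to sketch directly. The function names and the representation of current load as a vector indexed by host are assumptions for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <iterator>
#include <random>
#include <vector>

// MEASURE: send the task to the host with the lowest current measured load.
std::size_t MeasurePick(const std::vector<double> &load) {
    assert(!load.empty());
    auto it = std::min_element(load.begin(), load.end());
    return static_cast<std::size_t>(std::distance(load.begin(), it));
}

// RANDOM: send the task to a host chosen uniformly at random.
std::size_t RandomPick(std::size_t num_hosts, std::mt19937 &rng) {
    assert(num_hosts > 0);
    std::uniform_int_distribution<std::size_t> pick(0, num_hosts - 1);
    return pick(rng);
}
```

MEASURE is deterministic given the same load readings, so independent advisors tend to choose the same host; RANDOM spreads choices out but ignores load entirely.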

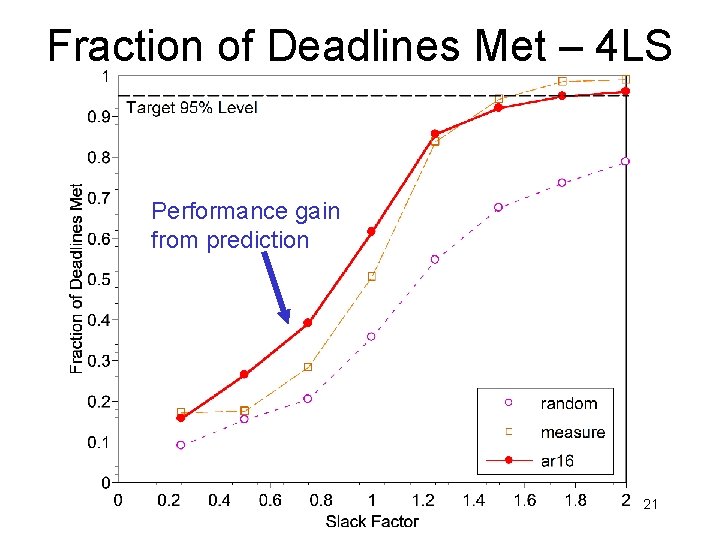

Fraction of Deadlines Met – 4LS
Performance gain from prediction

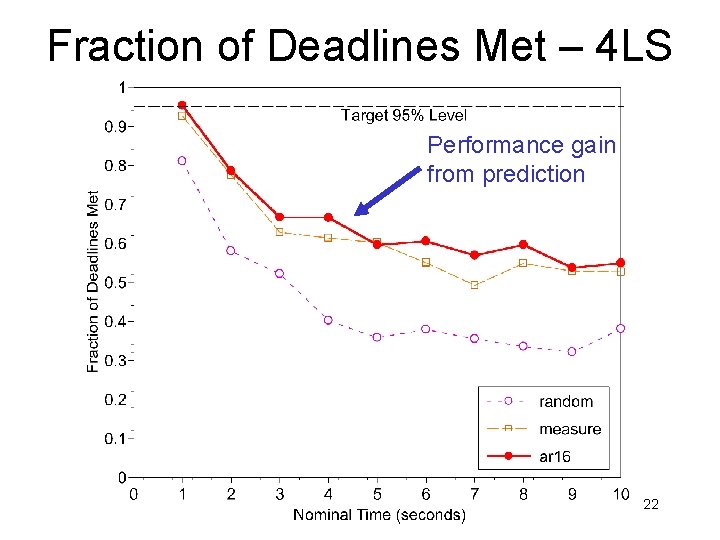


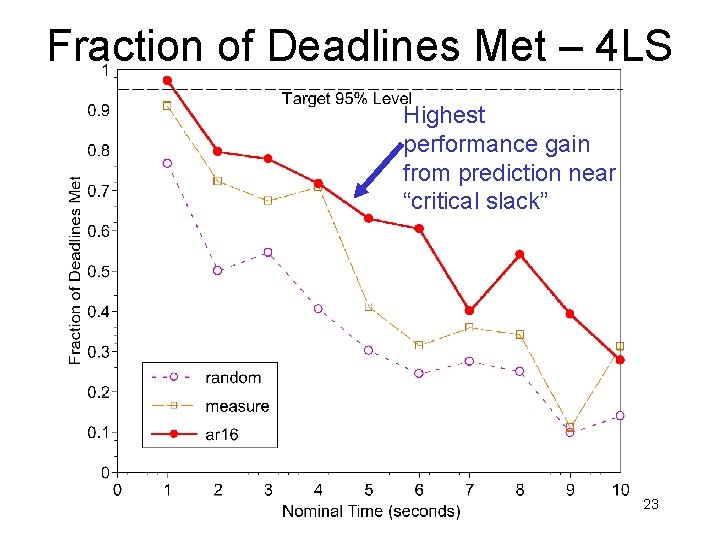

Fraction of Deadlines Met – 4LS
Highest performance gain from prediction near “critical slack”

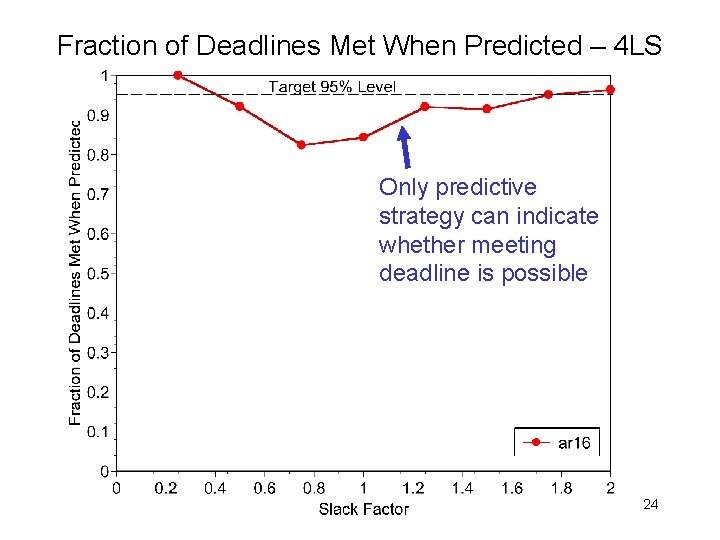

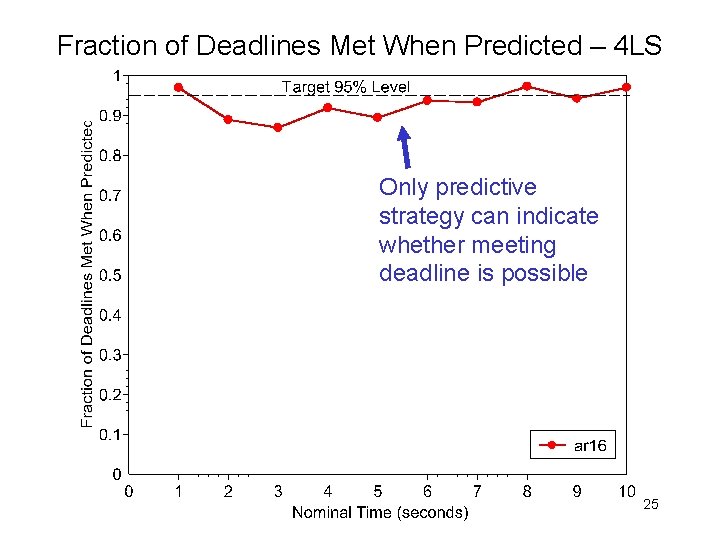

Fraction of Deadlines Met When Predicted – 4LS
Only predictive strategy can indicate whether meeting deadline is possible


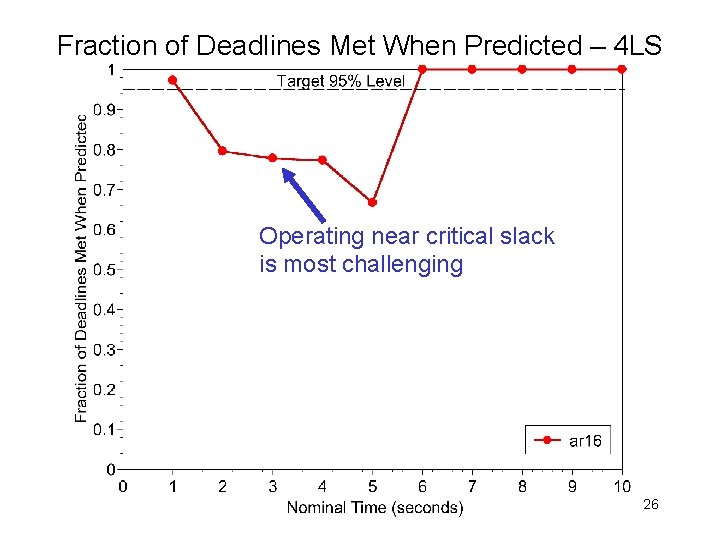

Fraction of Deadlines Met When Predicted – 4LS
Operating near critical slack is most challenging

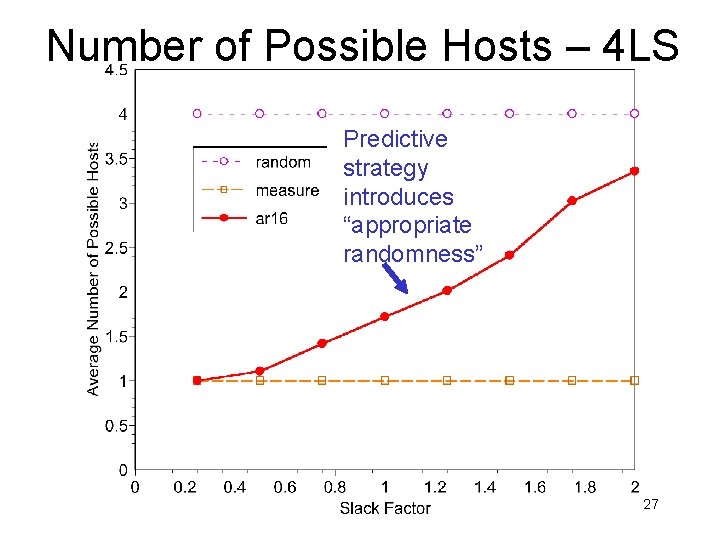

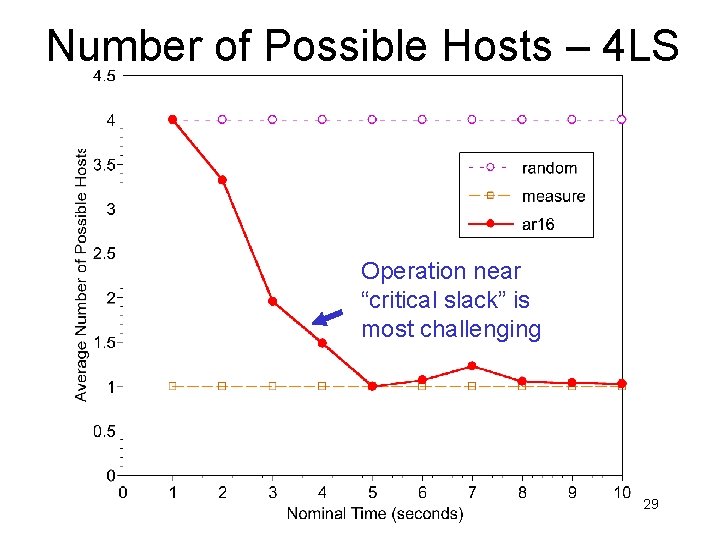

Number of Possible Hosts – 4LS
Predictive strategy introduces “appropriate randomness”

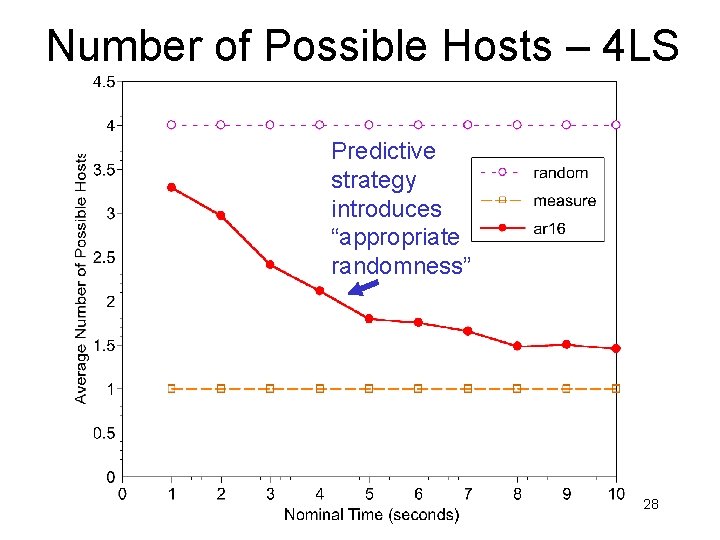


Number of Possible Hosts – 4LS
Operation near “critical slack” is most challenging

Conclusions and Future Work
• Introduced RTSA concept
• Described prediction-based implementation
• Demonstrated feasibility
• Evaluated performance
• Current and future work
– Incorporate communication, memory, disk
– Improved predictive models

For More Information
• Peter Dinda
– http://www.cs.northwestern.edu/~pdinda
• RPS
– http://www.cs.northwestern.edu/~RPS
• Prescience Lab
– http://www.cs.northwestern.edu/~plab
