A New GPCA Algorithm for Clustering Subspaces by Fitting, Differentiating and Dividing Polynomials

- René Vidal, Biomedical Engineering, Johns Hopkins University
- Yi Ma, Elect. & Comp. Eng., University of Illinois
- Jacopo Piazzi, Mechanical Engineering, Johns Hopkins University

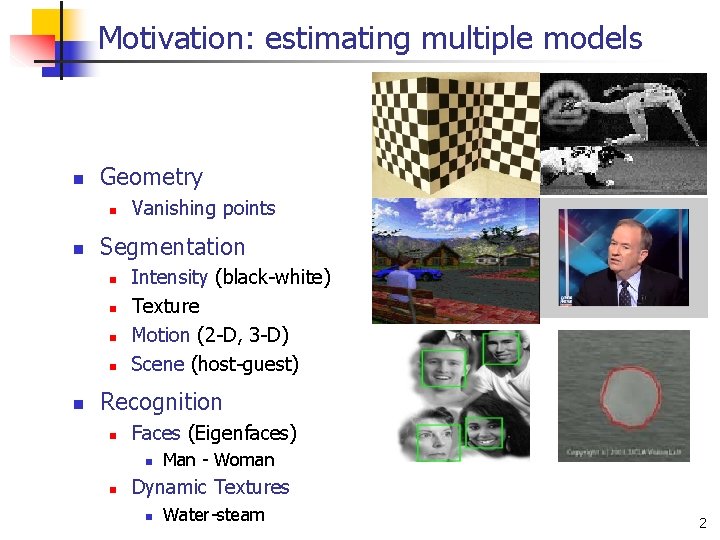

Motivation: estimating multiple models

- Geometry
  - Vanishing points
- Segmentation
  - Intensity (black-white)
  - Texture
  - Motion (2-D, 3-D)
  - Scene (host-guest)
- Recognition
  - Faces (Eigenfaces): man - woman
  - Dynamic textures: water-steam

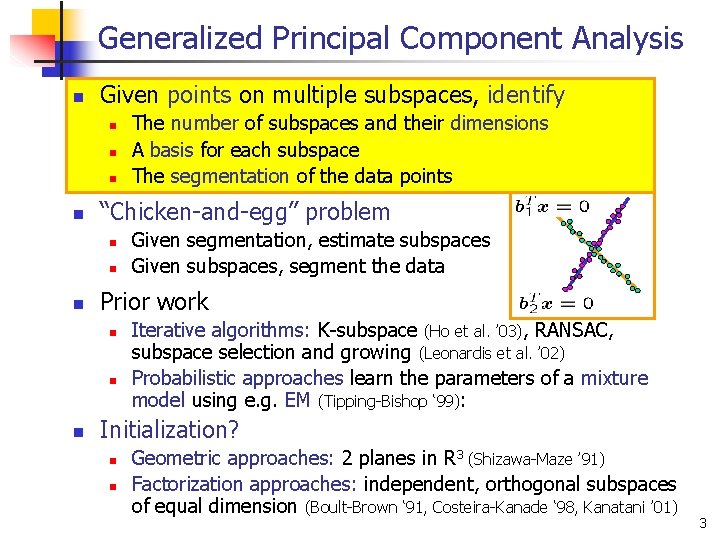

Generalized Principal Component Analysis

- Given points on multiple subspaces, identify
  - The number of subspaces and their dimensions
  - A basis for each subspace
  - The segmentation of the data points
- "Chicken-and-egg" problem
  - Given segmentation, estimate subspaces
  - Given subspaces, segment the data
- Prior work
  - Iterative algorithms: K-subspace (Ho et al. '03), RANSAC, subspace selection and growing (Leonardis et al. '02)
  - Probabilistic approaches learn the parameters of a mixture model using e.g. EM (Tipping-Bishop '99): Initialization?
  - Geometric approaches: 2 planes in R^3 (Shizawa-Maze '91)
  - Factorization approaches: independent, orthogonal subspaces of equal dimension (Boult-Brown '91, Costeira-Kanade '98, Kanatani '01)

Our approach to segmentation (GPCA)

- We consider the most general case
  - Subspaces of unknown and possibly different dimensions
  - Subspaces may intersect arbitrarily (not only at the origin)
  - Subspaces do not need to be orthogonal
- Multiple subspaces can be represented with multiple homogeneous polynomials
  - Number of subspaces = degree of a polynomial
  - Subspace basis = derivatives of a polynomial
- Subspace clustering is algebraically equivalent to
  - Polynomial fitting
  - Polynomial differentiation
  - Polynomial division

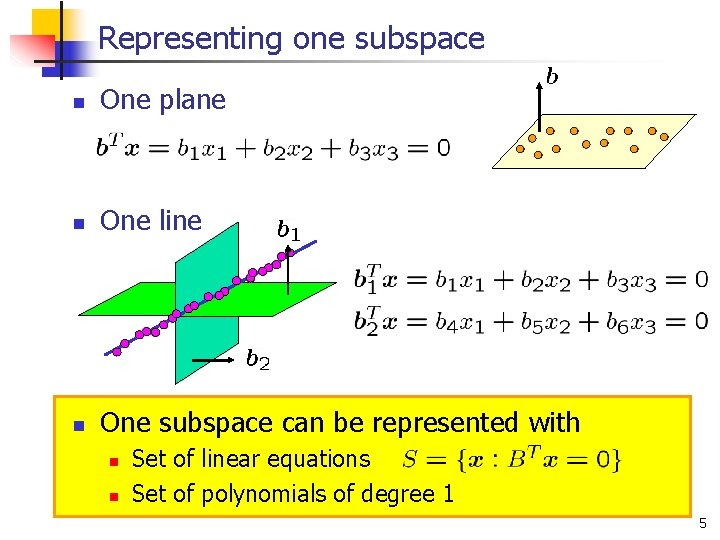

Representing one subspace

- One plane
- One line
- One subspace can be represented with
  - Set of linear equations
  - Set of polynomials of degree 1

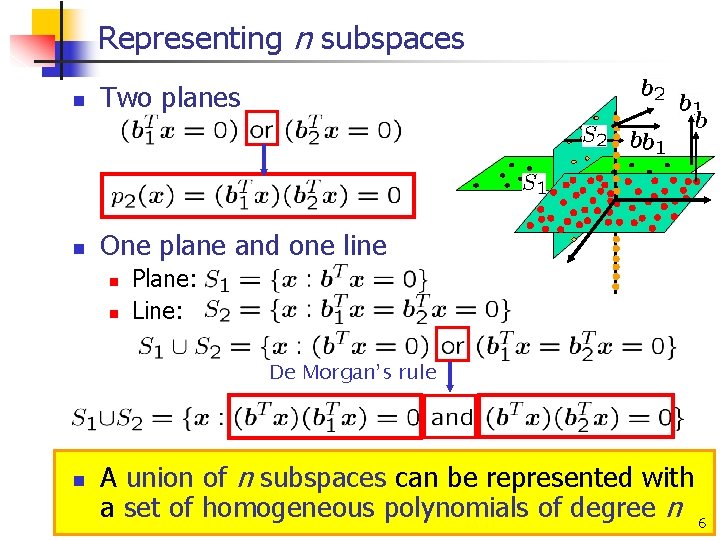

Representing n subspaces

- Two planes
- One plane and one line
  - The equations of the plane and of the line are combined using De Morgan's rule
- A union of n subspaces can be represented with a set of homogeneous polynomials of degree n
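As a small illustration of the statement above, the sketch below checks that products of linear forms vanish on a union of subspaces. The specific plane `{x1 = 0}`, line `{x2 = x3 = 0}`, normals and test points are assumptions chosen for the example; the equations in the slide image are not recoverable from the transcript.

```python
def plane_and_line_polynomials(x):
    """Two degree-2 polynomials that vanish on {x1 = 0} union {x2 = x3 = 0}.

    (x1 = 0) or (x2 = 0 and x3 = 0) is equivalent to
    (x1*x2 = 0) and (x1*x3 = 0).
    """
    x1, x2, x3 = x
    return (x1 * x2, x1 * x3)


def two_planes_polynomial(x, b1, b2):
    """Single degree-2 polynomial (b1 . x)(b2 . x) that vanishes on two planes."""
    dot1 = sum(a * c for a, c in zip(b1, x))
    dot2 = sum(a * c for a, c in zip(b2, x))
    return dot1 * dot2


# Hypothetical test points for the plane-and-line case.
on_plane = (0.0, 2.0, -1.0)   # satisfies x1 = 0
on_line = (3.0, 0.0, 0.0)     # satisfies x2 = x3 = 0
off_both = (1.0, 1.0, 0.0)    # on neither subspace

# Hypothetical normals for the two-planes case: planes x3 = 0 and x1 = 0.
b1 = (0.0, 0.0, 1.0)
b2 = (1.0, 0.0, 0.0)
```

Both polynomials have degree 2, matching the number of subspaces in the union.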

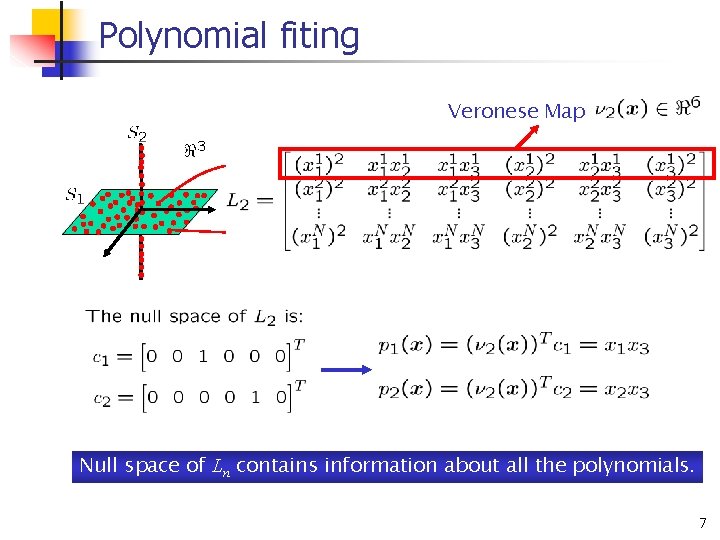

Polynomial fitting

- Veronese map
- Null space of L_n contains information about all the polynomials.

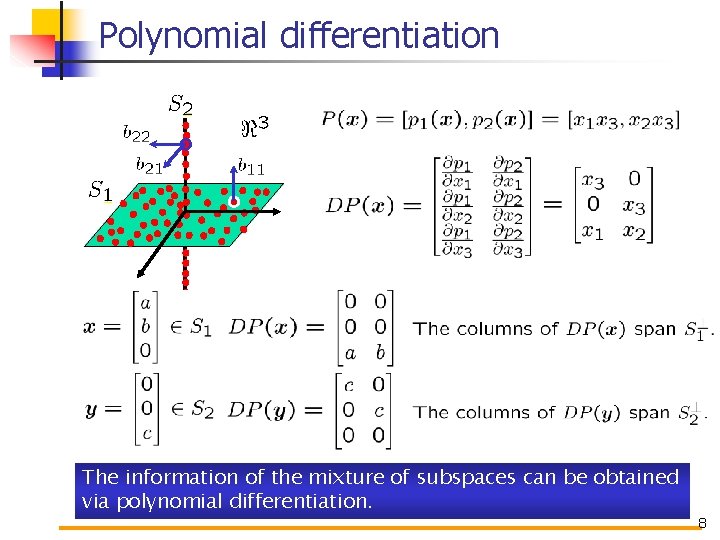

Polynomial differentiation

- The information of the mixture of subspaces can be obtained via polynomial differentiation.

Hybrid decoupling polynomial

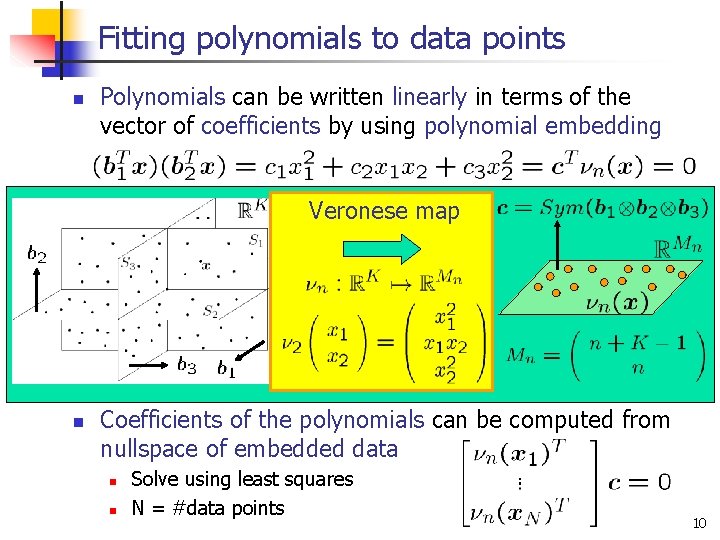

Fitting polynomials to data points

- Polynomials can be written linearly in terms of the vector of coefficients by using a polynomial embedding (the Veronese map)
- Coefficients of the polynomials can be computed from the null space of the embedded data
  - Solve using least squares
  - N = number of data points
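The fitting step can be sketched as follows, assuming NumPy, noise-free points on two hypothetical planes (`x3 = 0` and `x1 = 0`), and a monomial ordering taken from `itertools.combinations_with_replacement`. The function names are illustrative, not from the slides.

```python
import itertools
import numpy as np


def veronese(X, n):
    """Embed each row of X (N x K) into all monomials of degree n (N x M)."""
    K = X.shape[1]
    exponents = itertools.combinations_with_replacement(range(K), n)
    return np.column_stack([np.prod(X[:, list(idx)], axis=1) for idx in exponents])


def fit_polynomial(X, n):
    """Return the coefficient vector spanning the (least-squares) null space of L."""
    L = veronese(X, n)
    _, _, Vt = np.linalg.svd(L)
    # The right singular vector of the smallest singular value minimizes ||L c||.
    return Vt[-1], L


# Toy data: 20 points on the plane x3 = 0 and 20 on the plane x1 = 0.
rng = np.random.default_rng(0)
plane_a = np.column_stack([rng.normal(size=20), rng.normal(size=20), np.zeros(20)])
plane_b = np.column_stack([np.zeros(20), rng.normal(size=20), rng.normal(size=20)])
X = np.vstack([plane_a, plane_b])

c, L = fit_polynomial(X, n=2)
# Monomial order for K = 3, n = 2: x1^2, x1x2, x1x3, x2^2, x2x3, x3^2,
# so the fitted polynomial should be (up to sign) x1 * x3, i.e. index 2.
```

With noisy data the smallest singular value is no longer zero, and the same singular vector gives the least-squares estimate of the coefficients.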

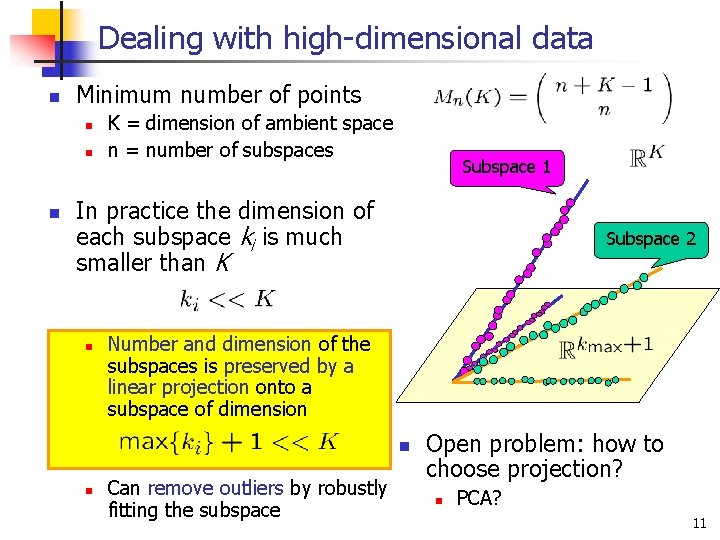

Dealing with high-dimensional data

- Minimum number of points
  - K = dimension of ambient space
  - n = number of subspaces
- In practice the dimension of each subspace k_i is much smaller than K
  - Number and dimension of the subspaces are preserved by a linear projection onto a subspace of lower dimension
  - Can remove outliers by robustly fitting the subspace
- Open problem: how to choose projection?
  - PCA?
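To see why the ambient dimension matters, the sketch below counts the monomials of degree n in K variables, which is the number of columns of the embedded data matrix. It assumes that roughly one fewer point than monomials is needed to pin down a single polynomial; the exact bound in the slide image is not recoverable from the transcript.

```python
from math import comb


def veronese_dimension(n, K):
    """Number of monomials of degree n in K variables."""
    return comb(n + K - 1, n)


def min_points_for_one_polynomial(n, K):
    """Points needed for a one-dimensional null space (assumed bound: M - 1)."""
    return veronese_dimension(n, K) - 1


# Raw 30 x 40 pixel images versus data projected onto 3 dimensions, n = 3 subspaces.
raw = veronese_dimension(3, 30 * 40)
projected = veronese_dimension(3, 3)
```

The count grows polynomially in K, which is the motivation for projecting the data before fitting.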

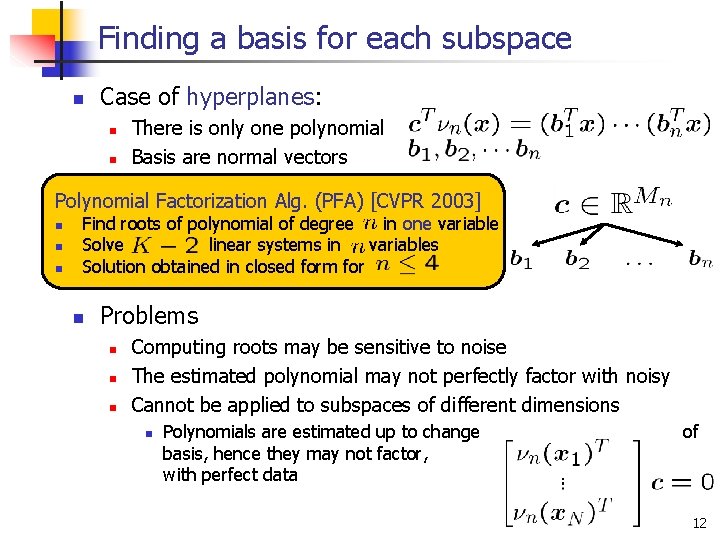

Finding a basis for each subspace

- Case of hyperplanes:
  - There is only one polynomial
  - Bases are the normal vectors
- Polynomial Factorization Alg. (PFA) [CVPR 2003]
  - Find roots of a polynomial of degree n in one variable
  - Solve linear systems
  - Solution obtained in closed form for small n
- Problems
  - Computing roots may be sensitive to noise
  - The estimated polynomial may not perfectly factor with noisy data
  - Cannot be applied to subspaces of different dimensions
    - Polynomials are estimated up to a change of basis, hence they may not factor, even with perfect data

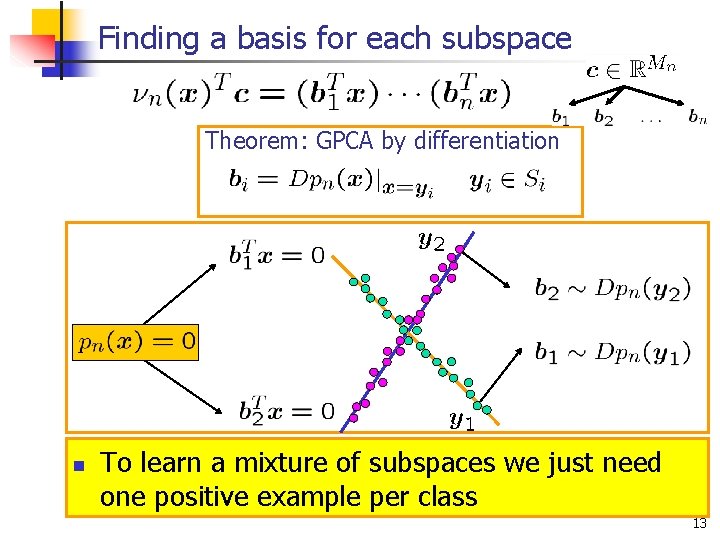

Finding a basis for each subspace

Theorem: GPCA by differentiation

- To learn a mixture of subspaces we just need one positive example per class
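A minimal sketch of the idea for two planes: the gradient of the vanishing polynomial, evaluated at one point on a plane, is parallel to that plane's normal. The normals `b1`, `b2`, the points `y1`, `y2`, and the use of central differences are assumptions made for the example.

```python
import math


def numerical_gradient(p, x, h=1e-6):
    """Central-difference gradient of the scalar function p at x."""
    grad = []
    for i in range(len(x)):
        forward, backward = list(x), list(x)
        forward[i] += h
        backward[i] -= h
        grad.append((p(forward) - p(backward)) / (2 * h))
    return grad


def unit(v):
    """Scale v to unit length."""
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def dot(u, v):
    return sum(a * c for a, c in zip(u, v))


# Hypothetical unit normals of two planes through the origin.
b1 = [1 / 3, 2 / 3, 2 / 3]
b2 = [0.0, 0.0, 1.0]


def p(x):
    """Degree-2 polynomial vanishing on the union of the two planes."""
    return dot(b1, x) * dot(b2, x)


y1 = [2.0, 1.0, -2.0]  # lies on the first plane only (b1 . y1 = 0)
y2 = [1.0, 1.0, 0.0]   # lies on the second plane only (b2 . y2 = 0)

n1 = unit(numerical_gradient(p, y1))  # parallel to b1, up to sign
n2 = unit(numerical_gradient(p, y2))  # parallel to b2, up to sign
```

A point on the intersection of both planes would give a zero gradient, so the chosen points must lie on exactly one subspace.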

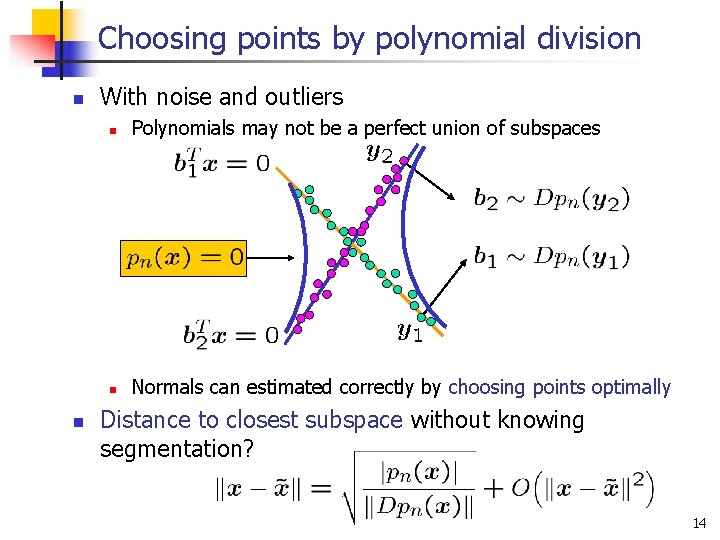

Choosing points by polynomial division

- With noise and outliers
  - Polynomials may not be a perfect union of subspaces
  - Normals can be estimated correctly by choosing points optimally
- Distance to closest subspace without knowing segmentation?
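One common way to approximate the distance from a point to the zero set of a polynomial, without knowing which subspace the point belongs to, is the first-order ratio |p(x)| / ||∇p(x)||. The sketch below assumes that this is the kind of distance meant here (the slide formula is not recoverable) and compares it with the true distance for two hypothetical planes, `x3 = 0` and `x1 = 0`.

```python
import math


def first_order_distance(p, grad_p, x):
    """First-order approximation of the distance from x to the zero set of p."""
    g = grad_p(x)
    return abs(p(x)) / math.sqrt(sum(a * a for a in g))


def p(x):
    """Polynomial vanishing on the planes x3 = 0 and x1 = 0."""
    return x[2] * x[0]


def grad_p(x):
    """Analytic gradient of p."""
    return (x[2], 0.0, x[0])


x = (3.0, 1.0, 0.01)                         # close to the plane x3 = 0
approx = first_order_distance(p, grad_p, x)
true_distance = min(abs(x[2]), abs(x[0]))    # distance to the closest plane
```

The approximation needs only the polynomial and its gradient, so it can rank points by closeness to some subspace before any segmentation is available.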

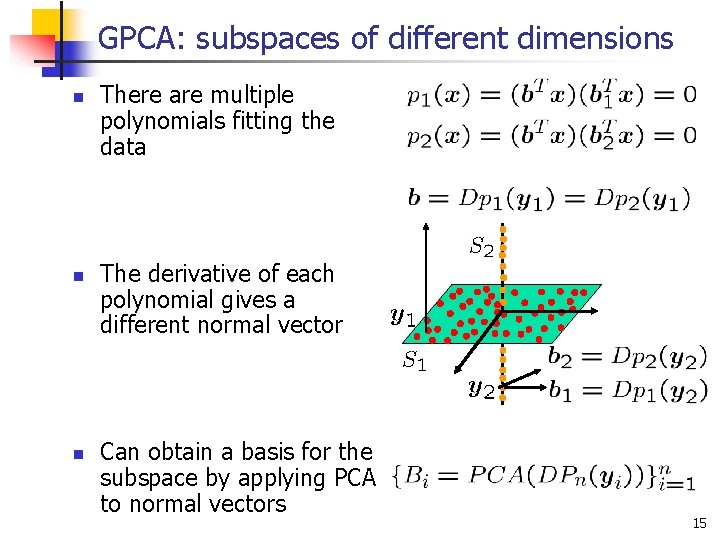

GPCA: subspaces of different dimensions

- There are multiple polynomials fitting the data
- The derivative of each polynomial gives a different normal vector
- Can obtain a basis for the subspace by applying PCA to normal vectors
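The three bullets above can be sketched for a hypothetical union of the plane `{x1 = 0}` and the line `{x2 = x3 = 0}`, which is the zero set of `x1*x2` and `x1*x3`. The gradients at one point are stacked and decomposed with an SVD, used here as the PCA step; the rank threshold `tol` is an assumption.

```python
import numpy as np


def gradients_at(x):
    """Gradients of p1 = x1*x2 and p2 = x1*x3 at x, one per row."""
    x1, x2, x3 = x
    return np.array([[x2, x1, 0.0],
                     [x3, 0.0, x1]])


def subspace_from_gradients(grads, tol=1e-8):
    """Split R^K into the span of the normals and its orthogonal complement."""
    _, s, Vt = np.linalg.svd(grads)
    rank = int(np.sum(s > tol * max(s.max(), 1.0)))
    normal_basis = Vt[:rank]      # principal directions of the normal vectors
    subspace_basis = Vt[rank:]    # basis of the subspace containing the point
    return normal_basis, subspace_basis


point_on_line = np.array([2.0, 0.0, 0.0])
point_on_plane = np.array([0.0, 1.0, -3.0])

_, line_basis = subspace_from_gradients(gradients_at(point_on_line))
_, plane_basis = subspace_from_gradients(gradients_at(point_on_plane))
```

The number of rows in each returned subspace basis is the estimated dimension of that subspace: 1 for the line and 2 for the plane.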

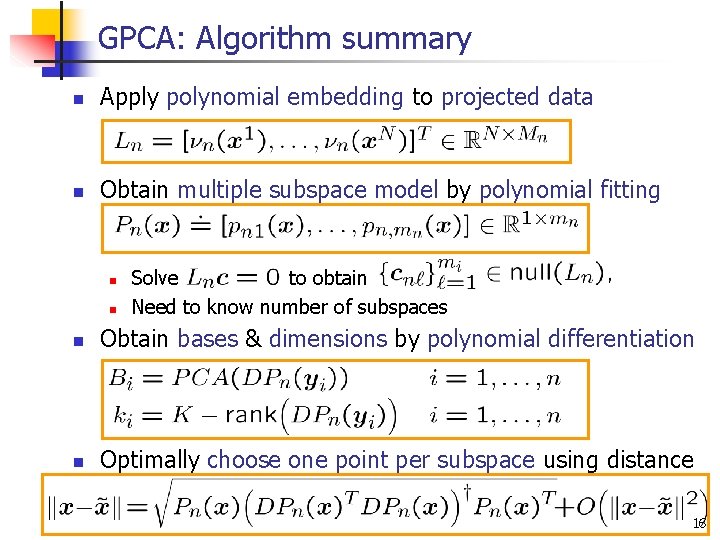

GPCA: Algorithm summary

- Apply polynomial embedding to projected data
- Obtain multiple subspace model by polynomial fitting
  - Solve for the polynomial coefficients (null space of the embedded data)
  - Need to know number of subspaces
- Obtain bases & dimensions by polynomial differentiation
- Optimally choose one point per subspace using distance
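The summary above can be sketched end to end for the simplest case of n hyperplanes through the origin. This is a toy version under stated assumptions, not the authors' implementation: gradients are taken by central differences, the point-selection score divides a first-order distance by the product of distances to the normals already found, and `delta` is a regularizer set near the noise level. The data and all function names are hypothetical.

```python
import itertools
import numpy as np


def veronese(X, n):
    """All degree-n monomials of each row of X."""
    idx = itertools.combinations_with_replacement(range(X.shape[1]), n)
    return np.column_stack([np.prod(X[:, list(i)], axis=1) for i in idx])


def gpca_hyperplanes(X, n, delta=1e-3, h=1e-5):
    """Toy GPCA for n hyperplanes through the origin; n must be given."""
    # Step 1: polynomial fitting (least-squares null space of the embedded data).
    c = np.linalg.svd(veronese(X, n))[2][-1]
    p = lambda Z: veronese(Z, n) @ c
    # Step 2: polynomial differentiation at every data point.
    G = np.column_stack([(p(X + h * e) - p(X - h * e)) / (2 * h)
                         for e in np.eye(X.shape[1])])
    grad_norm = np.maximum(np.linalg.norm(G, axis=1), 1e-12)
    dist = np.abs(p(X)) / grad_norm
    # Step 3: choose one point per subspace, dividing by distances to found normals.
    normals, found = [], np.ones(len(X))
    for _ in range(n):
        i = int(np.argmin((dist + delta) / (found + delta)))
        b = G[i] / grad_norm[i]
        normals.append(b)
        found = found * np.abs(X @ b)
    B = np.array(normals)
    # Step 4: assign each point to the hyperplane with the closest normal fit.
    labels = np.argmin(np.abs(X @ B.T), axis=1)
    return B, labels


# Toy data: noisy points near the planes x3 = 0 and x1 = 0.
rng = np.random.default_rng(0)
m = 40
spread = lambda: rng.uniform(1.0, 2.0, m) * rng.choice([-1.0, 1.0], m)
noise = lambda: 1e-3 * rng.normal(size=m)
X = np.vstack([np.column_stack([spread(), spread(), noise()]),
               np.column_stack([noise(), spread(), spread()])])

B, labels = gpca_hyperplanes(X, n=2)
```

Subspaces of different dimensions would need several polynomials and a PCA step on the gradients, which this sketch leaves out.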

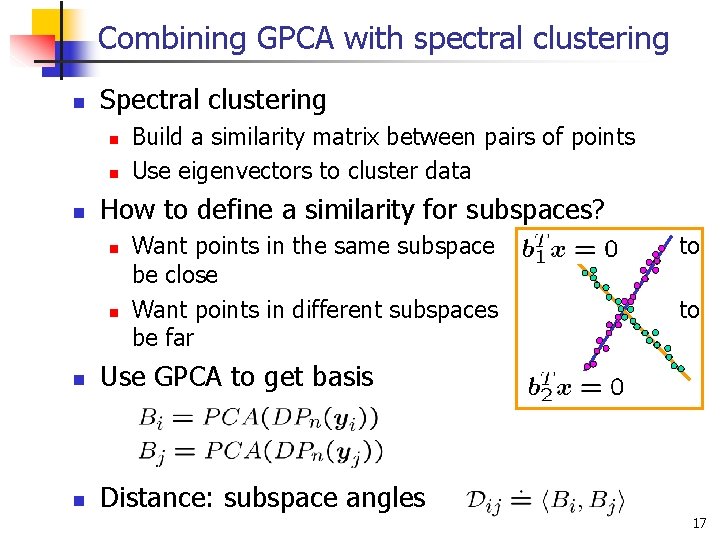

Combining GPCA with spectral clustering

- Spectral clustering
  - Build a similarity matrix between pairs of points
  - Use eigenvectors to cluster data
- How to define a similarity for subspaces?
  - Want points in the same subspace to be close
  - Want points in different subspaces to be far
- Use GPCA to get basis
- Distance: subspace angles
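A minimal sketch of this combination, assuming each point already comes with an orthonormal basis estimate (here simulated by perturbing two known planes). The similarity `exp(-sum(sin^2 theta))` over principal angles and the two-way split by the second Laplacian eigenvector are assumptions chosen for the example; the slide's exact distance formula is not recoverable.

```python
import numpy as np


def principal_angles(Q1, Q2):
    """Principal angles between the column spans of two orthonormal bases."""
    s = np.linalg.svd(Q1.T @ Q2, compute_uv=False)
    return np.arccos(np.clip(s, 0.0, 1.0))


def similarity_matrix(bases):
    """Pairwise similarity: close to 1 for equal subspaces, smaller otherwise."""
    N = len(bases)
    W = np.zeros((N, N))
    for i in range(N):
        for j in range(N):
            theta = principal_angles(bases[i], bases[j])
            W[i, j] = np.exp(-np.sum(np.sin(theta) ** 2))
    return W


def two_way_spectral_split(W):
    """Split into two groups using the sign of the second Laplacian eigenvector."""
    laplacian = np.diag(W.sum(axis=1)) - W
    _, vecs = np.linalg.eigh(laplacian)   # eigenvalues in ascending order
    return (vecs[:, 1] > 0).astype(int)


rng = np.random.default_rng(0)


def noisy_basis(Q0):
    """Perturb a basis slightly and re-orthonormalize it."""
    Q, _ = np.linalg.qr(Q0 + 0.01 * rng.normal(size=Q0.shape))
    return Q


plane_xy = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]])
plane_yz = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
bases = [noisy_basis(plane_xy) for _ in range(5)] + \
        [noisy_basis(plane_yz) for _ in range(5)]

W = similarity_matrix(bases)
labels = two_way_spectral_split(W)
```

For more than two groups, the usual extension is to cluster the rows of several leading eigenvectors, for example with k-means.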

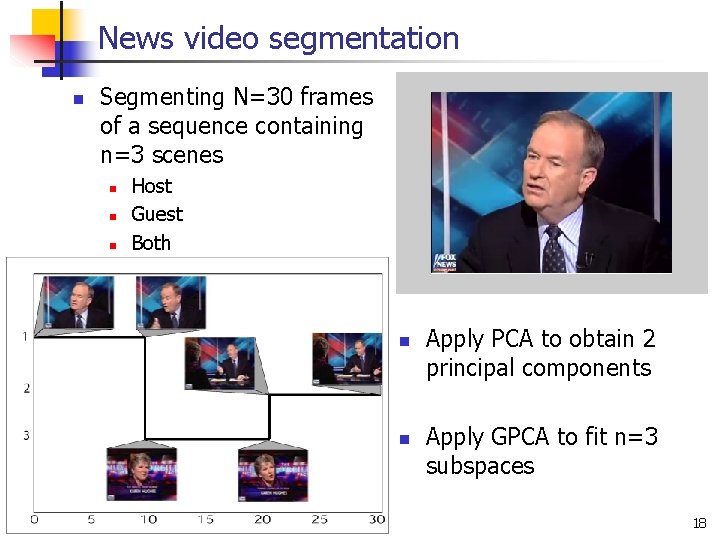

News video segmentation

- Segmenting N=30 frames of a sequence containing n=3 scenes
  - Host
  - Guest
  - Both
- Apply PCA to obtain 2 principal components
- Apply GPCA to fit n=3 subspaces

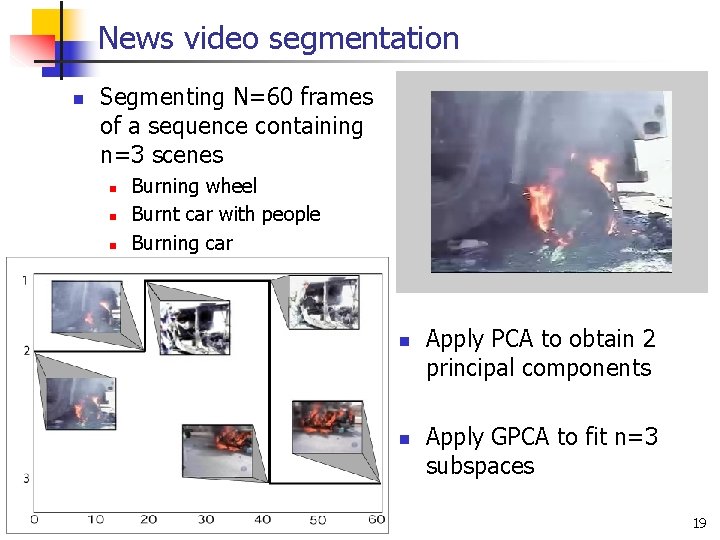

News video segmentation

- Segmenting N=60 frames of a sequence containing n=3 scenes
  - Burning wheel
  - Burnt car with people
  - Burning car
- Apply PCA to obtain 2 principal components
- Apply GPCA to fit n=3 subspaces

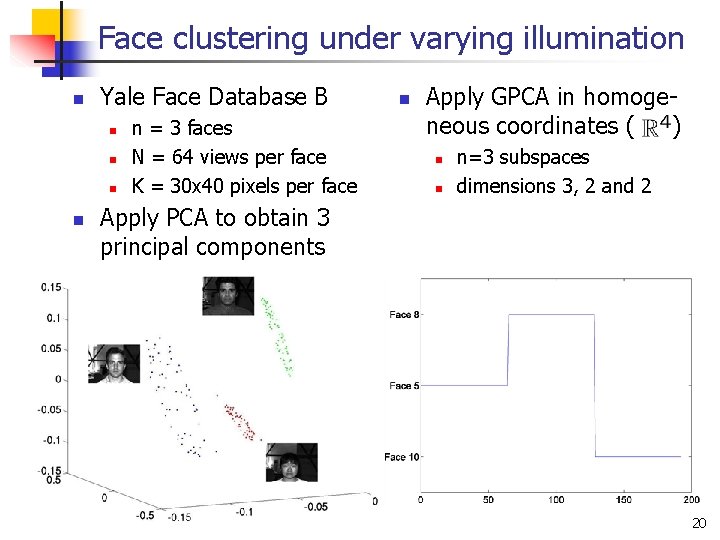

Face clustering under varying illumination

- Yale Face Database B
  - n = 3 faces
  - N = 64 views per face
  - K = 30 x 40 pixels per face
- Apply PCA to obtain 3 principal components
- Apply GPCA in homogeneous coordinates
  - n=3 subspaces
  - dimensions 3, 2 and 2
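The preprocessing described above (PCA to 3 components, then homogeneous coordinates) might look like the sketch below. The input is random stand-in data with the stated shape, not the Yale images, and the function names are hypothetical.

```python
import numpy as np


def pca_project(X, d):
    """Project the rows of X onto their first d principal components."""
    centered = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ Vt[:d].T


def to_homogeneous(Y):
    """Append a constant 1 so affine subspaces become linear ones."""
    return np.hstack([Y, np.ones((len(Y), 1))])


# Stand-in for 3 faces x 64 views, each a 30 x 40 image flattened to 1200 pixels.
rng = np.random.default_rng(0)
X = rng.normal(size=(3 * 64, 30 * 40))

Z = to_homogeneous(pca_project(X, 3))   # 192 points in 4 homogeneous coordinates
```

After this step the subspace-fitting stage works with 4 coordinates per image instead of 1200.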

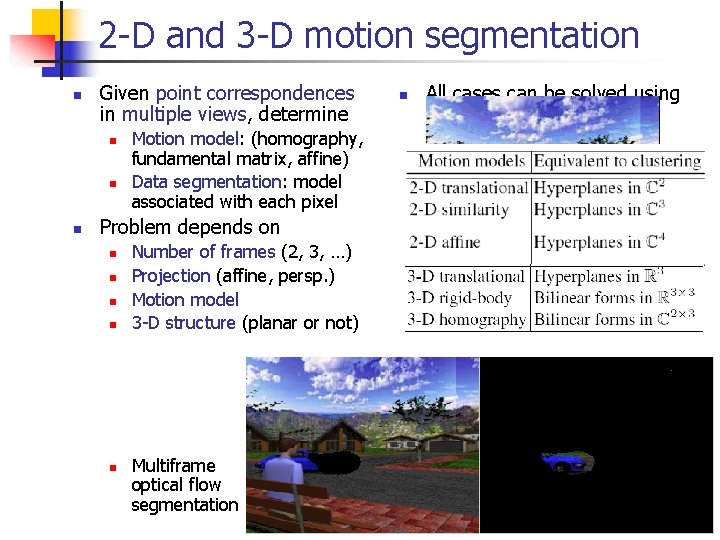

2-D and 3-D motion segmentation

- Given point correspondences in multiple views, determine
  - Motion model: (homography, fundamental matrix, affine)
  - Data segmentation: model associated with each pixel
- Problem depends on
  - Number of frames (2, 3, …)
  - Projection (affine, persp.)
  - Motion model
  - 3-D structure (planar or not)
- All cases can be solved using complex GPCA (ECCV'04)
- Multiframe optical flow segmentation

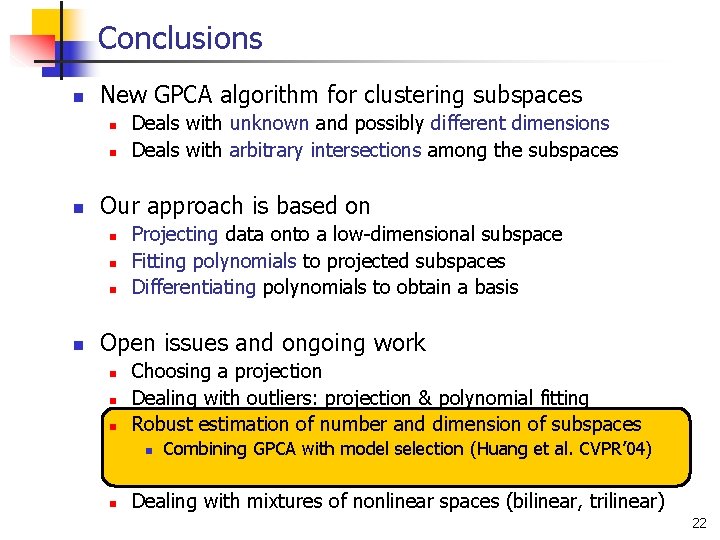

Conclusions

- New GPCA algorithm for clustering subspaces
  - Deals with unknown and possibly different dimensions
  - Deals with arbitrary intersections among the subspaces
- Our approach is based on
  - Projecting data onto a low-dimensional subspace
  - Fitting polynomials to projected subspaces
  - Differentiating polynomials to obtain a basis
- Open issues and ongoing work
  - Choosing a projection
  - Dealing with outliers: projection & polynomial fitting
  - Robust estimation of number and dimension of subspaces
  - Combining GPCA with model selection (Huang et al. CVPR'04)
  - Dealing with mixtures of nonlinear spaces (bilinear, trilinear)

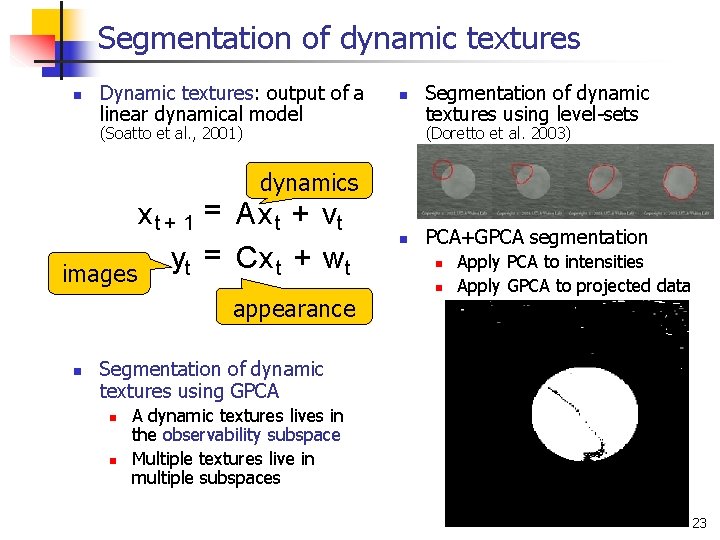

Segmentation of dynamic textures

- Dynamic textures: output of a linear dynamical model (Soatto et al., 2001)
  - $x_{t+1} = A x_t + v_t$ (dynamics)
  - $y_t = C x_t + w_t$ (images $y_t$, appearance $C$)
- Segmentation of dynamic textures using level-sets (Doretto et al. 2003)
- Segmentation of dynamic textures using GPCA
  - A dynamic texture lives in the observability subspace
  - Multiple textures live in multiple subspaces
- PCA+GPCA segmentation
  - Apply PCA to intensities
  - Apply GPCA to projected data
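The linear dynamical model above can be simulated in a few lines. The sketch takes a simplified view of the subspace claim: with no observation noise, every frame y_t lies in the column span of C, so the stacked frames have rank at most the state dimension. The matrices `A` and `C`, the noise levels and the sizes are assumptions chosen for the example.

```python
import numpy as np


def simulate_lds(A, C, x0, T, state_noise=0.0, obs_noise=0.0, seed=0):
    """Simulate x_{t+1} = A x_t + v_t, y_t = C x_t + w_t and return the frames y_t."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    frames = []
    for _ in range(T):
        frames.append(C @ x + obs_noise * rng.normal(size=C.shape[0]))
        x = A @ x + state_noise * rng.normal(size=x.shape[0])
    return np.array(frames)


# Hypothetical 2-state model observed through 10 "pixels".
theta = 0.3
A = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta), np.cos(theta)]])
C = np.random.default_rng(1).normal(size=(10, 2))

Y = simulate_lds(A, C, x0=[1.0, 0.0], T=50, state_noise=0.1)
rank = np.linalg.matrix_rank(Y)   # frames span a 2-dimensional subspace of R^10
```

Two textures with different C matrices would produce frames in two different subspaces, which is the setting subspace clustering addresses.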

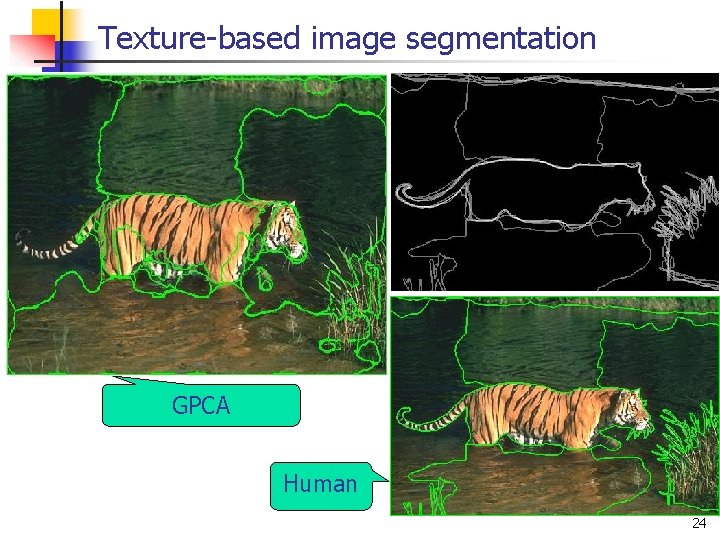

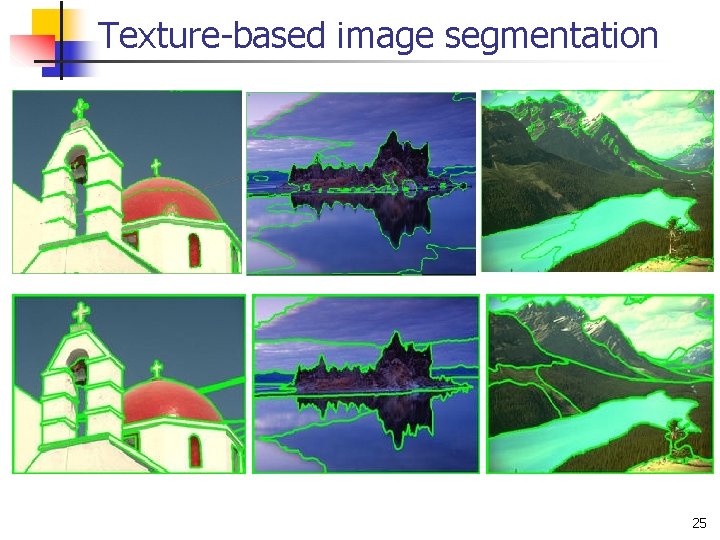

Texture-based image segmentation (panels: GPCA, Human)

Texture-based image segmentation

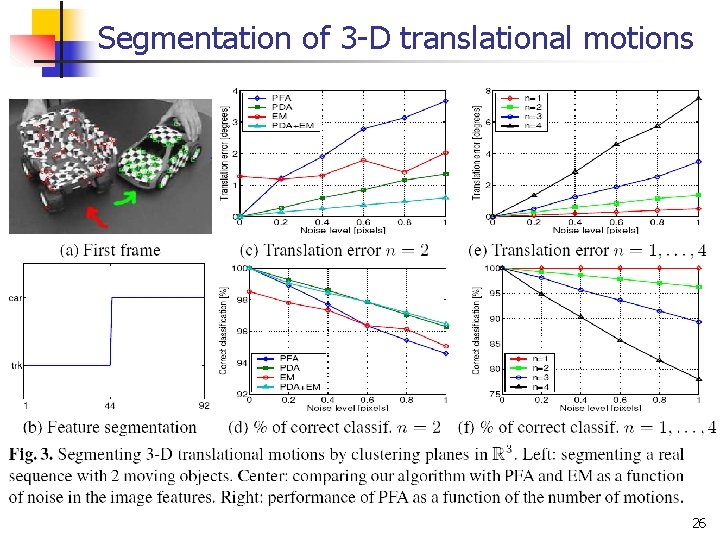

Segmentation of 3-D translational motions

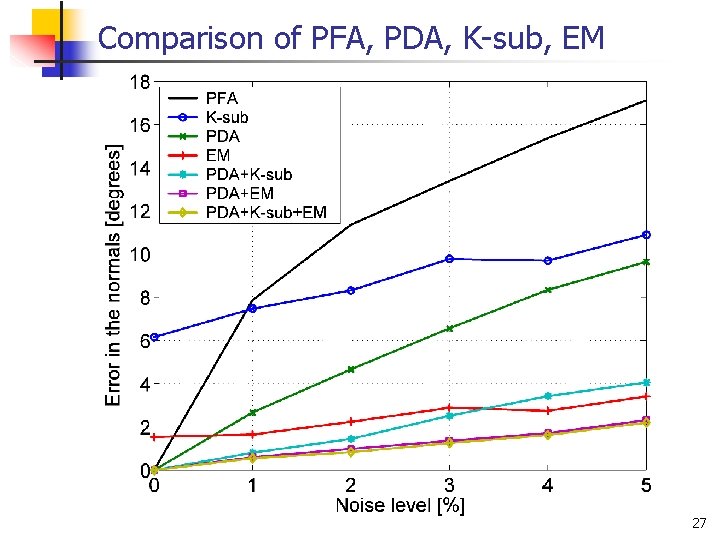

Comparison of PFA, PDA, K-sub, EM

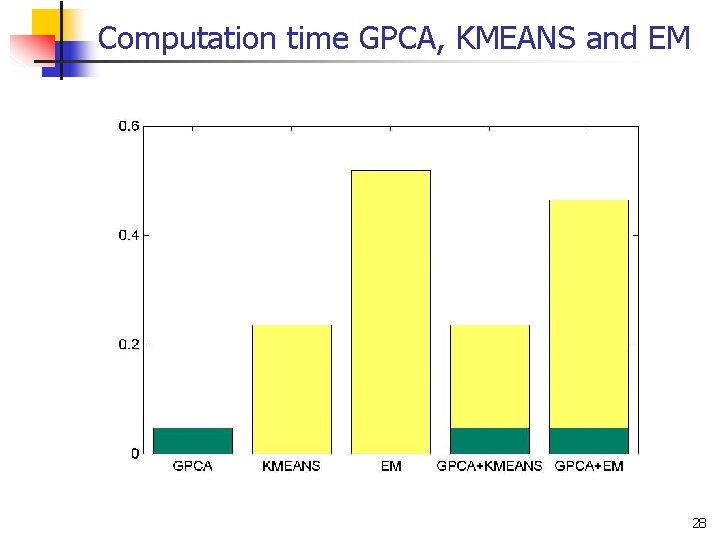

Computation time: GPCA, KMEANS and EM

- Slides: 28