
A Multi-Agent Learning Approach to Online Distributed Resource Allocation
Chongjie Zhang, Victor Lesser, Prashant Shenoy
Computer Science Department, University of Massachusetts Amherst

Focus
• This paper presents a multi-agent learning (MAL) approach to address resource sharing in cluster networks.
  – It exploits unknown task arrival patterns.
• Problem characteristics:
  – Realistic
  – Multiple agents
  – Partial observability
  – No global reward signal
  – Communication delay
  – Two interacting learning problems

Increasing Computing Demands
• “Software as a service” is becoming a popular IT business model.
• It is challenging to build large computing infrastructures to host such widespread online services.

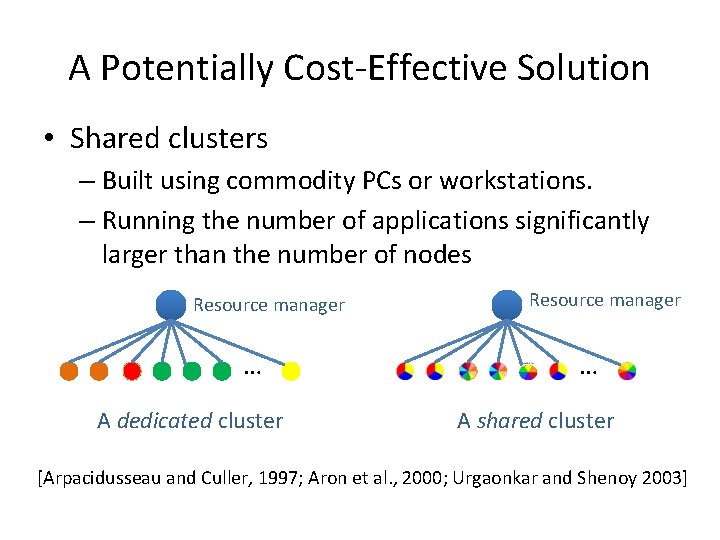

A Potentially Cost-Effective Solution
• Shared clusters
  – Built using commodity PCs or workstations
  – Run a number of applications significantly larger than the number of nodes
[Figure: a dedicated cluster vs. a shared cluster, each managed by a resource manager]
[Arpaci-Dusseau and Culler, 1997; Aron et al., 2000; Urgaonkar and Shenoy, 2003]

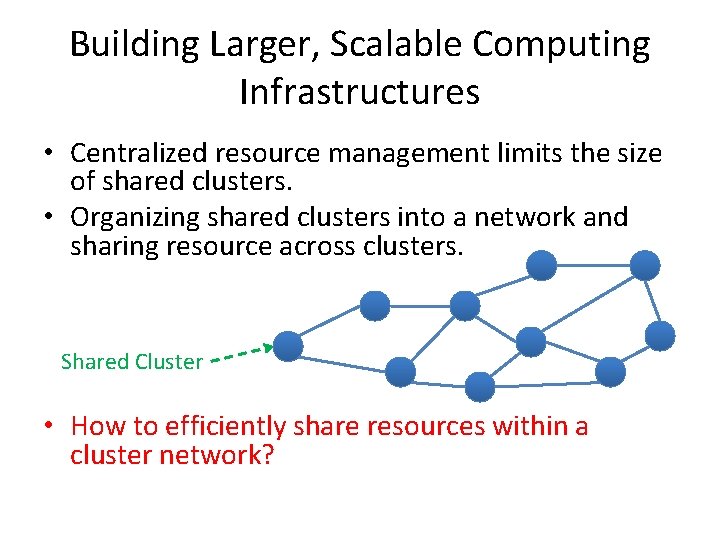

Building Larger, Scalable Computing Infrastructures
• Centralized resource management limits the size of shared clusters.
• Organize shared clusters into a network and share resources across clusters.
• How can resources be shared efficiently within a cluster network?

Outline
• Problem Formulation
• Fair Action Learning Algorithm
• Learning Distributed Resource Allocation
  – Local Allocation Decision
  – Task Routing Decision
• Experimental Results
• Summary

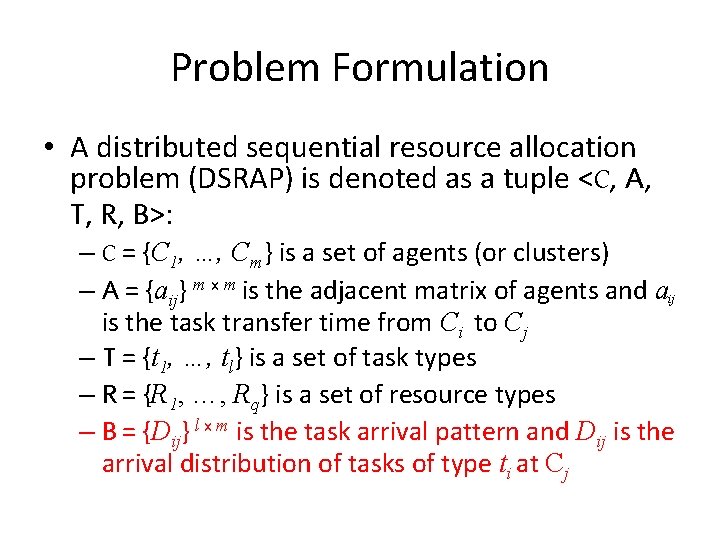

Problem Formulation
• A distributed sequential resource allocation problem (DSRAP) is denoted as a tuple $\langle C, A, T, R, B \rangle$:
  – $C = \{C_1, \ldots, C_m\}$ is a set of agents (or clusters)
  – $A = \{a_{ij}\}_{m \times m}$ is the adjacency matrix of agents, where $a_{ij}$ is the task transfer time from $C_i$ to $C_j$
  – $T = \{t_1, \ldots, t_l\}$ is a set of task types
  – $R = \{R_1, \ldots, R_q\}$ is a set of resource types
  – $B = \{D_{ij}\}_{l \times m}$ is the task arrival pattern, where $D_{ij}$ is the arrival distribution of tasks of type $t_i$ at $C_j$
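The tuple above maps naturally onto a small data structure. The sketch below is hypothetical: the field names, the use of `INF` in $A$ to mean "no direct link", and modeling each $D_{ij}$ as a zero-argument sampler are assumptions for illustration, not the authors' representation.

```python
from dataclasses import dataclass
from typing import Callable, List

INF = float("inf")


@dataclass
class DSRAP:
    """Hypothetical container for a DSRAP instance <C, A, T, R, B>."""
    agents: List[str]                          # C = {C_1, ..., C_m}
    transfer_time: List[List[float]]           # A = {a_ij}: transfer time from C_i to C_j
    task_types: List[str]                      # T = {t_1, ..., t_l}
    resource_types: List[str]                  # R = {R_1, ..., R_q}
    arrivals: List[List[Callable[[], float]]]  # B = {D_ij}: sampler for type t_i at C_j

    def __post_init__(self):
        m, l = len(self.agents), len(self.task_types)
        # A must be m x m and B must be l x m, as in the formulation.
        if len(self.transfer_time) != m or any(len(row) != m for row in self.transfer_time):
            raise ValueError("A must be an m x m matrix")
        if len(self.arrivals) != l or any(len(row) != m for row in self.arrivals):
            raise ValueError("B must be an l x m matrix")

    def neighbors(self, i):
        # Assumption: a_ij = INF means there is no direct link from C_i to C_j.
        return [j for j, a in enumerate(self.transfer_time[i]) if j != i and a != INF]


# Usage: a three-cluster line C1 - C2 - C3 with one task type and one resource type.
net = DSRAP(
    agents=["C1", "C2", "C3"],
    transfer_time=[[0.0, 1.0, INF], [1.0, 0.0, 2.0], [INF, 2.0, 0.0]],
    task_types=["ordinary"],
    resource_types=["CPU"],
    arrivals=[[lambda: 1.0, lambda: 1.0, lambda: 1.0]],
)
print(net.neighbors(1))  # [0, 2]
```

Keeping $A$ as a dense matrix mirrors the slide's $m \times m$ definition; a sparse neighbor list would scale better for large networks.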

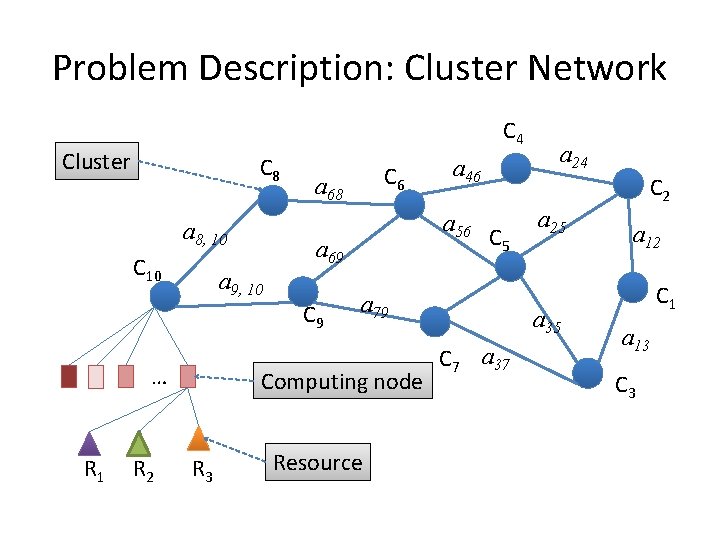

Problem Description: Cluster Network
[Figure: a network of clusters $C_1, \ldots, C_{10}$ whose links are labeled with task transfer times $a_{ij}$; each cluster consists of computing nodes that provide resources $R_1$, $R_2$, $R_3$]

Problem Description: Task
• A task is denoted as a tuple $\langle t, u, w, d_1, \ldots, d_q \rangle$, where
  – $t$ is the task type
  – $u$ is the utility rate of the task
  – $w$ is the maximum waiting time before being allocated
  – $d_i$ is the demand for resource $i = 1, \ldots, q$.

Problem Description: Task Type
• A task type characterizes a set of tasks whose feature components each follow a common distribution.
• A task type $t$ is denoted as a tuple $\langle D_t^s, D_t^u, D_t^w, D_t^{d_1}, \ldots, D_t^{d_q} \rangle$, where
  – $D_t^s$ is the task service time distribution
  – $D_t^u$ is the distribution of the utility rate
  – $D_t^w$ is the distribution of the maximum waiting time
  – $D_t^{d_i}$ is the distribution of the demand for resource $i = 1, \ldots, q$.
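As a minimal sketch of the task and task-type definitions, the Python below draws every feature of a task from its type's distributions. The class names, the `sample` method, and all distribution families and parameters are hypothetical; the slides do not specify them.

```python
import random
from dataclasses import dataclass
from typing import Callable, List

Sampler = Callable[[random.Random], float]


@dataclass
class Task:
    """A task <t, u, w, d_1, ..., d_q>."""
    t: str          # task type
    u: float        # utility rate
    w: float        # maximum waiting time before being allocated
    d: List[float]  # demand d_i for each resource i = 1, ..., q


@dataclass
class TaskType:
    """A task type <D_t^s, D_t^u, D_t^w, D_t^{d_1}, ..., D_t^{d_q}>."""
    name: str
    service: Sampler        # D_t^s; the task tuple has no service-time field,
                            # so a simulator would draw from it when the task runs
    utility: Sampler        # D_t^u
    wait: Sampler           # D_t^w
    demands: List[Sampler]  # D_t^{d_i}, one per resource type

    def sample(self, rng: random.Random) -> Task:
        # Every task of this type draws each feature from the type's distribution.
        return Task(self.name, self.utility(rng), self.wait(rng),
                    [draw(rng) for draw in self.demands])


# Hypothetical distributions, chosen only for illustration.
compute_intensive = TaskType(
    name="compute-intensive",
    service=lambda r: r.expovariate(1 / 30.0),
    utility=lambda r: r.uniform(1.0, 5.0),
    wait=lambda r: r.uniform(5.0, 20.0),
    demands=[lambda r: r.uniform(0.5, 1.0), lambda r: r.uniform(0.0, 0.2)],
)
task = compute_intensive.sample(random.Random(0))
```

Passing an explicit `random.Random` keeps simulated arrival streams reproducible across runs.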

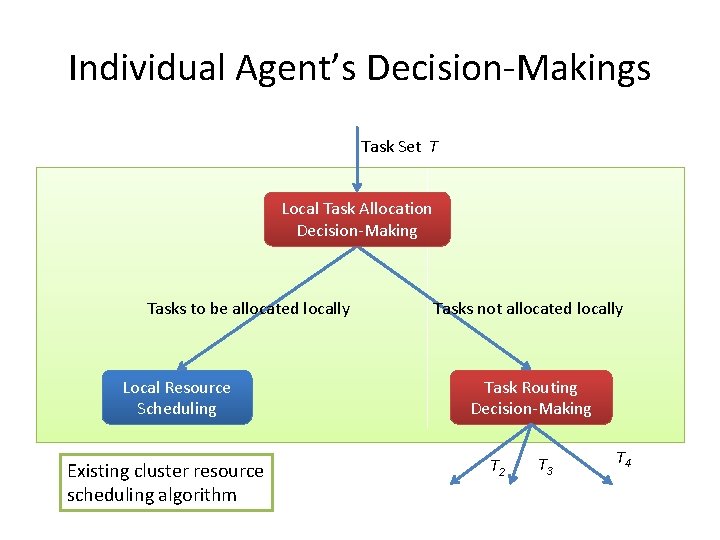

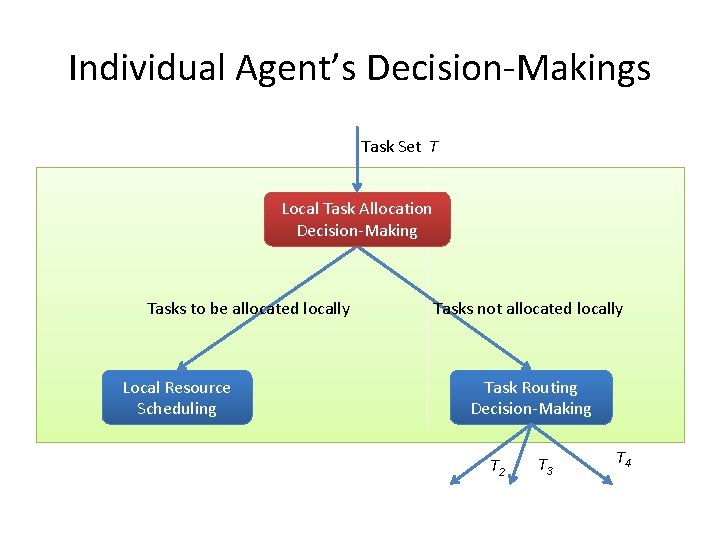

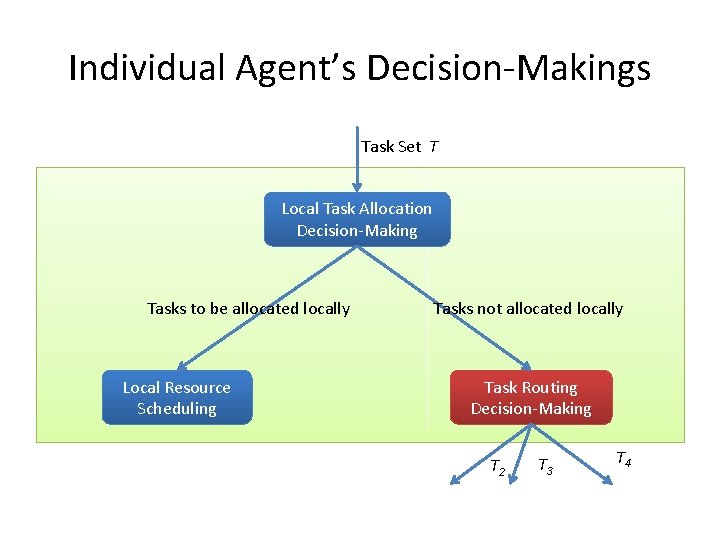

Individual Agent’s Decision-Making
[Figure: an agent receives task set $T$. Local Task Allocation Decision-Making passes the tasks to be allocated locally to Local Resource Scheduling (an existing cluster resource scheduling algorithm); tasks not allocated locally go to Task Routing Decision-Making, which forwards them to neighbors as task sets $T_2$, $T_3$, $T_4$.]

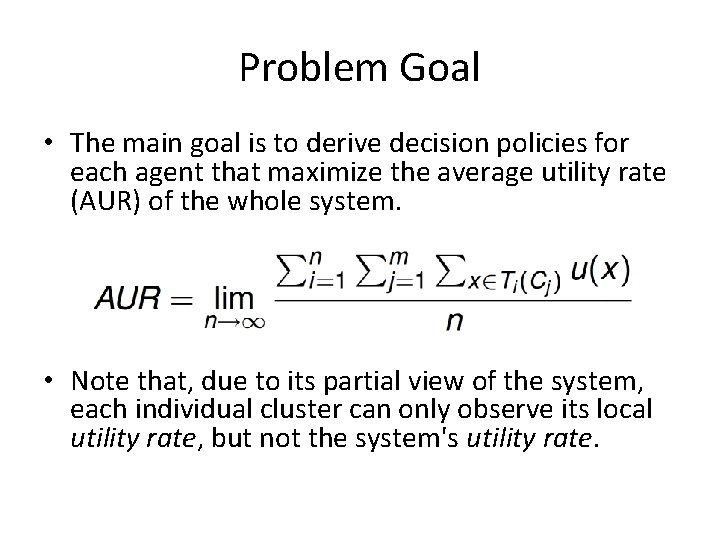

Problem Goal
• The main goal is to derive decision policies for each agent that maximize the average utility rate (AUR) of the whole system.
• Note that, due to its partial view of the system, each individual cluster can observe only its local utility rate, not the system's utility rate.
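The slides do not give a formula for AUR. One plausible reading, assumed here, is that each allocated task earns utility at its rate $u$ while it runs, and AUR is the total earned utility divided by the time horizon. Under that assumption the sketch contrasts what a single cluster can observe with the system-wide objective; the record layout and function names are hypothetical.

```python
from typing import List, Tuple

# One record per allocated task: (cluster, utility rate u, start time, end time).
Record = Tuple[str, float, float, float]


def accrued_utility(u: float, start: float, end: float, horizon: float) -> float:
    # Assumption: a task earns utility at rate u only while it runs inside [0, horizon].
    running = max(0.0, min(end, horizon) - max(start, 0.0))
    return u * running


def local_aur(records: List[Record], cluster: str, horizon: float) -> float:
    # What a single agent can observe: utility from its own allocated tasks only.
    return sum(accrued_utility(u, s, e, horizon)
               for c, u, s, e in records if c == cluster) / horizon


def system_aur(records: List[Record], horizon: float) -> float:
    # The global objective, which no individual agent observes directly.
    return sum(accrued_utility(u, s, e, horizon) for _, u, s, e in records) / horizon


log = [("C1", 2.0, 0.0, 50.0), ("C2", 1.0, 10.0, 200.0), ("C1", 4.0, 60.0, 80.0)]
print(local_aur(log, "C1", 100.0), local_aur(log, "C2", 100.0), system_aur(log, 100.0))
# local AURs 1.8 and 0.9 sum to the system AUR 2.7
```

Under this reading the system AUR is the sum of the local terms, but an agent cannot see how its own rejections and forwarding decisions change the other clusters' terms.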

Multi-Agent Reinforcement Learning (MARL)
• In a multi-agent setting, all agents are concurrently learning their policies.
• The environment therefore becomes non-stationary from the perspective of an individual agent.
• Single-agent reinforcement learning algorithms may diverge due to lack of synchronization.
• Several MARL algorithms have been proposed:
  – GIGA, GIGA-WoLF, WPL, etc.

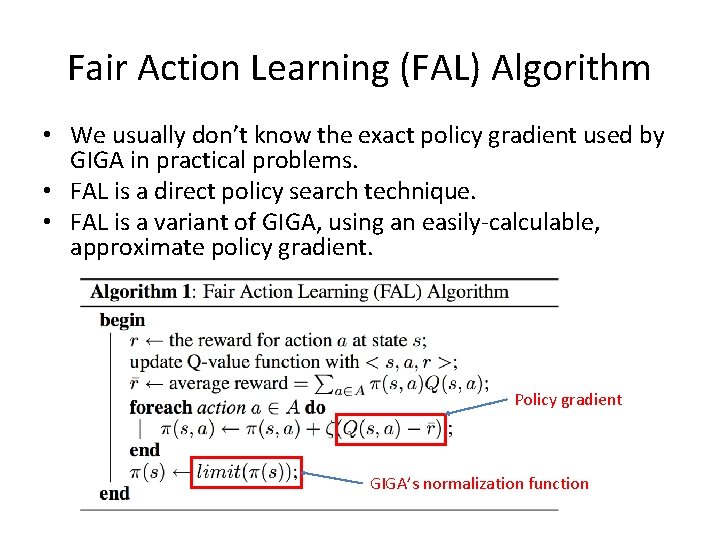

Fair Action Learning (FAL) Algorithm
• In practical problems, we usually do not know the exact policy gradient that GIGA requires.
• FAL is a direct policy search technique.
• FAL is a variant of GIGA that uses an easily calculated, approximate policy gradient.
[Equation: FAL policy update, with annotations marking the policy gradient term and GIGA's normalization function]
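The exact FAL update formula did not survive extraction. The sketch below assumes a common form for this family of algorithms: the approximate gradient for action $a$ is $Q(s,a) - V(s)$ with $V(s) = \sum_a \pi(s,a) Q(s,a)$, and GIGA's normalization is a Euclidean projection back onto the probability simplex. Treat both as assumptions rather than the paper's definition; the step size `zeta` and the function names are hypothetical.

```python
from typing import List


def project_to_simplex(v: List[float]) -> List[float]:
    """Euclidean projection onto the probability simplex (sort-based method)."""
    u = sorted(v, reverse=True)
    cumsum, theta = 0.0, 0.0
    for j, uj in enumerate(u, start=1):
        cumsum += uj
        t = (cumsum - 1.0) / j
        if uj - t > 0:   # holds for a prefix of the sorted entries
            theta = t    # keep the threshold from the last index where it holds
    return [max(x - theta, 0.0) for x in v]


def fal_update(policy: List[float], q: List[float], zeta: float) -> List[float]:
    """One FAL-style step at a single state (assumed gradient Q(s,a) - V(s))."""
    v = sum(p * qa for p, qa in zip(policy, q))        # V(s) = sum_a pi(s,a) Q(s,a)
    stepped = [p + zeta * (qa - v) for p, qa in zip(policy, q)]
    return project_to_simplex(stepped)                 # GIGA-style normalization


print(fal_update([0.5, 0.5], [1.0, 0.0], zeta=2.0))  # [1.0, 0.0]
```

The appeal of this gradient is that it needs only the agent's own $Q$ estimates and current policy, both of which are locally available.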


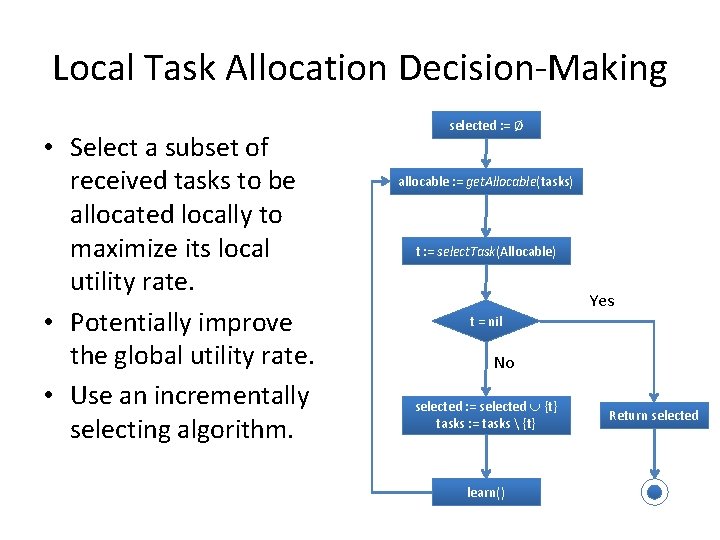

Local Task Allocation Decision-Making
• Select a subset of the received tasks to be allocated locally so as to maximize the agent's local utility rate.
• This potentially also improves the global utility rate.
• Use an incremental selection algorithm:
    selected := Ø
    loop:
        allocable := getAllocable(tasks)
        t := selectTask(allocable)
        if t = nil: return selected
        selected := selected ∪ {t}
        tasks := tasks \ {t}
        learn()
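A runnable sketch of the incremental loop, assuming tasks are dicts with a utility rate `u` and a demand vector `d`, and that choosing a task reserves its demand from the free capacity. `fits`, `allocate_locally`, and `greedy_select` are hypothetical names; in the described approach `selectTask` would be the learned stochastic policy and `learn()` the learning step, both stubbed here.

```python
def fits(demand, free):
    # A task is allocable if each resource demand fits the remaining free capacity.
    return all(d <= f for d, f in zip(demand, free))


def allocate_locally(tasks, free, select_task, learn):
    """Incrementally pick tasks until the policy returns None (the nil task)."""
    selected, tasks, free = [], list(tasks), list(free)
    while True:
        allocable = [t for t in tasks if fits(t["d"], free)]  # getAllocable(tasks)
        t = select_task(allocable)                            # selectTask(allocable)
        if t is None:
            return selected
        selected.append(t)                                    # selected := selected ∪ {t}
        tasks.remove(t)                                       # tasks := tasks \ {t}
        free = [f - d for f, d in zip(free, t["d"])]          # assumption: reserve t's demand
        learn()                                               # learning hook after each choice


def greedy_select(allocable):
    # Hypothetical stand-in for the learned policy: highest utility rate first.
    return max(allocable, key=lambda t: t["u"]) if allocable else None


steps = []
tasks = [{"u": 3.0, "d": [0.6]}, {"u": 2.0, "d": [0.5]}, {"u": 1.0, "d": [0.3]}]
chosen = allocate_locally(tasks, [1.0], greedy_select, lambda: steps.append("learn"))
print([t["u"] for t in chosen], len(steps))  # [3.0, 1.0] 2
```

Recomputing the allocable set after every choice is what makes the decision incremental: each selection changes which remaining tasks still fit.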

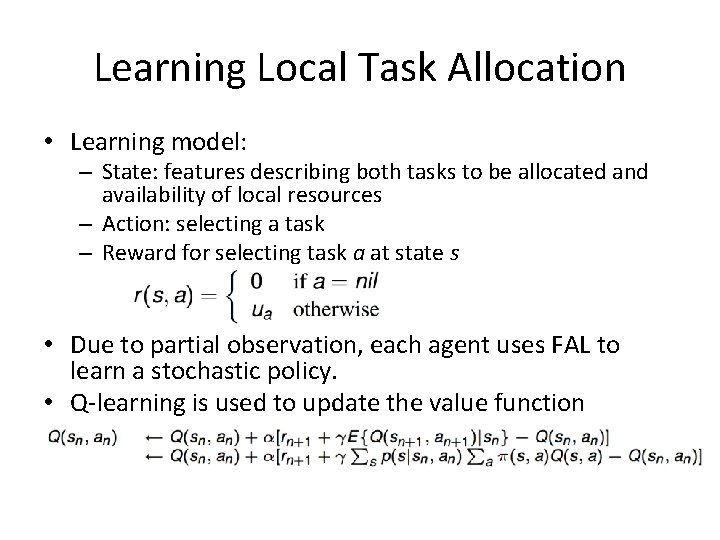

Learning Local Task Allocation
• Learning model:
  – State: features describing both the tasks to be allocated and the availability of local resources
  – Action: selecting a task
  – Reward for selecting task a at state s
• Due to partial observability, each agent uses FAL to learn a stochastic policy.
• Q-learning is used to update the value function.
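For the value-function part, a tabular Q-learning update is sketched below. The slide's reward formula and state features are not preserved, so the reward `r` is passed in and the state tuple is a hypothetical discretization; the learning rate `alpha` and discount `gamma` are illustrative.

```python
from collections import defaultdict


class TabularQ:
    """Tabular Q-learning over (state, action) pairs; 'nil' can be one of the actions."""

    def __init__(self, alpha=0.1, gamma=0.9):
        self.q = defaultdict(float)   # Q(s, a), initialized to 0
        self.alpha, self.gamma = alpha, gamma

    def update(self, s, a, r, s_next, next_actions):
        # Q(s,a) <- Q(s,a) + alpha * (r + gamma * max_a' Q(s',a') - Q(s,a)).
        # With no next actions (e.g. after choosing nil), the bootstrap term is 0.
        best_next = max((self.q[(s_next, b)] for b in next_actions), default=0.0)
        target = r + self.gamma * best_next
        self.q[(s, a)] += self.alpha * (target - self.q[(s, a)])
        return self.q[(s, a)]


# Hypothetical discretized state: (type of best waiting task, free-CPU bucket).
learner = TabularQ(alpha=0.5, gamma=0.9)
learner.update(("compute", "cpu_high"), "task_1", 1.0, ("ordinary", "cpu_low"), ["nil", "task_2"])
```

The learned $Q$ values are what an FAL-style policy step would consume; the two learners run side by side.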

Accelerating the Learning Process
• Reasons
  – Extremely large policy search space
  – Non-stationary learning environment
  – Need to avoid poor initial policies in practical systems
• Techniques
  – Initialize policies with a greedy allocation algorithm
  – Set a utilization threshold for conducting ε-greedy exploration
  – Limit the exploration rate for selecting the nil task
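The three acceleration techniques can be sketched as follows. The bias toward the greedy action, the threshold value, the direction of the utilization test, and the nil exploration cap are all hypothetical parameters chosen for illustration; exploitation samples the stochastic policy rather than taking an argmax, since FAL learns stochastic policies.

```python
import random


def greedy_initial_policy(actions, greedy_action, bias=0.9):
    # Start near a greedy allocator: most probability mass on its choice.
    if len(actions) == 1:
        return {actions[0]: 1.0}
    rest = (1.0 - bias) / (len(actions) - 1)
    return {a: bias if a == greedy_action else rest for a in actions}


def choose_action(policy, utilization, rng, epsilon=0.1,
                  util_threshold=0.8, nil_epsilon=0.01):
    actions = list(policy)
    # Assumption: exploration runs only while utilization is below the threshold;
    # the slide does not say which side of the threshold is used.
    if utilization < util_threshold and rng.random() < epsilon:
        non_nil = [a for a in actions if a != "nil"]
        # Limit how often exploration picks "nil" (i.e. stop allocating).
        if "nil" in policy and (not non_nil or rng.random() < nil_epsilon):
            return "nil"
        return rng.choice(non_nil)
    # Otherwise sample from the learned stochastic policy.
    return rng.choices(actions, weights=[policy[a] for a in actions])[0]


policy = greedy_initial_policy(["task_1", "task_2", "nil"], "task_1", bias=0.8)
```

Capping nil exploration matters because a random nil ends the allocation round early and can leave resources idle.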


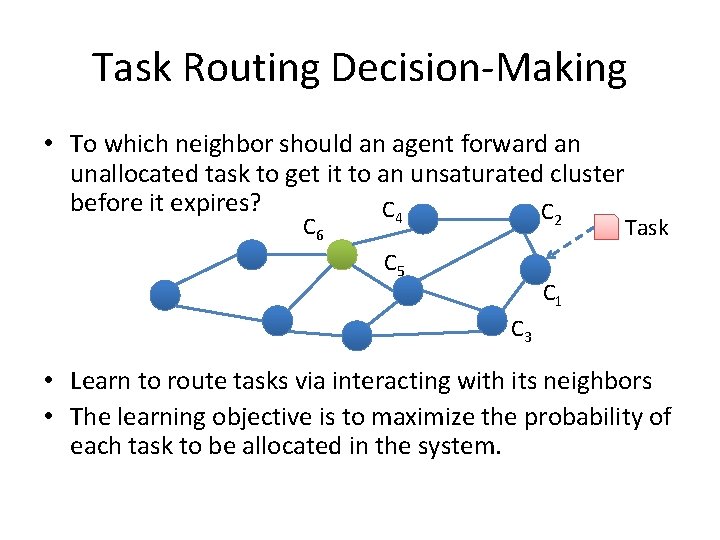

Task Routing Decision-Making
• To which neighbor should an agent forward an unallocated task so that it reaches an unsaturated cluster before it expires?
[Figure: a task at $C_1$ being routed through a network of clusters $C_1$ to $C_6$]
• Each agent learns to route tasks by interacting with its neighbors.
• The learning objective is to maximize the probability that each task is allocated in the system.

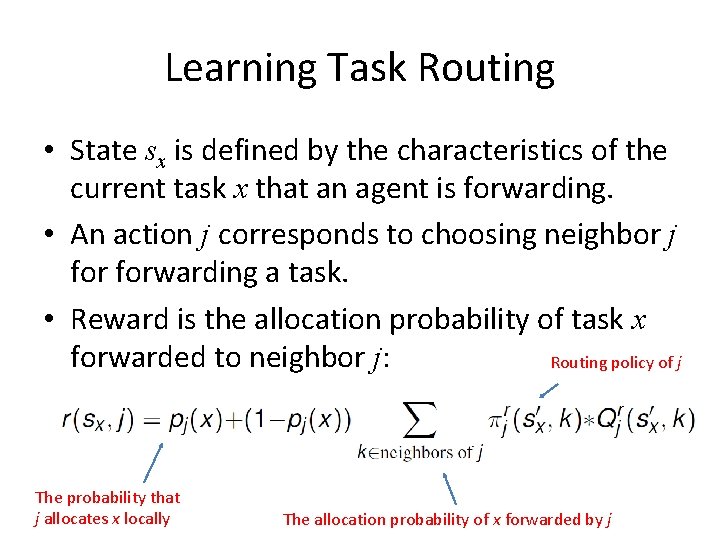

Learning Task Routing
• State $s_x$ is defined by the characteristics of the current task $x$ that an agent is forwarding.
• An action $j$ corresponds to choosing neighbor $j$ to forward the task to.
• The reward is the allocation probability of task $x$ when forwarded to neighbor $j$. It combines the probability that $j$ allocates $x$ locally, the routing policy of $j$, and the allocation probability of $x$ when $j$ forwards it onward.
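The slide names the three ingredients of the routing reward but not how they combine. One natural combination, assumed here, is that $j$ either allocates $x$ locally or, failing that, forwards it according to its own routing policy: $r(s_x, j) = p_j(x) + (1 - p_j(x)) \sum_k \pi_j(s_x, k)\, Q_j(s_x, k)$, where $p_j(x)$ is $j$'s local allocation probability and $\pi_j$ its routing policy (notation introduced here). The sketch ignores task expiration.

```python
def routing_reward(p_local, policy_j, q_j):
    """Assumed form of r(s_x, j): allocation probability of x once forwarded to j.

    p_local:  probability that j allocates x locally
    policy_j: j's routing policy pi_j(s_x, k) over its neighbors k
    q_j:      j's estimates Q_j(s_x, k) of the allocation probability via k
    """
    # Expected allocation probability if j forwards x according to its own policy.
    forwarded = sum(policy_j[k] * q_j[k] for k in policy_j)
    # Either j allocates x itself, or it passes x on and x is allocated downstream.
    return p_local + (1.0 - p_local) * forwarded


print(routing_reward(0.5, {"C5": 0.5, "C6": 0.5}, {"C5": 0.8, "C6": 0.4}))
```

Because every term is a probability and the policy weights sum to 1, the result stays in $[0, 1]$.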

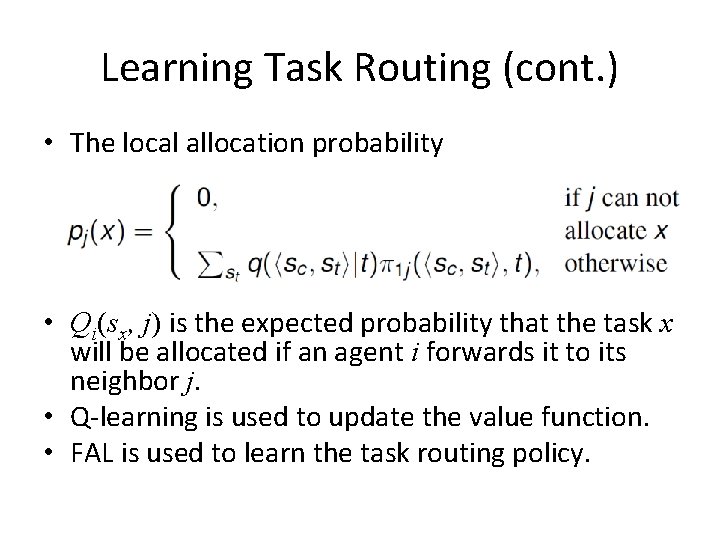

Learning Task Routing (cont.)
• The local allocation probability
• $Q_i(s_x, j)$ is the expected probability that task $x$ will be allocated if agent $i$ forwards it to its neighbor $j$.
• Q-learning is used to update the value function.
• FAL is used to learn the task routing policy.
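A sketch of agent $i$'s routing learner, assuming tabular estimates keyed by task state and neighbor: it samples a next hop from its stochastic policy and applies a Q-learning-style update that moves $Q_i(s_x, j)$ toward the reward that $j$ reports. The class name, the uniform initial policy, and the learning rate are assumptions; the FAL step that would adjust `pi` is omitted here.

```python
import random
from collections import defaultdict


class RoutingLearner:
    """Agent i's routing estimates Q_i(s_x, j) and routing policy pi_i(s_x, j)."""

    def __init__(self, neighbors, alpha=0.1):
        self.neighbors = list(neighbors)
        self.alpha = alpha
        self.q = defaultdict(float)   # Q_i(s_x, j), initialized to 0
        # Start from a uniform routing policy over neighbors (assumption).
        self.pi = defaultdict(lambda: 1.0 / len(self.neighbors))

    def choose_neighbor(self, s_x, rng):
        # Sample the next hop from the stochastic routing policy.
        weights = [self.pi[(s_x, j)] for j in self.neighbors]
        return rng.choices(self.neighbors, weights=weights)[0]

    def update_q(self, s_x, j, reward):
        # Move Q_i(s_x, j) toward the reward r(s_x, j) reported back by neighbor j.
        # Assumption: the reward already covers downstream allocation, so no max term.
        key = (s_x, j)
        self.q[key] += self.alpha * (reward - self.q[key])
        return self.q[key]


router = RoutingLearner(["C2", "C3"], alpha=0.5)
hop = router.choose_neighbor("compute-intensive", random.Random(0))
router.update_q("compute-intensive", hop, 0.8)
```

Keying estimates by task state lets an agent learn, for example, that compute-intensive tasks should go one way and IO-intensive tasks another.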

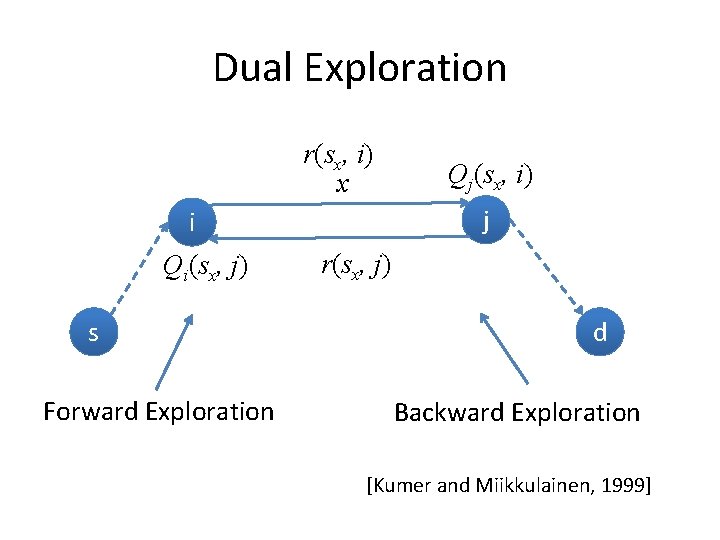

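Dual Exploration [Kumar and Miikkulainen, 1999]
[Figure: task $x$ is forwarded from agent $i$ to neighbor $j$ on a path between $s$ and $d$; forward exploration pairs $Q_i(s_x, j)$ with $r(s_x, j)$, and backward exploration pairs $Q_j(s_x, i)$ with $r(s_x, i)$]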
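Dual exploration comes from dual reinforcement Q-routing, where a single forwarding event updates estimates at both ends of a link. A minimal sketch under the figure's pairing, assuming each agent keeps a table keyed by (task state, neighbor) and that the two rewards are exchanged as the task is forwarded; the function name and learning rate are hypothetical.

```python
from collections import defaultdict


def dual_update(q, i, j, s_x, r_forward, r_backward, alpha=0.1):
    """One forwarding step i -> j with dual exploration (sketch).

    q[agent][(s_x, neighbor)] is that agent's allocation-probability estimate.
    r_forward:  r(s_x, j), reported back by receiver j to sender i
    r_backward: r(s_x, i), attached by sender i when it forwards x to j
    """
    # Forward exploration: the sender i refines its estimate for next hop j.
    q[i][(s_x, j)] += alpha * (r_forward - q[i][(s_x, j)])
    # Backward exploration: the receiver j refines its estimate for neighbor i,
    # so one forwarding event updates estimates in both directions.
    q[j][(s_x, i)] += alpha * (r_backward - q[j][(s_x, i)])


# Every agent's table starts at 0 for every (task state, neighbor) pair.
q = defaultdict(lambda: defaultdict(float))
dual_update(q, "C1", "C2", "compute-intensive", r_forward=0.6, r_backward=0.2, alpha=0.5)
```

The benefit is roughly twice as many updates per forwarded task, which helps estimates converge when tasks are scarce.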

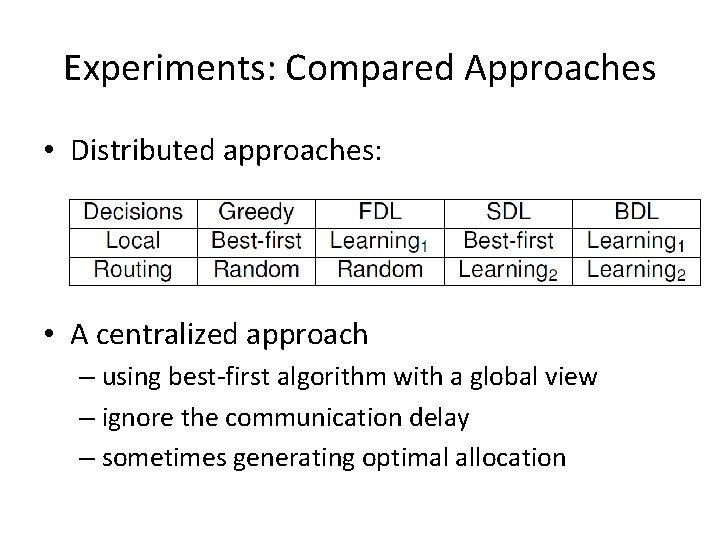

Experiments: Compared Approaches
• Distributed approaches:
• A centralized approach
  – uses a best-first algorithm with a global view
  – ignores the communication delay
  – sometimes generates optimal allocations
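The slide does not detail the centralized baseline beyond a best-first algorithm with a global view. As a hypothetical reading, the sketch below orders tasks by utility rate and places each on the cluster with the most free capacity, ignoring transfer delay. It is a greedy heuristic for a single resource type and is not guaranteed to be optimal.

```python
def best_first_allocate(tasks, capacity):
    """Greedy centralized baseline (sketch, single resource type).

    tasks:    list of (name, utility_rate, demand)
    capacity: {cluster: free capacity}
    Returns {task name: cluster}; tasks that fit nowhere stay unallocated.
    """
    free = dict(capacity)
    placement = {}
    # Assumed best-first order: highest utility rate first.
    for name, u, demand in sorted(tasks, key=lambda t: t[1], reverse=True):
        feasible = [c for c, f in free.items() if f >= demand]
        if not feasible:
            continue
        # Global view, no transfer delay: place on the cluster with most free capacity.
        target = max(feasible, key=lambda c: free[c])
        free[target] -= demand
        placement[name] = target
    return placement


print(best_first_allocate([("x", 1.0, 4), ("y", 3.0, 6), ("z", 2.0, 5)], {"C1": 8, "C2": 6}))
# {'y': 'C1', 'z': 'C2'}
```

Here task `x` stays unallocated even though the total free capacity would cover it, which illustrates why a greedy order is not always optimal.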

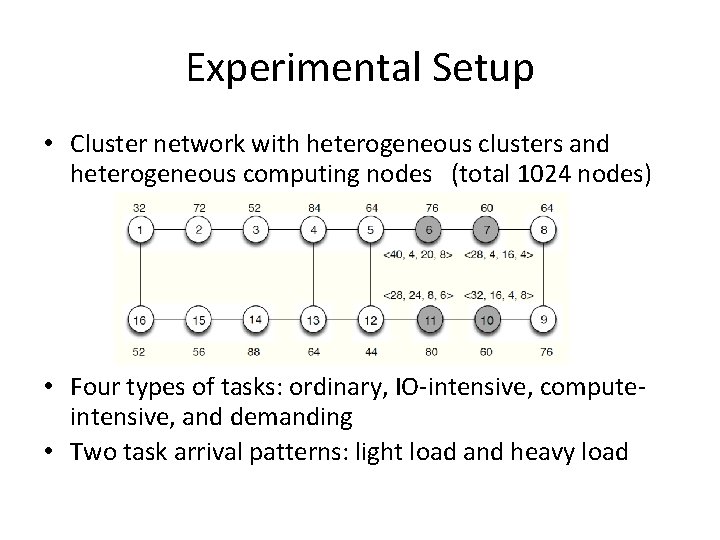

Experimental Setup
• Cluster network with heterogeneous clusters and heterogeneous computing nodes (1024 nodes in total)
• Four types of tasks: ordinary, IO-intensive, compute-intensive, and demanding
• Two task arrival patterns: light load and heavy load

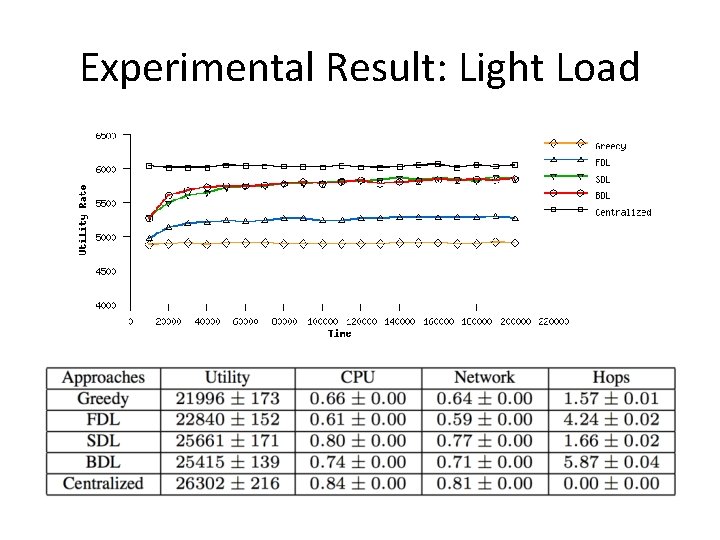

Experimental Result: Light Load

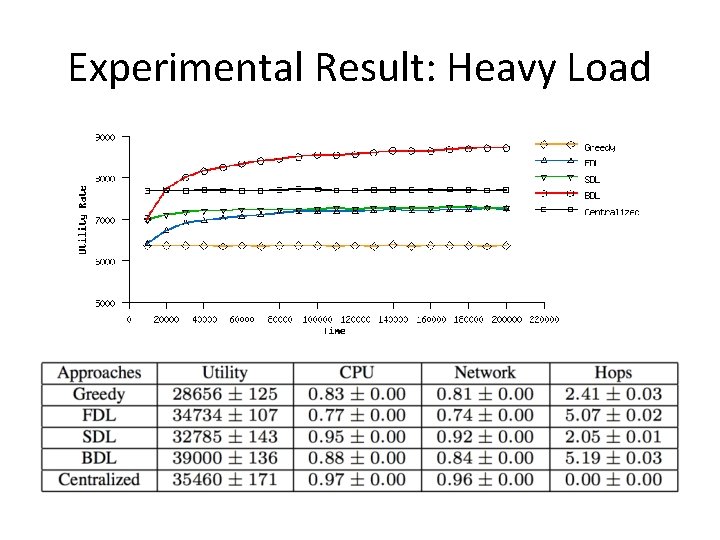

Experimental Result: Heavy Load

Summary
• This paper presents a multi-agent learning (MAL) approach to address resource sharing in cluster networks for building large computing infrastructures.
• Experimental results are encouraging.
• This work suggests that MAL may be a promising approach to online optimization problems in distributed systems.
