A Mechanistic Model for Superscalar Processors

- Slides: 55

A Mechanistic Model for Superscalar Processors

- J. E. Smith, University of Wisconsin-Madison
- Lieven Eeckhout, Stijn Eyerman, Ghent University
- Tejas Karkhanis, AMD

Superscalar Modeling © J. E. Smith, 2006

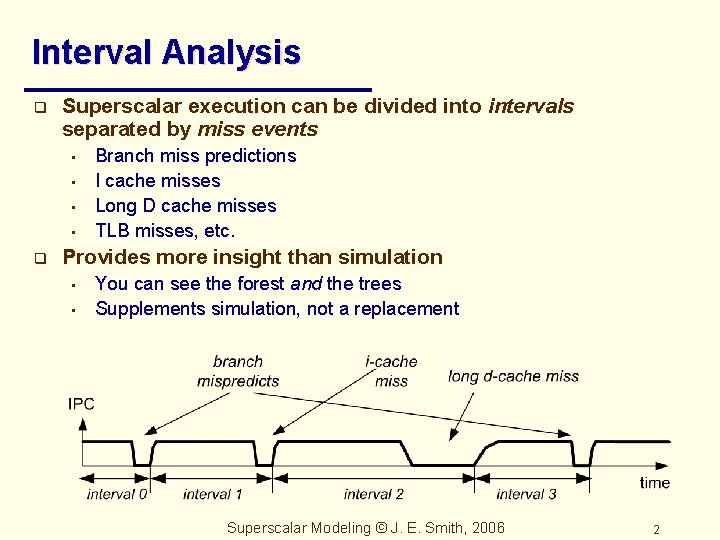

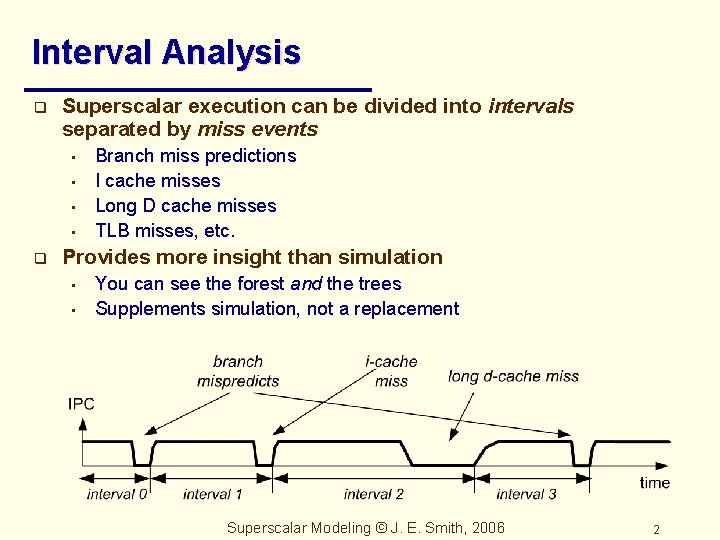

Interval Analysis
- Superscalar execution can be divided into intervals separated by miss events
  - Branch mispredictions
  - I-cache misses
  - Long D-cache misses
  - TLB misses, etc.
- Provides more insight than simulation
  - You can see the forest and the trees
  - Supplements simulation; not a replacement

Outline
- Development of Interval Analysis
  - Modeling ILP
  - Modeling miss events
- Balanced Superscalar Processors
  - Performance components
  - Optimal pipeline configurations
- Performance Counter Architecture
  - Accurate CPI stacks

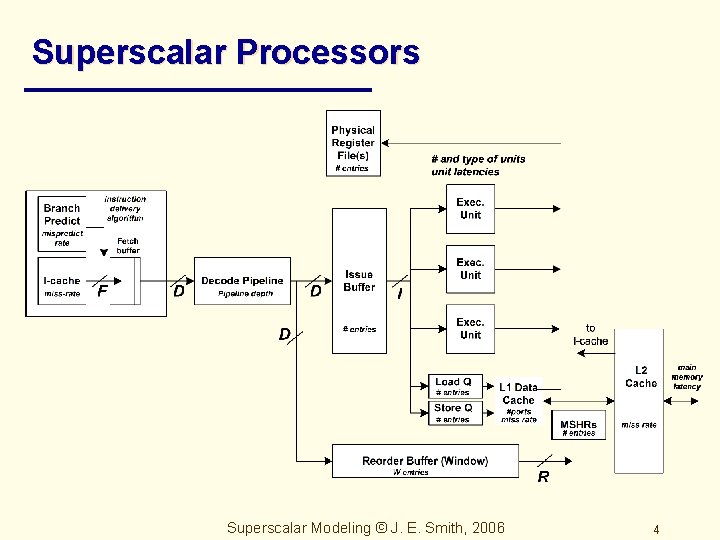

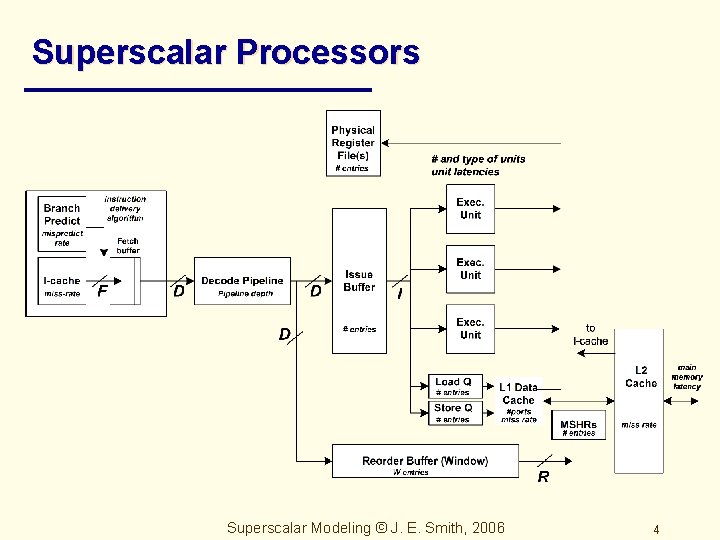

Superscalar Processors

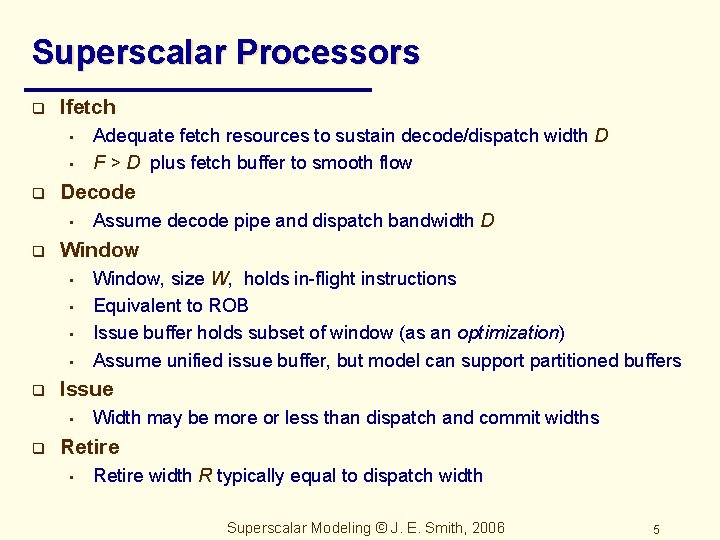

Superscalar Processors
- Ifetch
  - Adequate fetch resources to sustain decode/dispatch width D
  - F > D, plus a fetch buffer to smooth flow
- Decode
  - Assume decode pipe and dispatch bandwidth D
- Window
  - Window, size W, holds in-flight instructions
  - Equivalent to ROB
  - Issue buffer holds a subset of the window (as an optimization)
  - Assume a unified issue buffer, but the model can support partitioned buffers
- Issue
  - Width may be more or less than dispatch and commit widths
- Retire
  - Retire width R typically equal to dispatch width

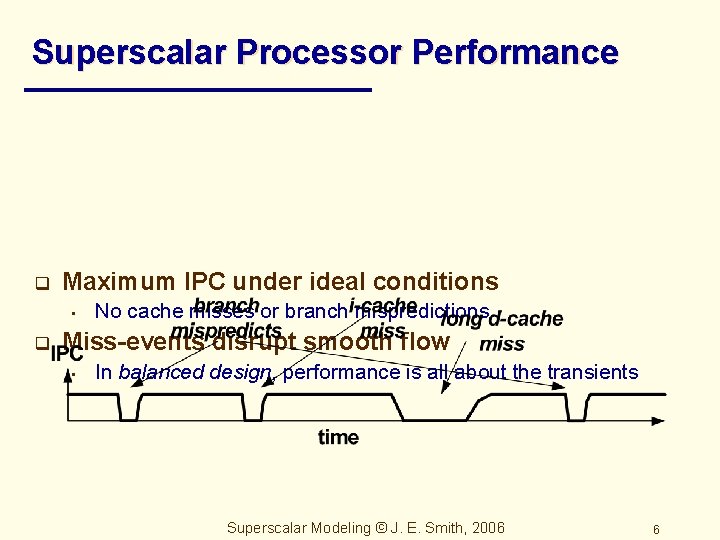

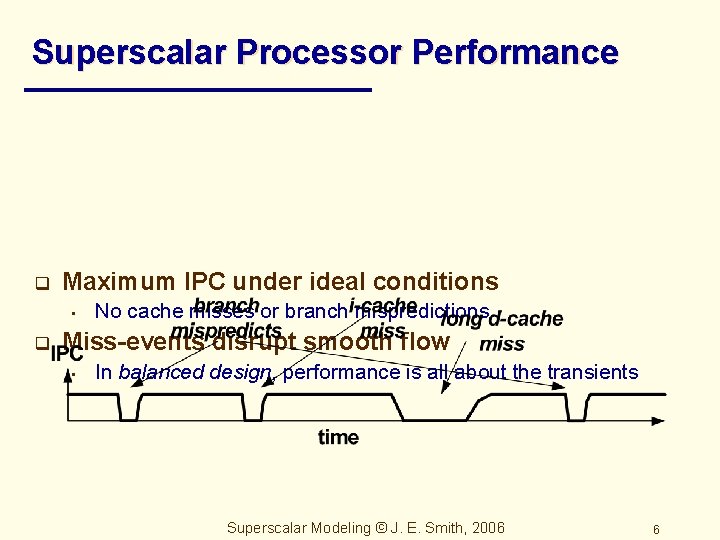

Superscalar Processor Performance
- Maximum IPC under ideal conditions
  - No cache misses or branch mispredictions
- Miss events disrupt smooth flow
  - In a balanced design, performance is all about the transients

Modeling ILP
- Relationship between maximum window size W and achieved issue width i
- Program dependence structure
- Has a long history…

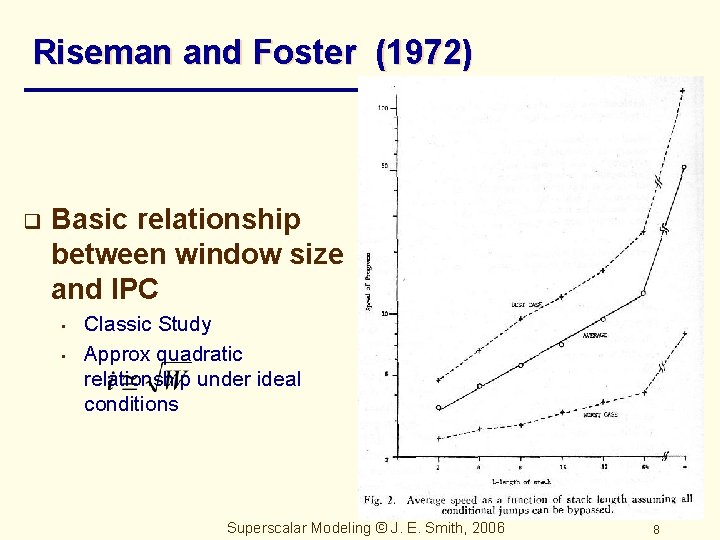

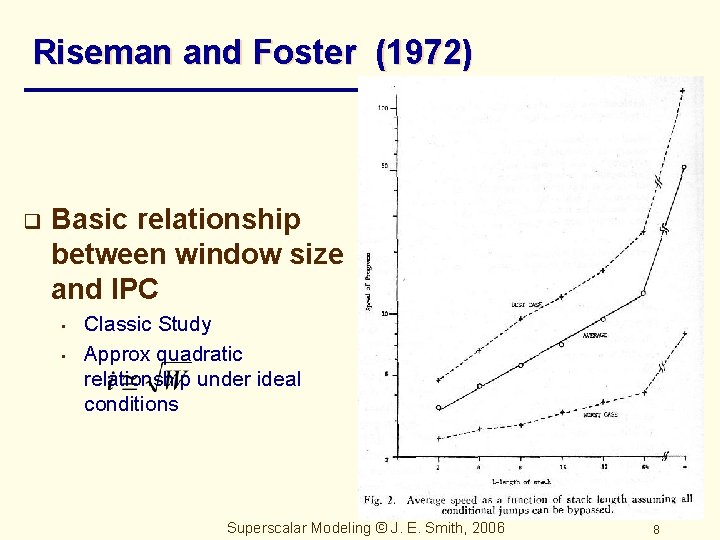

Riseman and Foster (1972)
- Basic relationship between window size and IPC
  - Classic study
  - Approx. quadratic relationship under ideal conditions

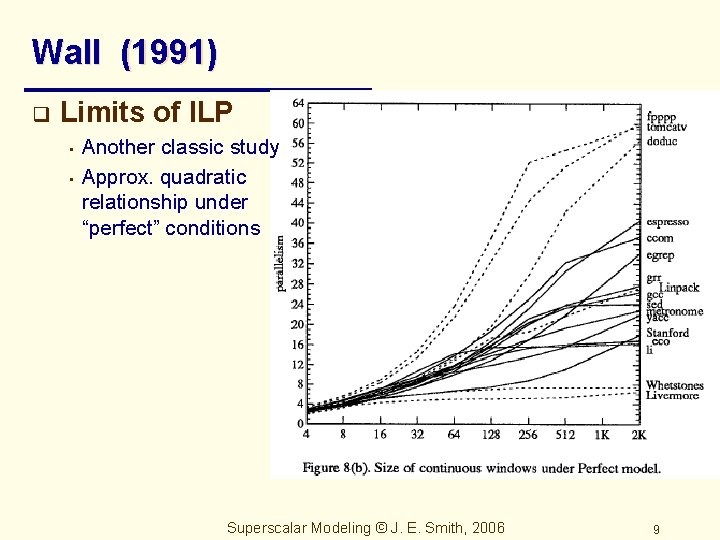

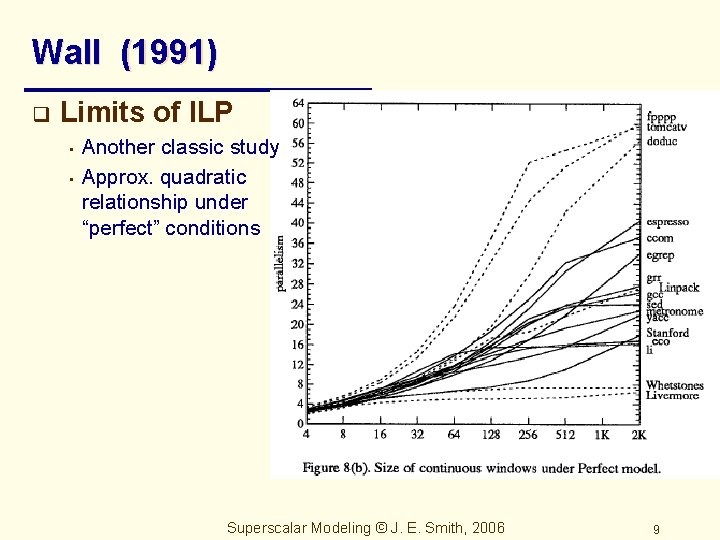

Wall (1991)
- Limits of ILP
  - Another classic study
  - Approx. quadratic relationship under "perfect" conditions

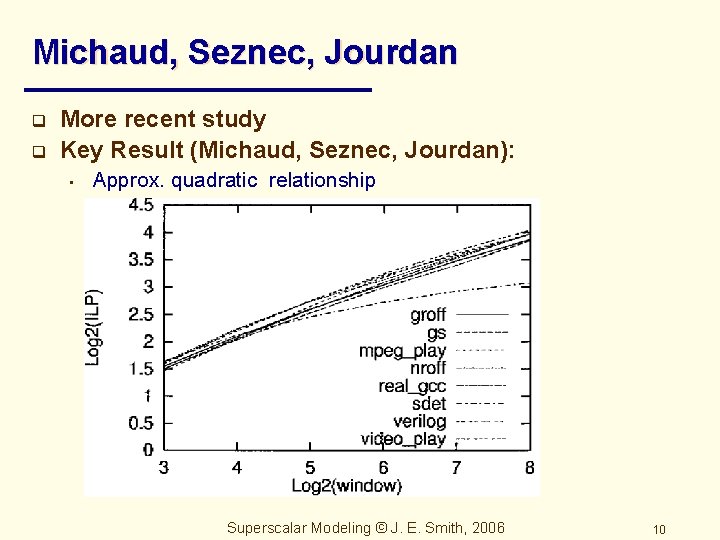

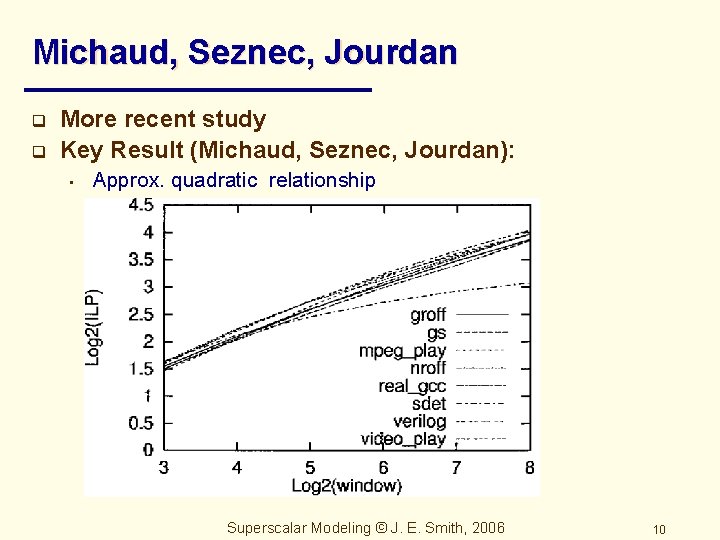

Michaud, Seznec, Jourdan
- More recent study
- Key result (Michaud, Seznec, Jourdan):
  - Approx. quadratic relationship

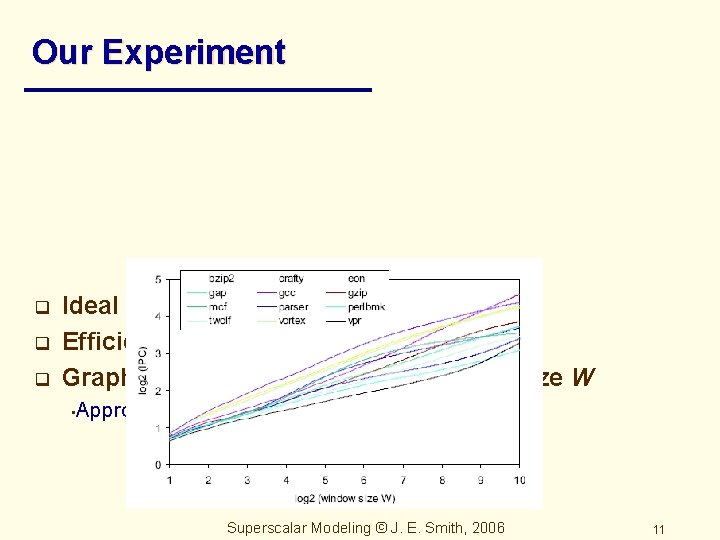

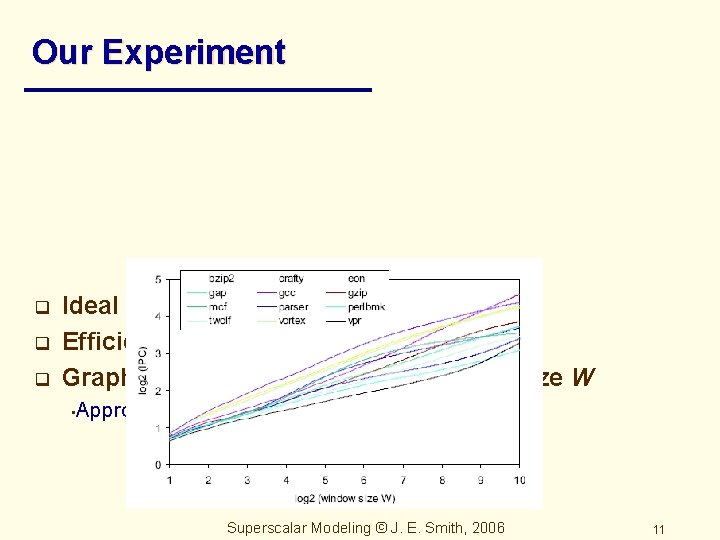

Our Experiment
- Ideal caches and predictor
- Efficient I-fetch keeps the window full
- Graph issue rate i as a function of window size W
  - Approx. quadratic relationship

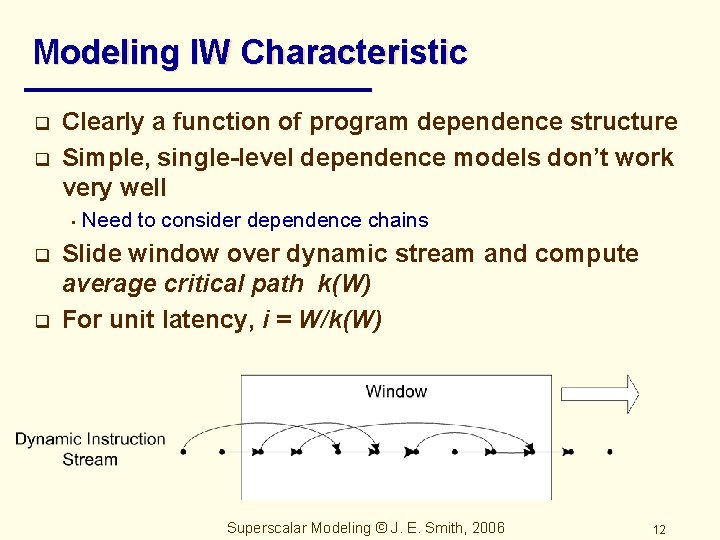

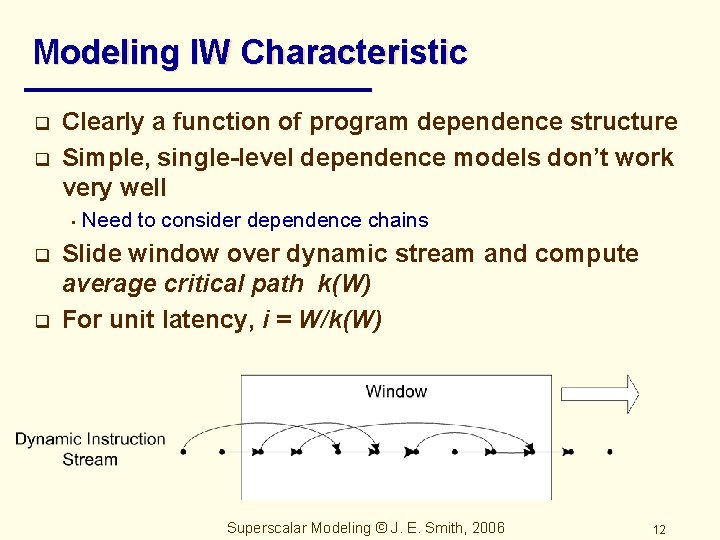

Modeling IW Characteristic
- Clearly a function of program dependence structure
- Simple, single-level dependence models don't work very well
  - Need to consider dependence chains
- Slide a window over the dynamic stream and compute the average critical path k(W)
- For unit latency, i = W/k(W)
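A minimal sketch of the sliding-window measurement, assuming unit latency and perfect register renaming (only true register dependences form chains). The trace format, one `(dest, srcs)` tuple of register names per dynamic instruction, is hypothetical; this illustrates the idea and is not the tool used for the experiments on these slides.

```python
def critical_path_length(window):
    """Longest true-dependence chain in `window`, assuming unit latency.

    Each instruction is a (dest, srcs) tuple of register names. Only
    producers inside the window count; renaming removes WAR/WAW hazards.
    """
    chain = {}    # register -> chain length ending at its latest in-window producer
    longest = 0
    for dest, srcs in window:
        length = 1 + max((chain.get(s, 0) for s in srcs), default=0)
        chain[dest] = length
        longest = max(longest, length)
    return longest


def average_critical_path(trace, W):
    """k(W): mean critical path over every W-instruction window of the trace."""
    starts = range(len(trace) - W + 1)
    return sum(critical_path_length(trace[j:j + W]) for j in starts) / len(starts)


def ideal_issue_rate(trace, W):
    """Unit-latency issue rate i = W / k(W)."""
    return W / average_critical_path(trace, W)


# Hypothetical trace: two independent chains (r1 and r2) interleaved.
trace = [("r1", ("r1",)), ("r2", ("r2",))] * 4
print(ideal_issue_rate(trace, 4))  # 2.0
```

Every 4-entry window holds two links of each chain, so k(4) = 2 and i = 4/2 = 2.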

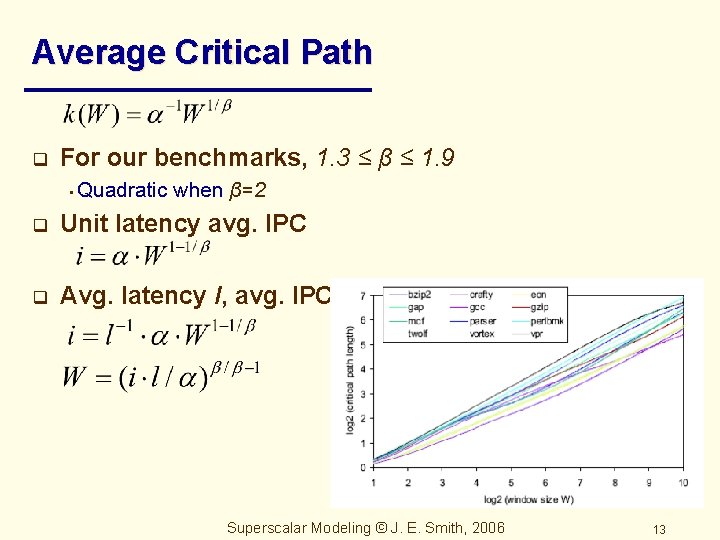

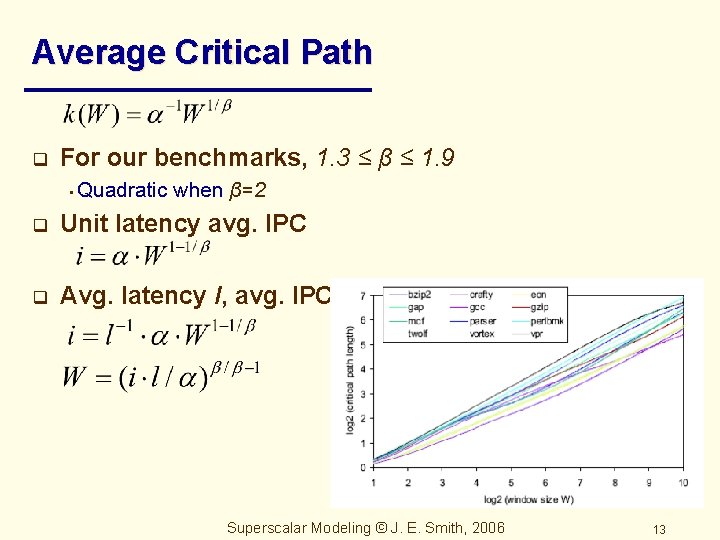

Average Critical Path
- For our benchmarks, 1.3 ≤ β ≤ 1.9
  - Quadratic when β = 2
- Unit latency: avg. IPC
- Avg. latency l: avg. IPC
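The IPC formulas for this slide survive only in the figures. As an illustration, assume the power-law form W = α·k(W)^β (one form that makes i ∝ √W, a quadratic W-versus-i relationship, when β = 2) and, for average latency l, i = W/(l·k(W)). Both forms are assumptions of this sketch. Under them, α and β can be fitted by least squares in log-log space:

```python
import math


def fit_power_law(windows, crit_paths):
    """Least-squares fit of log W = log(alpha) + beta * log k(W).

    Assumes the (hypothetical) power-law form W = alpha * k(W) ** beta.
    Returns (alpha, beta).
    """
    xs = [math.log(k) for k in crit_paths]
    ys = [math.log(w) for w in windows]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    beta = sxy / sxx
    alpha = math.exp(mean_y - beta * mean_x)
    return alpha, beta


def average_ipc(W, alpha, beta, latency=1.0):
    """Assumed model: i = W / (l * k(W)), with k(W) = (W / alpha) ** (1 / beta)."""
    k = (W / alpha) ** (1.0 / beta)
    return W / (latency * k)


# Synthetic data where k(W) = sqrt(W), i.e. beta = 2 (the quadratic case).
windows = [16, 32, 64, 128]
alpha, beta = fit_power_law(windows, [math.sqrt(w) for w in windows])
print(round(beta, 6), round(average_ipc(64, alpha, beta), 6))  # 2.0 8.0
```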

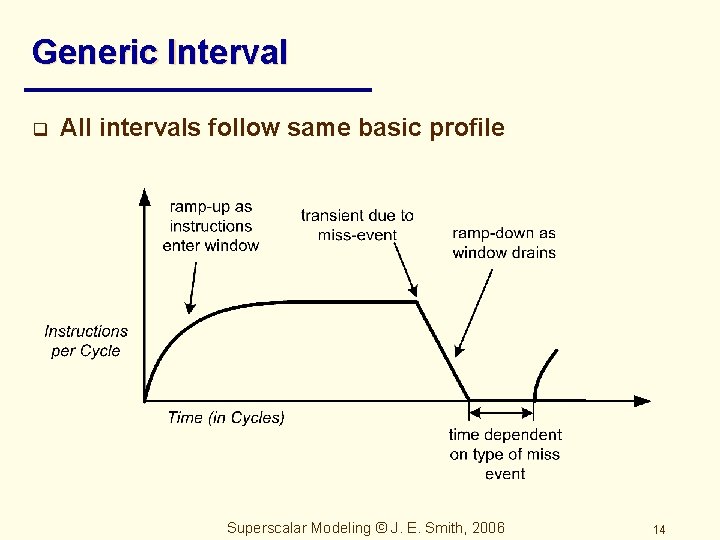

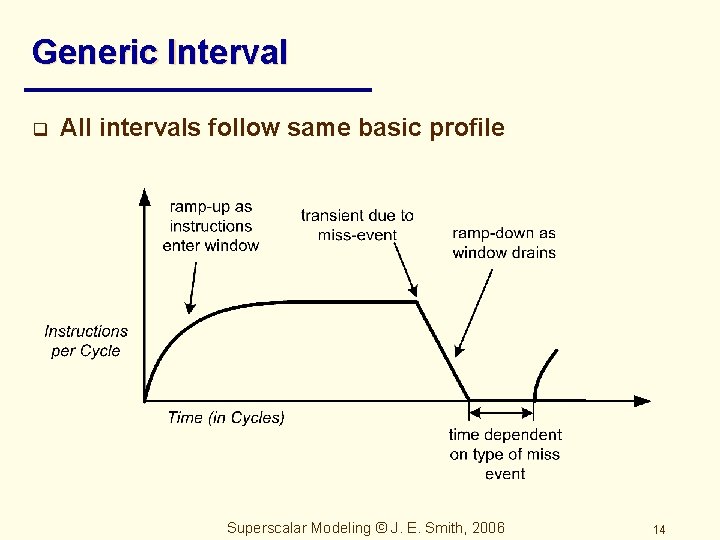

Generic Interval
- All intervals follow the same basic profile

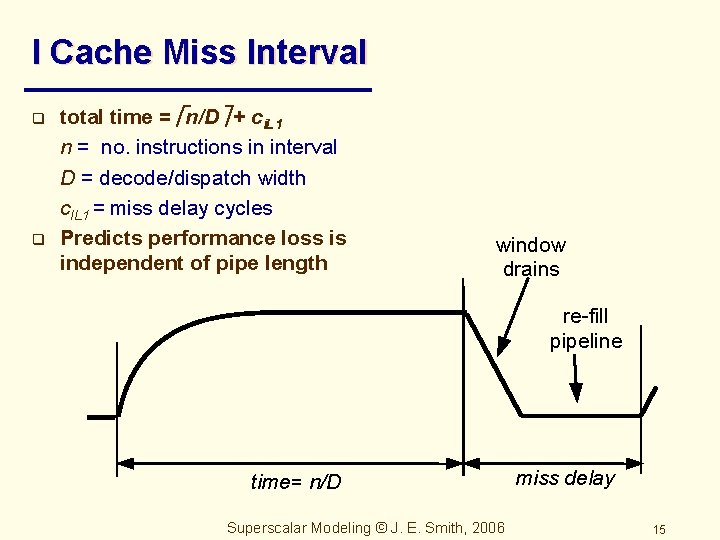

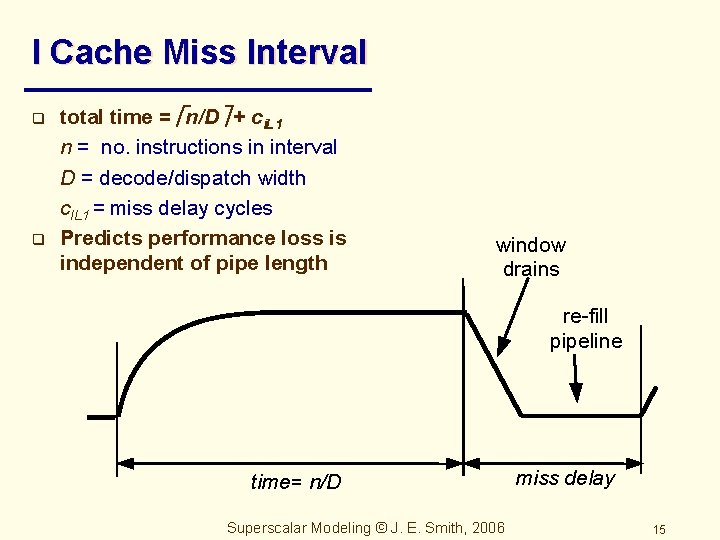

I-Cache Miss Interval
- total time = $n/D + c_{iL1}$
  - $n$ = no. of instructions in interval
  - $D$ = decode/dispatch width
  - $c_{iL1}$ = miss delay cycles
- Predicts that the performance loss is independent of pipe length

(Figure labels: window drains; miss delay; re-fill pipeline; time = n/D)
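The interval formula transcribes directly; the values below are hypothetical. Note that no front-end pipeline length appears among the parameters, which is the sense in which the model predicts the penalty is independent of pipe length.

```python
def icache_miss_interval_cycles(n, D, c_iL1):
    """Cycles for an interval of n instructions ending in an I-cache miss.

    n / D : cycles to dispatch the interval at full decode/dispatch width D
    c_iL1 : I-cache miss delay; no front-end pipeline length term appears
    """
    return n / D + c_iL1


# Hypothetical values: 100 instructions, 4-wide, 8-cycle miss delay.
print(icache_miss_interval_cycles(100, 4, 8))  # 33.0
```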

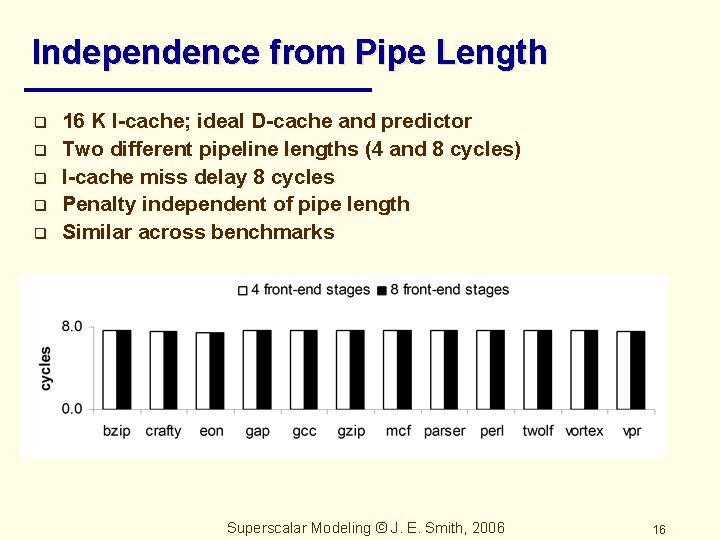

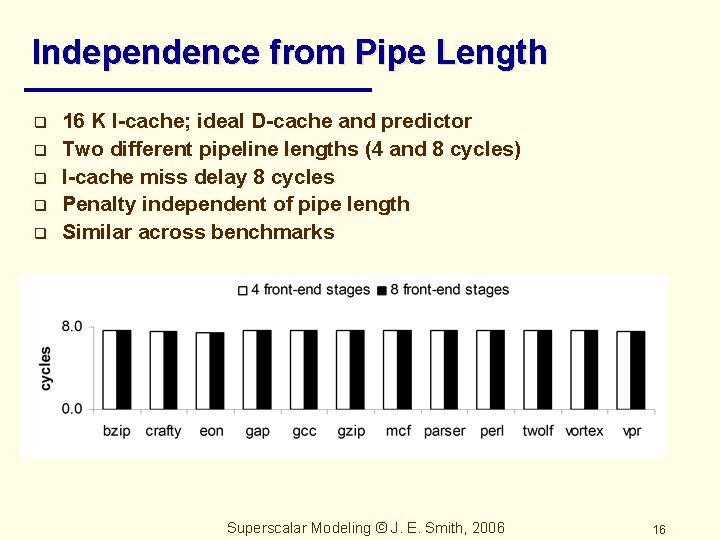

Independence from Pipe Length
- 16K I-cache; ideal D-cache and predictor
- Two different pipeline lengths (4 and 8 cycles)
- I-cache miss delay 8 cycles
- Penalty independent of pipe length
- Similar across benchmarks

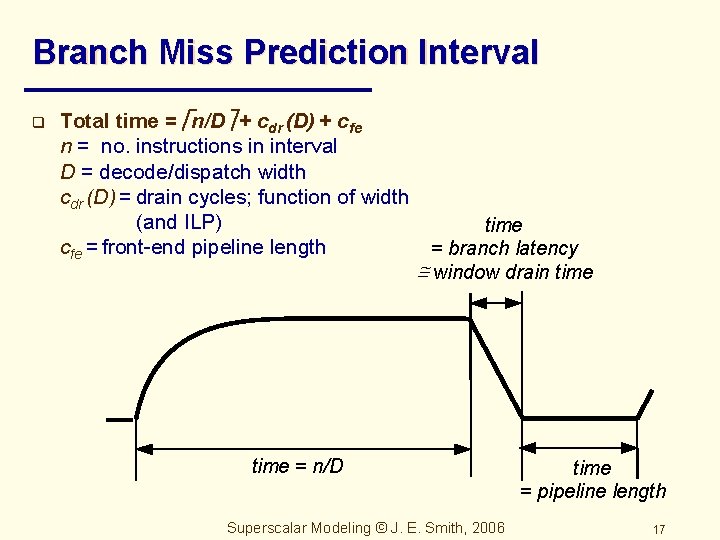

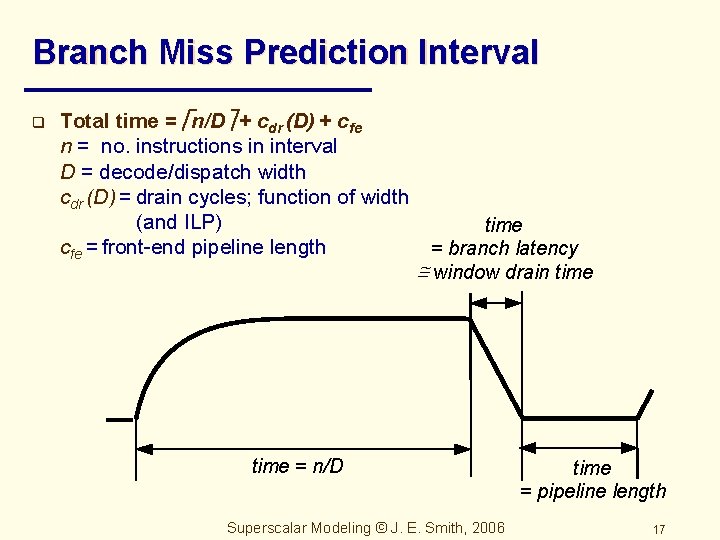

Branch Misprediction Interval
- Total time = $n/D + c_{dr}(D) + c_{fe}$
  - $n$ = no. of instructions in interval
  - $D$ = decode/dispatch width
  - $c_{dr}(D)$ = drain cycles; function of width (and ILP)
  - $c_{fe}$ = front-end pipeline length

(Figure labels: time = n/D; time = branch latency (window drain); time = pipeline length)
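The same transcription for a mispredicted-branch interval, with hypothetical values. The penalty beyond n/D is the drain time plus the front-end refill, which is why it can exceed the pipeline length alone.

```python
def branch_mispredict_interval_cycles(n, D, c_dr, c_fe):
    """Cycles for an interval of n instructions ending in a mispredicted branch.

    c_dr : cycles to drain the window until the branch resolves (depends on D and ILP)
    c_fe : front-end pipeline length, paid again to refill after the redirect
    """
    return n / D + c_dr + c_fe


# Hypothetical values: 96 instructions, 4-wide, 6 drain cycles, 5-stage front end.
cycles = branch_mispredict_interval_cycles(96, 4, 6, 5)
penalty = cycles - 96 / 4
print(cycles, penalty)  # 35.0 11.0
```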

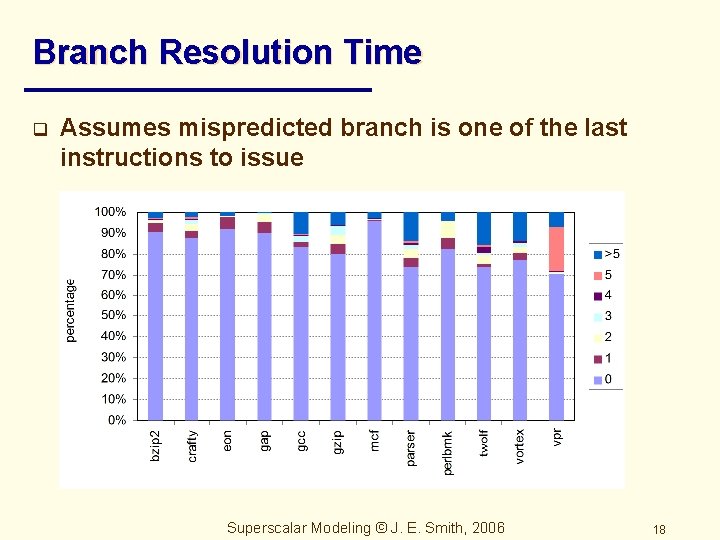

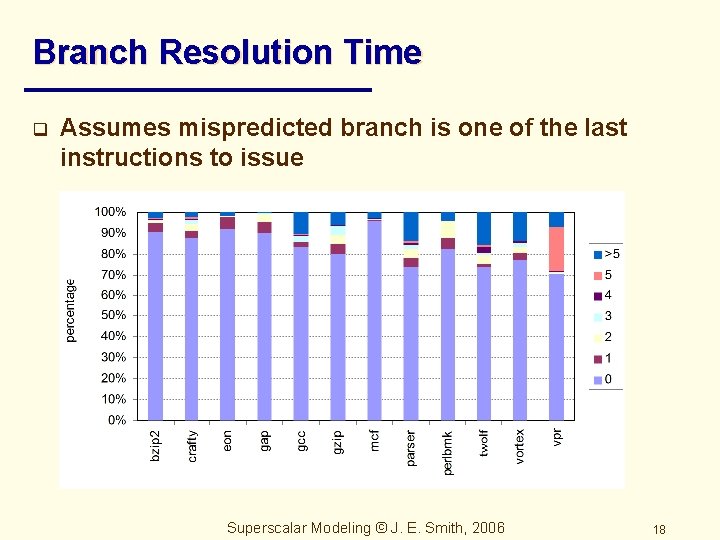

Branch Resolution Time
- Assumes the mispredicted branch is one of the last instructions to issue

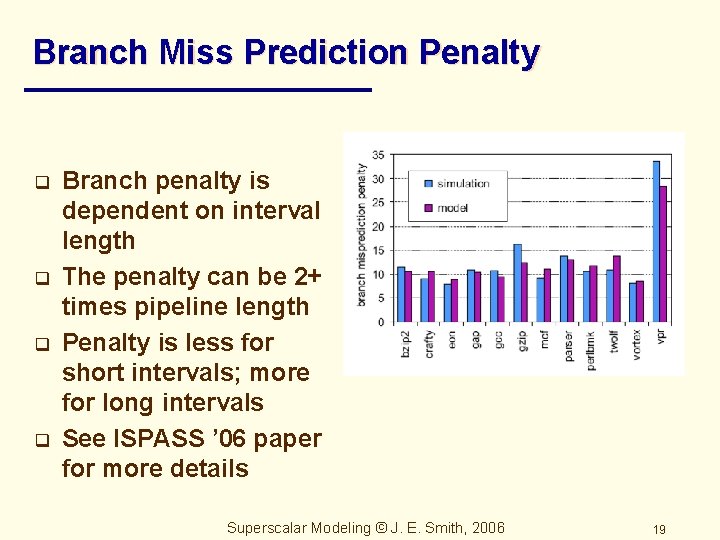

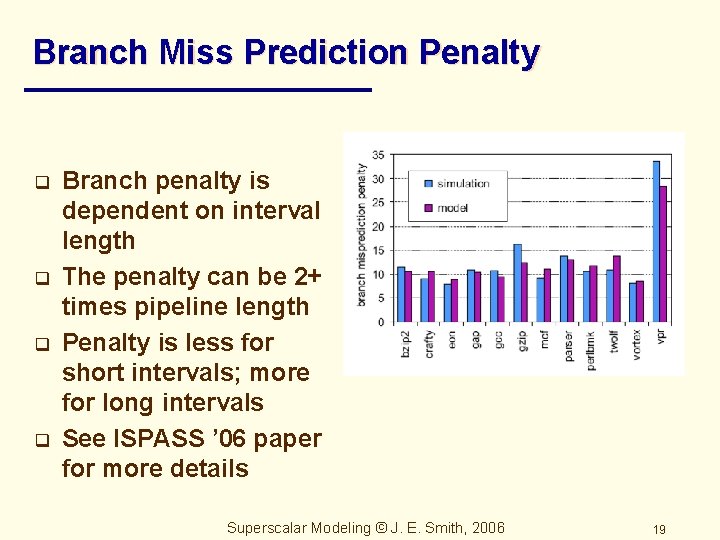

Branch Misprediction Penalty
- Branch penalty depends on interval length
- The penalty can be 2+ times the pipeline length
  - Penalty is less for short intervals, more for long intervals
- See ISPASS '06 paper for more details

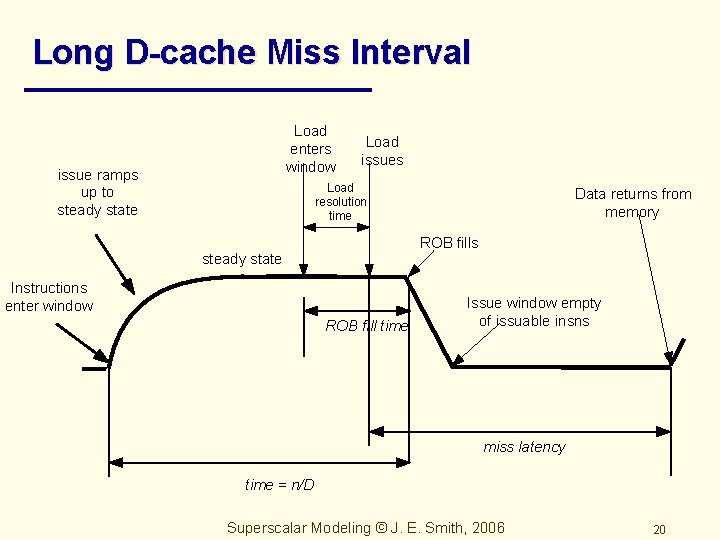

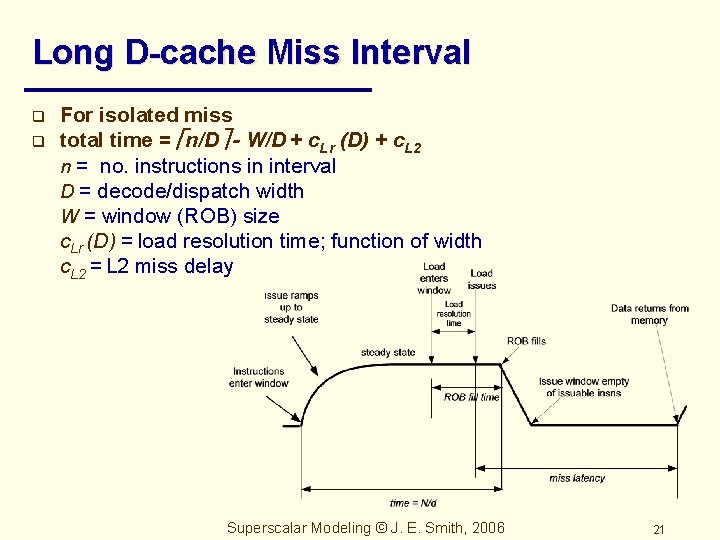

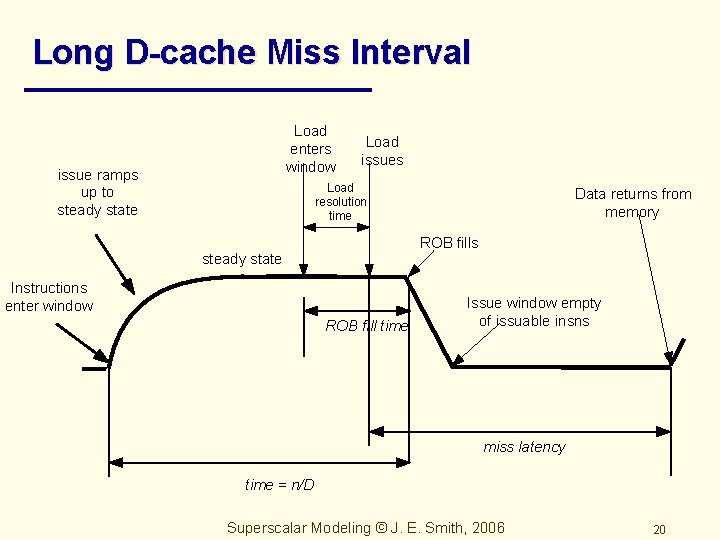

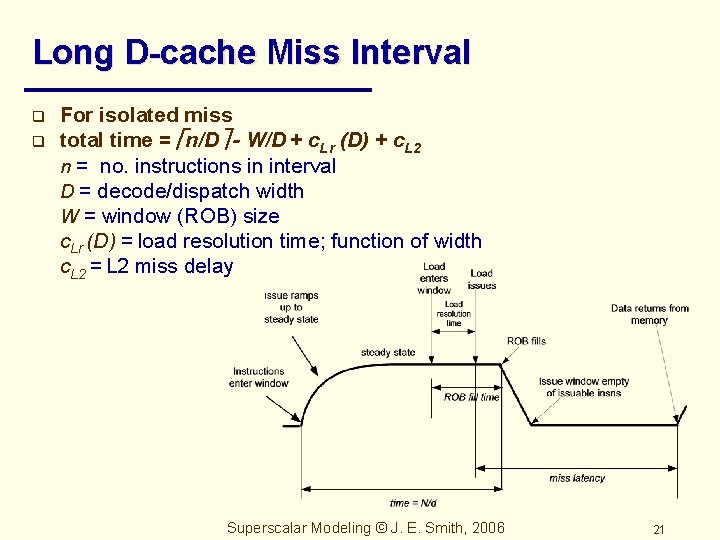

Long D-cache Miss Interval

(Figure labels: load enters window; issue ramps up to steady state; steady state; load issues; load resolution time; instructions enter window; ROB fill time; ROB fills; issue window empty of issuable insns; miss latency; data returns from memory; time = n/D)

Long D-cache Miss Interval
- For an isolated miss: total time = $n/D - W/D + c_{Lr}(D) + c_{L2}$
  - $n$ = no. of instructions in interval
  - $D$ = decode/dispatch width
  - $W$ = window (ROB) size
  - $c_{Lr}(D)$ = load resolution time; function of width
  - $c_{L2}$ = L2 miss delay
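A transcription of the isolated-miss formula with hypothetical values. When W/D equals c_Lr (the assumption used on the experimental-results slide), the two terms cancel and the penalty is exactly c_L2.

```python
def isolated_long_dmiss_interval_cycles(n, D, W, c_Lr, c_L2):
    """Cycles for an interval ending in an isolated long D-cache (L2) miss.

    -W / D : dispatching the W-instruction window overlaps the miss, hiding those cycles
    c_Lr   : load resolution time (a function of D on the slide; a constant here)
    c_L2   : L2 miss delay
    """
    return n / D - W / D + c_Lr + c_L2


# Hypothetical values: 200 instructions, 4-wide, 128-entry ROB, 200-cycle L2 miss.
print(isolated_long_dmiss_interval_cycles(200, 4, 128, 32, 200))  # 250.0 (W/D == c_Lr)
print(isolated_long_dmiss_interval_cycles(200, 4, 128, 10, 200))  # 228.0
```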

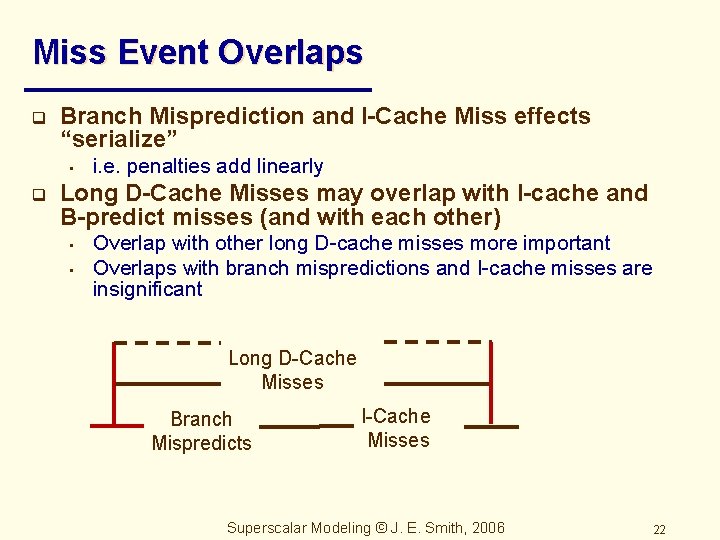

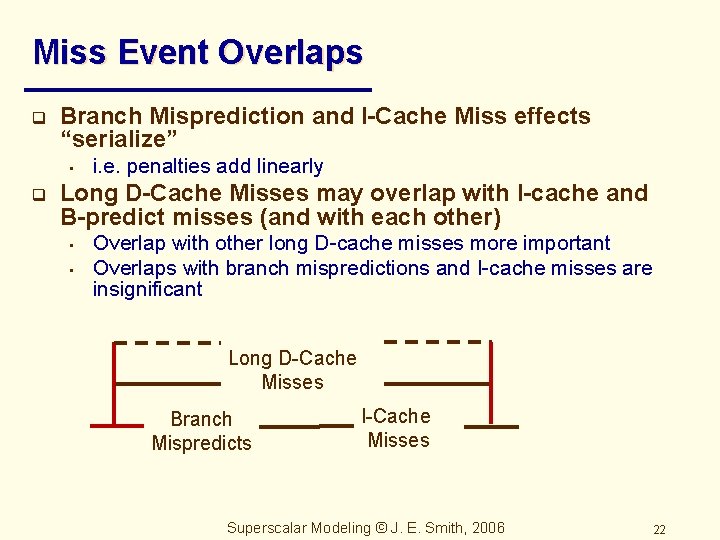

Miss Event Overlaps
- Branch misprediction and I-cache miss effects "serialize"
  - i.e., penalties add linearly
- Long D-cache misses may overlap with I-cache misses and branch mispredictions (and with each other)
  - Overlap with other long D-cache misses is more important
  - Overlaps with branch mispredictions and I-cache misses are insignificant

(Figure labels: Long D-Cache Misses; Branch Mispredicts; I-Cache Misses)

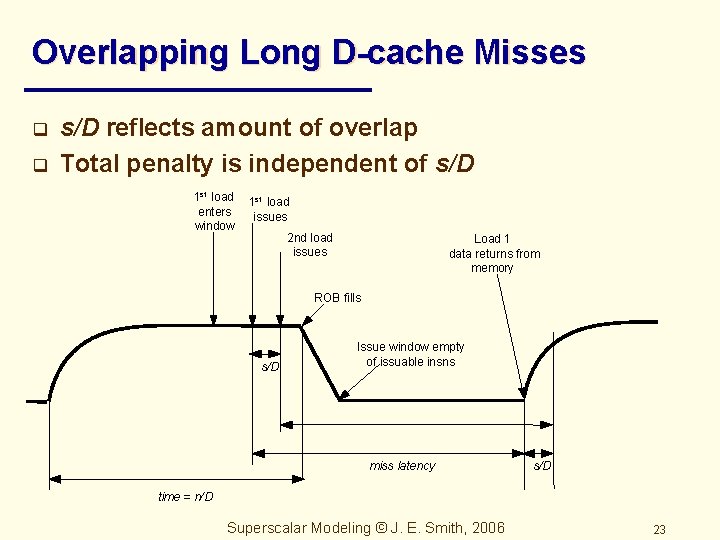

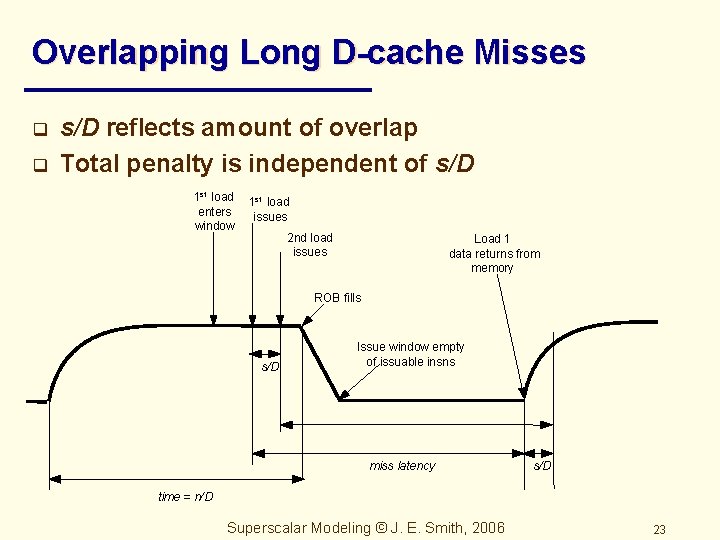

Overlapping Long D-cache Misses
- s/D reflects the amount of overlap
- Total penalty is independent of s/D

(Figure labels: 1st load enters window; 1st load issues; 2nd load issues; load 1 data returns from memory; ROB fills; issue window empty of issuable insns; miss latency; s/D; time = n/D)

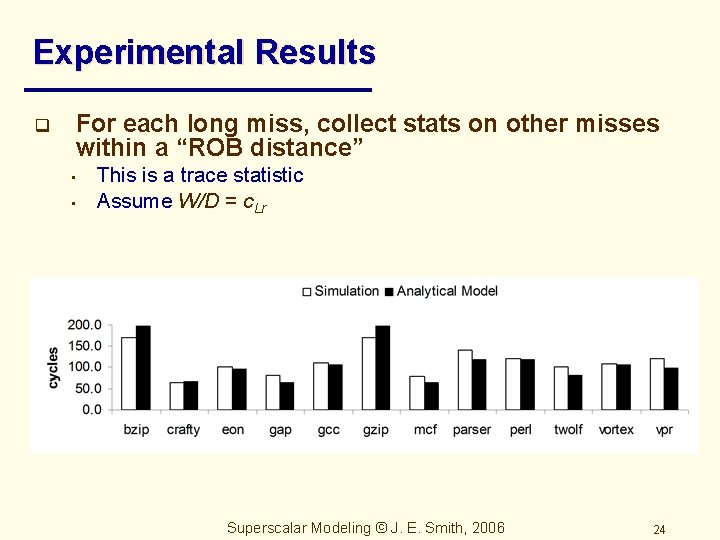

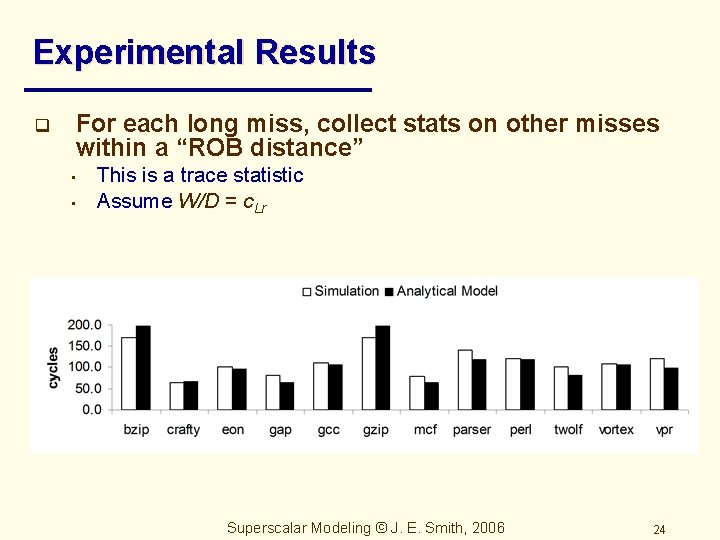

Experimental Results
- For each long miss, collect stats on other misses within a "ROB distance"
  - This is a trace statistic
  - Assume $W/D = c_{Lr}$
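One simple way to compute such a trace statistic, under our own simplifying assumption: a long miss overlaps the group started by an earlier miss whenever it lies fewer than W instructions after that miss, so both can be in the ROB together. Each group then pays a single c_L2 penalty, so the group count plays the role of the non-overlapping miss count m_L2.

```python
def count_nonoverlapping_misses(miss_positions, W):
    """Effective number of long-miss penalties after overlap.

    miss_positions: dynamic instruction indices of long D-cache misses.
    A miss fewer than W instructions after the first miss of the current
    group is assumed to overlap with it (both fit in the ROB); otherwise
    it starts a new group. Each group pays one c_L2 penalty.
    """
    groups = 0
    group_start = None
    for pos in sorted(miss_positions):
        if group_start is None or pos - group_start >= W:
            groups += 1
            group_start = pos
    return groups


# Hypothetical miss trace with a 128-entry ROB.
print(count_nonoverlapping_misses([0, 10, 50, 300, 310, 1000], 128))  # 3
```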

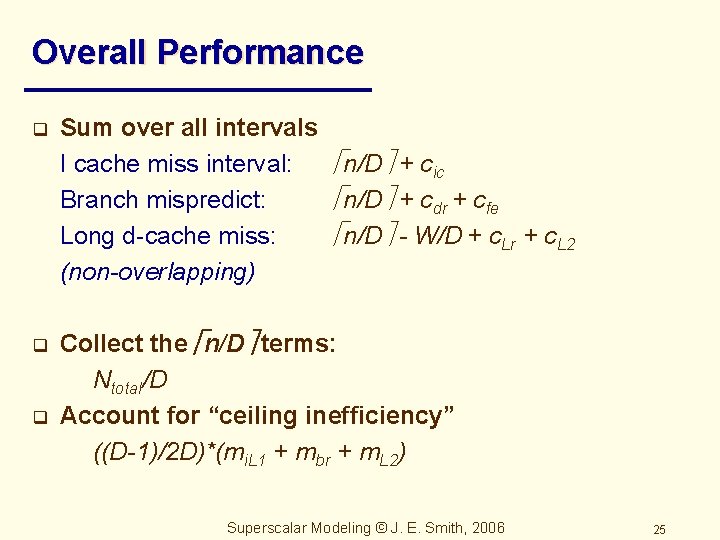

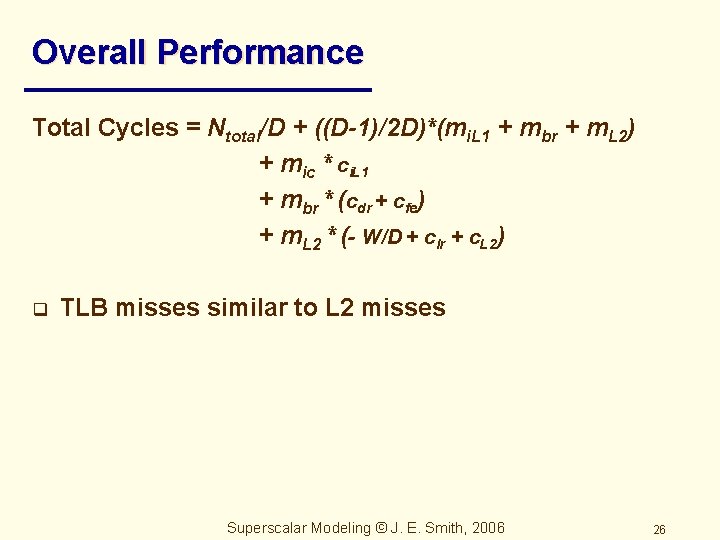

Overall Performance
- Sum over all intervals
  - I-cache miss interval: $n/D + c_{iL1}$
  - Branch mispredict: $n/D + c_{dr} + c_{fe}$
  - Long D-cache miss: $n/D - W/D + c_{Lr} + c_{L2}$ (non-overlapping)
- Collect the $n/D$ terms: $N_{total}/D$
- Account for "ceiling inefficiency": $\frac{D-1}{2D}(m_{iL1} + m_{br} + m_{L2})$

Overall Performance

$$\text{Total Cycles} = \frac{N_{total}}{D} + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2}) + m_{iL1}\,c_{iL1} + m_{br}\,(c_{dr} + c_{fe}) + m_{L2}\left(-\frac{W}{D} + c_{Lr} + c_{L2}\right)$$

- TLB misses are similar to L2 misses
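The total-cycle formula can be evaluated as a CPI stack by keeping each term separate. All parameter values in the example are hypothetical, chosen so the arithmetic is easy to check (with c_Lr = W/D, as assumed on the experimental-results slide).

```python
def cpi_stack(N_total, D, W, m_iL1, m_br, m_L2, c_iL1, c_dr, c_fe, c_Lr, c_L2):
    """Per-component cycle counts from the overall interval formula.

    m_L2 counts non-overlapping long D-cache misses; c_dr and c_Lr are
    treated as single average values for the whole program.
    """
    return {
        "base": N_total / D,
        "ceiling": (D - 1) / (2 * D) * (m_iL1 + m_br + m_L2),
        "icache": m_iL1 * c_iL1,
        "branch": m_br * (c_dr + c_fe),
        "long_dcache": m_L2 * (-W / D + c_Lr + c_L2),
    }


stack = cpi_stack(N_total=1_000_000, D=4, W=128, m_iL1=1000, m_br=5000, m_L2=500,
                  c_iL1=8, c_dr=6, c_fe=5, c_Lr=32, c_L2=200)
total = sum(stack.values())
print(total, total / 1_000_000)
```

With these inputs the stack totals 415,437.5 cycles, a CPI of about 0.415, of which the long D-cache term contributes 100,000 cycles.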

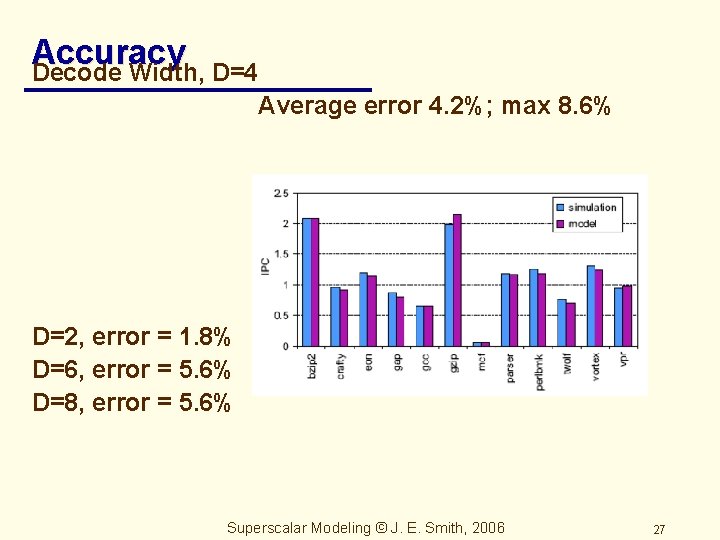

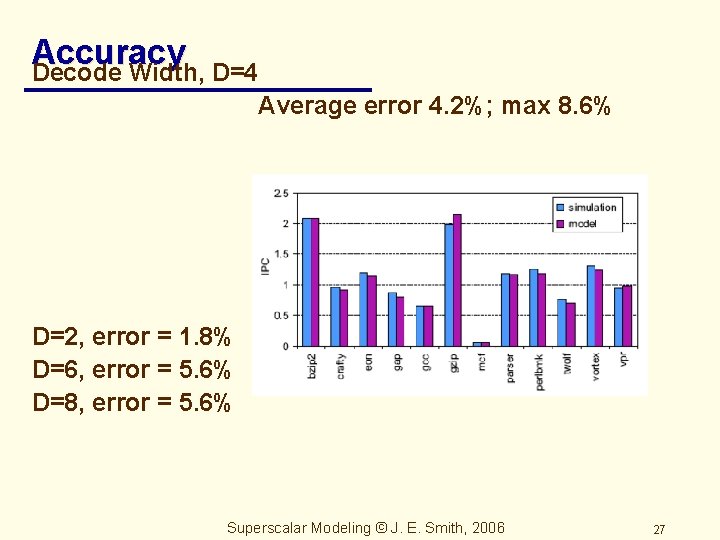

Accuracy
- Decode width D = 4: average error 4.2%; max 8.6%
- D = 2: error = 1.8%
- D = 6: error = 5.6%
- D = 8: error = 5.6%

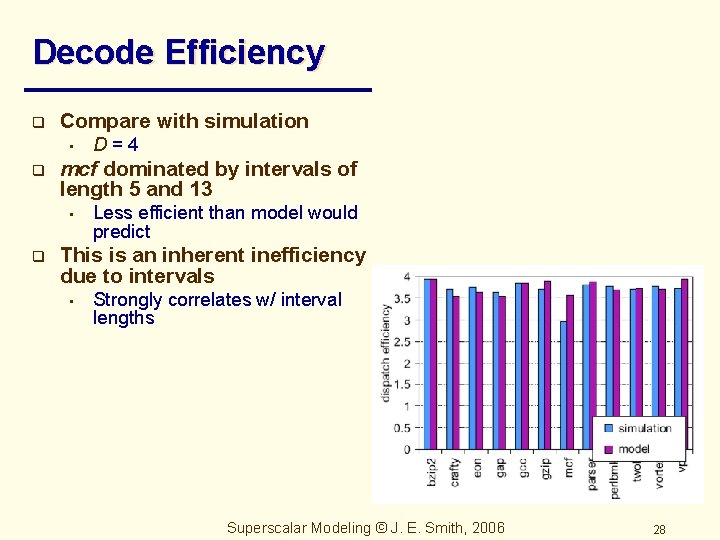

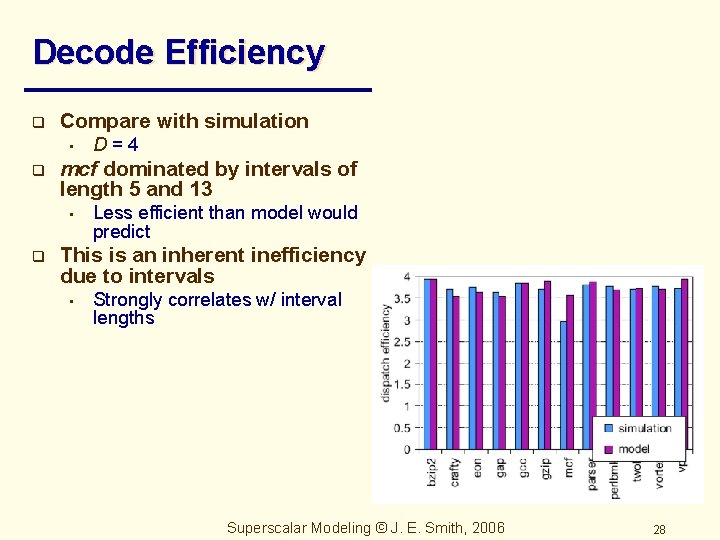

Decode Efficiency
- Compare with simulation (D = 4)
  - Less efficient than the model would predict
- This is an inherent inefficiency due to intervals
  - Strongly correlates with interval lengths
- mcf dominated by intervals of length 5 and 13
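The (D−1)/(2D) ceiling-inefficiency term can be checked with a little arithmetic. An n-instruction interval needs ⌈n/D⌉ dispatch cycles rather than n/D; if n mod D is uniformly distributed (an assumption of this sketch), the average loss per interval is (D−1)/(2D). The mcf interval lengths show why that benchmark is less efficient than the model predicts at D = 4.

```python
import math


def extra_dispatch_cycles(n, D):
    """Cycles lost because n instructions need ceil(n / D) dispatch cycles, not n / D."""
    return math.ceil(n / D) - n / D


def average_extra_cycles(D):
    """Average loss when n mod D is uniform over 0..D-1 (assumption of this sketch)."""
    return sum(extra_dispatch_cycles(n, D) for n in range(D, 2 * D)) / D


D = 4
print(average_extra_cycles(D), (D - 1) / (2 * D))                 # 0.375 0.375
print(extra_dispatch_cycles(5, D), extra_dispatch_cycles(13, D))  # 0.75 0.75
```

At D = 4 the model charges 0.375 cycles per interval, while mcf's dominant interval lengths each lose 0.75 cycles.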

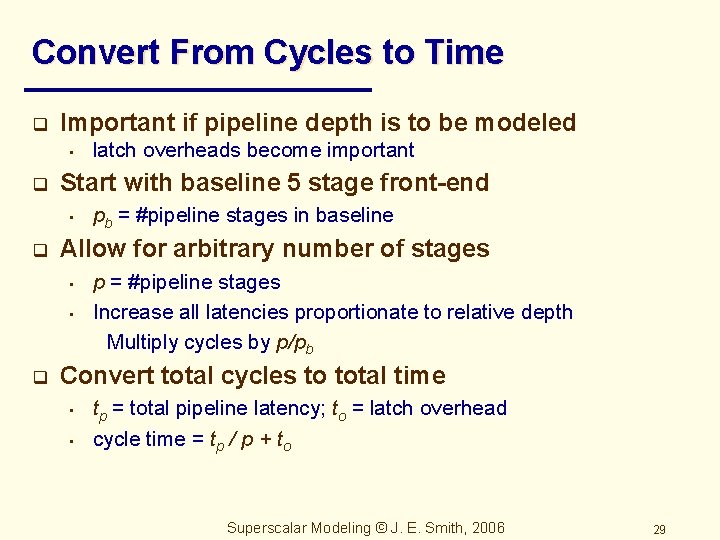

Convert From Cycles to Time
- Important if pipeline depth is to be modeled
  - Latch overheads become important
- Start with baseline 5-stage front-end
  - $p_b$ = # pipeline stages in baseline
- Allow for an arbitrary number of stages
  - $p$ = # pipeline stages
  - Increase all latencies in proportion to relative depth: multiply cycles by $p/p_b$
- Convert total cycles to total time
  - $t_p$ = total pipeline latency; $t_o$ = latch overhead
  - cycle time = $t_p/p + t_o$

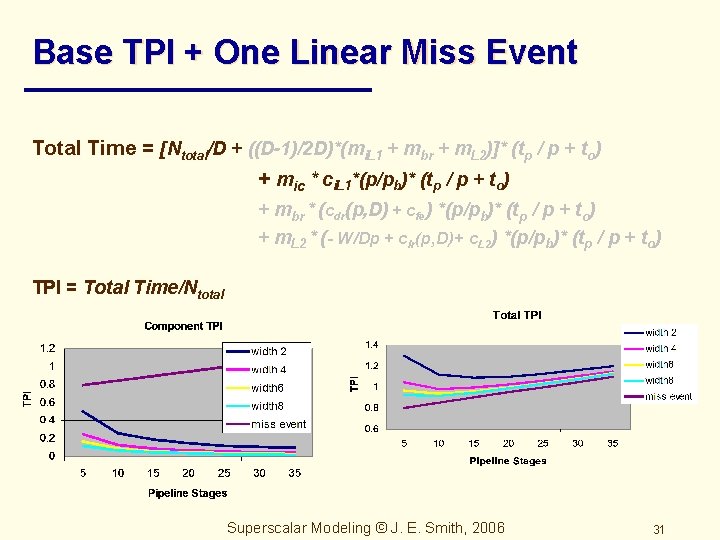

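Convert to Absolute Time

$$
\begin{aligned}
\text{Total Time} ={} & \left[\frac{N_{total}}{D} + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2})\right]\left(\frac{t_p}{p} + t_o\right) \\
& + m_{iL1}\,c_{iL1}\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{br}\,\bigl(c_{dr}(p, D) + c_{fe}\bigr)\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{L2}\left(-\frac{W}{Dp} + c_{Lr}(p, D) + c_{L2}\right)\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right)
\end{aligned}
$$

$$\text{TPI} = \text{Total Time} / N_{total}$$

- Now, consider some of the terms in isolation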

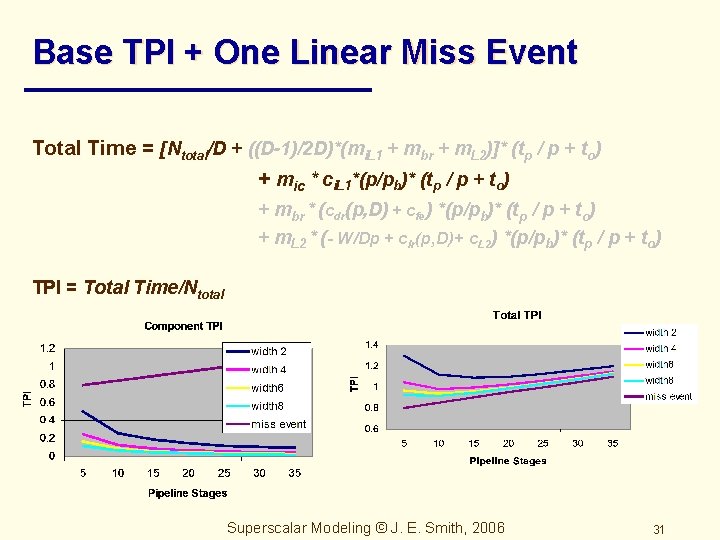

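Base TPI + One Linear Miss Event

$$
\begin{aligned}
\text{Total Time} ={} & \left[\frac{N_{total}}{D} + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2})\right]\left(\frac{t_p}{p} + t_o\right) \\
& + m_{iL1}\,c_{iL1}\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{br}\,\bigl(c_{dr}(p, D) + c_{fe}\bigr)\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{L2}\left(-\frac{W}{Dp} + c_{Lr}(p, D) + c_{L2}\right)\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right)
\end{aligned}
$$

$$\text{TPI} = \text{Total Time} / N_{total}$$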

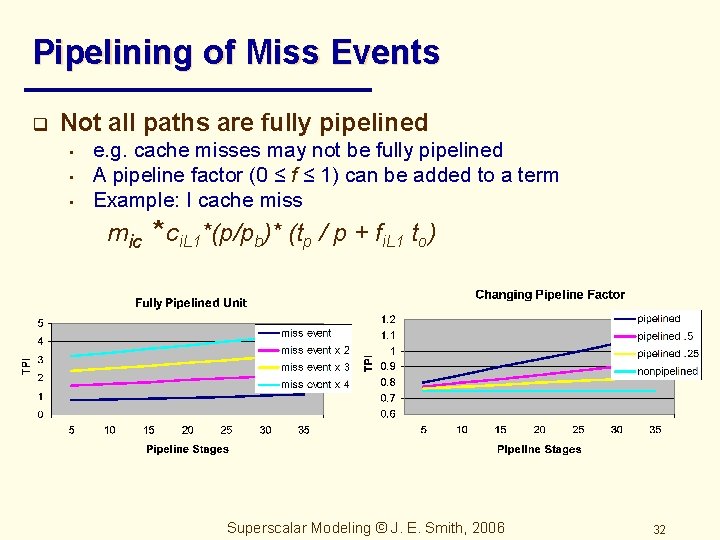

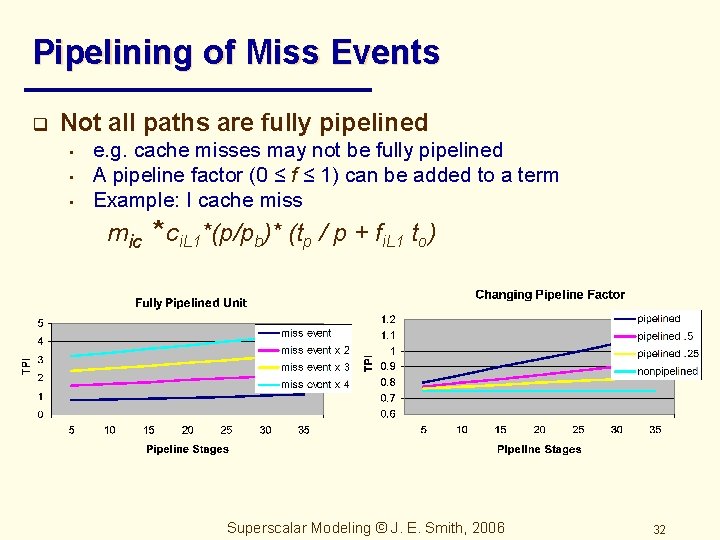

Pipelining of Miss Events
- Not all paths are fully pipelined
  - e.g., cache misses may not be fully pipelined
  - A pipeline factor (0 ≤ f ≤ 1) can be added to a term
  - Example: I-cache miss

$$m_{iL1}\,c_{iL1}\,\frac{p}{p_b}\left(\frac{t_p}{p} + f_{iL1}\,t_o\right)$$
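A sketch of the I-cache term with the pipeline factor, using hypothetical values. Our reading of the slide: f = 1 means the miss path pays latch overhead in every one of its scaled cycles, while smaller f means fewer latches on that path, so deepening the pipeline costs it less.

```python
def icache_miss_time(m_iL1, c_iL1, p, p_b, t_p, t_o, f_iL1=1.0):
    """I-cache miss term of the absolute-time model with pipeline factor f_iL1.

    f_iL1 = 1: fully pipelined miss path; latch overhead t_o is paid every cycle.
    f_iL1 = 0: the miss path pays none of the latch overhead.
    """
    if not 0.0 <= f_iL1 <= 1.0:
        raise ValueError("pipeline factor f must satisfy 0 <= f <= 1")
    return m_iL1 * c_iL1 * (p / p_b) * (t_p / p + f_iL1 * t_o)


# Hypothetical: 100 misses, 8-cycle delay, pipeline doubled from 5 to 10 stages.
for f in (1.0, 0.5, 0.0):
    print(f, icache_miss_time(100, 8, p=10, p_b=5, t_p=55.0, t_o=1.0, f_iL1=f))
```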

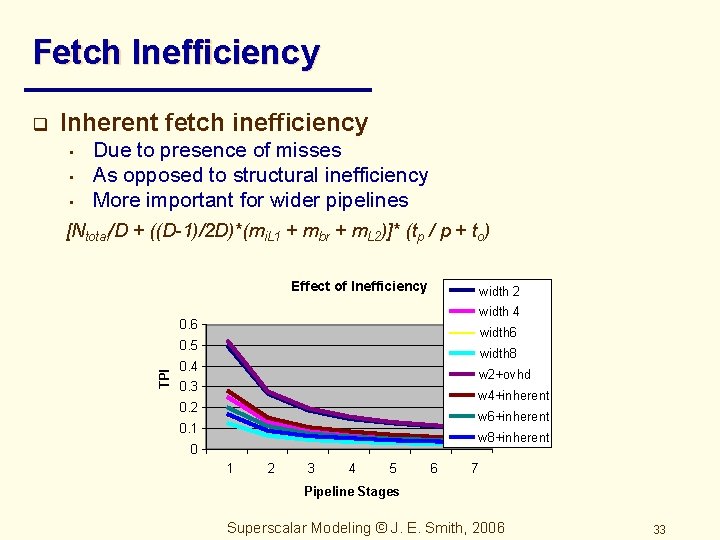

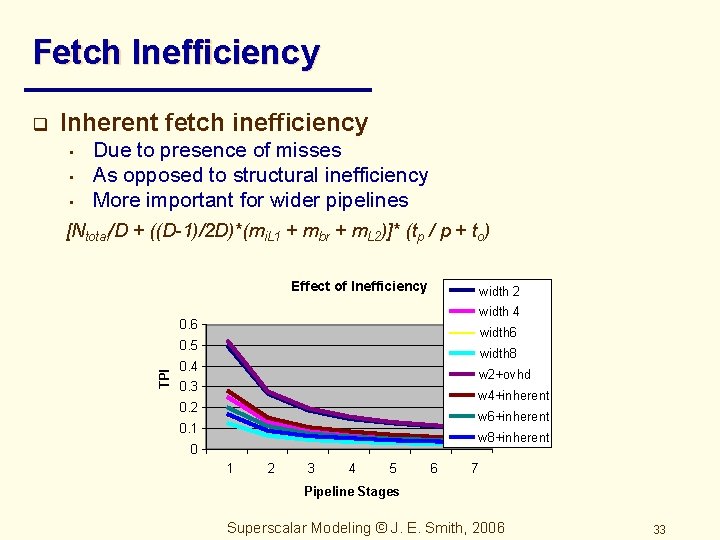

Fetch Inefficiency
- Inherent fetch inefficiency
  - Due to presence of misses
  - As opposed to structural inefficiency
  - More important for wider pipelines

$$\left[\frac{N_{total}}{D} + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2})\right]\left(\frac{t_p}{p} + t_o\right)$$

(Figure: Effect of Inefficiency; TPI vs. pipeline stages; series: width 2, width 4, width 6, width 8, w2+ovhd, w4+inherent, w6+inherent, w8+inherent)

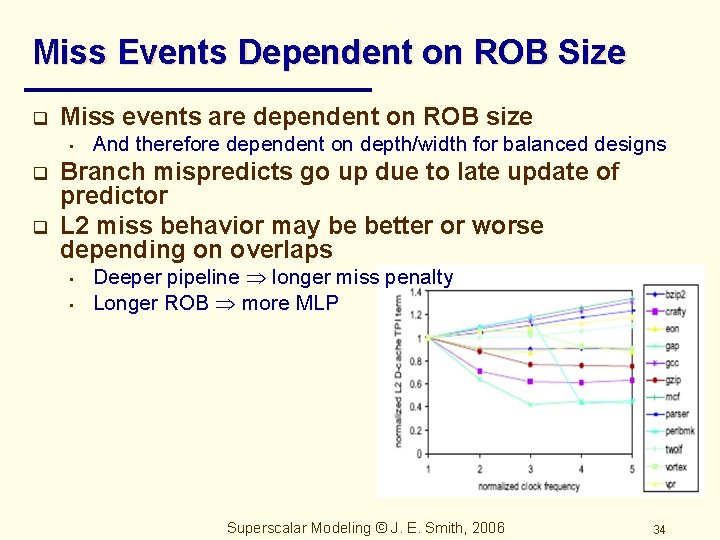

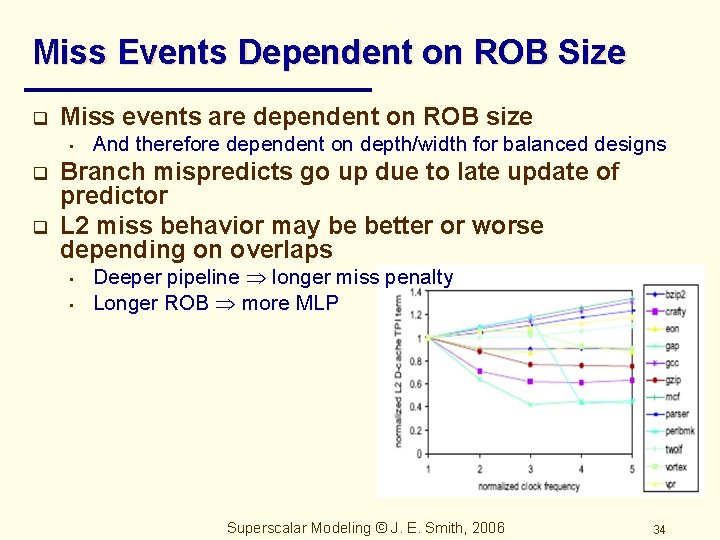

Miss Events Dependent on ROB Size
- Miss events are dependent on ROB size
  - And therefore dependent on depth/width for balanced designs
- Branch mispredicts go up due to late update of the predictor
- L2 miss behavior may be better or worse depending on overlaps
  - Deeper pipeline: longer miss penalty
  - Longer ROB: more MLP

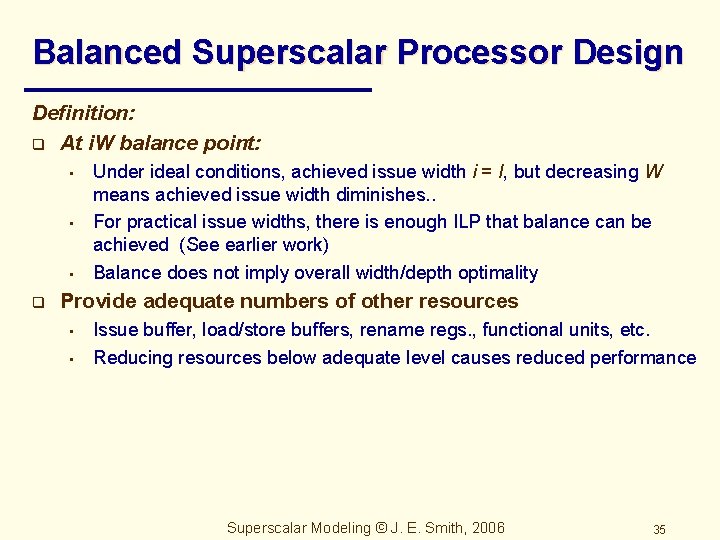

Balanced Superscalar Processor Design
- Definition: at the iW balance point
  - Under ideal conditions, achieved issue width i = I, but decreasing W means achieved issue width diminishes
  - For practical issue widths, there is enough ILP that balance can be achieved (see earlier work)
  - Balance does not imply overall width/depth optimality
- Provide adequate numbers of other resources
  - Issue buffer, load/store buffers, rename regs., functional units, etc.
  - Reducing resources below the adequate level causes reduced performance

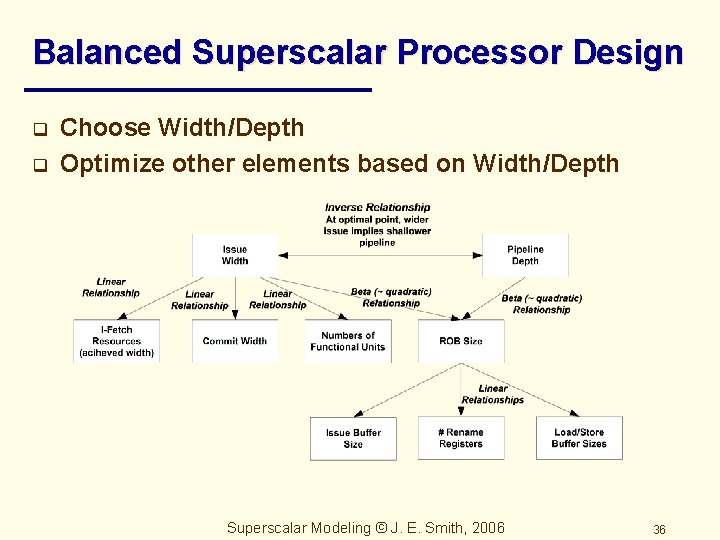

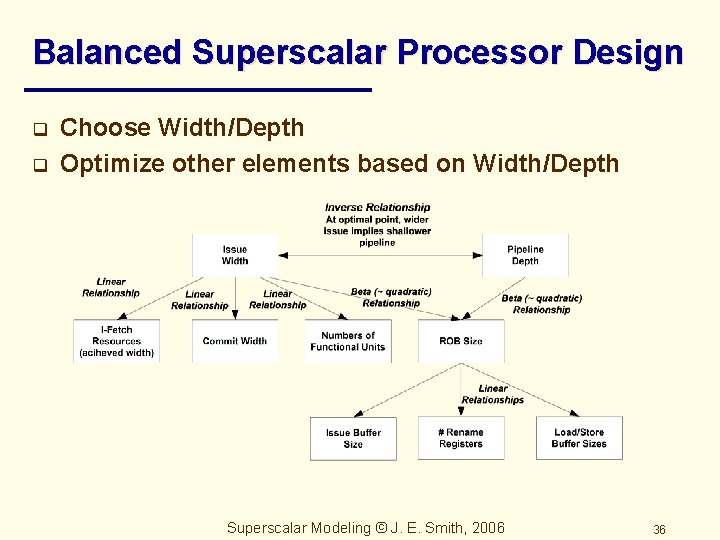

Balanced Superscalar Processor Design
- Choose width/depth
- Optimize other elements based on width/depth

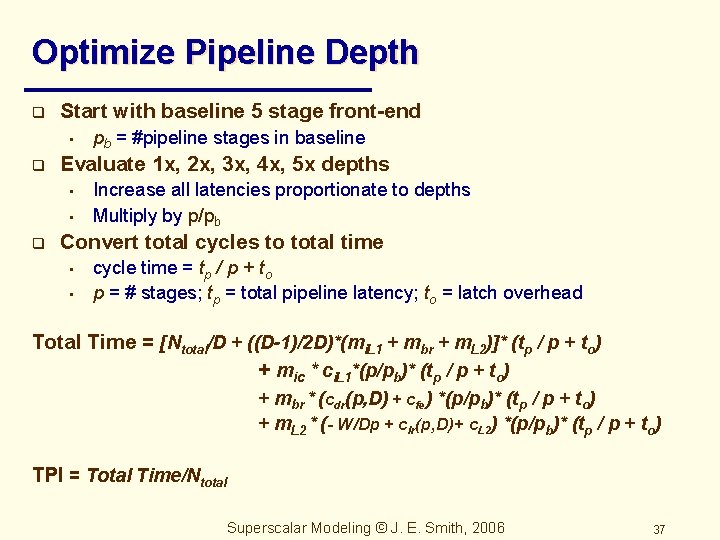

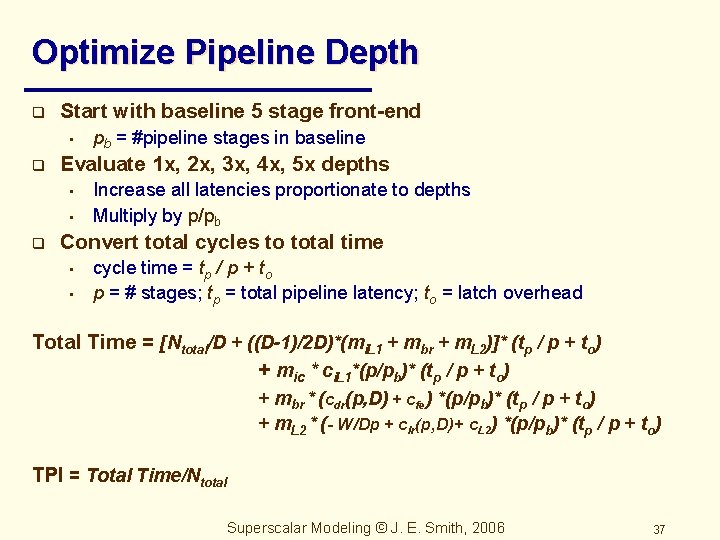

Optimize Pipeline Depth
- Start with baseline 5-stage front-end
  - $p_b$ = # pipeline stages in baseline
- Evaluate 1x, 2x, 3x, 4x, 5x depths
  - Increase all latencies in proportion to depth
  - Multiply by $p/p_b$
- Convert total cycles to total time
  - cycle time = $t_p/p + t_o$
  - $p$ = # stages; $t_p$ = total pipeline latency; $t_o$ = latch overhead

$$
\begin{aligned}
\text{Total Time} ={} & \left[\frac{N_{total}}{D} + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2})\right]\left(\frac{t_p}{p} + t_o\right) \\
& + m_{iL1}\,c_{iL1}\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{br}\,\bigl(c_{dr}(p, D) + c_{fe}\bigr)\,\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right) \\
& + m_{L2}\left(-\frac{W}{Dp} + c_{Lr}(p, D) + c_{L2}\right)\frac{p}{p_b}\left(\frac{t_p}{p} + t_o\right)
\end{aligned}
$$

$$\text{TPI} = \text{Total Time} / N_{total}$$
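A sketch of the depth sweep: evaluate TPI from the absolute-time formula for p = 1x to 5x the baseline p_b = 5 and keep the minimum. Simplifications are ours: c_dr and c_Lr are held constant rather than being functions of p and D, t_p = 55 and t_o = 1 to match t_p/t_o = 55, and the −W/(Dp) term is transcribed as printed. The workload parameters are hypothetical.

```python
def tpi(p, N_total, D, W, m_iL1, m_br, m_L2, c_iL1, c_dr, c_fe, c_Lr, c_L2,
        p_b=5, t_p=55.0, t_o=1.0):
    """Time per instruction from the absolute-time interval model.

    Simplification: c_dr and c_Lr are constants here, although the slide
    writes them as functions c_dr(p, D) and c_Lr(p, D).
    """
    cycle = t_p / p + t_o              # cycle time for a p-stage pipeline
    scale = p / p_b                    # latencies grow with relative depth
    base = (N_total / D + (D - 1) / (2 * D) * (m_iL1 + m_br + m_L2)) * cycle
    icache = m_iL1 * c_iL1 * scale * cycle
    branch = m_br * (c_dr + c_fe) * scale * cycle
    dcache = m_L2 * (-W / (D * p) + c_Lr + c_L2) * scale * cycle  # W/Dp as printed
    return (base + icache + branch + dcache) / N_total


def best_depth(params, multiples=(1, 2, 3, 4, 5), p_b=5):
    """Return the depth p (a multiple of the baseline p_b) with the lowest TPI."""
    return min((k * p_b for k in multiples), key=lambda p: tpi(p, p_b=p_b, **params))


# Hypothetical workload parameters (per million instructions).
params = dict(N_total=1_000_000, D=4, W=128, m_iL1=2000, m_br=8000, m_L2=1000,
              c_iL1=8, c_dr=6, c_fe=5, c_Lr=32, c_L2=200)
print(best_depth(params))
```

The two limits behave as expected: with no miss events the deepest pipeline wins, because only the shrinking cycle time matters; when miss penalties dominate, the shallowest wins.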

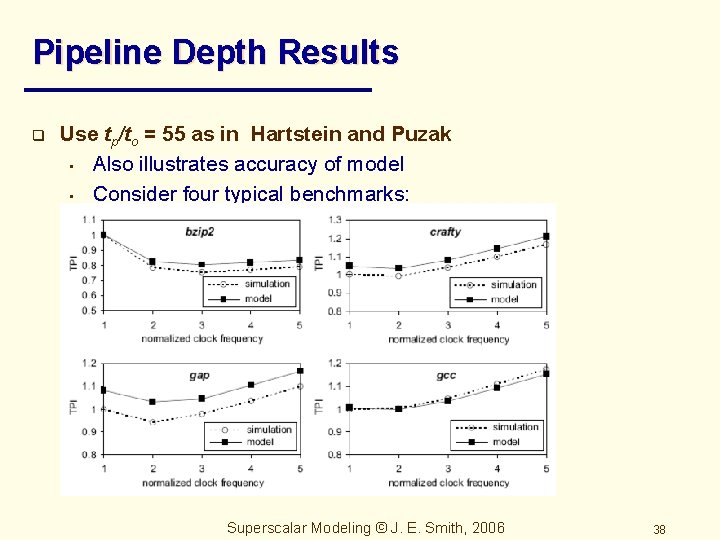

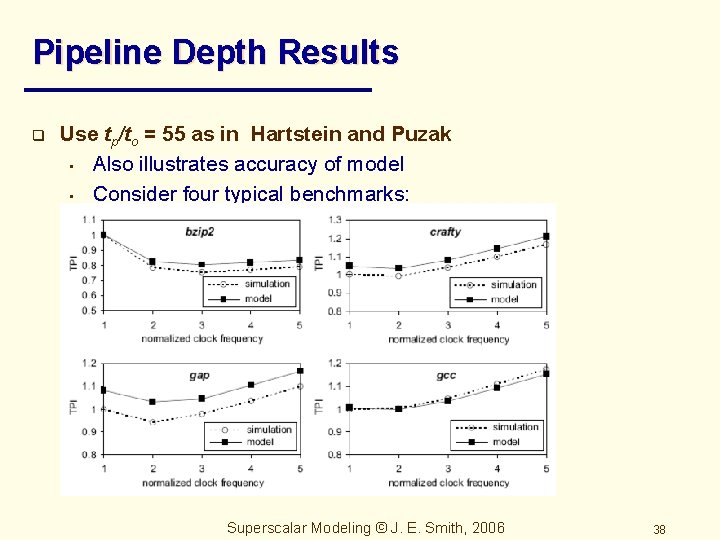

Pipeline Depth Results
- Use $t_p/t_o = 55$, as in Hartstein and Puzak
  - Also illustrates accuracy of model
- Consider four typical benchmarks:

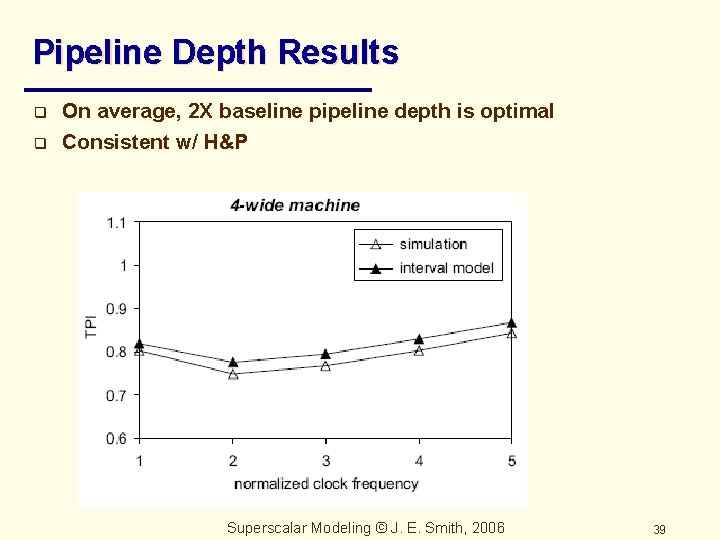

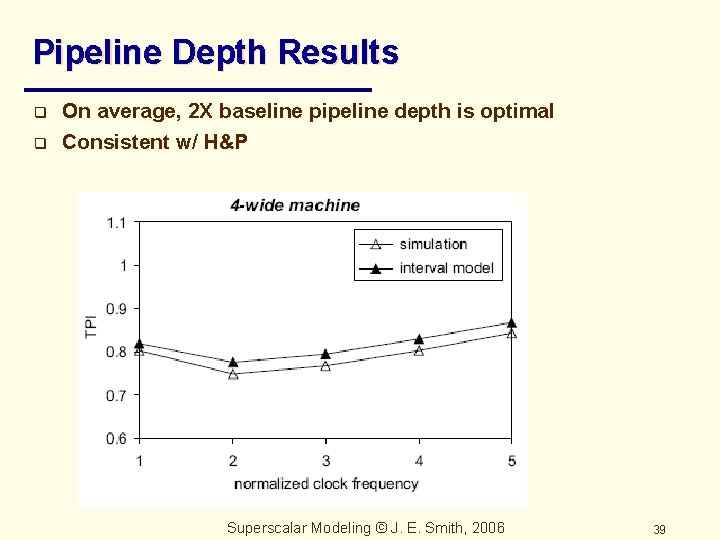

Pipeline Depth Results
- On average, 2x baseline pipeline depth is optimal
- Consistent with H&P

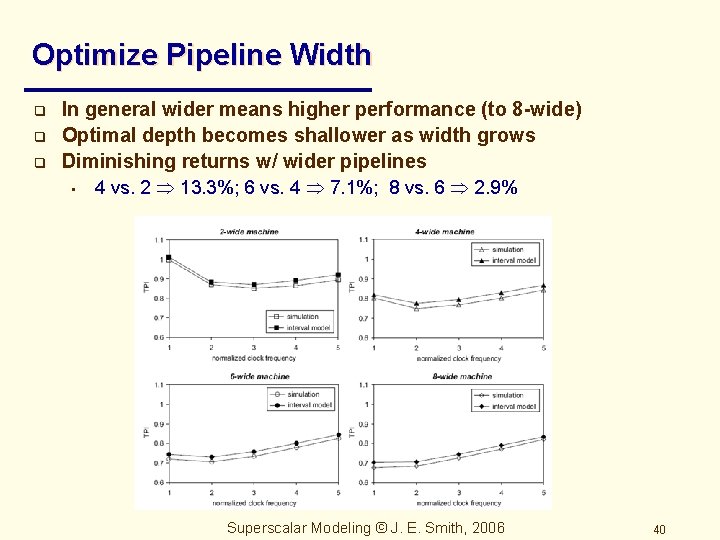

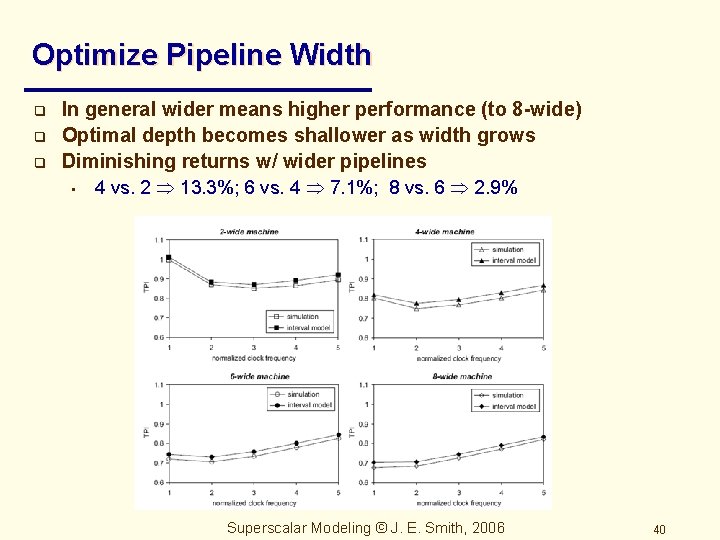

Optimize Pipeline Width
- In general, wider means higher performance (up to 8-wide)
- Optimal depth becomes shallower as width grows
- Diminishing returns with wider pipelines
  - 4 vs. 2: 13.3%; 6 vs. 4: 7.1%; 8 vs. 6: 2.9%

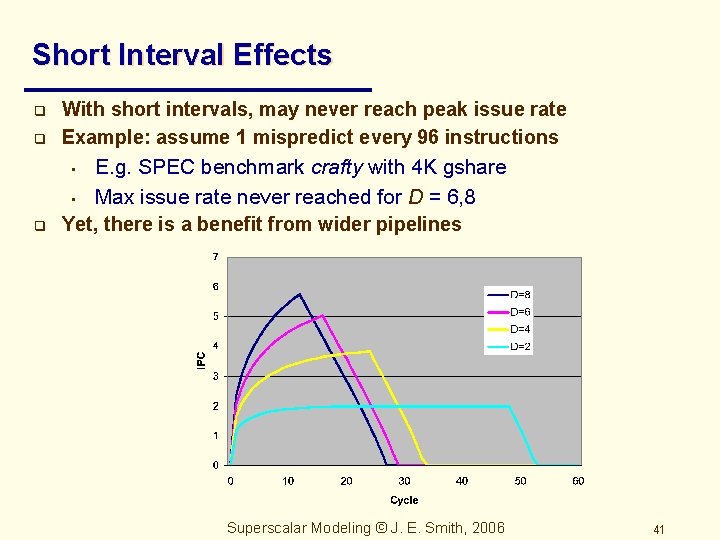

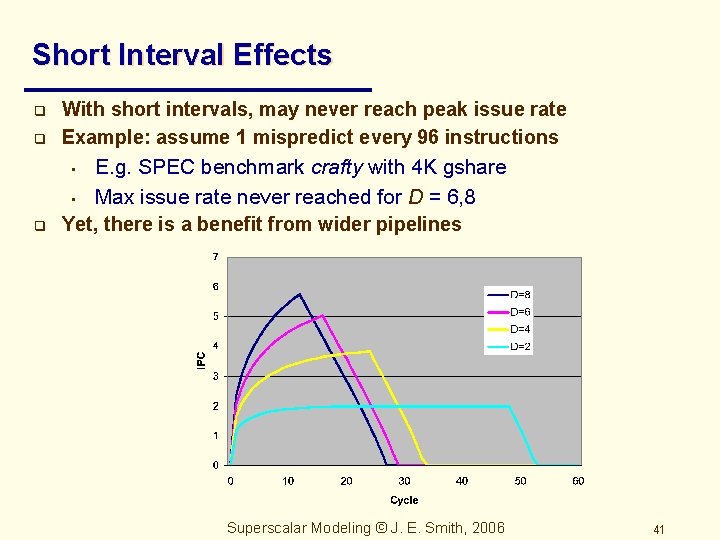

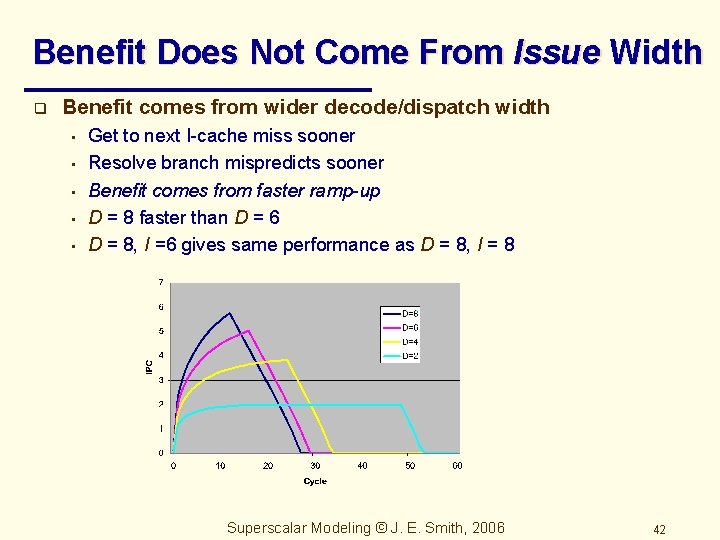

Short Interval Effects
- With short intervals, the peak issue rate may never be reached
- Example: assume 1 mispredict every 96 instructions
  - e.g., SPEC benchmark crafty with 4K gshare
  - Max issue rate never reached for D = 6, 8
- Yet, there is a benefit from wider pipelines

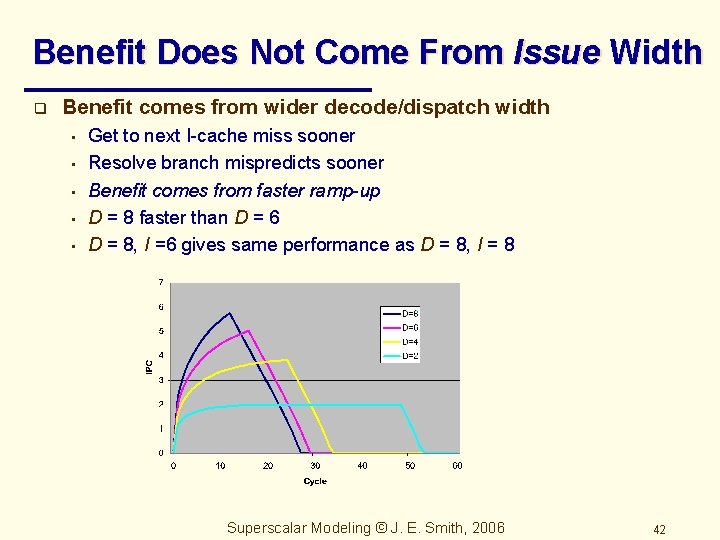

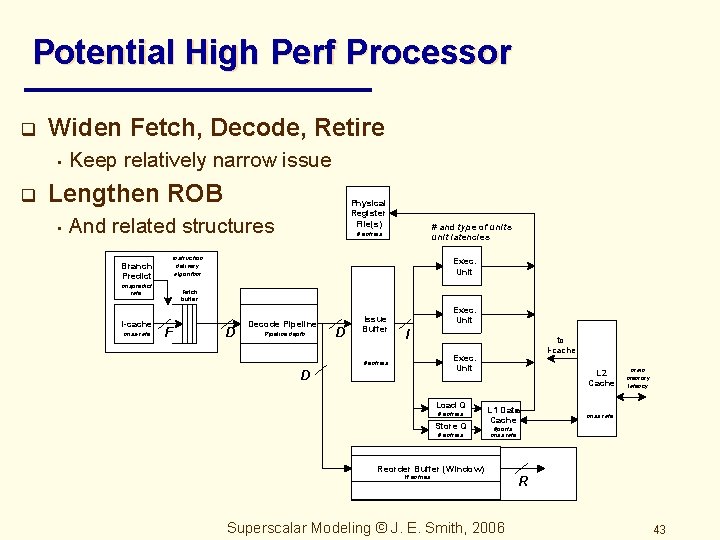

Benefit Does Not Come From Issue Width
- Benefit comes from wider decode/dispatch width
  - Get to the next I-cache miss sooner
  - Resolve branch mispredicts sooner
  - Benefit comes from faster ramp-up
- D = 8 faster than D = 6
- D = 8, I = 6 gives the same performance as D = 8, I = 8

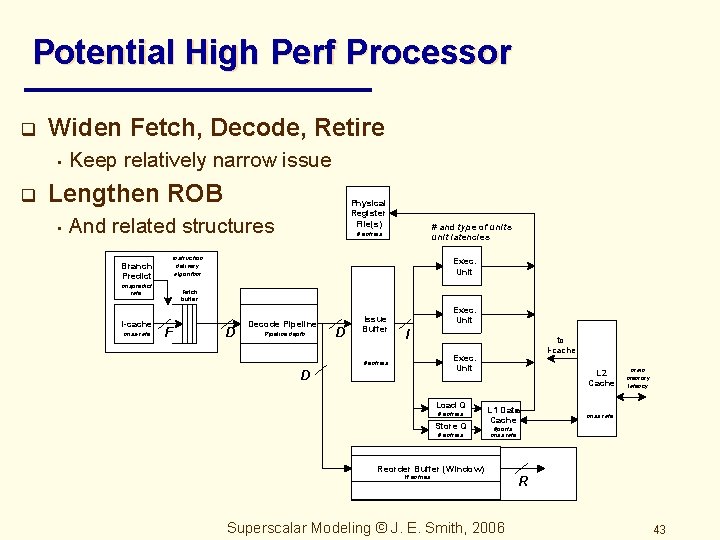

Potential High Perf Processor
- Widen fetch, decode, retire
  - Keep relatively narrow issue
- Lengthen ROB
  - Physical register file(s) and related structures

(Figure: processor block diagram with model parameters: branch predictor (instruction delivery algorithm, mispredict rate); I-cache (miss rate); fetch buffer (# entries) and fetch width F; decode pipeline (depth, width D); issue buffer (# entries) and issue width I; execution units (# and type of units, unit latencies); load Q and store Q (# entries); L1 data cache (# ports, miss rate); L2 cache (miss rate); main memory (latency); reorder buffer (window, W entries) and retire width R)

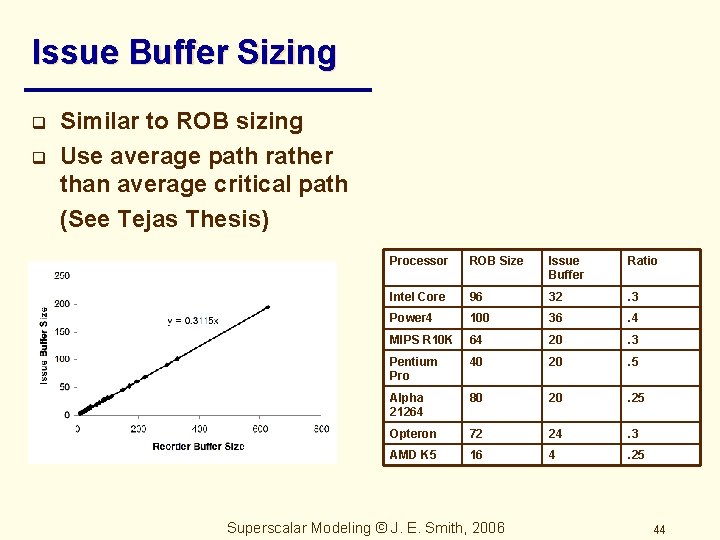

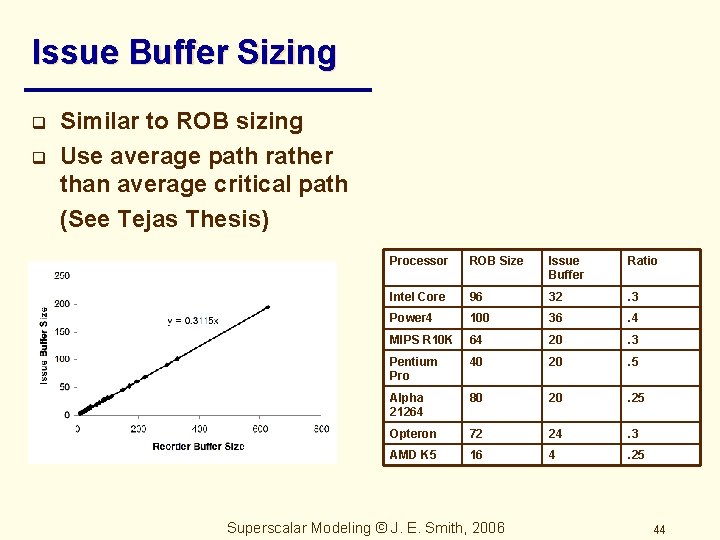

Issue Buffer Sizing
- Similar to ROB sizing
- Use average path rather than average critical path (see Tejas thesis)

| Processor | ROB Size | Issue Buffer | Ratio |
|---|---|---|---|
| Intel Core | 96 | 32 | .3 |
| Power4 | 100 | 36 | .4 |
| MIPS R10K | 64 | 20 | .3 |
| Pentium Pro | 40 | 20 | .5 |
| Alpha 21264 | 80 | 20 | .25 |
| Opteron | 72 | 24 | .3 |
| AMD K5 | 16 | 4 | .25 |

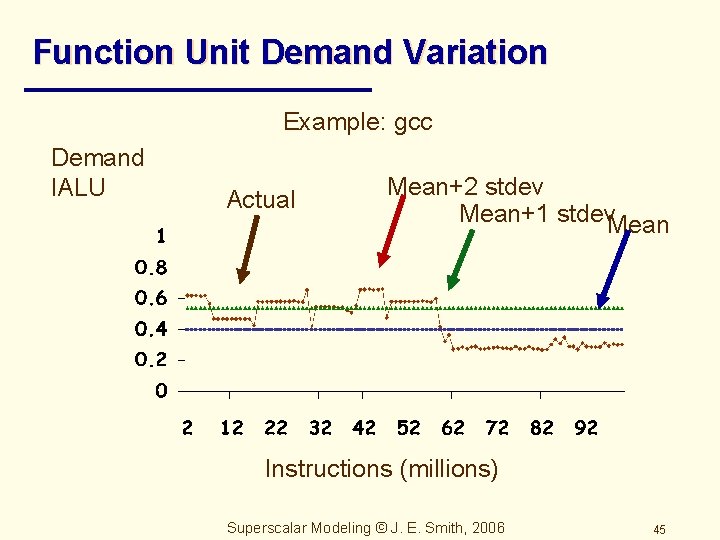

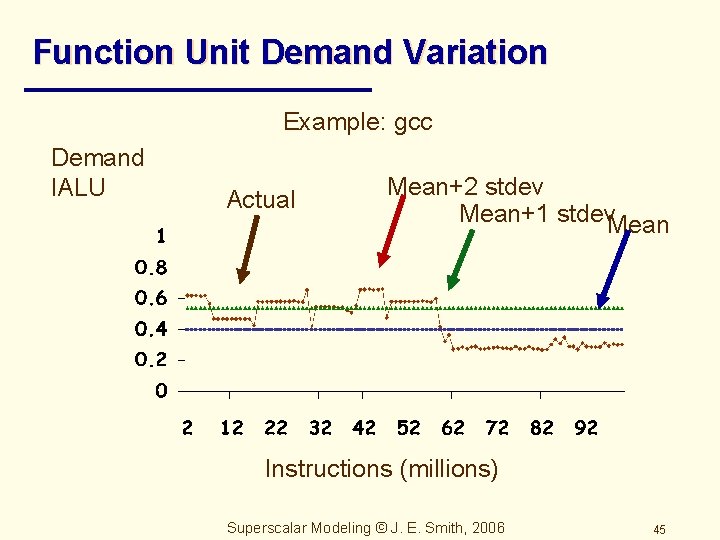

Function Unit Demand Variation
- Example: gcc

(Figure: IALU demand vs. instructions (millions); series: Actual, Mean, Mean+1 stdev, Mean+2 stdev)

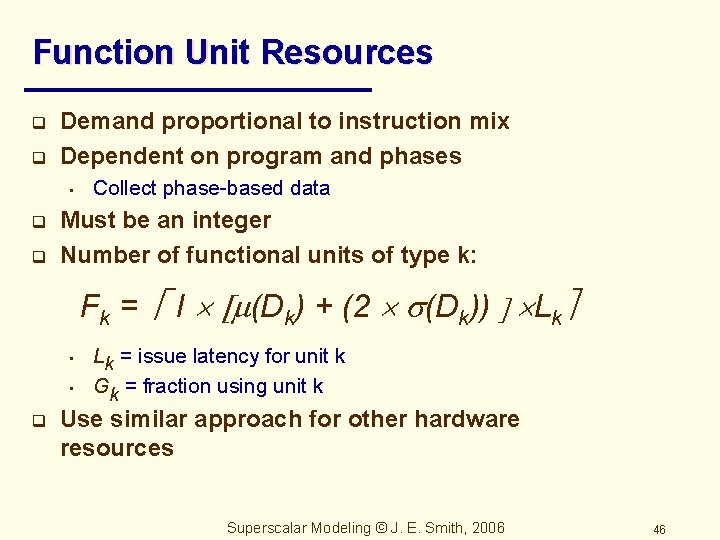

Function Unit Resources
- Demand proportional to instruction mix
- Dependent on program and phases
  - Collect phase-based data
- Must be an integer
- Number of functional units of type k (mean plus two standard deviations of demand $D_k$): $F_k = \lceil (\mu(D_k) + 2\sigma(D_k))\,L_k \rceil$
  - $L_k$ = issue latency for unit k
  - $G_k$ = fraction using unit k
- Use a similar approach for other hardware resources
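A sketch of the sizing rule, assuming the reading F_k = ⌈(μ(D_k) + 2σ(D_k))·L_k⌉, where D_k is the per-phase demand for unit k in operations per cycle (for example, achieved issue rate times G_k; that derivation and the use of the population standard deviation are our assumptions). The demand numbers are hypothetical.

```python
import math
import statistics


def functional_units_needed(phase_demand, issue_latency):
    """Units of one type: ceil((mean + 2 * stdev of per-phase demand) * L_k).

    phase_demand : demand for this unit type in each program phase, in
                   operations per cycle (e.g. issue rate times G_k; hypothetical)
    issue_latency: L_k, the issue latency of the unit
    """
    mu = statistics.mean(phase_demand)
    sigma = statistics.pstdev(phase_demand)   # population std. dev. over phases
    return math.ceil((mu + 2 * sigma) * issue_latency)


# Hypothetical per-phase integer-ALU demand.
demand = [1.0, 1.2, 1.4, 1.2, 1.2]
print(functional_units_needed(demand, 1), functional_units_needed(demand, 2))  # 2 3
```

The ceiling enforces the slide's "must be an integer"; sizing to mean plus two standard deviations covers phases of above-average demand rather than only the average.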

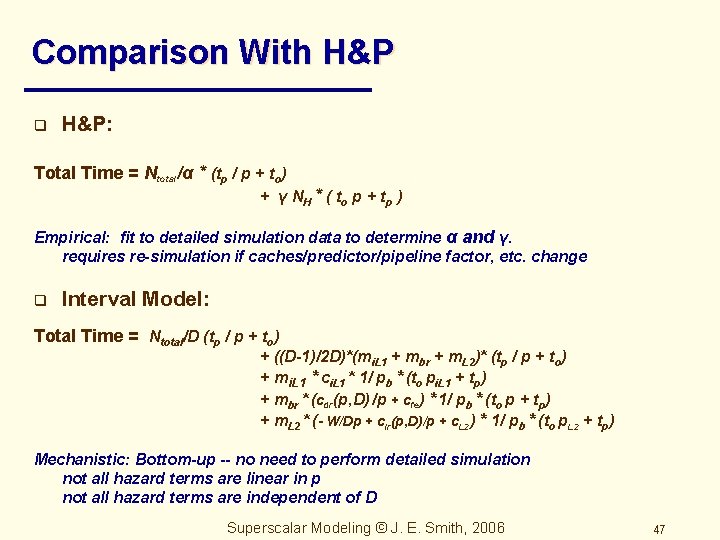

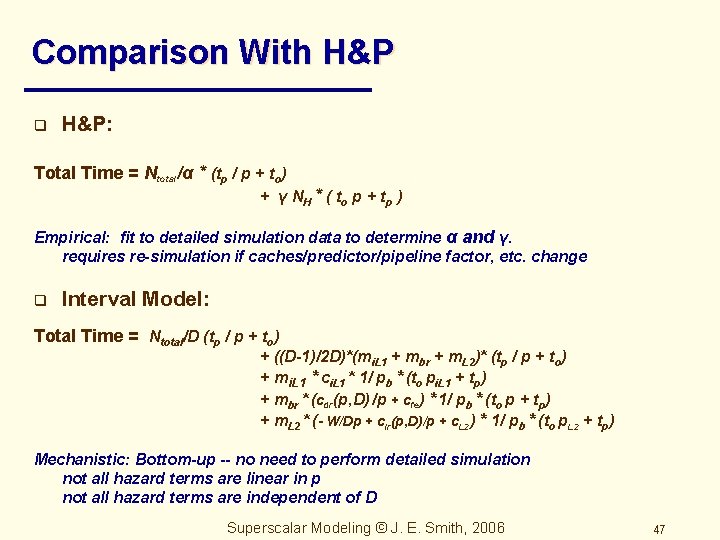

Comparison With H&P

H&P:

$$\text{Total Time} = \frac{N_{total}}{\alpha}\left(\frac{t_p}{p} + t_o\right) + \gamma\,N_H\,(t_o p + t_p)$$

- Empirical: fit to detailed simulation data to determine α and γ
- Requires re-simulation if caches, predictor, pipeline factor, etc. change

Interval Model:

$$
\begin{aligned}
\text{Total Time} ={} & \frac{N_{total}}{D}\left(\frac{t_p}{p} + t_o\right) + \frac{D-1}{2D}\,(m_{iL1} + m_{br} + m_{L2})\left(\frac{t_p}{p} + t_o\right) \\
& + m_{iL1}\,c_{iL1}\,\frac{1}{p_b}\,(t_o\,p_{iL1} + t_p) \\
& + m_{br}\left(\frac{c_{dr}(p, D)}{p} + c_{fe}\right)\frac{1}{p_b}\,(t_o\,p + t_p) \\
& + m_{L2}\left(-\frac{W}{Dp} + \frac{c_{Lr}(p, D)}{p} + c_{L2}\right)\frac{1}{p_b}\,(t_o\,p_{L2} + t_p)
\end{aligned}
$$

- Mechanistic: bottom-up; no need to perform detailed simulation
- Not all hazard terms are linear in p
- Not all hazard terms are independent of D

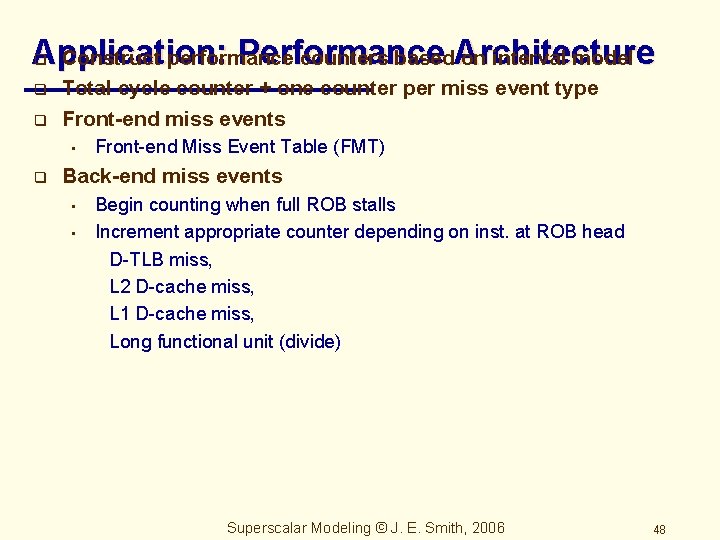

Application: Performance Counter Architecture
- Construct performance counters based on the interval model
- Total cycle counter + one counter per miss event type
- Front-end miss events
  - Front-end Miss Event Table (FMT)
- Back-end miss events
  - Begin counting when a full ROB stalls
  - Increment the appropriate counter depending on the instruction at the ROB head: D-TLB miss, L2 D-cache miss, L1 D-cache miss, long functional unit (divide)
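A behavioral sketch of the back-end counting rule: one total-cycle counter, plus one counter per back-end event that is charged on each cycle the ROB is full and stalled, according to the event of the instruction at the ROB head. The per-cycle log format and the event label strings are hypothetical; real hardware would do this with counters, not a Python loop.

```python
from collections import Counter

# Back-end events named on the slide; the string labels are hypothetical.
BACKEND_EVENTS = {"D-TLB miss", "L2 D-cache miss", "L1 D-cache miss", "long FU (divide)"}


def attribute_backend_stalls(cycle_log):
    """Attribute full-ROB stall cycles to the event of the instruction at the ROB head.

    cycle_log: one (rob_full_stall, head_event) pair per cycle, where
               head_event is a label from BACKEND_EVENTS or None.
    Returns (total cycles, Counter of stall cycles per back-end event).
    """
    counters = Counter()
    total_cycles = 0
    for rob_full_stall, head_event in cycle_log:
        total_cycles += 1                      # total cycle counter
        if rob_full_stall and head_event in BACKEND_EVENTS:
            counters[head_event] += 1          # charge this cycle to the head's event
    return total_cycles, counters


log = [(False, None), (True, "L2 D-cache miss"), (True, "L2 D-cache miss"),
       (True, "D-TLB miss"), (False, "L1 D-cache miss")]
total, stalls = attribute_backend_stalls(log)
print(total, dict(stalls))  # 5 {'L2 D-cache miss': 2, 'D-TLB miss': 1}
```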

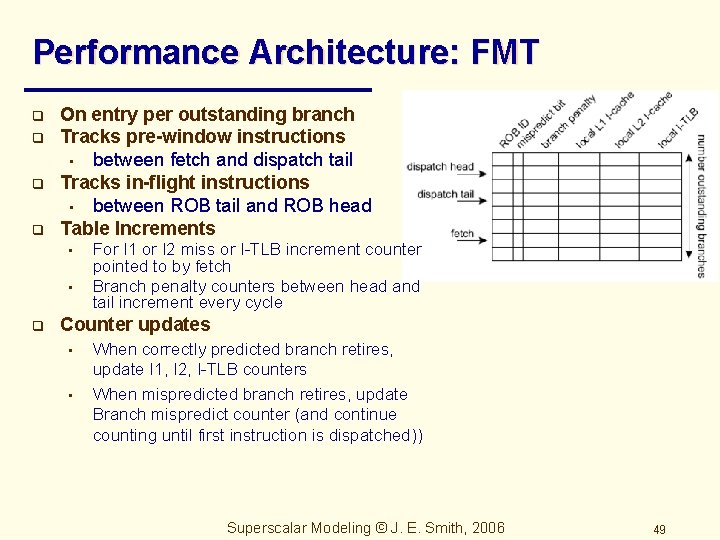

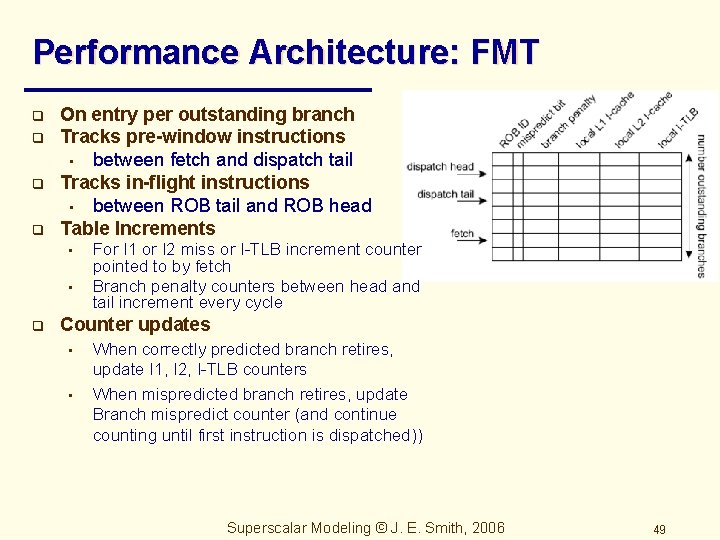

Performance Architecture: FMT
- One entry per outstanding branch
- Tracks pre-window instructions
  - between fetch and dispatch tail
- Tracks in-flight instructions
  - between ROB tail and ROB head
- Table increments
  - For an I1 or I2 miss or I-TLB miss, increment the counter pointed to by fetch
  - Branch penalty counters between head and tail increment every cycle
- Counter updates
  - When a correctly predicted branch retires, update the I1, I2, I-TLB counters
  - When a mispredicted branch retires, update the branch mispredict counter (and continue counting until the first instruction is dispatched)

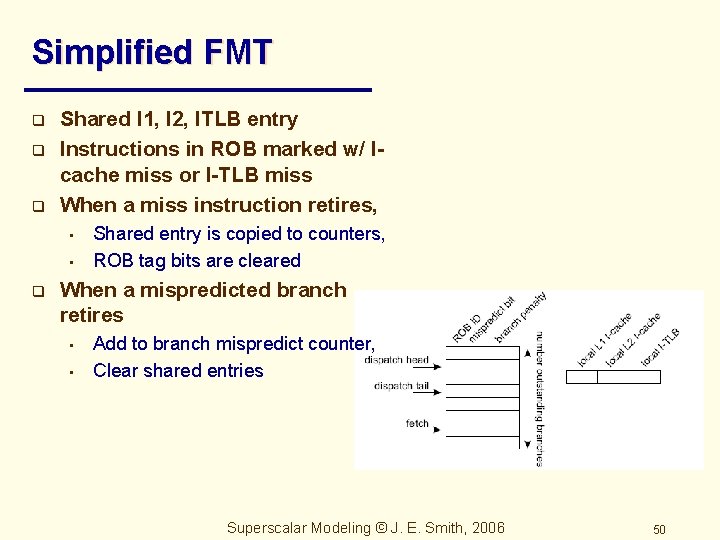

Simplified FMT
- Shared I1, I2, ITLB entry
- Instructions in ROB marked with I-cache miss or I-TLB miss
- When a miss instruction retires,
  - Shared entry is copied to counters
  - ROB tag bits are cleared
- When a mispredicted branch retires
  - Add to branch mispredict counter
  - Clear shared entries

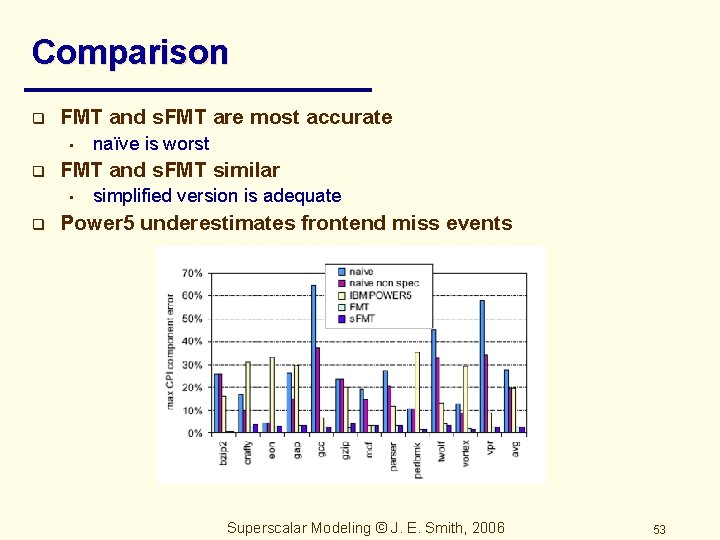

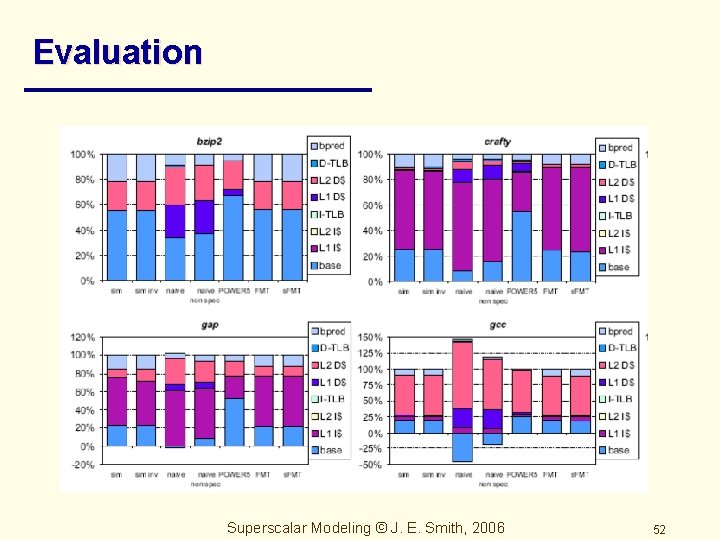

Evaluation
- Compare:
  - Simulation: add miss events one at a time and measure the difference
  - Simulation-rev: same as above, but reverse the order of miss events
  - naïve: count miss events, multiply by a fixed penalty
  - naïve non-spec: similar to above, but wrong-path events not counted
  - Power5: IBM Power5 method
  - FMT
  - sFMT

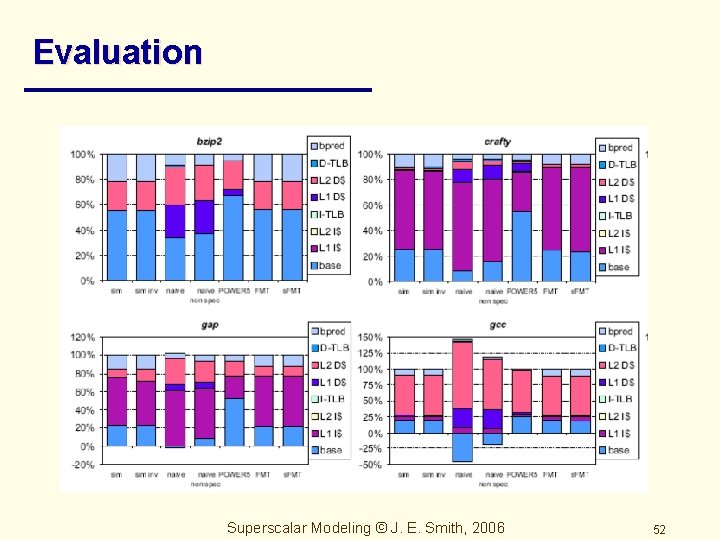

Evaluation

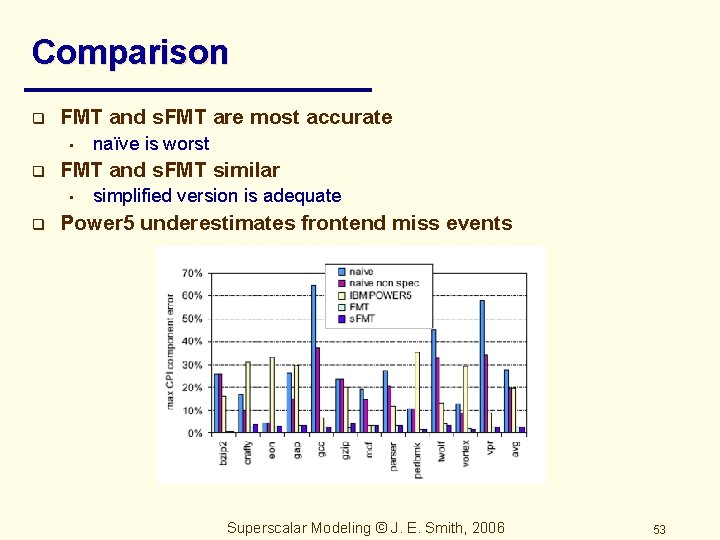

Comparison
- FMT and sFMT are most accurate
  - naïve is worst
- FMT and sFMT are similar
  - Simplified version is adequate
- Power5 underestimates front-end miss events

Interval Model Development
- Michaud, Seznec, Jourdan: issue transient
- Tejas gap model: all transients
- Taha and Wills: interval (macro block) model
- Hartstein and Puzak: optimal pipelines

Conclusions
- Intervals yield a divide-and-conquer approach
- Supports intuition (adds confidence to intuition)
- It's all about transients
  - The only things that count are cache misses and branch mispredictions
- Application to automated design, performance monitoring, very fast simulation, optimizing compiler analysis, etc.
- Analysis of pipeline limits
  - Reinforces conventional wisdom
  - We are close to the practical limits for depth and width
- Extends to energy modeling (Tejas PhD)