A hierarchical unsupervised growing neural network for clustering

- Slides: 28

A hierarchical unsupervised growing neural network for clustering gene expression patterns

Javier Herrero, Alfonso Valencia & Joaquin Dopazo

Seminar “Neural Networks in Bioinformatics” by Barbara Hammer

Presentation by Nicolas Neubauer, January 25th, 2003

25. 1. 2002 SOTA

Topics

- Introduction
  - Motivation
  - Requirements
- Parent Techniques and their Problems
- SOTA
- Conclusion

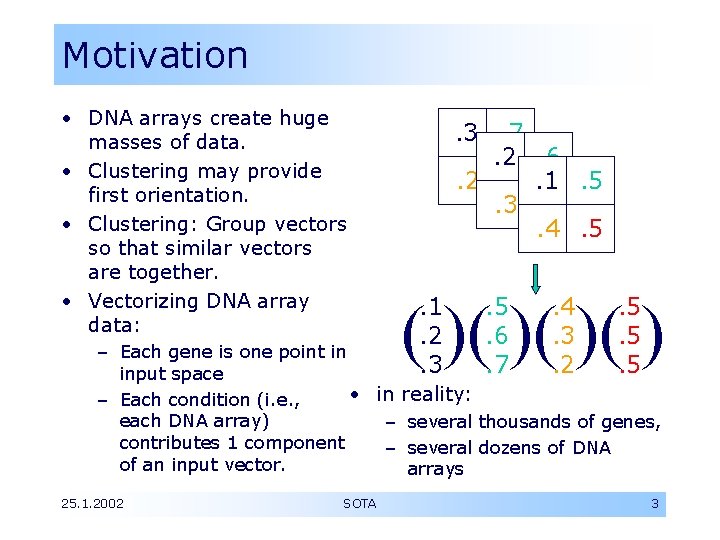

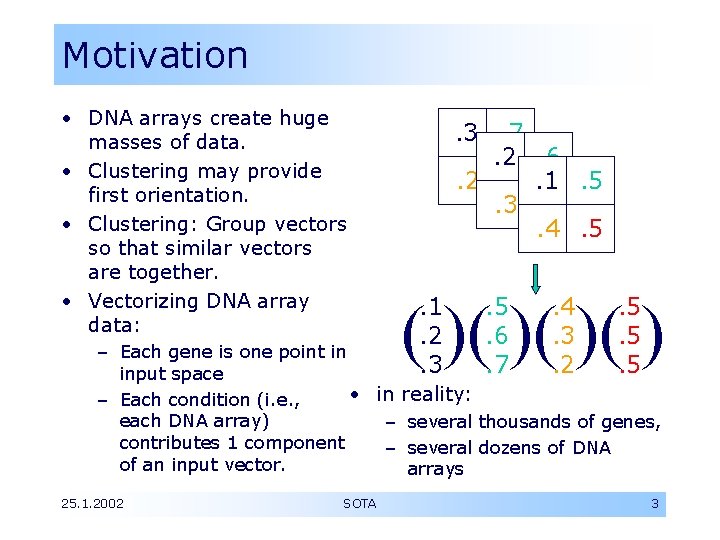

Motivation

- DNA arrays create huge masses of data.
- Clustering may provide a first orientation.
- Clustering: group vectors so that similar vectors end up together.
- Vectorizing DNA array data:
  - Each gene is one point in input space.
  - Each condition (i.e., each DNA array) contributes one component of an input vector.
  - Example: four genes measured on three arrays give the vectors (.1, .2, .3), (.5, .6, .7), (.4, .3, .2) and (.5, .5, .5).
- In reality:
  - several thousand genes,
  - several dozen DNA arrays.
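
The vectorizing step can be sketched in a few lines of Python. This is only an illustration: the `arrays` layout (one list per DNA array, genes in the same order on every array) and the function name `vectorize` are assumptions, and the numbers are toy values rather than real measurements.

```python
# Hypothetical toy data: one list per DNA array (condition),
# one entry per gene, with the same gene order on every array.
arrays = [
    [0.1, 0.5, 0.4, 0.5],  # condition 1
    [0.2, 0.6, 0.3, 0.5],  # condition 2
    [0.3, 0.7, 0.2, 0.5],  # condition 3
]


def vectorize(arrays):
    """Turn per-array measurements into one expression vector per gene."""
    # zip(*arrays) regroups the values gene by gene, so each array
    # contributes exactly one component to every gene vector.
    return [list(profile) for profile in zip(*arrays)]


gene_vectors = vectorize(arrays)
for gene_index, vector in enumerate(gene_vectors):
    print(gene_index, vector)
```

With several thousand genes and several dozen arrays, the result is simply a longer list of longer vectors.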

Requirements

- The clustering algorithm should
  - be tolerant to noise,
  - capture high-level (inter-cluster) relations,
  - be able to scale its topology based on
    - the topology of the input data,
    - the user's required level of detail.
- Clustering is based on a similarity measure.
  - The biological sense of the distance function must be validated.

Topics

- Introduction
- Parent Techniques and their Problems
  - Hierarchical Clustering
  - SOM
- SOTA
- Conclusion

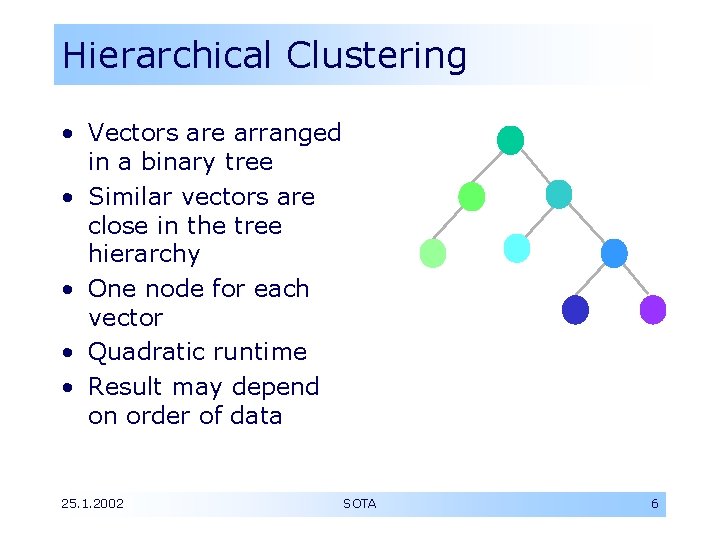

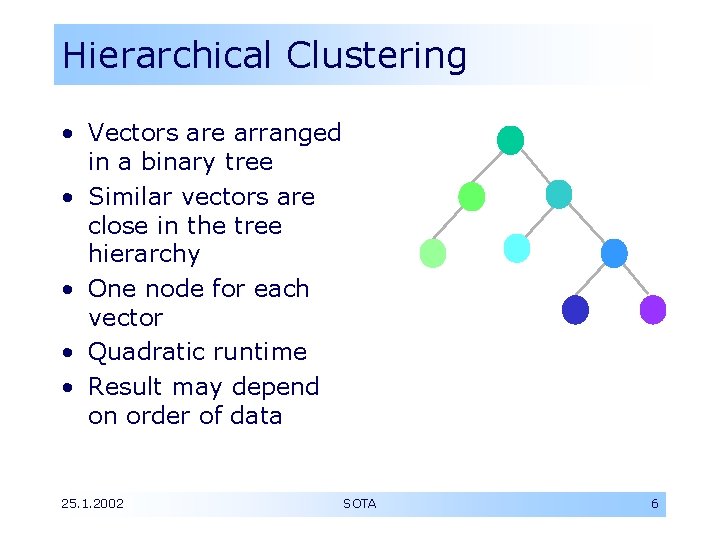

Hierarchical Clustering

- Vectors are arranged in a binary tree.
- Similar vectors are close in the tree hierarchy.
- One node for each vector
- Quadratic runtime
- The result may depend on the order of the data.

Hierarchical Clustering (II)

The clustering algorithm should

- be tolerant to noise: no. The data is used directly to define positions in the tree.
- capture high-level (inter-cluster) relations: yes. The tree structure gives very clear relationships between clusters.
- be able to scale its topology based on
  - the topology of the input data: yes. The tree is built to fit the distribution of the input data.
  - the user's required level of detail: no. The tree may be reduced by later analysis, but it has to be fully built first.

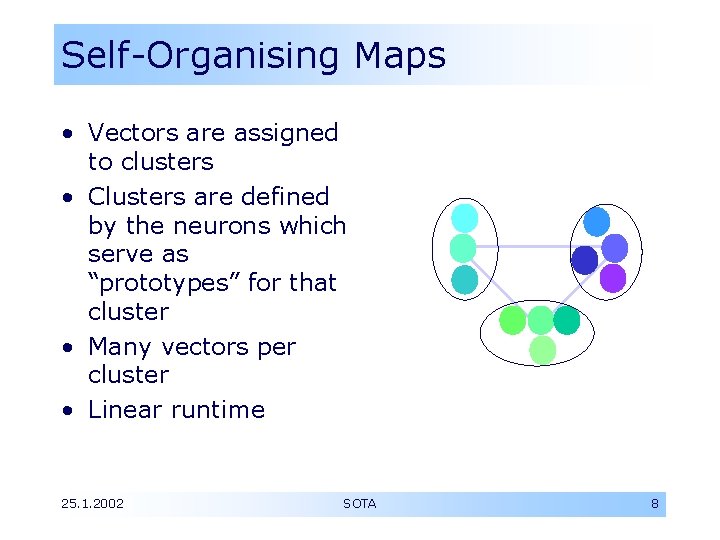

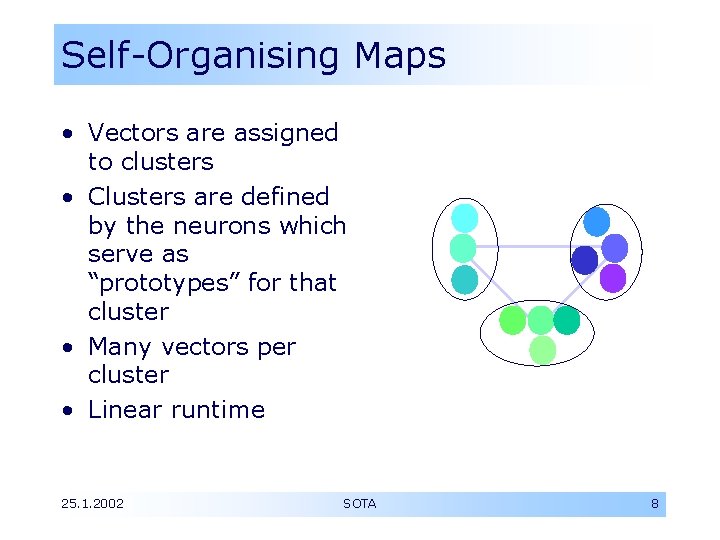

Self-Organising Maps

- Vectors are assigned to clusters.
- Clusters are defined by neurons, which serve as “prototypes” for their cluster.
- Many vectors per cluster
- Linear runtime

Self-Organising Maps (II)

The clustering algorithm should

- be tolerant to noise: yes. Data is not aligned directly but in relation to prototypes, which are averages.
- capture high-level (inter-cluster) relations: unclear. The paper says no, but what about the neighbourhood of neurons?
- be able to scale its topology based on
  - the topology of the input data: no. The number of clusters is set beforehand, and the data may be stretched to fit the SOM's topology: “if some particular type of profile is abundant, … this type of data will populate the vast majority of clusters”
  - the user's required level of detail: yes. The choice of the number of clusters influences the level of detail.

Topics

- Introduction
- Parent Techniques and their Problems
- SOTA
  - Growing Cell Structure
  - Learning Algorithm
  - Distance Measures
  - Abortion Criteria
- Conclusion

SOTA Overview

- SOTA stands for self-organising tree algorithm.
- SOTA combines the strengths of hierarchical clustering and SOMs:
  - Align clusters in a hierarchical structure.
  - Use cluster prototypes created in a SOM-like way.
- New idea: Growing Cell Structures
  - The topology is built up incrementally as the data requires it.

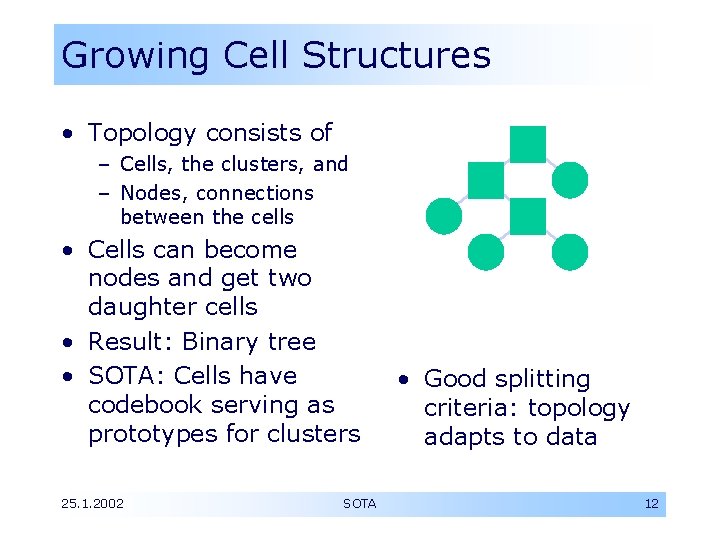

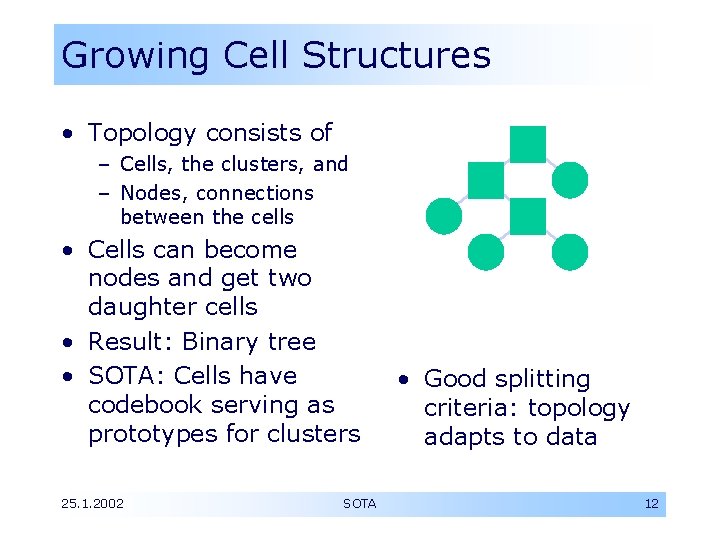

Growing Cell Structures

- The topology consists of
  - cells, the clusters, and
  - nodes, the connections between the cells.
- Cells can become nodes and get two daughter cells.
- Result: binary tree
- SOTA: cells have a codebook serving as the prototype for their cluster.
- Good splitting criteria: the topology adapts to the data.

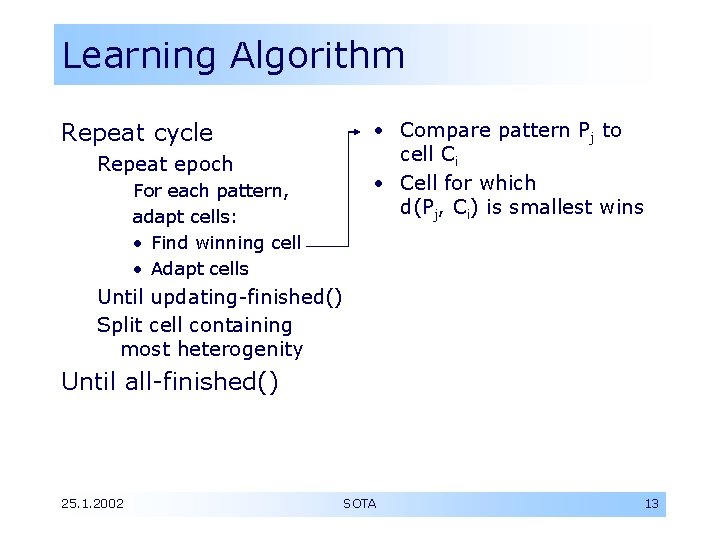

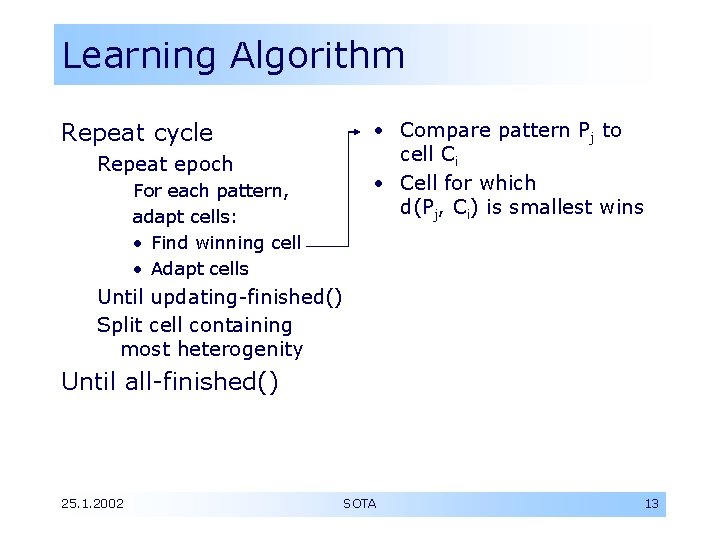

Learning Algorithm

- Compare pattern Pj to cell Ci.
- The cell for which d(Pj, Ci) is smallest wins.

Overall loop:

- Repeat cycle
  - Repeat epoch
    - For each pattern, adapt cells:
      - Find winning cell
      - Adapt cells
  - Until updating-finished()
  - Split cell containing most heterogeneity
- Until all-finished()
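
The winner search can be sketched as follows. It is a minimal illustration, not the authors' implementation: the function names, the list-of-lists representation of codebooks and the default Euclidean distance are assumptions.

```python
import math


def euclidean(x, y):
    """Euclidean distance between two equally long vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def find_winner(pattern, cells, distance=euclidean):
    """Return the index of the cell whose codebook is closest to the pattern."""
    return min(range(len(cells)), key=lambda i: distance(pattern, cells[i]))


# Hypothetical codebook vectors of two cells.
cells = [[0.2, 0.2, 0.2], [0.6, 0.6, 0.6]]
winner = find_winner([0.5, 0.6, 0.7], cells)
print(winner)
```

Any other distance function with the same two-argument signature can be passed in as `distance`.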

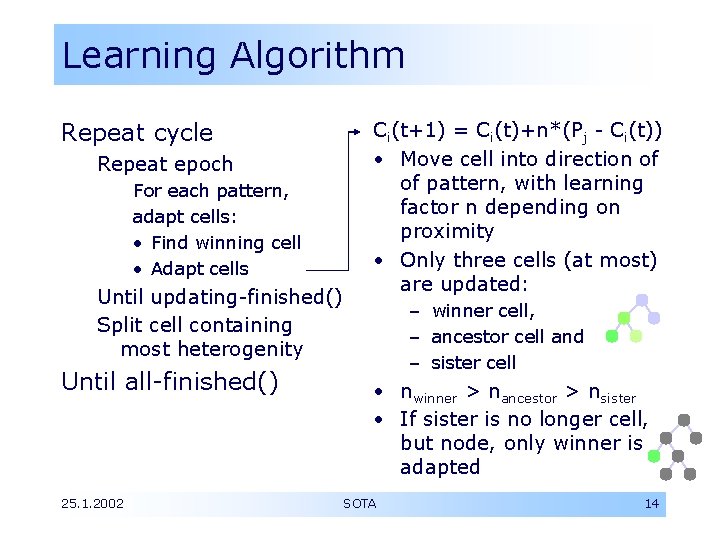

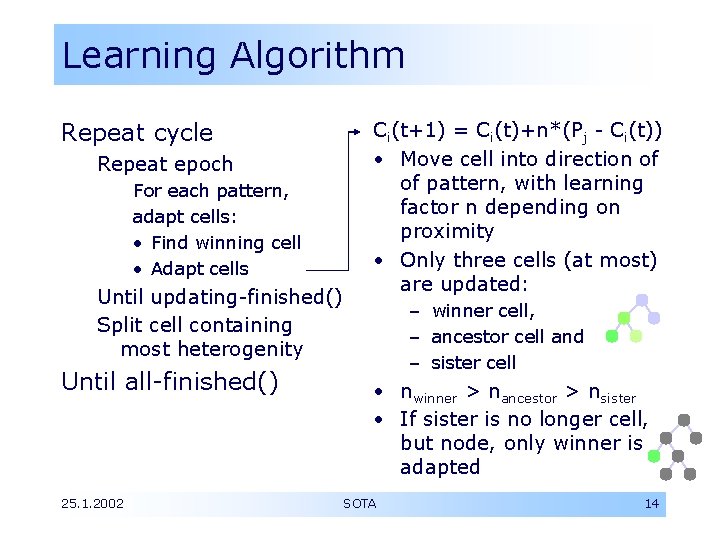

Learning Algorithm

- Update rule: $C_i(t+1) = C_i(t) + \eta \cdot (P_j - C_i(t))$
- The cell is moved in the direction of the pattern, with a learning factor $\eta$ that depends on proximity.
- At most three cells are updated:
  - the winner cell,
  - the ancestor cell and
  - the sister cell.
- $\eta_{winner} > \eta_{ancestor} > \eta_{sister}$
- If the sister is no longer a cell but a node, only the winner is adapted.
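
A minimal sketch of this adaptation step, under stated assumptions: codebooks are plain lists, the function names are made up for illustration, and the default learning factors are placeholder values chosen only to respect the ordering winner > ancestor > sister.

```python
def adapt(codebook, pattern, rate):
    """Move a codebook vector towards the pattern by the given learning factor."""
    return [c + rate * (p - c) for c, p in zip(codebook, pattern)]


def adapt_neighbourhood(pattern, winner, ancestor, sister, sister_is_cell,
                        rate_winner=0.01, rate_ancestor=0.005, rate_sister=0.001):
    """Update the winner and, if the sister is still a cell, ancestor and sister.

    The default rates are hypothetical placeholders.
    """
    winner = adapt(winner, pattern, rate_winner)
    if sister_is_cell:
        ancestor = adapt(ancestor, pattern, rate_ancestor)
        sister = adapt(sister, pattern, rate_sister)
    return winner, ancestor, sister


# Exaggerated rates make the effect easy to see.
w, a, s = adapt_neighbourhood([1.0], [0.0], [0.0], [0.0], True, 0.5, 0.25, 0.125)
print(w, a, s)
```

Returning new lists instead of mutating in place keeps the sketch easy to test; an in-place update would work just as well.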

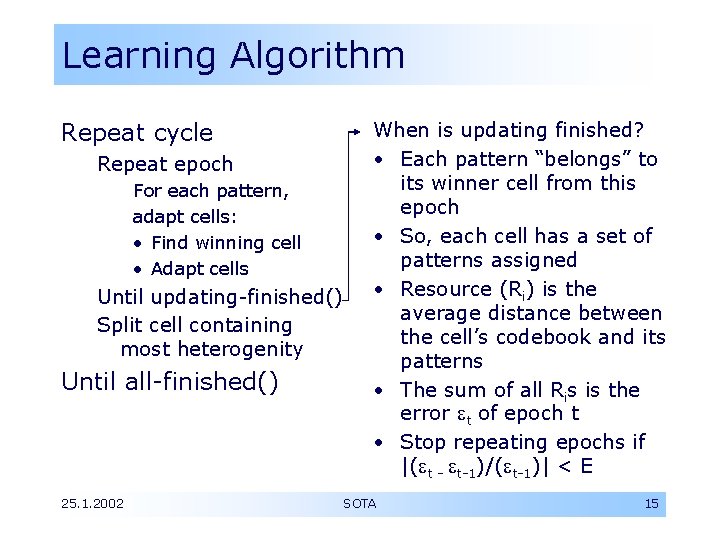

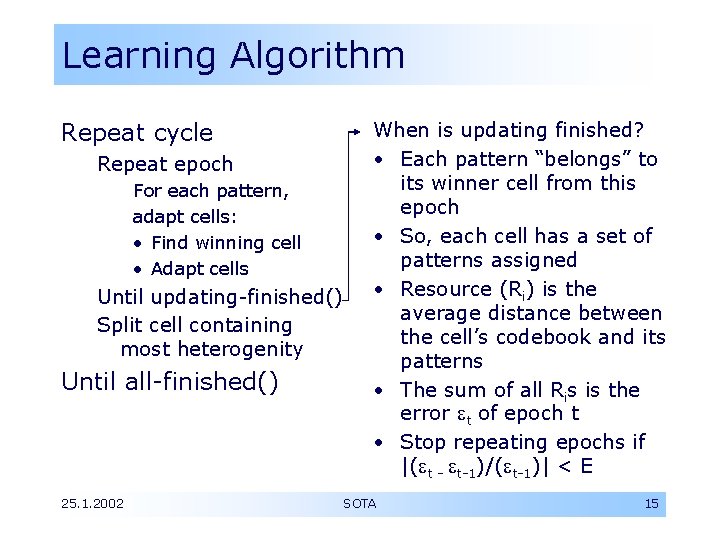

Learning Algorithm

When is updating finished?

- Each pattern “belongs” to its winner cell from this epoch.
- So each cell has a set of patterns assigned.
- The resource $R_i$ is the average distance between the cell's codebook and its patterns.
- The sum of all $R_i$ is the error $\varepsilon_t$ of epoch $t$.
- Stop repeating epochs if $|(\varepsilon_t - \varepsilon_{t-1}) / \varepsilon_{t-1}| < E$.
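
The epoch-level bookkeeping could look like the sketch below. The data layout (a dict from cell index to assigned patterns), the function names and the default threshold are assumptions made for illustration; the guard for a zero previous error is also an addition, since the slide's criterion is undefined in that case.

```python
import math


def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def resource(codebook, patterns, distance=euclidean):
    """Average distance between a cell's codebook and its assigned patterns."""
    return sum(distance(p, codebook) for p in patterns) / len(patterns)


def epoch_error(cells, assignments, distance=euclidean):
    """Sum of the resources of all cells that have patterns assigned."""
    return sum(resource(cells[i], patterns, distance)
               for i, patterns in assignments.items() if patterns)


def updating_finished(error_now, error_before, threshold=1e-3):
    """Relative change of the error between two epochs is below the threshold."""
    if error_before == 0:
        return True  # added guard: nothing left to improve
    return abs((error_now - error_before) / error_before) < threshold


# Hypothetical state after one epoch.
cells = [[0.0, 0.0], [1.0, 1.0]]
assignments = {0: [[3.0, 4.0], [0.0, 0.0]], 1: [[1.0, 1.0]]}
print(epoch_error(cells, assignments))
```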

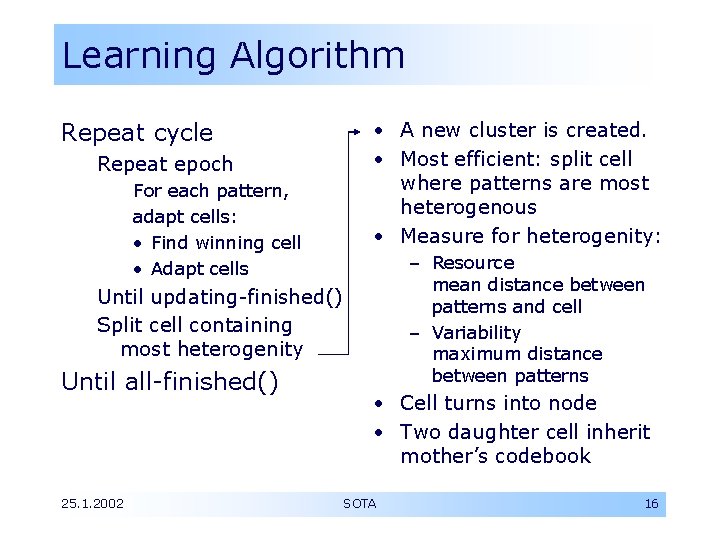

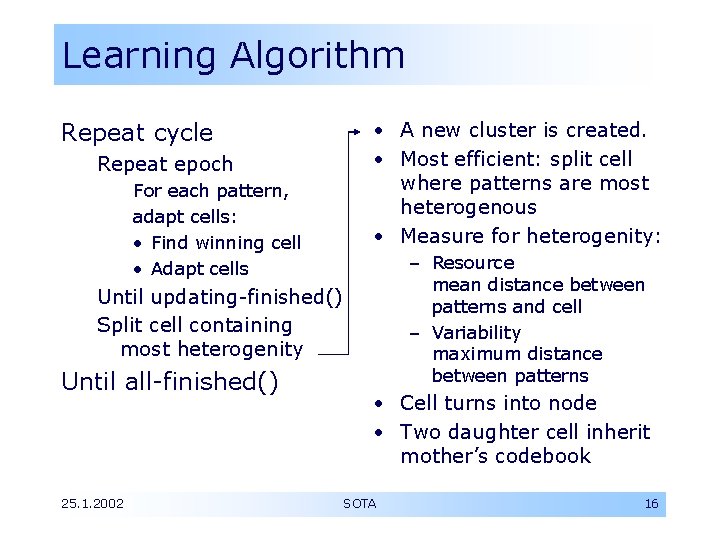

Learning Algorithm

- A new cluster is created.
- Most efficient: split the cell whose patterns are most heterogeneous.
- Measures for heterogeneity:
  - Resource: mean distance between patterns and cell
  - Variability: maximum distance between patterns
- The cell turns into a node.
- Its two daughter cells inherit the mother's codebook.
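
A possible sketch of the splitting step, using variability as the heterogeneity measure. The `Cell` class, its attributes and the toy assignment of patterns are hypothetical; the slide does not prescribe a data structure.

```python
import math
from itertools import combinations


def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def variability(patterns, distance=euclidean):
    """Maximum distance between any two patterns (0.0 for fewer than two)."""
    return max((distance(a, b) for a, b in combinations(patterns, 2)), default=0.0)


class Cell:
    """Tree element: a cell while it has no children, a node afterwards."""

    def __init__(self, codebook, parent=None):
        self.codebook = list(codebook)
        self.parent = parent
        self.children = []

    @property
    def is_node(self):
        return bool(self.children)


def split(cell):
    """Turn a cell into a node whose two daughters copy its codebook."""
    cell.children = [Cell(cell.codebook, parent=cell),
                     Cell(cell.codebook, parent=cell)]
    return cell.children


# Hypothetical tree with two cells and their assigned patterns.
root = Cell([0.4, 0.4])
left, right = split(root)
assigned = {
    left: [[0.1, 0.1], [0.2, 0.1]],
    right: [[0.1, 0.9], [0.9, 0.1], [0.8, 0.8]],
}
target = max(assigned, key=lambda cell: variability(assigned[cell]))
daughters = split(target)
```

Using the resource instead of the variability only changes the `key` function passed to `max`.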

Learning Algorithm

The big question: when to stop iterating cycles?

- When each pattern has its own cell
- When the maximum number of nodes is reached
- When the maximum resource or variability value drops below a certain level
  - See later for more sophisticated calculations of such a threshold.
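
The three stopping conditions can be combined into one check, sketched below. The function signature and parameter names are assumptions; how the counts and heterogeneity values are gathered is left to the surrounding training loop.

```python
def all_finished(patterns_per_cell, n_nodes, max_nodes, heterogeneities, threshold):
    """Return True if any of the three stopping conditions holds.

    patterns_per_cell: number of patterns assigned to each cell
    n_nodes, max_nodes: current and maximum allowed number of nodes
    heterogeneities: resource or variability value of each cell
    threshold: level below which the largest heterogeneity must drop
    """
    if all(count <= 1 for count in patterns_per_cell):
        return True  # each pattern has its own cell
    if n_nodes >= max_nodes:
        return True  # tree has reached its maximum size
    return max(heterogeneities) < threshold


print(all_finished([5, 3], 1, 100, [0.5, 0.2], 0.1))
```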

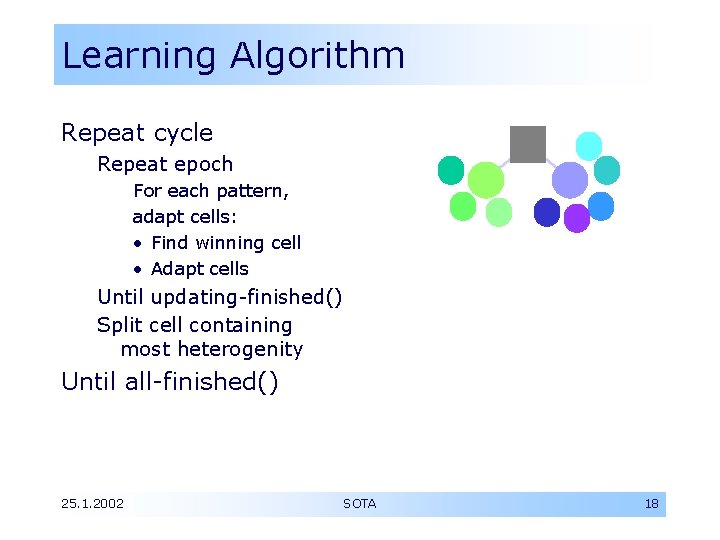

Learning Algorithm

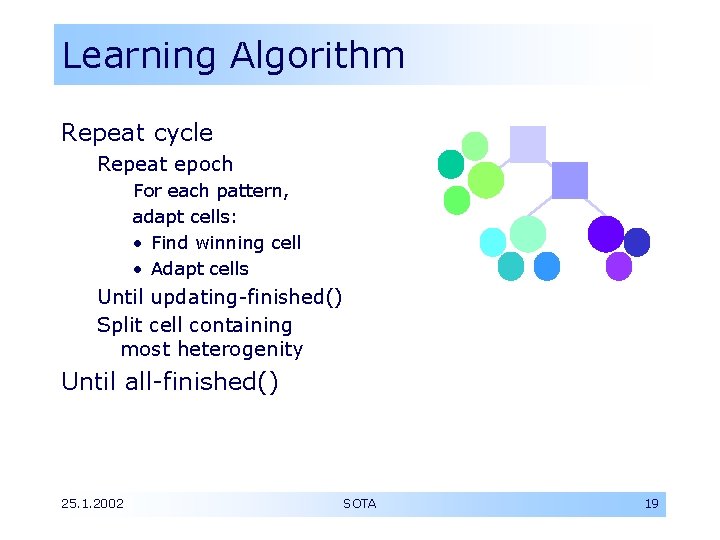

Learning Algorithm

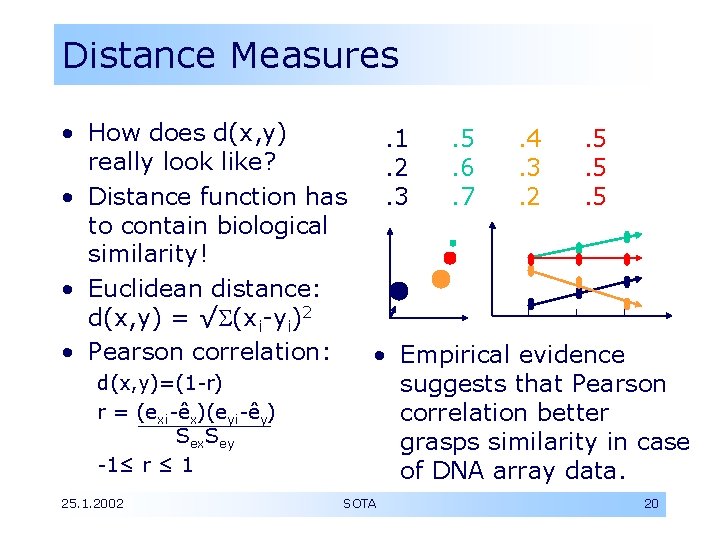

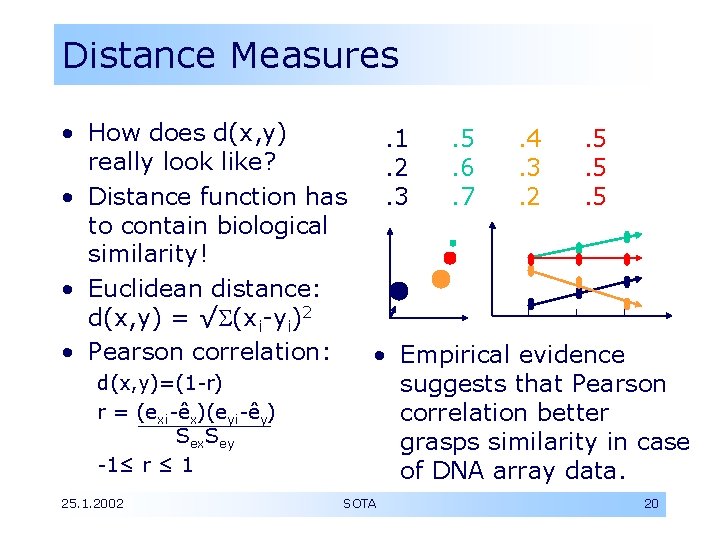

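Distance Measures

- What does d(x, y) really look like?
- The distance function has to capture biological similarity!
- Euclidean distance: $d(x, y) = \sqrt{\sum_i (x_i - y_i)^2}$
- Pearson correlation: $d(x, y) = 1 - r$ with $r = \frac{\sum_i (e_{x_i} - \hat{e}_x)(e_{y_i} - \hat{e}_y)}{n \, S_{e_x} S_{e_y}}$ and $-1 \le r \le 1$, where $\hat{e}$ is the mean, $S_e$ the standard deviation and $n$ the number of components
- Example vectors: (.1, .2, .3), (.5, .6, .7), (.4, .3, .2), (.5, .5, .5)
- Empirical evidence suggests that Pearson correlation captures similarity better in the case of DNA array data.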
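
To make the difference between the two measures concrete, here is a small sketch that applies both to the example vectors. The function names are made up for illustration, and raising an error for constant vectors is a design choice of this sketch, since the correlation is undefined when a vector has zero variance.

```python
import math


def euclidean_distance(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def pearson_distance(x, y):
    """Distance 1 - r, where r is the Pearson correlation of x and y."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    dev_x = [a - mean_x for a in x]
    dev_y = [b - mean_y for b in y]
    norm_x = math.sqrt(sum(d * d for d in dev_x))
    norm_y = math.sqrt(sum(d * d for d in dev_y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("correlation is undefined for a constant vector")
    r = sum(a * b for a, b in zip(dev_x, dev_y)) / (norm_x * norm_y)
    return 1 - r


rising_low = [0.1, 0.2, 0.3]
rising_high = [0.5, 0.6, 0.7]
falling = [0.4, 0.3, 0.2]

for other in (rising_high, falling):
    print(euclidean_distance(rising_low, other), pearson_distance(rising_low, other))
```

The Euclidean distance places the rising low-level profile closer to the falling profile (about 0.33) than to the rising high-level one (about 0.69), whereas the Pearson-based distance treats the two rising profiles as essentially identical and the falling one as maximally distant.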

SOTA evaluation

The clustering algorithm should

- be tolerant to noise: yes. Data is averaged via codebooks, just as in a SOM.
- capture high-level (inter-cluster) relations: yes. The structure is hierarchical, as in hierarchical clustering.
- be able to scale its topology based on
  - the topology of the input data: yes. The tree is extended to match the distribution of variance in the input data.
  - the user's required level of detail: yes. The tree can be adjusted to the desired level of detail; criteria may also be set to meet certain confidence levels.

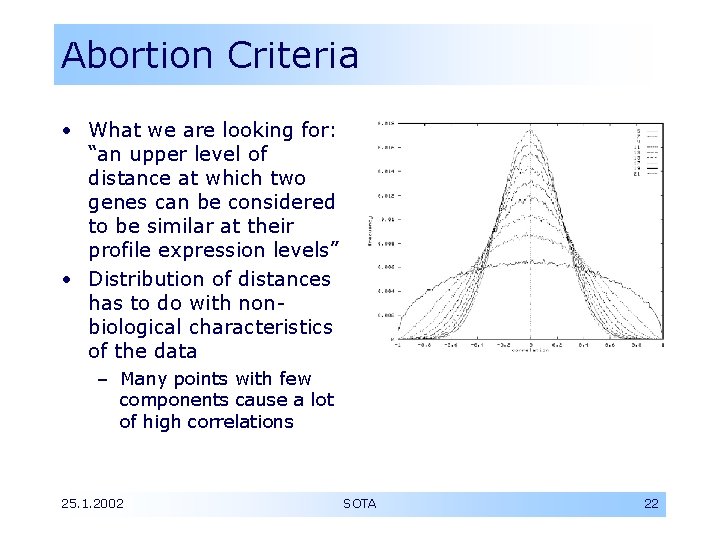

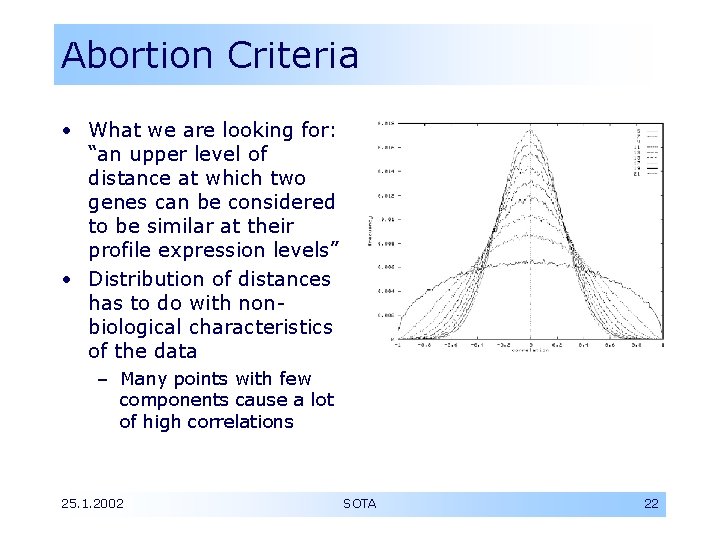

Abortion Criteria

- What we are looking for: “an upper level of distance at which two genes can be considered to be similar at their profile expression levels”
- The distribution of distances is partly determined by non-biological characteristics of the data.
  - Many points with few components cause a lot of high correlations.

Abortion Criteria (II)

- Idea:
  - If we knew the distribution of distances in data that is random,
  - a confidence level could be given,
  - meaning that observing a given distance between two unrelated genes is not more probable than $\alpha$.
- Problem:
  - We cannot know the random distribution.
  - We only know the real distribution, which is partially due to random properties and partially due to “real” correlations.
- Solution:
  - Approximation by shuffling

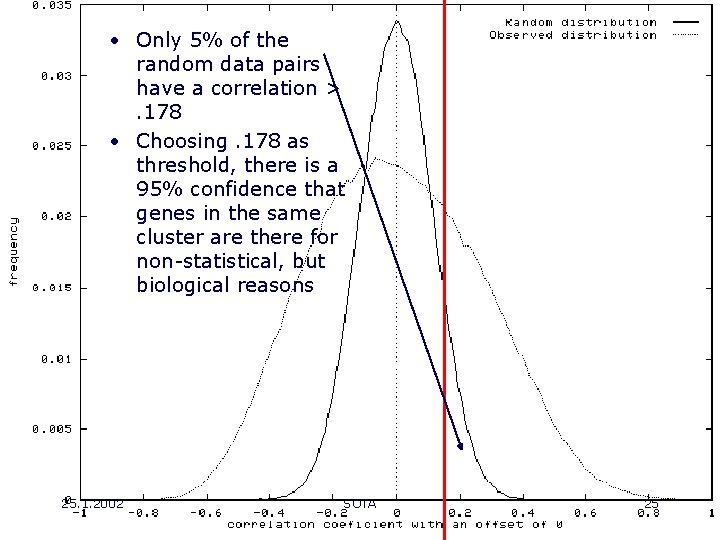

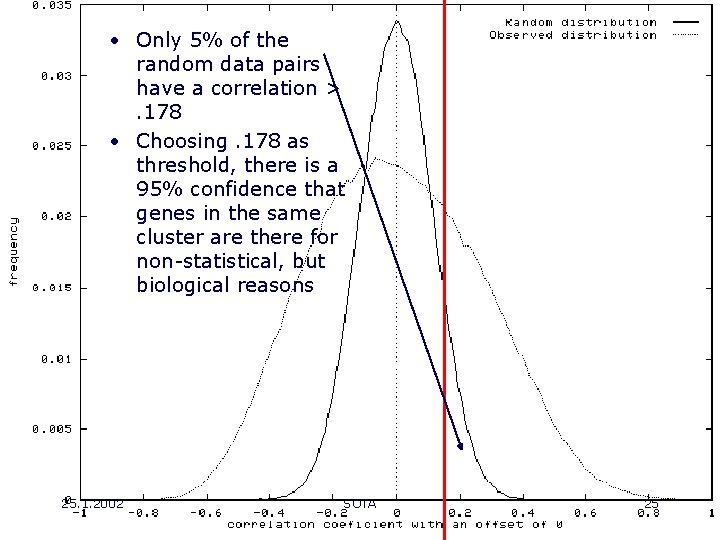

Abortion Criteria (III)

- Shuffling
  - For each pattern, the components are randomly shuffled.
  - Correlation is destroyed.
  - The number of points, the ranges of values and the frequencies of values are conserved.
- Claim:
  - The random distance distribution in this shuffled data approximates the random distance distribution in the real data.
- Conclusion:
  - If p(corr. > a) <= 5% in the random data,
  - finding corr. > a in the real data is meaningful with 95% confidence.
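
The shuffling step itself is tiny. The sketch below assumes patterns are plain lists and uses a seeded random generator only to keep the example reproducible; the function name is hypothetical.

```python
import random


def shuffle_pattern(pattern, rng):
    """Return a copy of the pattern with its components in random order."""
    return rng.sample(pattern, len(pattern))


rng = random.Random(42)
pattern = [0.1, 0.2, 0.3, 0.9, 0.9]
shuffled = shuffle_pattern(pattern, rng)

# Values and their frequencies are conserved; only the order changes.
print(sorted(shuffled) == sorted(pattern))
```

Because every pattern is shuffled independently, the component that came from a given DNA array no longer lines up across genes, which is what destroys the correlation.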

- Only 5% of the random data pairs have a correlation > .178.
- Choosing .178 as the threshold, there is 95% confidence that genes in the same cluster are there for biological rather than purely statistical reasons.
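
How such a threshold might be derived from shuffled data is sketched below. Everything here is illustrative: the patterns are randomly generated stand-ins rather than expression data, the function names are assumptions, and the resulting number is not expected to match the value quoted on the slide.

```python
import math
import random
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient of two equally long vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    dev_x = [a - mean_x for a in x]
    dev_y = [b - mean_y for b in y]
    norm = math.sqrt(sum(d * d for d in dev_x) * sum(d * d for d in dev_y))
    return sum(a * b for a, b in zip(dev_x, dev_y)) / norm


def random_correlations(patterns, rng):
    """Sorted pairwise correlations after shuffling each pattern independently."""
    shuffled = [rng.sample(p, len(p)) for p in patterns]
    return sorted(pearson(a, b) for a, b in combinations(shuffled, 2))


def correlation_threshold(sorted_correlations, alpha=0.05):
    """Value exceeded by at most a fraction alpha of the sorted correlations."""
    cut = math.ceil((1 - alpha) * len(sorted_correlations)) - 1
    return sorted_correlations[cut]


# Hypothetical stand-in data: 40 patterns with 10 components each.
rng = random.Random(0)
patterns = [[rng.random() for _ in range(10)] for _ in range(40)]

correlations = random_correlations(patterns, rng)
threshold = correlation_threshold(correlations)
exceeding = sum(c > threshold for c in correlations)
print(f"threshold={threshold:.3f}, exceeding pairs={exceeding} of {len(correlations)}")
```

Computing all pairwise correlations is quadratic in the number of patterns; for several thousand genes a random sample of pairs would be a natural shortcut.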

Topics

- Introduction
- Parent Techniques and their Problems
- SOTA
- Conclusion
  - Additional nice properties
  - Summary of differences compared to parent techniques

Additional properties

- As patterns do not have to be compared to each other, runtime is approximately linear in the number of patterns.
  - Like SOM
  - Hierarchical clustering uses a distance matrix relating each pattern to every other pattern.
- The cells' vectors approach the average of the assigned data points very closely.

Summary of SOTA

- Compared to SOMs
  - SOTA builds up a topology that reflects higher-order relations.
  - The level of detail can be defined very flexibly.
- Compared to standard hierarchical clustering
  - SOTA is more robust to noise.
  - It has better runtime properties.
  - It has a more flexible concept of cluster.
- Nicer topological properties
- Adding new data to an existing tree would be problematic(?)