A Dataflow Approach to Design Low Power Control Paths in CGRAs

- Slides: 29

A Dataflow Approach to Design Low Power Control Paths in CGRAs

Hyunchul Park, Yongjun Park, and Scott Mahlke

University of Michigan, Electrical Engineering and Computer Science

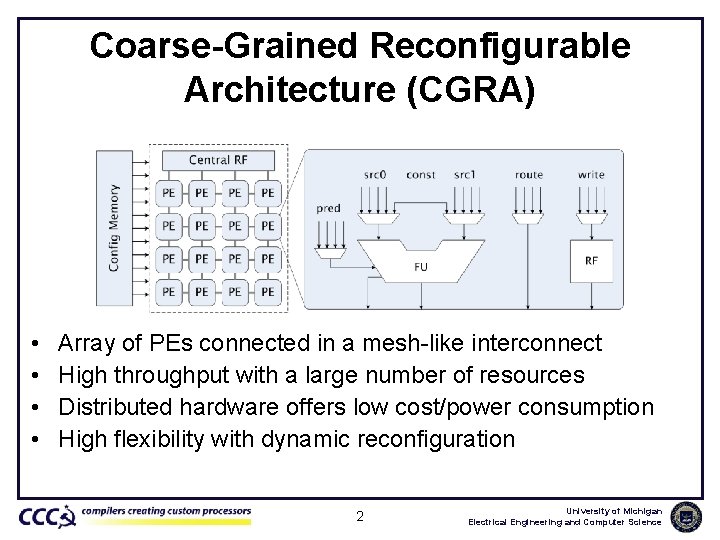

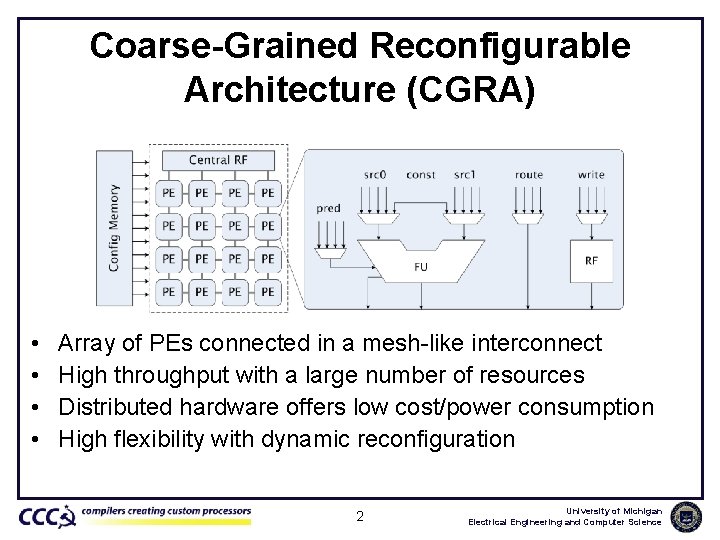

Coarse-Grained Reconfigurable Architecture (CGRA)

- Array of processing elements (PEs) connected by a mesh-like interconnect
- High throughput from a large number of resources
- Distributed hardware offers low cost and low power consumption
- High flexibility through dynamic reconfiguration

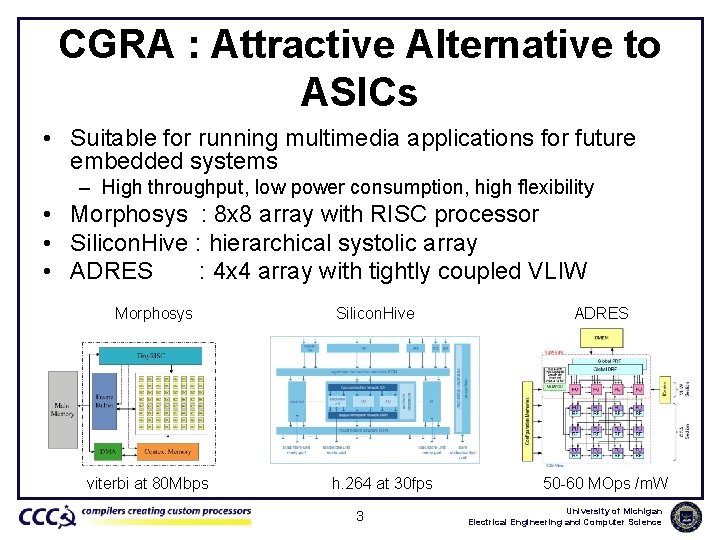

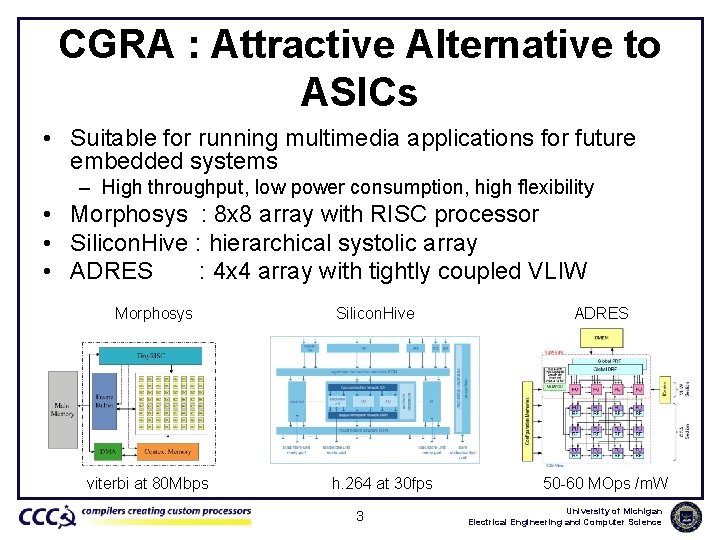

CGRA: An Attractive Alternative to ASICs

- Suitable for running multimedia applications on future embedded systems
  - High throughput, low power consumption, high flexibility
- MorphoSys: 8 x 8 array with a RISC processor
- Silicon Hive: hierarchical systolic array
- ADRES: 4 x 4 array with a tightly coupled VLIW

(Figure: MorphoSys, Viterbi at 80 Mbps; Silicon Hive, H.264 at 30 fps; ADRES, 50-60 MOPS/mW)

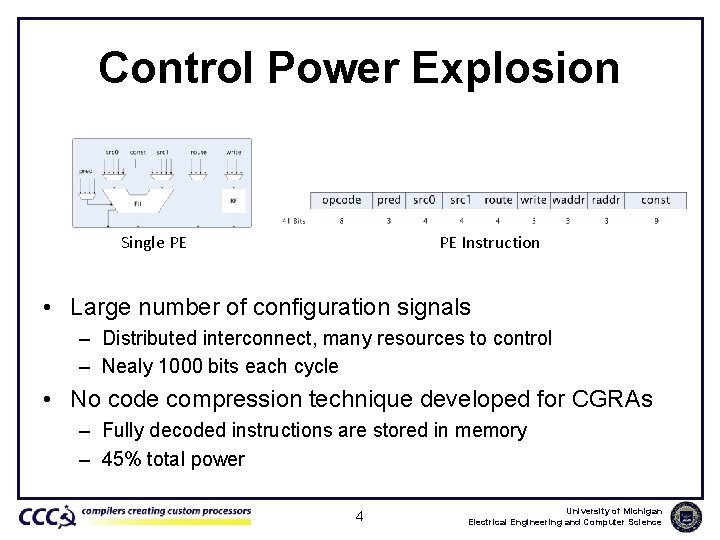

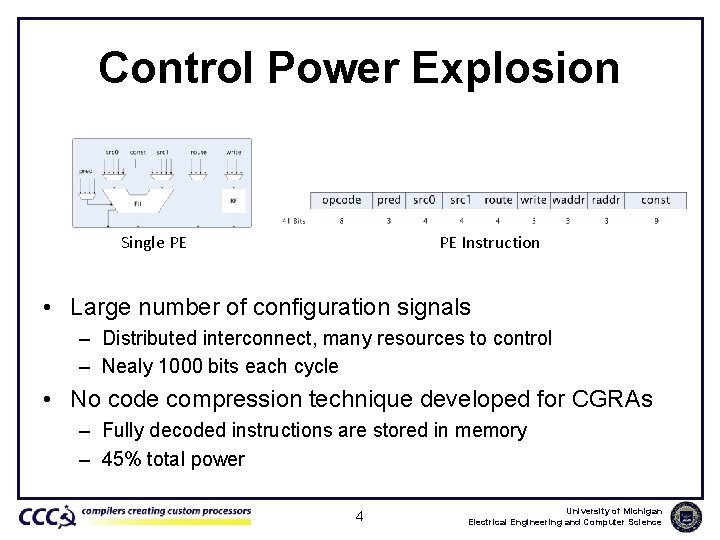

Control Power Explosion

(Figure: a single PE and a PE instruction)

- Large number of configuration signals
  - Distributed interconnect and many resources to control
  - Nearly 1000 bits every cycle
- No code compression technique has been developed for CGRAs
  - Fully decoded instructions are stored in memory
  - This accounts for 45% of total power

Code Compression

- Huffman encoding
  - High efficiency, but decoding is a sequential process
- Dictionary-based compression
  - Recurring patterns are stored in a dictionary
  - Few recurring patterns are found in CGRA code
- Instruction-level code compression
  - No-op compression: Itanium, DSPs
  - Only 17% of instructions are no-ops in a CGRA

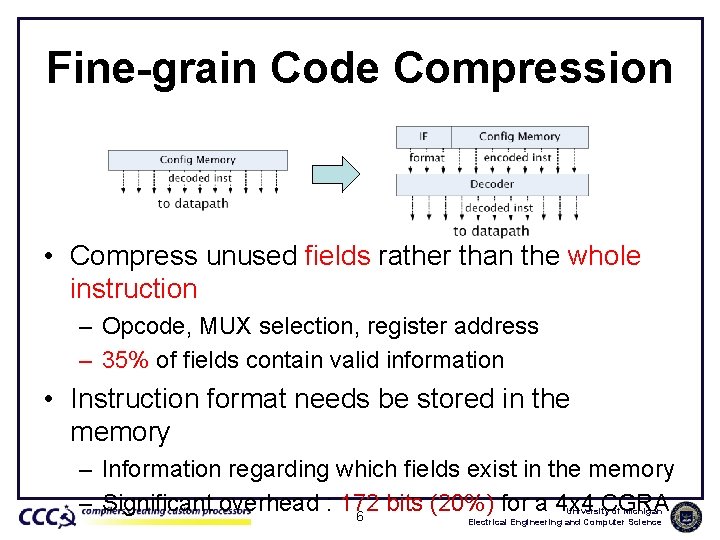

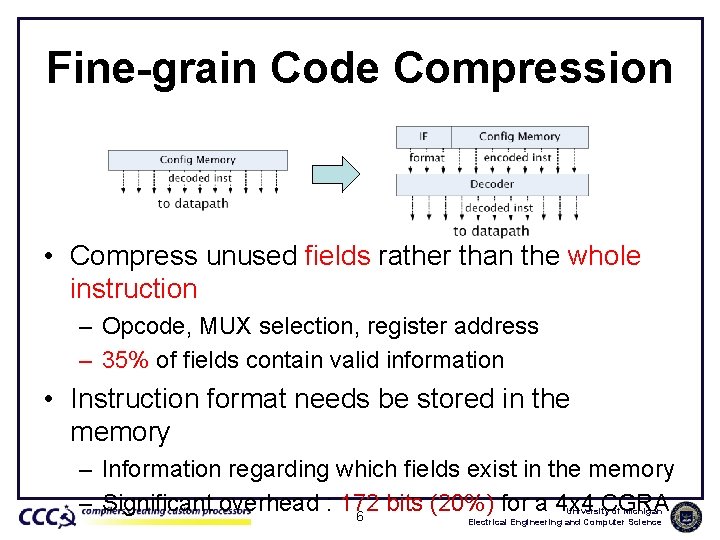

Fine-Grain Code Compression

- Compress unused fields rather than whole instructions
  - Opcode, MUX selection, register address
  - 35% of fields contain valid information
- The instruction format needs to be stored in memory
  - Information about which fields are present in memory
  - Significant overhead: 172 bits (20%) for a 4 x 4 CGRA
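As a minimal sketch of the static scheme, assuming a hypothetical four-field PE instruction (real CGRA instructions have far more fields), the stored instruction format can be modeled as a bit mask with one bit per field, followed by only the fields that carry valid information. That mask is the per-cycle format overhead the slide refers to.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical field layout for one PE instruction (illustration only).
FIELDS = ["opcode", "mux_src0", "mux_src1", "reg_addr"]

def compress(instr: Dict[str, Optional[int]]) -> Tuple[int, List[int]]:
    """Drop unused fields and record which ones remain.

    Returns (format_mask, packed): bit i of format_mask is set when
    FIELDS[i] holds valid information. Storing this mask alongside the
    packed fields is the instruction-format overhead.
    """
    mask = 0
    packed: List[int] = []
    for i, name in enumerate(FIELDS):
        value = instr.get(name)
        if value is not None:
            mask |= 1 << i
            packed.append(value)
    return mask, packed

def decompress(mask: int, packed: List[int]) -> Dict[str, Optional[int]]:
    """Expand back to a full instruction; absent fields become None."""
    values = iter(packed)
    return {name: (next(values) if mask & (1 << i) else None)
            for i, name in enumerate(FIELDS)}

instr = {"opcode": 3, "mux_src0": 1, "mux_src1": None, "reg_addr": None}
mask, packed = compress(instr)
print(bin(mask), packed)  # 0b11 [3, 1]
assert decompress(mask, packed) == instr
```

Only two of the four fields are stored here, but the 4-bit mask must be kept for every instruction, which is the kind of overhead the token network aims to remove.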

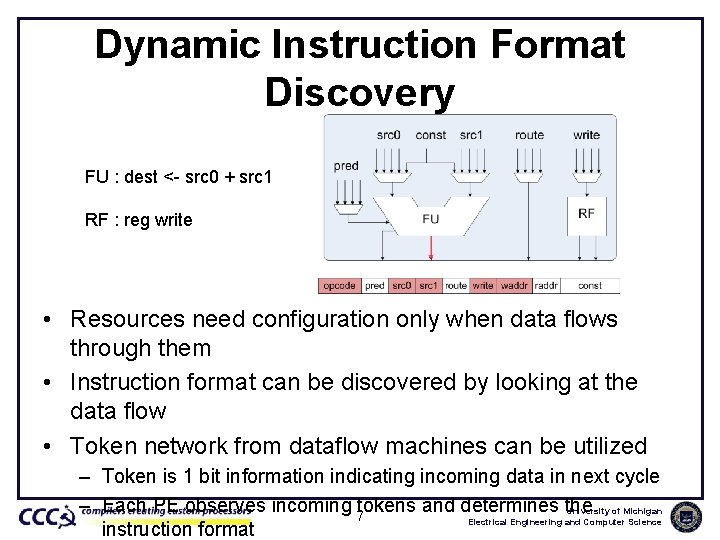

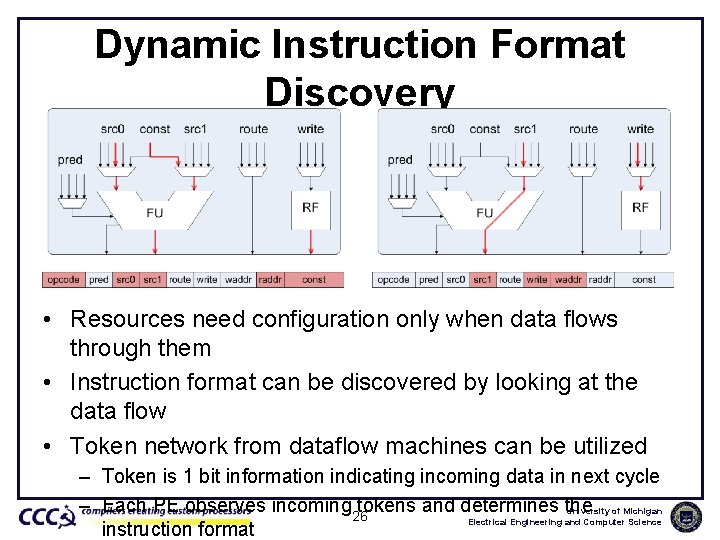

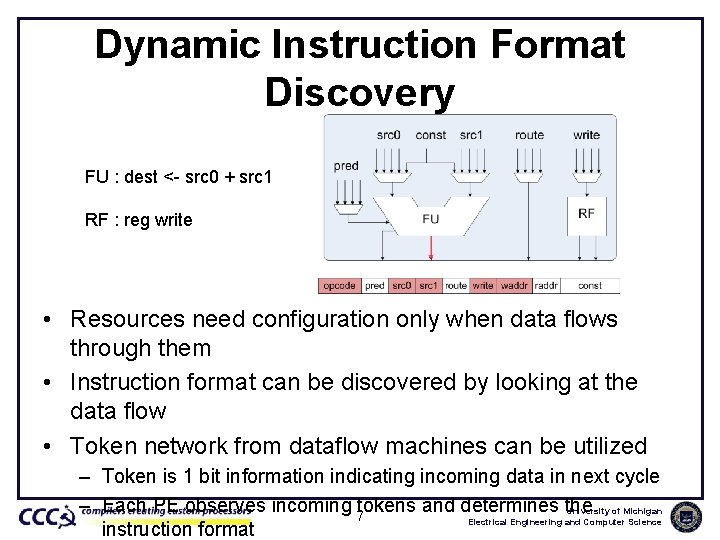

Dynamic Instruction Format Discovery

(Figure: FU: dest <- src0 + src1; RF: reg write)

- Resources need configuration only when data flows through them
- The instruction format can therefore be discovered by observing the dataflow
- The token network from dataflow machines can be used for this
  - A token is 1 bit of information indicating that data arrives in the next cycle
  - Each PE observes its incoming tokens and determines the instruction format
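A minimal sketch of the idea, assuming a hypothetical PE with an FU (inputs src0 and src1) and one RF write port: the fields the PE must fetch follow directly from which tokens arrive, so no format bits have to be stored. Field names such as `rf_write_addr` are illustrative only.

```python
from typing import List, Tuple

def fields_to_fetch(fu_tokens: Tuple[bool, bool], rf_write_token: bool) -> List[str]:
    """Fields one PE must fetch for the next cycle, given its incoming tokens.

    fu_tokens: 1-bit tokens on the FU's src0 and src1 inputs.
    rf_write_token: 1-bit token at the RF write port.
    """
    fields: List[str] = []
    if any(fu_tokens):
        # Data reaches the FU, so it will execute an operation: fetch the opcode.
        fields.append("opcode")
    if rf_write_token:
        # A value will be written to the RF: fetch the write address.
        fields.append("rf_write_addr")
    return fields

print(fields_to_fetch((True, True), False))    # ['opcode']
print(fields_to_fetch((False, False), True))   # ['rf_write_addr']
print(fields_to_fetch((False, False), False))  # []
```

A PE that receives no tokens fetches nothing, which is where the savings over a fixed or stored format come from.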

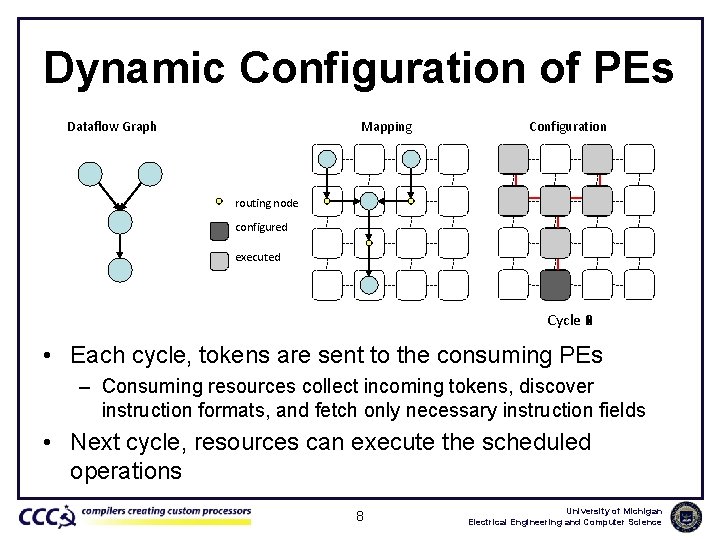

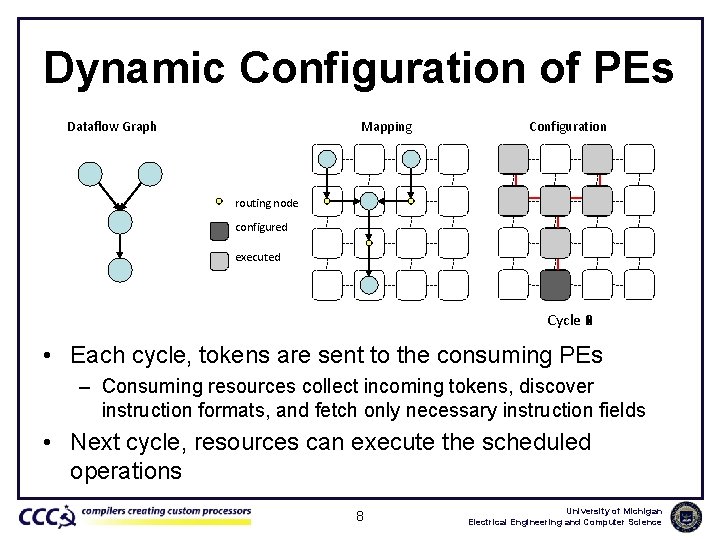

Dynamic Configuration of PEs

(Figure: a dataflow graph, its mapping, and the configuration over cycles 0 to 4; legend: routing node, configured, executed)

- Each cycle, tokens are sent to the consuming PEs
  - Consuming resources collect incoming tokens, discover their instruction formats, and fetch only the necessary instruction fields
- In the next cycle, those resources execute the scheduled operations
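The two-step timing can be illustrated with a small sketch, assuming each PE's execution cycle is already fixed by the schedule: a PE is configured (tokens received, fields fetched) one cycle before it executes. The PE names and the schedule in the usage example are hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, List

def timeline(exec_cycle: Dict[str, int]) -> Dict[int, Dict[str, List[str]]]:
    """Per cycle, list the PEs being configured and the PEs executing.

    exec_cycle maps each PE to the cycle in which it executes its scheduled
    operation. Its producer sends the token one cycle earlier, so the PE
    collects tokens and fetches its fields in cycle exec_cycle - 1.
    """
    cycles: DefaultDict[int, Dict[str, List[str]]] = defaultdict(
        lambda: {"configured": [], "executed": []})
    for pe, cycle in exec_cycle.items():
        cycles[cycle - 1]["configured"].append(pe)
        cycles[cycle]["executed"].append(pe)
    return dict(sorted(cycles.items()))

# Hypothetical schedule: PE0 feeds PE1 and PE5, which both feed PE6.
for cycle, state in timeline({"PE0": 1, "PE1": 2, "PE5": 2, "PE6": 3}).items():
    print(cycle, state)
```

In cycle 1 of this example, PE0 executes while PE1 and PE5 are being configured, so configuration of the next operations overlaps with execution of the current ones.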

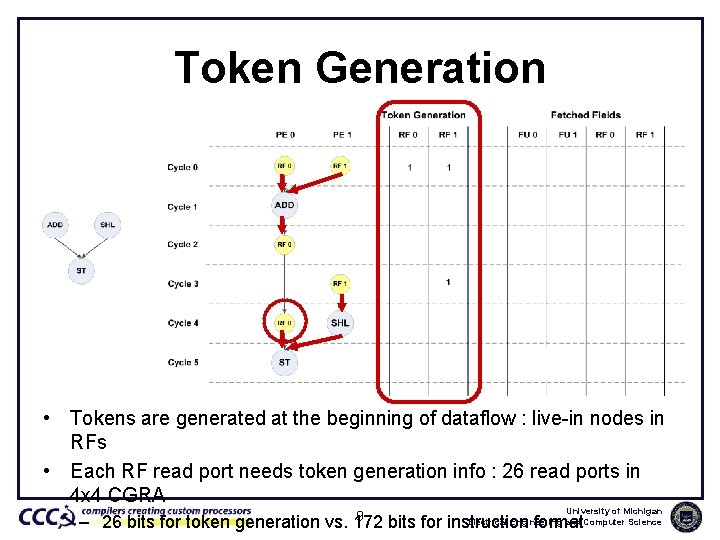

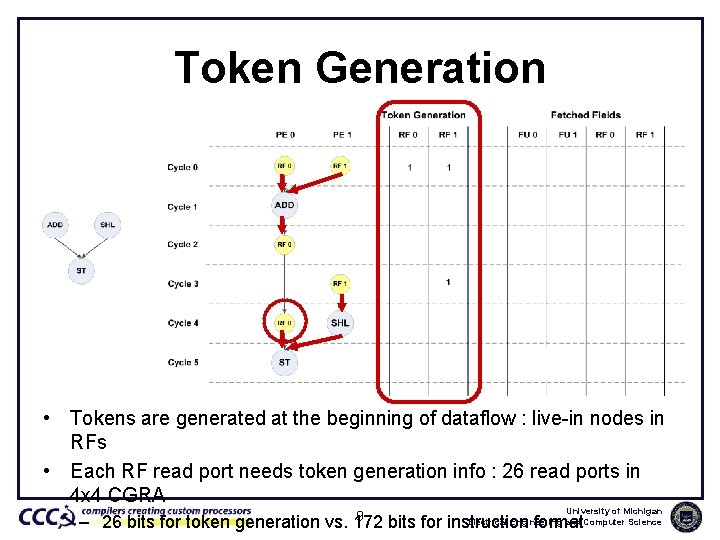

Token Generation

- Tokens are generated at the beginning of the dataflow: live-in nodes in RFs
- Each RF read port needs token generation information: 26 read ports in a 4 x 4 CGRA
  - 26 bits for token generation vs. 172 bits for the instruction format
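The storage comparison on this slide is simple arithmetic. A short check, assuming one token-generation bit per RF read port (which is how 26 ports yield 26 bits):

```python
RF_READ_PORTS = 26        # read ports in the 4 x 4 CGRA
FORMAT_BITS_STATIC = 172  # instruction-format bits in the static scheme

token_gen_bits = RF_READ_PORTS * 1  # assumed: one token-generation bit per read port
saved_bits = FORMAT_BITS_STATIC - token_gen_bits
ratio = token_gen_bits / FORMAT_BITS_STATIC

print(token_gen_bits, saved_bits, f"{ratio:.0%}")  # 26 146 15%
```

The token-generation information is therefore about 15% of the size of the instruction format it replaces.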

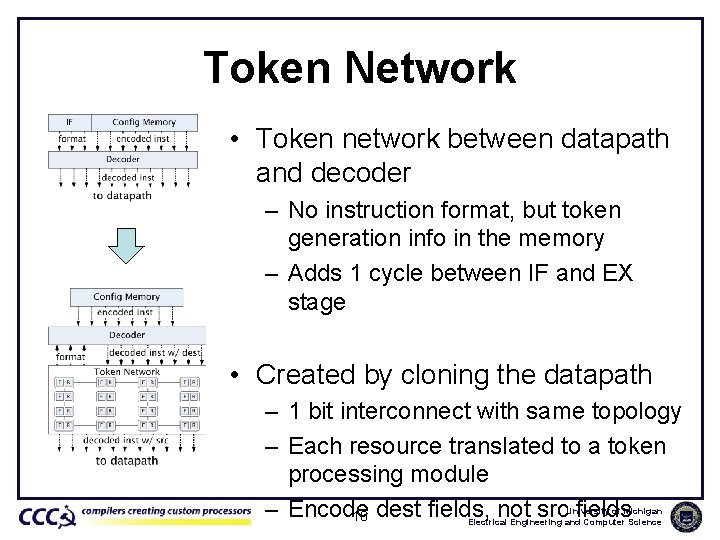

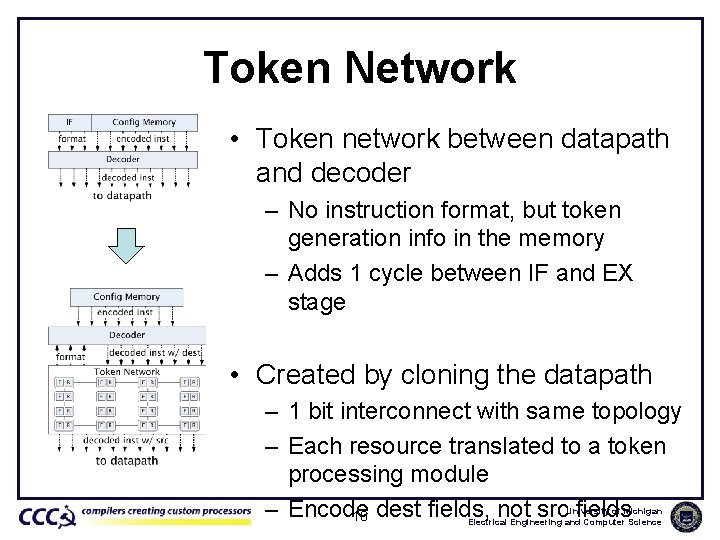

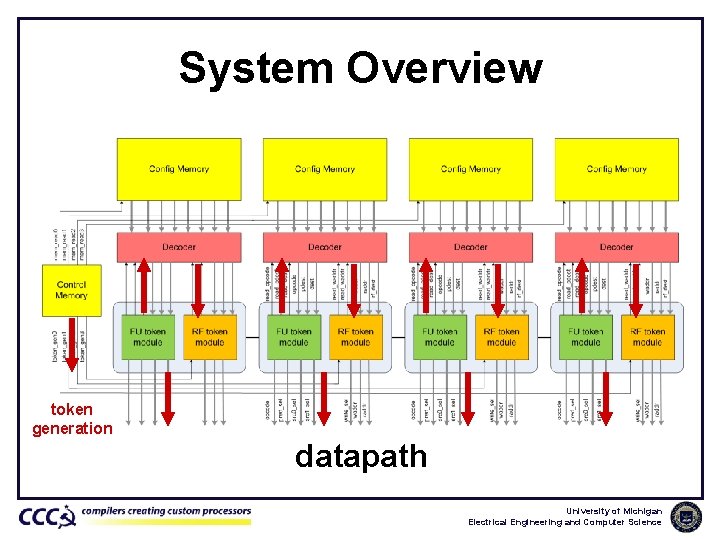

Token Network

- A token network sits between the datapath and the decoder
  - The memory holds token generation information instead of instruction formats
  - Adds 1 cycle between the IF and EX stages
- Created by cloning the datapath
  - 1-bit interconnect with the same topology
  - Each resource is translated into a token processing module
  - Dest fields are encoded, not src fields

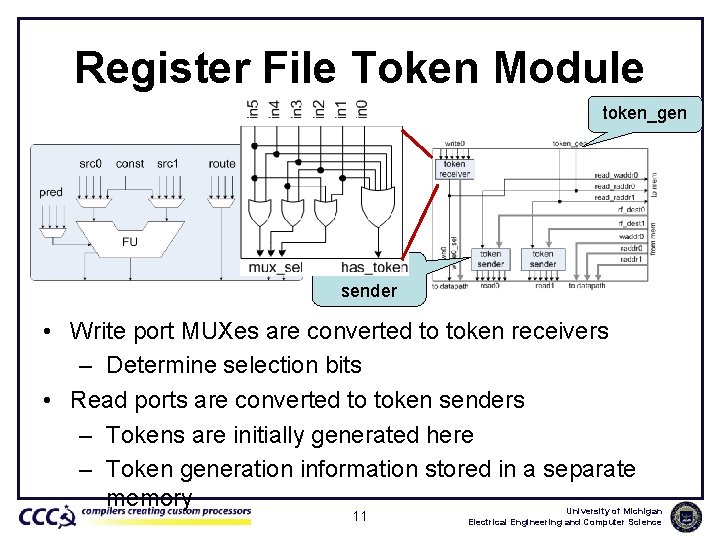

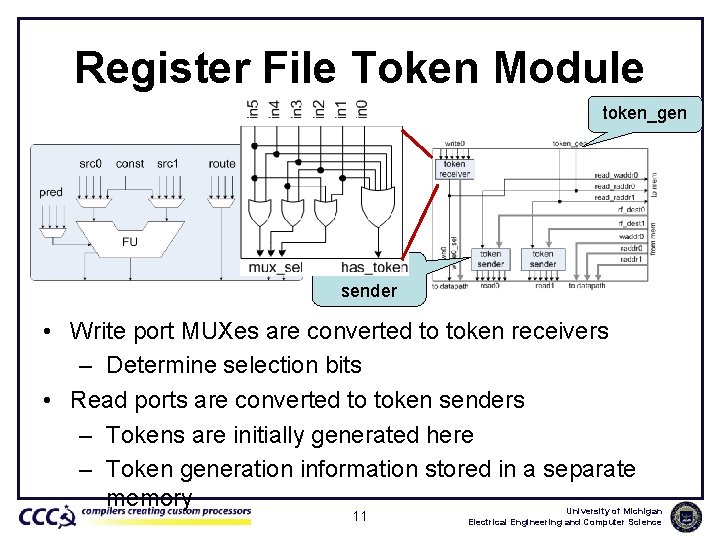

Register File Token Module

(Figure labels: token_gen, token sender)

- Write port MUXes are converted to token receivers
  - They determine the MUX selection bits
- Read ports are converted to token senders
  - Tokens are initially generated here
  - Token generation information is stored in a separate memory
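A minimal sketch of the read-port side, assuming the token-generation information for one RF is a bit vector in which bit p controls read port p (the bit ordering is a hypothetical choice):

```python
from typing import List

def rf_read_tokens(token_gen: int, num_read_ports: int) -> List[int]:
    """Return the read ports of one RF that send a token this cycle.

    token_gen is the word read from the separate token-generation memory;
    bit p set means read port p starts a dataflow (acts as a token sender).
    """
    if token_gen >> num_read_ports:
        raise ValueError("token_gen has bits beyond the available read ports")
    return [p for p in range(num_read_ports) if (token_gen >> p) & 1]

print(rf_read_tokens(0b101, 3))  # [0, 2]
```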

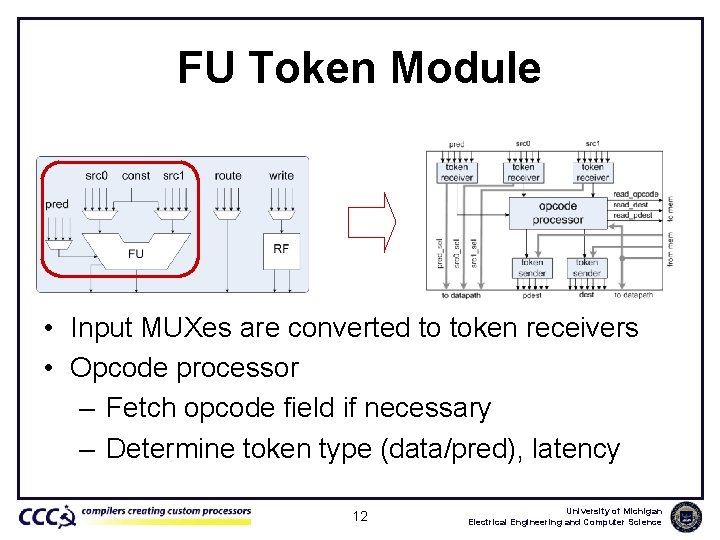

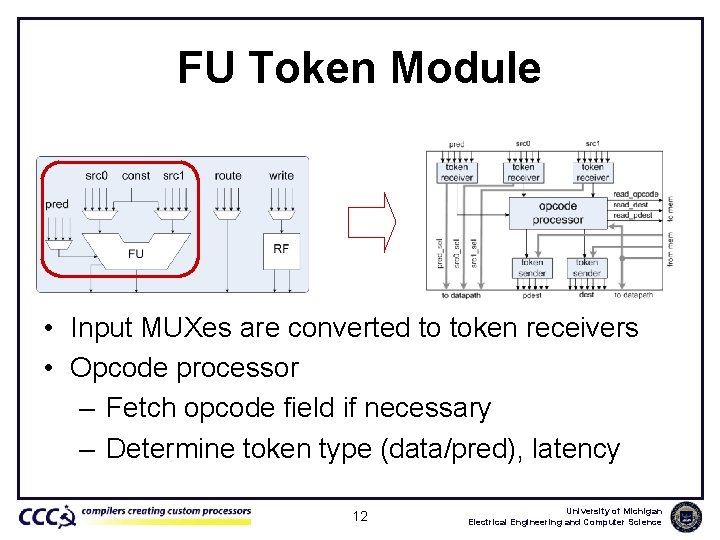

FU Token Module

- Input MUXes are converted to token receivers
- Opcode processor
  - Fetches the opcode field only when necessary
  - Determines the token type (data/predicate) and the latency
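The opcode processor's job can be sketched as follows, assuming a hypothetical opcode table that maps each opcode to its output token type and latency, and modeling the opcode-memory read as a callable so that skipped fetches are visible. The timing model (output token sent `latency` cycles after the current cycle) is likewise an assumption for illustration.

```python
from typing import Callable, Dict, List, Optional, Sequence, Tuple

# Hypothetical opcode table: opcode -> (output token type, latency in cycles).
OPCODES: Dict[str, Tuple[str, int]] = {
    "add": ("data", 1),
    "mul": ("data", 2),
    "cmp_lt": ("pred", 1),  # comparisons produce predicate tokens
}

def opcode_processor(input_tokens: Sequence[bool],
                     fetch_opcode: Callable[[], str],
                     cycle: int) -> Optional[Tuple[str, int]]:
    """Return (output token type, cycle its token is sent), or None if idle."""
    if not any(input_tokens):
        return None  # no incoming data: the opcode field is never fetched
    token_type, latency = OPCODES[fetch_opcode()]
    return token_type, cycle + latency

# Usage: log opcode-memory reads to show that idle cycles skip the fetch.
fetch_log: List[str] = []

def fetch_mul() -> str:
    fetch_log.append("mul")
    return "mul"

print(opcode_processor([True, False], fetch_mul, cycle=5))   # ('data', 7)
print(opcode_processor([False, False], fetch_mul, cycle=6))  # None
print(len(fetch_log))                                         # 1
```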

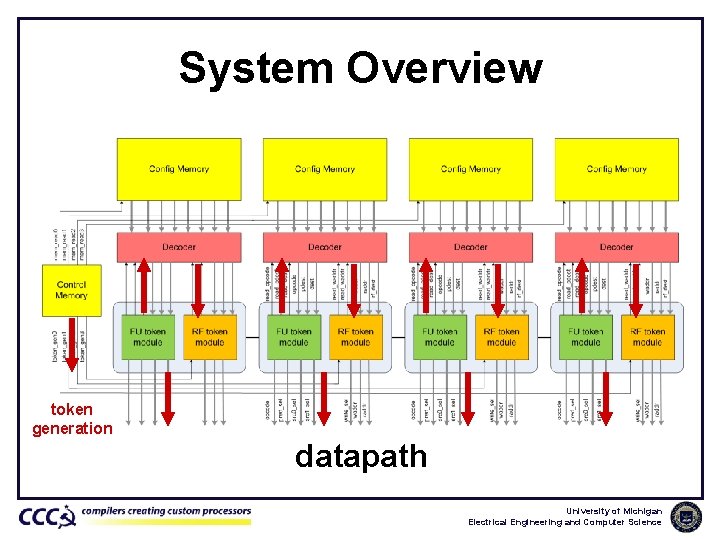

System Overview

(Figure labels: token generation, datapath)

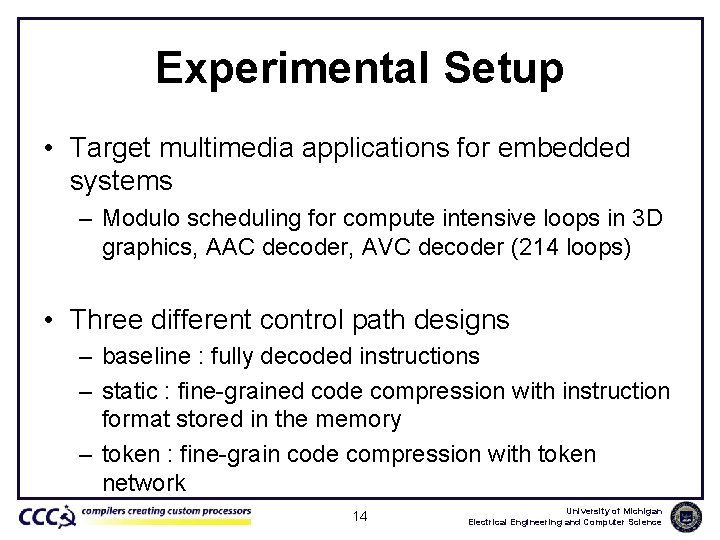

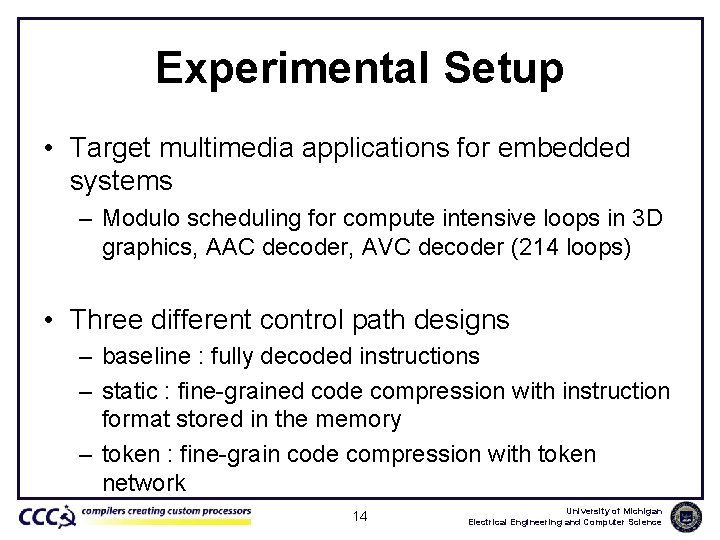

Experimental Setup

- Target: multimedia applications for embedded systems
  - Modulo scheduling of compute-intensive loops in 3D graphics, an AAC decoder, and an AVC decoder (214 loops)
- Three control path designs
  - baseline: fully decoded instructions
  - static: fine-grain code compression with the instruction format stored in memory
  - token: fine-grain code compression with the token network

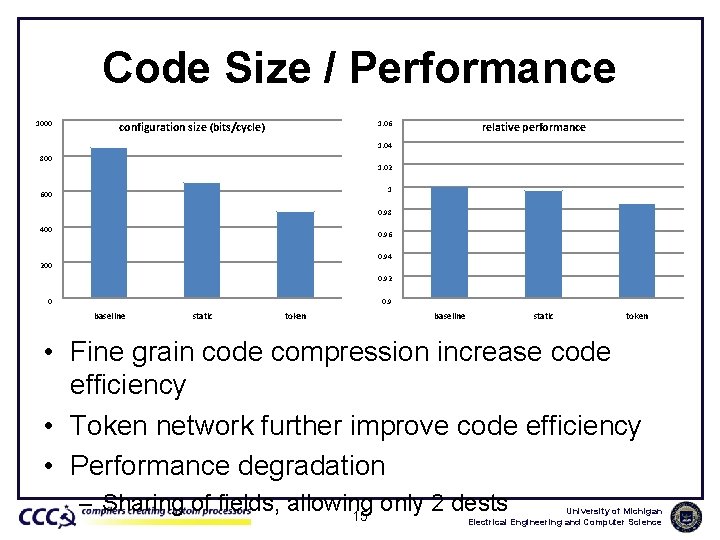

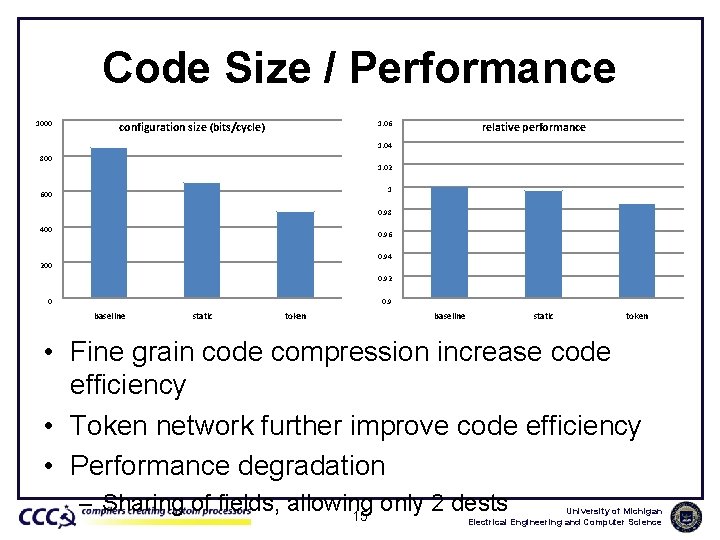

Code Size / Performance

(Chart: configuration size in bits/cycle, axis 0 to 1000, and relative performance, axis 0.9 to 1.06, for baseline, static, and token)

- Fine-grain code compression increases code efficiency
- The token network further improves code efficiency
- Performance degradation
  - Caused by field sharing and by allowing only 2 destinations per output

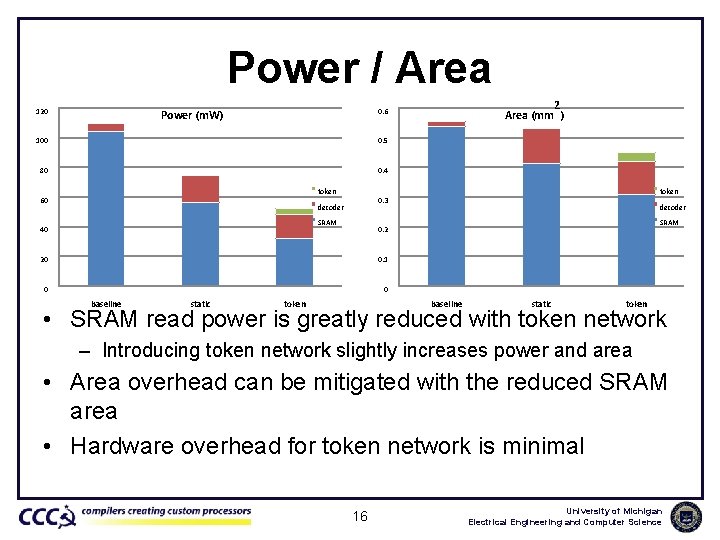

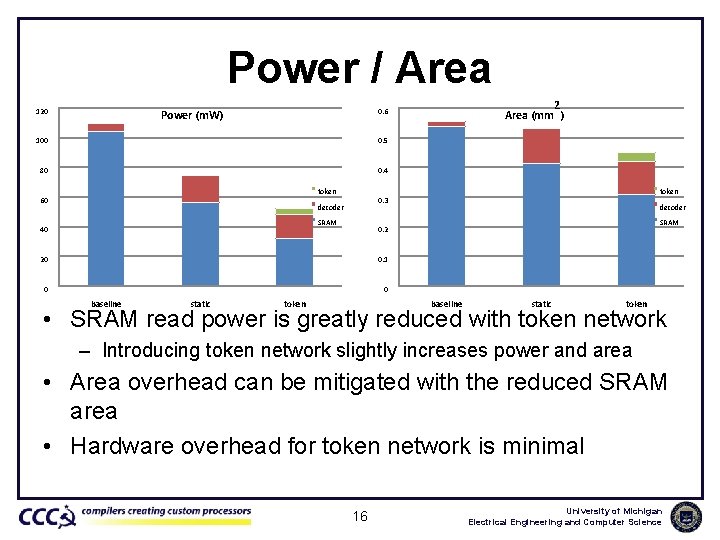

Power / Area

(Charts: power in mW, axis 0 to 120, and area in mm², axis 0 to 0.6, for baseline, static, and token; components: SRAM, decoder, token network)

- SRAM read power is greatly reduced with the token network
  - Introducing the token network slightly increases power and area
- The area overhead can be mitigated by the reduced SRAM area
- The hardware overhead of the token network is minimal

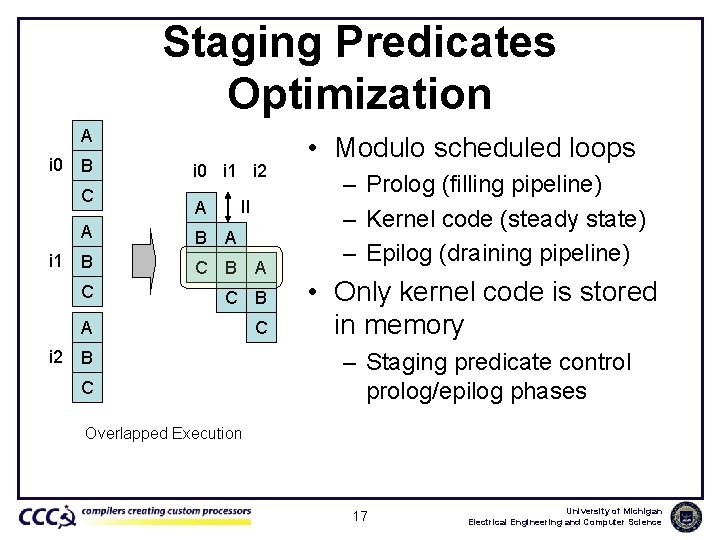

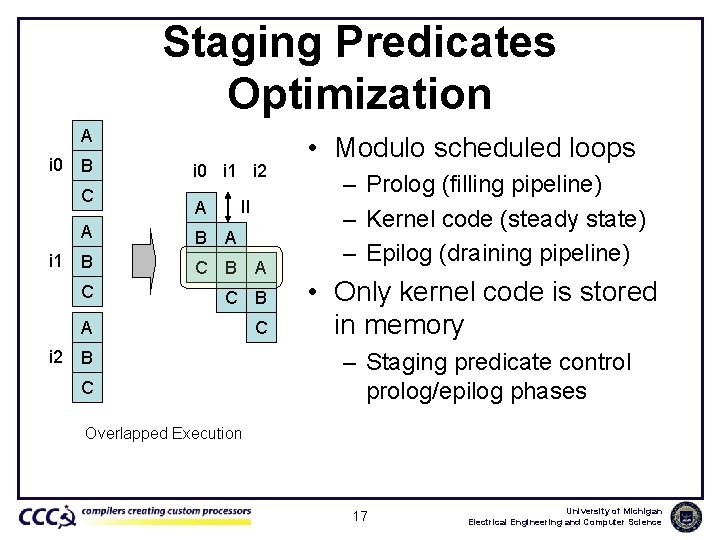

Staging Predicate Optimization

(Figure: overlapped execution of iterations i0, i1, i2 of a loop with operations A, B, C, started one initiation interval (II) apart)

- Modulo-scheduled loops
  - Prolog (filling the pipeline)
  - Kernel code (steady state)
  - Epilog (draining the pipeline)
- Only the kernel code is stored in memory
  - Staging predicates control the prolog and epilog phases
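One common way to express staging predicates for kernel-only code is assumed here (the slide does not give the exact formulation): at kernel execution k, stage s works on iteration k - s and is enabled only if that iteration exists. Running the same kernel N + S - 1 times (N iterations, S stages) then reproduces the prolog and epilog without storing them.

```python
from typing import List

def staging_predicates(num_iters: int, num_stages: int) -> List[List[bool]]:
    """Staging predicate of every stage for every execution of the kernel.

    At kernel execution k, stage s works on iteration k - s and is enabled
    only if 0 <= k - s < num_iters. The kernel runs
    num_iters + num_stages - 1 times in total.
    """
    total = num_iters + num_stages - 1
    return [[0 <= k - s < num_iters for s in range(num_stages)]
            for k in range(total)]

# Three iterations of a three-stage loop (e.g. one of A, B, C per stage).
for k, preds in enumerate(staging_predicates(num_iters=3, num_stages=3)):
    print(k, "".join("x" if p else "." for p in preds))
```

This prints `x..`, `xx.`, `xxx`, `.xx`, `..x` for k = 0 to 4: the first two executions are the prolog, the middle one the steady state, and the last two the epilog.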

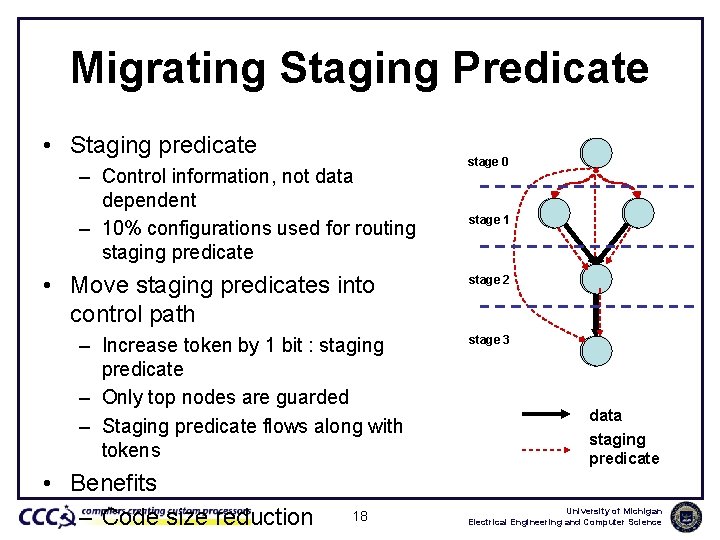

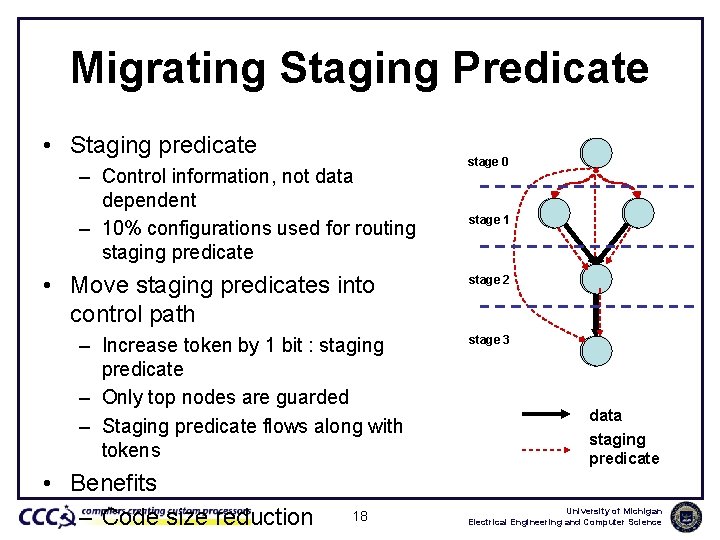

Migrating Staging Predicates

- Staging predicates
  - Are control information, not data-dependent
  - 10% of configurations are used for routing staging predicates
- Move staging predicates into the control path
  - Widen each token by 1 bit to carry the staging predicate
  - Only top nodes are guarded
  - The staging predicate flows along with the tokens
- Benefits
  - Code size reduction

(Figure: stages 0 to 3; legend: data, staging predicate)
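A minimal sketch of why only top nodes need a guard, assuming a hypothetical kernel in which A and C are the top nodes of two different stages and B and D depend on them. The staging predicate enters at each top node and rides along on the extra token bit to every dependent node. Values carried across kernel executions are ignored in this simplification.

```python
from typing import Dict, List

def enabled_nodes(order: List[str],
                  producers: Dict[str, List[str]],
                  top_pred: Dict[str, bool]) -> Dict[str, bool]:
    """Decide which nodes of one kernel execution do useful work.

    order: nodes in topological order.
    producers: for each non-top node, the nodes whose tokens it receives.
    top_pred: staging predicate for each guarded top node.
    """
    pred: Dict[str, bool] = {}
    for node in order:
        if node in top_pred:
            pred[node] = top_pred[node]  # only top nodes are guarded
        else:
            # The predicate bit travels with the incoming tokens.
            pred[node] = all(pred[p] for p in producers[node])
    return pred

# Prolog step: stage 0 (A -> B) is active, stage 1 (C -> D) is not yet.
print(enabled_nodes(["A", "B", "C", "D"], {"B": ["A"], "D": ["C"]},
                    {"A": True, "C": False}))
# {'A': True, 'B': True, 'C': False, 'D': False}
```

Two predicate bits drive four nodes here; no separate predicate values need to be routed through the datapath.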

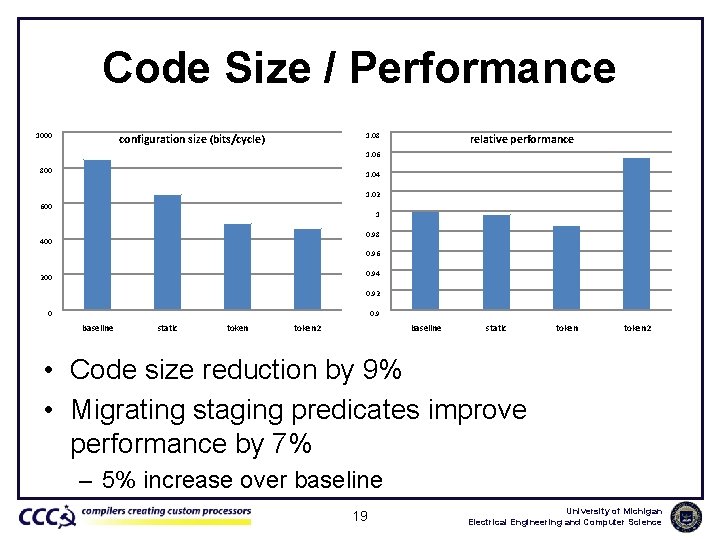

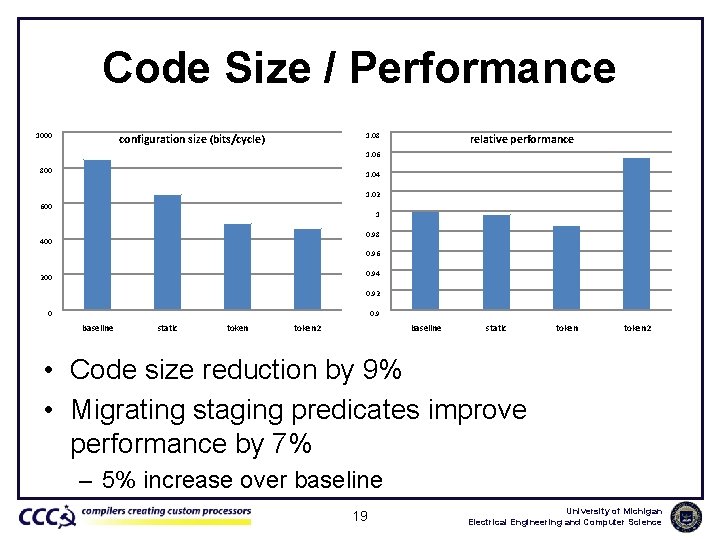

Code Size / Performance

(Chart: configuration size in bits/cycle, axis 0 to 1000, and relative performance, axis 0.9 to 1.08, for baseline, static, token, and token 2)

- Code size is reduced by 9%
- Migrating staging predicates improves performance by 7%
  - A 5% gain over baseline

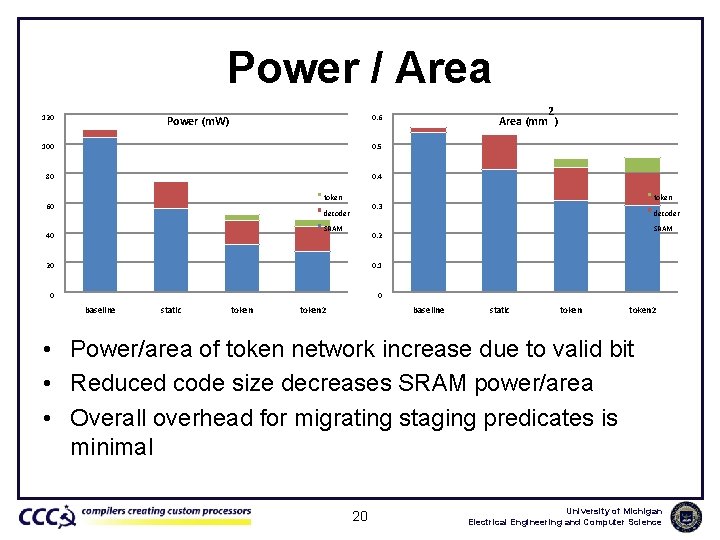

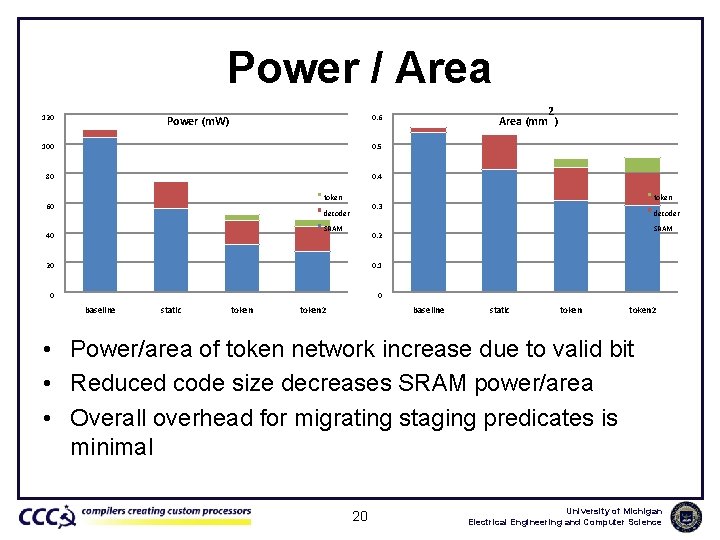

Power / Area

(Charts: power in mW, axis 0 to 120, and area in mm², axis 0 to 0.6, for baseline, static, token, and token 2; components: SRAM, decoder, token network)

- Power and area of the token network increase due to the valid bit
- The reduced code size decreases SRAM power and area
- The overall overhead of migrating staging predicates is minimal

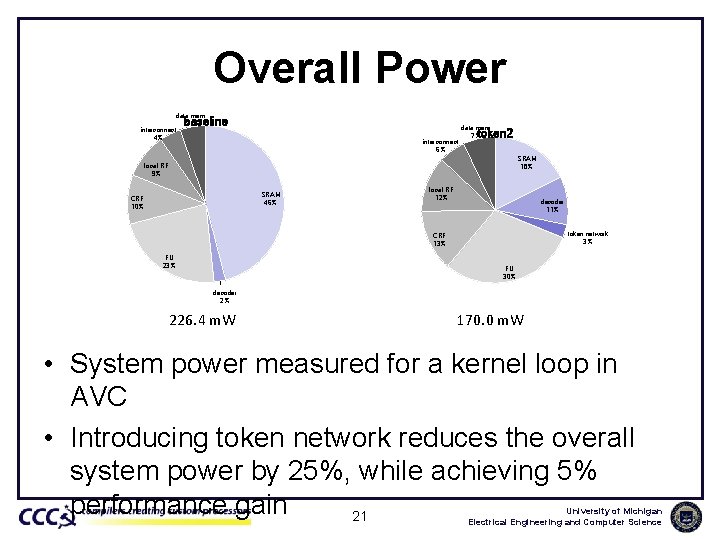

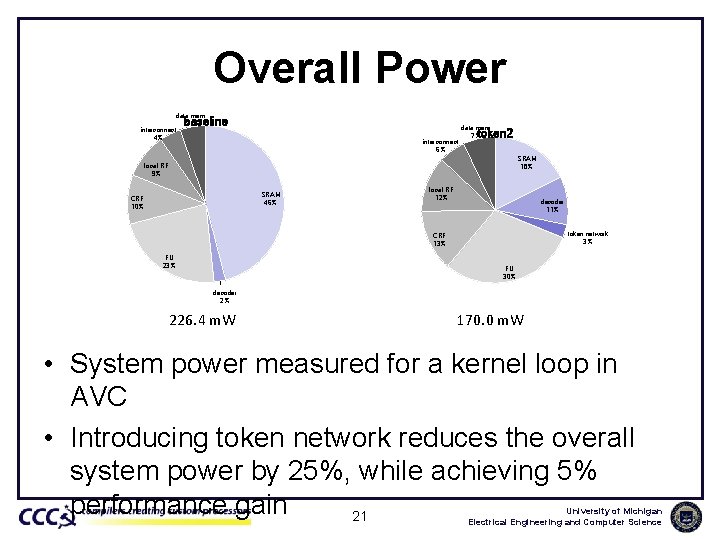

Overall Power

| Component | baseline (226.4 mW) | token 2 (170.0 mW) |
|---|---|---|
| SRAM | 46% | 18% |
| FU | 23% | 30% |
| CRF | 10% | 13% |
| local RF | 9% | 12% |
| data mem | 5% | 7% |
| interconnect | 4% | 6% |
| decoder | 2% | 11% |
| token network | n/a | 3% |

- System power is measured for a kernel loop in AVC
- Introducing the token network reduces overall system power by 25% while achieving a 5% performance gain
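The headline reduction can be checked directly from the two totals. The per-component figures below additionally assume that the flattened pie-chart labels pair up as SRAM 46% vs. 18%, FU 23% vs. 30%, and decoder 2% vs. 11% (baseline vs. token 2); that pairing is inferred from the extracted labels.

```python
BASELINE_MW = 226.4
TOKEN2_MW = 170.0

# Shares in percent as (baseline, token 2); pairing inferred from the pie charts.
SHARES = {"SRAM": (46, 18), "FU": (23, 30), "decoder": (2, 11)}

reduction = 1 - TOKEN2_MW / BASELINE_MW
print(f"overall reduction: {reduction:.1%}")  # overall reduction: 24.9%

for name, (base_pct, tok_pct) in SHARES.items():
    base_mw = BASELINE_MW * base_pct / 100
    tok_mw = TOKEN2_MW * tok_pct / 100
    print(f"{name}: {base_mw:.1f} mW -> {tok_mw:.1f} mW")
```

Under this pairing, SRAM power drops from about 104.1 mW to 30.6 mW while FU power stays near 52 mW vs. 51 mW, which is consistent with the savings coming from the control path.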

Conclusion

- Fine-grain code compression is a good fit for CGRAs
- The token network can eliminate the instruction format overhead
  - Dynamic discovery of the instruction format
  - Small overhead (< 3%)
  - Migrating staging predicates to the token network improves performance
- Applicable to other highly distributed architectures

Questions?

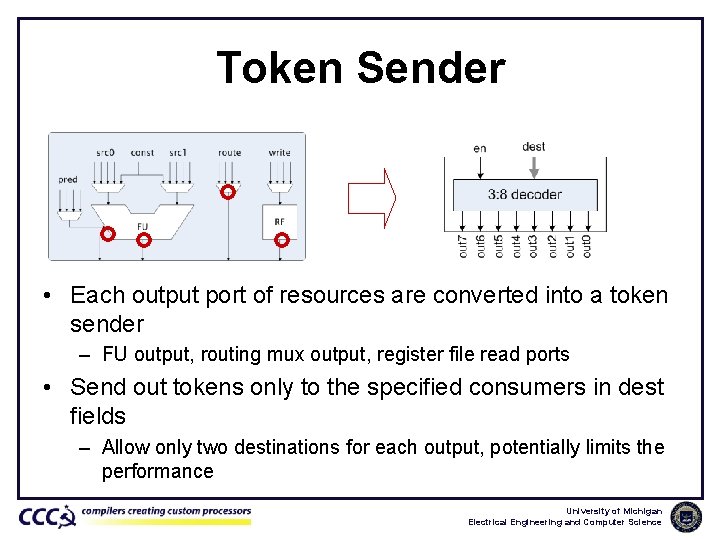

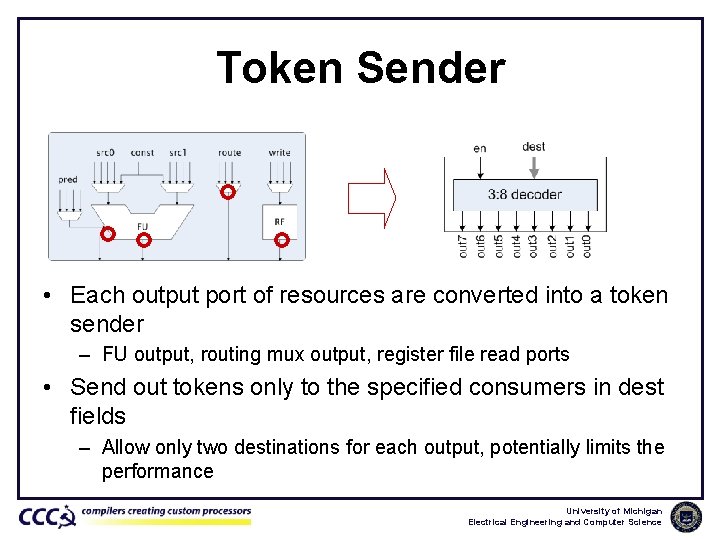

Token Sender

- Each resource output port is converted into a token sender
  - FU outputs, routing MUX outputs, register file read ports
- Tokens are sent only to the consumers specified in the dest fields
  - Only two destinations are allowed per output, which can limit performance
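A sketch of a token sender with the two-destination limit, assuming destinations are named by hypothetical consumer IDs such as `PE4.src0`. The slide only notes that the limit can cost performance; rejecting an output with more consumers, as done here, is an illustrative choice.

```python
from typing import List, Optional, Sequence, Tuple

MAX_DESTS = 2  # only two destinations per output port

DestFields = Tuple[Optional[str], Optional[str]]

def encode_dests(consumers: Sequence[str]) -> DestFields:
    """Pack an output's consumers into the two dest fields."""
    if len(consumers) > MAX_DESTS:
        raise ValueError("more than two consumers cannot be encoded")
    padded: List[Optional[str]] = list(consumers) + [None] * (MAX_DESTS - len(consumers))
    return padded[0], padded[1]

def send_tokens(dests: DestFields, has_data: bool) -> List[str]:
    """Consumers that receive a token from this output in the current cycle."""
    if not has_data:
        return []
    return [d for d in dests if d is not None]

dests = encode_dests(["PE4.src0"])
print(dests)                     # ('PE4.src0', None)
print(send_tokens(dests, True))  # ['PE4.src0']
```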

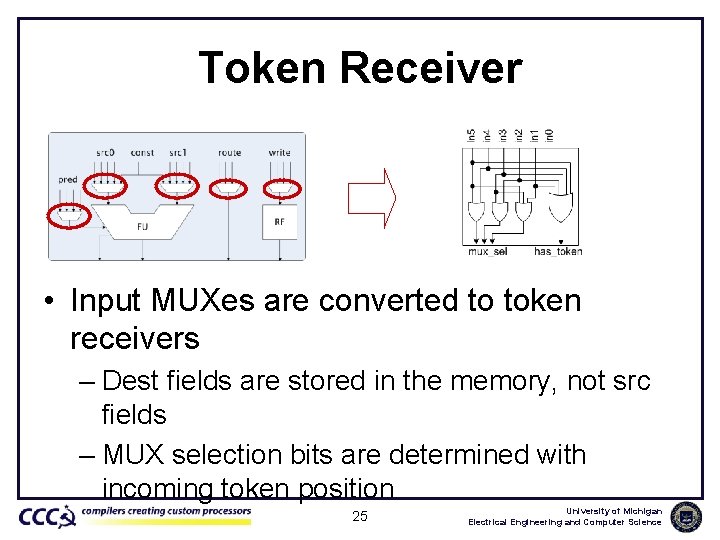

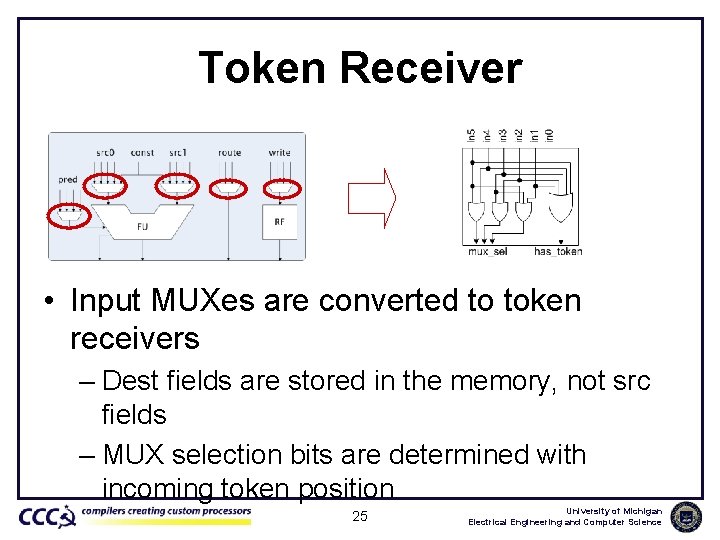

Token Receiver

- Input MUXes are converted to token receivers
  - Dest fields are stored in memory, not src fields
  - MUX selection bits are determined by the position of the incoming token
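Because src fields are not stored, a receiver has to infer its MUX setting. A minimal sketch, assuming one token line per MUX input and at most one token per MUX per cycle:

```python
from typing import Optional, Sequence

def mux_select(tokens: Sequence[bool]) -> Optional[int]:
    """Derive a MUX's selection from the position of its incoming token.

    Returns the index of the input carrying a token, or None when no data
    arrives (the MUX needs no configuration). Assumes the schedule never
    sends two tokens to the same MUX in one cycle.
    """
    hits = [i for i, tok in enumerate(tokens) if tok]
    if not hits:
        return None
    if len(hits) > 1:
        raise ValueError("conflicting tokens at one MUX")
    return hits[0]

print(mux_select([False, False, True, False]))  # 2
```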

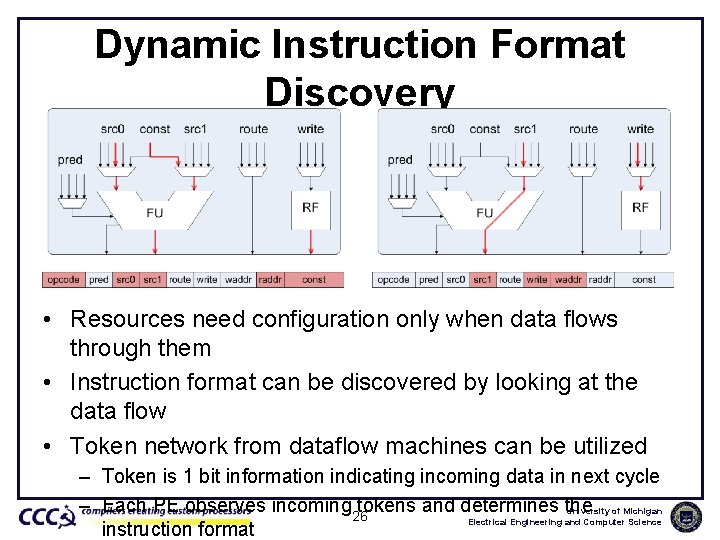


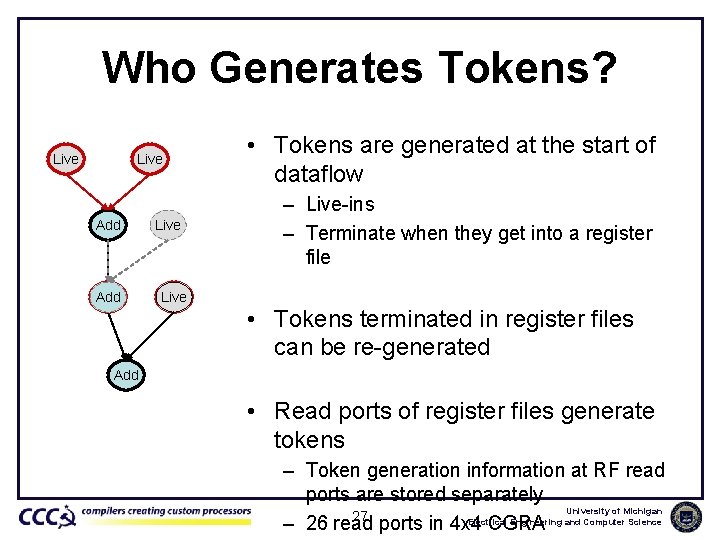

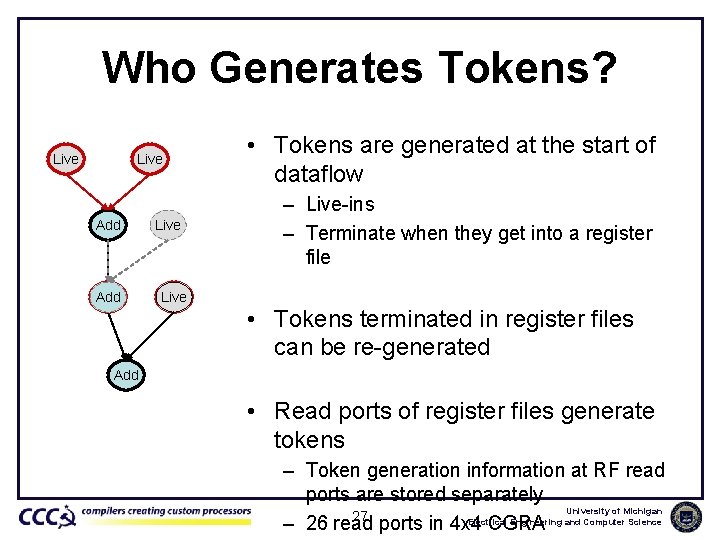

Who Generates Tokens?

(Figure labels: Live, Add, RF)

- Tokens are generated at the start of the dataflow
  - At live-ins
  - They terminate when they enter a register file
- Tokens terminated in register files can be regenerated
- Register file read ports generate tokens
  - Token generation information for RF read ports is stored separately
  - 26 read ports in a 4 x 4 CGRA

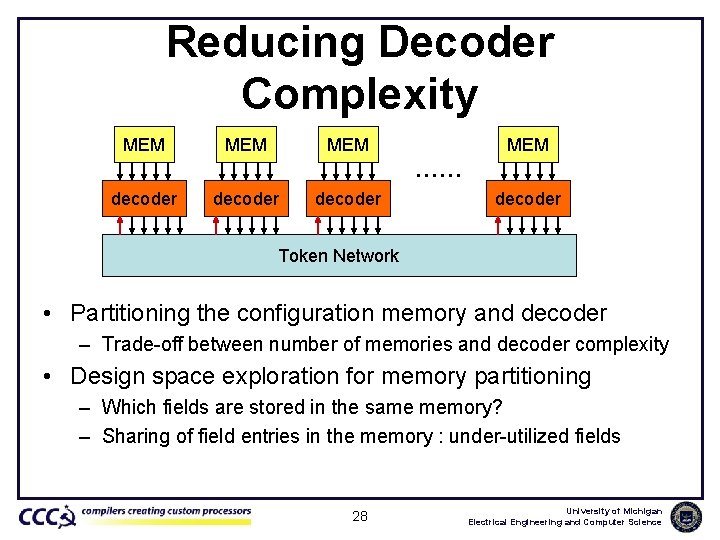

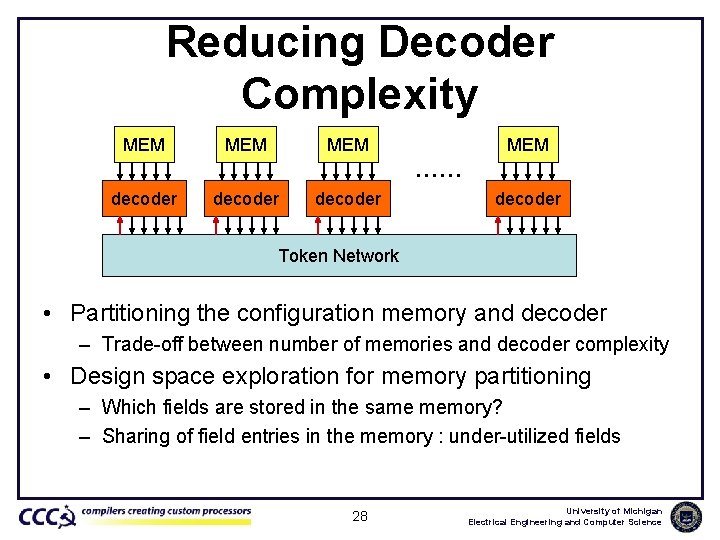

Reducing Decoder Complexity

(Figure: configuration memories MEM, MEM, …… feeding the decoder and the token network)

- Partitioning the configuration memory and decoder
  - Trade-off between the number of memories and decoder complexity
- Design space exploration for memory partitioning
  - Which fields are stored in the same memory?
  - Sharing of field entries in memory for under-utilized fields

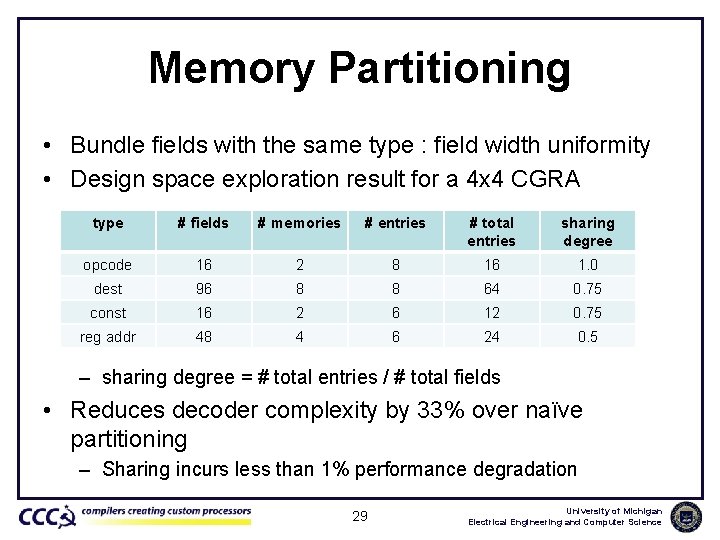

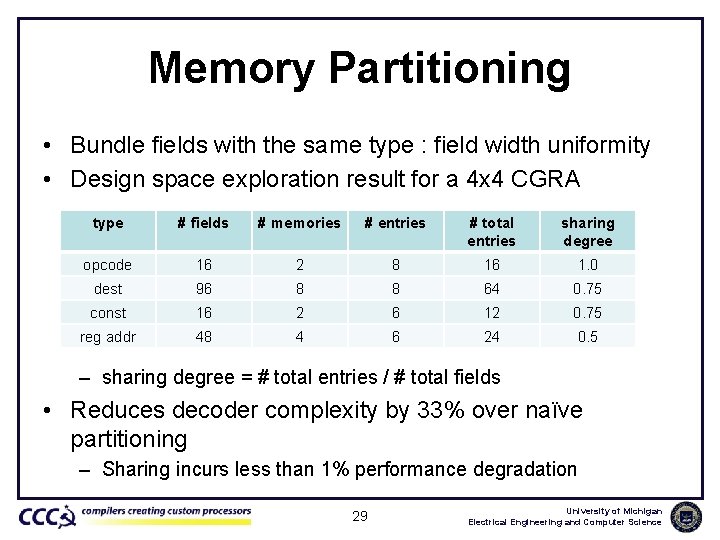

Memory Partitioning

- Bundle fields of the same type, for uniform field width
- Design space exploration result for a 4 x 4 CGRA:

| type | # fields | # memories | # entries per memory | # total entries | sharing degree |
|---|---|---|---|---|---|
| opcode | 16 | 2 | 8 | 16 | 1.0 |
| dest | 96 | 8 | 8 | 64 | 0.75 |
| const | 16 | 2 | 6 | 12 | 0.75 |
| reg addr | 48 | 4 | 6 | 24 | 0.5 |

Sharing degree = # total entries / # total fields.

- Reduces decoder complexity by 33% over naïve partitioning
  - Sharing incurs less than 1% performance degradation
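The sharing-degree definition can be applied row by row to the design space exploration table. A short sketch that recomputes it from the field, memory, and entry counts given on the slide:

```python
# Rows of the design space exploration table:
# (type, # fields, # memories, # entries per memory, listed sharing degree)
ROWS = [
    ("opcode", 16, 2, 8, 1.0),
    ("dest", 96, 8, 8, 0.75),
    ("const", 16, 2, 6, 0.75),
    ("reg addr", 48, 4, 6, 0.5),
]

def sharing_degree(fields: int, memories: int, entries: int) -> float:
    """Sharing degree = # total entries / # total fields."""
    return memories * entries / fields

for name, fields, mems, entries, listed in ROWS:
    degree = sharing_degree(fields, mems, entries)
    print(f"{name}: {mems * entries} entries, degree {degree:.2f} (listed {listed})")
```

The opcode, const, and reg addr rows reproduce the listed values. For the dest row the formula gives 64 / 96 ≈ 0.67 rather than 0.75, so one of that row's figures may have been mis-transcribed.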