
A Dashboard Report: Value Added Through Drill-Downs, Peer Comparisons, and Significance Tests

Mary M. Sapp, Ph.D., Assistant Vice President, Office of Planning & Institutional Research, University of Miami

SAIR, October 24, 2005

Definition of a Dashboard

A dashboard is a visual display of Key Performance Indicators (KPIs) presented in a concise, intuitive format that allows decision makers to monitor institutional performance at a glance.

Dashboard Characteristics
- Provides visual display of important Key Performance Indicators (KPIs)
- Uses concise, intuitive, “at-a-glance” format (icons and colors)
- Offers high-level summary (reduces voluminous data)
- Displays “gauges” to monitor key areas

Uses of a Dashboard
- Provides quick overview of institutional performance
- Monitors progress of institution over time (trends)
- Alerts user to problems (colors indicate positive/negative data)
- Highlights important trends and/or comparisons with peers
- Allows access to supporting analytics when needed to understand KPI results (drill down)

Predecessors & Related Approaches
- Executive Information Systems and their predecessor, Decision Support Systems
- On-Line Analytical Processing (OLAP) associated with data warehouses
- Balanced scorecards
- Key success factors
- Benchmarking
- Key performance indicators

Why Do a Dashboard?

Senior managers:
- Want to monitor institutional performance
- Are very busy, with little time to study reports
- Value reports that clearly show conclusions
- Appreciate an overview, with indicators from different areas in one place
- Use both trends and peer data

“What you measure is what you get.” (Robert S. Kaplan and David P. Norton)

Impetus for Next Generation Dashboard Report
- Session at Winter 2004 HEDS conference
- Representatives from 4 HEDS institutions shared dashboard reports
- Presentation & discussion prompted ideas about features that might add value
- Dashboard report described here developed as a result

An example of how a conference session led to a project that would not have been done otherwise.

Characteristics of Dashboards Presented at HEDS Conference
- All used a single page (though some had a 2nd page for definitions & instructions)
- All presented trend data (changes over 1, 5, 6, and 10 years)
- All used up/down arrows, </> icons, or “Up”/“Down” to show direction of trends
- Three displayed minimum and maximum values for the trend period
- Three used colors to show whether trends were positive or negative
- One used peer data

Questions Generated by HEDS Dashboards and Proposed Solutions

1. Concise or detailed?
   - HEDS: Laments about not being able to provide more detail (“senior administrators should want to see more”)
   - Reaction: Sympathized with this viewpoint, but have learned most senior administrators want summaries, not detail
   - Next Generation Dashboard: Keep concise format, plus links to optional graphs & tables

Issues that Came Up in Discussion at HEDS, and UM Solutions

2. Trends or peer data?
   - HEDS: All four dashboards used trend data; one also used peer data
   - Reaction: UM values peer data to support benchmarking
   - Next Generation Dashboard: Use both peer and trend data

3. When should an icon for a trend or a difference from peers appear?
   - HEDS: Dashboards seemed to display icons for all non-zero differences
   - Reaction: Didn’t want small differences to be treated as real changes
   - Next Generation Dashboard: Use p-values from regression and t-tests to control display of icons for trends and peer comparisons

4. Include minima and maxima?
   - HEDS: Three displayed minima and maxima over the trend period
   - Reaction: UM’s senior VP decided this was too cluttered
   - Next Generation Dashboard: Shows trends of own institution and 25th and 75th percentiles of peers, with no maxima or minima
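The peer band on each trend graph (25th and 75th percentiles of peers) can be sketched in a few lines of Python. This is a minimal illustration, not the author's Excel workbook. It makes three assumptions:

- Each year's peer values are available as a plain list.
- `peer_band` is a hypothetical helper name.
- The percentiles come from the standard library's `statistics.quantiles(..., method="inclusive")`, which interpolates linearly between sorted values. The source does not say how its percentiles were calculated.

```python
import statistics
from typing import Dict, List, Tuple


def peer_band(peers_by_year: Dict[int, List[float]]) -> Dict[int, Tuple[float, float]]:
    """Return the (25th, 75th) percentile of peer values for each year.

    Hypothetical helper: percentiles use linear interpolation
    (statistics.quantiles with method="inclusive"), an assumed choice.
    """
    band: Dict[int, Tuple[float, float]] = {}
    for year in sorted(peers_by_year):
        values = peers_by_year[year]
        if len(values) < 2:
            raise ValueError(f"need at least two peer values for {year}")
        # n=4 returns the three quartile cut points: Q1, median, Q3.
        q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
        band[year] = (q1, q3)
    return band
```

With twelve peer values of 1 through 12 for a single year, `peer_band` returns `(3.75, 9.25)` for that year.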

Unique Aspects of Next Generation Dashboard
- Provide drill-down links to graphs and tables for more detail, if desired
- Provide peer data in addition to trends
- Use regression (rather than maxima and minima) to determine direction of trends
- Use statistical significance of slope (rather than just difference) to generate trend icons
- Use t-tests to generate peer comparison summary

Functions like adding a “Global Positioning System (GPS)” to your dashboard.

Implementation
- Two dashboard reports: student indicators and faculty/financial indicators
- 17 and 21 KPIs, respectively, each report on a single page
- Box for each KPI with current value, arrows to show trends, and text to show relation to peers
- Links to more detailed graphs and tables

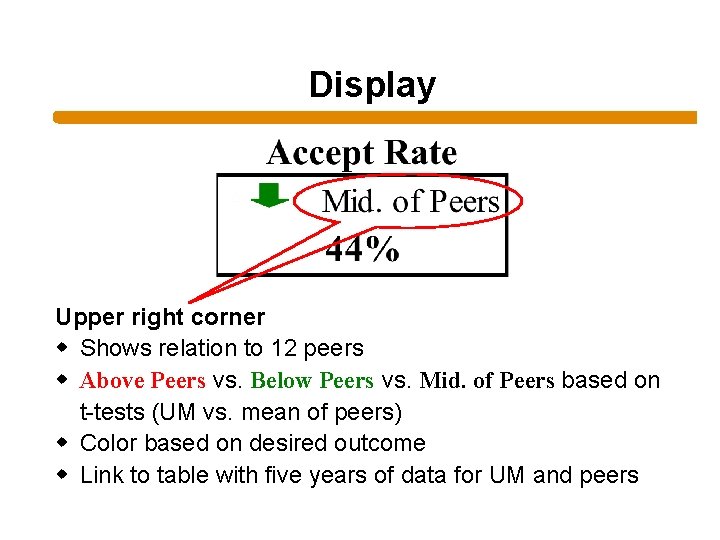

Indicator Display

Current value: shown in each indicator box

Upper left corner:
- Up arrow, down arrow, or horizontal line
- Shows direction of UM trend for last 6 years
- Up vs. down vs. horizontal based on slope of regression & p-values
- Color based on desired outcome
- Link to graph with trends for UM and 25th & 75th percentiles for peers
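The arrow rule (regression slope plus p-value) can be illustrated with the minimal Python sketch below. It is not the author's Excel macro. It rests on these assumptions:

- Yearly values are listed oldest first and are equally spaced.
- The fit is ordinary least squares against a year index.
- An arrow appears only when the two-sided p-value of the slope is below 0.05. The source does not state its threshold.
- The p-value comes from the closed-form Student's t tail for integer degrees of freedom, standing in for Excel's t-distribution functions.
- `t_two_sided_p`, `trend_direction`, and the labels "up", "down", and "flat" are hypothetical names.

```python
import math
from typing import Sequence


def t_two_sided_p(t: float, df: int) -> float:
    """Two-sided p-value P(|T| >= |t|) for Student's t with integer df."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    theta = math.atan(abs(t) / math.sqrt(df))
    s, c = math.sin(theta), math.cos(theta)
    if df % 2 == 0:
        # Even df: P(|T| < t) = sin(theta) * (1 + (1/2)c^2 + (1*3)/(2*4)c^4 + ...)
        series, term = 0.0, 1.0
        for k in range(1, df // 2 + 1):
            series += term
            term *= c * c * (2 * k - 1) / (2 * k)
        central = s * series
    else:
        # Odd df: P(|T| < t) = (2/pi) * (theta + sin(theta) * (c + (2/3)c^3 + ...))
        series, term = 0.0, c
        for k in range(1, (df - 1) // 2 + 1):
            series += term
            term *= c * c * (2 * k) / (2 * k + 1)
        central = (2 / math.pi) * (theta + s * series)
    # Clamp to [0, 1] to guard against rounding.
    return min(1.0, max(0.0, 1.0 - central))


def trend_direction(values: Sequence[float], alpha: float = 0.05) -> str:
    """Return "up", "down", or "flat" for a yearly series listed oldest first."""
    n = len(values)
    if n < 3:
        raise ValueError("need at least 3 years to test a slope")
    x_bar = (n - 1) / 2  # mean of year indices 0..n-1
    y_bar = sum(values) / n
    sxx = sum((x - x_bar) ** 2 for x in range(n))
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in enumerate(values))
    se = math.sqrt(sse / (n - 2) / sxx)  # standard error of the slope
    if se == 0.0:
        p = 0.0 if slope != 0.0 else 1.0  # perfect fit: no residual noise
    else:
        p = t_two_sided_p(slope / se, n - 2)
    if p >= alpha:
        return "flat"  # not significant: show a horizontal line
    return "up" if slope > 0 else "down"
```

Take a series such as `[50, 52, 49, 51, 50, 52]`. Its slope is slightly positive, yet the function returns "flat". This is the point of gating the icon on significance rather than on any non-zero change.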

Upper right corner:
- Shows relation to 12 peers
- Above Peers vs. Below Peers vs. Mid. of Peers based on t-tests (UM vs. mean of peers)
- Color based on desired outcome
- Link to table with five years of data for UM and peers
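A minimal sketch of the peer label follows. It is written under assumptions, because the source says only "t-tests (UM vs. mean of peers)":

- The test is one-sample: the 12 peer values for the current year form the sample, and UM's value is the hypothesized mean.
- The cutoff is an assumed 0.05.
- The two-sided p-value uses the closed-form Student's t tail for integer degrees of freedom, included so the block runs on its own.
- `t_two_sided_p` and `peer_label` are hypothetical names.

```python
import math
import statistics
from typing import Sequence


def t_two_sided_p(t: float, df: int) -> float:
    """Two-sided p-value P(|T| >= |t|) for Student's t with integer df."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    theta = math.atan(abs(t) / math.sqrt(df))
    s, c = math.sin(theta), math.cos(theta)
    if df % 2 == 0:
        series, term = 0.0, 1.0
        for k in range(1, df // 2 + 1):
            series += term
            term *= c * c * (2 * k - 1) / (2 * k)
        central = s * series
    else:
        series, term = 0.0, c
        for k in range(1, (df - 1) // 2 + 1):
            series += term
            term *= c * c * (2 * k) / (2 * k + 1)
        central = (2 / math.pi) * (theta + s * series)
    return min(1.0, max(0.0, 1.0 - central))


def peer_label(um_value: float, peer_values: Sequence[float], alpha: float = 0.05) -> str:
    """Return "Above Peers", "Below Peers", or "Mid. of Peers".

    Assumed one-sample t-test: is the peer mean different from UM's value?
    """
    n = len(peer_values)
    if n < 2:
        raise ValueError("need at least two peer values")
    peer_mean = statistics.mean(peer_values)
    se = statistics.stdev(peer_values) / math.sqrt(n)  # standard error of the peer mean
    diff = um_value - peer_mean
    if se == 0.0:
        p = 0.0 if diff != 0.0 else 1.0  # all peers identical
    else:
        p = t_two_sided_p(diff / se, n - 1)
    if p >= alpha:
        return "Mid. of Peers"
    return "Above Peers" if diff > 0 else "Below Peers"
```

Testing against the spread of the peers, rather than against a single average, keeps the label at "Mid. of Peers" when UM's value falls within ordinary peer variation.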

Macros used to display:
- Data for year chosen
- Direction of arrow icons
- Color of arrow (green for positive, red for negative, black for neutral)
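Once each indicator records whether higher values are desirable, the color rule in the list above becomes a small lookup. Below is a hypothetical Python sketch, not the author's macro. It assumes arrow directions are labeled "up", "down", and "flat". It also assumes a `higher_is_better` flag captures the desired outcome; the source does not name such a field.

```python
def arrow_color(direction: str, higher_is_better: bool) -> str:
    """Map an arrow direction ("up", "down", "flat") to a display color.

    Green marks a significant change in the desired direction, red a
    significant change in the undesired direction, black a flat trend.
    """
    if direction == "flat":
        return "black"
    if direction not in ("up", "down"):
        raise ValueError(f"unknown direction: {direction!r}")
    # The change is an improvement when its direction matches the desirable one.
    improving = (direction == "up") == higher_is_better
    return "green" if improving else "red"
```

Consider an indicator where lower values are better. Under this sketch, a significant downward trend shows a green down arrow.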

Spreadsheet
- Dashboard developed as an Excel spreadsheet, with one sheet for the dashboard report and one sheet for each indicator (graph, peer data, and raw data)
- Macro updates year and controls display of arrow icons (direction and color)
- Spreadsheet with template for the dashboard and instructions for customizing it shared upon request (leave your card)
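The year-update step can be sketched as picking the chosen year's value plus the six-year window that feeds the trend test. This is a hypothetical Python stand-in for the Excel macro. It assumes each indicator's raw data is available as a year-to-value mapping. It also skips years with no data, which is an assumed policy rather than one stated in the source.

```python
from typing import Dict, List, Tuple


def select_year(series: Dict[int, float], year: int, span: int = 6) -> Tuple[float, List[float]]:
    """Return (current value, values for the `span` years ending at `year`).

    Hypothetical helper: years missing from `series` are skipped.
    """
    if year not in series:
        raise KeyError(f"no data for {year}")
    # Oldest first, so the window can go straight into a trend regression.
    window = [series[y] for y in range(year - span + 1, year + 1) if y in series]
    return series[year], window
```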

Indicators Used
- Selected with input from the Provost, Vice President for Enrollments, Senior Vice President for Business & Finance, and Treasurer
- Mandatory criterion: availability of peer data; sources:
  - CDS data from U.S. News (Peterson’s/Fiske for earlier years)
  - IPEDS
  - National Association of College and University Business Officers
  - Council for Aid to Education
  - National Science Foundation
  - Moody’s: average A data used instead of individual peers
  - National Academies
- See last page of handout for list of indicators used by UM and HEDS institutions

Dashboard Complements Existing Key Success Factors (KSF) Report
- Distinction between monitoring “critical” measures (tactical/operational, usually updated on a daily, weekly, or monthly basis) and tracking strategic outcomes (key to long-term goals, updated less often)
- Both KSF & Dashboard presented to senior administrators in Operations Planning Meeting (KSF bi-monthly and each Dashboard annually)

KSF Monitors Changes for Critical Tactical KPIs
- KPIs in KSF usually related to process (e.g., admissions, revenue sources, and expenditures in various categories)
- KSF limited to indicators that change on a continuous (e.g., daily, monthly) basis, as captured at the end of each month

Dashboard Monitors Strategic KPIs
- KPIs related to effectiveness and quality (student quality and success, faculty characteristics, peer evaluations)
- Dashboard KPIs not included in KSF because measured on annual rather than continuous basis
- Dashboard KPIs limited to indicators for which peer data are available

Future Directions and Adaptations
- Adapt Dashboard format for UM’s KSF report
- Include targets and significant differences from targets instead of/in addition to peers
- Make available online
- Link directly to various data sources (e.g., data warehouse)
- Apply at the school or department level
- Allow individuals to personalize their own databases to include KPIs directly relevant to them

Implementing Next Generation Dashboard at Other Institutions
- Session focus is on effective presentation rather than integration of data into report (low-tech spreadsheet, with tables of existing data copied in)
- Spreadsheet itself can be used, or some of the key concepts can be adapted in other situations
- Author will e-mail spreadsheet template and instructions to those interested

Choosing KPIs
- Choosing which KPIs to use is critical
- Small amount of space, so choose carefully
- Appropriateness of KPIs is institution-specific
  - Critical or strategic focus?
  - Need to interview key stakeholders to determine what data are important for them
- Use different types of KPIs (e.g., quality, process, financial, personnel) to provide balanced perspective

Demo of Dashboard Spreadsheet

Copies of spreadsheet available upon request; e-mail pliu@miami.edu
