A Comparison of Data Science Systems Carlos Ordonez

- Slides: 29

A Comparison of Data Science Systems
Carlos Ordonez
University of Houston, USA

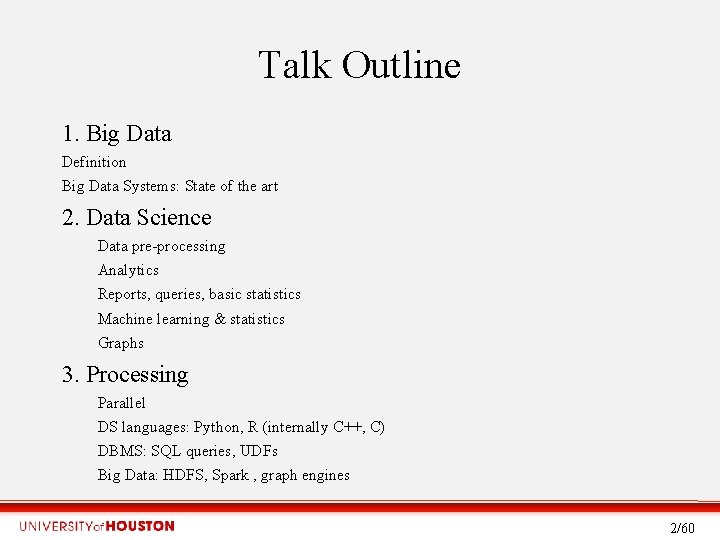

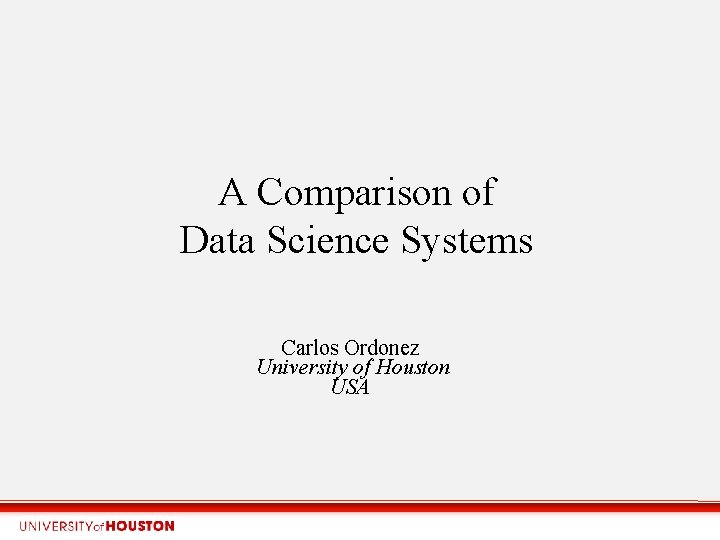

Talk Outline
1. Big Data
   • Definition
   • Big Data Systems: State of the art
2. Data Science
   • Data pre-processing
   • Analytics
     – Reports, queries, basic statistics
     – Machine learning & statistics
     – Graphs
3. Processing
   • Parallel
   • DS languages: Python, R (internally C++, C)
   • DBMS: SQL queries, UDFs
   • Big Data: HDFS, Spark, graph engines

Acknowledgments
• Anirban Mondal, Ashoka University
• Mike Stonebraker, MIT (DBMS-centric)
• Divesh Srivastava, AT&T (Theory)

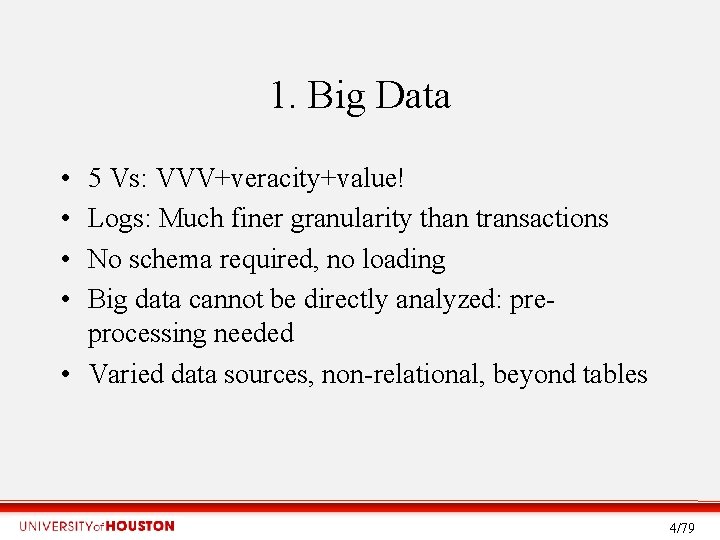

1. Big Data
• 5 Vs: VVV (volume, velocity, variety) + veracity + value!
• Logs: much finer granularity than transactions
• No schema required, no loading
• Big data cannot be directly analyzed: preprocessing needed
• Varied data sources, non-relational, beyond tables

Big Data Systems: today
• oldSQL, NoSQL, newSQL: SQL wins for queries
• Some ACID guarantees, OLTP gradually stronger
• Web-scale data not universal; many practical problems are smaller
• Data(base) integration and cleaning harder than relational databases
• Many applications
• SQL remains the de facto query language
• Python becoming universal glue

2. Data Science
• Evolution from data mining and big data analytics
• Interdisciplinary: math, programming, statistics, machine learning
• Includes big data and small data
• Deep learning becoming the norm
• Dynamic computing environment
• SQL and Hadoop are tools, not the unique system

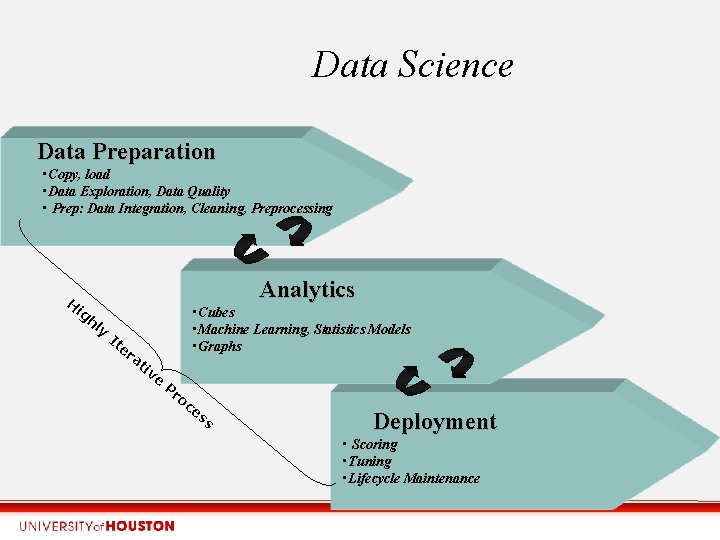

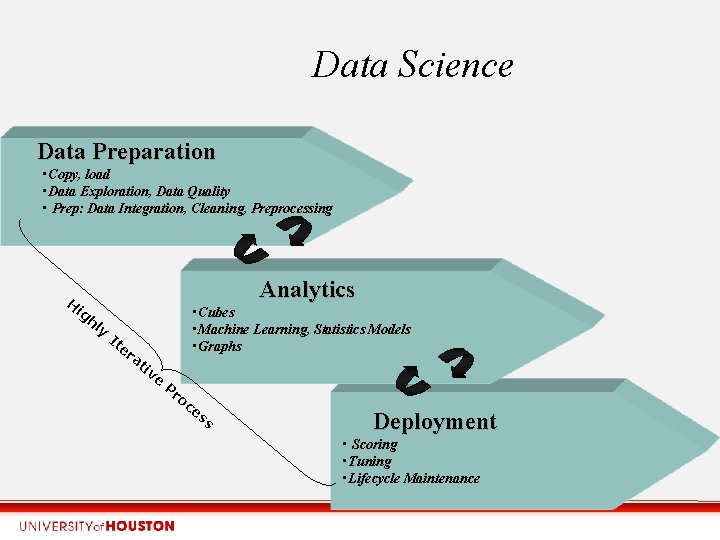

Data Science: Highly Iterative Process
Data Preparation
• Copy, load
• Data Exploration, Data Quality
• Prep: Data Integration, Cleaning, Preprocessing
Analytics
• Cubes
• Machine Learning, Statistics Models
• Graphs
Deployment
• Scoring
• Tuning
• Lifecycle Maintenance

Data pre-processing
Ignored and misunderstood aspects
• Preparing data takes a lot of time. SQL helps but requires expertise; alternatives include statistical programming in R or SAS, spreadsheet macros, and custom applications in C++/Java
• A lot of data: tabular data from logs, DBMS, spreadsheets
• Emphasis on large n, but many problems have many “small” files
• The data set, built with a target in mind, is generally much smaller than raw data
• Model computation has received too much attention; pre-processing and deployment need more attention

Analytics
• Simple:
  – Exploration: queries, cubes, BI, OLAP
  – Descriptive statistics (histograms, mu/std, quantiles, plots) & statistical tests (means, chi-square, ...)
• Complex:
  – Machine Learning, subsuming statistics, and optimization
  – Graphs (path, connectivity, cliques)
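The descriptive statistics named on this slide can be sketched with Python's standard library alone. The sample values and the bin width of 2 are made up for illustration and are not from the talk.

```python
import statistics
from collections import Counter

# Hypothetical sample: one numeric attribute of a small data set.
x = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]

mu = statistics.fmean(x)                        # mean
std = statistics.pstdev(x)                      # population standard deviation
q1, median, q3 = statistics.quantiles(x, n=4)   # quartiles (default method)

# Equi-width histogram with bin width 2: bin k covers [2k, 2k + 2).
histogram = Counter(int(v // 2) for v in x)

print(mu, std, median, dict(histogram))
```

All of these need only one or two scans of the column, which is why they sit on the "simple" side of the slide.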

Machine Learning Models
• Unsupervised:
  – math: simpler
  – tasks: clustering, dimensionality reduction, time series
  – models: KM, EM, PCA/SVD, FA, HMM
• Supervised:
  – math: more tuning and more complex validation than unsupervised
  – tasks: classification, regression, time series
  – models: decision trees, Naïve Bayes, linear/logistic regression, SVM, deep neural nets

Machine Learning and Statistics challenges
• Large n or d: cannot fit in RAM, minimize I/O
• Multidimensional
  – d: 10s-1000s of dimensions
  – feature selection and dimensionality reduction
• Represented & computed with matrices & vectors
  – data set: mixed attributes
  – model: numeric: matrices; discrete: histograms
  – intermediate computations: matrices, histograms, equations
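One way to cope with a data set that does not fit in RAM is to scan it once and keep only small summary matrices. The sketch below assumes numeric rows of dimension d arriving from a generator (a stand-in for a file on disk). The names `summarize`, `n`, `L`, and `Q` are illustrative choices, not taken from the slides.

```python
def summarize(rows, d):
    """Scan rows once; keep only n, L (linear sums), Q (sums of cross-products)."""
    n = 0
    L = [0.0] * d
    Q = [[0.0] * d for _ in range(d)]
    for x in rows:                      # rows may be a generator reading from disk
        n += 1
        for a in range(d):
            L[a] += x[a]
            for b in range(d):
                Q[a][b] += x[a] * x[b]
    return n, L, Q

def mean_and_covariance(n, L, Q):
    """Derive the mean vector and (population) covariance matrix from the summary."""
    d = len(L)
    mu = [L[a] / n for a in range(d)]
    cov = [[Q[a][b] / n - mu[a] * mu[b] for b in range(d)] for a in range(d)]
    return mu, cov

# Hypothetical data: four 2-dimensional points produced lazily.
rows = ((float(i), 2.0 * i + 1.0) for i in range(1, 5))
n, L, Q = summarize(rows, d=2)
mu, cov = mean_and_covariance(n, L, Q)
print(n, mu, cov)
```

Because n, L, and Q are plain sums, summaries computed on separate partitions can be added together. The formula Q/n - mu·muᵀ can lose precision when values are large relative to their variance, so this is a sketch rather than a numerically robust routine.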

Machine Learning Algorithms
• Iterative behavior on data set X:
  – Few: one pass, fixed # passes (SVD, regression, NB)
  – Most: iterative, convergence (k-means, CN 5.0, SVM)
• Time complexity:
• Research issues:
  – Faster convergence
  – Parallel processing, gradually more CPU and GPU
  – Main memory coming back
  – time complexity may increase with disk
  – incremental and online learning
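The "iterative, convergence" behavior can be illustrated with k-means. This is a minimal in-memory sketch of Lloyd's algorithm, assuming points are tuples of floats and the initial centroids are supplied by the caller. The function name, the sample points, and the stopping rule (no centroid moves) are choices made here for illustration.

```python
def kmeans(points, centroids, max_iter=100):
    """Lloyd's algorithm: each iteration is one full pass over the data set."""
    for iteration in range(1, max_iter + 1):
        # Assignment step: nearest centroid by squared Euclidean distance.
        clusters = [[] for _ in centroids]
        for p in points:
            j = min(range(len(centroids)),
                    key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centroids[c])))
            clusters[j].append(p)
        # Update step: each centroid becomes the mean of its cluster.
        new_centroids = [
            tuple(sum(col) / len(cl) for col in zip(*cl)) if cl else centroids[j]
            for j, cl in enumerate(clusters)
        ]
        if new_centroids == centroids:   # converged: no centroid moved
            return centroids, iteration
        centroids = new_centroids
    return centroids, max_iter

# Hypothetical data: two well-separated groups in 2 dimensions.
points = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (8.0, 8.0), (9.0, 8.0), (8.0, 9.0)]
centroids, passes = kmeans(points, [(0.0, 0.0), (10.0, 10.0)])
print(centroids, passes)
```

The number of passes is not known in advance, which is the contrast with one-pass methods: when X lives on disk, every extra iteration is another full scan.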

Graph Problems
• G=(V, E), n=|V|
• Large n, but G sparse
• Typical problems (polynomial, combinatorial, NP-complete):
  – Measures: eccentricity, diameter
  – transitive closure, reachability
  – Connected components
  – shortest/critical path
  – neighborhood analysis
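As an example of one of the polynomial problems listed, connected components of a sparse undirected graph can be found with breadth-first search over an adjacency list. The sketch assumes vertices are numbered 0..n-1 and edges are given as pairs. The function name and sample graph are illustrative.

```python
from collections import defaultdict, deque

def connected_components(n, edges):
    """Return the connected components of an undirected graph as sorted vertex lists."""
    adj = defaultdict(list)              # adjacency list: space O(n + |E|), suits sparse G
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    seen = [False] * n
    components = []
    for start in range(n):
        if seen[start]:
            continue
        seen[start] = True
        queue, component = deque([start]), []
        while queue:                     # BFS from the first unseen vertex
            u = queue.popleft()
            component.append(u)
            for v in adj[u]:
                if not seen[v]:
                    seen[v] = True
                    queue.append(v)
        components.append(sorted(component))
    return components

# Hypothetical graph: a path 0-1-2, an edge 3-4, and an isolated vertex 5.
components = connected_components(6, [(0, 1), (1, 2), (3, 4)])
print(components)
```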

Graph algorithms
• Alternative approaches: combinatorial vs matrices
• Complexity
  – P, but high polynomial degree
  – NP-complete, NP-hard
  – Exponential
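The matrix-based approach can be illustrated with transitive closure computed on a Boolean adjacency matrix using Warshall's algorithm. This is a sketch chosen here as an example of a polynomial but costly method; it is not necessarily the algorithm the talk had in mind.

```python
def transitive_closure(A):
    """Warshall's algorithm on a Boolean adjacency matrix: O(n^3) time, O(n^2) space."""
    n = len(A)
    R = [row[:] for row in A]            # copy so the input matrix is not modified
    for k in range(n):                   # allow vertex k as an intermediate stop
        for i in range(n):
            if R[i][k]:
                for j in range(n):
                    if R[k][j]:
                        R[i][j] = True
    return R

# Hypothetical directed graph: 0 -> 1 -> 2, vertex 3 isolated.
A = [[False] * 4 for _ in range(4)]
A[0][1] = True
A[1][2] = True
R = transitive_closure(A)
print(R[0][2], R[2][0])
```

The cubic cost applies regardless of how sparse G is, which is one reason combinatorial (traversal-based) methods are often preferred for large sparse graphs.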

3. Processing
3.1 Parallel processing
3.2 Data Science Languages
3.3 DBMS
3.4 Hadoop Big Data
3.5 HPC

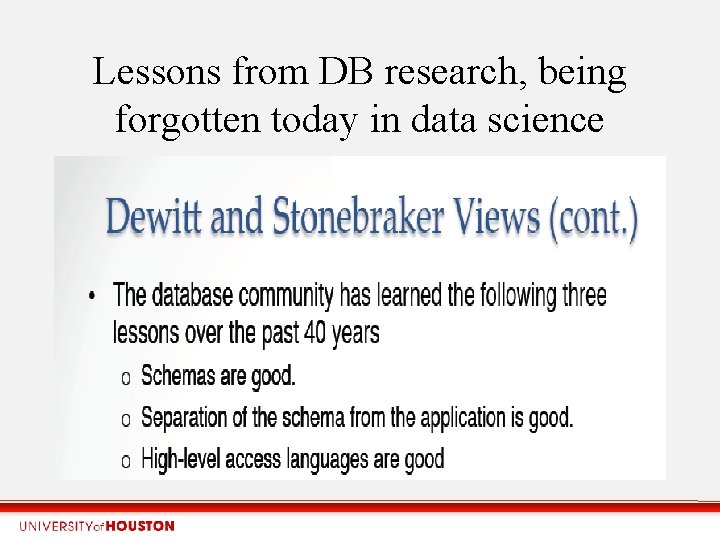

Lessons from DB research, being forgotten today in data science

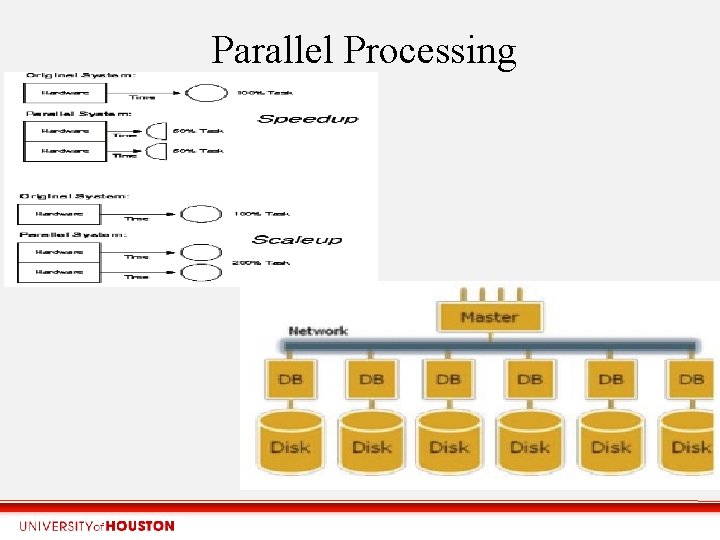

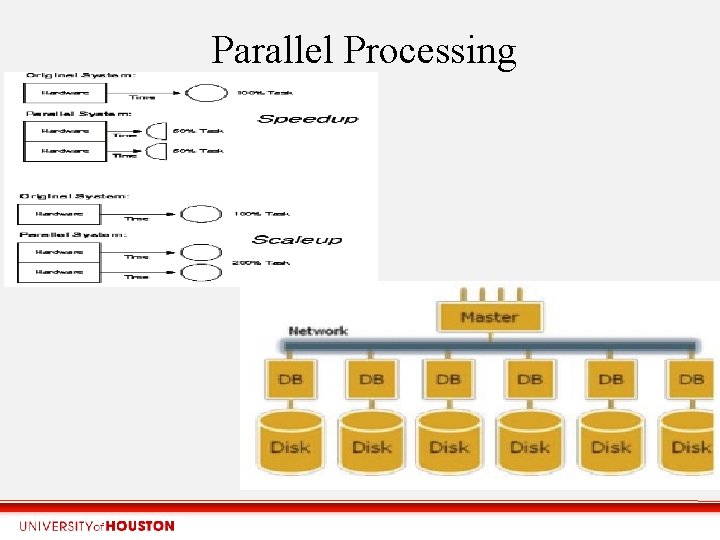

Parallel Processing

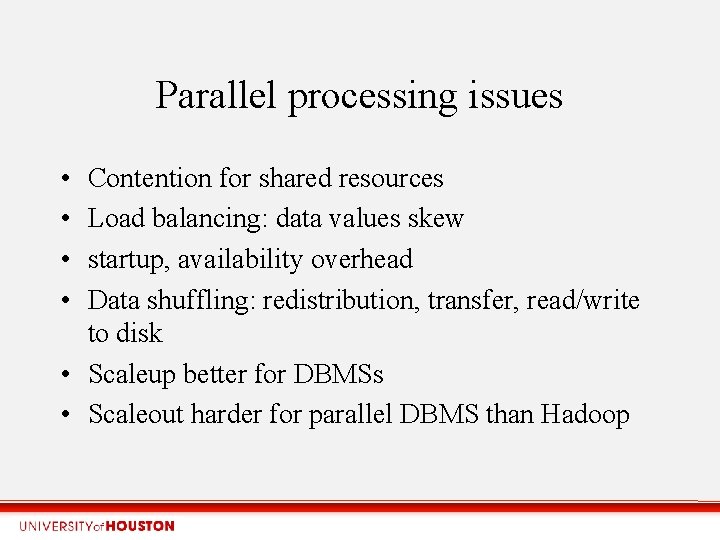

Parallel processing issues
• Contention for shared resources
• Load balancing: data value skew; startup, availability overhead
• Data shuffling: redistribution, transfer, read/write to disk
• Scaleup better for DBMSs
• Scaleout harder for parallel DBMS than Hadoop
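The effect of data value skew on load balancing can be seen in a small simulation. The sketch assumes rows are assigned to workers by taking the key modulo the number of workers (a simple stand-in for hash partitioning). The key distribution is invented for illustration.

```python
from collections import Counter

def partition_sizes(keys, workers):
    """Count rows per worker under modulo (hash-style) partitioning on the key."""
    return Counter(k % workers for k in keys)

# Hypothetical skewed column: key 0 appears in 90 of 100 rows.
keys = [0] * 90 + list(range(1, 11))
sizes = partition_sizes(keys, workers=4)

average = len(keys) / 4
skew = max(sizes.values()) / average    # 1.0 would be a perfectly balanced load
print(dict(sizes), skew)
```

With this input one worker receives most of the rows, so adding workers does little for the elapsed time: the job finishes only when the most loaded worker does.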

Data Science languages
• Python, growing stronger
• R, still important due to long-time research
• Pure C++, Java losing ground, libraries wrapped in Python and R
• JavaScript a front end on the web, doing visualization and some calculations

Data Science languages: plain files, sequential processing
• Statistical and DS languages (e.g. Python, R):
  – exported flat files; sometimes proprietary file formats
  – Memory-based (processing data records, models, internal data structures)
• Programming languages (arrays, programming control statements, libraries)
• Main limitation: large n, but gradually solved with distributed processing
• Languages: Py, R, SAS, SPSS, Matlab, WEKA

Analytics inside the DBMS? Moving away
• Large, but not huge data volumes: potentially better results with larger amounts of data; less processing time with queries
• Deep learning outside DBMS realm
• Losing importance: data redundancy; eliminating proprietary data structures; simplifying data management; security
• Reasons: SQL is not as powerful as C++, limited mathematical functionality, complex DBMS architecture makes extending source code hard

• Assumptions: in-DBMS
  – data records are in the DBMS; exporting cumbersome/inefficient
  – data set or graph stored as a table
• Programming alternatives:
  – SQL queries and UDFs: SQL code generation (JDBC), precompiled UDFs. Also: stored procedures (SP), embedded SQL, cursors
  – Internal C code (direct access to file system and RAM): hard, non-portable
• DBMS advantages:
  – important: storage, queries, security
  – secondary: recovery, concurrency control, integrity, OLTP
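The "SQL queries and UDFs" alternative can be sketched with SQLite, which ships with Python and lets an aggregate UDF be registered from the host language. SQLite is only a stand-in for a full DBMS here. The table `X`, its columns, and the function name `sumsq` are hypothetical.

```python
import sqlite3

class SumOfSquares:
    """Aggregate UDF: accumulates the sum of x*x over the rows of a group."""
    def __init__(self):
        self.total = 0.0

    def step(self, value):               # called once per row
        if value is not None:
            self.total += value * value

    def finalize(self):                  # called once per group
        return self.total

conn = sqlite3.connect(":memory:")
conn.create_aggregate("sumsq", 1, SumOfSquares)

# The data set is stored as a table inside the DBMS.
conn.execute("CREATE TABLE X (i INTEGER PRIMARY KEY, g TEXT, x REAL)")
conn.executemany("INSERT INTO X (g, x) VALUES (?, ?)",
                 [("a", 1.0), ("a", 2.0), ("b", 3.0)])

result = conn.execute(
    "SELECT g, COUNT(*), SUM(x), sumsq(x) FROM X GROUP BY g ORDER BY g"
).fetchall()
print(result)
```

The query returns per-group counts, sums, and sums of squares in one scan, without exporting the rows. In a server DBMS the UDF would typically be precompiled and run inside the engine, which this in-process sketch does not show.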

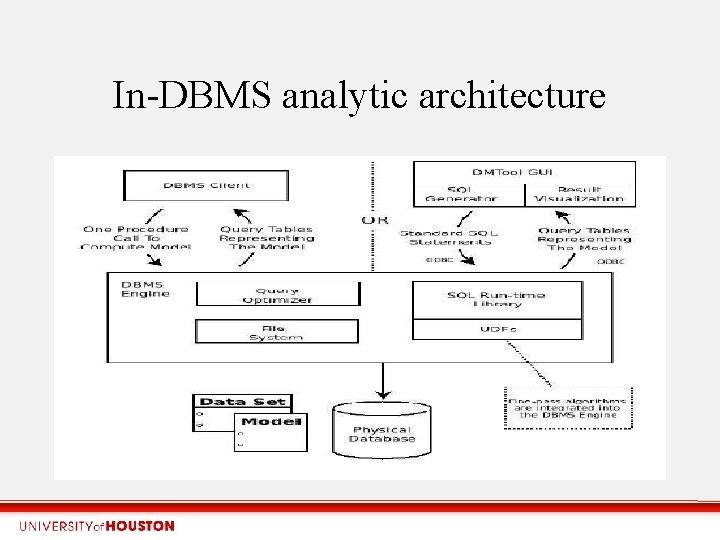

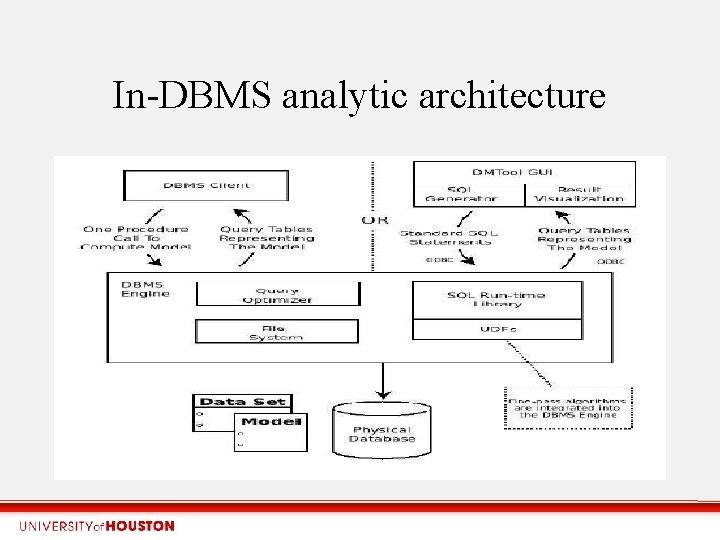

In-DBMS analytic architecture

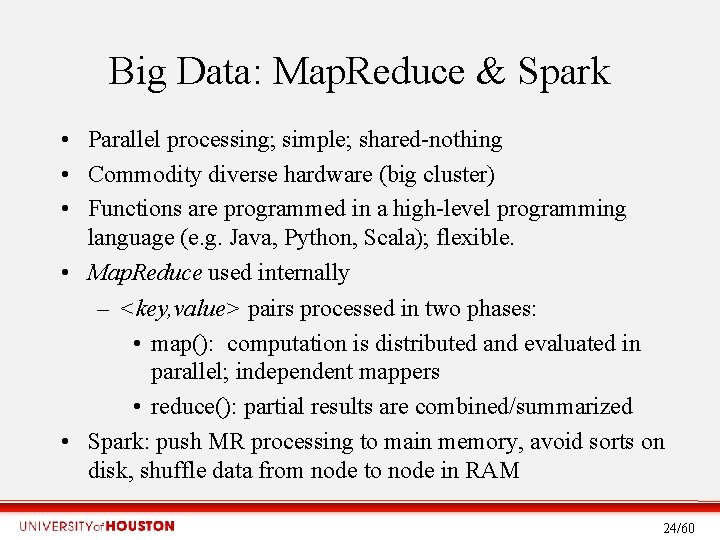

Big Data: MapReduce & Spark
• Parallel processing; simple; shared-nothing
• Commodity diverse hardware (big cluster)
• Functions are programmed in a high-level programming language (e.g. Java, Python, Scala); flexible
• MapReduce used internally
  – <key, value> pairs processed in two phases:
    • map(): computation is distributed and evaluated in parallel; independent mappers
    • reduce(): partial results are combined/summarized
• Spark: push MR processing to main memory, avoid sorts on disk, shuffle data from node to node in RAM
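The two phases can be imitated in a single process to show the data flow. This sketch is plain Python, not the Hadoop or Spark API: `map_fn`, `shuffle`, and `reduce_fn` are hypothetical names, and the shuffle step that a real cluster performs across nodes is simulated with a dictionary.

```python
from collections import defaultdict
from itertools import chain

def map_fn(line):
    """map(): emit a <word, 1> pair per word; each line is processed independently."""
    return [(word.lower(), 1) for word in line.split()]

def shuffle(pairs):
    """Group values by key, as the framework does between the two phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_fn(key, values):
    """reduce(): combine the partial results for one key."""
    return key, sum(values)

# Hypothetical input split into lines (one per mapper call).
lines = ["Spark and Hadoop", "Hadoop and HDFS"]
mapped = chain.from_iterable(map_fn(line) for line in lines)
counts = dict(reduce_fn(key, values) for key, values in shuffle(mapped).items())
print(counts)
```

Since the mappers share no state, the calls to `map_fn` could run on different nodes. The shuffle is where data moves between nodes, which is the step Spark tries to keep in RAM.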

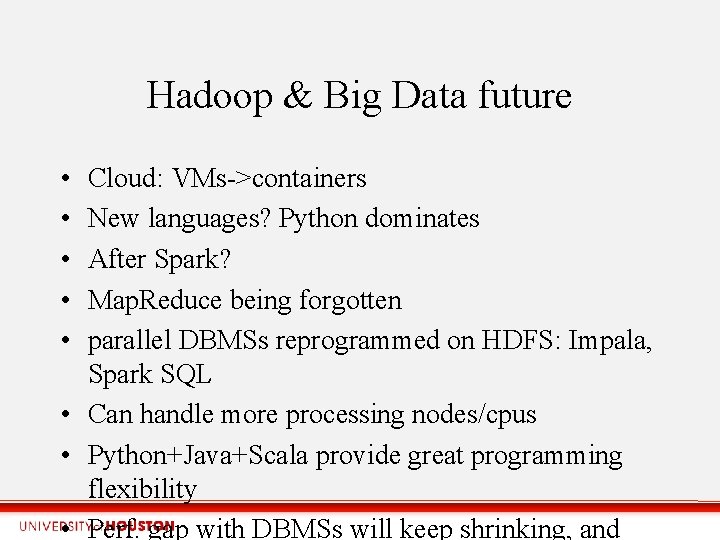

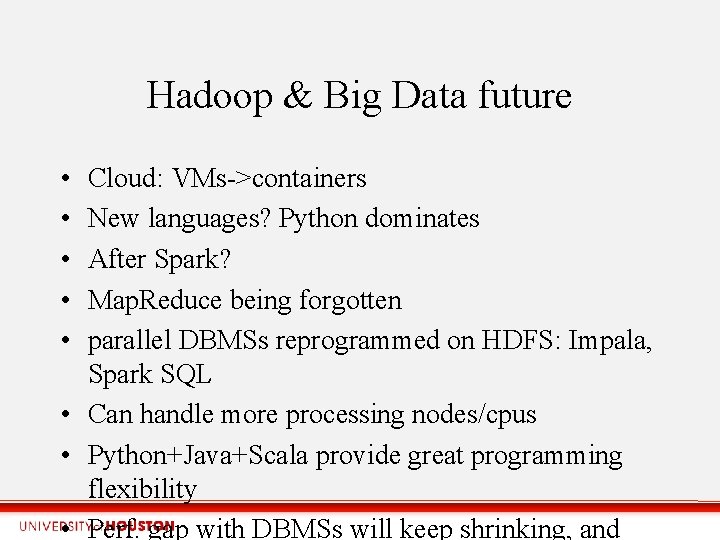

Hadoop & Big Data future
• Cloud: VMs -> containers
• New languages? Python dominates
• After Spark? MapReduce being forgotten
• Parallel DBMSs reprogrammed on HDFS: Impala, Spark SQL
• Can handle more processing nodes/CPUs
• Python+Java+Scala provide great programming flexibility
• Perf. gap with DBMSs will keep shrinking, and

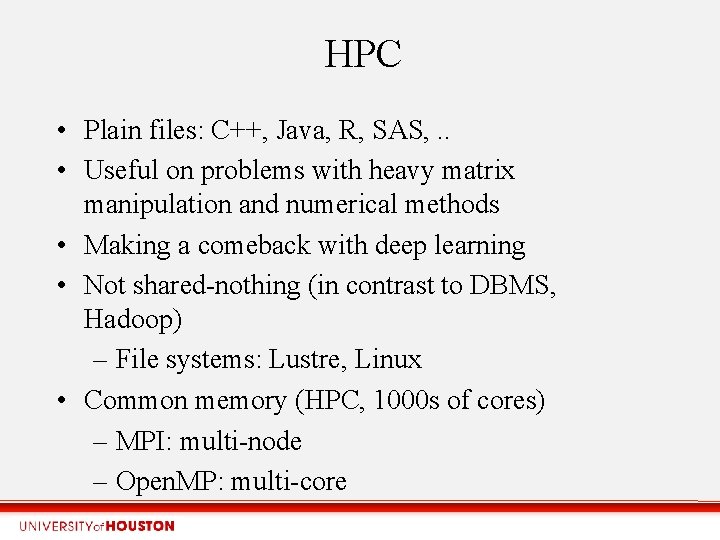

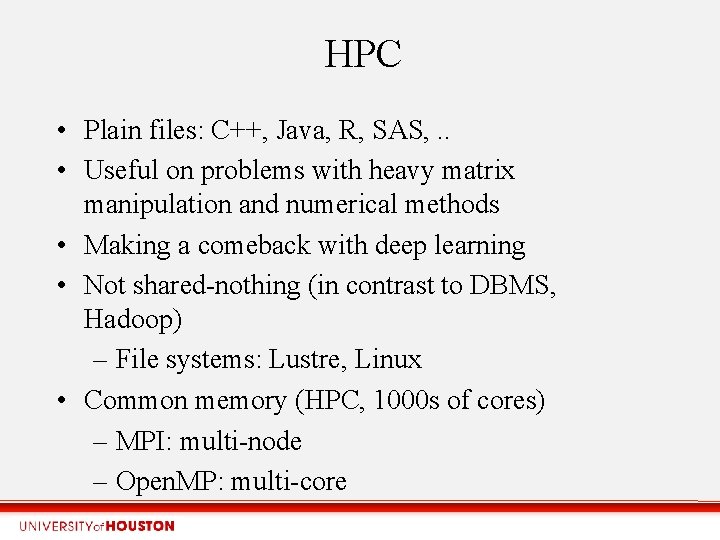

HPC
• Plain files: C++, Java, R, SAS, ...
• Useful on problems with heavy matrix manipulation and numerical methods
• Making a comeback with deep learning
• Not shared-nothing (in contrast to DBMS, Hadoop)
  – File systems: Lustre, Linux
• Common memory (HPC, 1000s of cores)
  – MPI: multi-node
  – OpenMP: multi-core

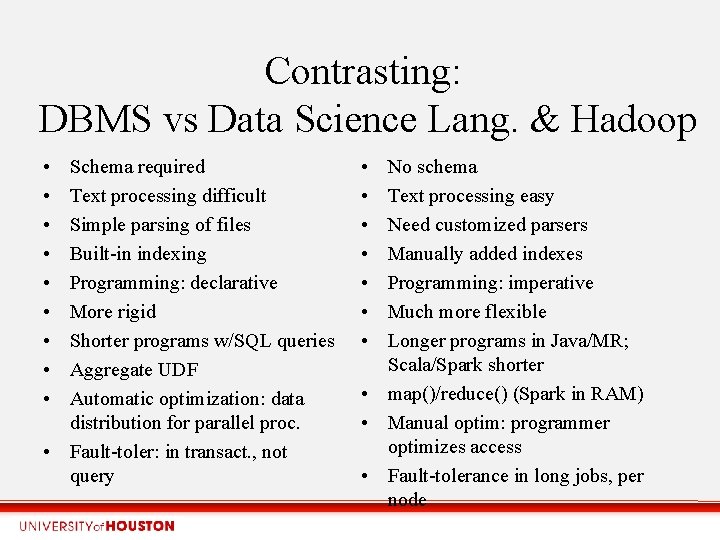

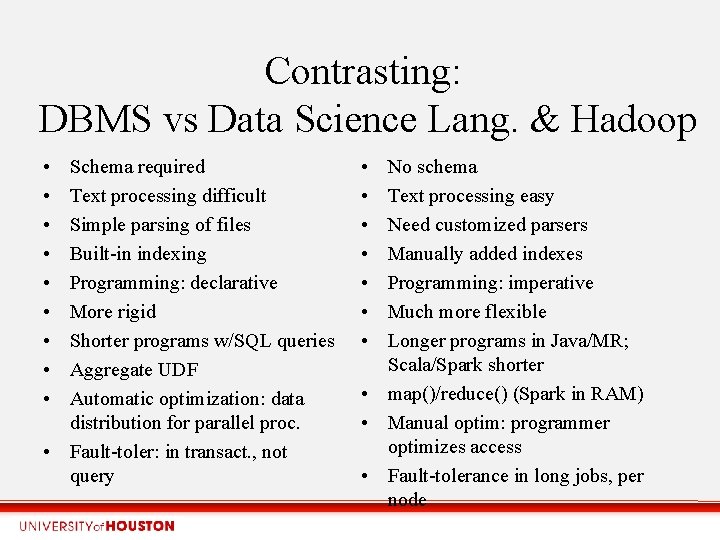

Contrasting: DBMS vs Data Science Lang. & Hadoop

| DBMS | Data Science languages & Hadoop |
| --- | --- |
| Schema required | No schema |
| Text processing difficult | Text processing easy |
| Simple parsing of files | Need customized parsers |
| Built-in indexing | Manually added indexes |
| Programming: declarative | Programming: imperative |
| More rigid | Much more flexible |
| Shorter programs w/SQL queries | Longer programs in Java/MR; Scala/Spark shorter |
| Aggregate UDF | map()/reduce() (Spark in RAM) |
| Automatic optimization: data distribution for parallel processing | Manual optimization: programmer optimizes access |
| Fault tolerance: in transactions, not queries | Fault tolerance in long jobs, per node |
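The declarative versus imperative contrast can be made concrete by computing the same per-group total both ways. SQLite stands in for the DBMS. The `sales` table and its rows are hypothetical.

```python
import sqlite3
from collections import defaultdict

rows = [("east", 10.0), ("west", 5.0), ("east", 2.5)]   # hypothetical (region, amount)

# Declarative: state what is wanted; the DBMS chooses how to evaluate it.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
declarative = dict(conn.execute(
    "SELECT region, SUM(amount) FROM sales GROUP BY region"))

# Imperative: the programmer spells out the scan and the accumulation.
imperative = defaultdict(float)
for region, amount in rows:
    imperative[region] += amount

print(declarative, dict(imperative))
```

The SQL version needs a schema and a load step first but leaves access paths to the optimizer. The loop works directly on raw records, and any indexing or parallelism is up to the programmer.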

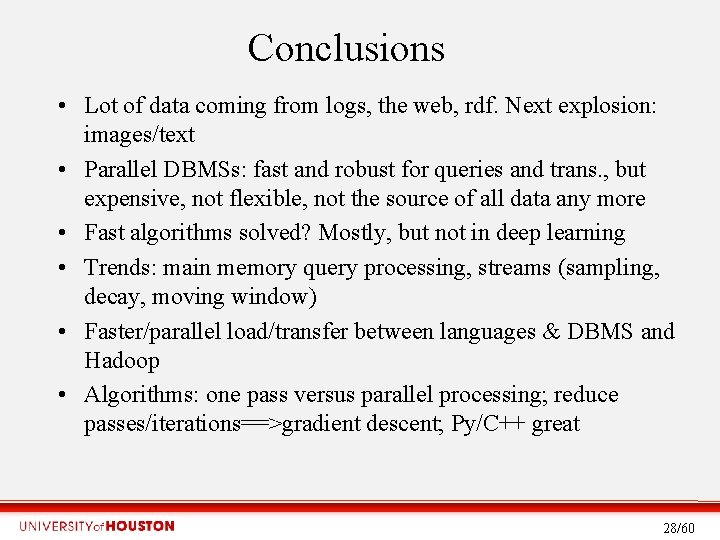

Conclusions
• A lot of data coming from logs, the web, RDF. Next explosion: images/text
• Parallel DBMSs: fast and robust for queries and transactions, but expensive, not flexible, not the source of all data any more
• Fast algorithms solved? Mostly, but not in deep learning
• Trends: main memory query processing, streams (sampling, decay, moving window)
• Faster/parallel load/transfer between languages & DBMS and Hadoop
• Algorithms: one pass versus parallel processing; reduce passes/iterations ==> gradient descent; Py/C++ great
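Of the stream techniques mentioned, the moving window is the simplest to sketch. The generator below assumes a numeric stream and a fixed window size, and keeps only the last `window` values in memory. The function name and the sample stream are illustrative.

```python
from collections import deque

def moving_average(stream, window):
    """Yield the mean of the most recent `window` values after each arrival."""
    buf = deque(maxlen=window)
    total = 0.0
    for x in stream:
        if len(buf) == window:
            total -= buf[0]              # oldest value is about to be evicted
        buf.append(x)
        total += x
        yield total / len(buf)

averages = list(moving_average([1, 2, 3, 4, 5], window=3))
print(averages)
```

Memory use depends on the window size, not on the length of the stream, so the same code works on unbounded input.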

Future work
• Data pre-processing, especially human in the loop
• More flexible, low cost, parallel architectures
• Interoperability, sacrificing some performance
• ER: automatic ER diagram creation, workflow+structure
• Scalability in the cloud
• Incorporate database algorithms and techniques into DS languages
• Processing tradeoffs among SQL, Spark, Python