A clean-slate perspective on where NFV and SDN meet

- Slides: 42

A clean-slate perspective on where NFV and SDN meet
Sylvia Ratnasamy
In collaboration with the SPAN group at U.C. Berkeley

• Clean slate does not mean “throw everything out”
• It does mean:
  – we start top down
  – we only keep what’s needed
• The result is simpler, more streamlined systems, which is vital for performance and extensibility

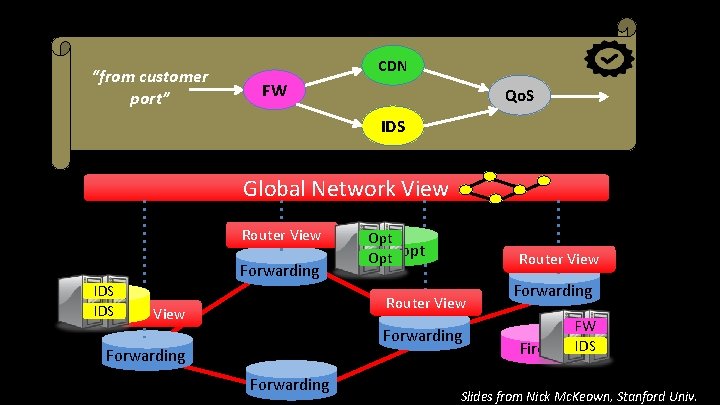

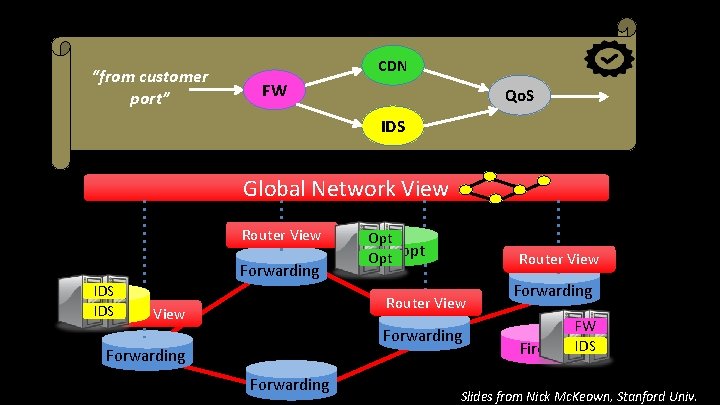

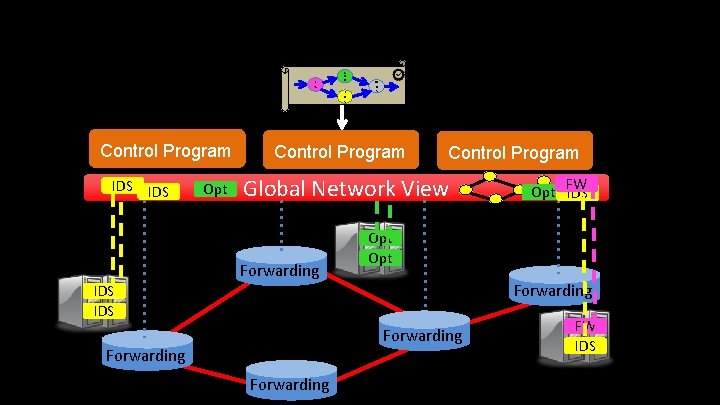

[Diagram: traffic “from customer port” passes through middleboxes (CDN, Firewall, QoS, IDS, WAN Opt) attached to forwarding elements; a Global Network View spans the per-router Router Views.] Slides from Nick McKeown, Stanford Univ.

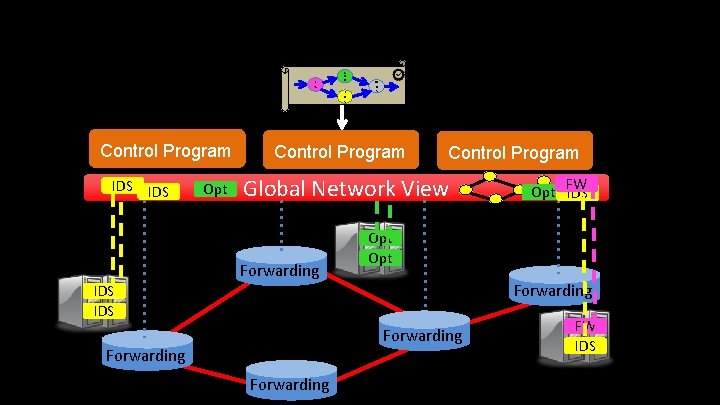

[Diagram: Control Programs for FW, CDN, IDS, QoS, and Opt run over a Global Network View above the Forwarding elements, with FW, IDS, and Opt middleboxes still attached to the forwarding plane.]

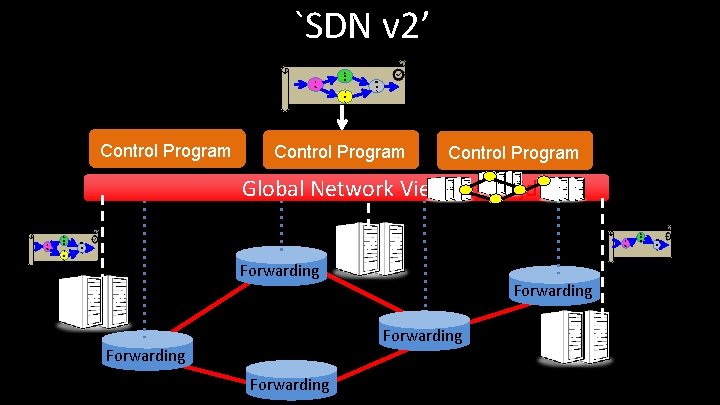

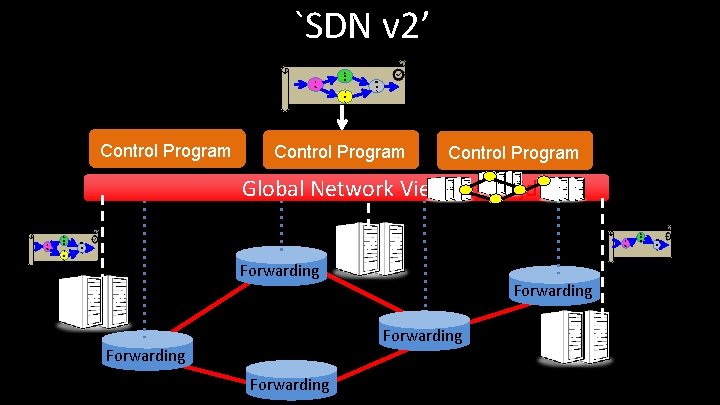

‘SDN v2’ [Diagram: FW, CDN, IDS, and QoS Control Programs run over a Global Network View, and FW, CDN, IDS, and QoS functions run alongside the Forwarding elements.]

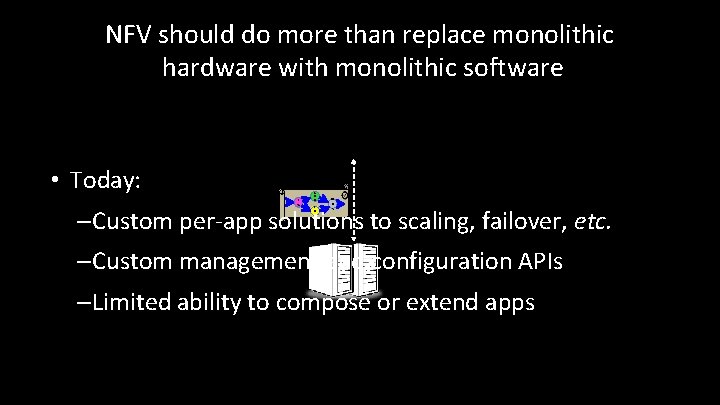

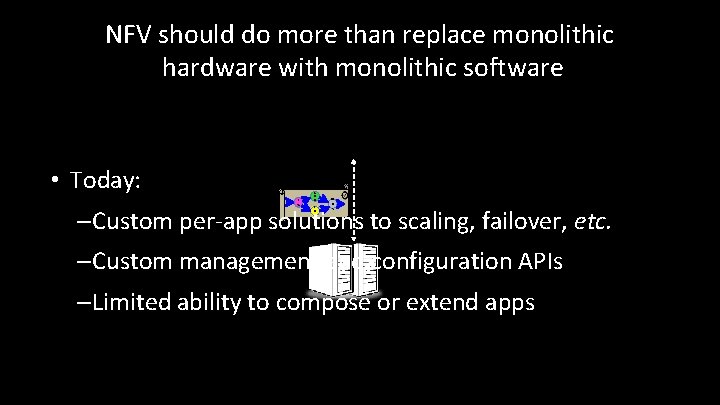

NFV should do more than replace monolithic hardware with monolithic software
• Today (FW, CDN, IDS, QoS):
  – Custom per-app solutions to scaling, failover, etc.
  – Custom management and configuration APIs
  – Limited ability to compose or extend apps

Contrast / Inspiration
• Modern data analytics systems (Spark, Hadoop, etc.)
  – a programming model (map-reduce)
  – but also a runtime framework
• Framework takes care of routine tasks and end-to-end coordination
  – placement, scheduling, elastic scaling, failover, …
• Need a runtime framework for NFV
  – Frees app developers to focus on app logic
  – Automates/consolidates management for operators

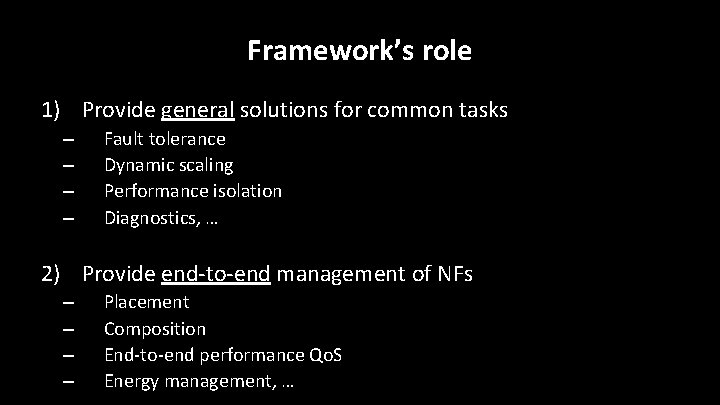

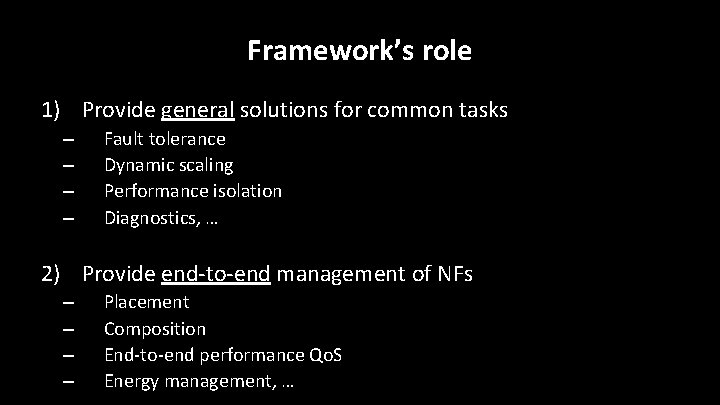

Framework’s role
1) Provide general solutions for common tasks
  – Fault tolerance, dynamic scaling, performance isolation, diagnostics, …
2) Provide end-to-end management of NFs
  – Placement, composition, end-to-end performance/QoS, energy management, …

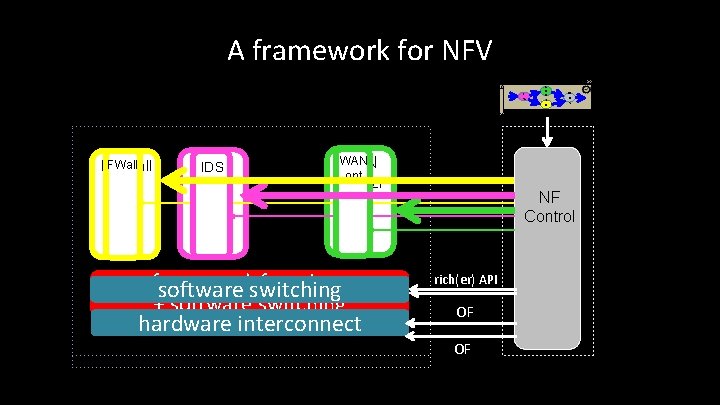

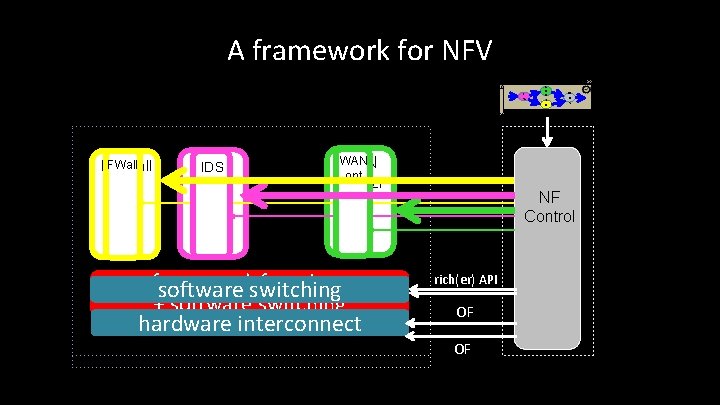

A framework for NFV
[Diagram: NFs (Firewall, IDS, CDN, QoS, WAN optimizer) run on framework functions and software switching, over a hardware interconnect. NF control goes through a rich(er) API, with OpenFlow (OF) interfaces to the switching layers.]

Implications
• For NF vendor
  – write core app logic
  – provide some NF-specific information
• For operator
  – write high-level policy pipeline
  – select NFs
  – provide some hardware information
• Framework automates pipeline execution
  – automated response to load changes, failure, etc.

Remainder of this talk [Diagram: FW, CDN, IDS, QoS]
Details in ACM SOSP 2015 paper “E2: A runtime framework for NFV”

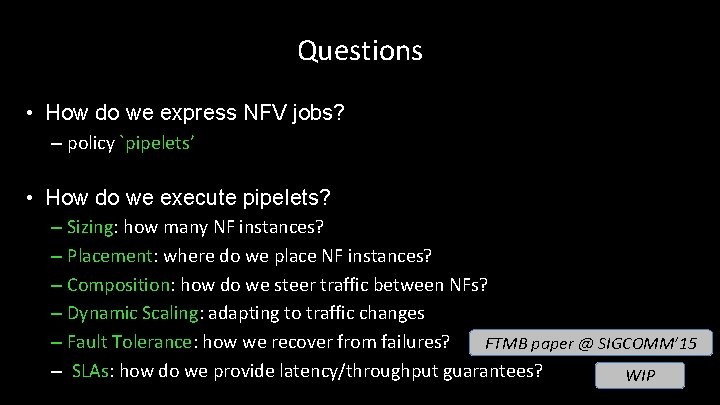

Questions
• How do we express NFV jobs?
  – policy ‘pipelets’
• How do we execute pipelets?
  – Sizing: how many NF instances?
  – Placement: where do we place NF instances?
  – Composition: how do we steer traffic between NFs?
  – Dynamic Scaling: adapting to traffic changes
  – Fault Tolerance: how do we recover from failures? (FTMB paper @ SIGCOMM’15)
  – SLAs: how do we provide latency/throughput guarantees? (WIP)

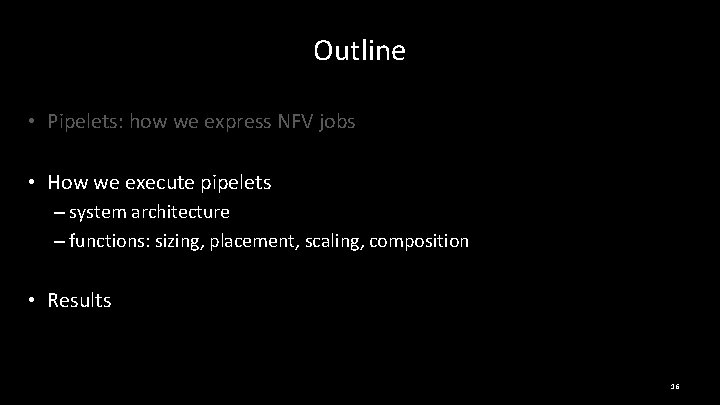

Outline
• Pipelets: how we express NFV jobs
• How we execute pipelets
  – system architecture
  – functions: sizing, placement, scaling, composition
• Results

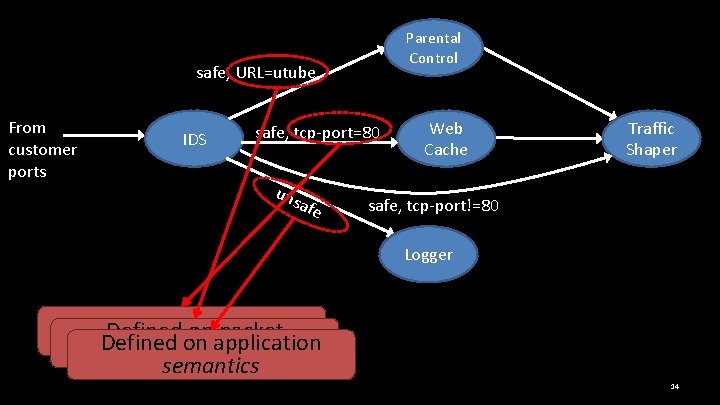

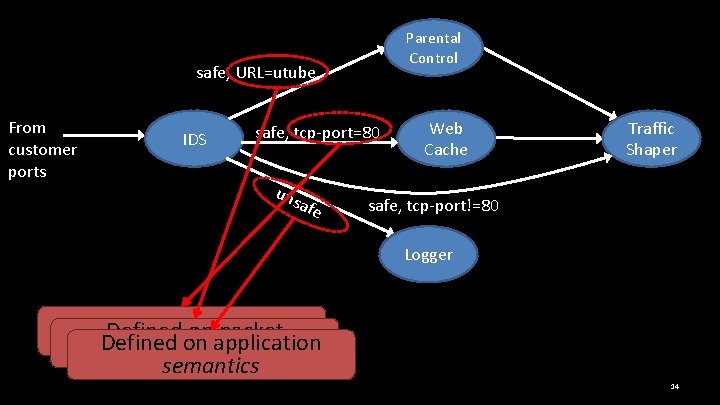

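[Diagram: an example policy. Traffic “from customer ports” is processed by NFs including an IDS, Web Cache, Traffic Shaper, Logger, and Parental Control. Edges are annotated with attributes such as “safe, tcp-port=80”, “safe, tcp-port!=80”, “unsafe”, and “safe, URL=utube”. Annotations can be defined on packet headers (e.g., tcp-port), on application content, or on bytestream semantics.]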

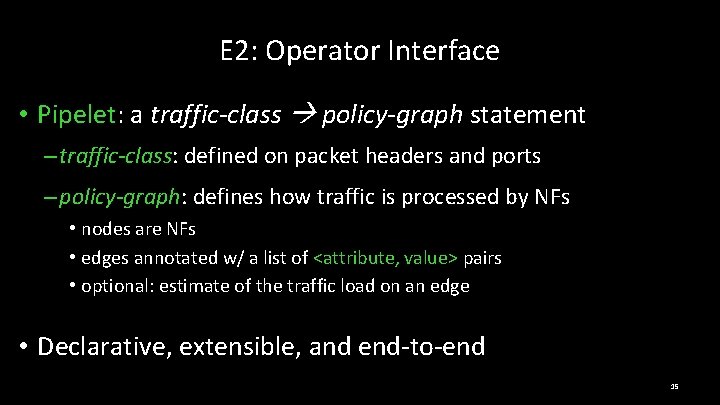

E2: Operator Interface
• Pipelet: a statement mapping a traffic class to a policy graph
  – traffic class: defined on packet headers and ports
  – policy graph: defines how traffic is processed by NFs
    • nodes are NFs
    • edges are annotated with a list of <attribute, value> pairs
    • optional: an estimate of the traffic load on an edge
• Declarative, extensible, and end-to-end
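To make the pipelet structure concrete, here is a minimal sketch of a pipelet as a Python data structure. The class and field names (`Pipelet`, `Edge`, `load_gbps`, `validate`) are hypothetical and are not E2's actual input syntax; the example edges are loosely modeled on the example policy above, but which NF each attribute leads to is an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical in-memory form of a pipelet; names are illustrative only.

@dataclass
class Edge:
    src: str                                  # upstream NF
    dst: str                                  # downstream NF
    attrs: List[Tuple[str, object]] = field(default_factory=list)  # <attribute, value> pairs
    load_gbps: Optional[float] = None         # optional estimate of traffic load on this edge

@dataclass
class Pipelet:
    traffic_class: Dict[str, object]          # match on packet headers and ports
    nodes: List[str]                          # NFs in the policy graph
    edges: List[Edge]

    def successors(self, nf: str) -> List[Edge]:
        """Edges leaving `nf`, i.e. where its output may be steered."""
        return [e for e in self.edges if e.src == nf]

    def validate(self) -> None:
        """Reject edges that mention NFs not declared as nodes."""
        names = set(self.nodes)
        for e in self.edges:
            if e.src not in names or e.dst not in names:
                raise ValueError(f"edge {e.src}->{e.dst} references an unknown NF")

# Illustrative pipelet; the edge set and attribute encodings are assumed.
web = Pipelet(
    traffic_class={"in_port": "customer"},
    nodes=["IDS", "WebCache", "TrafficShaper", "Logger"],
    edges=[
        Edge("IDS", "WebCache", [("safe", True), ("tcp-port", 80)], load_gbps=4.0),
        Edge("IDS", "TrafficShaper", [("safe", True), ("tcp-port", "!=80")]),
        Edge("IDS", "Logger", [("safe", False)]),
    ],
)
web.validate()
print([e.dst for e in web.successors("IDS")])  # ['WebCache', 'TrafficShaper', 'Logger']
```

Keeping attributes as plain pairs on edges mirrors the slide's point that the interface is declarative: the pipelet says what traffic goes where, and leaves how it gets there to the framework.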

Outline
• Pipelets: how we express NFV jobs
• How we execute pipelets
  – system architecture
  – functions: sizing, placement, scaling, composition
• Results

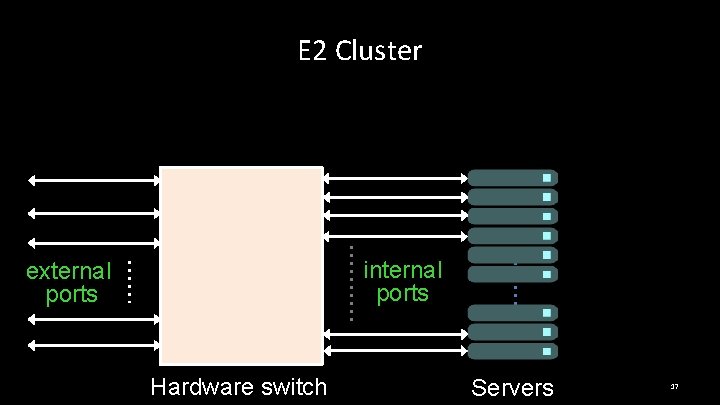

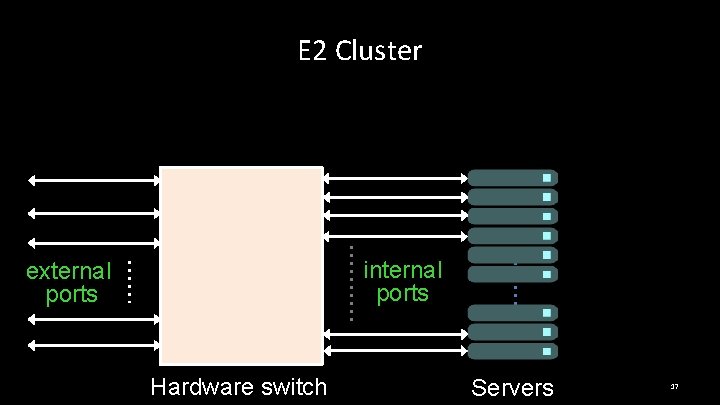

E2 Cluster [Diagram: a hardware switch with external ports facing the network and internal ports connecting to servers.]

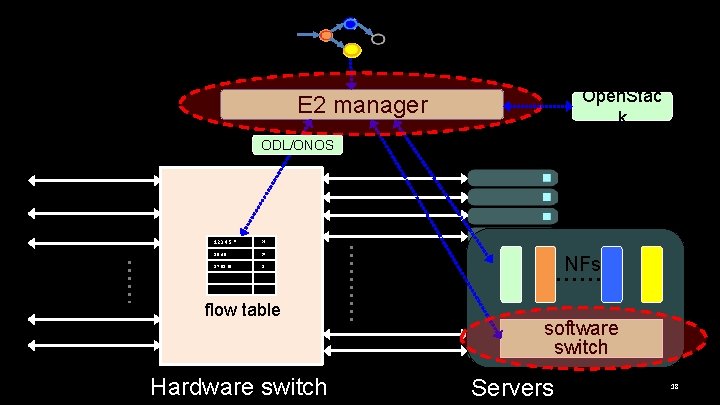

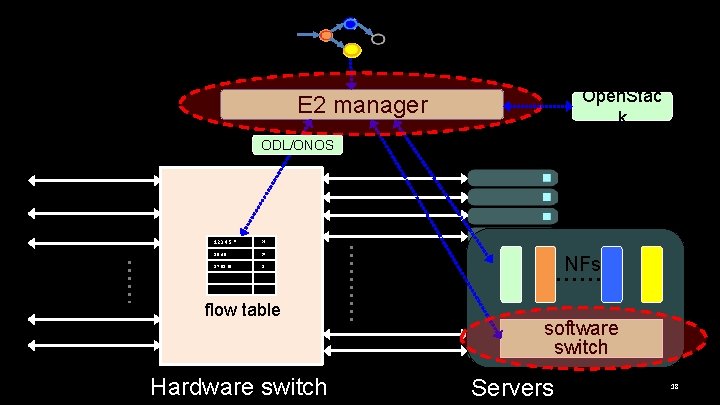

[Diagram: the E2 manager, shown alongside OpenStack and ODL/ONOS, configures the hardware switch’s flow table (entries map address prefixes such as 123.4.5.* to ports such as p2) and the software switch on each server that hosts NFs.]

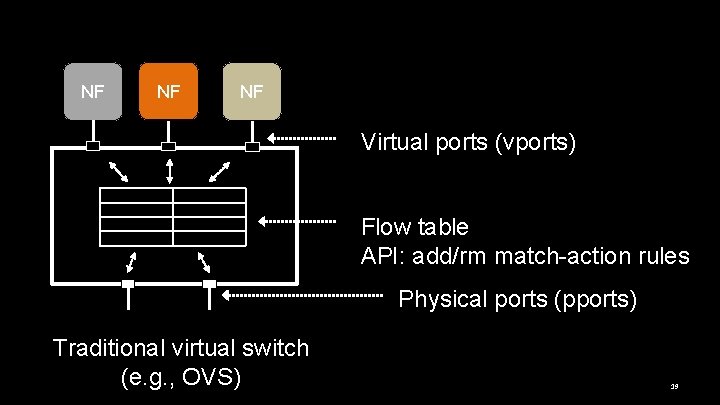

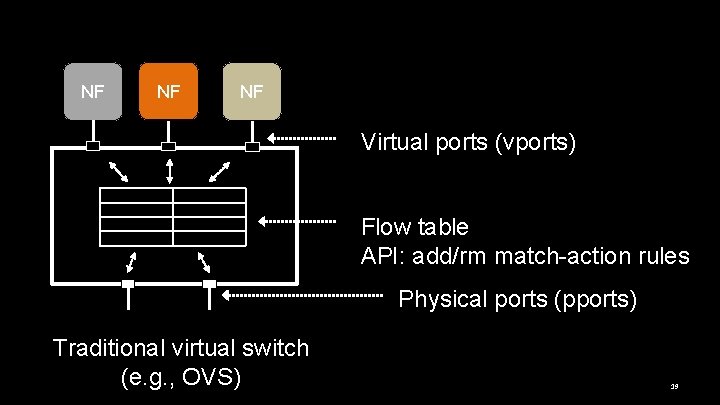

Traditional virtual switch (e.g., OVS)
[Diagram: NFs connect to the switch through virtual ports (vports); the switch connects to the network through physical ports (pports).]
• Flow table API: add/rm match-action rules
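The “add/rm match-action rules” interface of a traditional virtual switch can be illustrated with a toy flow table. This is a minimal sketch, assuming exact-match fields and a highest-priority-wins policy; the field names (`in_port`, `tcp_dst`) and action strings are hypothetical and are not OVS or OpenFlow syntax.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

@dataclass(frozen=True)
class Rule:
    priority: int
    match: Tuple[Tuple[str, object], ...]   # (field, value) pairs; all must match exactly
    action: str                             # e.g. "output:vport2" (illustrative string)

class FlowTable:
    """Toy match-action table: add/remove rules; the highest-priority match wins."""

    def __init__(self) -> None:
        self.rules: List[Rule] = []

    def add(self, priority: int, match: Dict[str, object], action: str) -> Rule:
        rule = Rule(priority, tuple(sorted(match.items())), action)
        self.rules.append(rule)
        return rule

    def remove(self, rule: Rule) -> None:
        self.rules.remove(rule)

    def lookup(self, pkt: Dict[str, object]) -> Optional[str]:
        hits = [r for r in self.rules if all(pkt.get(f) == v for f, v in r.match)]
        return max(hits, key=lambda r: r.priority).action if hits else None

ft = FlowTable()
ft.add(10, {"in_port": "pport0"}, "output:vport1")
web_rule = ft.add(20, {"in_port": "pport0", "tcp_dst": 80}, "output:vport2")
print(ft.lookup({"in_port": "pport0", "tcp_dst": 80}))  # output:vport2
ft.remove(web_rule)
print(ft.lookup({"in_port": "pport0", "tcp_dst": 80}))  # output:vport1
```

The only knob here is header matching; the switch cannot carry information an NF has already computed, which is the limitation the modular-switch design targets.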

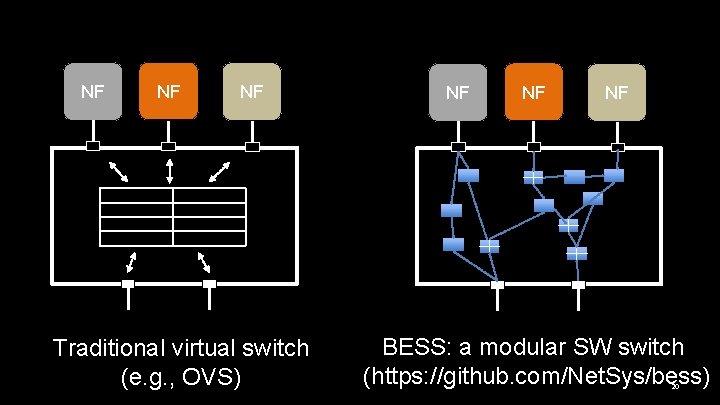

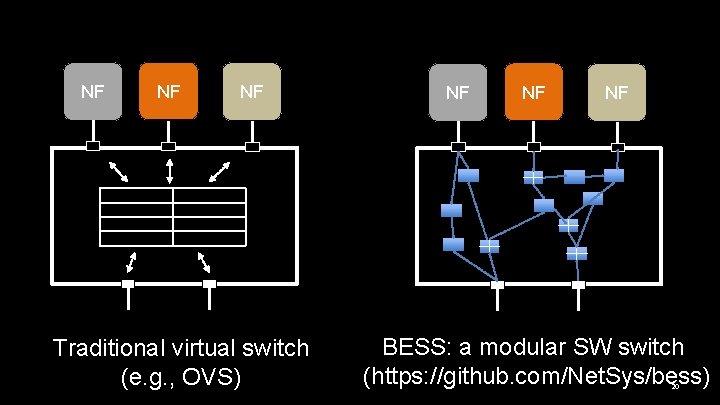

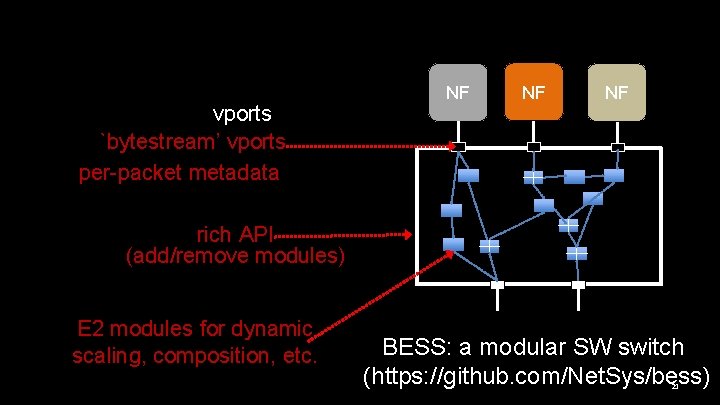

[Diagram: NFs attached to a traditional virtual switch (e.g., OVS), contrasted with NFs attached to BESS.]
BESS: a modular SW switch (https://github.com/NetSys/bess)

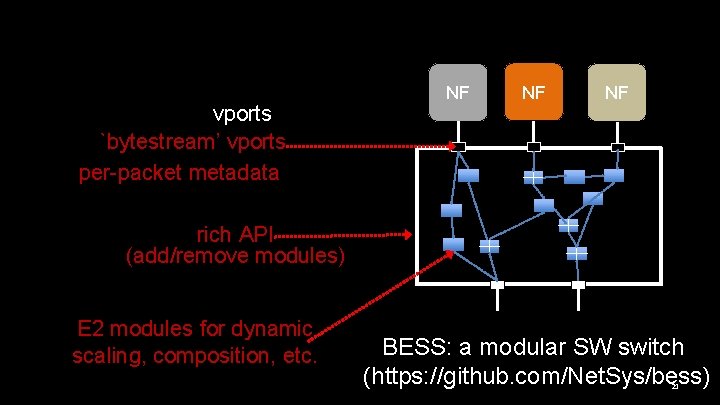

[Diagram: NFs attached to BESS through vports, ‘bytestream’ vports, and per-packet metadata.]
• Rich API (add/remove modules)
• E2 modules for dynamic scaling, composition, etc.
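The value of per-packet metadata in a modular switch is that one module can parse a packet once and later modules can steer on the result. The toy pipeline below sketches that idea in Python. It is not BESS's API (BESS modules are native code with their own configuration interface); `Packet`, `url_extract`, `metadata_match`, and the gate name `HTTPLogger` are hypothetical, and the “url” is simplified to the HTTP Host header.

```python
from typing import Callable, Dict, List, Optional

class Packet:
    """Toy packet: payload bytes plus per-packet metadata carried between modules."""
    def __init__(self, payload: bytes) -> None:
        self.payload = payload
        self.meta: Dict[str, object] = {}

# A module inspects/annotates a packet and returns an output gate, or None to pass it on.
Module = Callable[[Packet], Optional[str]]

def url_extract(pkt: Packet) -> Optional[str]:
    """Parse the payload once and record the HTTP Host header as metadata."""
    for line in pkt.payload.split(b"\r\n"):
        if line.lower().startswith(b"host:"):
            pkt.meta["url"] = line.split(b":", 1)[1].strip().decode()
            break
    return None

def metadata_match(key: str, value: object, gate: str) -> Module:
    """Build a module that steers on metadata instead of re-parsing the payload."""
    def module(pkt: Packet) -> Optional[str]:
        return gate if pkt.meta.get(key) == value else None
    return module

def run(pipeline: List[Module], pkt: Packet, default: str = "default") -> str:
    """Push a packet through modules in order; the first module to pick a gate wins."""
    for mod in pipeline:
        gate = mod(pkt)
        if gate is not None:
            return gate
    return default

pipeline = [url_extract, metadata_match("url", "X.com", "HTTPLogger")]
pkt = Packet(b"GET / HTTP/1.1\r\nHost: X.com\r\n\r\n")
print(run(pipeline, pkt), pkt.meta)  # HTTPLogger {'url': 'X.com'}
```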

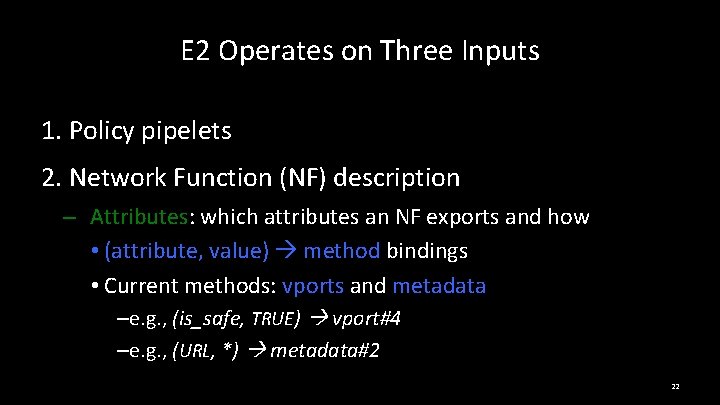

E2 Operates on Three Inputs
1. Policy pipelets
2. Network Function (NF) description
  – Attributes: which attributes an NF exports and how
    • (attribute, value) → method bindings
    • Current methods: vports and metadata, e.g., (is_safe, TRUE) → vport#4; (URL, *) → metadata#2
  – Affinity: the traffic aggregate an NF acts on, e.g., “per flow”, “per prefix”, “per port”
  – Performance profile (optional), e.g., per-core throughput
3. Hardware description
  – capabilities of the HW infrastructure, e.g., #cores/server, #ports/switch, bandwidth/link, …
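The NF and hardware descriptions can be pictured as small records. This minimal sketch uses hypothetical class names (`Binding`, `NFDescription`, `HardwareDescription`) and a hypothetical NF named `ExampleNF`; only the two example bindings, the affinity values, and the listed hardware capabilities come from the slide. The hardware numbers are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

@dataclass(frozen=True)
class Binding:
    method: str   # "vport" or "metadata", the two current methods
    index: int    # e.g. vport#4 or metadata#2

@dataclass
class NFDescription:
    name: str
    attributes: Dict[Tuple[str, object], Binding]  # (attribute, value) -> method binding
    affinity: str = "per flow"                     # "per flow", "per prefix", "per port", ...
    gbps_per_core: Optional[float] = None          # optional performance profile

    def binding_for(self, attr: str, value: object) -> Binding:
        """Look up how an edge attribute is exported; a '*' value acts as a wildcard."""
        for key in ((attr, value), (attr, "*")):
            if key in self.attributes:
                return self.attributes[key]
        raise KeyError(f"{self.name} does not export {attr}={value!r}")

@dataclass
class HardwareDescription:
    cores_per_server: int
    ports_per_switch: int
    link_gbps: float

# Hypothetical NF exporting the two example bindings from the slide.
nf = NFDescription(
    name="ExampleNF",
    attributes={("is_safe", True): Binding("vport", 4),
                ("URL", "*"): Binding("metadata", 2)},
    affinity="per flow",
)
hw = HardwareDescription(cores_per_server=16, ports_per_switch=48, link_gbps=10.0)
print(nf.binding_for("is_safe", True))   # Binding(method='vport', index=4)
print(nf.binding_for("URL", "X.com"))    # Binding(method='metadata', index=2)
```

A framework can use `binding_for` when composing a pipeline: given an edge attribute from a pipelet, it learns whether to pick up the NF's output from a particular vport or from a metadata field.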

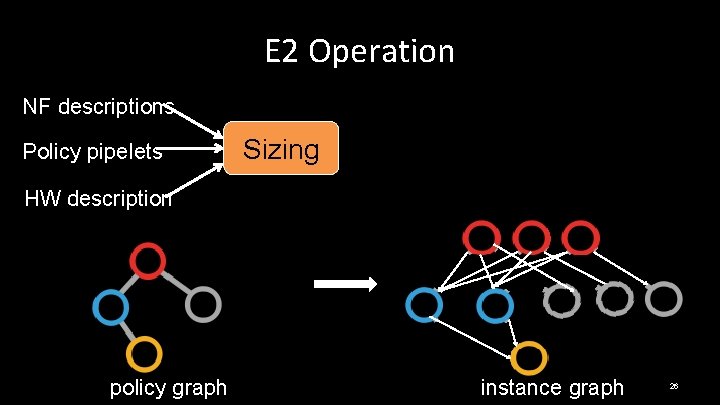

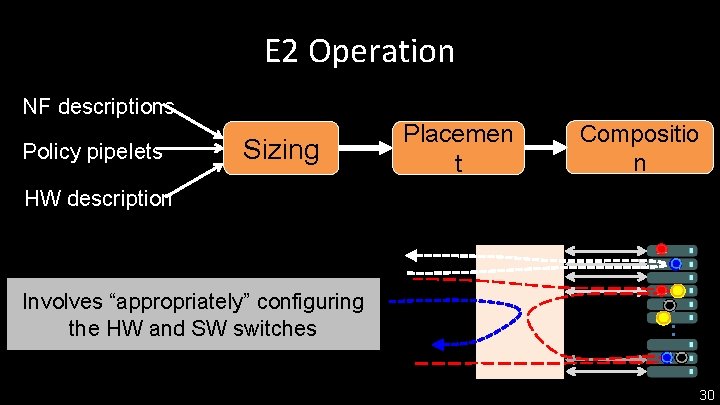

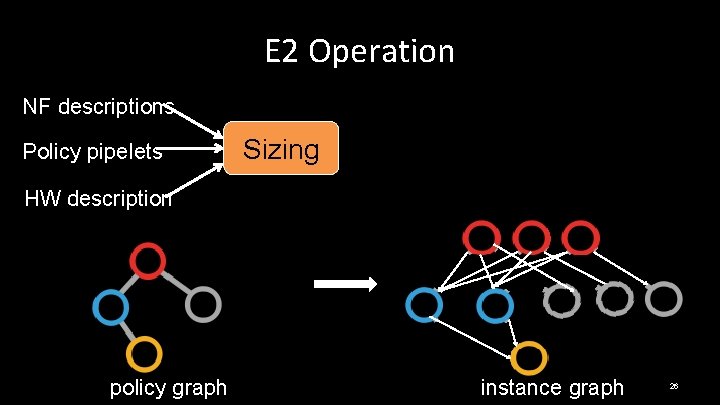

E2 Operation [Diagram: the inputs (policy pipelets, NF descriptions, HW description) feed E2; the Sizing step turns the policy graph into an instance graph.]
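One plausible sizing rule, assuming one NF instance per core and using the optional edge-load estimates and per-core throughput profiles, is to sum the load arriving at each NF and divide by its per-core rate. The sketch below is that assumption written out, not E2's documented algorithm; `size_instances`, the edge tuples, and the rates are hypothetical. Expanding each policy-graph node into that many instances yields the instance graph.

```python
import math
from typing import Dict, List, Tuple

def size_instances(edges: List[Tuple[str, str, float]],
                   gbps_per_core: Dict[str, float],
                   default_instances: int = 1) -> Dict[str, int]:
    """Hypothetical sizing rule: instances = ceil(offered load / per-core rate).

    `edges` are (src, dst, estimated Gbps) triples taken from the policy graph.
    An NF lacking a load estimate or a performance profile gets `default_instances`.
    """
    offered: Dict[str, float] = {}
    for _src, dst, gbps in edges:
        offered[dst] = offered.get(dst, 0.0) + gbps   # sum load on incoming edges
    sizes: Dict[str, int] = {}
    for nf in sorted(set(gbps_per_core) | set(offered)):
        rate, load = gbps_per_core.get(nf), offered.get(nf)
        if rate is None or load is None:
            sizes[nf] = default_instances
        else:
            sizes[nf] = max(1, math.ceil(load / rate))
    return sizes

edges = [("in", "IDS", 10.0), ("IDS", "WebCache", 4.0), ("IDS", "Logger", 1.0)]
rates = {"IDS": 2.5, "WebCache": 5.0, "Logger": 5.0}   # made-up per-core Gbps
print(size_instances(edges, rates))  # {'IDS': 4, 'Logger': 1, 'WebCache': 1}
```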

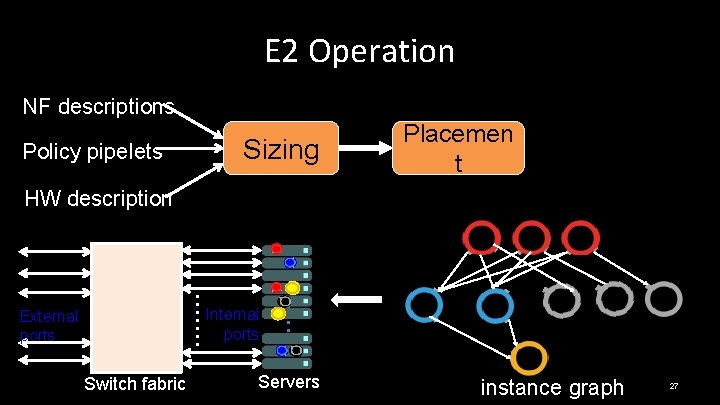

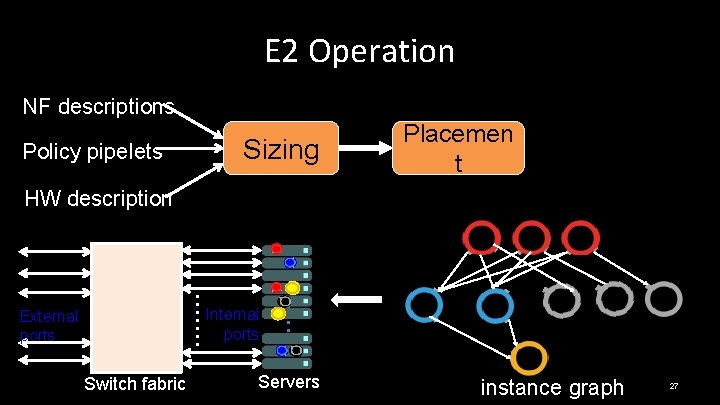

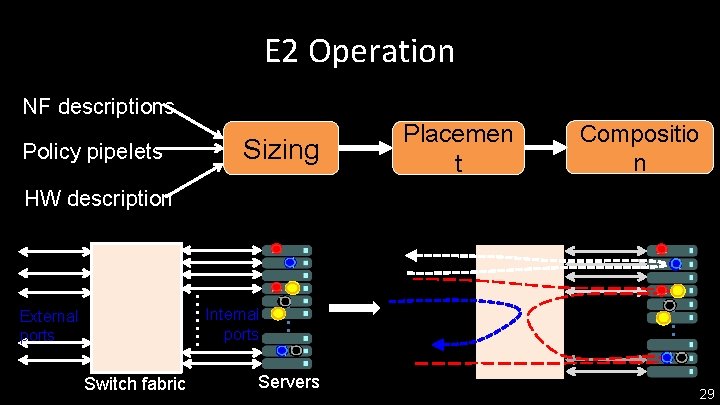

E2 Operation [Diagram: after Sizing, the Placement step maps the instance graph onto the hardware: servers connected through internal ports to the switch fabric, which also has external ports.]

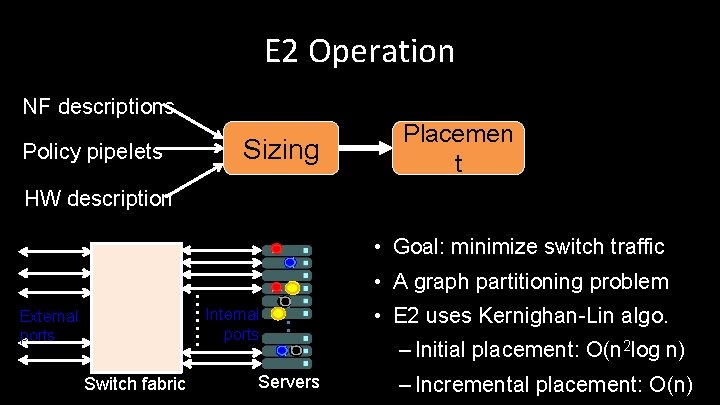

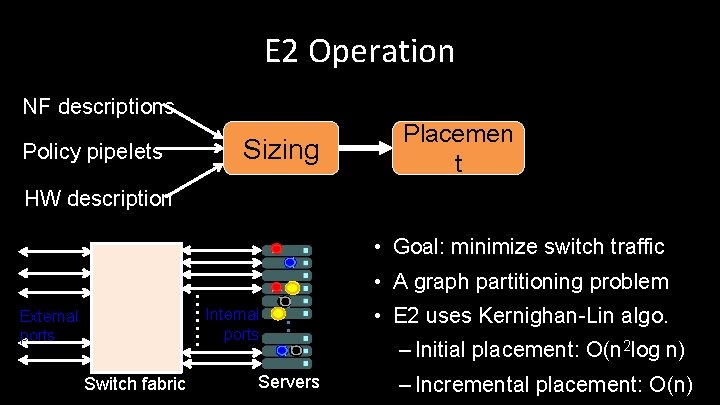

E2 Operation: Placement [Diagram: Sizing → Placement over servers, switch fabric, internal and external ports.]
• Goal: minimize switch traffic
• A graph partitioning problem
• E2 uses the Kernighan-Lin algorithm
  – Initial placement: O(n² log n)
  – Incremental placement: O(n)
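The slide names Kernighan-Lin but gives no code. As a minimal sketch, assuming just two servers of equal capacity and an instance graph whose edge weights are estimated traffic in Gbps, the plain KL bisection below swaps NF instances between servers to shrink the traffic that must cross the hardware switch. It is the textbook variant (O(n³) per pass as written), not the E2 implementation behind the O(n² log n) figure; the instance names and weights are made up, and more servers (e.g., via recursive bisection) or per-server core limits are not handled.

```python
from itertools import product
from typing import Dict, Set, Tuple

Graph = Dict[str, Dict[str, float]]  # symmetric: traffic (Gbps) between NF instances

def make_graph(edges) -> Graph:
    g: Graph = {}
    for u, v, w in edges:
        g.setdefault(u, {})[v] = w
        g.setdefault(v, {})[u] = w
    return g

def cut_weight(g: Graph, side: Set[str]) -> float:
    """Traffic between the two servers, i.e. traffic that must cross the switch."""
    return sum(w for u in side for v, w in g.get(u, {}).items() if v not in side)

def kernighan_lin(g: Graph, a: Set[str], b: Set[str]) -> Tuple[Set[str], Set[str]]:
    """Plain Kernighan-Lin bisection, repeated until a pass yields no gain."""
    a, b = set(a), set(b)

    def cost(u: str, v: str) -> float:
        return g.get(u, {}).get(v, 0.0)

    while True:
        # D(v) = external cost - internal cost of v under the current partition
        d = {}
        for v in a | b:
            own = a if v in a else b
            d[v] = sum(-w if u in own else w for u, w in g.get(v, {}).items())
        free_a, free_b = set(a), set(b)
        swaps, gains = [], []
        for _ in range(min(len(a), len(b))):
            x, y = max(product(free_a, free_b),
                       key=lambda p: d[p[0]] + d[p[1]] - 2 * cost(*p))
            gains.append(d[x] + d[y] - 2 * cost(x, y))
            swaps.append((x, y))
            free_a.discard(x)
            free_b.discard(y)
            # Update D values of unlocked nodes as if x and y had been swapped
            for u in free_a:
                d[u] += 2 * cost(u, x) - 2 * cost(u, y)
            for u in free_b:
                d[u] += 2 * cost(u, y) - 2 * cost(u, x)
        # Apply the prefix of tentative swaps with the largest positive total gain
        best_k, best, total = 0, 0.0, 0.0
        for k, gain in enumerate(gains, start=1):
            total += gain
            if total > best + 1e-9:
                best_k, best = k, total
        if best_k == 0:
            return a, b
        for x, y in swaps[:best_k]:
            a.remove(x)
            a.add(y)
            b.remove(y)
            b.add(x)

g = make_graph([("fw1", "ids1", 10.0), ("fw2", "ids2", 10.0),
                ("fw1", "fw2", 1.0), ("ids1", "ids2", 1.0)])
s1, s2 = kernighan_lin(g, {"fw1", "fw2"}, {"ids1", "ids2"})
print(cut_weight(g, {"fw1", "fw2"}), "->", cut_weight(g, s1))  # 20.0 -> 2.0
```

Starting from a placement that puts both firewalls on one server, a single pass moves each fw/ids chain onto its own server, so only 2 Gbps instead of 20 Gbps crosses the switch.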

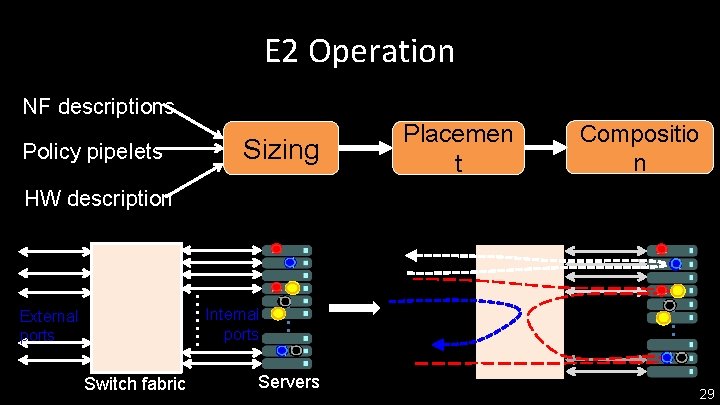

E2 Operation [Diagram: the pipeline now adds Composition after Sizing and Placement, over servers, switch fabric, internal and external ports.]

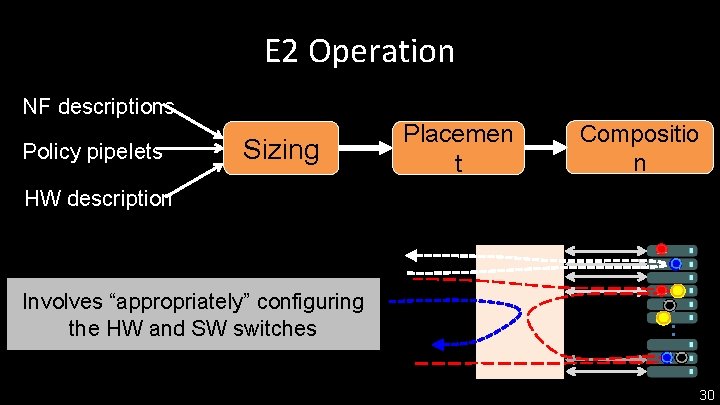

E2 Operation: Composition [Diagram: Sizing → Placement → Composition]
• Involves “appropriately” configuring the HW and SW switches

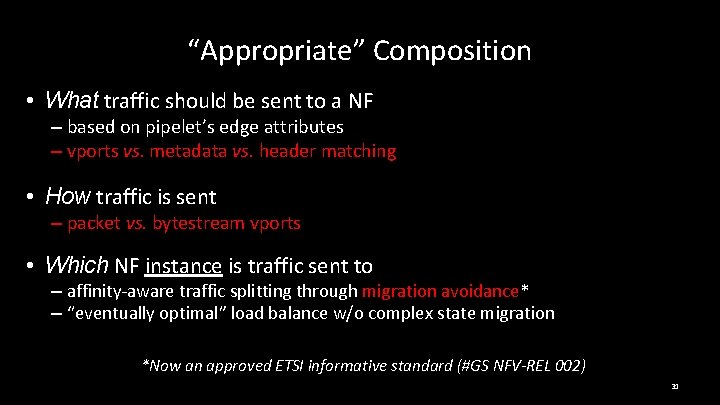

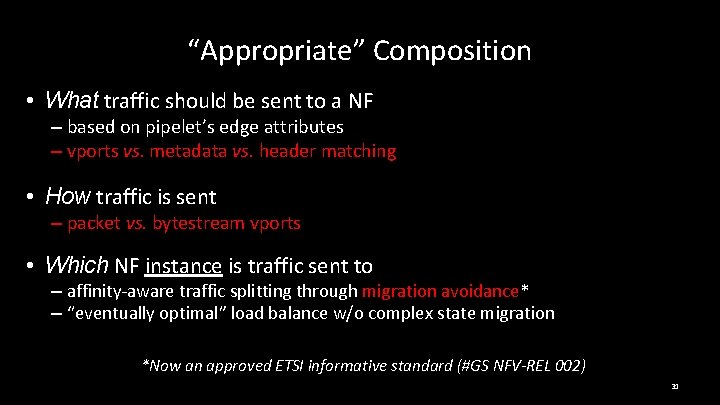

“Appropriate” Composition
• What traffic should be sent to an NF
  – determined by matching the pipelet’s edge attributes against the NF’s exported methods
  – vports vs. metadata vs. header matching
• How traffic is sent
  – packet vs. bytestream vports
• Which NF instance traffic is sent to
  – affinity-aware traffic splitting through migration avoidance*
  – “eventually optimal” load balance without complex state migration
*Now an approved ETSI informative standard (GS NFV-REL 002)
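The idea behind affinity-aware splitting through migration avoidance can be sketched as follows, assuming per-flow affinity: flows hash into buckets, each bucket names the instance that receives new flows, and when a new instance is added some buckets are reassigned for new flows only, while flows already active stay on their original instance. Once those old flows end, traffic follows the new assignment, which is the “eventually optimal” part. This is an illustrative simplification, not E2's implementation; `MigrationAvoidingSplitter`, the bucket count, and the instance names are hypothetical. For per-prefix affinity one would hash the prefix rather than the 5-tuple.

```python
import zlib
from typing import Dict, List, Tuple

FlowId = Tuple[str, str, int, int, str]  # (src IP, dst IP, src port, dst port, proto)

class MigrationAvoidingSplitter:
    """Toy per-flow splitter: scale out without moving any existing flow's state."""

    def __init__(self, num_buckets: int, first_instance: str) -> None:
        self.owner: List[str] = [first_instance] * num_buckets  # bucket -> instance for new flows
        self.pinned: Dict[FlowId, str] = {}                     # active flow -> its instance

    def bucket(self, flow: FlowId) -> int:
        return zlib.crc32(repr(flow).encode()) % len(self.owner)

    def route(self, flow: FlowId) -> str:
        if flow not in self.pinned:            # first packet of a new flow
            self.pinned[flow] = self.owner[self.bucket(flow)]
        return self.pinned[flow]

    def scale_out(self, old: str, new: str) -> None:
        """Give half of `old`'s buckets to `new`; active flows stay where they are."""
        mine = [i for i, inst in enumerate(self.owner) if inst == old]
        for i in mine[len(mine) // 2:]:
            self.owner[i] = new

    def flow_ended(self, flow: FlowId) -> None:
        self.pinned.pop(flow, None)

lb = MigrationAvoidingSplitter(num_buckets=8, first_instance="nat1")
old_flow = ("10.0.0.1", "8.8.8.8", 5555, 53, "udp")
lb.route(old_flow)                       # pinned to nat1 on its first packet
lb.scale_out("nat1", "nat2")
new_flows = [("10.0.1.%d" % i, "1.1.1.1", 40000 + i, 443, "tcp") for i in range(50)]
for f in new_flows:
    lb.route(f)                          # new flows follow the updated bucket owners
print(lb.route(old_flow))                # nat1
print(lb.owner.count("nat2"))            # 4
```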

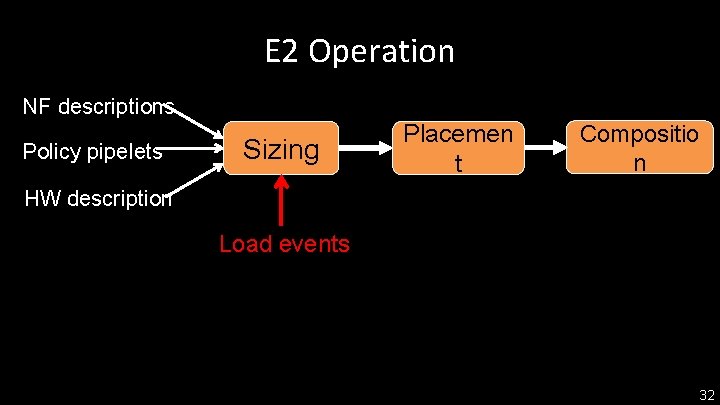

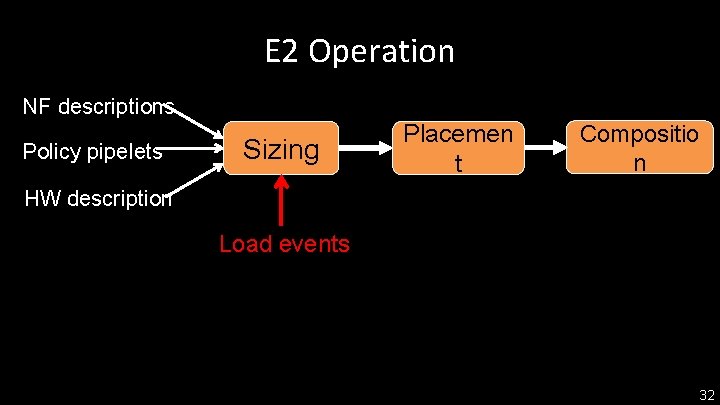

E2 Operation [Diagram: the full pipeline (Sizing → Placement → Composition), with load events as an additional input.]

Outline
• Pipelets: how we express NFV jobs
• How we execute pipelets
  – system architecture
  – functions: sizing, placement, scaling, composition
• Results

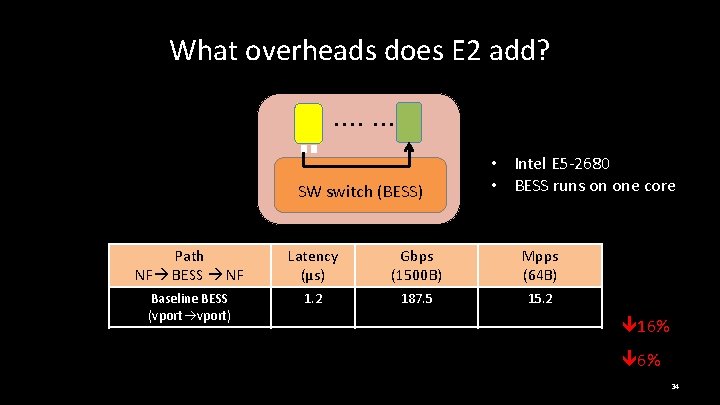

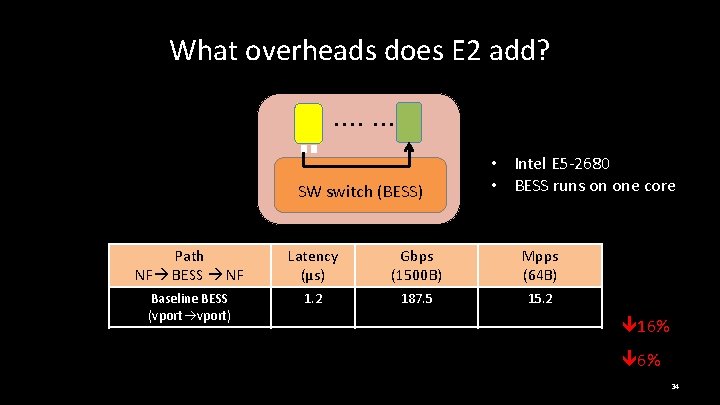

What overheads does E2 add?
SW switch (BESS) • Intel E5-2680 • BESS runs on one core • Path: NF → BESS → NF

| Configuration | Latency (μs) | Gbps (1500 B packets) | Mpps (64 B packets) |
|---|---|---|---|
| Baseline BESS (vport) | 1.2 | 187.5 | 15.2 |
| Header-Match | 1.56 | 152.5 | 12.76 |
| Metadata-Match | 1.695 | 145.826 | 11.96 |

Slide annotations: 16%, 6%
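The slide's 16% and 6% annotations are consistent with the relative drop in 64 B packet rate, first from the baseline to header matching and then from header matching to metadata matching. That reading is an interpretation, not stated on the slide; the short check below recomputes it from the table's numbers and also reports the total added latency.

```python
# Numbers copied from the overhead table (BESS on one core, Intel E5-2680).
latency_us = {"baseline": 1.2, "header": 1.56, "metadata": 1.695}
mpps_64b = {"baseline": 15.2, "header": 12.76, "metadata": 11.96}

def pct_drop(before: float, after: float) -> float:
    """Relative decrease from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before

hdr_vs_base = pct_drop(mpps_64b["baseline"], mpps_64b["header"])
meta_vs_hdr = pct_drop(mpps_64b["header"], mpps_64b["metadata"])
extra_latency = latency_us["metadata"] - latency_us["baseline"]
print(f"{hdr_vs_base:.0f}% {meta_vs_hdr:.0f}% +{extra_latency:.3f} us")  # 16% 6% +0.495 us
```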

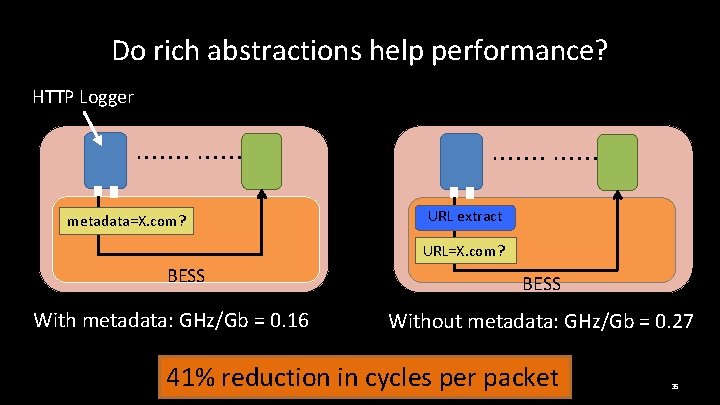

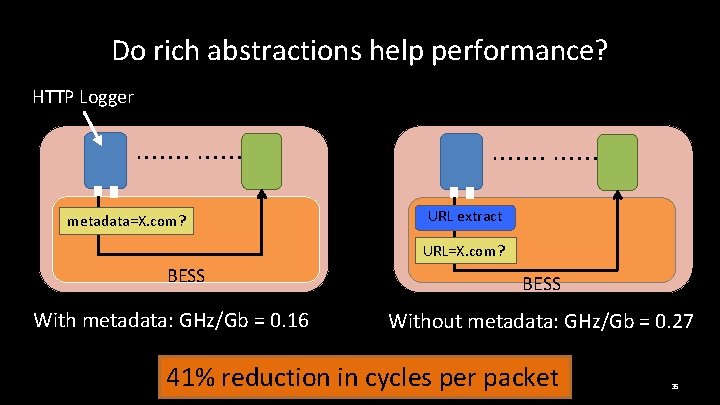

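Do rich abstractions help performance?
[Diagram: BESS steers traffic to an HTTP Logger either by matching a metadata tag (metadata=X.com?) set by a URL-extract step, or by matching the URL directly (URL=X.com?).]
• With metadata: GHz/Gb = 0.16
• Without metadata: GHz/Gb = 0.27
• 41% reduction in cycles per packet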

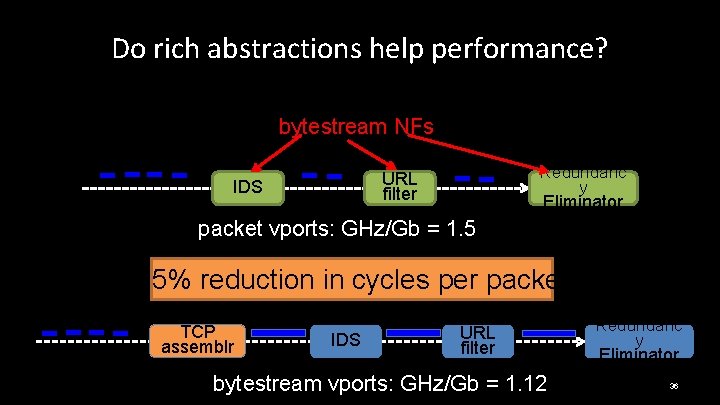

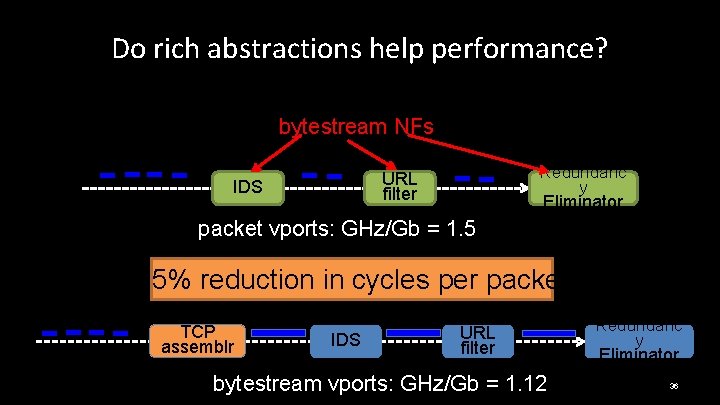

Do rich abstractions help performance? (bytestream NFs)
[Diagram: a chain of bytestream NFs (IDS, URL filter, Redundancy Eliminator), connected either through packet vports or through bytestream vports fed by a TCP assembler.]
• Packet vports: GHz/Gb = 1.5
• Bytestream vports: GHz/Gb = 1.12
• 25% reduction in cycles per packet
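Both percentages on these two slides follow from the GHz/Gb figures. Assuming GHz/Gb means GHz of CPU per Gbps of traffic (i.e., cycles per bit), the relative reduction equals the reduction in cycles per packet for a fixed packet size. A quick check:

```python
def reduction(without: float, with_: float) -> float:
    """Percent reduction in CPU cost (GHz per Gbps) when the richer abstraction is used."""
    return 100.0 * (without - with_) / without

metadata = reduction(0.27, 0.16)    # HTTP logger: URL passed as metadata
bytestream = reduction(1.5, 1.12)   # IDS / URL filter / Redundancy Eliminator chain
print(round(metadata), round(bytestream))  # 41 25
```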

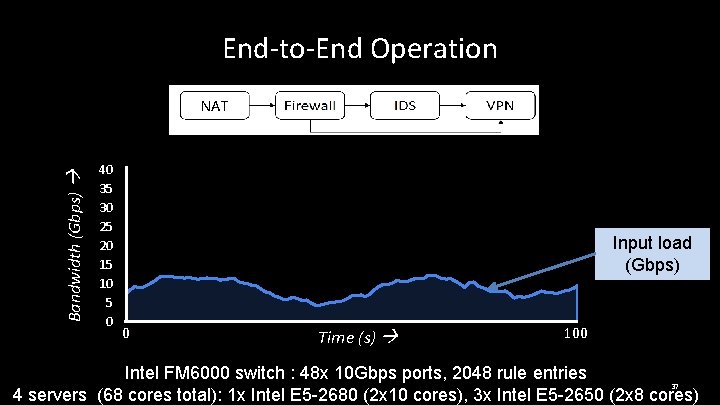

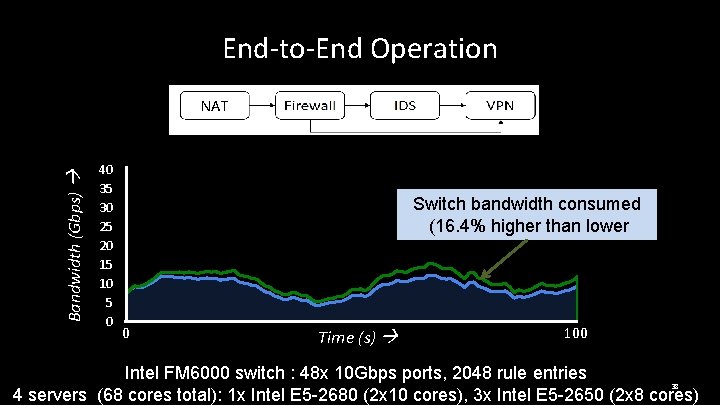

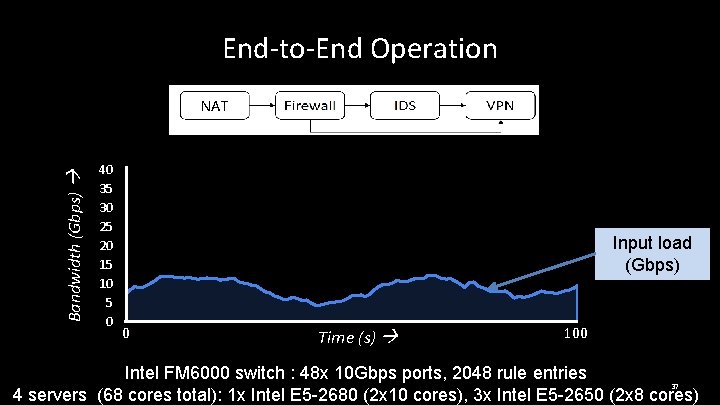

End-to-End Operation
[Plot (NAT): input load, bandwidth in Gbps (0–40), vs. time in s (0–100).]
Testbed: Intel FM6000 switch: 48 × 10 Gbps ports, 2048 rule entries; 4 servers (68 cores total): 1 × Intel E5-2680 (2 × 10 cores), 3 × Intel E5-2650 (2 × 8 cores)

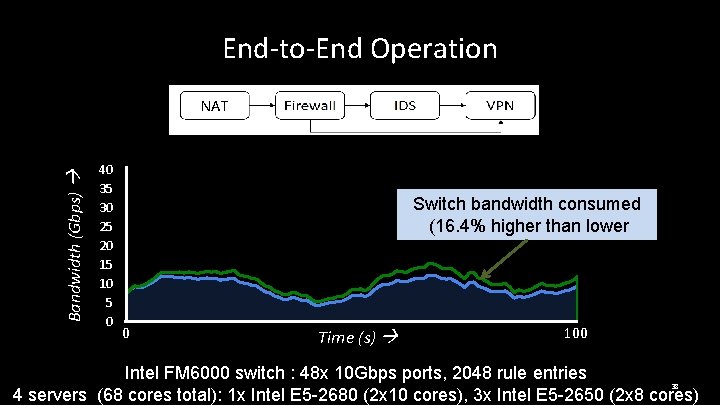

End-to-End Operation
[Plot (NAT): bandwidth (Gbps) vs. time (s), adding the switch bandwidth consumed, 16.4% higher than the lower bound.]

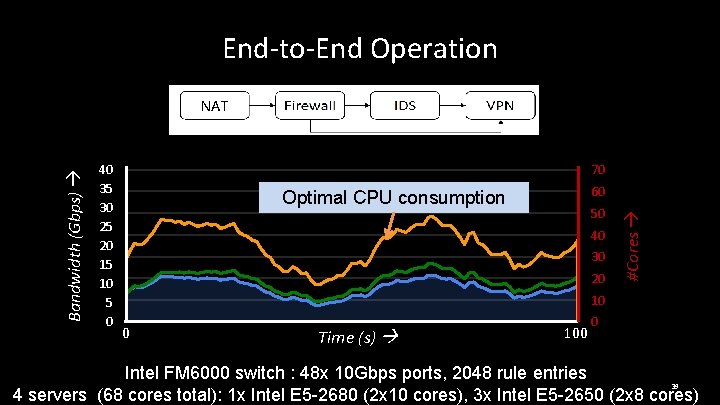

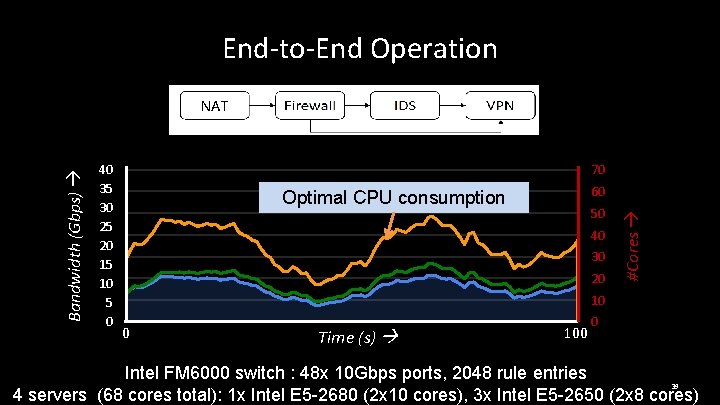

End-to-End Operation
[Plot (NAT): bandwidth (Gbps, 0–40) and #cores (0–70) vs. time (s, 0–100), adding optimal CPU consumption.]

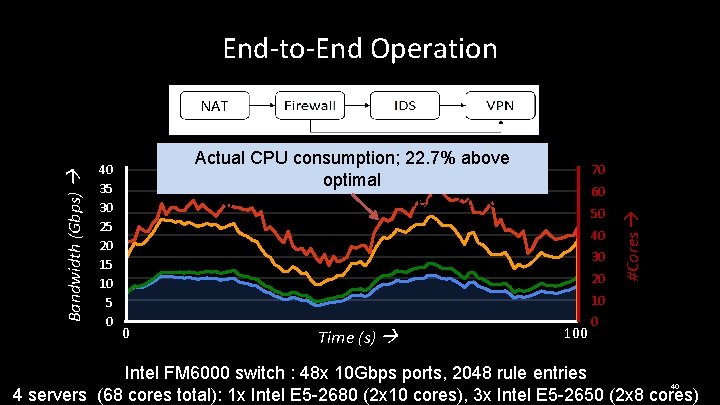

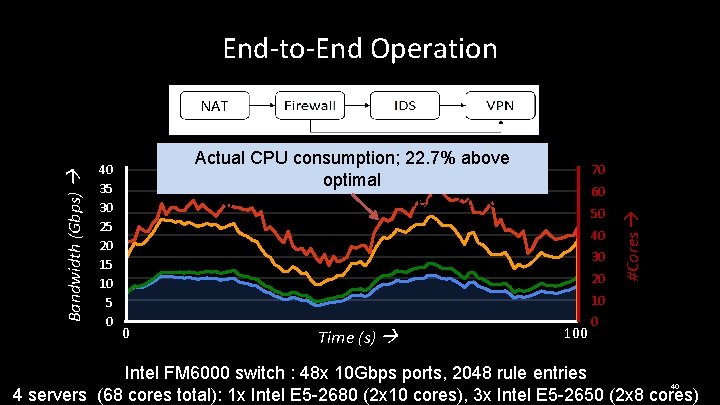

End-to-End Operation
[Plot (NAT): bandwidth (Gbps) and #cores vs. time (s), adding actual CPU consumption, 22.7% above optimal; the number of NF instances varies between 22 and 56.]

Conclusion
• NFV needs a runtime framework
  – simplifies NF development and management
• Interface: map each traffic class to an NF pipeline
• Design for a clean separation of concerns
  – NFs implement app-specific processing
  – Framework implements common functions
  – Framework coordinates end-to-end execution

Thanks!