A Cholesky OutofCore factorization Jorge Castellanos Germn Larrazbal

A Cholesky Out-of-Core factorization Jorge Castellanos Germán Larrazábal Centro Multidisciplinario de Visualización y Cómputo Científico (CEMVICC) Facultad Experimental de Ciencias y Tecnología (FACYT) Universidad de Carabobo Valencia - Venezuela Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 1

Out-of-core - motivation The computational kernel that consumes the more of CPU time in a engineer numerical simulation package is the linear solver The linear solver is mainly based on a classic solving method, i. e. , direct or iterative method The problem matrix associated to the equations system is sparse and have a big size Iterative methods are highly parallel (based on matrix-vector product) but they are not general and they need preconditioning Direct methods (Cholesky, LDLT or LU) are more general (inputs: matrix A and rhs vector b), but they main advantage is the amount of memory needed when the problem size A increases Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 2

Out-of-core - motivation Efficient numerical solutions of linear system of equations can show limitations when the associated set of matrices does not fit into memory Data can not be stored in memory, therefore it must be stored in hard disk Disk access is very slow (latency+bandwidth) compared to memory access In order to have good performance, the algorithm should (Toledo, 1999): Carry out the storage in terms of consecutive large blocks Use the data stored in memory as many times as possible Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 3

Out-of-core - definition Algorithms which are designed to have efficient performance when their data structure is stored in disk are called “out-of-core algorithms” (Toledo, 1999). Out-of core applications handle very large data sets to store in conventional internal memory There a group of applications (parallel out-of-core) with data sets whose address space exceeds the capacity of the virtual memory Examples: Scientific computing (modeling, simulation, etc. ) Scientific visualization Database Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 4

Another face The out-of-core concept permits users to solve efficiently large problems using inexpensive computers Storage in disk is cheaper than storage in main memory (DRAM) and its actual cost rate is 1 to 100 (dec, 2007) An out-of-core algorithm running on a machine with a limited memory can give a better cost/performance ratio than in-core algorithms running on a machine with enough memory Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 5

Related work In 1984, J. Reid creates the TREESOLV, written in fortran to solve big linear equation sytems based on the multifrontal algorithm In 1999, E. Rothberg and R. Shreiber, review the implementation of 3 out-of-core methods for the Cholesky factorization. Each is based on a partitioning of the matrix into panels En 2009, J. Reid and J. Scott present a Cholesky Out-of-core solver written in fortran 95, whose operation is based on a virtual memory package that provides the facilities to read/write hard disk files Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 6

Out-of-core support - proposal To incorporate into UCSparse. Lib library a low level software layer that frees the user from worrying about memory constraints The low level software layer will handle the I/O operations automatically The support includes: caches, prefetching, multithreading, among other features to obtain good computational performance The out-of-core support manages the memory The implementation makes use of “in-core” coding included in the UCSparse. Lib library Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 7

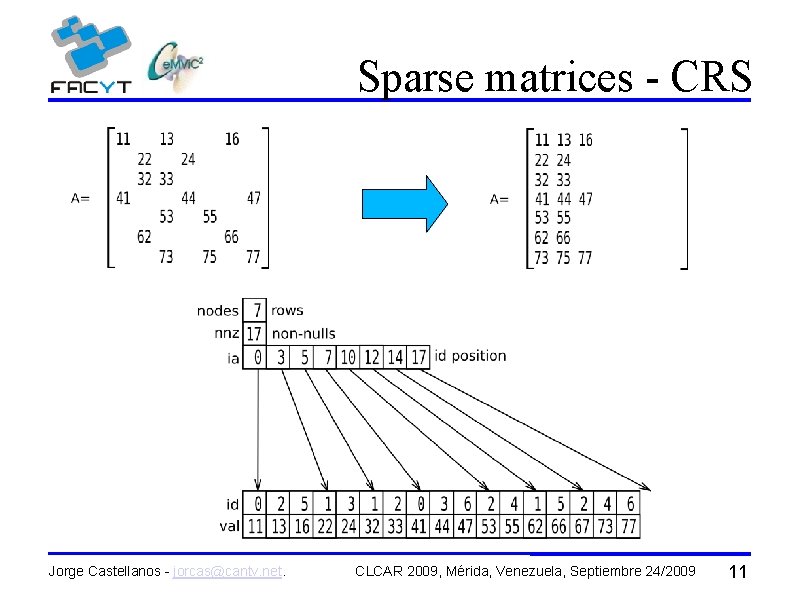

UCSparse. Lib (Larrazabal, 2004) has a set of functionalities for solving sparse linear systems The library handles and stores sparse matrices using a compact format similar to: CRS (Compressed Row Storage) CCS (Compressed Column Storage) It was designed with an out-of-core perspective Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 8

UCSparse. Lib PDVSA - INTEVEP S. A. Oil reservoir simulator (SEMIYA) CSRC (Computational Sciences Research Center), SDSU, USA Ocean simulator Computational fluid dynamic ULA Portal of damage project CEMVICC CFD projects Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 9

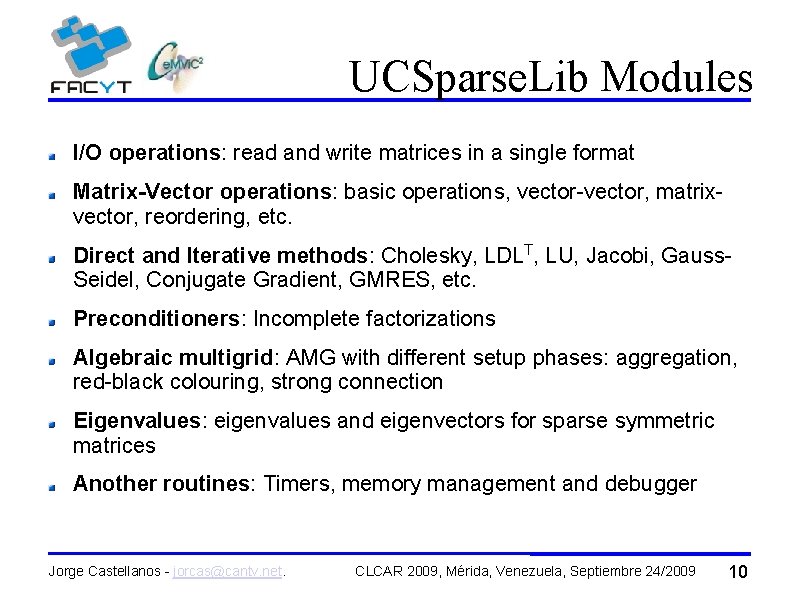

UCSparse. Lib Modules I/O operations: read and write matrices in a single format Matrix-Vector operations: basic operations, vector-vector, matrixvector, reordering, etc. Direct and Iterative methods: Cholesky, LDLT, LU, Jacobi, Gauss. Seidel, Conjugate Gradient, GMRES, etc. Preconditioners: Incomplete factorizations Algebraic multigrid: AMG with different setup phases: aggregation, red-black colouring, strong connection Eigenvalues: eigenvalues and eigenvectors for sparse symmetric matrices Another routines: Timers, memory management and debugger Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 10

Sparse matrices - CRS Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 11

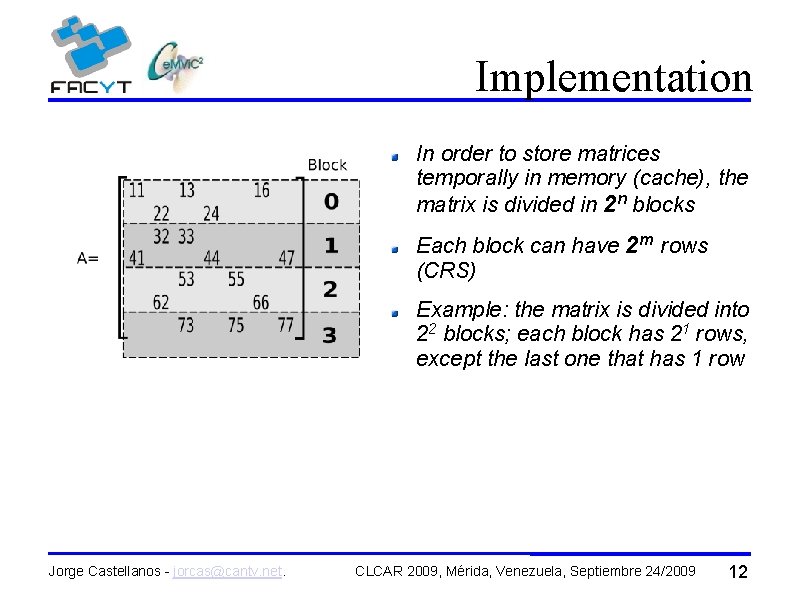

Implementation In order to store matrices temporally in memory (cache), the matrix is divided in 2 n blocks Each block can have 2 m rows (CRS) Example: the matrix is divided into 22 blocks; each block has 21 rows, except the last one that has 1 row Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 12

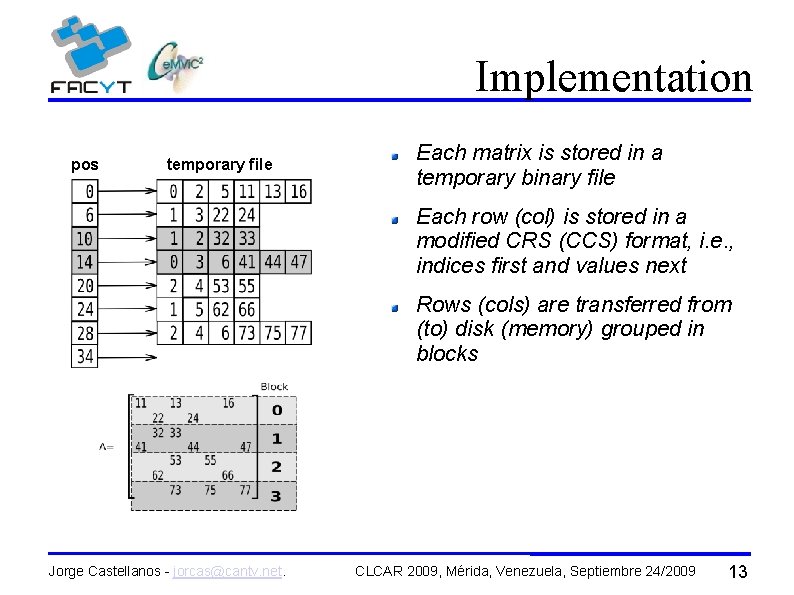

Implementation pos temporary file Each matrix is stored in a temporary binary file Each row (col) is stored in a modified CRS (CCS) format, i. e. , indices first and values next Rows (cols) are transferred from (to) disk (memory) grouped in blocks Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 13

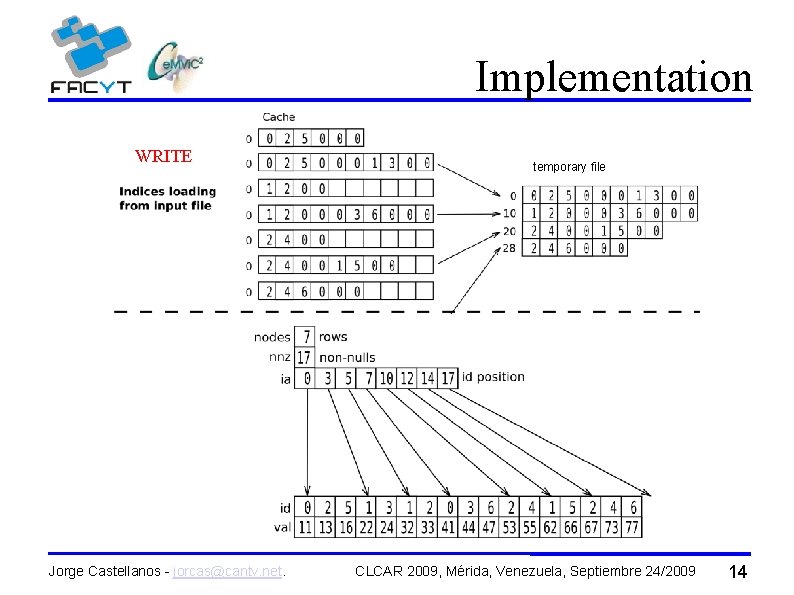

Implementation WRITE Jorge Castellanos - jorcas@cantv. net. temporary file CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 14

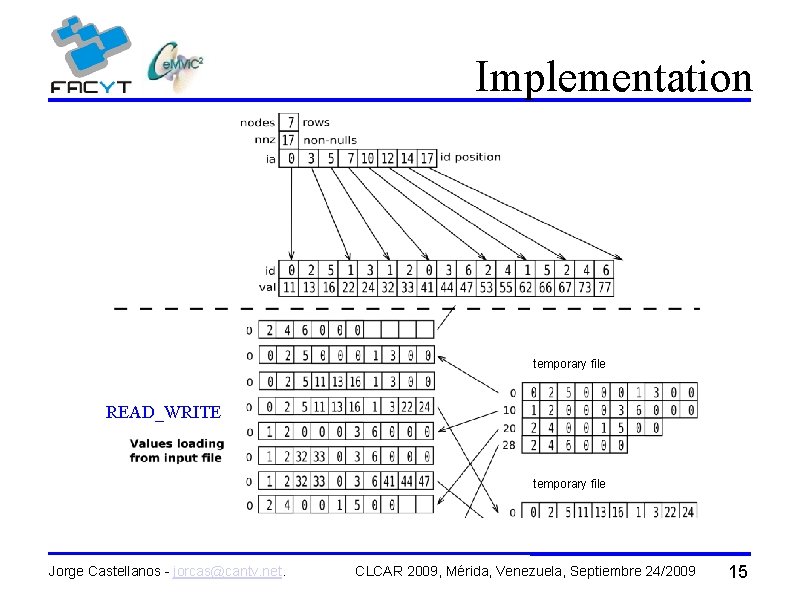

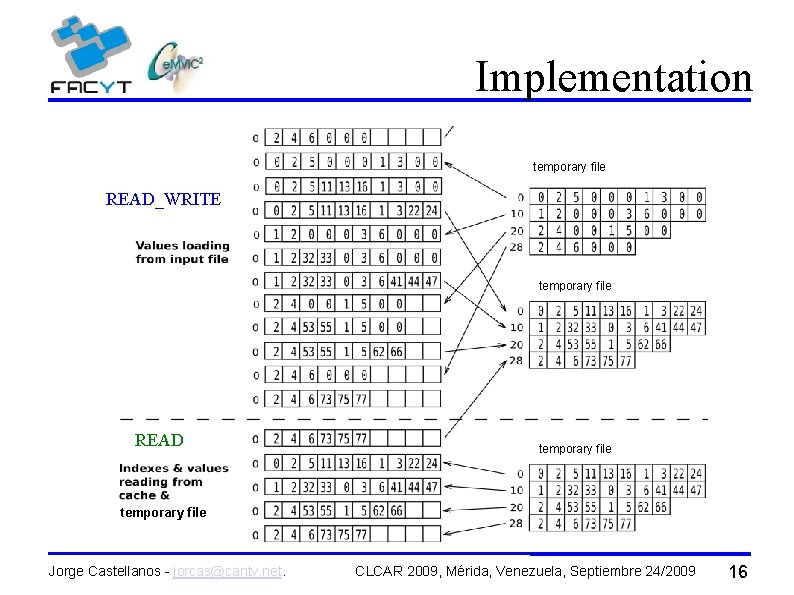

Implementation temporary file READ_WRITE temporary file Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 15

Implementation temporary file READ_WRITE temporary file READ temporary file Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 16

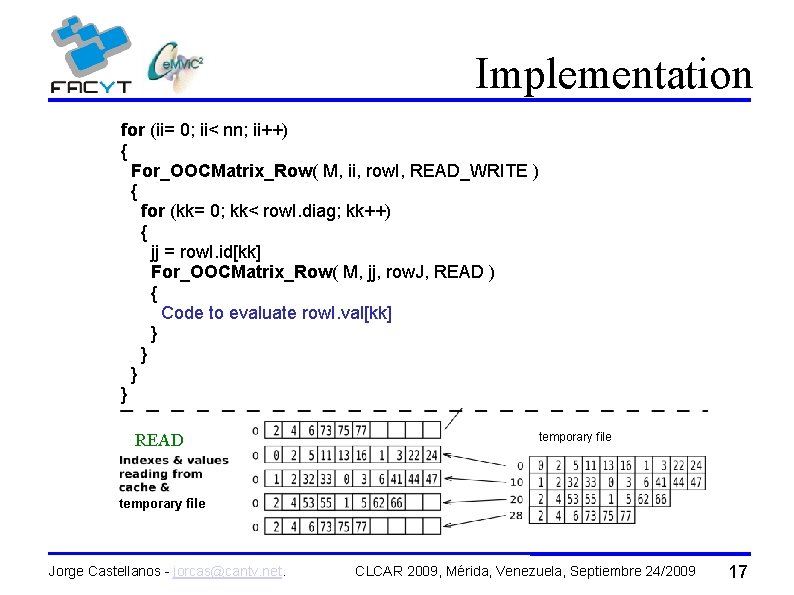

Implementation for (ii= 0; ii< nn; ii++) { For_OOCMatrix_Row( M, ii, row. I, READ_WRITE ) { READ_WRITE for (kk= 0; kk< row. I. diag; kk++) { jj = row. I. id[kk] For_OOCMatrix_Row( M, jj, row. J, READ ) { Code to evaluate row. I. val[kk] } } READ temporary file Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 17

Implementation To support the nesting of For. OOCMatrix_Row macros, the cache used in earlier versions was modified to have multiple ways To mitigate the effect of misses increasing caused by the nested macro, a Reference Prediction Table (RPT) based on the proposal of Chen & Baer (1994) was incorporated To ensure that the input/output cache data worked in parallel with computation to hide the latency caused by misses and prefetches an Outstanding Request List based on the scheme proposed by D. Kroft (1987) was implemented Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 18

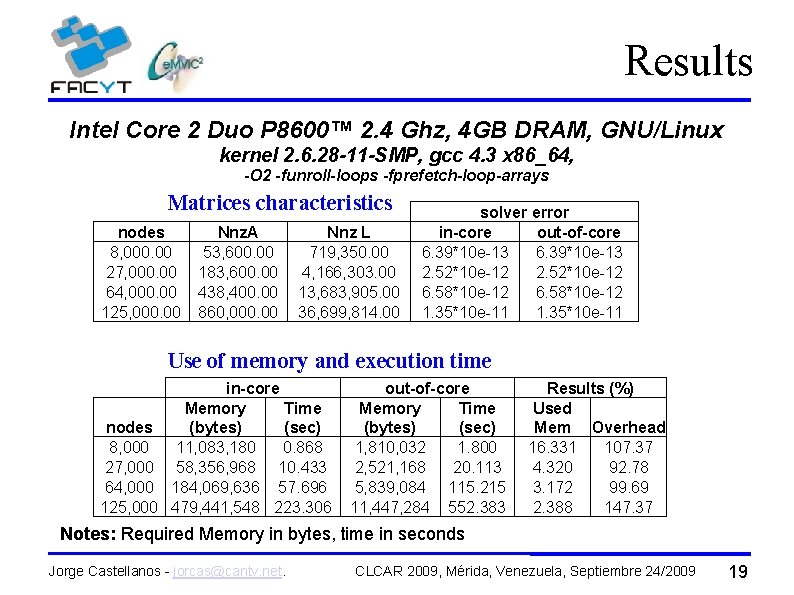

Results Intel Core 2 Duo P 8600™ 2. 4 Ghz, 4 GB DRAM, GNU/Linux kernel 2. 6. 28 -11 -SMP, gcc 4. 3 x 86_64, -O 2 -funroll-loops -fprefetch-loop-arrays Matrices characteristics nodes 8, 000. 00 27, 000. 00 64, 000. 00 125, 000. 00 Nnz. A 53, 600. 00 183, 600. 00 438, 400. 00 860, 000. 00 Nnz L 719, 350. 00 4, 166, 303. 00 13, 683, 905. 00 36, 699, 814. 00 solver error in-core out-of-core 6. 39*10 e-13 2. 52*10 e-12 6. 58*10 e-12 1. 35*10 e-11 Use of memory and execution time in-core Memory Time nodes (bytes) (sec) 8, 000 11, 083, 180 0. 868 27, 000 58, 356, 968 10. 433 64, 000 184, 069, 636 57. 696 125, 000 479, 441, 548 223. 306 out-of-core Memory Time (bytes) (sec) 1, 810, 032 1. 800 2, 521, 168 20. 113 5, 839, 084 115. 215 11, 447, 284 552. 383 Results (%) Used Mem Overhead 16. 331 107. 37 4. 320 92. 78 3. 172 99. 69 2. 388 147. 37 Notes: Required Memory in bytes, time in seconds Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 19

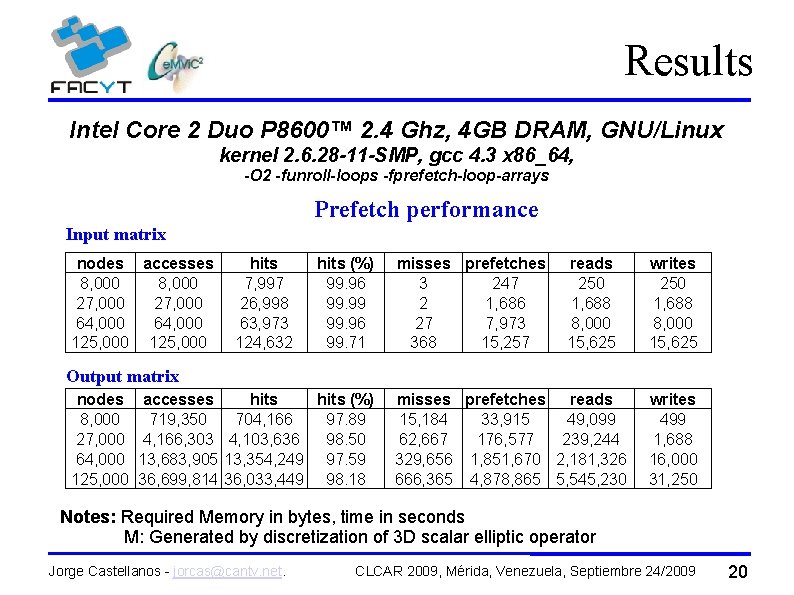

Results Intel Core 2 Duo P 8600™ 2. 4 Ghz, 4 GB DRAM, GNU/Linux kernel 2. 6. 28 -11 -SMP, gcc 4. 3 x 86_64, -O 2 -funroll-loops -fprefetch-loop-arrays Prefetch performance Input matrix nodes accesses 8, 000 27, 000 64, 000 125, 000 hits 7, 997 26, 998 63, 973 124, 632 hits (%) 99. 96 99. 99 99. 96 99. 71 misses prefetches 3 247 2 1, 686 27 7, 973 368 15, 257 reads 250 1, 688 8, 000 15, 625 writes 250 1, 688 8, 000 15, 625 Output matrix nodes 8, 000 27, 000 64, 000 125, 000 accesses hits (%) 719, 350 704, 166 97. 89 4, 166, 303 4, 103, 636 98. 50 13, 683, 905 13, 354, 249 97. 59 36, 699, 814 36, 033, 449 98. 18 misses prefetches reads 15, 184 33, 915 49, 099 62, 667 176, 577 239, 244 329, 656 1, 851, 670 2, 181, 326 666, 365 4, 878, 865 5, 545, 230 writes 499 1, 688 16, 000 31, 250 Notes: Required Memory in bytes, time in seconds M: Generated by discretization of 3 D scalar elliptic operator Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 20

Conclusions The out-of-core kernel supports efficiently the Cholesky sparse matrix factorization because it shows big saves in memory use with overheads less than the 148% in CPU time For a better performance of the out-of-core support, we believe it is important to have a high efficiency prefetch algorithm, as in this work, which reduces the adverse effect of cache misses The prefetch algorithm must operate in parallel with the computation to take advantage of multicore technology present in most of the modern personal computers Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 21

Future work To incorporate improvements in the parallelization of the prefetch algorithm to reduce the penalty in execution time To study the effect of the block size of the cache and the total size of the cache (number of blocks) in order to define heuristics for automatic selection of these parameters To extend the use of the out-of-core layer to other UCSparse. Lib library functions, including direct methods: LU and LDLT To implement the out-of-core support for other methods of solving sparse linear systems such as iterative methods and algebraic multigrid Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 22

References J. Castellanos and G. Larrazábal, Implementación out-of-core para producto matriz-vector y transpuesta de matrices dispersas, Conferencia Latinoamericana de Computación de Alto Rendimiento, Santa Marta, Colombia. Pags. 250 --256. ISBN: 978 --958 --708 --299 --9, 2007. J. Castellanos and G. Larrazábal, Soporte out-of-core para operaciones básicas con matrices dispersas, En Desarrollo y avances en métodos numéricos para ingeniería y ciencias aplicadas, Sociedad Venezolana de Métodos Numéricos en Ingeniería, Caracas, Venezuela. ISBN: 978 --980 -7161 --00 --8, 2008. T. F. Chen and J. L. Baer, A performance study of software and hardware data prefetching schemes, In International Symposium on Computer architecture, Proceedings of the 21 st annual international symposium on Computer Architecture, Chicago Ill, USA, Pages 223 -232, 1994. Z. Bai, J. Demmel, J. Dongarra, A. Ruhe and H. van der Vorst, Templates for the Solution of Algebraic Eigenvalue Problems: A Practical Guide, SIAM, Philadelphia, 2000. Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 23

References N. I. M. Gould, J. A. ~Scott and Y. Hu, A numerical evaluation of sparse direct solvers for the solution of large sparse symmetric linear systems of equations, ACM Trans. Math. Softw. 33, 2, Article 10, 2007. G. Karypis and V. Kumar, A Fast and Highly Quality Multilevel Scheme for Partitioning Irregular Graphs, SIAM Journal on Scientific Computing, Vol. 20, No. 1, pp. 359— 392, 1999. D. Kroft, Lockup-free instruction fetch/prefetch cache organization, In International Symposium on Computer architecture, Proceedings of the 8 th annual symposium on Computer Architecture, Minneapolis, Minnesota USA, Pages 81 -87, 1981. G. Larrazábal, UCSparse. Lib: Una biblioteca numérica para resolver sistemas lineales dispersos, Simulación Numérica y Modelado Computacional, SVMNI, TC 19 --TC 25, ISBN: 980 -6745 -00 -0, 2004. Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 24

References G. Larrazábal, Técnicas algebráicas de precondicionamiento para la resolución de sistemas lineales, Departamento de Arquitectura de Computadores (DAC), Universidad Politécnica de Cataluña, Barcelona, Spain. Tesis Doctoral ISBN: 84 --688 --1572 --1, 2002. D. A. Patterson and J. L. Hennessy, Computer Organization and Design: The Hardware/Software Interface, Morgan Kaufmann, Third Edition, 2005. J. Reid, TREESOLV, a Fortran package for solving large sets of linear finite element equations, Report CSS 155. AERE Harwell, U. K. , 1984. J. Reid and J. Scott, An out-of-core sparse Cholesky solver, ACM Transactions on Mathematical Software (TOMS), Volume 36, Issue 2, Article No. 9, 2009. E. Rothberg and R. Schreiber, Efficient Methods for Out-of-Core Sparse Cholesky Factorization, SIAM Journal on Scientific Computing, Vol 21, Issue 1, pages: 129 - 144, 1999. Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 25

References A. J. Smith, Cache Memories, ACM Computing Surveys, Vol 14, Issue 3, pages: 473 -530, 1982. S. Toledo, A survey of out-of-core algorithms in numerical linear algebra, In External Memory Algorithms and Visualization, J. Abello and J. S. Vitter, Eds. , DIMACS Series in Discrete Mathematics and Theoretical Computer Science, 1999. Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 26

Thanks Jorge Castellanos - jorcas@cantv. net. CLCAR 2009, Mérida, Venezuela, Septiembre 24/2009 27

- Slides: 27