A Brief Introduction to Region-Based Image Segmentation

Advisor: Professor Jian-Jiun Ding (丁建均)
Presenter: Cheng-Jin Kuo (郭政錦)
Digital Image and Signal Processing Laboratory, Graduate Institute of Communication Engineering, National Taiwan University, Taipei, Taiwan, ROC

Outline:
- Introduction
- Data Clustering
- Hierarchical Clustering
- Partitional Clustering (K-means method)
- Simulation Results
- Comparison
- Conclusion and Future Work
- References

Introduction: Image Segmentation
- Threshold Technique
- Edge-Based Segmentation
  - Watershed Algorithm Method
- Region-Based Segmentation
  - Data Clustering
  - Region Growing
  - Region Splitting and Merging

Data Clustering: Goal
1. To classify statistical databases.
2. To segment digital images in a systematic way.

Data Clustering: Two Kinds of Data Clustering
- Hierarchical Clustering
  - Hierarchical agglomerative algorithm
  - Hierarchical divisive algorithm
- Partitional Clustering
  - K-means method

Data Clustering: The biggest difference between the two kinds
- Hierarchical Clustering: the number of clusters is flexible.
- Partitional Clustering: the number of clusters is assigned before processing.

Hierarchical Clustering: Characteristics
- Has been widely used in statistical fields
- Represented by a tree diagram
- Simple concept
- The user can change the number of clusters during processing, which means the number of clusters is flexible

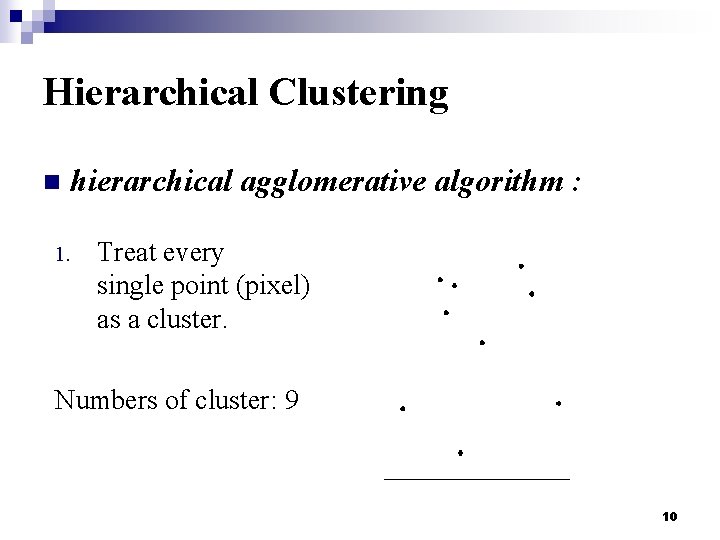

Hierarchical Clustering: hierarchical agglomerative algorithm
1. Treat every single point (pixel) as a cluster. Number of clusters: 9

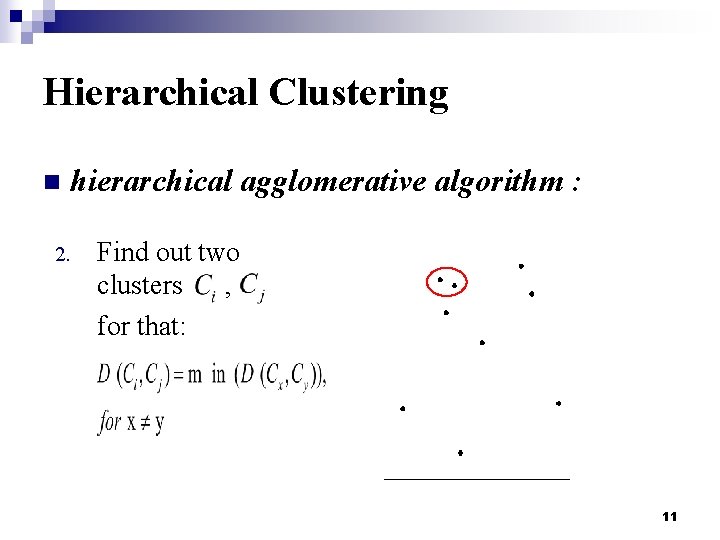

Hierarchical Clustering: hierarchical agglomerative algorithm
2. Find the two clusters whose distance D is the smallest among all pairs of clusters.

Hierarchical Clustering: hierarchical agglomerative algorithm
The definition of the distance D depends on the variant:
- Single-linkage agglomerative algorithm
- Complete-linkage agglomerative algorithm
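The formulas from this slide did not survive extraction. As a hedged reconstruction, the standard textbook definitions of the two linkage distances are given below, where $d(a, b)$ is the distance between two points (assumed Euclidean here) and $C_i$, $C_j$ are clusters; the symbols are our own choice and are not taken from the slide.

$$
D_{\text{single}}(C_i, C_j) = \min_{a \in C_i,\; b \in C_j} d(a, b),
\qquad
D_{\text{complete}}(C_i, C_j) = \max_{a \in C_i,\; b \in C_j} d(a, b)
$$

Under either definition, step 2 selects the pair

$$
(i^{*}, j^{*}) = \arg\min_{i \neq j} D(C_i, C_j).
$$

Single linkage measures the closest pair of points between two clusters, while complete linkage measures the farthest pair, so complete linkage tends to favor compact clusters.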

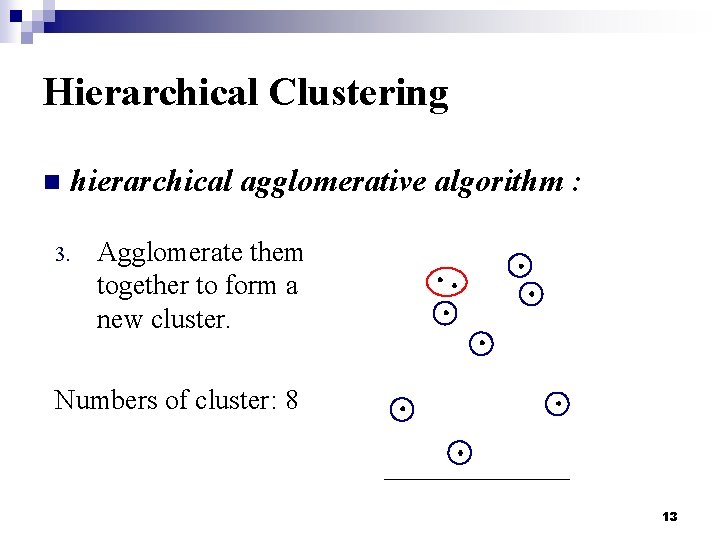

Hierarchical Clustering: hierarchical agglomerative algorithm
3. Agglomerate them to form a new cluster. Number of clusters: 8

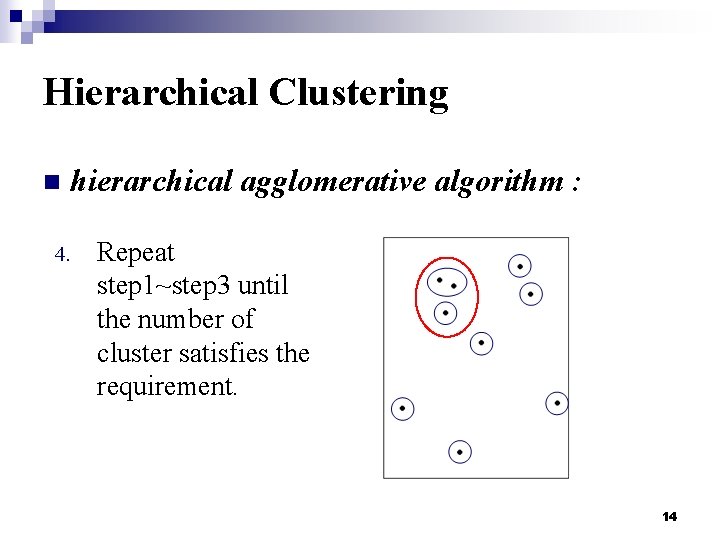

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement.

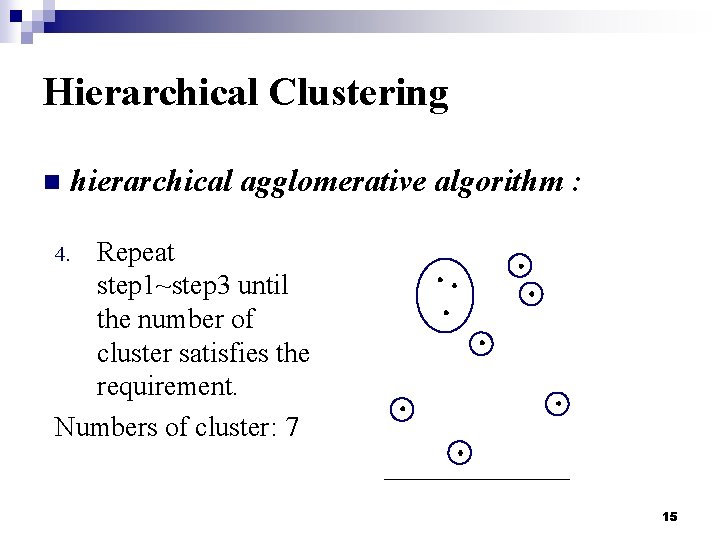

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 7

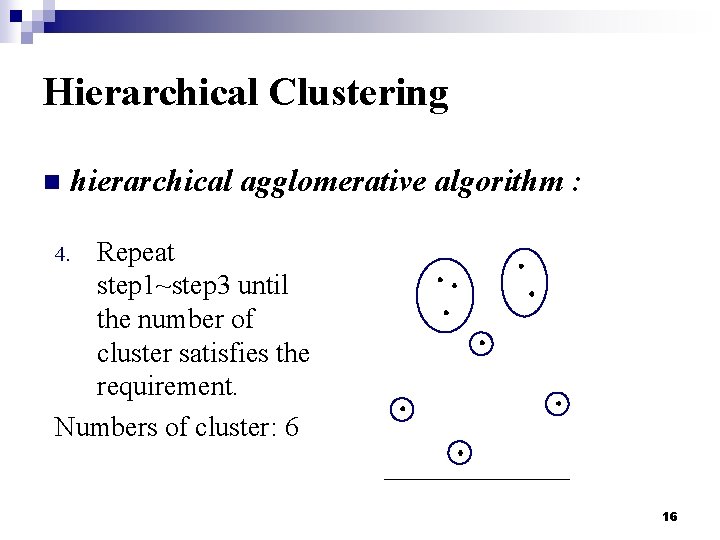

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 6

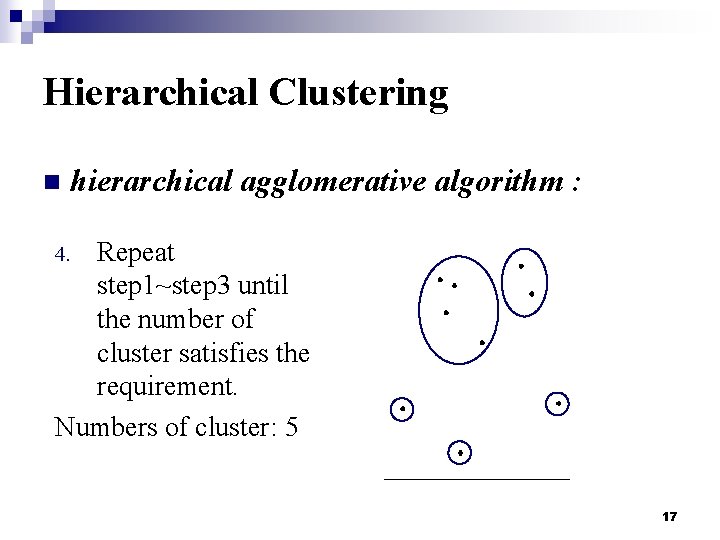

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 5

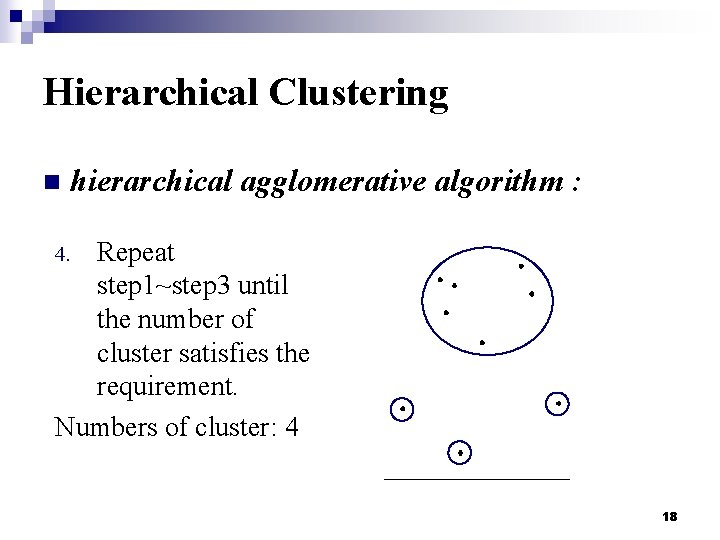

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 4

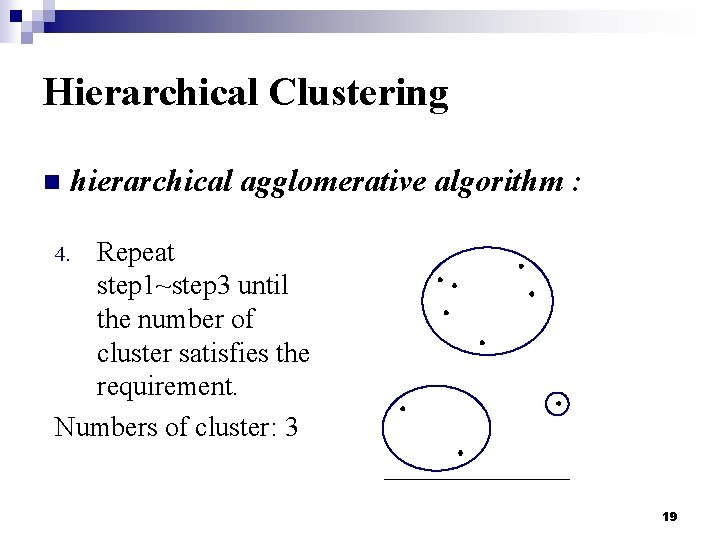

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 3

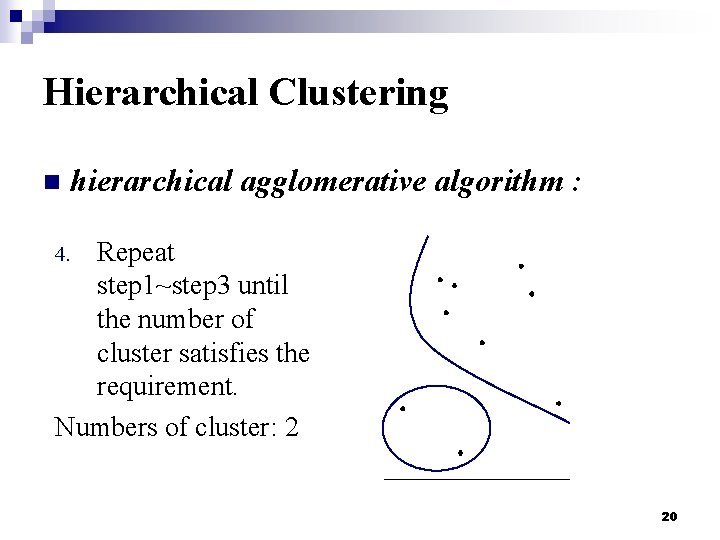

Hierarchical Clustering: hierarchical agglomerative algorithm
4. Repeat steps 2 and 3 until the number of clusters satisfies the requirement. Number of clusters: 2
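The agglomerative steps walked through above can be sketched in a few lines of Python. This is a minimal illustration rather than the presenter's implementation: the function names (`linkage_distance`, `agglomerate`), the sample points, and the use of Euclidean distance are all assumptions made for the example.

```python
from itertools import combinations
from math import dist


def linkage_distance(a, b, linkage="single"):
    """Distance D between clusters a and b (lists of points)."""
    pairwise = [dist(p, q) for p in a for q in b]
    return min(pairwise) if linkage == "single" else max(pairwise)


def agglomerate(points, target, linkage="single"):
    """Merge the two closest clusters until `target` clusters remain."""
    # Step 1: every point starts as its own cluster.
    clusters = [[p] for p in points]
    while len(clusters) > target:
        # Step 2: find the pair of clusters with the smallest distance D.
        i, j = min(
            combinations(range(len(clusters)), 2),
            key=lambda ij: linkage_distance(clusters[ij[0]], clusters[ij[1]], linkage),
        )
        # Step 3: merge them into one new cluster (j > i, so pop j first).
        merged = clusters[i] + clusters[j]
        clusters.pop(j)
        clusters.pop(i)
        clusters.append(merged)
    return clusters


# Nine hypothetical 2-D points forming three loose groups.
points = [(0, 0), (0, 1), (1, 0),
          (5, 5), (5, 6), (6, 5),
          (10, 0), (10, 1), (11, 0)]
result = agglomerate(points, target=3)
```

Every pass of the loop recomputes all pairwise cluster distances, which is why this approach becomes slow as the number of points grows.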

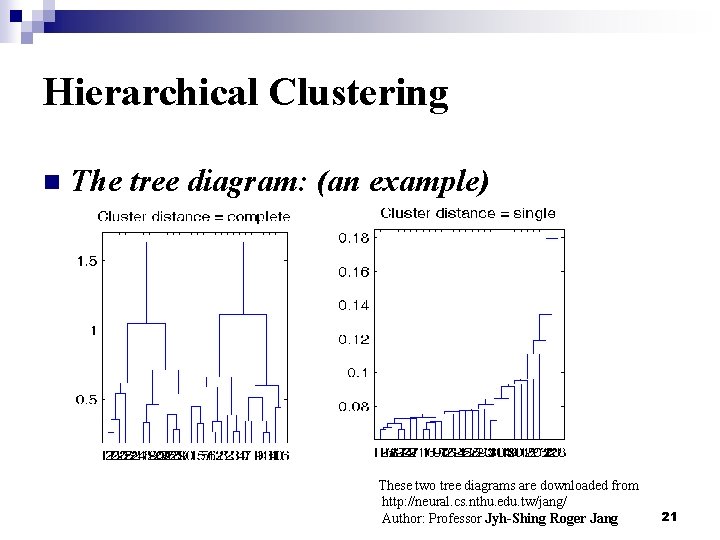

Hierarchical Clustering: the tree diagram (an example)
These two tree diagrams are downloaded from http://neural.cs.nthu.edu.tw/jang/ (author: Professor Jyh-Shing Roger Jang).

Hierarchical Clustering: Advantages
- Simple: every stage of the clustering can be checked from the tree diagram alone.
- The number of clusters is flexible: we can change the number of clusters at any time during processing.
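One way to see why the number of clusters is flexible: the sequence of merges (the information a tree diagram encodes) can be computed once and then replayed up to any level. The sketch below assumes single linkage and Euclidean distance; `build_merge_history` and `cut` are hypothetical helper names, and the five points are made up for illustration.

```python
from itertools import combinations
from math import dist


def build_merge_history(points):
    """Run single-linkage agglomeration down to one cluster, recording each merge."""
    clusters = {i: [p] for i, p in enumerate(points)}  # cluster id -> points
    history = []  # (id_a, id_b, new_id), in merge order
    next_id = len(points)
    while len(clusters) > 1:
        a, b = min(
            combinations(sorted(clusters), 2),
            key=lambda ab: min(dist(p, q) for p in clusters[ab[0]] for q in clusters[ab[1]]),
        )
        clusters[next_id] = clusters.pop(a) + clusters.pop(b)
        history.append((a, b, next_id))
        next_id += 1
    return history


def cut(points, history, n_clusters):
    """Replay only the first merges so that `n_clusters` clusters remain."""
    clusters = {i: [p] for i, p in enumerate(points)}
    for a, b, new_id in history[: len(points) - n_clusters]:
        clusters[new_id] = clusters.pop(a) + clusters.pop(b)
    return list(clusters.values())


points = [(0, 0), (0, 1), (4, 0), (4, 1), (9, 0)]
history = build_merge_history(points)  # the expensive part, done once
three = cut(points, history, 3)        # any number of clusters, no re-clustering
two = cut(points, history, 2)
```

Changing the requested number of clusters only changes how many recorded merges are replayed; the clustering itself is not rerun.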

Hierarchical Clustering: Drawback
- Slower than partitional clustering, so it is not suitable for processing large databases.

Hierarchical Clustering: hierarchical divisive algorithm
1. Treat the whole database as a single cluster. Number of clusters: 1

Partitional Clustering: Characteristics
- Computational time is short
- The user has to decide the number of clusters before starting to classify the data
- Based on the concept of the centroid
- One well-known method: the K-means method

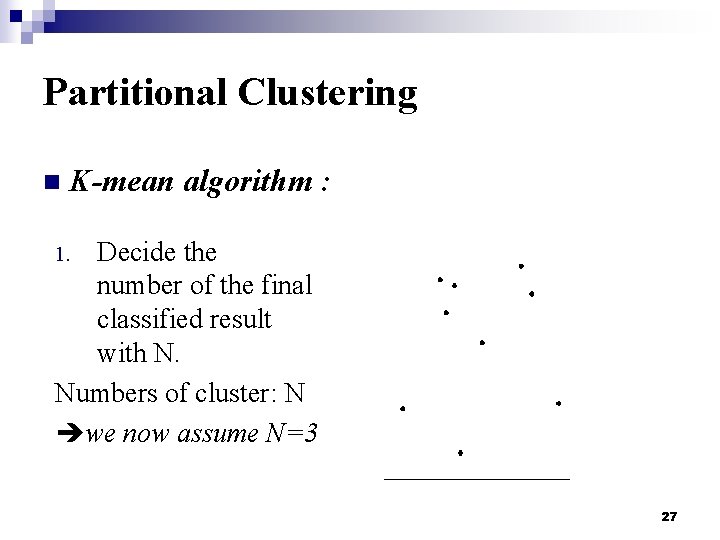

Partitional Clustering: K-means algorithm
1. Decide the number of clusters, N, in the final classified result. Here we assume N = 3.

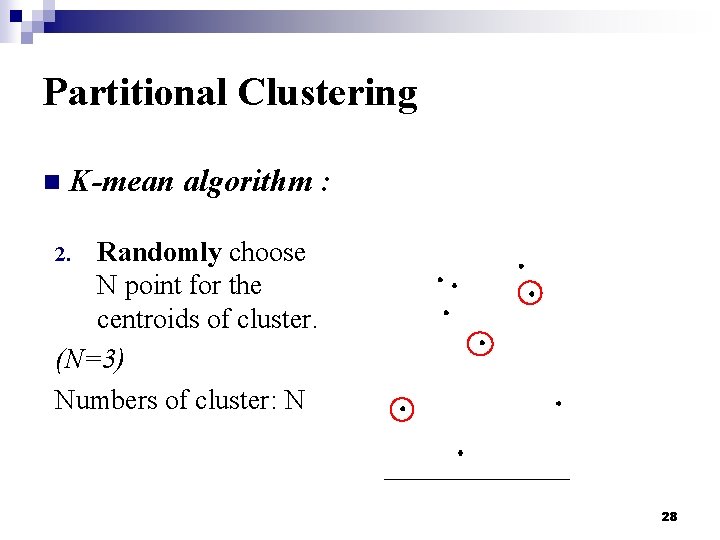

Partitional Clustering: K-means algorithm
2. Randomly choose N points as the centroids of the clusters (N = 3).

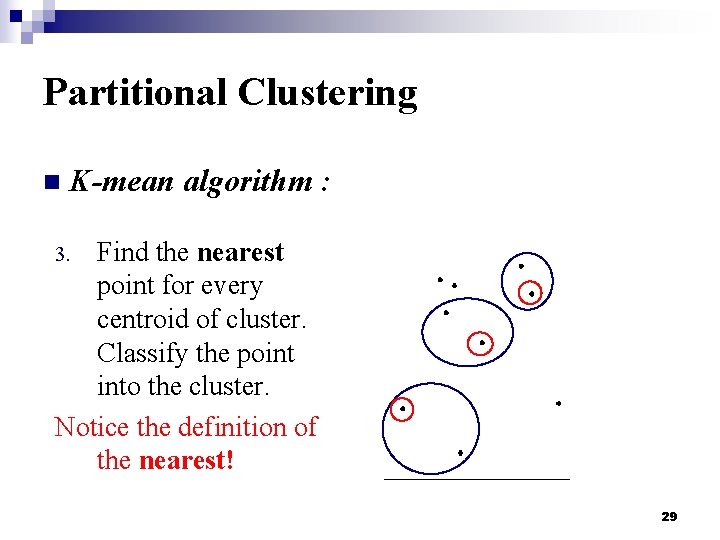

Partitional Clustering: K-means algorithm
3. For the centroid of every cluster, find the nearest point and classify that point into the cluster. Note the definition of "nearest"!
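The slide stresses the definition of "nearest" without preserving a formula. A common choice, assumed here rather than confirmed by the slides, is the Euclidean distance between a point $x$ and a centroid $m_k$ in a $d$-dimensional feature space (for example pixel intensity or color):

$$
d(x, m_k) = \lVert x - m_k \rVert_2 = \sqrt{\sum_{i=1}^{d} \left(x_i - m_{k,i}\right)^2}
$$

For instance, the point $(3, 4)$ is at distance $\sqrt{3^2 + 4^2} = 5$ from a centroid at $(0, 0)$. Other distance measures give a different notion of "nearest" and can change the resulting clusters.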

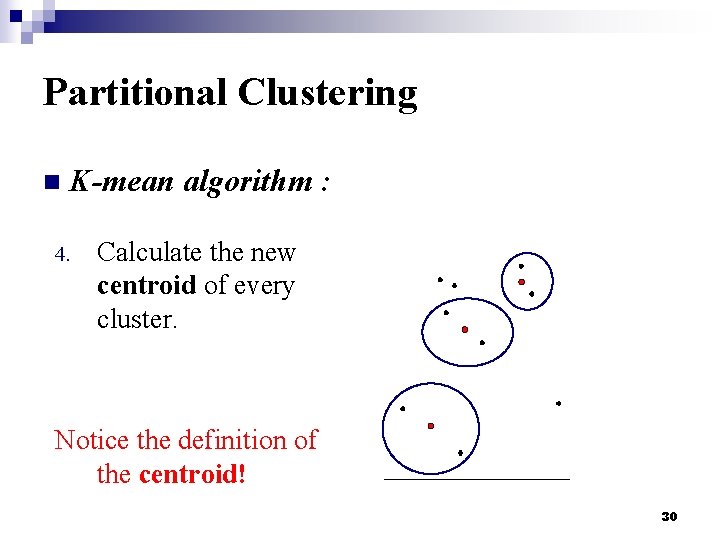

Partitional Clustering: K-means algorithm
4. Calculate the new centroid of every cluster. Note the definition of the centroid!
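The slide's own formula for the centroid is not preserved. In the usual formulation of K-means, which we assume applies here, the centroid $m_k$ of cluster $C_k$ is the mean of the points assigned to it:

$$
m_k = \frac{1}{\lvert C_k \rvert} \sum_{x \in C_k} x
$$

As a small worked example, a cluster containing $(0, 0)$, $(2, 0)$ and $(1, 3)$ has centroid $\left(\tfrac{0 + 2 + 1}{3}, \tfrac{0 + 0 + 3}{3}\right) = (1, 1)$. Note that the centroid need not coincide with any data point.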

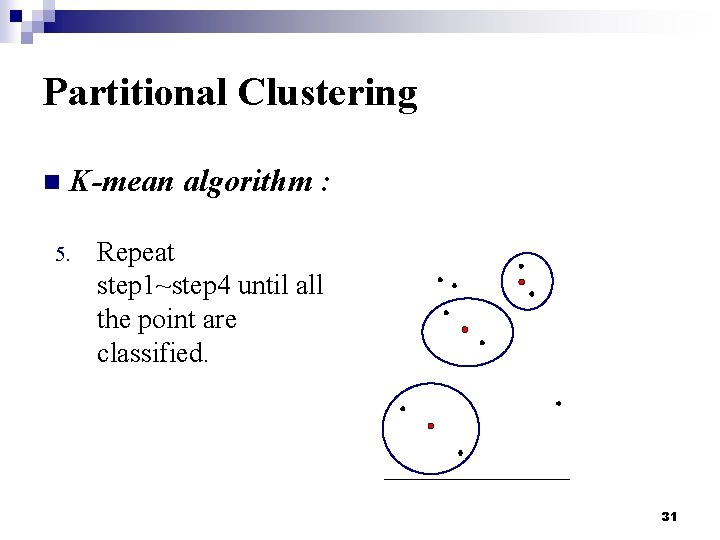

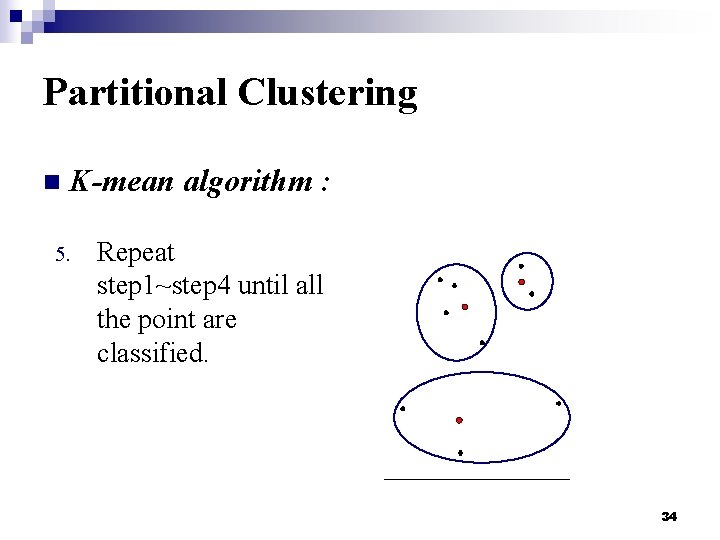

Partitional Clustering: K-means algorithm
5. Repeat steps 3 and 4 until all the points are classified.

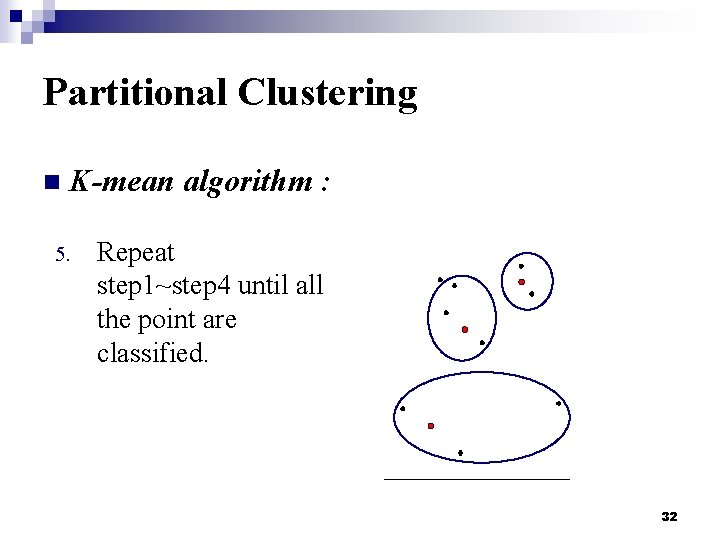

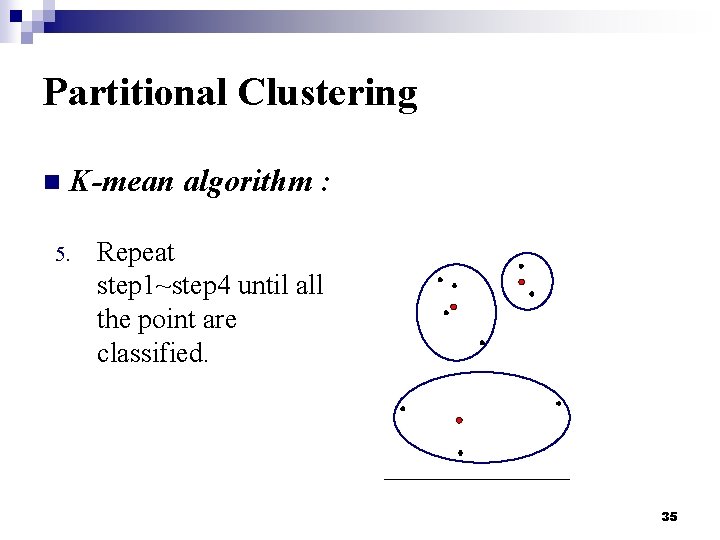

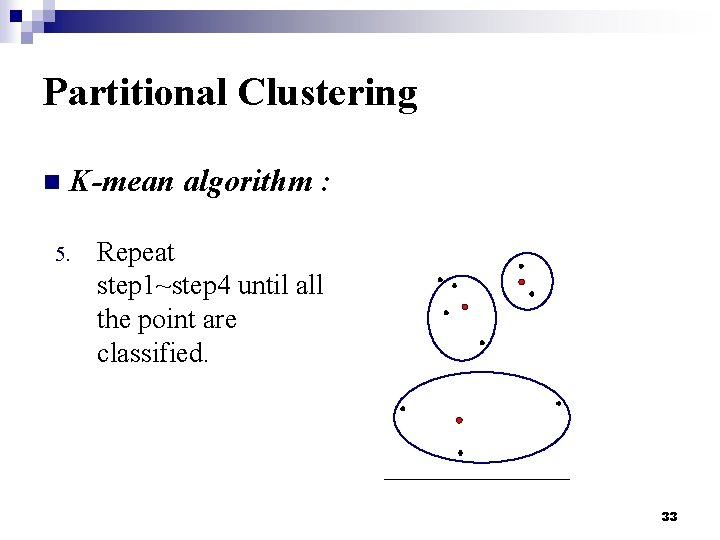

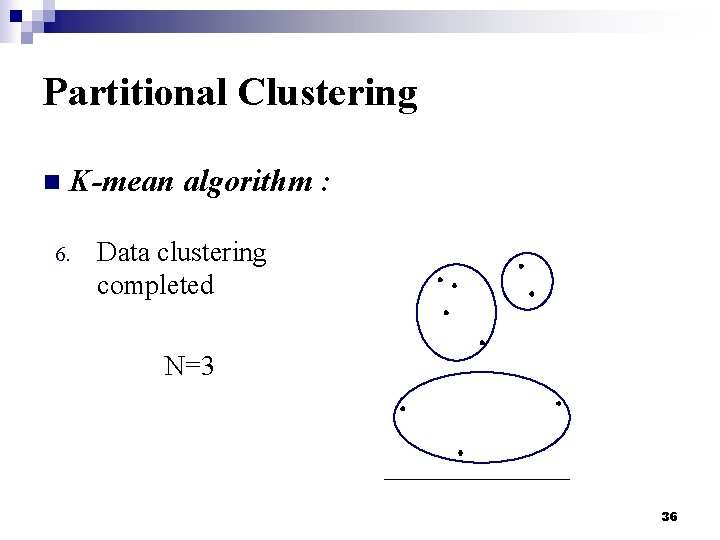

Partitional Clustering: K-means algorithm
6. Data clustering is completed (N = 3).
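For comparison with the steps above, here is a minimal sketch of the standard (Lloyd-style) K-means loop. It differs from the slides in one respect that should be stated plainly: instead of classifying points one at a time, it assigns every point to its nearest centroid in each pass and stops when the centroids no longer move. The function names, the `seed` parameter, the Euclidean distance, and the sample points are assumptions made for this example.

```python
import random
from math import dist


def centroid(cluster):
    """Mean of the points in a cluster, coordinate by coordinate."""
    return tuple(sum(axis) / len(cluster) for axis in zip(*cluster))


def k_means(points, n, seed=0, max_iter=100):
    """Standard (Lloyd-style) K-means on a list of coordinate tuples."""
    rng = random.Random(seed)
    # Step 2: pick N distinct data points as the initial centroids.
    centroids = rng.sample(points, n)
    for _ in range(max_iter):
        # Step 3: assign every point to its nearest centroid (Euclidean).
        clusters = [[] for _ in range(n)]
        for p in points:
            nearest = min(range(n), key=lambda k: dist(p, centroids[k]))
            clusters[nearest].append(p)
        # Step 4: recompute each centroid; keep the old one if a cluster is empty.
        updated = [centroid(c) if c else centroids[k] for k, c in enumerate(clusters)]
        # Step 5: stop once the centroids no longer move.
        if updated == centroids:
            break
        centroids = updated
    return centroids, clusters


# Nine hypothetical 2-D points; N = 3 as in the slides.
points = [(0, 0), (0, 1), (1, 0),
          (5, 5), (5, 6), (6, 5),
          (10, 0), (10, 1), (11, 0)]
centroids, clusters = k_means(points, 3)
```

Because the initial centroids are drawn at random, a different `seed` may lead to a different final partition.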

Partitional Clustering: Advantages
- Fast: since N is decided at the beginning, the computational time is short.
- Simple: since N is decided at the beginning, the result of every simulation test is easy to interpret.

Partitional Clustering: Drawbacks
- N is fixed: what is the best value of N?
- The choice of initial points influences the result.
- Circular shape: the resulting clusters tend to be circular.
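The influence of the initial points can be shown with a tiny example. The sketch below runs a standard K-means loop (an assumption; see the hedges in the comments) from two different pairs of initial centroids on four made-up points and compares the sum of squared errors (SSE) of the two results. `k_means_from` and `sse` are hypothetical helper names.

```python
from math import dist


def k_means_from(points, centroids, max_iter=100):
    """Standard K-means started from the given initial centroids."""
    for _ in range(max_iter):
        # Assign every point to its nearest centroid (Euclidean distance).
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda k: dist(p, centroids[k]))
            clusters[nearest].append(p)
        # Recompute each centroid as the mean of its cluster.
        updated = [
            tuple(sum(axis) / len(c) for axis in zip(*c)) if c else centroids[k]
            for k, c in enumerate(clusters)
        ]
        if updated == centroids:
            break
        centroids = updated
    return centroids, clusters


def sse(centroids, clusters):
    """Sum of squared distances from each point to its cluster centroid."""
    return sum(dist(p, m) ** 2 for m, c in zip(centroids, clusters) for p in c)


# Four hypothetical points at the corners of a wide rectangle.
points = [(0, 0), (0, 1), (4, 0), (4, 1)]

good = k_means_from(points, [(0, 0), (4, 0)])  # one initial point on each short side
poor = k_means_from(points, [(0, 0), (0, 1)])  # both initial points on the same side

sse_good = sse(*good)  # left/right split
sse_poor = sse(*poor)  # top/bottom split, a worse local optimum
```

Both runs converge, but the second one settles on a top/bottom split whose SSE is 16 times larger than the left/right split found by the first. Running K-means from several initializations and keeping the lowest-SSE result is one common way to reduce this sensitivity.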

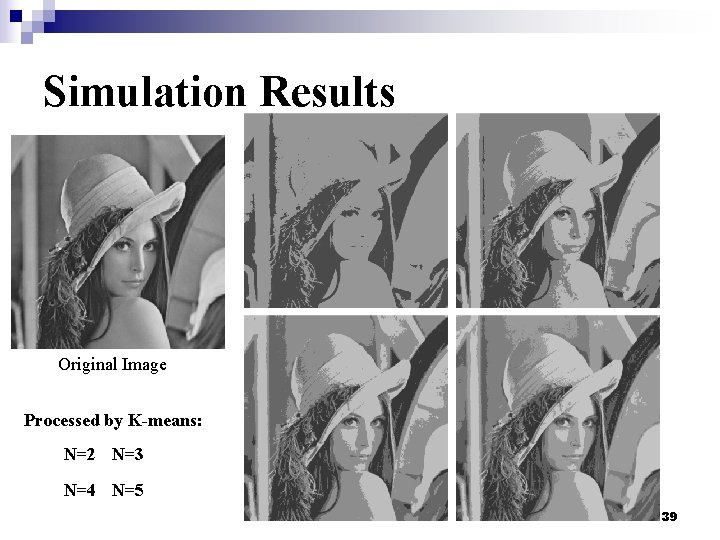

Simulation Results: the original image and the results processed by K-means with N = 2, N = 3, N = 4 and N = 5.
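To connect the clustering steps to image segmentation, the following sketch applies K-means to the gray levels of a tiny made-up image and returns a label map. It is not the code behind the simulation results above: `segment_gray`, the 4x4 image, and the deterministic initialization (evenly spaced intensities instead of random points) are assumptions chosen to keep the example reproducible.

```python
def segment_gray(image, n, max_iter=100):
    """Label each pixel of a grayscale image (list of rows) with a cluster index."""
    pixels = [v for row in image for v in row]
    lo, hi = min(pixels), max(pixels)
    # Assumption: start from N intensities spread evenly between min and max
    # (a deterministic stand-in for the random initial choice).
    centroids = [lo + (hi - lo) * (k + 0.5) / n for k in range(n)]
    for _ in range(max_iter):
        # Assign each pixel to the centroid with the closest intensity.
        labels = [min(range(n), key=lambda k: abs(v - centroids[k])) for v in pixels]
        # Recompute each centroid as the mean intensity of its pixels.
        updated = []
        for k in range(n):
            members = [v for v, lab in zip(pixels, labels) if lab == k]
            updated.append(sum(members) / len(members) if members else centroids[k])
        if updated == centroids:
            break
        centroids = updated
    width = len(image[0])
    label_map = [labels[r * width:(r + 1) * width] for r in range(len(image))]
    return label_map, centroids


# A tiny hypothetical 4x4 grayscale image: dark background, bright square.
image = [
    [10, 12, 11, 10],
    [12, 200, 210, 11],
    [10, 205, 198, 12],
    [11, 10, 12, 10],
]
label_map, centroids = segment_gray(image, n=2)
```

With N = 2 the bright 2x2 square is separated from the dark background. Clustering on intensity alone ignores pixel position, so spatially separate objects with similar gray levels end up in the same cluster.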

Comparison
- Hierarchical clustering:
  - Reliable
  - But time-consuming
  - Cannot be applied to a large database
- Partitional clustering:
  - Fast
  - But the result depends on the initial points (the initialization problem)
  - And the clusters tend to be circular (the circular shape problem)

Conclusion and Future Work
- The K-means algorithm has been widely used in the image segmentation field because of its fast processing speed.
- How do we choose the best N? Can we use the PSO algorithm to improve it?
- Other ideas for image segmentation:
  - Segment the image by its graphical features

References
- R. C. Gonzalez and R. E. Woods, Digital Image Processing, 2nd Edition, Prentice Hall, New Jersey, 2002.
- M. Petrou and P. Bosdogianni, Image Processing: The Fundamentals, Wiley, UK, 2004.
- W. K. Pratt, Digital Image Processing, 4th Edition, John Wiley & Sons, Inc., Los Altos, California, 2007.
- S. C. Satapathy, J. V. R. Murthy, R. Prasada, B. N. V. S. S, R. Prasad, P. V. G. D., "A Comparative Analysis of Unsupervised K-Means, PSO and Self-Organizing PSO for Image Clustering", International Conference on Computational Intelligence and Multimedia Applications, 2007, Volume 2, 13-15 Dec. 2007, pp. 229-237.
- J. Tilton, "Analysis of Hierarchically Related Image Segmentations", presented at the IEEE Workshop on Advances in Techniques for Analysis of Remotely Sensed Data, Greenbelt, MD, 2003.

Thank you
