A Brief Introduction to Graphical Models CSCE 883

A Brief Introduction to Graphical Models CSCE 883 Machine Learning University of South Carolina

Outline n n n Application Definition Representation Inference and Learning Conclusion

Application n n Probabilistic expert system for medical diagnosis Widely adopted by Microsoft n n n e. g. the Answer Wizard of Office 95 the Office Assistant of Office 97 over 30 technical support troubleshooters

Application n n n Machine Learning Statistics Patten Recognition Natural Language Processing Computer Vision Image Processing Bio-informatics …….

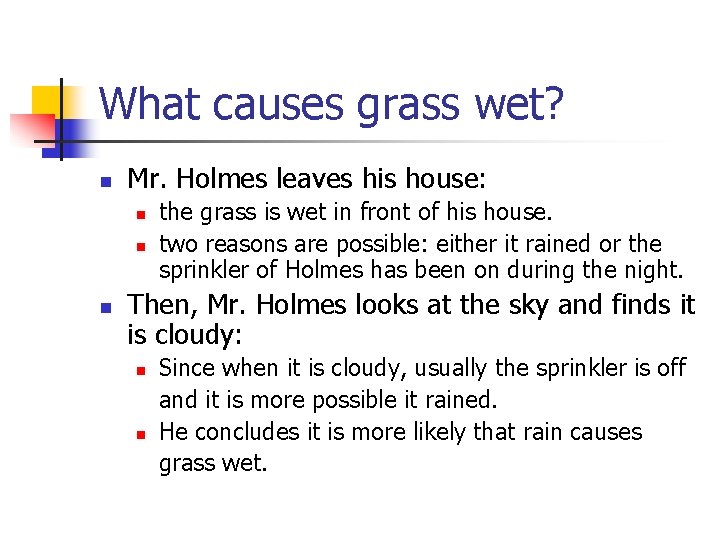

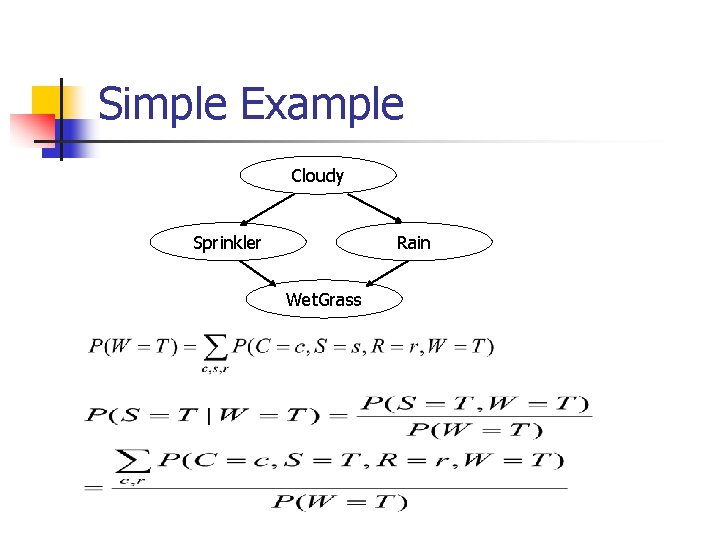

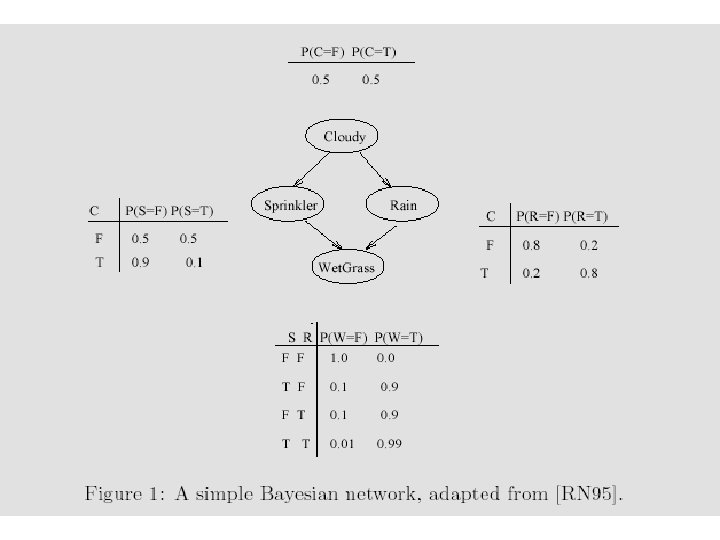

What causes grass wet? n Mr. Holmes leaves his house: n n n the grass is wet in front of his house. two reasons are possible: either it rained or the sprinkler of Holmes has been on during the night. Then, Mr. Holmes looks at the sky and finds it is cloudy: n n Since when it is cloudy, usually the sprinkler is off and it is more possible it rained. He concludes it is more likely that rain causes grass wet.

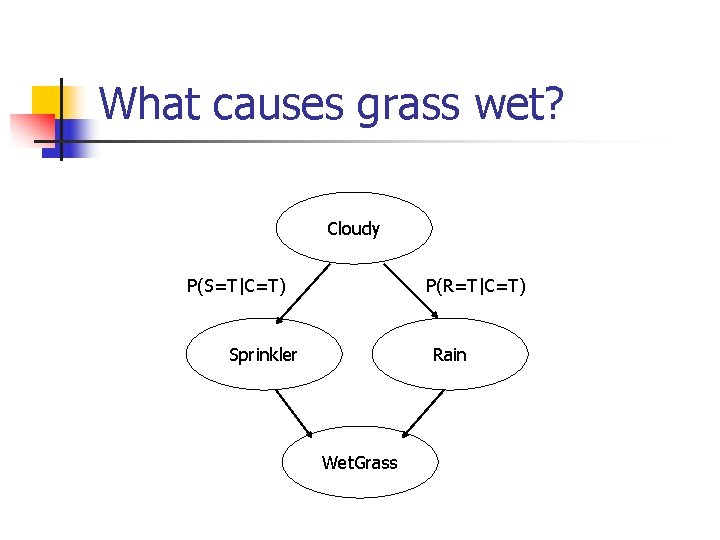

What causes grass wet? Cloudy P(S=T|C=T) P(R=T|C=T) Sprinkler Rain Wet. Grass

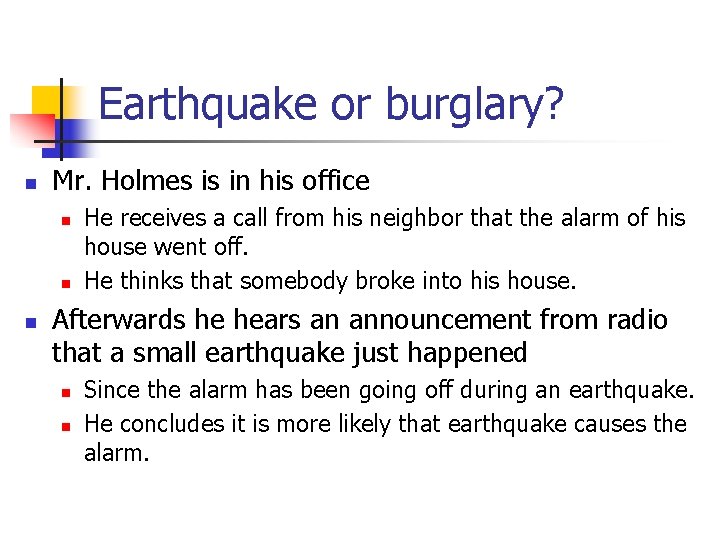

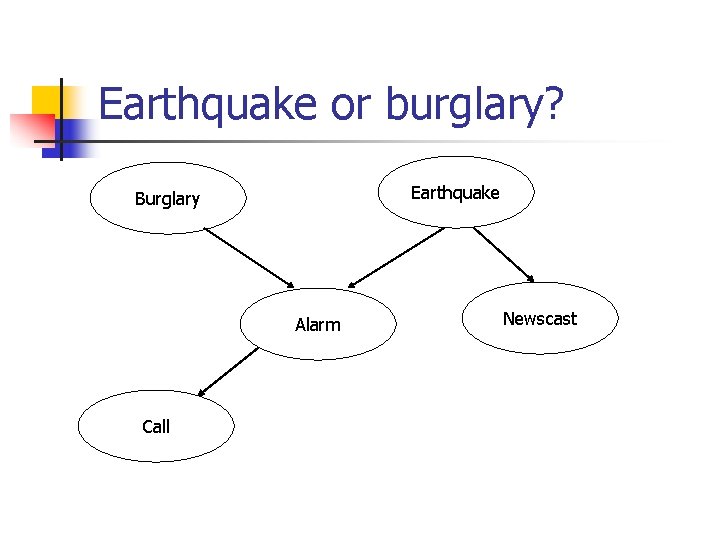

Earthquake or burglary? n Mr. Holmes is in his office n n n He receives a call from his neighbor that the alarm of his house went off. He thinks that somebody broke into his house. Afterwards he hears an announcement from radio that a small earthquake just happened n n Since the alarm has been going off during an earthquake. He concludes it is more likely that earthquake causes the alarm.

Earthquake or burglary? Earthquake Burglary Alarm Call Newscast

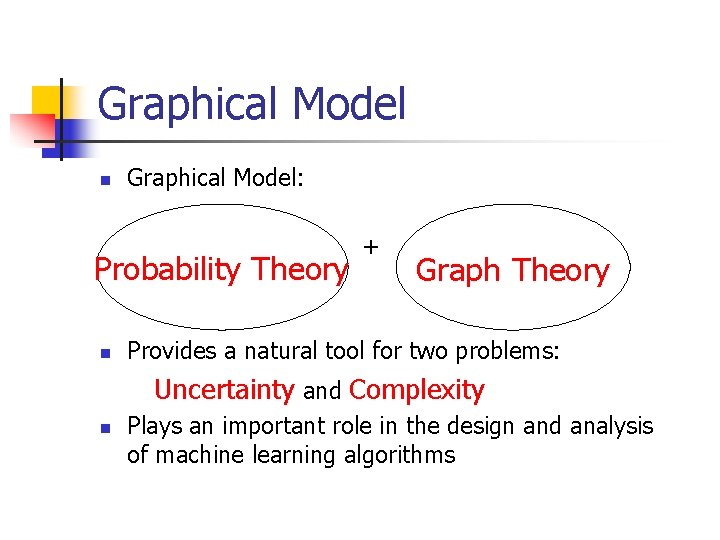

Graphical Model n Graphical Model: Probability Theory n + Graph Theory Provides a natural tool for two problems: Uncertainty and Complexity n Plays an important role in the design and analysis of machine learning algorithms

Graphical Model n Modularity: a complex system is built by combining simpler parts. n Probability theory: ensures consistency, provides interface models to data. n Graph theory: intuitively appealing interface for humans, efficient general purpose algorithms.

Graphical Model n n Many of the classical multivariate probabilistic systems are special cases of the general graphical model formalism: -Mixture models -Factor analysis -Hidden Markov Models -Kalman filters The graphical model framework provides a way to view all of these systems as instances of common underlying formalism.

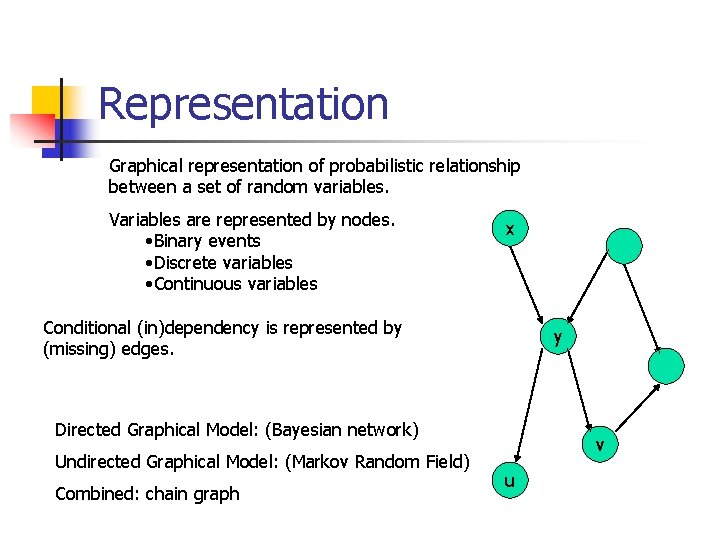

Representation Graphical representation of probabilistic relationship between a set of random variables. Variables are represented by nodes. • Binary events • Discrete variables • Continuous variables x Conditional (in)dependency is represented by (missing) edges. y Directed Graphical Model: (Bayesian network) Undirected Graphical Model: (Markov Random Field) Combined: chain graph v u

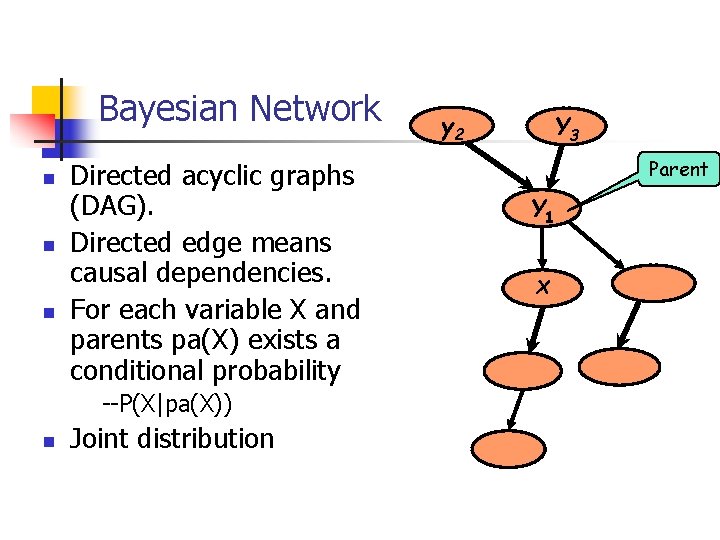

Bayesian Network n n n Directed acyclic graphs (DAG). Directed edge means causal dependencies. For each variable X and parents pa(X) exists a conditional probability --P(X|pa(X)) n Joint distribution y 2 Y 3 Parent Y 1 X

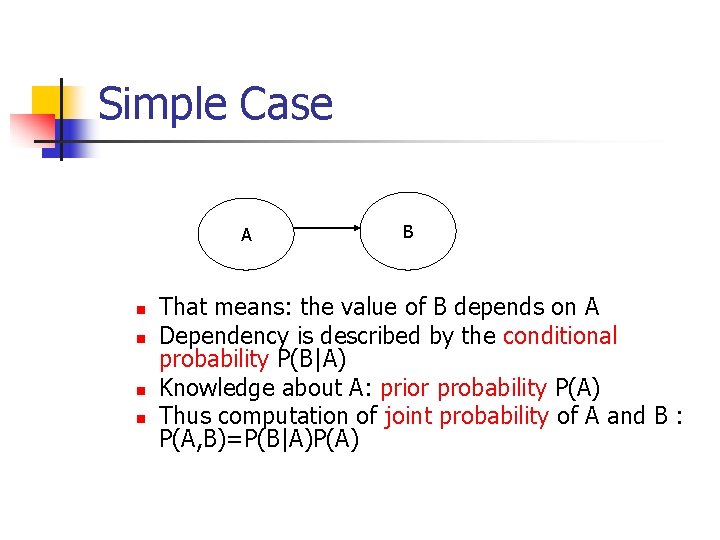

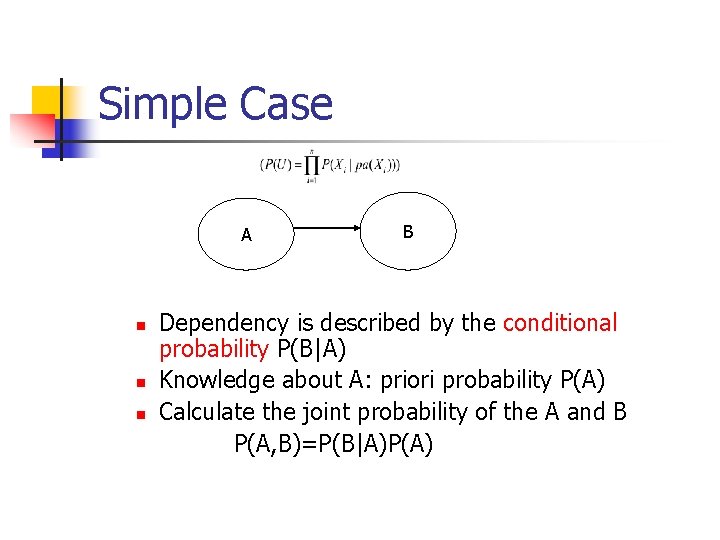

Simple Case A n n B That means: the value of B depends on A Dependency is described by the conditional probability P(B|A) Knowledge about A: prior probability P(A) Thus computation of joint probability of A and B : P(A, B)=P(B|A)P(A)

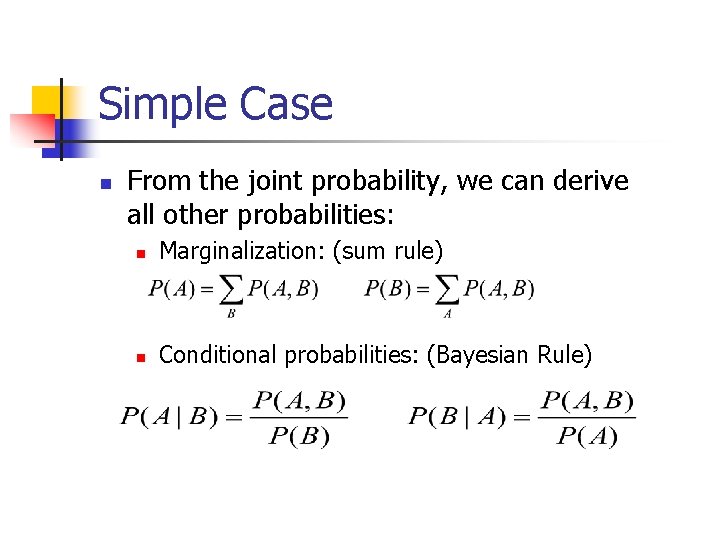

Simple Case n From the joint probability, we can derive all other probabilities: n Marginalization: (sum rule) n Conditional probabilities: (Bayesian Rule)

Simple Example Cloudy Sprinkler Rain Wet. Grass

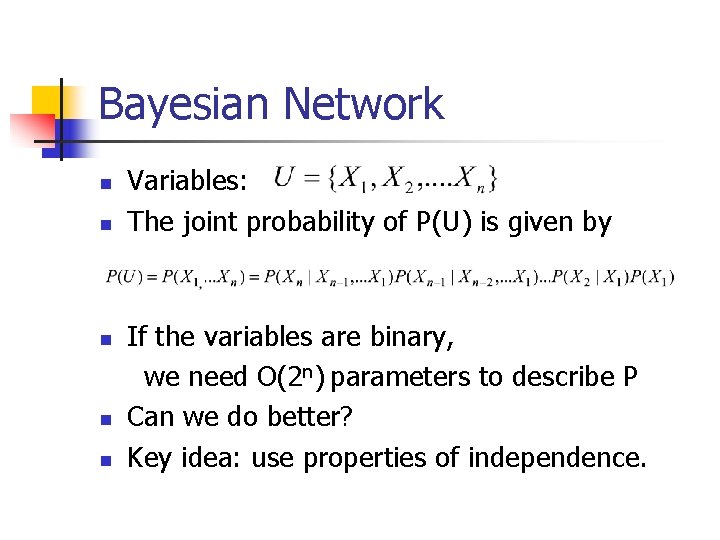

Bayesian Network n n n Variables: The joint probability of P(U) is given by If the variables are binary, we need O(2 n) parameters to describe P Can we do better? Key idea: use properties of independence.

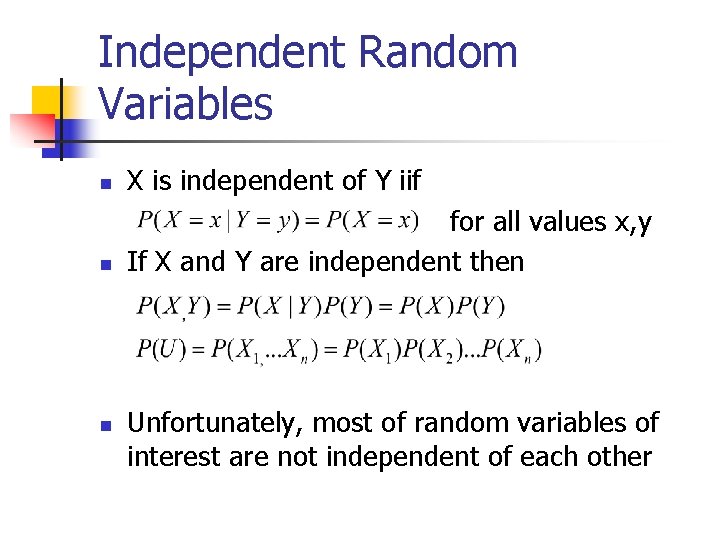

Independent Random Variables n X is independent of Y iif n for all values x, y If X and Y are independent then n Unfortunately, most of random variables of interest are not independent of each other

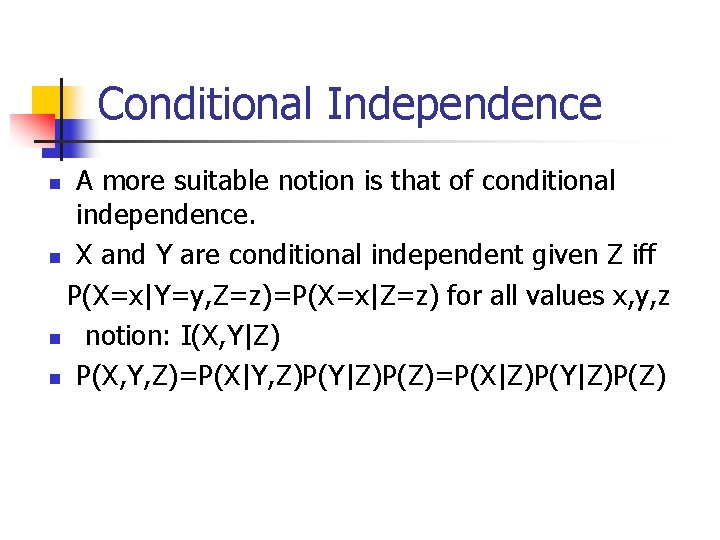

Conditional Independence A more suitable notion is that of conditional independence. n X and Y are conditional independent given Z iff P(X=x|Y=y, Z=z)=P(X=x|Z=z) for all values x, y, z n notion: I(X, Y|Z) n P(X, Y, Z)=P(X|Y, Z)P(Y|Z)P(Z)=P(X|Z)P(Y|Z)P(Z) n

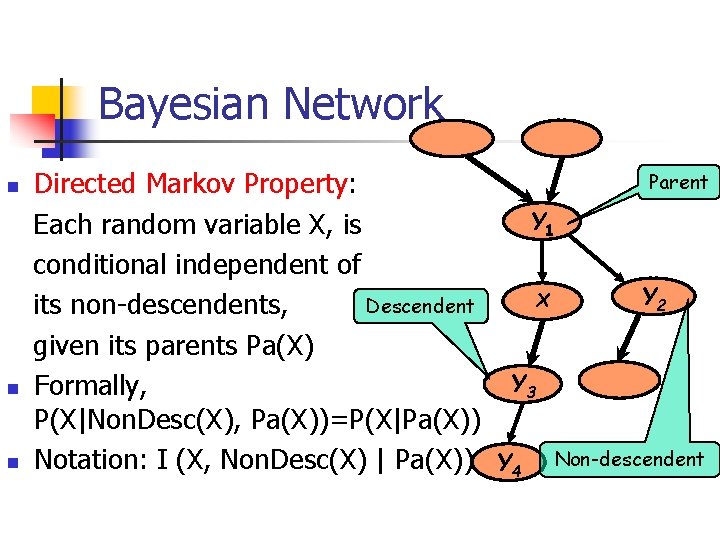

Bayesian Network n n n Parent Directed Markov Property: Y 1 Each random variable X, is conditional independent of Y 2 X Descendent its non-descendents, given its parents Pa(X) Y 3 Formally, P(X|Non. Desc(X), Pa(X))=P(X|Pa(X)) Notation: I (X, Non. Desc(X) | Pa(X)) Y 4 Non-descendent

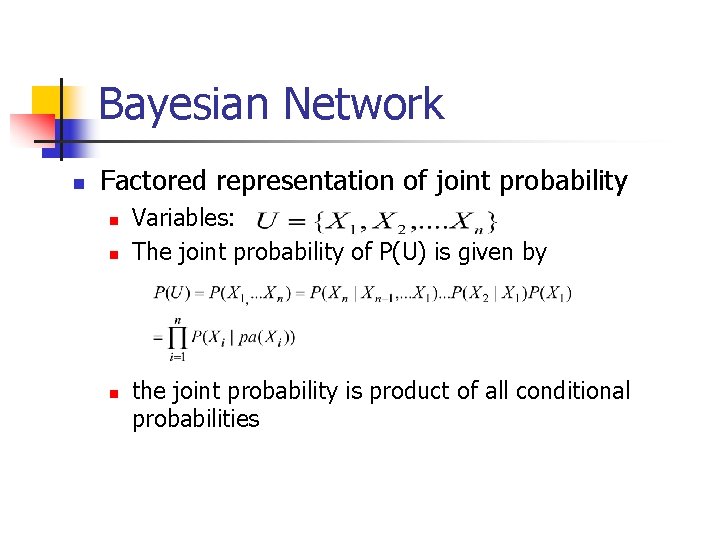

Bayesian Network n Factored representation of joint probability n n n Variables: The joint probability of P(U) is given by the joint probability is product of all conditional probabilities

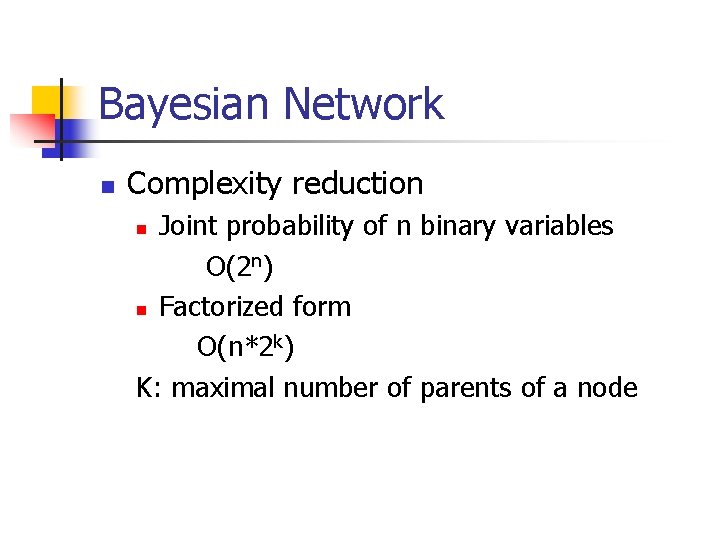

Bayesian Network n Complexity reduction Joint probability of n binary variables O(2 n) n Factorized form O(n*2 k) K: maximal number of parents of a node n

Simple Case A n n n B Dependency is described by the conditional probability P(B|A) Knowledge about A: priori probability P(A) Calculate the joint probability of the A and B P(A, B)=P(B|A)P(A)

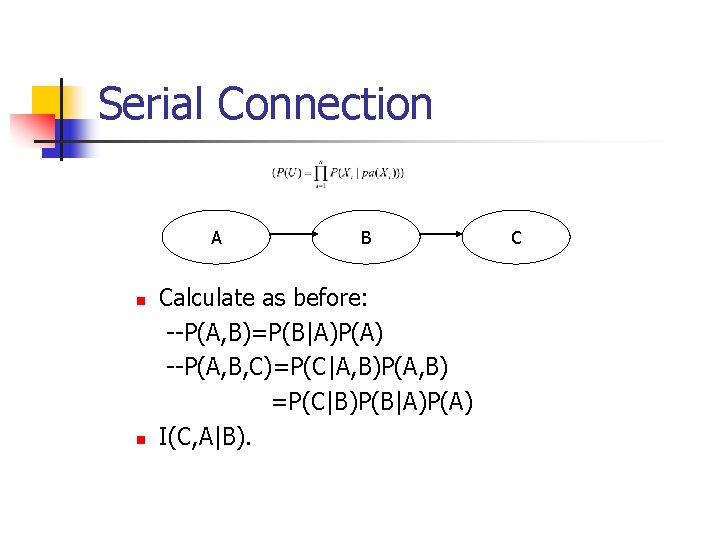

Serial Connection A n n B Calculate as before: --P(A, B)=P(B|A)P(A) --P(A, B, C)=P(C|A, B)P(A, B) =P(C|B)P(B|A)P(A) I(C, A|B). C

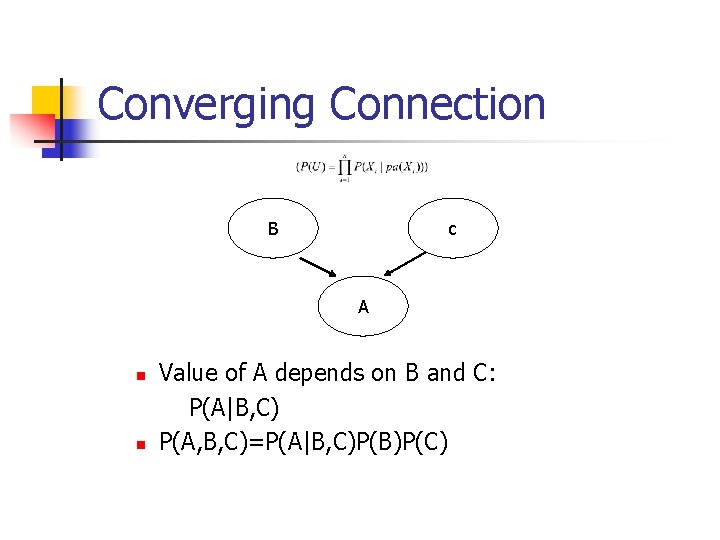

Converging Connection B c A n n Value of A depends on B and C: P(A|B, C) P(A, B, C)=P(A|B, C)P(B)P(C)

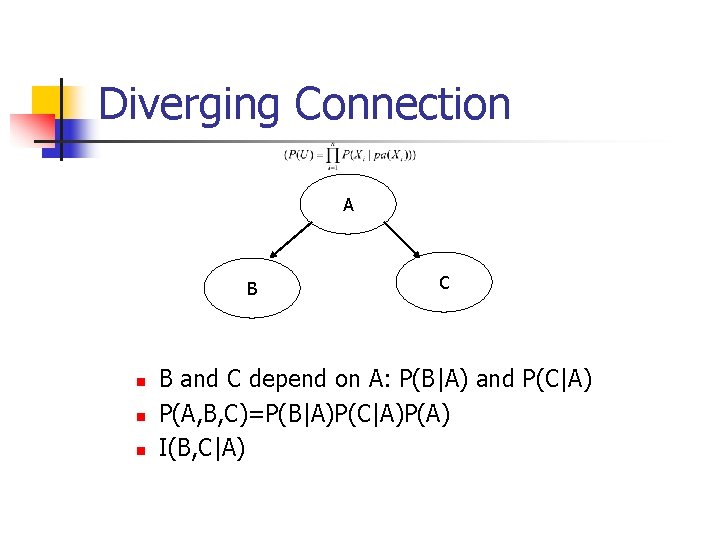

Diverging Connection A B n n n C B and C depend on A: P(B|A) and P(C|A) P(A, B, C)=P(B|A)P(C|A)P(A) I(B, C|A)

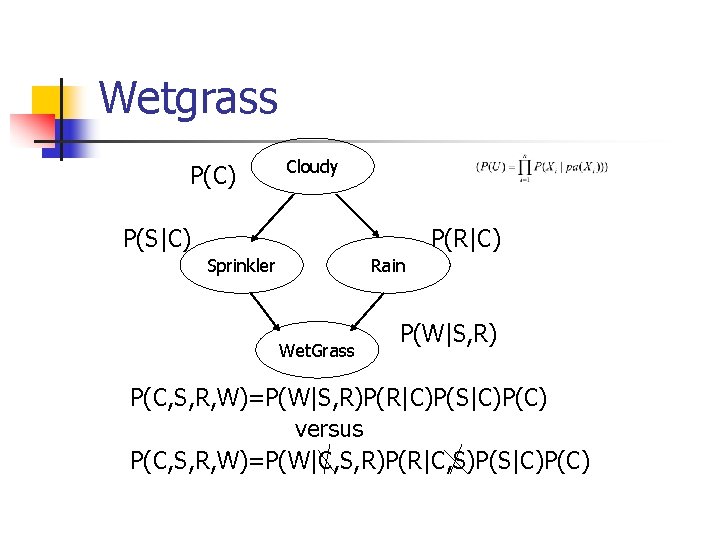

Wetgrass P(C) Cloudy P(S|C) P(R|C) Sprinkler Rain Wet. Grass P(W|S, R) P(C, S, R, W)=P(W|S, R)P(R|C)P(S|C)P(C) versus P(C, S, R, W)=P(W|C, S, R)P(R|C, S)P(S|C)P(C)

Markov Random Fields n n n Links represent symmetrical probabilistic dependencies Direct link between A and B: conditional dependency. Weakness of MRF: inability to represent induced dependencies.

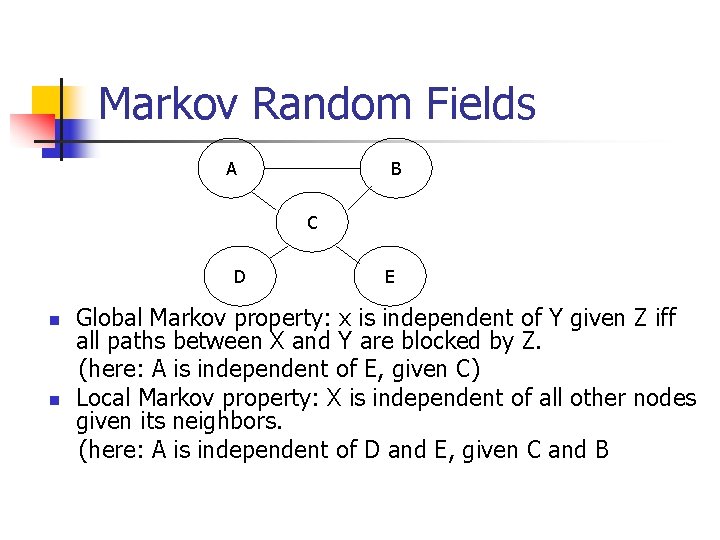

Markov Random Fields A B C D n n E Global Markov property: x is independent of Y given Z iff all paths between X and Y are blocked by Z. (here: A is independent of E, given C) Local Markov property: X is independent of all other nodes given its neighbors. (here: A is independent of D and E, given C and B

Inference n n Computation of the conditional probability distribution of one set of nodes, given a model and another set of nodes. Bottom-up n n Observation (leaves): e. g. wet grass The probabilities of the reasons (rain, sprinkler) can be calculated accordingly “diagnosis” from effects to reasons Top-down n n Knowledge (e. g. “it is cloudy”) influences the probability for “wet grass” Predict the effects

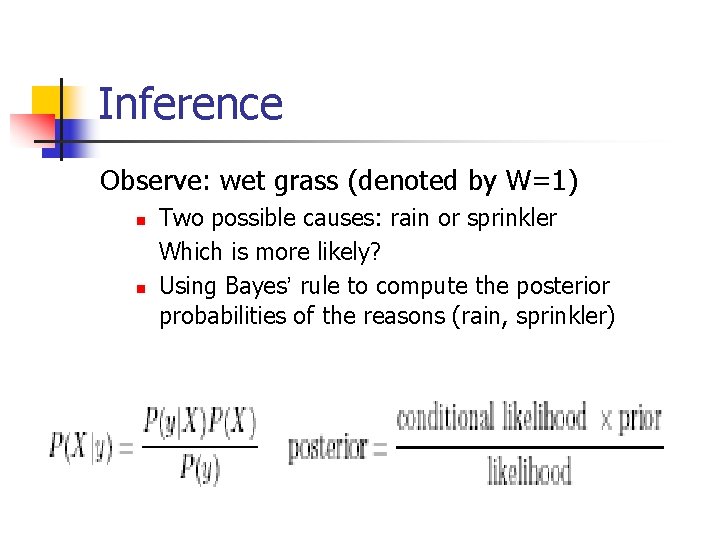

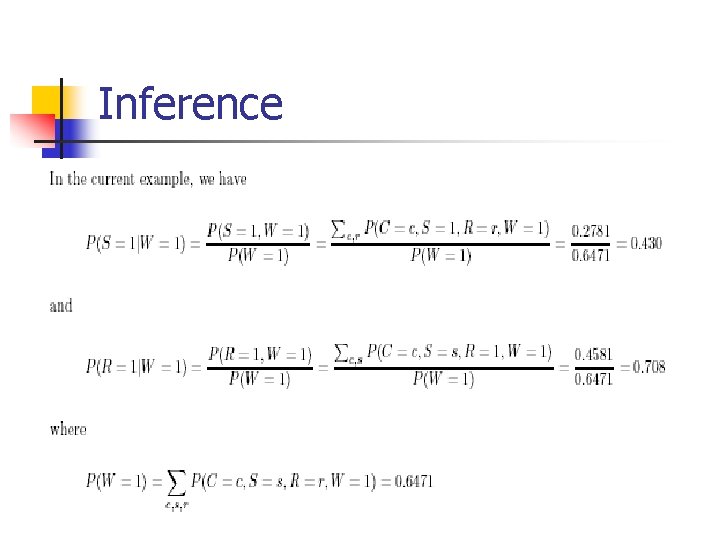

Inference Observe: wet grass (denoted by W=1) n n Two possible causes: rain or sprinkler Which is more likely? Using Bayes’ rule to compute the posterior probabilities of the reasons (rain, sprinkler)

Inference

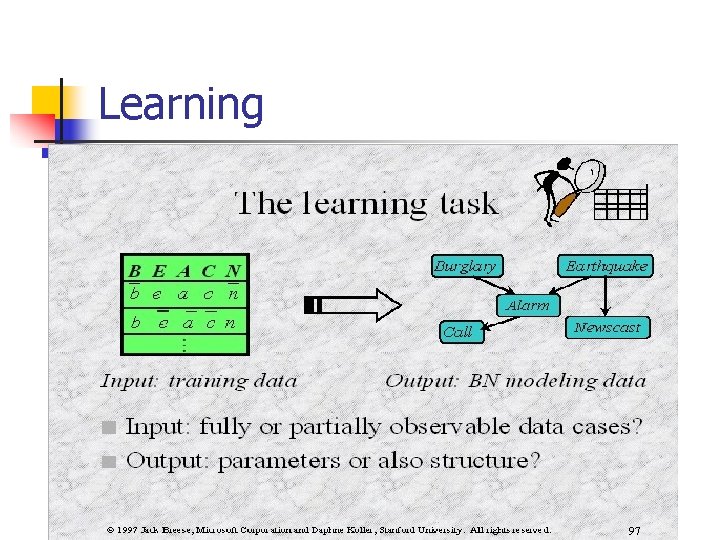

Learning

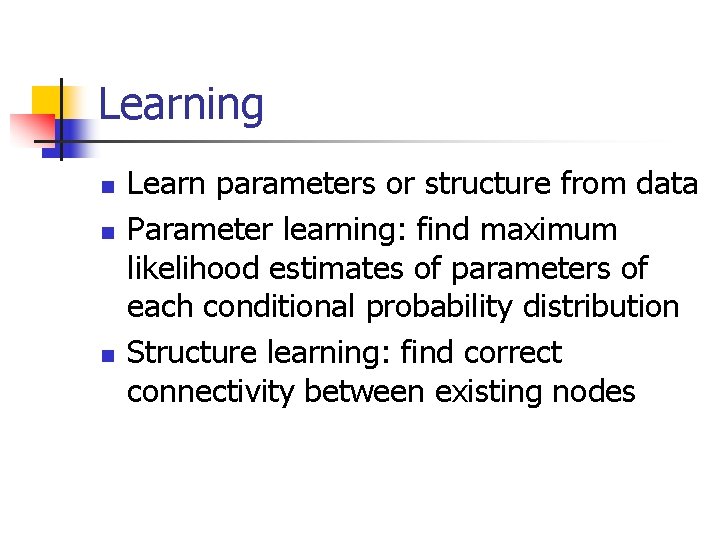

Learning n n n Learn parameters or structure from data Parameter learning: find maximum likelihood estimates of parameters of each conditional probability distribution Structure learning: find correct connectivity between existing nodes

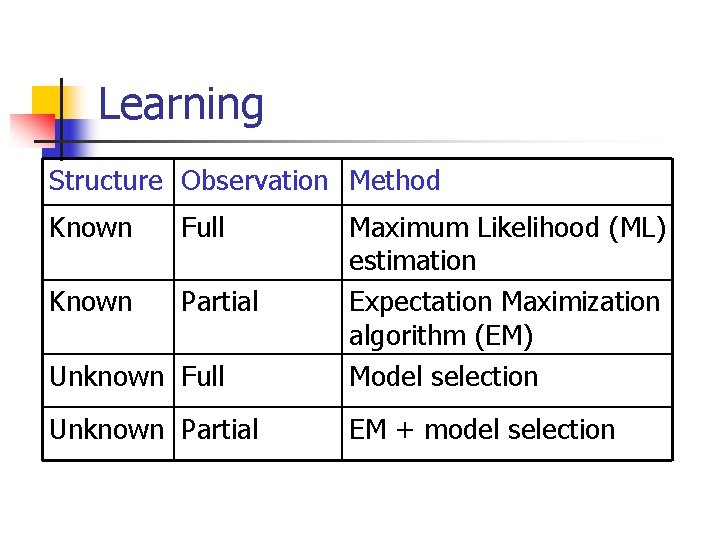

Learning Structure Observation Method Known Full Unknown Full Maximum Likelihood (ML) estimation Expectation Maximization algorithm (EM) Model selection Known Partial Unknown Partial EM + model selection

Model Selection Method - Select a ‘good’ model from all possible models and use it as if it were the correct model - Having defined a scoring function, a search algorithm is then used to find a network structure that receives the highest score fitting the prior knowledge and data - Unfortunately, the number of DAG’s on n variables is super-exponential in n. The usual approach is therefore to use local search algorithms (e. g. , greedy hill climbing) to search through the space of graphs.

The Bayes Net Toolbox for Matlab n n n What is BNT? Why yet another BN toolbox? Why Matlab? An overview of BNT’s design How to use BNT Other GM projects

What is BNT? n n BNT is an open-source collection of matlab functions for inference and learning of (directed) graphical models Started in Summer 1997 (DEC CRL), development continued while at UCB Over 100, 000 hits and about 30, 000 downloads since May 2000 About 43, 000 lines of code (of which 8, 000 are comments)

Why yet another BN toolbox? n In 1997, there were very few BN programs, and all failed to satisfy the following desiderata: n n n Must support real-valued (vector) data Must support learning (params and struct) Must support time series Must support exact and approximate inference Must separate API from UI Must support MRFs as well as BNs Must be possible to add new models and algorithms Preferably free Preferably open-source Preferably easycriteria to read/ modify BNT meets all these except for the last Preferably fast

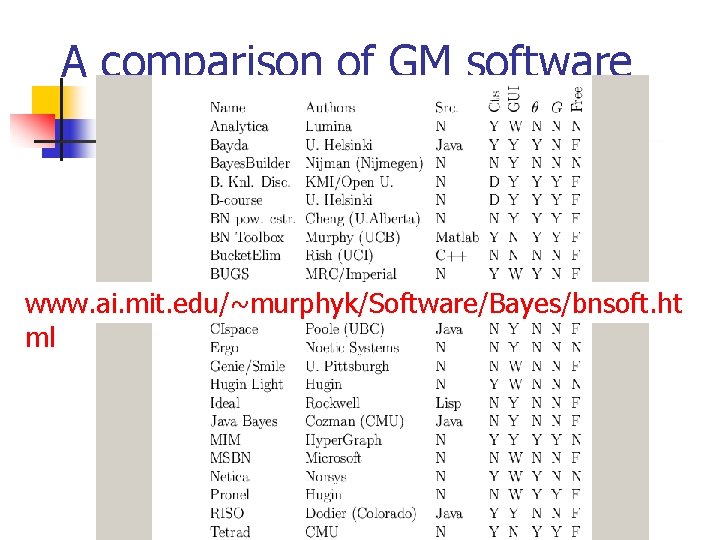

A comparison of GM software www. ai. mit. edu/~murphyk/Software/Bayes/bnsoft. ht ml

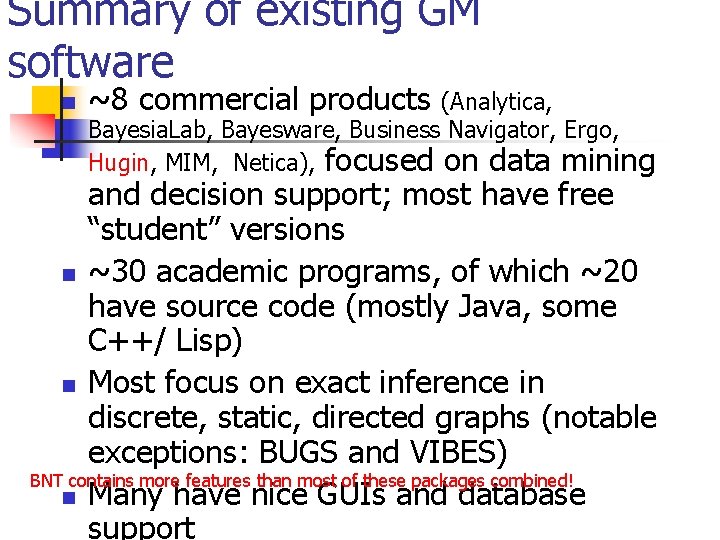

Summary of existing GM software n ~8 commercial products (Analytica, Bayesia. Lab, Bayesware, Business Navigator, Ergo, Hugin, MIM, Netica), focused on data mining and decision support; most have free “student” versions n ~30 academic programs, of which ~20 have source code (mostly Java, some C++/ Lisp) n Most focus on exact inference in discrete, static, directed graphs (notable exceptions: BUGS and VIBES) BNT contains more features than most of these packages combined! n Many have nice GUIs and database support

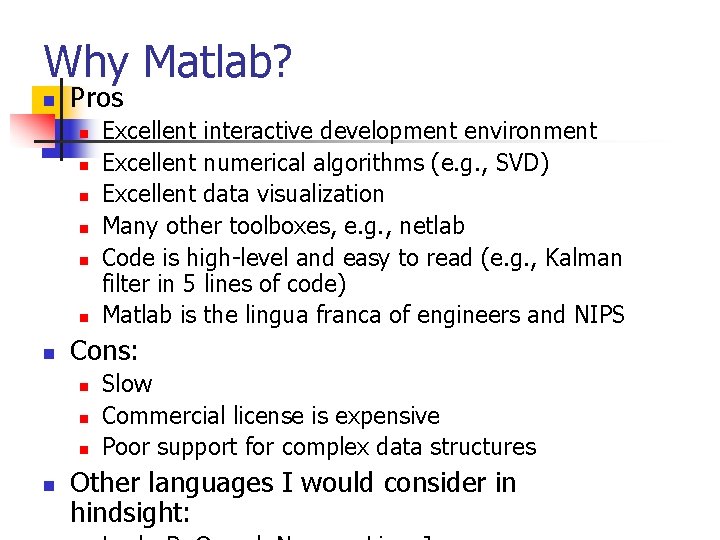

Why Matlab? n Pros n n n n Cons: n n Excellent interactive development environment Excellent numerical algorithms (e. g. , SVD) Excellent data visualization Many other toolboxes, e. g. , netlab Code is high-level and easy to read (e. g. , Kalman filter in 5 lines of code) Matlab is the lingua franca of engineers and NIPS Slow Commercial license is expensive Poor support for complex data structures Other languages I would consider in hindsight:

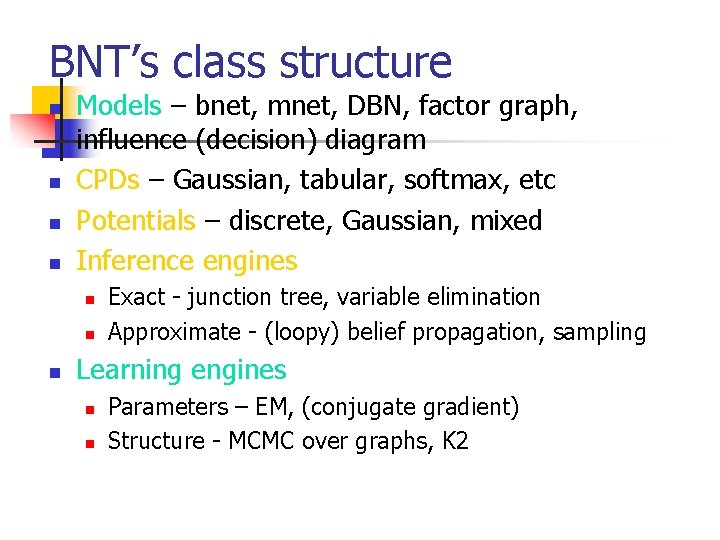

BNT’s class structure n n Models – bnet, mnet, DBN, factor graph, influence (decision) diagram CPDs – Gaussian, tabular, softmax, etc Potentials – discrete, Gaussian, mixed Inference engines n n n Exact - junction tree, variable elimination Approximate - (loopy) belief propagation, sampling Learning engines n n Parameters – EM, (conjugate gradient) Structure - MCMC over graphs, K 2

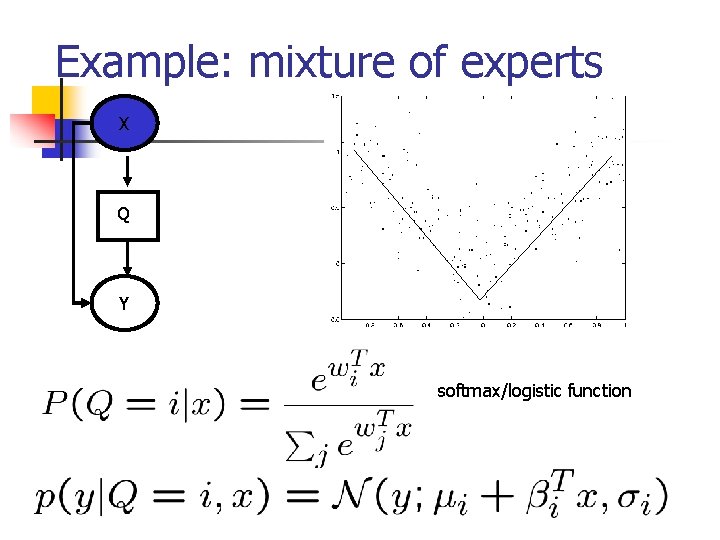

Example: mixture of experts X Q Y softmax/logistic function

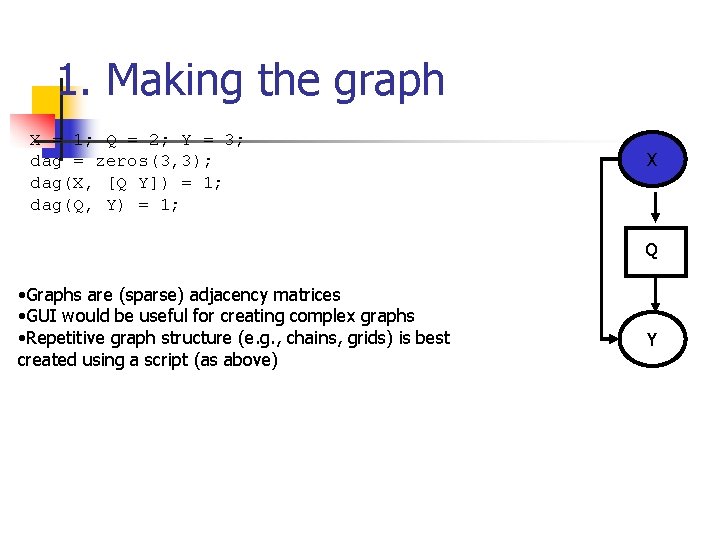

1. Making the graph X = 1; Q = 2; Y = 3; dag = zeros(3, 3); dag(X, [Q Y]) = 1; dag(Q, Y) = 1; X Q • Graphs are (sparse) adjacency matrices • GUI would be useful for creating complex graphs • Repetitive graph structure (e. g. , chains, grids) is best created using a script (as above) Y

![2. Making the model node_sizes = [1 2 1]; dnodes = [2]; bnet = 2. Making the model node_sizes = [1 2 1]; dnodes = [2]; bnet =](http://slidetodoc.com/presentation_image_h/a247b2e21d5471b486ebbb2bad61e108/image-47.jpg)

2. Making the model node_sizes = [1 2 1]; dnodes = [2]; bnet = mk_bnet(dag, node_sizes, … ‘discrete’, dnodes); • X is always observed input, hence only one effective value • Q is a hidden binary node • Y is a hidden scalar node • bnet is a struct, but should be an object • mk_bnet has many optional arguments, passed as string/value pairs X Q Y

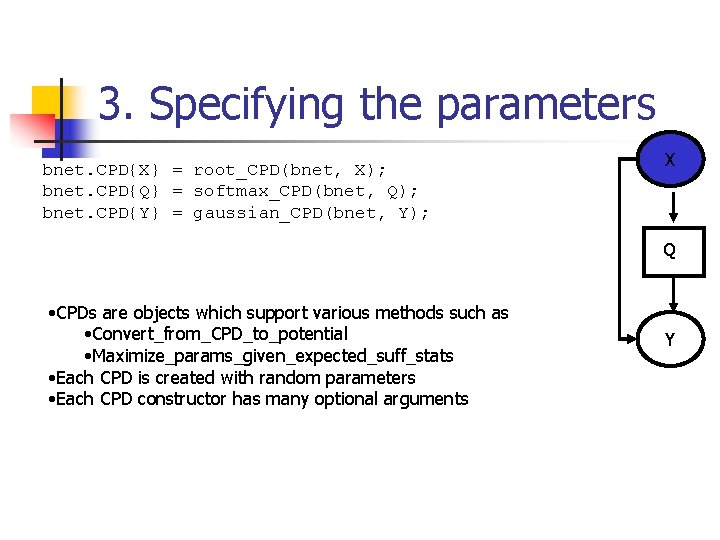

3. Specifying the parameters bnet. CPD{X} = root_CPD(bnet, X); bnet. CPD{Q} = softmax_CPD(bnet, Q); bnet. CPD{Y} = gaussian_CPD(bnet, Y); X Q • CPDs are objects which support various methods such as • Convert_from_CPD_to_potential • Maximize_params_given_expected_suff_stats • Each CPD is created with random parameters • Each CPD constructor has many optional arguments Y

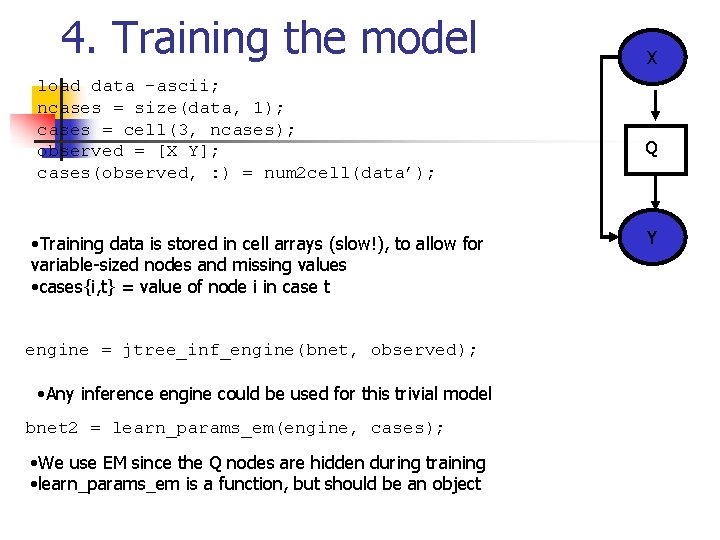

4. Training the model load data –ascii; ncases = size(data, 1); cases = cell(3, ncases); observed = [X Y]; cases(observed, : ) = num 2 cell(data’); • Training data is stored in cell arrays (slow!), to allow for variable-sized nodes and missing values • cases{i, t} = value of node i in case t engine = jtree_inf_engine(bnet, observed); • Any inference engine could be used for this trivial model bnet 2 = learn_params_em(engine, cases); • We use EM since the Q nodes are hidden during training • learn_params_em is a function, but should be an object X Q Y

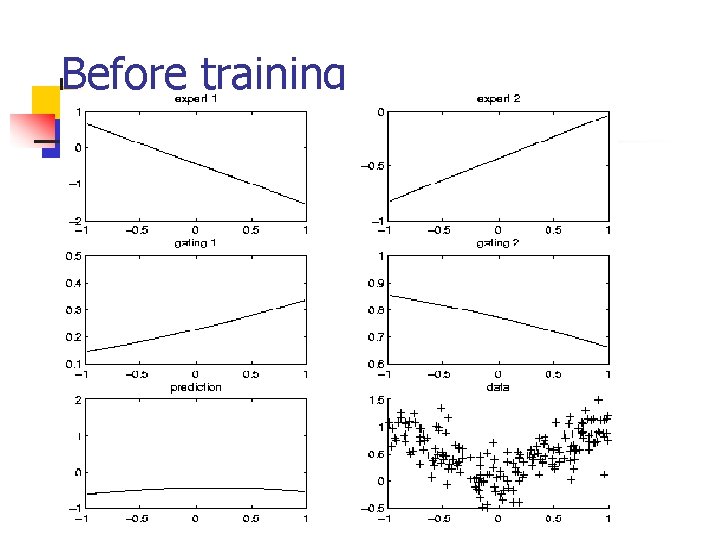

Before training

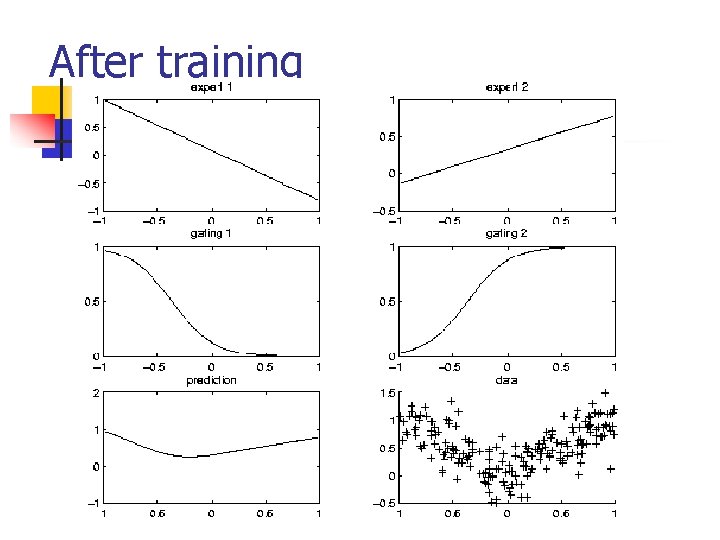

After training

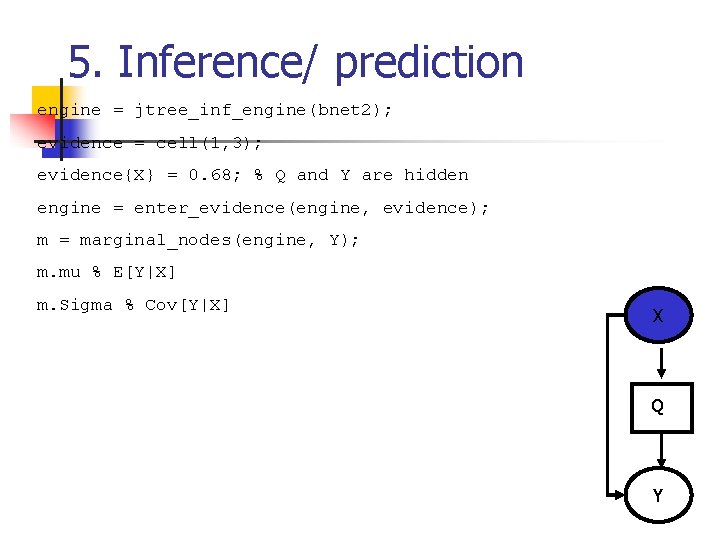

5. Inference/ prediction engine = jtree_inf_engine(bnet 2); evidence = cell(1, 3); evidence{X} = 0. 68; % Q and Y are hidden engine = enter_evidence(engine, evidence); m = marginal_nodes(engine, Y); m. mu % E[Y|X] m. Sigma % Cov[Y|X] X Q Y

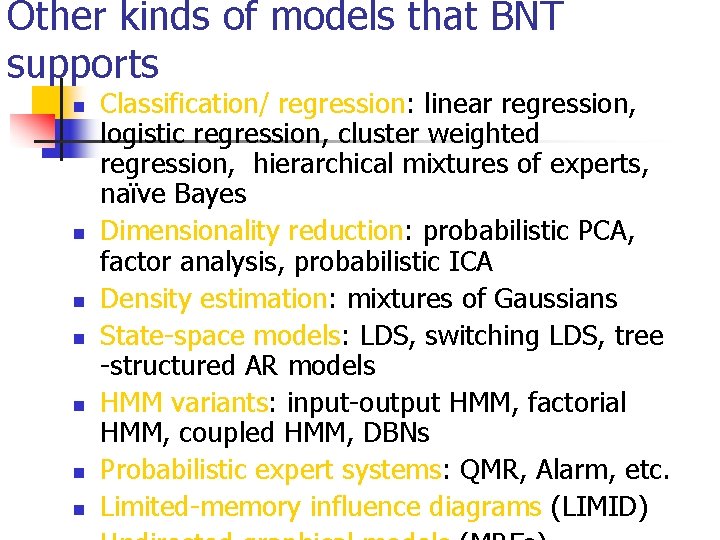

Other kinds of models that BNT supports n n n n Classification/ regression: linear regression, logistic regression, cluster weighted regression, hierarchical mixtures of experts, naïve Bayes Dimensionality reduction: probabilistic PCA, factor analysis, probabilistic ICA Density estimation: mixtures of Gaussians State-space models: LDS, switching LDS, tree -structured AR models HMM variants: input-output HMM, factorial HMM, coupled HMM, DBNs Probabilistic expert systems: QMR, Alarm, etc. Limited-memory influence diagrams (LIMID)

Summary of BNT n n Provides many different kinds of models/ CPDs – lego brick philosophy Provides many inference algorithms, with different speed/ accuracy/ generality tradeoffs (to be chosen by user) Provides several learning algorithms (parameters and structure) Source code is easy to read and extend

What is wrong with BNT? n n n n n It is slow It has little support for undirected models Models are not bona fide objects Learning engines are not objects It does not support online inference/learning It does not support Bayesian estimation It has no GUI It has no file parser It is more complex than necessary

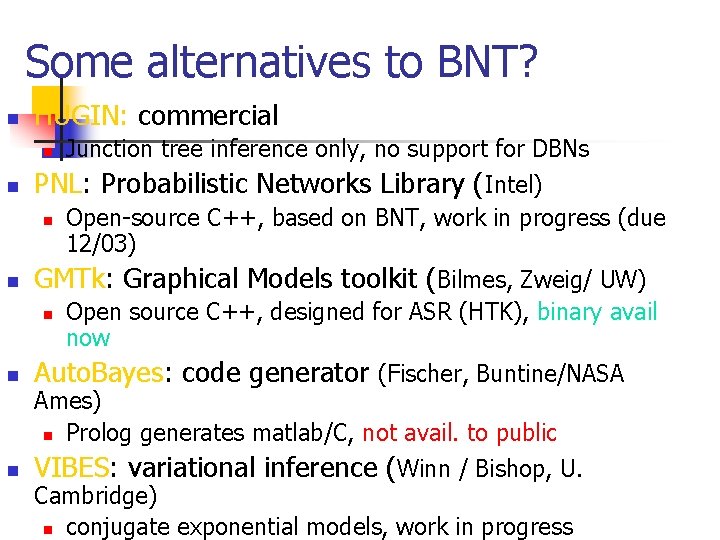

Some alternatives to BNT? n HUGIN: commercial n n PNL: Probabilistic Networks Library (Intel) n n Junction tree inference only, no support for DBNs Open-source C++, based on BNT, work in progress (due 12/03) GMTk: Graphical Models toolkit (Bilmes, Zweig/ UW) n Open source C++, designed for ASR (HTK), binary avail now n Auto. Bayes: code generator (Fischer, Buntine/NASA n VIBES: variational inference (Winn / Bishop, U. Ames) n Prolog generates matlab/C, not avail. to public Cambridge) n conjugate exponential models, work in progress

Conclusion n n A graphical representation of the probabilistic structure of a set of random variables, along with functions that can be used to derive the joint probability distribution. Intuitive interface for modeling. Modular: Useful tool for managing complexity. Common formalism for many models.

- Slides: 57