Chapter 21 IA-64 Architecture (Think Intel Itanium), also known as EPIC (Explicitly Parallel Instruction Computing)

Superpipelined & Superscalar Machines

Superpipelined machine:

- A superpipelined machine overlaps pipeline stages.
  - It relies on a stage being able to begin its operation before the previous one is complete.

Superscalar machine:

- A superscalar machine employs multiple independent pipelines to execute multiple independent instructions in parallel.
  - Particularly common instructions (arithmetic, load/store, conditional branch) can be executed independently.

Why a New Architecture Direction?

Processor designers' obvious choices for using the increasing number of transistors on a chip and the extra speed, each with its drawback:

- Bigger caches: diminishing returns
- Increased degree of superscaling by adding more execution units: complexity wall (more logic, need for improved branch prediction, more renaming registers, more complicated dependencies)
- Multiple processors: challenge to use them effectively in general computing
- Longer pipelines: greater penalty for misprediction

IA-64: Background

- Explicitly Parallel Instruction Computing (EPIC), jointly developed by Intel and Hewlett-Packard (HP)
- New 64-bit architecture
  - Not an extension of the x86 series
  - Not an adaptation of the HP 64-bit RISC architecture
- To exploit increasing chip transistors and increasing speeds
- Utilizes systematic parallelism
- Departure from the superscalar trend

Note: Became the architecture of the Intel Itanium.

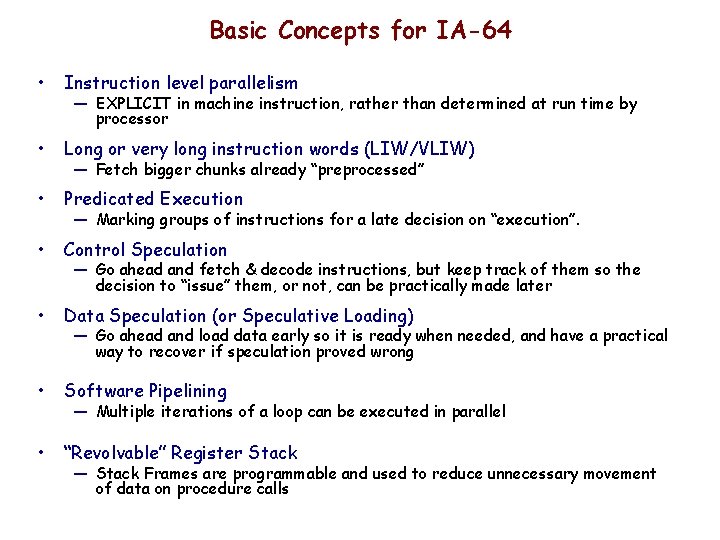

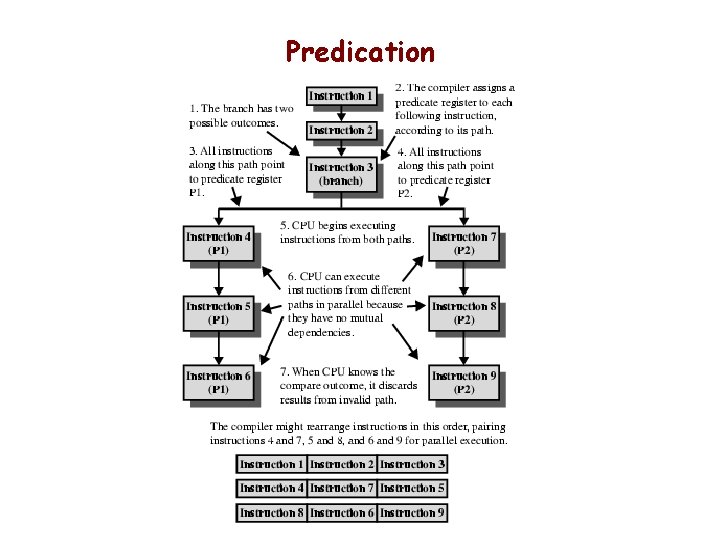

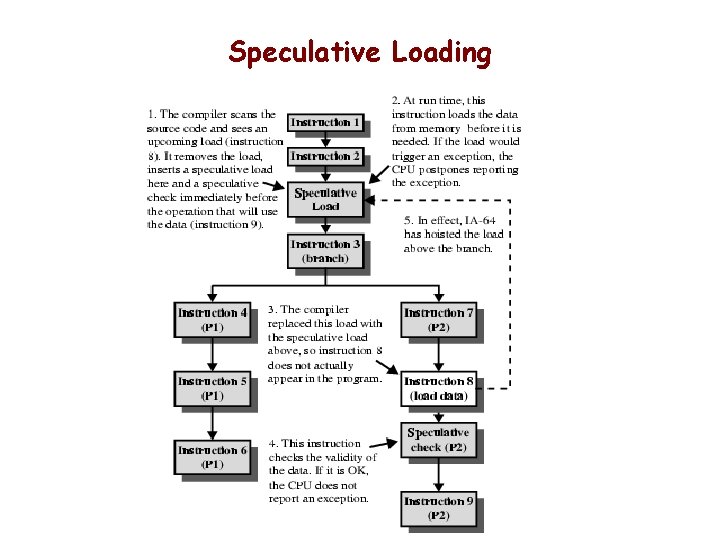

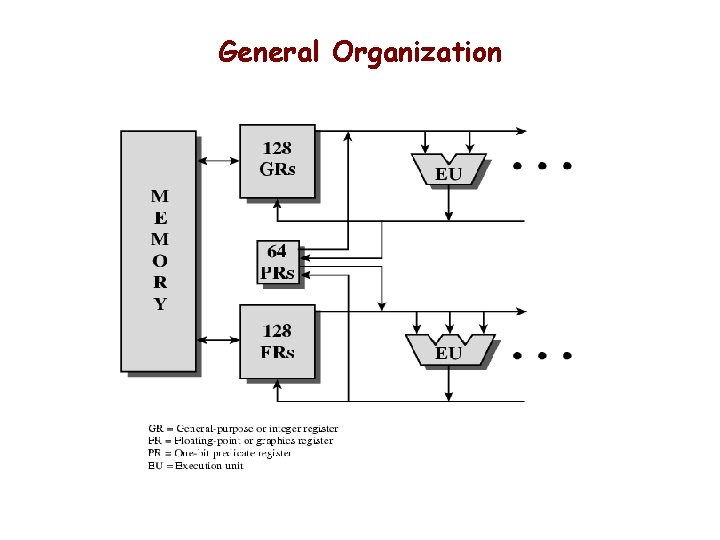

Basic Concepts for IA-64

- Instruction-level parallelism: EXPLICIT in the machine instruction, rather than determined at run time by the processor
- Long or very long instruction words (LIW/VLIW): fetch bigger chunks, already “preprocessed”
- Predicated execution: marking groups of instructions for a late decision on “execution”
- Control speculation: go ahead and fetch and decode instructions, but keep track of them so the decision to “issue” them, or not, can be practically made later
- Data speculation (or speculative loading): go ahead and load data early so it is ready when needed, and have a practical way to recover if the speculation proved wrong
- Software pipelining: multiple iterations of a loop can be executed in parallel
- “Revolvable” register stack: stack frames are programmable and used to reduce unnecessary movement of data on procedure calls

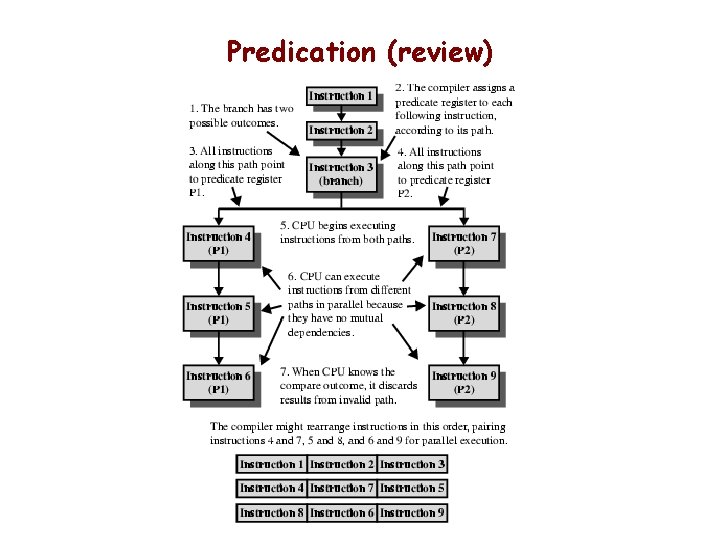

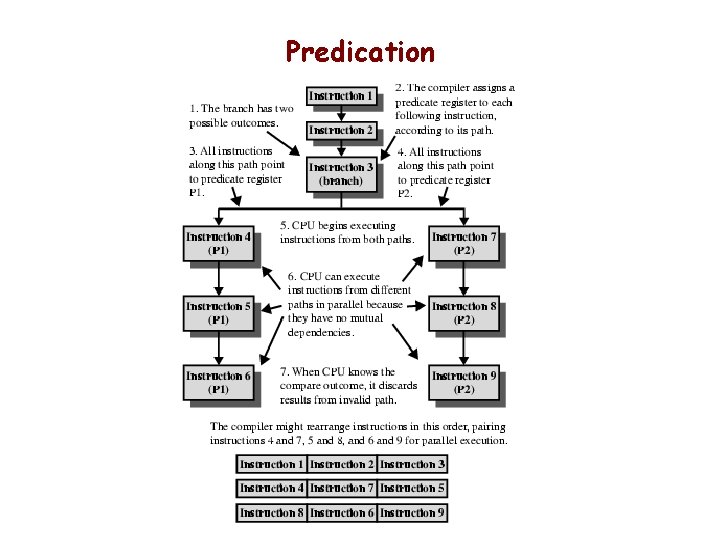

Predication

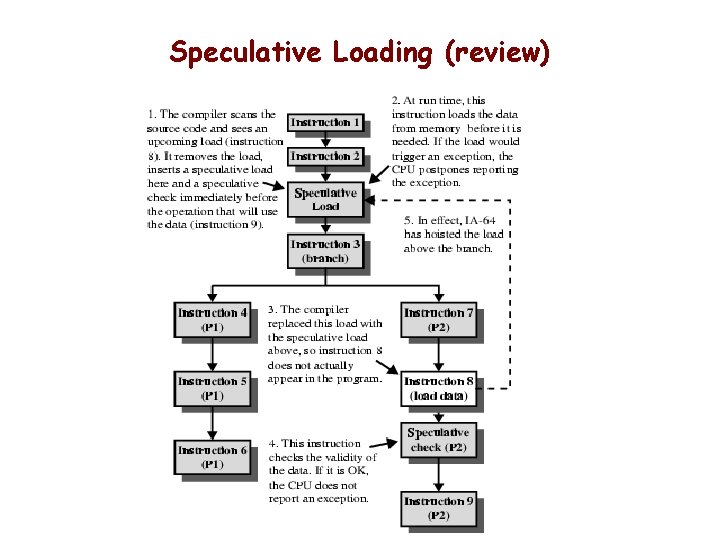

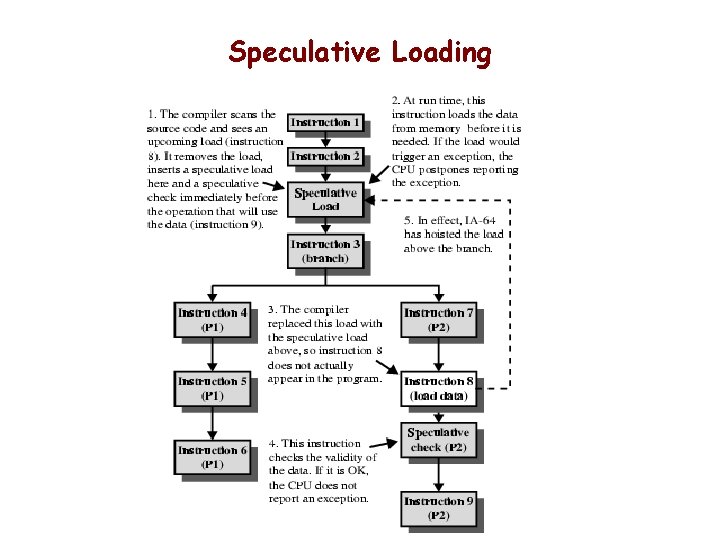

Speculative Loading

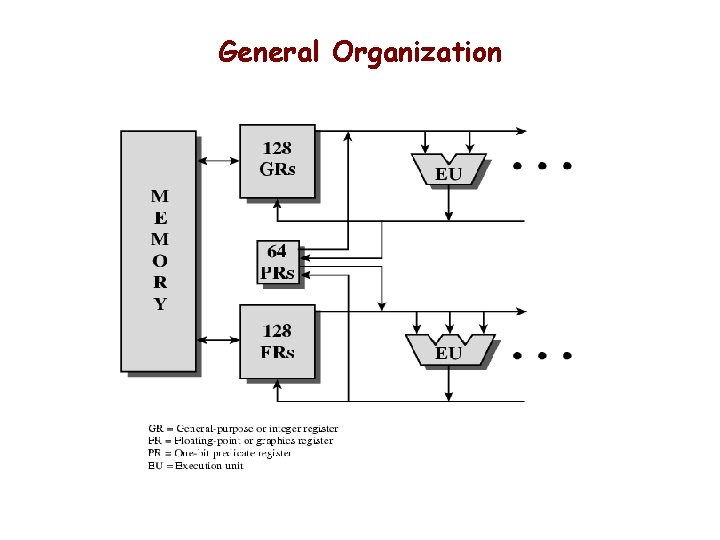

General Organization

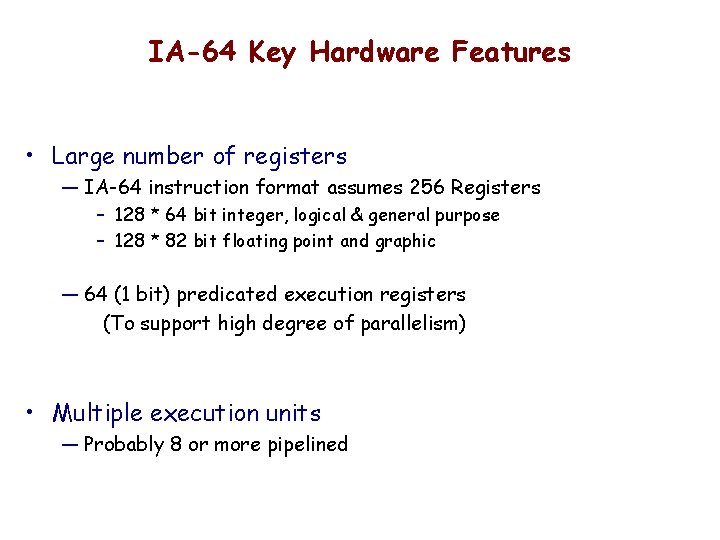

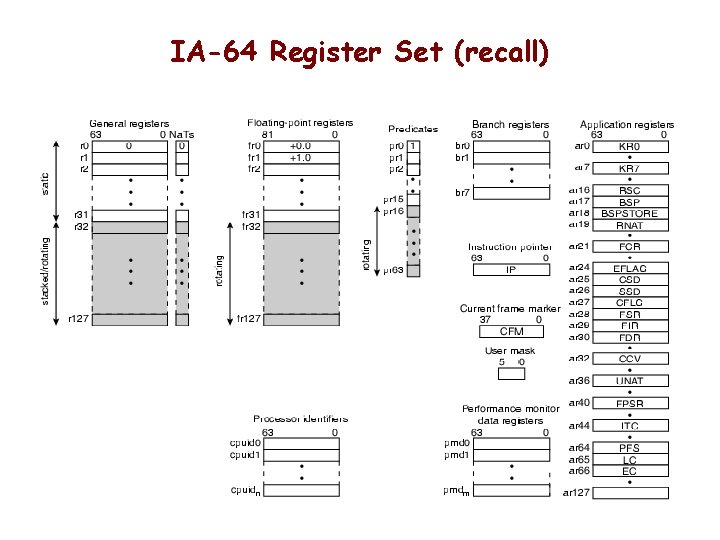

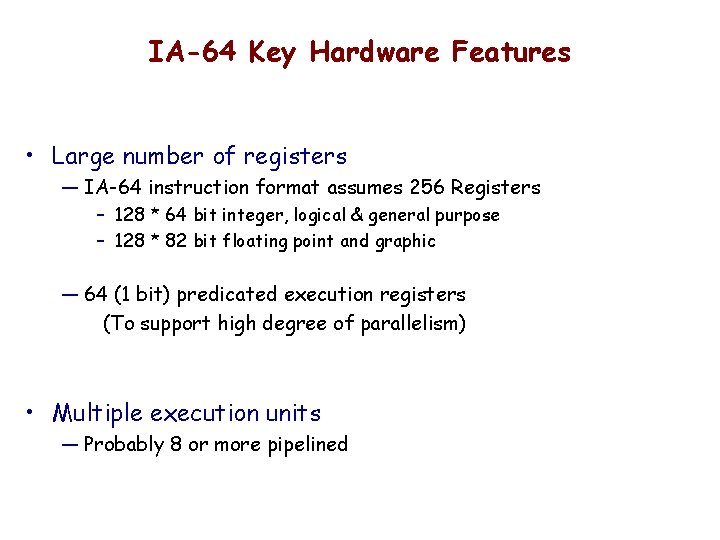

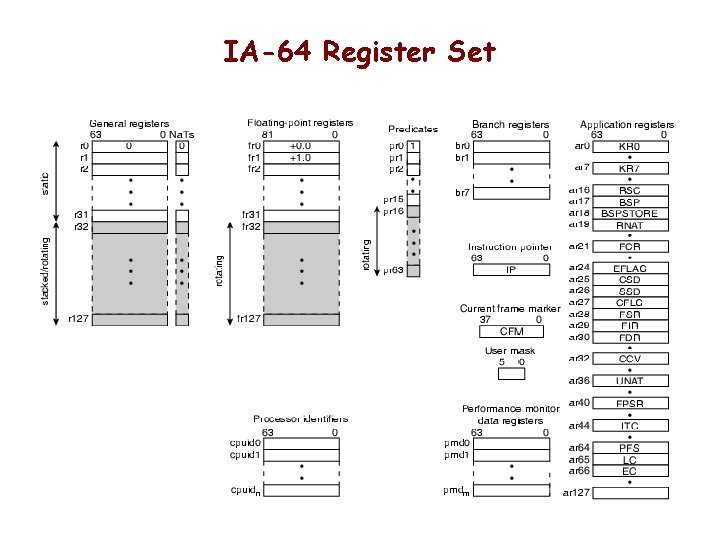

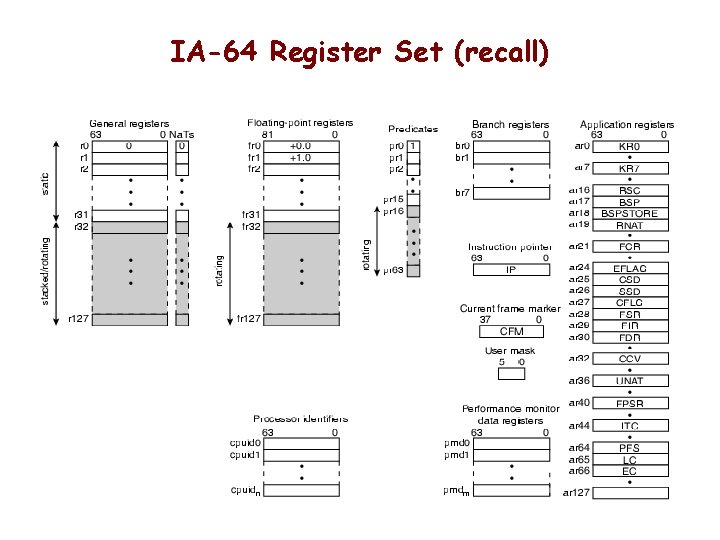

IA-64 Key Hardware Features

- Large number of registers
  - The IA-64 instruction format assumes 256 registers
    - 128 × 64-bit registers for integer, logical, and general-purpose use
    - 128 × 82-bit registers for floating point and graphics
  - 64 one-bit predicated-execution registers (to support a high degree of parallelism)
- Multiple execution units
  - Probably 8 or more, pipelined

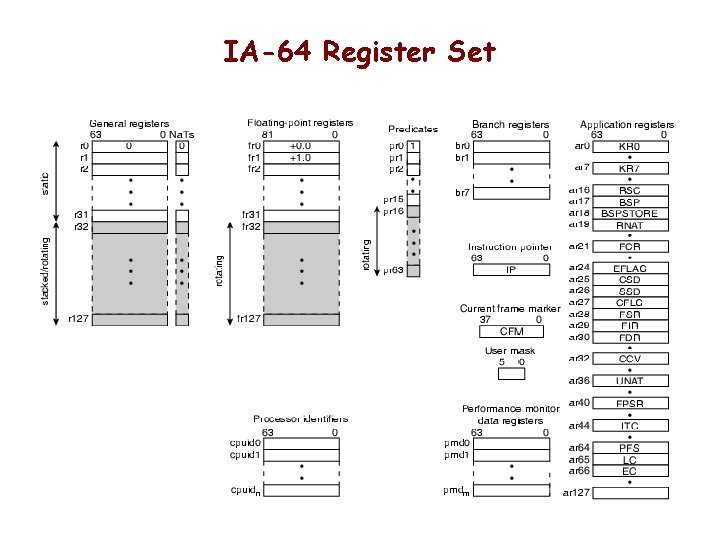

IA-64 Register Set

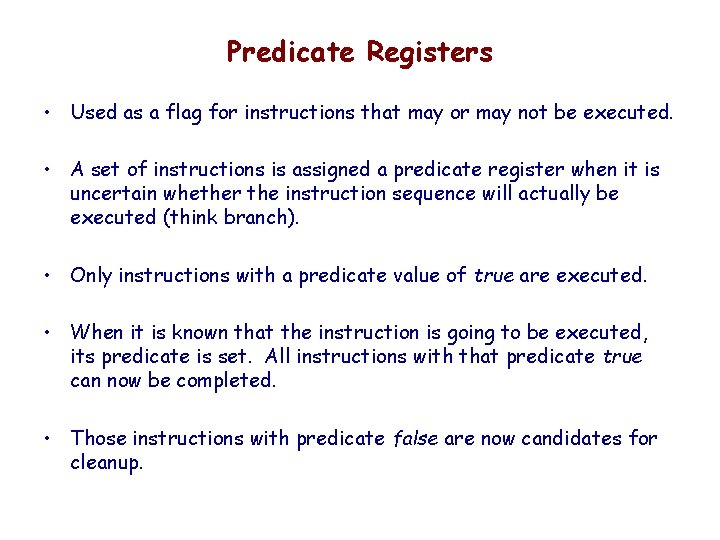

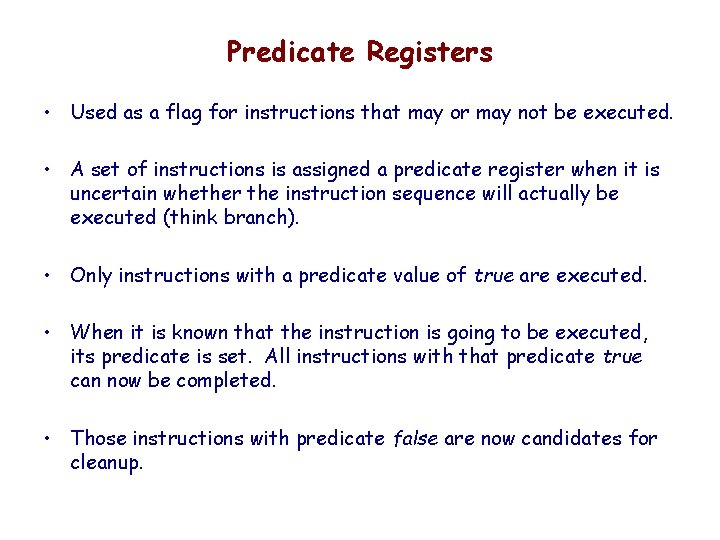

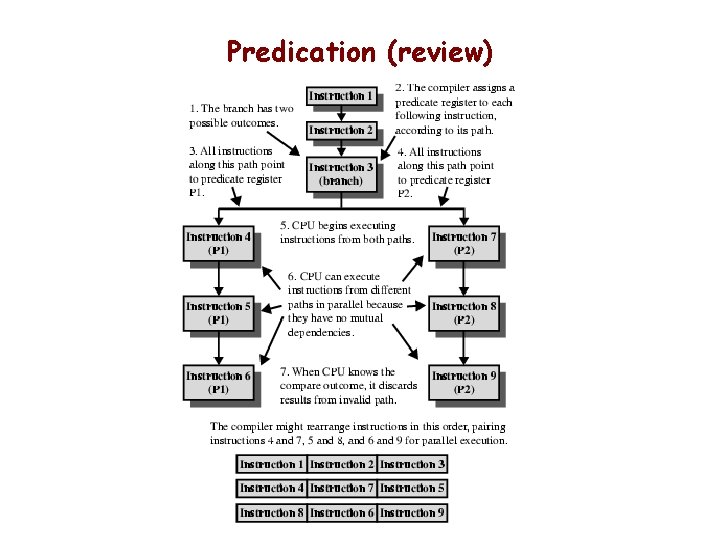

Predicate Registers

- Used as a flag for instructions that may or may not be executed.
- A set of instructions is assigned a predicate register when it is uncertain whether the instruction sequence will actually be executed (think branch).
- Only instructions with a predicate value of true are executed.
- When it is known that an instruction is going to be executed, its predicate is set. All instructions with that predicate true can now be completed.
- Instructions whose predicate is false are now candidates for cleanup.

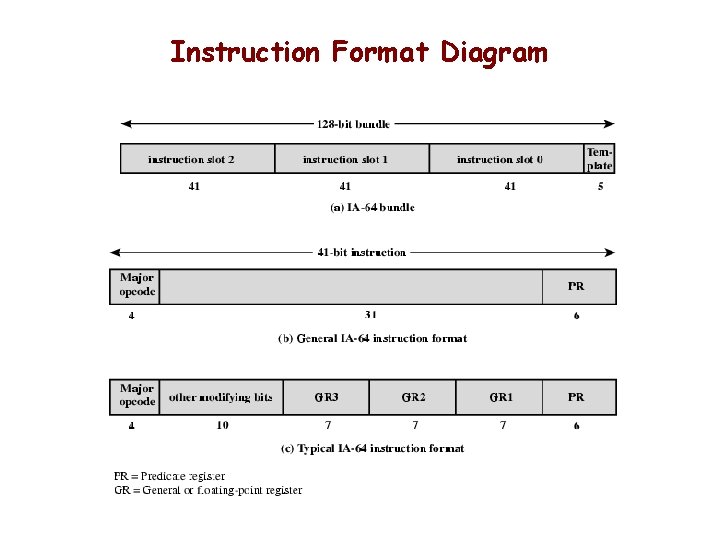

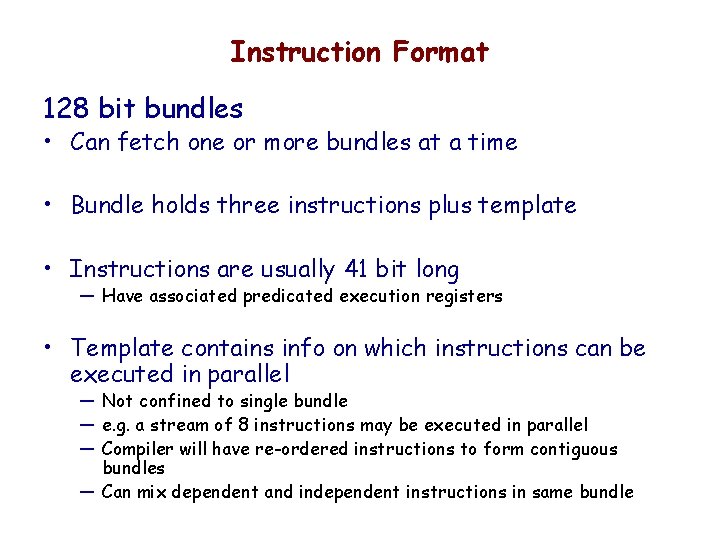

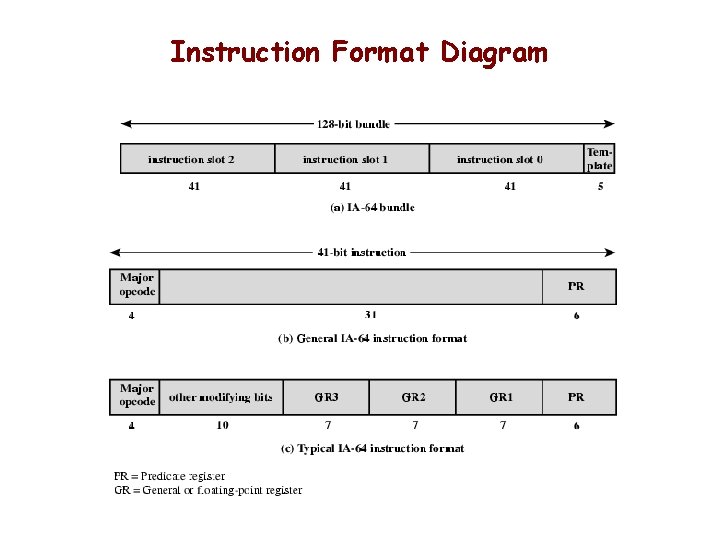

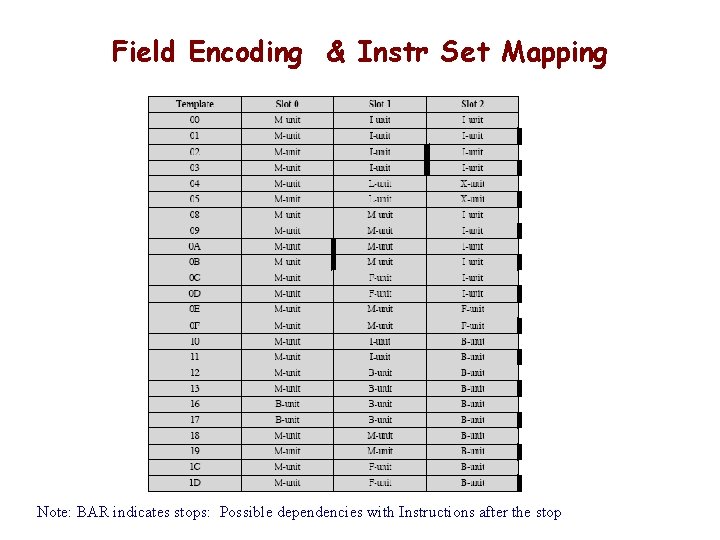

Instruction Format

128-bit bundles

- Can fetch one or more bundles at a time
- A bundle holds three instructions plus a template (3 × 41 bits + a 5-bit template = 128 bits)
- Instructions are usually 41 bits long
  - Have associated predicated-execution registers
- The template contains information on which instructions can be executed in parallel
  - Not confined to a single bundle
  - e.g. a stream of 8 instructions may be executed in parallel
  - The compiler will have reordered instructions to form contiguous bundles
  - Dependent and independent instructions can be mixed in the same bundle
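The bundle layout can be made concrete with a few lines of Python. This is a minimal sketch, assuming the standard IA-64 placement of the 5-bit template in the low-order bits followed by the three 41-bit slots, and the qualifying-predicate field in the low 6 bits of each slot. The function names and the sample values are illustrative only and do not encode real instructions.

```python
TEMPLATE_BITS = 5
SLOT_BITS = 41
SLOT_MASK = (1 << SLOT_BITS) - 1


def pack_bundle(template, slots):
    """Pack a 5-bit template and three 41-bit slots into one 128-bit integer."""
    assert 0 <= template < (1 << TEMPLATE_BITS)
    assert len(slots) == 3
    word = template
    for i, slot in enumerate(slots):
        assert 0 <= slot <= SLOT_MASK
        word |= slot << (TEMPLATE_BITS + i * SLOT_BITS)
    return word


def unpack_bundle(word):
    """Split a 128-bit bundle into (template, [slot0, slot1, slot2])."""
    assert 0 <= word < (1 << 128)
    template = word & ((1 << TEMPLATE_BITS) - 1)
    slots = [(word >> (TEMPLATE_BITS + i * SLOT_BITS)) & SLOT_MASK
             for i in range(3)]
    return template, slots


def qualifying_predicate(slot):
    """The low 6 bits of a slot select one of the 64 predicate registers."""
    return slot & 0x3F


# Arbitrary sample values, chosen only to exercise the bit fields.
bundle = pack_bundle(0x10, [0x3, SLOT_MASK, 0x2A])
template, slots = unpack_bundle(bundle)
print(template, [hex(s) for s in slots], qualifying_predicate(slots[2]))
```

Six bits of predicate selector are exactly enough to name the 64 one-bit predicate registers, which is why every slot can carry its own predicate.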

Instruction Format Diagram

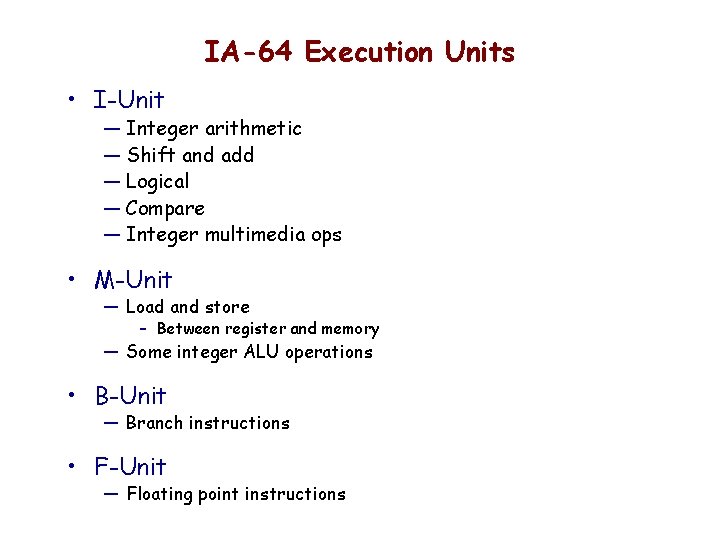

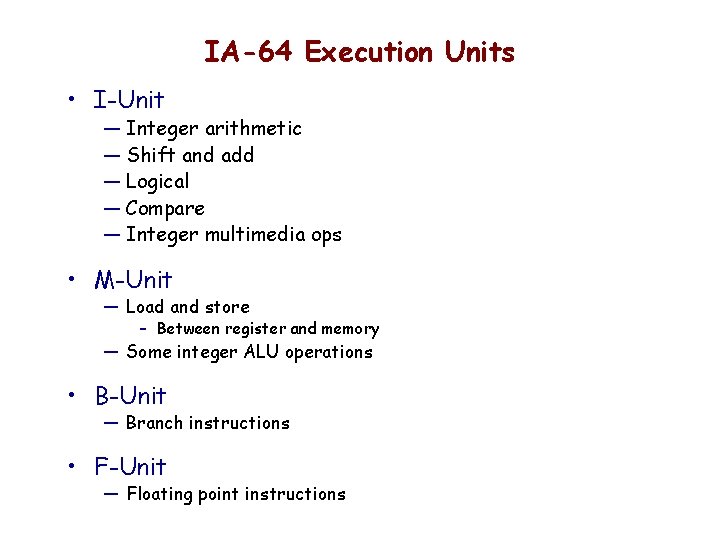

IA-64 Execution Units

- I-Unit
  - Integer arithmetic
  - Shift and add
  - Logical
  - Compare
  - Integer multimedia ops
- M-Unit
  - Load and store (between register and memory)
  - Some integer ALU operations
- B-Unit
  - Branch instructions
- F-Unit
  - Floating-point instructions

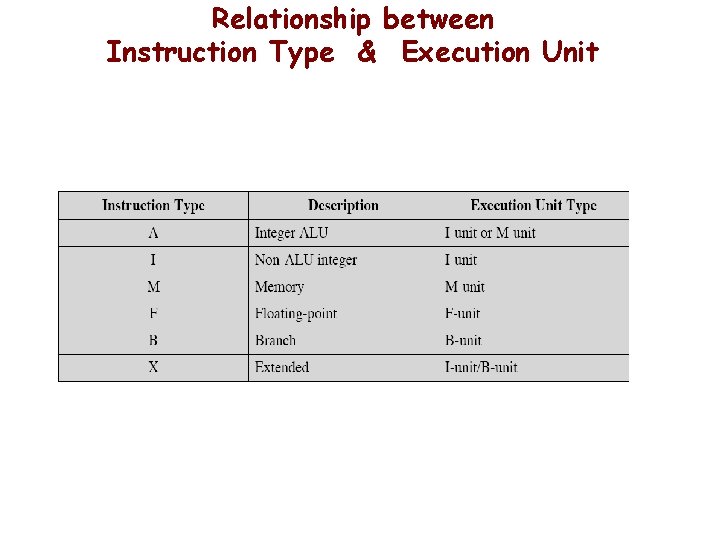

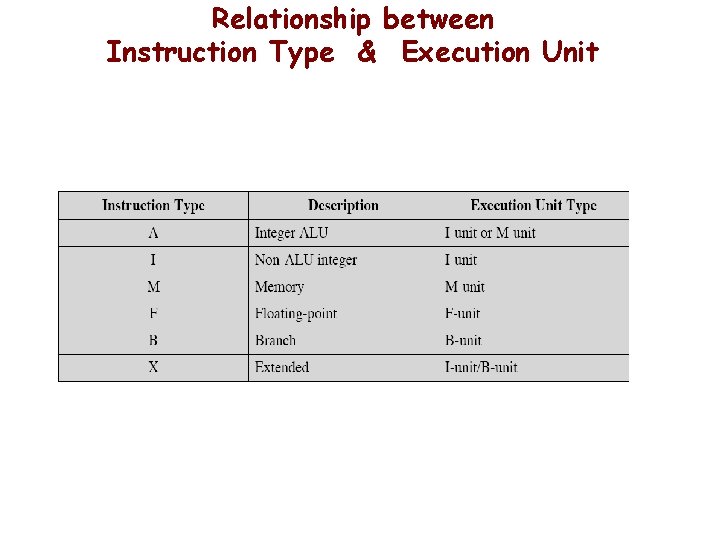

Relationship between Instruction Type & Execution Unit

Field Encoding & Instruction Set Mapping

Note: A bar indicates a stop. Instructions after the stop may have dependencies on the instructions before it.

Predication (review)

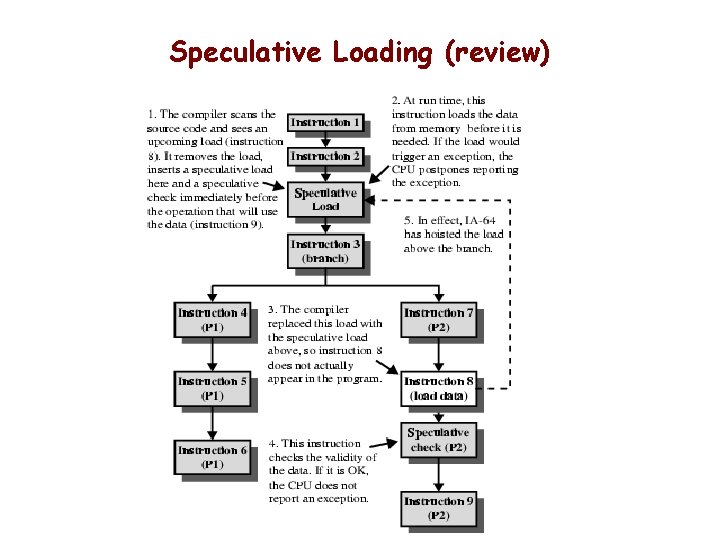

Speculative Loading (review)

![Assembly Language Format](https://slidetodoc.com/presentation_image/083059a7af6848482cd0fd4819866965/image-19.jpg)

Assembly Language Format

    [qp] mnemonic[.comp] dest = srcs ;; // comment

- qp: predicate register
  - 1 at execution time: execute and commit the result to hardware
  - 0 at execution time: the result is discarded
- mnemonic: name of the instruction
- comp: one or more instruction completers used to qualify the mnemonic
- dest: one or more destination operands
- srcs: one or more source operands
- ;; : instruction group stop
  - An instruction group is a sequence without hazards (read after write, write after write, ...)
- // : comment follows

![Assembly Example: Register Dependency](https://slidetodoc.com/presentation_image/083059a7af6848482cd0fd4819866965/image-20.jpg)

Assembly Example

Register dependency:

    ld8 r1 = [r5] ;;        // first group
    add r3 = r1, r4         // second group

- The second instruction depends on the value in r1
  - Changed by the first instruction
  - Cannot be in the same group for parallel execution
- Note: ;; ends the group of instructions that can be executed in parallel

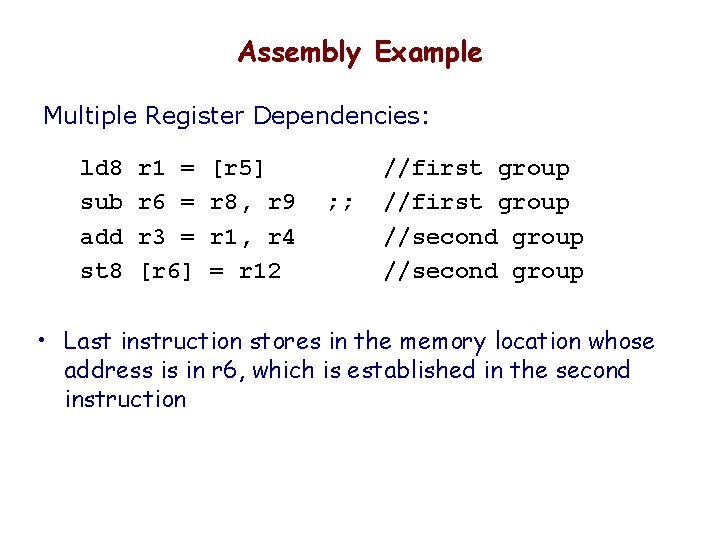

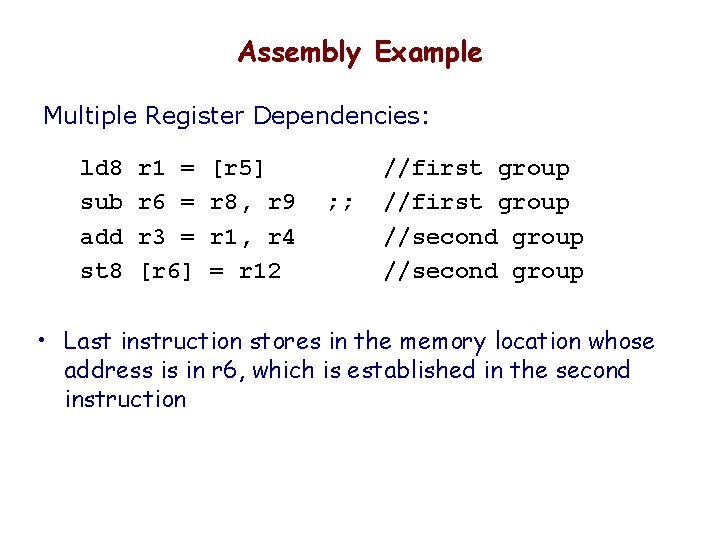

Assembly Example

Multiple register dependencies:

    ld8 r1 = [r5]           // first group
    sub r6 = r8, r9 ;;      // first group
    add r3 = r1, r4         // second group
    st8 [r6] = r12          // second group

- The last instruction stores to the memory location whose address is in r6, which is established in the second instruction
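The grouping rule in these two examples can be sketched as a small Python routine that places a stop whenever an instruction reads or writes a register already written in the current group. This is only an illustration of the register-hazard rule stated above: the tuple representation and the name `split_into_groups` are assumptions made for the sketch, and memory dependencies are deliberately ignored.

```python
def split_into_groups(instructions):
    """Greedily place stops so no group contains a RAW or WAW register hazard.

    Each instruction is (text, destination_registers, source_registers).
    """
    groups, current, written = [], [], set()
    for text, dests, srcs in instructions:
        raw = written & set(srcs)    # read after write inside the group
        waw = written & set(dests)   # write after write inside the group
        if raw or waw:
            groups.append(current)   # this is where ';;' would go
            current, written = [], set()
        current.append(text)
        written |= set(dests)
    if current:
        groups.append(current)
    return groups


program = [
    ("ld8 r1 = [r5]", ["r1"], ["r5"]),
    ("sub r6 = r8, r9", ["r6"], ["r8", "r9"]),
    ("add r3 = r1, r4", ["r3"], ["r1", "r4"]),
    ("st8 [r6] = r12", [], ["r6", "r12"]),
]
groups = split_into_groups(program)
for number, group in enumerate(groups, 1):
    print(number, group)
```

For the four-instruction example the sketch puts the stop after `sub`, because `add` reads r1 and `st8` reads r6, both of which are written in the first group.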

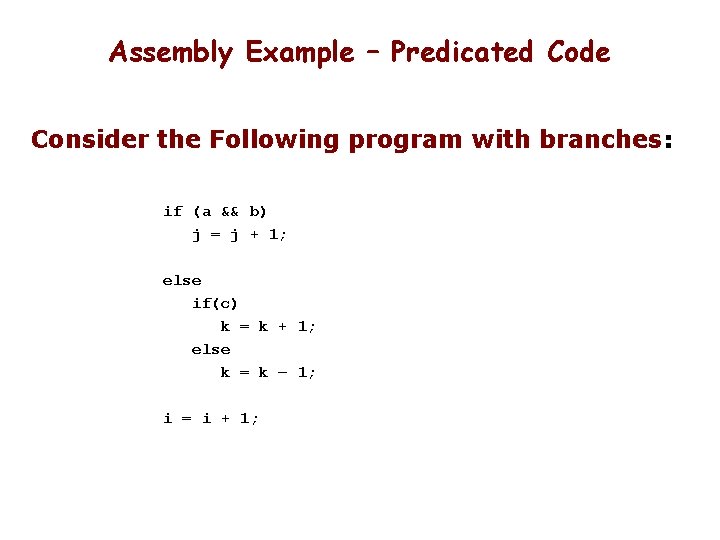

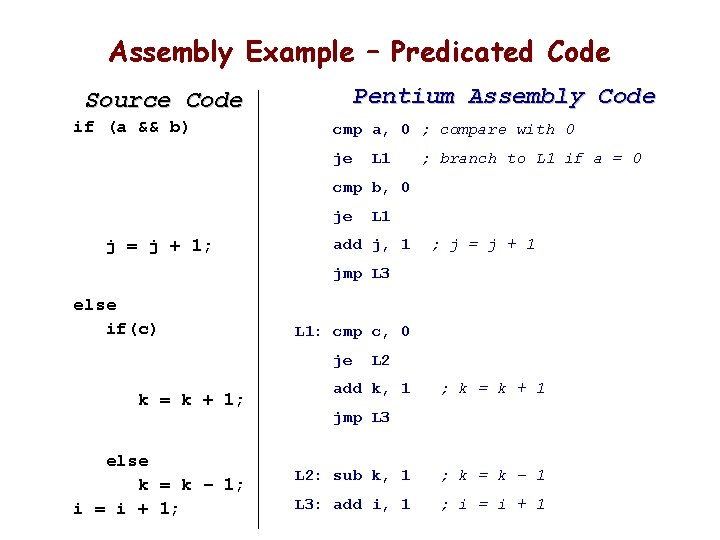

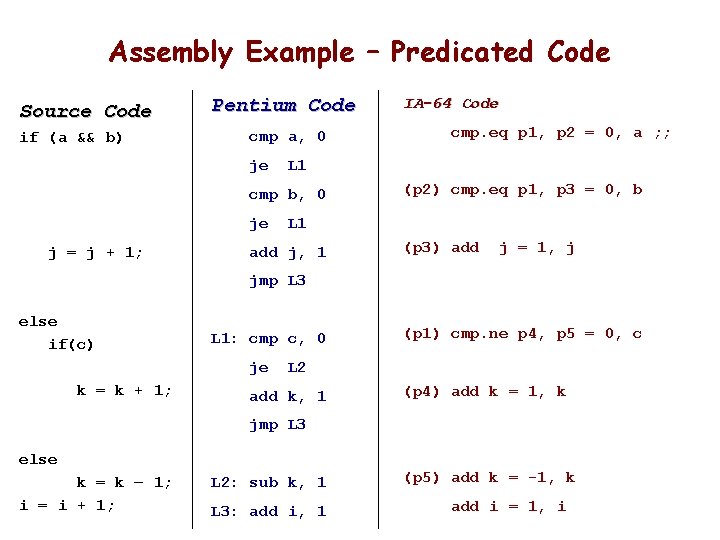

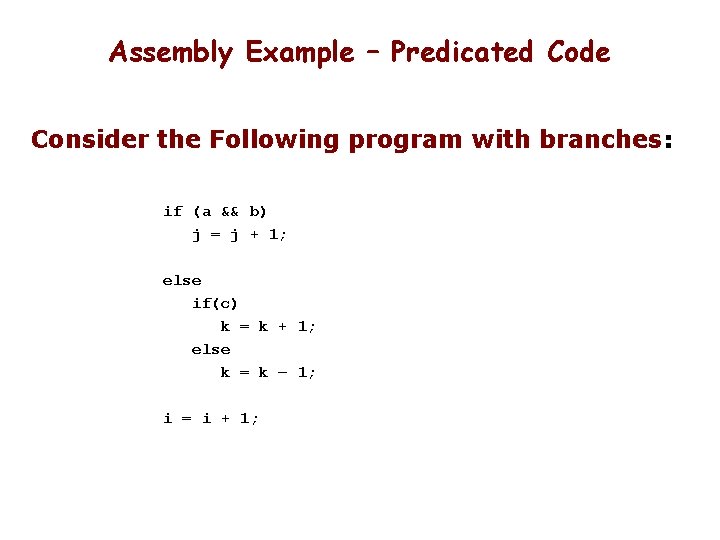

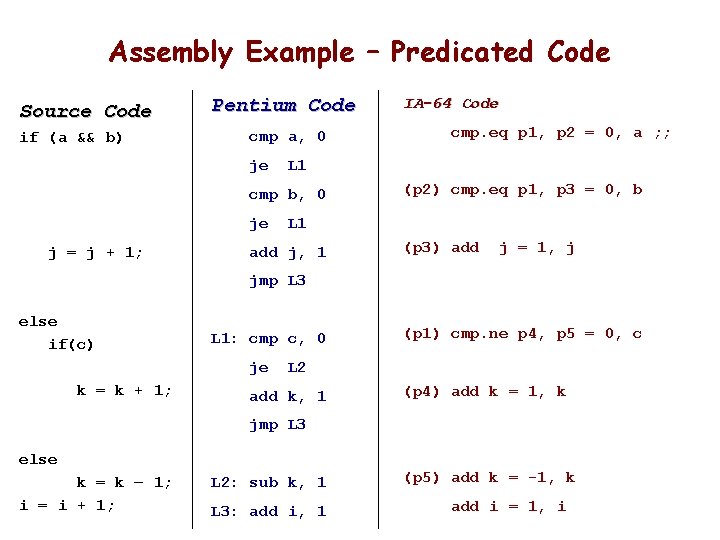

Assembly Example – Predicated Code

Consider the following program with branches:

    if (a && b)
        j = j + 1;
    else if (c)
        k = k + 1;
    else
        k = k - 1;
    i = i + 1;

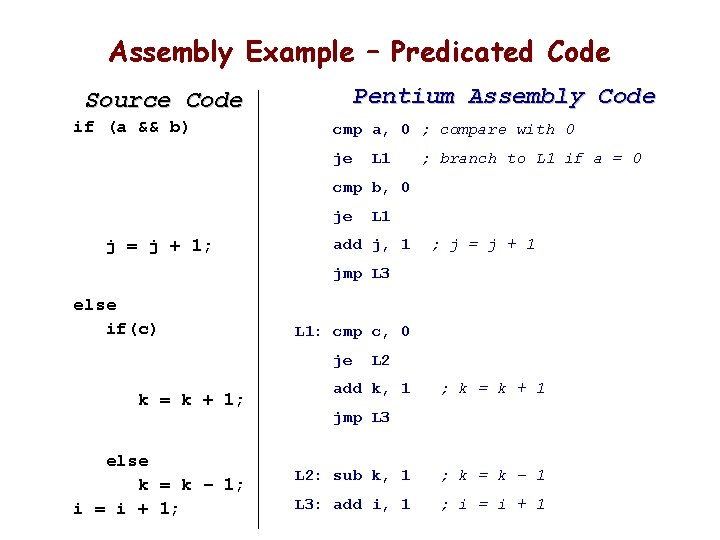

Assembly Example – Predicated Code

| Source Code | Pentium Assembly Code |
|---|---|
| `if (a && b)` | `cmp a, 0 ; compare with 0` |
| | `je L1 ; branch to L1 if a = 0` |
| | `cmp b, 0` |
| | `je L1` |
| `j = j + 1;` | `add j, 1 ; j = j + 1` |
| | `jmp L3` |
| `else if (c)` | `L1: cmp c, 0` |
| | `je L2` |
| `k = k + 1;` | `add k, 1 ; k = k + 1` |
| | `jmp L3` |
| `else k = k - 1;` | `L2: sub k, 1 ; k = k - 1` |
| `i = i + 1;` | `L3: add i, 1 ; i = i + 1` |

Assembly Example – Predicated Code

| Source Code | Pentium Code | IA-64 Code |
|---|---|---|
| `if (a && b)` | `cmp a, 0` | `cmp.eq p1, p2 = 0, a ;;` |
| | `je L1` | |
| | `cmp b, 0` | `(p2) cmp.eq p1, p3 = 0, b` |
| | `je L1` | |
| `j = j + 1;` | `add j, 1` | `(p3) add j = 1, j` |
| | `jmp L3` | |
| `else if (c)` | `L1: cmp c, 0` | `(p1) cmp.ne p4, p5 = 0, c` |
| | `je L2` | |
| `k = k + 1;` | `add k, 1` | `(p4) add k = 1, k` |
| | `jmp L3` | |
| `else k = k - 1;` | `L2: sub k, 1` | `(p5) add k = -1, k` |
| `i = i + 1;` | `L3: add i, 1` | `add i = 1, i` |

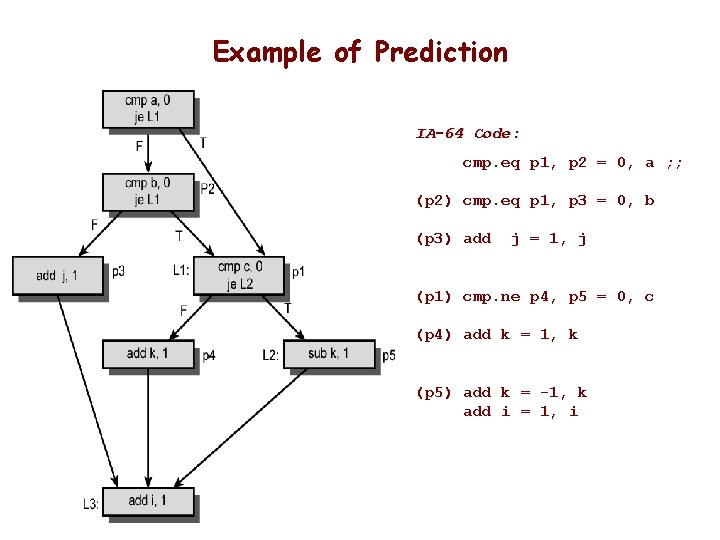

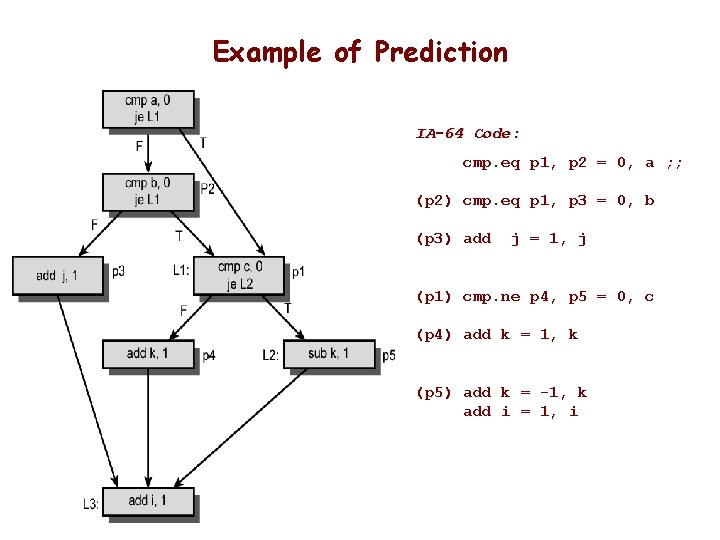

Example of Predication

IA-64 code:

    cmp.eq p1, p2 = 0, a ;;
    (p2) cmp.eq p1, p3 = 0, b
    (p3) add j = 1, j
    (p1) cmp.ne p4, p5 = 0, c
    (p4) add k = 1, k
    (p5) add k = -1, k
    add i = 1, i
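One way to convince yourself that the predicated sequence computes the same result as the branching source is to model both in Python and compare them over every truth combination of a, b and c. This is a sketch under stated assumptions: instructions are executed one after another rather than in parallel groups, a compare whose qualifying predicate is false leaves its target predicates unchanged, and all predicates start cleared. The function names are illustrative.

```python
from itertools import product


def branchy(a, b, c, i, j, k):
    """The original source code with branches."""
    if a and b:
        j = j + 1
    elif c:
        k = k + 1
    else:
        k = k - 1
    i = i + 1
    return i, j, k


def predicated(a, b, c, i, j, k):
    """The IA-64 sequence, one Python statement per instruction."""
    p = [False] * 64                 # assumption: predicates start cleared
    p[1], p[2] = (a == 0), (a != 0)  # cmp.eq p1, p2 = 0, a
    if p[2]:                         # (p2) cmp.eq p1, p3 = 0, b
        p[1], p[3] = (b == 0), (b != 0)
    if p[3]:                         # (p3) add j = 1, j
        j = 1 + j
    if p[1]:                         # (p1) cmp.ne p4, p5 = 0, c
        p[4], p[5] = (c != 0), (c == 0)
    if p[4]:                         # (p4) add k = 1, k
        k = 1 + k
    if p[5]:                         # (p5) add k = -1, k
        k = -1 + k
    i = 1 + i                        # add i = 1, i (not predicated)
    return i, j, k


all_match = all(
    branchy(a, b, c, 0, 0, 0) == predicated(a, b, c, 0, 0, 0)
    for a, b, c in product([0, 1], repeat=3)
)
print(all_match)
```

Note that p1 ends up true exactly when `a && b` is false, so it guards the whole `else` part, while p3 guards the `j = j + 1` path.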

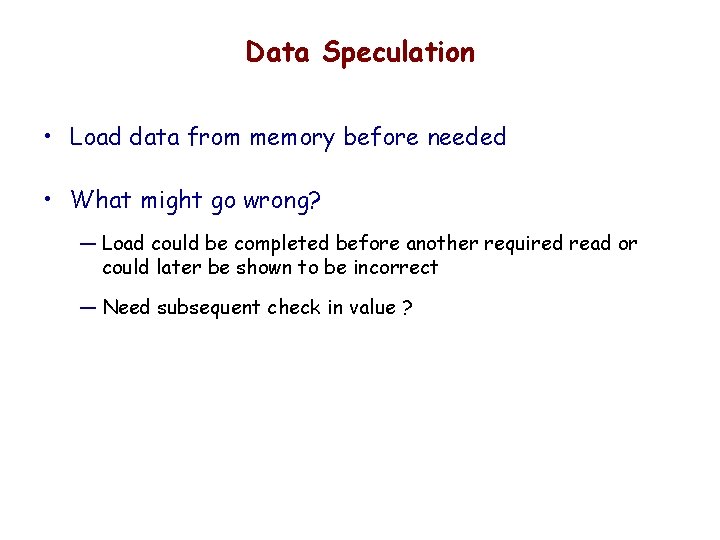

Data Speculation

- Load data from memory before it is needed
- What might go wrong?
  - The load could complete before a store that it depends on, so the loaded value could later be shown to be incorrect
  - A subsequent check of the value is needed

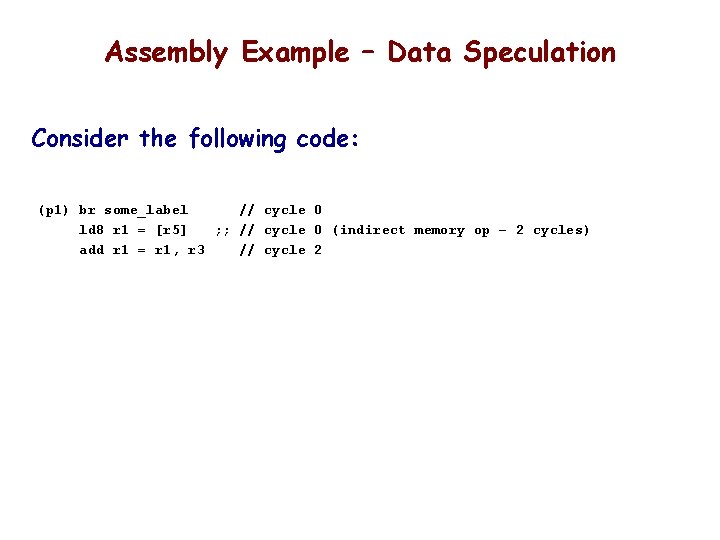

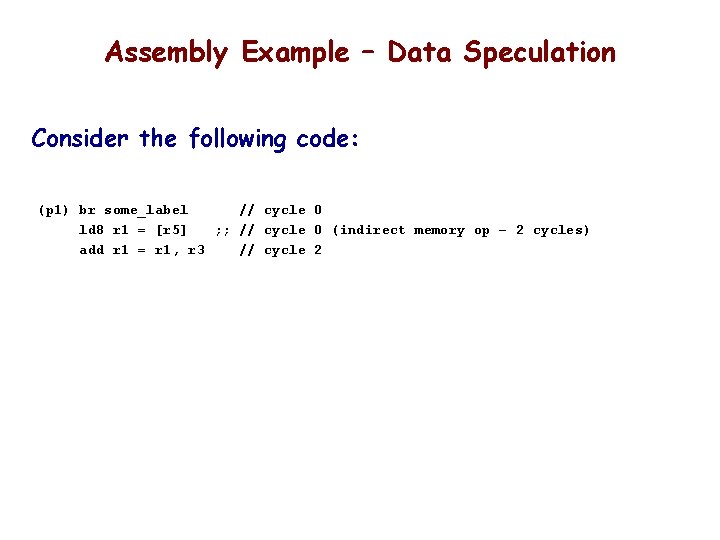

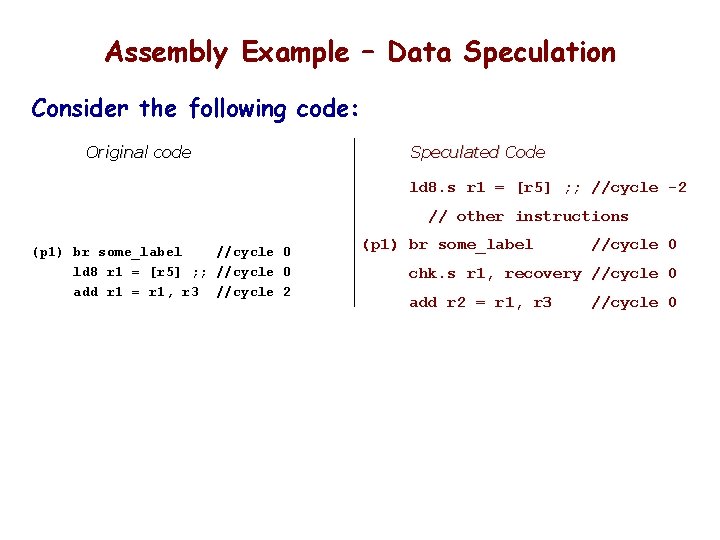

Assembly Example – Data Speculation

Consider the following code:

    (p1) br some_label      // cycle 0
    ld8 r1 = [r5] ;;        // cycle 0 (indirect memory op, 2 cycles)
    add r1 = r1, r3         // cycle 2

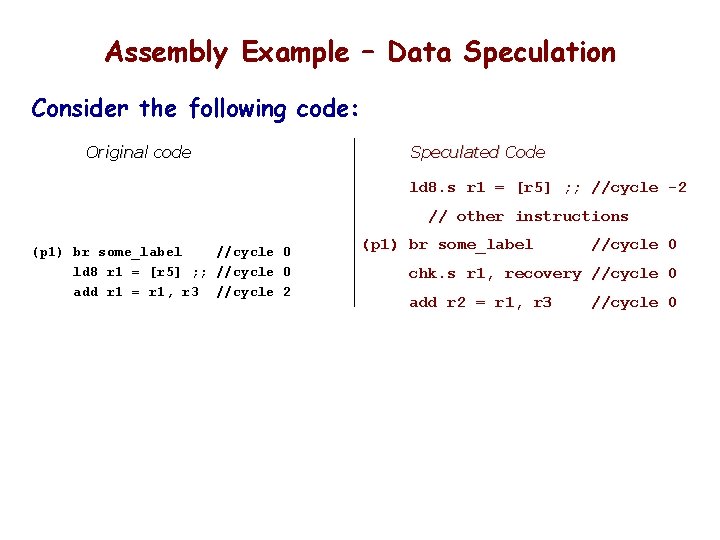

Assembly Example – Data Speculation

Consider the following code:

Original code:

    (p1) br some_label      // cycle 0
    ld8 r1 = [r5] ;;        // cycle 0
    add r1 = r1, r3         // cycle 2

Speculated code:

    ld8.s r1 = [r5] ;;      // cycle -2
    // other instructions
    (p1) br some_label      // cycle 0
    chk.s r1, recovery      // cycle 0
    add r2 = r1, r3         // cycle 0
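The `ld8.s` / `chk.s` pair can be modelled in a few lines of Python. The sketch relies on a detail the slide does not spell out: on IA-64 a speculative load that would fault does not raise the exception but marks its target register with a NaT ("not a thing") bit, which `chk.s` tests later. The memory contents, the `Fault` class and the function names are all hypothetical.

```python
class Fault(Exception):
    """Stands in for a memory exception such as a page fault."""


MEMORY = {0x100: 7}   # hypothetical memory: only one valid address


def load(addr):
    """Ordinary load: faults immediately on a bad address."""
    if addr not in MEMORY:
        raise Fault(hex(addr))
    return MEMORY[addr]


def speculative_load(addr):
    """Model of ld8.s: returns (value, nat); a fault is deferred via nat."""
    try:
        return load(addr), False
    except Fault:
        return 0, True


def run(addr, p1, r3):
    """Speculated sequence; returns r2, or None when the branch is taken."""
    r1, nat = speculative_load(addr)  # ld8.s r1 = [r5], hoisted above the branch
    if p1:                            # (p1) br some_label
        return None                   # the deferred fault never surfaces
    if nat:                           # chk.s r1, recovery
        r1 = load(addr)               # recovery code: redo the load for real
    return r1 + r3                    # add r2 = r1, r3


print(run(0x100, False, 3))
print(run(0x999, True, 3))
```

When the branch is taken, a bad address costs nothing, which is the point of deferring the exception. When the branch falls through, the recovery path repeats the load non-speculatively and the fault is raised where the original program would have raised it.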

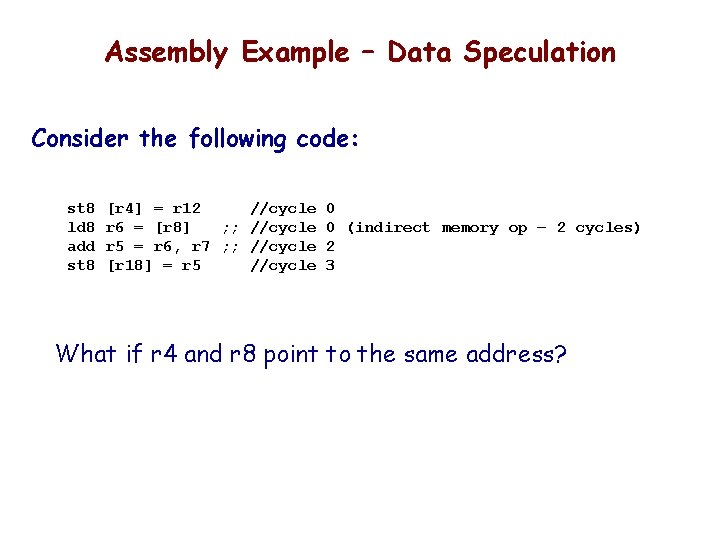

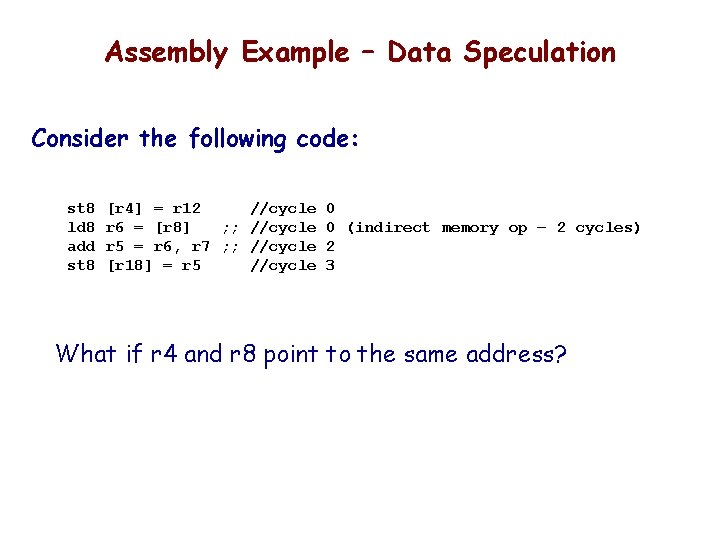

Assembly Example – Data Speculation

Consider the following code:

    st8 [r4] = r12          // cycle 0
    ld8 r6 = [r8] ;;        // cycle 0 (indirect memory op, 2 cycles)
    add r5 = r6, r7 ;;      // cycle 2
    st8 [r18] = r5          // cycle 3

What if r4 and r8 point to the same address?

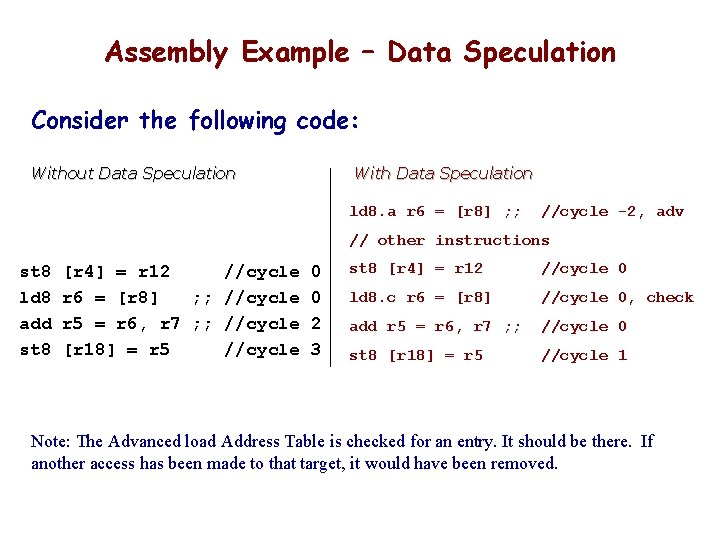

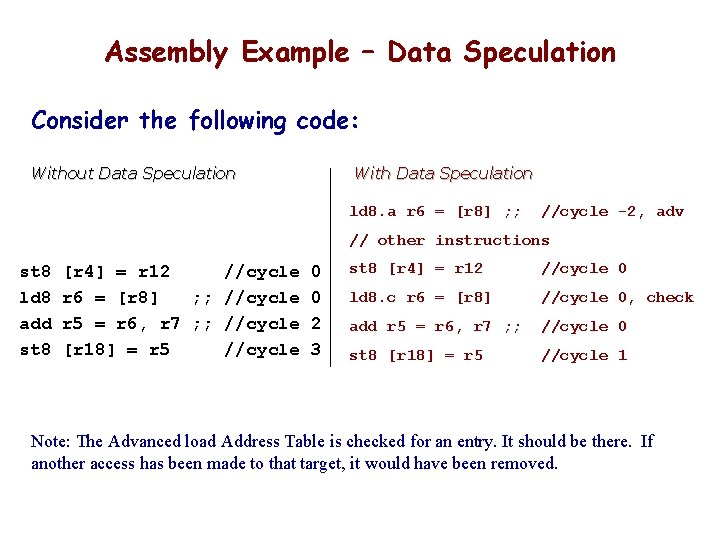

Assembly Example – Data Speculation

Consider the following code:

Without data speculation:

    st8 [r4] = r12          // cycle 0
    ld8 r6 = [r8] ;;        // cycle 0
    add r5 = r6, r7 ;;      // cycle 2
    st8 [r18] = r5          // cycle 3

With data speculation:

    ld8.a r6 = [r8] ;;      // cycle -2, advanced load
    // other instructions
    st8 [r4] = r12          // cycle 0
    ld8.c r6 = [r8]         // cycle 0, check
    add r5 = r6, r7 ;;      // cycle 0
    st8 [r18] = r5          // cycle 1

Note: The Advanced Load Address Table (ALAT) is checked for an entry for the advanced load. Normally the entry is there. If an intervening store had been made to that address, the entry would have been removed and the load is performed again.
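The interplay of the advanced load, the store and the check load can be sketched with a toy Advanced Load Address Table in Python. This is a simplified model, not the hardware's actual organization: the table here is an unbounded dictionary keyed by register name and compares full addresses, and the class and method names are assumptions made for the illustration.

```python
class Machine:
    def __init__(self, memory):
        self.memory = dict(memory)
        self.regs = {}
        self.alat = {}   # register name -> address of its advanced load

    def ld_a(self, reg, addr):
        """ld8.a: load early and record the address in the ALAT."""
        self.regs[reg] = self.memory[addr]
        self.alat[reg] = addr

    def st(self, addr, value):
        """st8: write memory and remove ALAT entries for the same address."""
        self.memory[addr] = value
        self.alat = {r: a for r, a in self.alat.items() if a != addr}

    def ld_c(self, reg, addr):
        """ld8.c: reload only if the ALAT entry is gone; True if reloaded."""
        if self.alat.get(reg) == addr:
            return False
        self.regs[reg] = self.memory[addr]
        return True


def run(r4, r8, r12=99, r7=1):
    """The 'with data speculation' sequence; returns (r5, reloaded)."""
    m = Machine({0x10: 5, 0x20: 6})
    m.ld_a("r6", r8)             # ld8.a r6 = [r8]
    m.st(r4, r12)                # st8 [r4] = r12
    reloaded = m.ld_c("r6", r8)  # ld8.c r6 = [r8]
    r5 = m.regs["r6"] + r7       # add r5 = r6, r7
    return r5, reloaded


print(run(r4=0x10, r8=0x20))   # different addresses: speculation succeeds
print(run(r4=0x20, r8=0x20))   # same address: the check load reloads r6
```

In both cases r5 ends up holding the value a strictly in-order execution would have produced; the only difference is whether the load latency was hidden.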

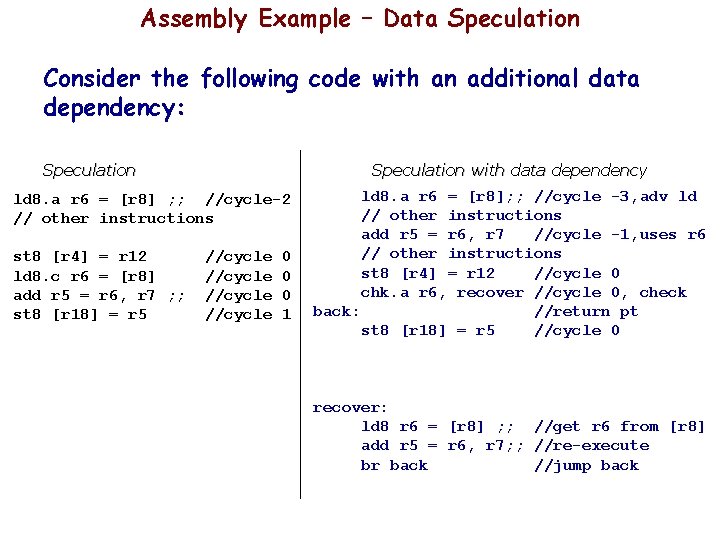

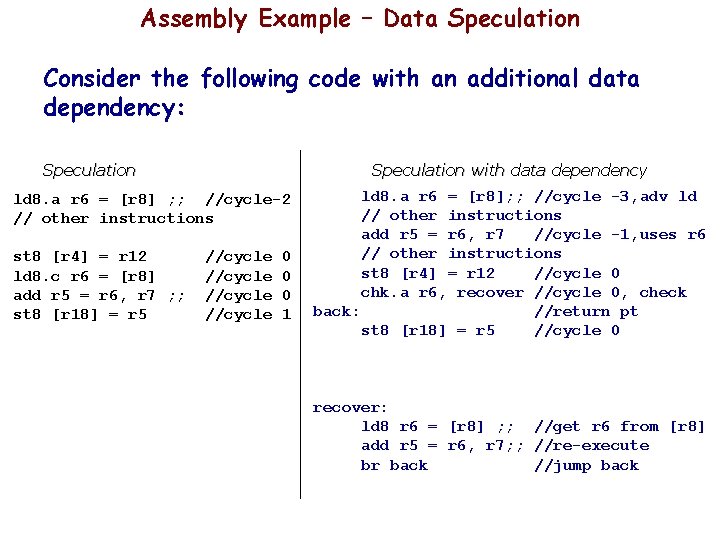

Assembly Example – Data Speculation

Consider the following code with an additional data dependency:

Speculation (as in the previous example):

    ld8.a r6 = [r8] ;;      // cycle -2
    // other instructions
    st8 [r4] = r12          // cycle 0
    ld8.c r6 = [r8]         // cycle 0
    add r5 = r6, r7 ;;      // cycle 0
    st8 [r18] = r5          // cycle 1

Speculation with data dependency:

    ld8.a r6 = [r8] ;;      // cycle -3, advanced load
    // other instructions
    add r5 = r6, r7         // cycle -1, uses r6
    // other instructions
    st8 [r4] = r12          // cycle 0
    chk.a r6, recover       // cycle 0, check
    back:                   // return point
    st8 [r18] = r5          // cycle 0

    recover:
    ld8 r6 = [r8] ;;        // get r6 from [r8]
    add r5 = r6, r7 ;;      // re-execute
    br back                 // jump back

![Software Pipelining](https://slidetodoc.com/presentation_image/083059a7af6848482cd0fd4819866965/image-32.jpg)

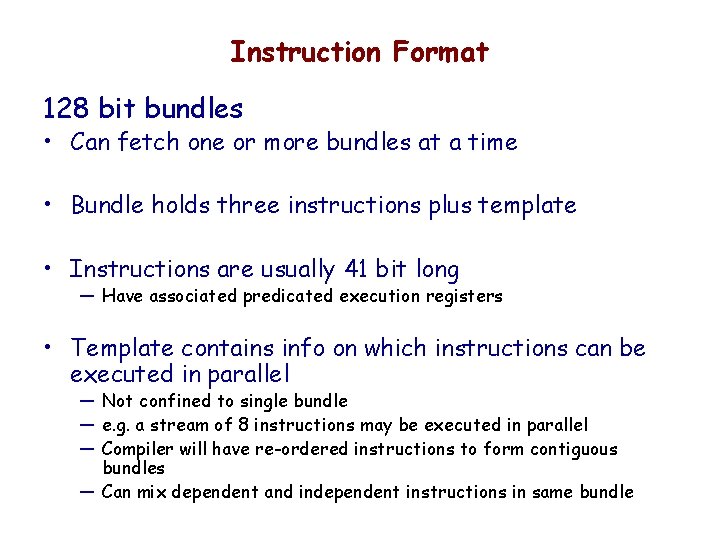

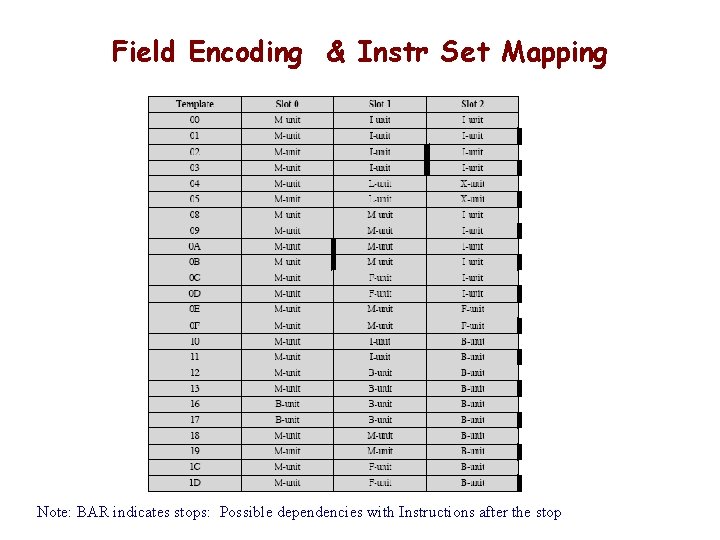

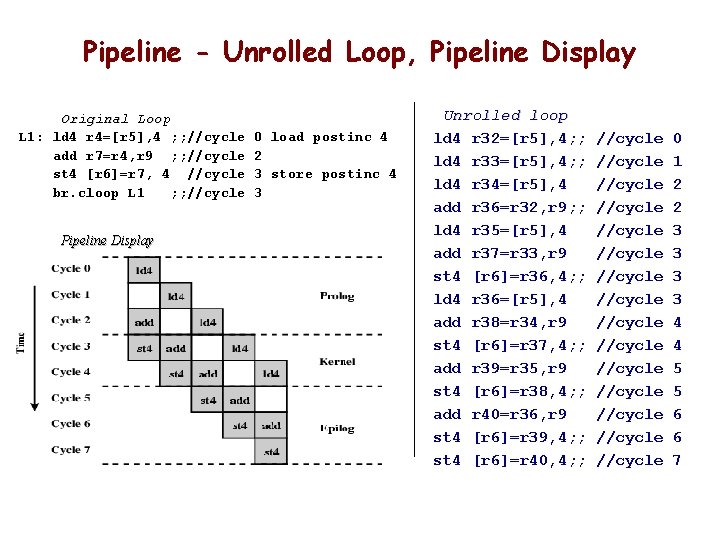

Software Pipelining

Consider a loop in which y[i] = x[i] + c:

    L1: ld4 r4 = [r5], 4 ;;     // cycle 0, load postinc 4
        add r7 = r4, r9 ;;      // cycle 2, r9 holds c
        st4 [r6] = r7, 4        // cycle 3, store postinc 4
        br.cloop L1 ;;          // cycle 3

- Adds a constant to one vector and stores the result in another
- No opportunity for instruction-level parallelism within one iteration
- The instructions of iteration x are all executed before iteration x+1 begins

IA-64 Register Set (recall)

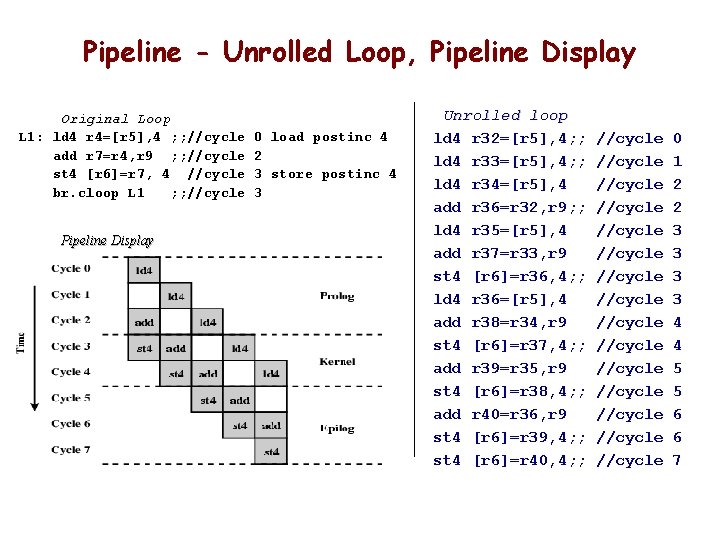

Pipeline - Unrolled Loop, Pipeline Display

Original loop:

    L1: ld4 r4 = [r5], 4 ;;     // cycle 0, load postinc 4
        add r7 = r4, r9 ;;      // cycle 2
        st4 [r6] = r7, 4        // cycle 3, store postinc 4
        br.cloop L1 ;;          // cycle 3

Unrolled loop (cycle numbers follow from the ;; stops):

    ld4 r32 = [r5], 4 ;;        // cycle 0
    ld4 r33 = [r5], 4 ;;        // cycle 1
    ld4 r34 = [r5], 4           // cycle 2
    add r36 = r32, r9 ;;        // cycle 2
    ld4 r35 = [r5], 4           // cycle 3
    add r37 = r33, r9           // cycle 3
    st4 [r6] = r36, 4 ;;        // cycle 3
    ld4 r36 = [r5], 4           // cycle 4
    add r38 = r34, r9           // cycle 4
    st4 [r6] = r37, 4 ;;        // cycle 4
    add r39 = r35, r9           // cycle 5
    st4 [r6] = r38, 4 ;;        // cycle 5
    add r40 = r36, r9           // cycle 6
    st4 [r6] = r39, 4 ;;        // cycle 6
    st4 [r6] = r40, 4 ;;        // cycle 7

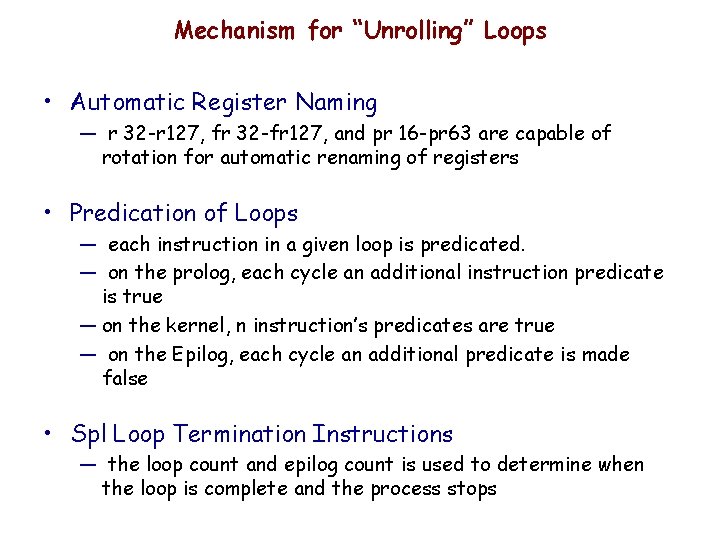

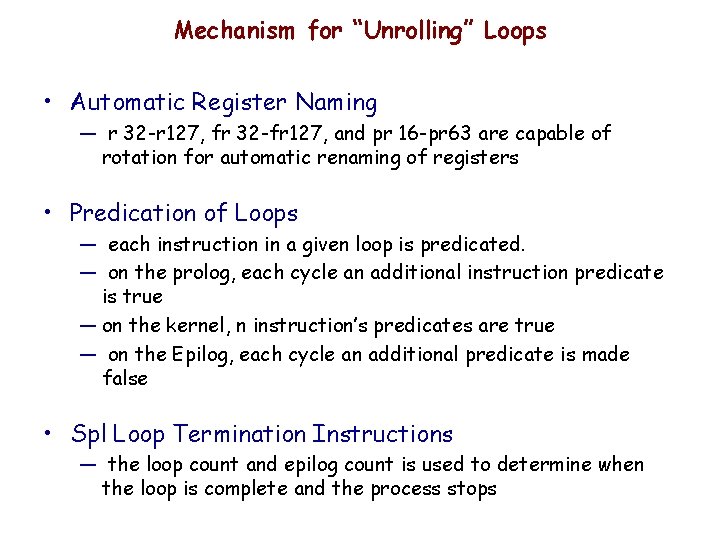

Mechanism for “Unrolling” Loops

- Automatic register naming
  - r32-r127, fr32-fr127, and pr16-pr63 are capable of rotation for automatic renaming of registers
- Predication of loops
  - Each instruction in a given loop is predicated
  - In the prolog, an additional instruction predicate becomes true each cycle
  - In the kernel, the predicates of all n instructions are true
  - In the epilog, an additional predicate is made false each cycle
- Special loop termination instructions
  - The loop count and epilog count are used to determine when the loop is complete and the process stops
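The prolog, kernel and epilog phases fall out naturally if the stage predicates are treated as a shift register. The Python sketch below is a deliberately simplified model of that idea: it assumes one predicate per pipeline stage and one rotation per pass through the loop, and it uses a single counter, `remaining`, in place of the hardware's separate loop count and epilog count. The function name is illustrative.

```python
def stage_predicates(iterations, stages):
    """Return the stage-predicate vector for each pass through the kernel."""
    preds = [False] * stages
    remaining = iterations
    history = []
    while True:
        # Rotate: each predicate moves one stage later and a new value
        # enters stage 0 (true while source iterations remain to be started).
        preds = [remaining > 0] + preds[:-1]
        if remaining > 0:
            remaining -= 1
        if not any(preds):
            break                      # pipeline has drained; loop is done
        history.append(tuple(int(p) for p in preds))
    return history


for cycle, preds in enumerate(stage_predicates(iterations=5, stages=3)):
    print(cycle, preds)
```

With 5 iterations and 3 stages the first two passes form the prolog (one more predicate true each time), the next three are the kernel (all predicates true), and the last two are the epilog (one more predicate false each time), for `iterations + stages - 1` passes in total.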

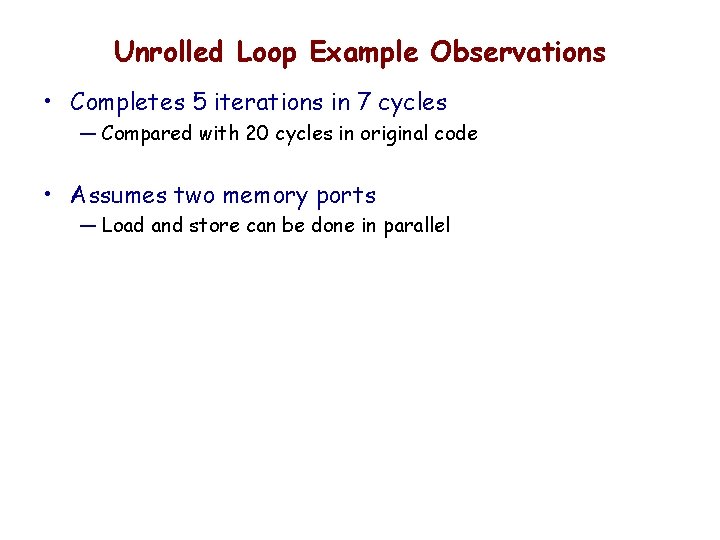

Unrolled Loop Example Observations

- Completes 5 iterations in 7 cycles
  - Compared with 20 cycles in the original code
- Assumes two memory ports
  - A load and a store can be done in parallel
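The cycle counts can be reproduced with a short scheduling sketch in Python. It assumes the issue offsets shown in the original loop (load in cycle 0, add in cycle 2, store in cycle 3 of each iteration) and that a new iteration starts every cycle; the names `ISSUE_OFFSET` and `pipelined_schedule` are illustrative.

```python
ISSUE_OFFSET = {"ld4": 0, "add": 2, "st4": 3}  # cycle of each op within one iteration
ITERATION_CYCLES = 4                            # cycles 0..3 of the original loop body


def sequential_cycles(iterations):
    """Original loop: each iteration finishes before the next one starts."""
    return iterations * ITERATION_CYCLES


def pipelined_schedule(iterations):
    """Start one iteration per cycle; return {cycle: [operations issued]}."""
    schedule = {}
    for it in range(iterations):
        for op, offset in ISSUE_OFFSET.items():
            schedule.setdefault(it + offset, []).append(f"{op}#{it}")
    return dict(sorted(schedule.items()))


schedule = pipelined_schedule(5)
for cycle, ops in schedule.items():
    print(cycle, ops)
print("sequential:", sequential_cycles(5), "last pipelined cycle:", max(schedule))
```

The last store issues in cycle 7, counting from cycle 0, against 20 cycles for the sequential version. Cycles 3 and 4 each contain both a load and a store, which is where the two-memory-port assumption comes from.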

IA-64 Register Stack

- The register stack mechanism avoids unnecessary movement of register data during procedure call and return (r32-r127 are used in a rotation)
  - The number of local and pass/return registers is specifiable
  - The “register renaming” allows locals to become hidden and pass/return registers to become local on a call, and to be changed back on a return
  - If the stacking mechanism runs out of registers, the least recently used (oldest) registers are moved to memory
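The renaming on call and return can be sketched in Python as a sliding base pointer over a pool of physical registers. This is a toy model under stated assumptions: the class and method names are hypothetical, `alloc` takes just a count of local and pass/return registers, and the spill to memory when registers run out is not modelled (the sketch simply raises an error).

```python
class RegisterStack:
    FIRST_STACKED = 32   # r32 is the first stacked register

    def __init__(self, size=96):
        self.phys = [0] * size   # physical registers behind r32-r127
        self.base = 0            # physical index the current frame calls r32
        self.locals = 0
        self.outputs = 0
        self.callers = []        # frames of suspended callers

    def alloc(self, n_locals, n_outputs):
        """Size the current frame: locals first, then pass/return registers."""
        if self.base + n_locals + n_outputs > len(self.phys):
            raise OverflowError("out of stacked registers; spilling is not modelled")
        self.locals, self.outputs = n_locals, n_outputs

    def _slot(self, reg):
        offset = reg - self.FIRST_STACKED
        if not 0 <= offset < self.locals + self.outputs:
            raise IndexError(f"r{reg} is outside the current frame")
        return self.base + offset

    def read(self, reg):
        return self.phys[self._slot(reg)]

    def write(self, reg, value):
        self.phys[self._slot(reg)] = value

    def call(self):
        """Hide the caller's locals; its pass/return registers become r32..."""
        self.callers.append((self.base, self.locals, self.outputs))
        self.base += self.locals
        self.locals, self.outputs = self.outputs, 0

    def ret(self):
        """Restore the caller's view of the registers."""
        self.base, self.locals, self.outputs = self.callers.pop()


stack = RegisterStack()
stack.alloc(n_locals=3, n_outputs=2)   # caller: r32-r34 local, r35-r36 pass/return
stack.write(32, 1000)
stack.write(35, 11)
stack.write(36, 22)
stack.call()
args_seen_by_callee = (stack.read(32), stack.read(33))
stack.write(32, 33)                    # callee writes into a shared register
stack.ret()
print(args_seen_by_callee, stack.read(32), stack.read(35))
```

No data is copied at any point: the values the caller placed in r35 and r36 are the same physical registers the callee sees as r32 and r33, and the caller's r32 is untouched because it is simply outside the callee's window.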
