
Distributed Training on HPC
Presented By: Aaron D. Saxton, Ph.D.
3/12/2021

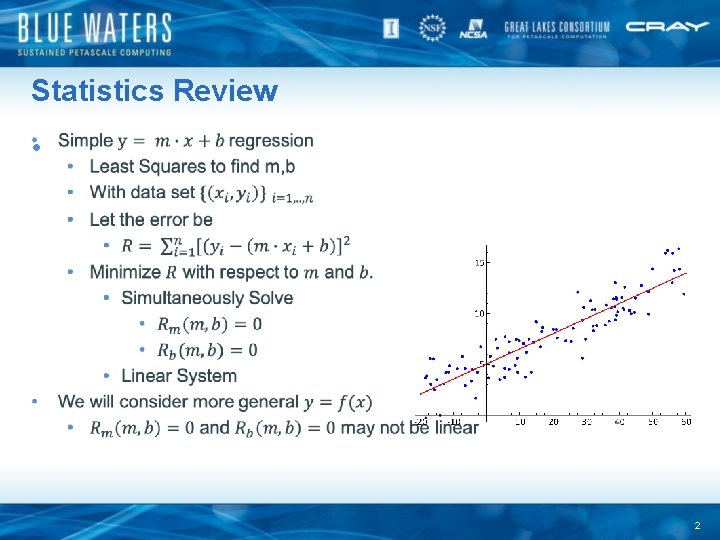

Statistics Review

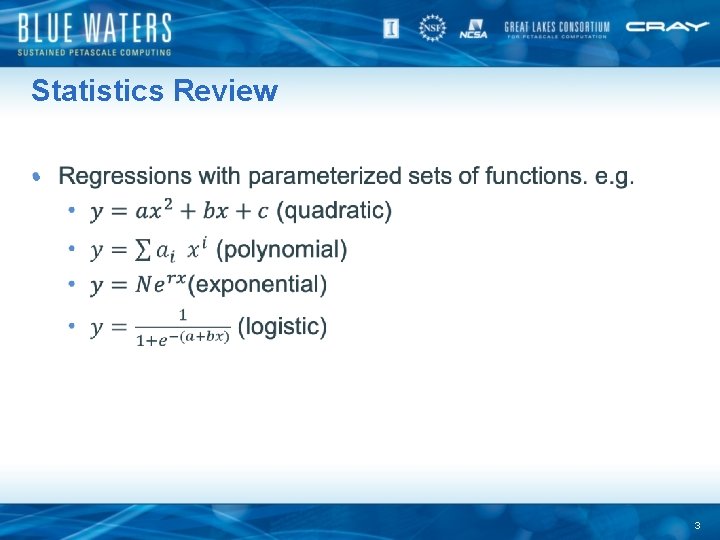

Statistics Review

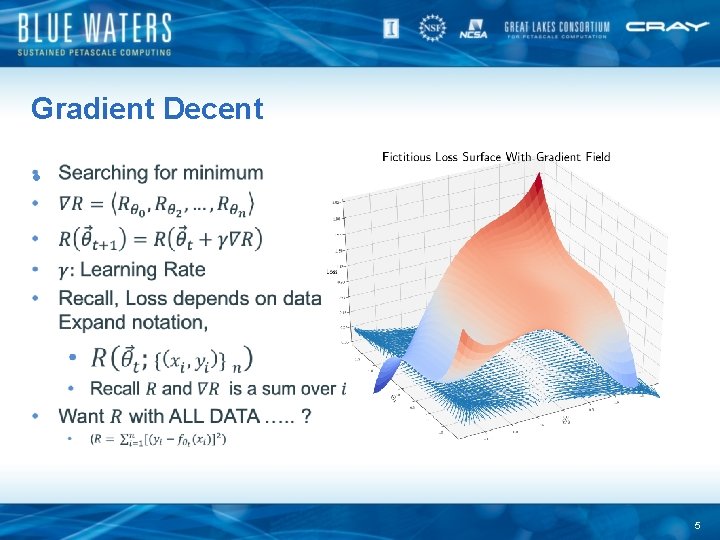

Gradient Descent

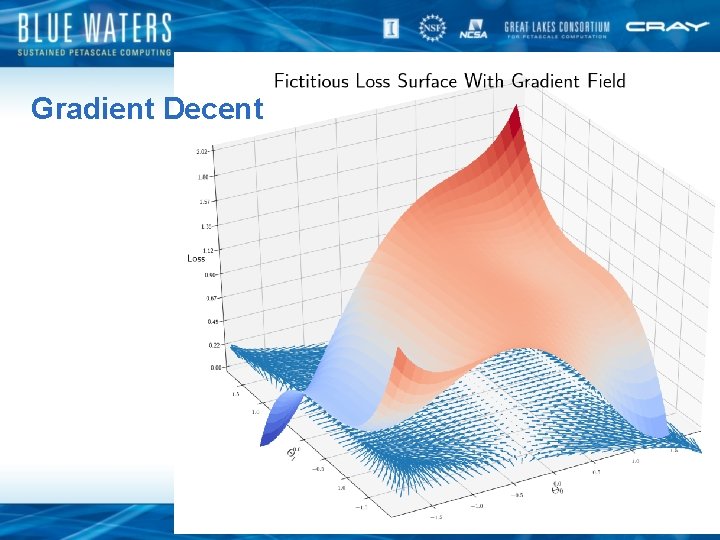

Gradient Descent

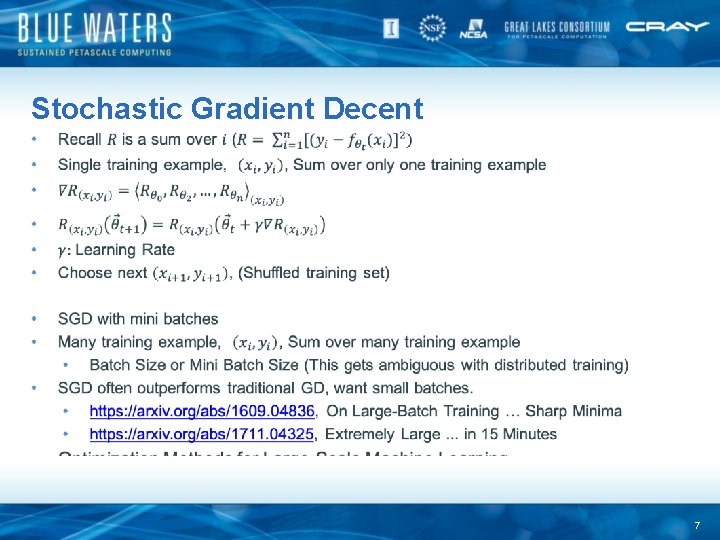
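A minimal sketch of the gradient descent update θ ← θ − η∇L(θ), assuming a hypothetical one-dimensional loss L(θ) = (θ − 3)² and an illustrative learning rate η (`lr`); neither the loss nor the parameter values come from the slides.

```python
def grad_loss(theta: float) -> float:
    """Derivative of the illustrative loss L(theta) = (theta - 3)**2."""
    return 2.0 * (theta - 3.0)


def gradient_descent(theta0: float, lr: float = 0.1, steps: int = 100) -> float:
    """Repeat theta <- theta - lr * dL/dtheta for a fixed number of steps."""
    theta = theta0
    for _ in range(steps):
        theta -= lr * grad_loss(theta)
    return theta


if __name__ == "__main__":
    print(gradient_descent(0.0))  # very close to the minimizer theta = 3
```

With this loss each step multiplies the distance to θ = 3 by the factor 1 − 2η, so the iteration converges for 0 < η < 1 and fails to converge for η ≥ 1.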

Stochastic Gradient Descent

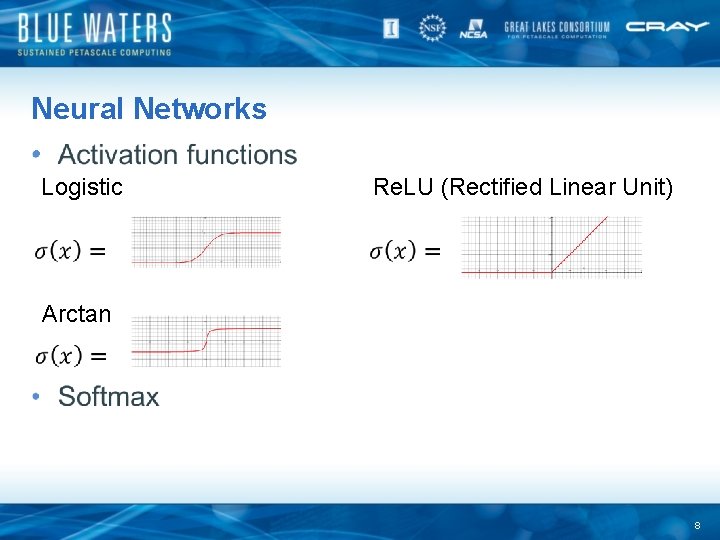
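Stochastic gradient descent replaces the full-data gradient with one computed on a small random minibatch. The sketch below fits a line y ≈ w·x + b by minibatch SGD on mean squared error; the model, the toy data, the learning rate, and the batch size are all assumptions chosen for illustration.

```python
import random


def sgd_linear_fit(xs, ys, lr=0.1, epochs=500, batch_size=4, seed=0):
    """Fit y ~ w*x + b by minibatch stochastic gradient descent on mean squared error."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    w, b = 0.0, 0.0
    order = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(order)  # draw fresh random minibatches every epoch
        for start in range(0, len(order), batch_size):
            batch = order[start:start + batch_size]
            # Residuals and gradients use only this minibatch, not the full data set
            resid = [w * xs[i] + b - ys[i] for i in batch]
            gw = sum(2 * r * xs[i] for r, i in zip(resid, batch)) / len(batch)
            gb = sum(2 * r for r in resid) / len(batch)
            w -= lr * gw
            b -= lr * gb
    return w, b


if __name__ == "__main__":
    xs = [i / 20 for i in range(20)]
    ys = [2 * x + 1 for x in xs]  # noise-free toy data
    print(sgd_linear_fit(xs, ys))  # approaches w = 2, b = 1
```

Each update touches only `batch_size` examples, which is what makes SGD cheap per step; the price is a noisier gradient estimate than full-batch gradient descent.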

Neural Networks

• Logistic
• ReLU (Rectified Linear Unit)
• Arctan

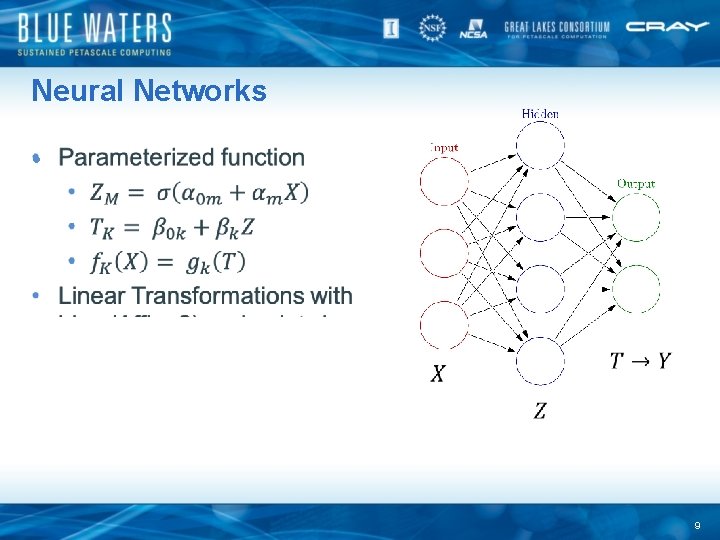
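The three activation functions listed on this slide can be written directly with Python's standard `math` module. This is an illustrative sketch; the sign branch in `logistic` is an added detail for numerical safety, not something taken from the slide.

```python
import math


def logistic(z: float) -> float:
    """Logistic (sigmoid) function 1 / (1 + e^(-z)); output lies in (0, 1)."""
    # Branch on the sign so math.exp never receives a large positive argument (avoids OverflowError)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def relu(z: float) -> float:
    """Rectified Linear Unit: max(0, z)."""
    return max(0.0, z)


def arctan(z: float) -> float:
    """Arctangent activation; output lies in (-pi/2, pi/2)."""
    return math.atan(z)


if __name__ == "__main__":
    for z in (-2.0, 0.0, 2.0):
        print(z, logistic(z), relu(z), arctan(z))
```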

Neural Networks

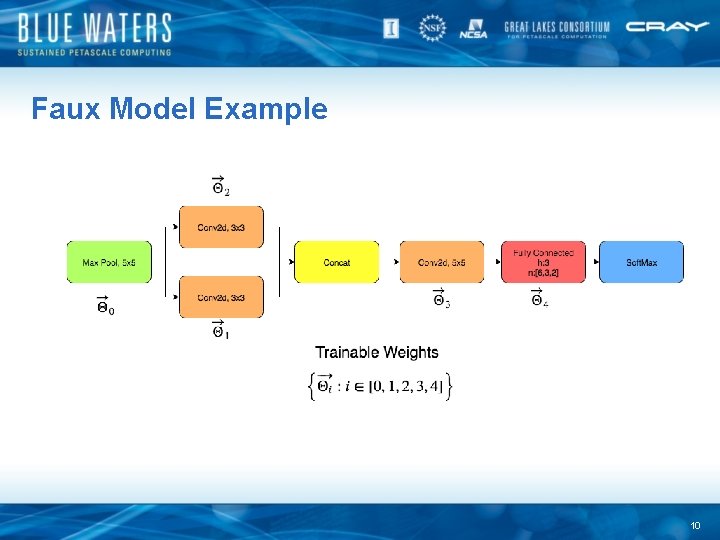
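As a hedged illustration of how such activations are composed into a network, the sketch below runs the forward pass of a tiny two-layer fully connected network in plain Python. The layer sizes, the weights, and the helper names (`dense`, `mlp_forward`) are hypothetical.

```python
def relu(z: float) -> float:
    """Rectified Linear Unit used as the hidden-layer activation."""
    return max(0.0, z)


def dense(x, weights, bias, activation=None):
    """Fully connected layer: y[j] = activation(sum_i weights[j][i] * x[i] + bias[j])."""
    out = []
    for row, b in zip(weights, bias):
        z = sum(w * xi for w, xi in zip(row, x)) + b
        out.append(activation(z) if activation is not None else z)
    return out


def mlp_forward(x, params):
    """Hidden ReLU layer followed by a linear output layer."""
    (w1, b1), (w2, b2) = params
    hidden = dense(x, w1, b1, relu)
    return dense(hidden, w2, b2)


if __name__ == "__main__":
    # Hypothetical weights: 2 inputs -> 2 hidden units -> 1 output
    params = (([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
              ([[1.0, 2.0]], [0.5]))
    print(mlp_forward([2.0, 1.0], params))  # [4.5]
```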

Faux Model Example

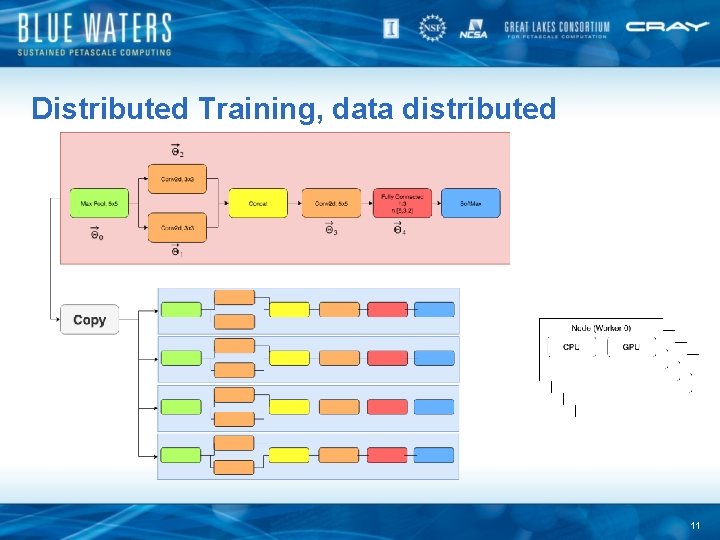

Distributed Training, data distributed

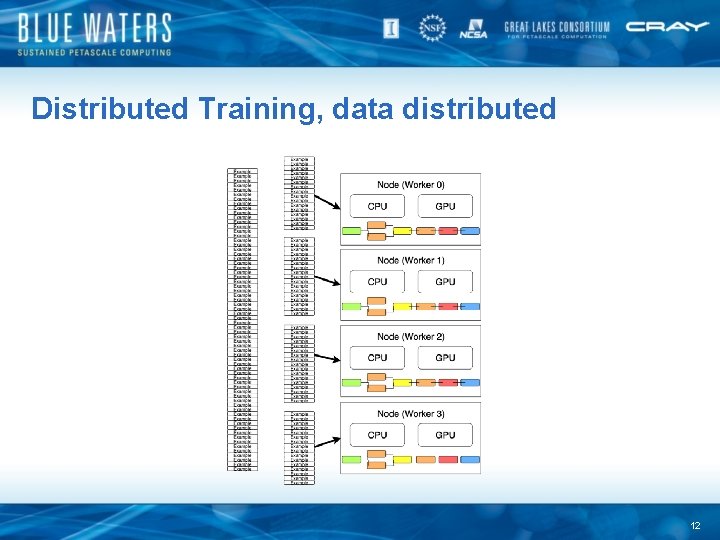

Distributed Training, data distributed

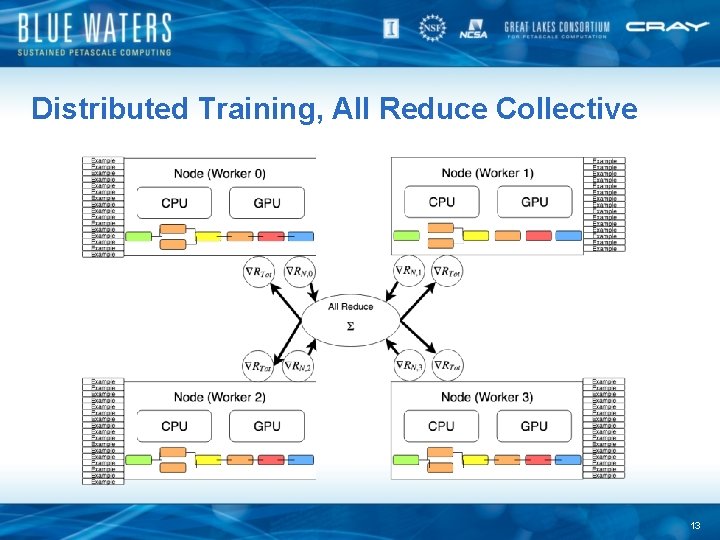
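A minimal sketch of data-distributed training, assuming equal-size shards and a loss that is a mean over examples: each worker computes a gradient on its own shard, and the average of those local gradients equals the full-batch gradient. Workers are simulated in a single process, and the scalar linear model and function names are hypothetical.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w*x - y)**2) with respect to a scalar weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def shard(data, num_workers):
    """Split data into num_workers equal, contiguous shards."""
    if len(data) % num_workers:
        raise ValueError("this sketch assumes the data splits evenly across workers")
    size = len(data) // num_workers
    return [data[k * size:(k + 1) * size] for k in range(num_workers)]


def data_parallel_grad(w, xs, ys, num_workers):
    """Each simulated worker computes a local gradient on its shard; the local gradients are then averaged."""
    local = [mse_grad(w, xk, yk)
             for xk, yk in zip(shard(xs, num_workers), shard(ys, num_workers))]
    return sum(local) / num_workers


if __name__ == "__main__":
    xs = [float(i) for i in range(8)]
    ys = [3.0 * x + 1.0 for x in xs]
    # The two numbers agree up to floating-point rounding
    print(data_parallel_grad(0.5, xs, ys, num_workers=4), mse_grad(0.5, xs, ys))
```

The averaged value is what a sum all-reduce followed by division by the number of workers would leave on every worker. With unequal shard sizes, the local gradients would instead need to be weighted by shard size.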

Distributed Training, All-Reduce Collective

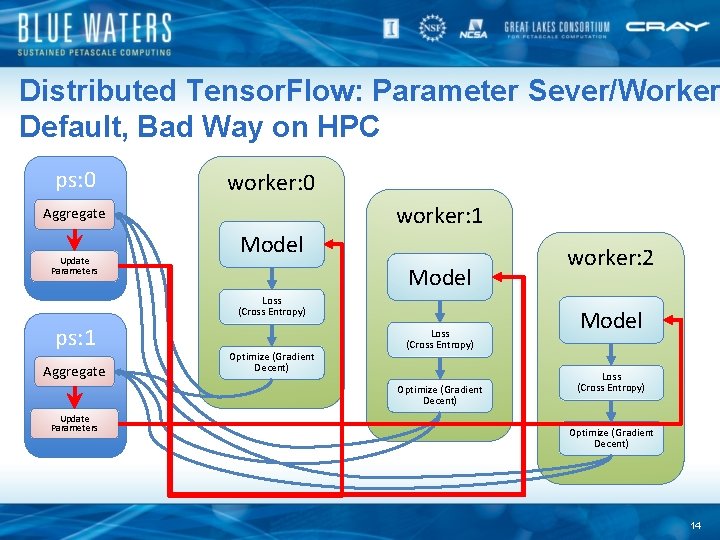
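All-reduce leaves every worker with the element-wise sum of all workers' buffers, so dividing by the number of workers gives each one the same averaged gradient. A bandwidth-efficient algorithm commonly used for large messages is the ring all-reduce (a reduce-scatter pass followed by an all-gather pass). The sketch below simulates it in one process; it is not a claim about how any particular MPI library or plugin implements the collective.

```python
def ring_allreduce(vectors):
    """Simulate ring all-reduce: every worker ends with the element-wise sum of all vectors.

    vectors[i] is worker i's local buffer (e.g. its gradient). Each buffer is
    split into n chunks; n - 1 reduce-scatter steps followed by n - 1
    all-gather steps pass one chunk per worker to its ring neighbor.
    """
    n = len(vectors)
    length = len(vectors[0])
    bufs = [list(v) for v in vectors]  # copy so the inputs are not modified
    bounds = [k * length // n for k in range(n + 1)]  # chunk k is bounds[k]:bounds[k+1]

    def chunk(k):
        return range(bounds[k], bounds[k + 1])

    # Phase 1, reduce-scatter: afterwards worker i owns the full sum of chunk (i + 1) % n
    for s in range(n - 1):
        sends = [(i, (i - s) % n) for i in range(n)]
        payloads = [[bufs[i][j] for j in chunk(k)] for i, k in sends]  # all sends happen "at once"
        for (i, k), data in zip(sends, payloads):
            dst = (i + 1) % n
            for j, val in zip(chunk(k), data):
                bufs[dst][j] += val

    # Phase 2, all-gather: circulate the fully reduced chunks until every worker has all of them
    for s in range(n - 1):
        sends = [(i, (i + 1 - s) % n) for i in range(n)]
        payloads = [[bufs[i][j] for j in chunk(k)] for i, k in sends]
        for (i, k), data in zip(sends, payloads):
            dst = (i + 1) % n
            for j, val in zip(chunk(k), data):
                bufs[dst][j] = val
    return bufs


if __name__ == "__main__":
    grads = [[1, 2, 3, 4, 5, 6], [10, 20, 30, 40, 50, 60], [100, 200, 300, 400, 500, 600]]
    for worker, buf in enumerate(ring_allreduce(grads)):
        print(worker, buf)  # each worker prints [111, 222, 333, 444, 555, 666]
```

In each step every worker sends only one chunk (about 1/n of the buffer) to a single neighbor, so no single node has to receive everyone's full gradient.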

Distributed TensorFlow: Parameter Server/Worker (Default, Bad Way on HPC)

[Diagram: parameter servers ps:0 and ps:1 each aggregate and update parameters; workers worker:1 and worker:2 each run Model, Loss (Cross Entropy), and Optimize (Gradient Descent).]
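A single-process sketch of the synchronous parameter-server pattern in the diagram, assuming two servers that each own half of a hypothetical four-element parameter vector and two workers with toy quadratic losses standing in for the cross-entropy loss on the slide. In a real distributed TensorFlow job the servers and workers are separate processes exchanging tensors over the network; the class and function names here are illustrative only.

```python
class ParameterServer:
    """One parameter server (like ps:0 or ps:1) owning a contiguous slice of the parameters."""

    def __init__(self, params, lr):
        self.params = list(params)
        self.lr = lr

    def pull(self):
        """Workers fetch the current values of this server's slice."""
        return list(self.params)

    def push(self, grads_from_workers):
        """Aggregate (average) the workers' gradients for this slice, then apply one gradient descent update."""
        n = len(grads_from_workers)
        for j in range(len(self.params)):
            avg = sum(g[j] for g in grads_from_workers) / n
            self.params[j] -= self.lr * avg


def make_worker(target):
    """Hypothetical worker whose local loss is sum((p - t)**2); returns its gradient function."""
    return lambda params: [2.0 * (p - t) for p, t in zip(params, target)]


def train_step(servers, workers):
    """One synchronous step: pull from every server, compute gradients, push each slice to its owner."""
    params = [p for s in servers for p in s.pull()]
    grads = [grad_fn(params) for grad_fn in workers]
    offset = 0
    for s in servers:
        size = len(s.params)
        s.push([g[offset:offset + size] for g in grads])
        offset += size


if __name__ == "__main__":
    servers = [ParameterServer([0.0, 0.0], lr=0.1), ParameterServer([0.0, 0.0], lr=0.1)]
    workers = [make_worker([1.0, 2.0, 3.0, 4.0]), make_worker([3.0, 4.0, 5.0, 6.0])]
    for _ in range(200):
        train_step(servers, workers)
    print([p for s in servers for p in s.pull()])  # close to [2.0, 3.0, 4.0, 5.0]
```

Because the averaged gradient here is 2(p − mean target), the parameters settle at the mean of the two workers' targets.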

Practical Implementations

• Native TensorFlow
• Native PyTorch
• Horovod
• Cray ML Plugin

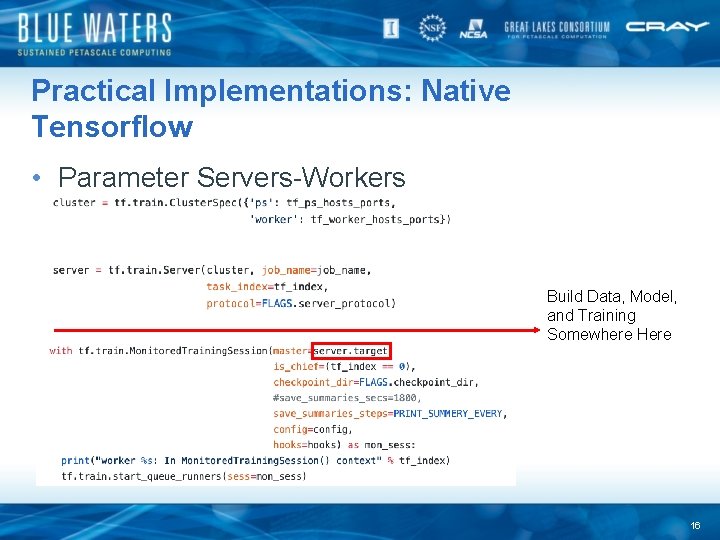

Practical Implementations: Native TensorFlow

• Parameter servers/workers

Build Data, Model, and Training Somewhere Here

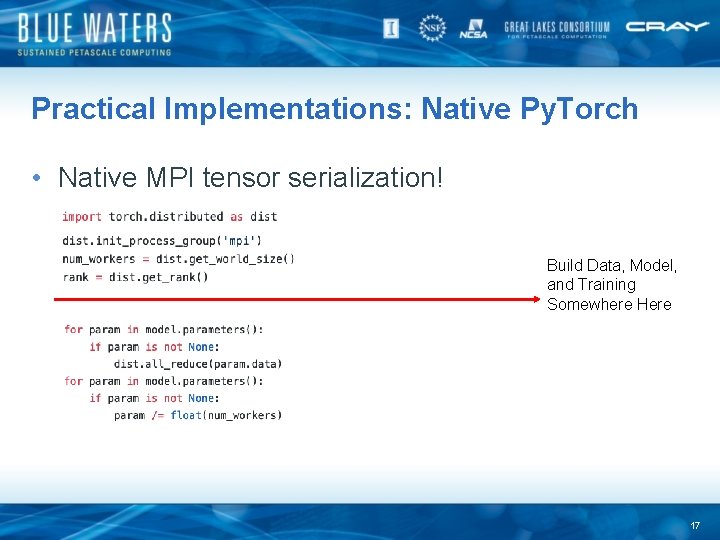

Practical Implementations: Native PyTorch

• Native MPI tensor serialization!

Build Data, Model, and Training Somewhere Here

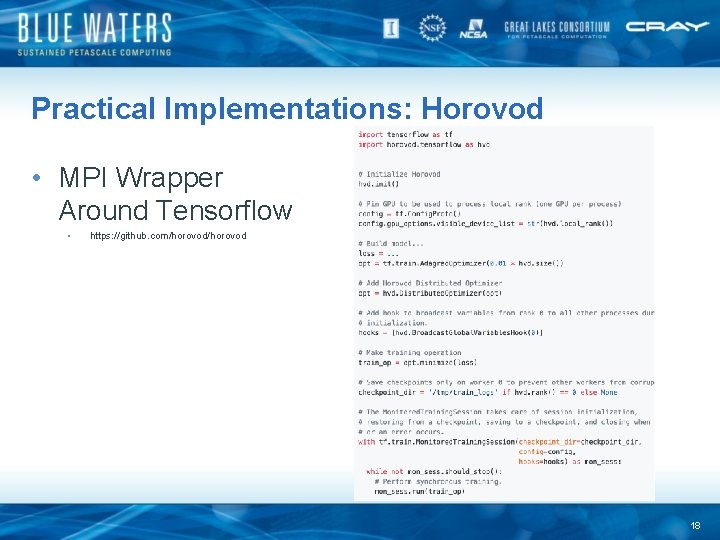

Practical Implementations: Horovod

• MPI wrapper around TensorFlow
• https://github.com/horovod

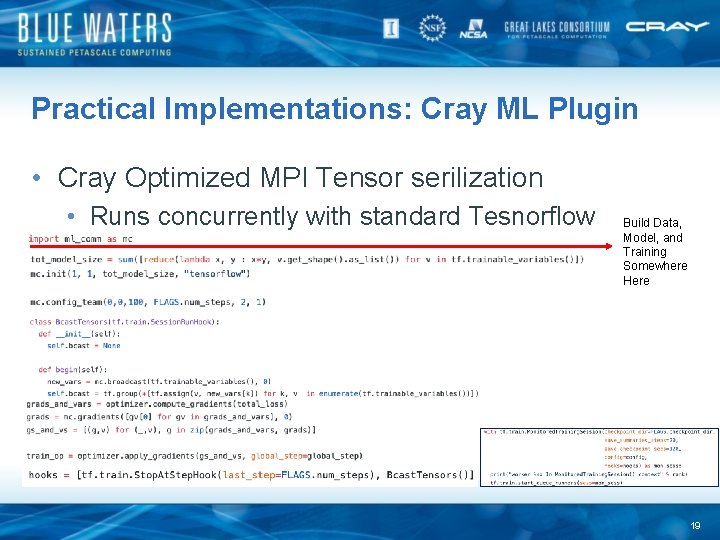

Practical Implementations: Cray ML Plugin

• Cray-optimized MPI tensor serialization
• Runs concurrently with standard TensorFlow

Build Data, Model, and Training Somewhere Here

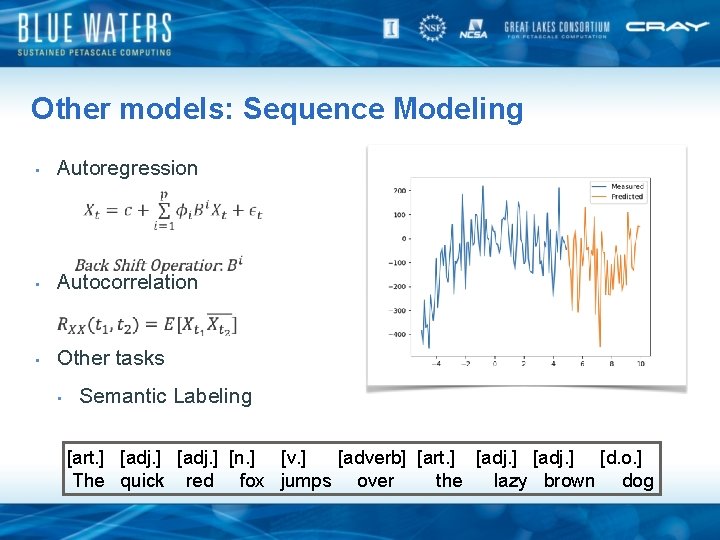

Other Models: Sequence Modeling

• Autoregression
• Autocorrelation
• Other tasks
  • Semantic labeling

[art.] [adj.] [n.] [v.] [adverb] [art.] [adj.] [d.o.]
The quick red fox jumps over the lazy brown dog

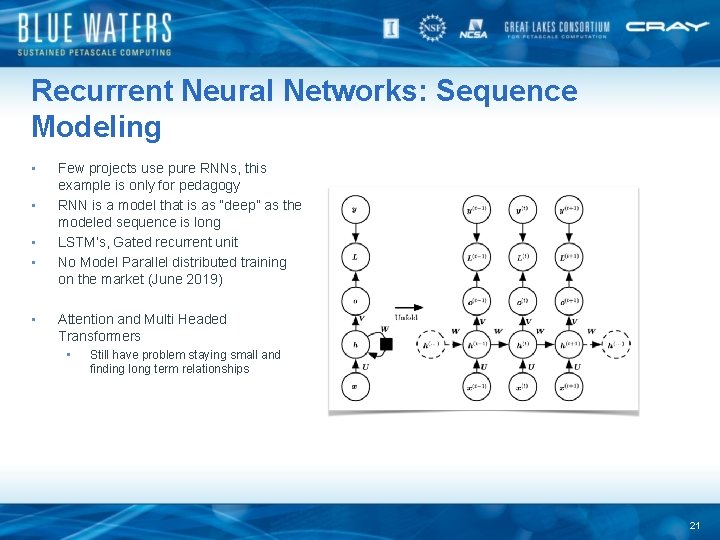
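The autoregression and autocorrelation bullets can be made concrete with a short sketch. It assumes a univariate series and a zero-mean AR(1) model without an intercept, x_t = φ·x_{t−1} + noise; the function names and the toy series are illustrative.

```python
def autocorrelation(xs, lag):
    """Sample autocorrelation of the series xs at the given lag (normalized so lag 0 gives 1)."""
    n = len(xs)
    if not 0 <= lag < n:
        raise ValueError("lag must satisfy 0 <= lag < len(xs)")
    mean = sum(xs) / n
    dev = [x - mean for x in xs]
    var = sum(d * d for d in dev)
    cov = sum(dev[t] * dev[t + lag] for t in range(n - lag))
    return cov / var


def fit_ar1(xs):
    """Least-squares estimate of phi in the zero-mean AR(1) model x_t = phi * x_{t-1} + noise."""
    num = sum(xs[t] * xs[t - 1] for t in range(1, len(xs)))
    den = sum(xs[t - 1] ** 2 for t in range(1, len(xs)))
    return num / den


def predict_next(xs, phi):
    """One-step-ahead AR(1) forecast from the last observed value."""
    return phi * xs[-1]


if __name__ == "__main__":
    series = [8.0, 4.0, 2.0, 1.0, 0.5, 0.25]  # toy series that halves each step
    phi = fit_ar1(series)
    print(phi, predict_next(series, phi))  # 0.5 0.125
    print(autocorrelation([1.0, -1.0, 1.0, -1.0], 1))  # -0.75
```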

Recurrent Neural Networks: Sequence Modeling

• Few projects use pure RNNs; this example is only for pedagogy
• An RNN is a model that is as “deep” as the modeled sequence is long
• LSTMs, gated recurrent units
• No model-parallel distributed training on the market (June 2019)
• Attention and multi-headed Transformers
  • Still have problems staying small and finding long-term relationships
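The point that an RNN is as “deep” as the sequence is long can be seen by unrolling a recurrent cell. The sketch below assumes a scalar Elman-style cell with a tanh activation and made-up weights; practical sequence models such as LSTMs and GRUs use vector states and gated cells.

```python
import math


def rnn_forward(sequence, w_x, w_h, b, h0=0.0):
    """Unrolled scalar recurrent cell: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).

    The same weights are reused at every time step, so the unrolled
    computation has one layer per element of the input sequence.
    """
    h = h0
    states = []
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h + b)  # new state depends on the previous state
        states.append(h)
    return states


if __name__ == "__main__":
    states = rnn_forward([1.0, 0.5, -0.5, 2.0], w_x=0.8, w_h=0.5, b=0.0)
    print(len(states), states[-1])  # 4 hidden states: depth equals sequence length
```

Because each state needs the previous one, the time steps cannot be computed in parallel, and gradients must flow back through every step of the sequence.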
