24th ALICE RRB

- Collaboration News
- Project Status
  - Production (TOF, TRD, PHOS, PMD)
  - Installation
  - Commissioning

15 April 2008, 24th ALICE RRB, J. Schukraft, CERN-RRB-2008-20

Collaboration News

- New institutes
  - Purdue (USA)
  - Tennessee (USA); EMCAL
  - Yonsei (Korea): TRD, physics
  - Pusan (Korea): replaces Pohang, which left at the end of 2007
  - Istanbul (Yildiz Technical University, Turkey)
    - associate member while looking for increased funding; physics
- Institutes leaving
  - IPE Karlsruhe (Germany) leaving ALICE
    - associate member, completed its technical contribution to the TRD electronics
- Ongoing discussions
  - Houston (USA, awaiting DOE approval): EMCAL, Grid computing

24/10/2007 23rd RRB J. Schukraft

Collaboration News

- Elections/Nominations
  - Management Board: 4 members re-elected 1.6.2008 for 3 years
    - E. Nappi (Bari), R. Kamermans (NIKHEF/Utrecht), Y. Schutz (Nantes), J. P. Revol (CERN)
  - Editorial Board chair:
    - H. A. Gustafsson (Lund) re-elected 1.4.2008 for 3 years
  - Since 5 April: Technical Coordinator: L. Leistam; Deputy TC: W. Riegler
    - Special thanks to C. Fabjan, who led ALICE as TC from 2001 to 2008
- ALICE Industrial awards (April 2008)
  - Xilinx Inc, San Jose, CA

Photo labels: end 2001; early 2008

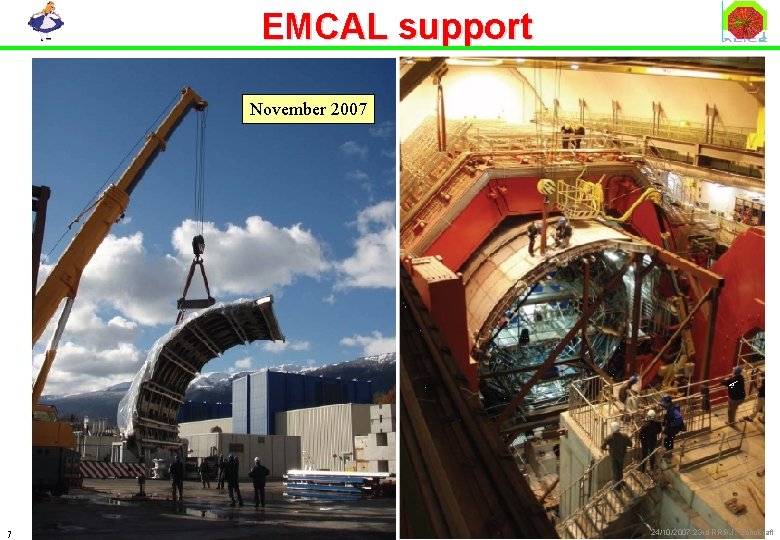

Funding: EMCAL

- DOE CD 2/3 review of EMCAL, 18/19 December
  - Outcome: EMCAL is now approved and funded in the US!
    - help and participation from France/Italy/CERN recognized and appreciated by DOE
  - Total Project Cost: 13.5 M US$
    - not including R&D money, EMCAL support, CF contribution (~500 kCHF), computing
  - Schedule:
    - production started in April
    - trying to accelerate by 1 year (cash flow)
  - Computing resources: proportional to PhDs (~7% for 40 PhDs in 2012)
    - computing plan submitted to DOE, under discussion

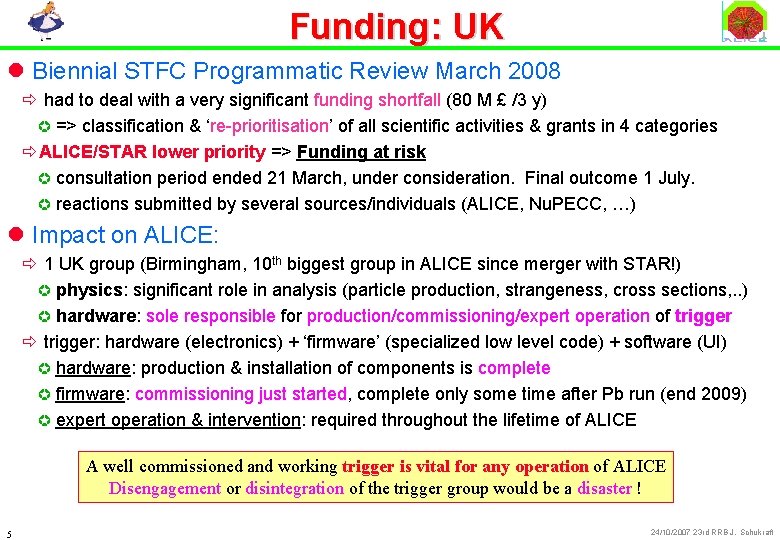

Funding: UK

- Biennial STFC Programmatic Review, March 2008
  - had to deal with a very significant funding shortfall (80 M£ over 3 years)
    - => classification and 're-prioritisation' of all scientific activities and grants in 4 categories
  - ALICE/STAR lower priority => funding at risk
    - consultation period ended 21 March, under consideration; final outcome 1 July
    - reactions submitted by several sources/individuals (ALICE, NuPECC, ...)
- Impact on ALICE:
  - 1 UK group (Birmingham, 10th biggest group in ALICE since merger with STAR!)
    - physics: significant role in analysis (particle production, strangeness, cross sections, ...)
    - hardware: solely responsible for production/commissioning/expert operation of the trigger
  - trigger: hardware (electronics) + 'firmware' (specialized low-level code) + software (UI)
    - hardware: production and installation of components is complete
    - firmware: commissioning just started, complete only some time after the Pb run (end 2009)
    - expert operation and intervention: required throughout the lifetime of ALICE

A well commissioned and working trigger is vital for any operation of ALICE.
Disengagement or disintegration of the trigger group would be a disaster!

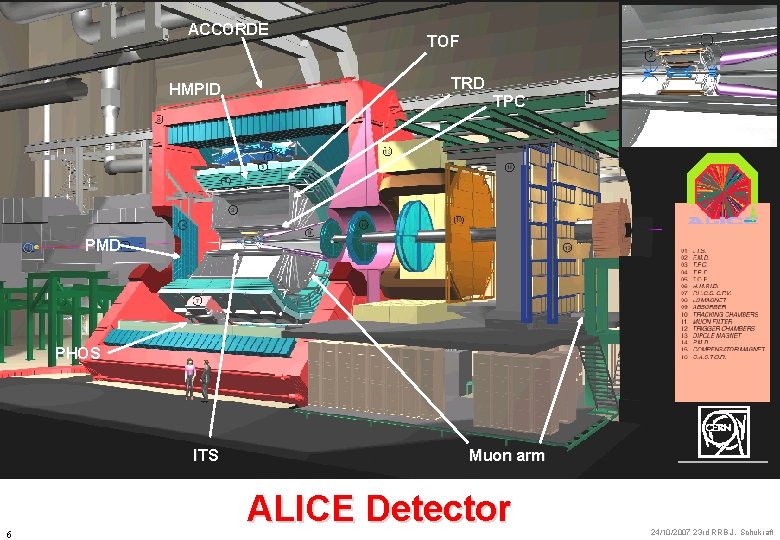

ALICE Detector (figure labels: ACORDE, HMPID, TOF, TRD, TPC, PMD, PHOS, ITS, Muon arm)

EMCAL support, November 2007

‘Mini frame’, 29 Nov 2007: descent of the last big structure

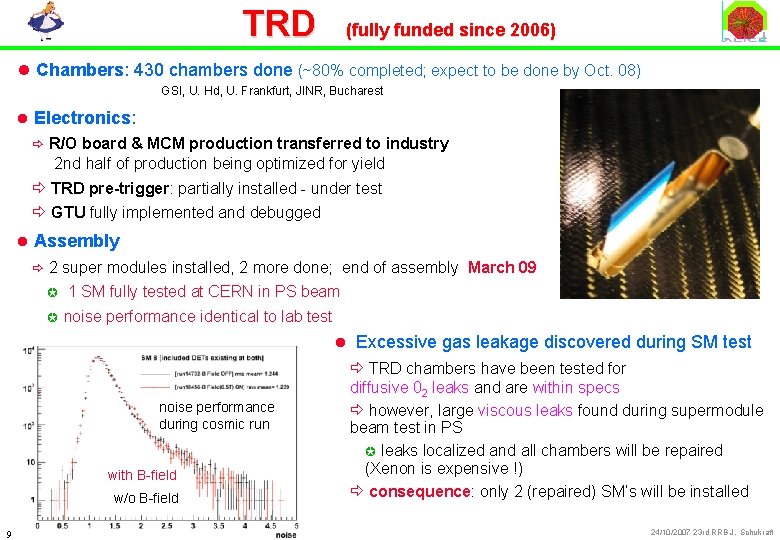

TRD (fully funded since 2006)

- Chambers: 430 chambers done (~80% completed; expected to be done by Oct. 08)
  - GSI, U. Hd, U. Frankfurt, JINR, Bucharest
- Electronics:
  - R/O board and MCM production transferred to industry; 2nd half of production being optimized for yield
  - TRD pre-trigger: partially installed, under test
  - GTU fully implemented and debugged
- Assembly
  - 2 supermodules installed, 2 more done; end of assembly March 09
    - 1 SM fully tested at CERN in PS beam
    - noise performance identical to lab test
- Excessive gas leakage discovered during SM test
  - TRD chambers have been tested for diffusive O2 leaks and are within specs
  - however, large viscous leaks found during supermodule beam test in PS
    - leaks localized and all chambers will be repaired (Xenon is expensive!)
  - consequence: only 2 (repaired) SMs will be installed

Plot labels: noise performance during cosmic run, with B-field and w/o B-field

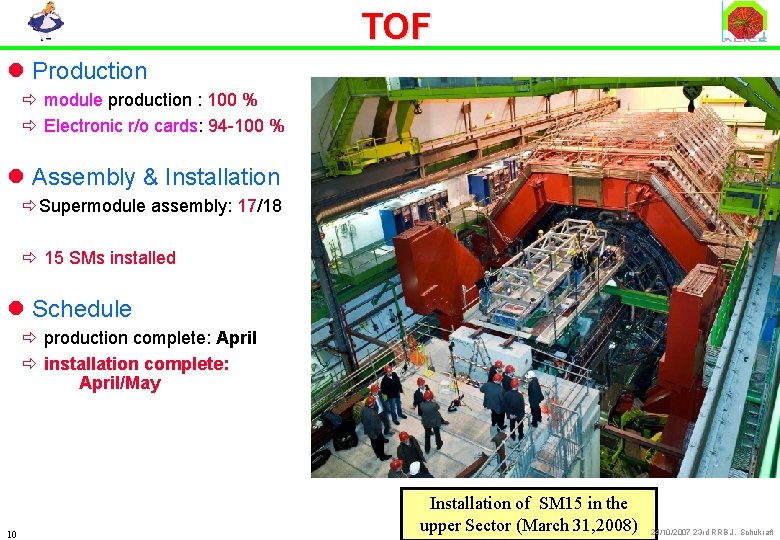

TOF

- Production
  - module production: 100%
  - electronic R/O cards: 94-100%
- Assembly & Installation
  - supermodule assembly: 17/18
  - 15 SMs installed
- Schedule
  - production complete: April
  - installation complete: April/May

Photo: installation of SM 15 in the upper sector (March 31, 2008)

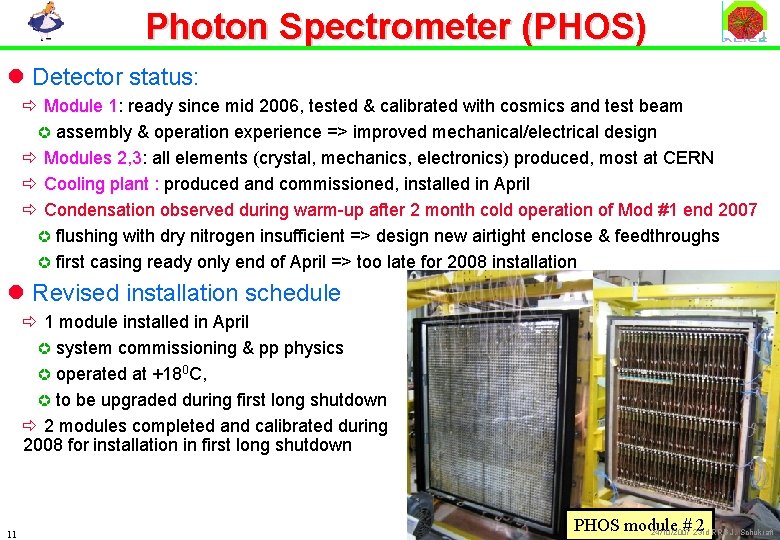

Photon Spectrometer (PHOS)

- Detector status:
  - Module 1: ready since mid 2006, tested and calibrated with cosmics and test beam
    - assembly and operation experience => improved mechanical/electrical design
  - Modules 2, 3: all elements (crystals, mechanics, electronics) produced, most at CERN
  - Cooling plant: produced and commissioned, installed in April
  - Condensation observed during warm-up after 2 months of cold operation of module #1 at the end of 2007
    - flushing with dry nitrogen insufficient => design new airtight enclosure and feedthroughs
    - first casing ready only at the end of April => too late for 2008 installation
- Revised installation schedule
  - 1 module installed in April
    - system commissioning and pp physics
    - operated at +18 °C
    - to be upgraded during first long shutdown
  - 2 modules completed and calibrated during 2008 for installation in first long shutdown

Photo: PHOS module #2

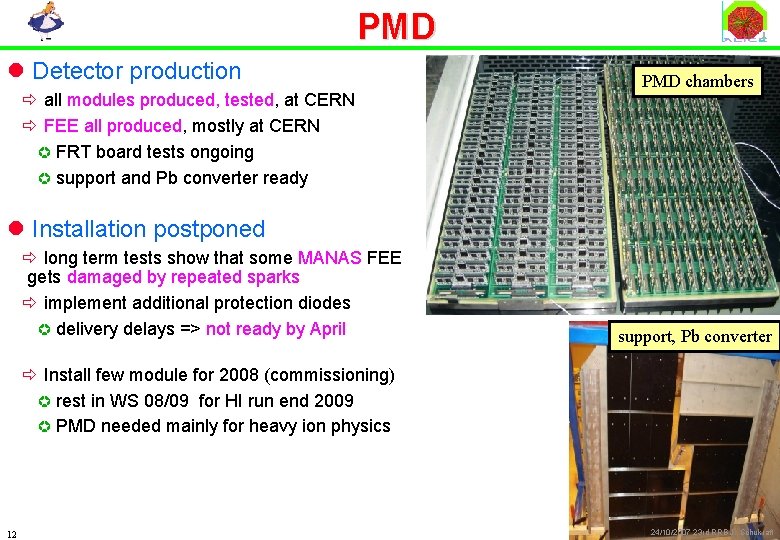

PMD

- Detector production
  - all modules produced, tested, at CERN
  - FEE all produced, mostly at CERN
    - FRT board tests ongoing
    - support and Pb converter ready
- Installation postponed
  - long-term tests show that some MANAS FEE gets damaged by repeated sparks
  - implement additional protection diodes
    - delivery delays => not ready by April
  - install a few modules for 2008 (commissioning)
    - rest in WS 08/09 for HI run at the end of 2009
    - PMD needed mainly for heavy-ion physics

Photo labels: PMD chambers; support, Pb converter

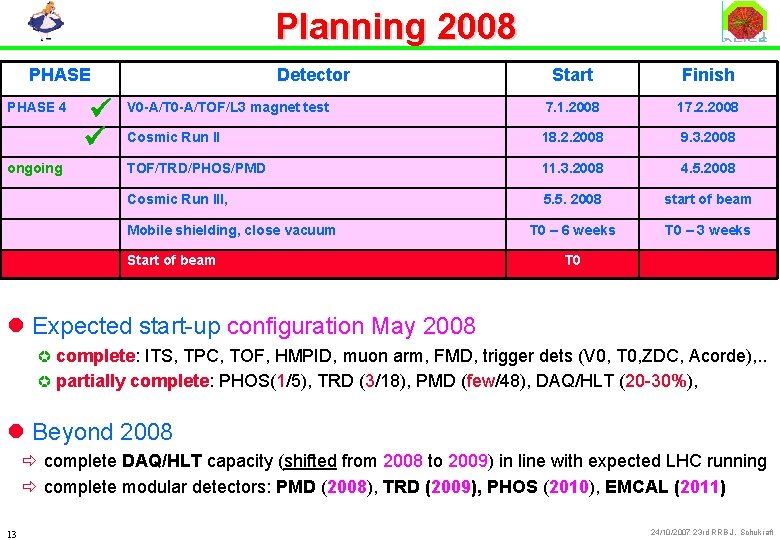

Planning 2008

PHASE 4 ongoing

| Detector | Start | Finish |
|---|---|---|
| V0-A/TOF/L3 magnet test ✓ | 7.1.2008 | 17.2.2008 |
| Cosmic Run II ✓ | 18.2.2008 | 9.3.2008 |
| TOF/TRD/PHOS/PMD | 11.3.2008 | 4.5.2008 |
| Cosmic Run III | 5.5.2008 | start of beam |
| Mobile shielding, close vacuum | T0 - 6 weeks | T0 - 3 weeks |
| Start of beam | T0 | |

- Expected start-up configuration, May 2008
  - complete: ITS, TPC, TOF, HMPID, muon arm, FMD, trigger detectors (V0, T0, ZDC, ACORDE), ...
  - partially complete: PHOS (1/5), TRD (3/18), PMD (few/48), DAQ/HLT (20-30%)
- Beyond 2008
  - complete DAQ/HLT capacity (shifted from 2008 to 2009), in line with expected LHC running
  - complete modular detectors: PMD (2008), TRD (2009), PHOS (2010), EMCAL (2011)

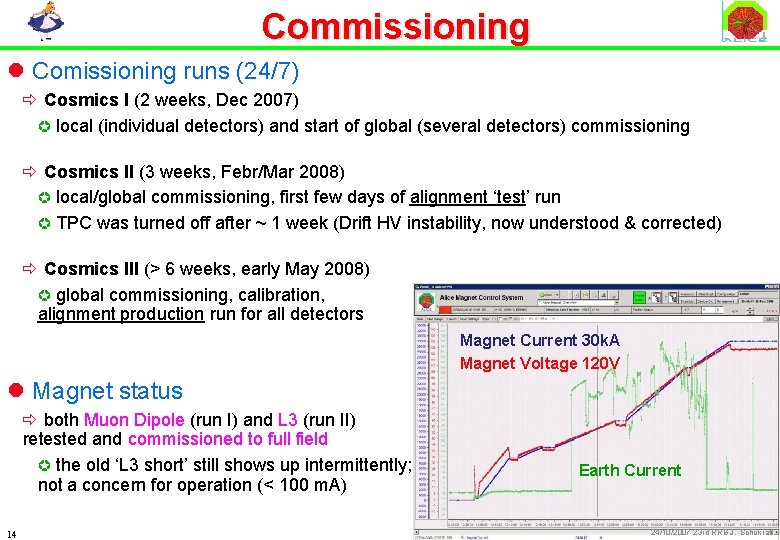

Commissioning

- Commissioning runs (24/7)
  - Cosmics I (2 weeks, Dec 2007)
    - local (individual detectors) and start of global (several detectors) commissioning
  - Cosmics II (3 weeks, Feb/Mar 2008)
    - local/global commissioning, first few days of alignment 'test' run
    - TPC was turned off after ~1 week (drift HV instability, now understood and corrected)
  - Cosmics III (> 6 weeks, early May 2008)
    - global commissioning, calibration, alignment production run for all detectors
- Magnet status
  - both Muon Dipole (run I) and L3 (run II) retested and commissioned to full field
    - the old 'L3 short' still shows up intermittently; not a concern for operation (< 100 mA)

Plot labels: Magnet Current 30 kA; Magnet Voltage 120 V; Earth Current

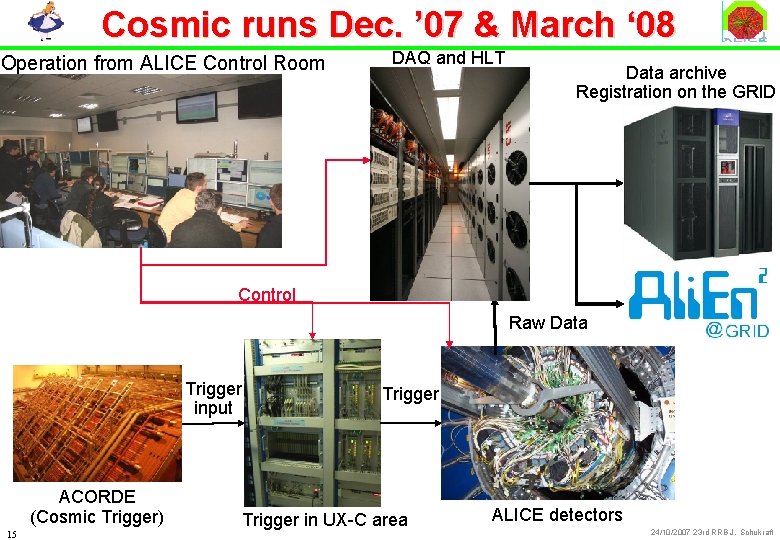

Cosmic runs Dec. '07 & March '08

Diagram labels: Operation from ALICE Control Room; DAQ and HLT; Data archive; Registration on the GRID; Control; Raw Data; Trigger input; ACORDE (Cosmic Trigger); Trigger in UX-C area; ALICE detectors

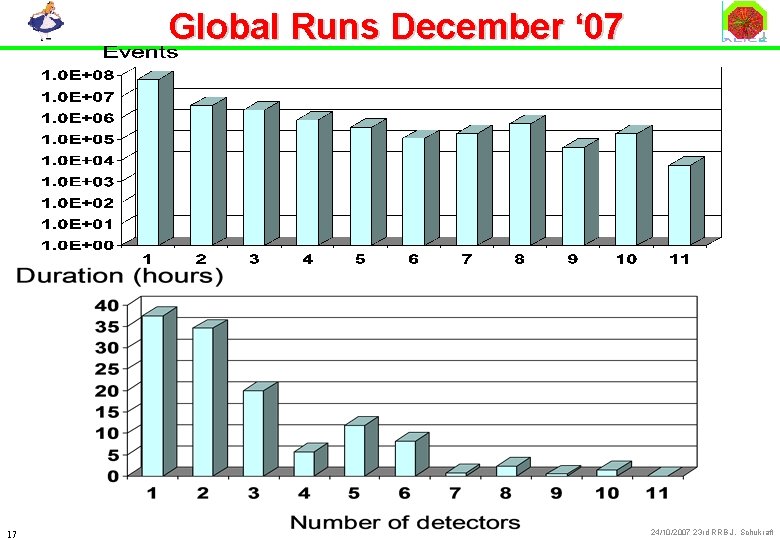

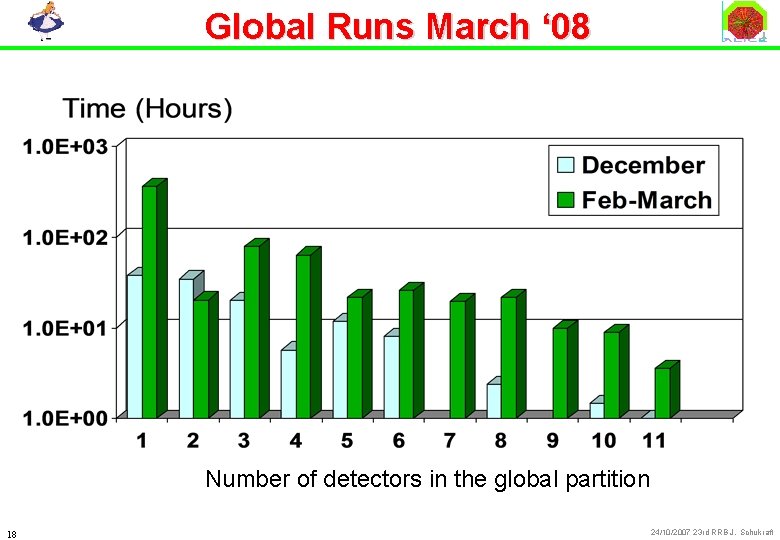

Cosmic Runs

- 14 detectors could run standalone and in some global runs:
  - SPD, SSD, SDD, TPC, TRD, TOF, Muon TRK, Muon TRG, HMPID, FMD, V0, T0, ACORDE, ZDC (missing: PHOS/CPV, PMD, EMCAL)
    - R/O for a subset of the installed detector (limited by services, connections, power supply, ...)
- Global runs with multiple detectors (up to 11):
  - all with pulser or ACORDE as trigger detector and the Central Trigger Processor
  - event building on several GDCs
  - data recording:
    - ROOT format
    - migration to CASTOR: 82 TB from 14/02 to 09/03 (26 days)
    - registration in AliEn: 90k files
  - Electronic Logbook
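The data-recording figures above imply an average sustained rate and file size. The sketch below works them out under two assumptions that are not on the slide: decimal units (1 TB = 10^12 bytes) and that the 82 TB and the 90k files refer to the same data set. The function names are illustrative only.

```python
# Figures quoted on the slide.
DATA_TB = 82.0       # data migrated to CASTOR
PERIOD_DAYS = 26     # 14/02 to 09/03, as stated on the slide
N_FILES = 90_000     # files registered in AliEn

# Assumption: decimal units (1 TB = 1e12 bytes, 1 GB = 1e9, 1 MB = 1e6).
BYTES_PER_TB = 1e12
SECONDS_PER_DAY = 86_400


def average_rate_mb_per_s(data_tb: float, days: float) -> float:
    """Average throughput in MB/s if the data were written evenly over the period."""
    return data_tb * BYTES_PER_TB / (days * SECONDS_PER_DAY) / 1e6


def average_file_size_gb(data_tb: float, n_files: int) -> float:
    """Mean file size in GB, assuming all files belong to the same data set."""
    return data_tb * BYTES_PER_TB / n_files / 1e9


rate = average_rate_mb_per_s(DATA_TB, PERIOD_DAYS)
size = average_file_size_gb(DATA_TB, N_FILES)
print(f"average rate: {rate:.1f} MB/s, average file size: {size:.2f} GB")
```

This is a time average over the whole period; actual rates during global runs would have been higher, since data were not taken continuously.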

Global Runs, December '07

Global Runs, March '08 (plot: number of detectors in the global partition)

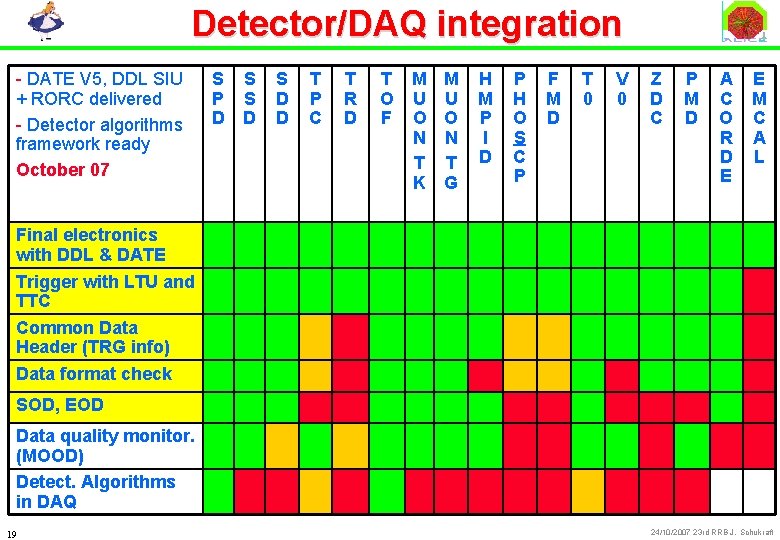

Detector/DAQ integration, October 07

- DATE V5, DDL SIU + RORC delivered
- Detector algorithms framework ready

Status matrix (image). Columns: SPD, SSD, SDD, TPC, TRD, TOF, MUON TK, MUON TG, HMPID, PHOS, CPV, FMD, T0, V0, ZDC, PMD, ACORDE, EMCAL. Rows: Final electronics with DDL & DATE; Trigger with LTU and TTC; Common Data Header (TRG info); Data format check; SOD, EOD; Data quality monitor (MOOD); Detector algorithms in DAQ.

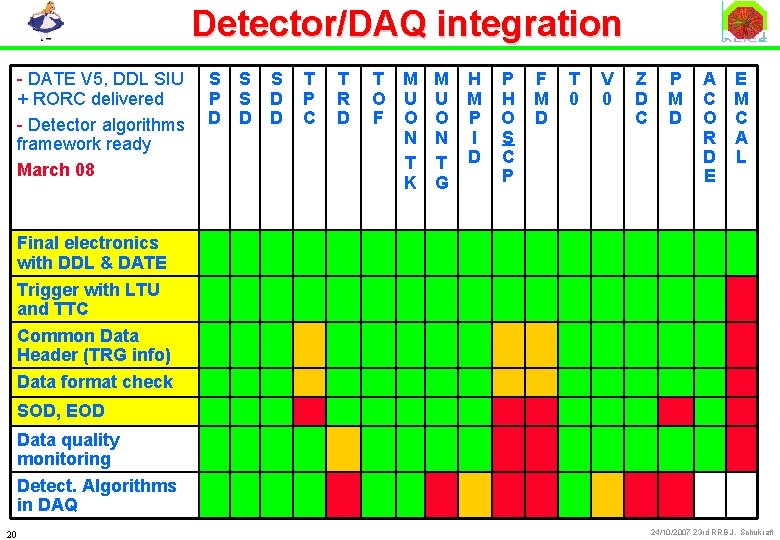

Detector/DAQ integration, March 08

- DATE V5, DDL SIU + RORC delivered
- Detector algorithms framework ready

Status matrix (image). Columns: SPD, SSD, SDD, TPC, TRD, TOF, MUON TK, MUON TG, HMPID, PHOS, CPV, FMD, T0, V0, ZDC, PMD, ACORDE, EMCAL. Rows: Final electronics with DDL & DATE; Trigger with LTU and TTC; Common Data Header (TRG info); Data format check; SOD, EOD; Data quality monitoring; Detector algorithms in DAQ.

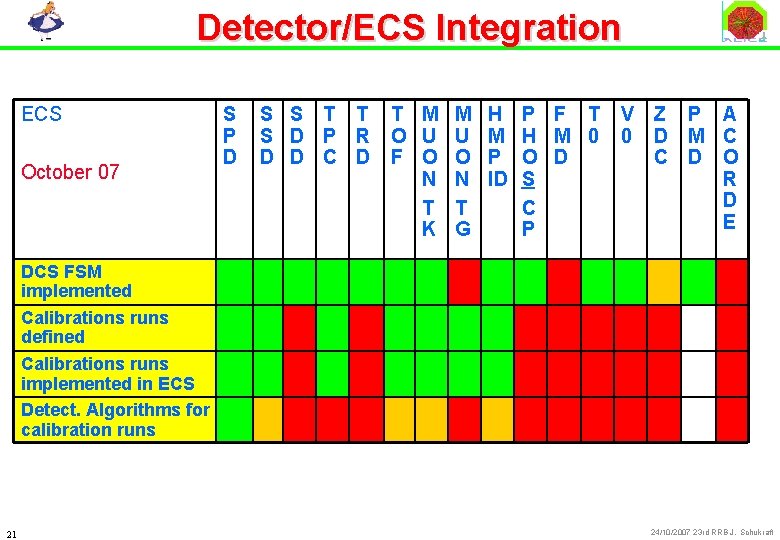

Detector/ECS Integration, October 07

Status matrix (image). Columns: SPD, SSD, SDD, TPC, TRD, TOF, MUON TK, MUON TG, HMPID, PHOS, CPV, FMD, T0, V0, ZDC, PMD, ACORDE. Rows: DCS FSM implemented; Calibration runs defined; Calibration runs implemented in ECS; Detector algorithms for calibration runs.

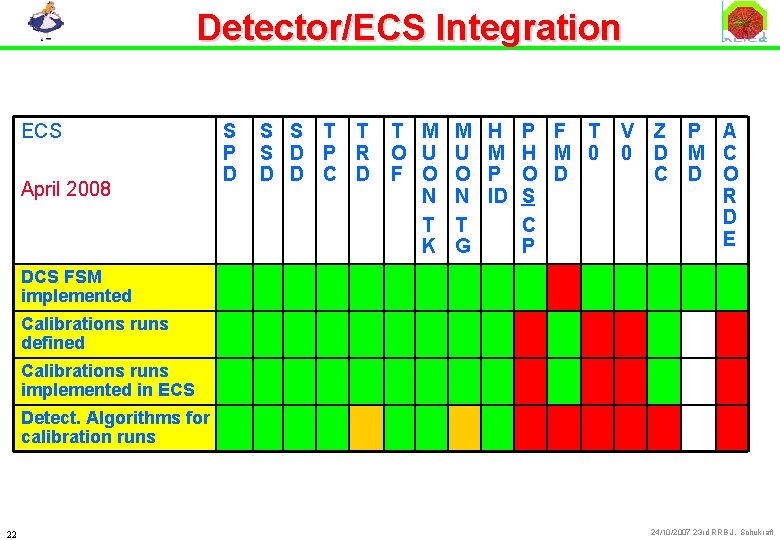

Detector/ECS Integration, April 2008

Status matrix (image). Columns: SPD, SSD, SDD, TPC, TRD, TOF, MUON TK, MUON TG, HMPID, PHOS, CPV, FMD, T0, V0, ZDC, PMD, ACORDE. Rows: DCS FSM implemented; Calibration runs defined; Calibration runs implemented in ECS; Detector algorithms for calibration runs.

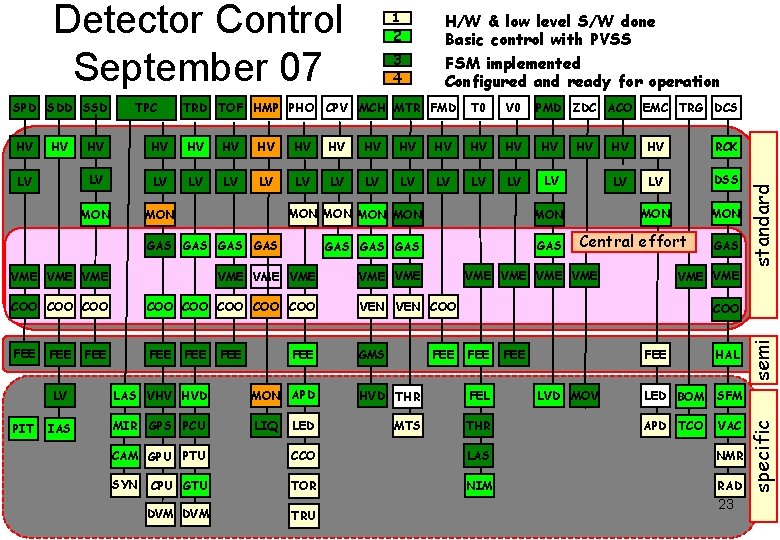

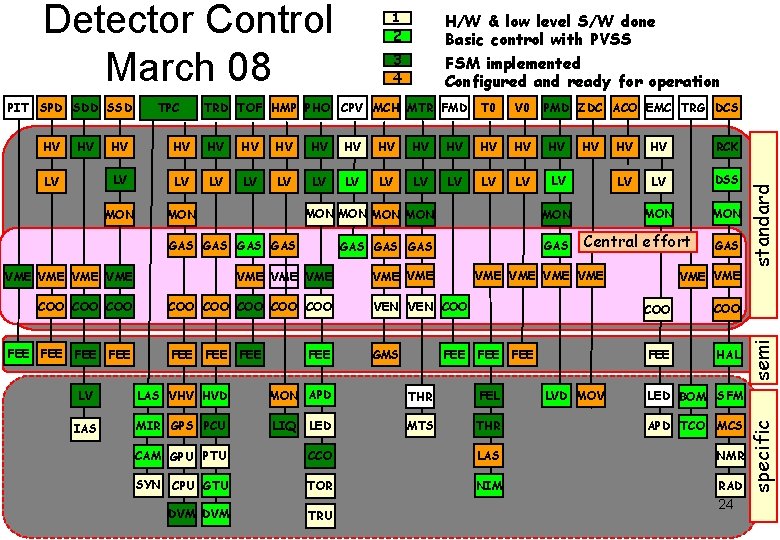

Detector Control, September 07

Status matrix (image). Columns: SPD, SDD, SSD, TPC, TRD, TOF, HMP, PHO, CPV, MCH, MTR, FMD, T0, V0, PMD, ZDC, ACO, EMC, TRG, DCS. Row groups: standard, semi-specific, specific, central effort. Subsystem labels: HV, LV, MON, GAS, VME, COO, VEN, FEE, GMS, RCK, DSS, PIT, LAS, VHV, HVD, APD, THR, FEL, IAS, MIR, GPS, PCU, LIQ, LED, MTS, LVD, MOV, HAL, BOM, SFM, TCO, VAC, CAM, GPU, PTU, CCO, NMR, SYN, CPU, GTU, TOR, NIM, RAD, DVM, TRU.

Legend: H/W & low-level S/W done; Basic control with PVSS; FSM implemented; Configured and ready for operation

Detector Control, March 08

Status matrix (image). Columns: SPD, SDD, SSD, TPC, TRD, TOF, HMP, PHO, CPV, MCH, MTR, FMD, T0, V0, PMD, ZDC, ACO, EMC, TRG, DCS. Row groups: standard, semi-specific, specific, central effort. Subsystem labels: HV, LV, MON, GAS, VME, COO, VEN, FEE, GMS, RCK, DSS, PIT, LAS, VHV, HVD, APD, THR, FEL, IAS, MIR, GPS, PCU, LIQ, LED, MTS, MCS, LVD, MOV, HAL, BOM, SFM, TCO, CAM, GPU, PTU, CCO, NMR, SYN, CPU, GTU, TOR, NIM, RAD, DVM, TRU.

Legend: H/W & low-level S/W done; Basic control with PVSS; FSM implemented; Configured and ready for operation

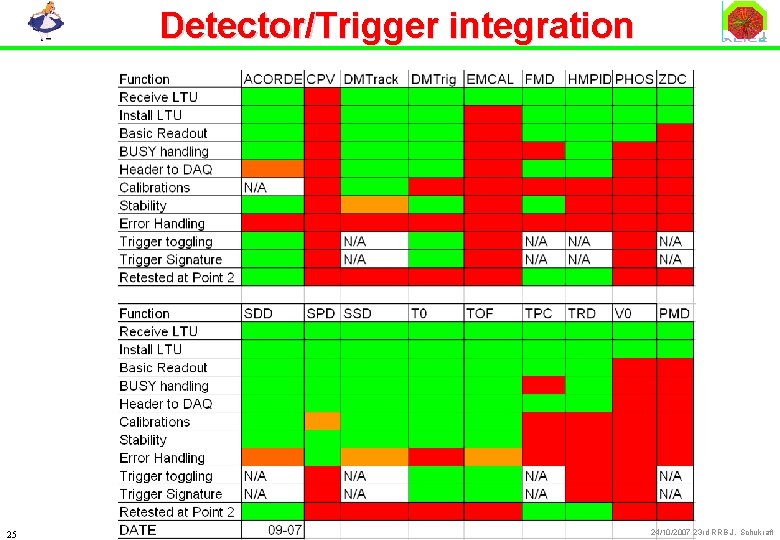

Detector/Trigger integration

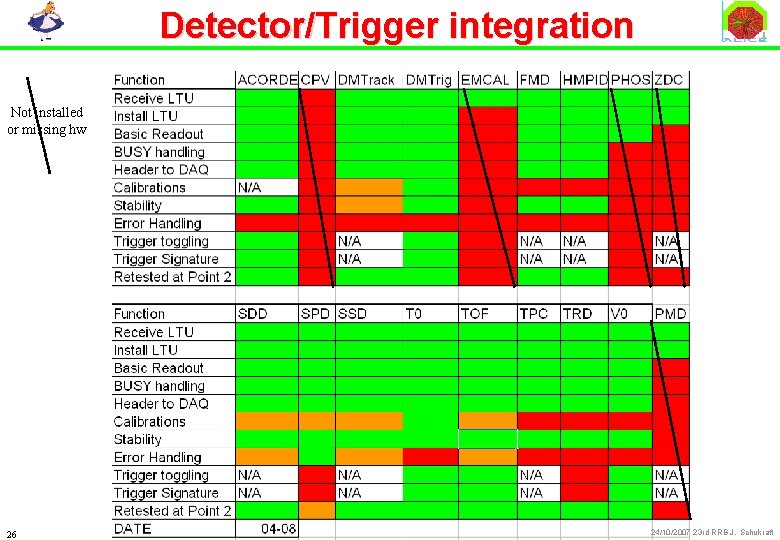

Detector/Trigger integration (legend: not installed or missing hardware)

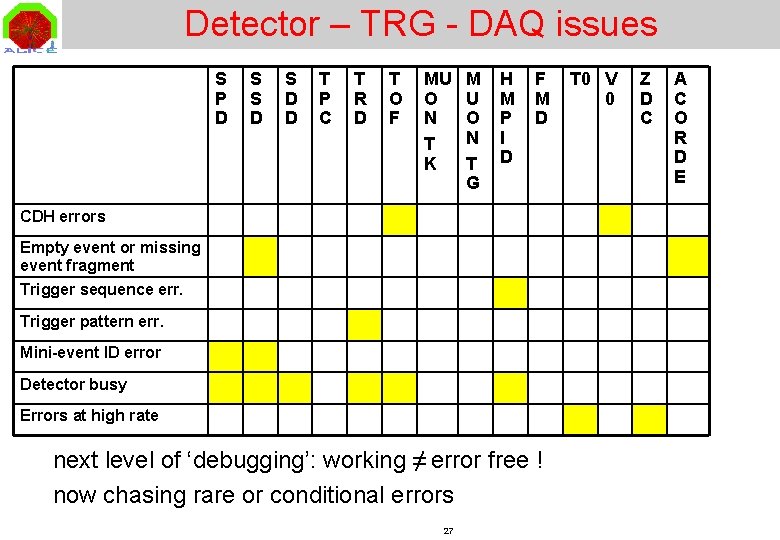

Detector - TRG - DAQ issues

Status matrix (image). Columns: SPD, SSD, SDD, TPC, TRD, TOF, MUON TK, MUON TG, HMPID, FMD, T0, V0, ZDC, ACORDE. Rows: CDH errors; Empty event or missing event fragment; Trigger sequence error; Trigger pattern error; Mini-event ID error; Detector busy; Errors at high rate.

Next level of 'debugging': working ≠ error free! Now chasing rare or conditional errors.

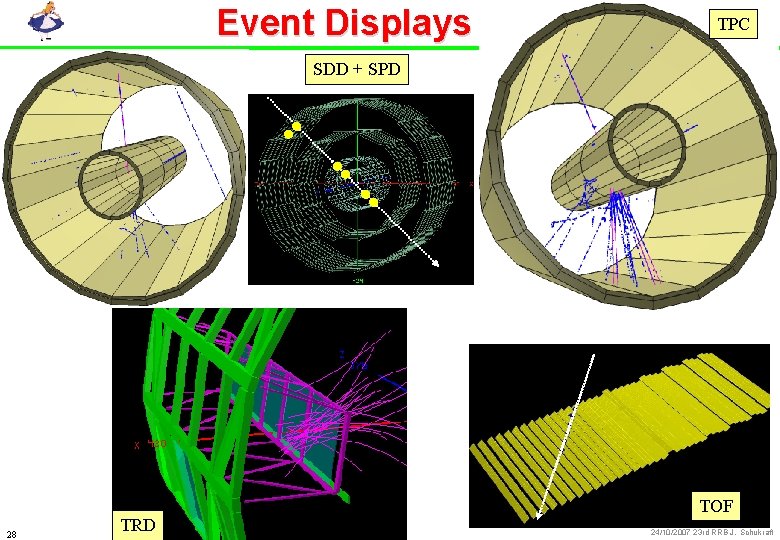

Event Displays (panels: TPC, SDD + SPD, TRD, TOF)

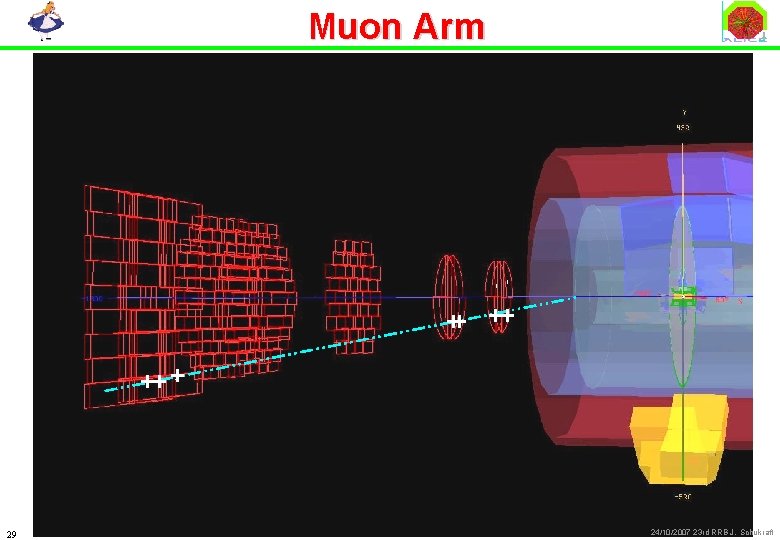

Muon Arm (figure credit: Christophe.Suire@ipno.in2p3.fr)

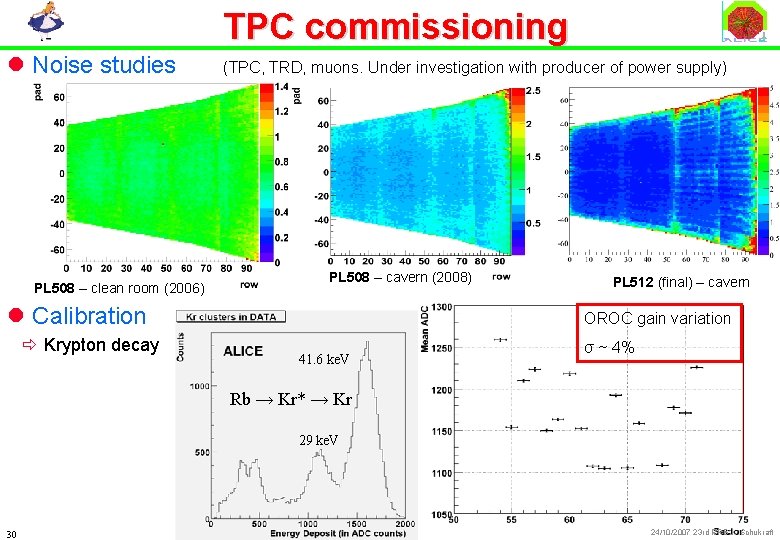

TPC commissioning

- Noise studies
  - plots: PL 508, clean room (2006); PL 508, cavern (2008); PL 512 (final), cavern
  - (TPC, TRD, muons; under investigation with producer of power supply)
- Calibration
  - Krypton decay: Rb → Kr* → Kr
  - plot labels: OROC gain variation; 41.6 keV, σ ~ 4%; 29 keV

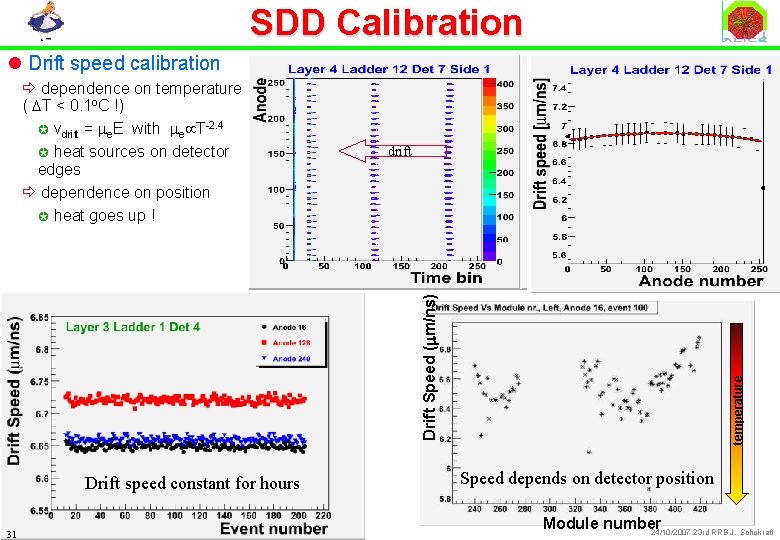

SDD Calibration

- Drift speed calibration
  - dependence on temperature ($\Delta T < 0.1\,^{\circ}\mathrm{C}$!)
    - $v_{\mathrm{drift}} = \mu_e E$ with $\mu_e \propto T^{-2.4}$
    - heat sources on detector edges
  - dependence on position
    - heat goes up!

Plot labels: drift speed constant for hours; drift speed (µm/ns) vs. temperature; speed depends on detector position (module number)
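The quoted mobility scaling makes it easy to estimate how strongly the drift speed reacts to a small temperature change. The sketch below evaluates the relative change for a 0.1 K shift, assuming a constant drift field and an operating temperature of 293.15 K; the slide does not state the temperature, so that value is purely illustrative, as are the function names.

```python
# Exponent of the mobility scaling quoted on the slide: mu_e ~ T**(-2.4).
MOBILITY_EXPONENT = -2.4

# Assumption (not from the slide): operating temperature of about 20 degC.
T_ASSUMED_K = 293.15


def relative_speed_change(temp_k: float, delta_t_k: float,
                          exponent: float = MOBILITY_EXPONENT) -> float:
    """Exact relative change of v_drift = mu_e * E when T goes to T + dT at fixed E."""
    return ((temp_k + delta_t_k) / temp_k) ** exponent - 1.0


def linearized_change(temp_k: float, delta_t_k: float,
                      exponent: float = MOBILITY_EXPONENT) -> float:
    """First-order approximation: dv/v = exponent * dT / T."""
    return exponent * delta_t_k / temp_k


exact = relative_speed_change(T_ASSUMED_K, 0.1)
approx = linearized_change(T_ASSUMED_K, 0.1)
print(f"dv/v for +0.1 K: exact {exact:.3e}, linearized {approx:.3e}")
```

Under these assumptions a 0.1 K change shifts the drift speed by slightly less than 0.1%, which illustrates why the temperature must be controlled or monitored at that level.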

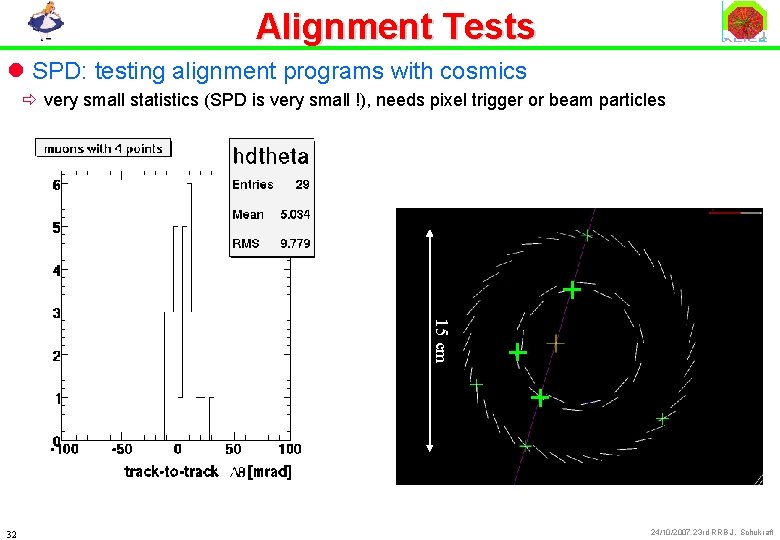

Alignment Tests

- SPD: testing alignment programs with cosmics
  - very small statistics (SPD is very small!), needs pixel trigger or beam particles

Figure scale label: 15 cm

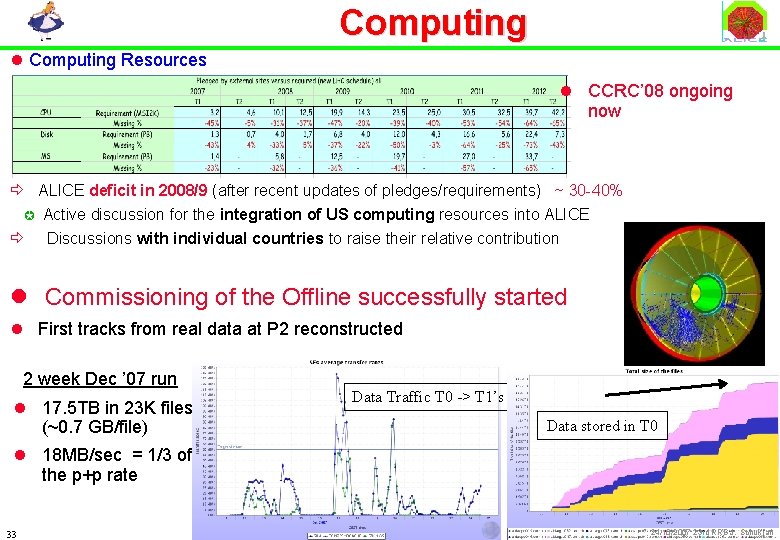

Computing

- Computing resources
  - ALICE deficit in 2008/9 (after recent updates of pledges/requirements): ~30-40%
    - active discussion on the integration of US computing resources into ALICE
  - discussions with individual countries to raise their relative contribution
- CCRC'08 ongoing now
- Commissioning of the Offline successfully started
- First tracks from real data at P2 reconstructed
- 2-week Dec '07 run
  - 17.5 TB in 23k files (~0.7 GB/file)
  - 18 MB/s = 1/3 of the p+p rate

Plot labels: Data traffic T0 -> T1s; Data stored in T0

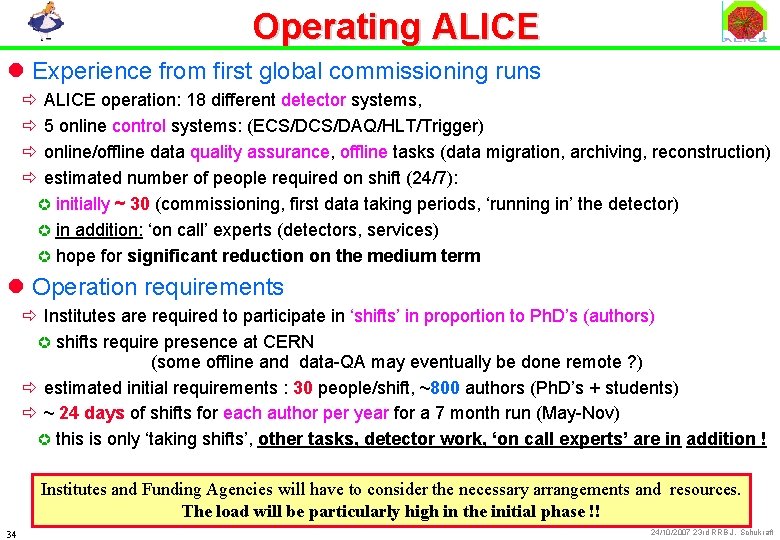

Operating ALICE

- Experience from first global commissioning runs
  - ALICE operation: 18 different detector systems
  - 5 online control systems (ECS/DAQ/HLT/Trigger)
  - online/offline data quality assurance, offline tasks (data migration, archiving, reconstruction)
  - estimated number of people required on shift (24/7):
    - initially ~30 (commissioning, first data-taking periods, 'running in' the detector)
    - in addition: 'on call' experts (detectors, services)
    - hope for significant reduction in the medium term
- Operation requirements
  - Institutes are required to participate in 'shifts' in proportion to PhDs (authors)
    - shifts require presence at CERN (some offline and data-QA shifts may eventually be done remotely?)
  - estimated initial requirements: 30 people/shift, ~800 authors (PhDs + students)
  - ~24 days of shifts for each author per year for a 7-month run (May-Nov)
    - this is only 'taking shifts'; other tasks, detector work, 'on call experts' are in addition!

Institutes and Funding Agencies will have to consider the necessary arrangements and resources. The load will be particularly high in the initial phase!
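The estimate of ~24 shift days per author can be reproduced with simple arithmetic. The slide does not say how many shifts make up a day; the sketch below assumes three 8-hour shifts per day, which is an assumption that happens to match the quoted figure. The names are illustrative only.

```python
# Figures quoted on the slide.
PEOPLE_PER_SHIFT = 30
AUTHORS = 800

# 7-month run, May through November (days per month).
RUN_DAYS = sum([31, 30, 31, 31, 30, 31, 30])  # 214 days

# Assumption (not stated on the slide): three 8-hour shifts cover each day.
SHIFTS_PER_DAY = 3


def shifts_per_author(run_days: int, shifts_per_day: int,
                      people_per_shift: int, authors: int) -> float:
    """Person-shifts needed for the whole run, shared equally among all authors."""
    total_person_shifts = run_days * shifts_per_day * people_per_shift
    return total_person_shifts / authors


load = shifts_per_author(RUN_DAYS, SHIFTS_PER_DAY, PEOPLE_PER_SHIFT, AUTHORS)
print(f"{load:.1f} shifts per author per year")
```

With one shift counted as one shift day, this gives about 24 per author, in line with the slide. The result scales linearly with the number of people per shift, so any reduction in crew size reduces the load proportionally.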

Summary

- Major milestones
  - Installation for 2008 essentially finished!
  - Global commissioning started
- Biggest concerns
  - UK commitment to ALICE trigger
  - Computing resources: large deficit projected for 2009 and beyond
