
2/2/13 Datamining Big Data

Big data: up to trillions of rows (or more) and, possibly, thousands of columns (or many more). I structure data vertically (pTrees) and process it horizontally. Looping across thousands of columns can be orders of magnitude faster than looping down trillions of rows, so sometimes a task can be done in human time only if the data is vertically organized.

Data mining is [largely] CLASSIFICATION or PREDICTION (assigning a class label to a row based on a training set of classified rows). What about clustering and ARM (association rule mining)? They are important and related! Roughly, clustering creates/improves training sets, and ARM is used to data mine more complex data (e.g., relationship matrices).

CLASSIFICATION is [largely] case-based reasoning. To make a decision, we typically search our memory for similar situations (near-neighbor cases) and base our decision on the decisions we made in those cases (we do what worked before, for us or for others). We let near neighbors vote. "The Magical Number Seven, Plus or Minus Two: Some Limits on Our Capacity for Processing Information" [2] is cited to argue that the number of objects (contexts) an average human can hold in working memory is 7 ± 2. We can think of classification as providing a better 7 (so it is decision support, not decision making).

One can say that all classification methods (even model-based ones) are a form of near-neighbor classification. E.g., in Decision Tree Induction (DTI), the training rows at the bottom of a decision branch ARE the near-neighbor set of a sample, because the sample arrived at that leaf.

Rows of an entity table (e.g., Iris(SL, SW, PL, PW) or Image(R, G, B)) describe instances of the entity (irises or image pixels). Columns are descriptive information on the row instances (e.g., Sepal Length, Sepal Width, Petal Length, Petal Width, or Red, Green, Blue photon counts). If the table consists entirely of real numbers, then the row set can be viewed [as a subset of] a real vector space with dimension = # of columns.
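The "vertical" layout mentioned at the start of this passage can be illustrated with a minimal, hypothetical sketch. It stores each column as bit slices (one bitmap per bit position, roughly the uncompressed idea behind pTrees; the tree compression itself is not shown) and answers a range predicate by combining slices with bitwise operations, so the query loops over bit positions and columns rather than down the rows. The function names and data are illustrative, not an existing pTree API.

```python
# Hypothetical sketch: vertical bit-slice storage (uncompressed; no pTree
# tree structure). Each column becomes one bitmap per bit position, stored
# as a Python int whose bit r is set iff row r has that bit set.

def bit_slices(column, nbits):
    """Vertically decompose a column of non-negative ints into nbits bitmaps."""
    slices = [0] * nbits
    for r, value in enumerate(column):
        for k in range(nbits):
            if (value >> k) & 1:
                slices[k] |= 1 << r
    return slices


def ge_mask(slices, nrows, threshold):
    """Bitmap of rows with value >= threshold (threshold assumed non-negative)."""
    if threshold >= 1 << len(slices):
        return 0                            # no stored value can reach it
    all_rows = (1 << nrows) - 1
    gt = 0                                  # rows already known to be > threshold
    eq = all_rows                           # rows equal to threshold on bits seen so far
    for k in reversed(range(len(slices))):  # most significant bit first
        s = slices[k]
        if (threshold >> k) & 1:
            eq &= s                         # a 0 bit here drops the row below
        else:
            gt |= eq & s                    # a 1 bit here lifts the row above
            eq &= ~s & all_rows
    return gt | eq


def popcount(mask):
    return bin(mask).count("1")


# Made-up two-column table with 5 rows.
col_a = [5, 3, 7, 0, 6]
col_b = [1, 4, 4, 2, 7]
a_slices, b_slices = bit_slices(col_a, 3), bit_slices(col_b, 3)

# One AND across the two result bitmaps answers "a >= 5 and b >= 4"
# without a per-row loop over the table.
both = ge_mask(a_slices, 5, 5) & ge_mask(b_slices, 5, 4)
print(popcount(both))
```

The one-time conversion in `bit_slices` touches every row; after that, each predicate costs one pass over the slices, which is the trade the vertical organization is meant to exploit.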
With rows viewed as vectors, the notion of "near" [in classification and clustering] can be defined using a dissimilarity (~distance) or a similarity: two rows are near if the distance between them is low or their similarity is high. Near for columns can be defined using a correlation (e.g., Pearson's, Spearman's, ...).

If the columns also describe instances of an entity, then the table is really a matrix, or relationship, between instances of the row entity and the column entity. Each matrix cell measures some attribute of that relationship pair (the simplest: 1 if that row is related to that column, else 0; the most complex: an entire structure of data describing that pair, i.e., that row instance and that column instance).
• In Market Basket Research (MBR), the row entity is customers and the column entity is items. Each cell is 1 iff that customer has that item in the basket.
• In Netflix Cinematch, the row entity is customers and the column entity is movies, and each cell has the 5-star rating that customer gave to that movie.
• In Bioinformatics, the row entity might be experiments and the column entity might be genes, with each cell holding the expression level of that gene in that experiment; or the row and column entities might both be proteins, with each cell holding a 1-bit iff the two proteins interact in some way.
• In Facebook, the rows might be people and the columns might also be people (a cell has a 1-bit iff the row and column persons are friends).

Even when the table appears to be a simple entity table with descriptive feature columns, it may be viewable as a relationship between 2 entities. E.g., Image(R, G, B) is a table of pixel instances with columns R, G, B. The R-values count the photons in a "red" frequency range detected at that pixel over an interval of time. That red frequency range is determined more by the camera technology than by any scientific definition.
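The row and column notions of "near" above can be sketched in a few lines: a Euclidean distance between rows drives a k-nearest-neighbor vote, and Pearson's correlation compares columns. This is a minimal sketch under stated assumptions (made-up data, Euclidean distance, k = 3, plain majority vote); it is not FAUST or any specific published classifier.

```python
import math
from collections import Counter


def euclidean(x, y):
    """Dissimilarity between two rows: rows are near when this is small."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def knn_vote(training, sample, k=3):
    """training: list of (row, label). The k nearest rows vote on the label."""
    nearest = sorted(training, key=lambda item: euclidean(item[0], sample))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def pearson(u, v):
    """Similarity between two columns (assumes neither column is constant)."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = math.sqrt(sum((a - mu) ** 2 for a in u))
    sv = math.sqrt(sum((b - mv) ** 2 for b in v))
    return cov / (su * sv)


# Made-up 2-column training set with two classes.
training = [((1, 1), "a"), ((1, 2), "a"), ((5, 5), "b"), ((6, 5), "b"), ((5, 6), "b")]
print(knn_vote(training, (1.5, 1.5)))    # its 3 nearest rows are labeled a, a, b
cols = list(zip(*(row for row, _ in training)))
print(round(pearson(cols[0], cols[1]), 3))
```

Ties in the vote are broken by `Counter.most_common`, which keeps first-seen order for equal counts; a real classifier would need an explicit tie rule.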
For Image(R, G, B): if we had separate CCD cameras that could count photons in each of a million very thin adjacent frequency intervals, we could view the column values of that image as instances of a frequency entity. Then the image would be a relationship matrix between the pixel and the frequency entities. So an entity table can often be usefully viewed as a relationship matrix. If so, it can also be rotated so that the former column entity is now viewed as the new row entity and the former row entity is now viewed as the new set of descriptive columns.

The bottom line is that we can often do data mining on a table of data in many ways: as an entity table (classification and clustering), as a relationship matrix (ARM), or, upon rotating that matrix, as another entity table. For a rotated entity table, the concepts of nearness that can be used also rotate (e.g., the cosine correlation of two columns morphs into the cosine of the angle between 2 vectors as a row similarity measure).
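The rotation described above is a matrix transpose, and the column measure turns into the row measure. The hypothetical sketch below uses a made-up customers × movies ratings matrix (0 = not rated) to show that the cosine of two movie columns equals the cosine of the same two movies as rows of the rotated table.

```python
import math


def transpose(matrix):
    """Rotate a relationship matrix: columns become rows and vice versa."""
    return [list(col) for col in zip(*matrix)]


def cosine(u, v):
    """Cosine of the angle between two vectors (assumes neither is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Made-up customers x movies ratings, in the spirit of the Cinematch example.
ratings = [
    [5, 4, 0, 1],
    [4, 5, 1, 0],
    [1, 0, 5, 4],
]

movies_by_customers = transpose(ratings)     # now movies are the rows

# Similarity of movie columns 0 and 1 in the original table ...
col_sim = cosine([r[0] for r in ratings], [r[1] for r in ratings])
# ... is the row similarity of rows 0 and 1 in the rotated table.
row_sim = cosine(movies_by_customers[0], movies_by_customers[1])
print(col_sim == row_sim, round(row_sim, 3))
```

Cosine is used here because the passage names it; treating unrated cells as 0 is a simplifying assumption, not a recommendation.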

DBs and DWs are merging as in-memory DBs: SAP® In-Memory Computing, Enabling Real-Time Computing
SAP® In-Memory enables real-time computing by bringing together online transaction processing, OLTP (DB), and online analytical processing, OLAP (DW). Combining advances in hardware technology with SAP In-Memory Computing empowers business, from shop floor to boardroom, by giving real-time business processes instantaneous access to data, eliminating today's information lag for your business. In-memory computing is already under way. The question isn't if this revolution will impact businesses but when and how. In-memory computing won't be introduced because a company can afford the technology. It will be because a business cannot afford to allow its competitors to adopt it first. Here is a sample of what in-memory computing can do for you:
• Enable mixed workloads of analytics, operations, and performance management in a single software landscape.
• Support smarter business decisions by providing increased visibility of very large volumes of business information.
• Enable users to react to business events more quickly through real-time analysis and reporting of operational data.
• Deliver innovative real-time analysis and reporting.
• Streamline the IT landscape and reduce total cost of ownership.
In manufacturing enterprises, in-memory computing technology will connect the shop floor to the boardroom, and the shop floor associate will have instant access to the same data as the board [[shop floor = daily transaction processing; boardroom = executive data mining]]. The shop floor will then see the results of their actions reflected immediately in the relevant Key Performance Indicators (KPIs). SAP BusinessObjects Event Insight software is key. In what used to be called exception reporting, the software deals with huge amounts of real-time data to determine immediate and appropriate action for a real-time situation.
Product managers will still look at inventory and point-of-sale data, but in the future they will also receive alerts, e.g., telling them that customers are broadcasting dissatisfaction with a product over Twitter. Or they might be alerted to a negative product review released online that highlights some unpleasant product features requiring immediate action. From the other side, small businesses running real-time inventory reports will be able to announce to their Facebook and Twitter communities that a high-demand product is available, how to order it, and where to pick it up.
Bad movies have been able to enjoy a great opening weekend before crashing on the 2nd weekend, when negative word-of-mouth feedback cools enthusiasm. That week-long grace period is about to disappear for silver-screen flops. Consumer feedback won't take a week, a day, or an hour. The very second showing of a movie could suffer from a noticeable falloff in attendance due to consumer criticism piped instantaneously through the new technologies. It will no longer be good enough to have weekend numbers ready for executives on Monday morning. Executives will run their own reports on revenue, follow their reviews on Twitter, and by Monday morning have acted on their decisions.
The final example is from the utilities industry: the most expensive energy a utility provides is energy to meet unexpected demand during peak periods of consumption. If the company could analyze trends in power consumption based on real-time meter reads, it could offer customers, in real time, extra-low rates for the week or month if they reduce their consumption during the following few hours. This advantage will become much more dramatic when we switch to electric cars; predictably, those cars are recharged the minute the owners return home from work.
Hardware: blade servers, multicore CPUs, and memory capacities measured in terabytes. Software: an in-memory database with highly compressible row/column storage designed to maximize in-memory computing technology.
[[Both row and column storage! They convert to column-wise storage only for Long-Lived-High-Value data?]] Parallel processing takes place in the database layer rather than in the application layer, where it takes place in the client-server architecture. Total cost is 30% lower than traditional RDBMSs due to:
• Leaner hardware and less system capacity required, as mixed workloads of analytics, operations, and performance management run in a single system, which also reduces redundant data storage. [[Back to a single DB rather than a DB for TP and a DW for boardroom decision support.]]
• Less extract, transform, load (ETL) between systems and fewer prebuilt reports, reducing the support required to run the software.
Report runtime improvements of up to 1000 times. Compression rates of up to 10 times. Performance improvements are expected to be even higher in SAP apps natively developed for in-memory DBs. Initial results: a reduction of computing time from hours to seconds. However, in-memory computing will not eliminate the need for data warehousing. Real-time reporting will solve old challenges and create new opportunities, but new challenges will arise. SAP HANA 1.0 software supports real-time database access to data from the SAP apps that support OLTP. Formerly, operational reporting functionality was transferred from OLTP applications to a data warehouse. With in-memory computing technology, this functionality is integrated back into the transaction system. Adopting in-memory computing results in an uncluttered architecture based on a few tightly aligned core systems, enabled by service-oriented architecture (SOA), to provide harmonized, valid metadata and master data across business processes. Some of the most salient shifts and trends in future enterprise architectures will be:
• A shift to BI self-service apps, like data exploration, instead of static report solutions.
• Central metadata and master data repositories that define the data architecture, allowing data stewards to work across all business units and all platforms.
Real-time in-memory computing technology will cause a decline in Structured Query Language (SQL) satellite databases. The purpose of those databases as flexible, ad hoc, more business-oriented, less IT-static tools might still be required, but their offline status will be a disadvantage and will delay data updates. Some might argue that satellite systems with in-memory computing technology will take over from satellite SQL DBs. SAP Business Explorer tools that use in-memory computing technology represent a paradigm shift. Instead of waiting for IT to work on a long queue of support tickets to create new reports, business users can explore large data sets and define reports on the fly.
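The claims about highly compressible column storage can be made concrete with a small, hypothetical sketch: rows are converted to a column layout, and each column is run-length encoded. Run-length encoding is one common column-store technique (others, such as dictionary encoding, exist); this is not SAP HANA's implementation, and the data and function names are made up.

```python
def to_columns(rows, names):
    """Row layout (list of tuples) -> column layout (dict of lists)."""
    return {name: [row[i] for row in rows] for i, name in enumerate(names)}


def rle_encode(column):
    """Run-length encode a column as (value, run_length) pairs."""
    runs = []
    for value in column:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1            # extend the current run
        else:
            runs.append([value, 1])     # start a new run
    return [tuple(run) for run in runs]


def rle_decode(runs):
    return [value for value, count in runs for _ in range(count)]


# Made-up sales rows, sorted by region: low-cardinality columns form long runs.
rows = [("EU", "bike", 3), ("EU", "bike", 1), ("EU", "car", 2),
        ("US", "car", 5), ("US", "car", 1), ("US", "car", 4)]
columns = to_columns(rows, ["region", "product", "qty"])
for name, values in columns.items():
    runs = rle_encode(values)
    print(name, len(values), "values ->", len(runs), "runs")
```

Storing a column contiguously is what makes runs visible at all; in a row layout the repeated region values are interleaved with other fields.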

FAUST Functional-Gap-based clustering methods (the unsupervised part of FAUST = Functional Analytic Unsupervised and Supervised machine Teaching)

The FAUST clustering algorithm relies on choosing a distance-dominating functional: a map $F$ of each table row to $\mathbb{R}^1$ for which the difference between two functional values never exceeds the distance between the rows, $|F(x) - F(y)| \le |x - y|$. Then, if we find a gap in the F-values, there is a gap at least as large in the dataset, which divides the dataset into 2 clusters.
• Dot Product Projection: $\mathrm{DPP}_d(y) \equiv (y-p)\circ d$, where the unit vector $d$ can be obtained as $d = (q-p)/|q-p|$ for points $p$ and $q$.
• Square Distance Functional: $\mathrm{SD}_p(y) \equiv (y-p)\circ(y-p)$
• Dot Product Radius: $\mathrm{DPR}_{pq}(y) \equiv \sqrt{\mathrm{SD}_p(y) - \mathrm{DPP}_{pq}(y)^2}$
• Coordinate Projection is the simplest DPP: $e_j(y) \equiv y_j$
• Square Dot Product Radius: $\mathrm{SDPR}_{pq}(y) \equiv \mathrm{SD}_p(y) - \mathrm{DPP}_{pq}(y)^2$
Note: the same $\mathrm{DPP}_d$ gaps are revealed by $\mathrm{DP}_d(y) \equiv y\circ d$, since $(y-p)\circ d = y\circ d - p\circ d$; thus DP just shifts all DPP values by $p\circ d$.

Do we have to loop through an entire grid of unit vectors $d$ (over an $(n-1)$-sphere) looking for the best (or a good) one for $\mathrm{DP}_d$? Once we find a "good" one, can we hill-climb its value to a better one? In a later slide, we examine redefining $d$ to run from the mean of one cluster to the mean of the other, or from the margin mean of one to the margin mean of the other. We could also try shifting $d$ in the direction of the F-variance gradient. Can we find the $d$ that maximizes the variance (or some other measure of dispersion: e.g., replace variance by the average distance from a value $P \ll \min F$, so that all $F(y) - P$ are positive and $P$ is unchanged as we hill-climb $d$)?

Combinations?
• DP-KM: $\mathrm{DP}_{p,d}(y)$ gaps. 1. Check sparse extremes. 2. After several rounds, apply k-means to the resulting clusters (when k seems to be determined).
• DP-DA: 1. $\mathrm{DP}_{p,d}(y)$ gaps against density of subcluster. 1.1 Sparse extremes against subcluster density. 2. Apply other methods once Dot ceases to be effective.
• DP-SD: 1. $\mathrm{DP}_{p,d}(y)$ and $\mathrm{SD}_p(y)$ (over a p-grid). 1.1 Check sparse ends' distances against subcluster density. ($\mathrm{DP}_{p,d}$ and $\mathrm{SD}_p$ share construction steps!)
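The functionals and the gap test above can be sketched in plain Python. This is a minimal illustration under stated assumptions (made-up 2-D rows, p = MinVector, q = MaxVector, raw floating-point F-values instead of the integer F-values used on the IRIS slides, and cuts at gap midpoints); the helper names are hypothetical, not FAUST code.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sub(u, v):
    return [a - b for a, b in zip(u, v)]


def unit_from(p, q):
    """d = (q - p) / |q - p|."""
    diff = sub(q, p)
    norm = math.sqrt(dot(diff, diff))
    return [c / norm for c in diff]


def dpp(y, p, d):                    # DPP_d(y) = (y - p) o d
    return dot(sub(y, p), d)


def sd(y, p):                        # SD_p(y) = (y - p) o (y - p)
    v = sub(y, p)
    return dot(v, v)


def sdpr(y, p, d):                   # SDPR_pq(y) = SD_p(y) - DPP_pq(y)^2
    return sd(y, p) - dpp(y, p, d) ** 2


def dpr(y, p, d):                    # DPR_pq(y) = sqrt(SDPR_pq(y))
    return math.sqrt(max(sdpr(y, p, d), 0.0))   # clamp tiny negative round-off


def gaps(fvals, min_gap):
    """Consecutive distinct F-values (lo, hi) with hi - lo >= min_gap."""
    vs = sorted(set(fvals))
    return [(lo, hi) for lo, hi in zip(vs, vs[1:]) if hi - lo >= min_gap]


def split_at_gaps(rows, fvals, found):
    """Cut at the midpoint of each gap; returns one list of rows per cluster."""
    cuts = [(lo + hi) / 2 for lo, hi in found]
    clusters = [[] for _ in range(len(cuts) + 1)]
    for row, f in zip(rows, fvals):
        clusters[sum(f > c for c in cuts)].append(row)
    return clusters


# Made-up 2-D rows: two tight groups far apart.
rows = [(1, 1), (2, 1), (1, 2), (8, 8), (9, 8), (8, 9)]
p = [min(c) for c in zip(*rows)]     # MinVector
q = [max(c) for c in zip(*rows)]     # MaxVector
d = unit_from(p, q)
F = [dpp(y, p, d) for y in rows]
found = gaps(F, 4)
clusters = split_at_gaps(rows, F, found)
print(found, [len(c) for c in clusters])
```

Because $|d| = 1$, Cauchy-Schwarz gives $|\mathrm{DPP}_d(x) - \mathrm{DPP}_d(y)| \le |x - y|$, which is the distance-domination property the gap argument needs.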

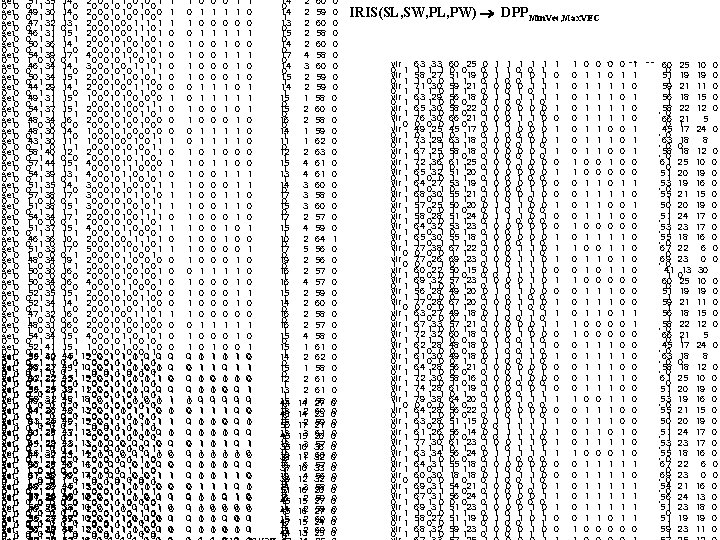

[Figure: IRIS(SL, SW, PL, PW) data for setosa (s), versicolor (e) and virginica (i) rows; DPP with p = MinVector, q = MaxVector.]

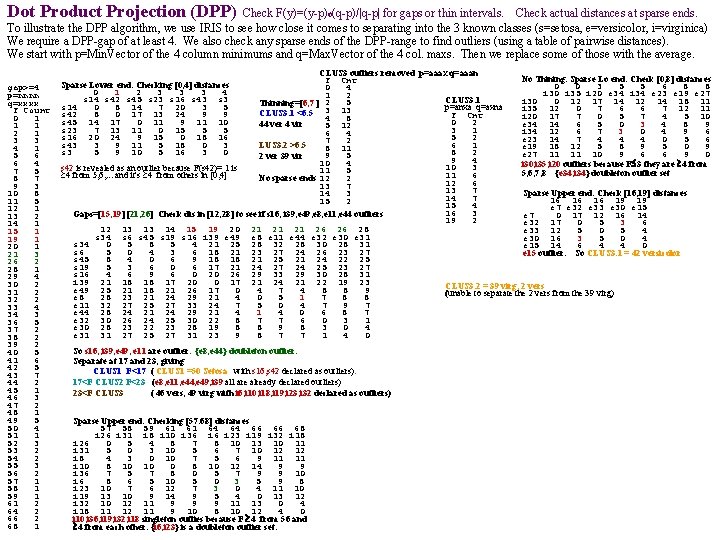

Dot Product Projection (DPP)

Check $F(y) = (y-p)\circ(q-p)/|q-p|$ for gaps or thin intervals, and check actual distances at sparse ends. To illustrate the DPP algorithm, we use IRIS to see how close it comes to separating the data into the 3 known classes (s = setosa, e = versicolor, i = virginica). We require a DPP-gap of at least 4. We also check any sparse ends of the DPP-range to find outliers (using a table of pairwise distances). We start with p = MinVector of the 4 column minimums and q = MaxVector of the 4 column maximums; then we replace some of those coordinates with the average (in the codes below, the 4 letters give the SL, SW, PL, PW coordinates: n = minimum, x = maximum, a = average).

Round 1: p = nnnn, q = xxxx, gap ≥ 4. F-value counts (F:count):
0:1, 1:1, 2:1, 3:3, 4:1, 5:6, 6:4, 7:5, 8:7, 9:3, 10:8, 11:5, 12:1, 13:2, 14:1, 15:1, 19:1, 20:1, 21:3, 26:2, 28:1, 29:4, 30:2, 31:2, 32:2, 33:4, 34:3, 36:5, 37:2, 38:2, 39:2, 40:5, 41:6, 42:5, 43:7, 44:2, 45:1, 46:3, 47:2, 48:1, 49:5, 50:4, 51:1, 52:3, 53:2, 54:2, 55:3, 56:2, 57:1, 58:1, 59:1, 61:2, 64:2, 66:2, 68:1
Gaps = [15, 19] and [21, 26].

Sparse lower end: checking distances in [0, 4]:

| | s14 (F=0) | s42 (1) | s45 (2) | s23 (3) | s16 (3) | s43 (3) | s3 (4) |
|---|---|---|---|---|---|---|---|
| s14 | 0 | 8 | 14 | 7 | 20 | 3 | 5 |
| s42 | 8 | 0 | 17 | 13 | 24 | 9 | 9 |
| s45 | 14 | 17 | 0 | 11 | 9 | 11 | 10 |
| s23 | 7 | 13 | 11 | 0 | 15 | 5 | 5 |
| s16 | 20 | 24 | 9 | 15 | 0 | 18 | 16 |
| s43 | 3 | 9 | 11 | 5 | 18 | 0 | 3 |
| s3 | 5 | 9 | 10 | 5 | 16 | 3 | 0 |

s42 is revealed as an outlier because F(s42) = 1 is ≥ 4 from 5, 6, ... and it is ≥ 4 from the others in [0, 4].

Check distances in [12, 28] to see whether s16, i39, e49, e8, e11, e44 are outliers:

| | s34 (F=12) | s6 (13) | s45 (13) | s19 (14) | s16 (15) | i39 (19) | e49 (20) | e8 (21) | e11 (21) | e44 (21) | e32 (26) | e30 (26) | e31 (28) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| s34 | 0 | 5 | 8 | 5 | 4 | 21 | 25 | 28 | 32 | 28 | 30 | 28 | 31 |
| s6 | 5 | 0 | 4 | 3 | 6 | 18 | 21 | 23 | 27 | 24 | 26 | 23 | 27 |
| s45 | 8 | 4 | 0 | 6 | 9 | 18 | 18 | 21 | 25 | 21 | 24 | 22 | 25 |
| s19 | 5 | 3 | 6 | 0 | 6 | 17 | 21 | 24 | 27 | 24 | 25 | 23 | 27 |
| s16 | 4 | 6 | 9 | 6 | 0 | 20 | 26 | 29 | 33 | 29 | 30 | 28 | 31 |
| i39 | 21 | 18 | 18 | 17 | 20 | 0 | 17 | 21 | 24 | 21 | 22 | 19 | 23 |
| e49 | 25 | 21 | 18 | 21 | 26 | 17 | 0 | 4 | 7 | 4 | 8 | 8 | 9 |
| e8 | 28 | 23 | 21 | 24 | 29 | 21 | 4 | 0 | 5 | 1 | 7 | 8 | 8 |
| e11 | 32 | 27 | 25 | 27 | 33 | 24 | 7 | 5 | 0 | 4 | 7 | 9 | 7 |
| e44 | 28 | 24 | 21 | 24 | 29 | 21 | 4 | 1 | 4 | 0 | 6 | 8 | 7 |
| e32 | 30 | 26 | 24 | 25 | 30 | 22 | 8 | 7 | 7 | 6 | 0 | 3 | 1 |
| e30 | 28 | 23 | 22 | 23 | 28 | 19 | 8 | 8 | 9 | 8 | 3 | 0 | 4 |
| e31 | 31 | 27 | 25 | 27 | 31 | 23 | 9 | 8 | 7 | 7 | 1 | 4 | 0 |

So s16, i39, e49, e11 are outliers; {e8, e44} is a doubleton outlier set.

Sparse upper end: checking distances in [57, 68]:

| | i26 (F=57) | i31 (58) | i8 (59) | i10 (61) | i36 (61) | i6 (64) | i23 (64) | i19 (66) | i32 (66) | i18 (68) |
|---|---|---|---|---|---|---|---|---|---|---|
| i26 | 0 | 5 | 4 | 8 | 7 | 8 | 10 | 13 | 10 | 11 |
| i31 | 5 | 0 | 3 | 10 | 5 | 6 | 7 | 10 | 12 | 12 |
| i8 | 4 | 3 | 0 | 10 | 7 | 5 | 6 | 9 | 11 | 11 |
| i10 | 8 | 10 | 10 | 0 | 8 | 10 | 12 | 14 | 9 | 9 |
| i36 | 7 | 5 | 7 | 8 | 0 | 5 | 7 | 9 | 9 | 10 |
| i6 | 8 | 6 | 5 | 10 | 5 | 0 | 3 | 5 | 9 | 8 |
| i23 | 10 | 7 | 6 | 12 | 7 | 3 | 0 | 4 | 11 | 10 |
| i19 | 13 | 10 | 9 | 14 | 9 | 5 | 4 | 0 | 13 | 12 |
| i32 | 10 | 12 | 11 | 9 | 9 | 9 | 11 | 13 | 0 | 4 |
| i18 | 11 | 12 | 11 | 9 | 10 | 8 | 10 | 12 | 4 | 0 |

i10, i36, i19, i32, i18 are singleton outliers because their F-values are ≥ 4 from 56 and they are ≥ 4 from each other. {i6, i23} is a doubleton outlier set.

Separating at 17 and 23 gives:
• CLUS 1 (F < 17): 50 setosa, with s16, s42 declared as outliers.
• CLUS 2 (17 < F < 23): e8, e11, e44, e49, i39, all already declared outliers.
• CLUS 3 (23 < F): 46 versicolor, 49 virginica, with i6, i10, i18, i19, i23, i32 declared as outliers.

Round 2: CLUS 3 with its outliers removed, p = aaax, q = aaan. No thinning. Sparse lower end: checking distances in [0, 8]:

| | i30 (F=0) | i35 (0) | i20 (3) | e34 (5) | i34 (5) | e23 (6) | e19 (8) | e27 (8) |
|---|---|---|---|---|---|---|---|---|
| i30 | 0 | 12 | 17 | 14 | 12 | 14 | 18 | 11 |
| i35 | 12 | 0 | 7 | 6 | 6 | 7 | 12 | 11 |
| i20 | 17 | 7 | 0 | 5 | 7 | 4 | 5 | 10 |
| e34 | 14 | 6 | 5 | 0 | 3 | 4 | 8 | 9 |
| i34 | 12 | 6 | 7 | 3 | 0 | 4 | 9 | 6 |
| e23 | 14 | 7 | 4 | 4 | 4 | 0 | 5 | 6 |
| e19 | 18 | 12 | 5 | 8 | 9 | 5 | 0 | 9 |
| e27 | 11 | 11 | 10 | 9 | 6 | 6 | 9 | 0 |

i30, i35, i20 (F ≤ 3) are outliers because they are ≥ 4 from the points at F = 5, 6, 7, 8. {e34, i34} is a doubleton outlier set.

Round 3: p = anxa, q = axna. Thinning = [6, 7]:
• CLUS 3.1 (F < 6.5): 44 versicolor, 4 virginica.
• CLUS 3.2 (F > 6.5): 2 versicolor, 39 virginica.
No sparse ends. Sparse upper end: checking distances in [16, 19]:

| | e7 (F=16) | e32 (16) | e33 (16) | e30 (19) | e15 (19) |
|---|---|---|---|---|---|
| e7 | 0 | 17 | 12 | 16 | 14 |
| e32 | 17 | 0 | 5 | 3 | 6 |
| e33 | 12 | 5 | 0 | 5 | 4 |
| e30 | 16 | 3 | 5 | 0 | 4 |
| e15 | 14 | 6 | 4 | 4 | 0 |

e15 is an outlier. So CLUS 3.1 = 42 versicolor, and CLUS 3.2 = 39 virginica, 2 versicolor (unable to separate the 2 versicolor from the 39 virginica).

[F-value histograms for rounds 2 and 3: not recoverable from the slide extraction.]
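The sparse-end reasoning on this slide (an F-gap of at least 4 between a row and the unchecked part of the F-range, plus actual distances of at least 4 to the other rows in the checked window) can be sketched as a small helper. The function is hypothetical, not FAUST code; it takes the window's pairwise distance table as input. Run on the [57, 68] table above, with 56 as the nearest F-value below the window, it reproduces the stated singletons and doubleton.

```python
def sparse_end_outliers(labels, fvals, dist, t, lo=None, hi=None):
    """
    labels, fvals: rows whose F-values fall in the checked window, with their F.
    dist:   square table of pairwise distances between those rows.
    t:      required separation (the slides use 4).
    lo, hi: nearest F-values just outside the window (None means the window
            touches that end of the F-range).
    Returns (singleton outliers, doubleton outlier pairs).
    """
    n = len(labels)

    def f_separated(i):
        # Distance domination: an F-gap >= t to the unchecked rows implies
        # an actual distance >= t to every one of them.
        return ((lo is None or fvals[i] - lo >= t) and
                (hi is None or hi - fvals[i] >= t))

    def far_from_rest(members):
        return all(dist[i][j] >= t for i in members for j in range(n) if j not in members)

    cand = [i for i in range(n) if f_separated(i)]
    singles = [labels[i] for i in cand if far_from_rest({i})]
    pairs = [(labels[i], labels[j])
             for a, i in enumerate(cand) for j in cand[a + 1:]
             if dist[i][j] < t and far_from_rest({i, j})]
    return singles, pairs


# Sparse upper end [57, 68] of the p = nnnn, q = xxxx round (transcribed above).
labels = ["i26", "i31", "i8", "i10", "i36", "i6", "i23", "i19", "i32", "i18"]
fvals = [57, 58, 59, 61, 61, 64, 64, 66, 66, 68]
dist = [
    [0, 5, 4, 8, 7, 8, 10, 13, 10, 11],
    [5, 0, 3, 10, 5, 6, 7, 10, 12, 12],
    [4, 3, 0, 10, 7, 5, 6, 9, 11, 11],
    [8, 10, 10, 0, 8, 10, 12, 14, 9, 9],
    [7, 5, 7, 8, 0, 5, 7, 9, 9, 10],
    [8, 6, 5, 10, 5, 0, 3, 5, 9, 8],
    [10, 7, 6, 12, 7, 3, 0, 4, 11, 10],
    [13, 10, 9, 14, 9, 5, 4, 0, 13, 12],
    [10, 12, 11, 9, 9, 9, 11, 13, 0, 4],
    [11, 12, 11, 9, 10, 8, 10, 12, 4, 0],
]
singles, pairs = sparse_end_outliers(labels, fvals, dist, 4, lo=56)
print(singles, pairs)   # ['i10', 'i36', 'i19', 'i32', 'i18'] [('i6', 'i23')]
```

Rows such as i26 (F = 57) are left undecided rather than declared inliers: their F-gap to the unchecked rows is under 4, so the table alone cannot certify their separation.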

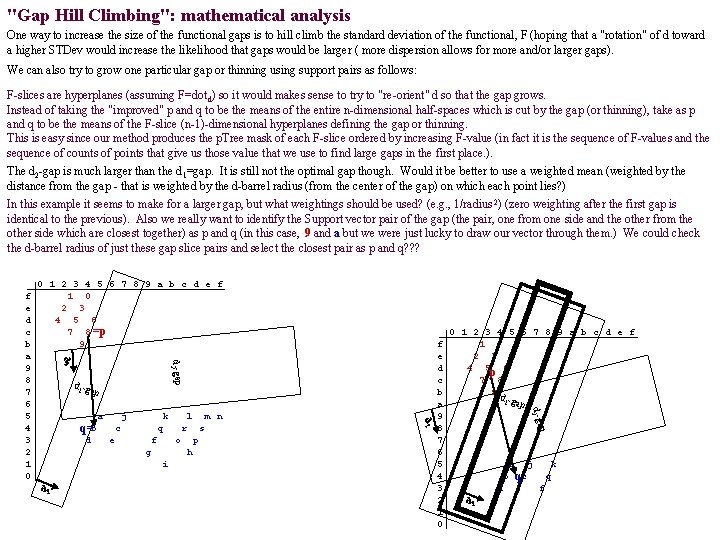

"Gap Hill Climbing": mathematical analysis One way to increase the size of the functional gaps is to hill climb the standard deviation of the functional, F (hoping that a "rotation" of d toward a higher STDev would increase the likelihood that gaps would be larger ( more dispersion allows for more and/or larger gaps). We can also try to grow one particular gap or thinning using support pairs as follows: F-slices are hyperplanes (assuming F=dot d) so it would makes sense to try to "re-orient" d so that the gap grows. Instead of taking the "improved" p and q to be the means of the entire n-dimensional half-spaces which is cut by the gap (or thinning), take as p and q to be the means of the F-slice (n-1)-dimensional hyperplanes defining the gap or thinning. This is easy since our method produces the p. Tree mask of each F-slice ordered by increasing F-value (in fact it is the sequence of F-values and the sequence of counts of points that give us those value that we use to find large gaps in the first place. ). The d 2 -gap is much larger than the d 1=gap. It is still not the optimal gap though. Would it be better to use a weighted mean (weighted by the distance from the gap - that is weighted by the d-barrel radius (from the center of the gap) on which each point lies? ) In this example it seems to make for a larger gap, but what weightings should be used? (e. g. , 1/radius 2) (zero weighting after the first gap is identical to the previous). Also we really want to identify the Support vector pair of the gap (the pair, one from one side and the other from the other side which are closest together) as p and q (in this case, 9 and a but we were just lucky to draw our vector through them. ) We could check the d-barrel radius of just these gap slice pairs and select the closest pair as p and q? ? ? 
[Figure: 2-D example on a grid with axes labeled 0 to f, showing the points, p, q, the directions d1 and d2, and the d1-gap and d2-gap.]
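The first idea above, rotating d toward a higher standard deviation of F, can be sketched as random-perturbation hill climbing on the unit vector d. This is a minimal sketch under stated assumptions (Gaussian perturbations, a fixed seed and step size, made-up rows); the perturbation scheme is a swapped-in choice, not the method described on the slide.

```python
import math
import random


def project(rows, d):
    """F(y) = y o d for every row."""
    return [sum(a * b for a, b in zip(r, d)) for r in rows]


def std(values):
    m = sum(values) / len(values)
    return math.sqrt(sum((v - m) ** 2 for v in values) / len(values))


def normalize(v):
    n = math.sqrt(sum(c * c for c in v))
    return [c / n for c in v]


def hill_climb_std(rows, d0, step=0.1, iters=2000, seed=0):
    """Keep a perturbed unit vector only if it raises the std of F."""
    rng = random.Random(seed)
    d = normalize(d0)
    best = std(project(rows, d))
    for _ in range(iters):
        cand = normalize([c + rng.gauss(0.0, step) for c in d])
        s = std(project(rows, cand))
        if s > best:
            d, best = cand, s
    return d, best


# Made-up rows spread mostly along the first axis; start d on the second axis.
rows = [(0, 0), (10, 1), (20, -1), (30, 0)]
start = [0.0, 1.0]
d, best = hill_climb_std(rows, start)
print([round(c, 3) for c in d], round(best, 2), "vs start", round(std(project(rows, start)), 2))
```

The direction that maximizes the variance of a projection is the top eigenvector of the covariance matrix, so the climb can be checked against that; whether larger dispersion actually yields larger gaps remains the open question the slide raises.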

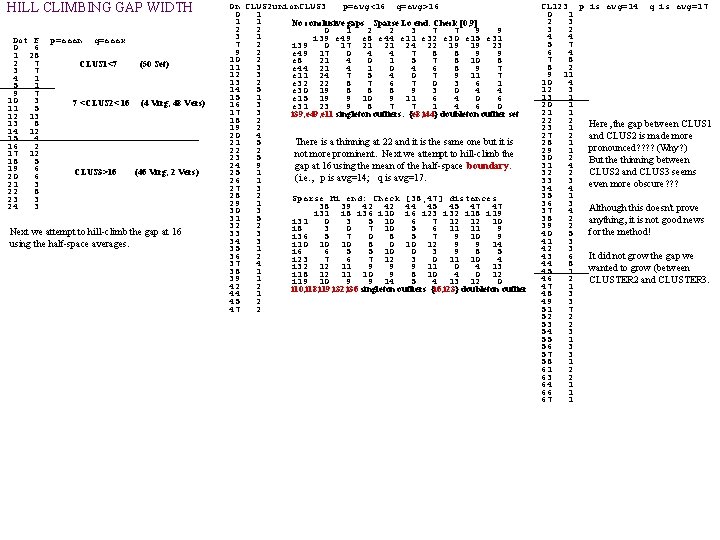

HILL CLIMBING GAP WIDTH

Dot F with p = aaan, q = aaax (F:count):
0:6, 1:28, 2:7, 3:7, 4:1, 5:1, 9:7, 10:3, 11:5, 12:13, 13:8, 14:12, 15:4, 16:2, 17:12, 18:5, 19:6, 20:6, 21:3, 22:8, 23:3, 24:3
• CLUS 1 (F < 7): 50 setosa
• CLUS 2 (7 < F < 16): 4 virginica, 48 versicolor
• CLUS 3 (F > 16): 46 virginica, 2 versicolor

Next we attempt to hill-climb the gap at 16 using the half-space averages. On CLUS 2 ∪ CLUS 3, with p = avg(F < 16) and q = avg(F > 16) (F:count):
0:1, 1:1, 2:2, 3:1, 7:2, 9:2, 10:2, 11:3, 12:3, 13:2, 14:5, 15:1, 16:3, 17:3, 18:2, 19:2, 20:4, 21:5, 22:2, 23:5, 24:9, 25:1, 26:1, 27:3, 28:2, 29:1, 30:3, 31:5, 32:2, 33:3, 34:3, 35:1, 36:2, 37:4, 38:1, 39:1, 42:2, 44:1, 45:2, 47:2

No conclusive gaps. Sparse low end: checking distances in [0, 9]:

| | i39 (F=0) | e49 (1) | e8 (2) | e44 (2) | e11 (3) | e32 (7) | e30 (7) | e15 (9) | e31 (9) |
|---|---|---|---|---|---|---|---|---|---|
| i39 | 0 | 17 | 21 | 21 | 24 | 22 | 19 | 19 | 23 |
| e49 | 17 | 0 | 4 | 4 | 7 | 8 | 8 | 9 | 9 |
| e8 | 21 | 4 | 0 | 1 | 5 | 7 | 8 | 10 | 8 |
| e44 | 21 | 4 | 1 | 0 | 4 | 6 | 8 | 9 | 7 |
| e11 | 24 | 7 | 5 | 4 | 0 | 7 | 9 | 11 | 7 |
| e32 | 22 | 8 | 7 | 6 | 7 | 0 | 3 | 6 | 1 |
| e30 | 19 | 8 | 8 | 8 | 9 | 3 | 0 | 4 | 4 |
| e15 | 19 | 9 | 10 | 9 | 11 | 6 | 4 | 0 | 6 |
| e31 | 23 | 9 | 8 | 7 | 7 | 1 | 4 | 6 | 0 |

i39, e49, e11 are singleton outliers; {e8, e44} is a doubleton outlier set.

Sparse high end: checking distances in [38, 47]:

| | i31 (F=38) | i8 (39) | i36 (42) | i10 (42) | i6 (44) | i23 (45) | i32 (45) | i18 (47) | i19 (47) |
|---|---|---|---|---|---|---|---|---|---|
| i31 | 0 | 3 | 5 | 10 | 6 | 7 | 12 | 12 | 10 |
| i8 | 3 | 0 | 7 | 10 | 5 | 6 | 11 | 11 | 9 |
| i36 | 5 | 7 | 0 | 8 | 5 | 7 | 9 | 10 | 9 |
| i10 | 10 | 10 | 8 | 0 | 10 | 12 | 9 | 9 | 14 |
| i6 | 6 | 5 | 5 | 10 | 0 | 3 | 9 | 8 | 5 |
| i23 | 7 | 6 | 7 | 12 | 3 | 0 | 11 | 10 | 4 |
| i32 | 12 | 11 | 9 | 9 | 9 | 11 | 0 | 4 | 13 |
| i18 | 12 | 11 | 10 | 9 | 8 | 10 | 4 | 0 | 12 |
| i19 | 10 | 9 | 9 | 14 | 5 | 4 | 13 | 12 | 0 |

i10, i18, i19, i32, i36 are singleton outliers; {i6, i23} is a doubleton outlier set.

There is a thinning at 22, and it is the same one, but it is not more prominent. Next we attempt to hill-climb the gap at 16 using the mean of the half-space boundary (i.e., p is the average of the F = 14 slice and q is the average of the F = 17 slice).

CL123 (all three clusters), p = avg(F = 14), q = avg(F = 17) (F:count):
0:1, 2:3, 3:2, 4:4, 5:7, 6:4, 7:8, 8:2, 9:11, 10:4, 12:3, 13:1, 20:1, 21:1, 22:2, 23:1, 27:2, 28:1, 29:1, 30:2, 31:4, 32:2, 33:3, 34:4, 35:1, 36:3, 37:4, 38:2, 39:2, 40:5, 41:3, 42:3, 43:6, 44:8, 45:1, 46:2, 47:1, 48:3, 49:3, 51:7, 52:2, 53:2, 54:3, 55:1, 56:3, 57:3, 58:1, 61:2, 63:2, 64:1, 66:1, 67:1

Here, the gap between CLUS 1 and CLUS 2 is made more pronounced (why?). But the thinning between CLUS 2 and CLUS 3 seems even more obscure. Although this doesn't prove anything, it is not good news for the method! It did not grow the gap we wanted to grow (between CLUSTER 2 and CLUSTER 3).
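The two re-orientation attempts on this slide (half-space averages, then the means of the F-slices bounding the gap) can be sketched as follows. This is a hypothetical sketch: the helper names and the 2-D rows are made up, and truncating F-values to integers with `floor` is an assumption about how the slides' integer F-values were produced.

```python
import math


def mean(rows):
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def integer_dpp(rows, p, q):
    """F(y) = floor((y - p) o (q - p) / |q - p|); flooring is an assumption."""
    diff = [b - a for a, b in zip(p, q)]
    norm = math.sqrt(sum(c * c for c in diff))
    return [math.floor(sum((yi - pi) * c for yi, pi, c in zip(y, p, diff)) / norm)
            for y in rows]


def widest_gap(fvals):
    """(width, lo, hi) of the widest gap between consecutive distinct F-values."""
    vs = sorted(set(fvals))
    return max((hi - lo, lo, hi) for lo, hi in zip(vs, vs[1:]))


def halfspace_means(rows, fvals, cut):
    """First attempt: p, q = means of all rows below / above the cut."""
    below = [r for r, f in zip(rows, fvals) if f < cut]
    above = [r for r, f in zip(rows, fvals) if f > cut]
    return mean(below), mean(above)


def slice_means(rows, fvals, lo, hi):
    """Second attempt: p, q = means of the two F-slices that bound the gap."""
    return (mean([r for r, f in zip(rows, fvals) if f == lo]),
            mean([r for r, f in zip(rows, fvals) if f == hi]))


# Made-up 2-D rows; round 1 uses p = MinVector, q = MaxVector.
rows = [(0, 0), (1, 1), (2, 0), (7, 2), (8, 3), (9, 2)]
p0 = [min(c) for c in zip(*rows)]
q0 = [max(c) for c in zip(*rows)]
F0 = integer_dpp(rows, p0, q0)
width, lo, hi = widest_gap(F0)

for name, (p, q) in [("half-space", halfspace_means(rows, F0, (lo + hi) / 2)),
                     ("boundary slice", slice_means(rows, F0, lo, hi))]:
    F = integer_dpp(rows, p, q)
    print(name, "p =", p, "q =", q, "widest gap:", widest_gap(F))
```

Comparing the widest gap before and after each re-orientation is the check the slide performs by eye on the IRIS histograms; neither update is guaranteed to grow the targeted gap.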

CAINE 2013 Call for Papers: 26th International Conference on Computer Applications in Industry and Engineering, September 25-27, 2013, Omni Hotel, Los Angeles, California, USA. Sponsored by the International Society for Computers and Their Applications (ISCA). It provides an international forum for presentation and discussion of research on computers and their applications. The conference also includes a Best Paper Award. CAINE-2013 will feature contributed papers as well as workshops and special sessions. Papers will be accepted into oral presentation sessions. The topics will include, but are not limited to, the following areas: Agent-Based Systems, Image/Signal Processing, Autonomous Systems, Information Assurance, Big Data Analytics, Information Systems/Databases, Bioinformatics, Biomedical Systems/Engineering, Internet and Web-Based Systems, Computer-Aided Design/Manufacturing, Knowledge-based Systems, Computer Architecture/VLSI, Mobile Computing, Computer Graphics and Animation, Multimedia Applications, Computer Modeling/Simulation, Neural Networks, Computer Security, Pattern Recognition/Computer Vision, Computers in Education, Rough Set and Fuzzy Logic, Computers in Healthcare, Robotics, Computer Networks, Fuzzy Logic Control Systems, Sensor Networks, Data Communication, Scientific Computing, Data Mining, Software Engineering/CASE, Distributed Systems, Visualization, Embedded Systems, Wireless Networks and Communication.
Important Dates: Workshop/special session proposal: May 25, 2013. Full Paper Submission: June 5, 2013. Notification of Acceptance: July 5, 2013. Pre-registration & Camera-Ready Paper Due: August 5, 2013. Event Dates: September 25-27, 2013.
The 22nd SEDE Conference is interested in gathering researchers and professionals in the domains of Software Engineering and Data Engineering to present and discuss high-quality research results and outcomes in their fields.
SEDE 2013 aims at facilitating cross-fertilization of ideas in Software and Data Engineering. The conference also encourages research and discussions on topics including, but not limited to: Requirements Engineering for Data Intensive Software Systems; Software Verification and Model Checking; Model-Based Methodologies; Software Quality and Software Metrics; Architecture and Design of Data Intensive Software Systems; Software Testing; Service- and Aspect-Oriented Techniques; Adaptive Software Systems; Information System Development; Software and Data Visualization; Development Tools for Data Intensive Software Systems; Software Processes; Software Project Management; Applications and Case Studies; Engineering Distributed, Parallel, and Peer-to-Peer Databases; Cloud Infrastructure, Mobile, Distributed, and Peer-to-Peer Data Management; Semi-Structured Data and XML Databases; Data Integration, Interoperability, and Metadata; Data Mining: Traditional, Large-Scale, and Parallel; Ubiquitous Data Management and Mobile Databases; Data Privacy and Security; Scientific and Biological Databases and Bioinformatics; Social Networks, Web, and Personal Information Management; Data Grids, Data Warehousing, OLAP; Temporal, Spatial, Sensor, and Multimedia Databases; Taxonomy and Categorization; Pattern Recognition, Clustering, and Classification; Knowledge Management and Ontologies; Query Processing and Optimization; Database Applications and Experiences; Web Data Management.
Submission procedures: May 23, 2013: Paper Submission Deadline. June 30, 2013: Notification of Acceptance. July 20, 2013: Registration and Camera-Ready Manuscript. Conference Website: http://theory.utdallas.edu/SEDE2013/. September 25-27, 2013, Omni Hotel, Los Angeles, California, USA.
The International Conference on Advanced Computing and Communications (ACC-2013) provides an international forum for presentation and discussion of research on a variety of aspects of advanced computing and its applications, and communication and networking systems. ACC-2013 will feature contributed as well as invited papers in all aspects of advanced computing and communications and will include a BEST PAPER AWARD given to a paper presented at the conference. Important Dates: May 5, 2013: Special Sessions Proposal [[Papers on current FAUST Cluster (functional-gap based) and on revisions to FAUST Classification.]] June 5, 2013: Full Paper Submission [[Who will lead what?]] July 5, 2013: Author Notification. Aug. 5, 2013: Advance Registration & Camera-Ready Paper Due.

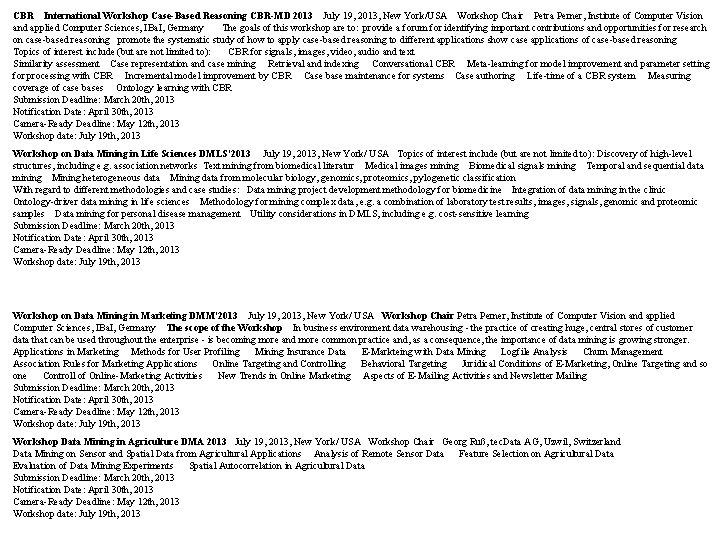

CBR International Workshop on Case-Based Reasoning, CBR-MD 2013, July 19, 2013, New York, USA. Workshop Chair: Petra Perner, Institute of Computer Vision and applied Computer Sciences, IBaI, Germany. The goals of this workshop are to: provide a forum for identifying important contributions and opportunities for research on case-based reasoning; promote the systematic study of how to apply case-based reasoning to different applications; showcase applications of case-based reasoning. Topics of interest include (but are not limited to): CBR for signals, images, video, audio and text; Similarity assessment; Case representation and case mining; Retrieval and indexing; Conversational CBR; Meta-learning for model improvement and parameter setting for processing with CBR; Incremental model improvement by CBR; Case base maintenance for systems; Case authoring; Life-time of a CBR system; Measuring coverage of case bases; Ontology learning with CBR. Submission Deadline: March 20th, 2013. Notification Date: April 30th, 2013. Camera-Ready Deadline: May 12th, 2013. Workshop date: July 19th, 2013.

Workshop on Data Mining in Life Sciences, DMLS'2013, July 19, 2013, New York, USA. Topics of interest include (but are not limited to): Discovery of high-level structures, including e.g. association networks; Text mining from biomedical literature; Medical images mining; Biomedical signals mining; Temporal and sequential data mining; Mining heterogeneous data; Mining data from molecular biology, genomics, proteomics, phylogenetic classification. With regard to different methodologies and case studies: Data mining project development methodology for biomedicine; Integration of data mining in the clinic; Ontology-driven data mining in life sciences; Methodology for mining complex data, e.g. a combination of laboratory test results, images, signals, genomic and proteomic samples; Data mining for personal disease management; Utility considerations in DMLS, including e.g. cost-sensitive learning. Submission Deadline: March 20th, 2013. Notification Date: April 30th, 2013. Camera-Ready Deadline: May 12th, 2013. Workshop date: July 19th, 2013.

Workshop on Data Mining in Marketing, DMM'2013, July 19, 2013, New York, USA. Workshop Chair: Petra Perner, Institute of Computer Vision and applied Computer Sciences, IBaI, Germany. The scope of the Workshop: in the business environment, data warehousing (the practice of creating huge, central stores of customer data that can be used throughout the enterprise) is becoming more and more common practice and, as a consequence, the importance of data mining is growing stronger. Topics: Applications in Marketing; Methods for User Profiling; Mining Insurance Data; E-Marketing with Data Mining; Logfile Analysis; Churn Management; Association Rules for Marketing Applications; Online Targeting and Controlling; Behavioral Targeting; Juridical Conditions of E-Marketing, Online Targeting and so on; Control of Online-Marketing Activities; New Trends in Online Marketing; Aspects of E-Mailing Activities and Newsletter Mailing. Submission Deadline: March 20th, 2013. Notification Date: April 30th, 2013. Camera-Ready Deadline: May 12th, 2013. Workshop date: July 19th, 2013.

Workshop on Data Mining in Agriculture, DMA 2013, July 19, 2013, New York, USA. Workshop Chair: Georg Ruß, tec.Data AG, Uzwil, Switzerland. Topics: Data Mining on Sensor and Spatial Data from Agricultural Applications; Analysis of Remote Sensor Data; Feature Selection on Agricultural Data; Evaluation of Data Mining Experiments; Spatial Autocorrelation in Agricultural Data. Submission Deadline: March 20th, 2013. Notification Date: April 30th, 2013. Camera-Ready Deadline: May 12th, 2013. Workshop date: July 19th, 2013.

![Finding the best [or a good] unit vector, d, for the Dot Product functional](http://slidetodoc.com/presentation_image_h/f0c33da01225996b36d0097f29545e7f/image-10.jpg)

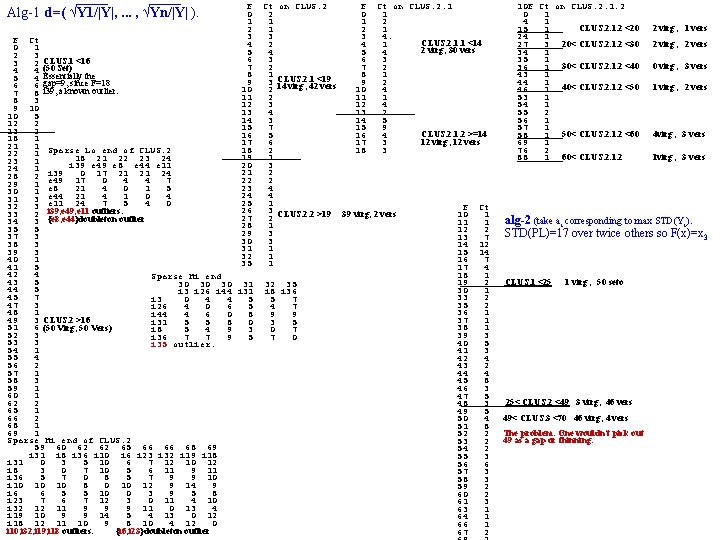

Finding the best [or a good] unit vector, d, for the Dot Product functional, DPP. Let X be the N × n table with rows x_1, …, x_N and columns X_1, …, X_n, and let $X\circ d$ be the vector resulting from the matrix multiplication of X with $d = (d_1, \dots, d_n)$, so $(X\circ d)_i = x_i\circ d$. If an overbar means column-wise average, then

$$\mathrm{VarDPP}_d(X) = \overline{(X\circ d)^2} - \big(\overline{X\circ d}\big)^2 \quad\text{subject to}\quad \sum_{i=1}^{n} d_i^2 = 1,$$

and expanding the squares gives

$$\begin{aligned}
\mathrm{VarDPP}_d(X) &= \frac{1}{N}\sum_{i=1}^{N}\Big(\sum_{j=1}^{n} x_{i,j}d_j\Big)^2 - \Big(\sum_{j=1}^{n}\overline{X_j}\,d_j\Big)^2\\
&= \frac{1}{N}\sum_{i=1}^{N}\Big(\sum_{j=1}^{n} x_{i,j}^2d_j^2 + 2\sum_{j<k} x_{i,j}x_{i,k}d_jd_k\Big) - \Big(\sum_{j=1}^{n}\overline{X_j}^{\,2}d_j^2 + 2\sum_{j<k}\overline{X_j}\,\overline{X_k}\,d_jd_k\Big)\\
&= \sum_{j=1}^{n}\big(\overline{X_j^2} - \overline{X_j}^{\,2}\big)d_j^2 + 2\sum_{j<k}\big(\overline{X_jX_k} - \overline{X_j}\,\overline{X_k}\big)d_jd_k\\
&= V_X\circ dd,
\end{aligned}$$

where $V_X$ is the n × n matrix with entries $V_X[i,j] = \overline{X_iX_j} - \overline{X_i}\,\overline{X_j}$, $dd$ is the n × n matrix with entries $dd[i,j] = d_id_j$, and $\circ$ between two matrices is the entrywise dot product (so $V_X\circ dd = d^{\mathsf T}V_Xd$).

Alg 1 (heuristic): Compute $Y \equiv \big(\overline{X_1^2} - \overline{X_1}^{\,2}, \dots, \overline{X_n^2} - \overline{X_n}^{\,2}\big)$ using pTrees only. The unit vector $A \equiv (a_1, \dots, a_n)$ that maximizes $Y\circ A$ is $A = Y/|Y|$; taking $A = d^2$ (componentwise) gives $d = \big(\sqrt{Y_1/|Y|}, \dots, \sqrt{Y_n/|Y|}\big)$. This assumes the second term (a covariance?) is close to 0. Since $\sum_j d_j^2 = \sum_j Y_j/|Y| \ge 1$, this d has to be rescaled to unit length. We might want to go through an outlier removal step, then calculate d, and then cluster using d-gap analysis (next slide).

Alg 2 (heuristic): Find the maximum $a_k$. Set $d_k = 1$ and the others to zero. (We have done this when using $e_k$ with max std.)

Alg 3 (optimum): Of course we would prefer an algorithm that finds the d producing the maximum VarDPP. Approach 1: View the n × n matrices $V_X$ and $dd$ as $n^2$-vectors. Then $V = V_X\circ dd = \sum_{i,j} V_X[i,j]\,dd[i,j]$, and the dd vector that gives the maximum V is $V_X/|V_X|$. So we want the d such that dd forms the minimum angle with $V_X$ (maximum cosine), i.e., we want to maximize $\cos(d) = V_X\circ dd/(|V_X|\,|dd|)$. $|V_X|$ is constant and $|dd| = |d|^2 = 1$ (dd is a unit vector iff d is a unit vector), so we maximize $F(d) = V_X\circ dd$.
The optimum d may be the one shown in Alg 1, because it is the square root of the diagonal of $V_X/|V_X|$, and I believe $V_X$ still has at most n degrees of freedom (it generates an n-dimensional subspace of $\mathbb{R}^{n^2}$) and is therefore a subset of the n-dimensional space generated by its diagonal elements (or their square roots).
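The derivation above says that the variance of the projections is the quadratic form $d^{\mathsf T}V_Xd$. The sketch below checks this on a small made-up data set (not from the slides) and compares Alg 1, Alg 2 and the exact optimum. Plain loops stand in for the pTree computations, and all function names are hypothetical. For the optimum it swaps in a standard technique rather than the slide's Approach 1: maximizing $d^{\mathsf T}V_Xd$ over unit vectors is a Rayleigh-quotient problem, and its maximizer is the leading eigenvector of $V_X$, found here by power iteration. Alg 1 is rescaled to unit length as noted above.

```python
import math


def column_stats(X):
    """Column means and VX[j][k] = mean(Xj*Xk) - mean(Xj)*mean(Xk)."""
    N, n = len(X), len(X[0])
    mean = [sum(row[j] for row in X) / N for j in range(n)]
    VX = [[sum(row[j] * row[k] for row in X) / N - mean[j] * mean[k]
           for k in range(n)] for j in range(n)]
    return mean, VX


def var_dpp(VX, d):
    """VarDPP_d(X) = VX o dd = sum over j, k of VX[j][k] * d[j] * d[k]."""
    n = len(d)
    return sum(VX[j][k] * d[j] * d[k] for j in range(n) for k in range(n))


def alg1(VX):
    """Alg 1: d_j = sqrt(Y_j/|Y|) with Y = diag(VX), then rescaled to unit length."""
    Y = [max(VX[j][j], 0.0) for j in range(len(VX))]  # clamp tiny negative round-off
    norm_y = math.sqrt(sum(y * y for y in Y))
    d = [math.sqrt(y / norm_y) for y in Y]
    norm_d = math.sqrt(sum(x * x for x in d))
    return [x / norm_d for x in d]


def alg2(VX):
    """Alg 2: the axis e_k of the column with the largest variance."""
    k = max(range(len(VX)), key=lambda j: VX[j][j])
    return [1.0 if j == k else 0.0 for j in range(len(VX))]


def optimum(VX, iters=500):
    """Unit d maximizing d^T VX d: the leading eigenvector of VX, by power iteration.
    VX is positive semidefinite, so its dominant eigenvalue is also its largest."""
    n = len(VX)
    d = [1.0 / math.sqrt(n)] * n  # assumes this start is not orthogonal to the answer
    for _ in range(iters):
        w = [sum(VX[j][k] * d[k] for k in range(n)) for j in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break
        d = [x / norm for x in w]
    return d


# Made-up 4 x 2 data set, for illustration only.
X = [[1.0, 1.0], [2.0, 3.0], [3.0, 2.0], [4.0, 5.0]]
mean, VX = column_stats(X)
for name, d in [("Alg 1", alg1(VX)), ("Alg 2", alg2(VX)), ("optimum", optimum(VX))]:
    print(name, [round(x, 3) for x in d], round(var_dpp(VX, d), 4))
```

On this toy data Alg 1 gives a variance of about 3.169, Alg 2 gives 2.1875, and the optimum is about 3.171, so here the diagonal-only heuristic comes close despite the nonzero covariance term.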

Alg 1 on IRIS, with d = (√(Y_1/|Y|), …, √(Y_n/|Y|)).

F value counts (F:count): 0:1, 2:3, 3:2, 4:4, 5:4, 6:6, 7:8, 8:3, 9:10, 10:5, 12:2, 13:2, 18:1, 21:1, 22:1, 23:1, 24:1, 28:2, 29:1, 30:1, 31:3, 32:3, 33:2, 34:3, 35:5, 37:3, 38:3, 39:3, 40:1, 41:5, 42:4, 43:5, 44:5, 45:7, 47:3, 48:1, 49:3, 51:6, 52:3, 53:3, 54:1, 55:4, 56:2, 57:1, 58:3, 59:1, 60:1, 62:2, 65:1, 66:2, 68:1, 69:1.

- CLUS.1 (F < 16): 50 Setosa. Essentially the gap is 9, since F = 18 is i39, a known outlier.
- CLUS.2 (F > 16): 50 Virginica, 50 Versicolor.

Sparse low end of CLUS.2 (F = 18, 21, 22, 23, 24), pairwise distances:

| | i39 | e49 | e8 | e44 | e11 |
|---|---|---|---|---|---|
| i39 | 0 | 17 | 21 | 21 | 24 |
| e49 | 17 | 0 | 4 | 4 | 7 |
| e8 | 21 | 4 | 0 | 1 | 5 |
| e44 | 21 | 4 | 1 | 0 | 4 |
| e11 | 24 | 7 | 5 | 4 | 0 |

i39, e49, e11 are outliers; {e8, e44} is a doubleton outlier.

Sparse high end of CLUS.2 (F = 59, 60, 62, 62, 65, 66, 66, 68, 69), pairwise distances:

| | i31 | i8 | i36 | i10 | i6 | i23 | i32 | i19 | i18 |
|---|---|---|---|---|---|---|---|---|---|
| i31 | 0 | 3 | 5 | 10 | 6 | 7 | 12 | 10 | 12 |
| i8 | 3 | 0 | 7 | 10 | 5 | 6 | 11 | 9 | 11 |
| i36 | 5 | 7 | 0 | 8 | 5 | 7 | 9 | 9 | 10 |
| i10 | 10 | 10 | 8 | 0 | 10 | 12 | 9 | 14 | 9 |
| i6 | 6 | 5 | 5 | 10 | 0 | 3 | 9 | 5 | 8 |
| i23 | 7 | 6 | 7 | 12 | 3 | 0 | 11 | 4 | 10 |
| i32 | 12 | 11 | 9 | 9 | 9 | 11 | 0 | 13 | 4 |
| i19 | 10 | 9 | 9 | 14 | 5 | 4 | 13 | 0 | 12 |
| i18 | 12 | 11 | 10 | 9 | 8 | 10 | 4 | 12 | 0 |

i10, i32, i19, i18 are outliers; {i6, i23} is a doubleton outlier.

Second round, on CLUS.2. F value counts for F = 0, 1, …, 32, 35: 2 1 1 3 2 4 3 2 1 3 2 2 3 4 3 7 5 6 2 1 3 2 2 4 4 1 3 2 1 3 3 1 1 1.

- CLUS.2.1 (F < 19): 14 Virginica, 42 Versicolor.
- CLUS.2.2 (F > 19). Its sparse high end (F = 30, 30, 30, 31, 32, 35), pairwise distances:

| | i3 | i26 | i44 | i31 | i8 | i36 |
|---|---|---|---|---|---|---|
| i3 | 0 | 4 | 4 | 5 | 5 | 7 |
| i26 | 4 | 0 | 6 | 5 | 4 | 7 |
| i44 | 4 | 6 | 0 | 8 | 9 | 9 |
| i31 | 5 | 5 | 8 | 0 | 3 | 5 |
| i8 | 5 | 4 | 9 | 3 | 0 | 7 |
| i36 | 7 | 7 | 9 | 5 | 7 | 0 |

i35 outlier.

Third round, on CLUS.2.1:

- CLUS.2.1.1 (F < 14): 2 Virginica, 30 Versicolor.
- CLUS.2.1.2 (F ≥ 14): 12 Virginica, 12 Versicolor.

Fourth round, on CLUS.2.1.2. F value counts: 0:1, 4:1, 15:1, 24:1, 27:3, 34:1, 35:1, 36:1, 43:1, 44:1, 46:1, 53:1, 54:1, 55:2, 56:1, 57:1, 58:1, 69:1, 76:2, 88:1. Composition by F range:

| F range | Virginica | Versicolor |
|---|---|---|
| F < 20 | 2 | 1 |
| 20 ≤ F < 30 | 2 | 2 |
| 30 ≤ F < 40 | 0 | 3 |
| 40 ≤ F < 50 | 1 | 2 |
| 50 ≤ F < 60 | 4 | 3 |
| F ≥ 60 | 1 | 3 |

Alg 2 (take the a_i corresponding to the max STD(Y_i)): STD(PL) = 17, over twice the others, so F(x) = x_3 (petal length).

F value counts (F:count): 10:1, 11:1, 12:2, 13:7, 14:12, 15:14, 16:7, 17:4, 18:1, 19:2, 30:1, 33:2, 35:2, 36:1, 37:1, 38:1, 39:3, 40:5, 41:3, 42:4, 43:2, 44:4, 45:8, 46:3, 47:5, 48:3, 49:5, 50:4, 51:8, 52:2, 53:2, 54:2, 55:3, 56:6, 57:3, 58:3, 59:2, 60:2, 61:3, 63:1, 64:1, 66:1, 67:2.

- CLUS.1 (F < 25): 1 Virginica, 50 Setosa.
- CLUS.2 (25 < F < 49): 3 Virginica, 46 Versicolor.
- CLUS.3 (49 < F < 70): 46 Virginica, 4 Versicolor.

The problem: one wouldn't pick out 49 as a gap or thinning.
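The cuts above are read off the F histograms by looking for gaps. A minimal sketch of that step, assuming a "gap" simply means two consecutive occupied F values that are at least `min_gap` apart (a hypothetical parameter; the slides also weigh thinnings and sparse ends, which this does not model), applied to the first Alg 1 histogram copied from above:

```python
# F value -> count for the first Alg 1 round on IRIS (copied from the histogram above).
ALG1_COUNTS = {
    0: 1, 2: 3, 3: 2, 4: 4, 5: 4, 6: 6, 7: 8, 8: 3, 9: 10, 10: 5, 12: 2, 13: 2,
    18: 1, 21: 1, 22: 1, 23: 1, 24: 1, 28: 2, 29: 1, 30: 1, 31: 3, 32: 3, 33: 2,
    34: 3, 35: 5, 37: 3, 38: 3, 39: 3, 40: 1, 41: 5, 42: 4, 43: 5, 44: 5, 45: 7,
    47: 3, 48: 1, 49: 3, 51: 6, 52: 3, 53: 3, 54: 1, 55: 4, 56: 2, 57: 1, 58: 3,
    59: 1, 60: 1, 62: 2, 65: 1, 66: 2, 68: 1, 69: 1,
}


def gap_cuts(counts, min_gap):
    """Cut points halfway across every gap of width >= min_gap between occupied F values."""
    occupied = sorted(f for f, c in counts.items() if c > 0)
    return [(lo + hi) / 2 for lo, hi in zip(occupied, occupied[1:]) if hi - lo >= min_gap]


def interval_totals(counts, cuts):
    """Number of rows in each interval delimited by the (sorted) cut points."""
    totals = [0] * (len(cuts) + 1)
    for f, c in counts.items():
        totals[sum(1 for cut in cuts if f > cut)] += c
    return totals


cuts = gap_cuts(ALG1_COUNTS, min_gap=5)
print(cuts, interval_totals(ALG1_COUNTS, cuts))  # [15.5] [50, 100]
```

With `min_gap=5` the only cut lands at 15.5, which reproduces the CLUS.1 (F < 16, 50 Setosa) versus CLUS.2 (100 rows) split; lowering the threshold to 4 would also cut at 26 inside CLUS.2.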

![Functional Gap Clustering using Fpq(x)=RND[(x-p)o(q-p)/|q-p|-min. F] on a Spaeth image (p=mean)](http://slidetodoc.com/presentation_image_h/f0c33da01225996b36d0097f29545e7f/image-12.jpg)

Functional Gap Clustering using $F_{pq}(x) = \mathrm{RND}\big[(x-p)\circ(q-p)/|q-p| - \min F\big]$ on a Spaeth image (p = mean)

X is a 15-point Spaeth image, z1, …, z9, za, …, zf, with coordinates (x1, x2). There are 15 Value_Arrays and 15 Count_Arrays, one for each q = z1, z2, z3, …. The level-0, stride = z1 point set is kept as a pTree mask.

The FAUST algorithm:
1. Project onto each pq line using the dot product with the unit vector from p to q.
2. Generate the Value_Arrays (also generate the Count_Arrays and the mask pTrees).
3. Analyze all gaps and create sub-cluster pTree masks.

With p = MN (the mean) and q = z1, the gaps split X into 3 z1-clusters, whose pTree masks are obtained by ORing.
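The three FAUST steps can be sketched for one pq line as follows. This is an illustration, not the slides' pTree implementation: Python ints serve as bit masks in place of pTree masks, the function names are hypothetical, and the gap threshold `min_gap=3` is an assumed value. The Spaeth coordinates come from this slide's (x1, x2) list, except zc = (11, 10) and zd = (9, 11), which were partly lost in extraction and are inferred because they reproduce the F values and the pairwise distance table on the Gap Revealer slide.

```python
import math

# Spaeth points z1..zf; zc = (11, 10) and zd = (9, 11) are inferred (see lead-in).
Z = [(1, 1), (3, 1), (2, 2), (3, 3), (6, 2), (9, 3), (15, 1), (14, 2), (15, 3),
     (13, 4), (10, 9), (11, 10), (9, 11), (11, 11), (7, 8)]


def project_pq(points, p, q):
    """Step 1: F_pq(x) = RND[(x - p) o (q - p)/|q - p| - min F] for every point x."""
    u = [qi - pi for qi, pi in zip(q, p)]
    norm = math.sqrt(sum(c * c for c in u))
    raw = [sum((xi - pi) * ui for xi, pi, ui in zip(x, p, u)) / norm for x in points]
    low = min(raw)
    return [round(f - low) for f in raw]


def count_array(F):
    """Step 2: Value_Array (distinct F values, ascending) and the matching Count_Array."""
    values = sorted(set(F))
    return values, [F.count(v) for v in values]


def gap_masks(F, min_gap):
    """Step 3: split at every gap >= min_gap in the Value_Array. Each sub-cluster is an
    int bit mask (bit i set when point i belongs), standing in for a pTree mask."""
    values, _ = count_array(F)
    groups = [{values[0]}]
    for lo, hi in zip(values, values[1:]):
        if hi - lo >= min_gap:
            groups.append(set())
        groups[-1].add(hi)
    masks = []
    for group in groups:
        mask = 0
        for i, f in enumerate(F):
            if f in group:
                mask |= 1 << i  # OR point i into this sub-cluster's mask
        masks.append(mask)
    return masks


MEAN = [sum(c) / len(Z) for c in zip(*Z)]
F = project_pq(Z, MEAN, Z[0])  # p = mean, q = z1
print(F)
print(count_array(F))
print([format(m, "015b") for m in gap_masks(F, min_gap=3)])
```

Under these assumptions the line from the mean toward z1 yields three sub-clusters, {z7, …, ze}, {z6, zf} and {z1, …, z5}, and ORing the three masks gives back the full point set.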

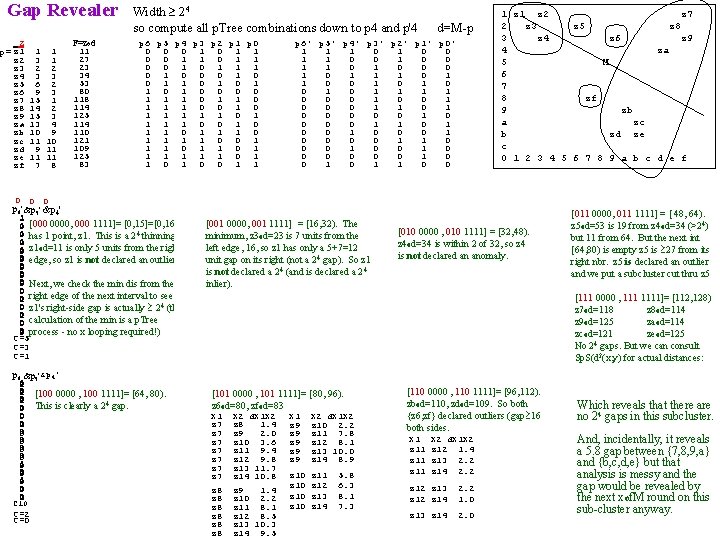

Gap Revealer. Z = {z1, …, zf} with p = z1:

z1 (1,1), z2 (3,1), z3 (2,2), z4 (3,3), z5 (6,2), z6 (9,3), z7 (15,1), z8 (14,2), z9 (15,3), za (13,4), zb (10,9), zc (11,10), zd (9,11), ze (11,11), zf (7,8).

With d = M − p, F = z∘d takes the values 11, 27, 23, 34, 53, 80, 118, 114, 125, 114, 110, 121, 109, 125, 83 for z1, …, zf. The gap width of interest is 2^4 (the F range has width 2^7), so compute all pTree combinations of the bit slices p6, p5, p4 and their complements p6', p5', p4' (C is the number of points in each interval):

- p6'p5'p4': [000 0000, 000 1111] = [0, 16), C = 1. This is a 2^4 thinning: it has 1 point, z1. z1∘d = 11 is only 5 units from the right edge, so z1 is not (yet) declared an outlier. Next, we check the minimum distance from the left edge of the next interval to see whether z1's right-side gap is actually 2^4 (the calculation of the min is a pTree process, no x looping required!).
- p6'p5'p4: [001 0000, 001 1111] = [16, 32), C = 2. The minimum, z3∘d = 23, is 7 units from the left edge, 16, so z1 has only a 5 + 7 = 12 unit gap on its right (not a 2^4 gap). So z1 is not declared a 2^4 outlier (it is declared an inlier).
- p6'p5p4': [010 0000, 010 1111] = [32, 48), C = 1. z4∘d = 34 is within 2 of 32, so z4 is not declared an anomaly.
- p6'p5p4: [011 0000, 011 1111] = [48, 64), C = 1. z5∘d = 53 is 19 from z4∘d = 34 (> 2^4) but 11 from 64. But the next interval, [64, 80), is empty, so z5 is 27 from its right neighbor. z5 is declared an outlier and we put a subcluster cut through z5.
- p6p5'p4': [100 0000, 100 1111] = [64, 80), C = 0. This is clearly a 2^4 gap.
- p6p5'p4: [101 0000, 101 1111] = [80, 96), C = 2: z6∘d = 80, zf∘d = 83.
- p6p5p4': [110 0000, 110 1111] = [96, 112), C = 2: zb∘d = 110, zd∘d = 109. So both of {z6, zf} are declared outliers (a gap of at least 16 on both sides).
- p6p5p4: [111 0000, 111 1111] = [112, 128), C = 6: z7∘d = 118, z8∘d = 114, z9∘d = 125, za∘d = 114, zc∘d = 121, ze∘d = 125. No 2^4 gaps. But we can consult SpS(d²(x, y)) for actual distances:

| | z8 | z9 | za | zb | zc | zd | ze |
|---|---|---|---|---|---|---|---|
| z7 | 1.4 | 2.0 | 3.6 | 9.4 | 9.8 | 11.7 | 10.8 |
| z8 | | 1.4 | 2.2 | 8.1 | 8.5 | 10.3 | 9.5 |
| z9 | | | 2.2 | 7.8 | 8.1 | 10.0 | 8.9 |
| za | | | | 5.8 | 6.3 | 8.1 | 7.3 |
| zb | | | | | 1.4 | 2.2 | 2.2 |
| zc | | | | | | 2.2 | 1.0 |
| zd | | | | | | | 2.0 |

This reveals that there are no 2^4 gaps in this subcluster. And, incidentally, it reveals a 5.8 gap between {z7, z8, z9, za} and {zb, zc, zd, ze}, but that analysis is messy, and the gap would be revealed by the next x∘M round on this sub-cluster anyway.
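The interval walk above can be mimicked with vertical bit slices. In the sketch below, lists of 0/1 bits play the role of the pTrees p6 … p0, and each 2^4-wide interval mask is the AND of p_b or its complement for b = 6, 5, 4. The per-interval min and max are computed with ordinary Python loops, whereas the slide computes them as pTree operations; function names are hypothetical, and zc, zd are taken as (11, 10) and (9, 11) as in the coordinate list above.

```python
# Spaeth points z1..zf; p = z1, d = M - p, F = round(z o d).
Z = [(1, 1), (3, 1), (2, 2), (3, 3), (6, 2), (9, 3), (15, 1), (14, 2), (15, 3),
     (13, 4), (10, 9), (11, 10), (9, 11), (11, 11), (7, 8)]
M = [sum(c) / len(Z) for c in zip(*Z)]
d = [m - z for m, z in zip(M, Z[0])]
F = [round(sum(zi * di for zi, di in zip(z, d))) for z in Z]


def bit_slices(F, nbits):
    """slices[b][i] = bit b of F[i]: the vertical bit slices p_b."""
    return [[(f >> b) & 1 for f in F] for b in range(nbits)]


def interval_mask(slices, k, nbits, width_bits):
    """AND p_b (or its complement p_b') for b >= width_bits, so the result marks the
    points with F in [k * 2**width_bits, (k + 1) * 2**width_bits)."""
    mask = [1] * len(slices[0])
    for b in range(width_bits, nbits):
        want = (k >> (b - width_bits)) & 1
        mask = [m & (s if want else 1 - s) for m, s in zip(mask, slices[b])]
    return mask


def interval_spans(F, nbits=7, width_bits=4):
    """(count C, min F, max F) for each of the 2**(nbits - width_bits) intervals."""
    slices = bit_slices(F, nbits)
    spans = []
    for k in range(1 << (nbits - width_bits)):
        members = [f for f, m in zip(F, interval_mask(slices, k, nbits, width_bits)) if m]
        spans.append((len(members), min(members, default=None), max(members, default=None)))
    return spans


def wide_gaps(F, nbits=7, width_bits=4):
    """Gaps >= 2**width_bits between consecutive F values. An interval is only
    2**width_bits wide, so such a gap can only sit between two nonempty intervals."""
    occupied = [(lo, hi) for c, lo, hi in interval_spans(F, nbits, width_bits) if c]
    return [(a[1], b[0]) for a, b in zip(occupied, occupied[1:])
            if b[0] - a[1] >= 1 << width_bits]


print(F)
print([c for c, _, _ in interval_spans(F)])
print(wide_gaps(F))
```

The computed F values match the slide's, the interval counts match the C values listed above, and the three reported gaps isolate z5 and {z6, zf}, in agreement with the slide's conclusions.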

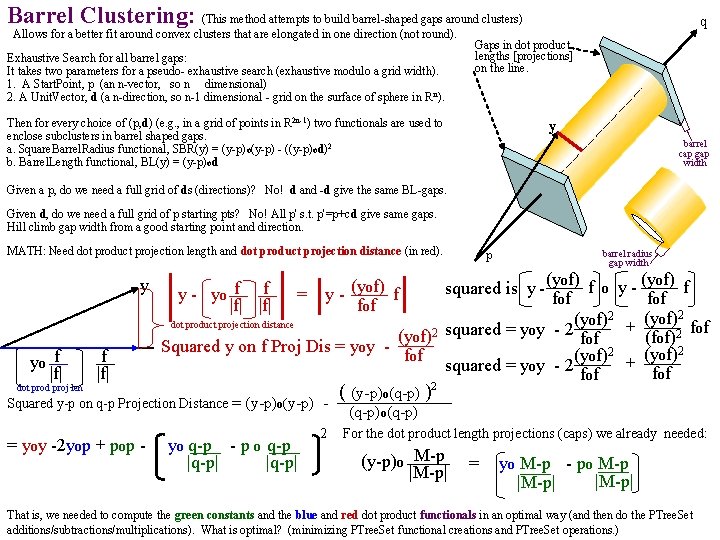

Barrel Clustering (this method attempts to build barrel-shaped gaps around clusters). It allows for a better fit around convex clusters that are elongated in one direction (not round).

Exhaustive search for all barrel gaps: it takes two parameters for a pseudo-exhaustive search (exhaustive modulo a grid width):
1. A StartPoint, p (an n-vector, so n-dimensional).
2. A UnitVector, d (an n-direction, so (n−1)-dimensional: a grid on the surface of the sphere in $\mathbb{R}^n$).

Then for every choice of (p, d) (e.g., in a grid of points in $\mathbb{R}^{2n-1}$), two functionals are used to enclose subclusters in barrel-shaped gaps:
a. SquareBarrelRadius functional, $SBR(y) = (y-p)\circ(y-p) - \big((y-p)\circ d\big)^2$; its gaps give the barrel radius gap width.
b. BarrelLength functional, $BL(y) = (y-p)\circ d$; gaps in these dot product lengths (projections on the line) give the barrel cap gap width.

Given a p, do we need a full grid of ds (directions)? No! d and −d give the same BL-gaps. Given d, do we need a full grid of p starting points? No! All p' such that p' = p + cd give the same gaps. Hill-climb the gap width from a good starting point and direction.

MATH: We need the dot product projection length and the dot product projection distance. The projection of y on a vector f is $\frac{y\circ f}{f\circ f}f$, so the squared projection distance is

$$\Big|y - \frac{y\circ f}{f\circ f}f\Big|^2 = y\circ y - 2\frac{(y\circ f)^2}{f\circ f} + \frac{(y\circ f)^2}{(f\circ f)^2}\,f\circ f = y\circ y - \frac{(y\circ f)^2}{f\circ f}.$$

Hence the squared projection distance of y − p on q − p is

$$(y-p)\circ(y-p) - \frac{\big((y-p)\circ(q-p)\big)^2}{(q-p)\circ(q-p)} = y\circ y - 2\,y\circ p + p\circ p - \frac{\big(y\circ(q-p) - p\circ(q-p)\big)^2}{|q-p|^2}.$$

For the dot product length projections (caps) we already needed

$$(y-p)\circ\frac{M-p}{|M-p|} = \frac{y\circ(M-p) - p\circ(M-p)}{|M-p|}.$$

That is, we needed to compute the constant terms (those involving only p, q and M, such as $p\circ p$, $p\circ(q-p)$ and $|q-p|^2$) and the dot product functionals in y in an optimal way (and then do the PTreeSet additions/subtractions/multiplications). What is optimal? (Minimizing PTreeSet functional creations and PTreeSet operations.)
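A minimal sketch of the two barrel functionals and of the expanded projection-distance formula, using plain Python lists in place of PTreeSets. The function names and the `in_barrel` helper (radius plus two cap positions) are hypothetical illustrations, not the slides' implementation; the expanded version only shows which terms are constants that can be computed once per (p, q).

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sub(a, b):
    return [x - y for x, y in zip(a, b)]


def barrel_functionals(y, p, d):
    """(SBR(y), BL(y)) for start point p and unit direction d."""
    v = sub(y, p)
    bl = dot(v, d)                  # BarrelLength: (y - p) o d
    return dot(v, v) - bl * bl, bl  # SquareBarrelRadius: |y - p|^2 - BL^2


def sq_proj_distance(y, p, q):
    """Squared distance from y to the line through p and q (q - p need not be unit)."""
    v, f = sub(y, p), sub(q, p)
    return dot(v, v) - dot(v, f) ** 2 / dot(f, f)


def sq_proj_distance_expanded(y, p, q):
    """Same value as y o y - 2 y o p + p o p - (y o (q-p) - p o (q-p))^2 / |q-p|^2."""
    f = sub(q, p)
    pp, pf, ff = dot(p, p), dot(p, f), dot(f, f)  # constants, once per (p, q)
    return dot(y, y) - 2 * dot(y, p) + pp - (dot(y, f) - pf) ** 2 / ff


def in_barrel(y, p, d, radius, lo, hi):
    """True if y lies inside the barrel of the given radius with caps at BL = lo and BL = hi."""
    sbr, bl = barrel_functionals(y, p, d)
    return sbr <= radius ** 2 and lo <= bl <= hi


print(barrel_functionals([3, 4], [0, 0], [1, 0]))  # (16, 3)
print(sq_proj_distance([5, 1], [1, 2], [4, 6]), sq_proj_distance_expanded([5, 1], [1, 2], [4, 6]))
```

Both projection-distance versions agree (about 14.44 here); the expanded one is the form that lets the constant terms be shared across all y.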

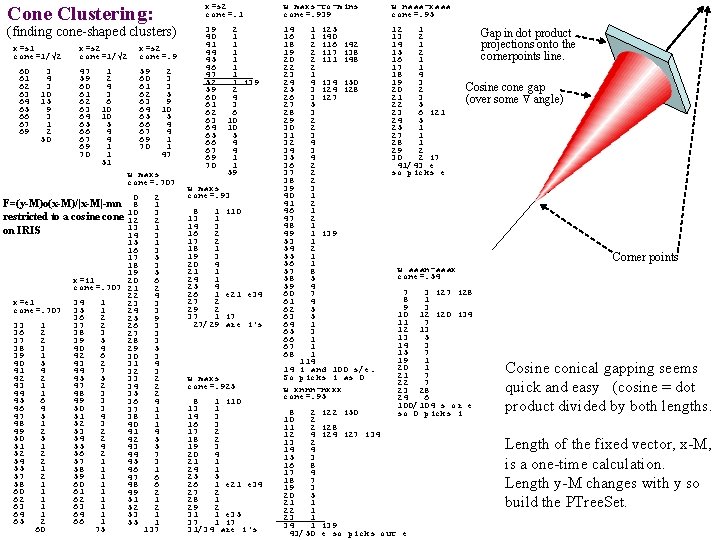

Cone Clustering (finding cone-shaped clusters)

On IRIS, use $F = (y-M)\circ(x-M)/|x-M| - mn$ restricted to a cosine cone: only points y whose cosine with x − M is at least the cone value are projected, and mn is the minimum F over the cone. The runs shown use these axes and cone values: x = s1 (cone = 1/√2); x = s2 (cone = 1/√2, .9, .1); x = i1 (cone = .707); x = e1 (cone = .707); w maxs (cone = .707, .93, .925); w maxs-to-mins (cone = .939); w naaa-xaaa (cone = .95); w xnnn-nxxx (cone = .95); w aaan-aaax (cone = .54). Each run yields a histogram of F values (figure not reproduced). Remarks recorded with the runs include: 41/43 are e, so it picks e; 27/29 are i's; 31/34 are i's; 114 points, 14 i and 100 s/e, so it picks i as 0; 43/50 are i; 100/104 are s or e, so 0 picks i.

Two kinds of gaps are used: gaps in the dot product projections onto the corner-points line, and cosine cone gaps (over some angle).

Cosine conical gapping seems quick and easy (cosine = dot product divided by both lengths). The length of the fixed vector, x − M, is a one-time calculation. The length of y − M changes with y, so build its PTreeSet.
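The cone-restricted functional can be sketched directly from the formula. This is an assumption-laden illustration: the points are made up, `cos_min` stands for the slide's "cone" value, the function name is hypothetical, and a loop over y replaces the PTreeSet for |y − M|. It also shows the cost split noted above, with |x − M| computed once and |y − M| recomputed per point.

```python
import math


def cone_projection(points, M, x, cos_min):
    """For each y with cos(y - M, x - M) >= cos_min, return (index, F), where
    F = (y - M) o (x - M)/|x - M| minus the smallest such value over the cone."""
    a = [xi - mi for xi, mi in zip(x, M)]
    len_a = math.sqrt(sum(c * c for c in a))  # fixed vector: one-time calculation
    kept = []
    for i, y in enumerate(points):
        v = [yi - mi for yi, mi in zip(y, M)]
        len_v = math.sqrt(sum(c * c for c in v))  # changes with y
        if len_v == 0.0:
            continue  # y = M has no direction
        proj = sum(vi * ai for vi, ai in zip(v, a)) / len_a
        if proj / len_v >= cos_min:  # cosine = dot product / both lengths
            kept.append((i, proj))
    if not kept:
        return []
    mn = min(f for _, f in kept)
    return [(i, f - mn) for i, f in kept]


P = [(2, 0), (1, 2), (0, 3), (-1, 0), (3, 1)]  # made-up points
print(cone_projection(P, (0, 0), (1, 0), 0.707))  # [(0, 0.0), (4, 1.0)]
```

Widening the cone (lowering `cos_min` to 0.4) also admits the point (1, 2), which then becomes the new minimum and shifts the other F values up by one.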

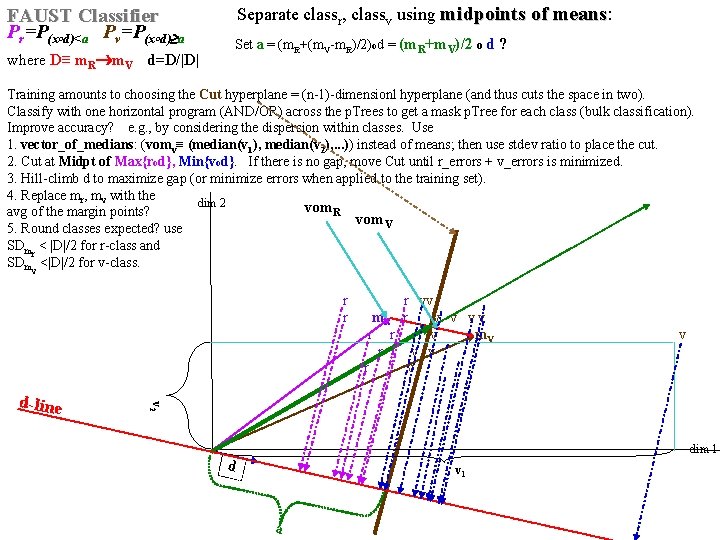

FAUST Classifier: separate class r and class v using the midpoint of the means.

$P_r = P(x\circ d < a)$ and $P_v = P(x\circ d \ge a)$, where $D \equiv m_R \to m_V = m_V - m_R$ and $d = D/|D|$. Set $a = \big(m_R + (m_V - m_R)/2\big)\circ d = \frac{m_R + m_V}{2}\circ d$.

Training amounts to choosing the cut hyperplane, an (n−1)-dimensional hyperplane (which thus cuts the space in two). Classify with one horizontal program (AND/OR) across the pTrees to get a mask pTree for each class (bulk classification).

Improve accuracy? E.g., by considering the dispersion within classes. Use:
1. vector_of_medians ($vom_v \equiv (\mathrm{median}(v_1), \mathrm{median}(v_2), \dots)$) instead of means; then use the stdev ratio to place the cut.
2. Cut at the midpoint of $\max\{r\circ d\}$ and $\min\{v\circ d\}$. If there is no gap, move the cut until r_errors + v_errors is minimized.
3. Hill-climb d to maximize the gap (or minimize errors when applied to the training set).
4. Replace $m_R$, $m_V$ with the average of the margin points?
5. Round classes expected? Use $SD_{m_R} < |D|/2$ for the r-class and $SD_{m_V} < |D|/2$ for the v-class.

[Figure: r and v training points in the (dim 1, dim 2) plane, with $m_R$, $m_V$, $vom_R$, $vom_V$, the d-line through the means, and the cut at a.]
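A minimal sketch of midpoint-of-means training, option 2's gap cut, and bulk classification. Boolean lists stand in for the class mask pTrees, the dot products are computed row by row rather than by a horizontal AND/OR program over pTrees, and the function names and the two small training classes are hypothetical, made up for illustration.

```python
import math


def mean(rows):
    return [sum(c) / len(rows) for c in zip(*rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def faust_train(R, V):
    """Midpoint-of-means cut: d = D/|D| with D = mV - mR, and a = ((mR + mV)/2) o d."""
    mR, mV = mean(R), mean(V)
    D = [v - r for r, v in zip(mR, mV)]
    norm = math.sqrt(dot(D, D))
    d = [c / norm for c in D]
    a = dot([(r + v) / 2 for r, v in zip(mR, mV)], d)
    return d, a


def gap_cut(R, V, d):
    """Option 2: midpoint of max{r o d} and min{v o d}, or None when they overlap on d."""
    hi_r, lo_v = max(dot(r, d) for r in R), min(dot(v, d) for v in V)
    return (hi_r + lo_v) / 2 if hi_r < lo_v else None


def faust_classify(X, d, a):
    """Bulk classification: masks P_r = [x o d < a] and P_v = [x o d >= a]."""
    P_r = [dot(x, d) < a for x in X]
    return P_r, [not b for b in P_r]


R = [(0, 0), (1, 0), (0, 1), (1, 1)]  # made-up r-class training rows
V = [(4, 4), (5, 4), (4, 5), (5, 5)]  # made-up v-class training rows
d, a = faust_train(R, V)
print(faust_classify([(0, 0), (5, 5), (2, 2), (3, 3)], d, a))
# ([True, False, True, False], [False, True, False, True])
```

For these symmetric, well-separated classes the midpoint-of-means cut and the option 2 gap cut coincide (both at about 3.54 along d); they differ once the classes have unequal spread, which is what the accuracy improvements listed above address.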

- Slides: 16