2020 11 28 Biostatistics for biomedical profession Lecture

Biostatistics for biomedical profession, Lecture II. BIMM 18. Karin Källen & Linda Hartman, September 2015

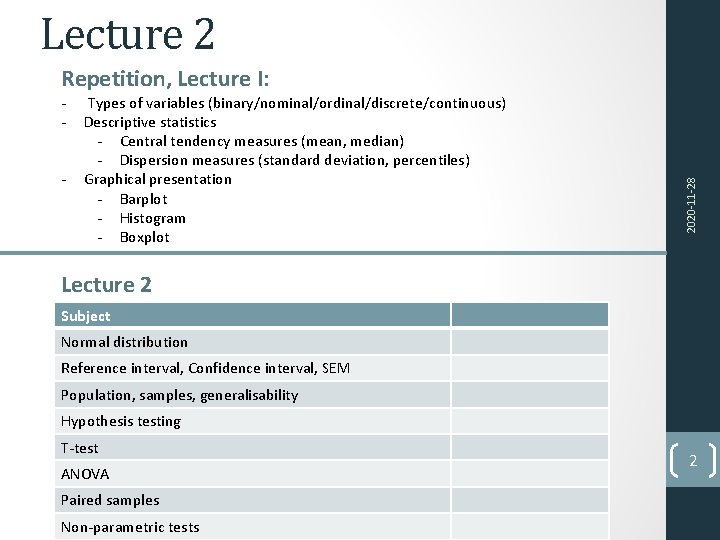

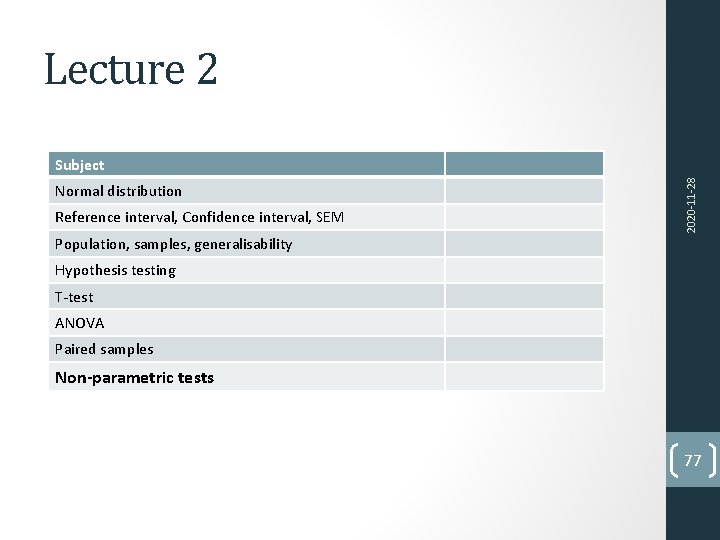

Repetition, Lecture I:

- Types of variables (binary/nominal/ordinal/discrete/continuous)
- Descriptive statistics
  - Central tendency measures (mean, median)
  - Dispersion measures (standard deviation, percentiles)
- Graphical presentation
  - Barplot
  - Histogram
  - Boxplot

Lecture 2:

- Normal distribution
- Reference interval, Confidence interval, SEM
- Population, samples, generalisability
- Hypothesis testing
- T-test
- ANOVA
- Paired samples
- Non-parametric tests


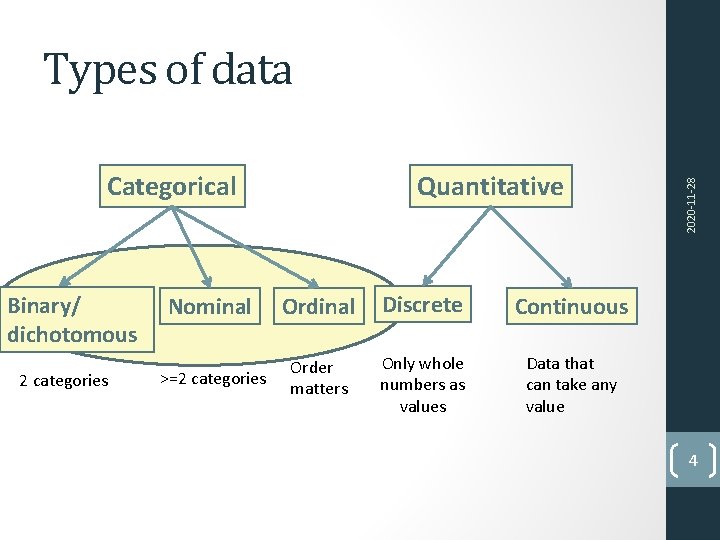

Types of data

- Categorical
  - Binary/dichotomous: 2 categories
  - Nominal: >=2 categories
  - Ordinal: order matters
- Quantitative
  - Discrete: only whole numbers as values
  - Continuous: data that can take any value


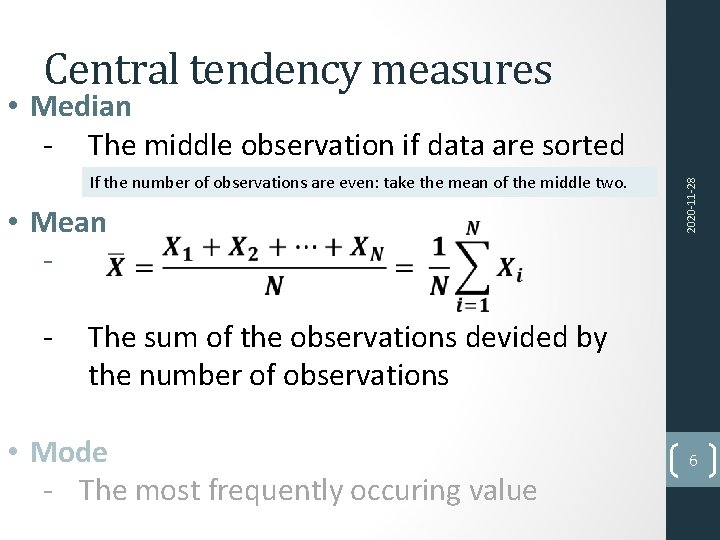

Central tendency measures

- Mean: the sum of the observations divided by the number of observations
- Median: the middle observation when the data are sorted. If the number of observations is even, take the mean of the middle two.
- Mode: the most frequently occurring value
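The three measures can be tried out with Python's standard `statistics` module. This is only a small sketch; the example numbers are made up for illustration and do not come from the lecture.

```python
import statistics

def central_tendency(values):
    """Return (mean, median, mode) for a list of numbers."""
    mean = statistics.mean(values)
    median = statistics.median(values)  # averages the two middle values when n is even
    mode = statistics.mode(values)      # the most frequently occurring value
    return mean, median, mode

# Hypothetical, positively skewed example data (not from the lecture)
data = [1, 2, 2, 3, 12]
mean, median, mode = central_tendency(data)
print("mean:", mean, "median:", median, "mode:", mode)

# Even number of observations: the median is the mean of the middle two
even_data = [1, 2, 3, 10]
print("median of even_data:", statistics.median(even_data))
```

Note how the single large value pulls the mean above the median in the skewed example.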

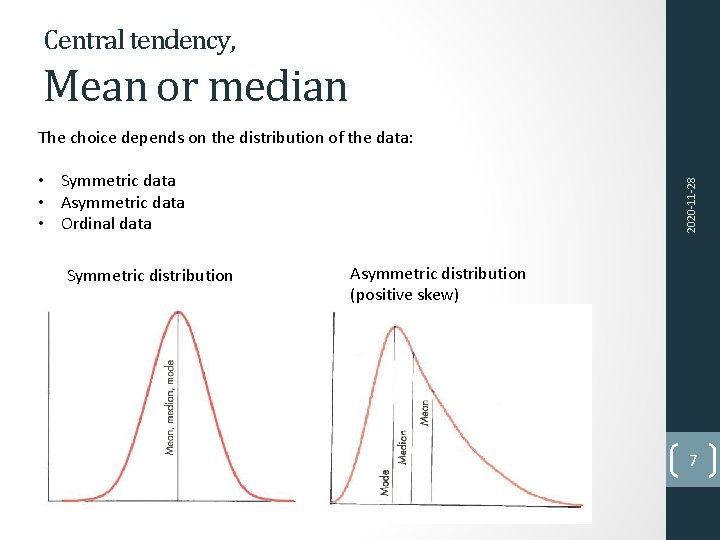

Central tendency: mean or median?

The choice depends on the distribution of the data:

- Symmetric data
- Asymmetric data
- Ordinal data

[Figure panels: symmetric distribution; asymmetric distribution (positive skew)]

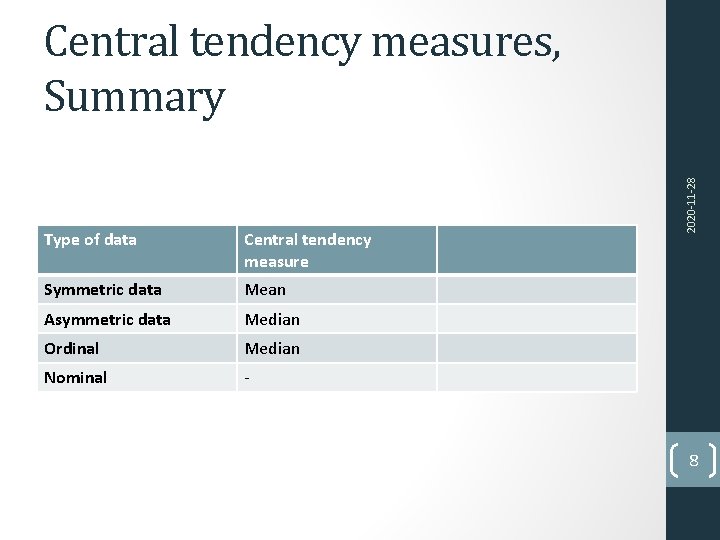

Central tendency measures, Summary

| Type of data | Central tendency measure |
|---|---|
| Symmetric data | Mean |
| Asymmetric data | Median |
| Ordinal | Median |
| Nominal | - |

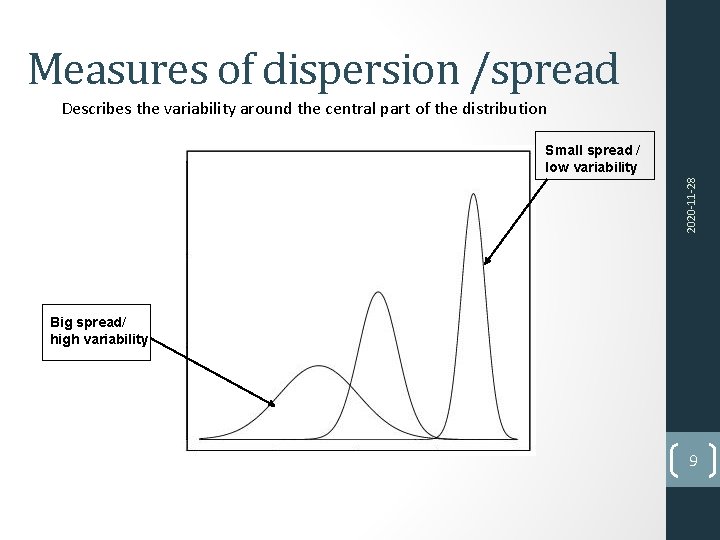

Measures of dispersion / spread

Describes the variability around the central part of the distribution.

[Figure panels: small spread / low variability; big spread / high variability]

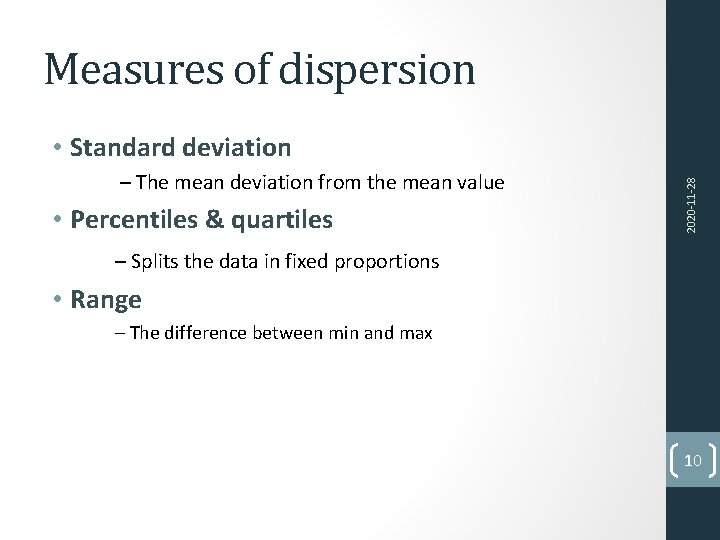

Measures of dispersion

- Standard deviation: the typical deviation from the mean value (the square root of the average squared deviation)
- Percentiles & quartiles: split the data in fixed proportions
- Range: the difference between min and max
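A minimal sketch of the three dispersion measures, again using Python's `statistics` module. The series 2 5 3 6 1 7 is the one used in a later exercise in these slides; the function name and dictionary keys are chosen here for illustration. Quartile values depend on the interpolation convention, so other software (e.g. SPSS) may report slightly different quartiles for small samples.

```python
import statistics

def dispersion(values):
    """Return standard deviation, quartiles, and range for a list of numbers."""
    sd = statistics.stdev(values)                          # sample SD (divides by n - 1)
    q_l, q_median, q_u = statistics.quantiles(values, n=4)  # the three quartile cut points
    value_range = max(values) - min(values)
    return {"sd": sd, "QL": q_l, "median": q_median, "QU": q_u, "range": value_range}

data = [2, 5, 3, 6, 1, 7]
summary = dispersion(data)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```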

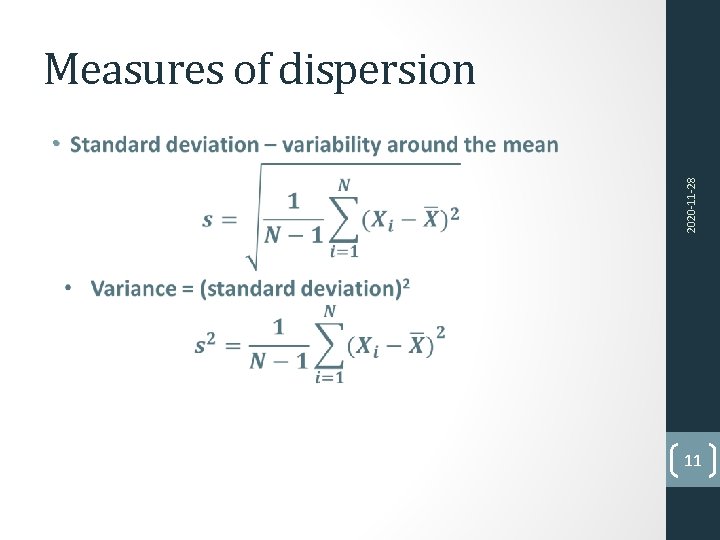

Measures of dispersion

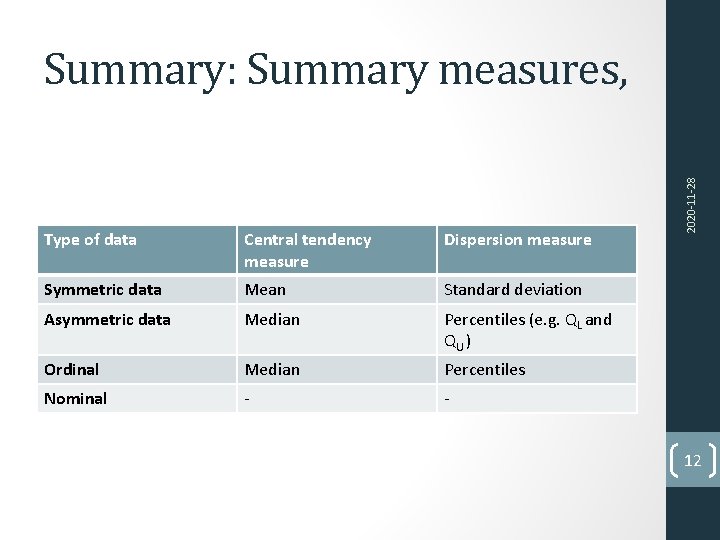

Summary: Summary measures

| Type of data | Central tendency measure | Dispersion measure |
|---|---|---|
| Symmetric data | Mean | Standard deviation |
| Asymmetric data | Median | Percentiles (e.g. QL and QU) |
| Ordinal | Median | Percentiles |
| Nominal | - | - |


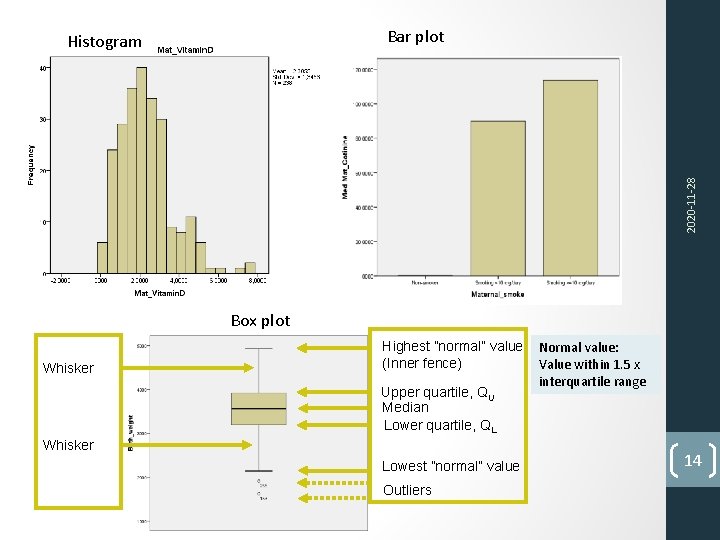

Bar plot, histogram, box plot

Box plot annotations:

- Whisker: highest "normal" value (inner fence)
- Upper quartile, QU
- Median
- Lower quartile, QL
- Whisker: lowest "normal" value
- Outliers

"Normal" value: a value no more than 1.5 x interquartile range above QU or below QL.


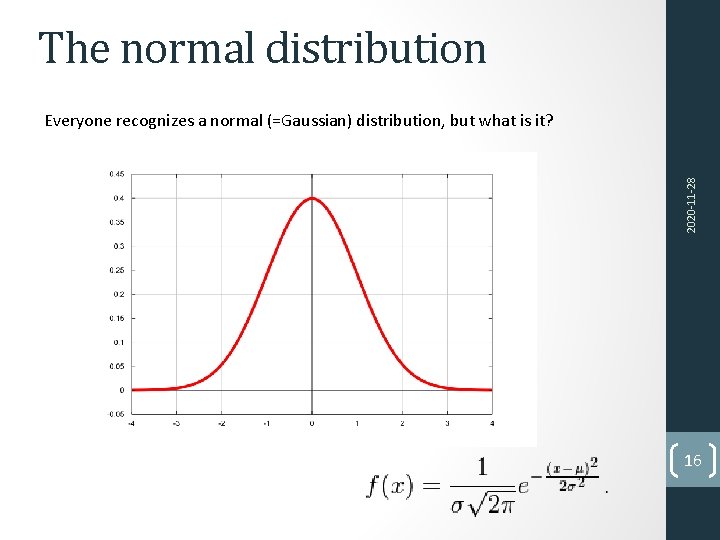

The normal distribution

Everyone recognizes a normal (= Gaussian) distribution, but what is it?

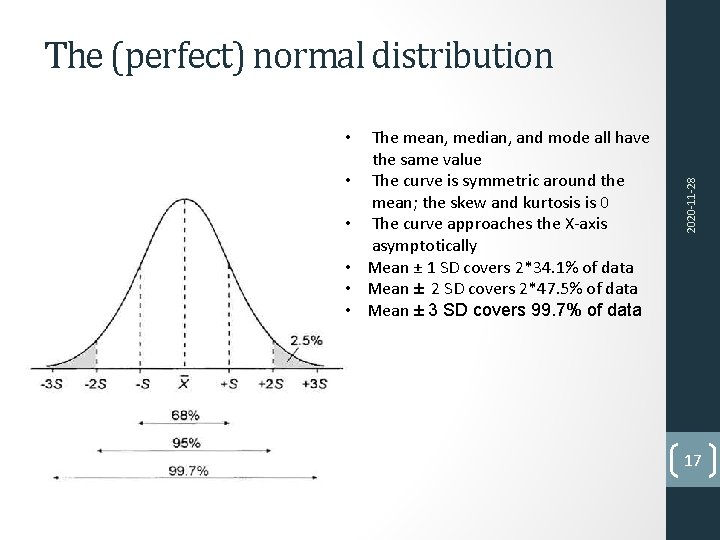

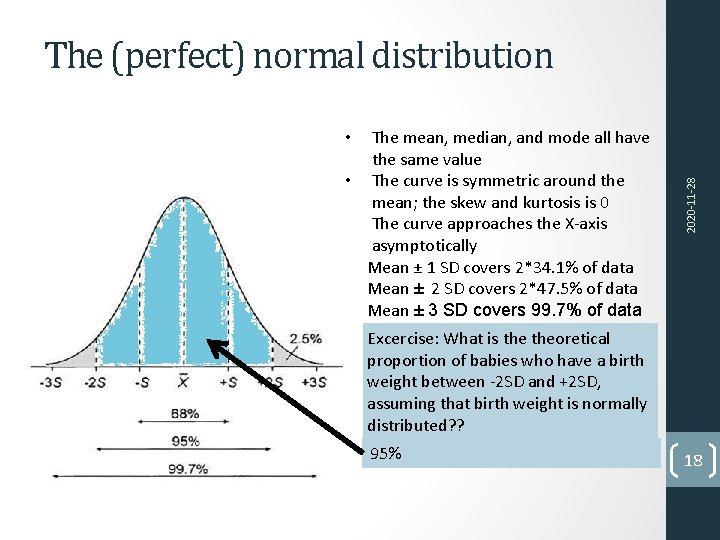


The (perfect) normal distribution

- The mean, median, and mode all have the same value
- The curve is symmetric around the mean; the skewness and excess kurtosis are 0
- The curve approaches the X-axis asymptotically
- Mean ± 1 SD covers 2*34.1% of data
- Mean ± 2 SD covers 2*47.7% of data (2*47.5% = 95% lies within ± 1.96 SD)
- Mean ± 3 SD covers 99.7% of data

Exercise: What is the theoretical proportion of babies who have a birth weight between -2 SD and +2 SD, assuming that birth weight is normally distributed? About 95%
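The coverage figures for the normal distribution can be checked with the error function from Python's `math` module. This is a small sketch; the function name `normal_coverage` is made up here.

```python
import math

def normal_coverage(k):
    """Proportion of a normal distribution lying within mean ± k standard deviations."""
    return math.erf(k / math.sqrt(2))

for k in (1, 1.96, 2, 3):
    print(f"mean ± {k} SD covers {100 * normal_coverage(k):.1f}% of data")
```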

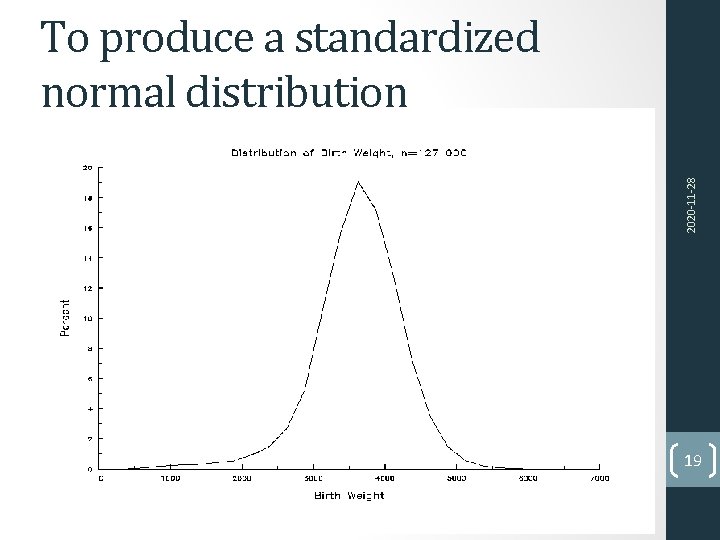

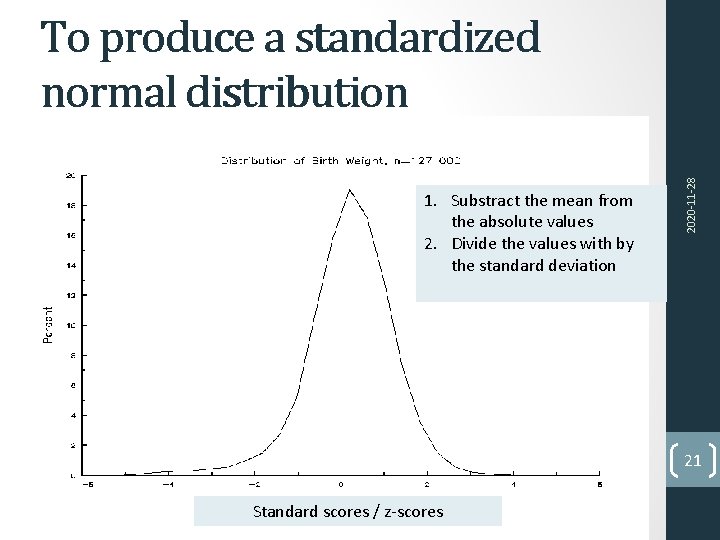


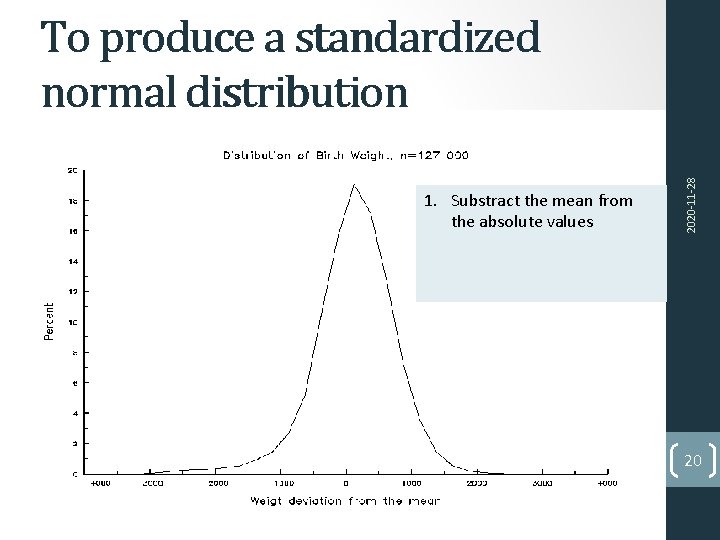


To produce a standardized normal distribution

1. Subtract the mean from the observed values
2. Divide the result by the standard deviation

The results are called standard scores / z-scores.

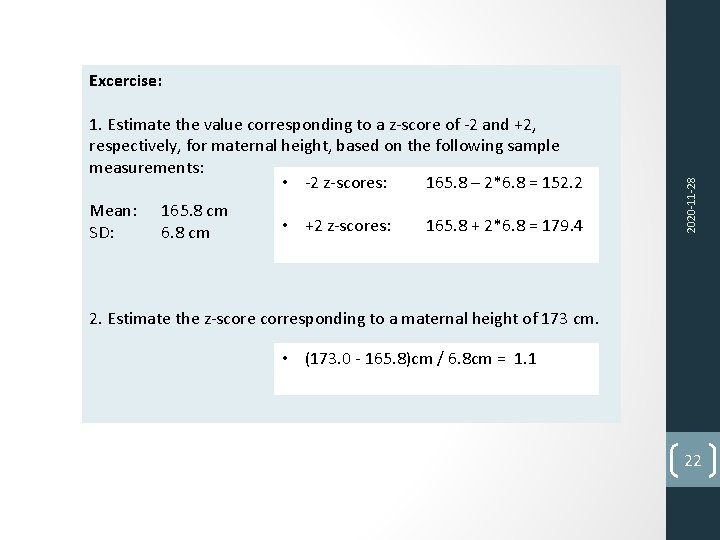

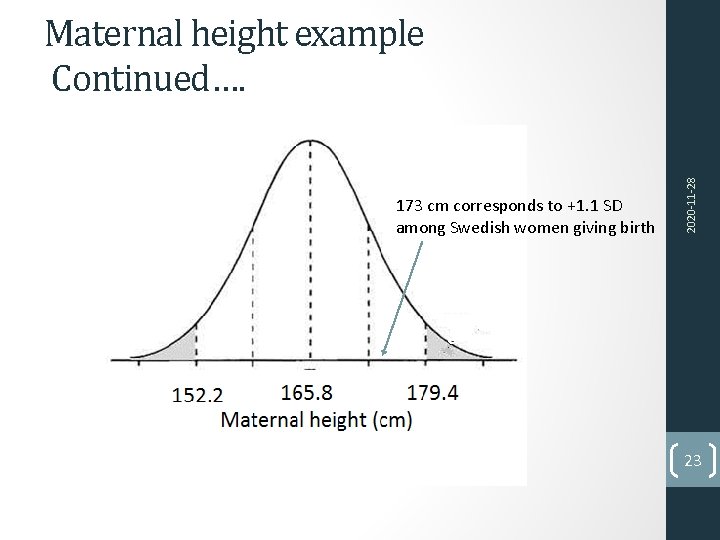

Exercise:

1. Estimate the value corresponding to a z-score of -2 and +2, respectively, for maternal height, based on the following sample measurements: mean 165.8 cm, SD 6.8 cm
   - -2 z-scores: 165.8 - 2*6.8 = 152.2
   - +2 z-scores: 165.8 + 2*6.8 = 179.4
2. Estimate the z-score corresponding to a maternal height of 173 cm.
   - (173.0 - 165.8) cm / 6.8 cm = 1.1
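The two directions of the z-score calculation in this exercise can be written as a pair of small functions. The mean and SD are the maternal height values given in the exercise; the function and constant names are made up for this sketch.

```python
def z_score(value, mean, sd):
    """Standardize: subtract the mean, then divide by the standard deviation."""
    return (value - mean) / sd

def value_at_z(z, mean, sd):
    """Inverse operation: the raw value that corresponds to a given z-score."""
    return mean + z * sd

MEAN_HEIGHT_CM = 165.8  # from the exercise
SD_HEIGHT_CM = 6.8      # from the exercise

low = value_at_z(-2, MEAN_HEIGHT_CM, SD_HEIGHT_CM)
high = value_at_z(+2, MEAN_HEIGHT_CM, SD_HEIGHT_CM)
z_173 = z_score(173.0, MEAN_HEIGHT_CM, SD_HEIGHT_CM)

print(f"-2 z-scores: {low:.1f} cm, +2 z-scores: {high:.1f} cm")
print(f"z-score for 173 cm: {z_173:.1f}")
```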

Maternal height example, continued…

173 cm corresponds to +1.1 SD among Swedish women giving birth.


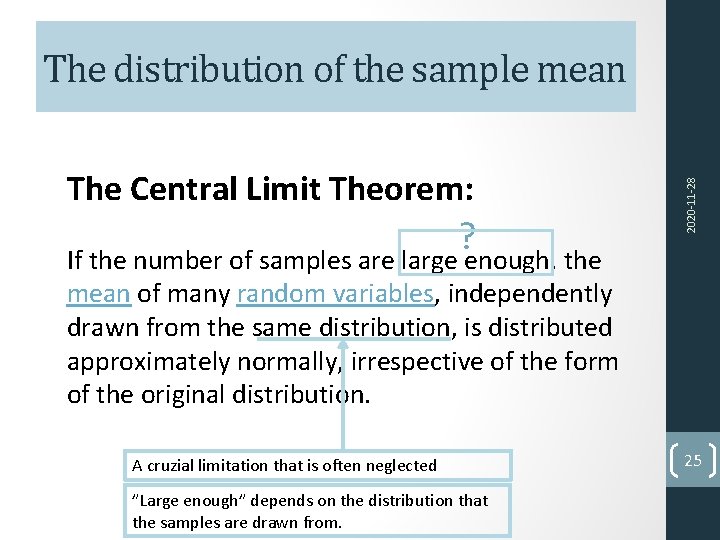

The distribution of the sample mean

The Central Limit Theorem: If the sample size is large enough, the mean of many random variables, independently drawn from the same distribution, is distributed approximately normally, irrespective of the form of the original distribution.

A crucial limitation that is often neglected: "large enough" depends on the distribution that the samples are drawn from.

Exercise

1. What does the theoretical distribution from rolling a die look like?
2. What is the expected mean from rolling a die 100 times?
3. What does the theoretical distribution of the means of 100 series of dice rolls look like, if each series is based on 100 rolls?

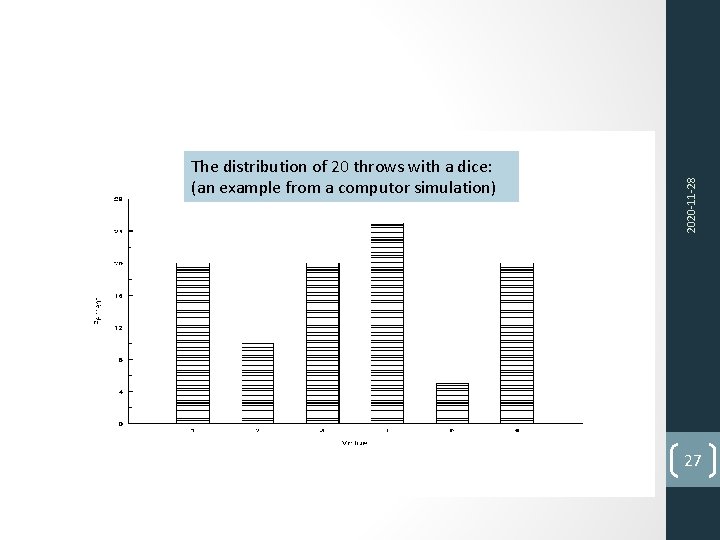

The distribution of 20 throws with a die (an example from a computer simulation)

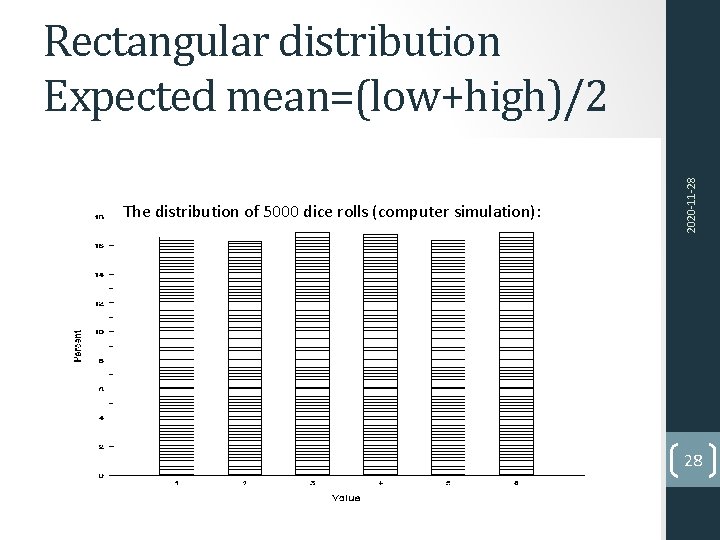

The distribution of 5000 dice rolls (computer simulation): rectangular distribution. Expected mean = (low + high)/2

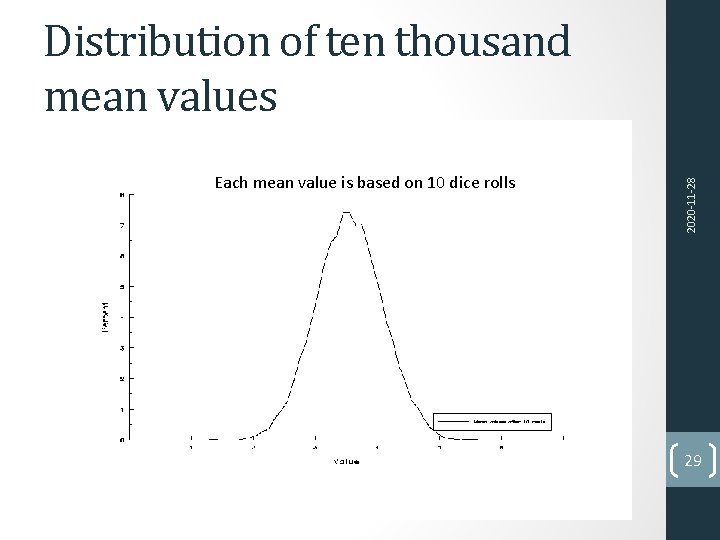

Distribution of ten thousand mean values. Each mean value is based on 10 dice rolls.

Exercise… continued

1. What does the theoretical distribution from rolling a die look like? Rectangular
2. What is the expected mean from rolling a die 100 times? 3.5
3. What does the theoretical distribution of the means of 100 series of dice rolls look like, if each series is based on 100 rolls? Normal distribution
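The dice exercise can be reproduced with a short simulation. This is a sketch, not the simulation used for the lecture figures; the seed and the function name `roll_means` are arbitrary choices made here.

```python
import random
import statistics

def roll_means(n_series, rolls_per_series, rng):
    """Simulate n_series series of dice rolls and return the mean of each series."""
    means = []
    for _ in range(n_series):
        rolls = [rng.randint(1, 6) for _ in range(rolls_per_series)]
        means.append(sum(rolls) / rolls_per_series)
    return means

rng = random.Random(2015)  # fixed seed so the run is repeatable
means = roll_means(100, 100, rng)

# Single rolls are rectangular (1..6 equally likely), but the series means
# cluster symmetrically around the expected mean of 3.5.
print("mean of the 100 means:", round(statistics.mean(means), 2))
print("SD of the 100 means:", round(statistics.stdev(means), 2))
print("smallest and largest mean:", min(means), max(means))
```

Plotting `means` as a histogram would show the roughly bell-shaped curve that the exercise asks about.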

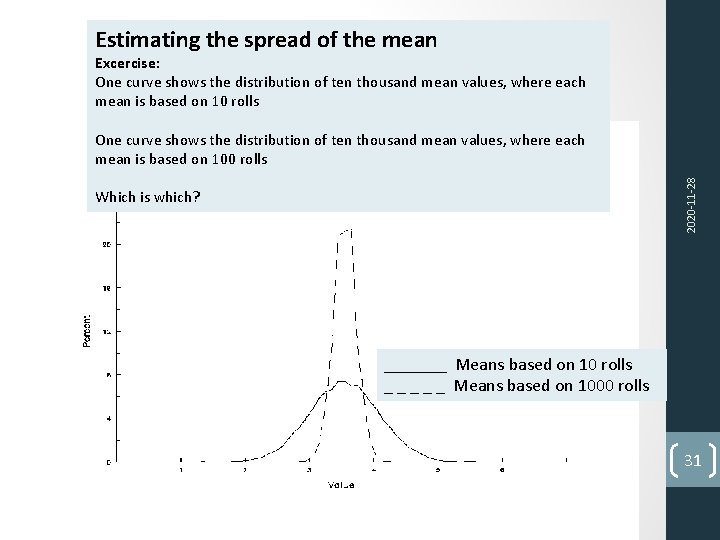

Estimating the spread of the mean

Exercise: One curve shows the distribution of ten thousand mean values, where each mean is based on 10 rolls. One curve shows the distribution of ten thousand mean values, where each mean is based on 100 rolls. Which is which?

[Figure legend: solid line = means based on 10 rolls; dashed line = means based on 1000 rolls]

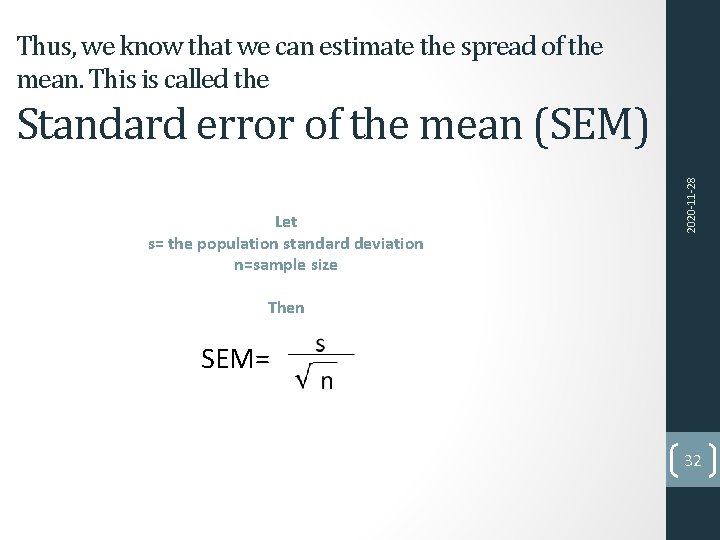

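Standard error of the mean (SEM)

Thus, we know that we can estimate the spread of the mean. This is called the standard error of the mean (SEM).

Let s = the standard deviation (the sample estimate of the population standard deviation) and n = sample size. Then

$$\mathrm{SEM} = \frac{s}{\sqrt{n}}$$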
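As a rough check of the SEM formula, the sketch below compares s/√n with the spread of simulated means of dice rolls. The population SD of a fair die, √(35/12), is a standard result rather than something stated on the slides, and the function names and seed are made up here.

```python
import math
import random
import statistics

DIE_SD = math.sqrt(35 / 12)  # population SD of a fair six-sided die

def sem(sd, n):
    """Standard error of the mean: SD divided by the square root of n."""
    return sd / math.sqrt(n)

def simulated_sd_of_means(n, n_means, rng):
    """SD of n_means simulated sample means, each based on n dice rolls."""
    means = [sum(rng.randint(1, 6) for _ in range(n)) / n for _ in range(n_means)]
    return statistics.stdev(means)

rng = random.Random(1)
for n in (10, 100):
    theory = sem(DIE_SD, n)
    simulated = simulated_sd_of_means(n, 2000, rng)
    print(f"n={n}: SEM by formula {theory:.3f}, SD of simulated means {simulated:.3f}")
```

Means based on more rolls vary less, which is why the narrower curve in the exercise belongs to the larger number of rolls.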

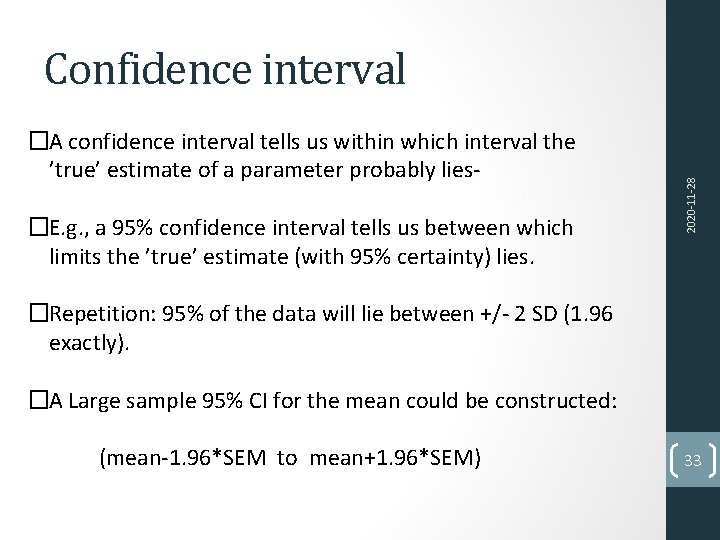

Confidence interval

- A confidence interval tells us within which interval the 'true' value of a parameter probably lies.
- E.g., a 95% confidence interval tells us between which limits the 'true' value (with 95% certainty) lies.
- Repetition: for normally distributed data, 95% of the data will lie within +/- 2 SD of the mean (1.96 exactly).
- A large-sample 95% CI for the mean could be constructed: (mean - 1.96*SEM to mean + 1.96*SEM)

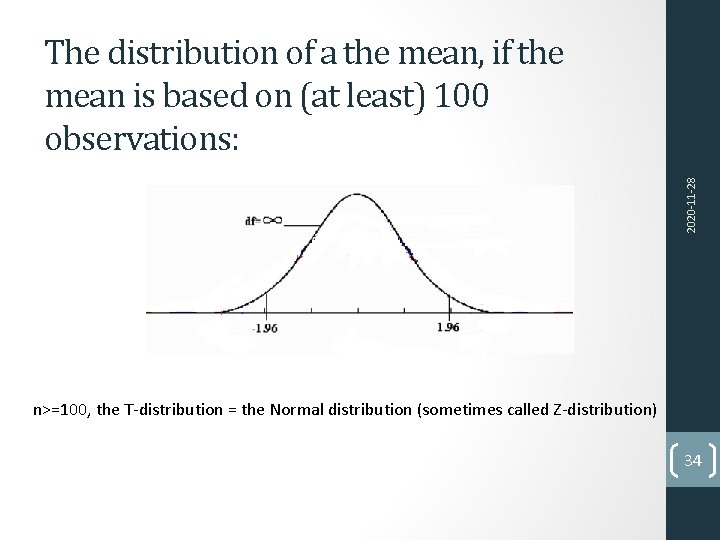

The distribution of the mean, if the mean is based on (at least) 100 observations: for n >= 100, the t-distribution is practically identical to the normal distribution (sometimes called the Z-distribution).

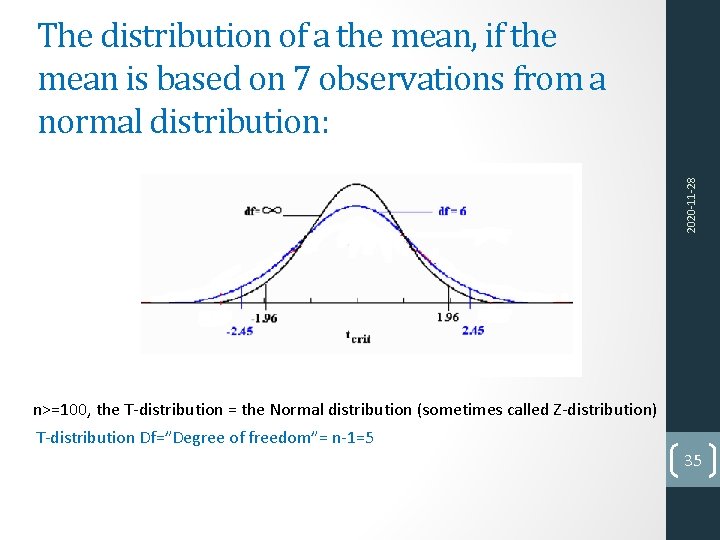

The distribution of the mean, if the mean is based on 7 observations from a normal distribution:

- n >= 100: the t-distribution is practically identical to the normal distribution (sometimes called the Z-distribution)
- n = 7: t-distribution with df ("degrees of freedom") = n - 1 = 6

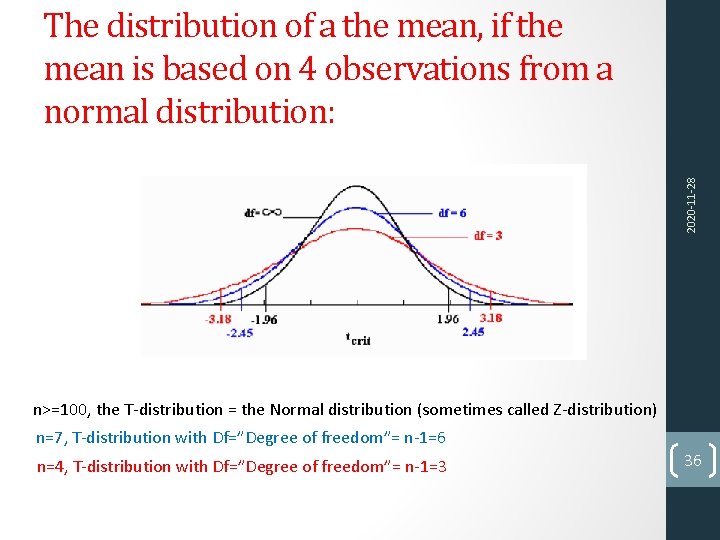

The distribution of the mean, if the mean is based on 4 observations from a normal distribution:

- n >= 100: the t-distribution is practically identical to the normal distribution (sometimes called the Z-distribution)
- n = 7: t-distribution with df ("degrees of freedom") = n - 1 = 6
- n = 4: t-distribution with df ("degrees of freedom") = n - 1 = 3

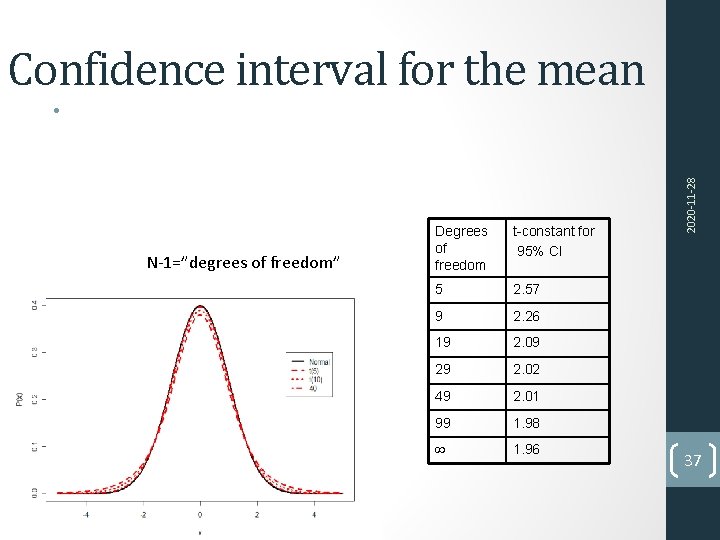

Confidence interval for the mean

N - 1 = "degrees of freedom"

| Degrees of freedom | t-constant for 95% CI |
|---|---|
| 5 | 2.57 |
| 9 | 2.26 |
| 19 | 2.09 |
| 29 | 2.05 |
| 49 | 2.01 |
| 99 | 1.98 |
| ∞ | 1.96 |

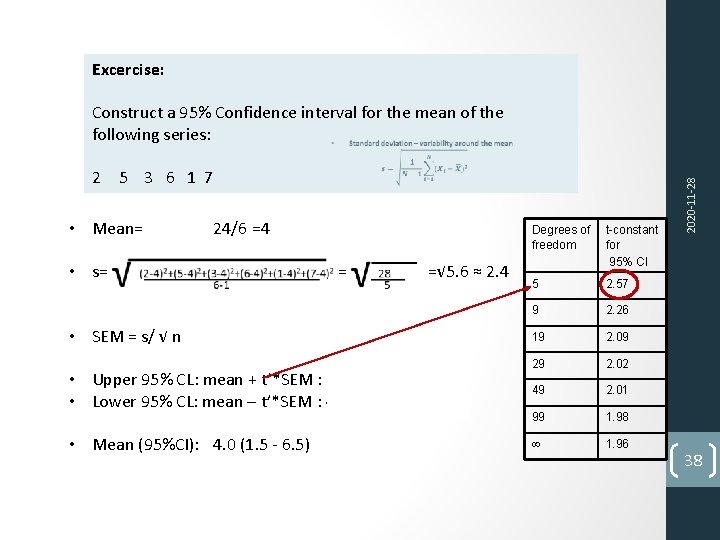

Exercise: Construct a 95% confidence interval for the mean of the following series: 2 5 3 6 1 7

- Mean = 24/6 = 4
- s = √5.6 ≈ 2.4
- SEM = s/√n = 2.4/√6 ≈ 0.97
- Upper 95% CL: mean + t'*SEM: 4 + 2.57*0.97 ≈ 6.5
- Lower 95% CL: mean - t'*SEM: 4 - 2.57*0.97 ≈ 1.5
- Mean (95% CI): 4.0 (1.5 - 6.5)

Here t' = 2.57 is the t-constant for a 95% CI with n - 1 = 5 degrees of freedom.
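The arithmetic of this exercise can be retraced in a few lines of Python. The t-constants are copied from the lecture's table (only the first three rows are included here); the function name `ci_mean_95` is made up for this sketch.

```python
import math
import statistics

# t-constants for a 95% CI, keyed by degrees of freedom (from the lecture table)
T_95 = {5: 2.57, 9: 2.26, 19: 2.09}

def ci_mean_95(values, t_table=T_95):
    """Return (mean, lower limit, upper limit) of a 95% CI for the mean."""
    n = len(values)
    mean = statistics.mean(values)
    s = statistics.stdev(values)   # sample SD, divides by n - 1
    sem = s / math.sqrt(n)         # standard error of the mean
    t = t_table[n - 1]             # raises KeyError if df is not in the table
    return mean, mean - t * sem, mean + t * sem

mean, lower, upper = ci_mean_95([2, 5, 3, 6, 1, 7])
print(f"Mean (95% CI): {mean:.1f} ({lower:.1f} - {upper:.1f})")
```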

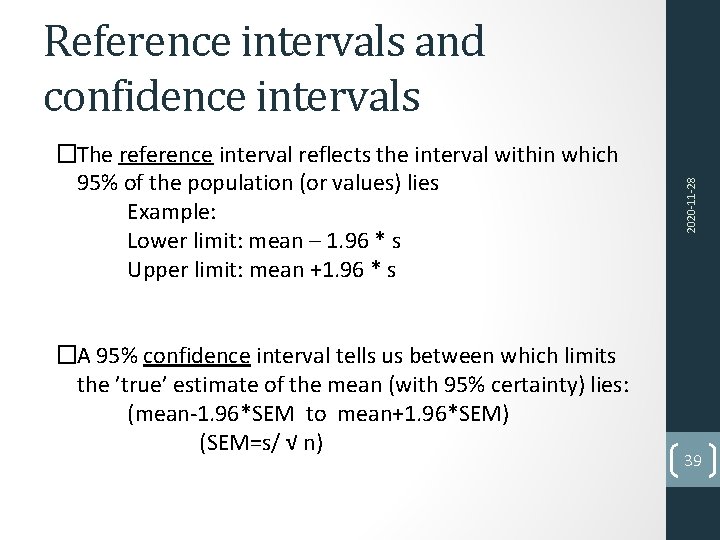

Reference intervals and confidence intervals

- The reference interval reflects the interval within which 95% of the population (or values) lies. Example: lower limit: mean - 1.96 * s; upper limit: mean + 1.96 * s
- A 95% confidence interval tells us between which limits the 'true' mean (with 95% certainty) lies: (mean - 1.96*SEM to mean + 1.96*SEM), where SEM = s/√n

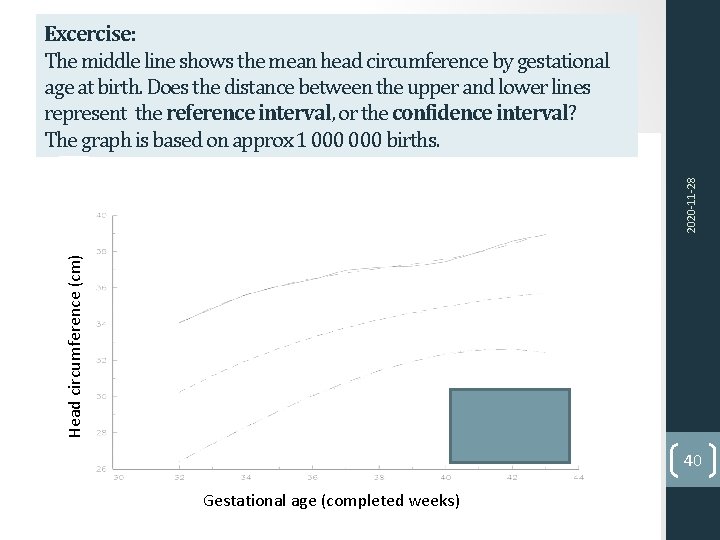

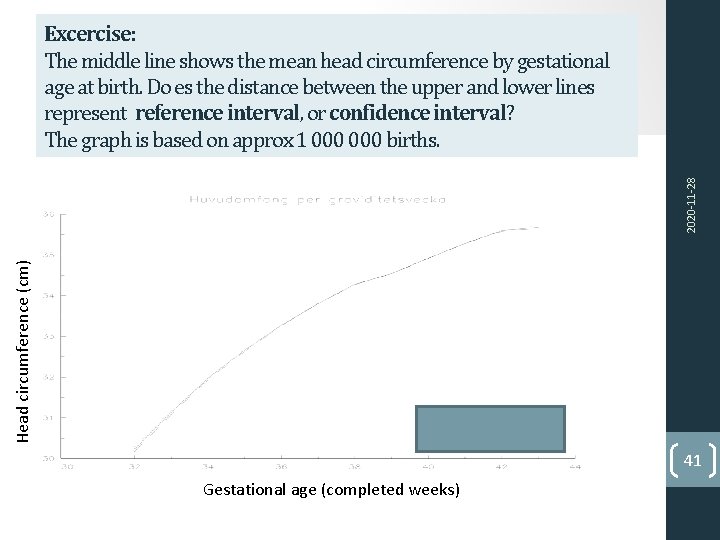

Exercise: The middle line shows the mean head circumference by gestational age at birth. Does the distance between the upper and lower lines represent the reference interval, or the confidence interval? The graph is based on approx. 1 000 births.

[Figure axes: head circumference (cm) by gestational age (completed weeks)]


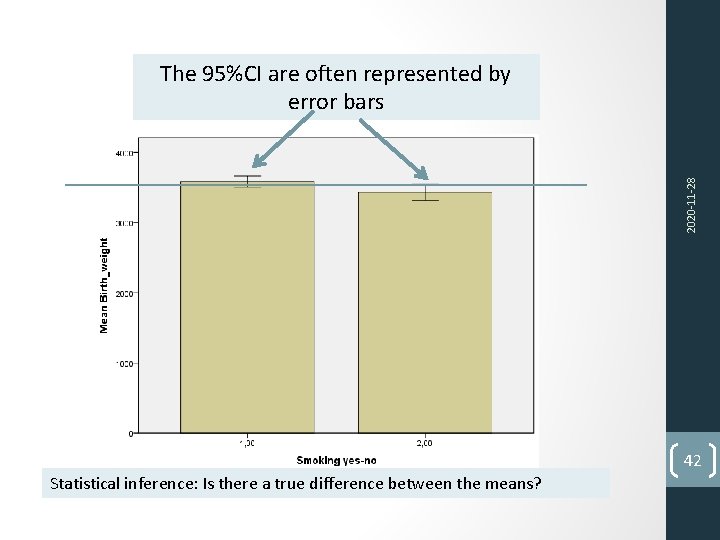

The 95% CIs are often represented by error bars.

Statistical inference: Is there a true difference between the means?


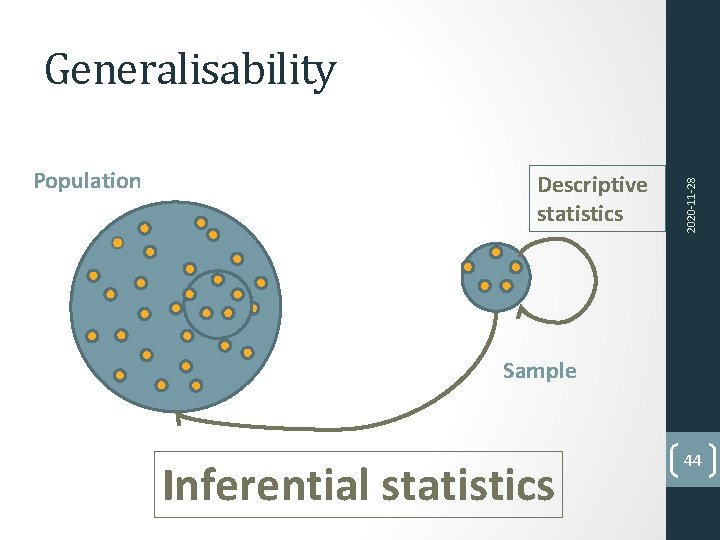

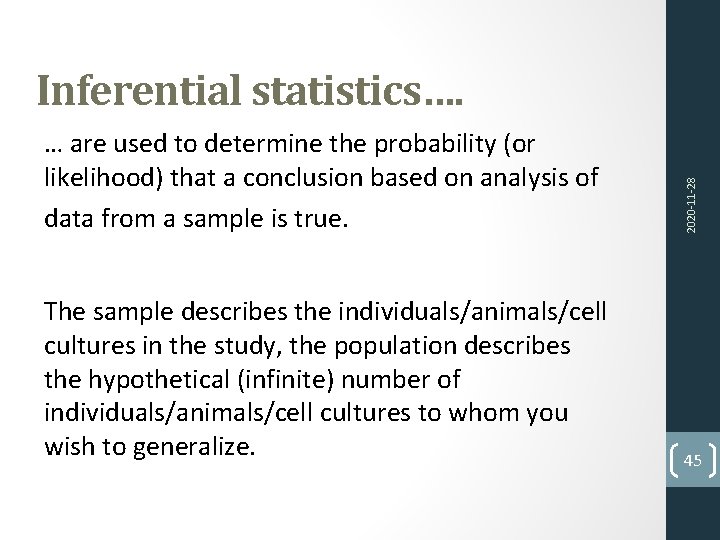

[Diagram labels: Population, Sample, Descriptive statistics, Inferential statistics, Generalisability]

Inferential statistics… are used to determine the probability (or likelihood) that a conclusion based on analysis of data from a sample is true. The sample describes the individuals/animals/cell cultures in the study; the population describes the hypothetical (infinite) number of individuals/animals/cell cultures to whom you wish to generalize.

Random error: Any measurement based on a biological sample, even though the sample is selected by a process of random sampling, will differ from the 'true' value as a result of random processes.

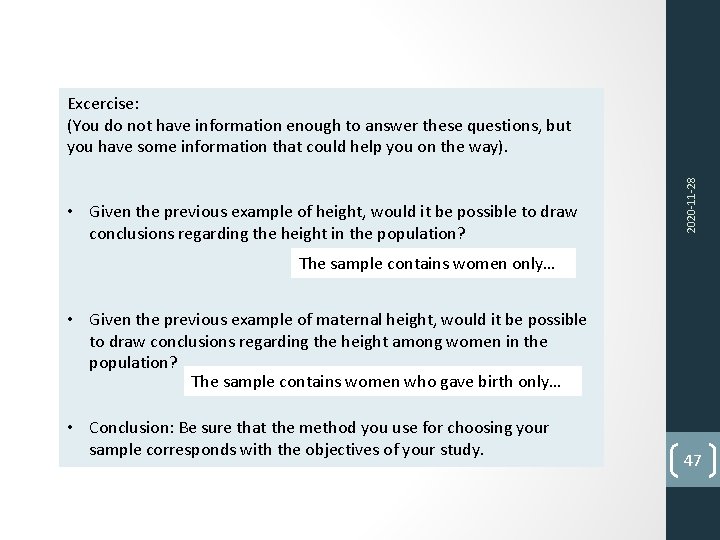

Exercise: (You do not have enough information to answer these questions, but you have some information that could help you on the way.)

- Given the previous example of height, would it be possible to draw conclusions regarding the height in the population? The sample contains women only…
- Given the previous example of maternal height, would it be possible to draw conclusions regarding the height among women in the population? The sample contains women who gave birth only…
- Conclusion: Be sure that the method you use for choosing your sample corresponds with the objectives of your study.

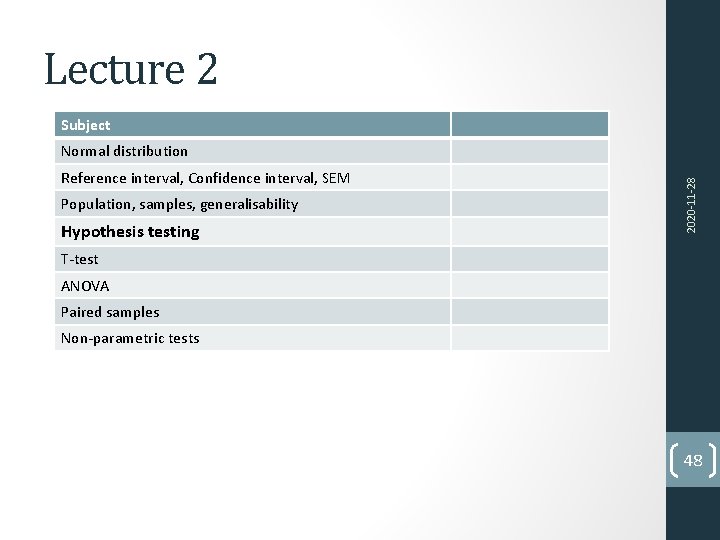


Hypothesis testing

- A sample is collected to make inferences about a population
- It is not possible to prove a theory about a study population
- However, you can reject a theory that the data show to be unlikely
- This is done by hypothesis testing!

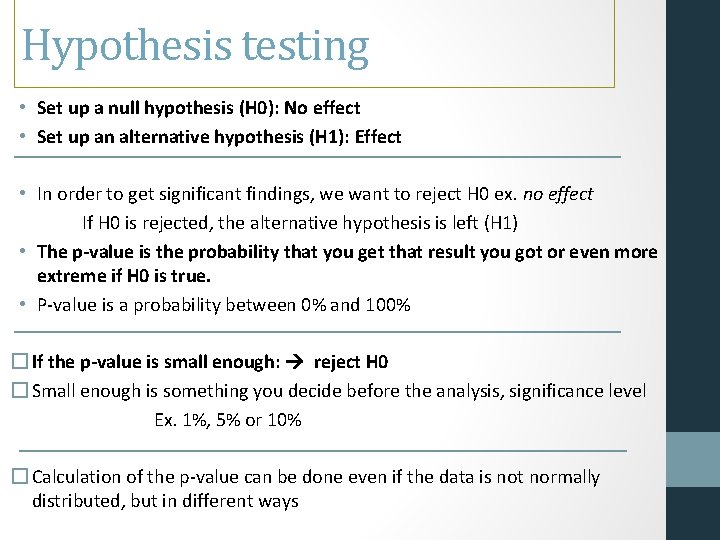

Hypothesis testing

- Set up a null hypothesis (H0): no effect
- Set up an alternative hypothesis (H1): effect
- To get a significant finding, we need to reject H0 (e.g. "no effect"). If H0 is rejected, the alternative hypothesis (H1) is left.
- The p-value is the probability of getting the result you got, or an even more extreme one, if H0 is true.
- The p-value is a probability between 0% and 100%.
  - If the p-value is small enough: reject H0
  - "Small enough" is something you decide before the analysis: the significance level, e.g. 1%, 5% or 10%
  - The p-value can be calculated even if the data are not normally distributed, but in different ways

Elements of statistical inference

- The p-value is the probability of obtaining a test statistic at least as extreme as the one that was actually observed, assuming that the null hypothesis (H0) is true.
- Type I error: rejecting H0 when in fact H0 is true. Its probability is often referred to as alpha.
- Type II error: not rejecting H0 when in fact H0 is false. Its probability is often referred to as beta.

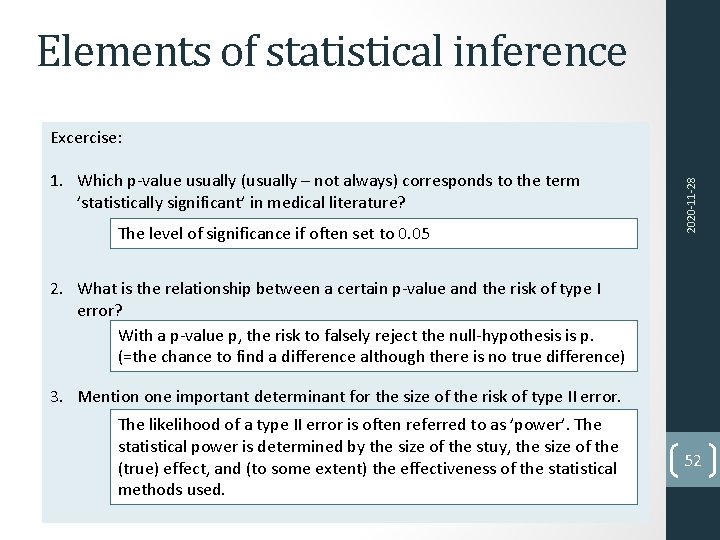

Elements of statistical inference

Exercise:

1. Which p-value usually (usually, not always) corresponds to the term 'statistically significant' in medical literature? The level of significance is often set to 0.05.
2. What is the relationship between a certain p-value and the risk of type I error? If H0 is rejected whenever the p-value is at or below a chosen level (e.g. 0.05), the risk of falsely rejecting a true null hypothesis equals that level (= the chance of finding a difference although there is no true difference).
3. Mention one important determinant for the size of the risk of type II error. The probability of avoiding a type II error (1 - beta) is referred to as 'power'. The statistical power is determined by the size of the study, the size of the (true) effect, and (to some extent) the effectiveness of the statistical methods used.
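The link between the significance level and the risk of type I error can be illustrated by simulation: when H0 is true and we reject at the 5% level, about 5% of experiments reject H0 anyway. The sketch below assumes a simple z-test with known SD = 1, which is a simplification chosen here and not a method from the slides; the seed and names are arbitrary.

```python
import math
import random

def false_positive_rate(n_experiments, n, critical_z, rng):
    """Fraction of simulated experiments that reject H0 although H0 is true."""
    rejections = 0
    for _ in range(n_experiments):
        # H0 is true by construction: data come from a normal distribution with mean 0, SD 1
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        sample_mean = sum(sample) / n
        z = sample_mean / (1.0 / math.sqrt(n))  # known SD = 1, so SEM = 1/sqrt(n)
        if abs(z) > critical_z:                  # two-sided test at the 5% level
            rejections += 1
    return rejections / n_experiments

rate = false_positive_rate(4000, 25, 1.96, random.Random(7))
print(f"Share of true null hypotheses rejected: {rate:.3f}")
```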

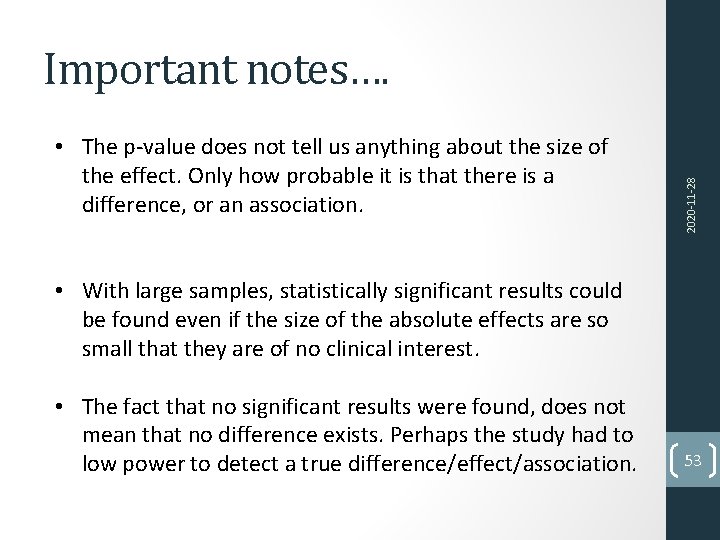

Important notes…

- The p-value does not tell us anything about the size of the effect, only how compatible the data are with there being no difference or no association.
- With large samples, statistically significant results could be found even if the absolute effects are so small that they are of no clinical interest.
- The fact that no significant results were found does not mean that no difference exists. Perhaps the study had too low power to detect a true difference/effect/association.

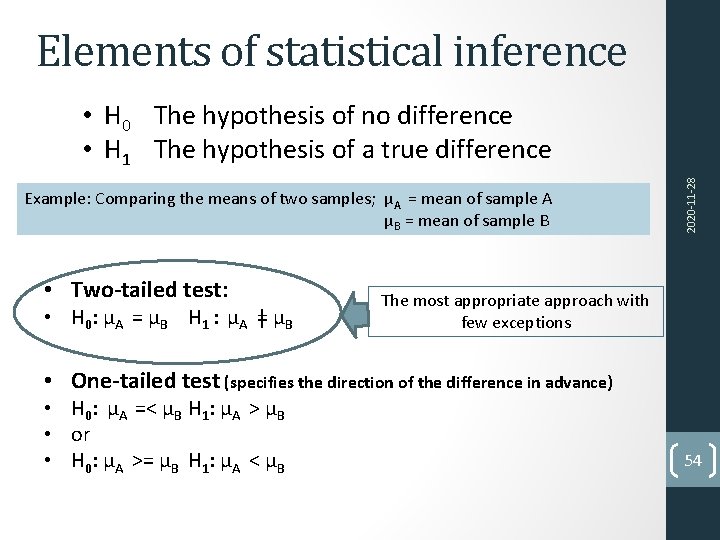

Elements of statistical inference

Example: Comparing the means of two samples; μA = mean of sample A, μB = mean of sample B

- H0: the hypothesis of no difference
- H1: the hypothesis of a true difference
- Two-tailed test (the most appropriate approach, with few exceptions):
  - H0: μA = μB
  - H1: μA ≠ μB
- One-tailed test (specifies the direction of the difference in advance):
  - H0: μA ≤ μB, H1: μA > μB
  - or H0: μA ≥ μB, H1: μA < μB

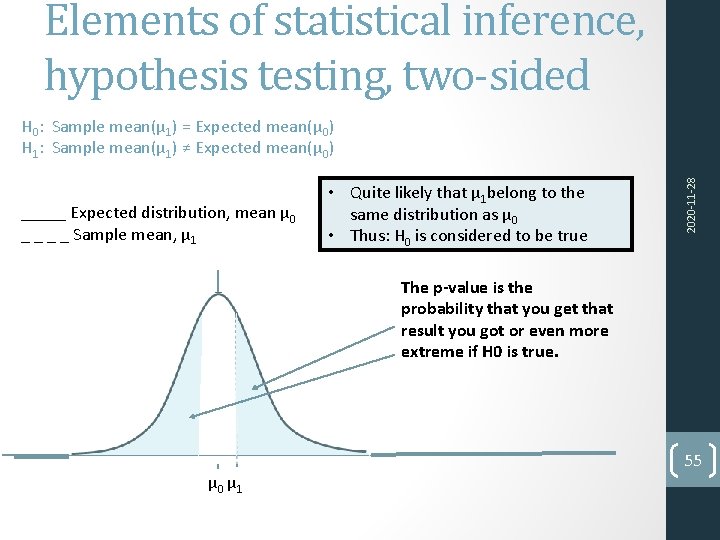

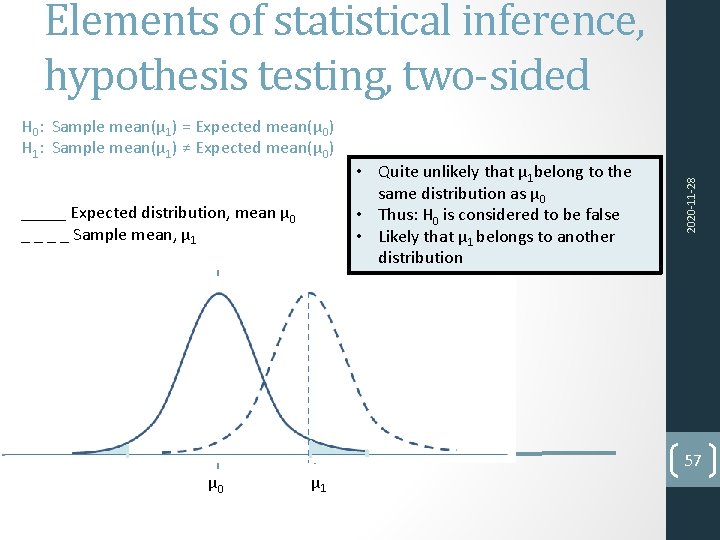

Elements of statistical inference, hypothesis testing, two-sided

- H0: Sample mean (μ1) = Expected mean (μ0)
- H1: Sample mean (μ1) ≠ Expected mean (μ0)

The p-value is the probability of getting the result you got, or an even more extreme one, if H0 is true.

- Quite likely that μ1 belongs to the same distribution as μ0
- Thus: H0 is not rejected

[Figure legend: solid line = expected distribution, mean μ0; dashed line = sample mean, μ1]

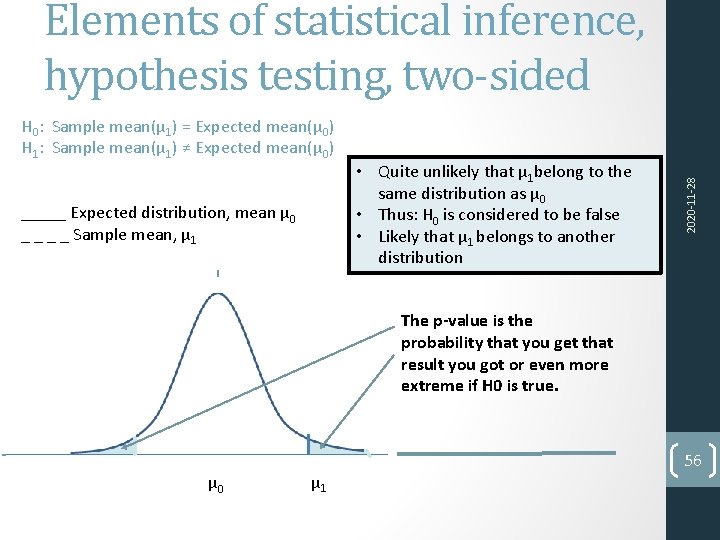

Elements of statistical inference, hypothesis testing, two-sided

- H0: Sample mean (μ1) = Expected mean (μ0)
- H1: Sample mean (μ1) ≠ Expected mean (μ0)

The p-value is the probability of getting the result you got, or an even more extreme one, if H0 is true.

- Quite unlikely that μ1 belongs to the same distribution as μ0
- Thus: H0 is rejected
- Likely that μ1 belongs to another distribution

[Figure legend: solid line = expected distribution, mean μ0; dashed line = sample mean, μ1]



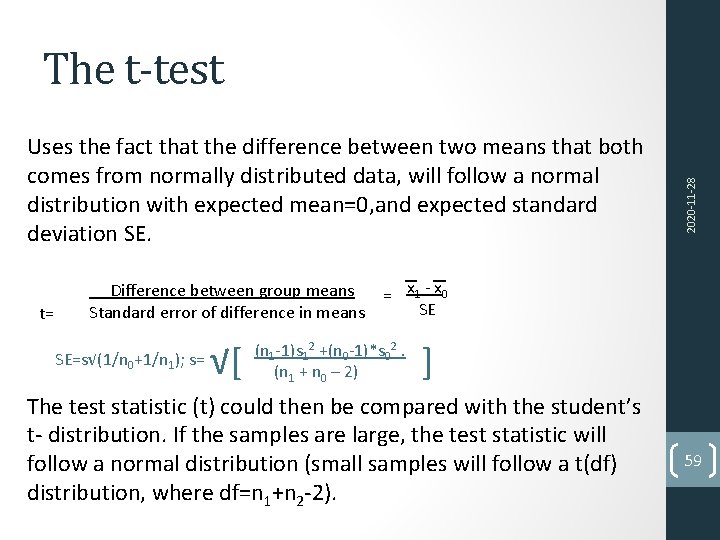

The t-test

Uses the fact that, under H0, the difference between two means that both come from normally distributed data will follow a normal distribution with expected mean = 0 and expected standard deviation SE.

$$t = \frac{\bar{x}_1 - \bar{x}_0}{SE}$$

where $\bar{x}_1 - \bar{x}_0$ is the difference between the group means and SE is the standard error of the difference in means:

$$SE = s\sqrt{\frac{1}{n_0} + \frac{1}{n_1}}, \qquad s = \sqrt{\frac{(n_1 - 1)s_1^2 + (n_0 - 1)s_0^2}{n_1 + n_0 - 2}}$$

The test statistic (t) can then be compared with Student's t-distribution. If the samples are large, the test statistic will follow a normal distribution (for small samples it follows a t(df) distribution, where df = n_1 + n_0 - 2).
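The test statistic can be computed step by step as in the sketch below, which follows the pooled-SD formula on this slide. The two groups are made-up illustration data, and the function name `pooled_t` is an assumption of this example; the p-value would still have to be looked up in a t-table or obtained from statistical software.

```python
import math
import statistics

def pooled_t(group0, group1):
    """Return (t, df) for a two-sample t-test with a pooled standard deviation."""
    n0, n1 = len(group0), len(group1)
    s0, s1 = statistics.stdev(group0), statistics.stdev(group1)
    # Pooled SD: weighted combination of the two sample variances
    s = math.sqrt(((n1 - 1) * s1 ** 2 + (n0 - 1) * s0 ** 2) / (n1 + n0 - 2))
    se = s * math.sqrt(1 / n0 + 1 / n1)  # standard error of the difference in means
    t = (statistics.mean(group1) - statistics.mean(group0)) / se
    return t, n0 + n1 - 2

# Hypothetical data, not from the lecture
group0 = [1, 2, 3, 4, 5]
group1 = [3, 4, 5, 6, 7]
t, df = pooled_t(group0, group1)
print(f"t = {t:.2f} with df = {df}")
```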

The t-test

The t-test can be used to determine if two small sets of normally distributed data are significantly different from each other when the spread is not known but instead estimated from the data. Under certain conditions, the test statistic will follow a Student's t distribution.

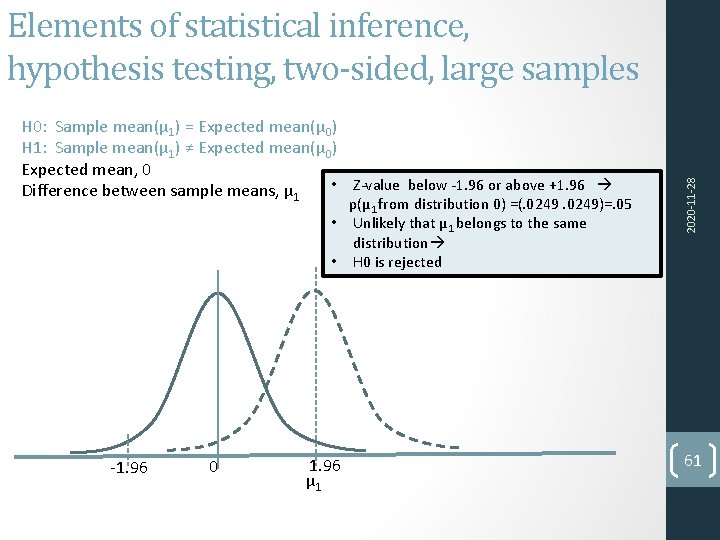

Elements of statistical inference, hypothesis testing, two-sided, large samples

- H0: Sample mean (μ1) = Expected mean (μ0)
- H1: Sample mean (μ1) ≠ Expected mean (μ0)

- Z-value below -1.96 or above +1.96
- p(μ1 from distribution 0) ≈ 2 × .025 = .05
- Unlikely that μ1 belongs to the same distribution
- H0 is rejected

[Figure: distribution of the difference between sample means around the expected mean 0, with cut-offs at -1.96 and +1.96 and μ1 marked]
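For large samples, the two-sided p-value that belongs to a given z-value can be computed directly from the normal distribution. A minimal sketch using `math.erfc`; the function name is made up here.

```python
import math

def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic z."""
    return math.erfc(abs(z) / math.sqrt(2))

for z in (1.0, 1.96, 2.58):
    print(f"z = {z}: two-sided p = {two_sided_p(z):.3f}")
```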

Elements of statistical inference

Exercise: We want to investigate if there are equally many cells per A square in two different preparations. How can we specify H0 and H1?

- H0: Cell counts in prep A = cell counts in prep B
- H1: Cell counts in prep A ≠ cell counts in prep B

We found that prep A had 1164000 cells/ml at day 4, and prep B had 666000 cells/ml at day 4. The p-value will give us the probability of finding a difference at least as large as the one observed (1164000 vs 666000 cells/ml), given that H0 is true.

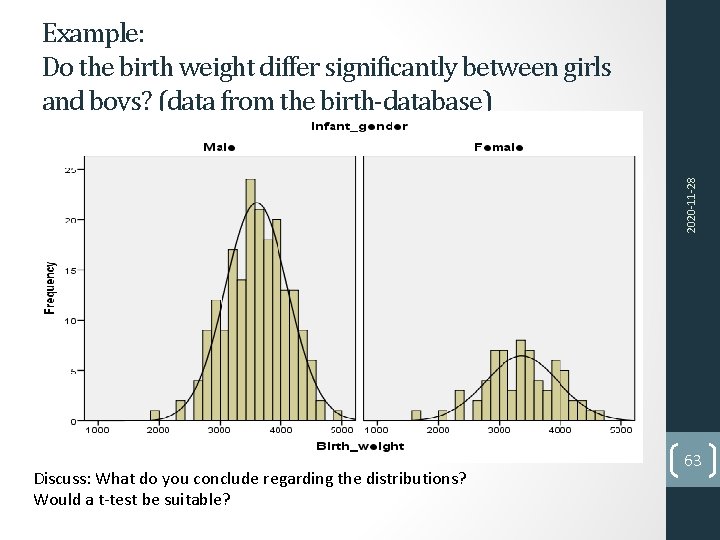

Example: Does the birth weight differ significantly between girls and boys? (data from the birth database)

Discuss: What do you conclude regarding the distributions? Would a t-test be suitable?

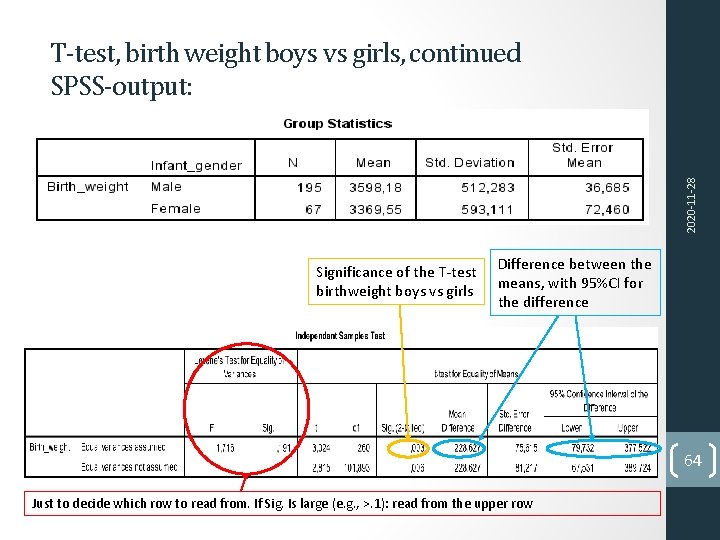

T-test, birth weight boys vs girls, continued

SPSS output, annotations:

- Significance of the t-test, birth weight boys vs girls
- Difference between the means, with 95% CI for the difference
- One Sig. value is used just to decide which row to read from: if that Sig. is large (e.g., > .1), read from the upper row


More than two groups, one way ANOVA (analysis of variance)

Multiple t-tests could result in mass significance! Do ANOVA instead of repeated t-tests.

- ANOVA:
  - H0: mean 1 = mean 2 = mean 3
  - H1: at least two of the means are different
- In short: in an ANOVA, the total variance is divided into the within-groups and between-groups variance.
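How the total variation splits into a between-groups and a within-groups part can be seen in a small hand calculation. The sketch below uses made-up data for three groups and computes the F statistic from the sums of squares; it does not compute the p-value, which would come from an F-table or statistical software. All names are chosen for this example.

```python
import statistics

def one_way_anova(groups):
    """Return (F, SS between, SS within, SS total) for a list of groups."""
    all_values = [x for g in groups for x in g]
    grand_mean = statistics.mean(all_values)
    k, n = len(groups), len(all_values)
    # Variation of the group means around the grand mean
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of the observations around their own group mean
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)
    ss_total = sum((x - grand_mean) ** 2 for x in all_values)
    f = (ss_between / (k - 1)) / (ss_within / (n - k))
    return f, ss_between, ss_within, ss_total

# Hypothetical data, not from the lecture
groups = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]
f, ss_b, ss_w, ss_t = one_way_anova(groups)
print(f"SS between = {ss_b:.1f}, SS within = {ss_w:.1f}, SS total = {ss_t:.1f}")
print(f"F = {f:.1f}")
```

The between-groups and within-groups sums of squares add up to the total, which is the decomposition the slide refers to.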

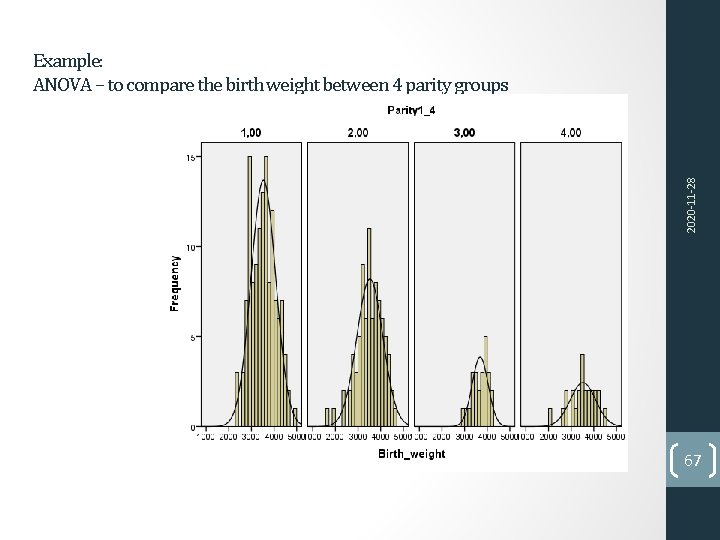

Example: ANOVA to compare the birth weight between 4 parity groups

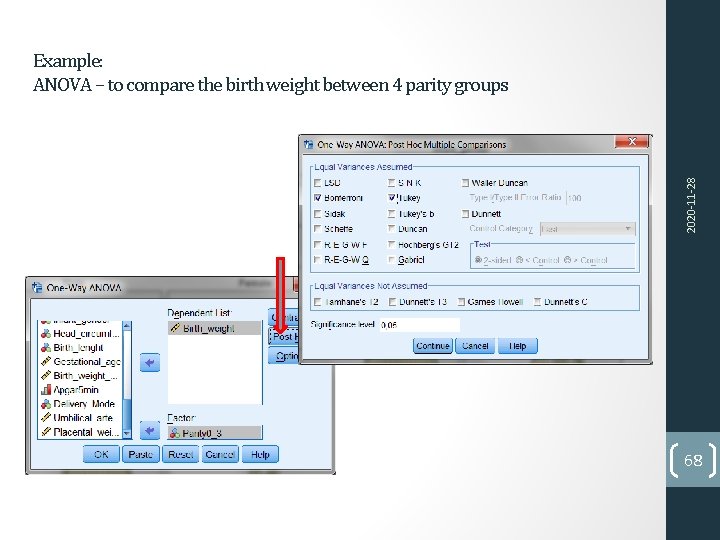

Example: ANOVA to compare the birth weight between 4 parity groups

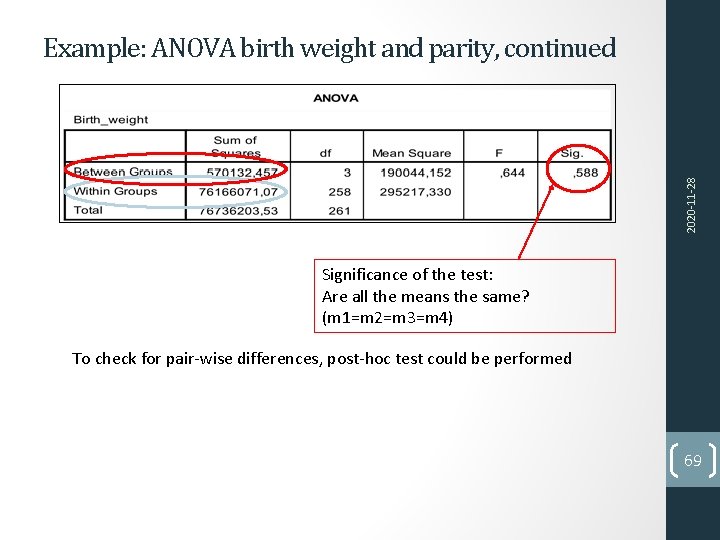

Example: ANOVA birth weight and parity, continued

- Significance of the test: Are all the means the same? (m1 = m2 = m3 = m4)
- To check for pair-wise differences, post-hoc tests could be performed

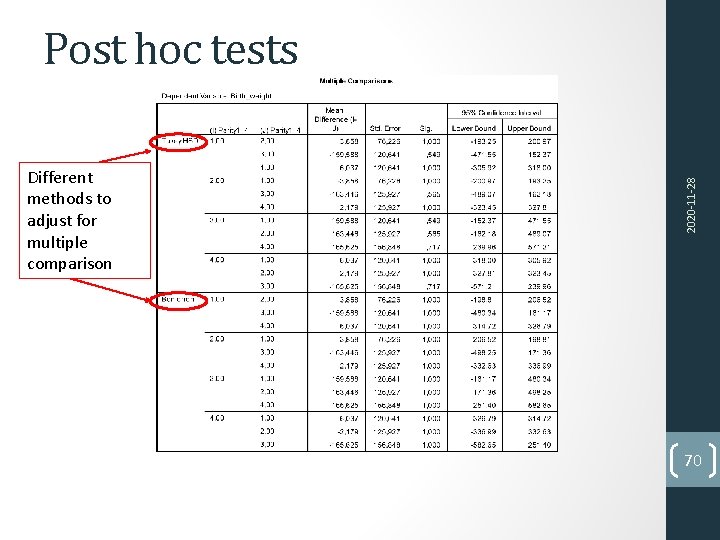

Post hoc tests

Different methods to adjust for multiple comparisons

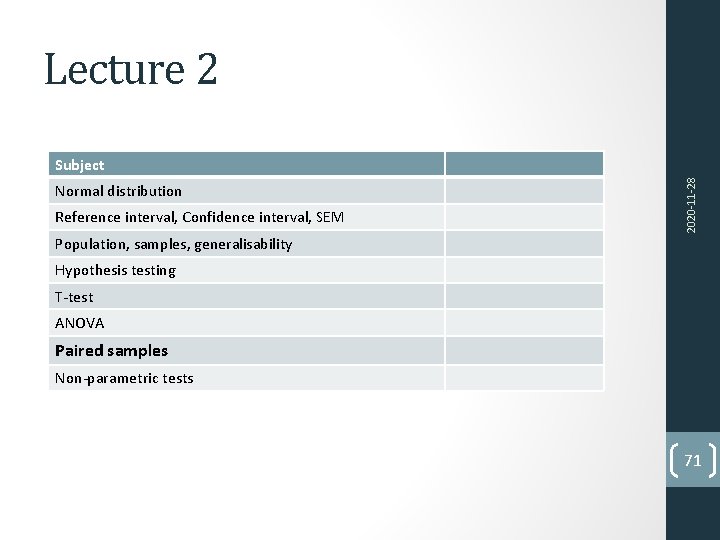


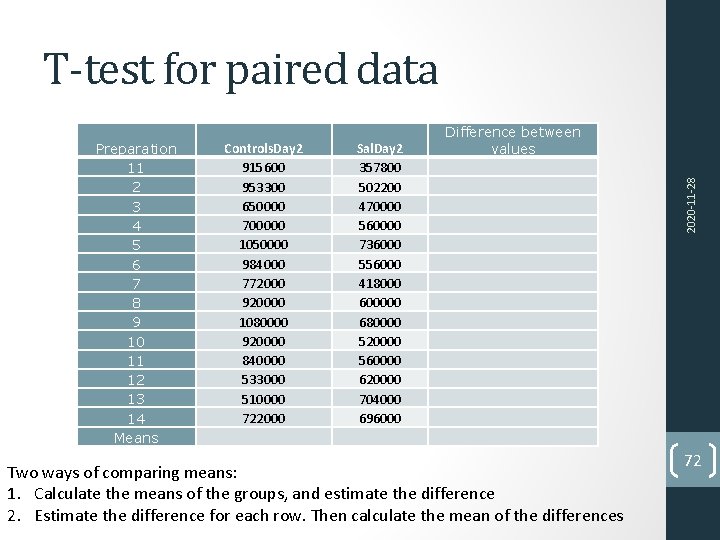

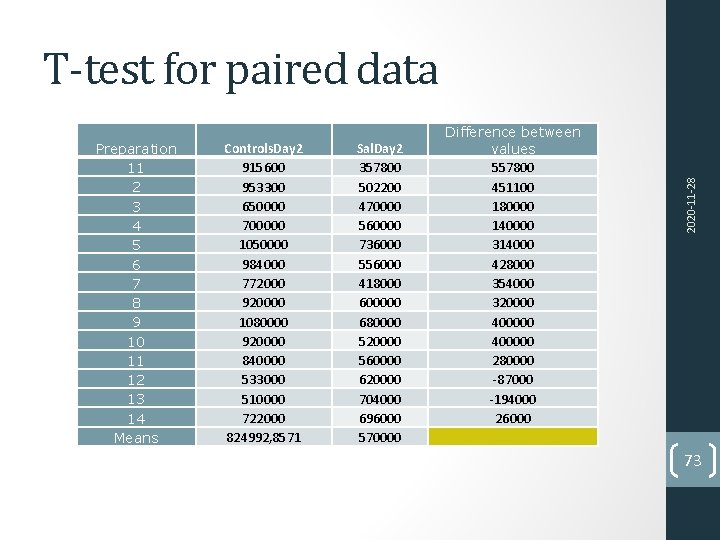

T-test for paired data

Two ways of comparing means:

1. Calculate the means of the groups, and estimate the difference
2. Estimate the difference for each row. Then calculate the mean of the differences

| Preparation | Controls, day 2 | Sal., day 2 |
|---|---|---|
| 1 | 915600 | 357800 |
| 2 | 953300 | 502200 |
| 3 | 650000 | 470000 |
| 4 | 700000 | 560000 |
| 5 | 1050000 | 736000 |
| 6 | 984000 | 556000 |
| 7 | 772000 | 418000 |
| 8 | 920000 | 600000 |
| 9 | 1080000 | 680000 |
| 10 | 920000 | 520000 |
| 11 | 840000 | 560000 |
| 12 | 533000 | 620000 |
| 13 | 510000 | 704000 |
| 14 | 722000 | 696000 |


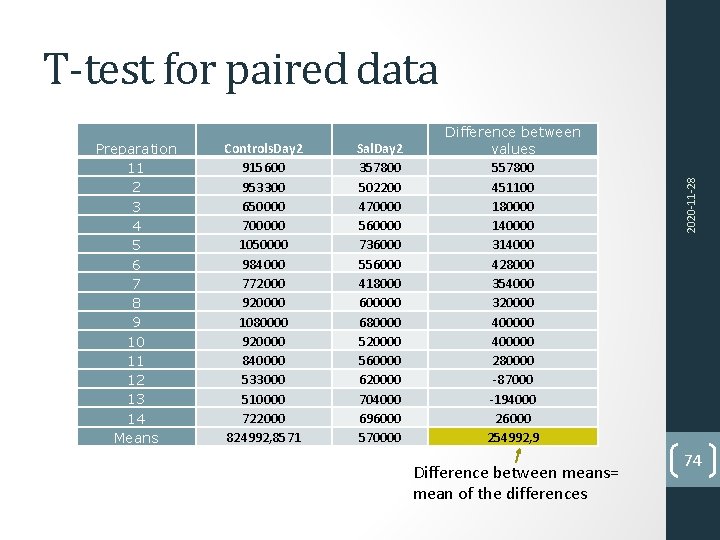

T-test for paired data: the difference between the means equals the mean of the differences (824992.8571 - 570000 = 254992.9).

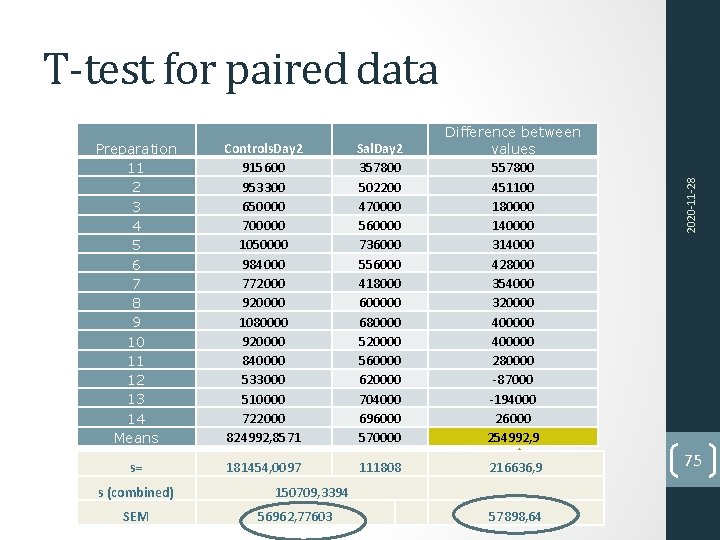


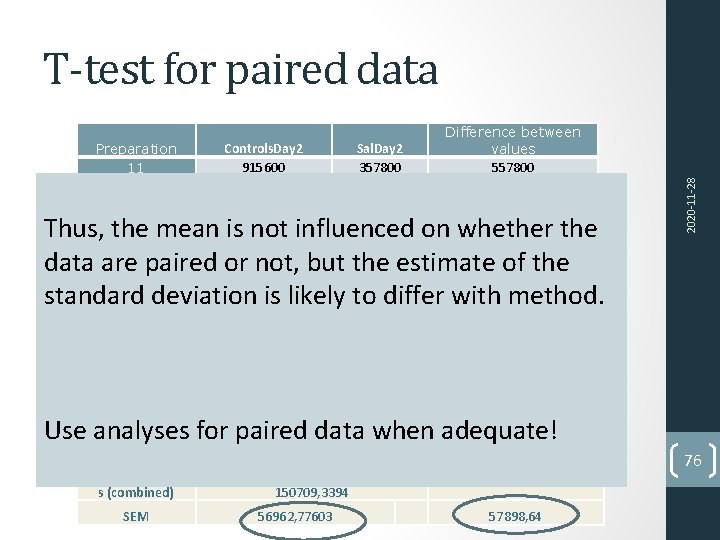

T-test for paired data

| Preparation | Controls, day 2 | Sal., day 2 | Difference between values |
|---|---|---|---|
| 1 | 915600 | 357800 | 557800 |
| 2 | 953300 | 502200 | 451100 |
| 3 | 650000 | 470000 | 180000 |
| 4 | 700000 | 560000 | 140000 |
| 5 | 1050000 | 736000 | 314000 |
| 6 | 984000 | 556000 | 428000 |
| 7 | 772000 | 418000 | 354000 |
| 8 | 920000 | 600000 | 320000 |
| 9 | 1080000 | 680000 | 400000 |
| 10 | 920000 | 520000 | 400000 |
| 11 | 840000 | 560000 | 280000 |
| 12 | 533000 | 620000 | -87000 |
| 13 | 510000 | 704000 | -194000 |
| 14 | 722000 | 696000 | 26000 |
| Means | 824992.8571 | 570000 | 254992.9 |
| s | 181454.0097 | 111808 | 216636.9 |

- Unpaired analysis: s (combined) = 150709.3394, SEM = 56962.77603
- Paired analysis: s of the differences = 216636.9, SEM = 57898.64

Thus, the mean is not influenced by whether the data are paired or not, but the estimate of the standard deviation is likely to differ with the method.

Use analyses for paired data when adequate!
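The summary numbers on this slide can be recomputed from the 14 pairs of values. The sketch below copies the data from the slide's table and computes both the paired SEM (from the differences) and the unpaired SEM (from the combined standard deviation); the variable names are made up for this example.

```python
import math
import statistics

controls = [915600, 953300, 650000, 700000, 1050000, 984000, 772000,
            920000, 1080000, 920000, 840000, 533000, 510000, 722000]
sal = [357800, 502200, 470000, 560000, 736000, 556000, 418000,
       600000, 680000, 520000, 560000, 620000, 704000, 696000]

n = len(controls)

# Paired analysis: work on the row-wise differences
diffs = [c - s for c, s in zip(controls, sal)]
mean_diff = statistics.mean(diffs)
sem_paired = statistics.stdev(diffs) / math.sqrt(n)

# Unpaired analysis: combine the two group SDs (equal group sizes)
s_controls = statistics.stdev(controls)
s_sal = statistics.stdev(sal)
s_combined = math.sqrt(((n - 1) * s_controls ** 2 + (n - 1) * s_sal ** 2) / (2 * n - 2))
sem_unpaired = s_combined * math.sqrt(1 / n + 1 / n)

print(f"Difference between means: {statistics.mean(controls) - statistics.mean(sal):.1f}")
print(f"Mean of the differences:  {mean_diff:.1f}")
print(f"SEM, paired:   {sem_paired:.1f}")
print(f"SEM, unpaired: {sem_unpaired:.1f}")
```

Dividing the mean difference by the paired SEM gives the t-value for the paired t-test, to be compared with a t-distribution with n - 1 = 13 degrees of freedom.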


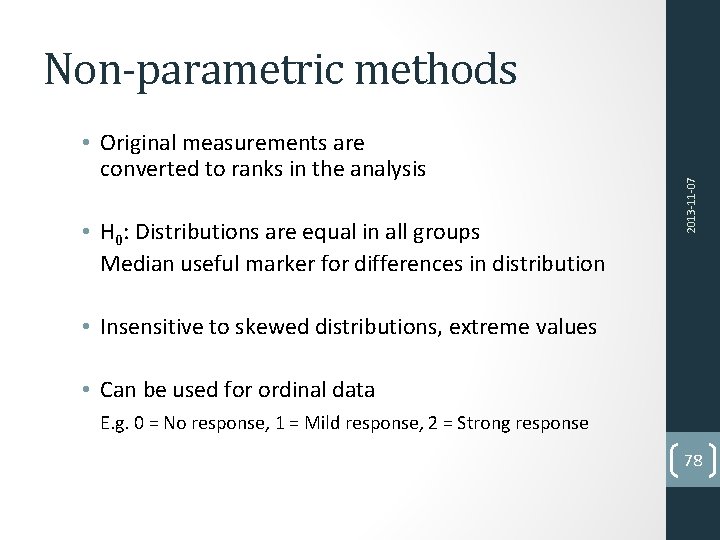

Non-parametric methods

- Original measurements are converted to ranks in the analysis
- H0: Distributions are equal in all groups. The median is a useful marker for differences in distribution
- Insensitive to skewed distributions and extreme values
- Can be used for ordinal data, e.g. 0 = No response, 1 = Mild response, 2 = Strong response

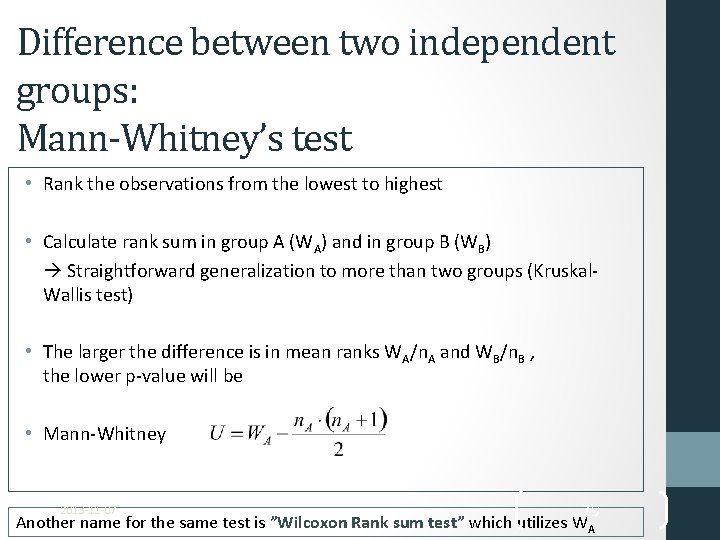

Difference between two independent groups: Mann-Whitney's test

- Rank the observations from the lowest to the highest
- Calculate the rank sum in group A (WA) and in group B (WB)
- The larger the difference in mean ranks WA/nA and WB/nB, the lower the p-value will be
- Another name for the same test is "Wilcoxon rank sum test", which utilizes WA
- Straightforward generalization to more than two groups (Kruskal-Wallis test)

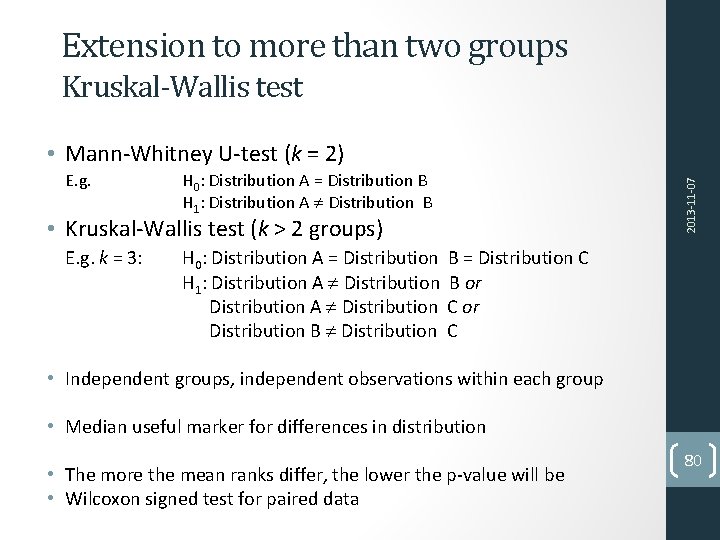

Extension to more than two groups: Kruskal-Wallis test

- Mann-Whitney U-test (k = 2), e.g.
  - H0: Distribution A = Distribution B
  - H1: Distribution A ≠ Distribution B
- Kruskal-Wallis test (k > 2 groups), e.g. k = 3:
  - H0: Distribution A = Distribution B = Distribution C
  - H1: Distribution A ≠ Distribution B or Distribution A ≠ Distribution C or Distribution B ≠ Distribution C
- Independent groups, independent observations within each group
- Median useful marker for differences in distribution
- The more the mean ranks differ, the lower the p-value will be
- Wilcoxon signed rank test for paired data

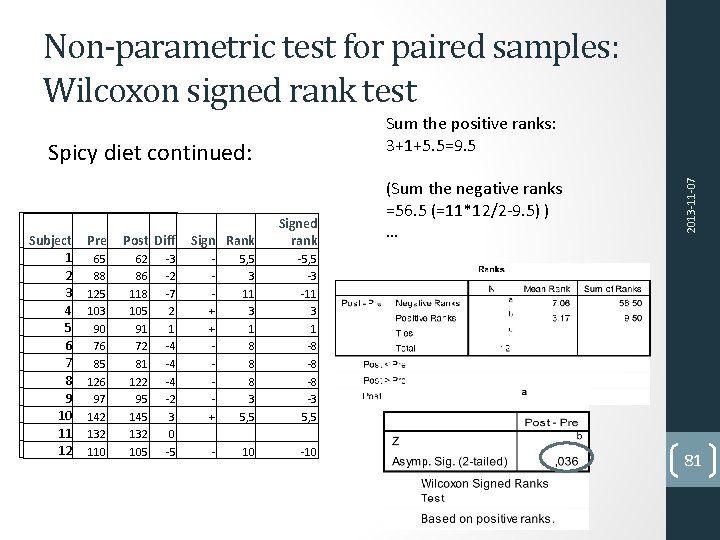

Non-parametric test for paired samples: Wilcoxon signed rank test

Spicy diet continued:

| Subject | Pre | Post | Diff | Sign | Rank | Signed rank |
|---|---|---|---|---|---|---|
| 1 | 65 | 62 | -3 | - | 5.5 | -5.5 |
| 2 | 88 | 86 | -2 | - | 3 | -3 |
| 3 | 125 | 118 | -7 | - | 11 | -11 |
| 4 | 103 | 105 | 2 | + | 3 | 3 |
| 5 | 90 | 91 | 1 | + | 1 | 1 |
| 6 | 76 | 72 | -4 | - | 8 | -8 |
| 7 | 85 | 81 | -4 | - | 8 | -8 |
| 8 | 126 | 122 | -4 | - | 8 | -8 |
| 9 | 97 | 95 | -2 | - | 3 | -3 |
| 10 | 142 | 145 | 3 | + | 5.5 | 5.5 |
| 11 | 132 | 132 | 0 | | | |
| 12 | 110 | 105 | -5 | - | 10 | -10 |

- Sum of the positive ranks: 3 + 1 + 5.5 = 9.5
- Sum of the negative ranks: 56.5 (= 11*12/2 - 9.5)
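The ranking steps of the signed rank test can be sketched in plain Python. The pre and post values are the ones from the spicy diet table as read from the slide; the function name `signed_rank_sums` is made up here, and the sketch stops at the rank sums (the p-value would come from a table or statistical software).

```python
def signed_rank_sums(pre, post):
    """Return (sum of positive ranks, sum of negative ranks) for paired data."""
    # Differences post - pre; pairs with zero difference are left out
    diffs = [b - a for a, b in zip(pre, post) if b != a]
    ordered = sorted(abs(d) for d in diffs)
    # Tied absolute differences share the mean of the ranks they occupy
    rank_of = {}
    for value in set(ordered):
        positions = [i + 1 for i, v in enumerate(ordered) if v == value]
        rank_of[value] = sum(positions) / len(positions)
    w_plus = sum(rank_of[abs(d)] for d in diffs if d > 0)
    w_minus = sum(rank_of[abs(d)] for d in diffs if d < 0)
    return w_plus, w_minus

pre = [65, 88, 125, 103, 90, 76, 85, 126, 97, 142, 132, 110]
post = [62, 86, 118, 105, 91, 72, 81, 122, 95, 145, 132, 105]

w_plus, w_minus = signed_rank_sums(pre, post)
print("Sum of positive ranks:", w_plus)
print("Sum of negative ranks:", w_minus)
```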

This was all for the lecture today. Puhh!!!

Repetition, Lecture I:

- Dependent, independent variables
- Types of variables (binary/nominal/ordinal/discrete/continuous)
- Descriptive statistics
  - Central tendency measures (mean, median)
  - Dispersion measures (standard deviation, percentiles)
- Graphical presentation
  - Barplot
  - Histogram
  - Boxplot

Lecture 2:

| Subject | Norman & Streiner |
|---|---|
| Normal distribution | 4 |
| Population, samples, generalisability | 6 |
| Hypothesis testing | 6 |
| Reference interval, Confidence interval, SEM | 6 |
| T-test | 7 |
| ANOVA | 8 |

Next lecture: Repetition, Lecture I and 2
