
![Usage [2]: write the TensorBoard code, then run the tensorboard command in a terminal](http://slidetodoc.com/presentation_image_h2/ab9f4d9aa4636b266535640ecef26589/image-4.jpg)

Usage [2]
§ Write the TensorBoard code
§ Run the command in a terminal
‐ tensorboard --logdir=save_location
‐ Ex) tensorboard --logdir=/Users/choihyunyoung/jupyterWorkspace/tensorflow/tensorboard/lec_log/test1
‐ Result
§ Open it in a web browser
‐ http://0.0.0.0:6006
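The terminal step above can also be scripted. The following is a minimal sketch, assuming TensorBoard is installed and on the PATH; the helper name `tensorboard_command` and the log directory `/tmp/lec_log/test1` are hypothetical, chosen only for illustration. It builds the command without launching it.

```python
def tensorboard_command(logdir, port=6006):
    """Build the argument list for launching TensorBoard on `logdir`."""
    return ["tensorboard", "--logdir=" + logdir, "--port=" + str(port)]


# Hypothetical log directory, used only for this example.
cmd = tensorboard_command("/tmp/lec_log/test1")
print(" ".join(cmd))  # tensorboard --logdir=/tmp/lec_log/test1 --port=6006

# To actually start the server, pass the list to subprocess.Popen(cmd)
# and open the address TensorBoard prints in a web browser.
```

Building the argument list separately from launching keeps the command easy to inspect before it is run.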

![Constants example](http://slidetodoc.com/presentation_image_h2/ab9f4d9aa4636b266535640ecef26589/image-6.jpg)

Constants example

    import tensorflow as tf

    # Two constant matrices: shape (1, 2) and shape (2, 1)
    matrix1 = tf.constant([[3, 3]], name="matrix1")
    matrix2 = tf.constant([[2], [2]], name="matrix2")
    product = tf.matmul(matrix1, matrix2, name="product")

    # Register each tensor so it shows up in TensorBoard
    tf.histogram_summary("matrix1", matrix1)
    tf.histogram_summary("matrix2", matrix2)
    tf.histogram_summary("product", product)

    with tf.Session() as sess:
        # sess.run(t) is equivalent to t.eval()
        writer = tf.train.SummaryWriter("/Users/choihyun-young/Desktop/tensorboard/lec/test", sess.graph)
        merged = tf.merge_all_summaries()
        summary, _ = sess.run([merged, product], feed_dict={})
        writer.add_summary(summary)
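To see what the `product` node evaluates to, the same multiplication can be checked by hand. This is a sketch in plain Python rather than the TensorFlow API, so it runs without a TensorFlow install; the `matmul` helper is a hypothetical stand-in for `tf.matmul`, not part of the slides.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


matrix1 = [[3, 3]]      # shape (1, 2)
matrix2 = [[2], [2]]    # shape (2, 1)
product = matmul(matrix1, matrix2)
print(product)  # [[12]]
```

A (1, 2) matrix times a (2, 1) matrix gives a (1, 1) result, which is why the histogram for `product` contains a single value.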

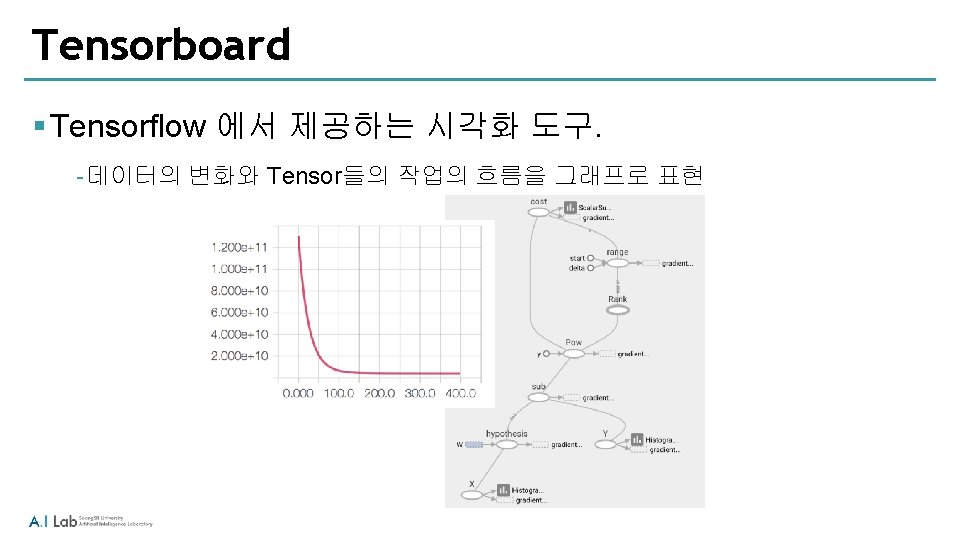

Placeholder example - TensorBoard

    import tensorflow as tf

    # x is fed at run time; y is a constant tensor of zeros
    x = tf.placeholder("float", (2, 3), name='x')
    y = tf.zeros([2, 3], "float", name='y')
    z = tf.add(x, y, name='z')

    tf.histogram_summary("x", x)
    tf.histogram_summary("y", y)
    tf.histogram_summary("z", z)

    with tf.Session() as sess:
        writer = tf.train.SummaryWriter("/Users/choihyun-young/Desktop/tensorboard/lec/test3", sess.graph)
        merged = tf.merge_all_summaries()
        summary, _ = sess.run([merged, z], feed_dict={x: [[1, 2, 3], [4, 5, 6]]})
        writer.add_summary(summary)
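A placeholder behaves much like a function argument: the graph is defined first and the value arrives only when it is run. The sketch below mimics that in plain Python, without TensorFlow; `run_z` is a hypothetical stand-in for `sess.run(z, feed_dict={x: ...})`.

```python
def add(x, y):
    """Element-wise sum of two equally shaped matrices (lists of rows)."""
    return [[a + b for a, b in zip(row_x, row_y)] for row_x, row_y in zip(x, y)]


# Counterpart of tf.zeros([2, 3], "float")
y = [[0.0] * 3 for _ in range(2)]


def run_z(feed_x):
    """Evaluate z = x + y for a value of x supplied at call time."""
    assert len(feed_x) == 2 and all(len(row) == 3 for row in feed_x), "x must be 2x3"
    return add(feed_x, y)


z = run_z([[1, 2, 3], [4, 5, 6]])
print(z)  # [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
```

The shape check plays the role of the `(2, 3)` shape declared on the placeholder.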

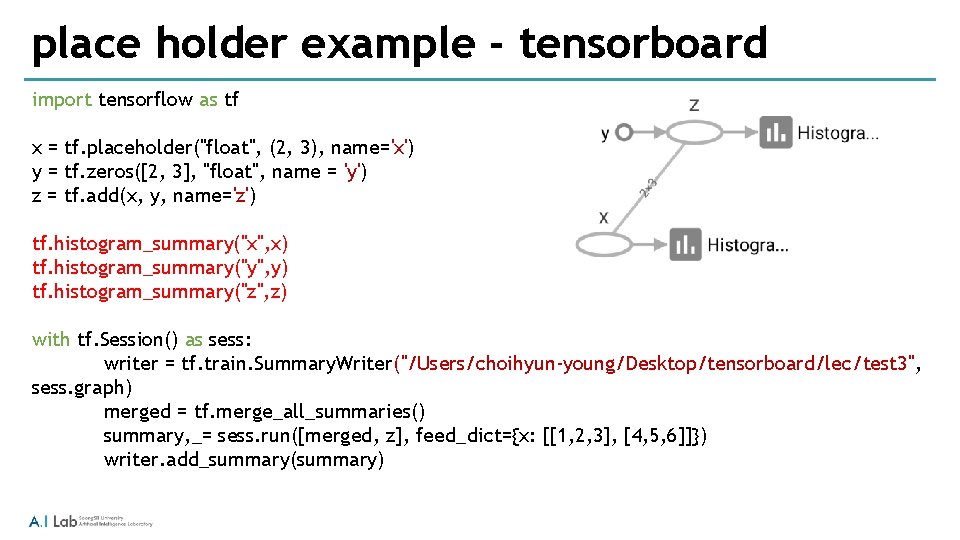

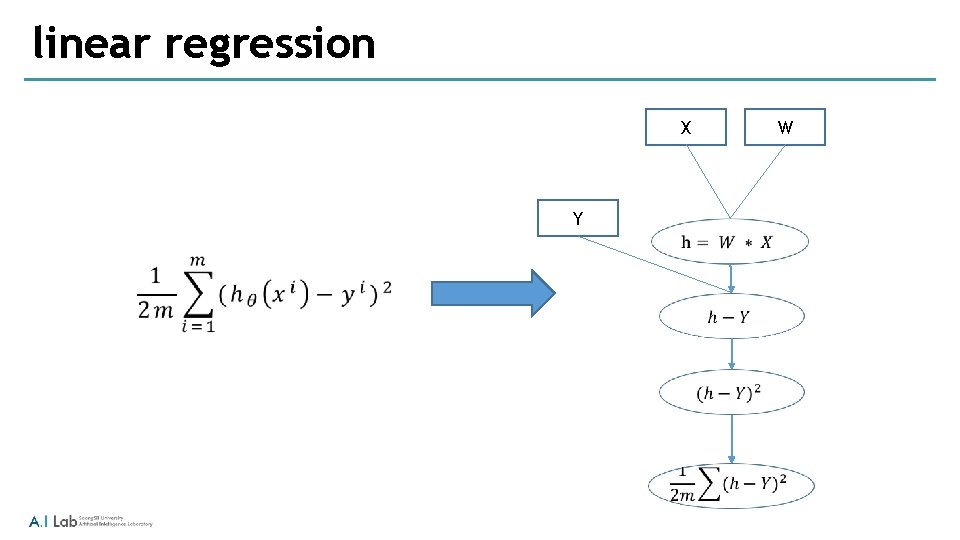

linear regression

    import numpy as np
    import tensorflow as tf
    from numpy import genfromtxt

    fname = 'ex1data1.txt'
    xy = genfromtxt(fname, delimiter=',', dtype='float32')
    m = xy.shape[0]  # number of samples
    n = xy.shape[1]  # number of columns (features + target)
    # Prepend a column of ones for the bias term; the last column is the target
    x_data = np.concatenate((np.ones((m, 1)), xy[0:, :(n-1)]), axis=1)
    y_data = xy[0:, (n-1):]

    with tf.name_scope("data") as scope:
        x = tf.placeholder(tf.float32, name='X')
        tf.histogram_summary("X", x)
        y = tf.placeholder(tf.float32, name='Y')
        tf.histogram_summary("y", y)
        W = tf.Variable(tf.random_uniform([n, 1], -1.0, 1.0), dtype=tf.float32, name='W')
        tf.histogram_summary("weight", W)
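The data preparation step can be illustrated on a tiny in-memory dataset. This is a sketch in plain Python, assuming a comma-separated layout with the target in the last column; the three rows in `raw` are made up for illustration and are not the contents of the file used in the slides.

```python
import csv
import io

# Hypothetical data: one feature column followed by the target column.
raw = "1.0,2.0\n2.0,4.0\n3.0,6.0\n"

rows = [[float(v) for v in record] for record in csv.reader(io.StringIO(raw))]
m = len(rows)       # number of samples
n = len(rows[0])    # number of columns (features + target)

# Prepend 1.0 to every feature row so the first weight acts as the bias.
x_data = [[1.0] + row[: n - 1] for row in rows]
# Keep the last column as an (m, 1) target matrix.
y_data = [row[n - 1 :] for row in rows]

print(x_data)  # [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
print(y_data)  # [[2.0], [4.0], [6.0]]
```

Because of the added column of ones, `x_data` has `n` columns, which matches the `[n, 1]` shape of `W`.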

linear regression: graph nodes X, Y, W

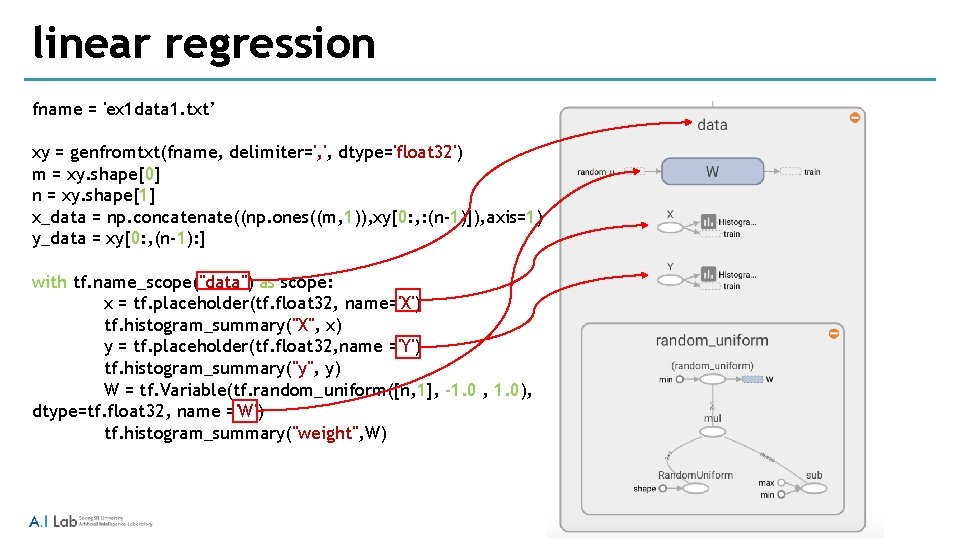

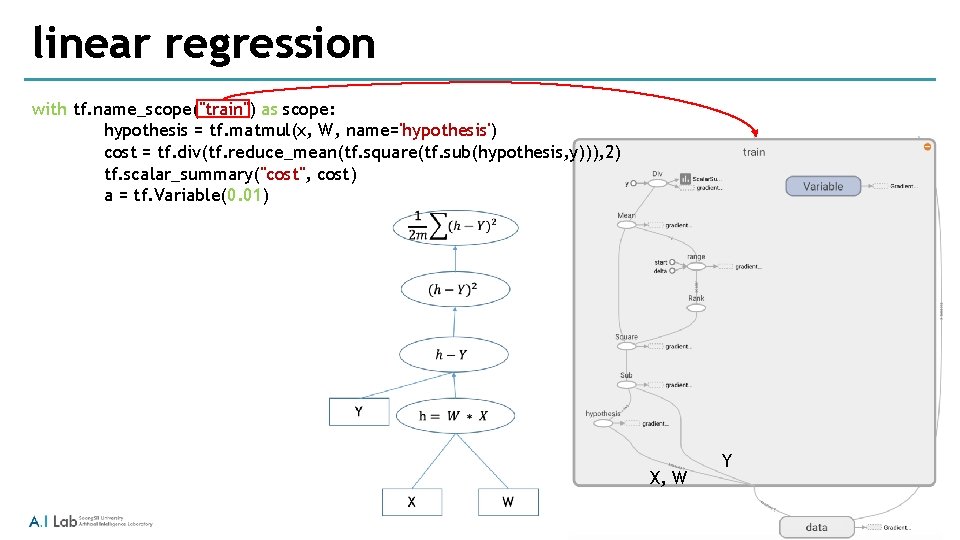

linear regression

    with tf.name_scope("train") as scope:
        hypothesis = tf.matmul(x, W, name='hypothesis')
        # Half of the mean squared error
        cost = tf.div(tf.reduce_mean(tf.square(tf.sub(hypothesis, y))), 2)
        tf.scalar_summary("cost", cost)
        a = tf.Variable(0.01)  # learning rate

Graph node labels: X, W, Y
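The cost above is half the mean squared error, that is, the sum of (hypothesis - y)^2 over all samples divided by 2m. A minimal plain-Python sketch of the same quantity follows; the function name `cost` and the toy data are assumptions made for illustration.

```python
def cost(x_data, y_data, W):
    """Half of the mean squared error of the linear hypothesis x . W."""
    m = len(x_data)
    total = 0.0
    for x_row, y_row in zip(x_data, y_data):
        hypothesis = sum(x_ij * w_j[0] for x_ij, w_j in zip(x_row, W))
        total += (hypothesis - y_row[0]) ** 2
    return total / m / 2


# Hypothetical data: bias column of ones plus one feature, target y = 2 * feature.
x_data = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y_data = [[2.0], [4.0], [6.0]]

zero_cost = cost(x_data, y_data, [[0.0], [0.0]])     # all-zero weights
perfect_cost = cost(x_data, y_data, [[0.0], [2.0]])  # weights that fit exactly
print(zero_cost, perfect_cost)
```

With all-zero weights the squared errors are 4, 16 and 36, so the cost is 56 / 6; with weights that fit the data exactly it drops to 0.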

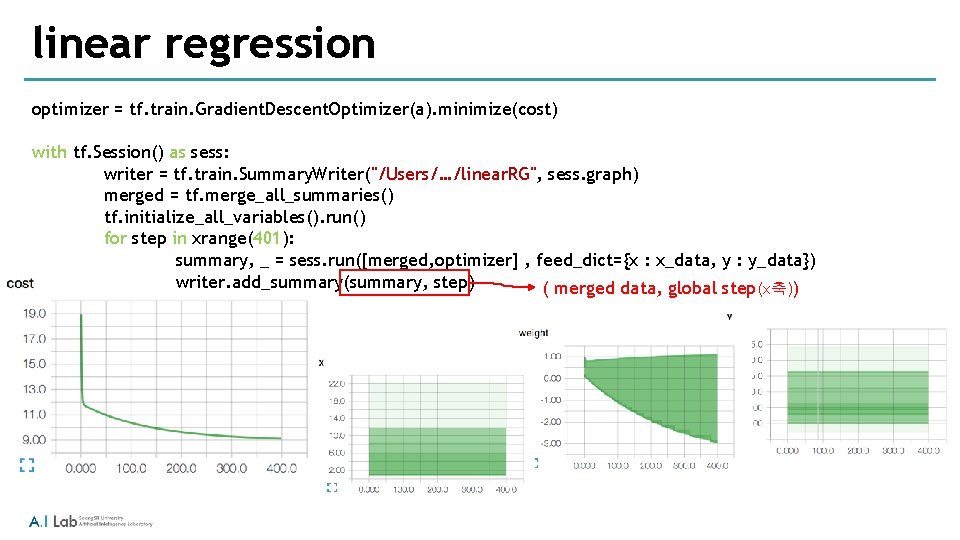

linear regression

    optimizer = tf.train.GradientDescentOptimizer(a).minimize(cost)

    with tf.Session() as sess:
        writer = tf.train.SummaryWriter("/Users/…/linearRG", sess.graph)
        merged = tf.merge_all_summaries()
        tf.initialize_all_variables().run()
        for step in range(401):
            summary, _ = sess.run([merged, optimizer], feed_dict={x: x_data, y: y_data})
            # add_summary(merged summary data, global step used as the x-axis)
            writer.add_summary(summary, step)
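What the optimizer does on each of the 401 steps can be sketched without TensorFlow. The code below is an illustrative plain-Python gradient descent on made-up data, not the optimizer's actual implementation; the helper names and the toy dataset are assumptions. The `history` list corresponds to the cost curve that `writer.add_summary(summary, step)` would plot against the step number.

```python
def predict(x_row, W):
    """Linear hypothesis for one sample."""
    return sum(x * w for x, w in zip(x_row, W))


def cost(X, y, W):
    """Half of the mean squared error."""
    return sum((predict(r, W) - t) ** 2 for r, t in zip(X, y)) / len(X) / 2


def gradient_step(X, y, W, a):
    """One update W <- W - a * gradient of the cost."""
    m = len(X)
    errors = [predict(r, W) - t for r, t in zip(X, y)]
    grad = [sum(e * r[j] for e, r in zip(errors, X)) / m for j in range(len(W))]
    return [w - a * g for w, g in zip(W, grad)]


# Hypothetical data: bias column of ones plus one feature, target y = 2 * feature.
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [2.0, 4.0, 6.0]

W = [0.0, 0.0]
a = 0.01        # same learning rate as in the slides
history = []    # cost recorded at every step
for step in range(401):
    history.append(cost(X, y, W))
    W = gradient_step(X, y, W, a)

print(history[0], history[-1])
```

On this toy data the recorded cost falls steadily from its starting value, which is the shape one would expect to see in the TensorBoard scalar chart for `cost`.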

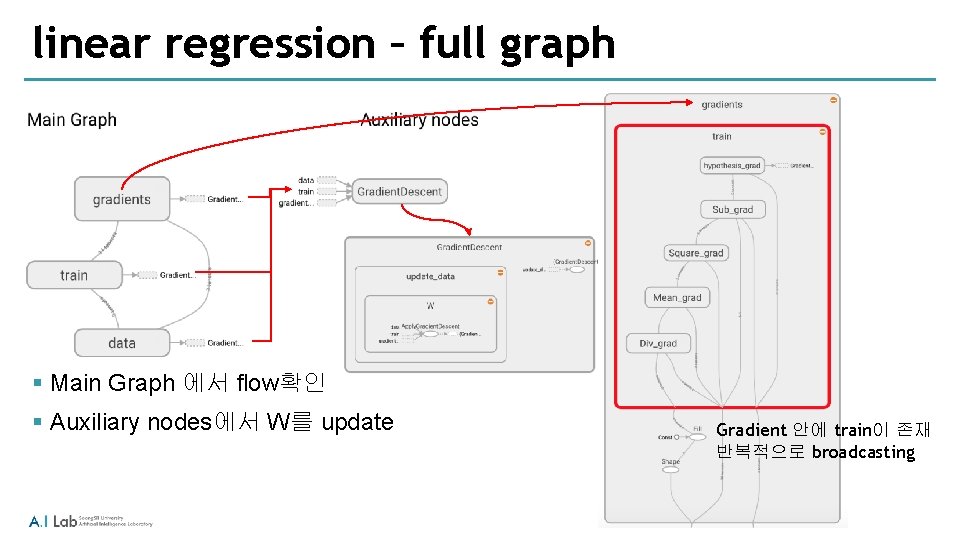

linear regression – full graph
§ Check the flow in the Main Graph
§ W is updated in the Auxiliary nodes
train is located inside Gradient
Broadcasting is repeated

- Slides: 12