
2. Review of Probability and Statistics

Refs: Law & Kelton, Chapter 4

K. Salah

Random Variables

- Experiment: a process whose outcome is not known with certainty
- Sample space: S = {all possible outcomes of an experiment}
- Sample point: an outcome (a member of the sample space S)
- Example: in coin flipping, S = {Head, Tail}; Head and Tail are outcomes
- Random variable: a function that assigns a real number to each point in the sample space S
- Example: in flipping two coins, let $X$ be the random variable equal to the number of heads that occur; then $X = 0$ for (T, T), $X = 1$ for the outcomes (H, T) and (T, H), and $X = 2$ for (H, H)

Random Variables: Notation

- Denote random variables by uppercase letters: $X, Y, Z, \ldots$
- Denote values of random variables by lowercase letters: $x, y, z, \ldots$
- The distribution function (cumulative distribution function) $F(x)$ of the random variable $X$ is, for each real number $x$,
  $F(x) = P(X \le x)$ for $-\infty < x < \infty$,
  where $P(X \le x)$ is the probability associated with the event $\{X \le x\}$.
- $F(x)$ has the following properties:
  - $0 \le F(x) \le 1$ for all $x$
  - $F(x)$ is nondecreasing, i.e., if $x_1 \le x_2$ then $F(x_1) \le F(x_2)$

Discrete Random Variables

- A random variable is discrete if it takes on at most a countable number of values $x_1, x_2, \ldots$
- The probability that the random variable $X$ takes on the value $x_i$ is $p(x_i) = P(X = x_i)$ for $i = 1, 2, \ldots$; $p(x)$ is the probability mass function of the discrete random variable $X$, and $\sum_{i} p(x_i) = 1$
- $F(x) = \sum_{x_i \le x} p(x_i)$ for all $-\infty < x < \infty$ is the probability distribution function of the discrete random variable $X$

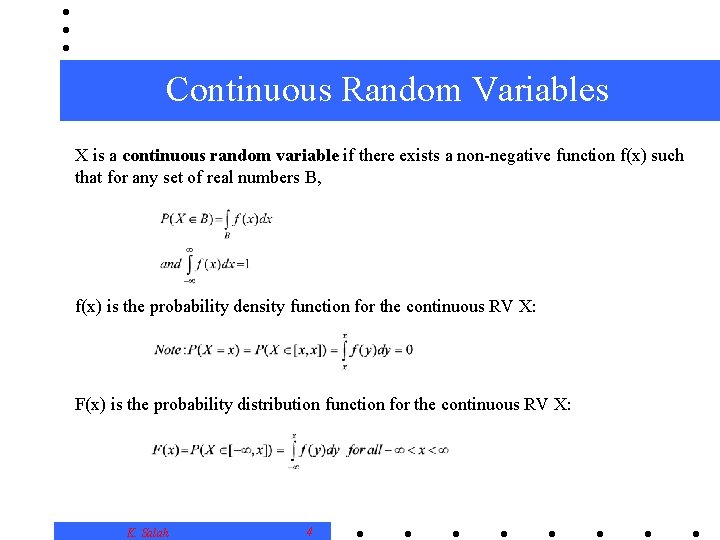
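To make the pmf and distribution function concrete, the following Python sketch enumerates the two-coin example from the Random Variables slide. It assumes fair coins (each of the four outcomes has probability 1/4); the names `outcomes`, `X`, `pmf`, and `F` are illustrative choices, not taken from the source.

```python
from fractions import Fraction
from itertools import product

# Sample space for flipping two coins; fair coins are assumed, so each outcome has probability 1/4
outcomes = list(product("HT", repeat=2))
prob = Fraction(1, len(outcomes))

def X(outcome):
    """Random variable: maps an outcome such as ('H', 'T') to its number of heads."""
    return outcome.count("H")

# Probability mass function p(x) = P(X = x), accumulated over sample points
pmf = {}
for w in outcomes:
    pmf[X(w)] = pmf.get(X(w), 0) + prob

def F(x):
    """Distribution function F(x) = sum of p(x_i) over all x_i <= x."""
    return sum(p for xi, p in pmf.items() if xi <= x)

print(sorted(pmf.items()))
print([F(x) for x in (-1, 0, 0.5, 1, 2, 3)])
```

Exact fractions are used so that the properties on the slide ($0 \le F(x) \le 1$, nondecreasing, $\sum_i p(x_i) = 1$) can be checked without rounding error.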

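Continuous Random Variables

$X$ is a continuous random variable if there exists a nonnegative function $f(x)$ such that for any set of real numbers $B$, $P(X \in B) = \int_B f(x)\,dx$ and $\int_{-\infty}^{\infty} f(x)\,dx = 1$.

- $f(x)$ is the probability density function of the continuous random variable $X$.
- $F(x)$ is the probability distribution function of the continuous random variable $X$: $F(x) = P(X \in (-\infty, x]) = \int_{-\infty}^{x} f(y)\,dy$ for all $-\infty < x < \infty$.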

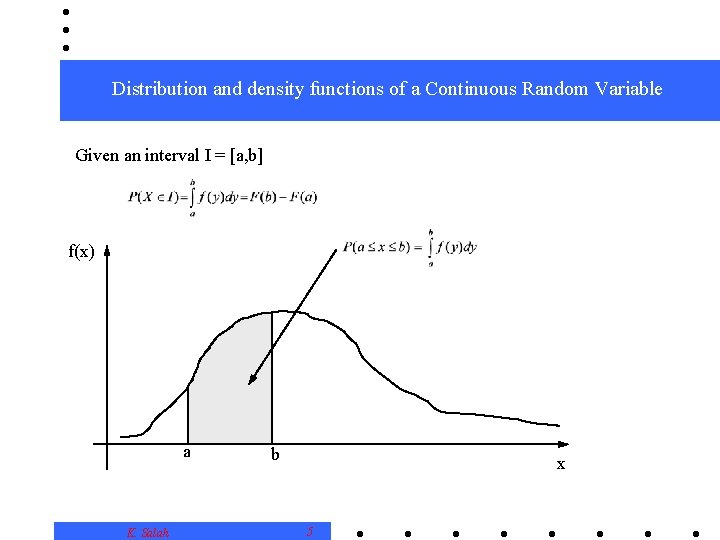

Distribution and density functions of a continuous random variable: given an interval $I = [a, b]$, $P(X \in I) = \int_a^b f(y)\,dy = F(b) - F(a)$, i.e., the area under the density $f(x)$ between $a$ and $b$.
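A minimal numerical check of $P(a \le X \le b) = F(b) - F(a)$: the sketch below assumes an exponential density $f(x) = \lambda e^{-\lambda x}$ for $x \ge 0$ with a hypothetical rate $\lambda = 2$, and approximates the area under $f$ with the trapezoidal rule. The interval endpoints are also arbitrary choices for illustration.

```python
import math

lam = 2.0  # hypothetical rate parameter of the assumed exponential distribution

def f(x):
    """Assumed density: f(x) = lam * exp(-lam * x) for x >= 0, else 0."""
    return lam * math.exp(-lam * x) if x >= 0 else 0.0

def F(x):
    """Matching distribution function: F(x) = 1 - exp(-lam * x) for x >= 0, else 0."""
    return 1.0 - math.exp(-lam * x) if x >= 0 else 0.0

def prob_interval(a, b, steps=10_000):
    """Approximate P(a <= X <= b) as the area under f on [a, b] (trapezoidal rule)."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b)) + sum(f(a + k * h) for k in range(1, steps))
    return total * h

a, b = 0.25, 1.0
area = prob_interval(a, b)
exact = F(b) - F(a)
print(area, exact)
```

The two printed numbers should agree to many decimal places, which is the slide's statement that the distribution function accumulates the area under the density.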

Joint Random Variables

In the M/M/1 queueing system, the input can be represented as two sets of random variables: the arrival times of customers $A_1, A_2, \ldots, A_n$ and the service times of customers $S_1, S_2, \ldots, S_n$. The output can be a set of random variables: the delays in queue of customers $D_1, D_2, \ldots, D_n$. The $D_i$'s are not independent.

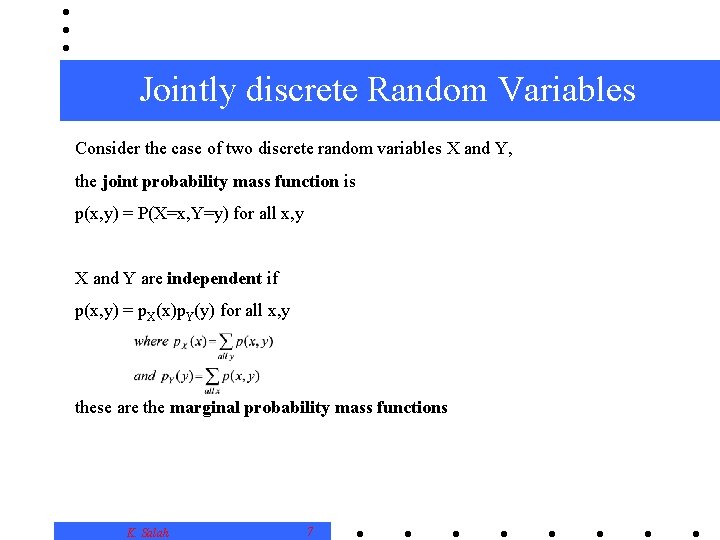

Jointly Discrete Random Variables

Consider the case of two discrete random variables $X$ and $Y$. The joint probability mass function is $p(x, y) = P(X = x, Y = y)$ for all $x, y$.

$X$ and $Y$ are independent if $p(x, y) = p_X(x)\,p_Y(y)$ for all $x, y$, where $p_X(x) = \sum_{\text{all } y} p(x, y)$ and $p_Y(y) = \sum_{\text{all } x} p(x, y)$ are the marginal probability mass functions.

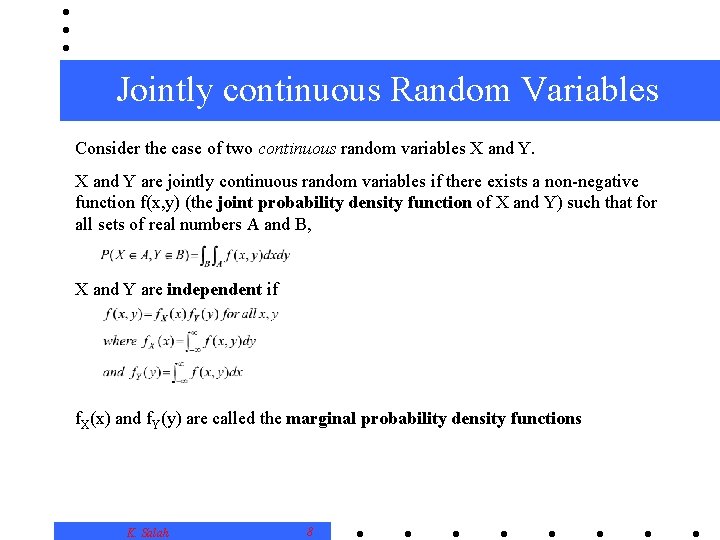
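The independence test above can be sketched in Python by computing the marginals from a joint pmf stored as a dictionary keyed by $(x, y)$. Both example joint pmfs below are hypothetical: two separate fair coin flips (independent) and a variable paired with an exact copy of itself (dependent).

```python
from fractions import Fraction
from itertools import product

def marginals(joint):
    """Return (p_X, p_Y) by summing the joint pmf over the other variable."""
    p_x, p_y = {}, {}
    for (x, y), p in joint.items():
        p_x[x] = p_x.get(x, 0) + p
        p_y[y] = p_y.get(y, 0) + p
    return p_x, p_y

def independent(joint):
    """Check p(x, y) == p_X(x) * p_Y(y) for every pair, treating missing pairs as p(x, y) = 0."""
    p_x, p_y = marginals(joint)
    return all(joint.get((x, y), 0) == p_x[x] * p_y[y] for x, y in product(p_x, p_y))

# Hypothetical example 1: X and Y are the 0/1 results of two separate fair coin flips
two_coins = {(x, y): Fraction(1, 4) for x in (0, 1) for y in (0, 1)}
# Hypothetical example 2: Y is always equal to X, so they are dependent
same = {(0, 0): Fraction(1, 2), (1, 1): Fraction(1, 2)}

print(independent(two_coins), independent(same))
```

Pairs absent from the dictionary matter: in the second example $p(0, 1) = 0$ while $p_X(0)\,p_Y(1) = 1/4$, which is what breaks independence.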

Jointly Continuous Random Variables

Consider the case of two continuous random variables. $X$ and $Y$ are jointly continuous random variables if there exists a nonnegative function $f(x, y)$ (the joint probability density function of $X$ and $Y$) such that for all sets of real numbers $A$ and $B$, $P(X \in A, Y \in B) = \int_B \int_A f(x, y)\,dx\,dy$.

$X$ and $Y$ are independent if $f(x, y) = f_X(x)\,f_Y(y)$ for all $x, y$, where $f_X(x) = \int_{-\infty}^{\infty} f(x, y)\,dy$ and $f_Y(y) = \int_{-\infty}^{\infty} f(x, y)\,dx$ are called the marginal probability density functions.

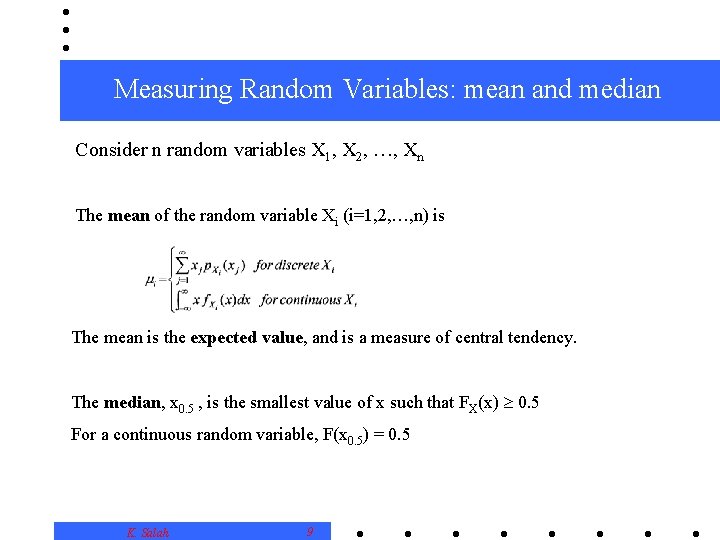

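Measuring Random Variables: Mean and Median

Consider $n$ random variables $X_1, X_2, \ldots, X_n$. The mean of the random variable $X_i$ ($i = 1, 2, \ldots, n$) is

$\mu_i = E(X_i) = \sum_{j=1}^{\infty} x_j\,p_{X_i}(x_j)$ if $X_i$ is discrete, and $\mu_i = \int_{-\infty}^{\infty} x\,f_{X_i}(x)\,dx$ if $X_i$ is continuous.

- The mean is the expected value and is a measure of central tendency.
- The median $x_{0.5}$ is the smallest value of $x$ such that $F_X(x) \ge 0.5$. For a continuous random variable, $F(x_{0.5}) = 0.5$.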

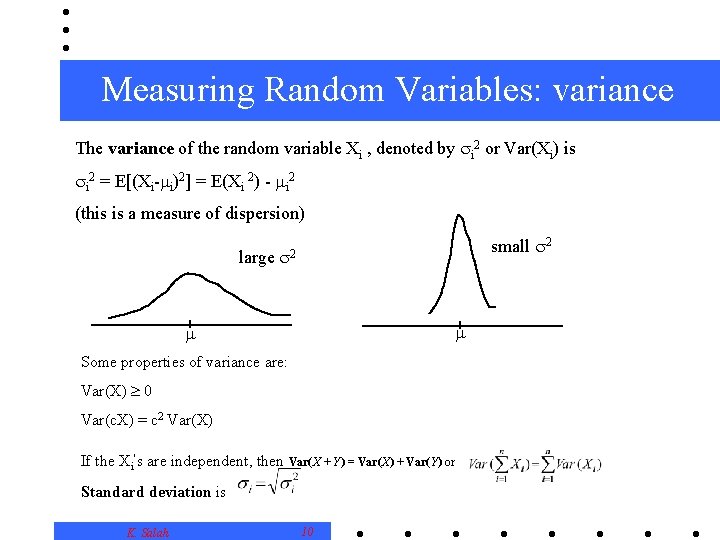
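As a small sketch of the two definitions for a discrete random variable, the code below computes $E(X) = \sum x\,p(x)$ and the median as the smallest $x$ with $F(x) \ge 0.5$. The first pmf is the two-coin example from the Random Variables slide (fair coins assumed); the second, skewed pmf is hypothetical and shows that the mean and median need not coincide.

```python
from fractions import Fraction

def mean(pmf):
    """E(X) = sum over x of x * p(x)."""
    return sum(x * p for x, p in pmf.items())

def median(pmf):
    """Smallest x with F(x) >= 0.5, scanning the values in increasing order."""
    cumulative = 0
    for x in sorted(pmf):
        cumulative += pmf[x]
        if cumulative >= Fraction(1, 2):
            return x

# Number of heads in two fair coin flips
heads = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}
# Hypothetical skewed pmf: mostly 0, occasionally 10
skewed = {0: Fraction(3, 5), 10: Fraction(2, 5)}

print(mean(heads), median(heads))
print(mean(skewed), median(skewed))
```

For the skewed pmf the rare large value pulls the mean up to 4, while the median stays at 0.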

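Measuring Random Variables: Variance

The variance of the random variable $X_i$, denoted by $\sigma_i^2$ or $\operatorname{Var}(X_i)$, is

$\sigma_i^2 = E[(X_i - \mu_i)^2] = E(X_i^2) - \mu_i^2$

This is a measure of dispersion: a large $\sigma^2$ means the values are widely spread around $\mu$, and a small $\sigma^2$ means they are concentrated near $\mu$.

Some properties of variance are:

- $\operatorname{Var}(X) \ge 0$
- $\operatorname{Var}(cX) = c^2 \operatorname{Var}(X)$
- If $X$ and $Y$ are independent, then $\operatorname{Var}(X + Y) = \operatorname{Var}(X) + \operatorname{Var}(Y)$; more generally, if the $X_i$'s are independent, then $\operatorname{Var}\left(\sum_{i=1}^{n} X_i\right) = \sum_{i=1}^{n} \operatorname{Var}(X_i)$

The standard deviation is $\sigma_i = \sqrt{\sigma_i^2}$.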

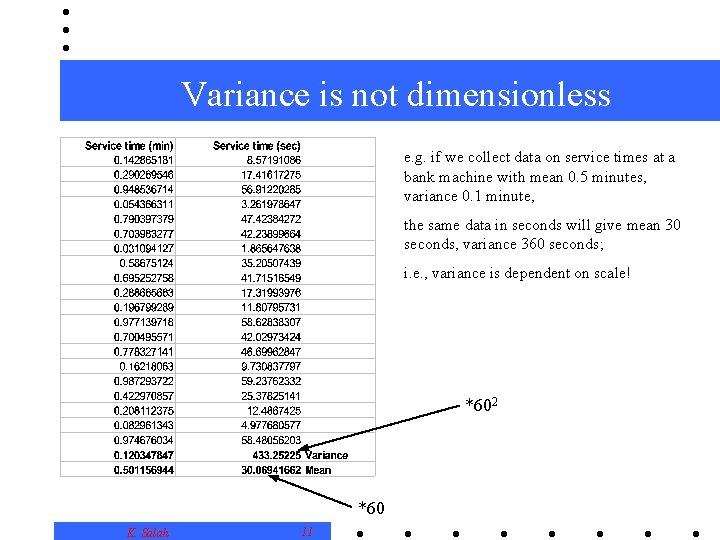
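The following sketch checks, with exact arithmetic, that the two variance formulas agree and that $\operatorname{Var}(cX) = c^2 \operatorname{Var}(X)$. It reuses the two-coin pmf from the Random Variables slide (fair coins assumed); the helper `expect` and the constant $c = 3$ are illustrative choices.

```python
from fractions import Fraction

pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}  # heads in two fair flips

def expect(pmf, g=lambda x: x):
    """E[g(X)] for a discrete random variable with the given pmf."""
    return sum(g(x) * p for x, p in pmf.items())

mu = expect(pmf)
var_def = expect(pmf, lambda x: (x - mu) ** 2)       # E[(X - mu)^2]
var_short = expect(pmf, lambda x: x ** 2) - mu ** 2  # E(X^2) - mu^2

# Var(cX) = c^2 Var(X): scale every value of X by c and recompute from the definition
c = 3
scaled = {c * x: p for x, p in pmf.items()}
mu_s = expect(scaled)
var_scaled = expect(scaled, lambda x: (x - mu_s) ** 2)

print(var_def, var_short, var_scaled)
```

Both formulas give $1/2$ here, and scaling by $c = 3$ multiplies the variance by $9$.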

Variance is not dimensionless. For example, if we collect data on service times at a bank machine with mean 0.5 minutes and variance 0.1 minutes², the same data in seconds give mean 30 seconds (×60) and variance 360 seconds² (×60²); i.e., the variance depends on the scale of the data!

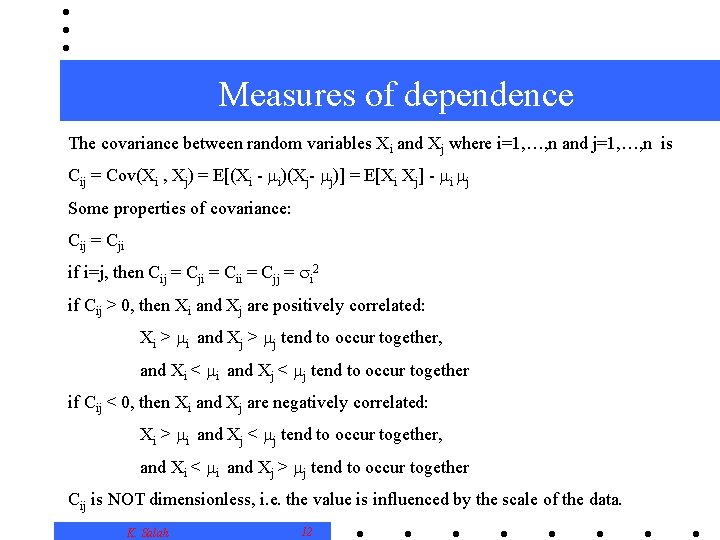
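The unit-change effect can be reproduced with Python's `statistics` module. The five service times below are made up, chosen only so that their sample mean is 0.5 minutes and their sample variance is 0.1 minutes², matching the numbers on the slide.

```python
import math
import statistics

# Hypothetical service times in minutes: sample mean 0.5, sample variance 0.1
minutes = [0.1, 0.9, 0.3, 0.7, 0.5]
seconds = [60 * t for t in minutes]  # the same data expressed in seconds

m_min, v_min = statistics.mean(minutes), statistics.variance(minutes)
m_sec, v_sec = statistics.mean(seconds), statistics.variance(seconds)

# The mean and standard deviation scale by 60; the variance scales by 60**2 = 3600
print(m_min, v_min)
print(m_sec, v_sec)
print(m_sec / m_min, v_sec / v_min, math.sqrt(v_sec) / math.sqrt(v_min))
```

Note that `statistics.variance` uses the $n - 1$ divisor (the sample variance); the scaling behavior is the same either way.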

Measures of Dependence

The covariance between the random variables $X_i$ and $X_j$, where $i = 1, \ldots, n$ and $j = 1, \ldots, n$, is

$C_{ij} = \operatorname{Cov}(X_i, X_j) = E[(X_i - \mu_i)(X_j - \mu_j)] = E(X_i X_j) - \mu_i \mu_j$

Some properties of covariance:

- $C_{ij} = C_{ji}$
- If $i = j$, then $C_{ij} = C_{ji} = C_{ii} = C_{jj} = \sigma_i^2$
- If $C_{ij} > 0$, then $X_i$ and $X_j$ are positively correlated: $X_i > \mu_i$ and $X_j > \mu_j$ tend to occur together, and $X_i < \mu_i$ and $X_j < \mu_j$ tend to occur together
- If $C_{ij} < 0$, then $X_i$ and $X_j$ are negatively correlated: $X_i > \mu_i$ and $X_j < \mu_j$ tend to occur together, and $X_i < \mu_i$ and $X_j > \mu_j$ tend to occur together
- $C_{ij}$ is NOT dimensionless, i.e., its value is influenced by the scale of the data.
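Both covariance formulas can be evaluated exactly on a small joint pmf. The joint distribution here is a hypothetical example: flip two fair coins, let $X = 1$ if the first coin shows heads (else 0), and let $Y$ be the total number of heads. Since $Y$ includes $X$, the two should be positively correlated.

```python
from fractions import Fraction
from itertools import product

# Hypothetical joint pmf: X = first coin is heads (0/1), Y = total heads in two fair flips
joint = {}
for c1, c2 in product((0, 1), repeat=2):
    key = (c1, c1 + c2)
    joint[key] = joint.get(key, 0) + Fraction(1, 4)

def E(g):
    """E[g(X, Y)] under the joint pmf."""
    return sum(g(x, y) * p for (x, y), p in joint.items())

mu_x, mu_y = E(lambda x, y: x), E(lambda x, y: y)
cov_def = E(lambda x, y: (x - mu_x) * (y - mu_y))  # E[(X - mu_X)(Y - mu_Y)]
cov_short = E(lambda x, y: x * y) - mu_x * mu_y    # E(XY) - mu_X * mu_Y

print(cov_def, cov_short)
```

Both expressions give $1/4 > 0$: outcomes where $X$ is above its mean are exactly those where $Y$ tends to be above its mean.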

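A Dimensionless Measure of Dependence

Correlation is a dimensionless measure of dependence:

$\rho_{ij} = \operatorname{Cor}(X_i, X_j) = \dfrac{C_{ij}}{\sqrt{\sigma_i^2 \sigma_j^2}}$

- $\operatorname{Var}(a_1 X_1 + a_2 X_2) = a_1^2 \operatorname{Var}(X_1) + 2 a_1 a_2 \operatorname{Cov}(X_1, X_2) + a_2^2 \operatorname{Var}(X_2)$
- $\operatorname{Var}(X - Y) = \operatorname{Var}(X) + \operatorname{Var}(Y) - 2 \operatorname{Cov}(X, Y)$
- $\operatorname{Var}(X + Y) = \operatorname{Var}(X) + \operatorname{Var}(Y) + 2 \operatorname{Cov}(X, Y)$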

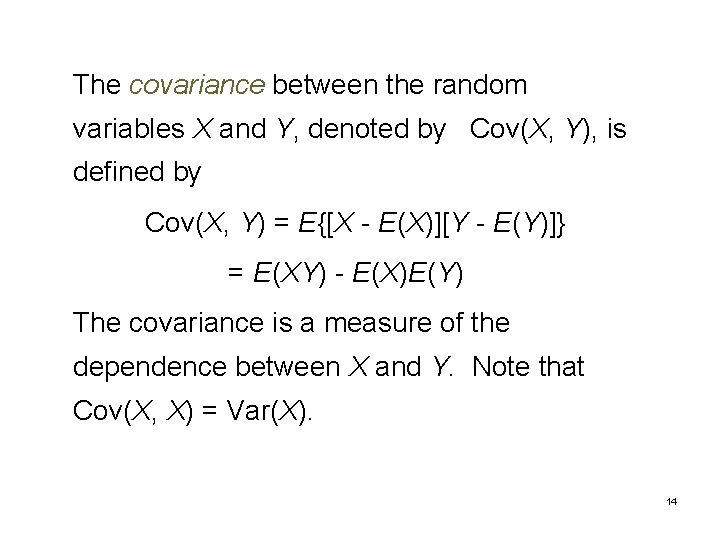
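The identity for $\operatorname{Var}(a_1 X_1 + a_2 X_2)$ can be verified exactly on any joint pmf. The sketch below assumes a hypothetical pair of dependent 0/1 variables and hypothetical coefficients $a_1 = 2$, $a_2 = -3$, computes the left side directly from the distribution of $a_1 X_1 + a_2 X_2$, and compares it with the right side.

```python
from fractions import Fraction

# Hypothetical joint pmf of two dependent 0/1 random variables (X1, X2)
joint = {(0, 0): Fraction(3, 8), (0, 1): Fraction(1, 8),
         (1, 0): Fraction(1, 8), (1, 1): Fraction(3, 8)}

def E(g):
    """E[g(X1, X2)] under the joint pmf."""
    return sum(g(x1, x2) * p for (x1, x2), p in joint.items())

def var(g):
    """Var[g(X1, X2)] computed from the definition E[(g - E g)^2]."""
    m = E(g)
    return E(lambda x1, x2: (g(x1, x2) - m) ** 2)

m1, m2 = E(lambda x1, x2: x1), E(lambda x1, x2: x2)
var1, var2 = var(lambda x1, x2: x1), var(lambda x1, x2: x2)
cov = E(lambda x1, x2: (x1 - m1) * (x2 - m2))

a1, a2 = 2, -3  # hypothetical coefficients
lhs = var(lambda x1, x2: a1 * x1 + a2 * x2)
rhs = a1 ** 2 * var1 + 2 * a1 * a2 * cov + a2 ** 2 * var2
print(lhs, rhs)
```

Setting $a_1 = a_2 = 1$ or $a_1 = 1, a_2 = -1$ gives the $\operatorname{Var}(X + Y)$ and $\operatorname{Var}(X - Y)$ special cases.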

The covariance between the random variables $X$ and $Y$, denoted by $\operatorname{Cov}(X, Y)$, is defined by

$\operatorname{Cov}(X, Y) = E\{[X - E(X)][Y - E(Y)]\} = E(XY) - E(X)E(Y)$

The covariance is a measure of the dependence between $X$ and $Y$. Note that $\operatorname{Cov}(X, X) = \operatorname{Var}(X)$.

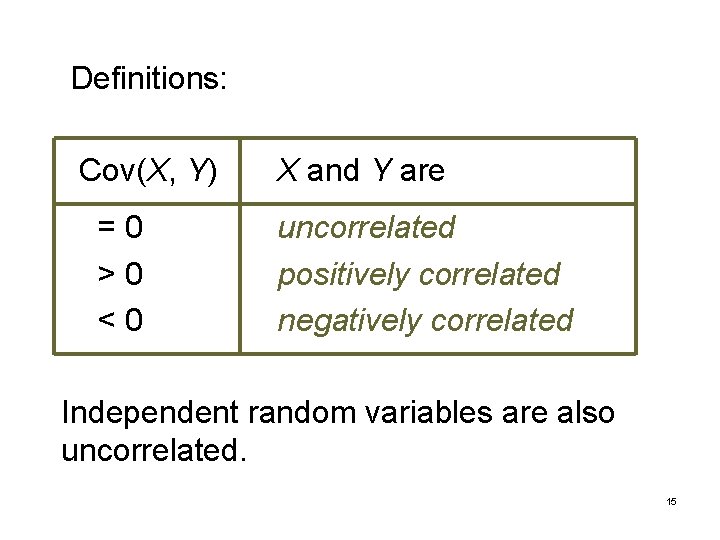

Definitions:

| $\operatorname{Cov}(X, Y)$ | $X$ and $Y$ are |
|---|---|
| $= 0$ | uncorrelated |
| $> 0$ | positively correlated |
| $< 0$ | negatively correlated |

Independent random variables are also uncorrelated.

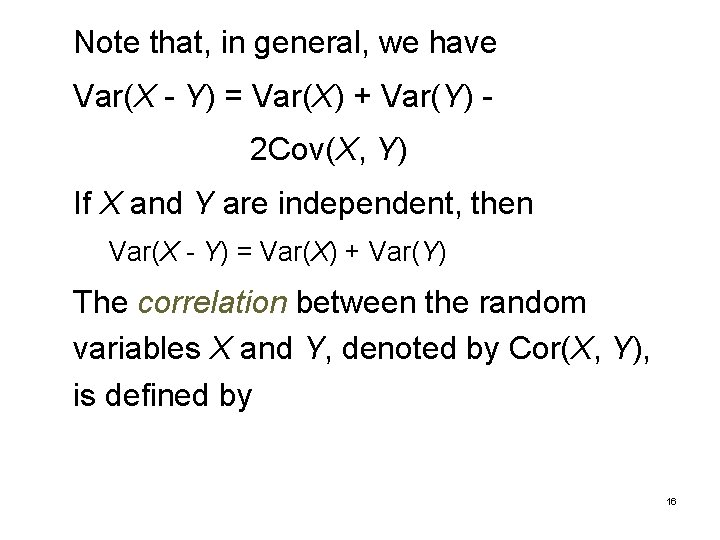

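Note that, in general, we have

$\operatorname{Var}(X - Y) = \operatorname{Var}(X) + \operatorname{Var}(Y) - 2 \operatorname{Cov}(X, Y)$

If $X$ and $Y$ are independent, then $\operatorname{Var}(X - Y) = \operatorname{Var}(X) + \operatorname{Var}(Y)$.

The correlation between the random variables $X$ and $Y$, denoted by $\operatorname{Cor}(X, Y)$, is defined by

$\operatorname{Cor}(X, Y) = \dfrac{\operatorname{Cov}(X, Y)}{\sqrt{\operatorname{Var}(X)\operatorname{Var}(Y)}}$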

It can be shown that $-1 \le \operatorname{Cor}(X, Y) \le 1$.

4.2. Simulation Output Data and Stochastic Processes

A stochastic process is a collection of "similar" random variables ordered over time, all defined relative to the same experiment. If the collection is $X_1, X_2, \ldots$, then we have a discrete-time stochastic process. If the collection is $\{X(t), t \ge 0\}$, then we have a continuous-time stochastic process.

Example 4.3: Consider the single-server queueing system of Chapter 1 with independent interarrival times $A_1, A_2, \ldots$ and independent processing times $P_1, P_2, \ldots$. Relative to the experiment of generating the $A_i$'s and $P_i$'s, one can define the discrete-time stochastic process of delays in queue $D_1, D_2, \ldots$ as follows:

$D_1 = 0$

$D_{i+1} = \max\{D_i + P_i - A_{i+1}, 0\}$ for $i = 1, 2, \ldots$

Thus, the simulation maps the input random variables into the output process of interest.

Other examples of stochastic processes:

- $N_1, N_2, \ldots$, where $N_i$ = number of parts produced in the $i$th hour for a manufacturing system
- $T_1, T_2, \ldots$, where $T_i$ = time in system of the $i$th part for a manufacturing system
- $\{Q(t), t \ge 0\}$, where $Q(t)$ = number of customers in queue at time $t$
- $C_1, C_2, \ldots$, where $C_i$ = total cost in the $i$th month for an inventory system
- $E_1, E_2, \ldots$, where $E_i$ = end-to-end delay of the $i$th message to reach its destination in a communications network
- $\{R(t), t \ge 0\}$, where $R(t)$ = number of red tanks in a battle at time $t$

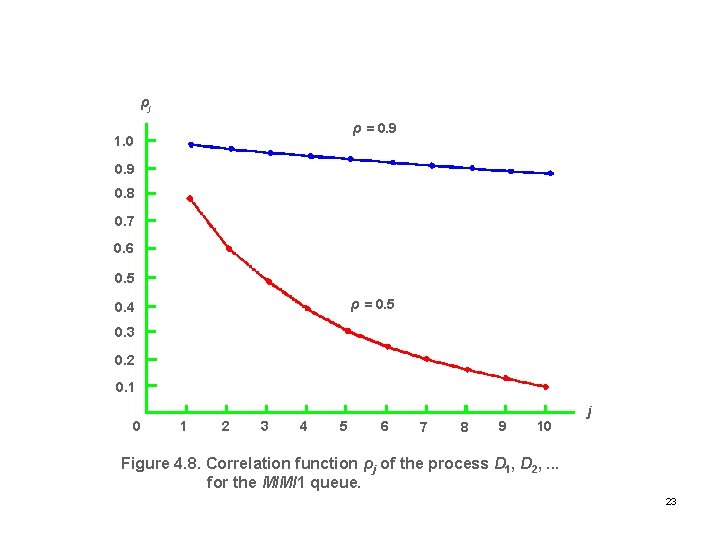

Example 4.4: Consider the delay-in-queue process $D_1, D_2, \ldots$ for the M/M/1 queue with utilization factor $\rho$. The correlation function $\rho_j$ between $D_i$ and $D_{i+j}$ is given in Figure 4.8.

Figure 4.8. Correlation function $\rho_j$ of the process $D_1, D_2, \ldots$ for the M/M/1 queue ($\rho_j$ from 0 to 1.0 plotted against lag $j = 0, 1, \ldots, 10$, with curves for $\rho = 0.9$ and $\rho = 0.5$).

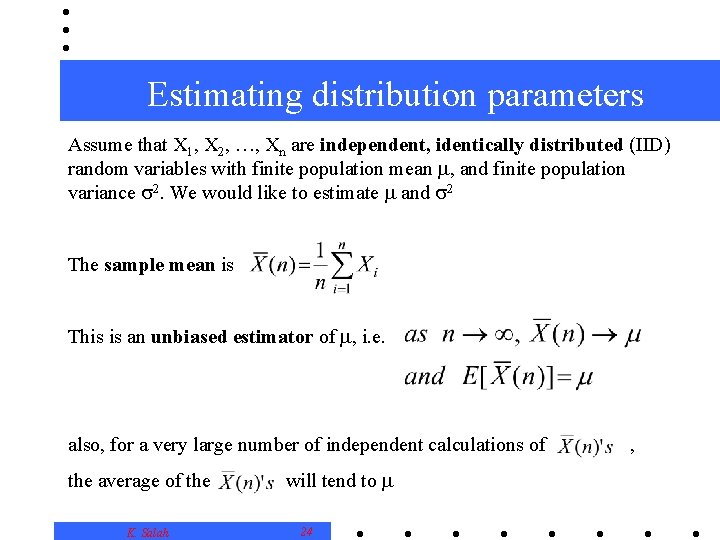

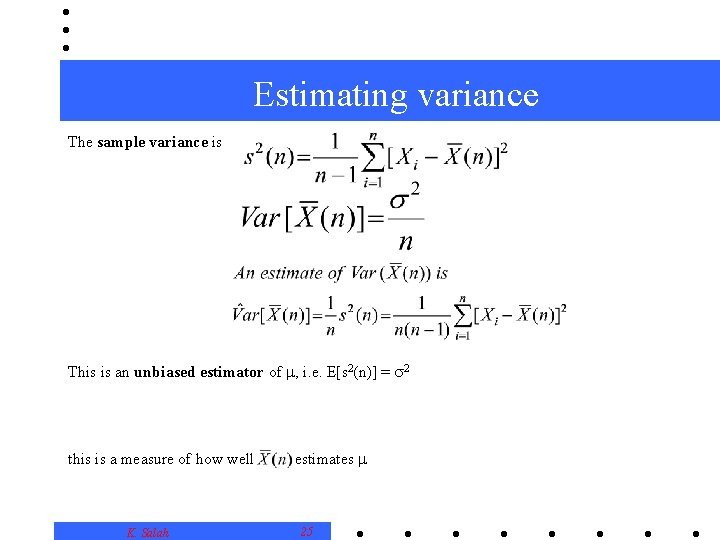
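A rough way to reproduce the qualitative shape of Figure 4.8 is to simulate a long run of M/M/1 delays and estimate the lag-$j$ correlation from it. This sketch assumes a service rate of 1 and arrival rate $\rho$, starts empty ($D_1 = 0$), and ignores the initial transient; the names `mm1_delays` and `lag_correlation` are hypothetical, and the estimates are noisy approximations of $\rho_j$, not the exact curves.

```python
import random

def mm1_delays(rho, n, seed=1):
    """Simulate D_1..D_n for an M/M/1 queue with service rate 1 and arrival rate rho."""
    rng = random.Random(seed)
    d = [0.0]
    for _ in range(n - 1):
        p = rng.expovariate(1.0)  # processing time P_i
        a = rng.expovariate(rho)  # next interarrival time A_{i+1}
        d.append(max(d[-1] + p - a, 0.0))
    return d

def lag_correlation(x, j):
    """Sample estimate of Cor(X_i, X_{i+j}) for a covariance-stationary sequence."""
    n = len(x)
    m = sum(x) / n
    c0 = sum((v - m) ** 2 for v in x) / n
    cj = sum((x[i] - m) * (x[i + j] - m) for i in range(n - j)) / n
    return cj / c0

results = {}
for rho in (0.5, 0.9):
    d = mm1_delays(rho, 100_000)
    results[rho] = [lag_correlation(d, j) for j in range(11)]
    print(rho, [round(r, 2) for r in results[rho]])
```

The heavier the traffic, the more slowly the estimated correlation dies out with $j$, which is the behavior the figure shows for $\rho = 0.9$ versus $\rho = 0.5$.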

Estimating Distribution Parameters

Assume that $X_1, X_2, \ldots, X_n$ are independent, identically distributed (IID) random variables with finite population mean $\mu$ and finite population variance $\sigma^2$. We would like to estimate $\mu$ and $\sigma^2$.

The sample mean is

$\bar{X}(n) = \dfrac{\sum_{i=1}^{n} X_i}{n}$

This is an unbiased estimator of $\mu$, i.e., $E[\bar{X}(n)] = \mu$; also, for a very large number of independent calculations of $\bar{X}(n)$, the average of the $\bar{X}(n)$'s will tend to $\mu$.

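Estimating Variance

The sample variance is

$s^2(n) = \dfrac{\sum_{i=1}^{n} [X_i - \bar{X}(n)]^2}{n - 1}$

This is an unbiased estimator of $\sigma^2$, i.e., $E[s^2(n)] = \sigma^2$.

$\operatorname{Var}[\bar{X}(n)] = \sigma^2 / n$, which can be estimated by $s^2(n)/n$; this is a measure of how well $\bar{X}(n)$ estimates $\mu$.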
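The estimators above translate directly into code. The sketch below computes $\bar{X}(n)$, $s^2(n)$ with the $n - 1$ divisor, and $s^2(n)/n$ for a hypothetical data set, then illustrates unbiasedness by averaging $s^2(5)$ over many simulated samples from Uniform(0, 1), whose variance is $\sigma^2 = 1/12$. The function name `summarize` and the simulation parameters are illustrative assumptions.

```python
import random

def summarize(xs):
    """Return the sample mean, the sample variance s^2(n) (divisor n - 1), and s^2(n)/n."""
    n = len(xs)
    xbar = sum(xs) / n
    s2 = sum((x - xbar) ** 2 for x in xs) / (n - 1)
    return xbar, s2, s2 / n

# Hypothetical data set (made up for illustration)
data = [0.1, 0.9, 0.3, 0.7, 0.5]
xbar, s2, var_xbar_hat = summarize(data)
print(xbar, s2, var_xbar_hat)

# Unbiasedness check: average s^2(n) over many samples of size n = 5 from Uniform(0, 1).
# The average should land near 1/12; dividing by n instead would land near (4/5)(1/12).
rng = random.Random(42)
s2_values = [summarize([rng.random() for _ in range(5)])[1] for _ in range(20_000)]
avg_s2 = sum(s2_values) / len(s2_values)
print(avg_s2, 1 / 12)
```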

Two Interesting Theorems

Central Limit Theorem: For large enough $n$, $\bar{X}(n)$ tends to be normally distributed with mean $\mu$ and variance $\sigma^2/n$. This means that we can assume that the average of a large number of random samples is approximately normally distributed, which is very convenient.

Strong Law of Large Numbers: For an infinite number of experiments, each resulting in an $\bar{X}(n)$, for sufficiently large $n$, $\bar{X}(n)$ will be arbitrarily close to $\mu$ for almost all experiments. This means that we must choose $n$ carefully: every sample must be large enough to give a good $\bar{X}(n)$.
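A quick simulation can illustrate the Central Limit Theorem even for a skewed distribution. The sketch below assumes Exponential(1) observations ($\mu = 1$, $\sigma^2 = 1$), a hypothetical sample size $n = 50$, and 10,000 independent replications; it checks that the $\bar{X}(n)$'s have mean near $\mu$, variance near $\sigma^2/n$, and roughly 95% of them within $\mu \pm 1.96\,\sigma/\sqrt{n}$, as a normal distribution would give.

```python
import math
import random
import statistics

rng = random.Random(2024)
n, reps = 50, 10_000
mu, sigma = 1.0, 1.0  # Exponential(1) has mean 1 and variance 1

# Each replication produces one sample mean X-bar(n) of n exponential observations
means = [statistics.fmean([rng.expovariate(1.0) for _ in range(n)]) for _ in range(reps)]

mean_of_means = statistics.fmean(means)
var_of_means = statistics.variance(means)
half = 1.96 * sigma / math.sqrt(n)
inside = sum(abs(m - mu) <= half for m in means) / reps

print(mean_of_means, var_of_means, sigma ** 2 / n)
print(inside)  # should be close to 0.95 if X-bar(n) is approximately normal
```

The individual exponential observations are far from normal, but their averages already behave close to $N(\mu, \sigma^2/n)$ at this $n$.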

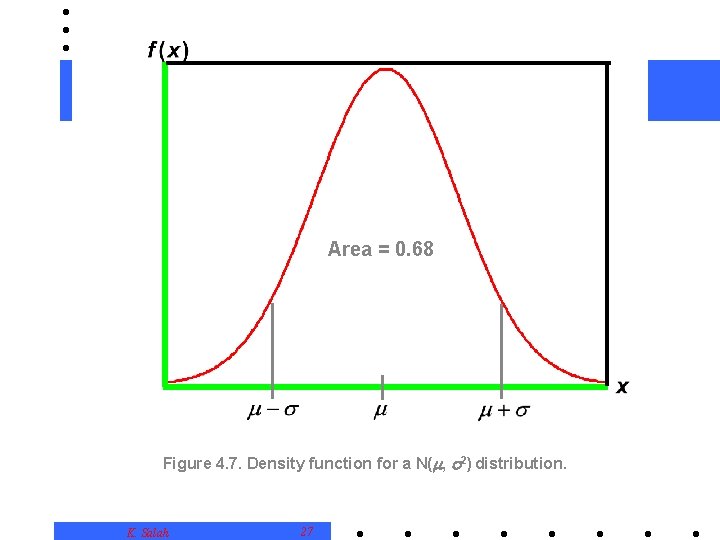

Figure 4.7. Density function for a $N(\mu, \sigma^2)$ distribution; the area under the density between $\mu - \sigma$ and $\mu + \sigma$ is about 0.68.
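The 0.68 figure can be checked with `statistics.NormalDist` from the Python standard library. The parameters $\mu = 10$, $\sigma = 2$ are hypothetical; the area within one standard deviation of the mean is the same for every normal distribution.

```python
from statistics import NormalDist

mu, sigma = 10.0, 2.0  # hypothetical parameters
dist = NormalDist(mu, sigma)

# Area under the N(mu, sigma^2) density between mu - sigma and mu + sigma
area = dist.cdf(mu + sigma) - dist.cdf(mu - sigma)
print(round(area, 4))  # 0.6827
```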

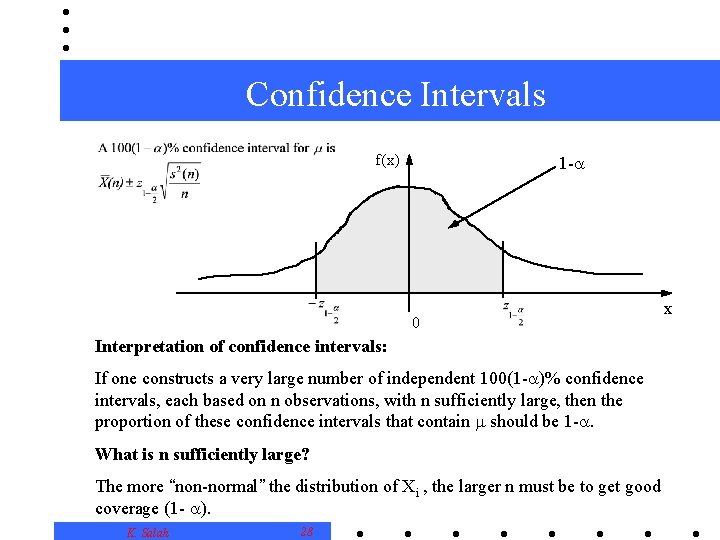

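Confidence Intervals

For large $n$, an approximate $100(1 - \alpha)\%$ confidence interval for $\mu$ is $\bar{X}(n) \pm z_{1-\alpha/2} \sqrt{s^2(n)/n}$, where $z_{1-\alpha/2}$ is the point with area $1 - \alpha/2$ to its left under the standard normal density (so the central area between $-z_{1-\alpha/2}$ and $z_{1-\alpha/2}$ is $1 - \alpha$).

Interpretation of confidence intervals: If one constructs a very large number of independent $100(1 - \alpha)\%$ confidence intervals, each based on $n$ observations, with $n$ sufficiently large, then the proportion of these confidence intervals that contain $\mu$ should be $1 - \alpha$.

What is "$n$ sufficiently large"? The more "non-normal" the distribution of the $X_i$'s, the larger $n$ must be to get good coverage (close to $1 - \alpha$).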

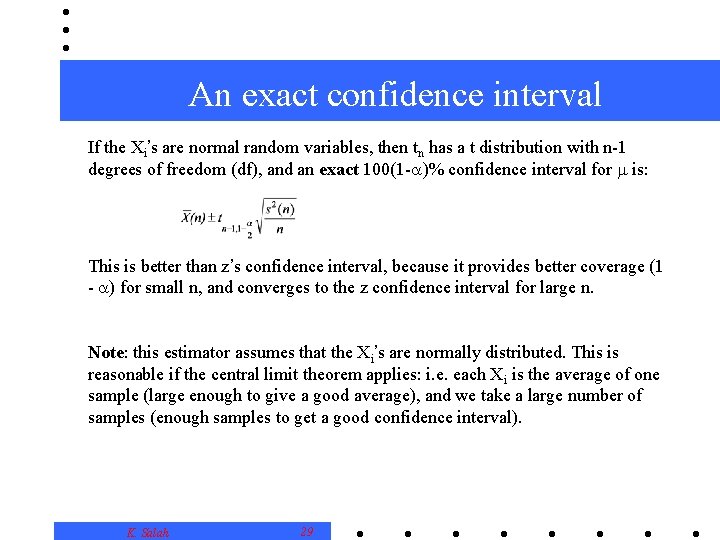

An Exact Confidence Interval

If the $X_i$'s are normal random variables, then $t_n = \dfrac{\bar{X}(n) - \mu}{\sqrt{s^2(n)/n}}$ has a $t$ distribution with $n - 1$ degrees of freedom (df), and an exact $100(1 - \alpha)\%$ confidence interval for $\mu$ is

$\bar{X}(n) \pm t_{n-1, 1-\alpha/2} \sqrt{s^2(n)/n}$

This is better than the $z$ confidence interval because it provides better coverage (closer to $1 - \alpha$) for small $n$, and it converges to the $z$ confidence interval for large $n$.

Note: this estimator assumes that the $X_i$'s are normally distributed. This is reasonable if the central limit theorem applies, i.e., each $X_i$ is the average of one sample (large enough to give a good average), and we take a large number of samples (enough samples to get a good confidence interval).
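The coverage interpretation can be tested by simulation: build many 95% intervals from small normal samples and count how often they contain $\mu$. This sketch assumes $n = 10$ observations from a hypothetical $N(5, 2^2)$ distribution. Python's standard library has no $t$ quantile function, so the critical value $t_{9, 0.975} \approx 2.262$ is hard-coded from a $t$ table; the $z$ value comes from `NormalDist().inv_cdf`.

```python
import math
import random
import statistics
from statistics import NormalDist

T_9_0975 = 2.262                  # t_{n-1, 1-alpha/2} for n = 10, alpha = 0.05 (from a t table)
Z_0975 = NormalDist().inv_cdf(0.975)  # z_{1-alpha/2}, about 1.96

def interval(xs, crit):
    """X-bar(n) +/- crit * sqrt(s^2(n) / n)."""
    n = len(xs)
    xbar = statistics.fmean(xs)
    half = crit * math.sqrt(statistics.variance(xs) / n)
    return xbar - half, xbar + half

rng = random.Random(7)
mu, n, reps = 5.0, 10, 20_000  # hypothetical true mean, sample size, number of intervals
hits_t = hits_z = 0
for _ in range(reps):
    xs = [rng.gauss(mu, 2.0) for _ in range(n)]
    lo, hi = interval(xs, T_9_0975)
    hits_t += lo <= mu <= hi
    lo, hi = interval(xs, Z_0975)
    hits_z += lo <= mu <= hi

coverage_t, coverage_z = hits_t / reps, hits_z / reps
print(coverage_t, coverage_z)
```

With $n = 10$ the $t$ interval covers $\mu$ close to 95% of the time, while the $z$ interval with the same $s^2(n)$ falls noticeably short; as $n$ grows the two critical values, and hence the two intervals, converge.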
