18-447 Computer Architecture, Lecture 24: Memory Scheduling



18-447 Computer Architecture
Lecture 24: Memory Scheduling
Prof. Onur Mutlu
Presented by Justin Meza
Carnegie Mellon University
Spring 2014, 3/31/2014

Last Two Lectures

- Main Memory
  - Organization and DRAM Operation
  - Memory Controllers
- DRAM Design and Enhancements
  - More Detailed DRAM Design: Subarrays
  - RowClone and In-DRAM Computation
  - Tiered-Latency DRAM
- Memory Access Scheduling
  - FR-FCFS: row-hit-first scheduling

Today

- Row Buffer Management Policies
- Memory Interference (and Techniques to Manage It)
  - With a focus on Memory Request Scheduling

Review: DRAM Scheduling Policies (I)

- FCFS (first come first served)
  - Oldest request first
- FR-FCFS (first ready, first come first served)
  1. Row-hit first
  2. Oldest first
  - Goal: Maximize row buffer hit rate → maximize DRAM throughput
  - Actually, scheduling is done at the command level
    - Column commands (read/write) prioritized over row commands (activate/precharge)
    - Within each group, older commands prioritized over younger ones
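The FR-FCFS prioritization order can be sketched as a sort key. This is a minimal request-level sketch, not the command-level scheduling a real controller performs; the `Request` fields and the `open_rows` map (bank to currently open row) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Request:
    arrival: int  # arrival time; smaller means older
    bank: int
    row: int


def fr_fcfs_pick(queue, open_rows):
    """Pick the next request: row hits first, then oldest first.

    open_rows maps bank -> currently open row (hypothetical model;
    a bank missing from the map has no open row).
    """
    def priority(req):
        is_hit = open_rows.get(req.bank) == req.row
        # Tuples compare element by element and False < True,
        # so row hits sort ahead of misses, then older requests win.
        return (not is_hit, req.arrival)

    return min(queue, key=priority)


queue = [Request(0, 0, 5), Request(1, 0, 7), Request(2, 0, 7)]
picked_with_open_row = fr_fcfs_pick(queue, open_rows={0: 7})
picked_with_no_open_row = fr_fcfs_pick(queue, open_rows={})
```

With row 7 open in bank 0, the older request to row 5 is bypassed in favor of the oldest row hit; with no open row, the policy degenerates to plain FCFS.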

Review: DRAM Scheduling Policies (II)

- A scheduling policy is essentially a prioritization order
- Prioritization can be based on
  - Request age
  - Row buffer hit/miss status
  - Request type (prefetch, read, write)
  - Requestor type (load miss or store miss)
  - Request criticality
    - Oldest miss in the core?
    - How many instructions in core are dependent on it?

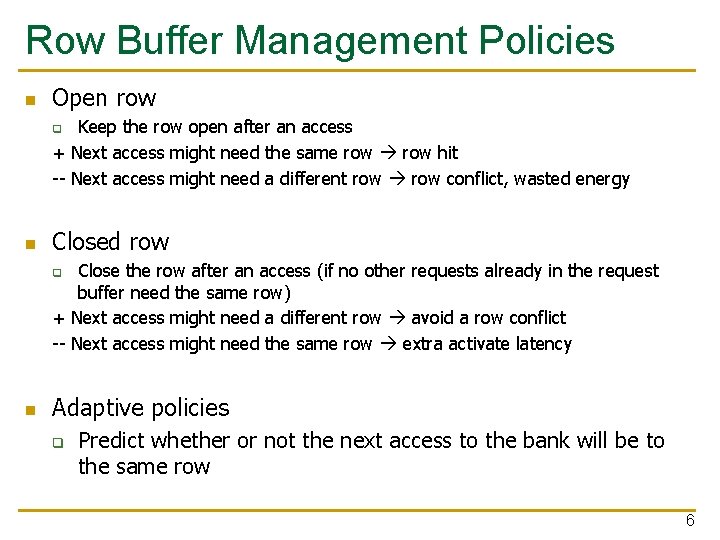

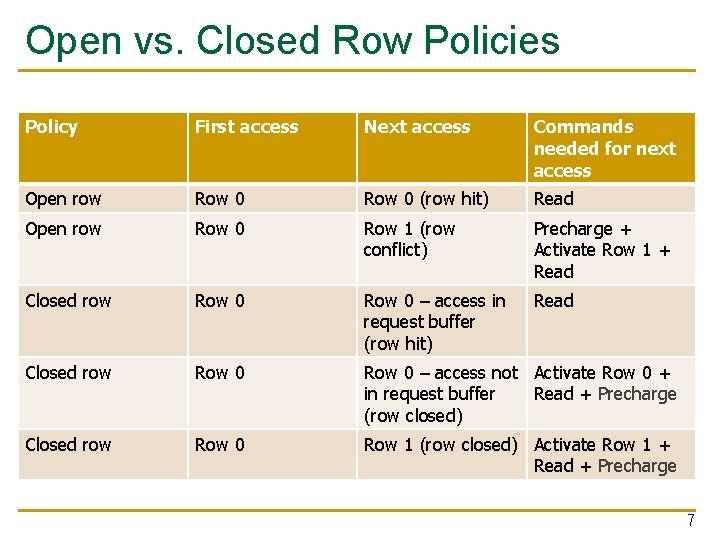

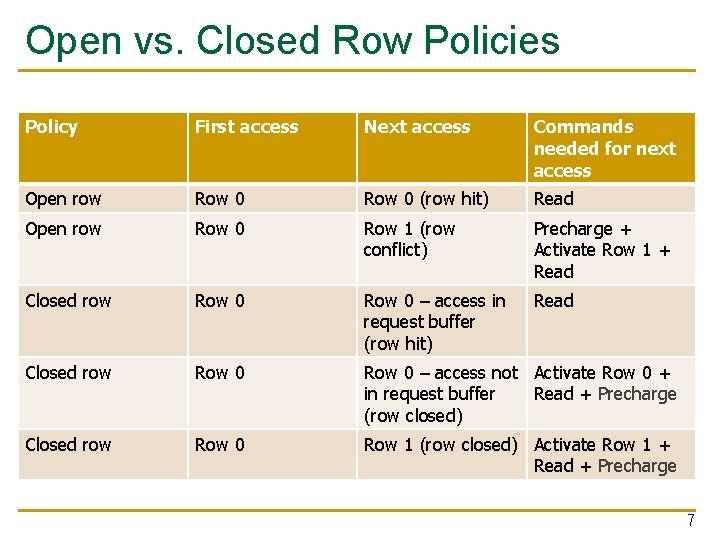

Row Buffer Management Policies

- Open row
  - Keep the row open after an access
  - (+) Next access might need the same row → row hit
  - (--) Next access might need a different row → row conflict, wasted energy
- Closed row
  - Close the row after an access (if no other requests already in the request buffer need the same row)
  - (+) Next access might need a different row → avoid a row conflict
  - (--) Next access might need the same row → extra activate latency
- Adaptive policies
  - Predict whether or not the next access to the bank will be to the same row

Open vs. Closed Row Policies

| Policy | First access | Next access | Commands needed for next access |
|---|---|---|---|
| Open row | Row 0 | Row 0 (row hit) | Read |
| Open row | Row 0 | Row 1 (row conflict) | Precharge + Activate Row 1 + Read |
| Closed row | Row 0 | Row 0, access in request buffer (row hit) | Read |
| Closed row | Row 0 | Row 0, access not in request buffer (row closed) | Activate Row 0 + Read + Precharge |
| Closed row | Row 0 | Row 1 (row closed) | Activate Row 1 + Read + Precharge |
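The five cases in the comparison above can be encoded as a small function. This is a sketch under the slide's simplified model only; the function name, the command strings, and the `next_in_buffer` flag are hypothetical names introduced here.

```python
def next_access_commands(policy, first_row, next_row, next_in_buffer=False):
    """Return the DRAM commands needed for the access after `first_row`.

    next_in_buffer: whether the next access was already waiting in the
    request buffer when the first access finished (only matters for the
    closed-row policy, which keeps the row open in that case).
    """
    if policy == "open":
        if next_row == first_row:
            return ["READ"]  # row hit: the row was left open
        # Row conflict: close the old row, then open the new one.
        return ["PRECHARGE", f"ACTIVATE {next_row}", "READ"]
    if policy == "closed":
        if next_row == first_row and next_in_buffer:
            return ["READ"]  # row was kept open for the pending request
        # Row was closed after the first access; reopen, read, close again.
        return [f"ACTIVATE {next_row}", "READ", "PRECHARGE"]
    raise ValueError(f"unknown policy: {policy}")


open_hit = next_access_commands("open", 0, 0)
open_conflict = next_access_commands("open", 0, 1)
closed_pending = next_access_commands("closed", 0, 0, next_in_buffer=True)
closed_same_row = next_access_commands("closed", 0, 0)
closed_other_row = next_access_commands("closed", 0, 1)
```

The closed-row policy never pays the precharge on the critical path of the next access, but it pays an extra activate whenever the same row is needed again.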

Memory Interference and Scheduling in Multi-Core Systems

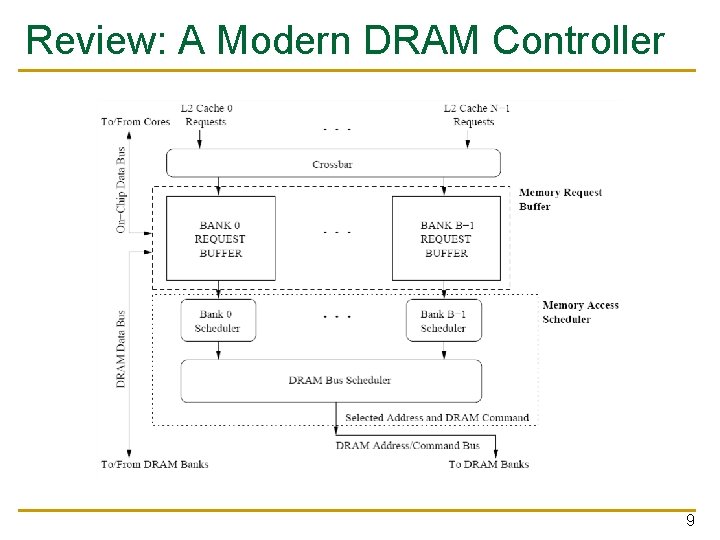

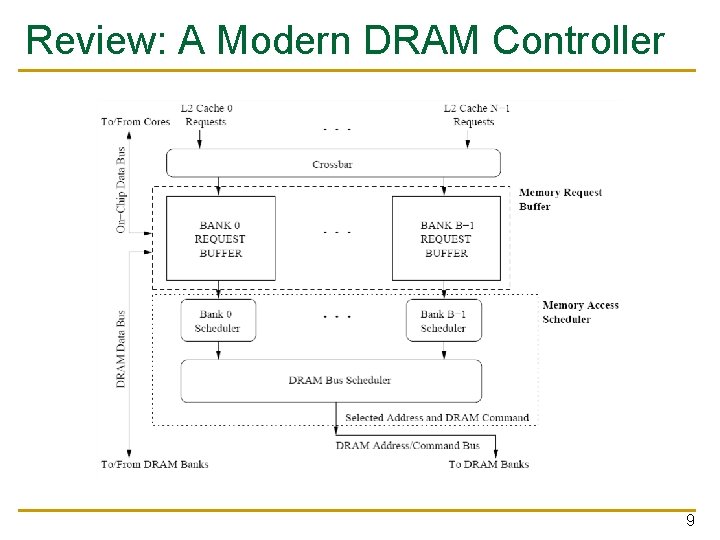

Review: A Modern DRAM Controller

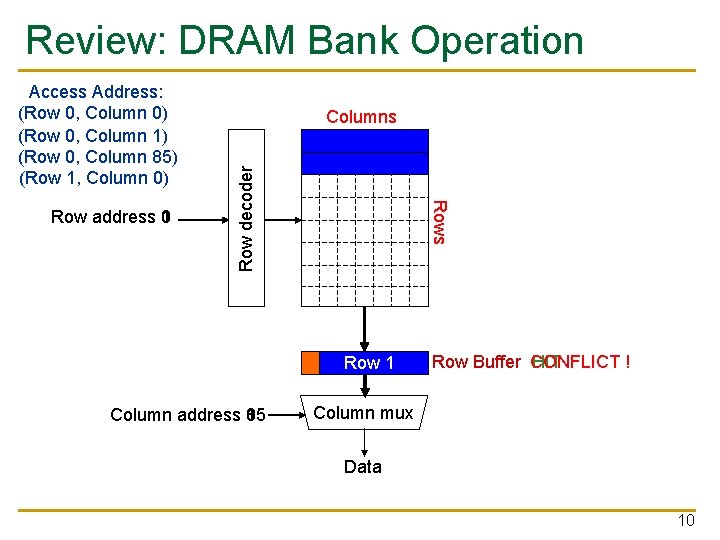

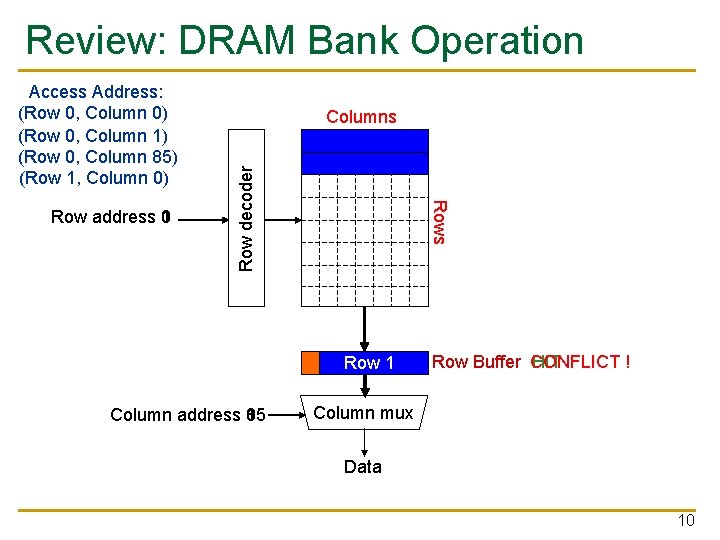

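Review: DRAM Bank Operation

[Figure: a DRAM bank with rows and columns, a row decoder driven by the row address, a row buffer, and a column mux driven by the column address. Access sequence: (Row 0, Column 0), (Row 0, Column 1), (Row 0, Column 85), (Row 1, Column 0). The row buffer starts empty and is loaded with Row 0; the following accesses to Row 0 are row HITs, and the access to Row 1 is a row CONFLICT.]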

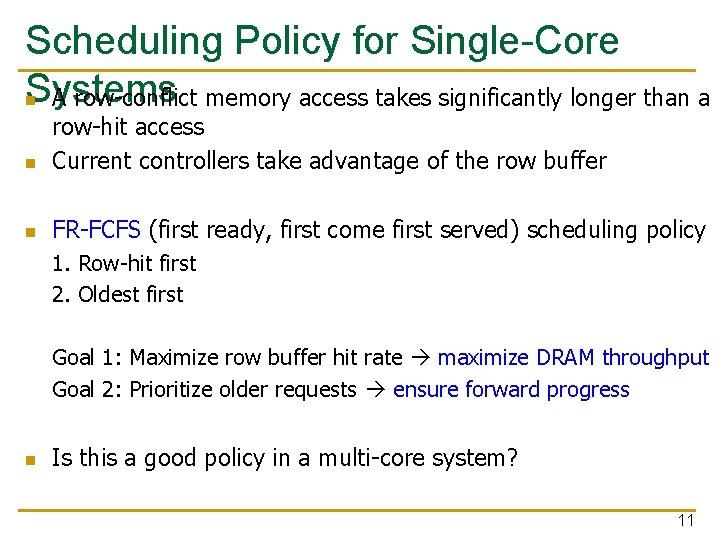

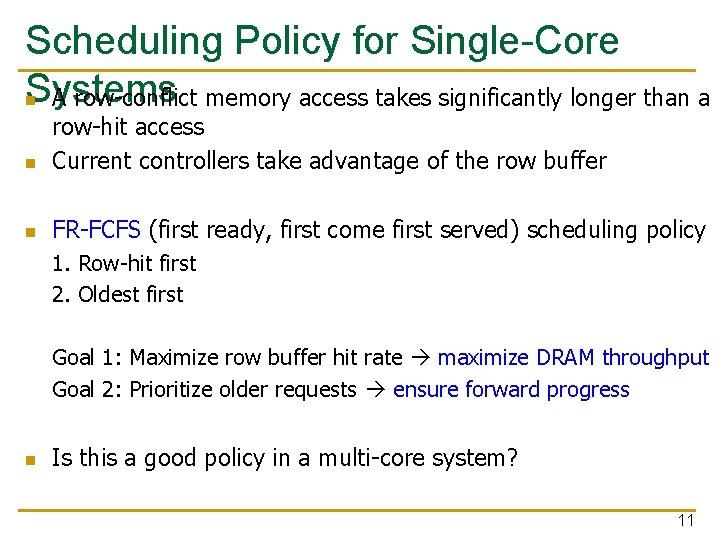

Scheduling Policy for Single-Core Systems

- A row-conflict memory access takes significantly longer than a row-hit access
- Current controllers take advantage of the row buffer
- FR-FCFS (first ready, first come first served) scheduling policy
  1. Row-hit first
  2. Oldest first
  - Goal 1: Maximize row buffer hit rate → maximize DRAM throughput
  - Goal 2: Prioritize older requests → ensure forward progress
- Is this a good policy in a multi-core system?

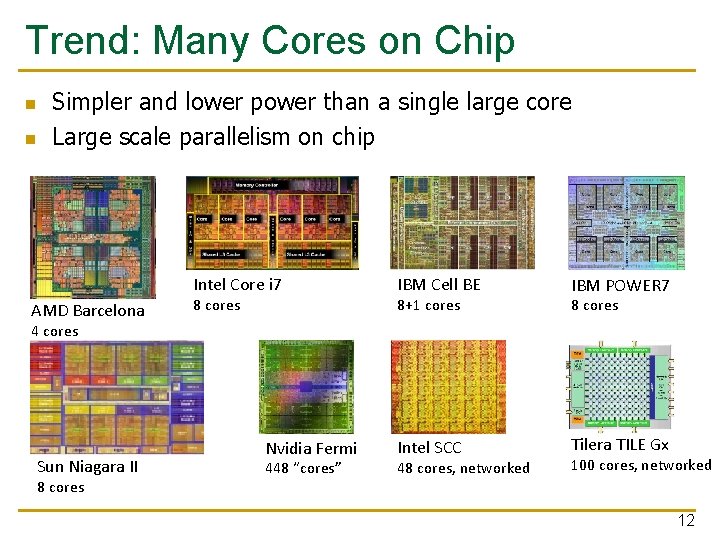

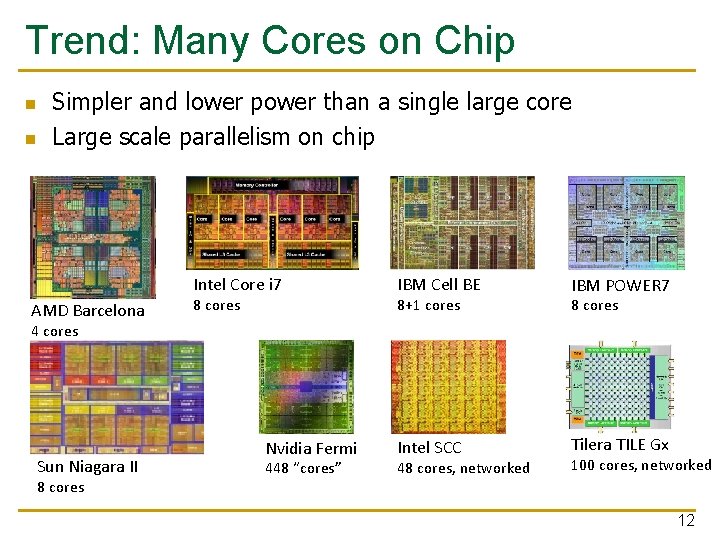

Trend: Many Cores on Chip

- Simpler and lower power than a single large core
- Large scale parallelism on chip

[Figure: die photos of many-core chips. AMD Barcelona: 4 cores; Intel Core i7: 8 cores; IBM Cell BE: 8+1 cores; IBM POWER7: 8 cores; Sun Niagara II: 8 cores; Nvidia Fermi: 448 "cores"; Intel SCC: 48 cores, networked; Tilera TILE Gx: 100 cores, networked.]

Many Cores on Chip

- What we want:
  - N times the system performance with N times the cores
- What do we get today?

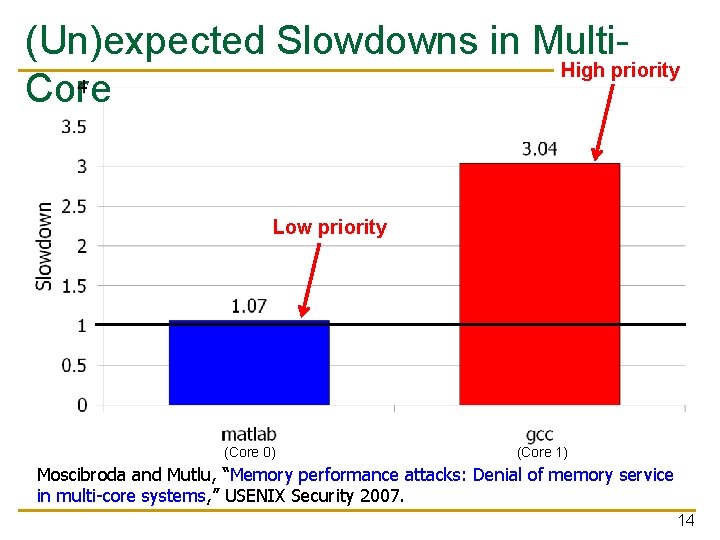

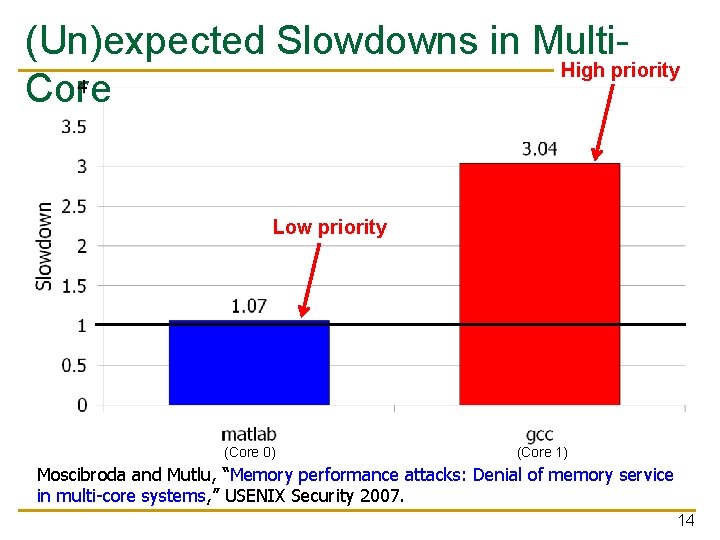

(Un)expected Slowdowns in Multi-Core

[Figure: slowdowns of a high-priority application (Core 0) and a low-priority application (Core 1) running together.]

Moscibroda and Mutlu, "Memory performance attacks: Denial of memory service in multi-core systems," USENIX Security 2007.

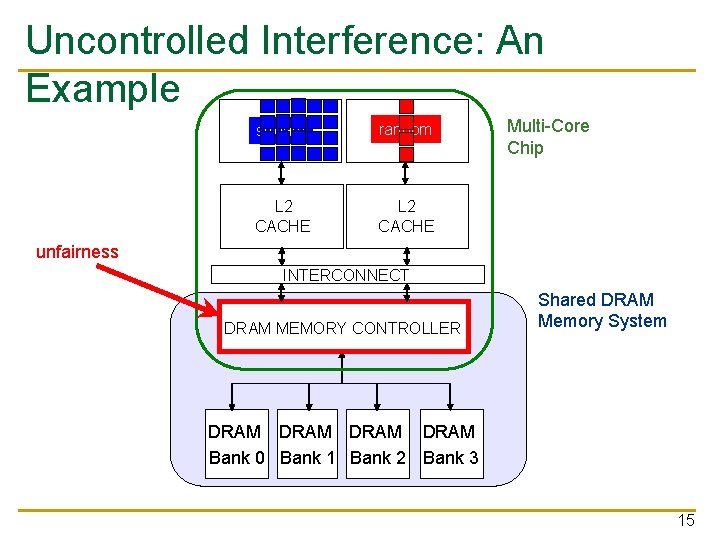

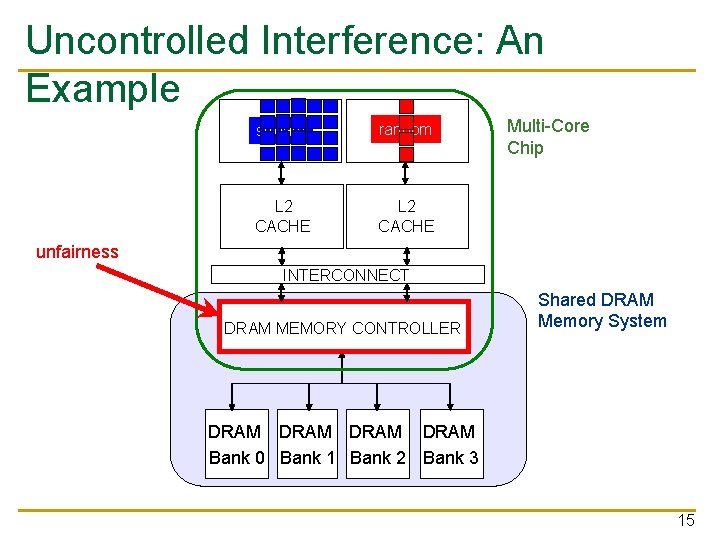

Uncontrolled Interference: An Example

[Figure: a multi-core chip with two cores, one running stream and one running random, each with an L2 cache, connected through an interconnect to the DRAM memory controller. The shared DRAM memory system (DRAM Banks 0 to 3) is where the unfairness arises.]

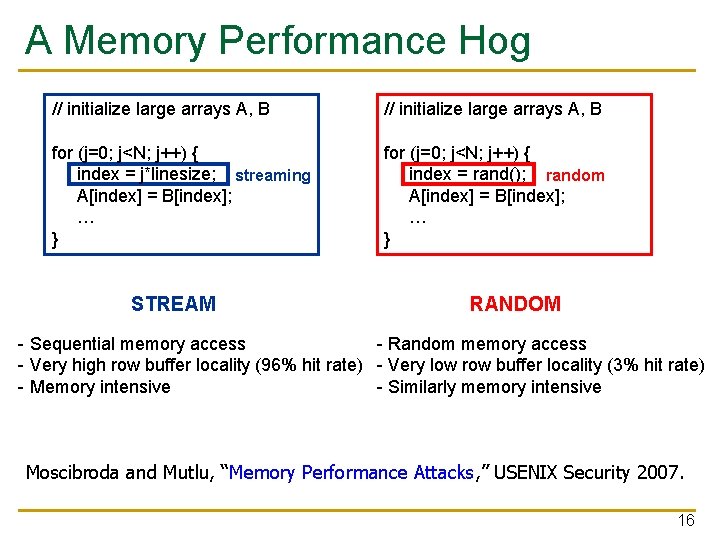

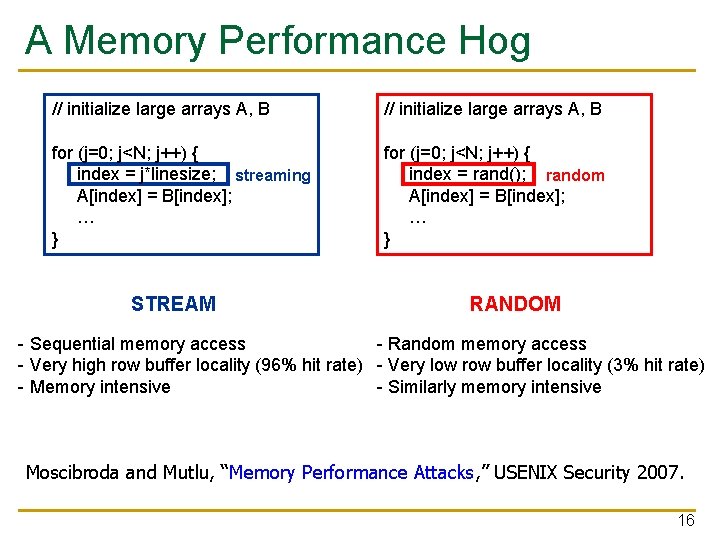

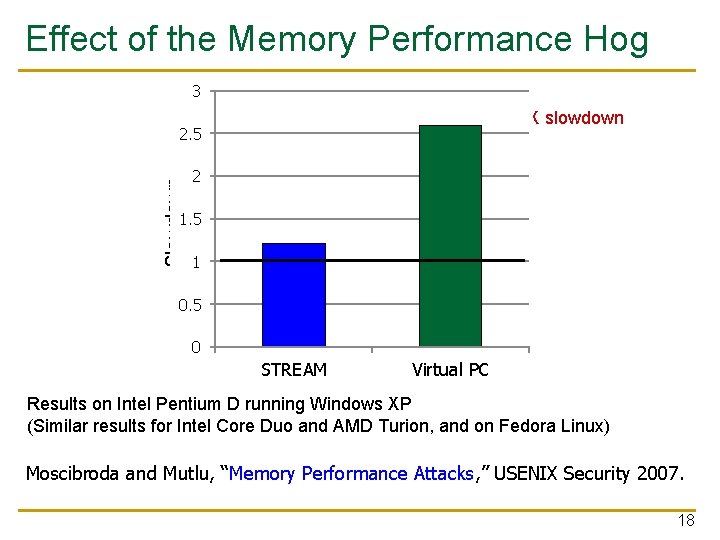

A Memory Performance Hog

STREAM (streaming):

    // initialize large arrays A, B
    for (j=0; j<N; j++) {
        index = j*linesize;
        A[index] = B[index];
        …
    }

- Sequential memory access
- Very high row buffer locality (96% hit rate)
- Memory intensive

RANDOM (random):

    // initialize large arrays A, B
    for (j=0; j<N; j++) {
        index = rand();
        A[index] = B[index];
        …
    }

- Random memory access
- Very low row buffer locality (3% hit rate)
- Similarly memory intensive

Moscibroda and Mutlu, "Memory Performance Attacks," USENIX Security 2007.

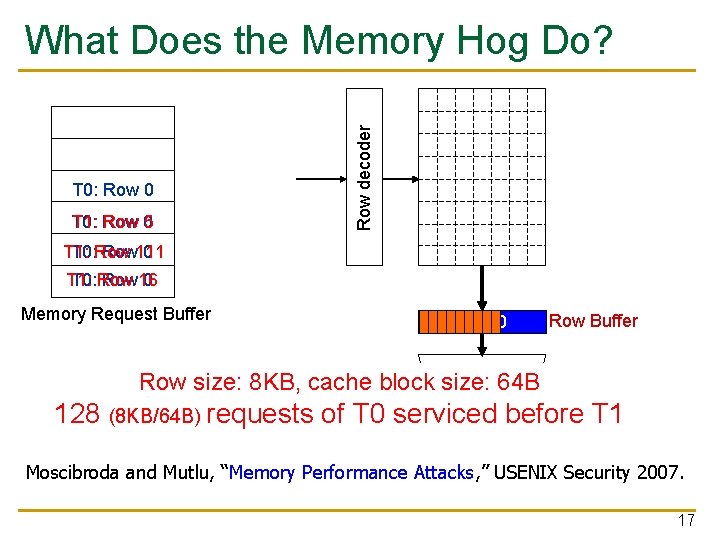

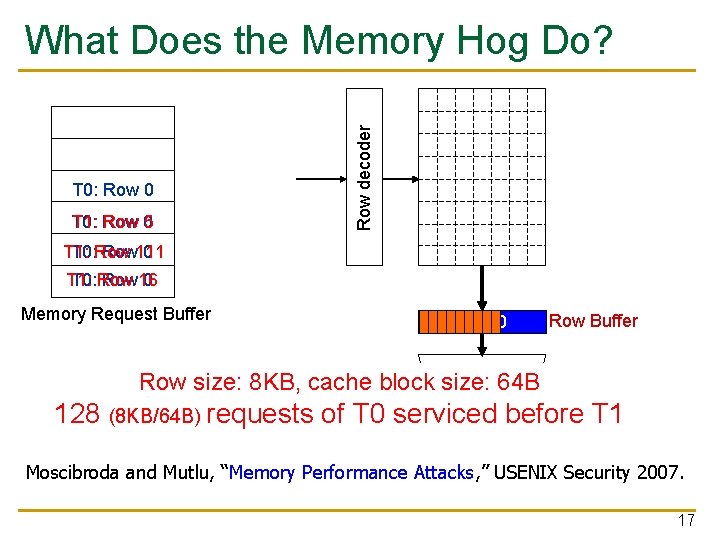

What Does the Memory Hog Do?

[Figure: a DRAM bank with Row 0 open in the row buffer. The memory request buffer holds T0 requests to Row 0 interleaved with T1 requests to Rows 5, 111, and 16.]

- T0: STREAM, T1: RANDOM
- Row size: 8 KB, cache block size: 64 B
- 128 (8 KB/64 B) requests of T0 serviced before T1

Moscibroda and Mutlu, "Memory Performance Attacks," USENIX Security 2007.
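The 128-request figure follows from the row and block sizes, and a toy single-bank FR-FCFS loop reproduces it. This is a sketch under strong assumptions: all of T0's requests to the open row are already queued, T1's single request is the oldest one, and the tuple layout `(arrival, thread, row)` is invented for illustration.

```python
ROW_SIZE = 8 * 1024   # bytes per DRAM row (from the slide)
BLOCK_SIZE = 64       # bytes per cache block (from the slide)
hits_per_row = ROW_SIZE // BLOCK_SIZE

# T1's request to Row 5 arrives first; T0 then streams through Row 0.
queue = [(0, "T1", 5)] + [(i + 1, "T0", 0) for i in range(hits_per_row)]
open_row = 0  # Row 0 is already open in the row buffer
service_order = []

while queue:
    # FR-FCFS: row hits first (False sorts before True), then oldest first.
    req = min(queue, key=lambda r: (r[2] != open_row, r[0]))
    queue.remove(req)
    open_row = req[2]
    service_order.append(req[1])

served_before_t1 = service_order.index("T1")
```

Even though T1's request is the oldest in the buffer, every row hit from T0 is serviced ahead of it.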

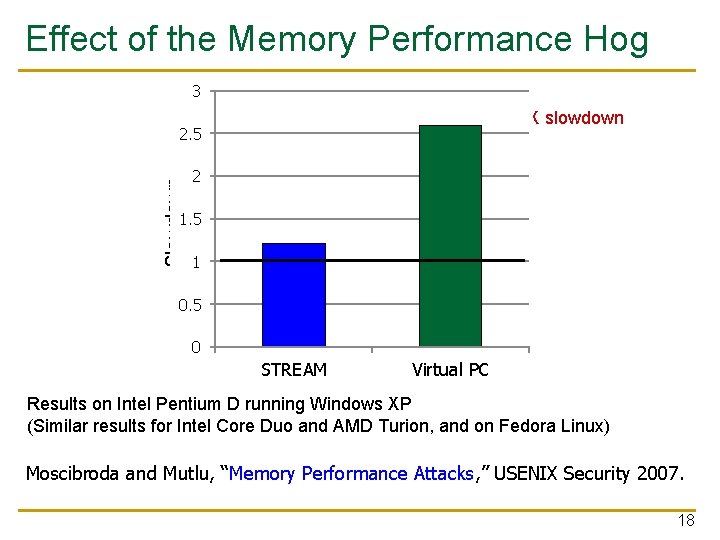

Effect of the Memory Performance Hog

[Figure: bar charts of slowdown (y-axis from 0 to 3) with annotated slowdowns of 1.18X and 2.82X; bar labels include STREAM, gcc, and Virtual PC.]

Results on Intel Pentium D running Windows XP (similar results for Intel Core Duo and AMD Turion, and on Fedora Linux).

Moscibroda and Mutlu, "Memory Performance Attacks," USENIX Security 2007.

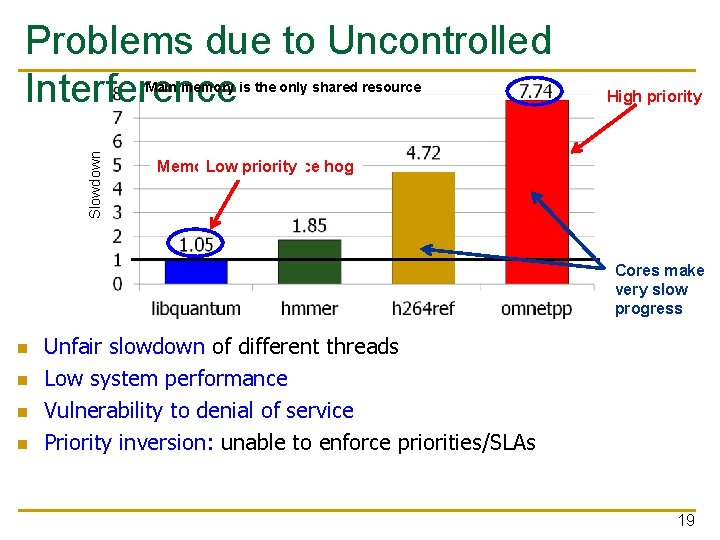

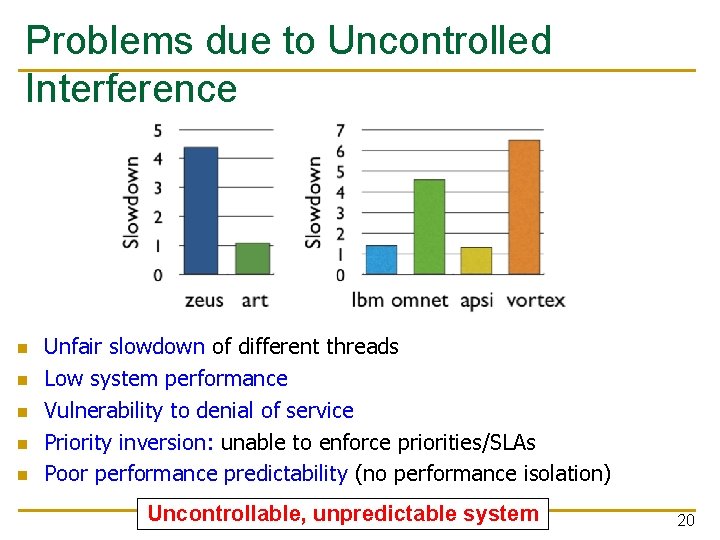

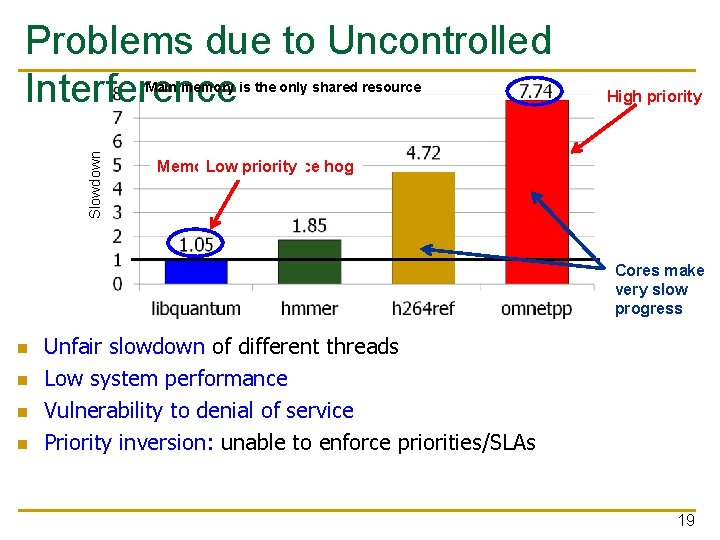

Problems due to Uncontrolled Interference

[Figure: slowdown of a high-priority application and a low-priority memory performance hog. Main memory is the only shared resource; cores make very slow progress.]

- Unfair slowdown of different threads
- Low system performance
- Vulnerability to denial of service
- Priority inversion: unable to enforce priorities/SLAs

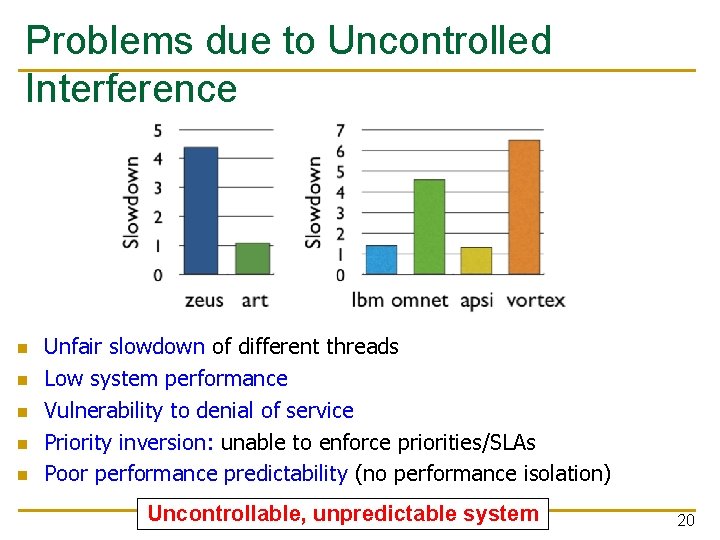

Problems due to Uncontrolled Interference

- Unfair slowdown of different threads
- Low system performance
- Vulnerability to denial of service
- Priority inversion: unable to enforce priorities/SLAs
- Poor performance predictability (no performance isolation)

Uncontrollable, unpredictable system

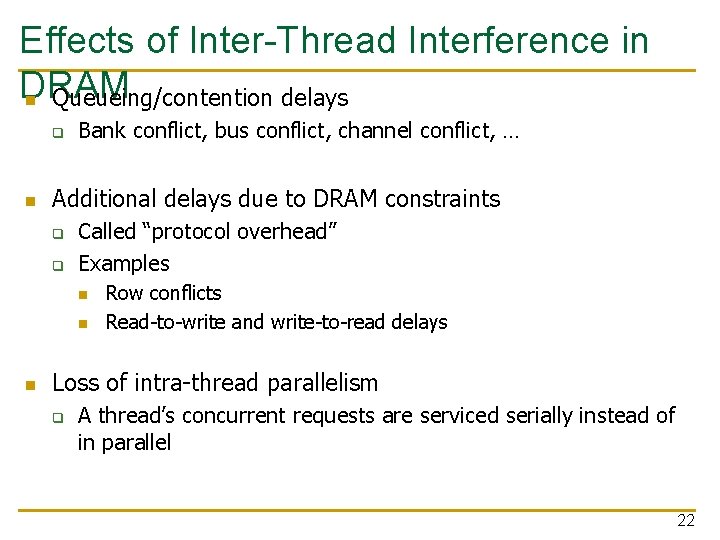

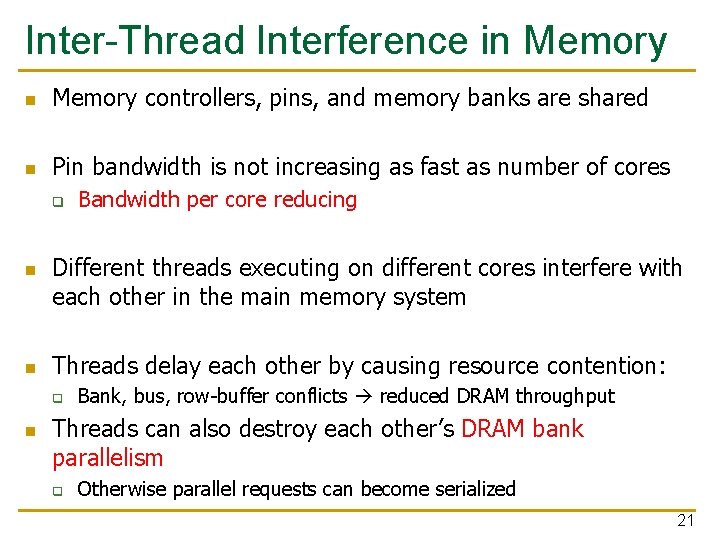

Inter-Thread Interference in Memory

- Memory controllers, pins, and memory banks are shared
- Pin bandwidth is not increasing as fast as number of cores
  - Bandwidth per core reducing
- Different threads executing on different cores interfere with each other in the main memory system
- Threads delay each other by causing resource contention:
  - Bank, bus, row-buffer conflicts → reduced DRAM throughput
- Threads can also destroy each other's DRAM bank parallelism
  - Otherwise parallel requests can become serialized

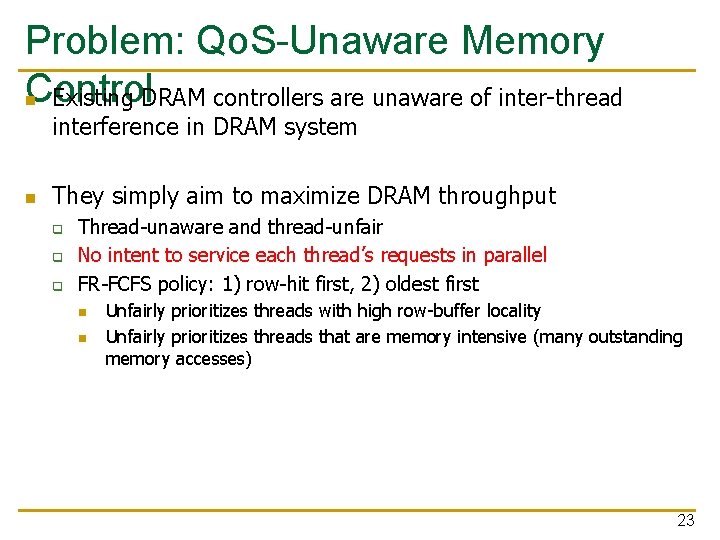

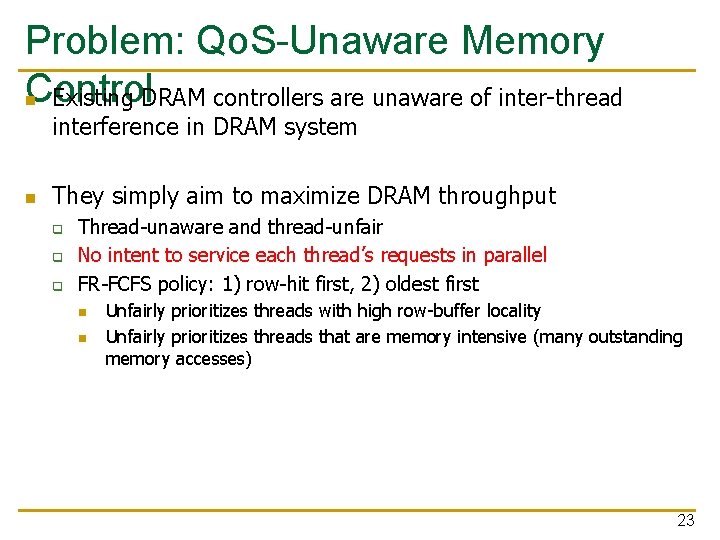

Effects of Inter-Thread Interference in DRAM

- Queueing/contention delays
  - Bank conflict, bus conflict, channel conflict, …
- Additional delays due to DRAM constraints
  - Called "protocol overhead"
  - Examples
    - Row conflicts
    - Read-to-write and write-to-read delays
- Loss of intra-thread parallelism
  - A thread's concurrent requests are serviced serially instead of in parallel

Problem: QoS-Unaware Memory Control

- Existing DRAM controllers are unaware of inter-thread interference in the DRAM system
- They simply aim to maximize DRAM throughput
  - Thread-unaware and thread-unfair
  - No intent to service each thread's requests in parallel
  - FR-FCFS policy: 1) row-hit first, 2) oldest first
    - Unfairly prioritizes threads with high row-buffer locality
    - Unfairly prioritizes threads that are memory intensive (many outstanding memory accesses)

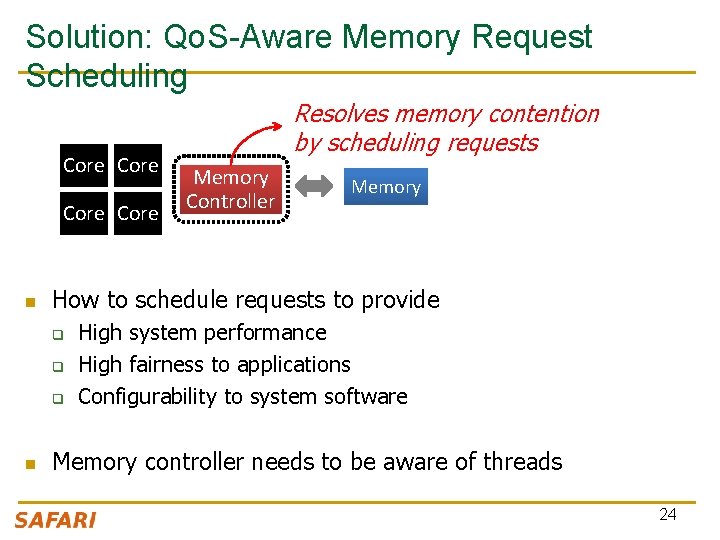

Solution: QoS-Aware Memory Request Scheduling

[Figure: cores connected to the memory controller, which connects to memory. The memory controller resolves memory contention by scheduling requests.]

- How to schedule requests to provide
  - High system performance
  - High fairness to applications
  - Configurability to system software
- Memory controller needs to be aware of threads

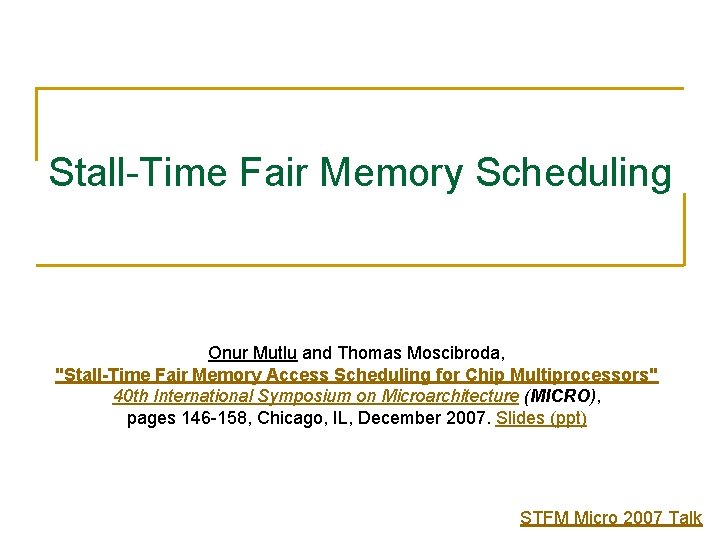

Stall-Time Fair Memory Scheduling

Onur Mutlu and Thomas Moscibroda, "Stall-Time Fair Memory Access Scheduling for Chip Multiprocessors," 40th International Symposium on Microarchitecture (MICRO), pages 146-158, Chicago, IL, December 2007.

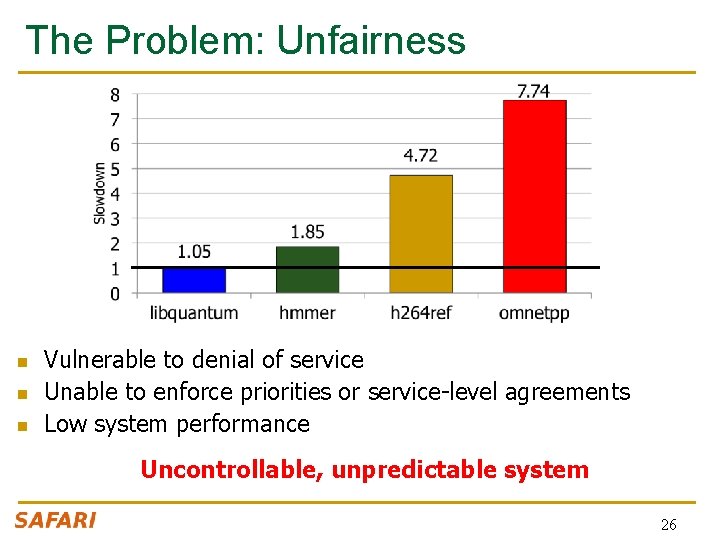

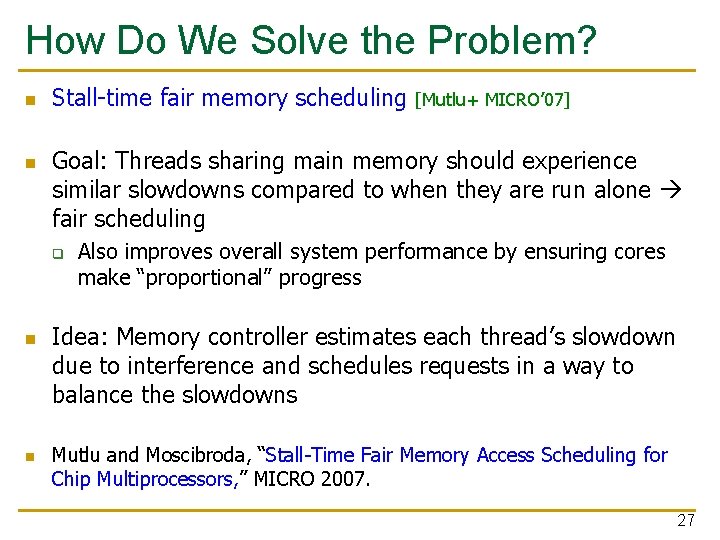

The Problem: Unfairness

- Vulnerable to denial of service
- Unable to enforce priorities or service-level agreements
- Low system performance

Uncontrollable, unpredictable system

How Do We Solve the Problem?

- Stall-time fair memory scheduling [Mutlu+ MICRO'07]
- Goal: Threads sharing main memory should experience similar slowdowns compared to when they are run alone → fair scheduling
  - Also improves overall system performance by ensuring cores make "proportional" progress
- Idea: Memory controller estimates each thread's slowdown due to interference and schedules requests in a way to balance the slowdowns

Mutlu and Moscibroda, "Stall-Time Fair Memory Access Scheduling for Chip Multiprocessors," MICRO 2007.

Stall-Time Fairness in Shared DRAM Systems

- A DRAM system is fair if it equalizes the slowdown of equal-priority threads relative to when each thread is run alone on the same system
- DRAM-related stall-time: The time a thread spends waiting for DRAM memory
- STshared: DRAM-related stall-time when the thread runs with other threads
- STalone: DRAM-related stall-time when the thread runs alone
- Memory-slowdown = STshared/STalone
  - Relative increase in stall-time
- Stall-Time Fair Memory scheduler (STFM) aims to equalize Memory-slowdown for interfering threads, without sacrificing performance
  - Considers inherent DRAM performance of each thread
  - Aims to allow proportional progress of threads

![STFM Scheduling Algorithm (MICRO'07)](https://slidetodoc.com/presentation_image/4c09122f51e5554bf31ac066738900e7/image-29.jpg)

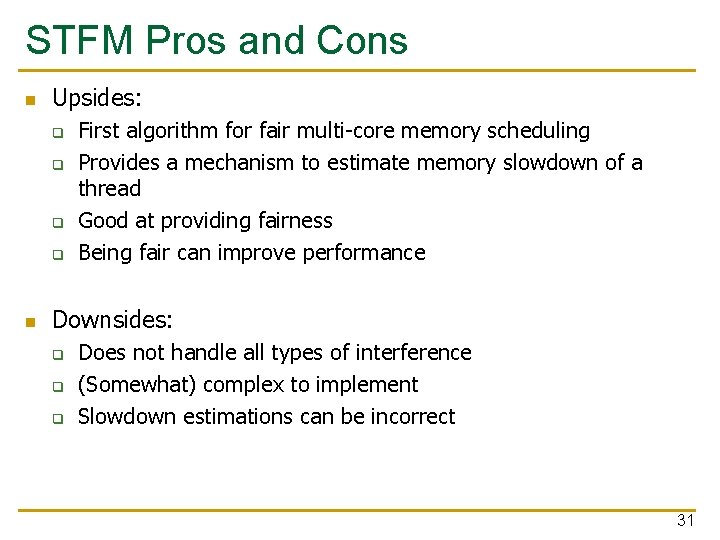

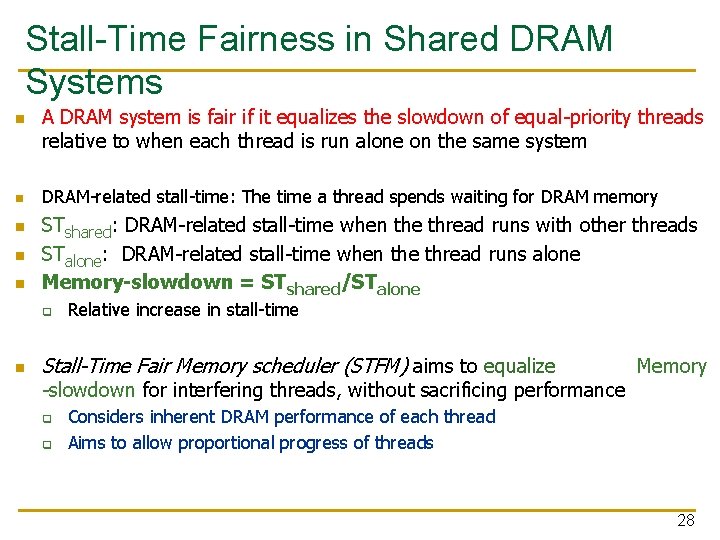

STFM Scheduling Algorithm [MICRO'07]

- For each thread, the DRAM controller
  - Tracks STshared
  - Estimates STalone
- Each cycle, the DRAM controller
  - Computes Slowdown = STshared/STalone for threads with legal requests
  - Computes unfairness = MAX Slowdown / MIN Slowdown
- If unfairness < α
  - Use DRAM throughput oriented scheduling policy
- If unfairness ≥ α
  - Use fairness-oriented scheduling policy
    - (1) requests from thread with MAX Slowdown first
    - (2) row-hit first, (3) oldest-first
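The per-cycle decision can be sketched as follows. This is a simplified illustration, not the hardware mechanism: the stall-time values are supplied directly rather than tracked and estimated, the threshold default of 1.10 is an assumed value, and the `Req` type, `stfm_pick`, and `open_rows` names are hypothetical.

```python
from typing import NamedTuple


class Req(NamedTuple):
    thread: str
    bank: int
    row: int
    arrival: int


def stfm_pick(requests, st_shared, st_alone, open_rows, threshold=1.10):
    """Return (chosen request, unfairness) for one scheduling decision."""
    threads = {r.thread for r in requests}
    slowdown = {t: st_shared[t] / st_alone[t] for t in threads}
    unfairness = max(slowdown.values()) / min(slowdown.values())

    def row_hit(r):
        return open_rows.get(r.bank) == r.row

    if unfairness < threshold:
        # Throughput-oriented policy (FR-FCFS): row hit first, oldest first.
        def key(r):
            return (not row_hit(r), r.arrival)
    else:
        # Fairness-oriented policy: most slowed-down thread first,
        # then row hit first, then oldest first.
        slowest = max(slowdown, key=slowdown.get)

        def key(r):
            return (r.thread != slowest, not row_hit(r), r.arrival)

    return min(requests, key=key), unfairness


requests = [Req("T0", 0, 0, 1), Req("T1", 0, 5, 0)]
open_rows = {0: 0}  # T0's row is open, so T0's request is a row hit

fair_pick, fair_u = stfm_pick(
    requests, {"T0": 110, "T1": 105}, {"T0": 100, "T1": 100}, open_rows)
unfair_pick, unfair_u = stfm_pick(
    requests, {"T0": 110, "T1": 300}, {"T0": 100, "T1": 100}, open_rows)
```

When the slowdowns are close, the row hit from T0 wins as under FR-FCFS; once T1's slowdown pushes unfairness past the threshold, T1's row-conflict request is serviced first.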

How Does STFM Prevent Unfairness?

[Figure: animation of STFM servicing a request buffer that holds T0 requests to Row 0 and T1 requests to Rows 5, 111, and 16. The T0 slowdown, T1 slowdown, and unfairness values (all between 1.00 and 1.14) are updated as each request is serviced.]

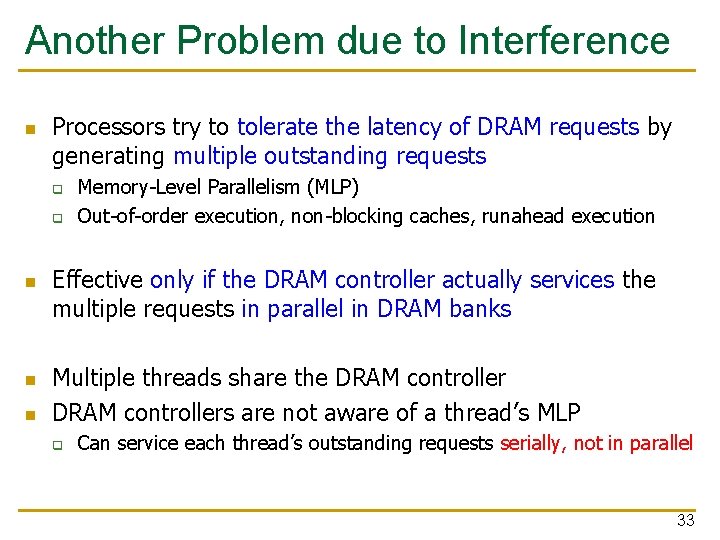

STFM Pros and Cons

- Upsides:
  - First algorithm for fair multi-core memory scheduling
  - Provides a mechanism to estimate memory slowdown of a thread
  - Good at providing fairness
  - Being fair can improve performance
- Downsides:
  - Does not handle all types of interference
  - (Somewhat) complex to implement
  - Slowdown estimations can be incorrect

Parallelism-Aware Batch Scheduling

Onur Mutlu and Thomas Moscibroda, "Parallelism-Aware Batch Scheduling: Enhancing both Performance and Fairness of Shared DRAM Systems," 35th International Symposium on Computer Architecture (ISCA), pages 63-74, Beijing, China, June 2008.

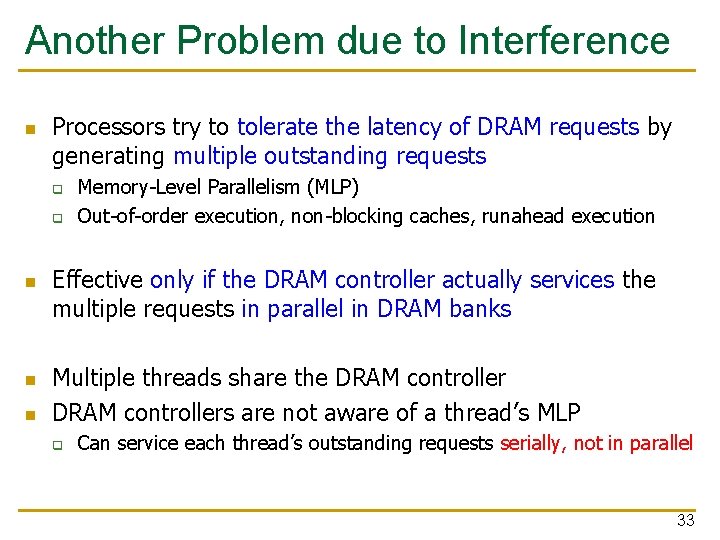

Another Problem due to Interference

- Processors try to tolerate the latency of DRAM requests by generating multiple outstanding requests
  - Memory-Level Parallelism (MLP)
  - Out-of-order execution, non-blocking caches, runahead execution
- Effective only if the DRAM controller actually services the multiple requests in parallel in DRAM banks
- Multiple threads share the DRAM controller
- DRAM controllers are not aware of a thread's MLP
  - Can service each thread's outstanding requests serially, not in parallel

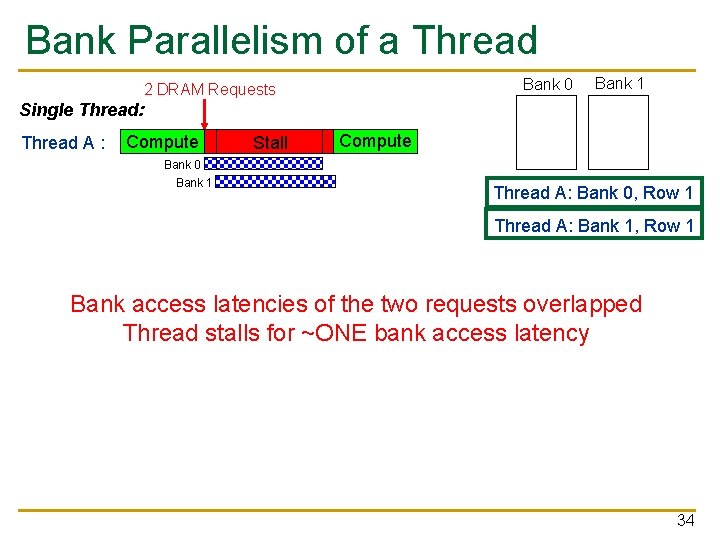

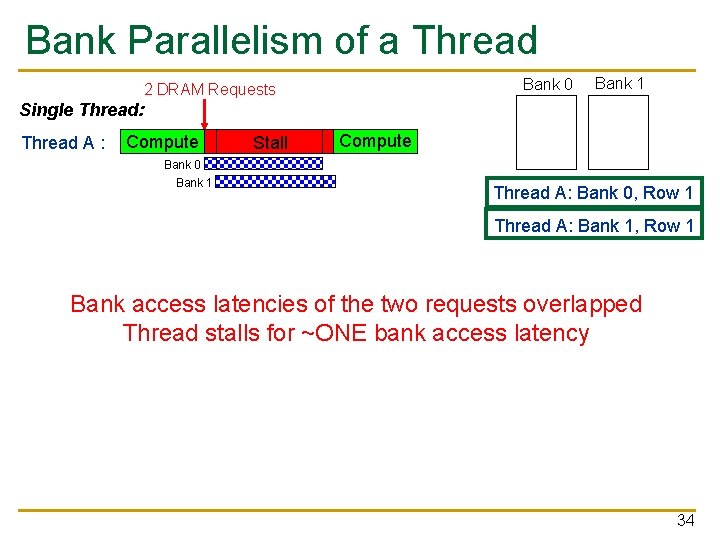

Bank Parallelism of a Thread

[Figure: single thread. Thread A computes, issues 2 DRAM requests (Bank 0, Row 1 and Bank 1, Row 1), stalls while Bank 0 and Bank 1 service them concurrently, then resumes computing.]

- Bank access latencies of the two requests overlapped
- Thread stalls for ~ONE bank access latency

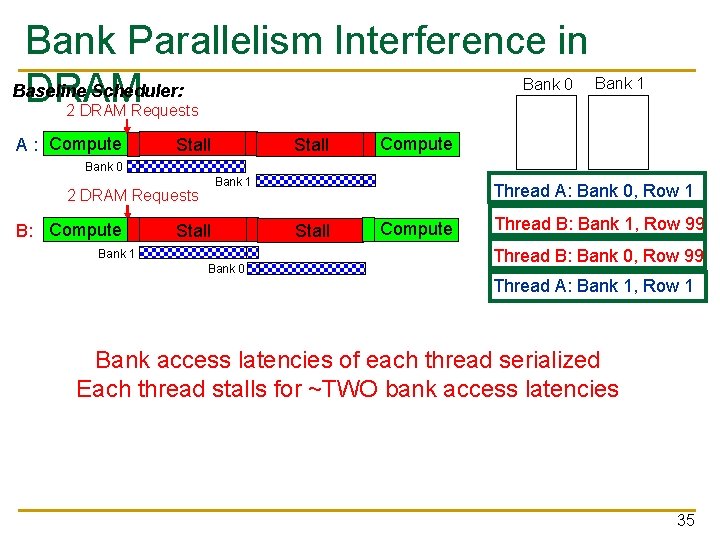

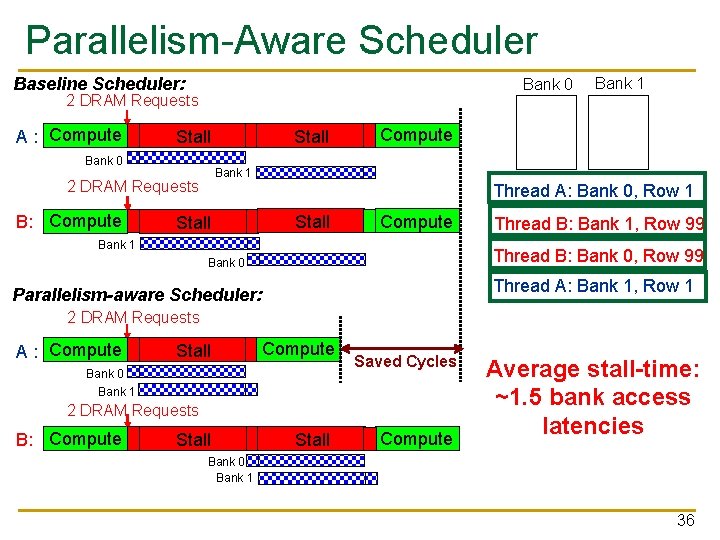

Bank Parallelism Interference in DRAM

[Figure: baseline scheduler. Threads A and B each compute, issue 2 DRAM requests, and stall. Bank 0 services Thread A (Row 1) and then Thread B (Row 99); Bank 1 services Thread B (Row 99) and then Thread A (Row 1).]

- Bank access latencies of each thread serialized
- Each thread stalls for ~TWO bank access latencies

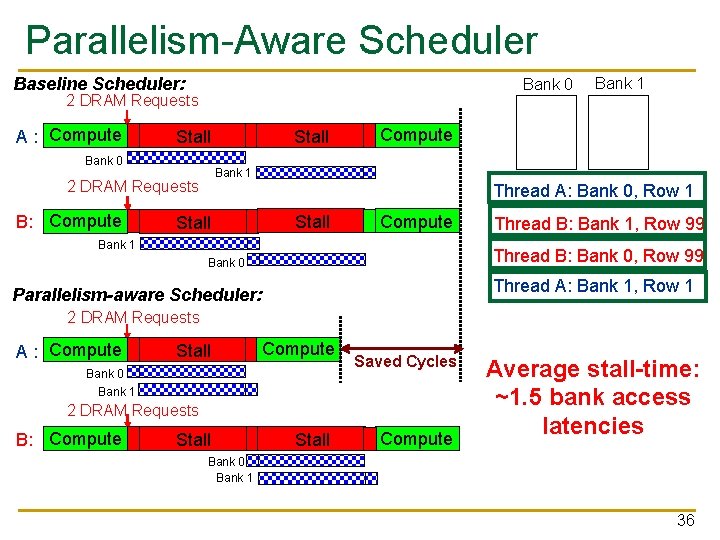

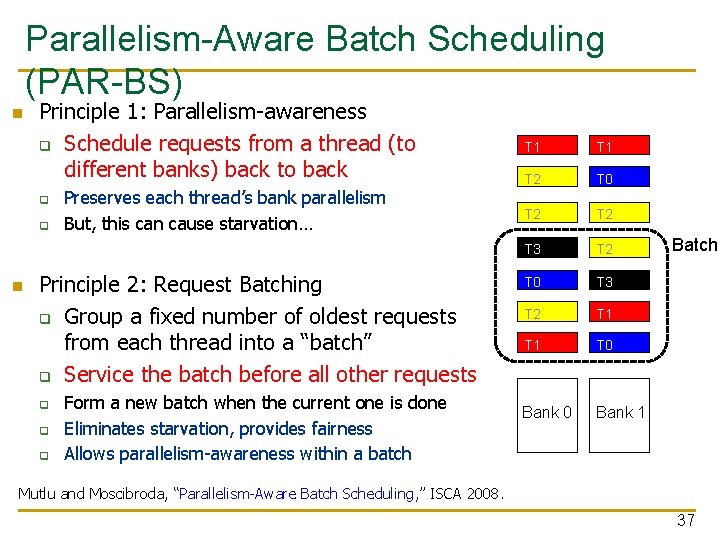

Parallelism-Aware Scheduler

[Figure: the same two threads under both schedulers. Baseline scheduler: Bank 0 services Thread A (Row 1) then Thread B (Row 99), while Bank 1 services Thread B (Row 99) then Thread A (Row 1), so both threads stall for two bank accesses. Parallelism-aware scheduler: both banks service Thread A's requests first and Thread B's second, so Thread A resumes computing earlier (saved cycles).]

- Average stall-time: ~1.5 bank access latencies
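The ~2 versus ~1.5 comparison can be checked with a small model. This sketch assumes every access takes exactly one bank access latency, banks operate fully in parallel, and a thread stalls until its last outstanding request completes; the `stall_times` helper is a hypothetical name.

```python
def stall_times(bank_orders):
    """Map each thread to its stall time, in bank access latencies.

    bank_orders: {bank: [thread, ...]} giving the service order at
    each bank. The request in slot k of a bank finishes at time k.
    """
    finish = {}
    for order in bank_orders.values():
        for slot, thread in enumerate(order, start=1):
            # A thread resumes only when its latest request is done.
            finish[thread] = max(finish.get(thread, 0), slot)
    return finish


# Baseline: the two banks service the threads in opposite orders.
baseline = stall_times({0: ["A", "B"], 1: ["B", "A"]})
# Parallelism-aware: both banks service thread A first.
aware = stall_times({0: ["A", "B"], 1: ["A", "B"]})

baseline_avg = sum(baseline.values()) / len(baseline)
aware_avg = sum(aware.values()) / len(aware)
```

Thread B is no worse off than in the baseline, while Thread A's two accesses now overlap, which is where the saved cycles come from.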

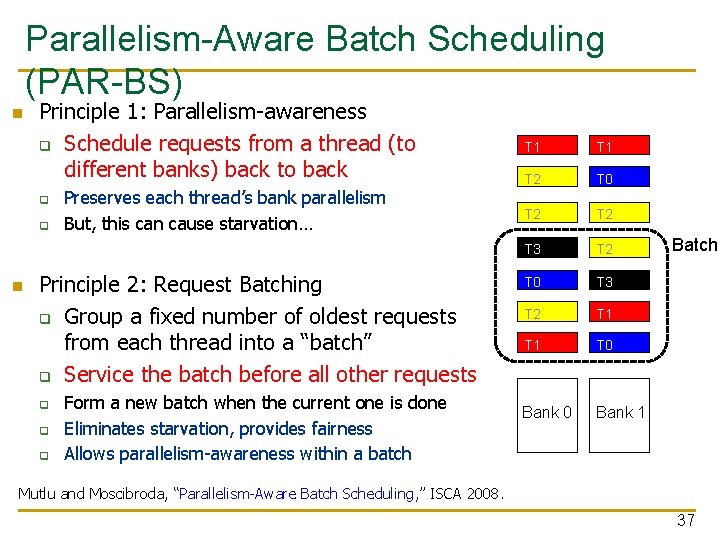

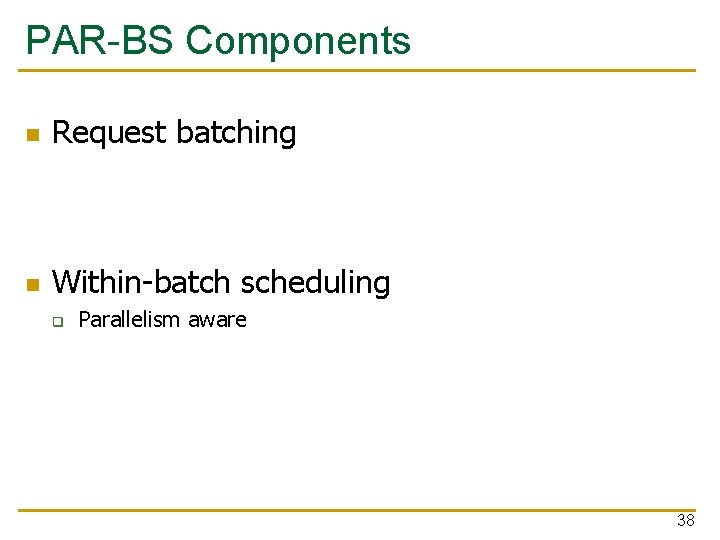

Parallelism-Aware Batch Scheduling (PAR-BS)

- Principle 1: Parallelism-awareness
  - Schedule requests from a thread (to different banks) back to back
  - Preserves each thread's bank parallelism
  - But, this can cause starvation…
- Principle 2: Request Batching
  - Group a fixed number of oldest requests from each thread into a "batch"
  - Service the batch before all other requests
  - Form a new batch when the current one is done
  - Eliminates starvation, provides fairness
  - Allows parallelism-awareness within a batch

[Figure: per-bank request queues holding requests from threads T0 to T3, with a group of requests marked as the batch.]

Mutlu and Moscibroda, "Parallelism-Aware Batch Scheduling," ISCA 2008.

PAR-BS Components

- Request batching
- Within-batch scheduling
  - Parallelism aware

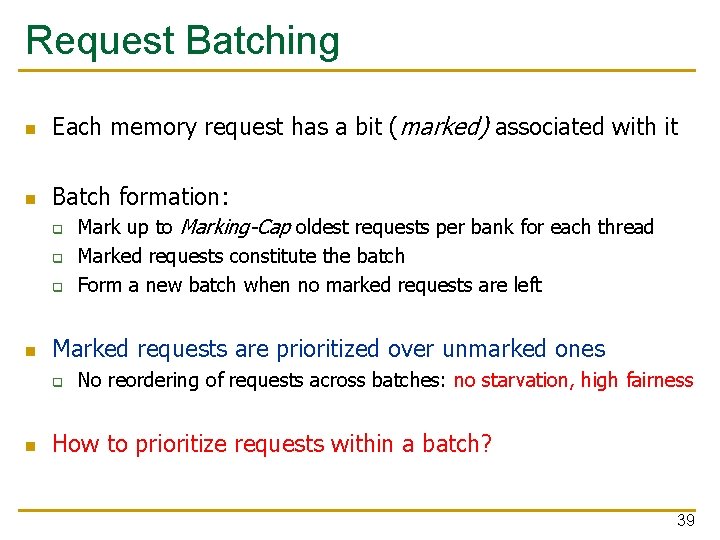

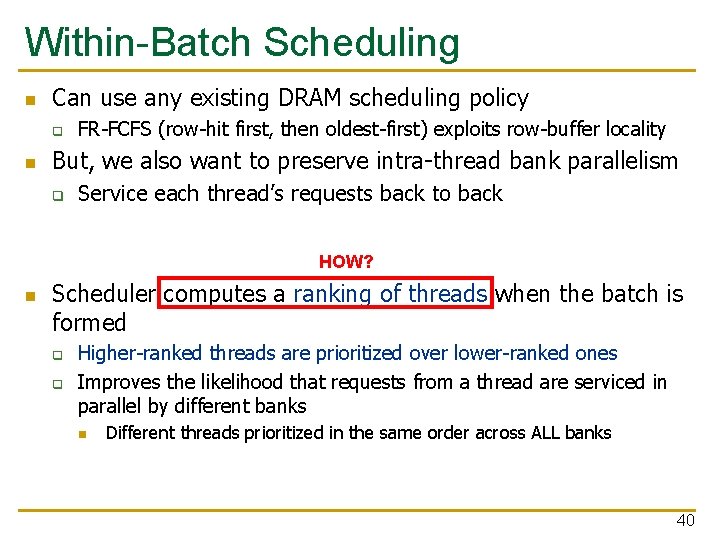

Request Batching

- Each memory request has a bit (marked) associated with it
- Batch formation:
  - Mark up to Marking-Cap oldest requests per bank for each thread
  - Marked requests constitute the batch
  - Form a new batch when no marked requests are left
- Marked requests are prioritized over unmarked ones
  - No reordering of requests across batches: no starvation, high fairness
- How to prioritize requests within a batch?
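Batch formation can be sketched as a single pass over the request buffer in age order. This is an illustrative software model, not the hardware implementation; the tuple layout `(arrival, thread, bank)` and the `form_batch` name are assumptions.

```python
from collections import defaultdict


def form_batch(requests, marking_cap):
    """Return the marked requests: for each (thread, bank) pair,
    up to `marking_cap` of the oldest outstanding requests.

    requests: iterable of (arrival, thread, bank) tuples.
    """
    marked_count = defaultdict(int)
    batch = []
    for req in sorted(requests):  # tuples sort by arrival time first
        _, thread, bank = req
        if marked_count[(thread, bank)] < marking_cap:
            marked_count[(thread, bank)] += 1
            batch.append(req)
    return batch


buffer = [
    (0, "T0", 0), (1, "T0", 0), (2, "T0", 0),  # T0 floods bank 0
    (3, "T1", 0),                              # T1's lone request
    (4, "T0", 1),
]
batch = form_batch(buffer, marking_cap=2)
unmarked = [r for r in buffer if r not in batch]
```

With a cap of 2, T0's third request to bank 0 is left for the next batch, so T1's request cannot be starved by an arbitrarily long run of T0 requests.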

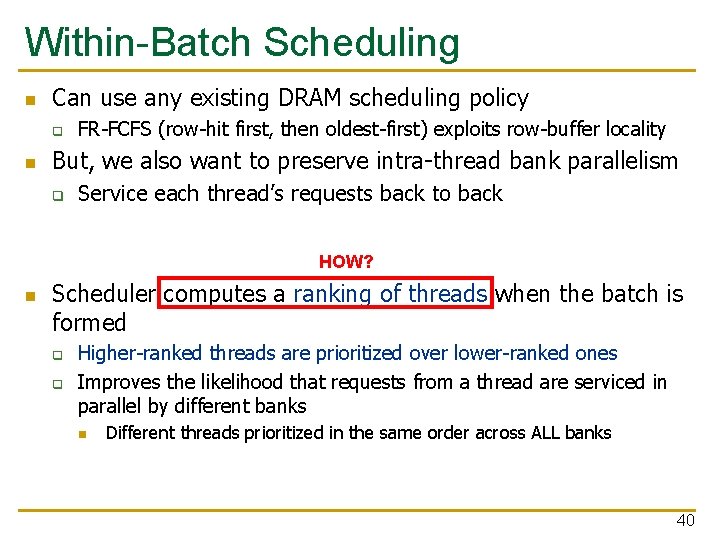

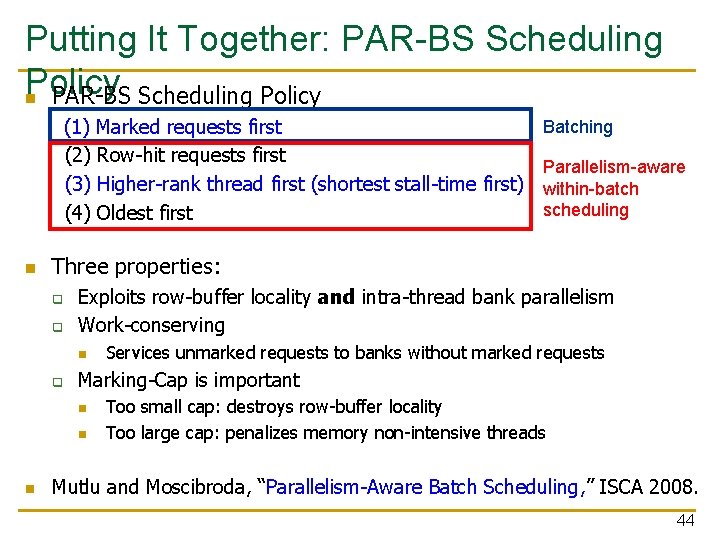

Within-Batch Scheduling

- Can use any existing DRAM scheduling policy
  - FR-FCFS (row-hit first, then oldest-first) exploits row-buffer locality
- But, we also want to preserve intra-thread bank parallelism
  - Service each thread's requests back to back
- HOW? Scheduler computes a ranking of threads when the batch is formed
  - Higher-ranked threads are prioritized over lower-ranked ones
  - Improves the likelihood that requests from a thread are serviced in parallel by different banks
    - Different threads prioritized in the same order across ALL banks

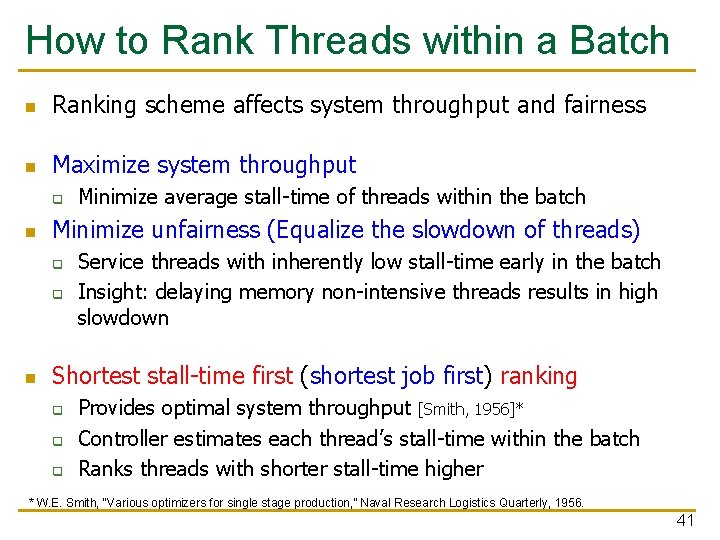

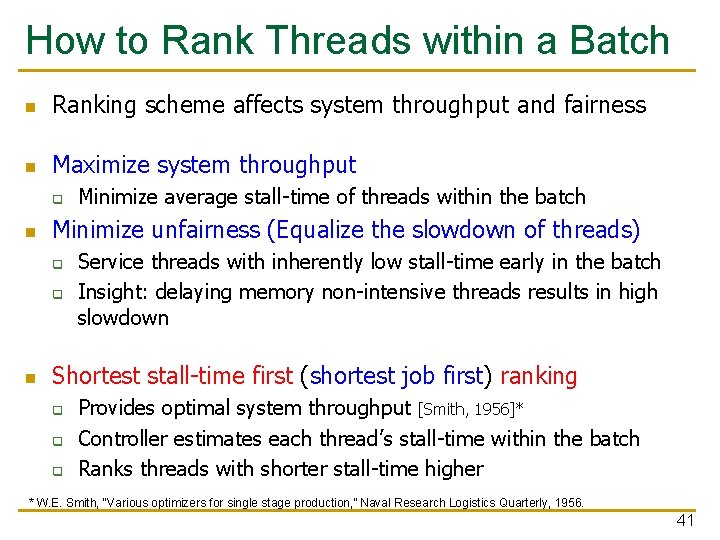

How to Rank Threads within a Batch

- Ranking scheme affects system throughput and fairness
- Maximize system throughput
  - Minimize average stall-time of threads within the batch
- Minimize unfairness (equalize the slowdown of threads)
  - Service threads with inherently low stall-time early in the batch
  - Insight: delaying memory non-intensive threads results in high slowdown
- Shortest stall-time first (shortest job first) ranking
  - Provides optimal system throughput [Smith, 1956]*
  - Controller estimates each thread's stall-time within the batch
  - Ranks threads with shorter stall-time higher

* W. E. Smith, "Various optimizers for single stage production," Naval Research Logistics Quarterly, 1956.

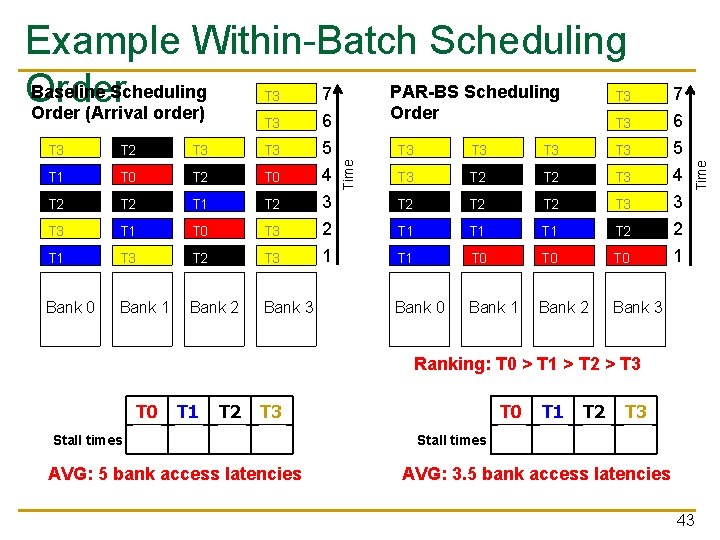

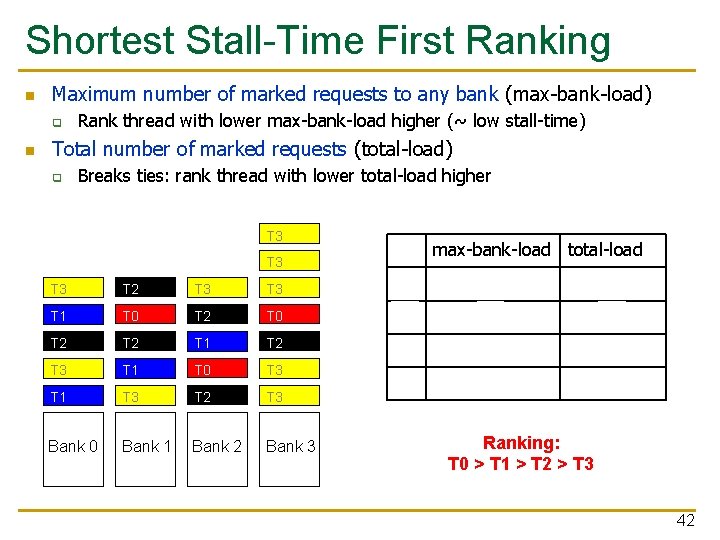

Shortest Stall-Time First Ranking

- Maximum number of marked requests to any bank (max-bank-load)
  - Rank thread with lower max-bank-load higher (~ low stall-time)
- Total number of marked requests (total-load)
  - Breaks ties: rank thread with lower total-load higher

[Figure: marked requests from threads T0 to T3 queued at Banks 0 to 3.]

| Thread | max-bank-load | total-load |
|---|---|---|
| T0 | 1 | 3 |
| T1 | 2 | 4 |
| T2 | 2 | 6 |
| T3 | 5 | 9 |

Ranking: T0 > T1 > T2 > T3
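The ranking rule reduces to sorting threads by the pair (max-bank-load, total-load). In the sketch below, the per-bank request counts are hypothetical values chosen only so that they reproduce the max-bank-load and total-load numbers on the slide; the actual queue contents in the figure are not recoverable here.

```python
def rank_threads(marked_per_bank):
    """Return threads from highest rank to lowest.

    marked_per_bank: {thread: {bank: number of marked requests}}.
    Lower max-bank-load ranks higher; total-load breaks ties.
    """
    def load(thread):
        counts = marked_per_bank[thread].values()
        return (max(counts), sum(counts))

    return sorted(marked_per_bank, key=load)


# Hypothetical per-bank counts consistent with the slide's load table.
marked_per_bank = {
    "T3": {0: 1, 1: 5, 2: 1, 3: 2},  # max-bank-load 5, total-load 9
    "T2": {0: 1, 1: 2, 2: 2, 3: 1},  # max-bank-load 2, total-load 6
    "T1": {0: 2, 1: 1, 3: 1},        # max-bank-load 2, total-load 4
    "T0": {0: 1, 1: 1, 2: 1},        # max-bank-load 1, total-load 3
}
ranking = rank_threads(marked_per_bank)
```

T1 and T2 tie on max-bank-load, so the lower total-load of T1 places it above T2.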

Example Within-Batch Scheduling Order

[Figure: service order over time (in bank access latencies) at Banks 0 to 3 under baseline scheduling (arrival order) and under PAR-BS scheduling with ranking T0 > T1 > T2 > T3.]

Stall times, in bank access latencies:

| Scheduling | T0 | T1 | T2 | T3 | AVG |
|---|---|---|---|---|---|
| Baseline (arrival order) | 4 | 4 | 5 | 7 | 5 |
| PAR-BS | 1 | 2 | 4 | 7 | 3.5 |

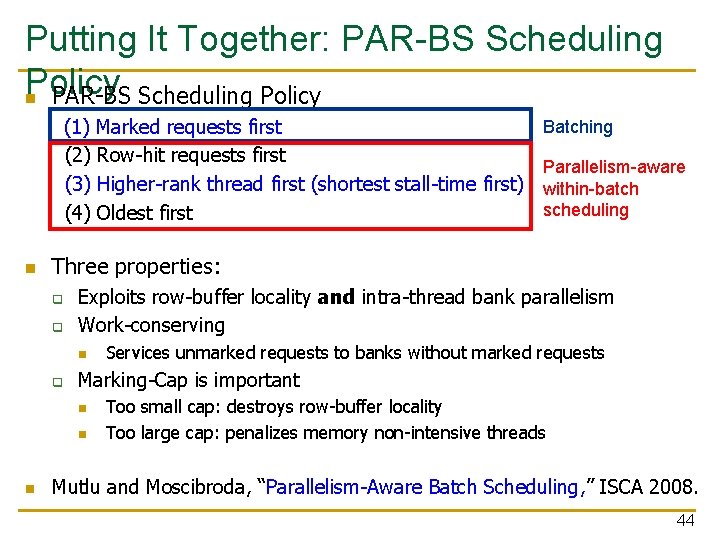

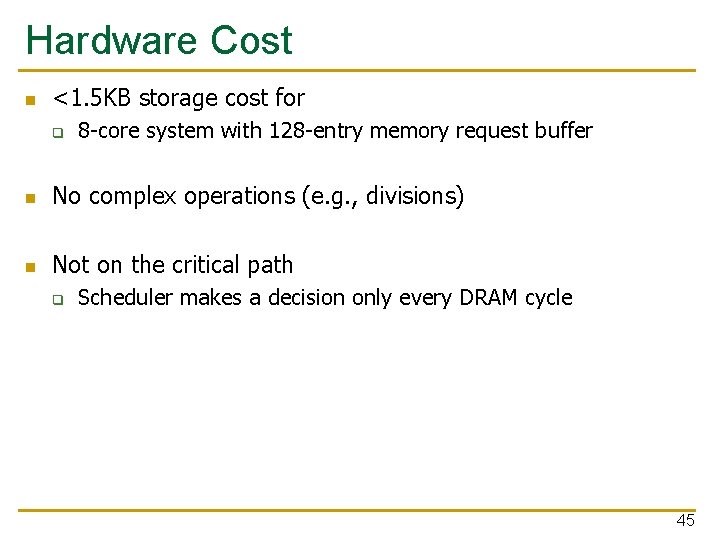

Putting It Together: PAR-BS Scheduling Policy

- PAR-BS Scheduling Policy
  - Batching
    - (1) Marked requests first
  - Parallelism-aware within-batch scheduling
    - (2) Row-hit requests first
    - (3) Higher-rank thread first (shortest stall-time first)
    - (4) Oldest first
- Three properties:
  - Exploits row-buffer locality and intra-thread bank parallelism
  - Work-conserving
    - Services unmarked requests to banks without marked requests
  - Marking-Cap is important
    - Too small cap: destroys row-buffer locality
    - Too large cap: penalizes memory non-intensive threads

Mutlu and Moscibroda, "Parallelism-Aware Batch Scheduling," ISCA 2008.
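The four prioritization rules compose into a single lexicographic sort key. This sketch orders a static snapshot of one bank's queue and ignores how the open row changes as requests are serviced; the `Req` fields, the `rank` map (0 is the highest rank), and `par_bs_order` are hypothetical names.

```python
from typing import NamedTuple


class Req(NamedTuple):
    thread: str
    bank: int
    row: int
    arrival: int
    marked: bool


def par_bs_order(requests, open_rows, rank):
    """Sort requests by: marked first, row hit first,
    higher-ranked thread first, oldest first."""
    def key(r):
        row_hit = open_rows.get(r.bank) == r.row
        # False sorts before True, so negate the "good" properties.
        return (not r.marked, not row_hit, rank[r.thread], r.arrival)

    return sorted(requests, key=key)


rank = {"T0": 0, "T1": 1, "T2": 2, "T3": 3}
requests = [
    Req("T3", 0, 7, 0, False),  # oldest and a row hit, but unmarked
    Req("T1", 0, 3, 1, True),   # marked, row miss
    Req("T2", 0, 7, 2, True),   # marked, row hit
    Req("T0", 0, 9, 3, True),   # marked, row miss, highest rank
]
order = [r.thread for r in par_bs_order(requests, {0: 7}, rank)]
```

The marked row hit from T2 goes first because rule (2) precedes rule (3); the remaining marked requests follow in rank order, and the unmarked request waits for the batch to finish even though it is the oldest.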

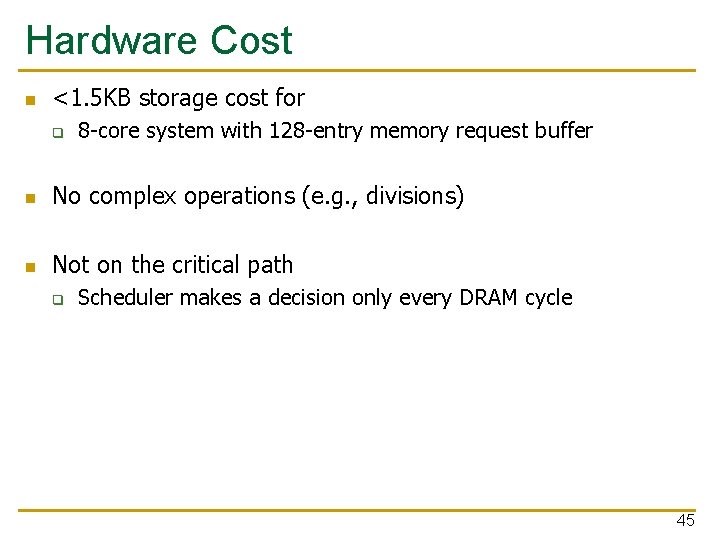

Hardware Cost

- <1.5 KB storage cost for
  - 8-core system with 128-entry memory request buffer
- No complex operations (e.g., divisions)
- Not on the critical path
  - Scheduler makes a decision only every DRAM cycle

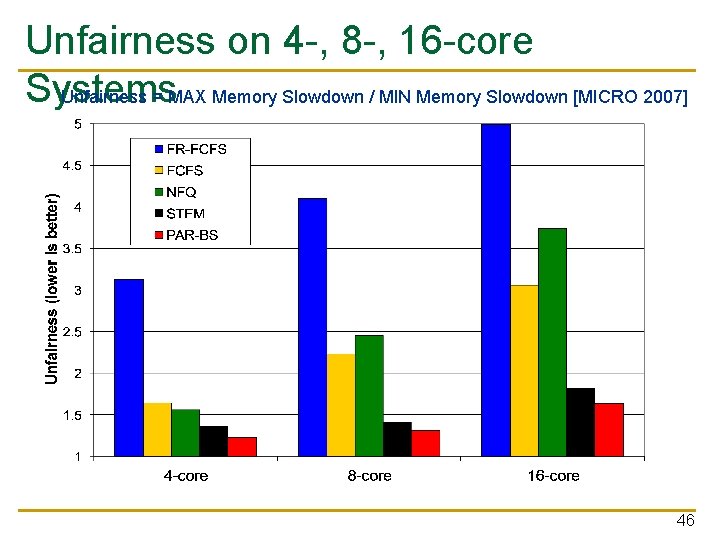

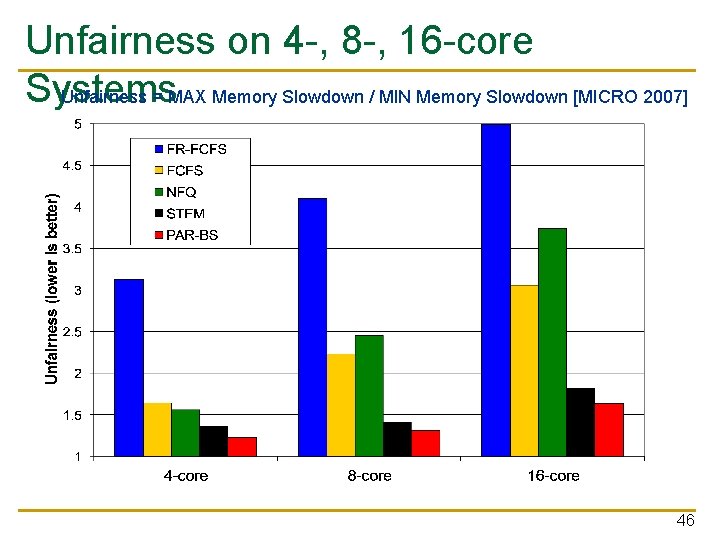

Unfairness on 4-, 8-, 16-core Systems

Unfairness = MAX Memory Slowdown / MIN Memory Slowdown [MICRO 2007]

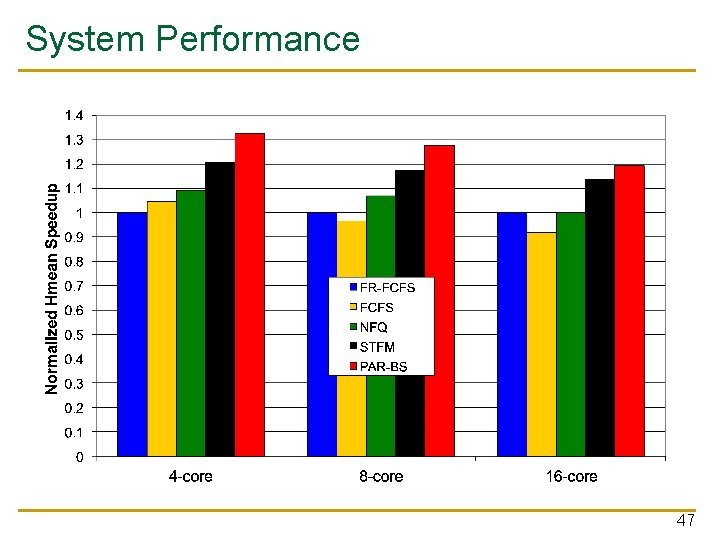

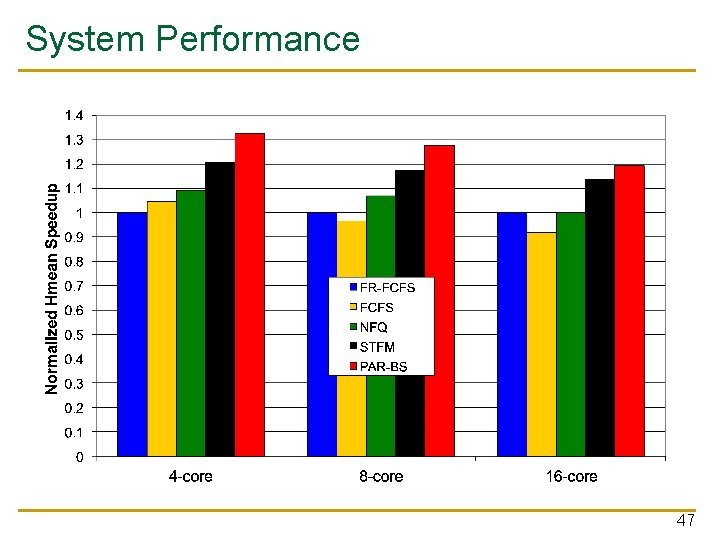

System Performance

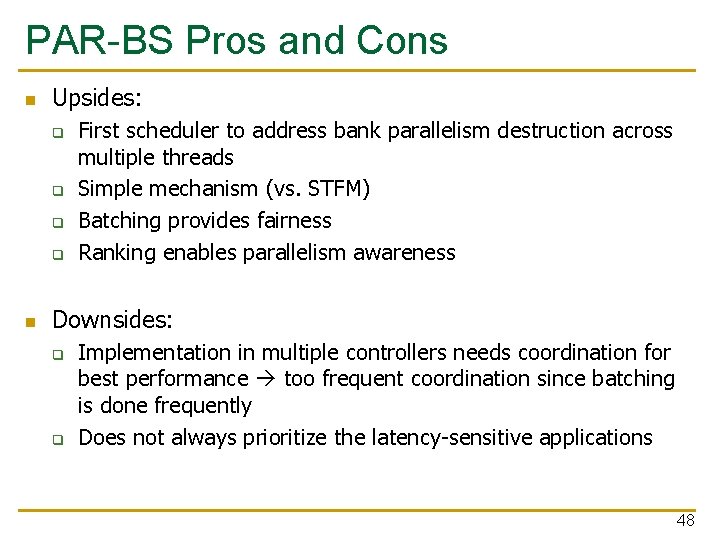

PAR-BS Pros and Cons

- Upsides:
  - First scheduler to address bank parallelism destruction across multiple threads
  - Simple mechanism (vs. STFM)
  - Batching provides fairness
  - Ranking enables parallelism awareness
- Downsides:
  - Implementation in multiple controllers needs coordination for best performance → too frequent coordination since batching is done frequently
  - Does not always prioritize the latency-sensitive applications