
16 Adaptive Resonance Theory (ART)

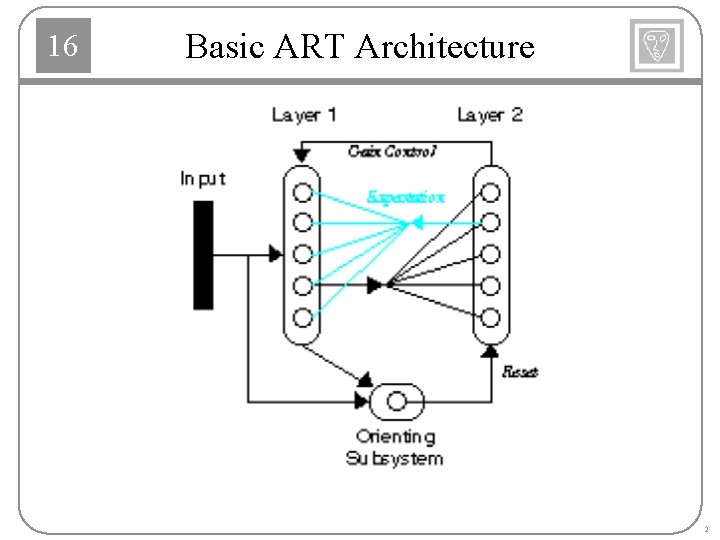

Basic ART Architecture

ART Subsystems

- Layer 1: normalization; comparison of the input pattern and the expectation.
- L1-L2 connections (instars): perform the clustering operation. Each row of $\mathbf{W}^{1:2}$ is a prototype pattern.
- Layer 2: competition and contrast enhancement.
- L2-L1 connections (outstars): provide the expectation and perform pattern recall. Each column of $\mathbf{W}^{2:1}$ is a prototype pattern.
- Orienting subsystem: causes a reset when the expectation does not match the input, disabling the current winning neuron.

Layer 1

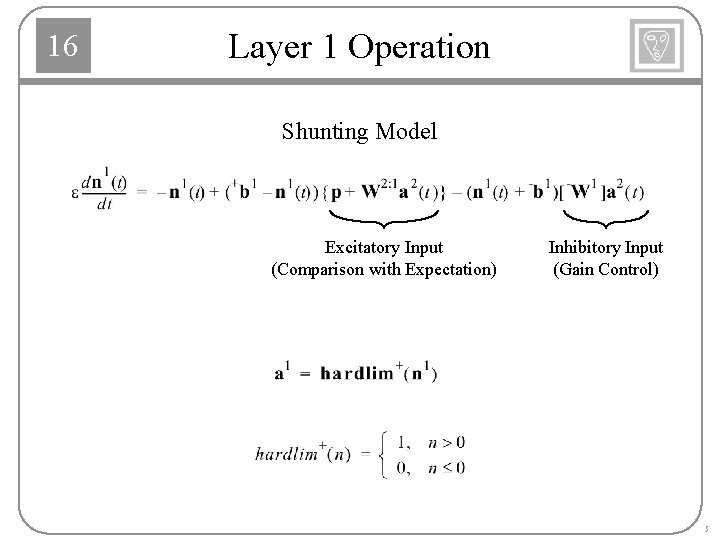

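Layer 1 Operation

Layer 1 is a shunting model. Its excitatory input compares the input pattern with the expectation, and its inhibitory input provides gain control (products of the bracketed vectors are taken element by element):

$$\varepsilon \frac{d\mathbf{n}^1(t)}{dt} = -\mathbf{n}^1(t) + \left({}^{+}\mathbf{b}^1 - \mathbf{n}^1(t)\right)\left\{\mathbf{p} + \mathbf{W}^{2:1}\mathbf{a}^2(t)\right\} - \left(\mathbf{n}^1(t) + {}^{-}\mathbf{b}^1\right)\left[{}^{-}\mathbf{W}^1\right]\mathbf{a}^2(t)$$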

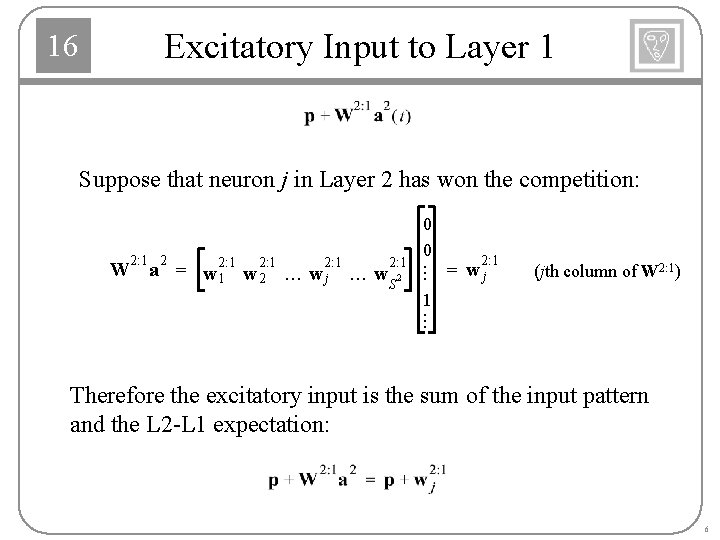

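Excitatory Input to Layer 1

Suppose that neuron $j$ in Layer 2 has won the competition, so that $\mathbf{a}^2$ has a 1 in position $j$ and zeros elsewhere:

$$\mathbf{W}^{2:1}\mathbf{a}^2 = \begin{bmatrix} \mathbf{w}^{2:1}_1 & \mathbf{w}^{2:1}_2 & \cdots & \mathbf{w}^{2:1}_j & \cdots & \mathbf{w}^{2:1}_{S^2} \end{bmatrix} \begin{bmatrix} 0 \\ \vdots \\ 1 \\ \vdots \\ 0 \end{bmatrix} = \mathbf{w}^{2:1}_j \quad (j\text{th column of } \mathbf{W}^{2:1})$$

Therefore the excitatory input is the sum of the input pattern and the L2-L1 expectation:

$$\mathbf{p} + \mathbf{W}^{2:1}\mathbf{a}^2 = \mathbf{p} + \mathbf{w}^{2:1}_j$$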

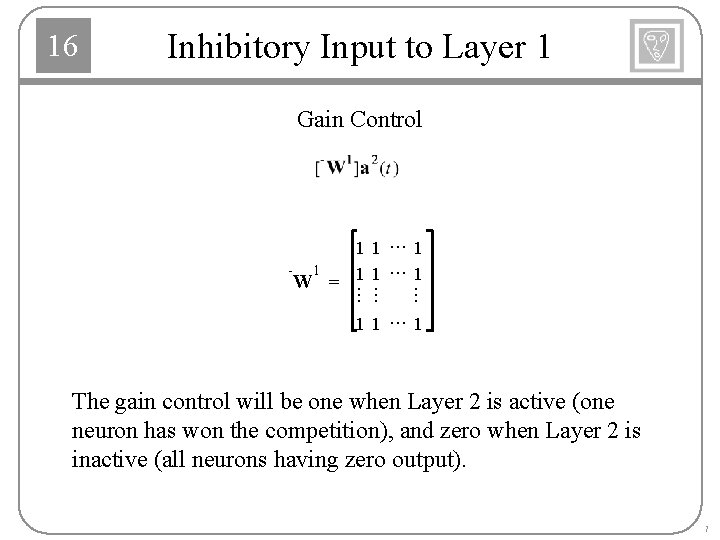

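Inhibitory Input to Layer 1: Gain Control

The inhibitory input is the gain control $\left[{}^{-}\mathbf{W}^1\right]\mathbf{a}^2$, where ${}^{-}\mathbf{W}^1$ is a matrix of ones:

$${}^{-}\mathbf{W}^1 = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ 1 & 1 & \cdots & 1 \\ \vdots & \vdots & & \vdots \\ 1 & 1 & \cdots & 1 \end{bmatrix}$$

The gain control will be one when Layer 2 is active (one neuron has won the competition) and zero when Layer 2 is inactive (all neurons have zero output).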

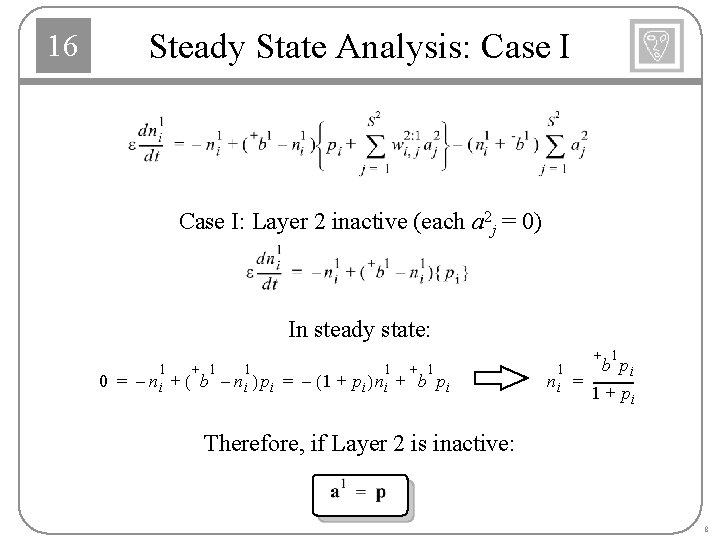
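The two Layer 1 input terms can be checked with a few lines of plain Python. This is a minimal sketch: the helper names (`one_hot`, `mat_vec`, `layer1_inputs`) and the 2×2 matrix $\mathbf{W}^{2:1}$ are hypothetical, chosen only to show that a one-hot $\mathbf{a}^2$ selects column $j$ and that the gain control is one or zero depending on whether Layer 2 is active.

```python
def one_hot(j, size):
    """Layer 2 output when neuron j (0-based) has won: 1 at j, 0 elsewhere."""
    return [1 if k == j else 0 for k in range(size)]


def mat_vec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def layer1_inputs(p, W21, a2):
    """Return (excitatory, inhibitory) net inputs to the Layer 1 neurons.

    excitatory = p + W21 a2   (input pattern plus L2-L1 expectation)
    inhibitory = [-W1] a2     (gain control; -W1 is an S1 x S2 matrix of ones)
    """
    expectation = mat_vec(W21, a2)  # selects column j when a2 is one-hot
    excitatory = [pi + ei for pi, ei in zip(p, expectation)]
    gain_matrix = [[1] * len(a2) for _ in p]
    inhibitory = mat_vec(gain_matrix, a2)
    return excitatory, inhibitory


# Hypothetical example: S1 = S2 = 2, neuron 2 (index 1) has won.
W21 = [[1, 1],
       [0, 1]]
p = [0, 1]
print(layer1_inputs(p, W21, one_hot(1, 2)))  # ([1, 2], [1, 1])
print(layer1_inputs(p, W21, [0, 0]))         # ([0, 1], [0, 0])
```

With Layer 2 inactive the excitatory input reduces to $\mathbf{p}$ and the gain control vanishes, which is the starting point for the Case I analysis.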

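Steady State Analysis: Case I

Case I: Layer 2 inactive (each $a^2_j = 0$). In steady state:

$$0 = -n^1_i + \left({}^{+}b^1 - n^1_i\right)p_i = -\left(1 + p_i\right)n^1_i + {}^{+}b^1 p_i \quad\Rightarrow\quad n^1_i = \frac{{}^{+}b^1 p_i}{1 + p_i}$$

Thus $n^1_i = 0$ when $p_i = 0$, and $n^1_i = {}^{+}b^1/2 > 0$ when $p_i = 1$. Since the Layer 1 output is one where $n^1_i > 0$ and zero otherwise, if Layer 2 is inactive:

$$\mathbf{a}^1 = \mathbf{p}$$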

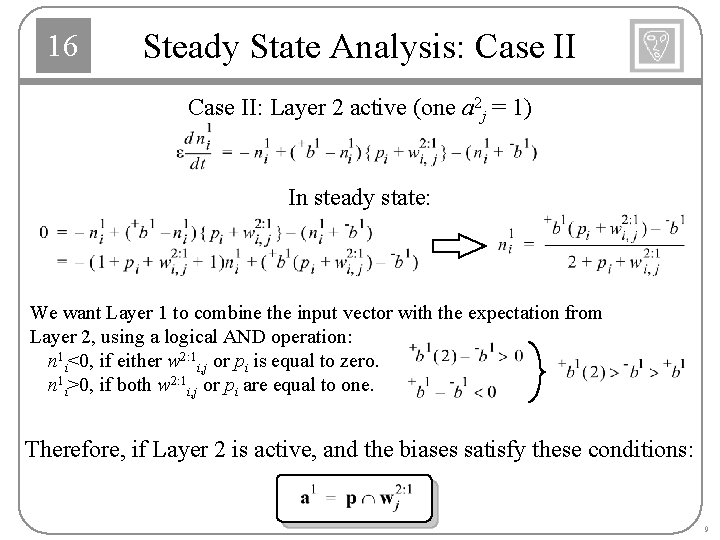
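The Case I result can be confirmed numerically. The sketch below integrates the single-neuron equation with forward Euler (the step size, duration, and ${}^{+}b^1 = 1$ default are assumptions for illustration) and compares the result with the closed form ${}^{+}b^1 p_i / (1 + p_i)$.

```python
def steady_case1(p_i, b_plus=1.0):
    """Closed-form steady state of Layer 1 neuron i with Layer 2 inactive."""
    return b_plus * p_i / (1 + p_i)


def simulate_case1(p_i, b_plus=1.0, eps=1.0, dt=1e-3, t_end=20.0):
    """Euler integration of eps*dn/dt = -n + (b_plus - n)*p_i from n = 0."""
    n = 0.0
    for _ in range(int(t_end / dt)):
        n += dt * (-n + (b_plus - n) * p_i) / eps
    return n


def layer1_output_case1(p, b_plus=1.0):
    """Output is 1 where the steady-state net input is positive, else 0."""
    return [1 if steady_case1(p_i, b_plus) > 0 else 0 for p_i in p]


print(simulate_case1(1), steady_case1(1))  # both approximately 0.5
print(layer1_output_case1([1, 0, 1, 1]))   # [1, 0, 1, 1], i.e. a1 = p
```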

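Steady State Analysis: Case II

Case II: Layer 2 active (one $a^2_j = 1$). The gain control is then one, and in steady state:

$$0 = -n^1_i + \left({}^{+}b^1 - n^1_i\right)\left\{p_i + w^{2:1}_{i,j}\right\} - \left(n^1_i + {}^{-}b^1\right) = -\left(2 + p_i + w^{2:1}_{i,j}\right)n^1_i + {}^{+}b^1\left(p_i + w^{2:1}_{i,j}\right) - {}^{-}b^1$$

$$n^1_i = \frac{{}^{+}b^1\left(p_i + w^{2:1}_{i,j}\right) - {}^{-}b^1}{2 + p_i + w^{2:1}_{i,j}}$$

We want Layer 1 to combine the input vector with the expectation from Layer 2 using a logical AND operation:

- $n^1_i < 0$ if either $w^{2:1}_{i,j}$ or $p_i$ is equal to zero;
- $n^1_i > 0$ if both $w^{2:1}_{i,j}$ and $p_i$ are equal to one.

When $p_i + w^{2:1}_{i,j} = 1$ the numerator is ${}^{+}b^1 - {}^{-}b^1$, and when $p_i + w^{2:1}_{i,j} = 2$ it is $2\,{}^{+}b^1 - {}^{-}b^1$, so (for positive biases) the conditions become $2\,{}^{+}b^1 > {}^{-}b^1 > {}^{+}b^1$. Therefore, if Layer 2 is active and the biases satisfy these conditions:

$$\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}^{2:1}_j$$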

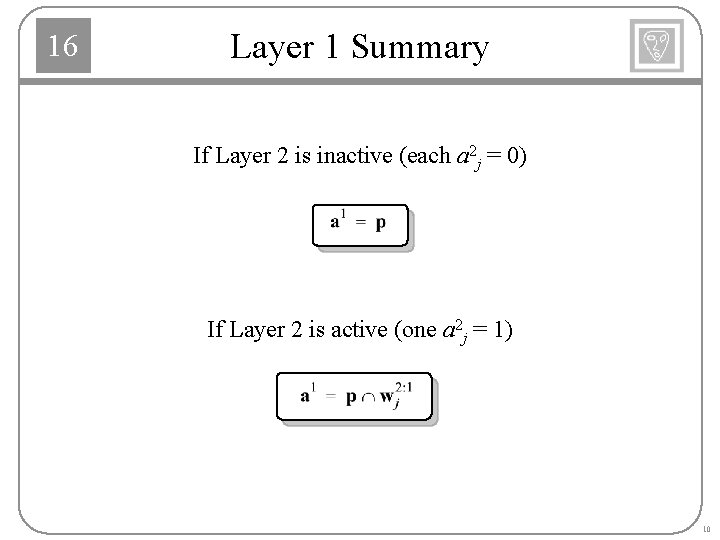
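A short truth-table check makes the bias conditions concrete. This sketch evaluates the Case II steady state for all four combinations of $p_i$ and $w^{2:1}_{i,j}$; the default biases ${}^{+}b^1 = 1$ and ${}^{-}b^1 = 1.5$ are taken from the Layer 1 example, and the function names are hypothetical.

```python
def steady_case2(p_i, w_ij, b_plus, b_minus):
    """Steady-state n1_i with Layer 2 active (gain control equal to one)."""
    s = p_i + w_ij
    return (b_plus * s - b_minus) / (2 + s)


def biases_give_and(b_plus, b_minus):
    """True when 2*b_plus > b_minus > b_plus (positive biases assumed)."""
    return 2 * b_plus > b_minus > b_plus


def layer1_output_case2(p, w_j, b_plus=1.0, b_minus=1.5):
    """a1_i = 1 exactly where n1_i > 0; should equal p AND w_j."""
    return [1 if steady_case2(p_i, w_i, b_plus, b_minus) > 0 else 0
            for p_i, w_i in zip(p, w_j)]


# Truth table over all four (p_i, w_ij) combinations.
p = [0, 0, 1, 1]
w_j = [0, 1, 0, 1]
print(layer1_output_case2(p, w_j))  # [0, 0, 0, 1]
print(biases_give_and(1.0, 1.5), biases_give_and(1.0, 2.5))  # True False
```

If ${}^{-}b^1$ is raised past $2\,{}^{+}b^1$, even the (1, 1) case gives a negative net input and the AND breaks down.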

Layer 1 Summary

If Layer 2 is inactive (each $a^2_j = 0$): $\mathbf{a}^1 = \mathbf{p}$

If Layer 2 is active (one $a^2_j = 1$): $\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}^{2:1}_j$

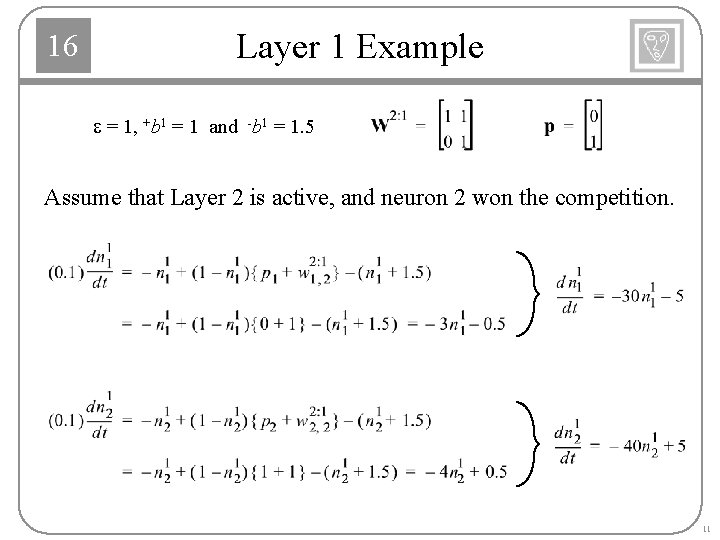

Layer 1 Example

Let $\varepsilon = 1$, ${}^{+}b^1 = 1$ and ${}^{-}b^1 = 1.5$. Assume that Layer 2 is active and neuron 2 won the competition.

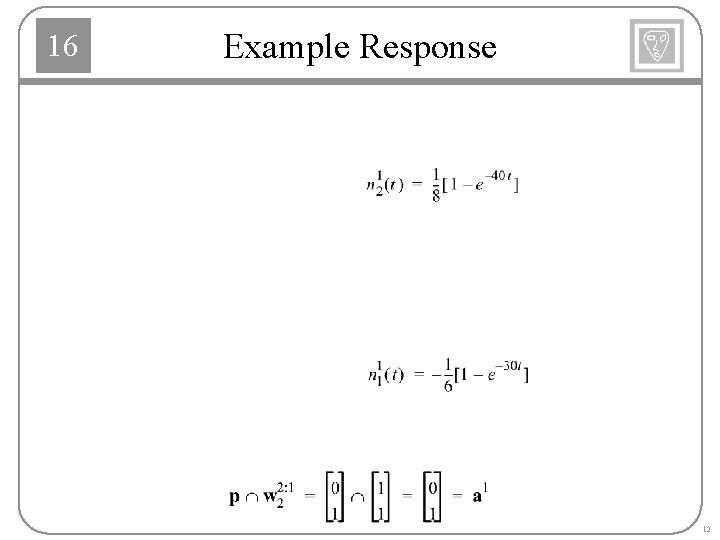

Example Response
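The example response can be approximated numerically. This sketch integrates the Layer 1 equation with forward Euler using $\varepsilon = 1$, ${}^{+}b^1 = 1$ and ${}^{-}b^1 = 1.5$ from the example, with neuron 2 active. The expectation $\mathbf{w}^{2:1}_2 = [1, 1]^T$ and input $\mathbf{p} = [0, 1]^T$ are hypothetical values chosen for illustration, as are the step size and duration.

```python
def simulate_layer1(p, w_j, b_plus=1.0, b_minus=1.5, eps=1.0, dt=1e-3, t_end=10.0):
    """Forward-Euler integration of the Layer 1 shunting equation, Layer 2 active.

    eps * dn_i/dt = -n_i + (b_plus - n_i)(p_i + w_ij) - (n_i + b_minus) * 1
    (the factor 1 is the gain control when one Layer 2 neuron is on)
    """
    n = [0.0] * len(p)
    for _ in range(int(t_end / dt)):
        n = [n_i + dt * (-n_i + (b_plus - n_i) * (p_i + w_i) - (n_i + b_minus)) / eps
             for n_i, p_i, w_i in zip(n, p, w_j)]
    return n


p = [0, 1]    # hypothetical input pattern
w_2 = [1, 1]  # hypothetical expectation (column 2 of W21)
n1 = simulate_layer1(p, w_2)
a1 = [1 if n_i > 0 else 0 for n_i in n1]
print(n1)  # approximately [-1/6, 1/8]
print(a1)  # [0, 1], which is p AND w_2
```

With these values the two neurons obey $dn^1_1/dt = -3n^1_1 - 0.5$ and $dn^1_2/dt = -4n^1_2 + 0.5$, so the steady states are $-1/6$ and $1/8$.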

Layer 2

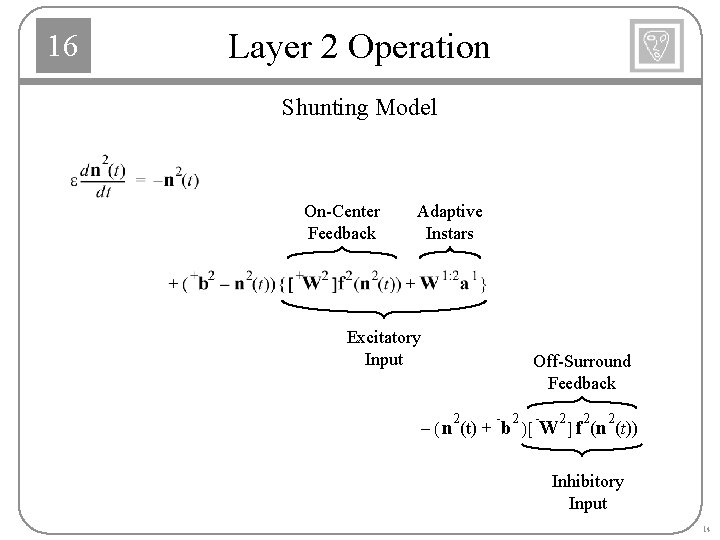

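Layer 2 Operation

Layer 2 is also a shunting model. Its excitatory input combines on-center feedback with the adaptive instars, and its inhibitory input is off-surround feedback:

$$\varepsilon \frac{d\mathbf{n}^2(t)}{dt} = -\mathbf{n}^2(t) + \left({}^{+}\mathbf{b}^2 - \mathbf{n}^2(t)\right)\left\{\left[{}^{+}\mathbf{W}^2\right]\mathbf{f}^2\!\left(\mathbf{n}^2(t)\right) + \mathbf{W}^{1:2}\mathbf{a}^1\right\} - \left(\mathbf{n}^2(t) + {}^{-}\mathbf{b}^2\right)\left[{}^{-}\mathbf{W}^2\right]\mathbf{f}^2\!\left(\mathbf{n}^2(t)\right)$$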

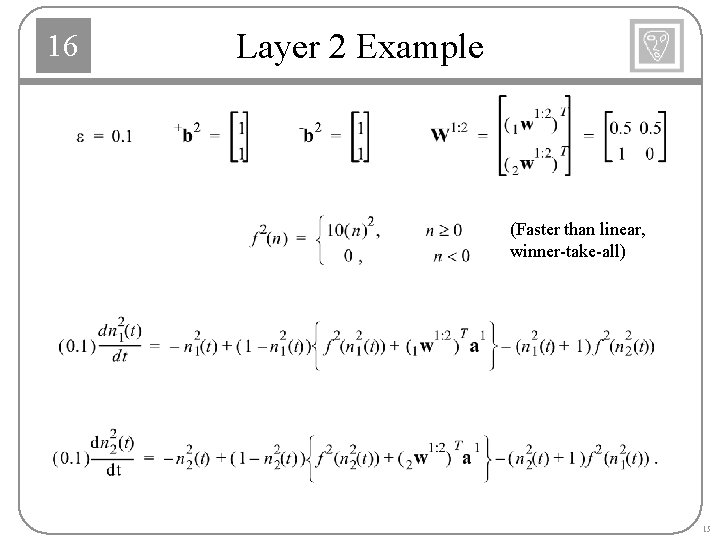

Layer 2 Example (faster than linear, winner-take-all)

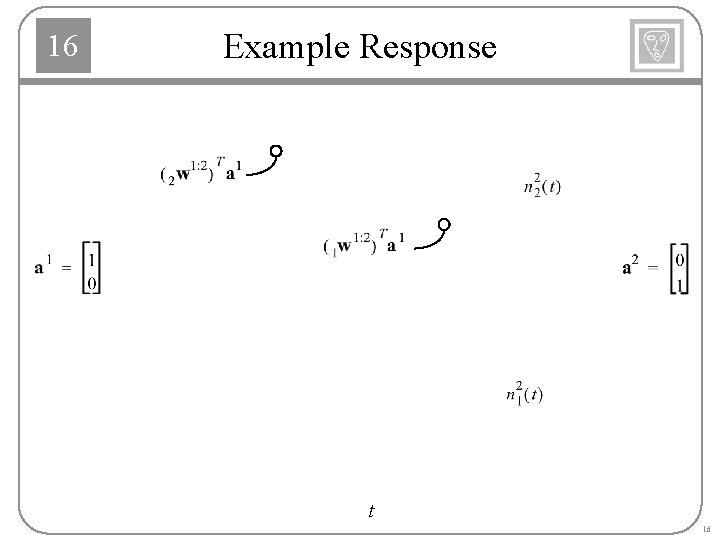

Example Response

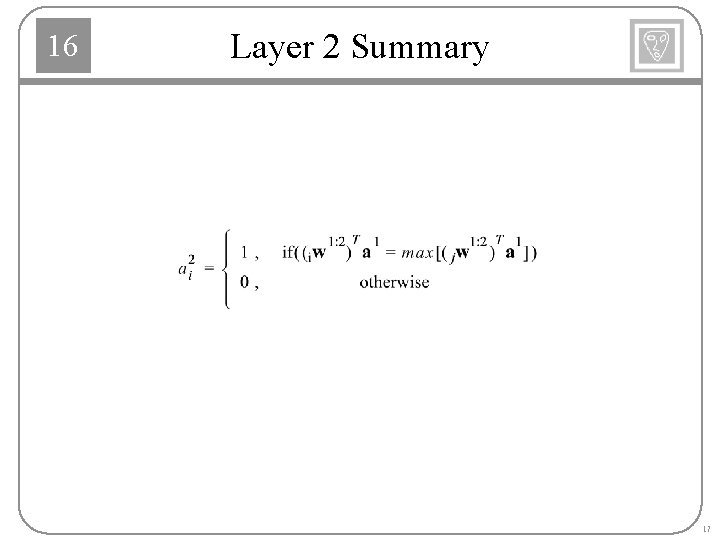
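A minimal simulation of the Layer 2 shunting equation illustrates the winner-take-all behavior. It assumes ${}^{+}\mathbf{W}^2$ is the identity (on-center) and ${}^{-}\mathbf{W}^2$ has zeros on the diagonal and ones elsewhere (off-surround), and uses the faster-than-linear transfer function $f^2(n) = 10n^2$ for $n \ge 0$ and 0 otherwise. The values $\varepsilon = 0.1$, ${}^{+}\mathbf{b}^2 = {}^{-}\mathbf{b}^2 = [1, 1]^T$, $\mathbf{W}^{1:2}$ and $\mathbf{a}^1$ are hypothetical choices, not values read from the figure.

```python
def f2(n):
    """Faster-than-linear transfer function (assumed form): 10 n^2 for n >= 0."""
    return 10.0 * n * n if n >= 0 else 0.0


def simulate_layer2(instar_input, eps=0.1, b_plus=1.0, b_minus=1.0,
                    dt=5e-4, t_end=2.0):
    """Forward-Euler integration of the Layer 2 shunting equation.

    On-center feedback: each neuron excites itself with f2(n_i).
    Off-surround feedback: each neuron is inhibited by the sum of f2(n_k), k != i.
    instar_input holds the adaptive-instar term W12 a1 (held constant here).
    """
    n = [0.0] * len(instar_input)
    for _ in range(int(t_end / dt)):
        f = [f2(n_i) for n_i in n]
        total = sum(f)
        n = [n_i + dt * (-n_i
                         + (b_plus - n_i) * (f_i + u_i)
                         - (n_i + b_minus) * (total - f_i)) / eps
             for n_i, f_i, u_i in zip(n, f, instar_input)]
    return n


W12 = [[0.5, 0.5],   # hypothetical prototype rows
       [1.0, 0.0]]
a1 = [1, 0]          # hypothetical Layer 1 output
u = [sum(w * x for w, x in zip(row, a1)) for row in W12]  # [0.5, 1.0]
n2 = simulate_layer2(u)
print(n2)  # neuron 2 ends near 0.90; neuron 1 is driven negative (about -0.79)
```

Neuron 2 starts with the larger instar input, its squared feedback quickly dominates, and the off-surround term pushes neuron 1 below zero, where $f^2$ turns it off completely.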

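Layer 2 Summary

$$a^2_i = \begin{cases} 1, & \text{if } \left({}_i\mathbf{w}^{1:2}\right)^T\mathbf{a}^1 = \max_j\left[\left({}_j\mathbf{w}^{1:2}\right)^T\mathbf{a}^1\right] \\ 0, & \text{otherwise} \end{cases}$$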
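In steady state Layer 2 simply picks the row of $\mathbf{W}^{1:2}$ with the largest inner product with $\mathbf{a}^1$. The sketch below implements that summary, using the tie rule from the algorithm summary (smallest index wins); the function name and test matrices are hypothetical.

```python
def layer2_output(W12, a1):
    """Steady-state Layer 2 output: 1 for the neuron with the largest instar
    input (W12 a1), 0 elsewhere. max() returns the first maximal index, so
    ties go to the smallest index."""
    net = [sum(w * x for w, x in zip(row, a1)) for row in W12]
    winner = max(range(len(net)), key=lambda i: net[i])
    return [1 if i == winner else 0 for i in range(len(net))]


print(layer2_output([[0.5, 0.5], [1.0, 0.0]], [1, 0]))  # [0, 1]
print(layer2_output([[1, 0], [1, 0]], [1, 1]))          # [1, 0]  (tie)
```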

Orienting Subsystem

Purpose: determine whether there is a sufficient match between the L2-L1 expectation ($\mathbf{a}^1$) and the input pattern ($\mathbf{p}$).

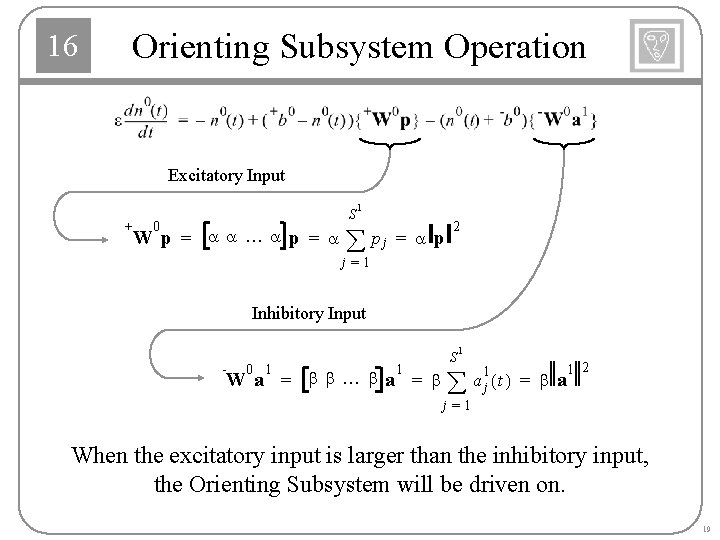

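Orienting Subsystem Operation

Excitatory input:

$${}^{+}\mathbf{W}^0\mathbf{p} = \begin{bmatrix} \alpha & \alpha & \cdots & \alpha \end{bmatrix}\mathbf{p} = \alpha\sum_{j=1}^{S^1} p_j = \alpha\|\mathbf{p}\|^2$$

Inhibitory input:

$${}^{-}\mathbf{W}^0\mathbf{a}^1 = \begin{bmatrix} \beta & \beta & \cdots & \beta \end{bmatrix}\mathbf{a}^1 = \beta\sum_{j=1}^{S^1} a^1_j(t) = \beta\|\mathbf{a}^1\|^2$$

(The last equalities hold because $\mathbf{p}$ and $\mathbf{a}^1$ are binary.) When the excitatory input is larger than the inhibitory input, the orienting subsystem will be driven on.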

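Steady State Operation

$$0 = -n^0 + \left({}^{+}b^0 - n^0\right)\left\{\alpha\|\mathbf{p}\|^2\right\} - \left(n^0 + {}^{-}b^0\right)\left\{\beta\|\mathbf{a}^1\|^2\right\}$$

$$0 = -\left(1 + \alpha\|\mathbf{p}\|^2 + \beta\|\mathbf{a}^1\|^2\right)n^0 + {}^{+}b^0\left(\alpha\|\mathbf{p}\|^2\right) - {}^{-}b^0\left(\beta\|\mathbf{a}^1\|^2\right)$$

$$n^0 = \frac{{}^{+}b^0\left(\alpha\|\mathbf{p}\|^2\right) - {}^{-}b^0\left(\beta\|\mathbf{a}^1\|^2\right)}{1 + \alpha\|\mathbf{p}\|^2 + \beta\|\mathbf{a}^1\|^2}$$

Let ${}^{+}b^0 = {}^{-}b^0 = 1$. Then $n^0 > 0$, and the orienting subsystem produces a RESET, if

$$\frac{\|\mathbf{a}^1\|^2}{\|\mathbf{p}\|^2} < \frac{\alpha}{\beta} = \rho,$$

where $\rho$ is the vigilance parameter. Since $\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}^{2:1}_j$, a reset will occur when there is enough of a mismatch between $\mathbf{p}$ and $\mathbf{w}^{2:1}_j$.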

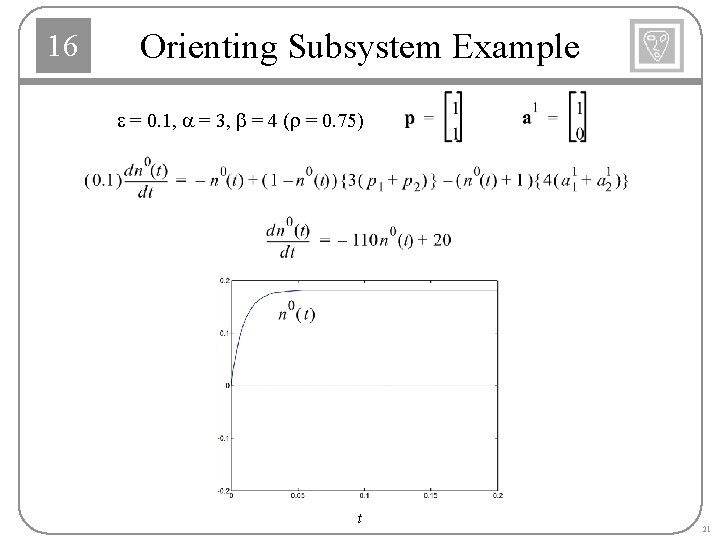
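The equivalence between the sign of $n^0$ and the vigilance test can be checked exhaustively for short binary vectors. This sketch assumes ${}^{+}b^0 = {}^{-}b^0 = 1$, takes $\alpha = 3$ and $\beta = 4$ from the orienting example, and enumerates every nonzero 4-bit input $\mathbf{p}$ together with every expectation $\mathbf{w}$ (so that $\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}$). The function names are hypothetical.

```python
from itertools import product


def orienting_n0(p, a1, alpha, beta):
    """Steady-state n0 with +b0 = -b0 = 1.

    For binary vectors ||x||^2 equals sum(x), the number of ones.
    """
    P, A = sum(p), sum(a1)
    return (alpha * P - beta * A) / (1 + alpha * P + beta * A)


def reset(p, a1, rho):
    """Vigilance test: reset when ||a1||^2 / ||p||^2 < rho (p must be nonzero)."""
    return sum(a1) / sum(p) < rho


# n0 > 0 should hold exactly when the vigilance test calls for a reset.
alpha, beta = 3.0, 4.0
rho = alpha / beta
for p in product([0, 1], repeat=4):
    if sum(p) == 0:
        continue
    for w in product([0, 1], repeat=4):
        a1 = [pi & wi for pi, wi in zip(p, w)]
        assert (orienting_n0(p, a1, alpha, beta) > 0) == reset(p, a1, rho)
print("n0 > 0 matches the vigilance test for all cases")
```

At the boundary $\|\mathbf{a}^1\|^2/\|\mathbf{p}\|^2 = \rho$, $n^0 = 0$ and no reset occurs, consistent with the strict inequality.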

Orienting Subsystem Example

$\varepsilon = 0.1$, $\alpha = 3$, $\beta = 4$ ($\rho = 0.75$)
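The example dynamics can be sketched with forward Euler using $\varepsilon = 0.1$, $\alpha = 3$ and $\beta = 4$ from the slide, assuming ${}^{+}b^0 = {}^{-}b^0 = 1$. The vectors $\mathbf{p} = [1, 1]^T$ and $\mathbf{a}^1 = [1, 0]^T$ are hypothetical; with them the equation reduces to $0.1\,dn^0/dt = -11n^0 + 2$.

```python
def simulate_orienting(p, a1, alpha=3.0, beta=4.0, eps=0.1, dt=1e-3, t_end=1.0):
    """Forward-Euler integration of the orienting-subsystem equation with
    +b0 = -b0 = 1:
        eps * dn0/dt = -n0 + (1 - n0) alpha ||p||^2 - (n0 + 1) beta ||a1||^2
    """
    P, A = sum(p), sum(a1)  # squared norms of binary vectors
    n0 = 0.0
    for _ in range(int(t_end / dt)):
        n0 += dt * (-n0 + (1 - n0) * alpha * P - (n0 + 1) * beta * A) / eps
    return n0


p = [1, 1]   # hypothetical input
a1 = [1, 0]  # hypothetical Layer 1 output (half of p survives)
n0 = simulate_orienting(p, a1)
a0 = 1 if n0 > 0 else 0
print(n0, a0)  # n0 approaches 2/11 (about 0.18), so a0 = 1: reset
```

Here $\|\mathbf{a}^1\|^2/\|\mathbf{p}\|^2 = 0.5 < 0.75$, so a reset is expected; with $\mathbf{a}^1 = \mathbf{p}$ the same code settles at a negative $n^0$ and no reset occurs.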

Orienting Subsystem Summary

$$a^0 = \begin{cases} 1, & \text{if } \left[\|\mathbf{a}^1\|^2 / \|\mathbf{p}\|^2 < \rho\right] \\ 0, & \text{otherwise} \end{cases}$$

Learning Laws: L1-L2 and L2-L1

The ART1 network has two separate learning laws: one for the L1-L2 connections (instars) and one for the L2-L1 connections (outstars). Both sets of connections are updated at the same time, when the input and the expectation have an adequate match. The process of matching and subsequent adaptation is referred to as resonance.

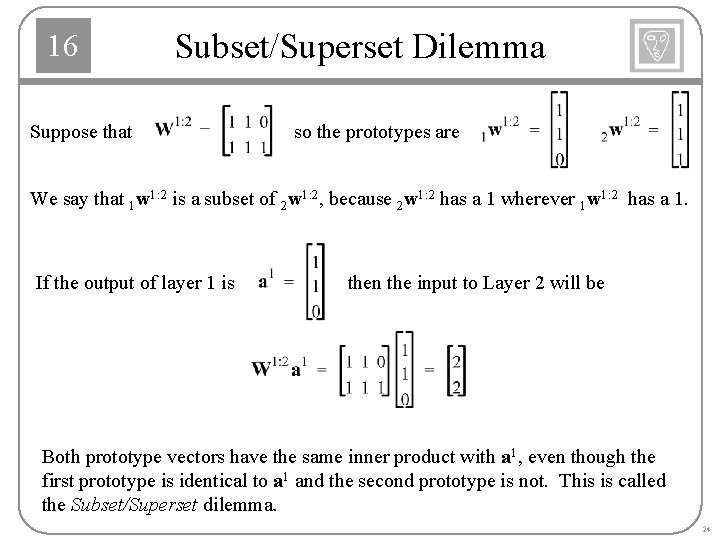

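Subset/Superset Dilemma

Suppose that $\mathbf{W}^{1:2}$ has two rows, so the prototypes are ${}_1\mathbf{w}^{1:2}$ and ${}_2\mathbf{w}^{1:2}$. We say that ${}_1\mathbf{w}^{1:2}$ is a subset of ${}_2\mathbf{w}^{1:2}$, because ${}_2\mathbf{w}^{1:2}$ has a 1 wherever ${}_1\mathbf{w}^{1:2}$ has a 1. If the output of Layer 1 is $\mathbf{a}^1 = {}_1\mathbf{w}^{1:2}$, then both elements of the input to Layer 2, $\mathbf{W}^{1:2}\mathbf{a}^1$, equal $\|{}_1\mathbf{w}^{1:2}\|^2$. Both prototype vectors have the same inner product with $\mathbf{a}^1$, even though the first prototype is identical to $\mathbf{a}^1$ and the second prototype is not. This is called the subset/superset dilemma.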

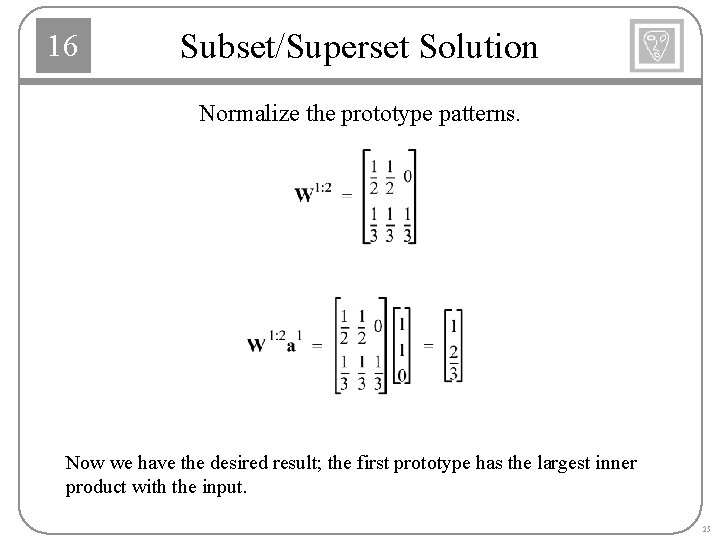
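A concrete instance of the dilemma takes only a few lines. The prototypes $[1, 1, 0]$ and $[1, 1, 1]$ below are hypothetical, chosen so that the first is a subset of the second, and $\mathbf{a}^1$ is set equal to the first prototype.

```python
def layer2_net(W12, a1):
    """Instar inputs to Layer 2: one inner product per prototype row."""
    return [sum(w * x for w, x in zip(row, a1)) for row in W12]


# Hypothetical prototypes: row 1 is a subset of row 2.
W12 = [[1, 1, 0],
       [1, 1, 1]]
a1 = [1, 1, 0]  # identical to the first prototype
print(layer2_net(W12, a1))  # [2, 2]: a tie, although row 1 matches a1 exactly
```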

Subset/Superset Solution

Normalize the prototype patterns. Now we have the desired result: the first prototype has the largest inner product with the input.
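One way to normalize, sketched here under stated assumptions, is the scaling $\zeta/(\zeta + \|\mathbf{w}\|^2 - 1)$ that appears in the fast-learning summary of the L1-L2 learning law; the value $\zeta = 2$ and the subset/superset pair are hypothetical. Larger prototypes are scaled down more, so a subset input now favors the subset prototype while a superset input still favors the superset prototype.

```python
def normalize_prototype(w, zeta=2.0):
    """Scale a binary prototype by zeta / (zeta + ||w||^2 - 1), the fast-learning
    form of the L1-L2 weights (zeta > 1 is an assumed parameter value)."""
    norm_sq = sum(w)  # ||w||^2 for a binary vector
    return [zeta * x / (zeta + norm_sq - 1) for x in w]


def layer2_net(W12, a1):
    """Instar inputs to Layer 2: one inner product per prototype row."""
    return [sum(w * x for w, x in zip(row, a1)) for row in W12]


prototypes = [[1, 1, 0], [1, 1, 1]]  # hypothetical subset/superset pair
W12 = [normalize_prototype(w) for w in prototypes]  # rows [2/3, 2/3, 0], [1/2, 1/2, 1/2]
print(layer2_net(W12, [1, 1, 0]))  # about [1.333, 1.0]: first prototype now wins
print(layer2_net(W12, [1, 1, 1]))  # about [1.333, 1.5]: superset input picks row 2
```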

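L1-L2 Learning Law

Instar learning with competition:

$$\frac{d\left[{}_i\mathbf{w}^{1:2}(t)\right]}{dt} = a^2_i(t)\left\{\left[{}^{+}\mathbf{b} - {}_i\mathbf{w}^{1:2}(t)\right]\zeta\left[{}^{+}\mathbf{W}\right]\mathbf{a}^1(t) - \left[{}_i\mathbf{w}^{1:2}(t) + {}^{-}\mathbf{b}\right]\left[{}^{-}\mathbf{W}\right]\mathbf{a}^1(t)\right\}$$

where products of the bracketed vectors are taken element by element, and the on-center connections ${}^{+}\mathbf{W}$, off-surround connections ${}^{-}\mathbf{W}$, upper-limit bias ${}^{+}\mathbf{b}$ and lower-limit bias ${}^{-}\mathbf{b}$ are

$${}^{+}\mathbf{W} = \begin{bmatrix} 1 & 0 & \cdots & 0 \\ 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 \end{bmatrix}, \quad {}^{-}\mathbf{W} = \begin{bmatrix} 0 & 1 & \cdots & 1 \\ 1 & 0 & \cdots & 1 \\ \vdots & \vdots & \ddots & \vdots \\ 1 & 1 & \cdots & 0 \end{bmatrix}, \quad {}^{+}\mathbf{b} = \begin{bmatrix} 1 \\ 1 \\ \vdots \\ 1 \end{bmatrix}, \quad {}^{-}\mathbf{b} = \begin{bmatrix} 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}$$

When neuron $i$ of Layer 2 is active, ${}_i\mathbf{w}^{1:2}$ is moved in the direction of $\mathbf{a}^1$. The elements of ${}_i\mathbf{w}^{1:2}$ compete, and therefore ${}_i\mathbf{w}^{1:2}$ is normalized.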

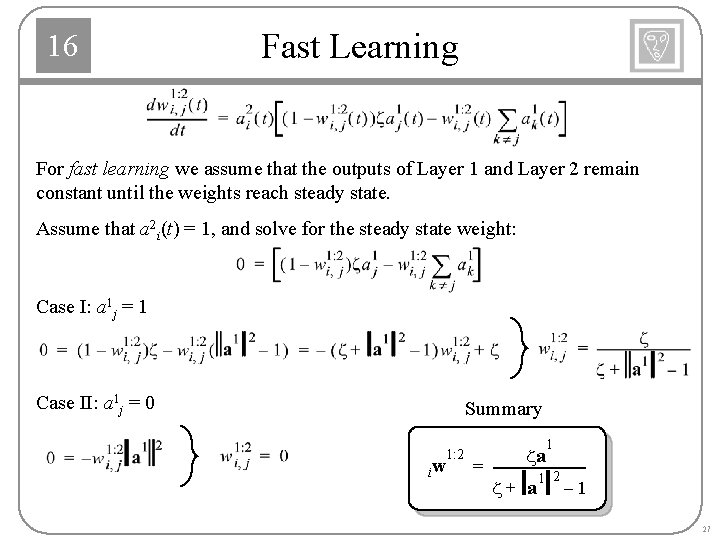

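Fast Learning

For fast learning, we assume that the outputs of Layer 1 and Layer 2 remain constant until the weights reach steady state. Assume that $a^2_i(t) = 1$ and solve for the steady-state weight:

Case I, $a^1_j = 1$:

$$0 = \left(1 - {}_iw^{1:2}_j\right)\zeta - {}_iw^{1:2}_j\left(\|\mathbf{a}^1\|^2 - 1\right) \quad\Rightarrow\quad {}_iw^{1:2}_j = \frac{\zeta}{\zeta + \|\mathbf{a}^1\|^2 - 1}$$

Case II, $a^1_j = 0$:

$$0 = -{}_iw^{1:2}_j\,\|\mathbf{a}^1\|^2 \quad\Rightarrow\quad {}_iw^{1:2}_j = 0$$

Summary:

$${}_i\mathbf{w}^{1:2} = \frac{\zeta\,\mathbf{a}^1}{\zeta + \|\mathbf{a}^1\|^2 - 1}$$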
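The fast-learning result can be compared with a direct integration of the instar-with-competition equation for an active neuron. This sketch uses forward Euler; $\zeta = 2$, the Layer 1 output $\mathbf{a}^1 = [1, 0, 1, 1]^T$ and the initial weights are hypothetical values. With these numbers the closed form gives $2/(2 + 3 - 1) = 0.5$ for every element where $a^1_j = 1$.

```python
def simulate_instar(w, a1, zeta=2.0, dt=1e-3, t_end=20.0):
    """Forward-Euler integration of instar learning with competition for an
    active Layer 2 neuron (a2_i = 1), with +b = 1, -b = 0, identity on-center
    and ones-off-the-diagonal off-surround:
        dw_j/dt = (1 - w_j) * zeta * a1_j - w_j * sum_{k != j} a1_k
    """
    w = list(w)
    total = sum(a1)
    for _ in range(int(t_end / dt)):
        w = [w_j + dt * ((1 - w_j) * zeta * x_j - w_j * (total - x_j))
             for w_j, x_j in zip(w, a1)]
    return w


def fast_instar(a1, zeta=2.0):
    """Closed-form steady state: zeta * a1 / (zeta + ||a1||^2 - 1)."""
    return [zeta * x / (zeta + sum(a1) - 1) for x in a1]


a1 = [1, 0, 1, 1]           # hypothetical Layer 1 output
w0 = [0.3, 0.3, 0.3, 0.3]   # hypothetical initial row of W12
print(simulate_instar(w0, a1))  # approximately [0.5, 0.0, 0.5, 0.5]
print(fast_instar(a1))          # [0.5, 0.0, 0.5, 0.5]
```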

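Learning Law: L2-L1

Outstar:

$$\frac{d\left[\mathbf{w}^{2:1}_j(t)\right]}{dt} = a^2_j(t)\left[-\mathbf{w}^{2:1}_j(t) + \mathbf{a}^1(t)\right]$$

Fast learning: assume that $a^2_j(t) = 1$ and solve for the steady-state weight:

$$\mathbf{0} = -\mathbf{w}^{2:1}_j + \mathbf{a}^1 \quad\text{or}\quad \mathbf{w}^{2:1}_j = \mathbf{a}^1$$

Column $j$ of $\mathbf{W}^{2:1}$ converges to the output of Layer 1, which is a combination of the input pattern and the previous prototype pattern ($\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}^{2:1}_j$). The prototype pattern is modified to incorporate the current input pattern.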

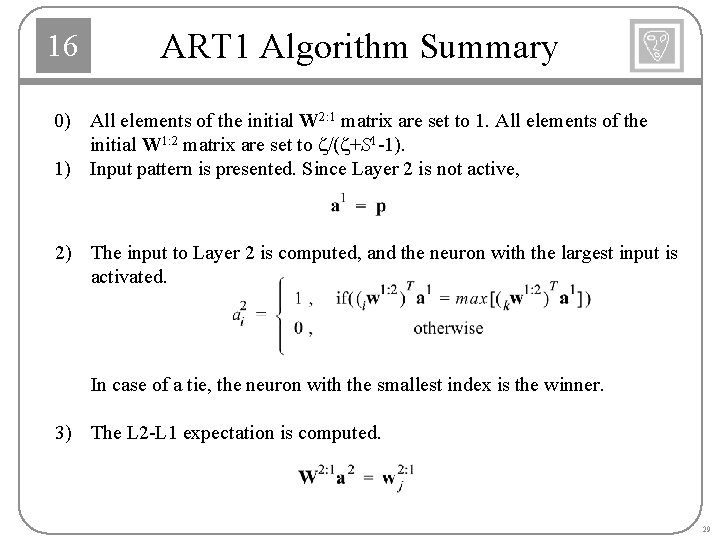
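The outstar's convergence to $\mathbf{a}^1$ can be sketched with forward Euler. The initial column of all ones matches the initialization in the algorithm summary; the input pattern is hypothetical, and the step size and duration are assumptions.

```python
def simulate_outstar(w_j, a1, dt=1e-3, t_end=20.0):
    """Forward-Euler integration of the outstar rule with a2_j = 1:
        dw_j/dt = -w_j + a1
    """
    w = [float(x) for x in w_j]
    for _ in range(int(t_end / dt)):
        w = [w_i + dt * (-w_i + x_i) for w_i, x_i in zip(w, a1)]
    return w


w_j = [1, 1, 1, 1]  # initial column of W21 (all ones)
p = [1, 0, 1, 1]    # hypothetical input pattern
a1 = [p_i & w_i for p_i, w_i in zip(p, w_j)]  # a1 = p AND w_j
print(simulate_outstar(w_j, a1))  # approximately [1.0, 0.0, 1.0, 1.0] = a1
```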

ART1 Algorithm Summary

0) All elements of the initial $\mathbf{W}^{2:1}$ matrix are set to 1. All elements of the initial $\mathbf{W}^{1:2}$ matrix are set to $\zeta/(\zeta + S^1 - 1)$.

1) An input pattern is presented. Since Layer 2 is not active, $\mathbf{a}^1 = \mathbf{p}$.

2) The input to Layer 2, $\mathbf{W}^{1:2}\mathbf{a}^1$, is computed, and the neuron with the largest input is activated. In case of a tie, the neuron with the smallest index is the winner.

3) The L2-L1 expectation is computed: $\mathbf{W}^{2:1}\mathbf{a}^2 = \mathbf{w}^{2:1}_j$.

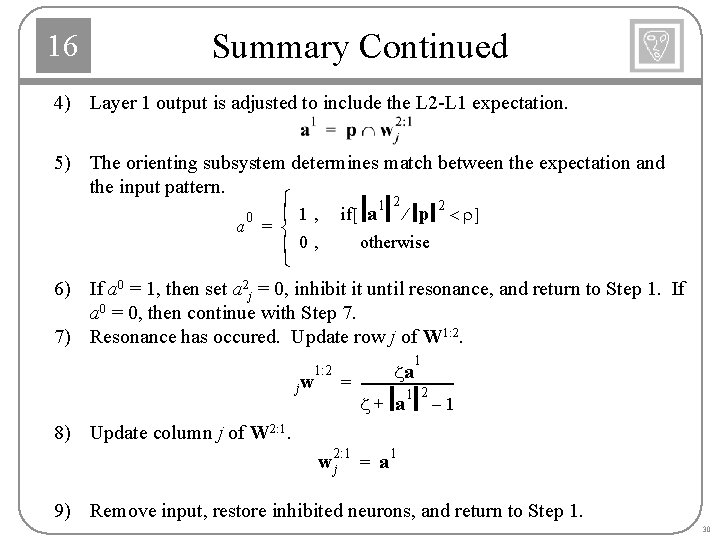

Summary Continued

4) The Layer 1 output is adjusted to include the L2-L1 expectation: $\mathbf{a}^1 = \mathbf{p} \cap \mathbf{w}^{2:1}_j$.

5) The orienting subsystem determines the match between the expectation and the input pattern:

$$a^0 = \begin{cases} 1, & \text{if } \left[\|\mathbf{a}^1\|^2 / \|\mathbf{p}\|^2 < \rho\right] \\ 0, & \text{otherwise} \end{cases}$$

6) If $a^0 = 1$, then set $a^2_j = 0$, inhibit neuron $j$ until resonance occurs, and return to Step 1. If $a^0 = 0$, then continue with Step 7.

7) Resonance has occurred. Update row $j$ of $\mathbf{W}^{1:2}$:

$${}_j\mathbf{w}^{1:2} = \frac{\zeta\,\mathbf{a}^1}{\zeta + \|\mathbf{a}^1\|^2 - 1}$$

8) Update column $j$ of $\mathbf{W}^{2:1}$: $\mathbf{w}^{2:1}_j = \mathbf{a}^1$.

9) Remove the input, restore the inhibited neurons, and return to Step 1.
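The steps above can be sketched as a compact fast-learning loop. This is a minimal illustration, not a reference implementation: it makes a single pass over a list of nonzero binary patterns, assumes $\zeta = 2$ by default, and uses hypothetical names (`art1`, `n_categories`). Each numbered comment points to the corresponding step of the summary.

```python
def art1(patterns, n_categories, rho, zeta=2.0):
    """Fast-learning ART1 following Steps 0-9 of the algorithm summary.

    patterns: list of nonzero binary lists (length S1).
    n_categories: number of Layer 2 neurons (S2).
    rho: vigilance; zeta: instar parameter (> 1). One pass over the patterns.
    Returns the winning Layer 2 index for each pattern and the final weights.
    """
    S1 = len(patterns[0])
    # Step 0: W21 (S1 x S2) all ones; W12 (S2 x S1) all zeta / (zeta + S1 - 1).
    W21 = [[1] * n_categories for _ in range(S1)]
    W12 = [[zeta / (zeta + S1 - 1)] * S1 for _ in range(n_categories)]
    labels = []
    for p in patterns:
        if sum(p) == 0:
            raise ValueError("ART1 needs a nonzero input pattern")
        inhibited = set()
        while True:
            # Step 1: Layer 2 inactive, so a1 = p.
            a1 = list(p)
            candidates = [i for i in range(n_categories) if i not in inhibited]
            if not candidates:
                raise RuntimeError("every Layer 2 neuron was reset")
            # Step 2: largest instar input wins; ties go to the smallest index.
            net = [sum(w * x for w, x in zip(W12[i], a1)) for i in range(n_categories)]
            j = min(candidates, key=lambda i: (-net[i], i))
            # Step 3: the L2-L1 expectation is column j of W21.
            expectation = [W21[r][j] for r in range(S1)]
            # Step 4: a1 = p AND expectation.
            a1 = [p_r & e_r for p_r, e_r in zip(p, expectation)]
            # Steps 5-6: vigilance test; on reset, inhibit j and try again.
            if sum(a1) / sum(p) < rho:
                inhibited.add(j)
                continue
            # Step 7: resonance; update row j of W12.
            scale = zeta / (zeta + sum(a1) - 1)
            W12[j] = [scale * x for x in a1]
            # Step 8: update column j of W21.
            for r in range(S1):
                W21[r][j] = a1[r]
            labels.append(j)
            break  # Step 9: remove input; inhibited set is cleared for the next pattern.
    return labels, W12, W21


patterns = [[1, 1, 0, 0], [0, 0, 1, 1], [1, 1, 1, 0]]  # hypothetical inputs
print(art1(patterns, n_categories=3, rho=0.6)[0])  # [0, 1, 0]
print(art1(patterns, n_categories=3, rho=0.8)[0])  # [0, 1, 2]
```

Raising the vigilance from 0.6 to 0.8 changes the outcome for the third pattern: its match with the first prototype ($\|\mathbf{a}^1\|^2/\|\mathbf{p}\|^2 = 2/3$) passes at $\rho = 0.6$ but triggers a reset at $\rho = 0.8$, so it is assigned to an uncommitted neuron instead.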
