15 446 Networked Systems Practicum Lecture 14 WormsVirusesBotnets

15 -446 Networked Systems Practicum Lecture 14 – Worms/Viruses/Botnets 1

Outline • Worms • Worm Defense • Botnet/Viruses 2

What is a Computer Worm? • Self replicating network program • Exploit vulnerabilities to infect remote machines • Victim machines continue to propagate infection • Three main stages • Detect new targets • Attempt to infect new targets • Activate code on victim machine Network • Difference w/ computer virus? • No human intervention required 3

Why Worry About Worms? • Speed • Much faster than viruses • CRv 2: 14 hours for 359. 000 victims • Slammer: 10 minutes for 75. 000 victims • Faster than human reaction • Highly malicious payloads • DDo. S or data corruption 4

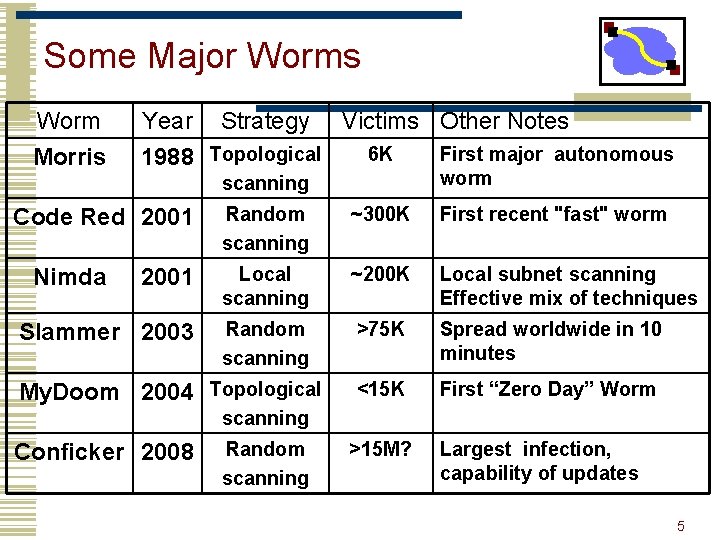

Some Major Worms Worm Year Strategy Morris 1988 Topological Victims Other Notes 6 K First major autonomous worm Random scanning ~300 K First recent "fast" worm 2001 Local scanning ~200 K Local subnet scanning Effective mix of techniques Slammer 2003 Random scanning >75 K Spread worldwide in 10 minutes <15 K First “Zero Day” Worm scanning Code Red 2001 Nimda My. Doom 2004 Topological scanning Conficker 2008 Random scanning >15 M? Largest infection, capability of updates 5

Threat Model Traditional • High-value targets • Insider threats Worms & Botnets • Automated attack of millions of targets • Value in aggregate, not individual systems • Threats: Software vulnerabilities; naïve users 6

. . . and it's profitable • Botnets used for • Spam (and more spam)? • Credit card theft • DDo. S extortion • Flourishing Exchange market • Spam proxying: 3 -10 cents/host/week • 25 k botnets: $40 k - $130 k/year • Also for stolen account compromised machines, credit cards, identities, etc. (be worried)? 7

Why is this problem hard? • Monoculture: little “genetic diversity” in hosts • Instantaneous transmission: Almost entire network within 500 ms • Slow immune response: human scales (10 x 1 Mx slower!)? • Poor hygiene: Out of date / misconfigured systems; naïve users • Intelligent designer. . . of pathogens • Near-Anonymitity 8

Code Red I v 1 • July 12 th, 2001 • Exploited a known vulnerability in Microsoft’s Internet Information Server (IIS) • Buffer overflow in a rarely used URL decoding routine – published June 18 th • 1 st – 19 th of each month: attempts to spread • Random scanning of IP address space • 99 propagation threads, 100 th defaced pages on server • Static random number generator seed • Every worm copy scans the same set of addresses Linear growth 9

Code Red I v 1 • 20 th – 28 th of each month: attacks • DDOS attack against 198. 137. 240. 91 (www. whitehouse. gov) • Memory resident – rebooting the system removes the worm • However, could quickly be reinfected 10

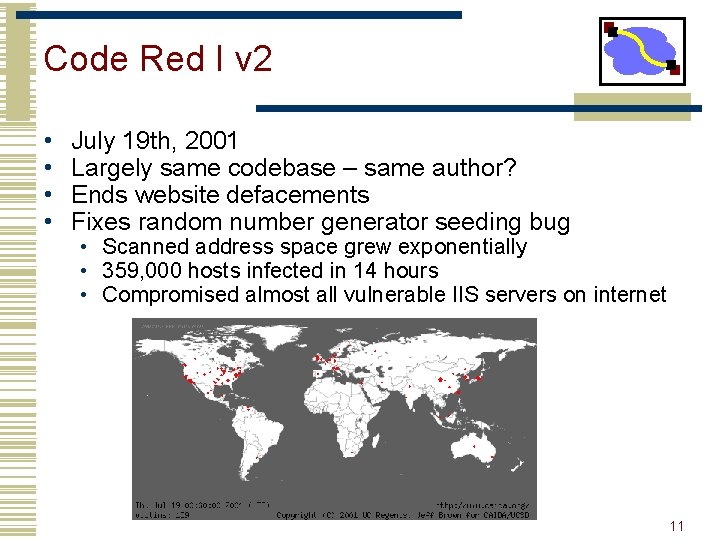

Code Red I v 2 • • July 19 th, 2001 Largely same codebase – same author? Ends website defacements Fixes random number generator seeding bug • Scanned address space grew exponentially • 359, 000 hosts infected in 14 hours • Compromised almost all vulnerable IIS servers on internet 11

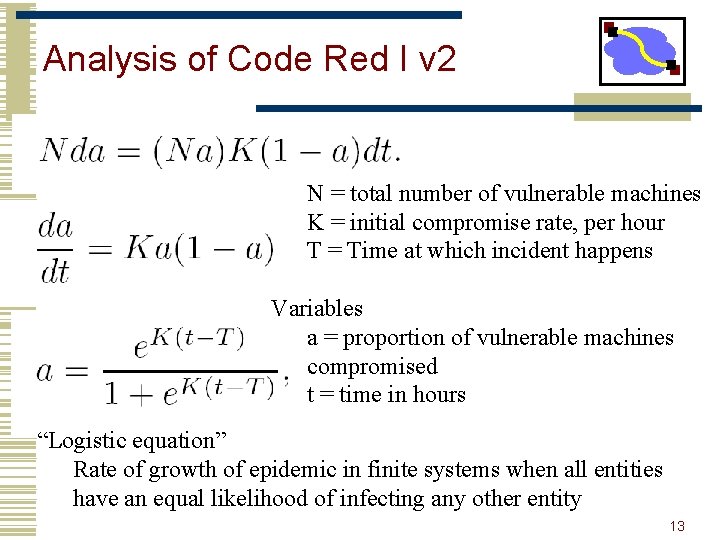

Analysis of Code Red I v 2 • Random Constant Spread model • Constants • N = total number of vulnerable machines • K = initial compromise rate, per hour • T = Time at which incident happens • Variables • a = proportion of vulnerable machines compromised • t = time in hours 12

Analysis of Code Red I v 2 N = total number of vulnerable machines K = initial compromise rate, per hour T = Time at which incident happens Variables a = proportion of vulnerable machines compromised t = time in hours “Logistic equation” Rate of growth of epidemic in finite systems when all entities have an equal likelihood of infecting any other entity 13

Code Red I v 2 – Plot • K = 1. 8 • T = 11. 9 Hourly probe rate data for inbound port 80 at the Chemical Abstracts Service during the initial outbreak of Code Red I on July 19 th, 2001. 14

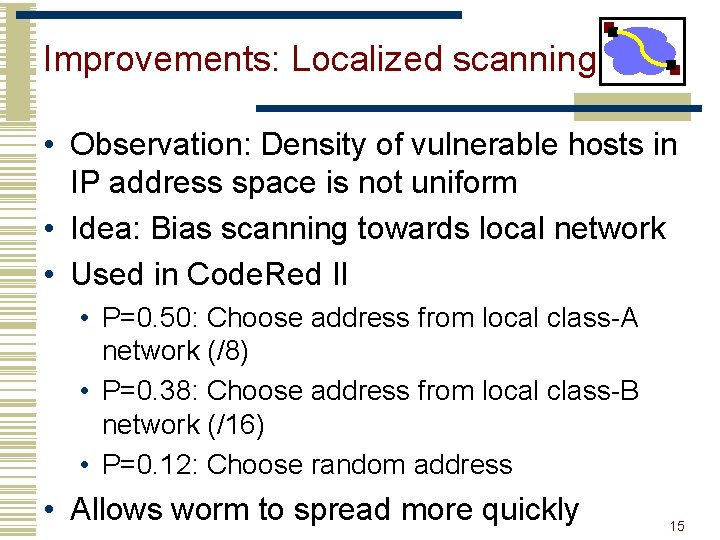

Improvements: Localized scanning • Observation: Density of vulnerable hosts in IP address space is not uniform • Idea: Bias scanning towards local network • Used in Code. Red II • P=0. 50: Choose address from local class-A network (/8) • P=0. 38: Choose address from local class-B network (/16) • P=0. 12: Choose random address • Allows worm to spread more quickly 15

Code Red II (August 2001) • Began : August 4 th, 2001 • Exploit : Microsoft IIS webservers (buffer overflow) • Named “Code Red II” because : • It contained a comment stating so. However the codebase was new. • Infected IIS on windows 2000 successfully but caused system crash on windows NT. • Installed a root backdoor on the infected machine. 16

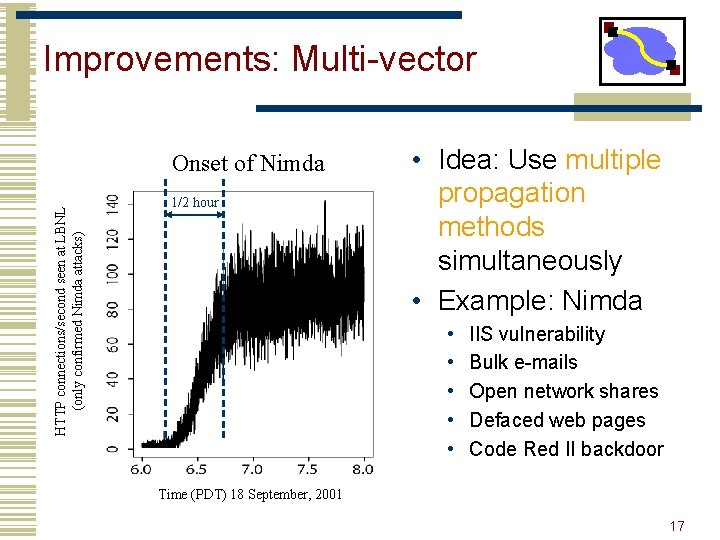

Improvements: Multi-vector HTTP connections/second seen at LBNL (only confirmed Nimda attacks) Onset of Nimda 1/2 hour • Idea: Use multiple propagation methods simultaneously • Example: Nimda • • • IIS vulnerability Bulk e-mails Open network shares Defaced web pages Code Red II backdoor Time (PDT) 18 September, 2001 17

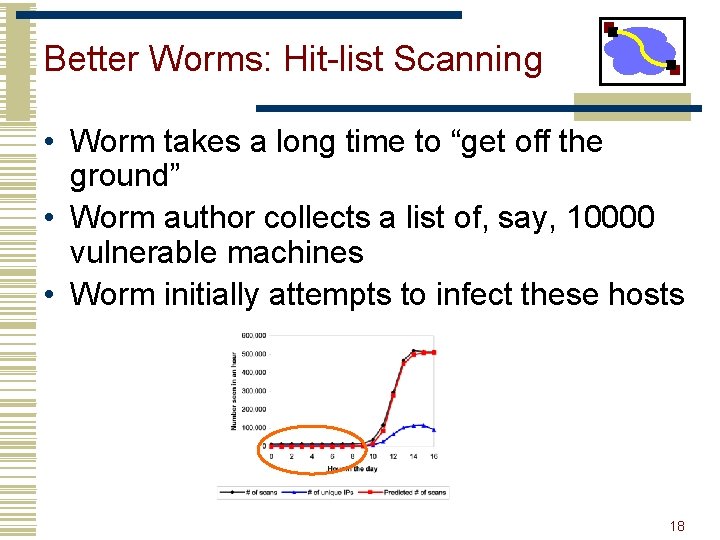

Better Worms: Hit-list Scanning • Worm takes a long time to “get off the ground” • Worm author collects a list of, say, 10000 vulnerable machines • Worm initially attempts to infect these hosts 18

How to build Hit-List • Stealthy randomized scan over number of months • Distributed scanning via botnet • DNS searches – e. g. assemble domain list, search for IP address of mail server in MX records • Web crawling spider similar to search engines • Public surveys – e. g. Netcraft • Listening for announcements – e. g. vulnerable IIS servers during Code Red I 19

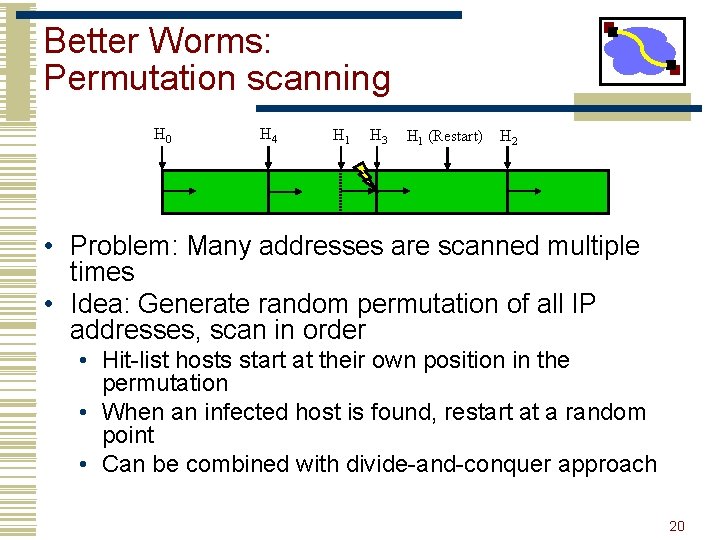

Better Worms: Permutation scanning H 0 H 4 H 1 H 3 H 1 (Restart) H 2 • Problem: Many addresses are scanned multiple times • Idea: Generate random permutation of all IP addresses, scan in order • Hit-list hosts start at their own position in the permutation • When an infected host is found, restart at a random point • Can be combined with divide-and-conquer approach 20

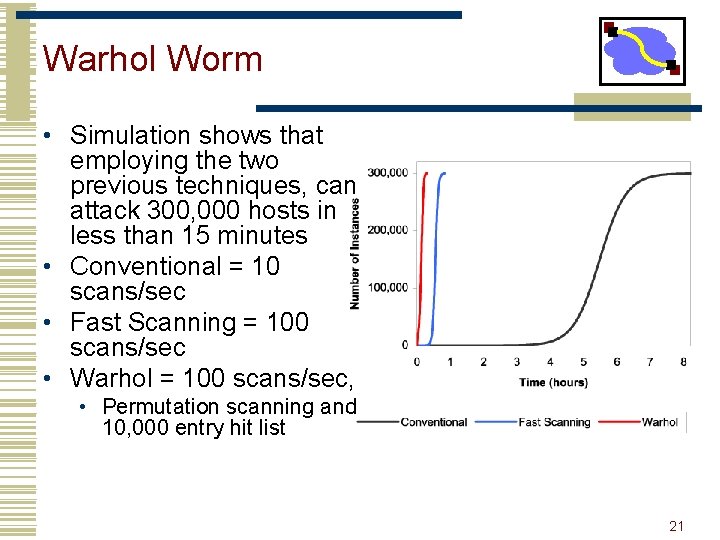

Warhol Worm • Simulation shows that employing the two previous techniques, can attack 300, 000 hosts in less than 15 minutes • Conventional = 10 scans/sec • Fast Scanning = 100 scans/sec • Warhol = 100 scans/sec, • Permutation scanning and 10, 000 entry hit list 21

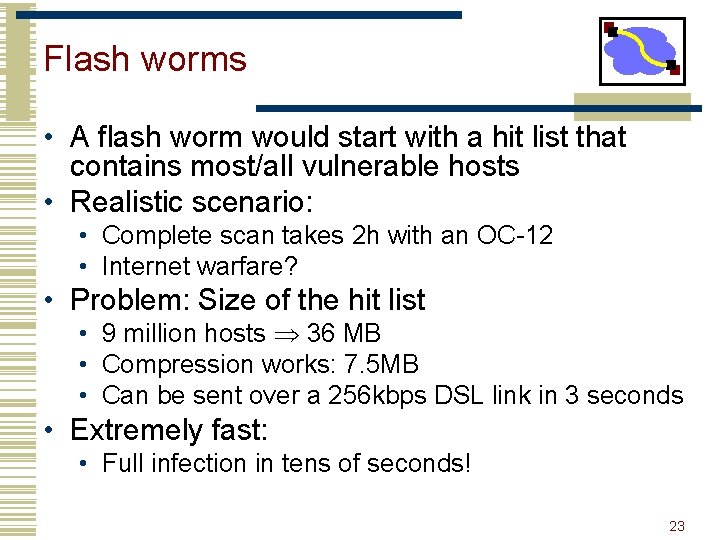

Flash worms • A flash worm would start with a hit list that contains most/all vulnerable hosts • Realistic scenario: • Complete scan takes 2 h with an OC-12 • Internet warfare? • Problem: Size of the hit list • 9 million hosts 36 MB • Compression works: 7. 5 MB • Can be sent over a 256 kbps DSL link in 3 seconds • Extremely fast: • Full infection in tens of seconds! 23

Surreptitious worms • Idea: Hide worms in inconspicuous traffic to avoid detection • Leverage P 2 P systems? • • • High node degree Lots of traffic to hide in Proprietary protocols Homogeneous software Immense size (30, 000 Kazaa downloads!) 24

Example Outbreak: SQL Slammer (2003) • Single, small UDP packet exploit (376 b) • First ~1 min: classic random scanning • Doubles # of infected hosts every ~8. 5 sec • (In comparison: Code Red doubled in 40 min) • After 1 min, starts to saturate access b/w • Interferes with itself, so it slows down • By this point, was sending 20 M pps • Peak of 55 million IP scans/sec @ 3 min • 90% of Internet scanned in < 10 mins • Infected ~100 k or more hosts 25

Stuxnet Worm • The first worm for control systems • Discovered in June 2010 • Attack SCADA systems using Siemens Win. CC/PCS 7 software • Not only spying but also reprogrammable logic controllers (PLCs) • Four zero-day attacks used • Infection includes Iran (62 K) and China (6 M? ) • Nation-wide support cyberwarefare? 26

Prevention • Get rid of the or permute vulnerabilities • (e. g. , address space randomization) • makes it harder to compromise • Block traffic (firewalls) • only takes one vulnerable computer wandering between in & out or multi-homed, etc. • Keep vulnerable hosts off network • incomplete vuln. databases & 0 -day worms • Slow down scan rate • Allow hosts limited # of new contacts/sec. • Can slow worms down, but they do still spread • Quarantine • Detect worm, block it 27

Outline • Worms • Worm Defense • Botnet/Viruses 28

Context • Worm Detection • Scan detection • Honeypots • Host based behavioral detection • Payload-based 29

Worm: Countermeasures • Signature-based worm scan filtering • Vulnerable to polymorphic worms • Scan detection • High scanning activity to identify victims • Scanning with high failure rate compared to legitimate users (DNS) • TCP RST, ICMP Unreachable • • • Two dimensions: time, space Rate limiting, rate halting False positive (Index crawler, NAT, etc. ) Disruption to legitimate services Not applicable to UDP based propagation 30

Worm behavior • Content Invariance • Limited polymorphism e. g. encryption • key portions are invariant e. g. decryption routine • Content Prevalence • invariant portion appear frequently • Address Dispersion • # of infected distinct hosts grow overtime • reflecting different source and dest. addresses 31

Signature Inference • Content prevalence: Autograph, Early. Bird, etc. • Assumes some content invariance • Pretty reasonable for starters. • Goal: Identify “attack” substrings • Maximize detection rate • Minimize false positive rate 32

Content Sifting • For each string w, maintain • prevalence(w): Number of times it is found in the network traffic • sources(w): Number of unique sources corresponding to it • destinations(w): Number of unique destinations corresponding to it • If thresholds exceeded, then block(w) 33

Issues • How to compute prevalence(w), sources(w) and destinations(w) efficiently? • Scalable • Low memory and CPU requirements • Real time deployment over a Gigabit link 34

![Estimating Content Prevalence • Table[payload] • 1 GB table filled in 10 seconds • Estimating Content Prevalence • Table[payload] • 1 GB table filled in 10 seconds •](http://slidetodoc.com/presentation_image/4aac68d41aad914096910a30985828e6/image-34.jpg)

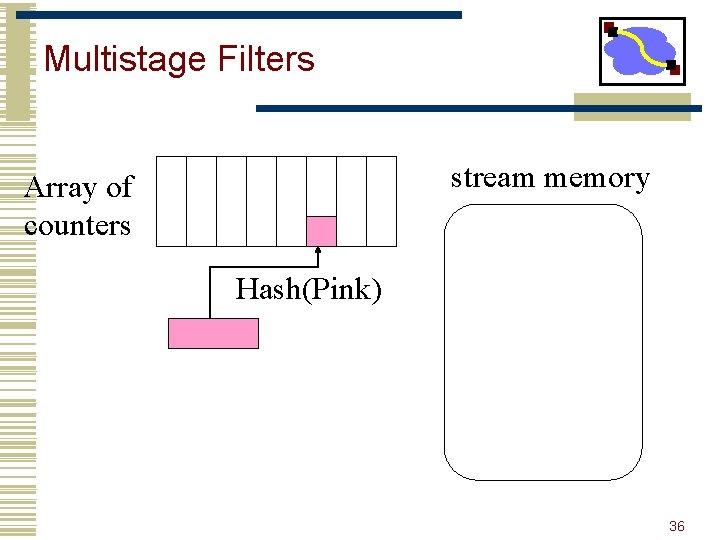

Estimating Content Prevalence • Table[payload] • 1 GB table filled in 10 seconds • Table[hash[payload]] • 1 GB table filled in 4 minutes • Tracking millions of ants to track a few elephants • Collisions. . . false positives 35

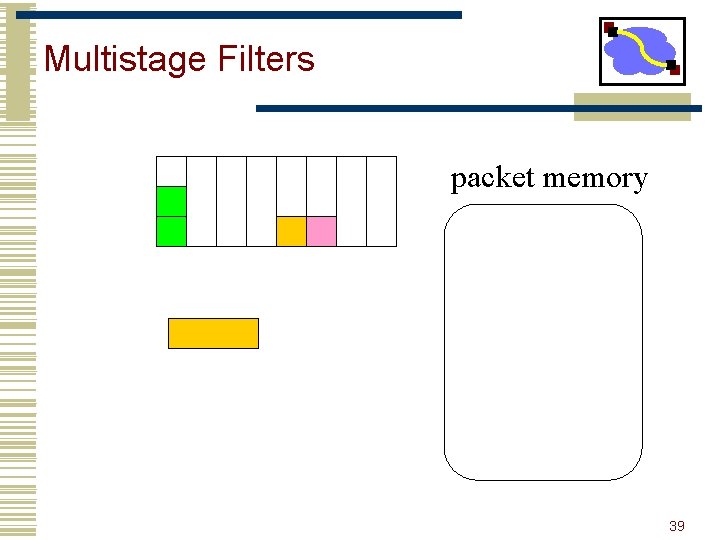

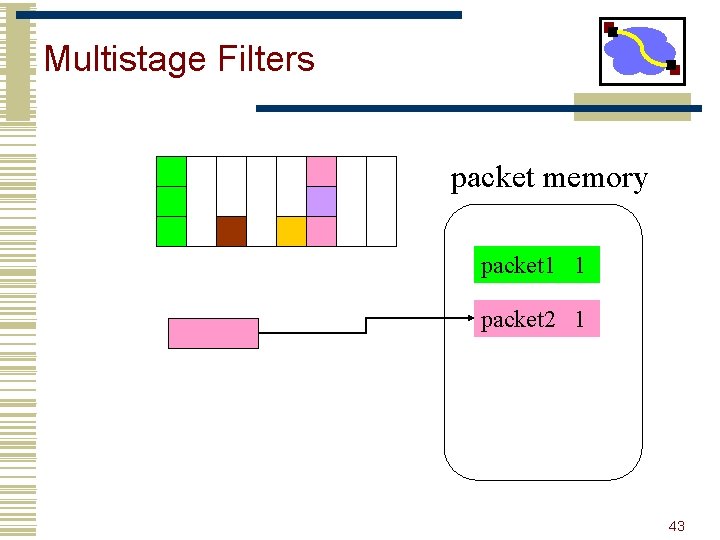

Multistage Filters stream memory Array of counters Hash(Pink) 36

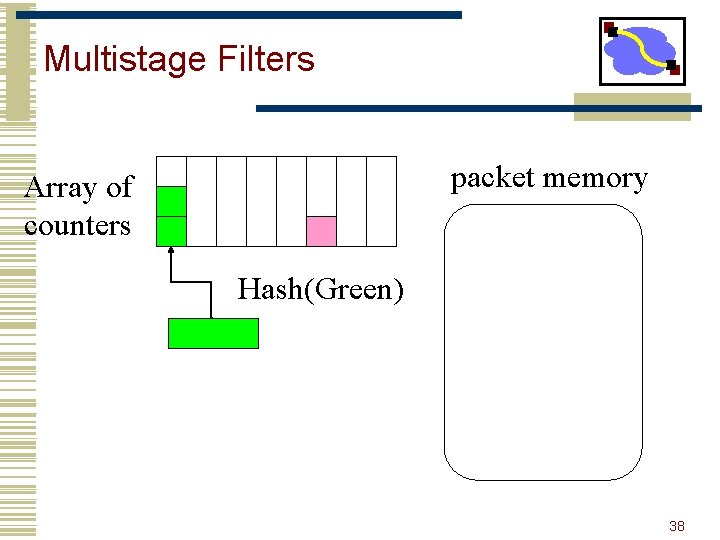

Multistage Filters packet memory Array of counters Hash(Green) 37

Multistage Filters packet memory Array of counters Hash(Green) 38

Multistage Filters packet memory 39

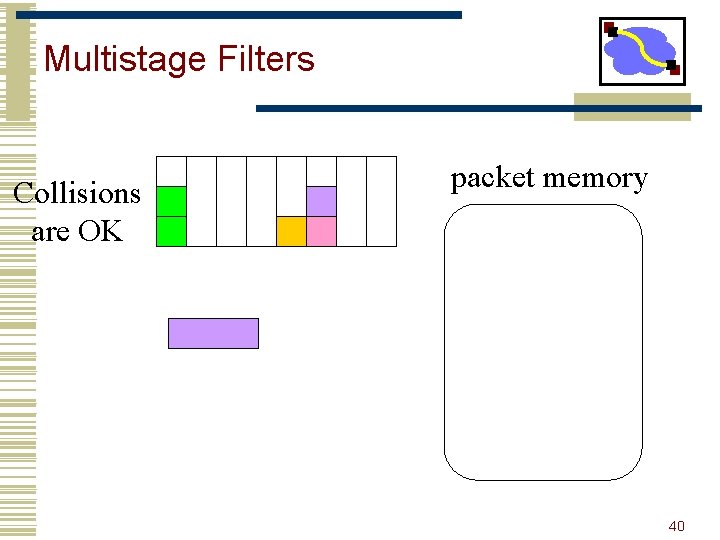

Multistage Filters Collisions are OK packet memory 40

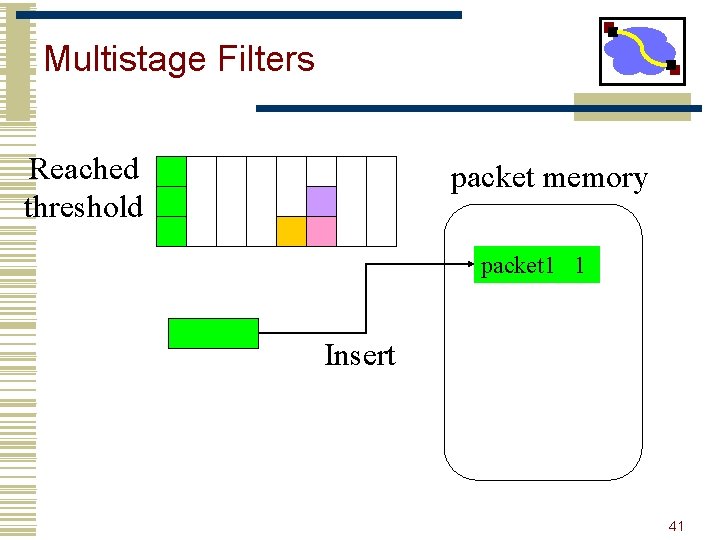

Multistage Filters Reached threshold packet memory packet 1 1 Insert 41

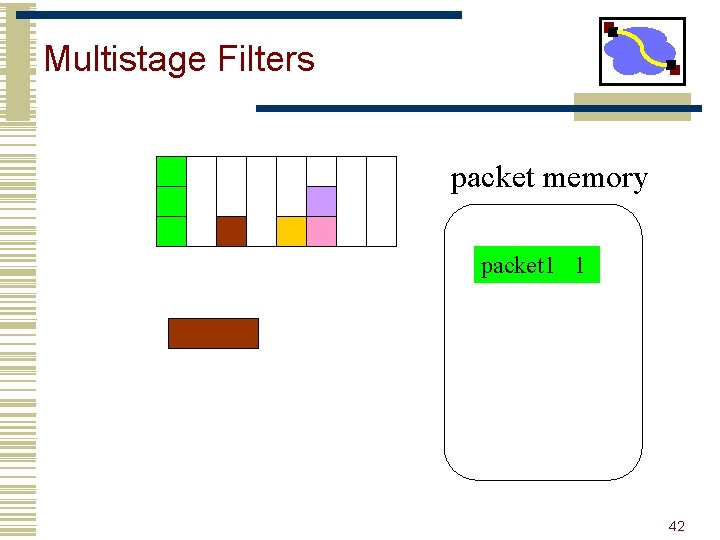

Multistage Filters packet memory packet 1 1 42

Multistage Filters packet memory packet 1 1 packet 2 1 43

Multistage Filters Stage 1 packet memory packet 1 1 Stage 2 No false negatives! (guaranteed detection) 44

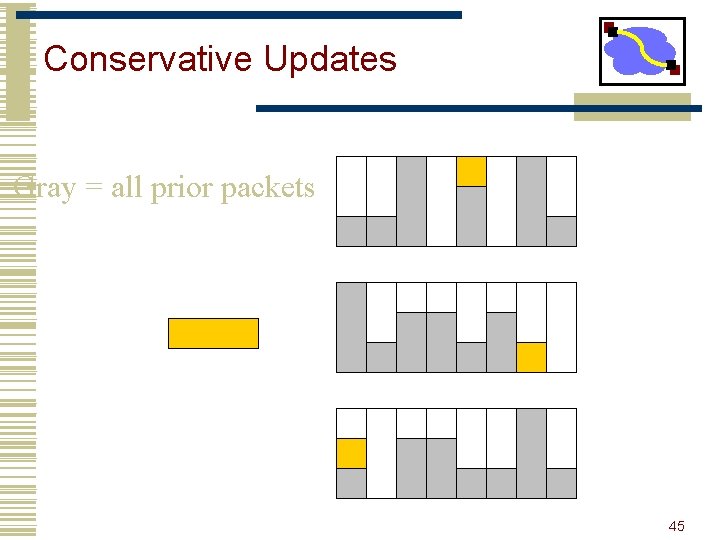

Conservative Updates Gray = all prior packets 45

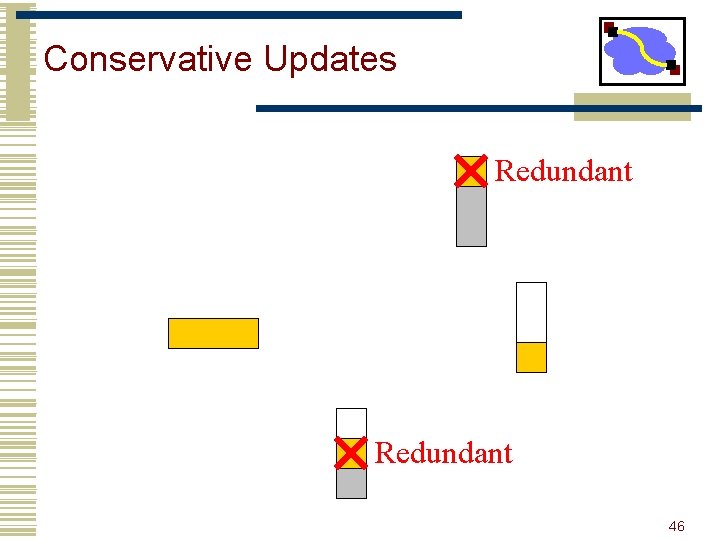

Conservative Updates Redundant 46

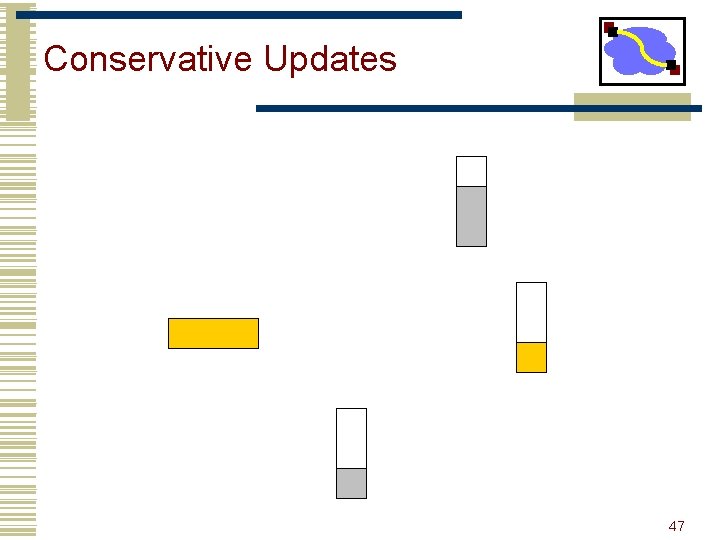

Conservative Updates 47

Value Sampling • • The problem: s-b+1 substrings Solution: Sample But: Random sampling is not good enough Trick: Sample only those substrings for which the fingerprint matches a certain pattern 48

sources(w) & destinations(w) • Address Dispersion • Counting distinct elements vs. repeating elements • Simple list or hash table is too expensive • Key Idea: Bitmaps • Trick : Scaled Bitmaps 49

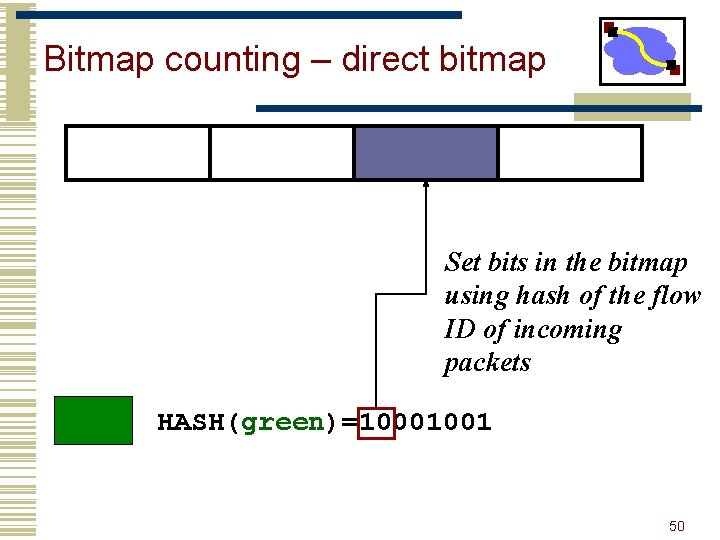

Bitmap counting – direct bitmap Set bits in the bitmap using hash of the flow ID of incoming packets HASH(green)=10001001 50

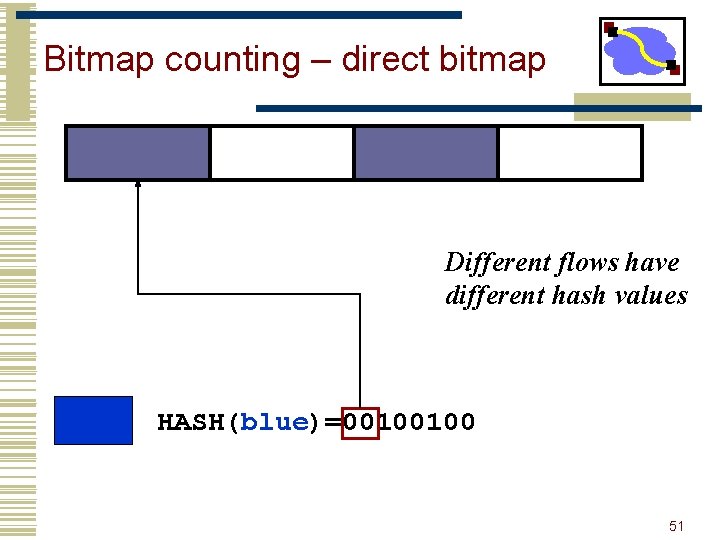

Bitmap counting – direct bitmap Different flows have different hash values HASH(blue)=00100100 51

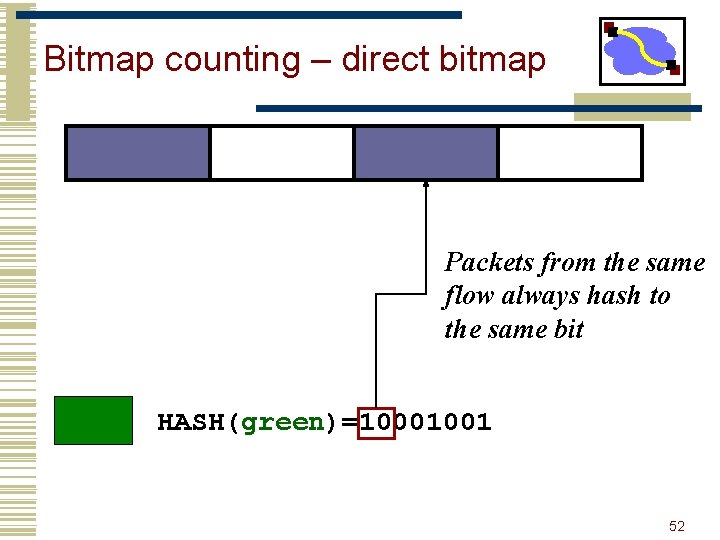

Bitmap counting – direct bitmap Packets from the same flow always hash to the same bit HASH(green)=10001001 52

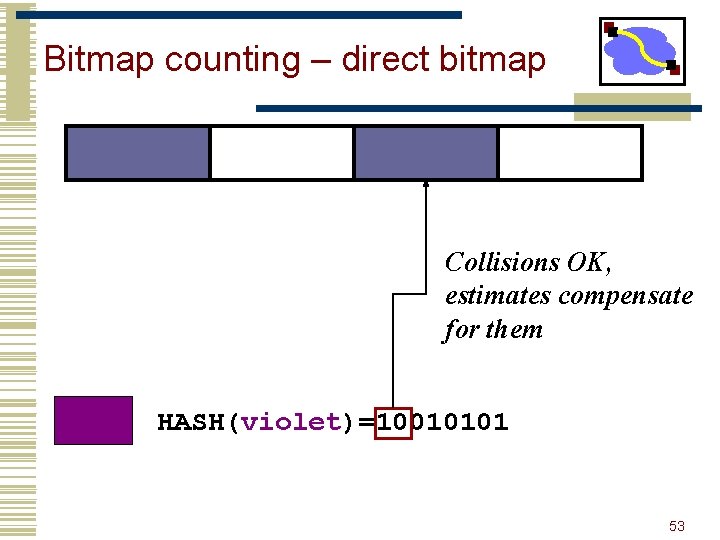

Bitmap counting – direct bitmap Collisions OK, estimates compensate for them HASH(violet)=10010101 53

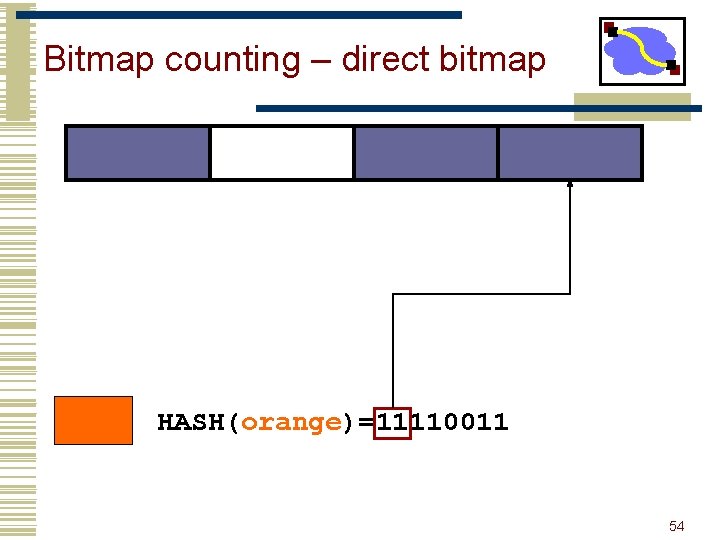

Bitmap counting – direct bitmap HASH(orange)=11110011 54

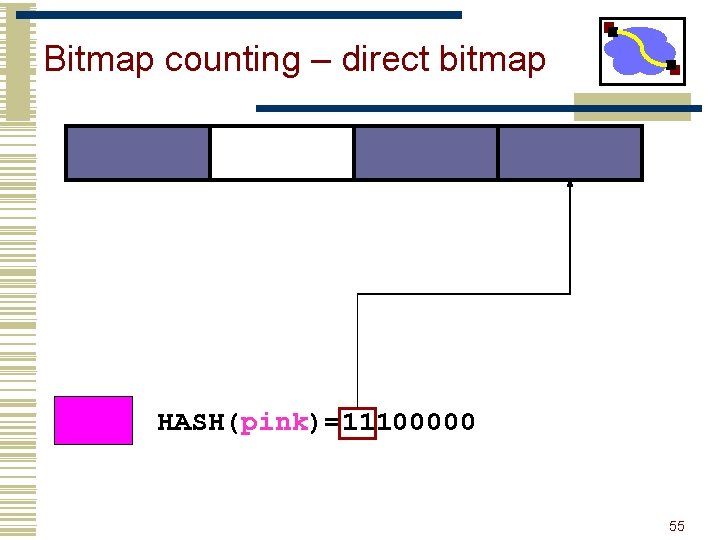

Bitmap counting – direct bitmap HASH(pink)=11100000 55

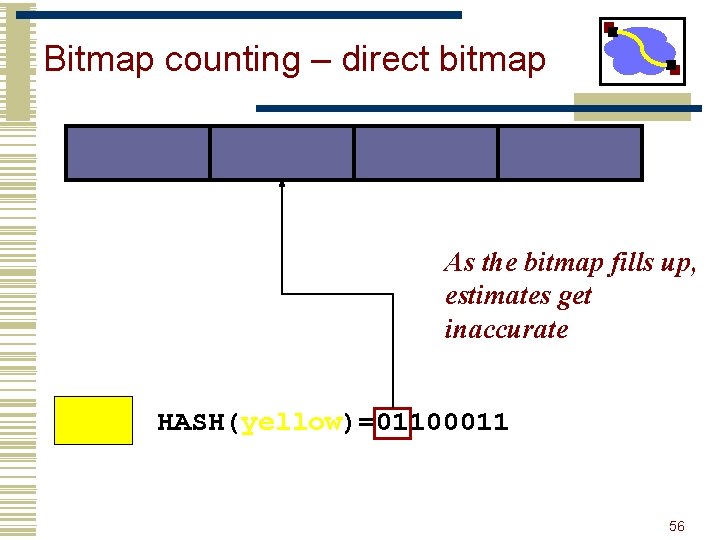

Bitmap counting – direct bitmap As the bitmap fills up, estimates get inaccurate HASH(yellow)=01100011 56

Bitmap counting – direct bitmap Solution: use more bits HASH(green)=10001001 57

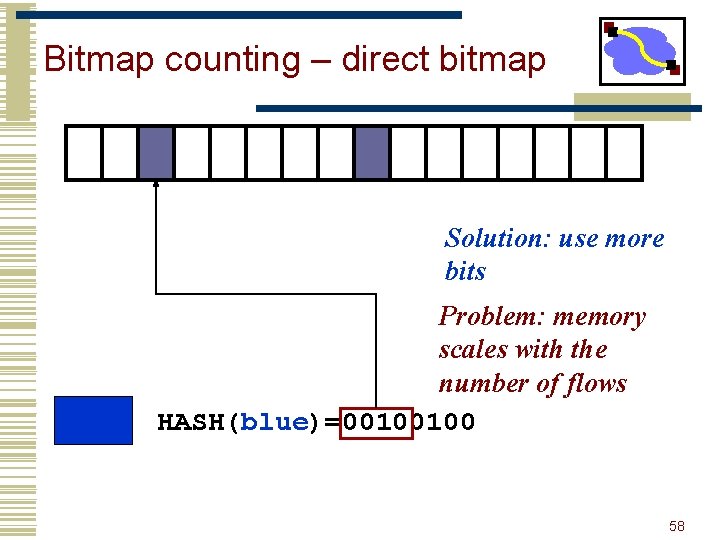

Bitmap counting – direct bitmap Solution: use more bits Problem: memory scales with the number of flows HASH(blue)=00100100 58

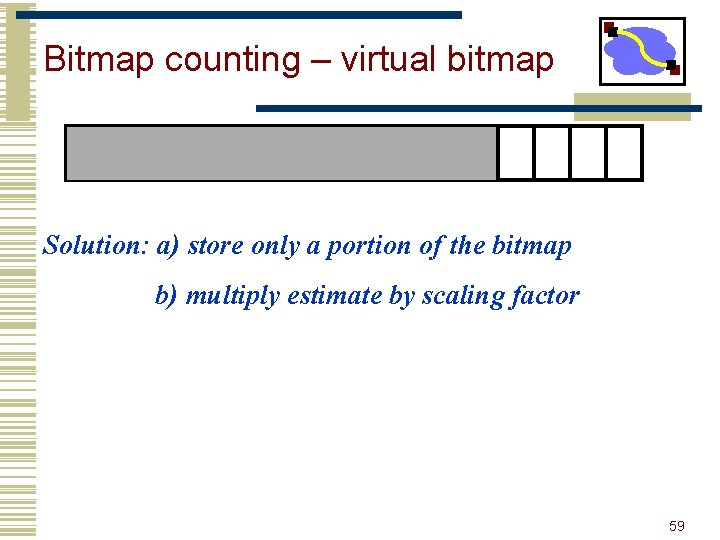

Bitmap counting – virtual bitmap Solution: a) store only a portion of the bitmap b) multiply estimate by scaling factor 59

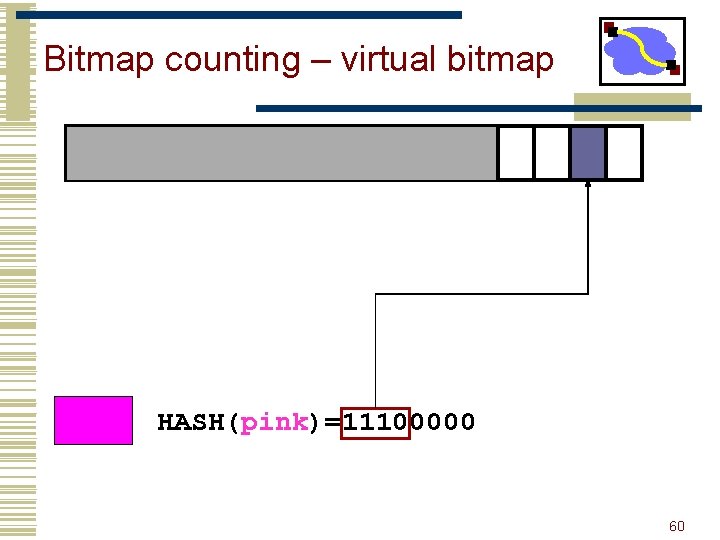

Bitmap counting – virtual bitmap HASH(pink)=11100000 60

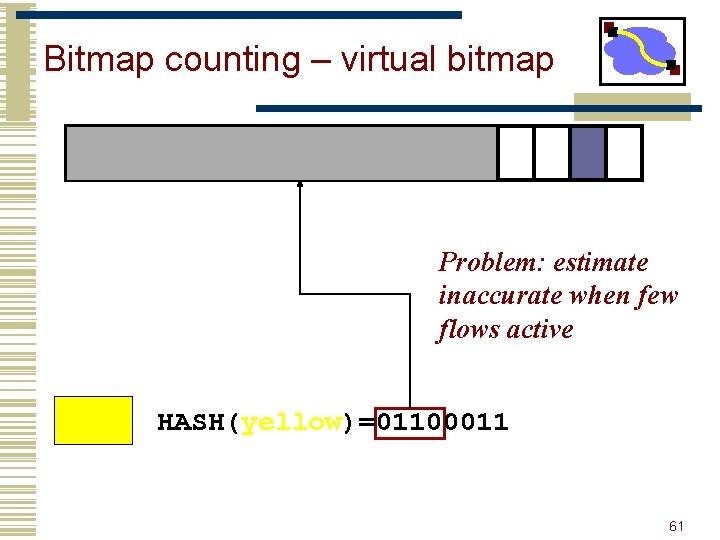

Bitmap counting – virtual bitmap Problem: estimate inaccurate when few flows active HASH(yellow)=01100011 61

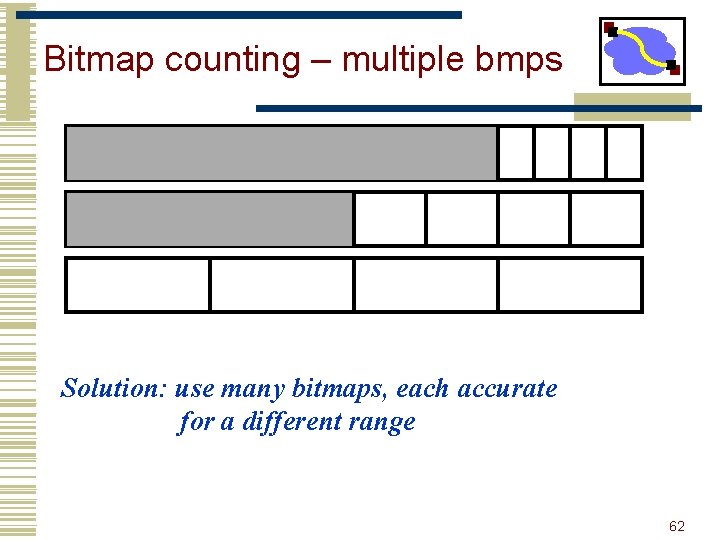

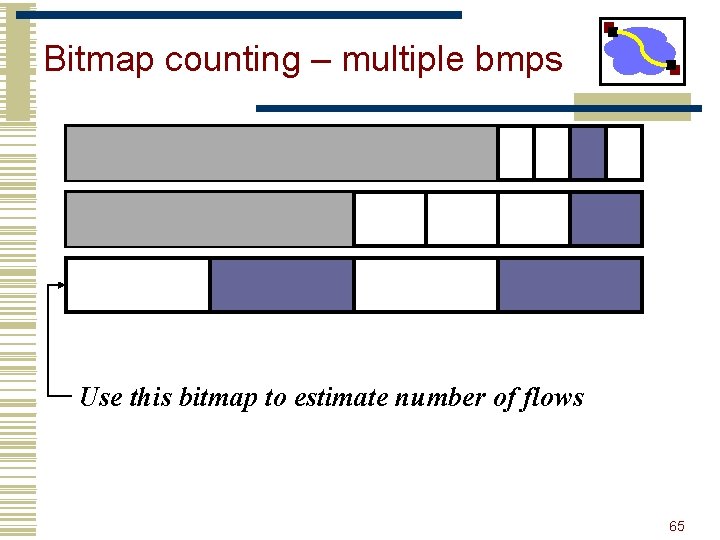

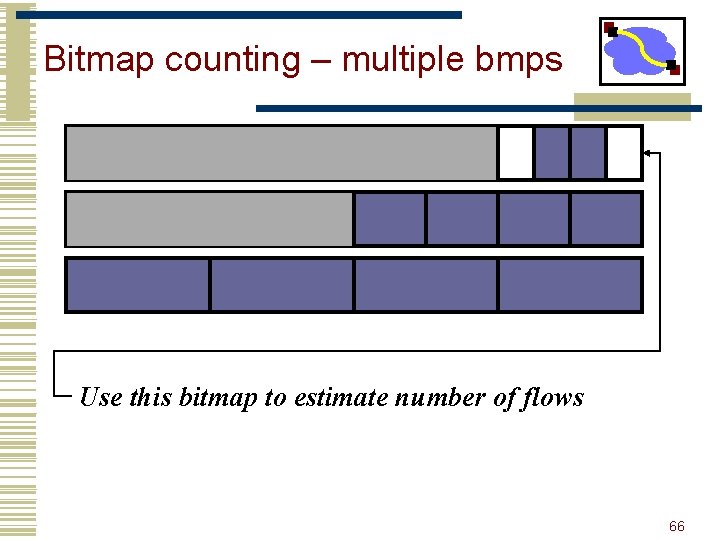

Bitmap counting – multiple bmps Solution: use many bitmaps, each accurate for a different range 62

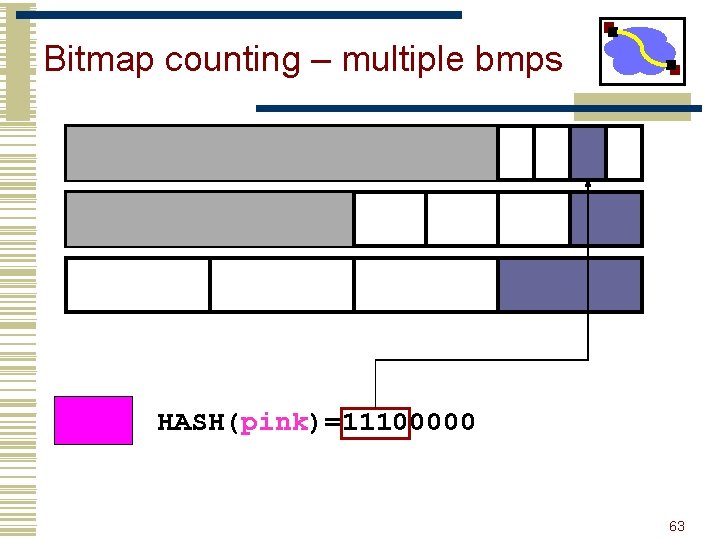

Bitmap counting – multiple bmps HASH(pink)=11100000 63

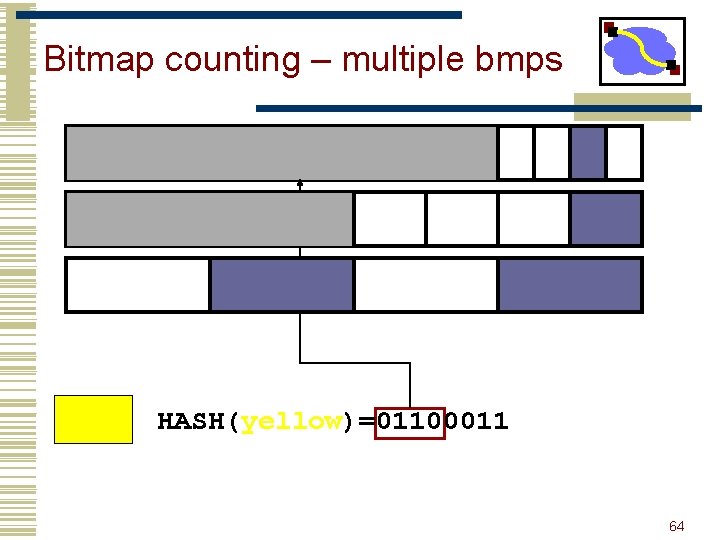

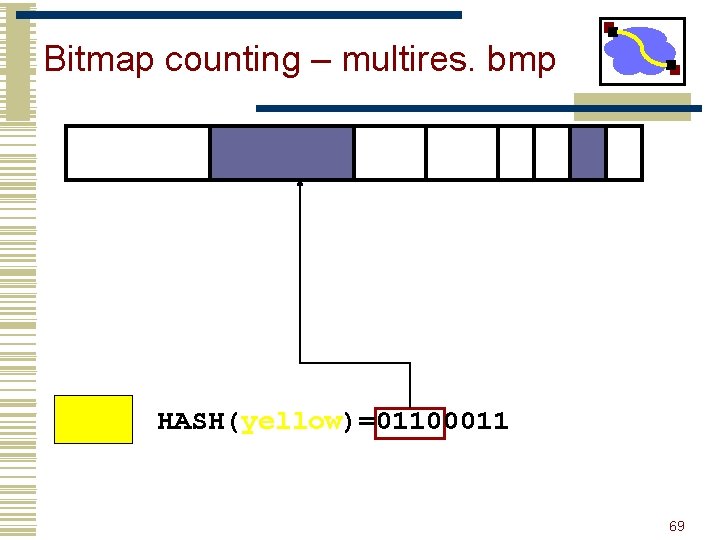

Bitmap counting – multiple bmps HASH(yellow)=01100011 64

Bitmap counting – multiple bmps Use this bitmap to estimate number of flows 65

Bitmap counting – multiple bmps Use this bitmap to estimate number of flows 66

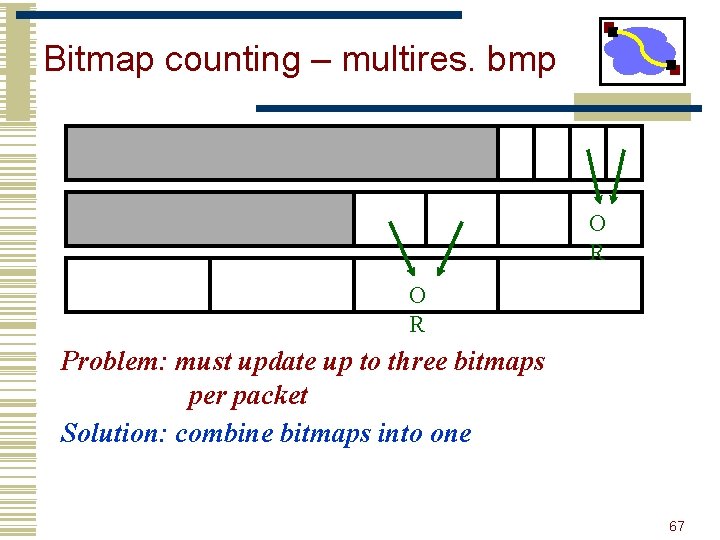

Bitmap counting – multires. bmp O R Problem: must update up to three bitmaps per packet Solution: combine bitmaps into one 67

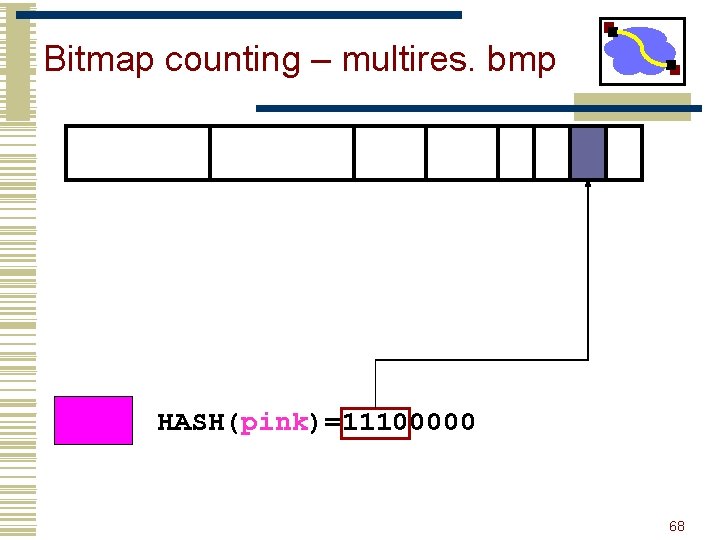

Bitmap counting – multires. bmp HASH(pink)=11100000 68

Bitmap counting – multires. bmp HASH(yellow)=01100011 69

Multiresolution Bitmaps • Still too expensive to scale • Scaled bitmap • Recycles the hash space with too many bits set • Adjusts the scaling factor according 70

Scaled Bitmap • Idea: Subsample the range of hash space • How it works? • multiple bitmaps each mapped to progressively smaller and smaller portions of the hash space. • bitmap recycled if necessary. Result Roughly 5 time less memory + actual estimation of address dispersion 71

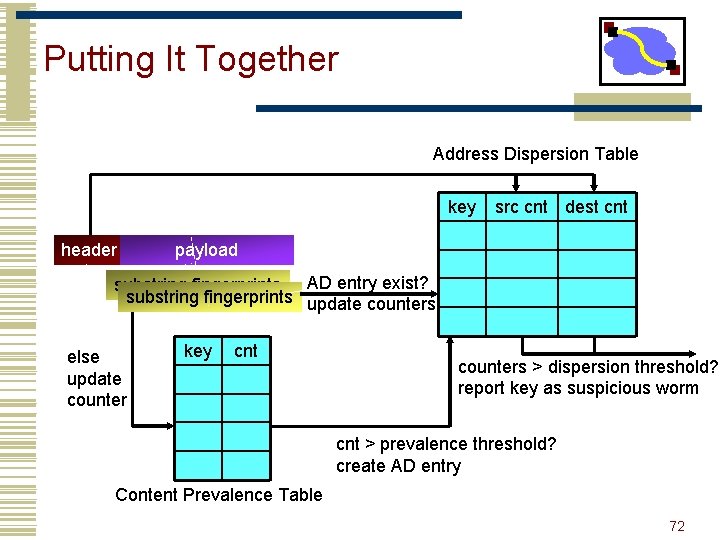

Putting It Together Address Dispersion Table key header src cnt dest cnt payload substring fingerprints AD entry exist? substring fingerprints update counters else update counter key cnt counters > dispersion threshold? report key as suspicious worm cnt > prevalence threshold? create AD entry Content Prevalence Table 72

Putting It Together • Sample frequency: 1/64 • String length: 40 • Use 4 hash functions to update prevalence table • Multistage filter reset every 60 seconds 73

Parameter Tuning • Prevalence threshold: 3 • Very few signatures repeat • Address dispersion threshold • 30 sources and 30 destinations • Reset every few hours • Reduces the number of reported signatures down to ~25, 000 74

Parameter Tuning • Tradeoff between and speed and accuracy • Can detect Slammer in 1 second as opposed to 5 seconds • With 100 x more reported signatures 75

False Negatives in EB • False Negatives • Very hard to prove. . . • Earlybird detected all worm outbreaks reported on security lists over 8 months • EB detected all worms detected by Snort (signature-based IDS)? • And some that weren't 76

False Positives in EB • Common protocol headers • HTTP, SMTP headers • p 2 p protocol headers • Non-worm epidemic activity • Spam • Bit. Torrent (!) • Solution: • Small whitelist. . . 77

Outline • Worms • Worm Defense • Botnet/Viruses 78

. . . and it's profitable • Botnets used for • Spam (and more spam)? • Credit card theft • DDo. S extortion • Flourishing Exchange market • Spam proxying: 3 -10 cents/host/week • 25 k botnets: $40 k - $130 k/year • Also for stolen account compromised machines, credit cards, identities, etc. (be worried)? 79

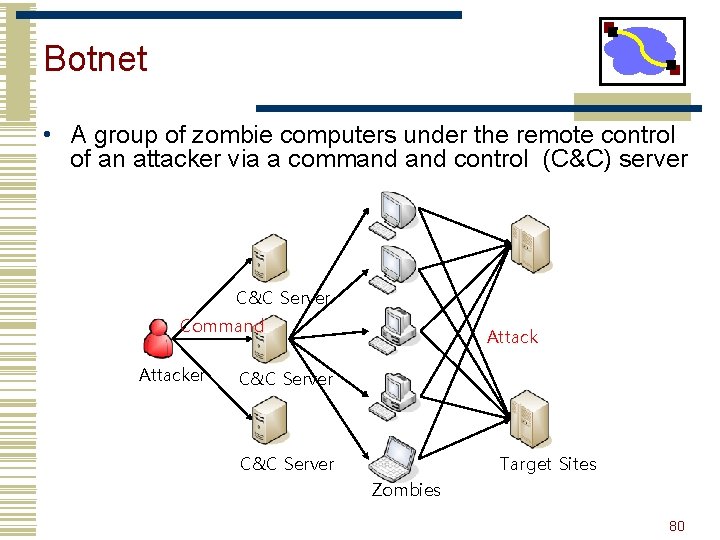

Botnet • A group of zombie computers under the remote control of an attacker via a command control (C&C) server C&C Server Command Attacker Attack C&C Server Target Sites Zombies 80

Botnet Countermeasure • Detecting new botnets by using honeypots, analyzing spam pools, capturing group activities in DNS • Sinkholing or nullrouting C&C server connections and cleaning zombies 81

Outline • Worms • Worm Defense • Botnet/Viruses 82

Malicious Code • Many types of malicious code • Virus, worm, botnet, spyware, spam, etc. • Who writes this and why? • Challenge (for fun) • Fame (for pride) • Business (for money) • Black markets for attacks (DDo. S and spams) and info(credit cards, vulnerabilities) • Ideology (for activism) • Hactivism, cyberterrorism, cyberwarefare 83

What is a Computer Virus? • Program that spreads itself by infecting (modifying) an executable file and making copies of itself 84

Components 1. Propagation mechanism • Sharing infected file with other computers • • USB drive, email attachment, and shared folders Executing infected file Infect other computers and spread infection 2. Trigger • Time/condition when payload is activated 3. Payload • • • Damage existing files Extort sensitive information Consume computer’s resources 85

Infected File Before 1 Insert document in fax machine. (Program entry-point) 2 Dial the phone number. 3 Hit the SEND button on the fax. 4 Wait for completion. If a problem occurs, go back to step 1. 5 End task. After 1 Skip to step 6. 2 Dial the phone number. 3 Hit the SEND button on the fax. 4 Wait for completion. If a problem occurs, go back to step 1. 5 End task. 6 VIRUS instructions 7 Insert document in fax machine and go to step 2. Nachenberg, Computer Virus-Antivirus Coevolution, CACM 1997 86

Propagation • Virus replicates when infected file is executed • Task is not entirely automated • User makes the first step • Virus copies malicious code to other files • Jump instruction to malicious code is added • Why are Windows-based viruses most prolific? • Largest population • Why write a virus if only a few people are infected? 87

Detection • Infected file has a larger size than initial version of file • Scanners record files lengths and searches for changes • Virus can easily bypass detection through compression P (packing) V P P 89

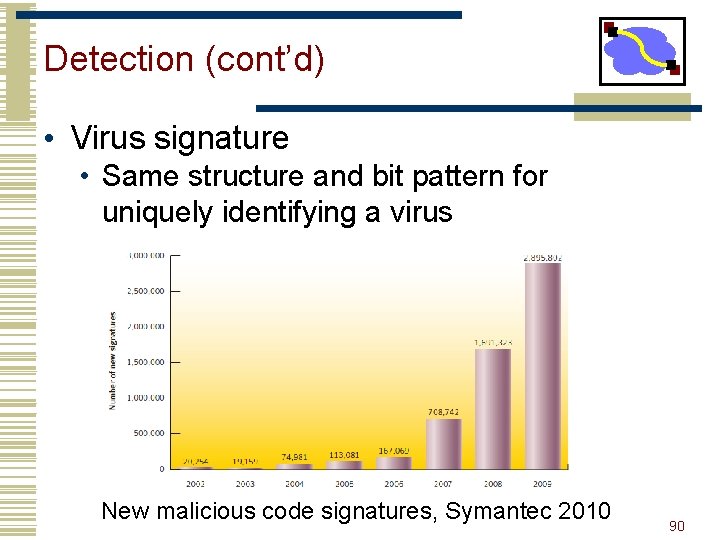

Detection (cont’d) • Virus signature • Same structure and bit pattern for uniquely identifying a virus New malicious code signatures, Symantec 2010 90

More Advanced Viruses • Encrypted viruses • Prevent “signature” to detect virus via encryption § Polymorphic viruses § Change virus code to prevent signature 91

Detection of Encrypted Virus • A different encryption key is generated for each new infection • Therefore, encrypted virus body appears different in each infected file • Antivirus can no longer parse virus body for the virus signatures • Still pattern matching possible • Still identical copy of decryption routine 92

Detection of Polymorphic Viruses • Advanced encrypted virus • Formerly, constant decryption routine • Now, mutable decryption routine • unique crypto code generated for each copy • No more signatures in code 93

- Slides: 91