
- Slides: 94

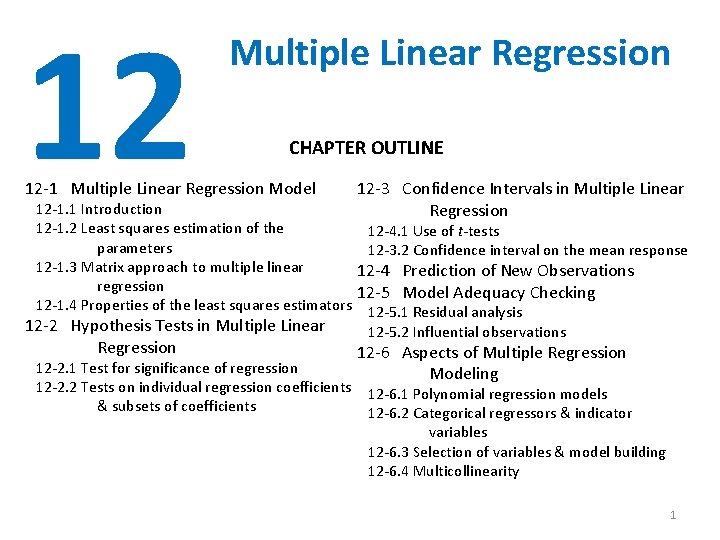

12 Multiple Linear Regression

CHAPTER OUTLINE

- 12-1 Multiple Linear Regression Model
  - 12-1.1 Introduction
  - 12-1.2 Least squares estimation of the parameters
  - 12-1.3 Matrix approach to multiple linear regression
  - 12-1.4 Properties of the least squares estimators
- 12-2 Hypothesis Tests in Multiple Linear Regression
  - 12-2.1 Test for significance of regression
  - 12-2.2 Tests on individual regression coefficients & subsets of coefficients
- 12-3 Confidence Intervals in Multiple Linear Regression
  - 12-3.1 Use of t-tests
  - 12-3.2 Confidence interval on the mean response
- 12-4 Prediction of New Observations
- 12-5 Model Adequacy Checking
  - 12-5.1 Residual analysis
  - 12-5.2 Influential observations
- 12-6 Aspects of Multiple Regression Modeling
  - 12-6.1 Polynomial regression models
  - 12-6.2 Categorical regressors & indicator variables
  - 12-6.3 Selection of variables & model building
  - 12-6.4 Multicollinearity

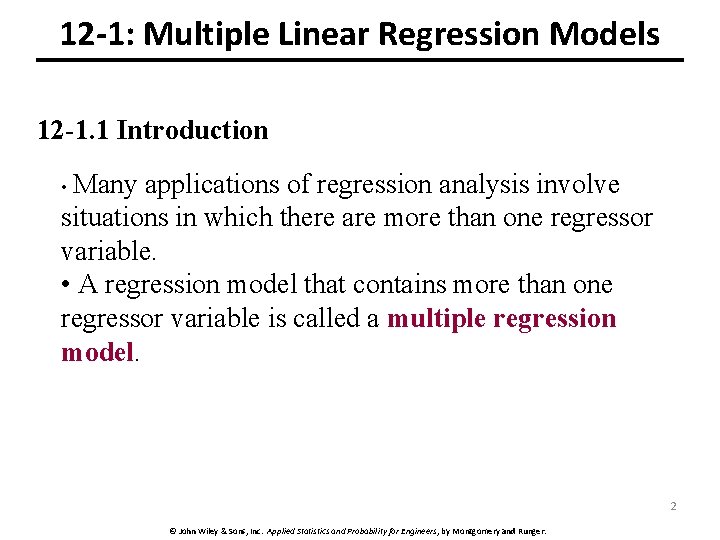

12-1: Multiple Linear Regression Models
12-1.1 Introduction

- Many applications of regression analysis involve situations in which there is more than one regressor variable.
- A regression model that contains more than one regressor variable is called a multiple regression model.

© John Wiley & Sons, Inc. Applied Statistics and Probability for Engineers, by Montgomery and Runger.

12-1: Multiple Linear Regression Models
12-1.1 Introduction

- For example, suppose that the effective life of a cutting tool depends on the cutting speed and the tool angle. A possible multiple regression model could be $Y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \epsilon$, where $Y$ is the tool life, $x_1$ is the cutting speed, $x_2$ is the tool angle, and $\beta_1$ and $\beta_2$ are partial regression coefficients.
- $\beta_1$ measures the expected change in $Y$ per unit change in $x_1$ when $x_2$ is held constant.
- $\beta_2$ measures the expected change in $Y$ per unit change in $x_2$ when $x_1$ is held constant.

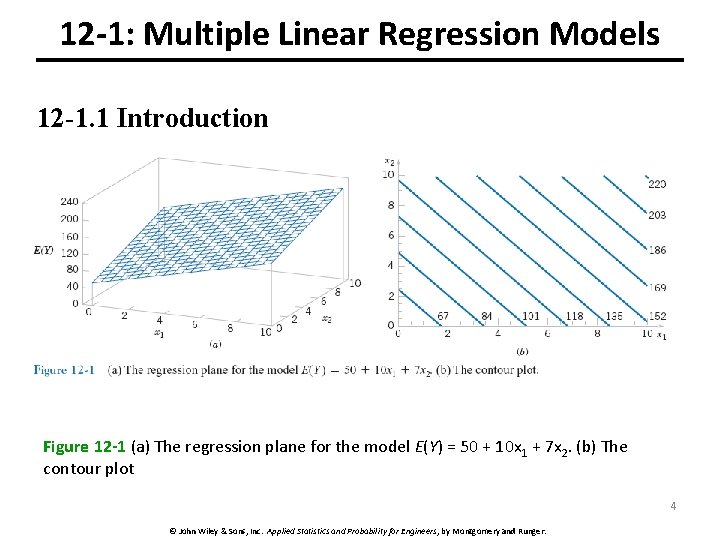

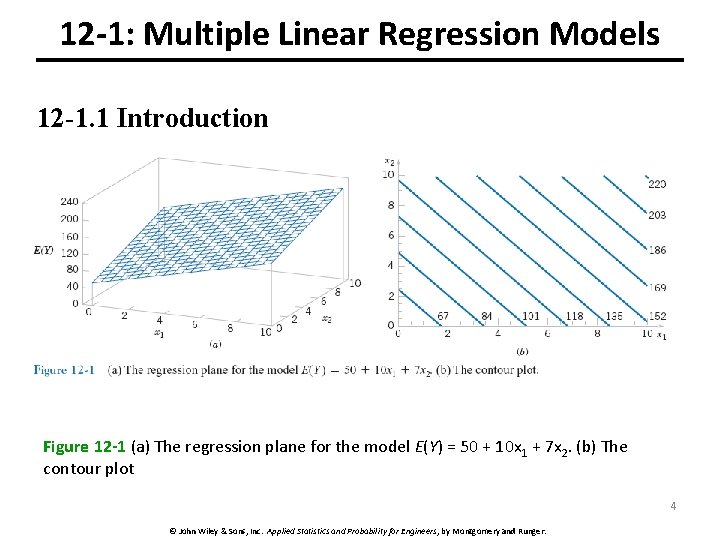

12-1: Multiple Linear Regression Models
12-1.1 Introduction

Figure 12-1 (a) The regression plane for the model $E(Y) = 50 + 10x_1 + 7x_2$. (b) The contour plot.

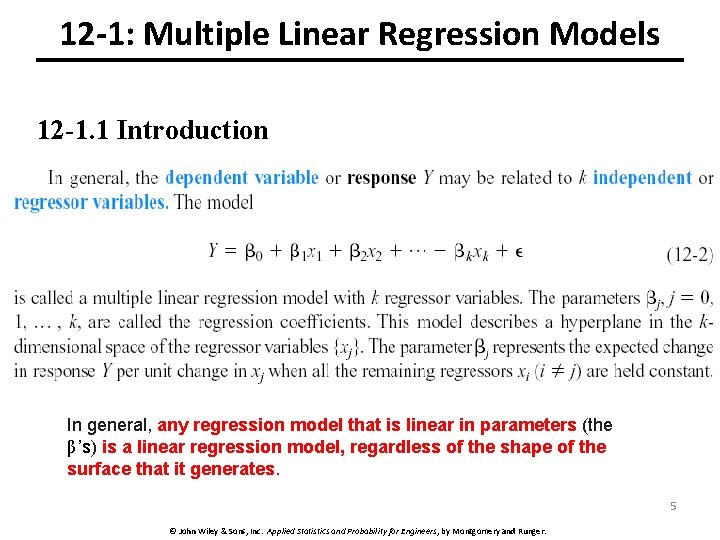

12-1: Multiple Linear Regression Models
12-1.1 Introduction

In general, any regression model that is linear in the parameters (the β's) is a linear regression model, regardless of the shape of the surface that it generates.

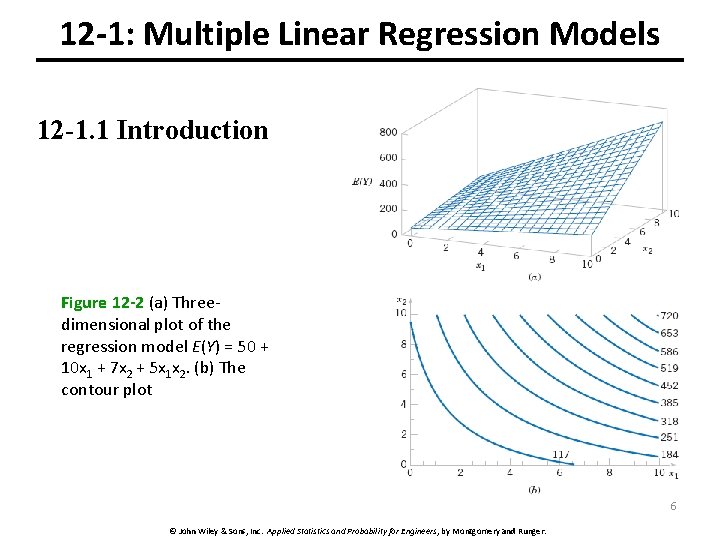

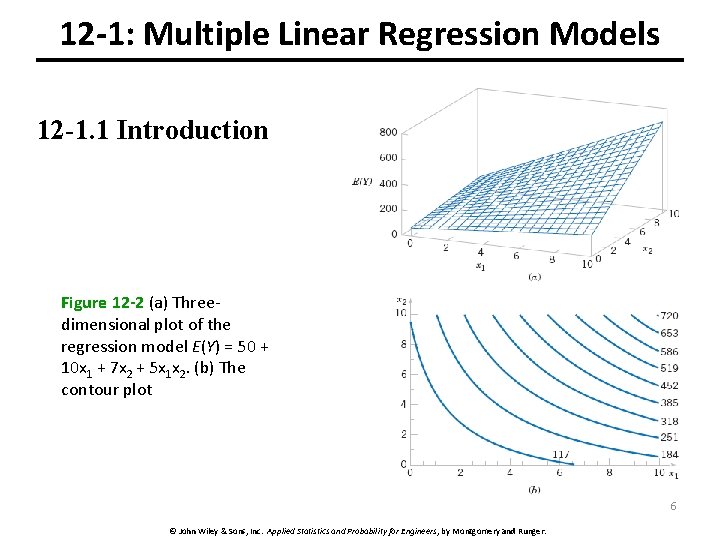

12-1: Multiple Linear Regression Models
12-1.1 Introduction

Figure 12-2 (a) Three-dimensional plot of the regression model $E(Y) = 50 + 10x_1 + 7x_2 + 5x_1x_2$. (b) The contour plot.

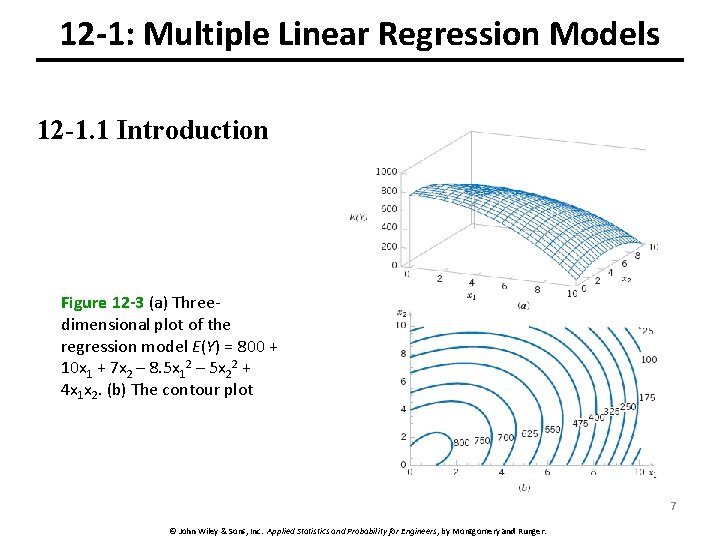

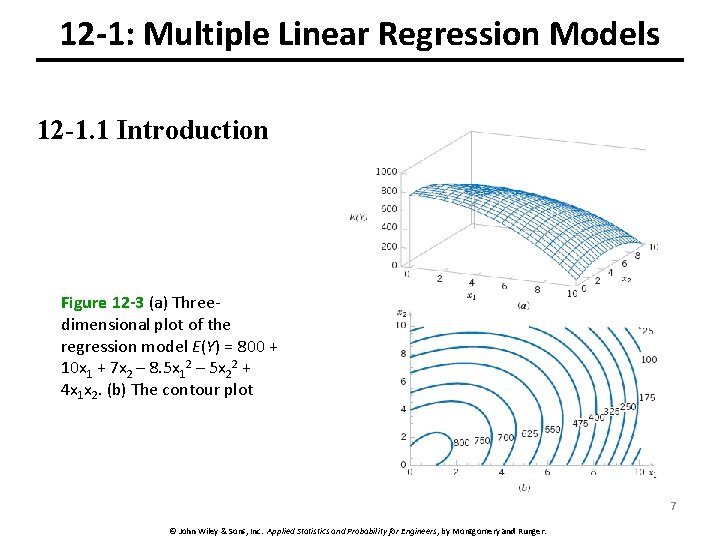

12-1: Multiple Linear Regression Models
12-1.1 Introduction

Figure 12-3 (a) Three-dimensional plot of the regression model $E(Y) = 800 + 10x_1 + 7x_2 - 8.5x_1^2 - 5x_2^2 + 4x_1x_2$. (b) The contour plot.

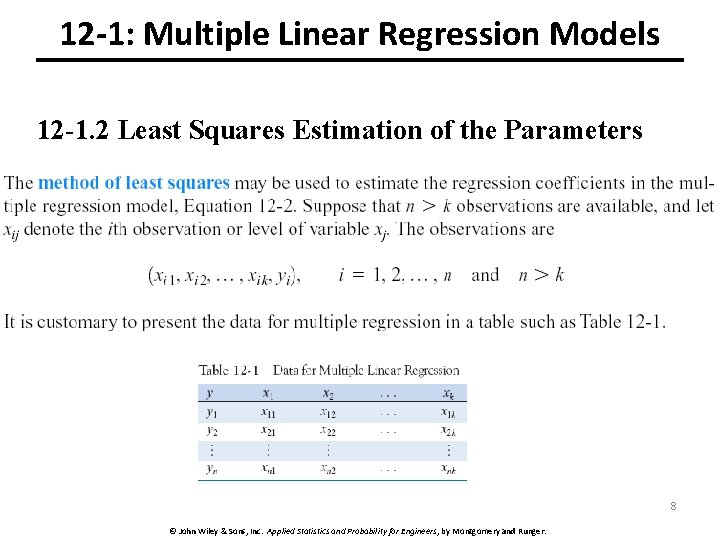

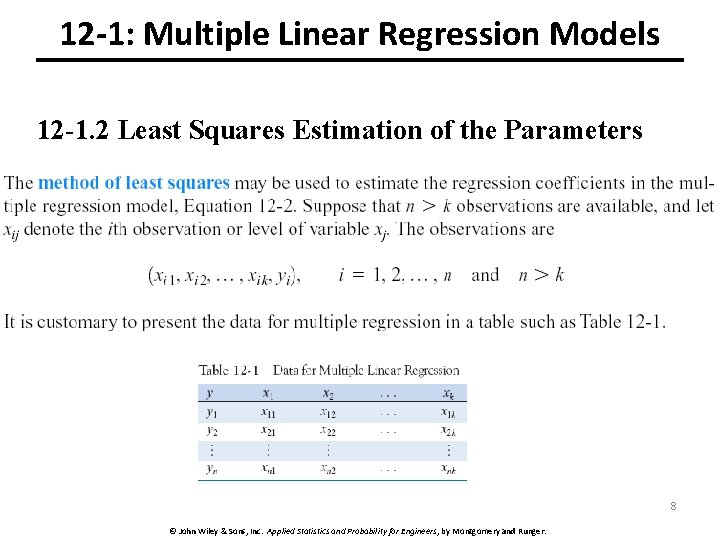

12-1: Multiple Linear Regression Models
12-1.2 Least Squares Estimation of the Parameters

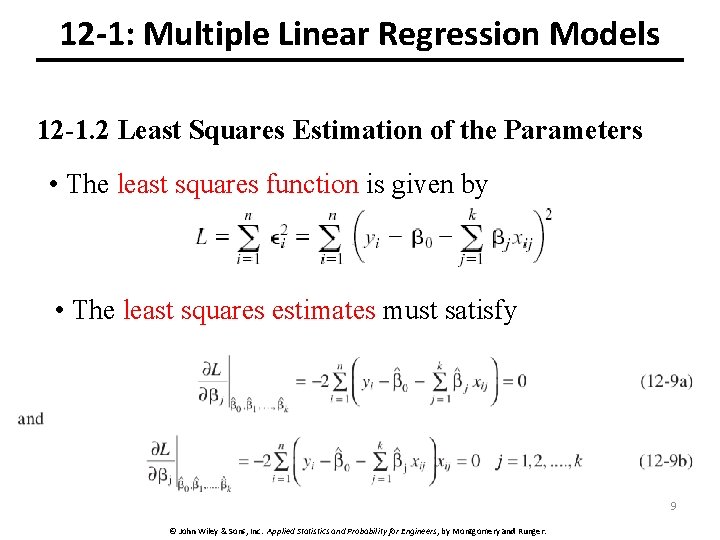

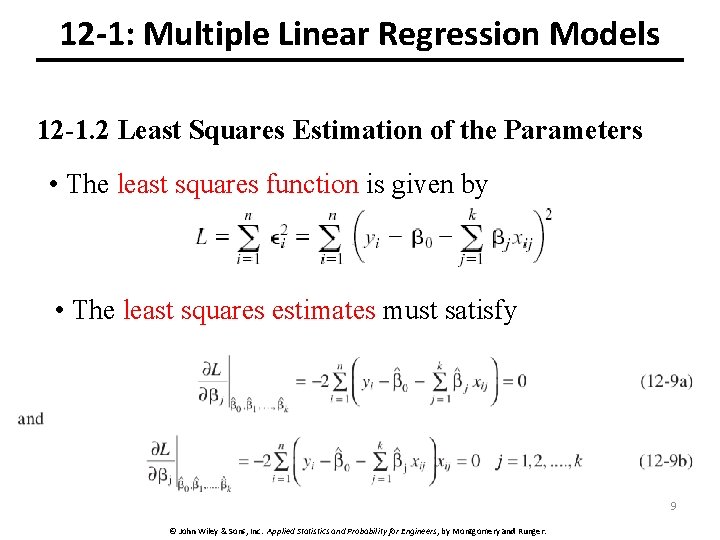

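12-1: Multiple Linear Regression Models
12-1.2 Least Squares Estimation of the Parameters

- The least squares function is given by $L = \sum_{i=1}^{n} \epsilon_i^2 = \sum_{i=1}^{n} \left( y_i - \beta_0 - \sum_{j=1}^{k} \beta_j x_{ij} \right)^2$.
- The least squares estimates must satisfy $\partial L / \partial \beta_j = 0$ for $j = 0, 1, \ldots, k$, with the derivatives evaluated at $\hat\beta_0, \hat\beta_1, \ldots, \hat\beta_k$.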

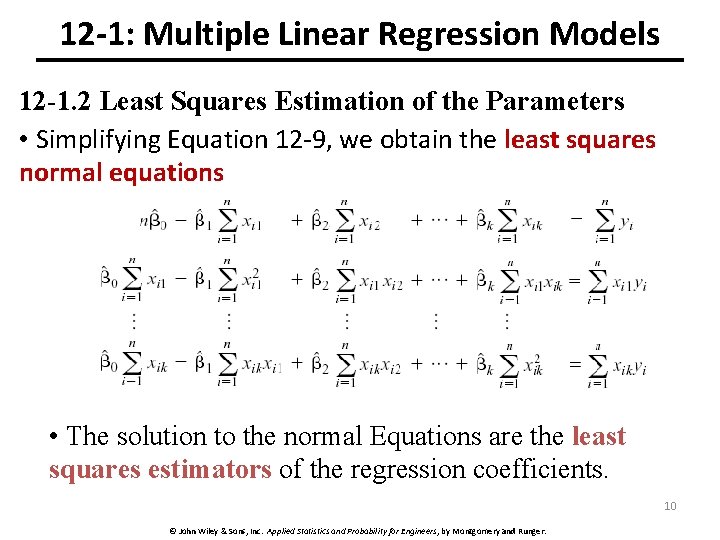

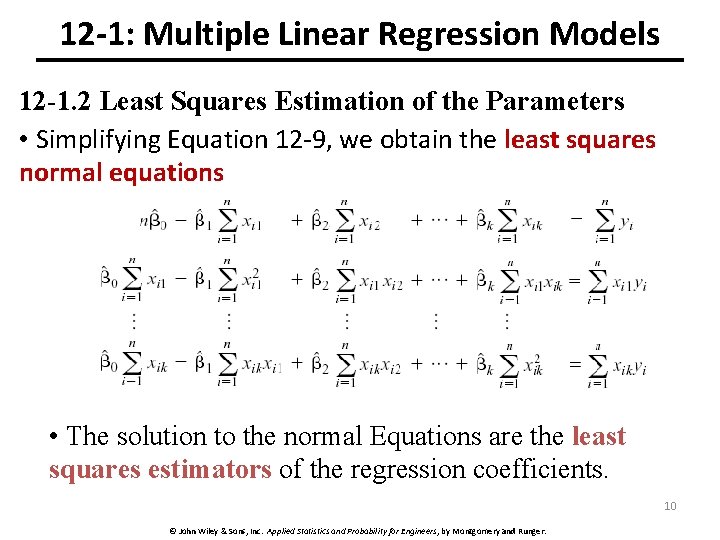

12-1: Multiple Linear Regression Models
12-1.2 Least Squares Estimation of the Parameters

- Simplifying Equation 12-9, we obtain the least squares normal equations.
- The solution to the normal equations gives the least squares estimators of the regression coefficients.
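The normal equations can be assembled and solved numerically. The following is a minimal sketch in Python with NumPy for a model with two regressors. The data are synthetic values made up for this illustration (they are not the data of Example 12-1), and all variable names are assumptions.

```python
import numpy as np

# Synthetic data (illustrative only): n = 8 observations, k = 2 regressors.
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])
n = len(y)

# Normal equations for y = b0 + b1*x1 + b2*x2, written out sum by sum.
A = np.array([
    [n,        x1.sum(),        x2.sum()],
    [x1.sum(), (x1 * x1).sum(), (x1 * x2).sum()],
    [x2.sum(), (x1 * x2).sum(), (x2 * x2).sum()],
])
g = np.array([y.sum(), (x1 * y).sum(), (x2 * y).sum()])

# Solving the 3 x 3 linear system gives the least squares estimates.
beta_hat = np.linalg.solve(A, g)
print("beta_hat =", beta_hat)
```

Each row of `A` corresponds to one normal equation, so the system has p = k + 1 equations in p unknowns.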

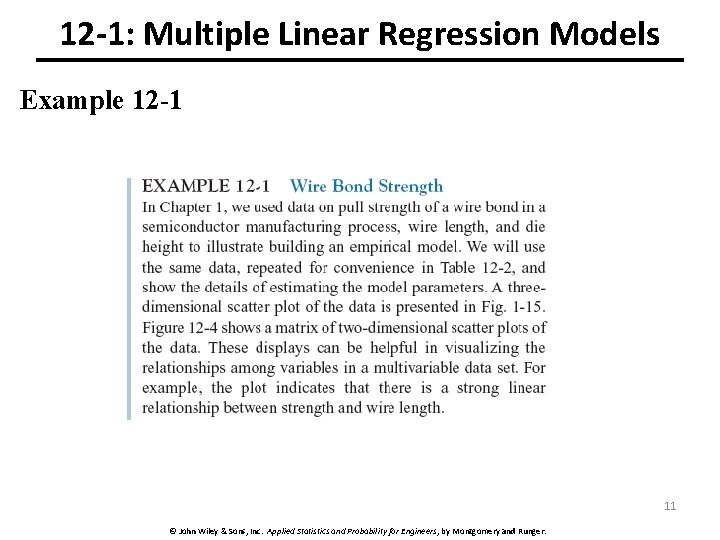

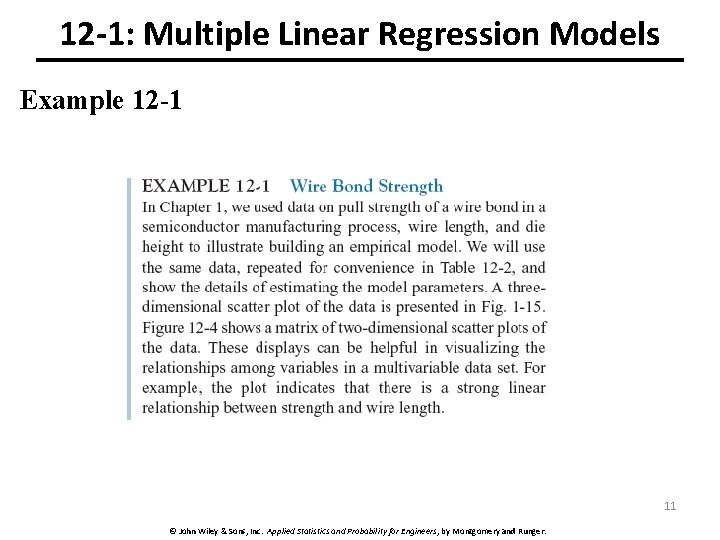

12-1: Multiple Linear Regression Models
Example 12-1

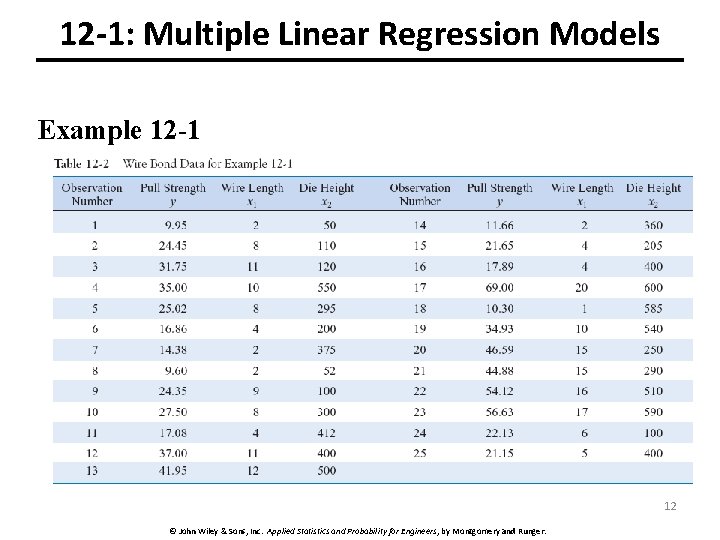

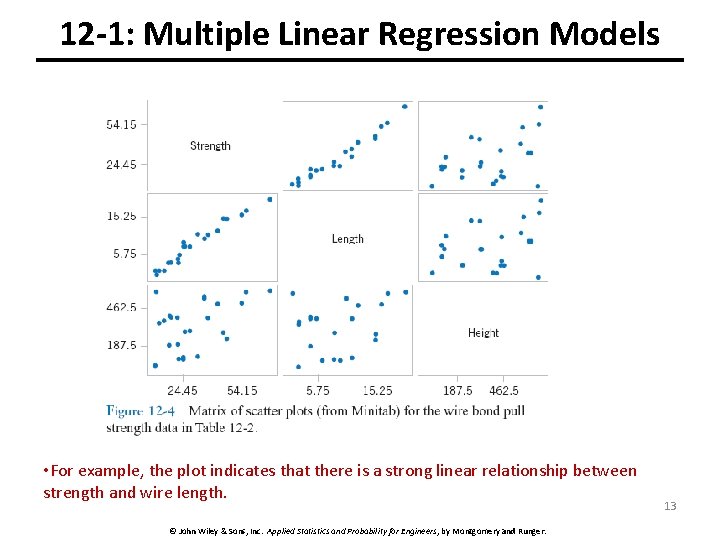

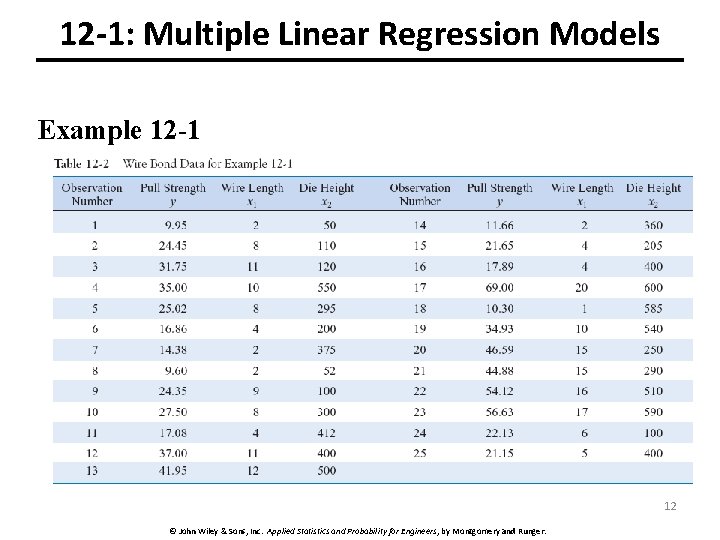

12-1: Multiple Linear Regression Models
Example 12-1

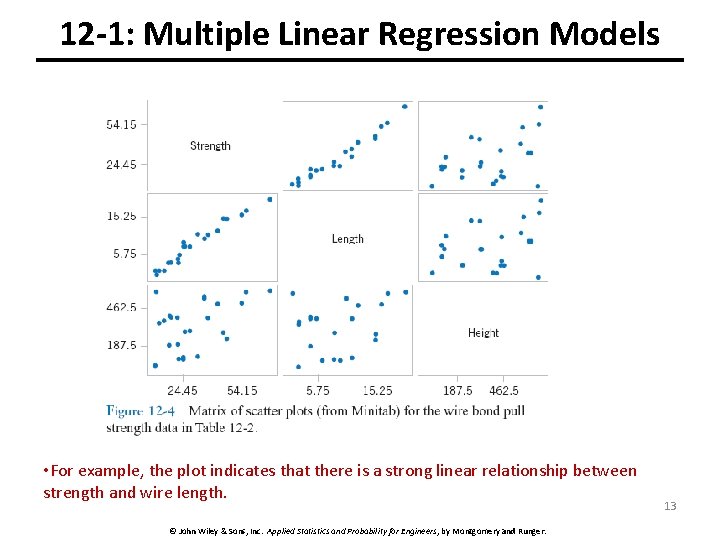

12-1: Multiple Linear Regression Models

For example, the plot indicates that there is a strong linear relationship between strength and wire length.

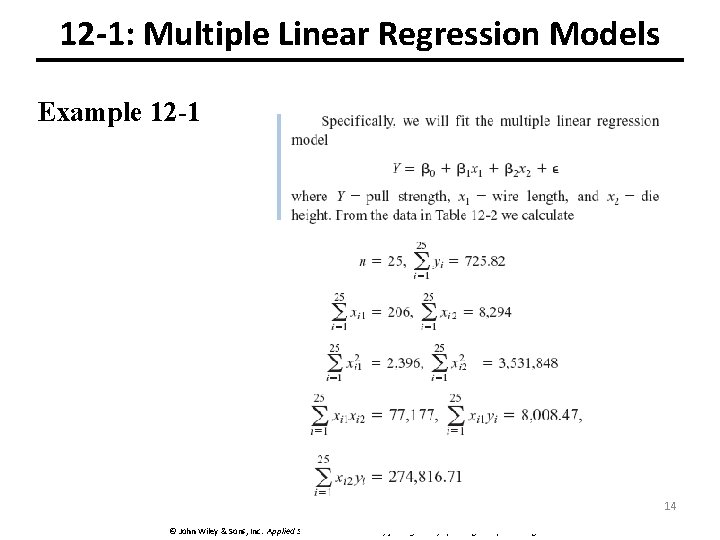

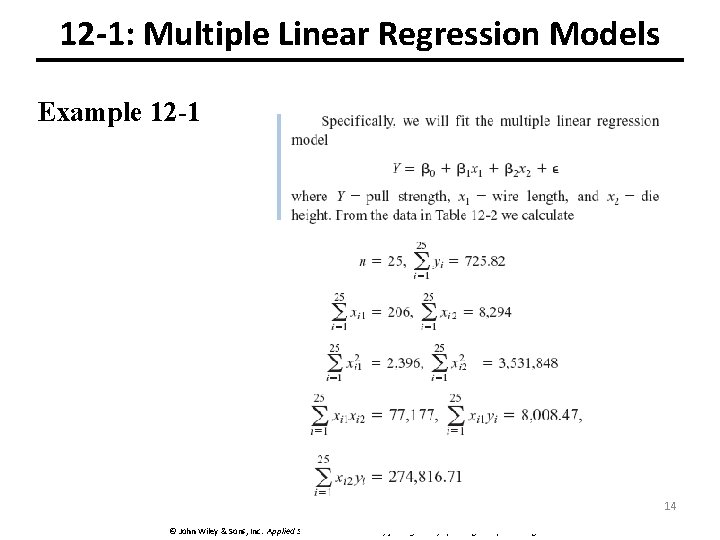

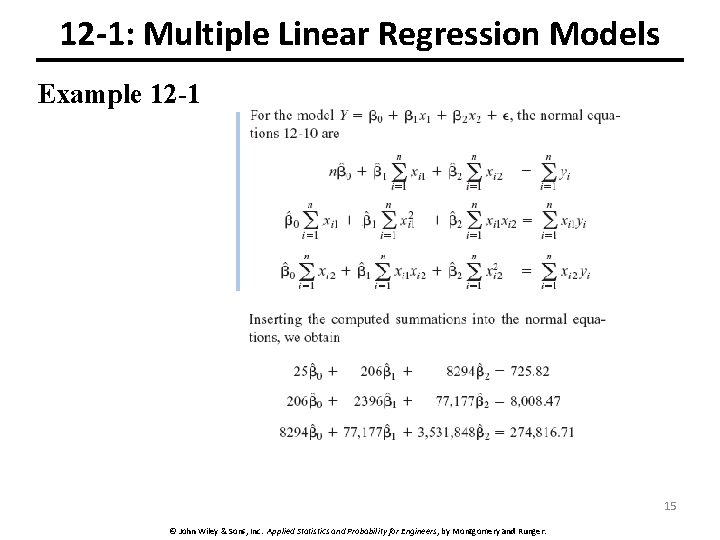

12-1: Multiple Linear Regression Models
Example 12-1

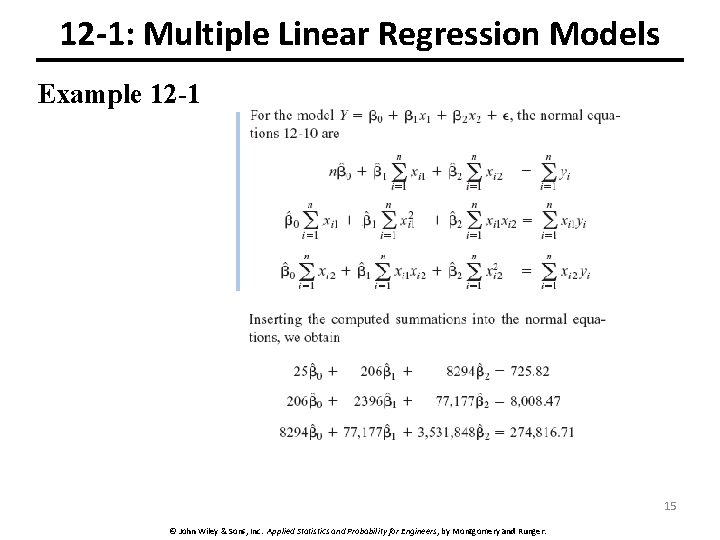

12-1: Multiple Linear Regression Models
Example 12-1

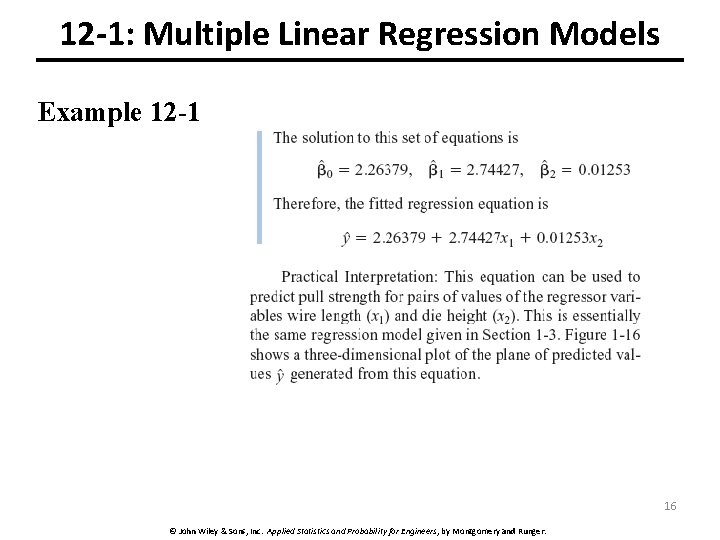

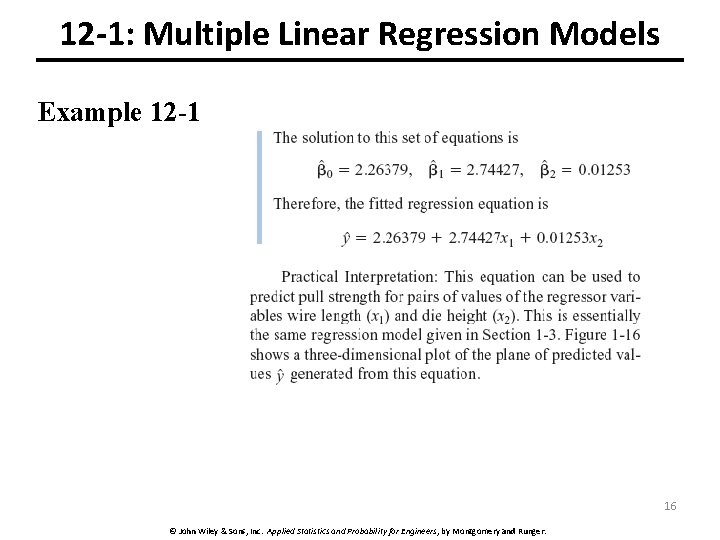

12-1: Multiple Linear Regression Models
Example 12-1

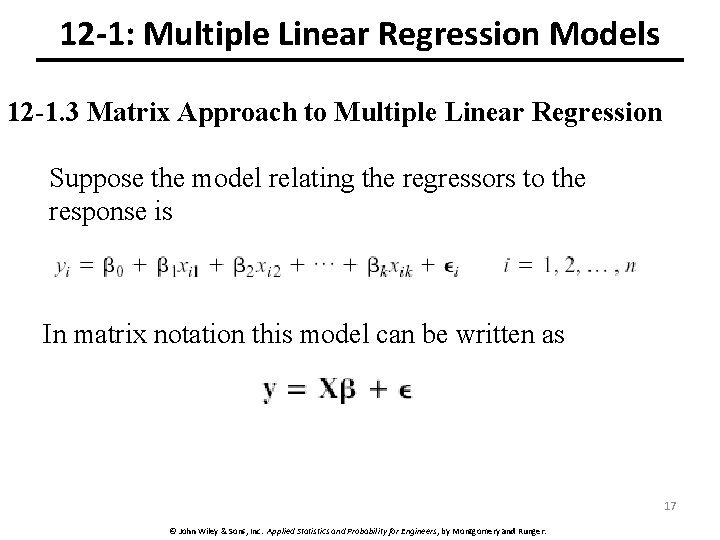

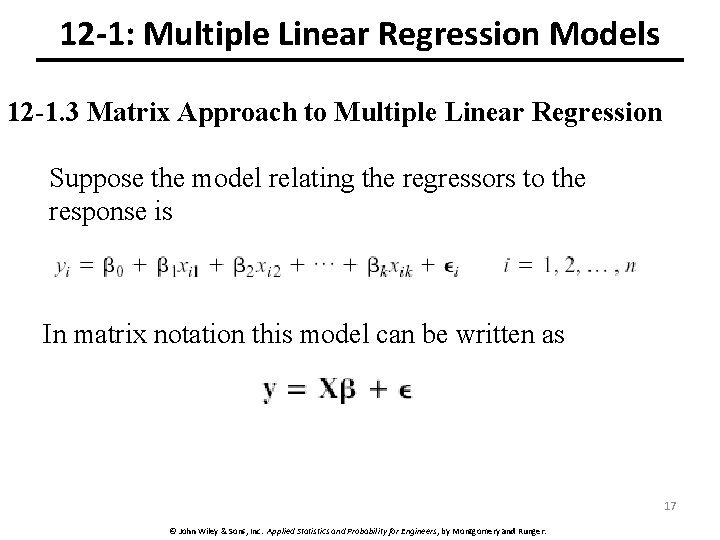

12-1: Multiple Linear Regression Models
12-1.3 Matrix Approach to Multiple Linear Regression

Suppose the model relating the regressors to the response is $y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \cdots + \beta_k x_{ik} + \epsilon_i$, for $i = 1, 2, \ldots, n$.

In matrix notation this model can be written as $\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \boldsymbol{\epsilon}$.

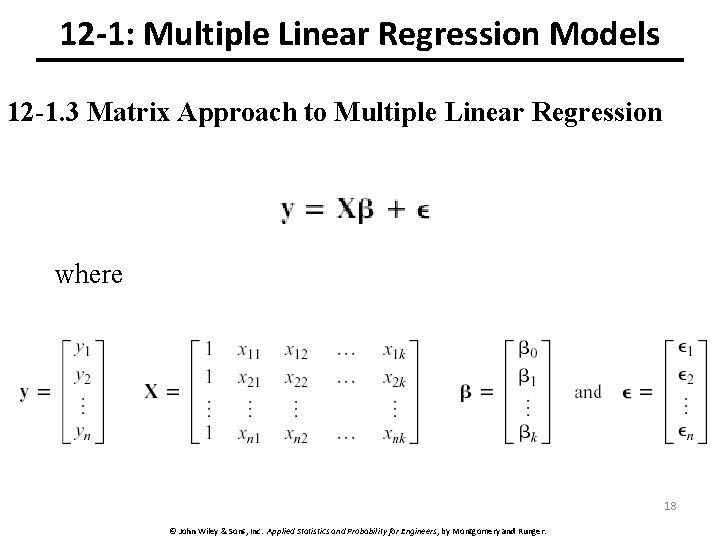

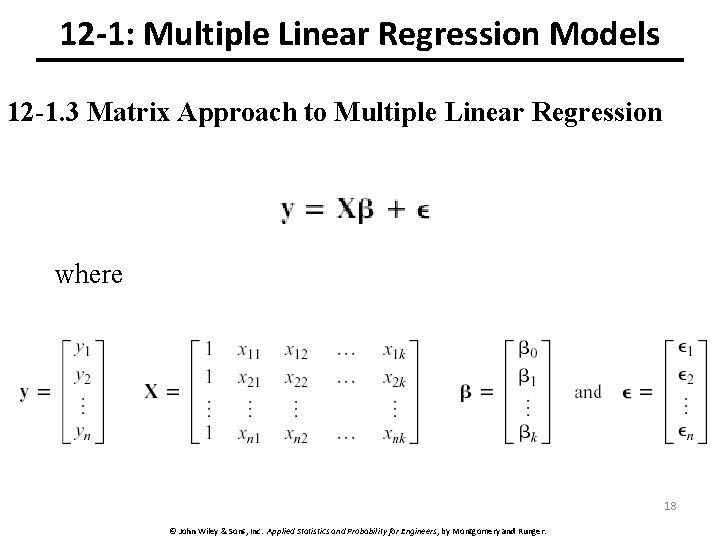

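12-1: Multiple Linear Regression Models
12-1.3 Matrix Approach to Multiple Linear Regression

where $\mathbf{y}$ is the $(n \times 1)$ vector of observations, $\mathbf{X}$ is the $(n \times p)$ matrix of regressor levels (with a leading column of ones), $\boldsymbol{\beta}$ is the $(p \times 1)$ vector of regression coefficients, and $\boldsymbol{\epsilon}$ is the $(n \times 1)$ vector of random errors.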

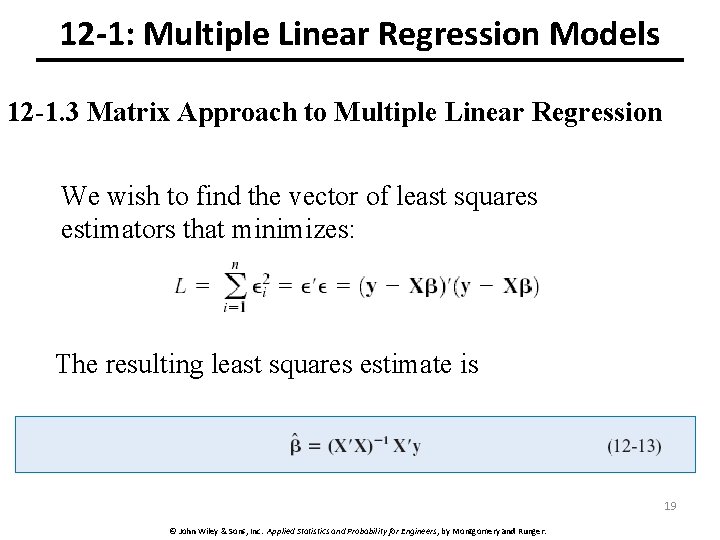

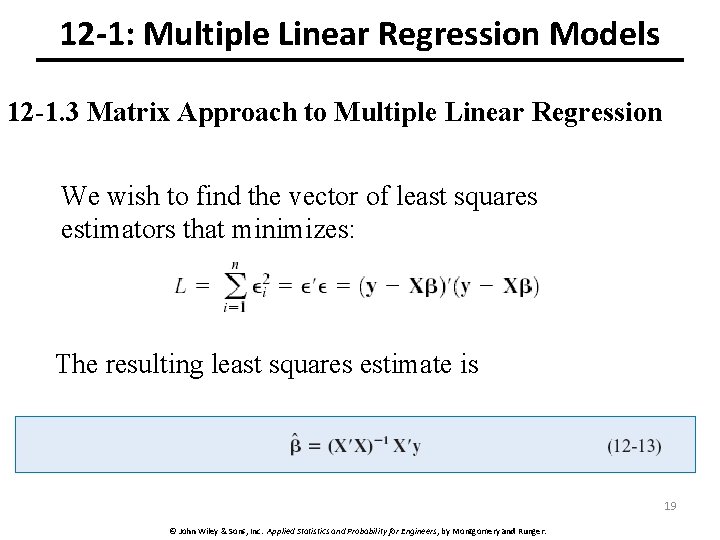

12-1: Multiple Linear Regression Models
12-1.3 Matrix Approach to Multiple Linear Regression

We wish to find the vector of least squares estimators that minimizes $L = \sum_{i=1}^{n} \epsilon_i^2 = \boldsymbol{\epsilon}'\boldsymbol{\epsilon} = (\mathbf{y} - \mathbf{X}\boldsymbol{\beta})'(\mathbf{y} - \mathbf{X}\boldsymbol{\beta})$.

The resulting least squares estimate is $\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}$.
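The matrix form of the least squares estimate, $\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}$, translates almost directly into code. This is a minimal sketch with NumPy, assuming a small synthetic data set (not the data of Example 12-2); the names `X`, `beta_hat`, `y_fit`, and `residuals` are illustrative.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

# Model matrix: a column of ones for the intercept, then the regressors.
X = np.column_stack([np.ones_like(x1), x1, x2])   # shape (n, p), p = k + 1

# Solve (X'X) beta = X'y; this avoids forming the inverse explicitly.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

y_fit = X @ beta_hat          # fitted values
residuals = y - y_fit         # e = y - y_hat
print("beta_hat =", beta_hat)
```

Solving the linear system instead of inverting $\mathbf{X}'\mathbf{X}$ is a numerical design choice; both give the same estimate when $\mathbf{X}$ has full column rank.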

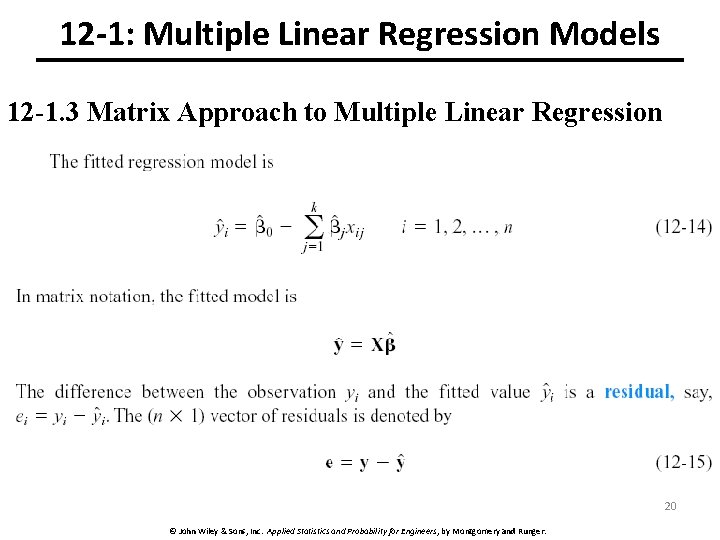

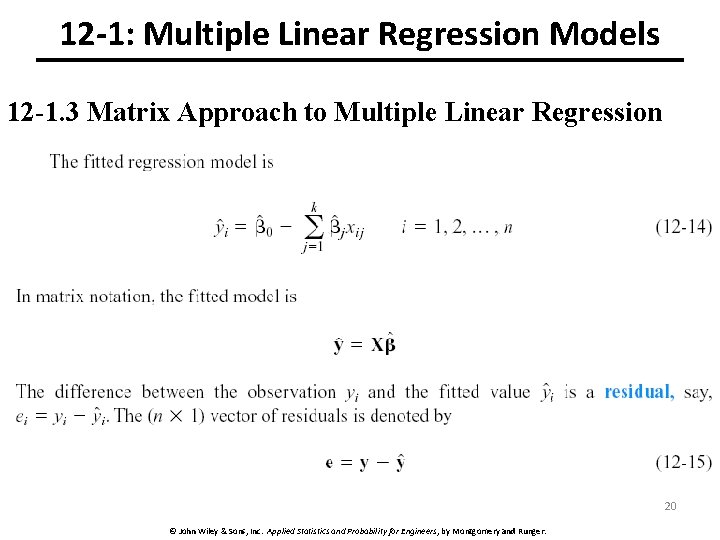

12-1: Multiple Linear Regression Models
12-1.3 Matrix Approach to Multiple Linear Regression

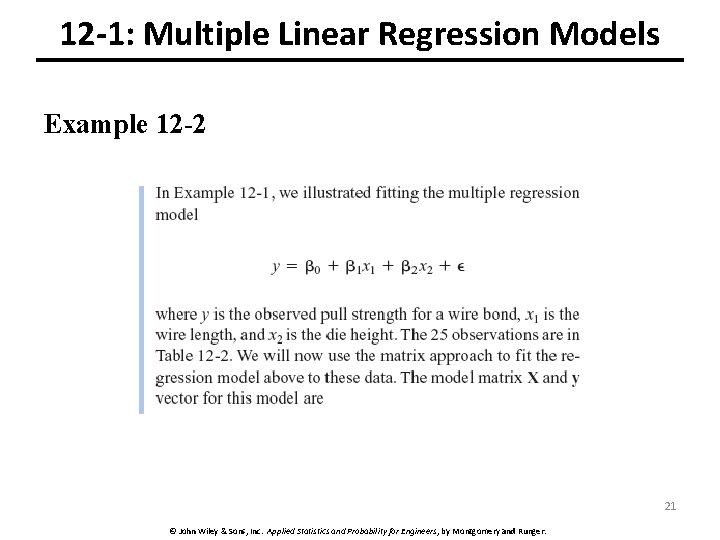

12-1: Multiple Linear Regression Models
Example 12-2

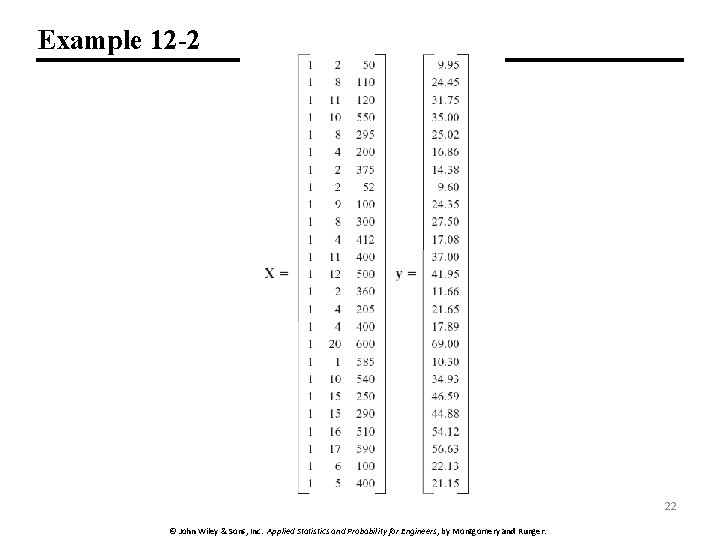

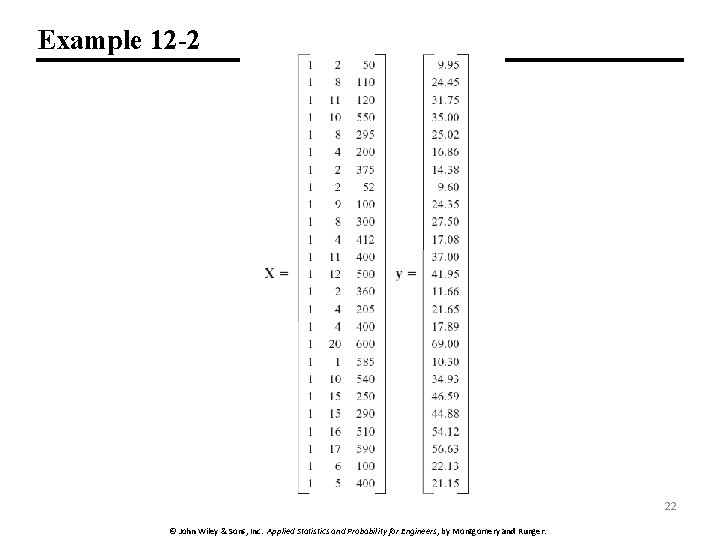

Example 12-2

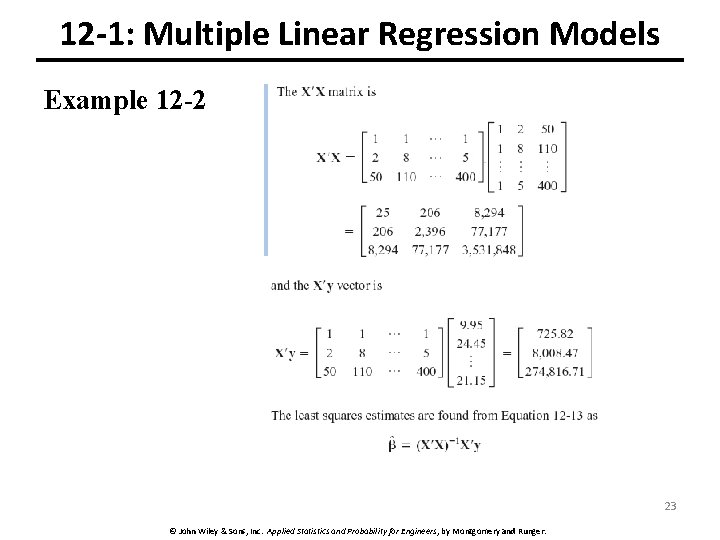

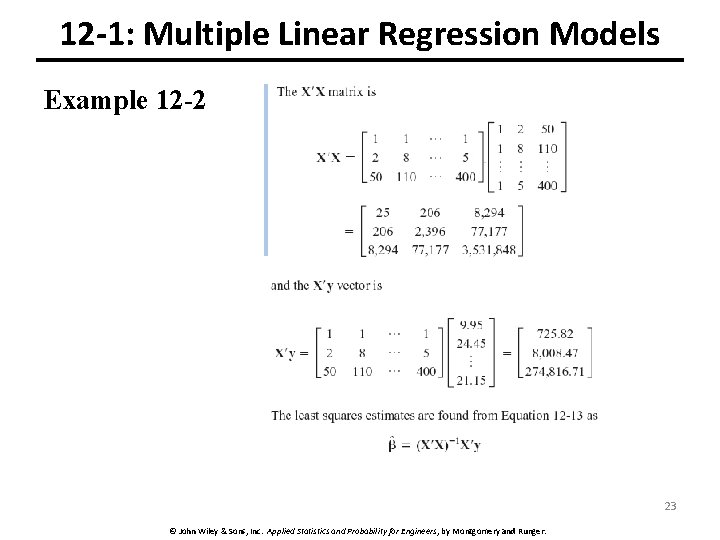

12-1: Multiple Linear Regression Models
Example 12-2

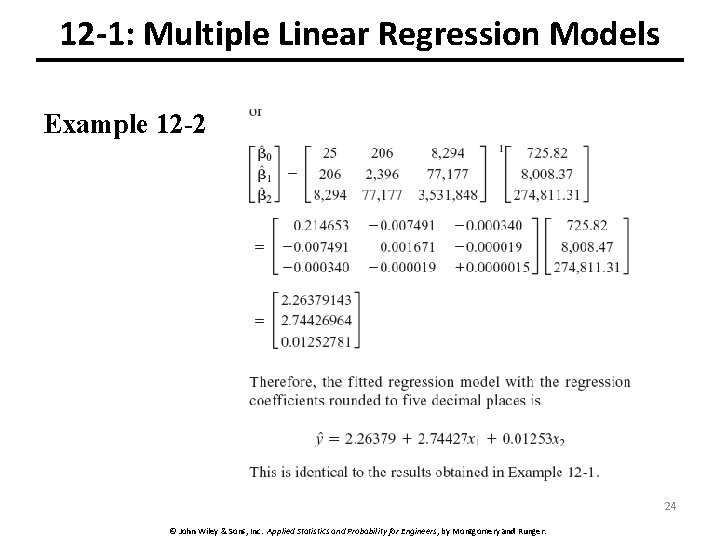

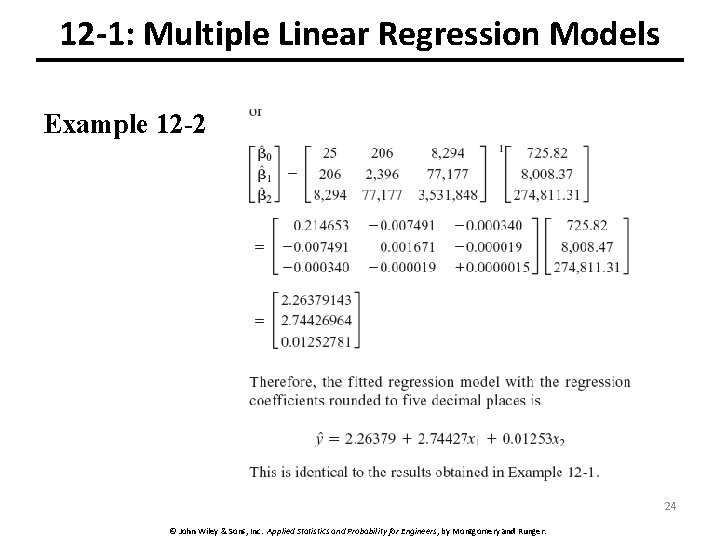

12-1: Multiple Linear Regression Models
Example 12-2

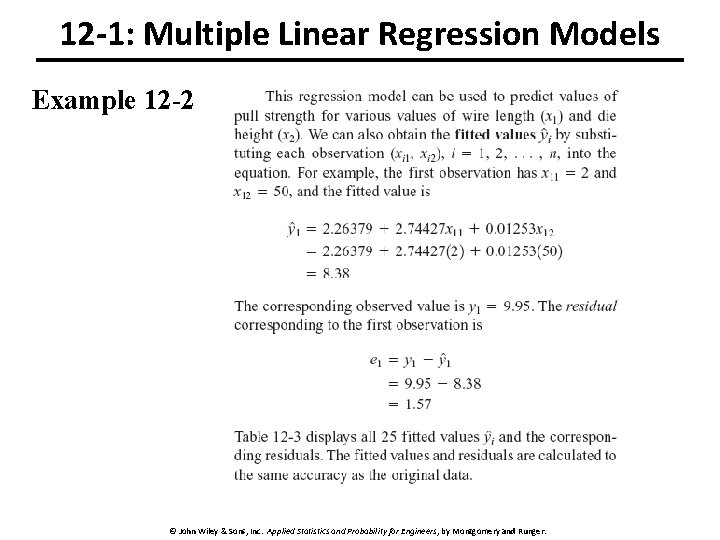

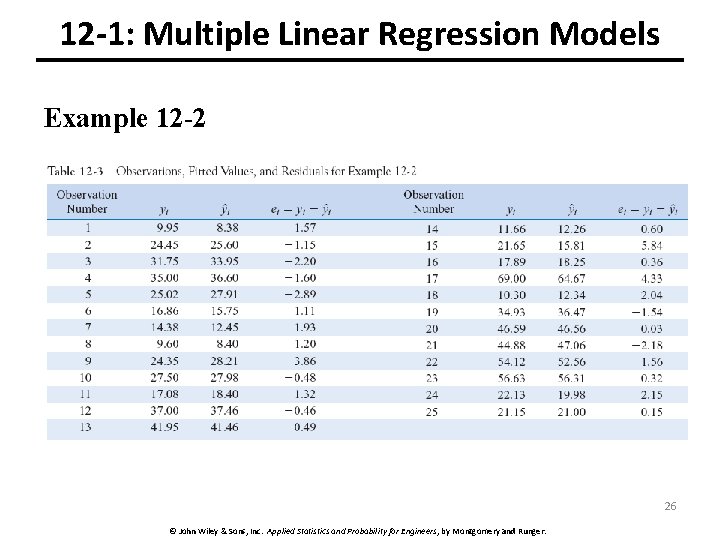

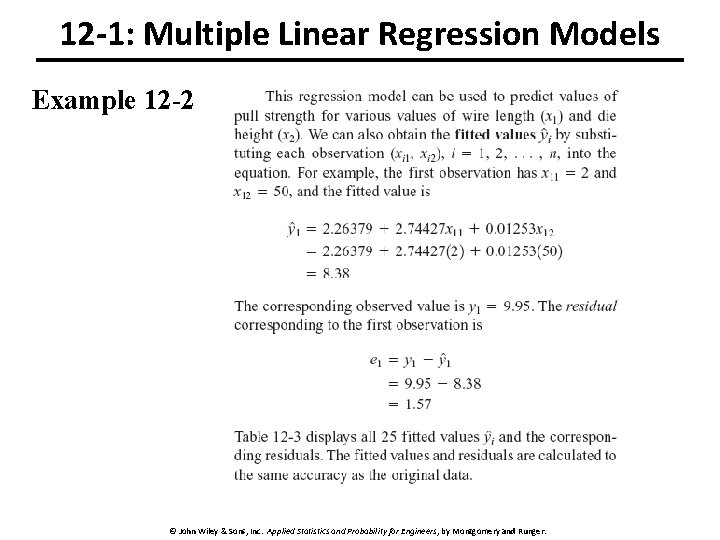

12-1: Multiple Linear Regression Models
Example 12-2

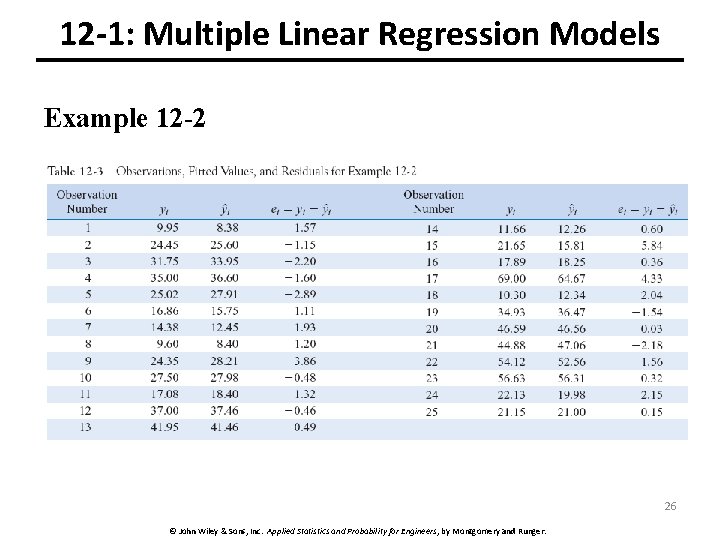

12-1: Multiple Linear Regression Models
Example 12-2

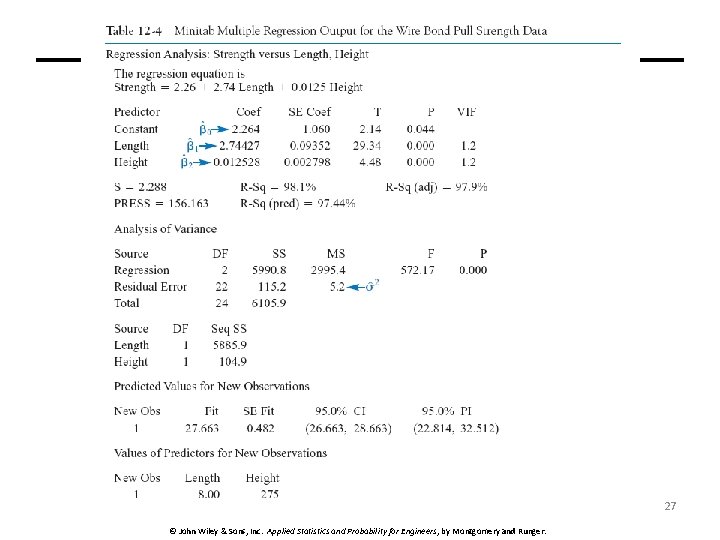

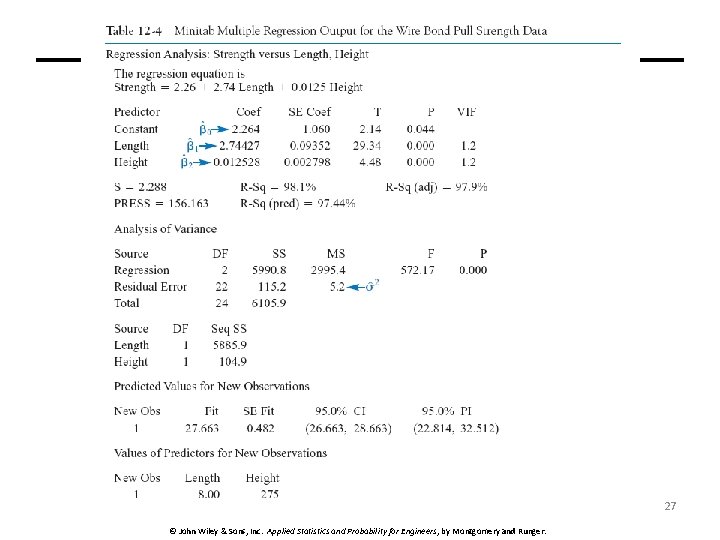


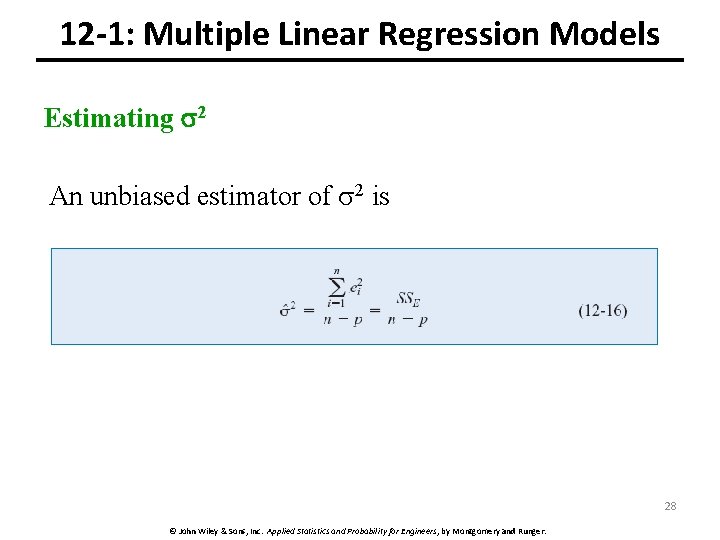

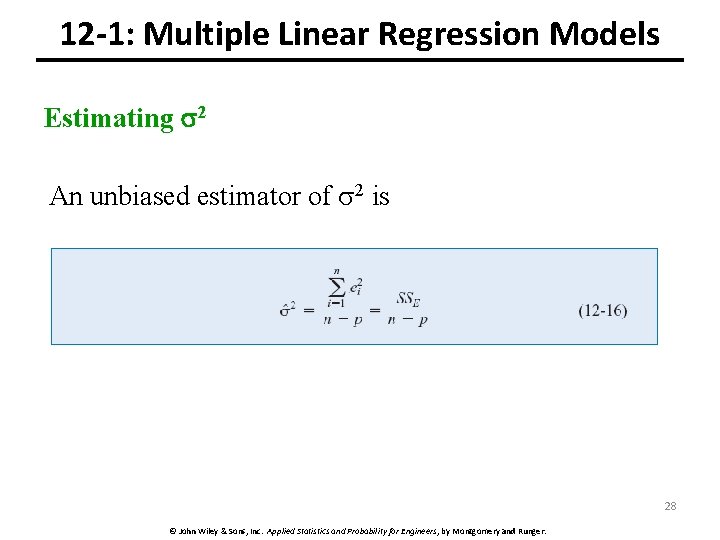

12-1: Multiple Linear Regression Models
Estimating $\sigma^2$

An unbiased estimator of $\sigma^2$ is $\hat\sigma^2 = \dfrac{\sum_{i=1}^{n} e_i^2}{n - p} = \dfrac{SS_E}{n - p}$.

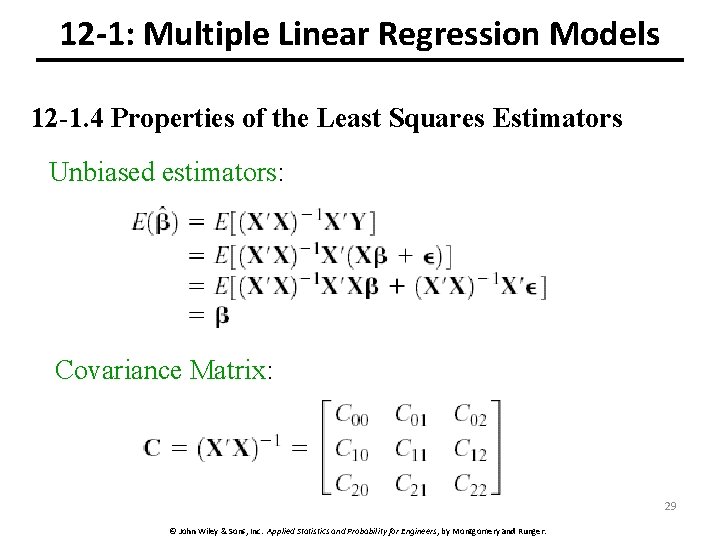

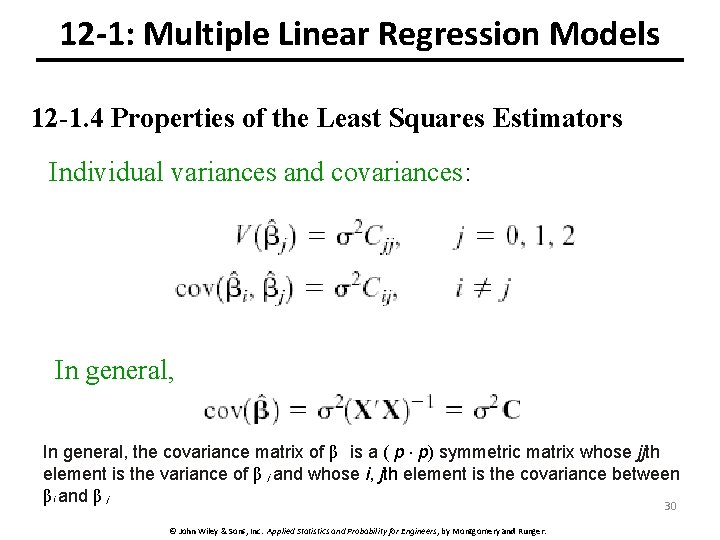

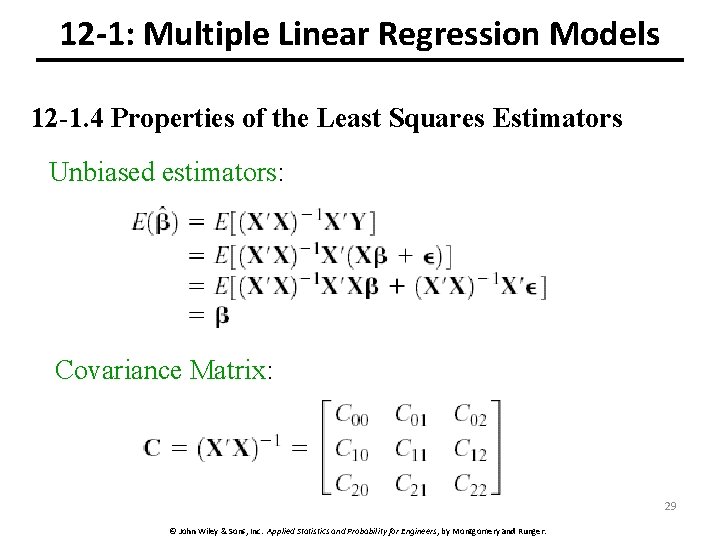

12-1: Multiple Linear Regression Models
12-1.4 Properties of the Least Squares Estimators

Unbiased estimators: $E(\hat{\boldsymbol{\beta}}) = \boldsymbol{\beta}$

Covariance matrix: $\operatorname{cov}(\hat{\boldsymbol{\beta}}) = \sigma^2 (\mathbf{X}'\mathbf{X})^{-1} = \sigma^2 \mathbf{C}$

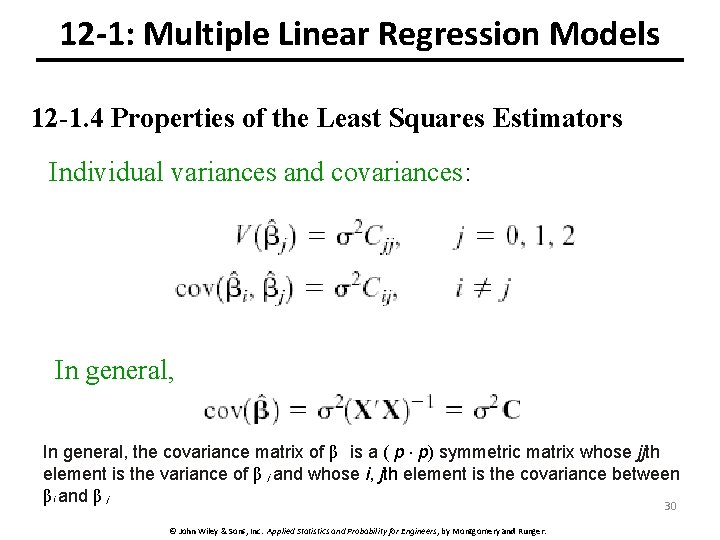

12-1: Multiple Linear Regression Models
12-1.4 Properties of the Least Squares Estimators

Individual variances and covariances: $V(\hat\beta_j) = \sigma^2 C_{jj}$ and $\operatorname{cov}(\hat\beta_i, \hat\beta_j) = \sigma^2 C_{ij}$ for $i \neq j$, where $\mathbf{C} = (\mathbf{X}'\mathbf{X})^{-1}$.

In general, the covariance matrix of $\hat{\boldsymbol{\beta}}$ is a $(p \times p)$ symmetric matrix whose $jj$th element is the variance of $\hat\beta_j$ and whose $i,j$th element is the covariance between $\hat\beta_i$ and $\hat\beta_j$.
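As a rough illustration of these properties, the sketch below estimates $\sigma^2$ by $SS_E/(n - p)$ and then forms the estimated covariance matrix $\hat\sigma^2 (\mathbf{X}'\mathbf{X})^{-1}$, whose diagonal gives the squared standard errors of the coefficients. The data are synthetic and the variable names are assumptions made for this example.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape

C = np.linalg.inv(X.T @ X)            # C = (X'X)^{-1}
beta_hat = C @ X.T @ y
residuals = y - X @ beta_hat

sse = residuals @ residuals           # error (residual) sum of squares
sigma2_hat = sse / (n - p)            # unbiased estimate of sigma^2

cov_beta = sigma2_hat * C             # estimated covariance matrix of beta_hat
se_beta = np.sqrt(np.diag(cov_beta))  # standard errors of the coefficients
print("sigma2_hat =", sigma2_hat)
print("se(beta_hat) =", se_beta)
```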

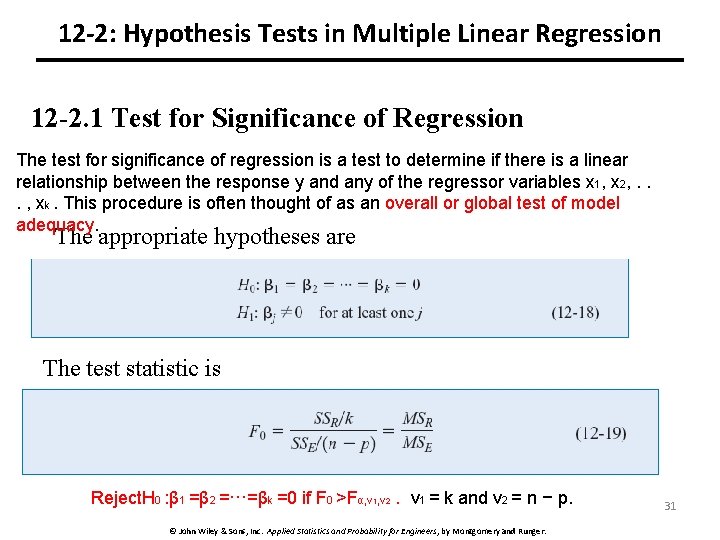

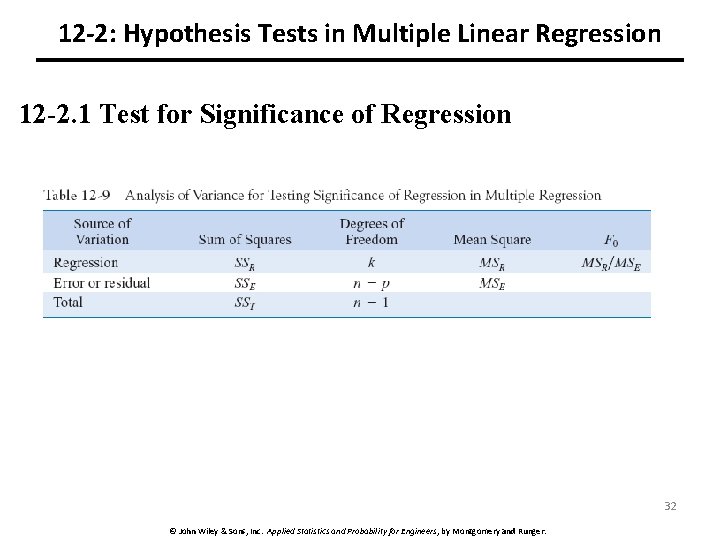

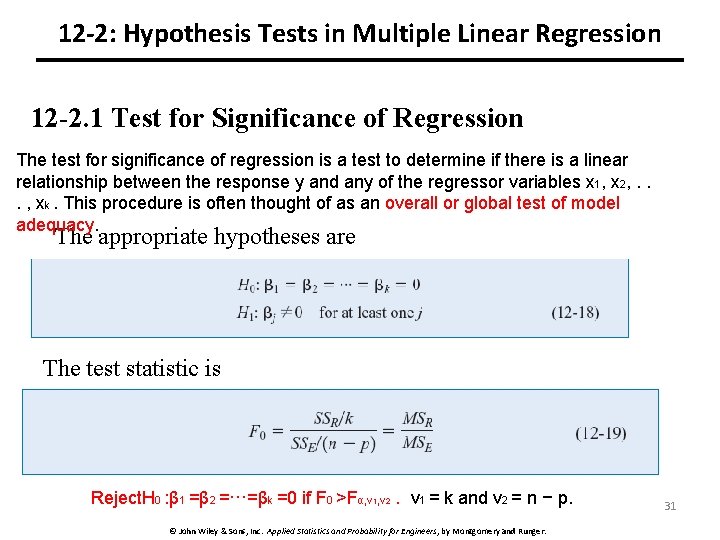

12-2: Hypothesis Tests in Multiple Linear Regression
12-2.1 Test for Significance of Regression

The test for significance of regression is a test to determine if there is a linear relationship between the response $y$ and any of the regressor variables $x_1, x_2, \ldots, x_k$. This procedure is often thought of as an overall or global test of model adequacy.

The appropriate hypotheses are $H_0: \beta_1 = \beta_2 = \cdots = \beta_k = 0$ versus $H_1: \beta_j \neq 0$ for at least one $j$.

The test statistic is $F_0 = \dfrac{SS_R / k}{SS_E / (n - p)} = \dfrac{MS_R}{MS_E}$.

Reject $H_0: \beta_1 = \beta_2 = \cdots = \beta_k = 0$ if $F_0 > F_{\alpha, \nu_1, \nu_2}$, where $\nu_1 = k$ and $\nu_2 = n - p$.

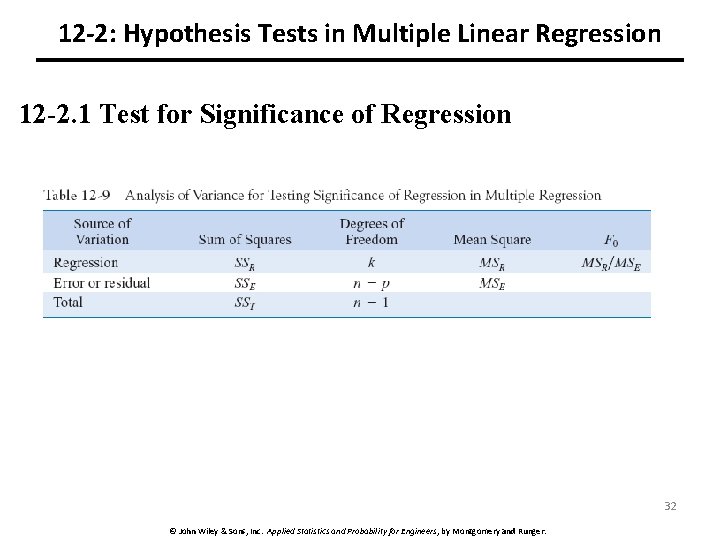

12-2: Hypothesis Tests in Multiple Linear Regression
12-2.1 Test for Significance of Regression

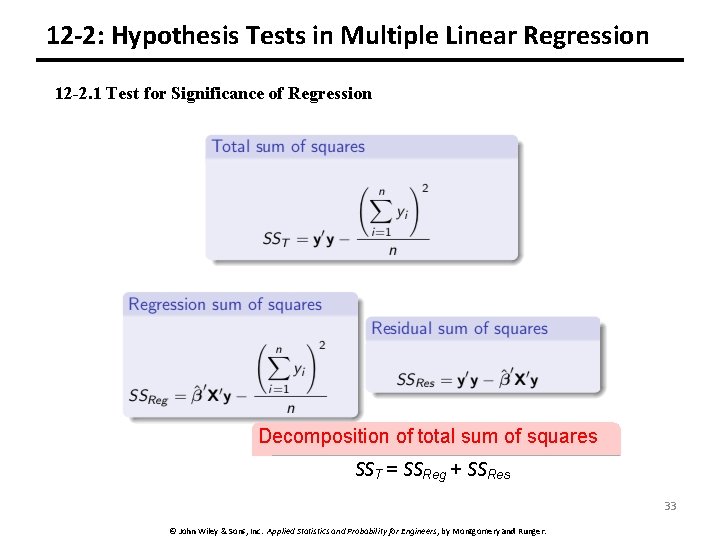

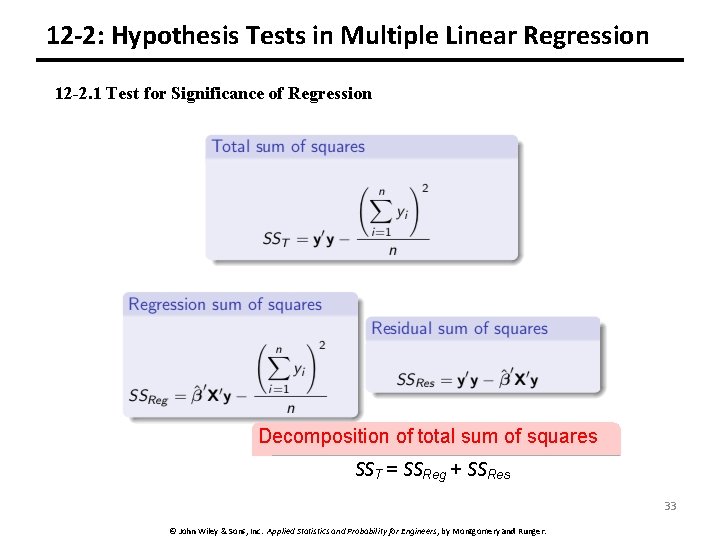

12-2: Hypothesis Tests in Multiple Linear Regression
12-2.1 Test for Significance of Regression

Decomposition of the total sum of squares: $SS_T = SS_{Reg} + SS_{Res}$
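The decomposition and the overall F statistic can be checked numerically. This is a minimal sketch, assuming synthetic data and the usual statistic $F_0 = (SS_{Reg}/k) / (SS_{Res}/(n - p))$; the variable names `ss_t`, `ss_reg`, and `ss_res` are illustrative. The critical value $F_{\alpha, k, n-p}$ is left to an F table or a statistics library.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape
k = p - 1                                   # number of regressors

beta_hat = np.linalg.solve(X.T @ X, X.T @ y)
y_fit = X @ beta_hat

ss_t = np.sum((y - y.mean()) ** 2)          # total sum of squares
ss_reg = np.sum((y_fit - y.mean()) ** 2)    # regression sum of squares
ss_res = np.sum((y - y_fit) ** 2)           # residual sum of squares

f0 = (ss_reg / k) / (ss_res / (n - p))      # overall F statistic
print(f"SS_T={ss_t:.4f}  SS_Reg={ss_reg:.4f}  SS_Res={ss_res:.4f}  F0={f0:.2f}")
```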

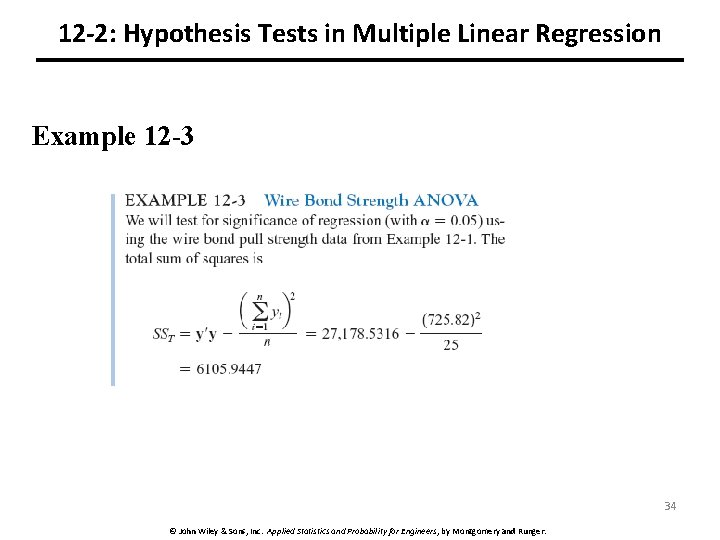

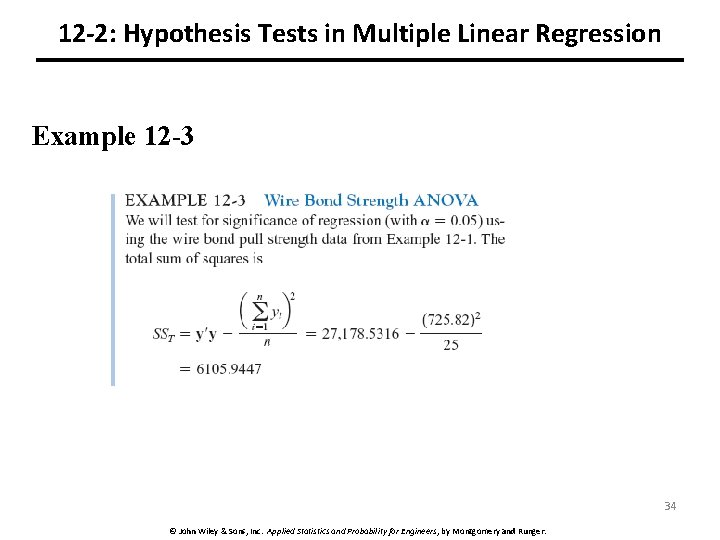

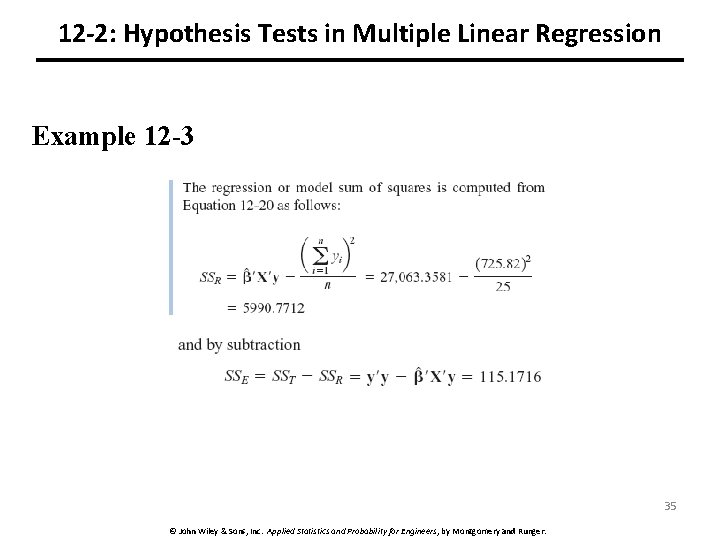

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-3

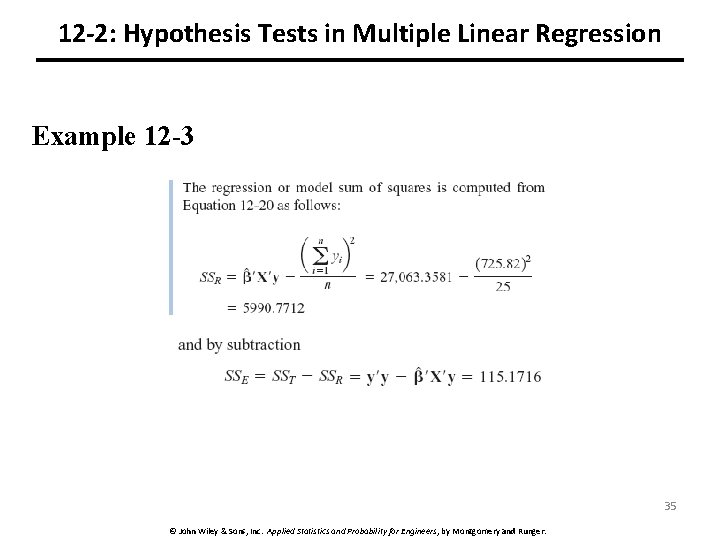

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-3

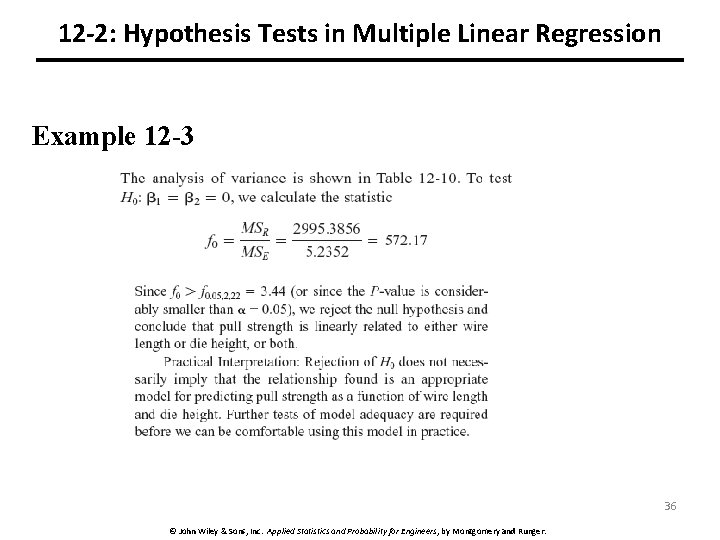

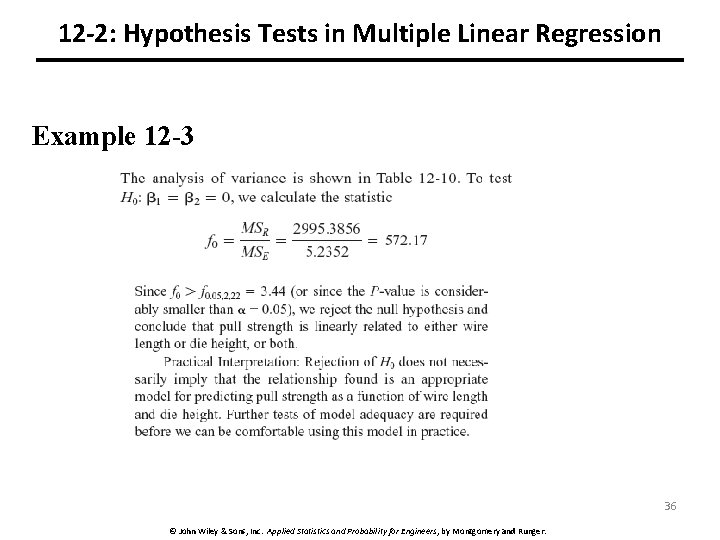

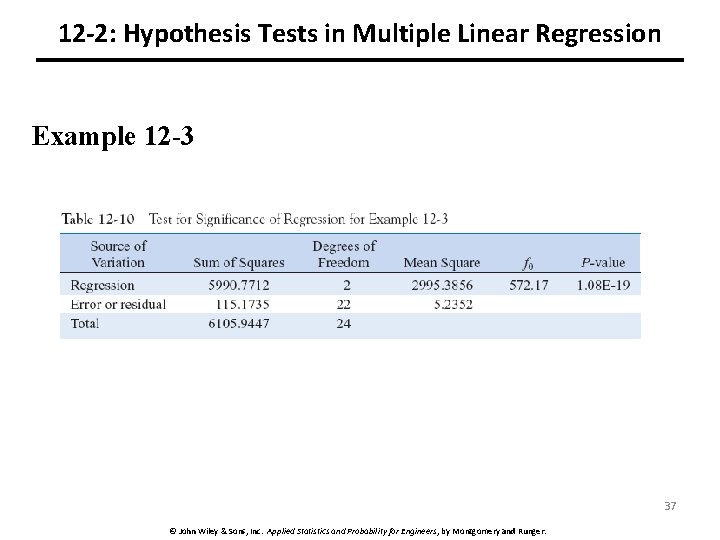

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-3

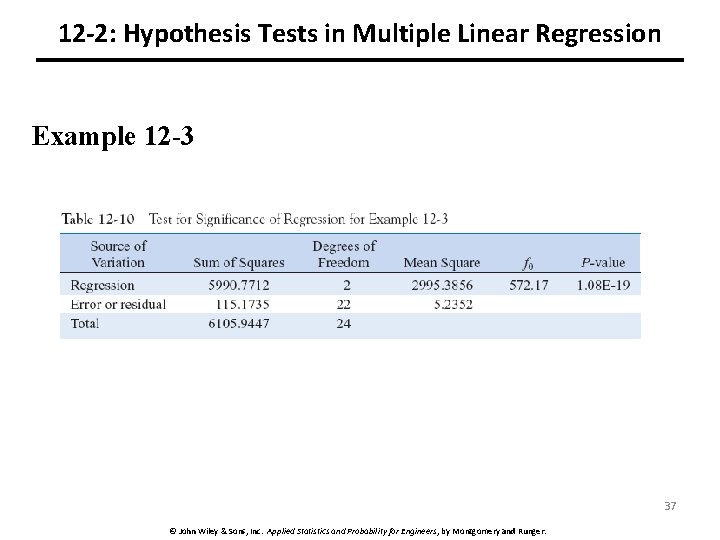

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-3

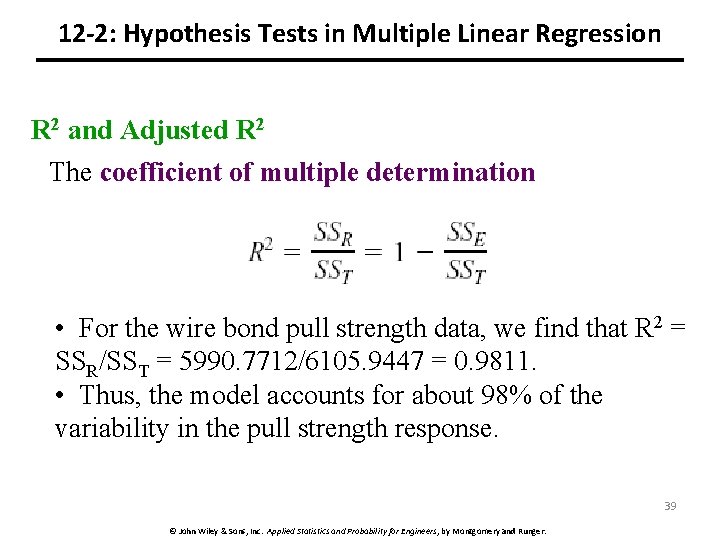

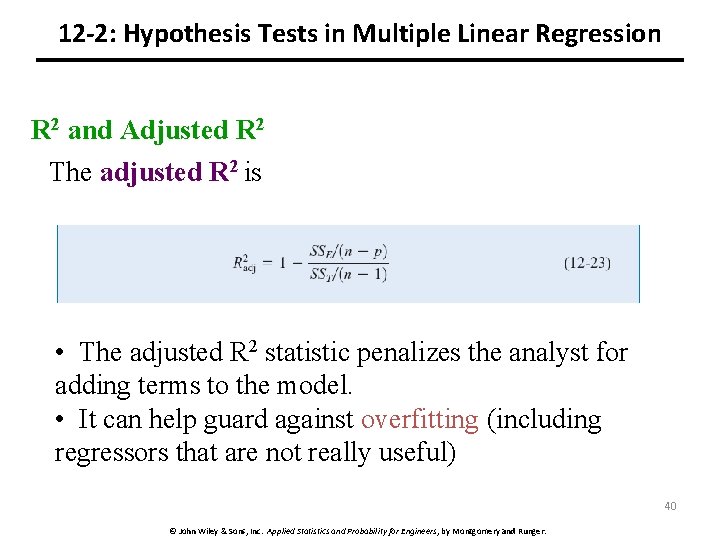

12-2: Hypothesis Tests in Multiple Linear Regression
$R^2$ and Adjusted $R^2$

- Two other ways to assess the overall adequacy of the model are $R^2$ and adjusted $R^2$, denoted $R^2_{\text{adj}}$.
- In general, $R^2$ never decreases when a regressor is added to the model, regardless of the value of the contribution of that variable. Therefore, it is difficult to judge whether an increase in $R^2$ is really telling us anything important.
- $R^2_{\text{adj}}$ will only increase on adding a variable to the model if the addition of the variable reduces the residual mean square.

12-2: Hypothesis Tests in Multiple Linear Regression
$R^2$ and Adjusted $R^2$

The coefficient of multiple determination

- For the wire bond pull strength data, we find that $R^2 = SS_R / SS_T = 5990.7712 / 6105.9447 = 0.9811$.
- Thus, the model accounts for about 98% of the variability in the pull strength response.

12-2: Hypothesis Tests in Multiple Linear Regression
$R^2$ and Adjusted $R^2$

The adjusted $R^2$ is $R^2_{\text{adj}} = 1 - \dfrac{SS_E / (n - p)}{SS_T / (n - 1)}$.

- The adjusted $R^2$ statistic penalizes the analyst for adding terms to the model.
- It can help guard against overfitting (including regressors that are not really useful).
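The arithmetic behind $R^2$ and adjusted $R^2$ can be reproduced from the sums of squares quoted on the slides. In the sketch below, $SS_R$ and $SS_T$ come from the slide on the wire bond pull strength data; the values $n = 25$ and $p = 3$ are assumptions (they are not stated on these slides), as is the standard formula $R^2_{\text{adj}} = 1 - [SS_E/(n-p)] / [SS_T/(n-1)]$.

```python
# Sums of squares quoted for the wire bond pull strength data.
ss_r = 5990.7712          # regression sum of squares
ss_t = 6105.9447          # total sum of squares
ss_e = ss_t - ss_r        # error sum of squares, by the decomposition

# Assumed values (not given on the slide): 25 observations, and
# p = 3 parameters (intercept plus two regressors).
n = 25
p = 3

r2 = ss_r / ss_t
r2_adj = 1 - (ss_e / (n - p)) / (ss_t / (n - 1))

print(f"R^2 = {r2:.4f}")
print(f"adjusted R^2 = {r2_adj:.4f}")
```

Because the penalty uses $n - p$ in the error mean square, the adjusted value is always slightly below $R^2$ when $p > 1$.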

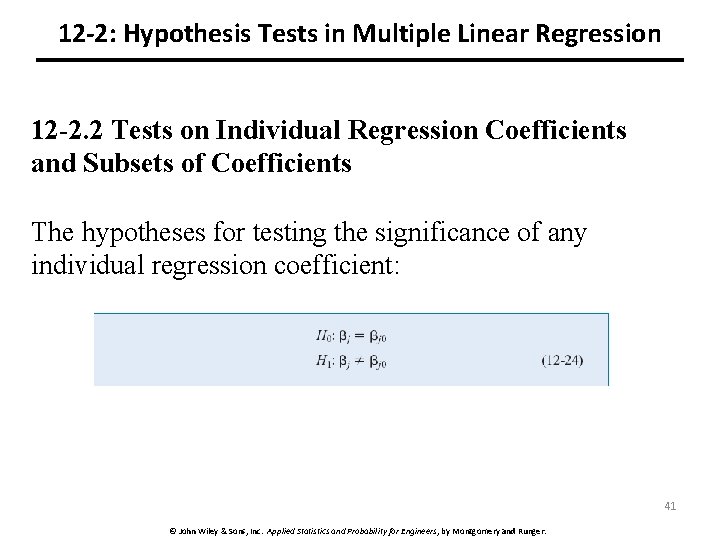

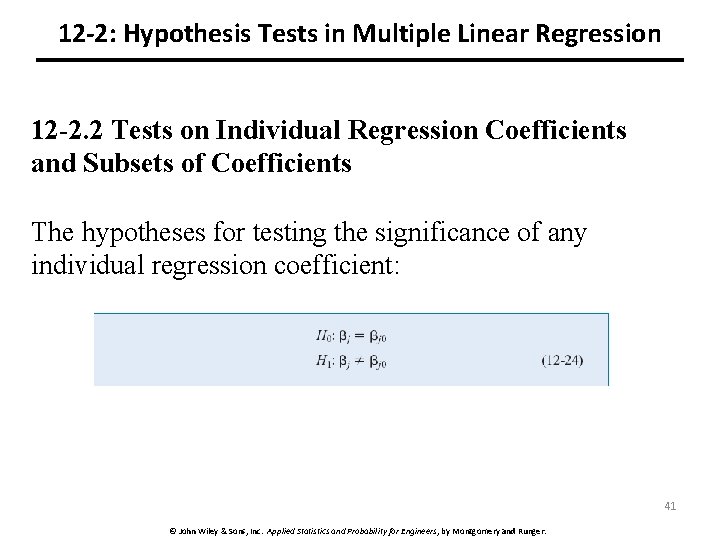

12-2: Hypothesis Tests in Multiple Linear Regression
12-2.2 Tests on Individual Regression Coefficients and Subsets of Coefficients

The hypotheses for testing the significance of any individual regression coefficient are $H_0: \beta_j = 0$ versus $H_1: \beta_j \neq 0$.

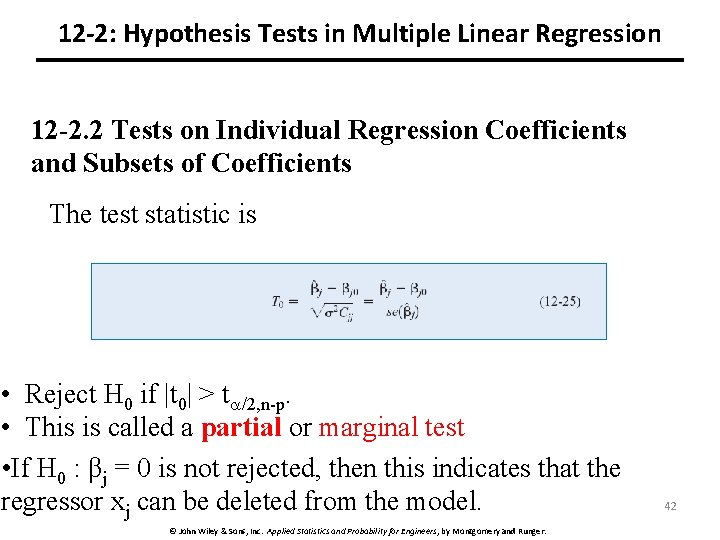

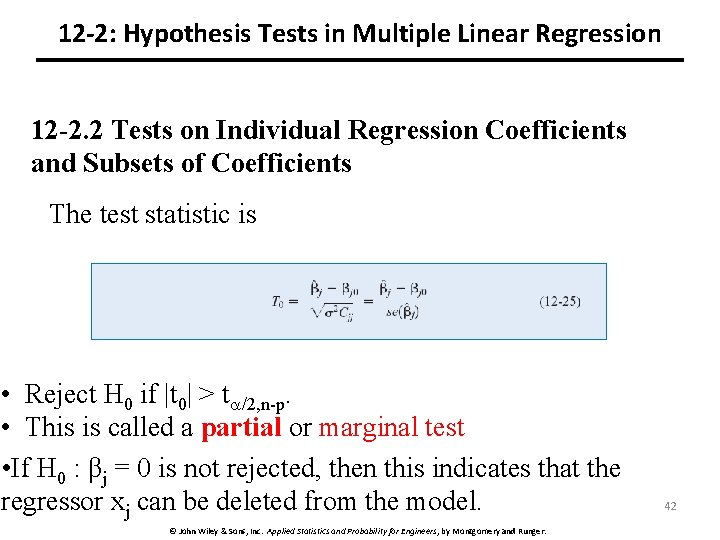

12-2: Hypothesis Tests in Multiple Linear Regression
12-2.2 Tests on Individual Regression Coefficients and Subsets of Coefficients

The test statistic is $T_0 = \dfrac{\hat\beta_j}{\sqrt{\hat\sigma^2 C_{jj}}} = \dfrac{\hat\beta_j}{se(\hat\beta_j)}$, where $C_{jj}$ is the $j$th diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$.

- Reject $H_0$ if $|t_0| > t_{\alpha/2, n-p}$.
- This is called a partial or marginal test.
- If $H_0: \beta_j = 0$ is not rejected, then this indicates that the regressor $x_j$ can be deleted from the model.
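A sketch of the marginal t-tests follows, assuming the statistic $t_0 = \hat\beta_j / \sqrt{\hat\sigma^2 C_{jj}}$ and synthetic data. The critical value `t_crit` is hard-coded from a t table for $\alpha = 0.05$ and $n - p = 5$ degrees of freedom; it would need to change for other data sets.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape

C = np.linalg.inv(X.T @ X)
beta_hat = C @ X.T @ y
residuals = y - X @ beta_hat
sigma2_hat = residuals @ residuals / (n - p)

se = np.sqrt(sigma2_hat * np.diag(C))   # standard error of each coefficient
t0 = beta_hat / se                      # one t statistic per coefficient

t_crit = 2.571                          # t_{0.025, 5} from a t table (n - p = 5)
reject = np.abs(t0) > t_crit            # True where H0: beta_j = 0 is rejected

for j in range(p):
    print(f"beta_{j}: estimate={beta_hat[j]:.4f}  t0={t0[j]:.3f}  reject H0: {reject[j]}")
```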

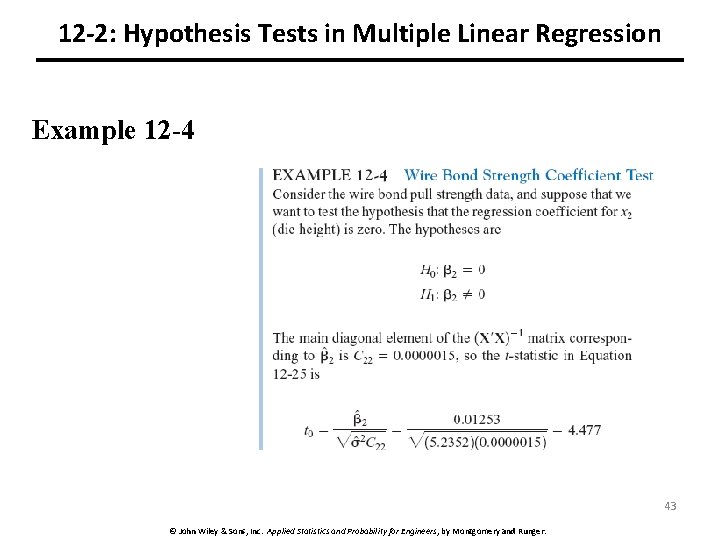

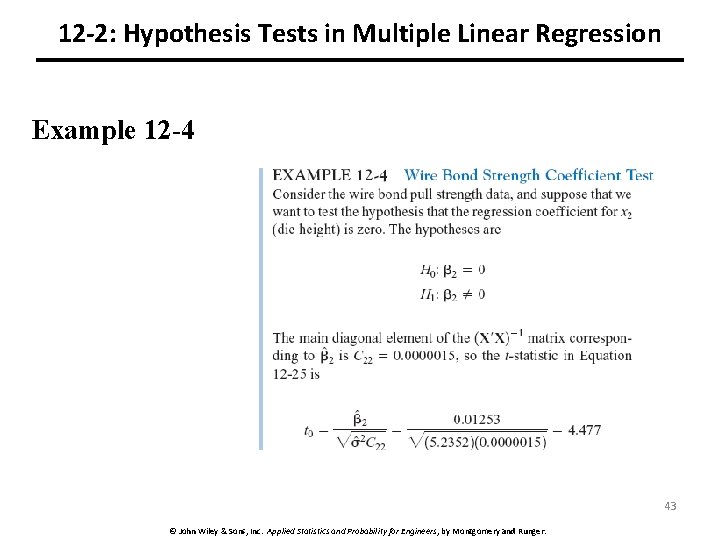

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-4

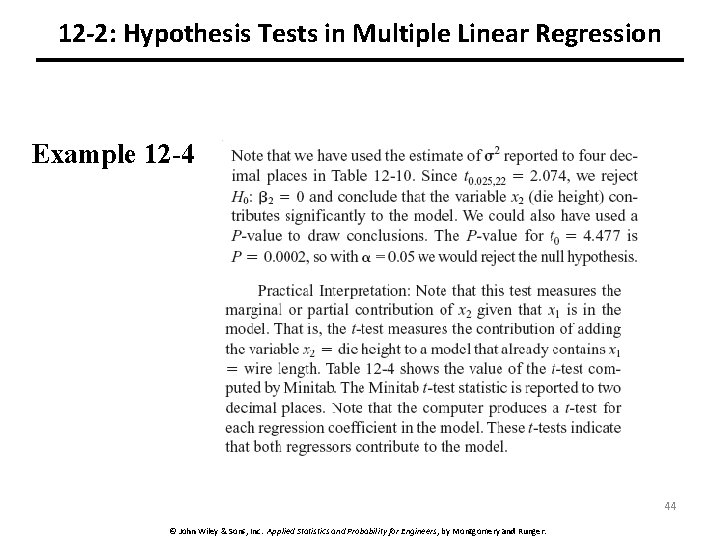

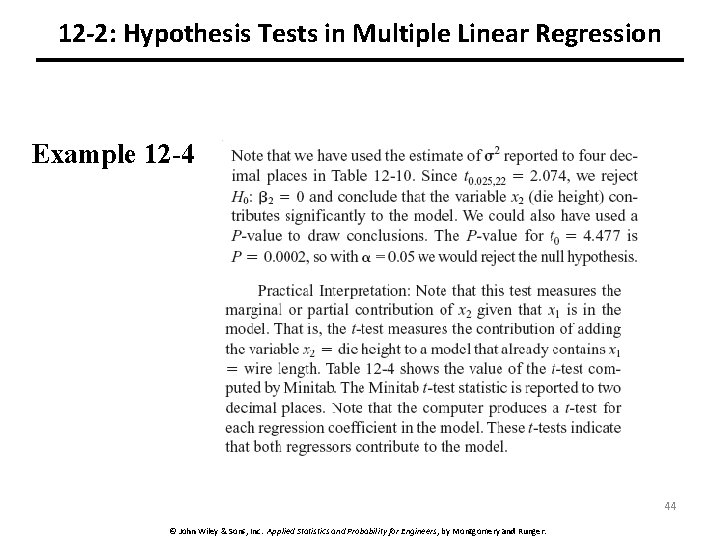

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-4

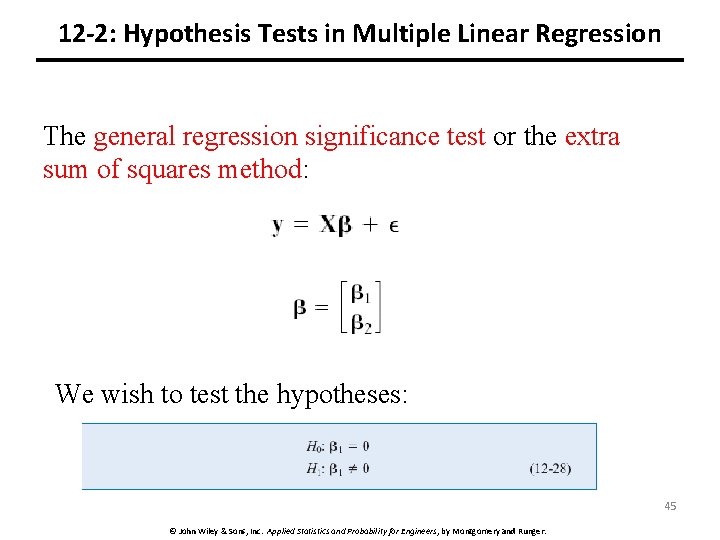

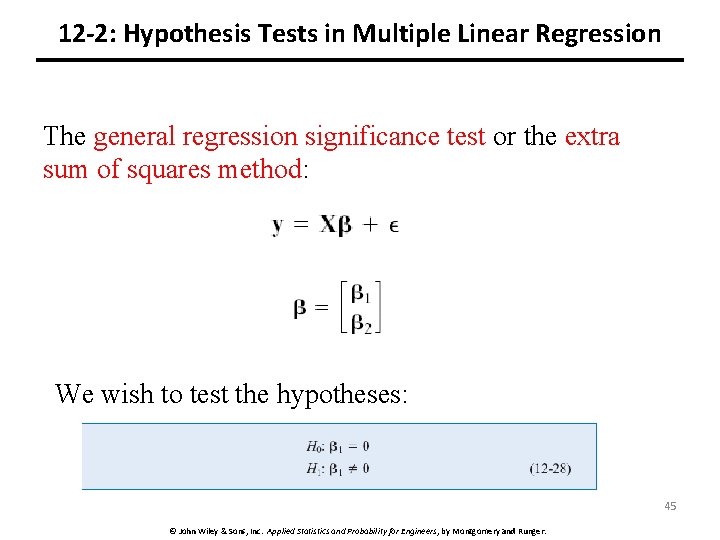

12-2: Hypothesis Tests in Multiple Linear Regression

The general regression significance test or the extra sum of squares method:

We wish to test the hypotheses $H_0: \boldsymbol{\beta}_1 = \mathbf{0}$ versus $H_1: \boldsymbol{\beta}_1 \neq \mathbf{0}$, where $\boldsymbol{\beta}_1$ is the subset of $r$ coefficients under test.

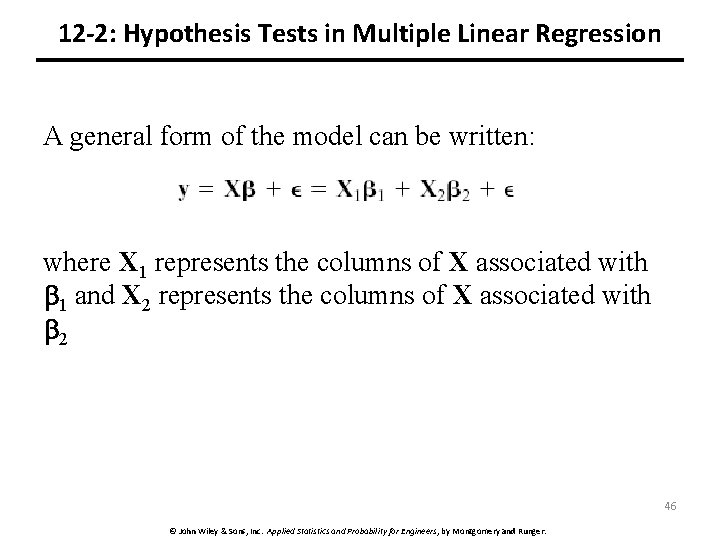

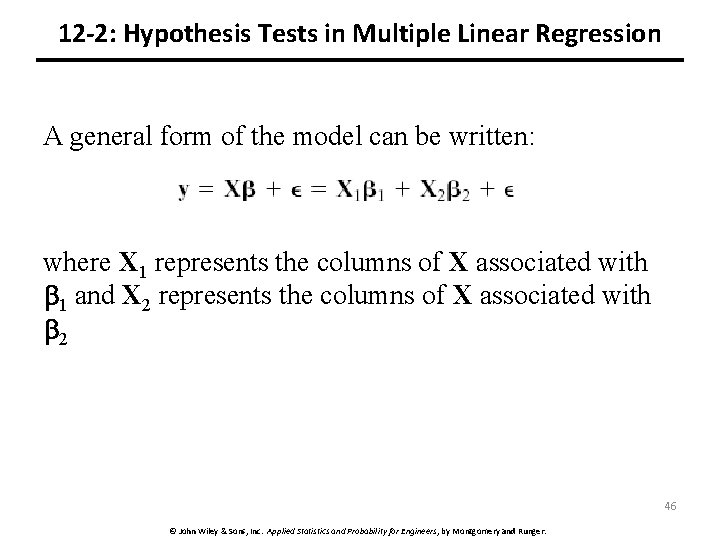

12-2: Hypothesis Tests in Multiple Linear Regression

A general form of the model can be written $\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \boldsymbol{\epsilon} = \mathbf{X}_1\boldsymbol{\beta}_1 + \mathbf{X}_2\boldsymbol{\beta}_2 + \boldsymbol{\epsilon}$, where $\mathbf{X}_1$ represents the columns of $\mathbf{X}$ associated with $\boldsymbol{\beta}_1$ and $\mathbf{X}_2$ represents the columns of $\mathbf{X}$ associated with $\boldsymbol{\beta}_2$.

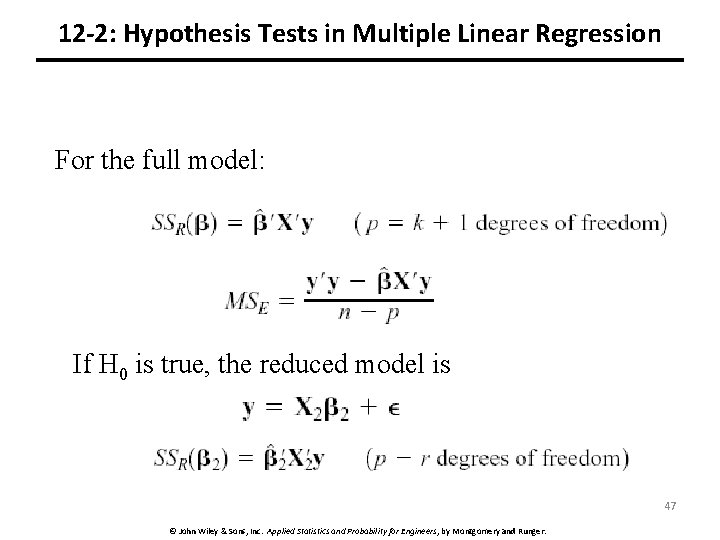

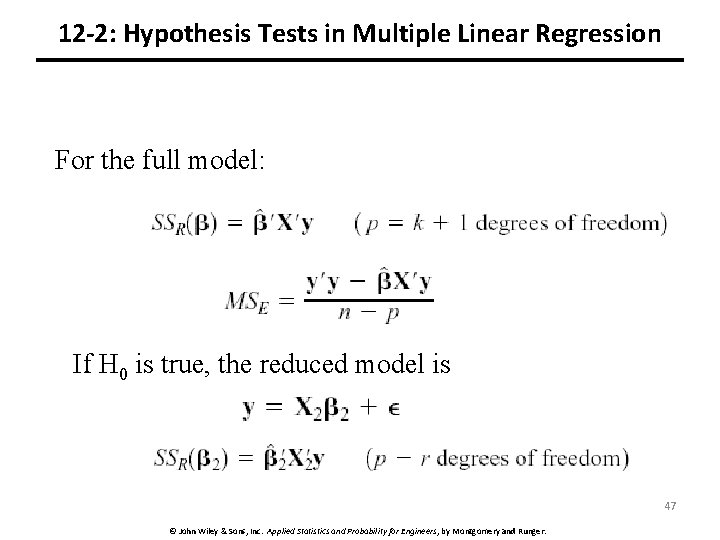

12-2: Hypothesis Tests in Multiple Linear Regression

For the full model, the regression and error sums of squares are computed from the fit with all $p$ parameters.

If $H_0$ is true, the reduced model is $\mathbf{y} = \mathbf{X}_2\boldsymbol{\beta}_2 + \boldsymbol{\epsilon}$.

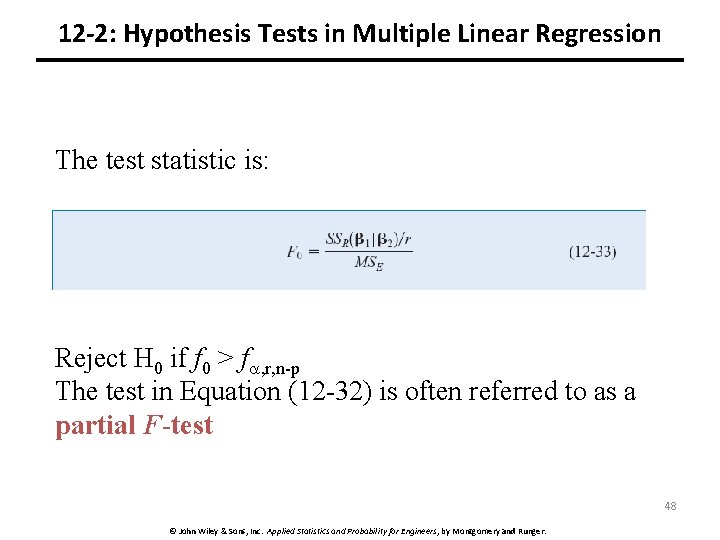

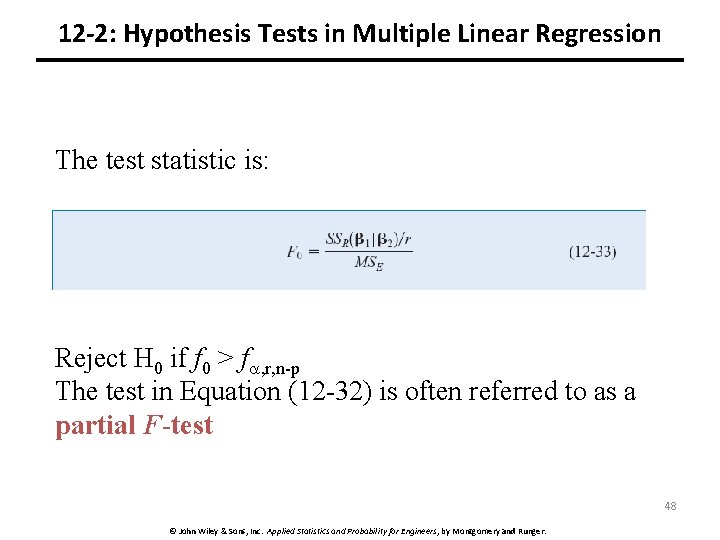

12-2: Hypothesis Tests in Multiple Linear Regression

The test statistic is $F_0 = \dfrac{SS_R(\boldsymbol{\beta}_1 \mid \boldsymbol{\beta}_2) / r}{MS_E}$.

Reject $H_0$ if $f_0 > f_{\alpha, r, n-p}$. The test in Equation (12-32) is often referred to as a partial F-test.
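The extra sum of squares idea can be sketched by fitting a full and a reduced model and comparing their residual sums of squares. The example below assumes synthetic data and tests a single coefficient ($r = 1$), so the extra sum of squares is computed as $SS_E(\text{reduced}) - SS_E(\text{full})$, which is valid here because both models contain an intercept. The helper `sse` is a hypothetical name introduced for this illustration.

```python
import numpy as np

def sse(X, y):
    """Residual sum of squares from a least squares fit of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

ones = np.ones_like(x1)
X_full = np.column_stack([ones, x1, x2])   # full model
X_red = np.column_stack([ones, x1])        # reduced model if the x2 coefficient is 0

n, p = X_full.shape
r = X_full.shape[1] - X_red.shape[1]       # number of coefficients under test

sse_full = sse(X_full, y)
sse_red = sse(X_red, y)

extra_ss = sse_red - sse_full              # extra sum of squares due to x2
f0 = (extra_ss / r) / (sse_full / (n - p)) # partial F statistic
print(f"extra SS = {extra_ss:.4f}, F0 = {f0:.3f}")
```

With $r = 1$ the partial F statistic equals the square of the corresponding t statistic, which gives a convenient consistency check.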

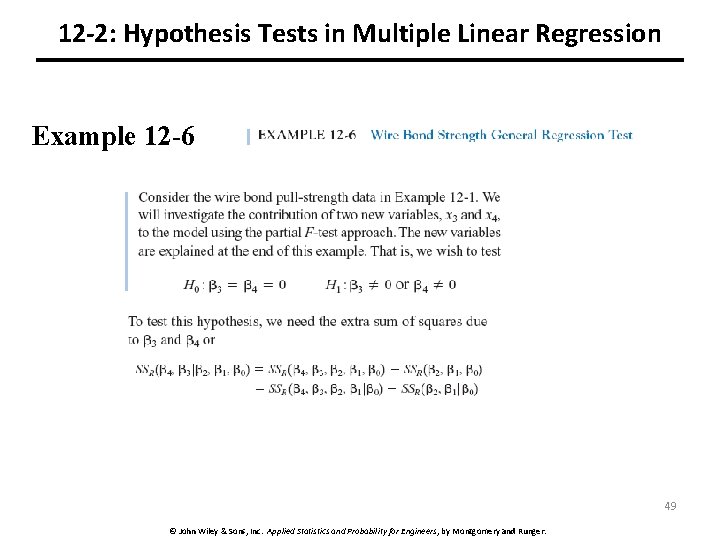

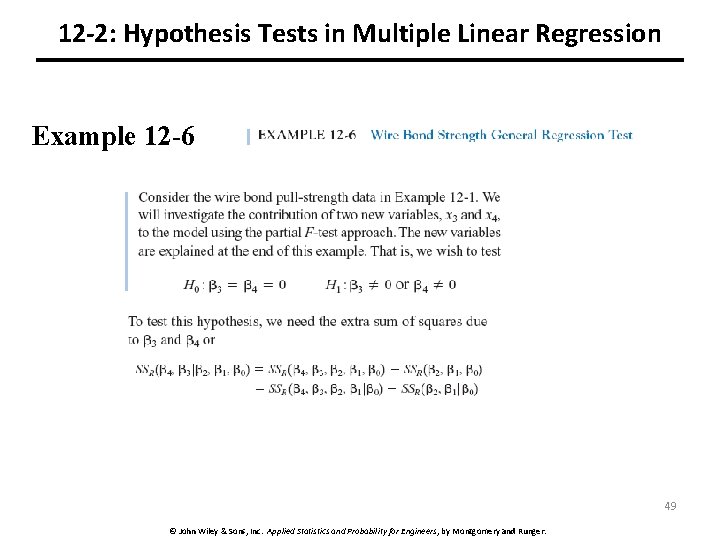

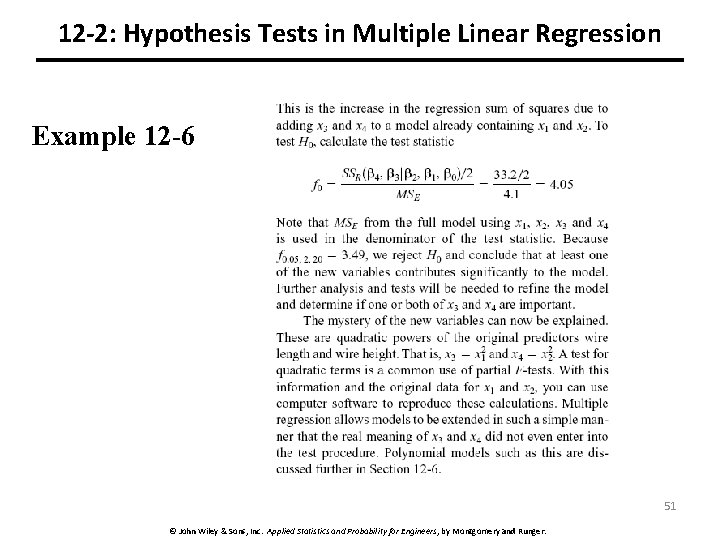

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-6

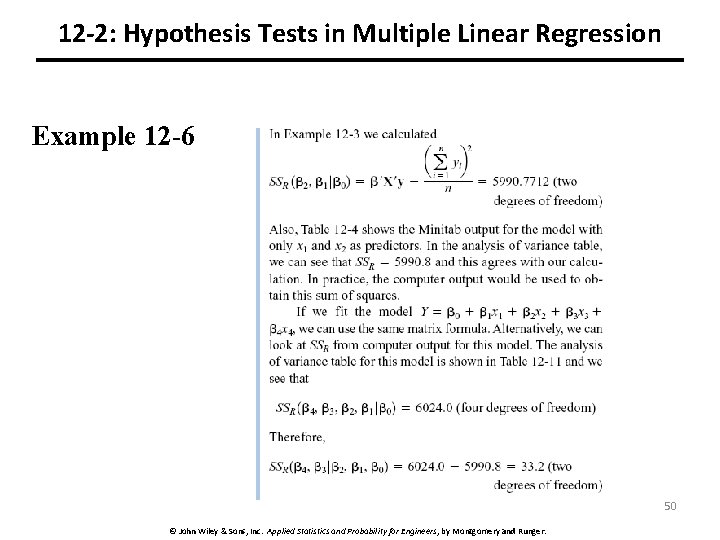

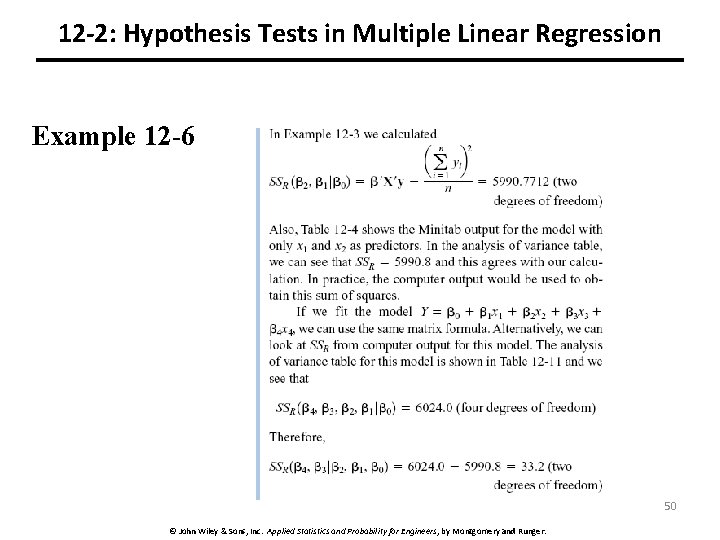

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-6

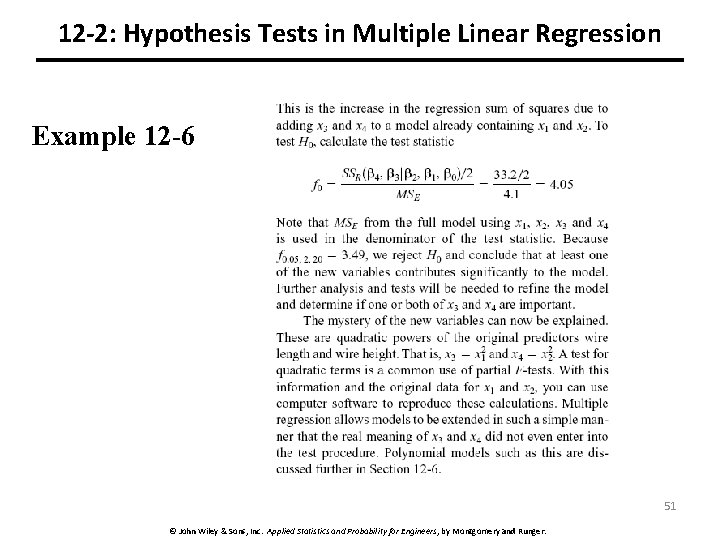

12-2: Hypothesis Tests in Multiple Linear Regression
Example 12-6

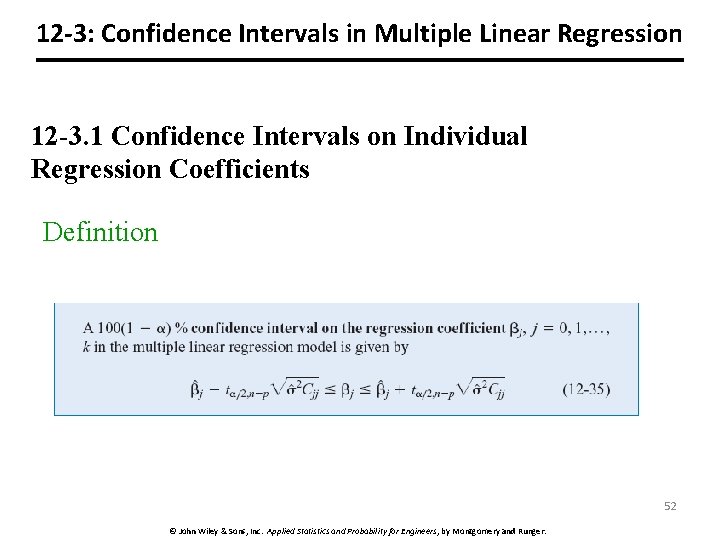

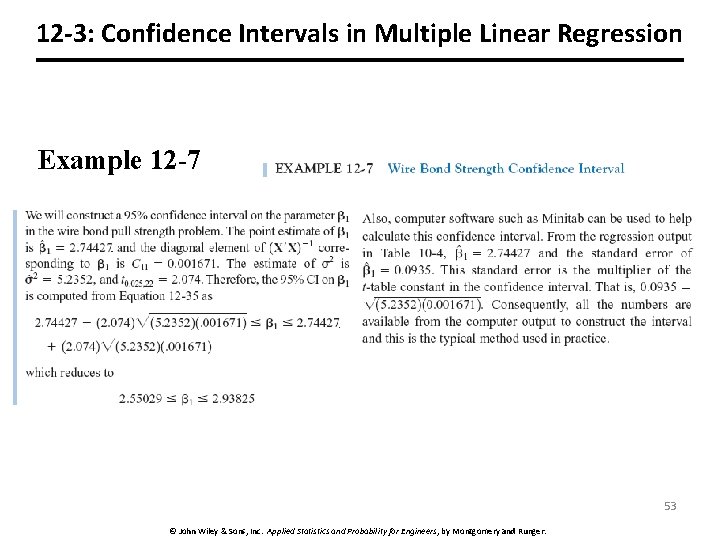

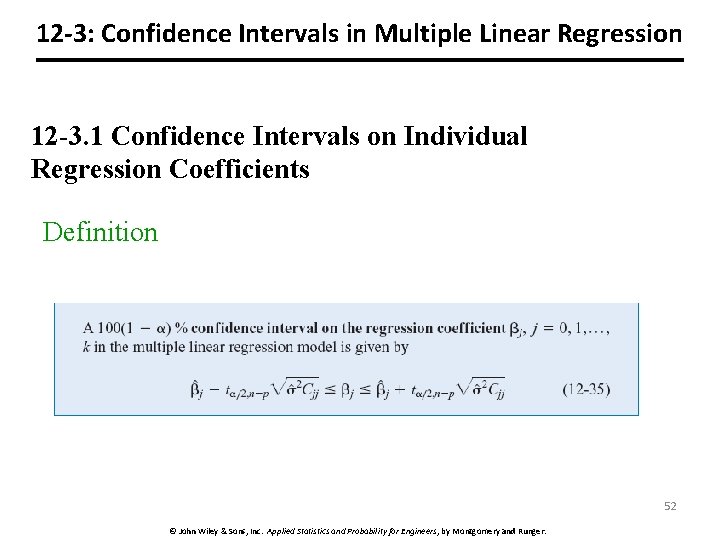

12-3: Confidence Intervals in Multiple Linear Regression
12-3.1 Confidence Intervals on Individual Regression Coefficients

Definition

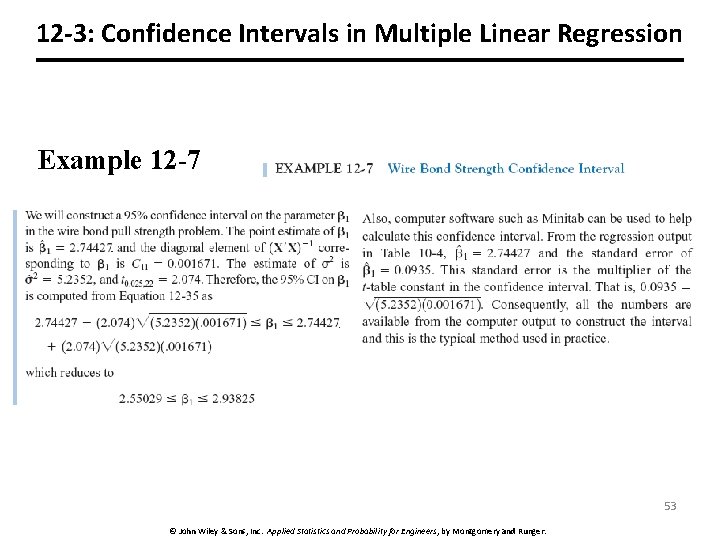

12-3: Confidence Intervals in Multiple Linear Regression
Example 12-7

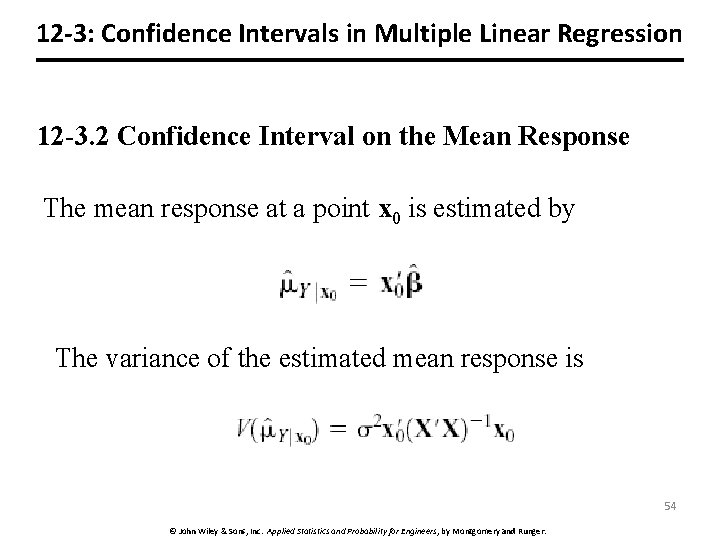

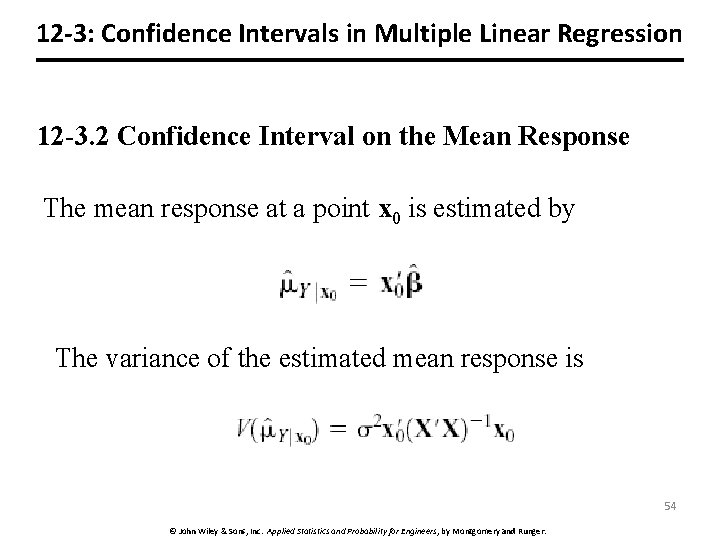

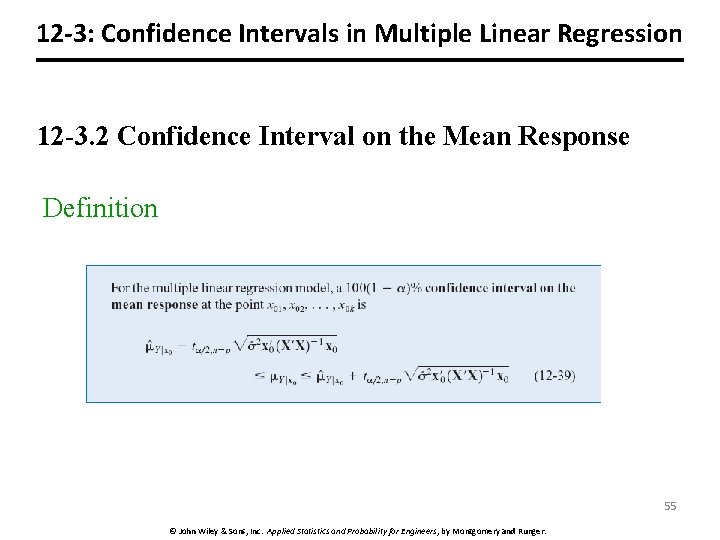

12-3: Confidence Intervals in Multiple Linear Regression
12-3.2 Confidence Interval on the Mean Response

The mean response at a point $\mathbf{x}_0$ is estimated by $\hat\mu_{Y \mid \mathbf{x}_0} = \mathbf{x}_0'\hat{\boldsymbol{\beta}}$.

The variance of the estimated mean response is $V(\hat\mu_{Y \mid \mathbf{x}_0}) = \sigma^2 \mathbf{x}_0'(\mathbf{X}'\mathbf{X})^{-1}\mathbf{x}_0$.

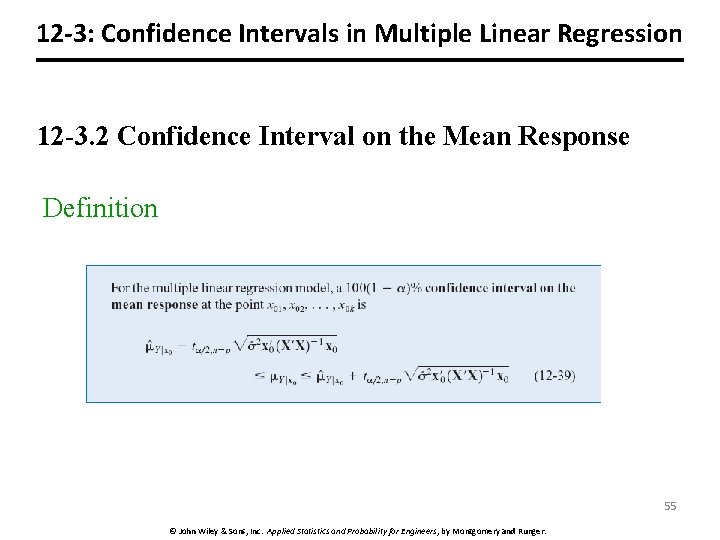

12-3: Confidence Intervals in Multiple Linear Regression
12-3.2 Confidence Interval on the Mean Response

Definition

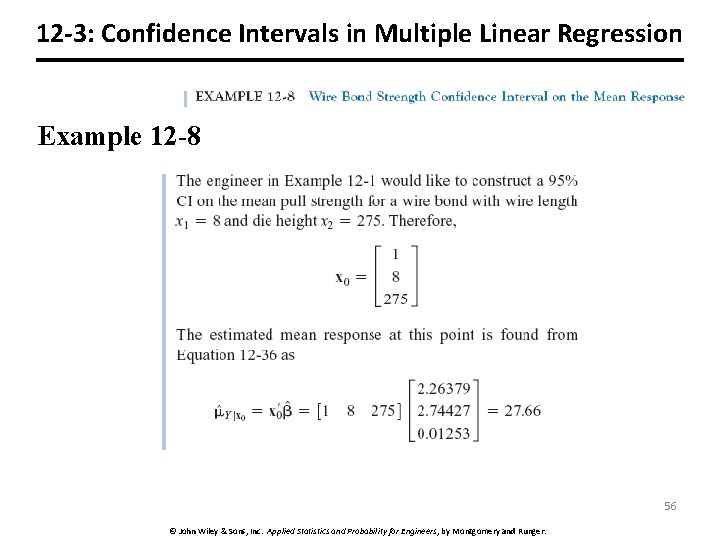

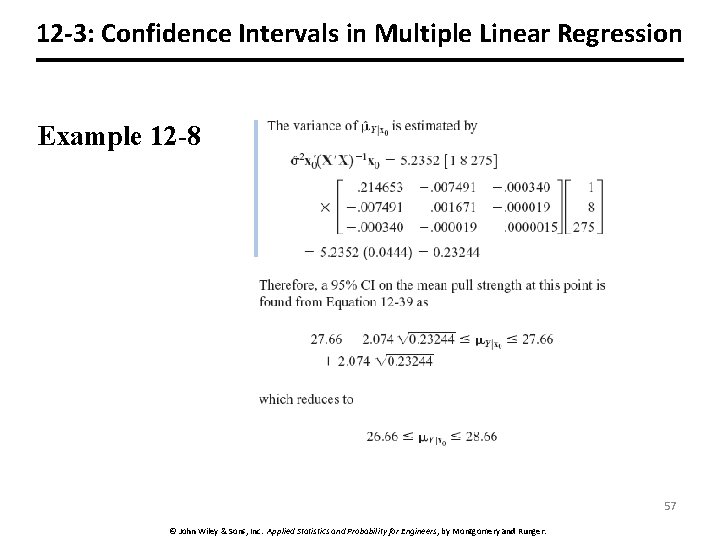

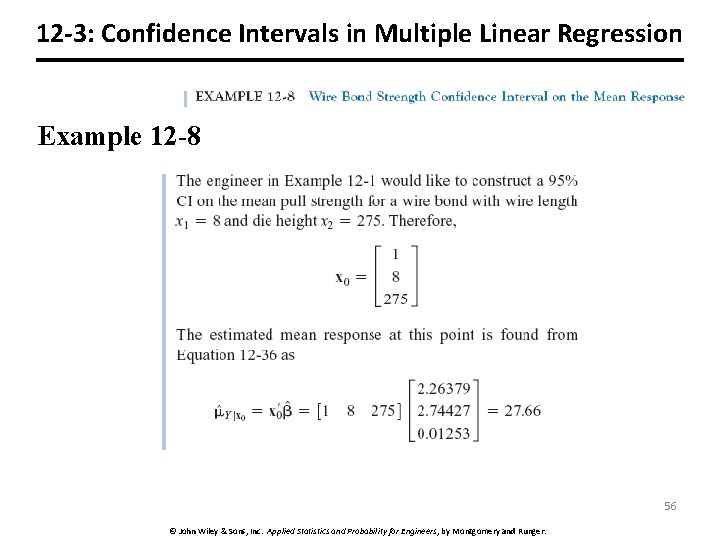

12-3: Confidence Intervals in Multiple Linear Regression
Example 12-8

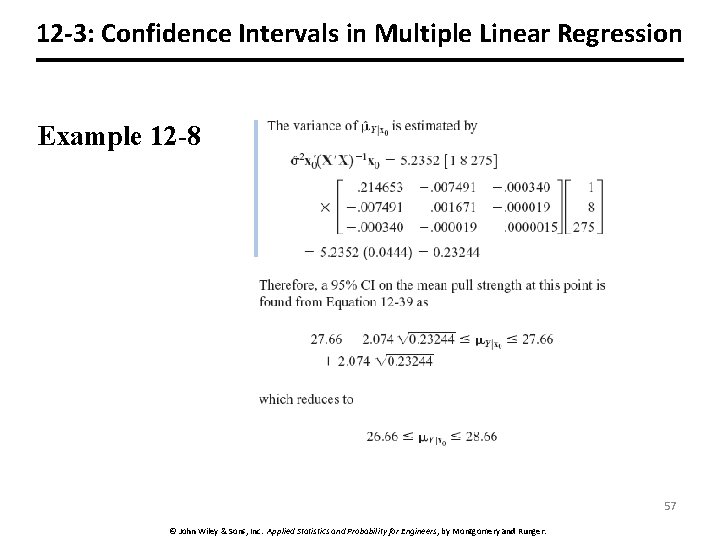

12-3: Confidence Intervals in Multiple Linear Regression
Example 12-8

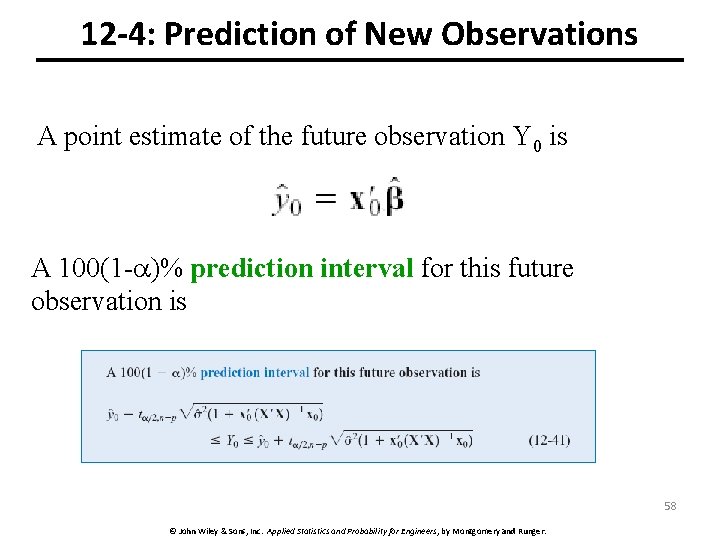

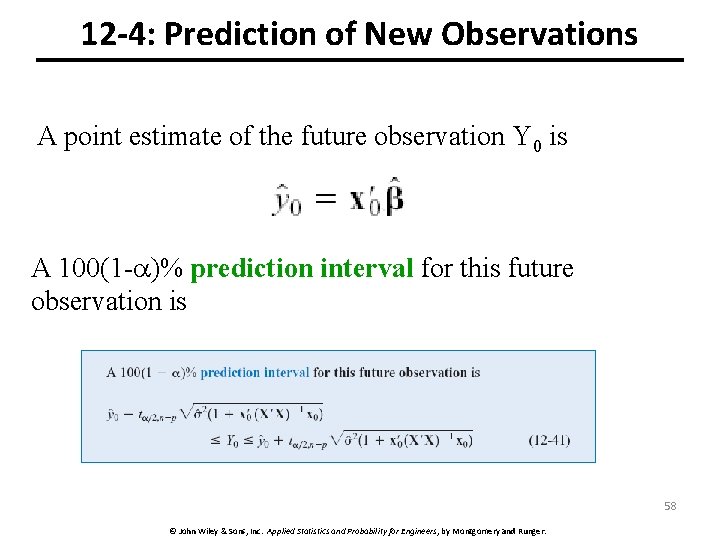

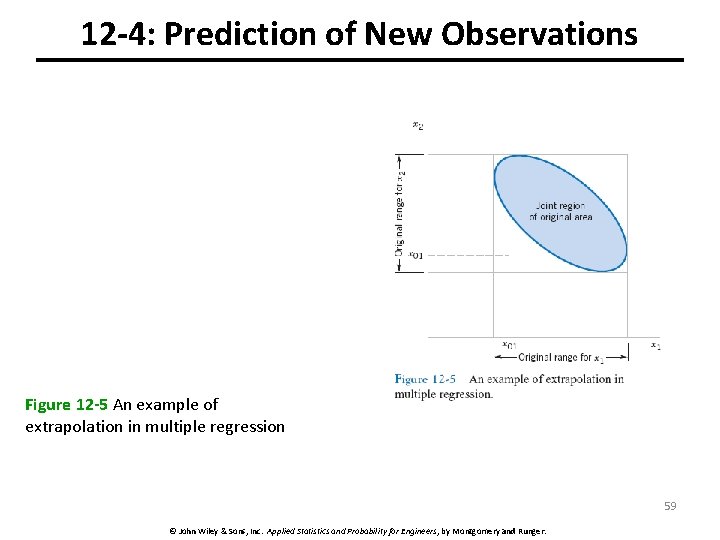

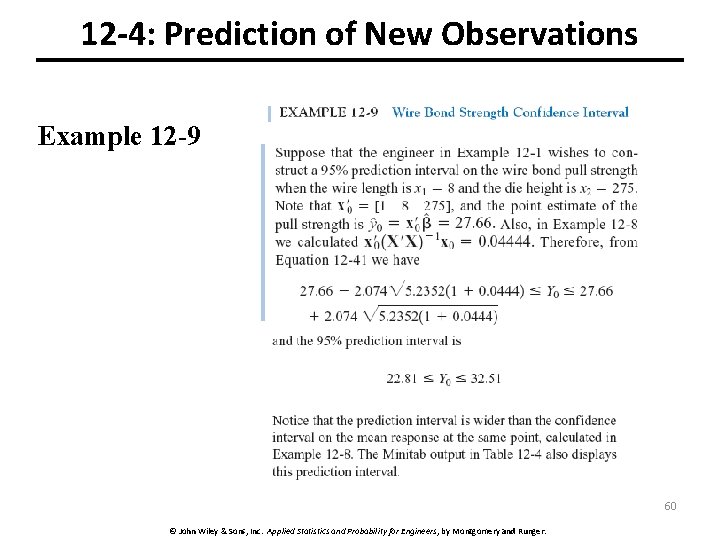

12-4: Prediction of New Observations

A point estimate of the future observation $Y_0$ is $\hat y_0 = \mathbf{x}_0'\hat{\boldsymbol{\beta}}$.

A $100(1 - \alpha)\%$ prediction interval for this future observation is $\hat y_0 \pm t_{\alpha/2, n-p} \sqrt{\hat\sigma^2 \left(1 + \mathbf{x}_0'(\mathbf{X}'\mathbf{X})^{-1}\mathbf{x}_0\right)}$.
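To see how a prediction interval differs from a confidence interval on the mean response, the sketch below computes both half-widths at one new point. It assumes synthetic data, a hypothetical new point `x0`, and the standard forms $t\sqrt{\hat\sigma^2\, \mathbf{x}_0'(\mathbf{X}'\mathbf{X})^{-1}\mathbf{x}_0}$ and $t\sqrt{\hat\sigma^2 (1 + \mathbf{x}_0'(\mathbf{X}'\mathbf{X})^{-1}\mathbf{x}_0)}$. The t value is hard-coded from a table for 5 degrees of freedom.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape

C = np.linalg.inv(X.T @ X)
beta_hat = C @ X.T @ y
residuals = y - X @ beta_hat
sigma2_hat = residuals @ residuals / (n - p)

# Hypothetical new point: leading 1 for the intercept, then x1 and x2.
x0 = np.array([1.0, 4.5, 4.0])
y0_hat = x0 @ beta_hat                 # point estimate at x0
q = x0 @ C @ x0                        # x0' (X'X)^{-1} x0

t_crit = 2.571                         # t_{0.025, 5} from a t table (n - p = 5)
ci_half = t_crit * np.sqrt(sigma2_hat * q)         # mean response
pi_half = t_crit * np.sqrt(sigma2_hat * (1 + q))   # new observation

print(f"95% CI on mean response: {y0_hat - ci_half:.3f} to {y0_hat + ci_half:.3f}")
print(f"95% prediction interval: {y0_hat - pi_half:.3f} to {y0_hat + pi_half:.3f}")
```

The extra 1 under the square root accounts for the variability of the new observation itself, so the prediction interval is always the wider of the two.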

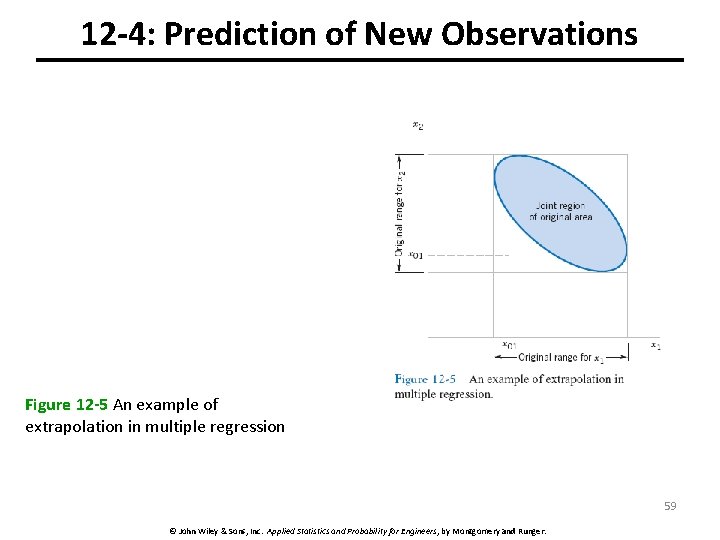

12-4: Prediction of New Observations

Figure 12-5 An example of extrapolation in multiple regression.

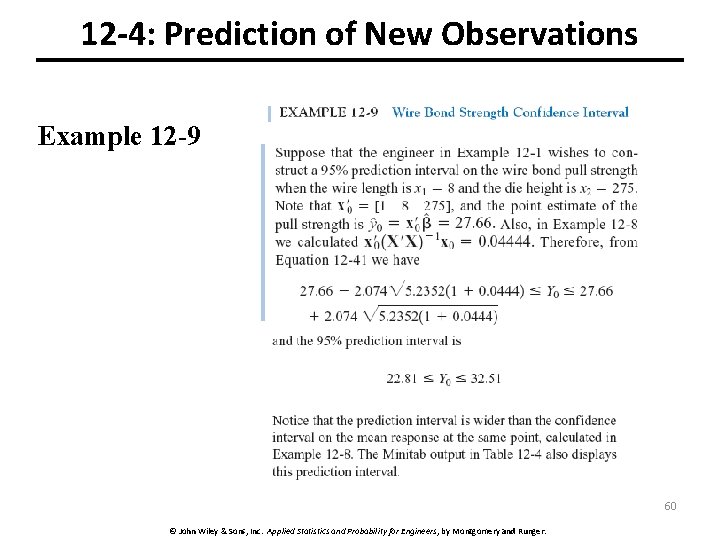

12-4: Prediction of New Observations
Example 12-9

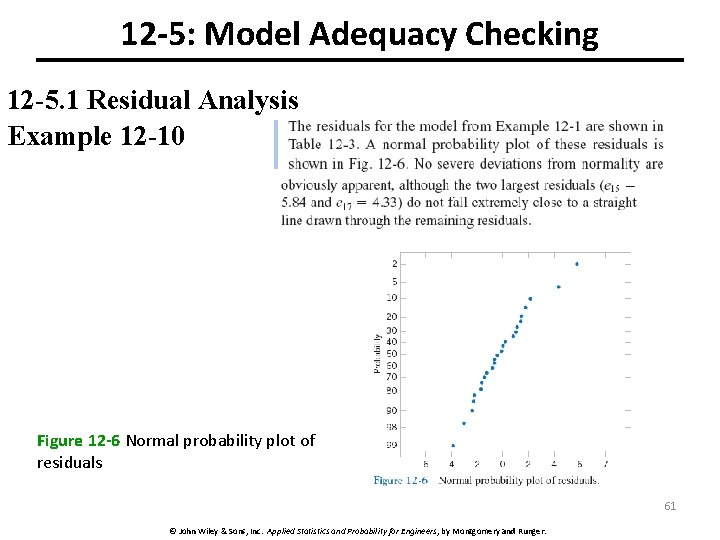

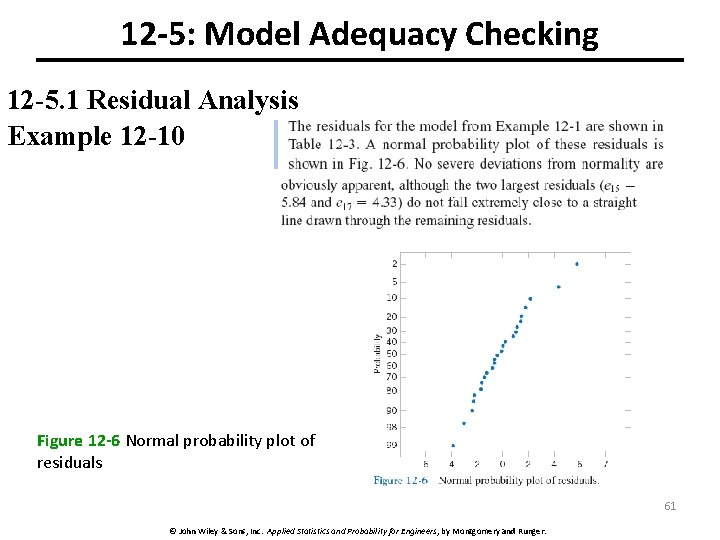

12-5: Model Adequacy Checking
12-5.1 Residual Analysis
Example 12-10

Figure 12-6 Normal probability plot of residuals.

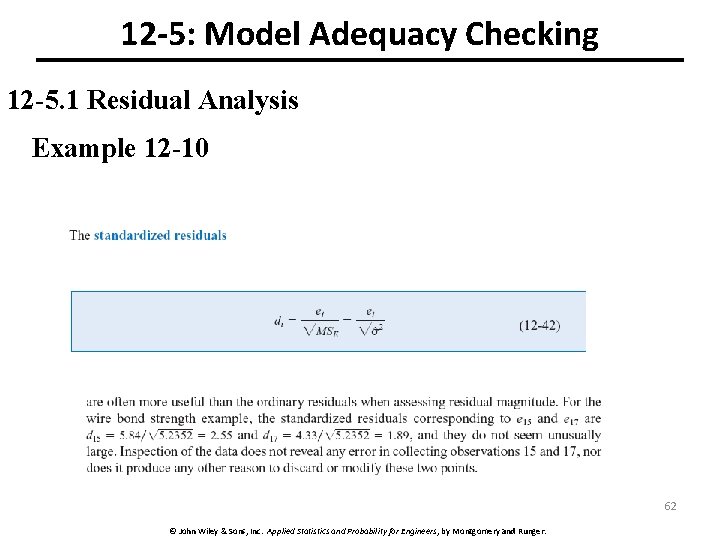

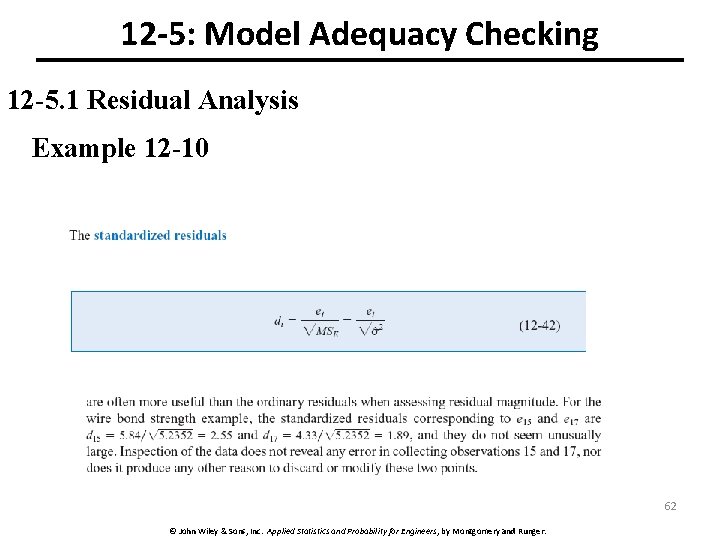

12-5: Model Adequacy Checking
12-5.1 Residual Analysis
Example 12-10

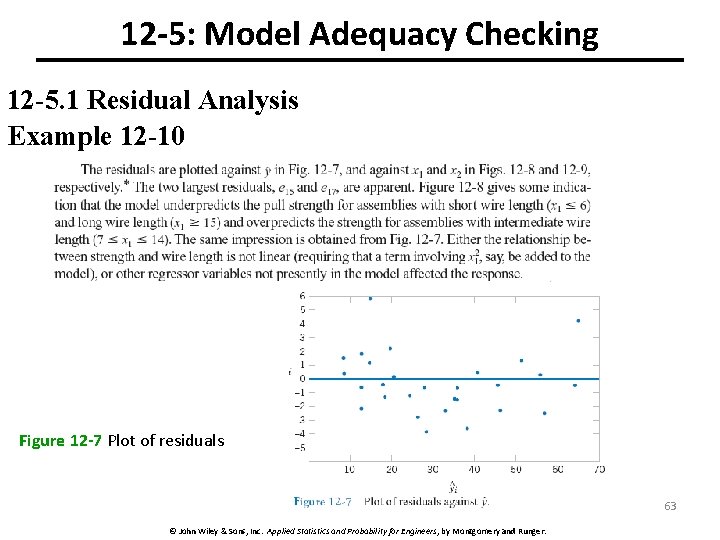

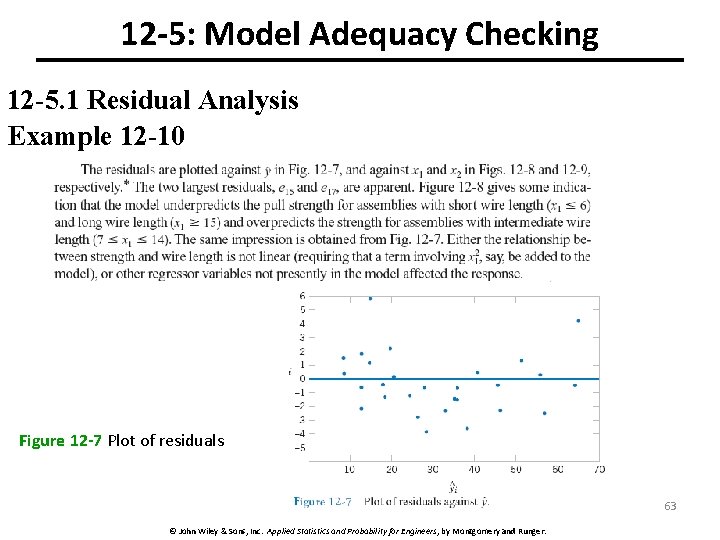

12-5: Model Adequacy Checking
12-5.1 Residual Analysis
Example 12-10

Figure 12-7 Plot of residuals.

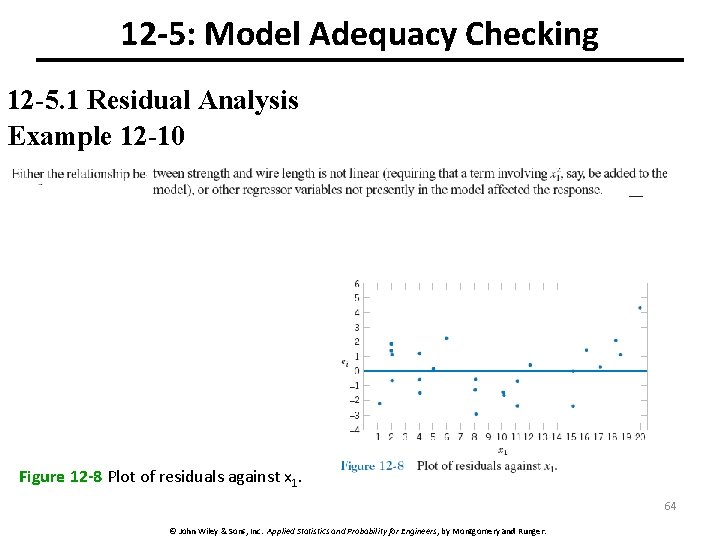

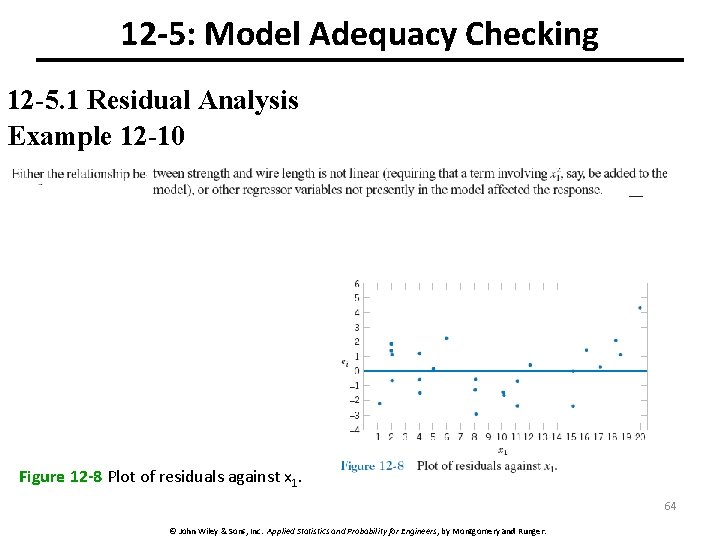

12-5: Model Adequacy Checking
12-5.1 Residual Analysis
Example 12-10

Figure 12-8 Plot of residuals against $x_1$.

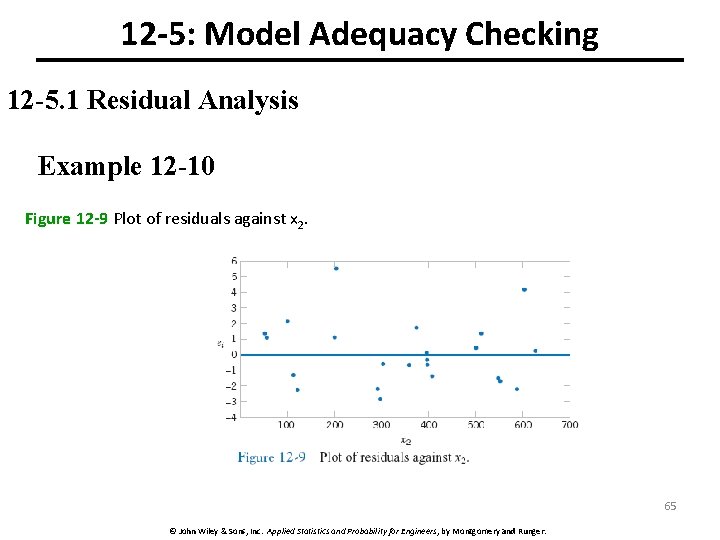

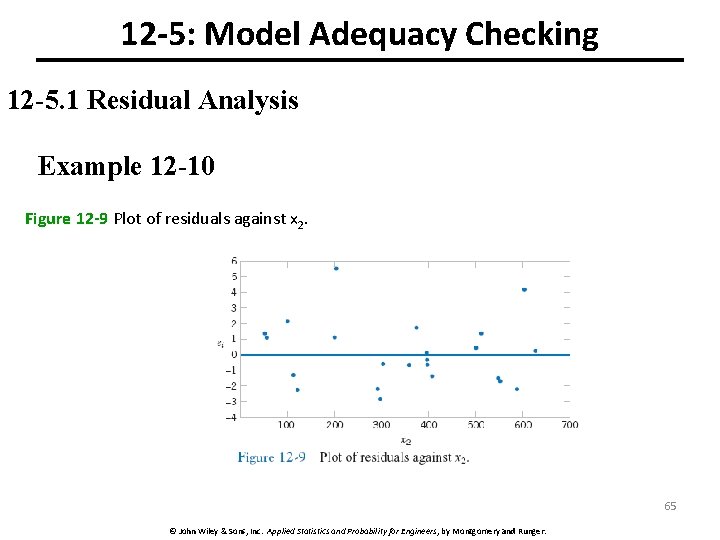

12-5: Model Adequacy Checking
12-5.1 Residual Analysis
Example 12-10

Figure 12-9 Plot of residuals against $x_2$.

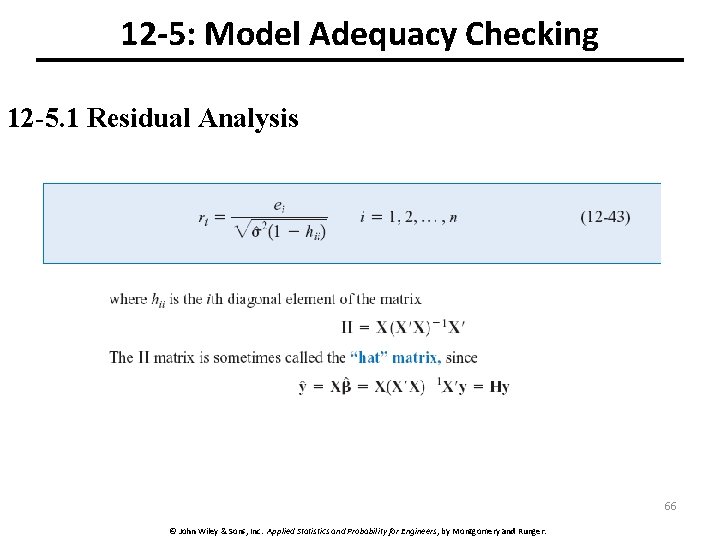

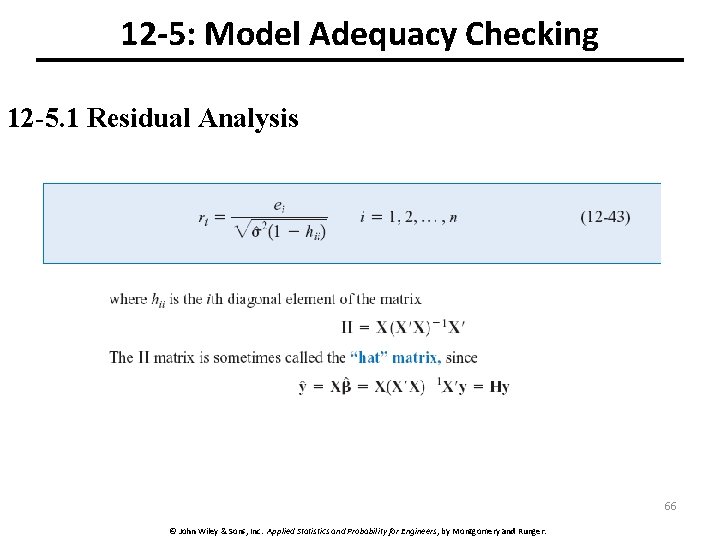

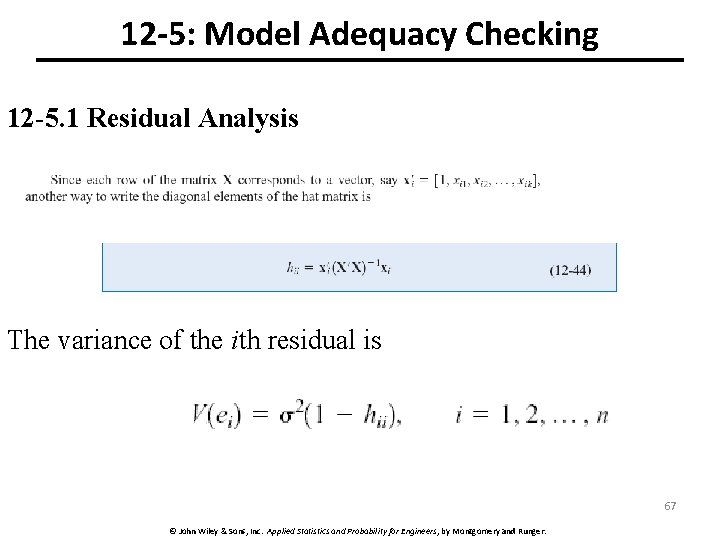

12-5: Model Adequacy Checking
12-5.1 Residual Analysis

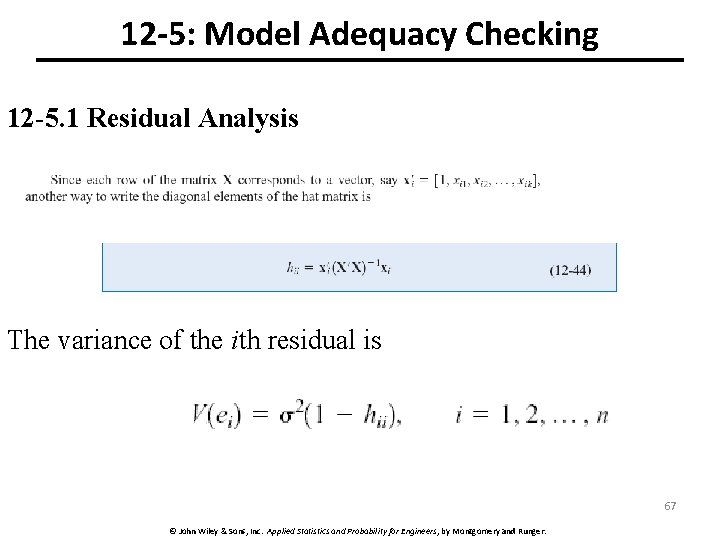

12-5: Model Adequacy Checking
12-5.1 Residual Analysis

The variance of the $i$th residual is $V(e_i) = \sigma^2 (1 - h_{ii})$, $i = 1, 2, \ldots, n$, where $h_{ii}$ is the $i$th diagonal element of the hat matrix $\mathbf{H} = \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$.
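Because the residual variance depends on the leverage $h_{ii}$, residuals are often scaled before plotting. The following sketch, on synthetic data, computes the hat matrix diagonal, the standardized residuals $d_i = e_i / \sqrt{\hat\sigma^2}$, and the studentized residuals $r_i = e_i / \sqrt{\hat\sigma^2 (1 - h_{ii})}$. The variable names are illustrative assumptions.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape

H = X @ np.linalg.inv(X.T @ X) @ X.T   # hat matrix: y_hat = H y
h = np.diag(H)                         # leverages h_ii

residuals = y - H @ y
sigma2_hat = residuals @ residuals / (n - p)

d = residuals / np.sqrt(sigma2_hat)               # standardized residuals
r = residuals / np.sqrt(sigma2_hat * (1 - h))     # studentized residuals

for i in range(n):
    print(f"obs {i + 1}: h_ii={h[i]:.3f}  d_i={d[i]:.3f}  r_i={r[i]:.3f}")
```

The leverages sum to $p$, so a point with $h_{ii}$ well above $p/n$ is remote in x-space relative to the rest of the data.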

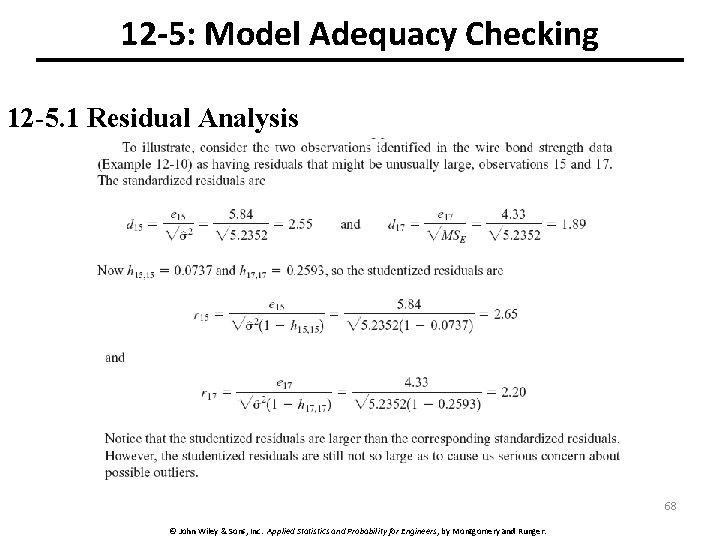

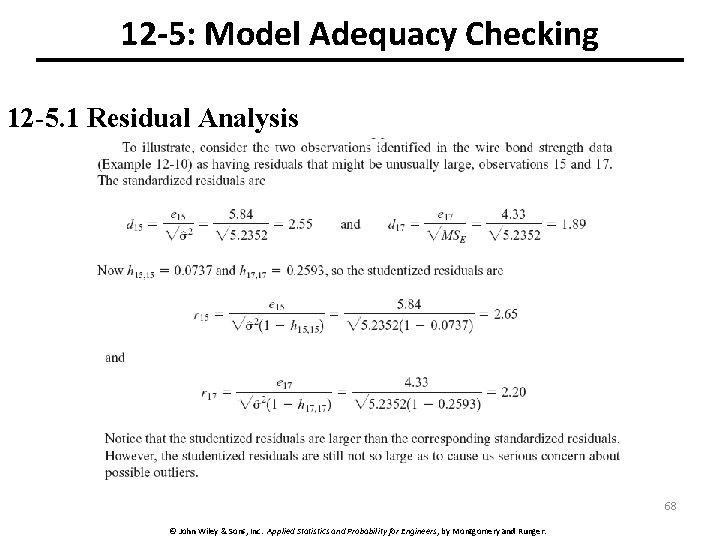

12-5: Model Adequacy Checking
12-5.1 Residual Analysis

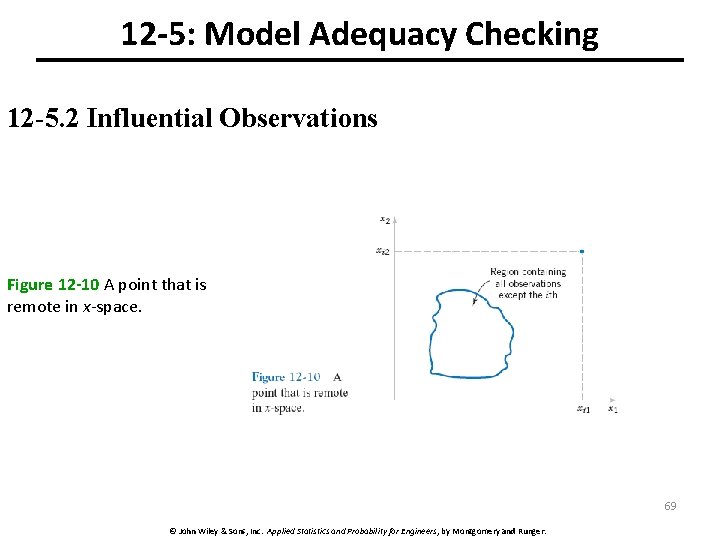

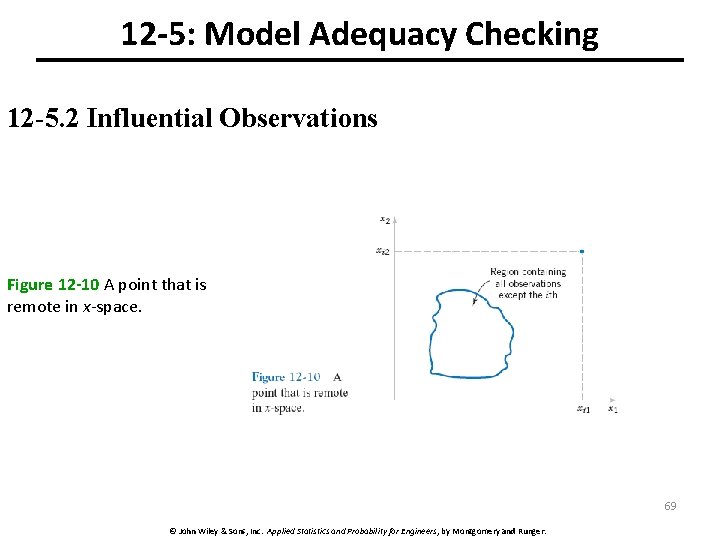

12-5: Model Adequacy Checking
12-5.2 Influential Observations

Figure 12-10 A point that is remote in x-space.

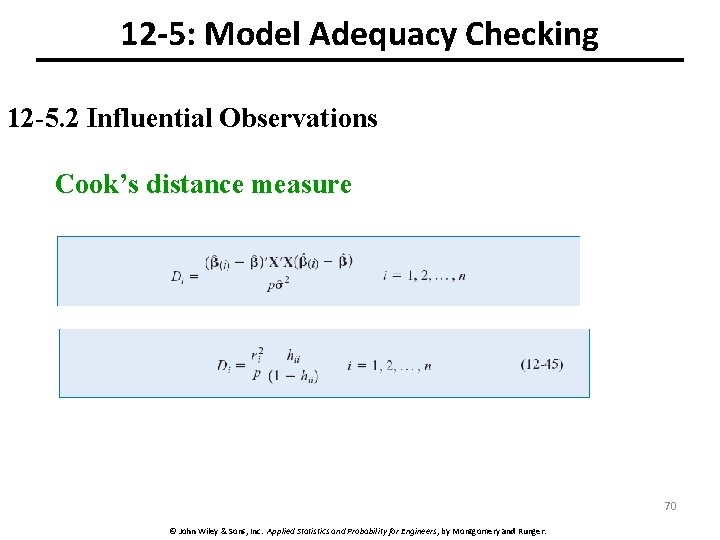

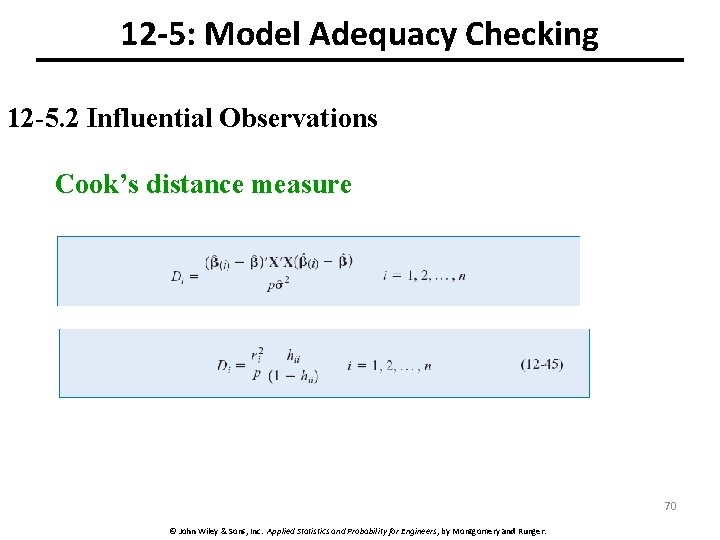

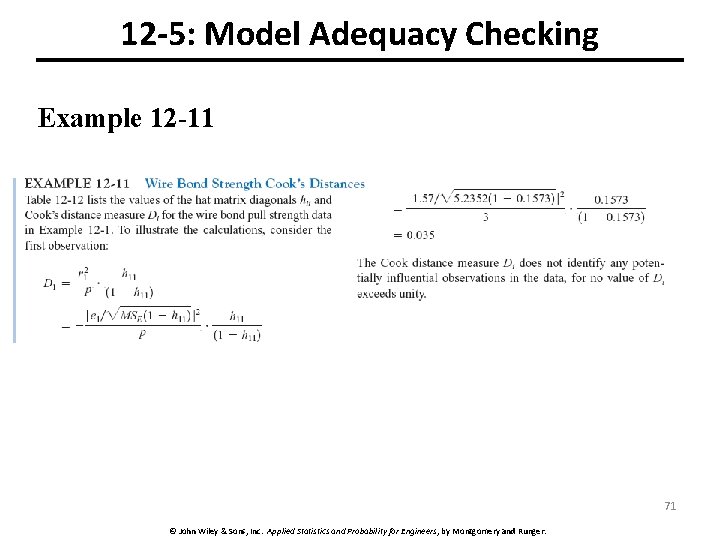

12-5: Model Adequacy Checking
12-5.2 Influential Observations

Cook's distance measure
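Cook's distance can be computed without refitting the model for each deleted point. The sketch below assumes the common computational form $D_i = \dfrac{r_i^2}{p} \cdot \dfrac{h_{ii}}{1 - h_{ii}}$, where $r_i$ is the studentized residual and $h_{ii}$ the leverage; the data are synthetic and the names are illustrative.

```python
import numpy as np

# Synthetic data (illustrative only).
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
y = np.array([6.2, 5.9, 11.8, 9.7, 15.3, 23.1, 14.2, 21.0])

X = np.column_stack([np.ones_like(x1), x1, x2])
n, p = X.shape

H = X @ np.linalg.inv(X.T @ X) @ X.T
h = np.diag(H)                                   # leverages
residuals = y - H @ y
sigma2_hat = residuals @ residuals / (n - p)

r = residuals / np.sqrt(sigma2_hat * (1 - h))    # studentized residuals
cooks_d = (r ** 2 / p) * h / (1 - h)             # Cook's distance per observation

for i in range(n):
    print(f"obs {i + 1}: D_i = {cooks_d[i]:.4f}")
```

A frequently quoted rule of thumb treats values of $D_i$ greater than 1 as a sign that the point is influential; this threshold is a convention rather than a formal test.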

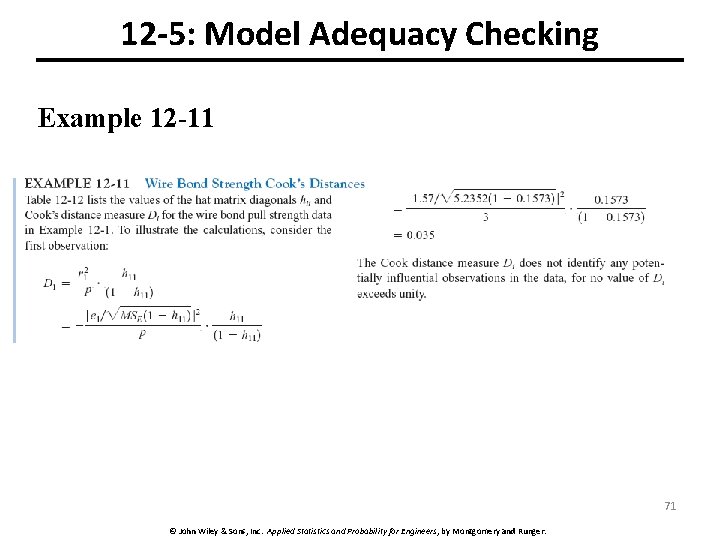

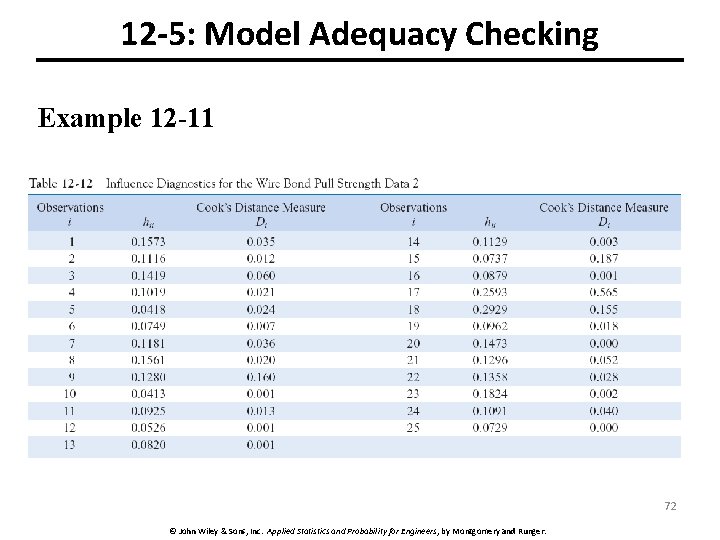

12-5: Model Adequacy Checking
Example 12-11

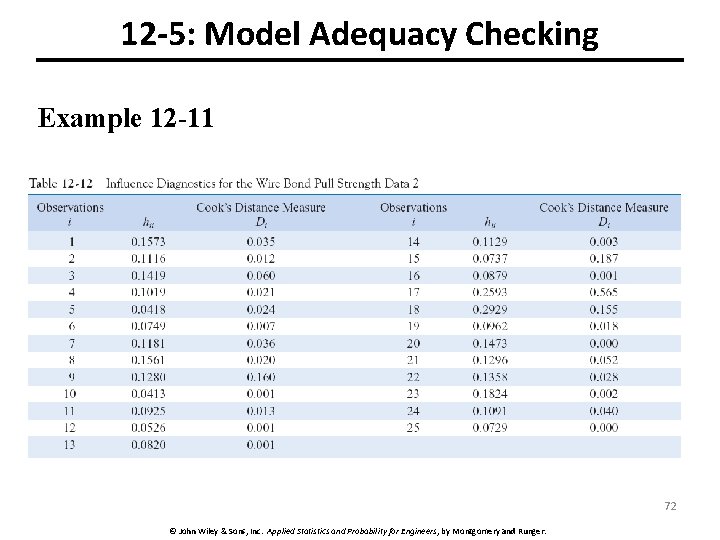

12-5: Model Adequacy Checking
Example 12-11

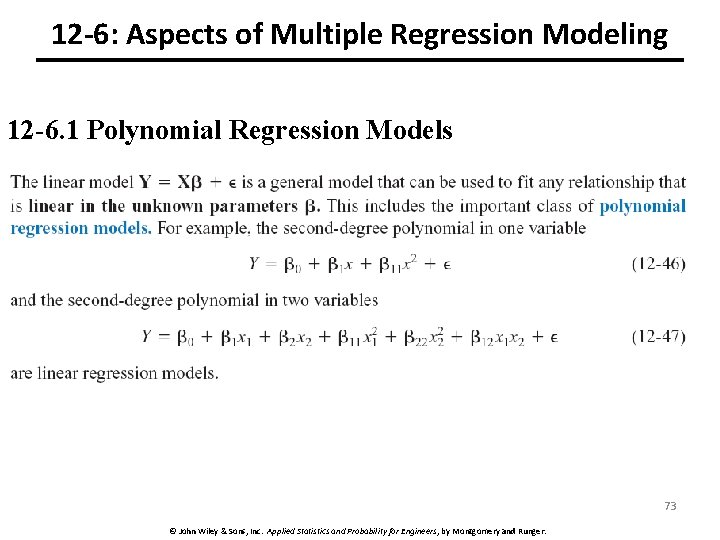

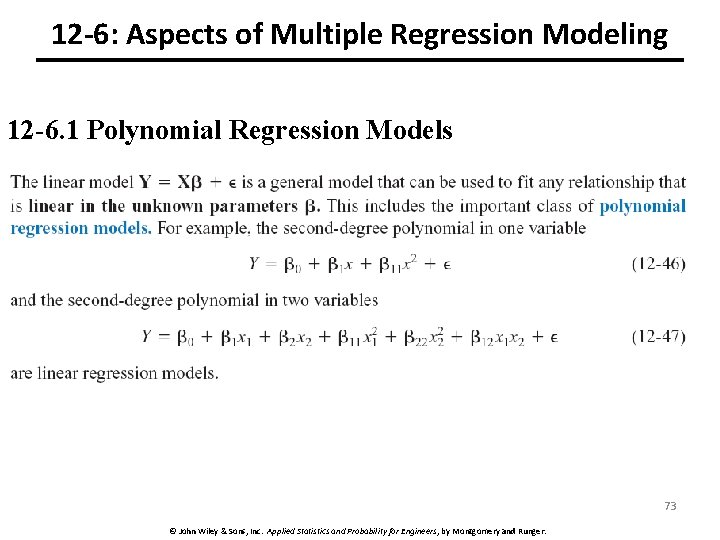

12-6: Aspects of Multiple Regression Modeling
12-6.1 Polynomial Regression Models

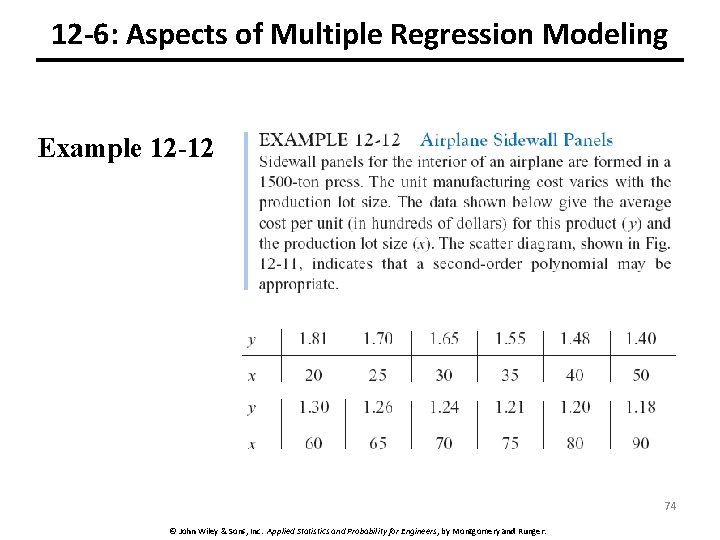

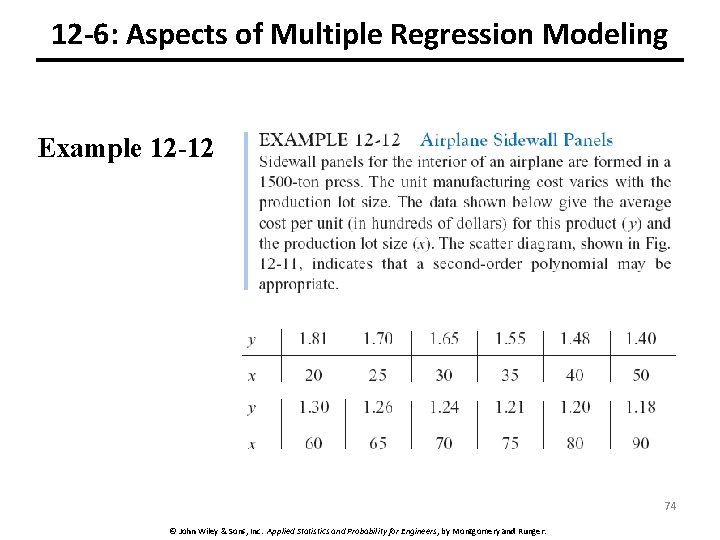

12-6: Aspects of Multiple Regression Modeling
Example 12-12

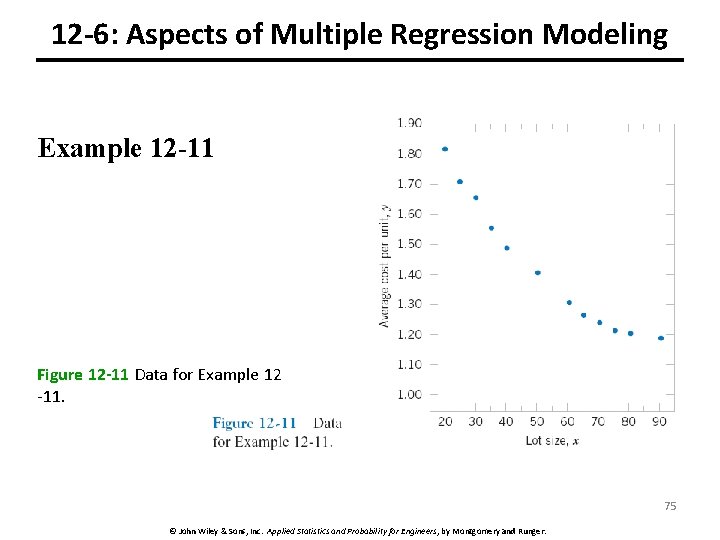

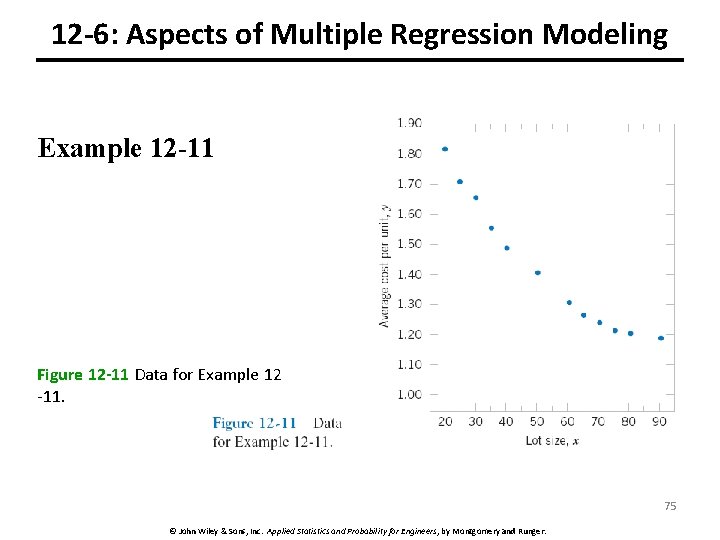

12-6: Aspects of Multiple Regression Modeling
Example 12-12

Figure 12-11 Data for Example 12-12.

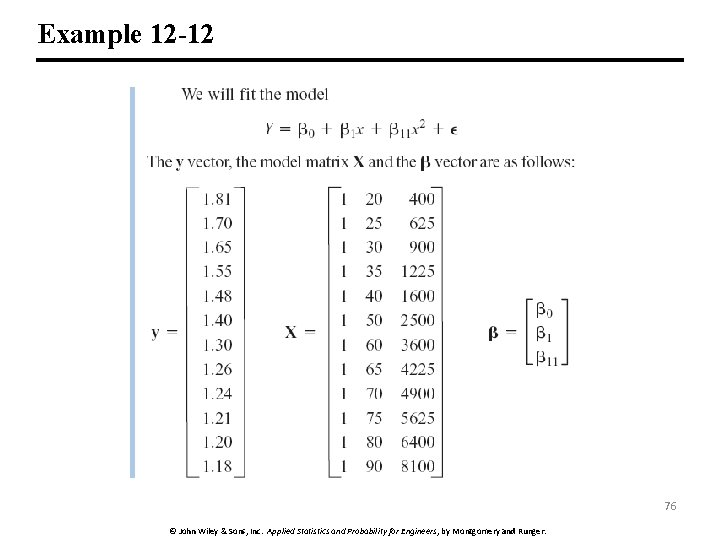

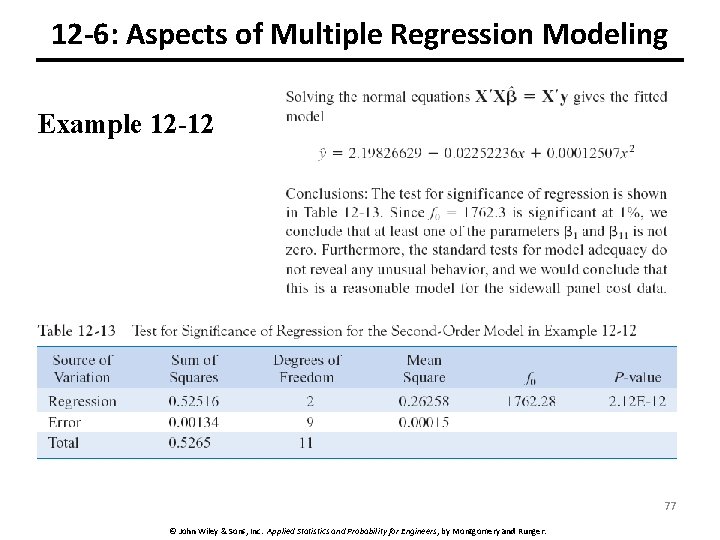

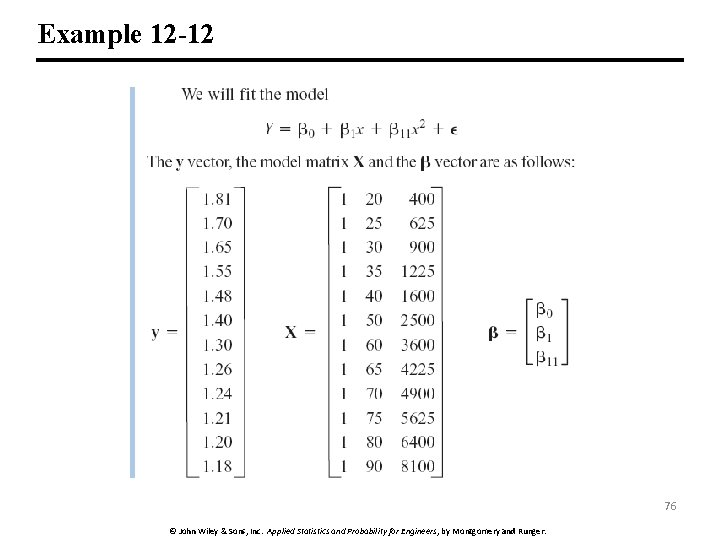

Example 12-12

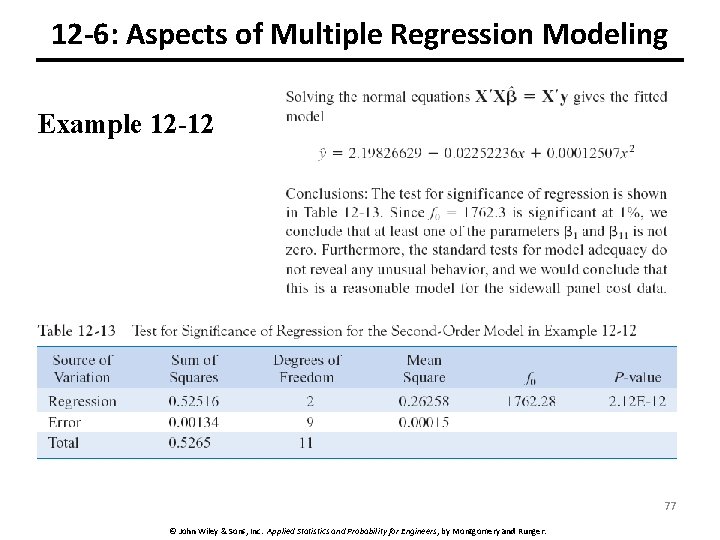

12-6: Aspects of Multiple Regression Modeling
Example 12-12

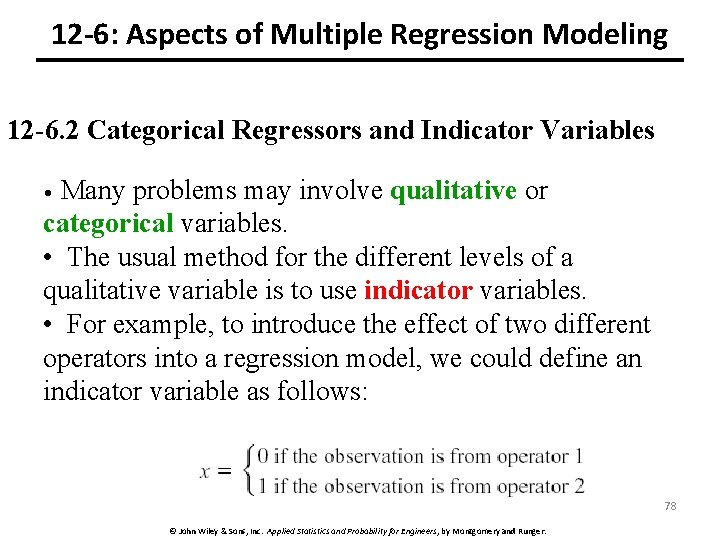

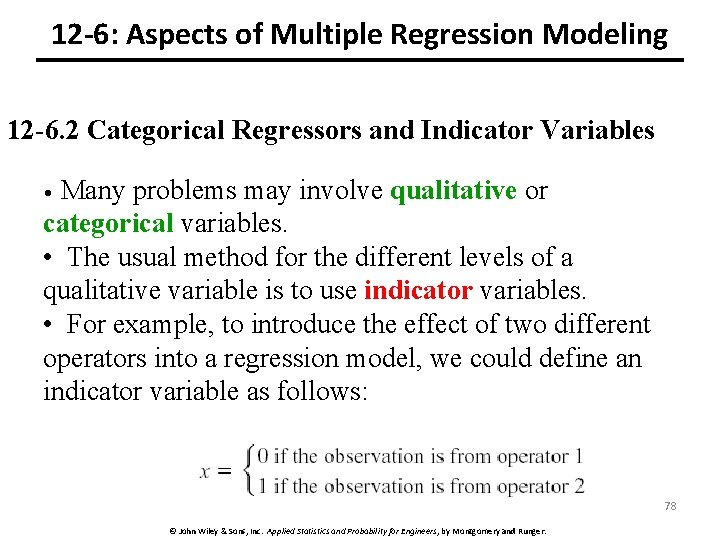

12-6: Aspects of Multiple Regression Modeling
12-6.2 Categorical Regressors and Indicator Variables

- Many problems may involve qualitative or categorical variables.
- The usual method of accounting for the different levels of a qualitative variable is to use indicator variables.
- For example, to introduce the effect of two different operators into a regression model, we could define an indicator variable as follows:
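One way to picture an indicator variable in a fitted model is the sketch below. It assumes the coding $x_2 = 0$ for operator 1 and $x_2 = 1$ for operator 2, and uses noise-free synthetic data generated from $y = 2 + 3x_1 + 5x_2$, so the fit should recover those coefficients. The coding and all names are assumptions for this illustration.

```python
import numpy as np

# Synthetic, noise-free data (illustrative only).
x1 = np.array([1.0, 2.0, 3.0, 4.0, 1.5, 2.5, 3.5, 4.5])
operator = np.array([1, 1, 1, 1, 2, 2, 2, 2])

# Indicator variable: 0 for operator 1, 1 for operator 2.
x2 = (operator == 2).astype(float)

# Response built from known coefficients so the result is easy to check.
y = 2.0 + 3.0 * x1 + 5.0 * x2

X = np.column_stack([np.ones_like(x1), x1, x2])
beta_hat = np.linalg.lstsq(X, y, rcond=None)[0]

# beta_hat[2] is the shift in intercept between operator 2 and operator 1;
# both operators share the same slope beta_hat[1] in this model.
print("intercept (operator 1):", beta_hat[0])
print("slope on x1:", beta_hat[1])
print("shift for operator 2:", beta_hat[2])
```

A different slope for each operator would need an interaction column such as `x1 * x2` in the model matrix.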

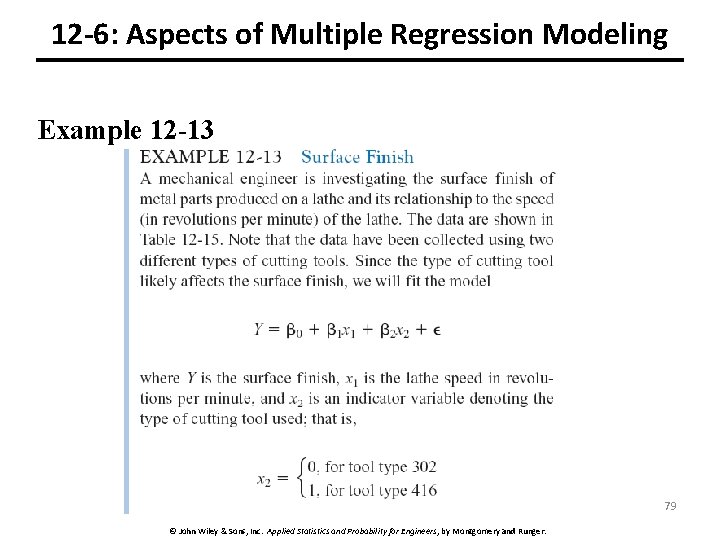

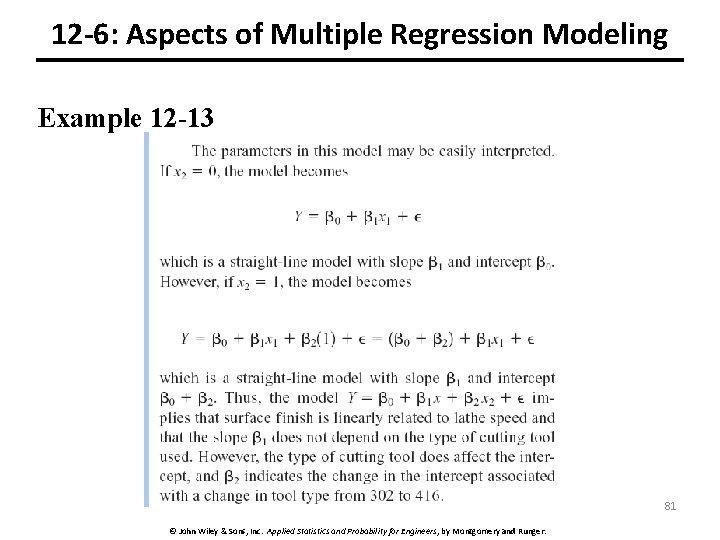

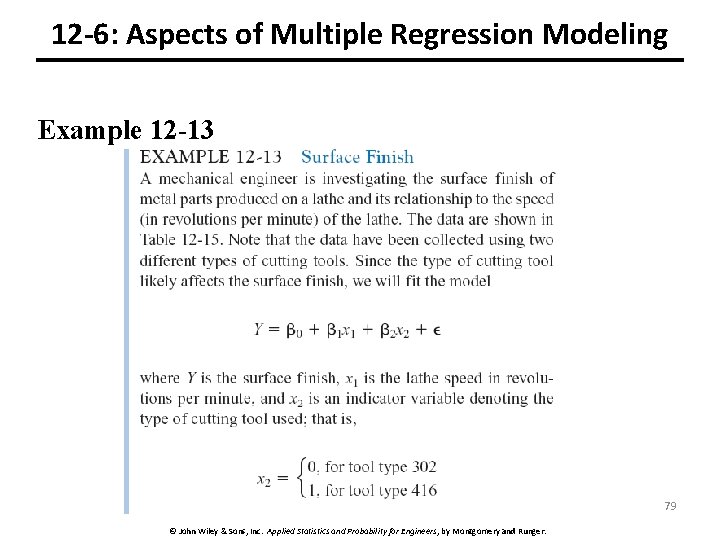

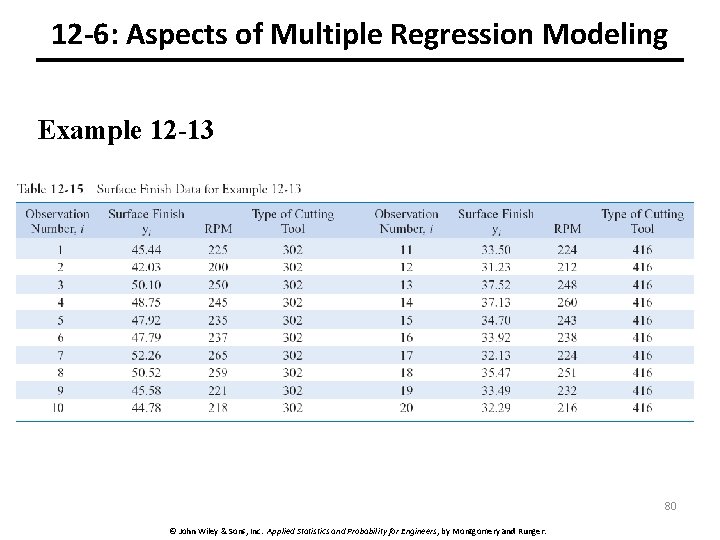

12-6: Aspects of Multiple Regression Modeling
Example 12-13

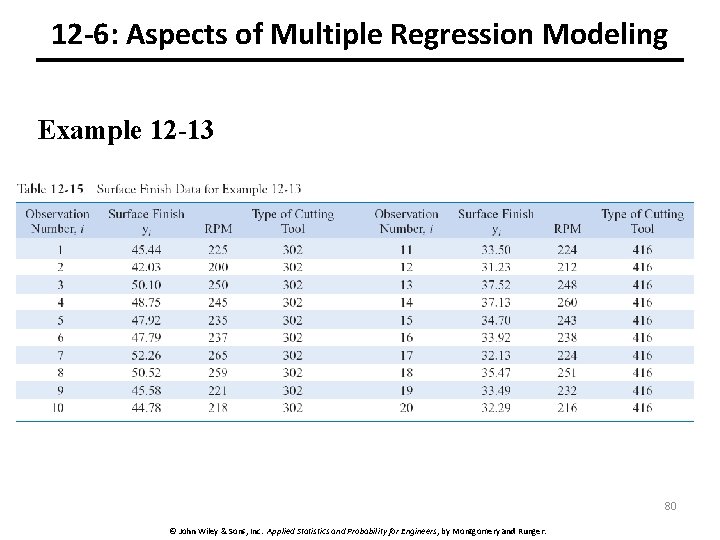

12-6: Aspects of Multiple Regression Modeling
Example 12-13

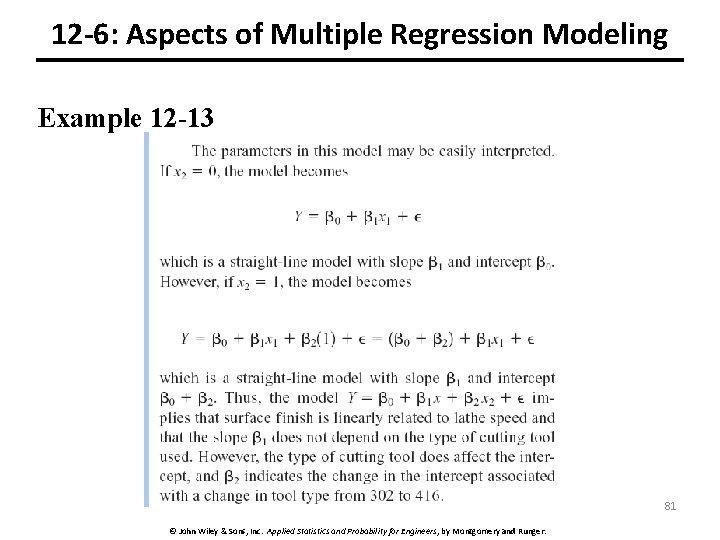

12-6: Aspects of Multiple Regression Modeling
Example 12-13

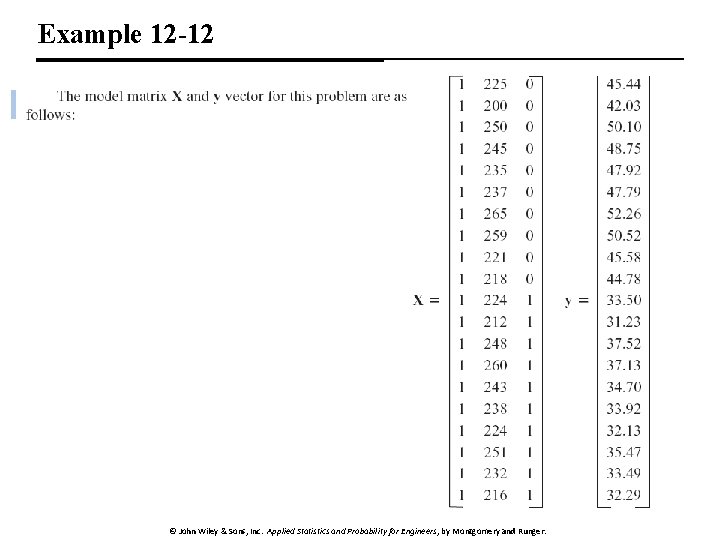

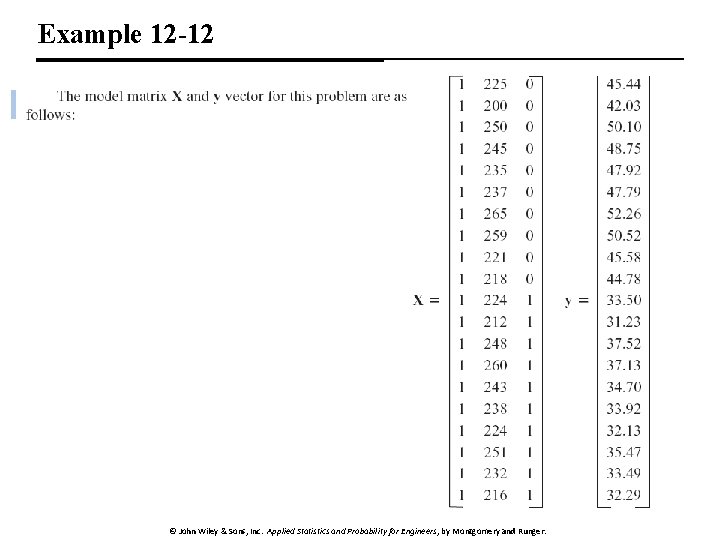

Example 12-13

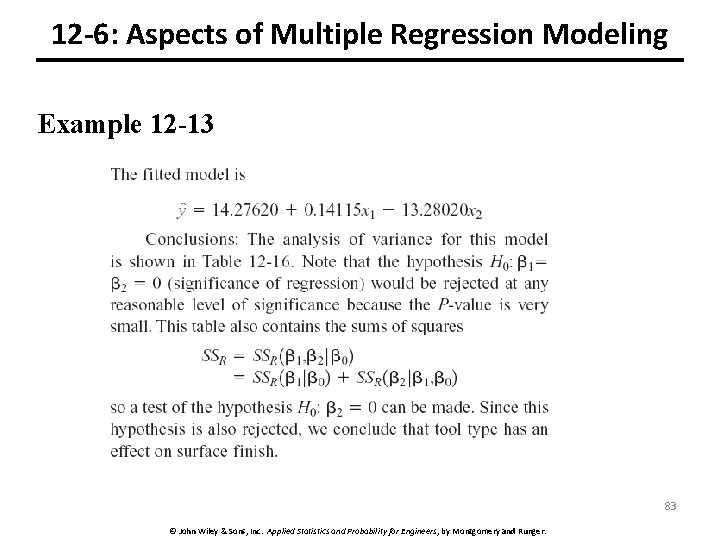

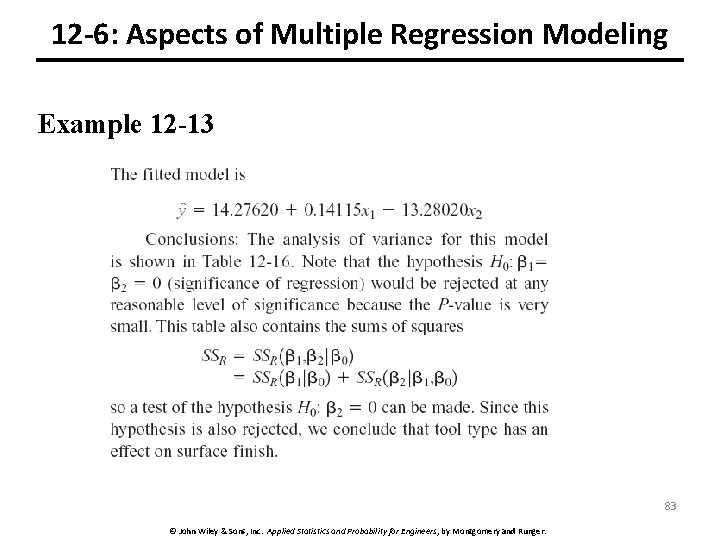

12-6: Aspects of Multiple Regression Modeling
Example 12-13

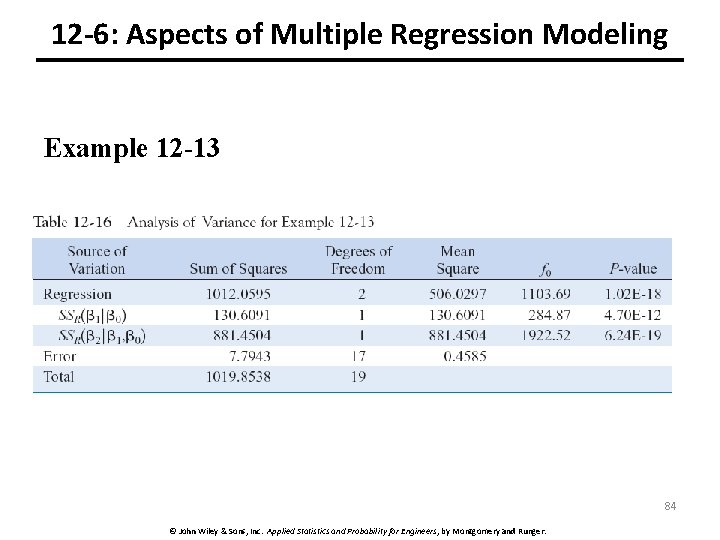

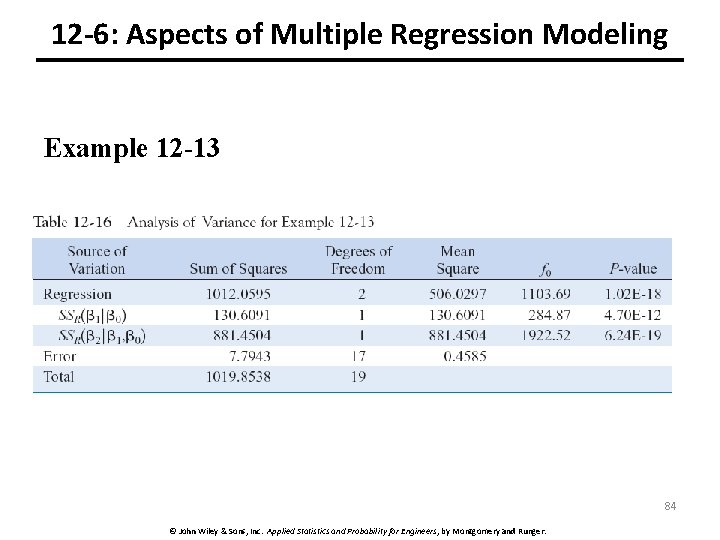

12-6: Aspects of Multiple Regression Modeling
Example 12-13

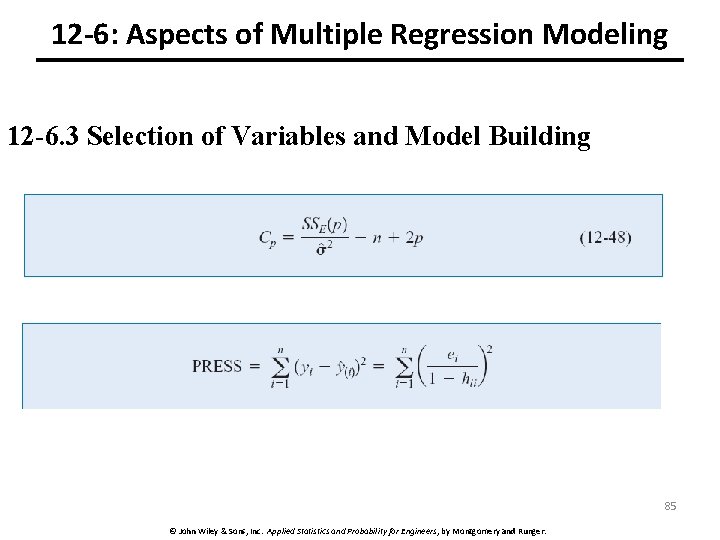

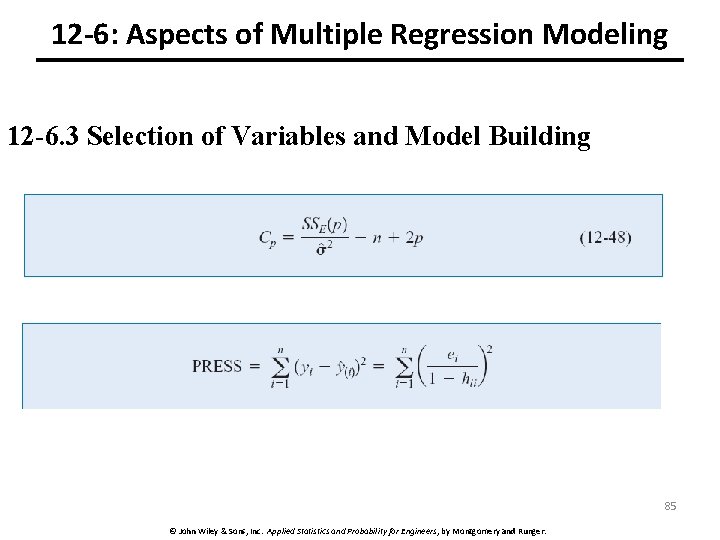

12-6: Aspects of Multiple Regression Modeling
12-6.3 Selection of Variables and Model Building

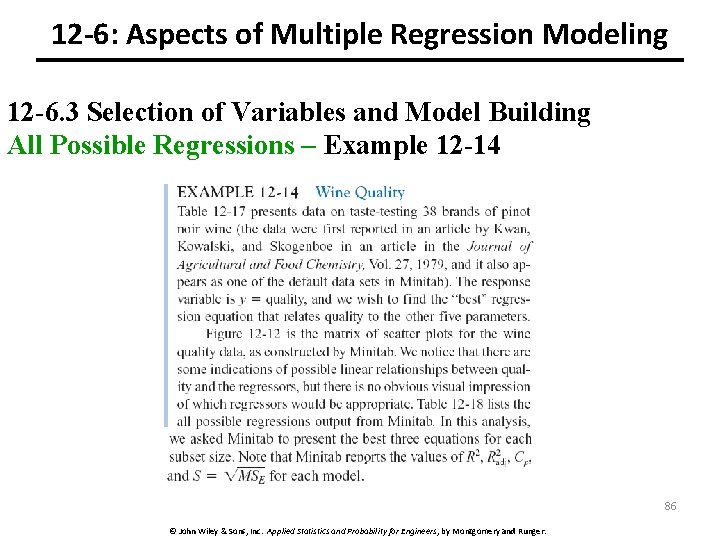

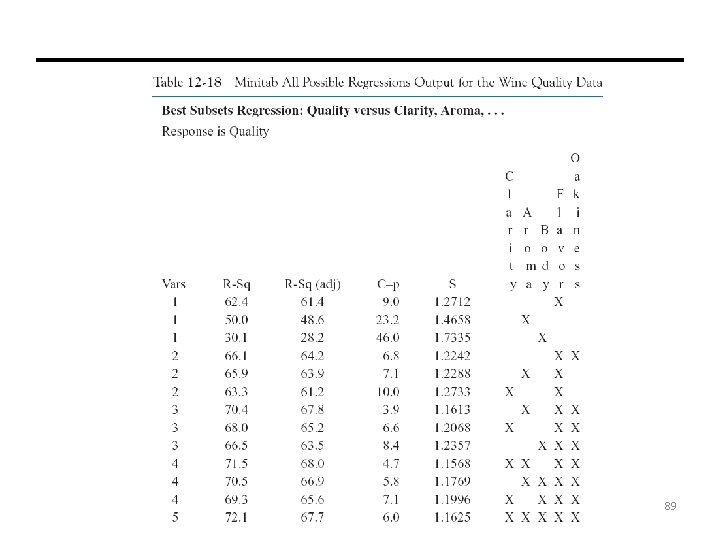

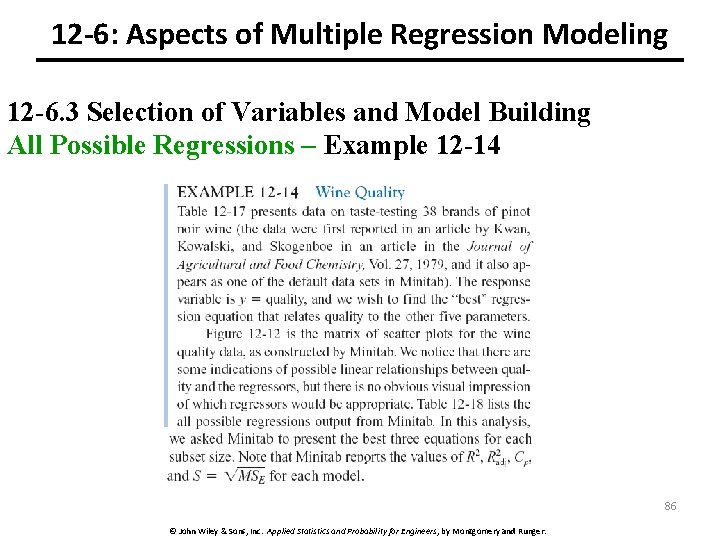

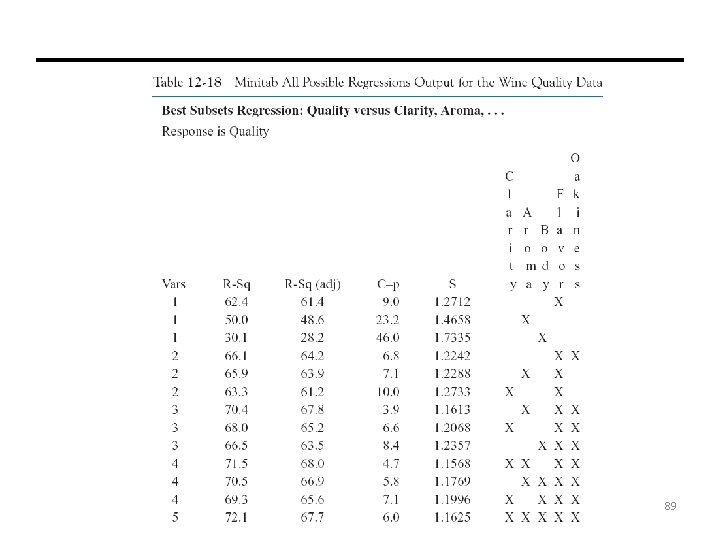

12-6: Aspects of Multiple Regression Modeling
12-6.3 Selection of Variables and Model Building
All Possible Regressions: Example 12-14

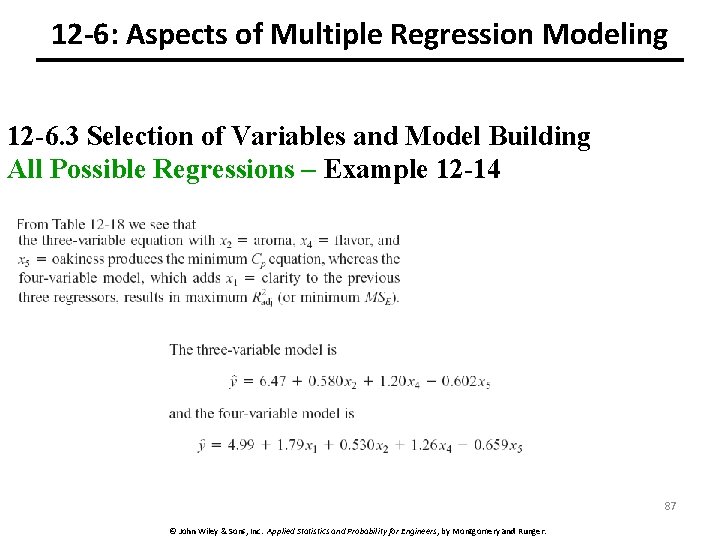

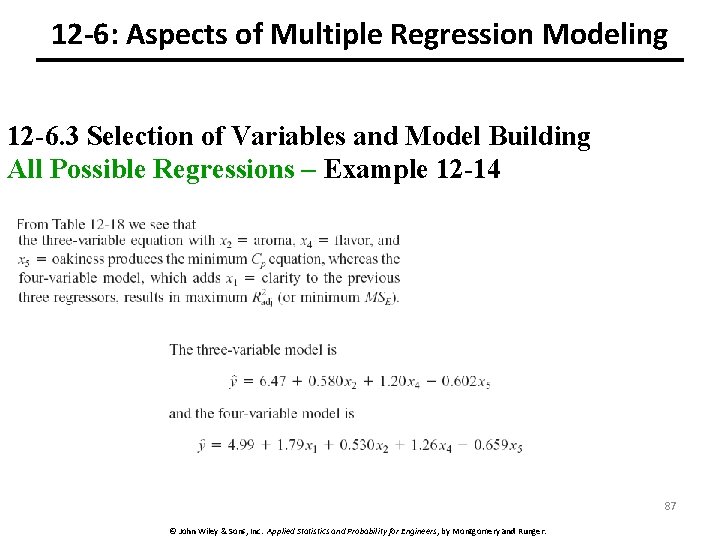

12-6: Aspects of Multiple Regression Modeling
12-6.3 Selection of Variables and Model Building
All Possible Regressions: Example 12-14

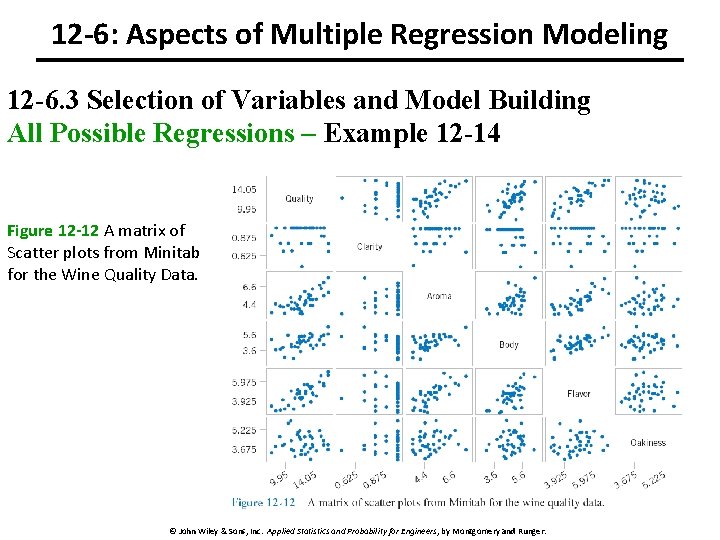

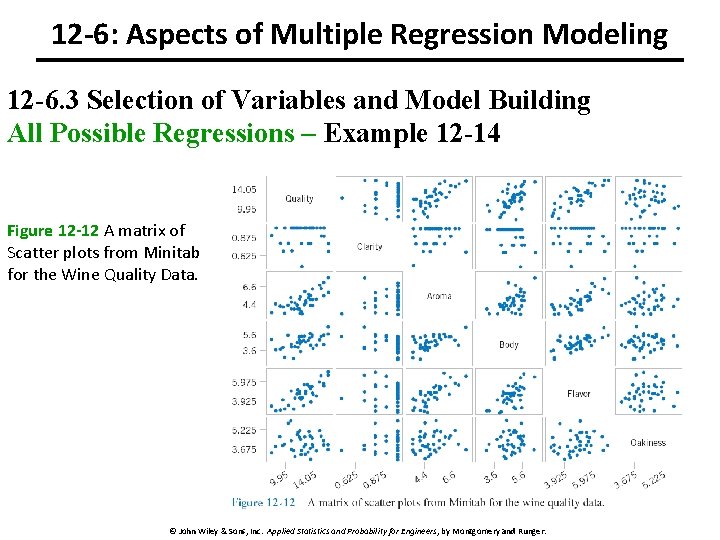

12-6: Aspects of Multiple Regression Modeling
12-6.3 Selection of Variables and Model Building
All Possible Regressions: Example 12-14

Figure 12-12 A matrix of scatter plots from Minitab for the wine quality data.


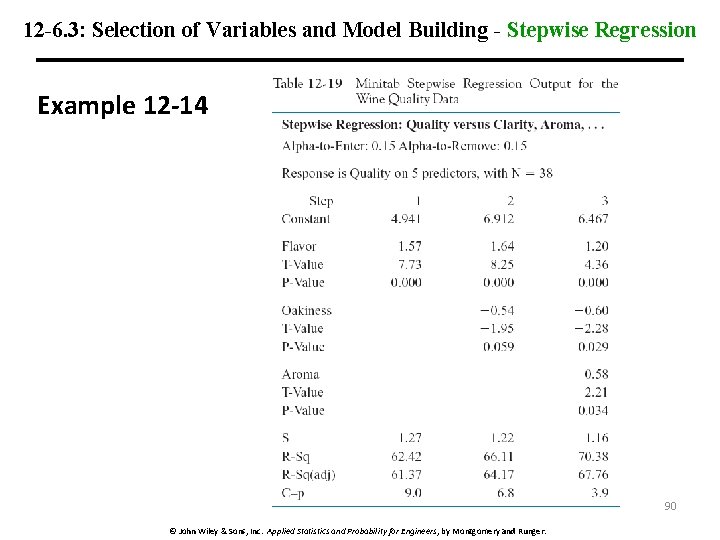

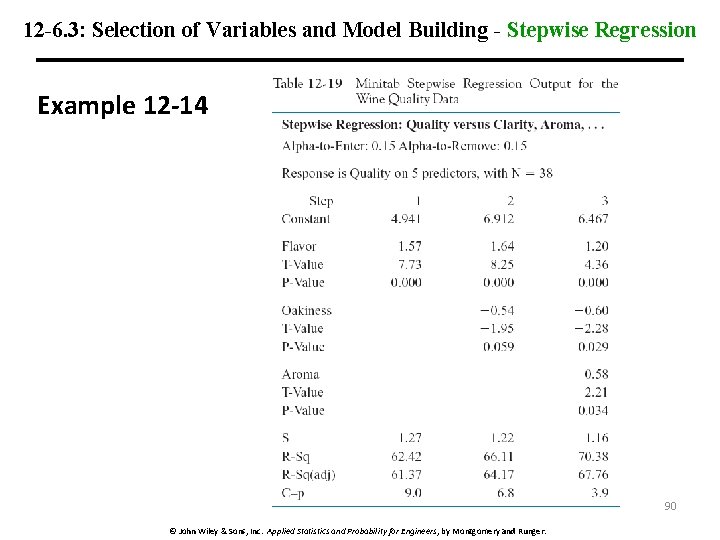

12-6.3: Selection of Variables and Model Building - Stepwise Regression
Example 12-14

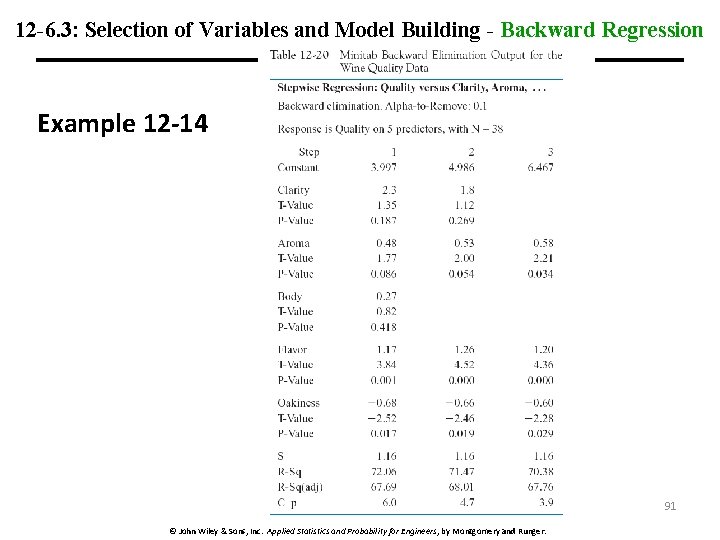

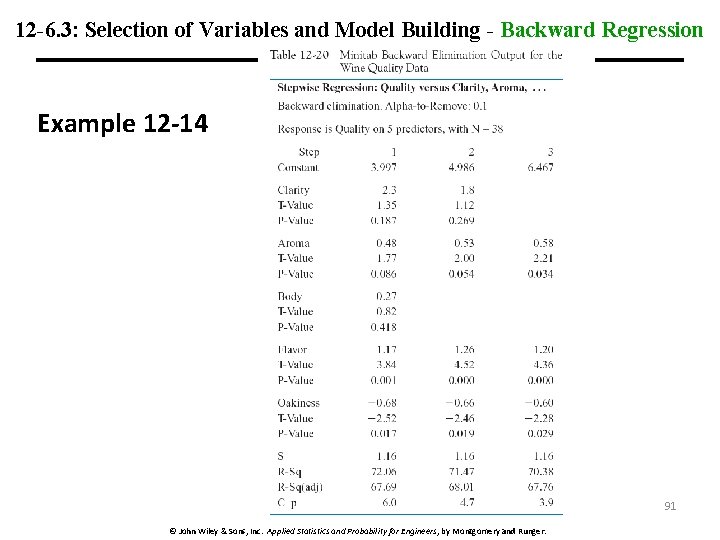

12-6.3: Selection of Variables and Model Building - Backward Regression
Example 12-14

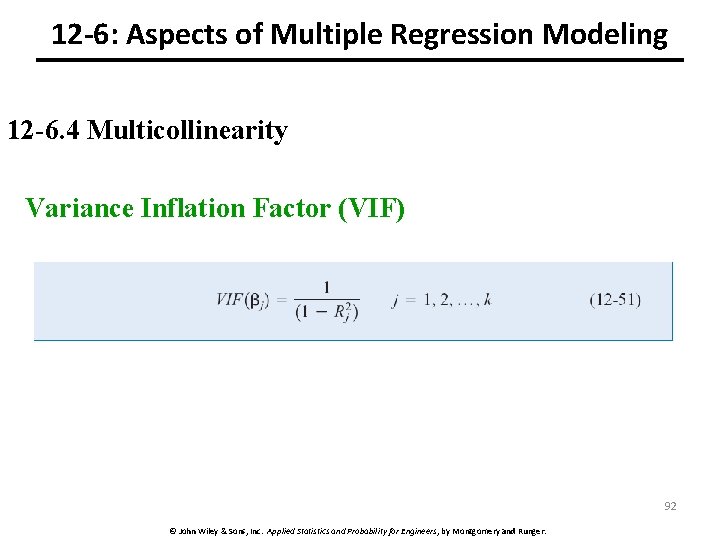

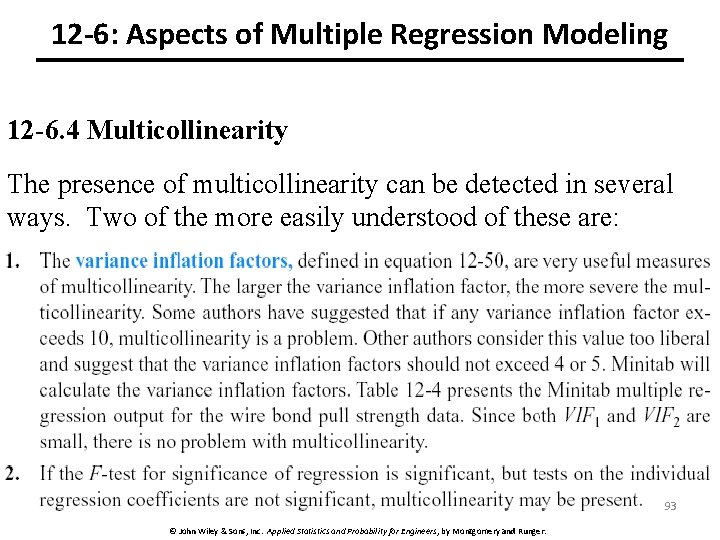

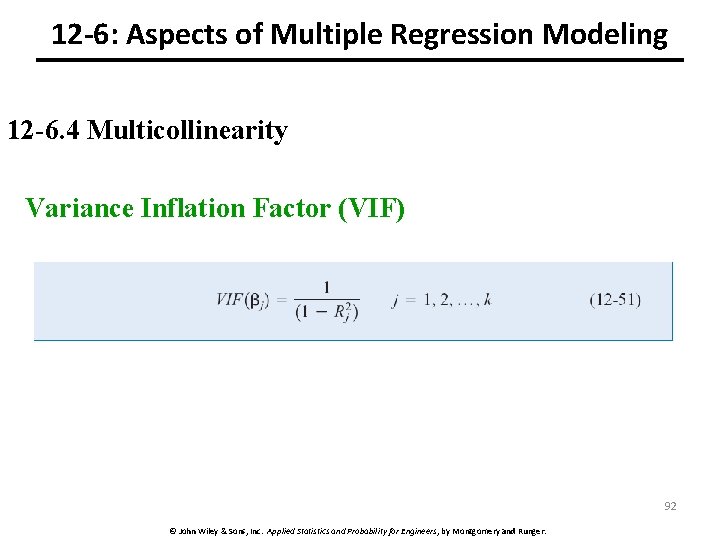

12-6: Aspects of Multiple Regression Modeling
12-6.4 Multicollinearity

Variance Inflation Factor (VIF)
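A variance inflation factor can be computed by regressing each regressor on the others. The sketch below assumes the usual definition $VIF(\beta_j) = 1 / (1 - R_j^2)$, where $R_j^2$ is the coefficient of determination from regressing $x_j$ on the remaining regressors. The function `vif` and the synthetic data (with `x3` built to be nearly equal to `x1 + x2`) are illustrative assumptions.

```python
import numpy as np

def vif(Xr):
    """Variance inflation factors for the columns of Xr (regressors only, no intercept column)."""
    n, k = Xr.shape
    out = np.empty(k)
    for j in range(k):
        xj = Xr[:, j]
        # Regress x_j on an intercept plus all the other regressors.
        others = np.column_stack([np.ones(n), np.delete(Xr, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, xj, rcond=None)
        resid = xj - others @ coef
        r2_j = 1 - (resid @ resid) / np.sum((xj - xj.mean()) ** 2)
        out[j] = 1 / (1 - r2_j)
    return out

# Synthetic regressors (illustrative only); x3 is deliberately close to x1 + x2.
x1 = np.array([1, 2, 3, 4, 5, 6, 7, 8], dtype=float)
x2 = np.array([3, 1, 4, 1, 5, 9, 2, 6], dtype=float)
x3 = x1 + x2 + np.array([0.5, -0.4, 0.3, -0.5, 0.2, -0.3, 0.4, -0.2])

Xr = np.column_stack([x1, x2, x3])
vifs = vif(Xr)
print("VIFs:", vifs)
```

Large values indicate that a regressor is nearly a linear combination of the others; the cutoff used to call a VIF "large" varies by author.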

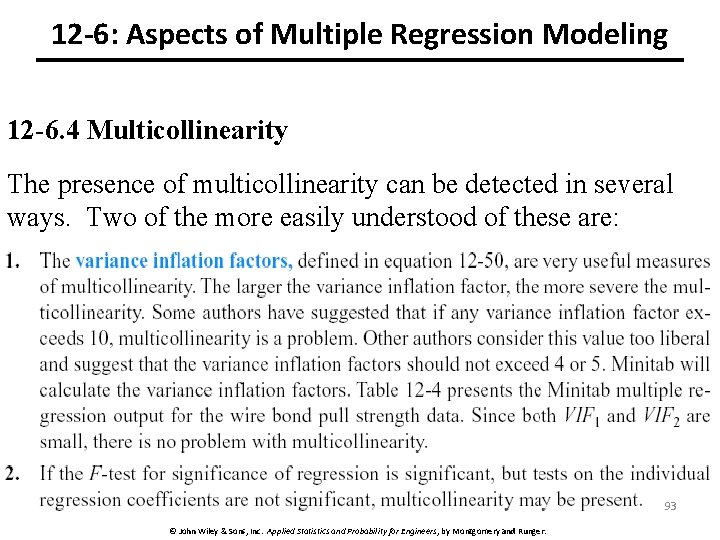

12-6: Aspects of Multiple Regression Modeling
12-6.4 Multicollinearity

The presence of multicollinearity can be detected in several ways. Two of the more easily understood of these are:

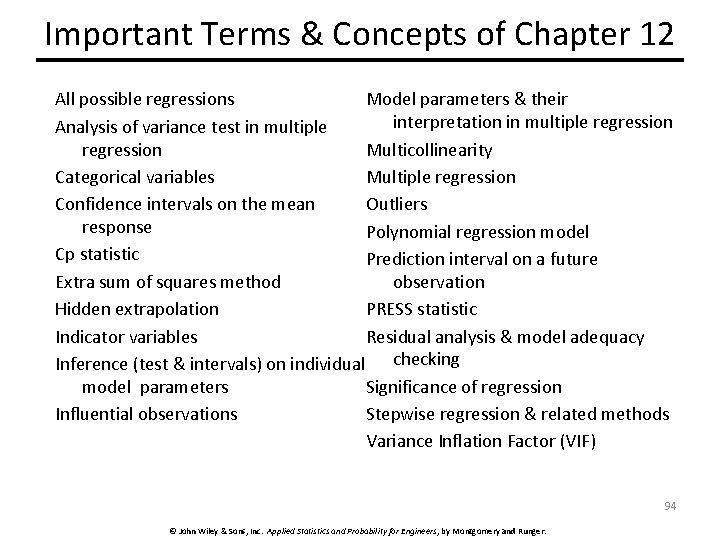

Important Terms & Concepts of Chapter 12

- All possible regressions
- Analysis of variance test in multiple regression
- Categorical variables
- Confidence intervals on the mean response
- Cp statistic
- Extra sum of squares method
- Hidden extrapolation
- Indicator variables
- Inference (test & intervals) on individual model parameters
- Influential observations
- Model parameters & their interpretation in multiple regression
- Multicollinearity
- Multiple regression
- Outliers
- Polynomial regression model
- Prediction interval on a future observation
- PRESS statistic
- Residual analysis & model adequacy checking
- Significance of regression
- Stepwise regression & related methods
- Variance Inflation Factor (VIF)