100 Gigabit Ethernet Requirements & Implementation

Fall 2006 Internet2 Member Meeting, December 6, 2006

Serge Melle, smelle@infinera.com, 408-572-5200

Drew Perkins, dperkins@infinera.com, 408-572-5208

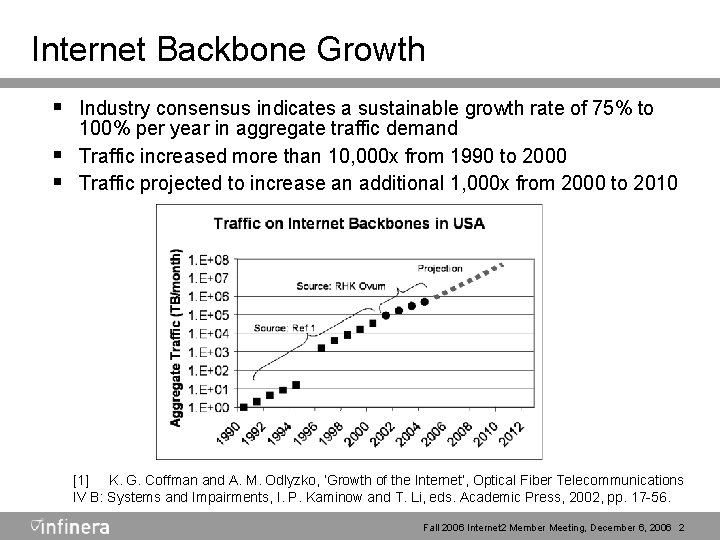

Internet Backbone Growth

- Industry consensus indicates a sustainable growth rate of 75% to 100% per year in aggregate traffic demand
- Traffic increased more than 10,000x from 1990 to 2000
- Traffic is projected to increase an additional 1,000x from 2000 to 2010

[1] K. G. Coffman and A. M. Odlyzko, "Growth of the Internet," Optical Fiber Telecommunications IV B: Systems and Impairments, I. P. Kaminow and T. Li, eds., Academic Press, 2002, pp. 17-56.

Fall 2006 Internet2 Member Meeting, December 6, 2006
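As a rough cross-check of the figures on this slide, the decade-long growth factors can be converted into compound annual rates. This is a minimal sketch; the function name `annual_growth_rate` is illustrative and not from the presentation. A 1,000x increase over ten years works out to roughly a doubling every year, which is consistent with the upper end of the 75% to 100% range.

```python
def annual_growth_rate(total_factor: float, years: int) -> float:
    """Compound annual growth rate implied by a total growth factor over `years`."""
    return total_factor ** (1.0 / years) - 1.0


# Growth factors quoted on the slide.
rate_1990s = annual_growth_rate(10_000, 10)  # 10,000x from 1990 to 2000
rate_2000s = annual_growth_rate(1_000, 10)   # 1,000x from 2000 to 2010

print(f"1990-2000: about {rate_1990s:.0%} per year")
print(f"2000-2010: about {rate_2000s:.0%} per year")
```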

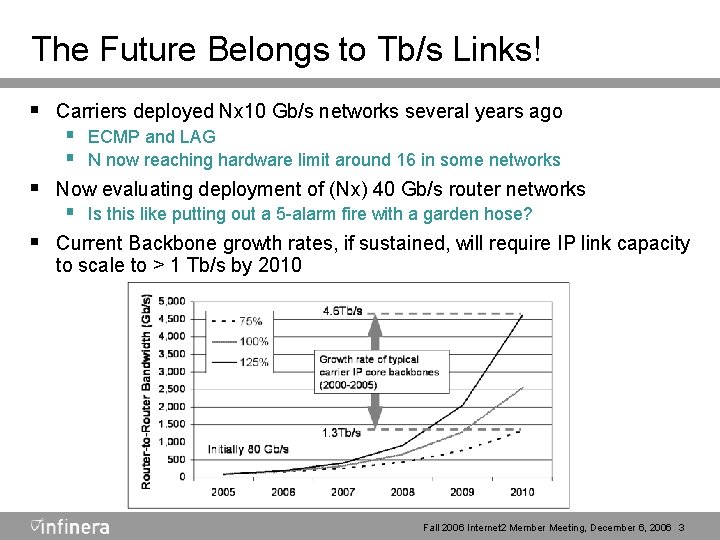

The Future Belongs to Tb/s Links!

- Carriers deployed N x 10 Gb/s networks several years ago
  - ECMP and LAG
  - N now reaching hardware limit around 16 in some networks
- Now evaluating deployment of (N x) 40 Gb/s router networks
  - Is this like putting out a 5-alarm fire with a garden hose?
- Current backbone growth rates, if sustained, will require IP link capacity to scale to > 1 Tb/s by 2010

Proposed Requirements for Higher Speed Ethernet

- Protocol Extensible for Speed
  - Ethernet tradition has been 10x scaling
  - But at current growth rates, 100 Gb/s will be insufficient by 2010
  - Desirable to standardize a method of extending available speed without re-engineering the protocol stack
- Incremental Growth
  - Most organizations deploy new technologies with a 4-5 year lifetime
  - Pre-deploying based on the speed requirement 5 years in advance is economically burdensome
  - Assuming a 5-year window and 100% growth per year, the ability to grow link speed incrementally over 2^5 = 32x without a "forklift upgrade" seems highly desirable
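The 32x figure follows from compounding 100% annual growth over a 5-year deployment window. The sketch below reproduces that arithmetic and, as an added comparison that is not on the slide, shows the headroom needed at the lower 75% growth rate quoted earlier in the deck. The function name `required_headroom` is illustrative.

```python
def required_headroom(annual_growth: float, years: int) -> float:
    """Factor by which link capacity must grow to keep up with traffic."""
    return (1.0 + annual_growth) ** years


for growth in (0.75, 1.00):
    factor = required_headroom(growth, years=5)
    print(f"{growth:.0%} growth per year over 5 years: {factor:.1f}x capacity")
```

At 100% growth the factor is 2^5 = 32; at 75% it is still roughly 16x, so the case for incremental growth does not depend on the most aggressive forecast.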

Proposed Requirements (cont'd)

- Hitless Growth
  - Problematic to "take down" core router links for a substantial period of time without customer service degradations
  - SLAs may be compromised or require complicated temporary workarounds if substantial down time is required for upgrade
  - Ideally, upgrade of the link capacity should therefore be hitless, or at least only momentarily service-impacting
- Resiliency and Graceful Degradation
  - Protocol should provide rapid recovery from failure of an individual channel or component
  - If the failure is such that full performance cannot be provided, degradation should only be proportional to the failed element(s)
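The "proportional degradation" requirement can be illustrated with a small calculation: if a link is built from N equal channels, losing k of them should leave (N - k)/N of the capacity rather than taking the whole link down. This is a sketch under that assumption; the function name and the default 10 Gb/s channel rate are illustrative choices, not a specification from the slides.

```python
def surviving_capacity_gbps(channels: int, failed: int,
                            channel_rate_gbps: float = 10.0) -> float:
    """Capacity left on a multi-channel link after `failed` channels are lost."""
    if not 0 <= failed <= channels:
        raise ValueError("failed must be between 0 and channels")
    return (channels - failed) * channel_rate_gbps


nominal = surviving_capacity_gbps(10, 0)
for failed in range(3):
    capacity = surviving_capacity_gbps(10, failed)
    print(f"{failed} failed channel(s): {capacity:.0f} Gb/s "
          f"({capacity / nominal:.0%} of nominal)")
```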

Proposed Requirements (cont'd)

- Technology Reuse
  - Highly desirable to leverage existing 10G PHYs, including 10GBASE-R, W, X, S, L, E, Z and LRM, in order to foster ubiquity and avoid duplication of standards efforts
- Deterministic Performance
  - Latency/delay variation should be low for support of real-time packet-based services, e.g.
    - Streaming video
    - VoIP
    - Gaming

Proposed Requirements (cont'd)

- WAN Manageability
  - 100 GbE will be transported over wide area networks
  - It should include features for low OpEx and should be:
    - Economical
    - Reliable
    - Operationally manageable (e.g. simple fault isolation)
  - It should support equivalents for conventional transport network OAM mechanisms, e.g.
    - Alarm Indication Signal (AIS)
    - Forward Defect Indication (FDI)
    - Backward Defect Indication (BDI)
    - Tandem Connection Monitoring (TCM), etc.
- WAN Transportability
  - Operation over WAN fiber optic networks
  - Transport across regional, national and inter-continental networks
  - The protocol should be resilient to intra-channel/intra-wavelength propagation delay differences (skew)

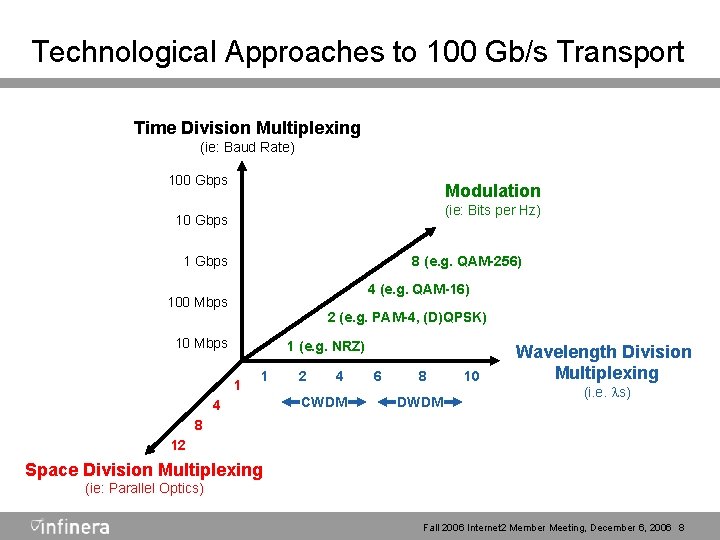

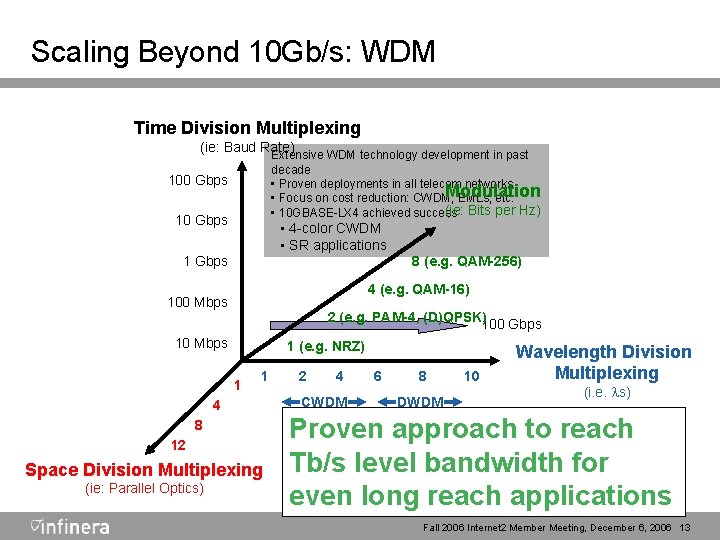

Technological Approaches to 100 Gb/s Transport

[Figure: four scaling dimensions for 100 Gb/s transport]

- Time Division Multiplexing (i.e. baud rate): axis from 10 Mbps to 100 Gbps
- Modulation (i.e. bits per Hz): 1 (e.g. NRZ), 2 (e.g. PAM-4, (D)QPSK), 4 (e.g. QAM-16), 8 (e.g. QAM-256)
- Wavelength Division Multiplexing (i.e. λs): axis from 1 to 10, CWDM through DWDM
- Space Division Multiplexing (i.e. parallel optics): axis from 1 to 12
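One way to read the four dimensions is as factors of a single product: the aggregate rate is roughly the symbol rate times bits per symbol times the number of wavelengths times the number of parallel fibers. The sketch below makes that assumption explicit. It treats the "bits per Hz" axis as bits per symbol and ignores line-coding and FEC overhead. The example combinations are illustrative, not configurations proposed in the presentation.

```python
def aggregate_rate_gbps(gbaud: float, bits_per_symbol: int,
                        wavelengths: int, fibers: int) -> float:
    """Aggregate bit rate from the four scaling dimensions (overhead ignored)."""
    return gbaud * bits_per_symbol * wavelengths * fibers


# Hypothetical ways to reach 100 Gb/s, one per dimension.
options = {
    "TDM only (100 Gbaud NRZ)":         (100.0, 1, 1, 1),
    "Modulation (25 Gbaud, QAM-16)":    (25.0, 4, 1, 1),
    "WDM (10 wavelengths x 10 Gbaud)":  (10.0, 1, 10, 1),
    "SDM (10 fibers x 10 Gbaud)":       (10.0, 1, 1, 10),
}

for name, dims in options.items():
    print(f"{name}: {aggregate_rate_gbps(*dims):.0f} Gb/s")
```

The approaches can also be combined, for example fewer wavelengths at a higher bits-per-symbol count.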

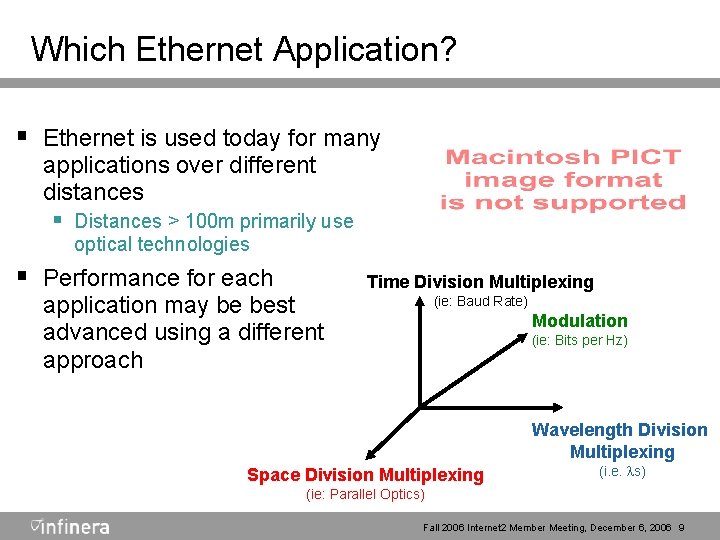

Which Ethernet Application?

- Ethernet is used today for many applications over different distances
- Distances > 100 m primarily use optical technologies
- Performance for each application may be best advanced using a different approach: time division multiplexing (baud rate), modulation (bits per Hz), wavelength division multiplexing (λs), or space division multiplexing (parallel optics)

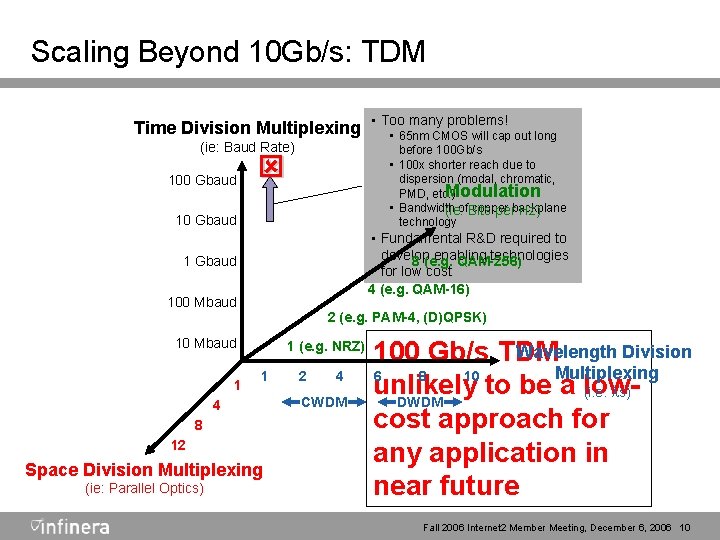

Scaling Beyond 10 Gb/s: TDM

Too many problems!

- 65 nm CMOS will cap out long before 100 Gb/s
- 100x shorter reach due to dispersion (modal, chromatic, PMD, etc.)
- Bandwidth of copper backplane technology
- Fundamental R&D required to develop enabling technologies for low cost

100 Gb/s TDM is unlikely to be a low-cost approach for any application in the near future.

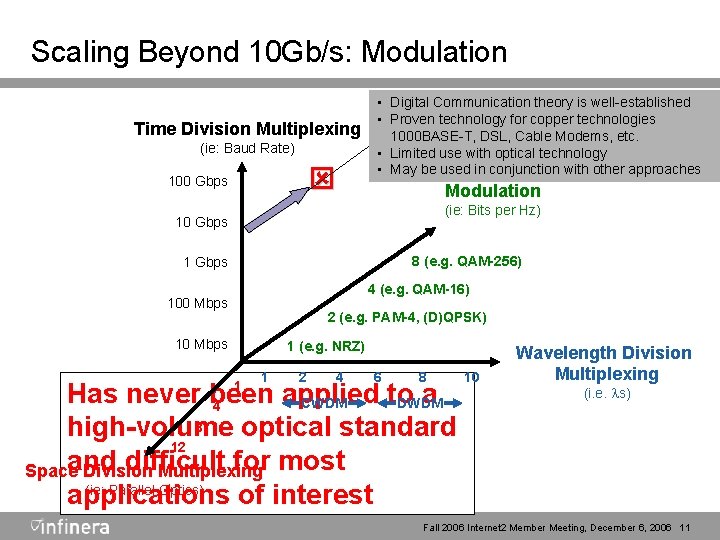

Scaling Beyond 10 Gb/s: Modulation

- Digital communication theory is well-established
- Proven technology for copper technologies: 1000BASE-T, DSL, cable modems, etc.
- Limited use with optical technology
- May be used in conjunction with other approaches

Has never been applied to a high-volume optical standard and is difficult for most applications of interest.

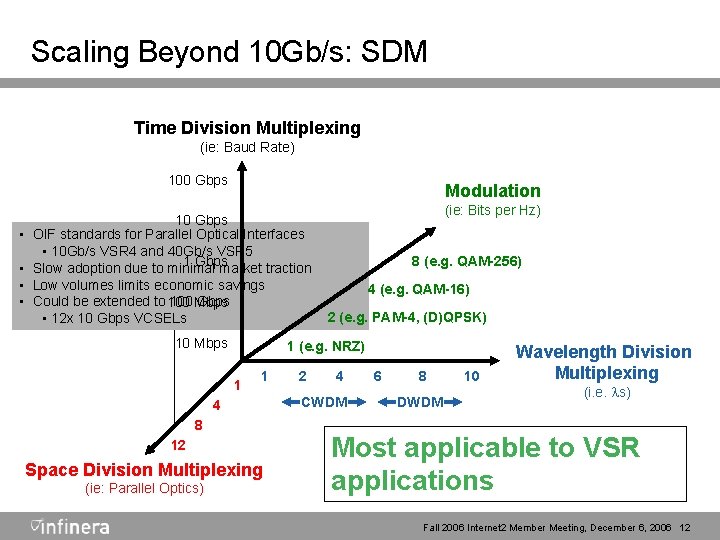

Scaling Beyond 10 Gb/s: SDM

- OIF standards for parallel optical interfaces
  - 10 Gb/s VSR4 and 40 Gb/s VSR5
- Slow adoption due to minimal market traction
- Low volumes limit economic savings
- Could be extended to 100 Gbps
  - 12 x 10 Gbps VCSELs

Most applicable to VSR applications.

Scaling Beyond 10 Gb/s: WDM

- Extensive WDM technology development in the past decade
- Proven deployments in all telecom networks
- Focus on cost reduction: CWDM, EMLs, etc.
- 10GBASE-LX4 achieved success
  - 4-color CWDM
  - SR applications

Proven approach to reach Tb/s-level bandwidth, even for long-reach applications.

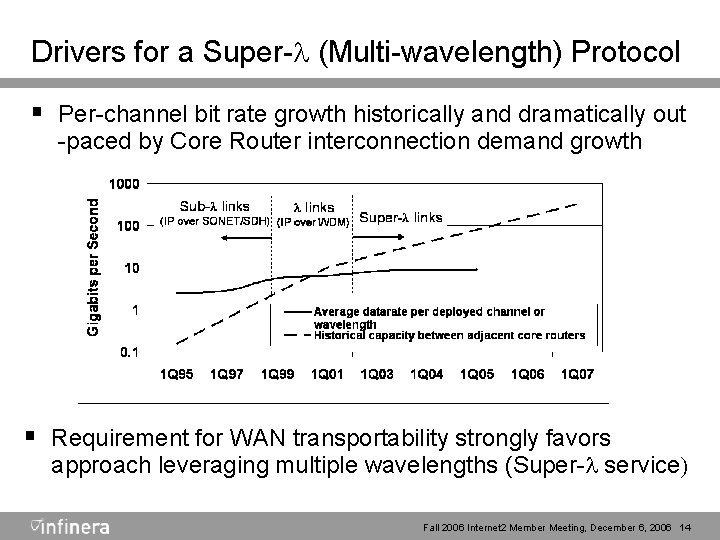

Drivers for a Super-λ (Multi-wavelength) Protocol

- Per-channel bit rate growth has historically been dramatically outpaced by core router interconnection demand growth
- The requirement for WAN transportability strongly favors an approach leveraging multiple wavelengths (Super-λ service)

Won't 802.3ad Link Aggregation (LAG) Solve the Scaling Problem?

- LAG and ECMP rely on statistical flow distribution mechanisms
  - Provide fixed assignment of "conversations" to channels
- Unacceptable performance as individual flows reach the Gb/s range
  - A single 10 Gb/s flow will exhaust one LAG member, yielding 1/N blocking probability for all other flows
  - VPN and security technologies make all flows appear as one
- True deterministic ≥ 40G link technology required today
  - Deterministic packet/fragment/word/byte distribution mechanism
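The blocking argument can be sketched with a toy model of hash-based flow distribution. This is an illustration under stated assumptions: real LAG and ECMP implementations hash vendor-specific sets of header fields, and `zlib.crc32` over a made-up flow string is only a stand-in. The flow identifiers and the member count are hypothetical.

```python
import zlib
from collections import Counter


def lag_member(flow_id: str, n_members: int) -> int:
    """Map a flow to one LAG member via a hash (stand-in for a real header hash)."""
    return zlib.crc32(flow_id.encode("utf-8")) % n_members


N_MEMBERS = 4

# A single large flow is pinned to exactly one member for its whole lifetime.
elephant = "10.9.9.9:4500->10.8.8.8:4500"
elephant_member = lag_member(elephant, N_MEMBERS)

# Many ordinary flows are spread statistically across all members.
flows = [f"10.0.0.{i % 250}:{5000 + i}->10.0.1.1:80" for i in range(1000)]
placement = Counter(lag_member(flow, N_MEMBERS) for flow in flows)
sharing = placement[elephant_member]

print(f"elephant flow pinned to member {elephant_member}")
print(f"{sharing} of {len(flows)} other flows share that member "
      f"(uniform hashing would give about {len(flows) // N_MEMBERS})")
```

Because the mapping is fixed per flow, the flows that land on the saturated member cannot be moved to idle members, which is the 1/N blocking effect described on the slide.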

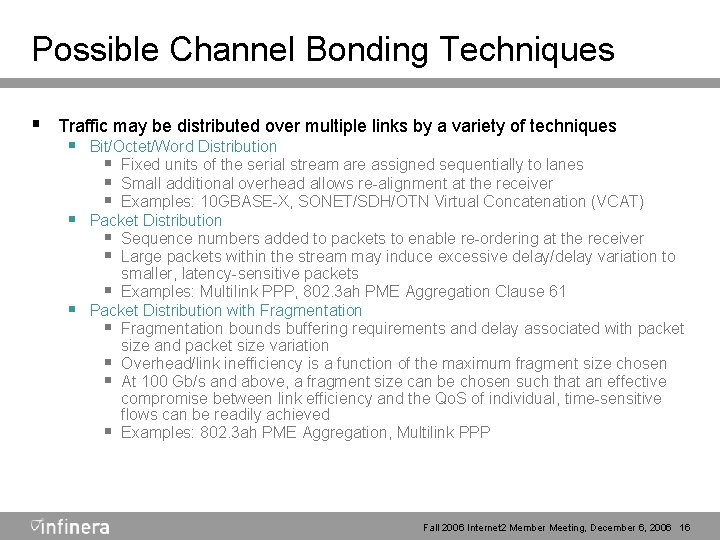

Possible Channel Bonding Techniques

Traffic may be distributed over multiple links by a variety of techniques:

- Bit/Octet/Word Distribution
  - Fixed units of the serial stream are assigned sequentially to lanes
  - Small additional overhead allows re-alignment at the receiver
  - Examples: 10GBASE-X, SONET/SDH/OTN Virtual Concatenation (VCAT)
- Packet Distribution
  - Sequence numbers added to packets to enable re-ordering at the receiver
  - Large packets within the stream may induce excessive delay/delay variation to smaller, latency-sensitive packets
  - Examples: Multilink PPP, 802.3ah PME Aggregation Clause 61
- Packet Distribution with Fragmentation
  - Fragmentation bounds buffering requirements and delay associated with packet size and packet size variation
  - Overhead/link inefficiency is a function of the maximum fragment size chosen
  - At 100 Gb/s and above, a fragment size can be chosen such that an effective compromise between link efficiency and the QoS of individual, time-sensitive flows can be readily achieved
  - Examples: 802.3ah PME Aggregation, Multilink PPP
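The third technique, packet distribution with fragmentation, can be sketched as follows. This is a simplified illustration, not the 802.3ah PME Aggregation or Multilink PPP wire format: the `Fragment` fields, the round-robin lane choice, and the 64-byte fragment size are all assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Fragment:
    seq: int        # global sequence number used for re-ordering
    last: bool      # True on the final fragment of a packet
    payload: bytes


def fragment_and_distribute(packets, n_lanes, max_fragment):
    """Split packets into bounded fragments and spread them round-robin over lanes."""
    lanes = [[] for _ in range(n_lanes)]
    seq = 0
    for packet in packets:
        chunks = [packet[i:i + max_fragment]
                  for i in range(0, len(packet), max_fragment)] or [b""]
        for index, chunk in enumerate(chunks):
            is_last = index == len(chunks) - 1
            lanes[seq % n_lanes].append(Fragment(seq, is_last, chunk))
            seq += 1
    return lanes


def collect_and_reassemble(lanes):
    """Merge fragments from all lanes by sequence number and rebuild packets."""
    fragments = sorted((f for lane in lanes for f in lane), key=lambda f: f.seq)
    packets, current = [], bytearray()
    for fragment in fragments:
        current += fragment.payload
        if fragment.last:
            packets.append(bytes(current))
            current = bytearray()
    return packets


packets = [b"A" * 1500, b"voip", b"B" * 300]
lanes = fragment_and_distribute(packets, n_lanes=10, max_fragment=64)
random.shuffle(lanes)  # lanes may be read in any order at the receiver
assert collect_and_reassemble(lanes) == packets
```

Because every fragment is at most 64 bytes here, the small packet waits behind only a bounded amount of data on any lane, which is the delay-bounding property the slide attributes to fragmentation. A smaller fragment size tightens that bound at the cost of more per-fragment overhead.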

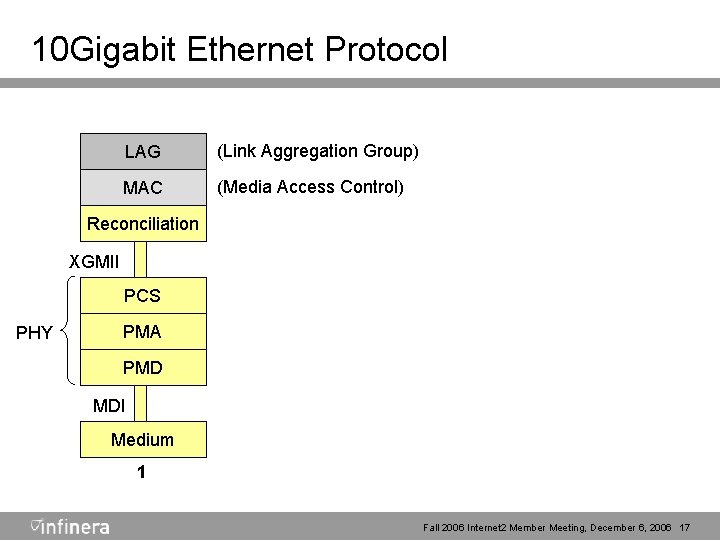

10 Gigabit Ethernet Protocol

[Figure: 10 Gigabit Ethernet layer stack for a single channel, top to bottom]

- LAG (Link Aggregation Group)
- MAC (Media Access Control)
- Reconciliation
- XGMII
- PHY: PCS, PMA, PMD
- MDI
- Medium

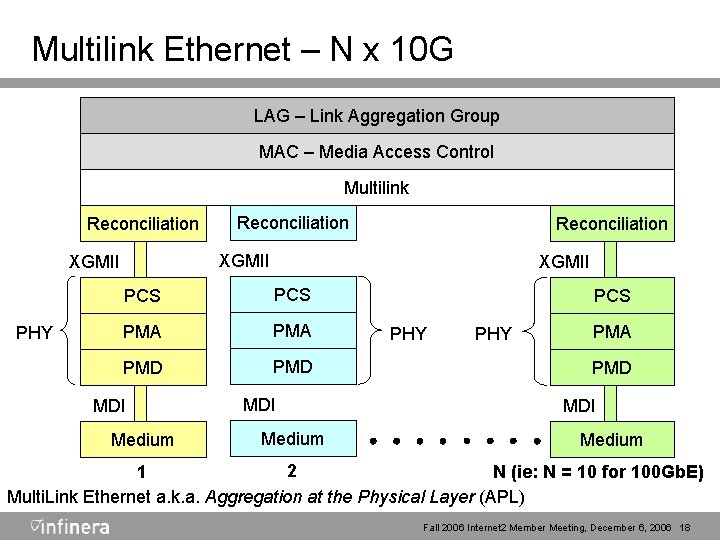

Multilink Ethernet: N x 10G

[Figure: Multilink Ethernet layer stack, top to bottom]

- LAG (Link Aggregation Group)
- MAC (Media Access Control)
- Multilink
- Channels 1 to N (e.g. N = 10 for 100 GbE), each with its own Reconciliation, XGMII, PHY (PCS, PMA, PMD), MDI and Medium

Multilink Ethernet, a.k.a. Aggregation at the Physical Layer (APL)

Multilink Ethernet Benefits

- Ensures ordered delivery
- Resilient and scalable
- Incremental hitless growth up to 32 channels
- Minimal added latency
- Line code independent, preserves all existing 10G PHYs
- Orthogonal to and lower level than LAG
- Scales into the future as individual channel speeds increase

Multilink Ethernet Benefits (cont'd)

- Concept well proven
  - Packet fragmentation, distribution, collection and reassembly similar to 802.3ah PME aggregation
- Fits well with multi-port (4x, 5x, 10x, etc.) PHYs
- Preserves existing interfaces (e.g. XGMII, XAUI)
- Compatible with physical layer transport implementation over N x wavelengths

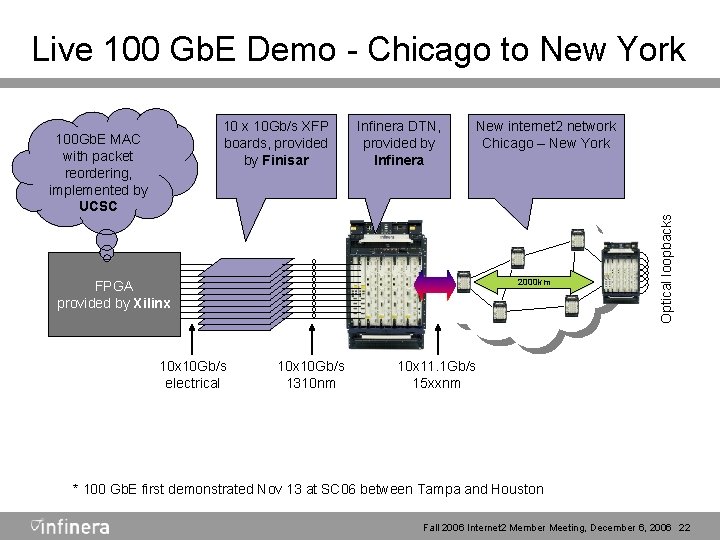

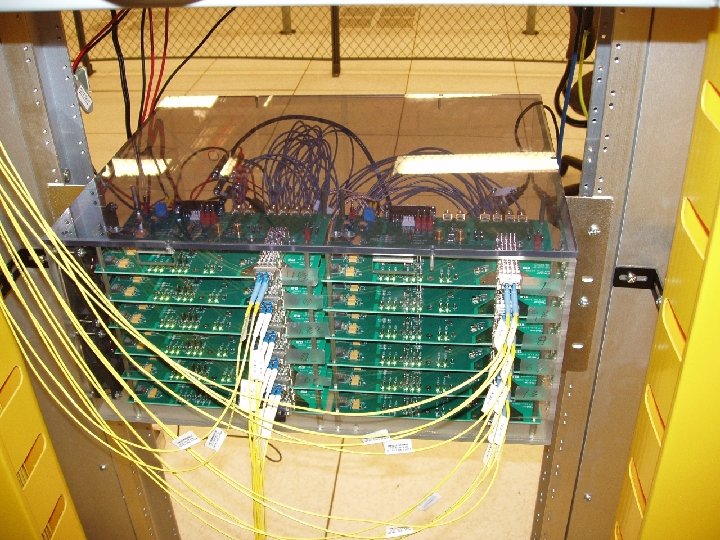

Live 100 GbE Demo: Chicago to New York

[Figure: demo setup]

- 100 GbE MAC with packet reordering, implemented by UCSC
- FPGA provided by Xilinx
- 10 x 10 Gb/s XFP boards, provided by Finisar
- Infinera DTN, provided by Infinera
- New Internet2 network, Chicago to New York, 2000 km
- Link labels: 10 Gb/s electrical; 10 x 10 Gb/s 1310 nm; 10 x 11.1 Gb/s 15xx nm; optical loopbacks

\* 100 GbE first demonstrated Nov 13 at SC06 between Tampa and Houston

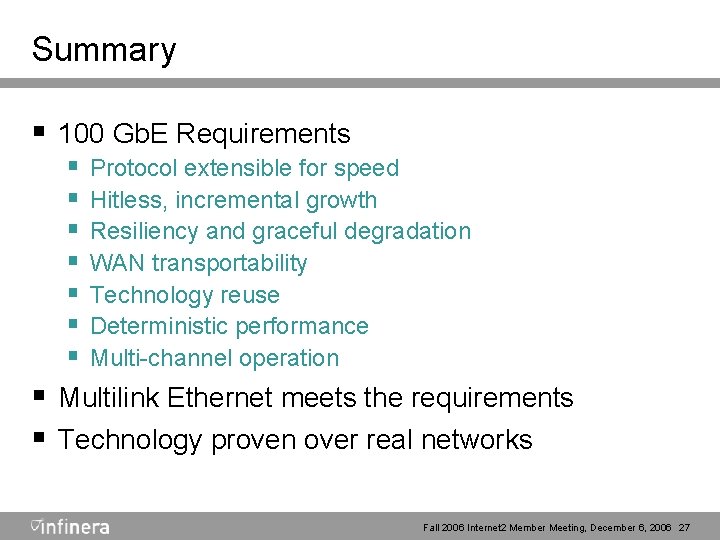

Summary

- 100 GbE Requirements
  - Protocol extensible for speed
  - Hitless, incremental growth
  - Resiliency and graceful degradation
  - WAN transportability
  - Technology reuse
  - Deterministic performance
  - Multi-channel operation
- Multilink Ethernet meets the requirements
- Technology proven over real networks

Thanks!

Serge Melle, smelle@infinera.com, 408-572-5200

Drew Perkins, dperkins@infinera.com, 408-572-5308