
- Slides: 24

Reinforcement Learning in High Energy Physics
MACIEJ W. MAJEWSKI – AGH UST (Kraków, Poland)

Agenda
• Short mention of my main projects
• What is Reinforcement Learning
• DeepLearnPhysics
• RL in LArTPC

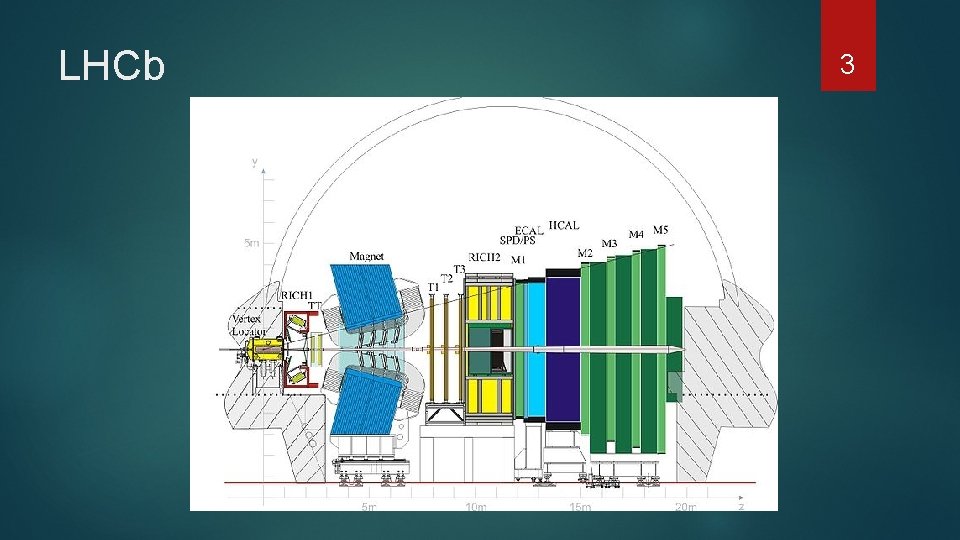

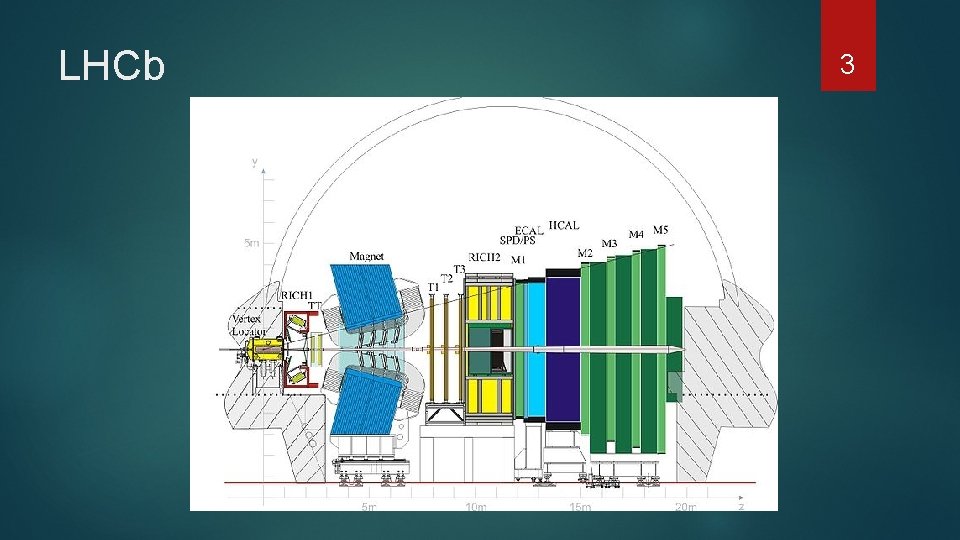

LHCb

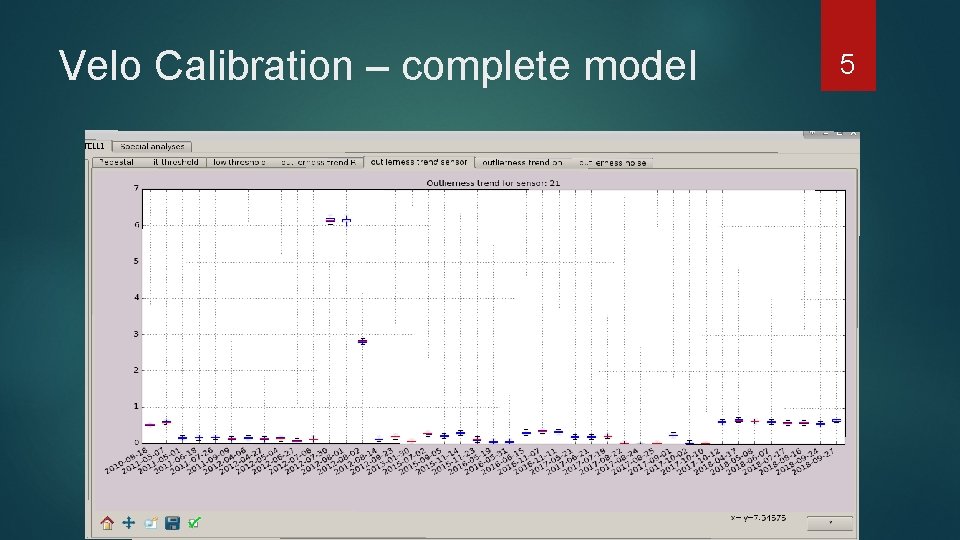

Machine Learning for VELO
• Anomaly detection system for VELO calibration – uses a Bayesian network to detect anomalies before a miscalibrated detector is used
• Calibration time prediction (survival analysis) – when should we recalibrate?
• Dimensionality reduction for monitoring

VELO Calibration – complete model

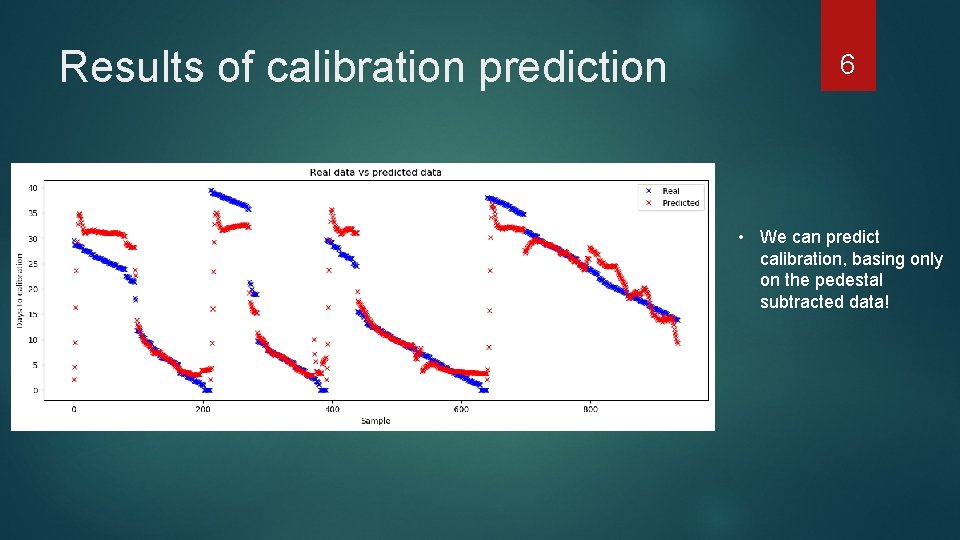

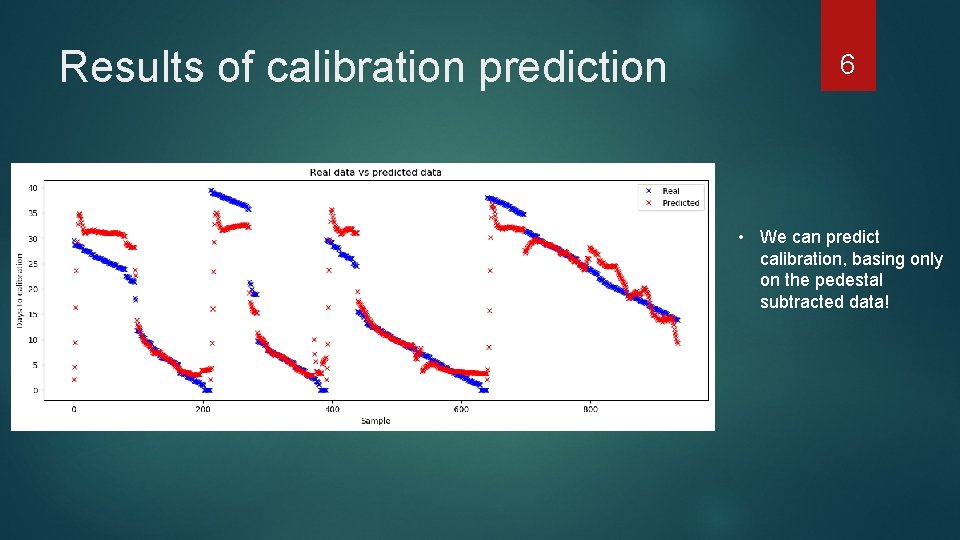

Results of calibration prediction
• We can predict the calibration based only on the pedestal-subtracted data!

Reinforcement Learning in High Energy Physics

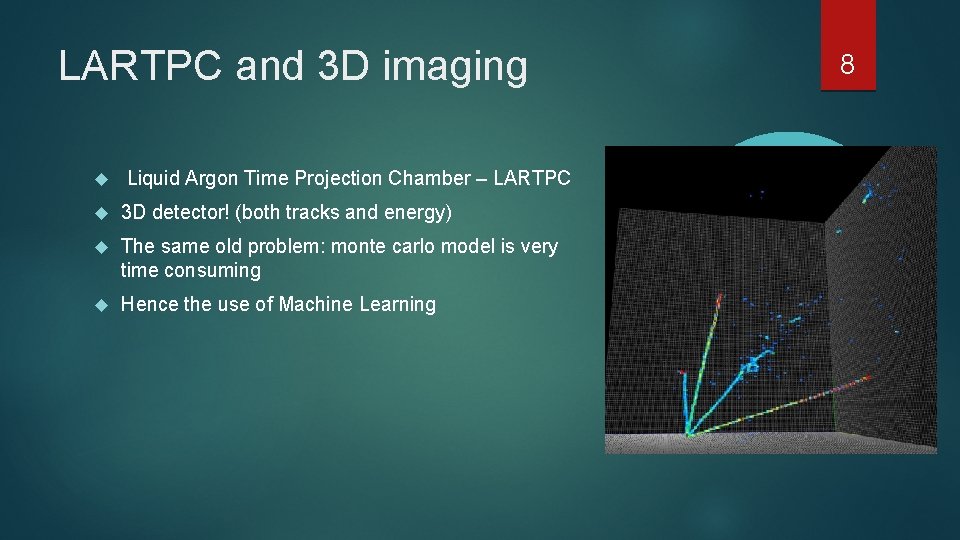

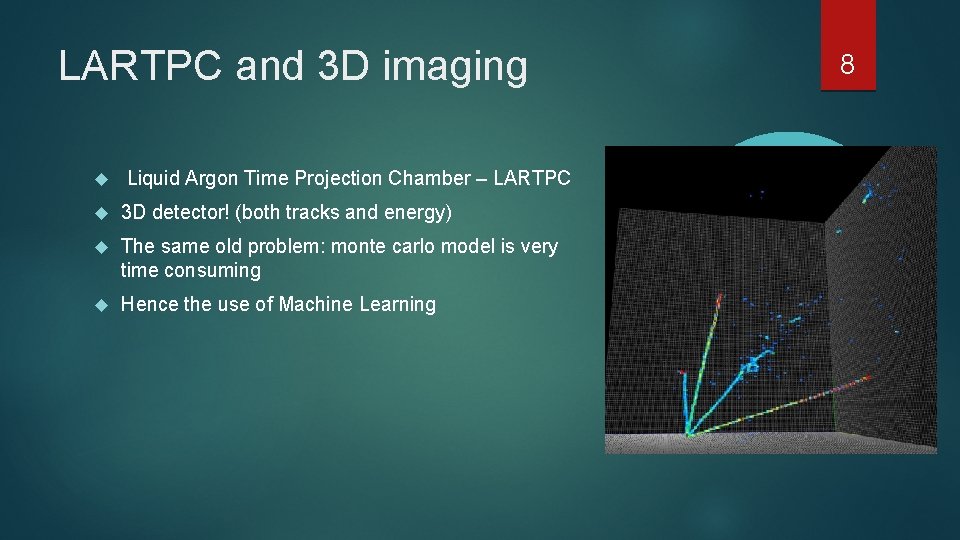

LArTPC and 3D imaging
• Liquid Argon Time Projection Chamber – LArTPC
• 3D detector! (both tracks and energy)
• The same old problem: the Monte Carlo model is very time-consuming
• Hence the use of Machine Learning

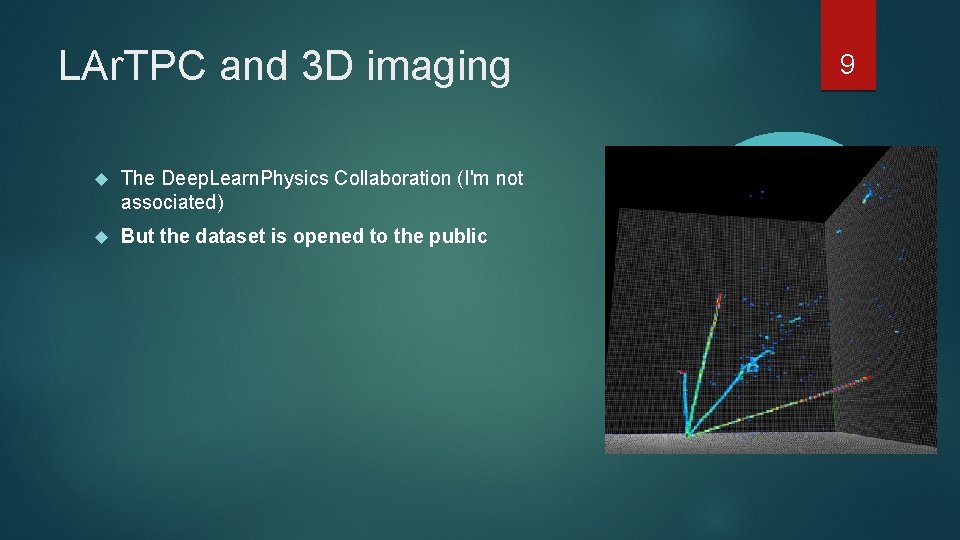

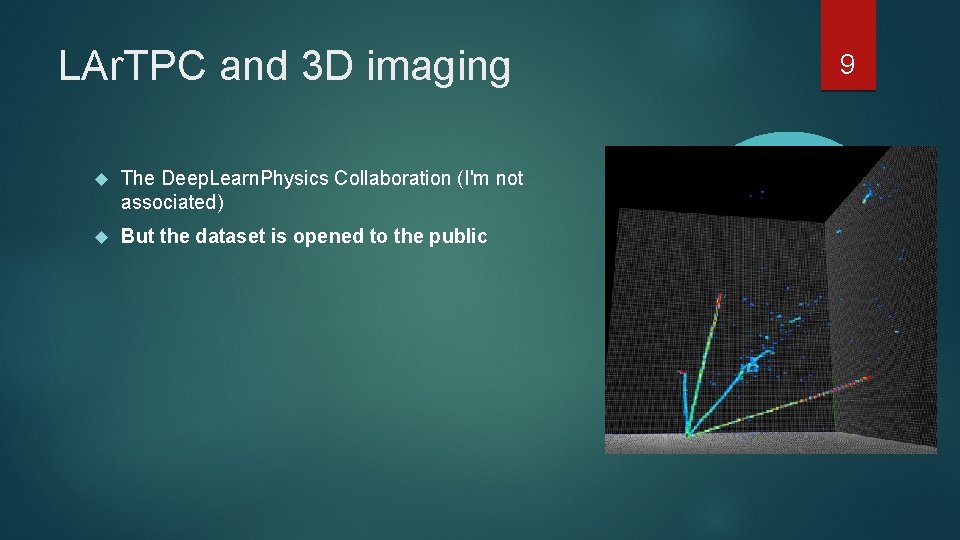

LArTPC and 3D imaging
• The DeepLearnPhysics Collaboration (I'm not associated)
• But the dataset is open to the public

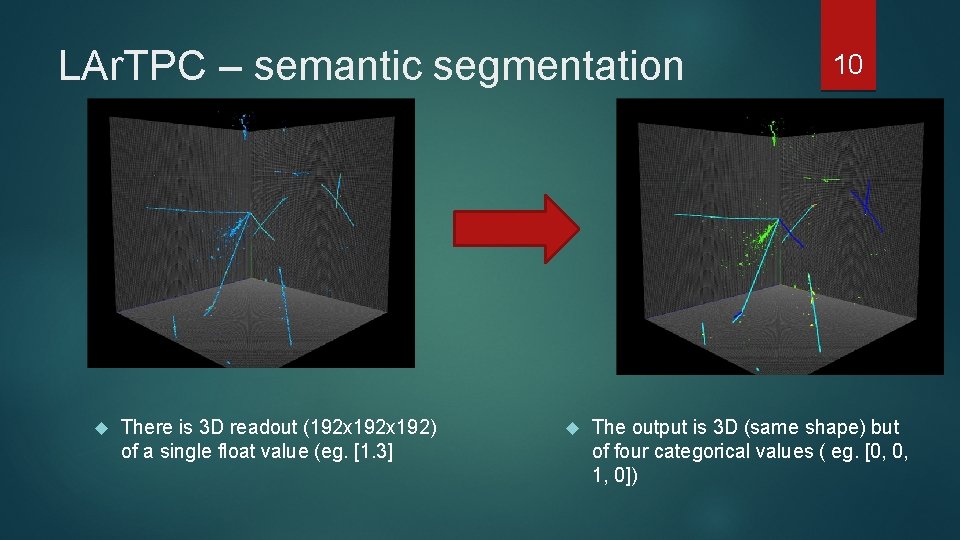

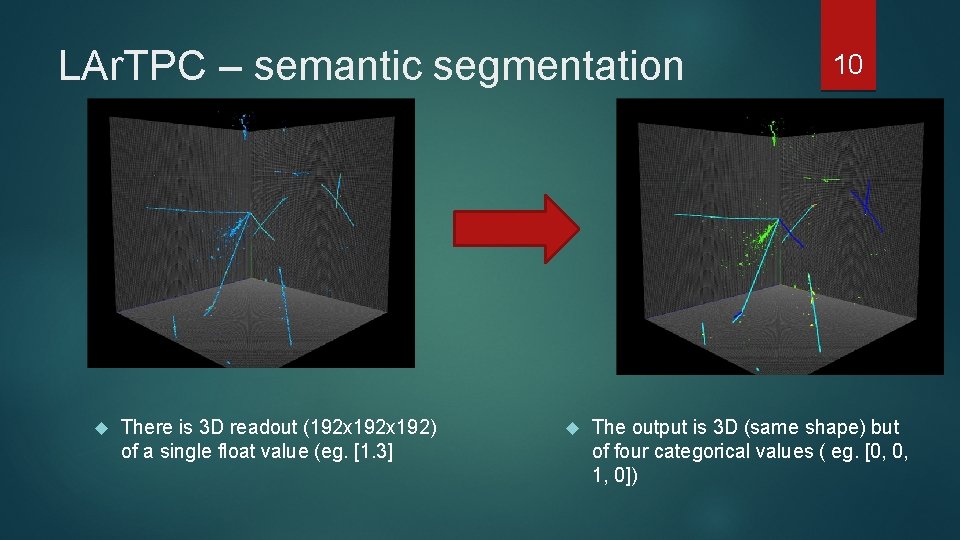

LArTPC – semantic segmentation
• There is a 3D readout (192 x 192) of a single float value (e.g. [1.3])
• The output is 3D (same shape) but of four categorical values (e.g. [0, 0, 1, 0])
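The input/output relationship above can be sketched in a few lines of plain Python. This is only an illustration: the voxel coordinates, the energy values, and the helper names `one_hot` and `decode` are hypothetical, and only nonzero voxels are stored (a simplification, not the dataset's actual format).

```python
NUM_CLASSES = 4  # four categories per voxel, as stated on the slide

def one_hot(label, num_classes=NUM_CLASSES):
    """Encode a class index as a categorical vector, e.g. 2 -> [0, 0, 1, 0]."""
    return [1 if i == label else 0 for i in range(num_classes)]

def decode(vector):
    """Recover the class index from a categorical (or score) vector."""
    return max(range(len(vector)), key=vector.__getitem__)

# Hypothetical readout: one float per voxel (coordinates and values are made up).
readout = {(10, 20, 30): 1.3, (10, 21, 30): 0.7}

# The segmentation output has the same shape: one categorical vector per voxel.
labels = {(10, 20, 30): one_hot(2), (10, 21, 30): one_hot(0)}
```

Every voxel present in `readout` has exactly one entry in `labels`, which is the "same shape" requirement in sparse form.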

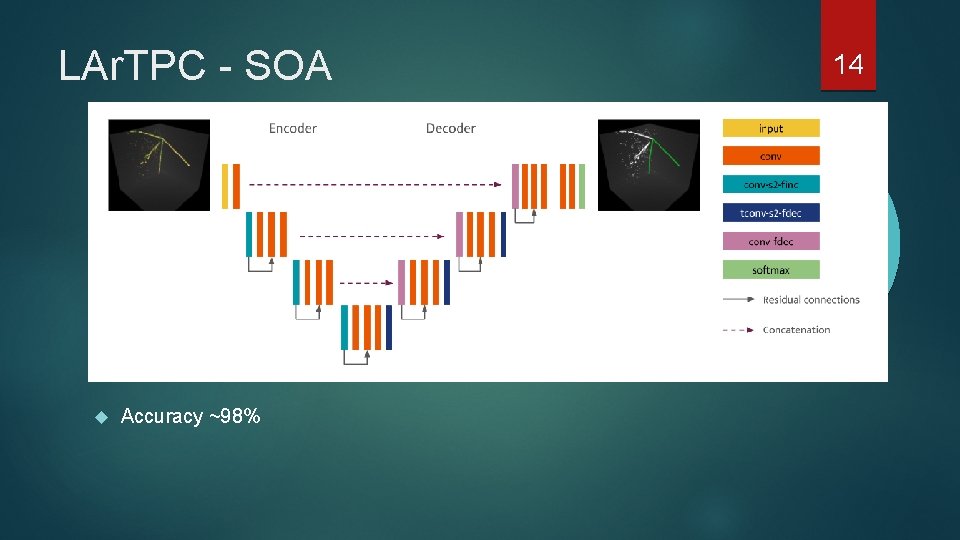

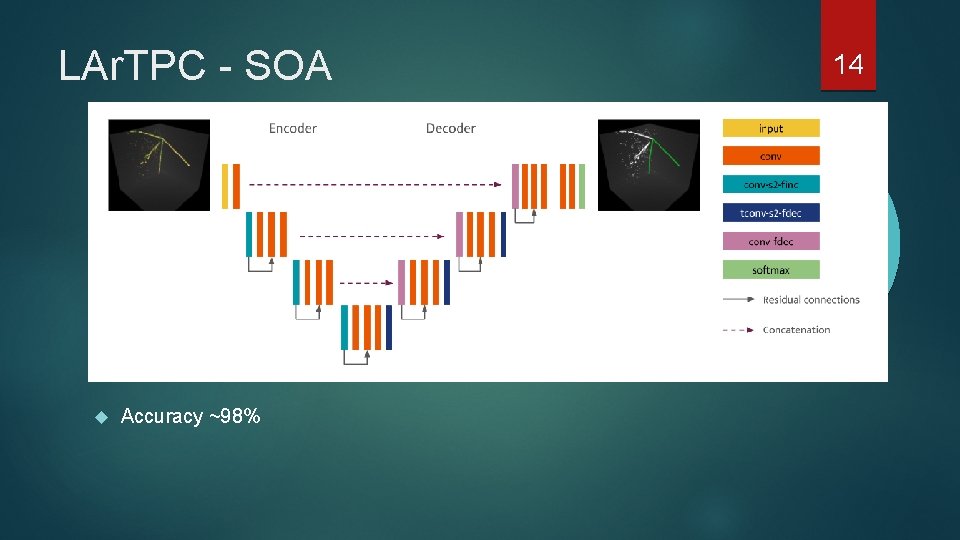

LArTPC – SOA (state-of-the-art) solution
• Laura Domine and Kazuhiro Terao
• The solution uses a sparse-convolution U-Net

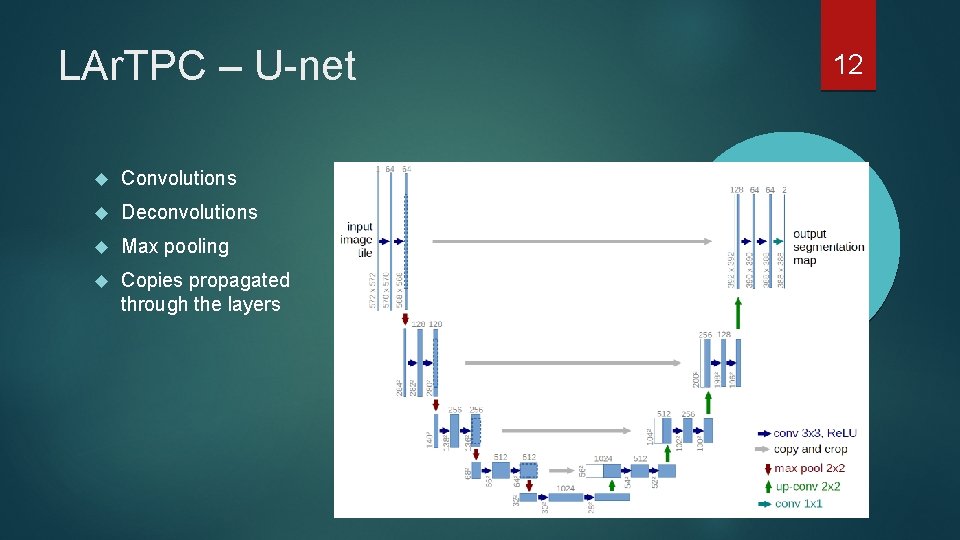

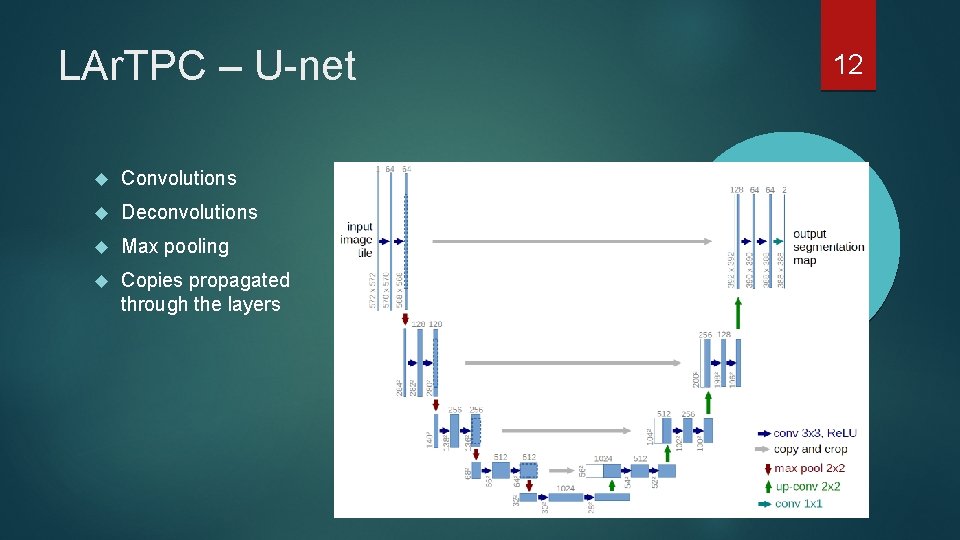

LArTPC – U-Net
• Convolutions
• Deconvolutions
• Max pooling
• Copies propagated through the layers
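As a rough illustration of how these four ingredients fit together, here is a toy one-dimensional, single-level sketch in plain Python. It is not the network from the slides: the kernel is fixed rather than learned, nearest-neighbour upsampling stands in for a learned deconvolution, and all function names are hypothetical. The input length is assumed to be even.

```python
def conv1d(x, kernel):
    """Zero-padded 'same' 1D convolution (cross-correlation) with a fixed kernel."""
    half = len(kernel) // 2
    padded = [0.0] * half + list(x) + [0.0] * half
    return [sum(k * padded[i + j] for j, k in enumerate(kernel))
            for i in range(len(x))]

def max_pool(x, size=2):
    """Keep the maximum of each non-overlapping block of `size` values."""
    return [max(x[i:i + size]) for i in range(0, len(x), size)]

def upsample(x, factor=2):
    """Nearest-neighbour upsampling, a stand-in for a learned deconvolution."""
    return [v for v in x for _ in range(factor)]

def unet_level(x, kernel):
    """One encoder/decoder level with a skip connection."""
    skip = conv1d(x, kernel)            # encoder features, kept as the "copy"
    down = max_pool(skip)               # halve the resolution
    bottleneck = conv1d(down, kernel)   # process at the coarse scale
    up = upsample(bottleneck)           # return to the input resolution
    # Skip connection: pair each copied encoder feature with the upsampled one.
    return list(zip(skip, up))

signal = [0, 1, 0, 0, 2, 0, 0, 0]
features = unet_level(signal, kernel=[0, 1, 0])  # identity kernel for readability
```

With the identity kernel, each output pair holds the fine-scale value and the coarse-scale maximum of its block, which shows why the copied features matter: the fine detail lost by pooling is restored alongside the coarse context.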

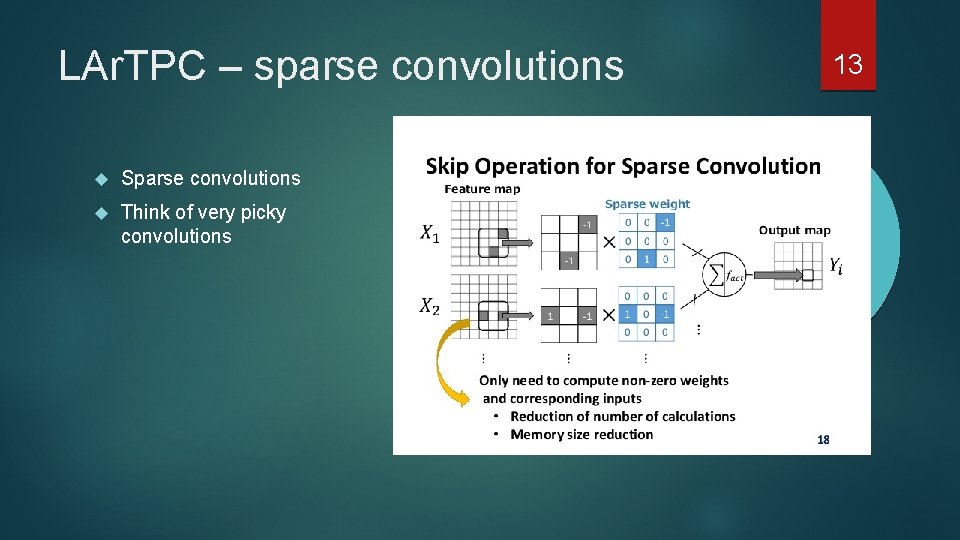

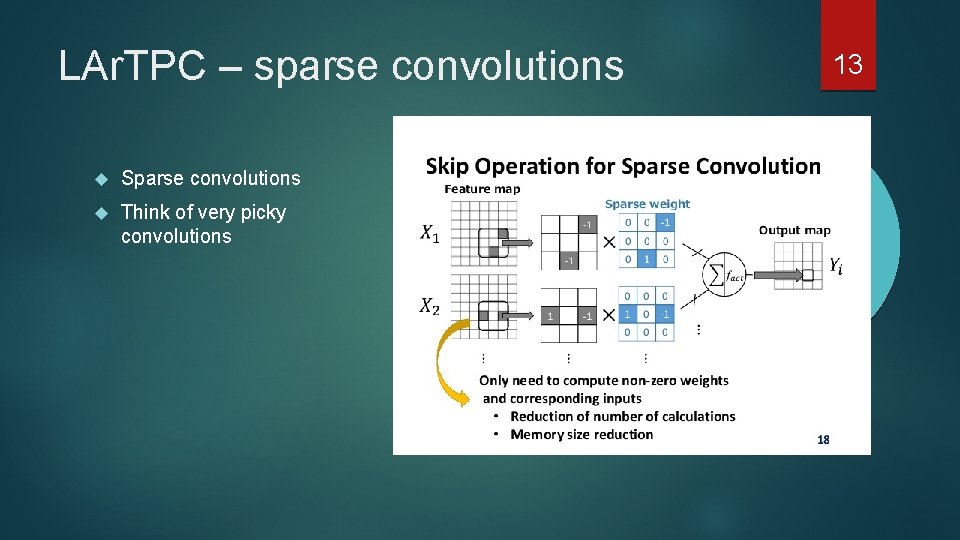

LArTPC – sparse convolutions
• Sparse convolutions
• Think of very picky convolutions
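One way to read "picky" is that the convolution is evaluated only at voxels that actually contain a hit, and only over neighbours that are themselves nonzero. The sketch below assumes this submanifold-style variant and a dictionary representation of the active voxels; the function name `sparse_conv3d` and the data are hypothetical, and this is not the library used in the SOA solution.

```python
from itertools import product

def sparse_conv3d(active, weights, size=3):
    """Convolve only at active sites.

    active:  dict {(x, y, z): value} holding the nonzero voxels only
    weights: dict {(dx, dy, dz): weight} for each offset inside the kernel
    Returns a dict with exactly the same keys as `active`.
    """
    half = size // 2
    offsets = list(product(range(-half, half + 1), repeat=3))
    out = {}
    for (x, y, z) in active:
        total = 0.0
        for (dx, dy, dz) in offsets:
            neighbour = (x + dx, y + dy, z + dz)
            if neighbour in active:  # empty voxels are skipped entirely
                total += weights.get((dx, dy, dz), 0.0) * active[neighbour]
        out[(x, y, z)] = total
    return out

# Hypothetical example: two adjacent hits and one isolated hit, all-ones kernel.
ones = {off: 1.0 for off in product((-1, 0, 1), repeat=3)}
hits = {(0, 0, 0): 1.0, (1, 0, 0): 2.0, (5, 5, 5): 3.0}
result = sparse_conv3d(hits, ones)
```

The cost scales with the number of hits rather than with the full detector volume, which is the point for data that is mostly empty space.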

LArTPC – SOA
• Accuracy ~98%

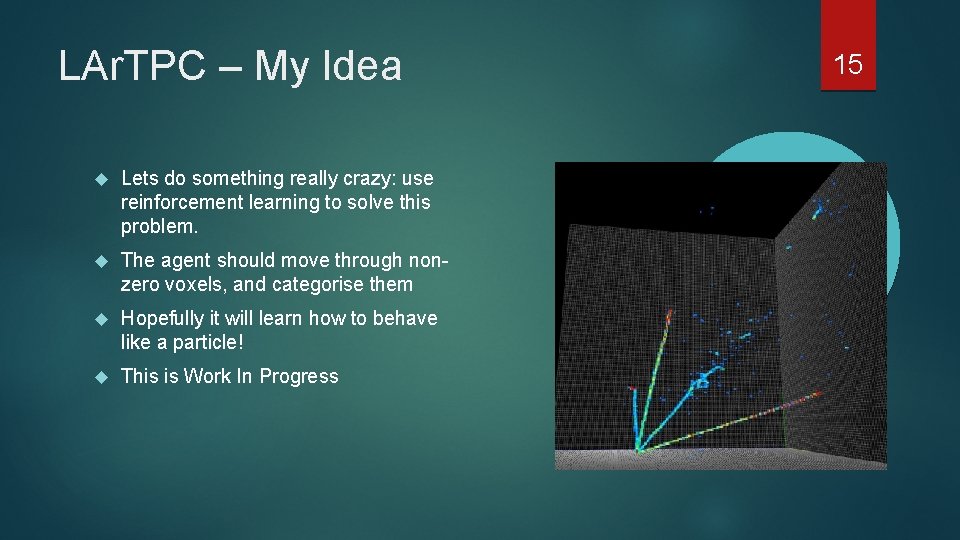

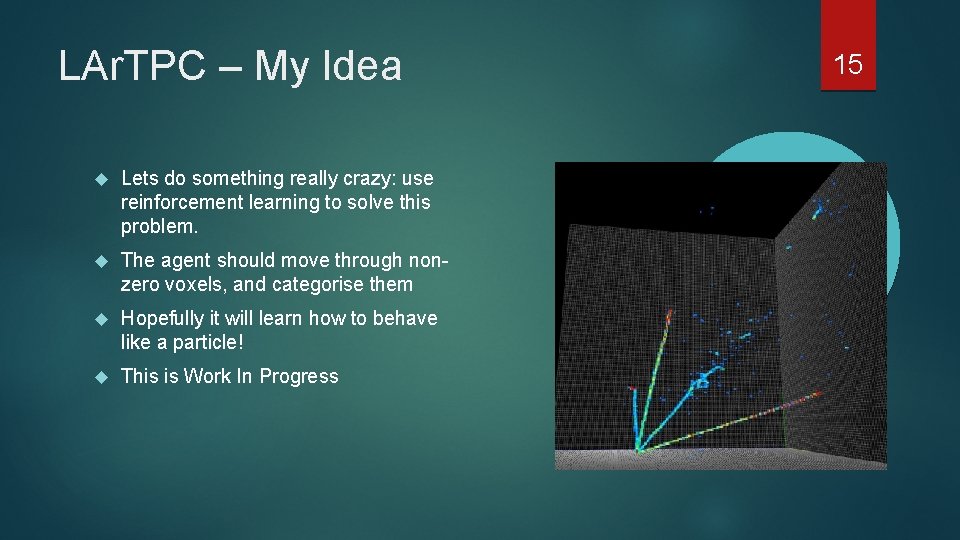

LArTPC – My Idea
• Let's do something really crazy: use reinforcement learning to solve this problem.
• The agent should move through nonzero voxels and categorise them
• Hopefully it will learn how to behave like a particle!
• This is Work In Progress

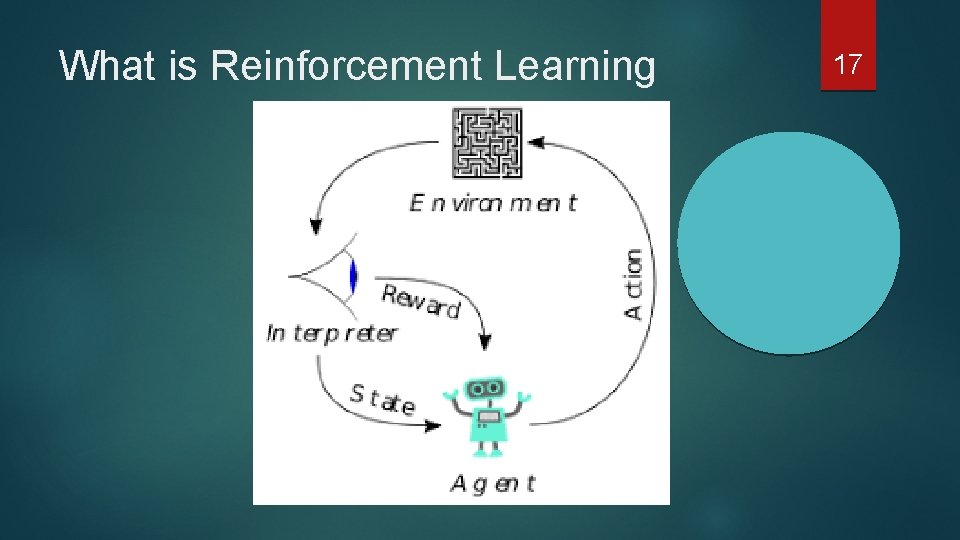

What is Reinforcement Learning

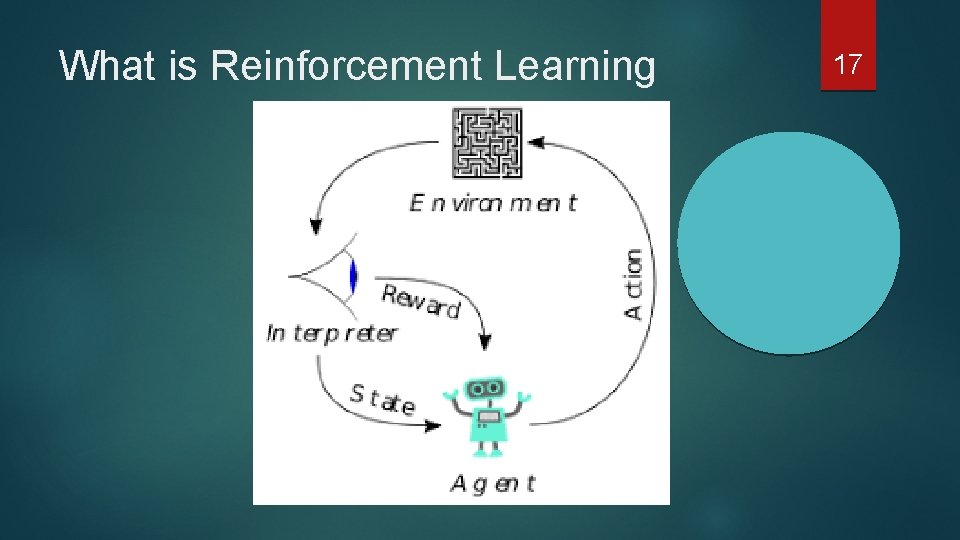

What is Reinforcement Learning

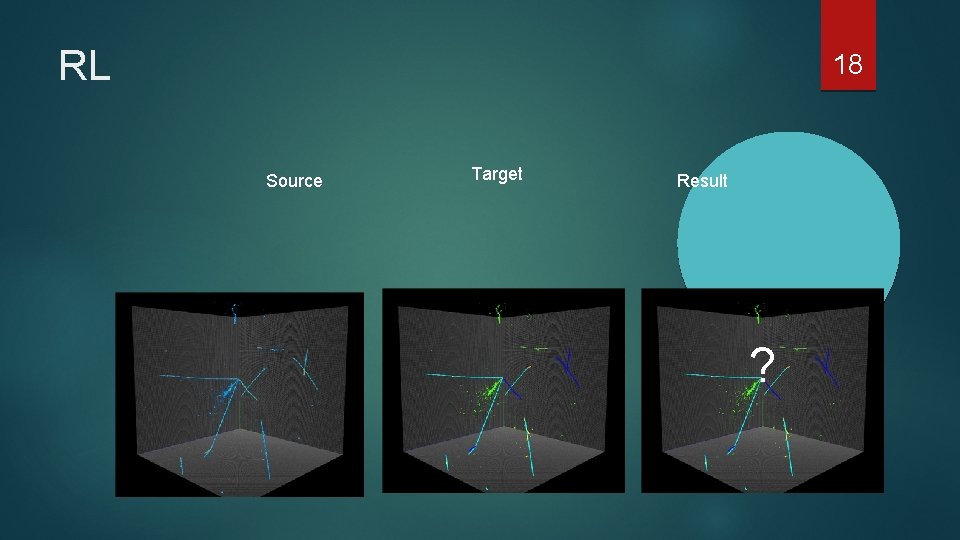

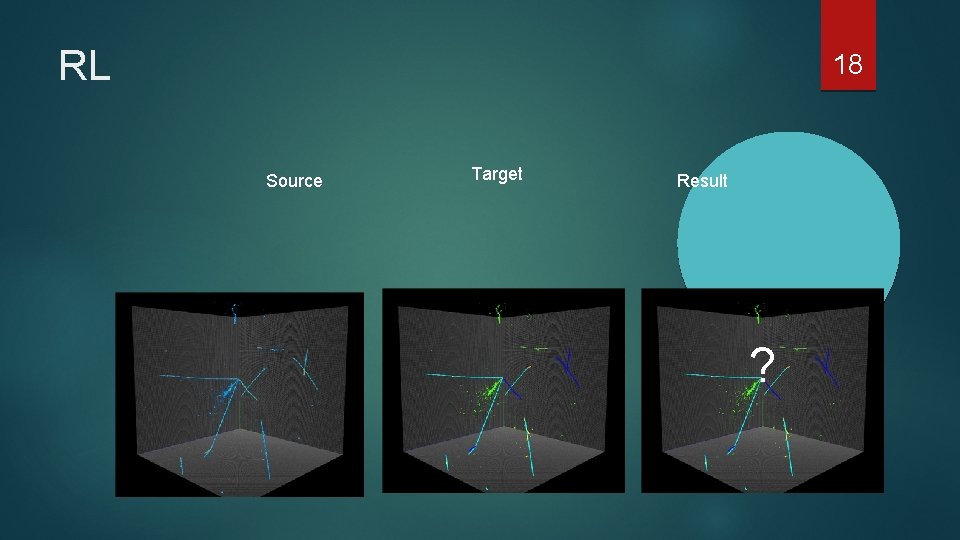

RL
[Diagram labels: Source, Target, Result (?)]

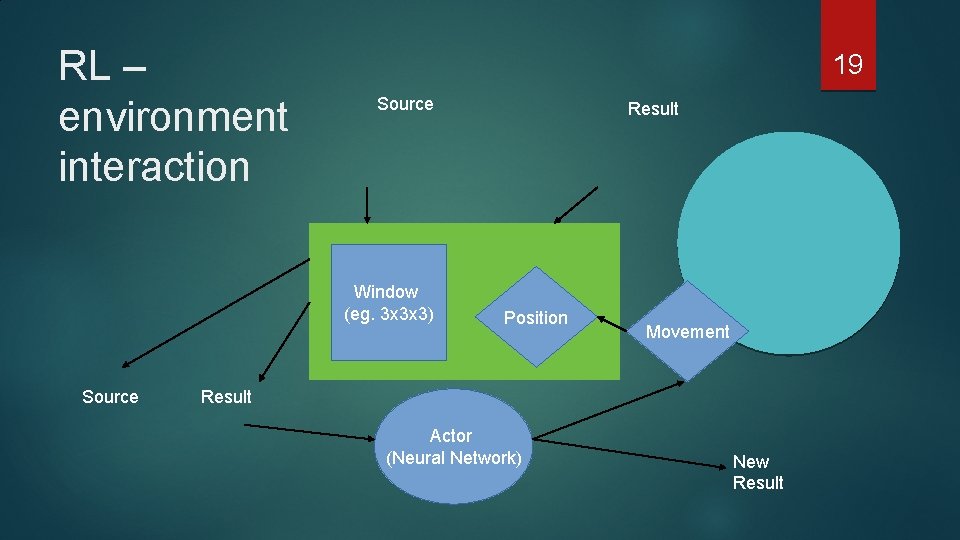

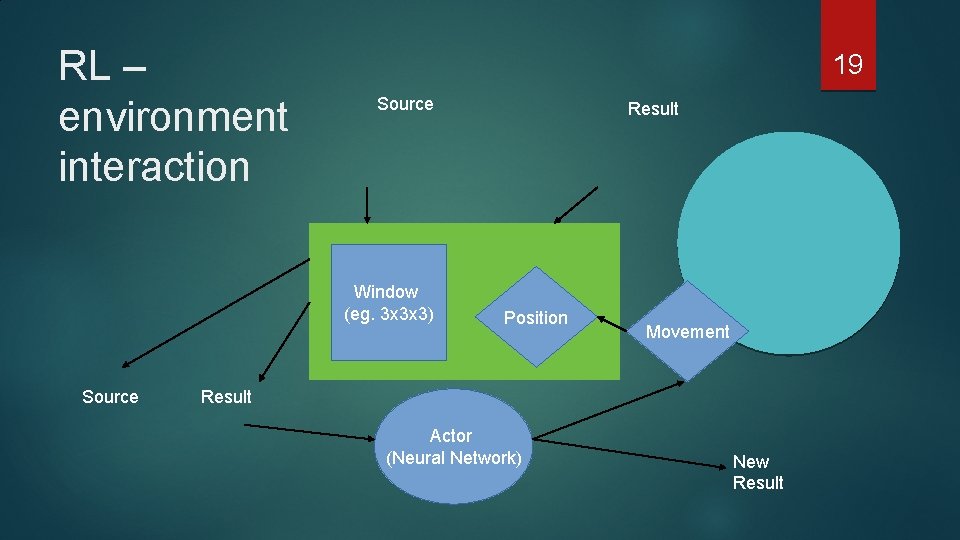

RL – environment interaction
[Diagram labels: Source, Window (e.g. 3 x 3 x 3), Source, Result, Position, Movement, Result, Actor (Neural Network), New Result]
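The diagram suggests that the actor does not see the whole volume, only a small window around its current position, cut from both the source and the result so far. A minimal sketch of that observation step follows, assuming a dictionary of nonzero voxels and zero padding outside it; `extract_window` and `observation` are hypothetical names, not taken from the author's code.

```python
from itertools import product

def extract_window(volume, position, size=3):
    """Return the size**3 values around `position` as a flat list.

    volume: dict {(x, y, z): value}; missing voxels count as 0.0.
    """
    half = size // 2
    x, y, z = position
    return [volume.get((x + dx, y + dy, z + dz), 0.0)
            for dx, dy, dz in product(range(-half, half + 1), repeat=3)]

def observation(source, result, position, size=3):
    """What the actor sees: the source window followed by the result window."""
    return (extract_window(source, position, size)
            + extract_window(result, position, size))

# Hypothetical data: two hits in the source, nothing labelled yet.
source = {(1, 1, 1): 1.3, (2, 1, 1): 0.5}
obs = observation(source, result={}, position=(1, 1, 1))
```

For a 3 x 3 x 3 window the actor therefore receives 54 numbers per step, regardless of how large the detector volume is.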

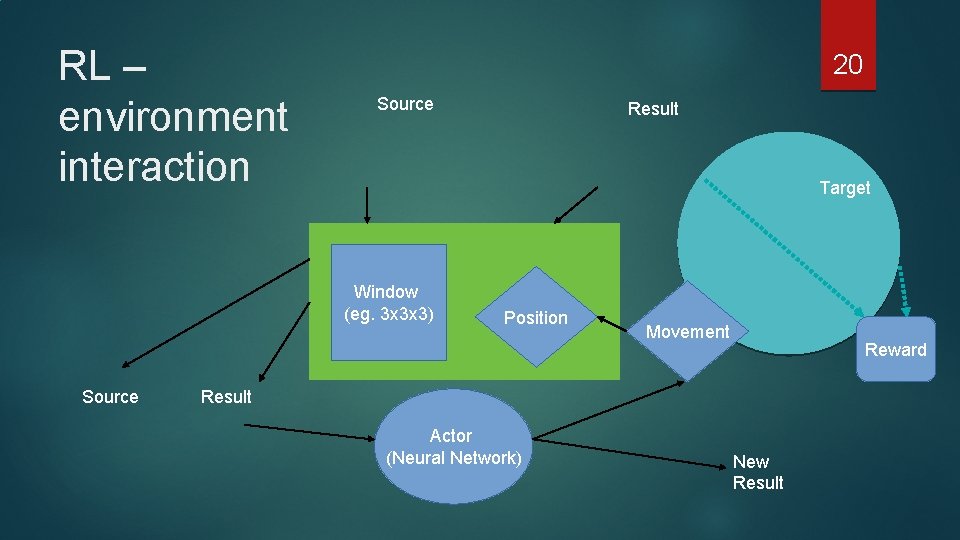

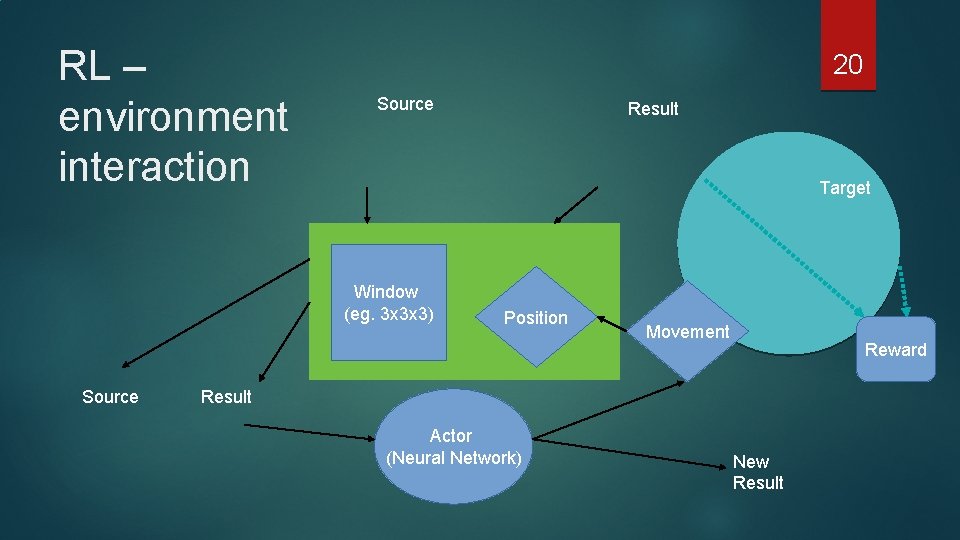

RL – environment interaction
[Diagram labels: Source, Target, Window (e.g. 3 x 3 x 3), Source, Result, Position, Movement, Reward, Result, Actor (Neural Network), New Result]
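Putting the labels together, one interaction step could look like the sketch below: the actor chooses a label for the current voxel and a movement, and the environment compares the label with the target to produce a reward. Everything here is an assumption made for illustration, in particular the class name `VoxelLabellingEnv`, the six axis-aligned moves, and the +1/-1 reward, which is only one naive candidate and not the author's reward.

```python
class VoxelLabellingEnv:
    """Toy environment: an agent walks through voxels and labels them."""

    # Hypothetical action set: one step along each axis direction.
    MOVES = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]

    def __init__(self, source, target, start):
        self.source = source    # {(x, y, z): float} detector readout
        self.target = target    # {(x, y, z): class index} ground truth
        self.result = {}        # labels written by the agent so far
        self.position = start

    def step(self, move_index, label):
        """Label the current voxel, score it, then move."""
        pos = self.position
        reward = 0.0
        if pos in self.source and pos not in self.result:
            self.result[pos] = label
            # Naive placeholder reward: +1 for a correct label, -1 otherwise.
            reward = 1.0 if self.target.get(pos) == label else -1.0
        dx, dy, dz = self.MOVES[move_index]
        self.position = (pos[0] + dx, pos[1] + dy, pos[2] + dz)
        done = len(self.result) == len(self.source)
        return self.position, reward, done

env = VoxelLabellingEnv(
    source={(0, 0, 0): 1.3, (1, 0, 0): 0.8},
    target={(0, 0, 0): 2, (1, 0, 0): 1},
    start=(0, 0, 0),
)
first = env.step(move_index=0, label=2)    # correct label, move +x
second = env.step(move_index=1, label=0)   # wrong label, move -x
```

Visiting an empty or already labelled voxel yields zero reward in this sketch, so nothing yet discourages wandering; choices like that are part of the reward design problem.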

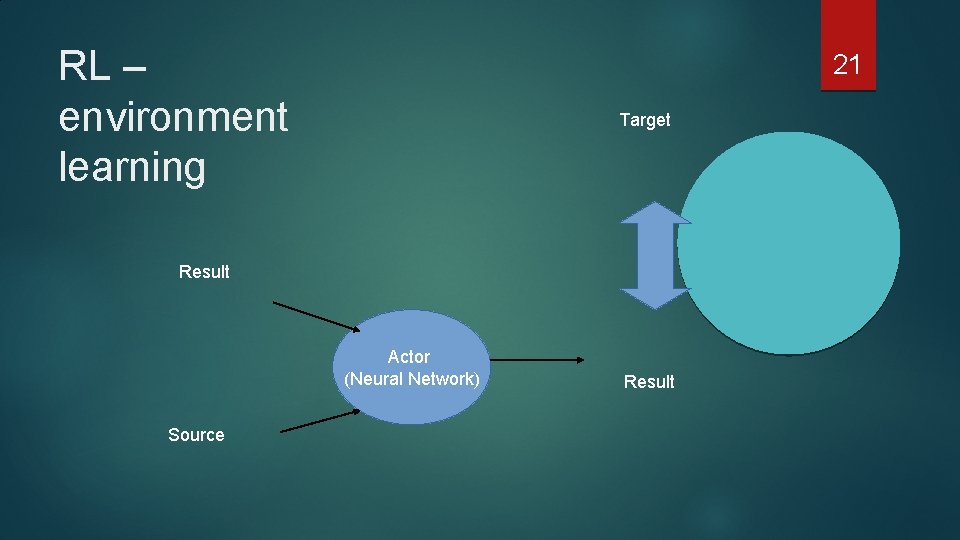

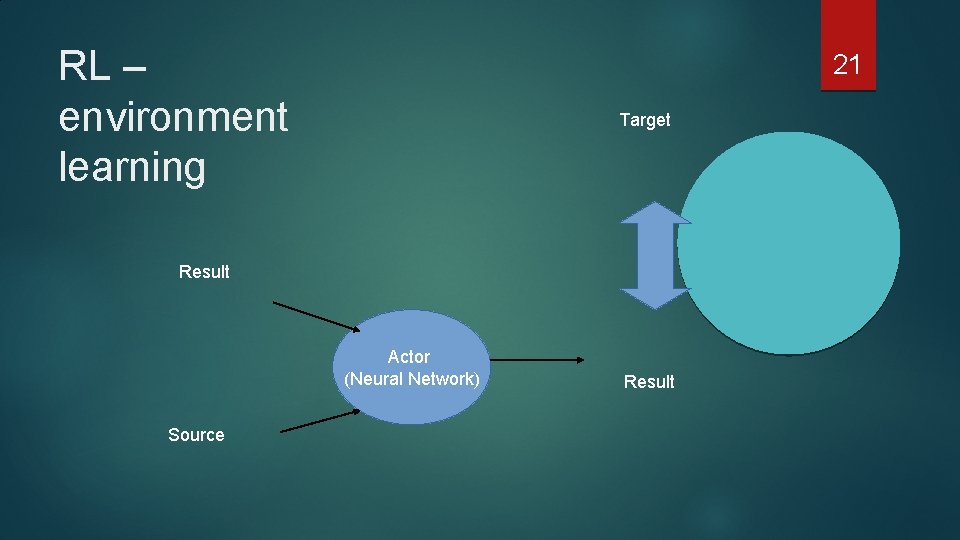

RL – environment learning
[Diagram labels: Target, Result, Actor (Neural Network), Source, Result]

RL
REWARD(Target, Result) = ?

Demo time! (If we still have some time)

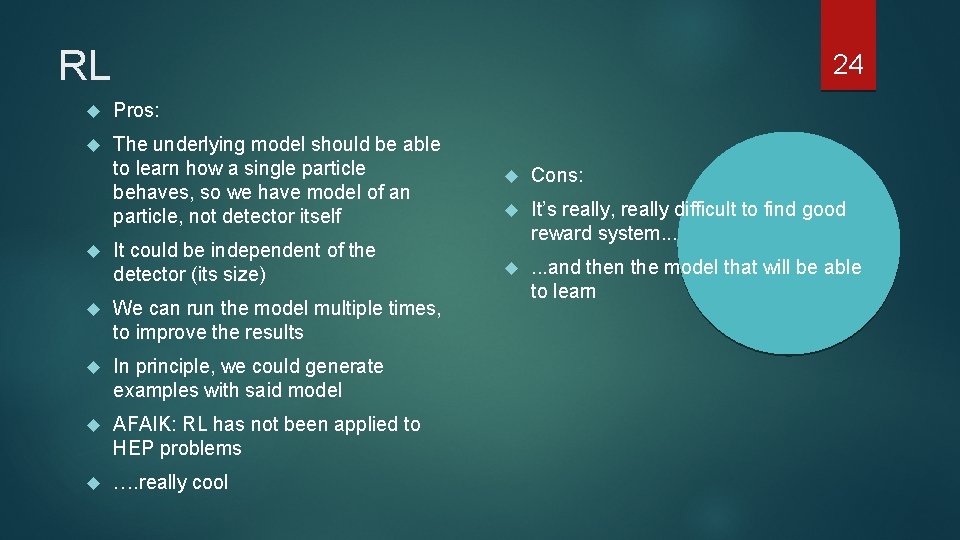

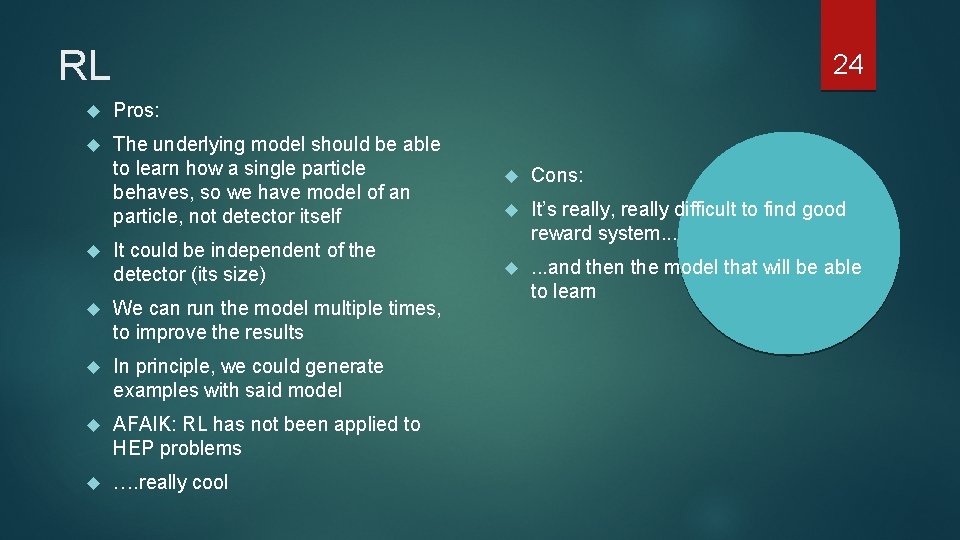

RL
Pros:
• The underlying model should be able to learn how a single particle behaves, so we have a model of a particle, not of the detector itself
• It could be independent of the detector (its size)
• We can run the model multiple times to improve the results
• In principle, we could generate examples with said model
• AFAIK, RL has not been applied to HEP problems … really cool
Cons:
• It's really, really difficult to find a good reward system …
• … and then a model that will be able to learn