1 Perceptron Learning Rule

2 Objectives How do we determine the weight matrix and bias for perceptron networks with many inputs, where it is impossible to visualize the decision boundaries? The main objective is to describe an algorithm for training perceptron networks, so that they can learn to solve classification problems.

3 Learning Rule Learning rule: a procedure (training algorithm) for modifying the weights and biases of a network. The purpose of the learning rule is to train the network to perform some task. Learning rules fall into three categories: supervised learning, unsupervised learning, and reinforcement (graded) learning.

4 Supervised Learning The learning rule is provided with a set of examples (the training set) of proper network behavior: $\{p_1, t_1\}, \{p_2, t_2\}, \ldots, \{p_Q, t_Q\}$, where $p_q$ is an input to the network and $t_q$ is the corresponding correct (target) output. As the inputs are applied to the network, the network outputs are compared to the targets. The learning rule is then used to adjust the weights and biases of the network in order to move the network outputs closer to the targets.

5 Supervised Learning

6 Reinforcement Learning The learning rule is similar to supervised learning, except that, instead of being provided with the correct output for each network input, the algorithm is only given a grade. The grade (score) is a measure of the network performance over some sequence of inputs. Reinforcement learning appears to be most suited to control system applications.

7 Unsupervised Learning The weights and biases are modified in response to network inputs only. There are no target outputs available. Most of these algorithms perform some kind of clustering operation. They learn to categorize the input patterns into a finite number of classes. This is especially useful in such applications as vector quantization.

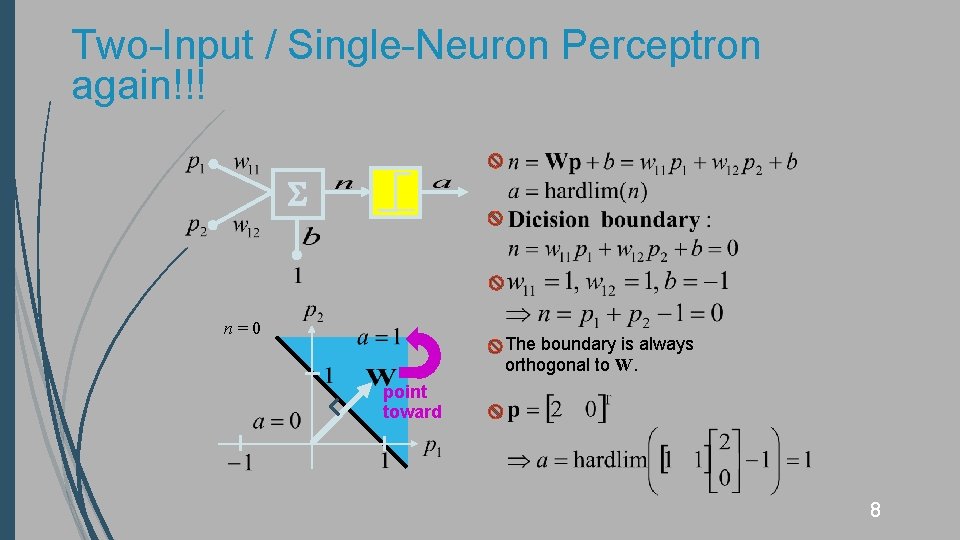

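8 Two-Input/Single-Neuron Perceptron The decision boundary is the set of points where n = 0. The boundary is always orthogonal to W, and W points toward the region where n > 0, i.e., where the neuron output is 1.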

9 Perceptron Network Design The input/target pairs for the AND gate are {(0, 0), 0}, {(0, 1), 0}, {(1, 0), 0}, {(1, 1), 1}. In the figure, a dark circle marks target 1 and a light circle marks target 0. Step 1: Select a decision boundary that separates the two classes. Step 2: Choose a weight vector W that is orthogonal to the decision boundary. Step 3: Find the bias b, e.g., by picking a point p on the decision boundary and solving n = Wp + b = 0.
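The three design steps can be sketched in a few lines of Python. This is only an illustration: the chosen boundary (the line through (1.5, 0) and (0, 1.5)) and the weight vector (2, 2) are assumptions picked for the example, and `hardlim` and `perceptron` are helper names introduced here.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def perceptron(w, b, p):
    """Single-neuron perceptron output a = hardlim(w . p + b)."""
    n = sum(wi * pi for wi, pi in zip(w, p)) + b
    return hardlim(n)

# AND gate input/target pairs.
and_pairs = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

# Step 1 (assumed choice): the boundary is the line through (1.5, 0) and (0, 1.5).
# Step 2: a vector orthogonal to that line has direction (1, 1); (2, 2) is one choice.
w = (2.0, 2.0)

# Step 3: pick a point on the boundary and solve w . p + b = 0 for b.
point_on_boundary = (1.5, 0.0)
b = -sum(wi * pi for wi, pi in zip(w, point_on_boundary))

outputs = [perceptron(w, b, p) for p, _ in and_pairs]
targets = [t for _, t in and_pairs]
print(b, outputs, outputs == targets)
```

Any other boundary that separates (1, 1) from the remaining three points would work equally well; only the orthogonality of W to the boundary and the bias equation matter.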

10 Test Problem The given input/target pairs are $\{p_1, t_1\}$, $\{p_2, t_2\}$, $\{p_3, t_3\}$. In the figure, a dark circle marks target 1 and a light circle marks target 0. The network has two inputs, one output, and no bias, so the decision boundary must pass through the origin. The length of the weight vector does not matter; only its direction is important.
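A small check of the last two claims, as a sketch only. The three input vectors and the weight vector below are assumed values (the numeric values are not recoverable from the slide text), and `output_no_bias` is a helper name introduced here.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def output_no_bias(w, p):
    """Two-input perceptron without a bias: a = hardlim(w . p)."""
    return hardlim(w[0] * p[0] + w[1] * p[1])

# Assumed input vectors and weight vector, for illustration only.
inputs = [(1, 2), (-1, 2), (0, -1)]
w = (1.0, -0.8)

# Without a bias, n = w . p is 0 at the origin for every w,
# so the boundary n = 0 always passes through the origin.
n_at_origin = w[0] * 0 + w[1] * 0

# Scaling w by any positive factor changes |n| but never its sign,
# so the classification of every input stays the same.
base = [output_no_bias(w, p) for p in inputs]
scaled_outputs = []
for k in (0.5, 3.0, 10.0):
    w_scaled = (k * w[0], k * w[1])
    scaled_outputs.append([output_no_bias(w_scaled, p) for p in inputs])

same_for_all_scales = all(out == base for out in scaled_outputs)
print(n_at_origin, base, same_for_all_scales)
```

Note that the invariance holds for positive scale factors only; a negative factor flips the direction of W and therefore swaps the two regions.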

11 Constructing Learning Rule Training begins by assigning some initial values to the network parameters. When $p_1$ is presented, the network does not return the correct value: a = 0 but $t_1$ = 1. The initial weight vector results in a decision boundary that incorrectly classifies the vector $p_1$.

12 Constructing Learning Rule One approach would be to set W equal to $p_1$, so that $p_1$ is classified properly in the future. Unfortunately, it is easy to construct a problem for which this rule cannot find a solution.

13 Constructing Learning Rule Another approach would be to add $p_1$ to W. Adding $p_1$ to W makes W point more in the direction of $p_1$.

14 Constructing Learning Rule The next input vector is $p_2$. A class 0 vector was misclassified as a 1: a = 1 and $t_2$ = 0.

15 Constructing Learning Rule Present the third vector, $p_3$. A class 0 vector was misclassified as a 1: a = 1 and $t_3$ = 0.

16 Constructing Learning Rule If we now present any of the input vectors to the neuron, it will output the correct class for that input vector. The perceptron has finally learned to classify the three vectors properly. The third and final rule: if the output is already correct (t = a), W is left unchanged. Training sequence: $p_1$, $p_2$, $p_3$ (one iteration).
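The walk-through on slides 11 to 16 can be replayed in code. This is a sketch under stated assumptions: the input vectors and the initial weight vector are assumed values (they are not recoverable from the slide text), the targets 1, 0, 0 follow the slides, and `apply_rules` is a helper name introduced here for the three rules (add p, subtract p, leave W unchanged).

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def apply_rules(w, p, t):
    """Apply the three rules to a bias-free, single-neuron perceptron."""
    a = hardlim(sum(wi * pi for wi, pi in zip(w, p)))
    if t == 1 and a == 0:      # class 1 vector classified as 0: add p to W
        return [wi + pi for wi, pi in zip(w, p)]
    if t == 0 and a == 1:      # class 0 vector classified as 1: subtract p from W
        return [wi - pi for wi, pi in zip(w, p)]
    return list(w)             # output already correct: leave W unchanged

# Assumed training set (p, t) and assumed initial weights.
training_set = [((1, 2), 1), ((-1, 2), 0), ((0, -1), 0)]
w = [1.0, -0.8]

history = []
for p, t in training_set:      # one iteration: p1, p2, p3
    w = apply_rules(w, p, t)
    history.append(list(w))

final_outputs = [hardlim(sum(wi * pi for wi, pi in zip(w, p)))
                 for p, _ in training_set]
print(history)
print(final_outputs)
```

With these assumed values every one of the three presentations triggers an update, and after a single pass all three vectors are classified correctly.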

17 Unified Learning Rule Perceptron error: e = t – a
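The slide's equations did not survive extraction. In the standard formulation of the perceptron rule, which is assumed here, the three cases collapse into a single update once the error e is defined, and the bias is treated as a weight whose input is always 1:

```latex
\begin{aligned}
e &= t - a \\
\mathbf{w}^{new} &= \mathbf{w}^{old} + e\,\mathbf{p} \\
b^{new} &= b^{old} + e
\end{aligned}
```

Checking the cases: e = 1 adds p to the weights, e = -1 subtracts p, and e = 0 leaves the weights unchanged.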

18 Training Multiple-Neuron Perceptron Learning rate :
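For several neurons, the same update is applied to each row of the weight matrix with that neuron's own error. The sketch below assumes this row-wise form and a hypothetical learning-rate parameter `alpha` (with `alpha = 1` giving the basic rule); the function name `update` and the example values are illustrative, not taken from the slides.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def update(W, b, p, t, alpha=1.0):
    """One perceptron update for a layer of neurons.

    W holds one row of weights per neuron, b one bias per neuron,
    p is the input vector and t the target vector.
    alpha is a hypothetical learning-rate parameter.
    """
    a = [hardlim(sum(wij * pj for wij, pj in zip(row, p)) + bi)
         for row, bi in zip(W, b)]
    e = [ti - ai for ti, ai in zip(t, a)]
    # Row i of W moves by alpha * e_i * p; bias i moves by alpha * e_i.
    W_new = [[wij + alpha * ei * pj for wij, pj in zip(row, p)]
             for row, ei in zip(W, e)]
    b_new = [bi + alpha * ei for bi, ei in zip(b, e)]
    return W_new, b_new, e

# Illustrative values: two neurons, two inputs.
W1, b1, e1 = update([[1, 0], [0, 1]], [1, 1], p=(1, 1), t=(0, 0))
print(e1, W1, b1)
```

Both neurons output 1 for this input while both targets are 0, so each row has the input vector subtracted from it and each bias decreases by 1.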

19 Apple/Orange Recognition Problem

20 Limitations The perceptron can be used to classify input vectors that can be separated by a linear boundary, as in the AND gate example. Linearly separable: AND, OR, and NOT gates. Not linearly separable: e.g., the XOR gate.
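The limitation can be observed by running the learning rule on both gates. This is a minimal sketch: the zero initial weights, the epoch cap of 100, and the function name `epochs_to_converge` are assumptions made for the example. A pass with no errors would mean a separating line had been found, which cannot happen for XOR.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def epochs_to_converge(pairs, max_epochs=100):
    """Train a two-input perceptron with a bias, starting from zero weights.

    Returns the number of the first epoch with no misclassification,
    or None if no such epoch occurs within max_epochs.
    """
    w, b = [0, 0], 0
    for epoch in range(1, max_epochs + 1):
        errors = 0
        for p, t in pairs:
            a = hardlim(w[0] * p[0] + w[1] * p[1] + b)
            e = t - a
            if e != 0:
                errors += 1
                w = [w[0] + e * p[0], w[1] + e * p[1]]
                b += e
        if errors == 0:
            return epoch
    return None

and_gate = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
xor_gate = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_result = epochs_to_converge(and_gate)
xor_result = epochs_to_converge(xor_gate)
print(and_result, xor_result)
```

Raising `max_epochs` does not help for XOR: the weights keep cycling because no single line separates the two classes.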

21 Solved Problem P4.3 Design a perceptron network to solve the following problem. Class 1, t = (0, 0): (1, 1), (1, 2). Class 2, t = (0, 1): (2, -1), (2, 0). Class 3, t = (1, 0): (-1, 2), (-2, 1). Class 4, t = (1, 1): (-1, -1), (-2, -2). A two-neuron perceptron creates two decision boundaries.

22 Solution of P4.3 [Figure: two decision boundaries separating Classes 1 to 4, and the network diagram.] Network diagram values: weights W = [-3 -1; 1 -2], biases b = [1; 0].
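A candidate solution can be checked by evaluating the two-neuron network on every point. In this sketch the point coordinates, the weight matrix, and the biases are reconstructed values (the minus signs and the diagram layout were damaged in extraction), so they should be read as assumptions; `forward` is a helper name introduced here.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def forward(W, b, p):
    """Output vector of a layer of perceptron neurons."""
    return [hardlim(sum(wij * pj for wij, pj in zip(row, p)) + bi)
            for row, bi in zip(W, b)]

# Assumed (reconstructed) data: two points per class, with target vectors.
data = [
    ((1, 1), [0, 0]), ((1, 2), [0, 0]),      # Class 1
    ((2, -1), [0, 1]), ((2, 0), [0, 1]),     # Class 2
    ((-1, 2), [1, 0]), ((-2, 1), [1, 0]),    # Class 3
    ((-1, -1), [1, 1]), ((-2, -2), [1, 1]),  # Class 4
]

# Assumed (reconstructed) design: one row of W and one bias per neuron.
W = [[-3, -1], [1, -2]]
b = [1, 0]

results = [forward(W, b, p) == t for p, t in data]
all_correct = all(results)
print(all_correct)
```

The first neuron separates Classes 1 and 2 from Classes 3 and 4, and the second separates Classes 1 and 3 from Classes 2 and 4, which is why two boundaries are enough for four classes.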

23 Solved Problem P4.5 Train a perceptron network to solve problem P4.3 using the perceptron learning rule.

24 Solution of P4.5

25 Solution of P4.5

26 Solution of P4.5 [Figure: network diagram after training.] Network diagram values: weights W = [-2 0; 0 -2], biases b = [-1; 0].
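The training run can be sketched as a loop that repeats passes over the data until one pass produces no errors. The initial values (identity weight matrix, biases of 1), the point coordinates, and the presentation order are assumptions, since the worked steps on slides 24 and 25 did not survive extraction; `train` and `forward` are helper names introduced here.

```python
def hardlim(n):
    """Hard-limit transfer function: 1 if n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0

def forward(W, b, p):
    """Output vector of a layer of perceptron neurons."""
    return [hardlim(sum(wij * pj for wij, pj in zip(row, p)) + bi)
            for row, bi in zip(W, b)]

def train(W, b, data, max_epochs=50):
    """Apply W <- W + e p^T and b <- b + e until a full pass has no errors."""
    W = [list(row) for row in W]
    b = list(b)
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for p, t in data:
            a = forward(W, b, p)
            e = [ti - ai for ti, ai in zip(t, a)]
            if any(e):
                mistakes += 1
                for i, ei in enumerate(e):
                    W[i] = [wij + ei * pj for wij, pj in zip(W[i], p)]
                    b[i] += ei
        if mistakes == 0:
            return W, b, epoch
    return W, b, None

# Assumed (reconstructed) data for problem P4.3.
data = [
    ((1, 1), [0, 0]), ((1, 2), [0, 0]),      # Class 1
    ((2, -1), [0, 1]), ((2, 0), [0, 1]),     # Class 2
    ((-1, 2), [1, 0]), ((-2, 1), [1, 0]),    # Class 3
    ((-1, -1), [1, 1]), ((-2, -2), [1, 1]),  # Class 4
]

# Assumed initial parameters.
W_final, b_final, epochs = train([[1, 0], [0, 1]], [1, 1], data)
print(W_final, b_final, epochs)
```

Under these assumptions the loop stops in the third pass with W = [-2 0; 0 -2] and b = [-1; 0]. A different starting point or presentation order would generally end at a different, equally valid set of weights.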

- Slides: 26