1 Optimization methods
Aleksey Minin, Saint-Petersburg State University
Student of the ACOPhys master program (10th semester)
Joint Advanced Students School, 12/1/2020

2 What is optimization?

3 Content:
1. Applications of optimization
2. Global optimization
3. Local optimization
4. Discrete optimization
5. Constrained optimization
6. Real application: Bounded Derivative Network

4 Applications of optimization
• Advanced engineering design
• Biotechnology
• Data analysis
• Environmental management
• Financial planning
• Process control
• Scientific modeling, etc.

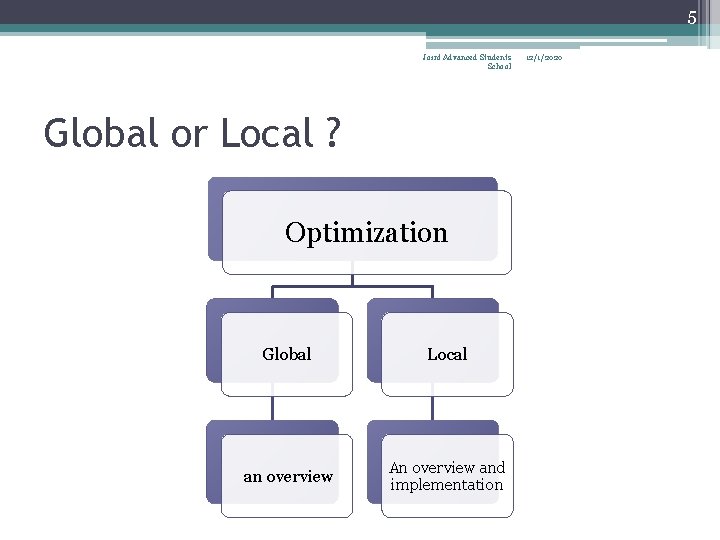

5 Global or local optimization?
• Global: an overview
• Local: an overview and implementation

6 What is global optimization?
• The objective of global optimization is to find the globally best solution of (possibly nonlinear) models in the possible or known presence of multiple local optima.

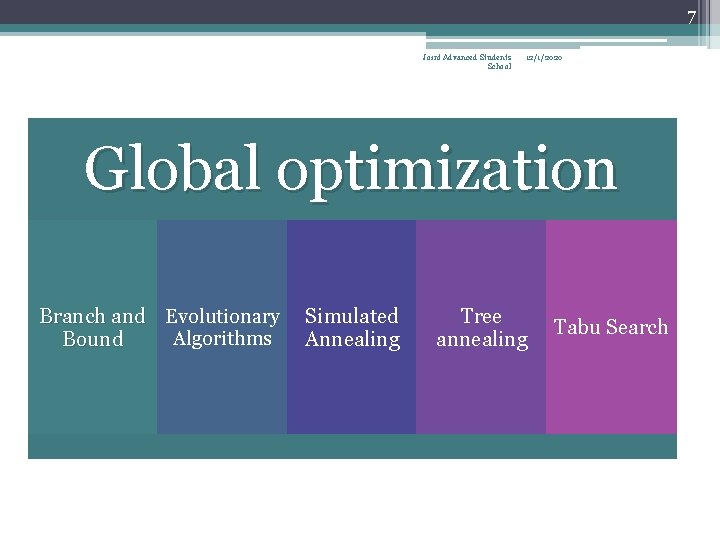

7 Global optimization methods
• Branch and bound
• Evolutionary algorithms
• Simulated annealing
• Tree annealing
• Tabu search

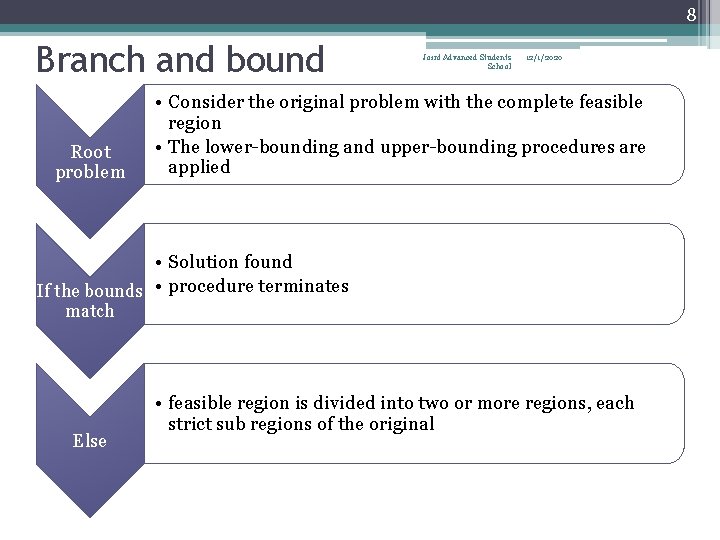

8 Branch and bound
Root problem:
• Consider the original problem with the complete feasible region.
• Apply the lower-bounding and upper-bounding procedures.
• If the bounds match, the solution is found and the procedure terminates.
• Otherwise, divide the feasible region into two or more regions, each a strict subregion of the original, and repeat the procedure on each of them.

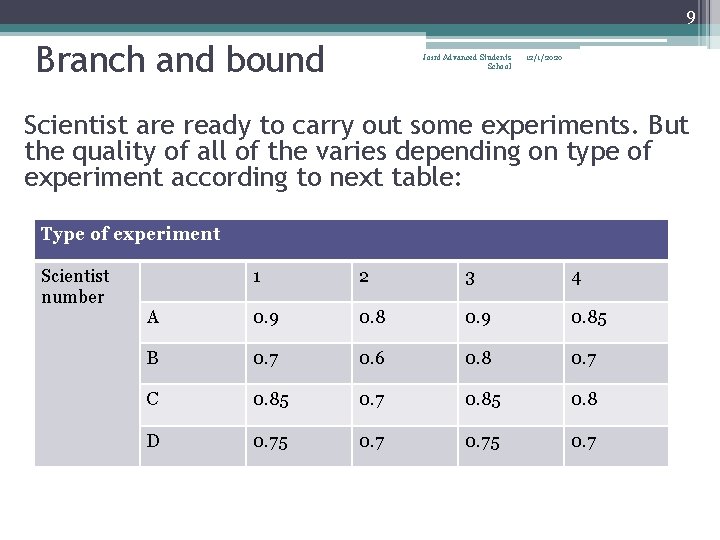

9 Branch and bound
Scientists are ready to carry out some experiments, but the quality of each scientist's work varies with the type of experiment, according to the following table (cells lost in extraction are marked "…"):

| Type of experiment | Scientist 1 | Scientist 2 | Scientist 3 | Scientist 4 |
|---|---|---|---|---|
| A | 0.9 | 0.85 | … | … |
| B | 0.7 | 0.6 | 0.8 | 0.7 |
| C | 0.85 | 0.7 | 0.85 | 0.8 |
| D | 0.75 | 0.7 | … | … |

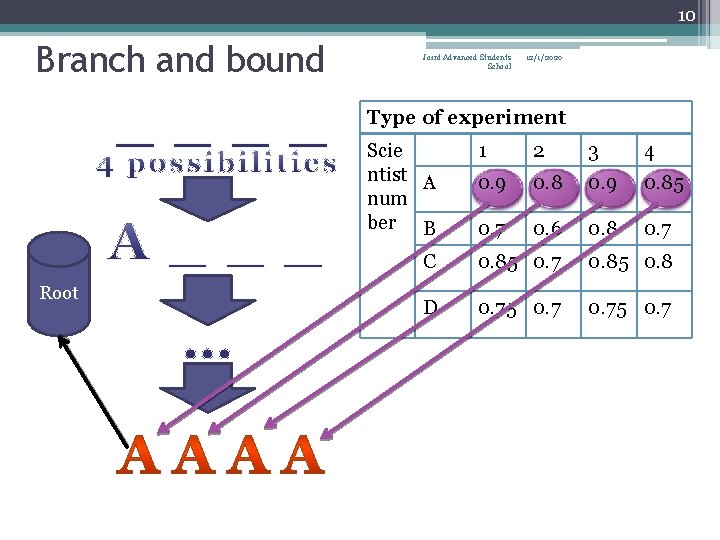

10 Branch and bound
Search tree: Root

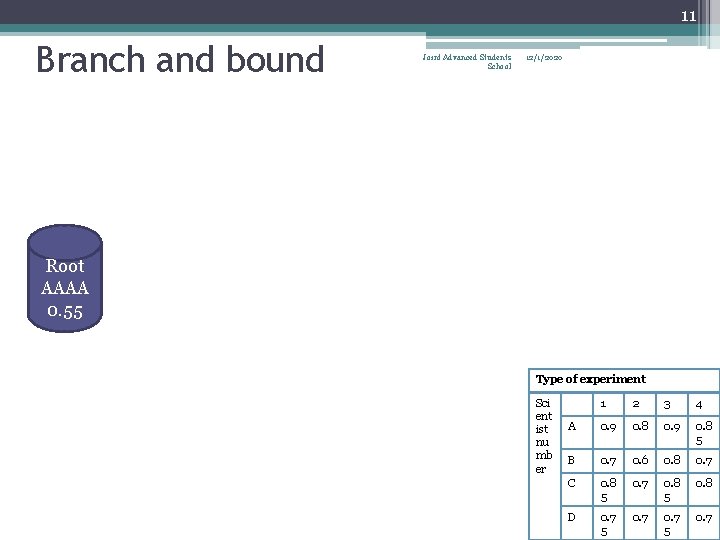

11 Branch and bound
Search tree:
• Root: AAAA, 0.55

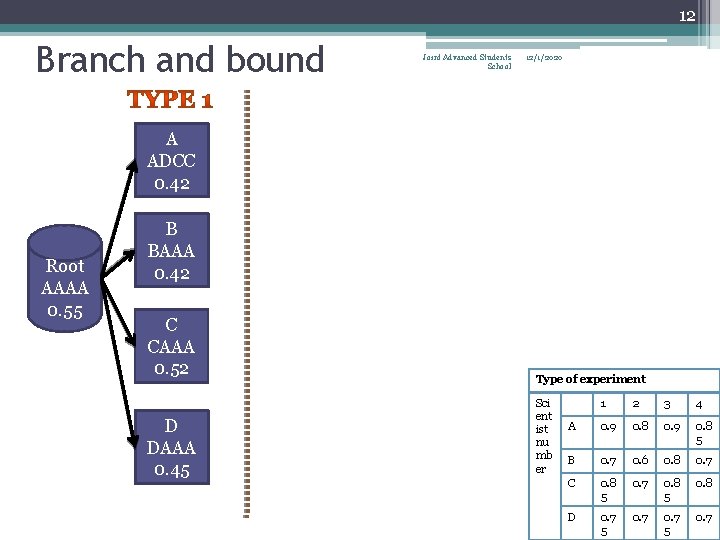

12 Branch and bound
Search tree:
• Root: AAAA, 0.55
  ◦ A: ADCC, 0.42
  ◦ B: BAAA, 0.42
  ◦ C: CAAA, 0.52
  ◦ D: DAAA, 0.45

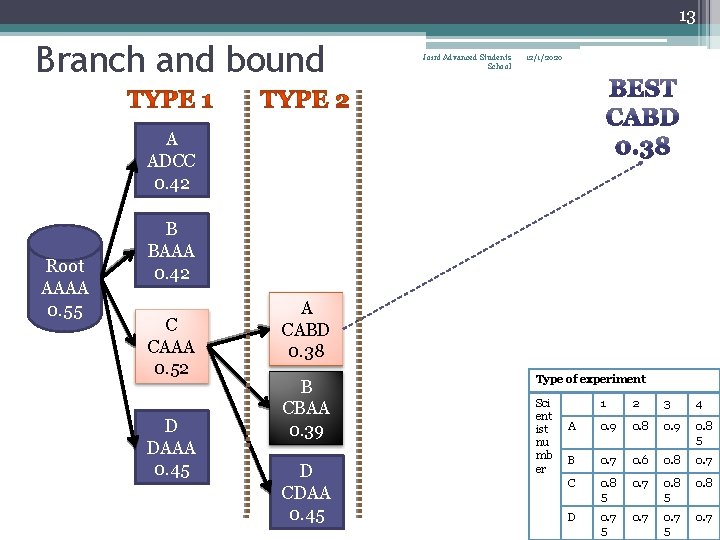

13 Branch and bound
Search tree:
• Root: AAAA, 0.55
  ◦ A: ADCC, 0.42
  ◦ B: BAAA, 0.42
  ◦ C: CAAA, 0.52
    ◦ A: CABD, 0.38
    ◦ B: CBAA, 0.39
    ◦ D: CDAA, 0.45
  ◦ D: DAAA, 0.45

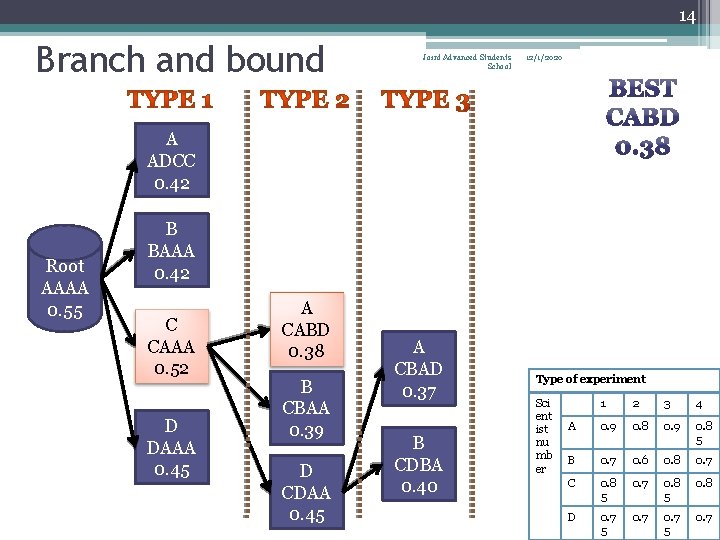

14 Branch and bound
Search tree:
• Root: AAAA, 0.55
  ◦ A: ADCC, 0.42
  ◦ B: BAAA, 0.42
  ◦ C: CAAA, 0.52
    ◦ A: CABD, 0.38
    ◦ B: CBAA, 0.39
    ◦ D: CDAA, 0.45
      ◦ A: CBAD, 0.37
      ◦ B: CDBA, 0.40
  ◦ D: DAAA, 0.45

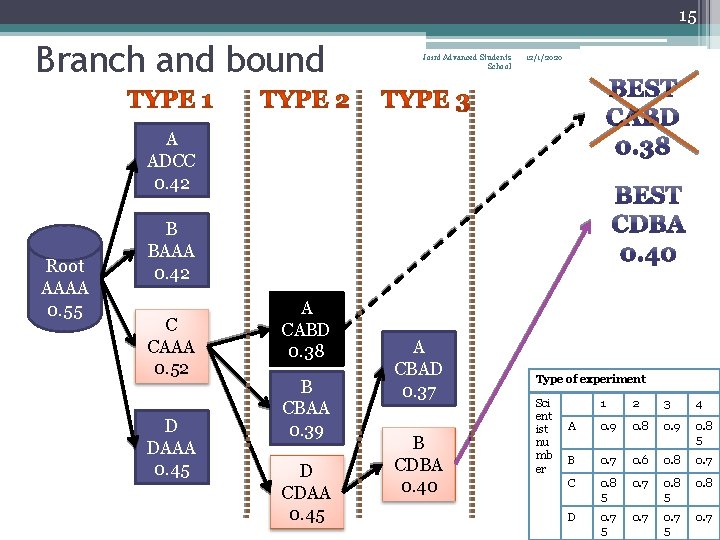

15 Branch and bound

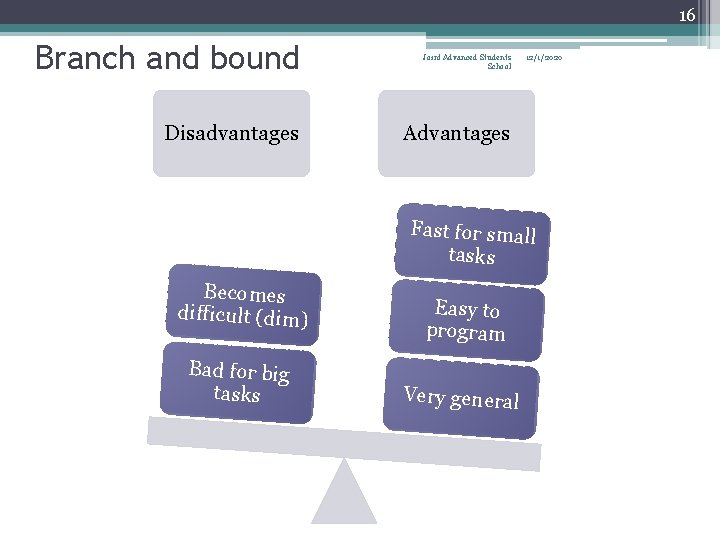

16 Branch and bound
Advantages:
• Fast for small tasks
• Easy to program
• Very general
Disadvantages:
• Becomes difficult as the dimension grows
• Bad for big tasks
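The search tree on slides 10-14 can be reproduced with a short best-first branch and bound. This is a minimal Python sketch, assuming (as the node values suggest, e.g. ADCC: 0.9 · 0.7 · 0.85 · 0.8 ≈ 0.428, shown as 0.42) that the value of a plan is the product of the individual qualities and that every scientist gets a different experiment. The upper bound is the same relaxation the slides use: scientists not yet fixed may all take their best remaining experiment, even the same one. Because the table on slide 9 is incomplete, the matrix in the usage lines is hypothetical, and `branch_and_bound` is an illustrative name.

```python
import heapq
from math import prod


def branch_and_bound(q):
    """Best-first branch and bound for the assignment problem.

    q[e][s] is the quality of experiment e done by scientist s (square matrix).
    Each scientist gets a different experiment; the product of the chosen
    qualities is maximized. Returns (value, assignment) with assignment[s]
    the experiment index given to scientist s.
    """
    n = len(q)

    def upper_bound(assigned):
        # Exact product for the scientists that are already fixed ...
        value = prod(q[e][s] for s, e in enumerate(assigned))
        used = set(assigned)
        # ... times a relaxation for the rest: every free scientist takes the
        # best experiment not used by the fixed ones, even if two free
        # scientists pick the same one (like AAAA at the root).
        for s in range(len(assigned), n):
            value *= max(q[e][s] for e in range(n) if e not in used)
        return value

    best_value, best_assignment = 0.0, None
    heap = [(-upper_bound(()), ())]  # heapq is a min-heap, so bounds are negated
    while heap:
        neg_bound, assigned = heapq.heappop(heap)
        if -neg_bound <= best_value:
            continue  # prune: this subtree cannot beat the incumbent
        if len(assigned) == n:
            # For a complete assignment the bound is the exact value.
            best_value, best_assignment = -neg_bound, assigned
            continue
        for e in range(n):  # branch on the experiment of the next scientist
            if e not in assigned:
                child = assigned + (e,)
                bound = upper_bound(child)
                if bound > best_value:
                    heapq.heappush(heap, (-bound, child))
    return best_value, best_assignment


# Hypothetical 3 x 3 quality matrix (rows: experiments, columns: scientists).
quality = [[0.9, 0.5, 0.4],
           [0.6, 0.8, 0.3],
           [0.7, 0.6, 0.9]]
print(branch_and_bound(quality))
```

Because nodes are expanded in order of their bound, the first complete assignment taken off the heap is already optimal; every node left afterwards has a bound no larger than it and is pruned.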

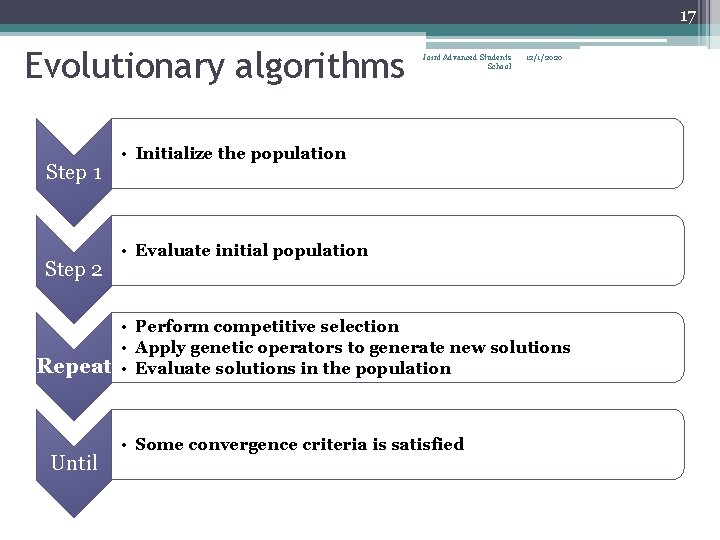

17 Evolutionary algorithms
Step 1: Initialize the population.
Step 2: Evaluate the initial population.
Repeat:
• Perform competitive selection.
• Apply genetic operators to generate new solutions.
• Evaluate the solutions in the population.
Until some convergence criterion is satisfied.
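The loop above maps onto a few lines of Python. This is a minimal real-coded sketch, not a specific published algorithm: tournament selection stands in for "competitive selection", arithmetic crossover plus Gaussian mutation are the assumed genetic operators, and a fixed generation budget replaces the convergence criterion. `evolve` and its parameters are hypothetical names.

```python
import random


def evolve(f, dim, bounds, pop_size=40, generations=200, mutation_sigma=0.1, seed=0):
    """Minimize f over the box [lo, hi]^dim with a simple genetic algorithm."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Step 1: initialize the population uniformly at random inside the box.
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    # Step 2: evaluate the initial population.
    fitness = [f(x) for x in pop]

    def select():
        # Competitive (tournament) selection: the fitter of two random individuals wins.
        i, j = rng.randrange(pop_size), rng.randrange(pop_size)
        return pop[i] if fitness[i] < fitness[j] else pop[j]

    for _ in range(generations):  # stop criterion: a fixed generation budget
        elite = min(range(pop_size), key=fitness.__getitem__)
        children = [pop[elite][:]]  # elitism: carry the best individual over unchanged
        while len(children) < pop_size:
            a, b = select(), select()
            w = rng.random()
            # Genetic operators: arithmetic crossover plus Gaussian mutation,
            # clipped back into the search box.
            children.append([
                min(hi, max(lo, w * ai + (1 - w) * bi + rng.gauss(0.0, mutation_sigma)))
                for ai, bi in zip(a, b)
            ])
        pop = children
        fitness = [f(x) for x in pop]  # evaluate the new solutions
    best = min(range(pop_size), key=fitness.__getitem__)
    return pop[best], fitness[best]


best_x, best_f = evolve(lambda v: sum(t * t for t in v), dim=2, bounds=(-5.0, 5.0))
print(best_x, best_f)
```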

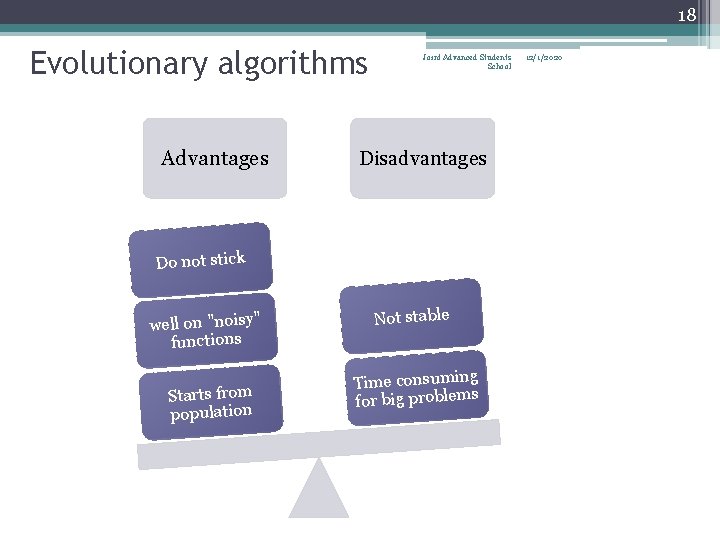

18 Evolutionary algorithms
Advantages:
• Do not get stuck; work well on "noisy" functions
• Start from a population
Disadvantages:
• Not stable
• Time-consuming for big problems

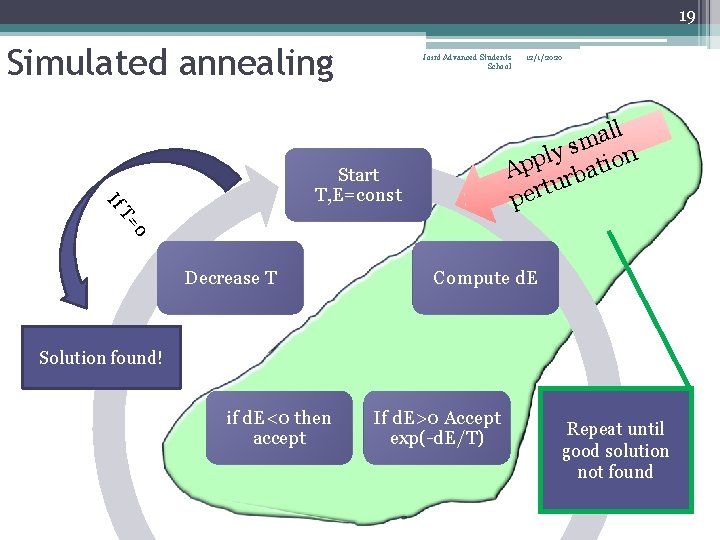

19 Simulated annealing
• Start: set T = T_0 and E = const.
• Apply a small perturbation and compute dE.
• If dE < 0, accept the perturbation.
• If dE > 0, accept it with probability exp(-dE/T).
• Decrease T.
• Repeat until a good solution is found or T reaches 0.
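A one-dimensional sketch of the scheme above: perturb, compute dE, accept downhill moves, accept uphill moves with probability exp(-dE/T), and lower T. The uniform perturbation of width `step`, the geometric cooling factor and the stopping temperature `t_min` are assumptions of this sketch, as is the name `simulated_annealing`.

```python
import math
import random


def simulated_annealing(f, x0, t0=1.0, cooling=0.995, t_min=1e-4, step=0.5, seed=0):
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best, f_best = x, fx
    t = t0
    while t > t_min:
        # Apply a small random perturbation and compute the energy change dE.
        y = x + rng.uniform(-step, step)
        fy = f(y)
        d_e = fy - fx
        # Metropolis rule: always accept dE < 0, accept dE > 0 with prob exp(-dE/T).
        if d_e < 0 or rng.random() < math.exp(-d_e / t):
            x, fx = y, fy
            if fx < f_best:
                best, f_best = x, fx
        t *= cooling  # decrease T (geometric cooling schedule, an assumption)
    return best, f_best


x_best, f_best = simulated_annealing(lambda x: (x - 1.0) ** 2, x0=5.0)
print(x_best, f_best)
```

The open questions listed on slide 21 ("How to define dT?", "What is T?") correspond to exactly the `t0`, `cooling` and `t_min` choices hard-coded here.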

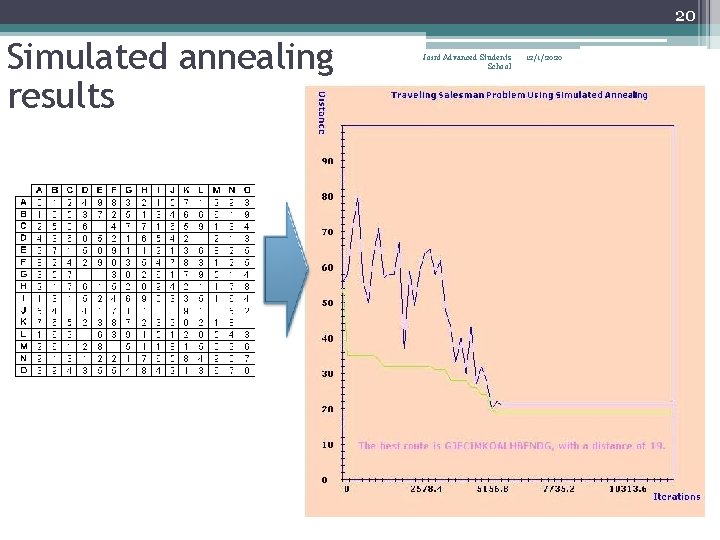

20 Simulated annealing results

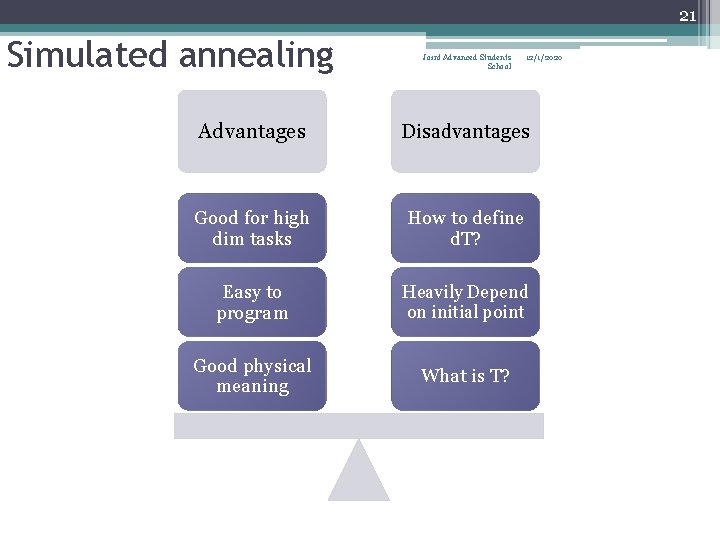

21 Simulated annealing
Advantages:
• Good for high-dimensional tasks
• Easy to program
• Good physical meaning
Disadvantages:
• How to choose dT?
• Depends heavily on the initial point
• What is T?

![22 Tree annealing](http://slidetodoc.com/presentation_image_h/cbe71030301ed3c60b5321ca60a7155f/image-22.jpg)

22 Tree annealing (developed by Bilbro and Snyder [1991])
1. Randomly choose an initial point x over the search interval S_0.
2. Randomly travel down the tree to an arbitrary terminal node i, and generate a candidate point y over the subspace defined by S_i.
3. If f(y) < f(x), replace x with y and go to step 5.
4. Compute P = exp(-(f(y) - f(x))/T). If P > R, where R is a random number uniformly distributed between 0 and 1, replace x with y.
5. If y replaced x, decrease T slightly and update the tree. Repeat from step 2 until T < T_min.
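The five steps above can be sketched for a function of one variable. This is a simplified illustration, not Bilbro and Snyder's exact method: the tree is a binary split of the interval, a child is chosen during the descent with probability proportional to the number of accepted points it holds plus one, and the leaf that receives an accepted point is split in half. These rules, the cooling factor, the iteration cap and the names `Node` and `tree_annealing` are assumptions.

```python
import math
import random


class Node:
    """Node of the search tree; it covers the interval [lo, hi]."""

    def __init__(self, lo, hi):
        self.lo, self.hi = lo, hi
        self.count = 0        # accepted points that landed in this subtree
        self.children = None  # [left, right] once the node has been split


def tree_annealing(f, lo, hi, t0=1.0, cooling=0.99, t_min=1e-3,
                   min_width=1e-6, max_iters=100_000, seed=0):
    rng = random.Random(seed)
    root = Node(lo, hi)
    x = rng.uniform(lo, hi)  # step 1: random initial point over S_0
    fx = f(x)
    best_x, best_f = x, fx
    t = t0
    for _ in range(max_iters):  # guard in case T stops decreasing
        if t < t_min:
            break
        # Step 2: travel down to a terminal node; a child is chosen with
        # probability proportional to (accepted points in it + 1).
        node = root
        while node.children:
            left, right = node.children
            p_left = (left.count + 1) / (left.count + right.count + 2)
            node = left if rng.random() < p_left else right
        y = rng.uniform(node.lo, node.hi)  # candidate point over S_i
        fy = f(y)
        # Steps 3-4: accept an improvement, otherwise accept if exp(-(f(y)-f(x))/T) > R.
        if fy < fx or math.exp(-(fy - fx) / t) > rng.random():
            x, fx = y, fy
            if fx < best_f:
                best_x, best_f = x, fx
            # Step 5: update counts along the path to the leaf holding y,
            # split that leaf in two, and decrease T slightly.
            node = root
            while True:
                node.count += 1
                if node.children is None:
                    break
                left, right = node.children
                node = left if y < left.hi else right
            if node.hi - node.lo > min_width:
                mid = 0.5 * (node.lo + node.hi)
                node.children = [Node(node.lo, mid), Node(mid, node.hi)]
                node.children[0 if y < mid else 1].count = 1
            t *= cooling
    return best_x, best_f


print(tree_annealing(lambda x: (x - 0.3) ** 2, -2.0, 2.0))
```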

![23 Tree annealing](http://slidetodoc.com/presentation_image_h/cbe71030301ed3c60b5321ca60a7155f/image-23.jpg)

23 Tree annealing (developed by Bilbro and Snyder [1991])
Advantages:
• Handles continuous variables
• Good for nonconvex problems
Disadvantages:
• Gives just the region of the solution
• Slow convergence
• No guarantee of convergence

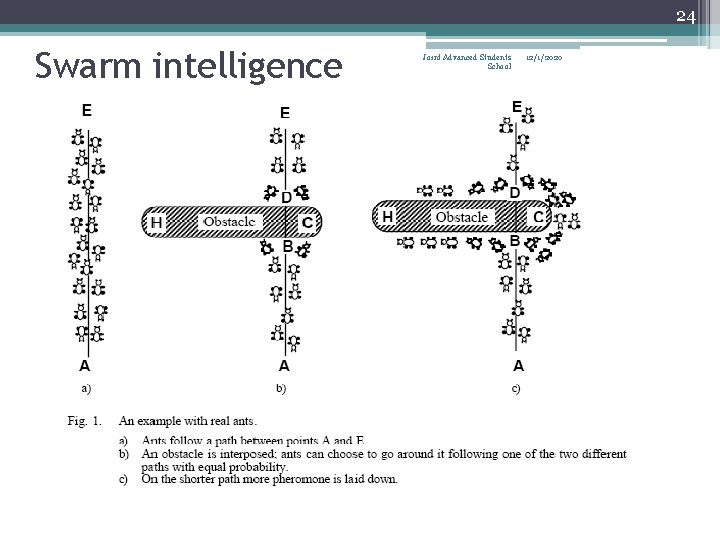

24 Swarm intelligence

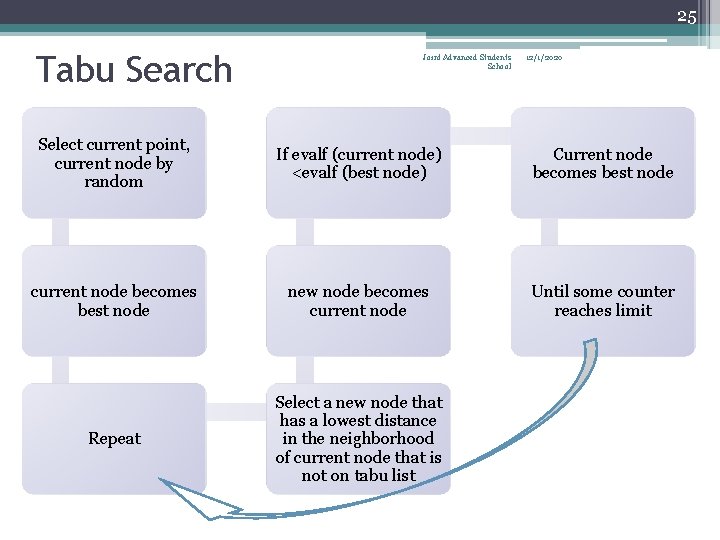

25 Tabu search
Select the current node at random; the current node becomes the best node.
Repeat:
• Select the new node that has the lowest distance in the neighborhood of the current node and is not on the tabu list.
• The new node becomes the current node and is put on the tabu list.
• If eval(current node) < eval(best node), the current node becomes the best node.
Until some counter reaches its limit.
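A minimal Python sketch of the loop above, assuming a fixed-length tabu list of recently visited nodes, a start node passed in instead of drawn at random, and a toy cost landscape on a line of nodes (all hypothetical). The search moves to the best non-tabu neighbor even when it is worse, so it leaves the local minimum at node 3 and reaches the global minimum at node 15.

```python
from collections import deque


def tabu_search(start, neighbors, cost, tabu_size=5, max_iters=100):
    """Minimize cost over a graph given by neighbors(node) -> iterable of nodes."""
    current = best = start
    tabu = deque([start], maxlen=tabu_size)  # short-term memory of visited nodes
    for _ in range(max_iters):  # "until some counter reaches its limit"
        candidates = [n for n in neighbors(current) if n not in tabu]
        if not candidates:
            break  # every neighbor is tabu: nowhere to go
        # Move to the best non-tabu neighbor, even if it is worse than current.
        current = min(candidates, key=cost)
        tabu.append(current)  # the oldest entry drops out automatically
        if cost(current) < cost(best):
            best = current
    return best


# Toy landscape on nodes 0..20: a local minimum at 3, the global one at 15.
COSTS = [5, 4, 3.5, 1, 3, 4, 5, 6, 5, 4, 3, 2, 1, 0.5, 0.2, 0, 1, 2, 3, 4, 5]


def line_neighbors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(COSTS)]


print(tabu_search(3, line_neighbors, COSTS.__getitem__))  # prints 15
```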

26 Tabu search implementation (figure: search graph and the tabu list)

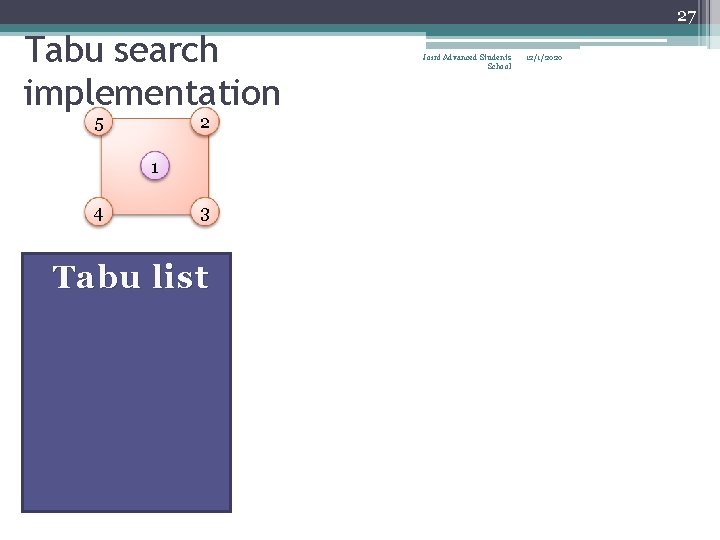

27 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

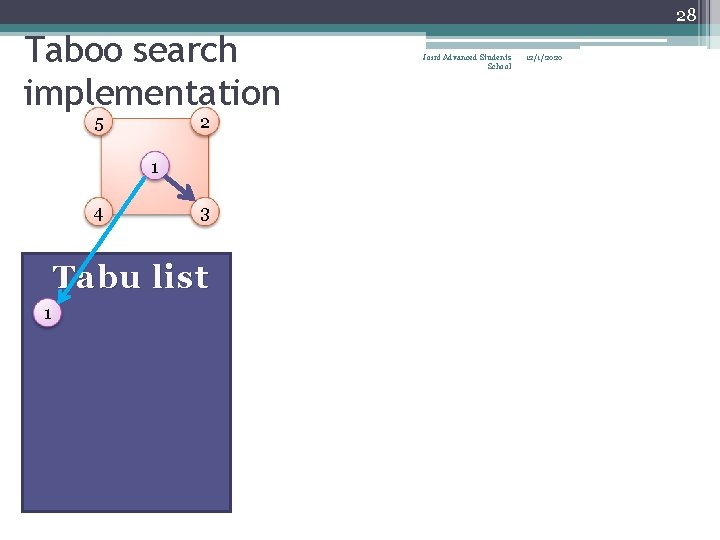

28 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

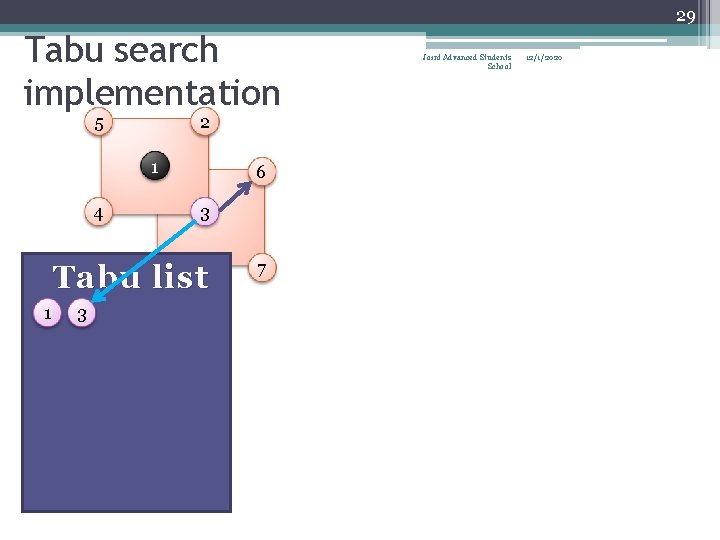

29 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

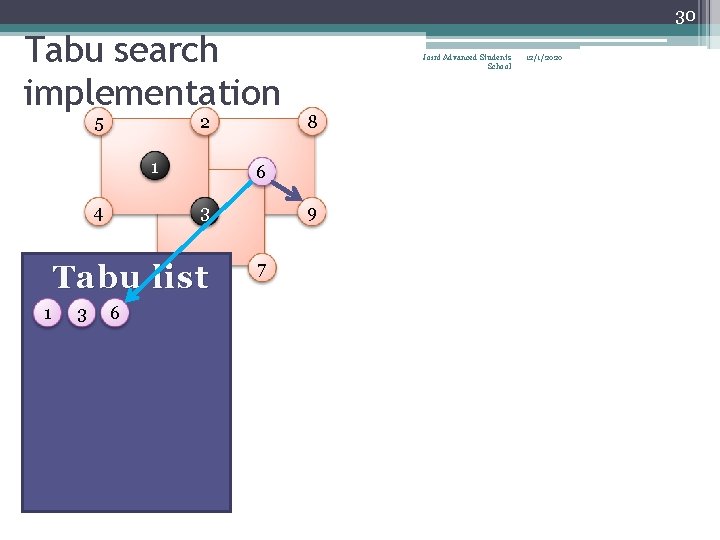

30 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

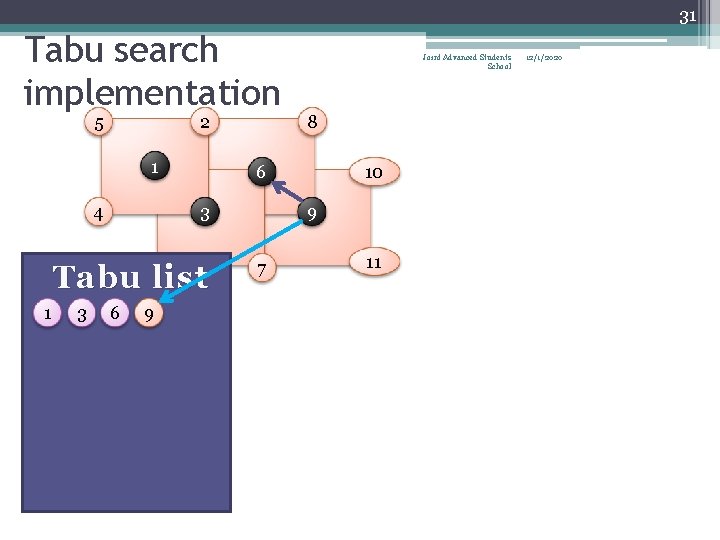

31 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

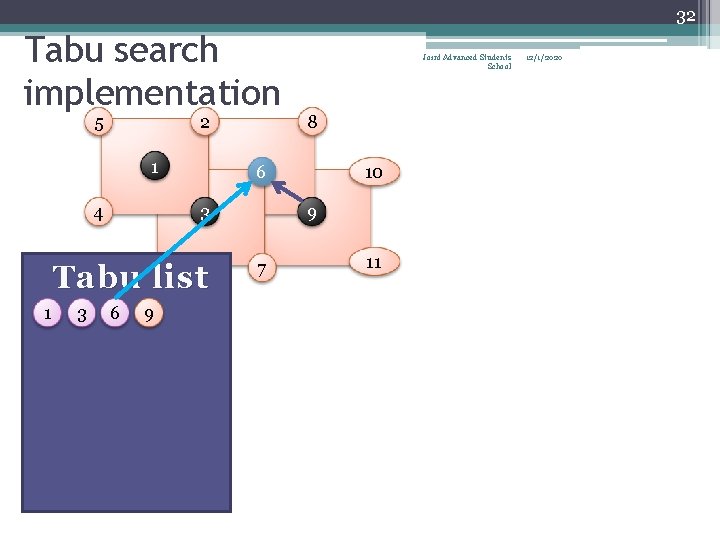

32 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

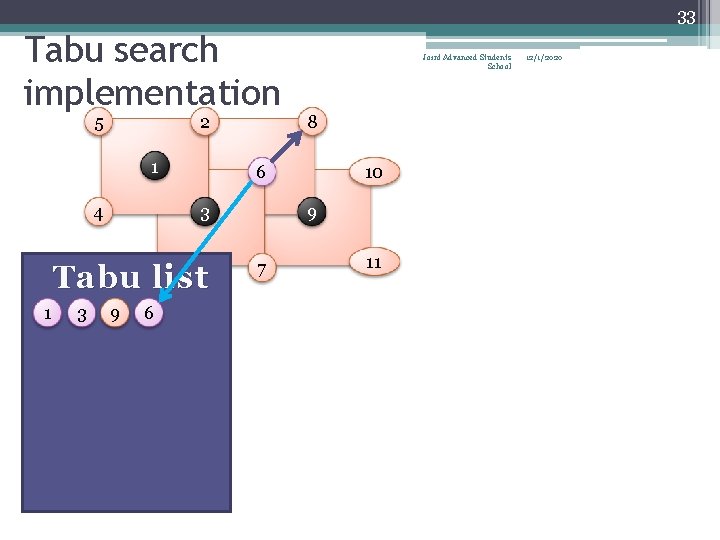

33 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

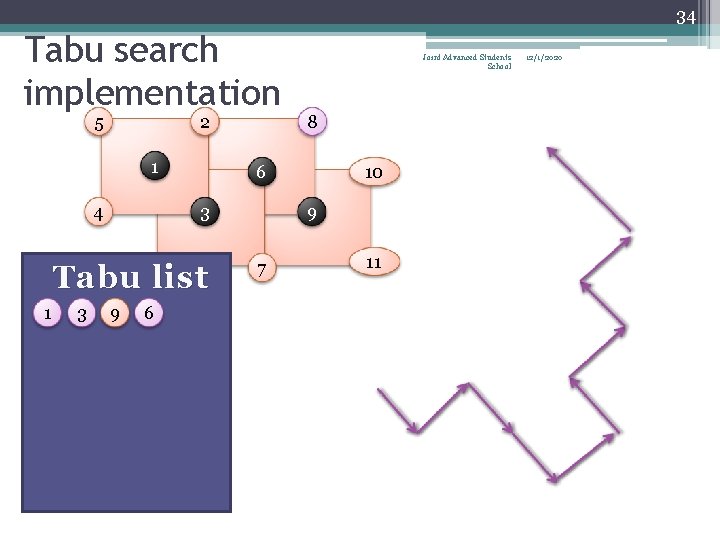

34 Tabu search implementation (figure: search graph with numbered nodes and the tabu list)

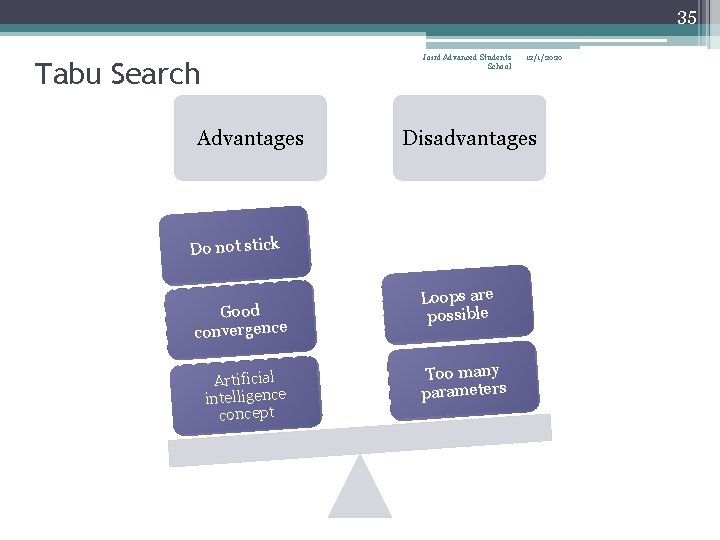

35 Tabu search
Advantages:
• Does not get stuck in local optima
• Good convergence
• Artificial intelligence concept
Disadvantages:
• Loops are possible
• Too many parameters

36 What is local optimization?
• The term LOCAL refers both to the fact that only information about the function from the neighborhood of the current approximation is used in updating the approximation, and to the fact that such methods are usually expected to converge to whichever local extremum is closest to the starting approximation.
• The global structure of the objective function is unknown to a local method.

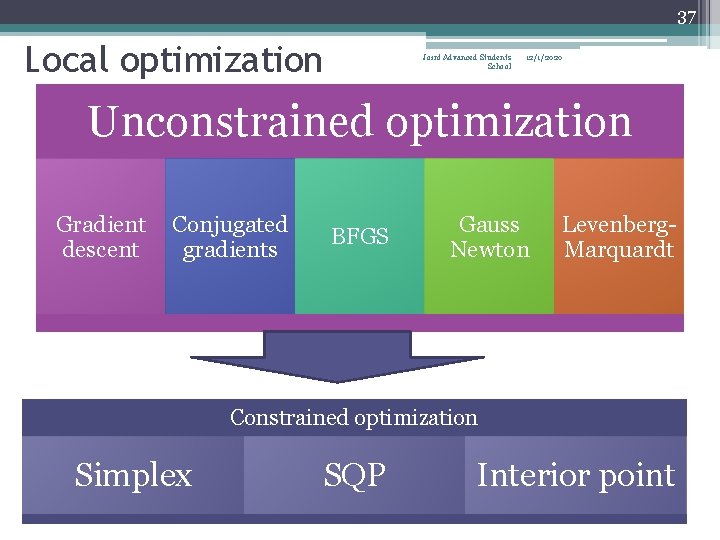

37 Local optimization
Unconstrained optimization:
• Gradient descent
• Conjugate gradients
• BFGS
• Gauss-Newton
• Levenberg-Marquardt
Constrained optimization:
• Simplex
• SQP
• Interior point

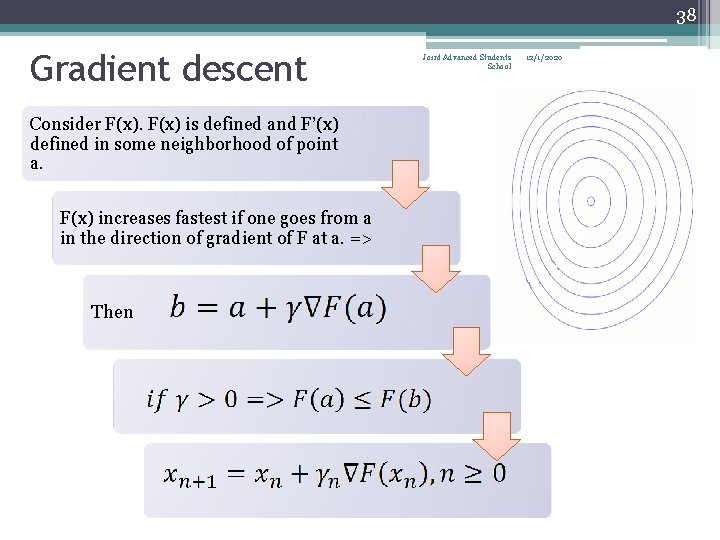

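38 Gradient descent
Suppose $F(x)$ is defined and differentiable in some neighborhood of a point $a$. Then $F(x)$ increases fastest if one goes from $a$ in the direction of the gradient $\nabla F(a)$, and therefore decreases fastest in the direction of the negative gradient $-\nabla F(a)$. It follows that if
$$b = a - \gamma \nabla F(a)$$
for a small enough step $\gamma > 0$, then $F(b) \le F(a)$.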

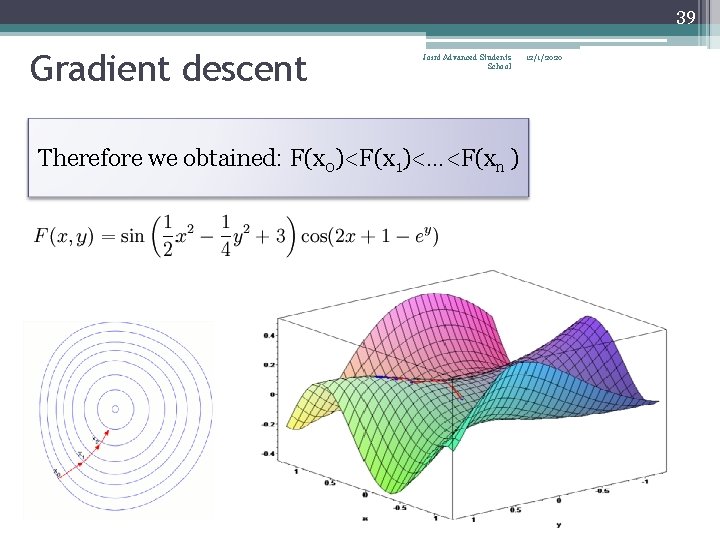

39 Gradient descent
Starting from an initial guess $x_0$ and iterating $x_{n+1} = x_n - \gamma_n \nabla F(x_n)$ with small enough steps $\gamma_n > 0$, we obtain
$$F(x_0) \ge F(x_1) \ge \dots \ge F(x_n).$$
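The update rule $x_{n+1} = x_n - \gamma \nabla F(x_n)$ in code, as a minimal sketch with a fixed step $\gamma$ (an assumption; line searches are common in practice) and a hypothetical quadratic test function $F(x, y) = (x - 1)^2 + 2(y + 2)^2$.

```python
def gradient_descent(grad, x0, gamma=0.1, tol=1e-8, max_iters=10_000):
    """Iterate x_{n+1} = x_n - gamma * grad(x_n) with a fixed step gamma."""
    x = list(x0)
    for _ in range(max_iters):
        g = grad(x)
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break  # (almost) stationary point reached
        x = [xi - gamma * gi for xi, gi in zip(x, g)]
    return x


# F(x, y) = (x - 1)^2 + 2 (y + 2)^2 is minimized at (1, -2).
def grad_F(v):
    return [2 * (v[0] - 1), 4 * (v[1] + 2)]


print(gradient_descent(grad_F, [0.0, 0.0]))
```

With $\gamma = 0.1$ each coordinate error shrinks by a constant factor (0.8 and 0.6 here) per step; a step that is too large (above 0.5 for this $F$) would make the iteration diverge.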

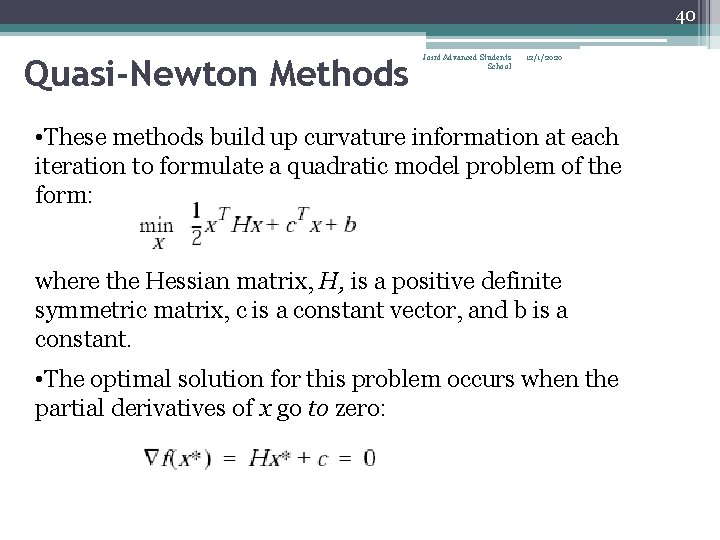

40 Quasi-Newton methods
• These methods build up curvature information at each iteration to formulate a quadratic model problem of the form
$$\min_x f(x) = \tfrac{1}{2} x^T H x + c^T x + b,$$
where the Hessian matrix $H$ is a positive definite symmetric matrix, $c$ is a constant vector, and $b$ is a constant.
• The optimal solution of this problem occurs where the partial derivatives with respect to $x$ go to zero:
$$\nabla f(x^*) = H x^* + c = 0 \quad\Rightarrow\quad x^* = -H^{-1} c.$$

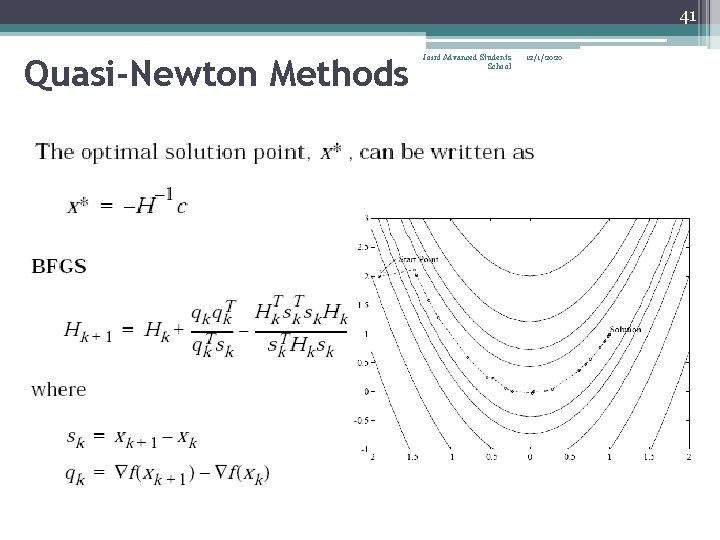

41 Quasi-Newton methods

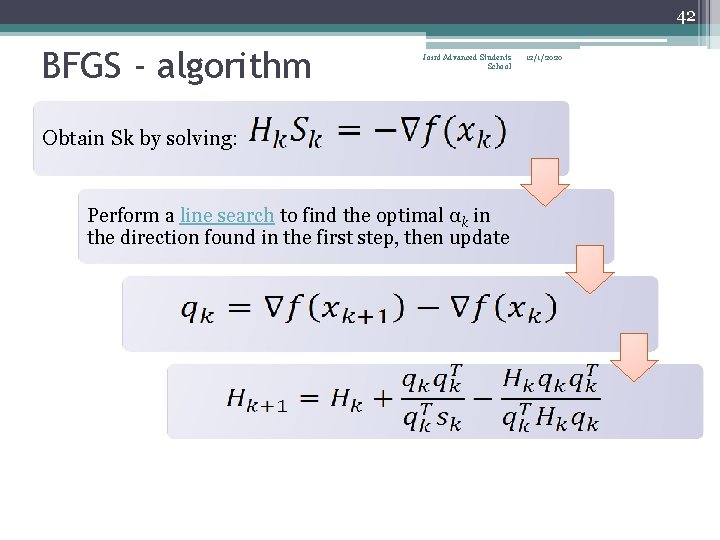

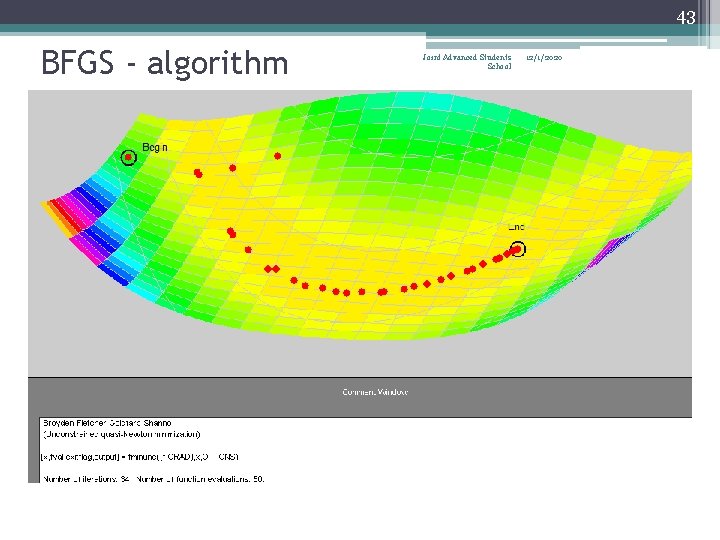

42 BFGS algorithm
1. Obtain $s_k$ by solving $B_k s_k = -\nabla f(x_k)$.
2. Perform a line search to find the optimal $\alpha_k$ in the direction found in the first step, then update $x_{k+1} = x_k + \alpha_k s_k$.
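A compact BFGS sketch in plain Python. It keeps an approximation $H_k = B_k^{-1}$ of the inverse Hessian, so the direction $s_k = -H_k \nabla f(x_k)$ equals the solution of $B_k s_k = -\nabla f(x_k)$ without a linear solve. The backtracking (Armijo) line search, the check that skips the update when the step and the gradient change have a non-positive inner product, and the quadratic test function are assumptions of this sketch.

```python
def bfgs(f, grad, x0, tol=1e-8, max_iters=200):
    n = len(x0)
    x = list(x0)
    g = grad(x)
    H = [[float(i == j) for j in range(n)] for i in range(n)]  # H_0 = I
    for _ in range(max_iters):
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break
        # Step 1: direction s = -H g, i.e. the solution of B s = -grad f(x).
        s = [-sum(H[i][j] * g[j] for j in range(n)) for i in range(n)]
        # Step 2: backtracking line search for alpha (Armijo condition).
        alpha, fx = 1.0, f(x)
        slope = sum(gi * si for gi, si in zip(g, s))
        while f([xi + alpha * si for xi, si in zip(x, s)]) > fx + 1e-4 * alpha * slope:
            alpha *= 0.5
        x_new = [xi + alpha * si for xi, si in zip(x, s)]
        g_new = grad(x_new)
        dx = [a - b for a, b in zip(x_new, x)]  # step actually taken
        dg = [a - b for a, b in zip(g_new, g)]  # change in the gradient
        curv = sum(a * b for a, b in zip(dg, dx))
        if curv > 1e-12:  # keeps H positive definite
            rho = 1.0 / curv
            Hdg = [sum(H[i][j] * dg[j] for j in range(n)) for i in range(n)]
            dgHdg = sum(a * b for a, b in zip(dg, Hdg))
            # Inverse BFGS update: H+ = (I - rho dx dg^T) H (I - rho dg dx^T) + rho dx dx^T
            H = [[H[i][j] - rho * (dx[i] * Hdg[j] + Hdg[i] * dx[j])
                  + (rho * rho * dgHdg + rho) * dx[i] * dx[j]
                  for j in range(n)] for i in range(n)]
        x, g = x_new, g_new
    return x


# Hypothetical test: f(x, y) = x^2 + 5y^2 + xy - 3x, minimized at (30/19, -3/19).
def f_quad(v):
    return v[0] ** 2 + 5 * v[1] ** 2 + v[0] * v[1] - 3 * v[0]


def grad_quad(v):
    return [2 * v[0] + v[1] - 3, 10 * v[1] + v[0]]


x_min = bfgs(f_quad, grad_quad, [0.0, 0.0])
print(x_min)
```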

43 BFGS algorithm

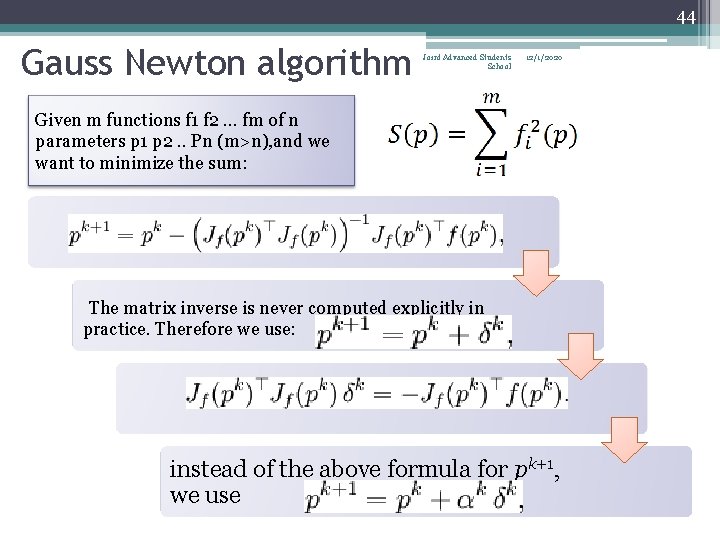

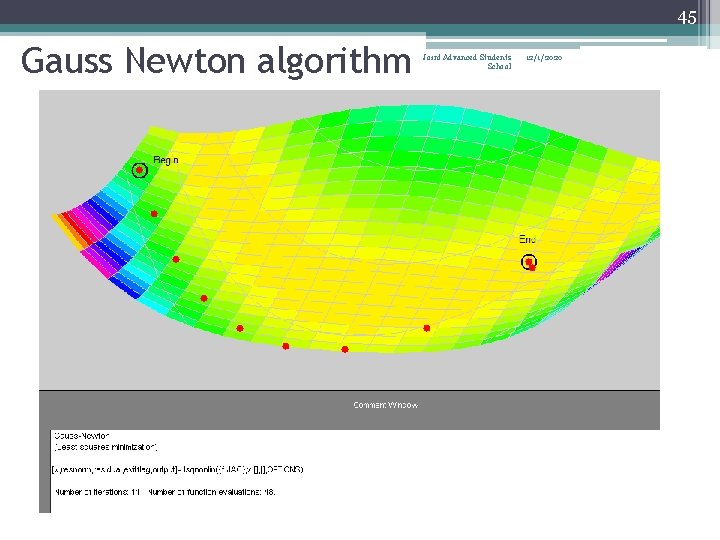

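44 Gauss-Newton algorithm
Given $m$ functions $f_1, f_2, \dots, f_m$ of $n$ parameters $p_1, p_2, \dots, p_n$ ($m > n$), we want to minimize the sum
$$S(p) = \sum_{i=1}^{m} f_i(p)^2.$$
The Gauss-Newton iteration is
$$p^{k+1} = p^k - (J^T J)^{-1} J^T f(p^k),$$
where $J$ is the Jacobian of $f = (f_1, \dots, f_m)$ with respect to $p$ at $p^k$. The matrix inverse is never computed explicitly in practice. Therefore, instead of the above formula for $p^{k+1}$, we use
$$p^{k+1} = p^k + \delta^k, \qquad (J^T J)\,\delta^k = -J^T f(p^k).$$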
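A plain-Python sketch of the iteration above, solving the normal equations $(J^T J)\,\delta^k = -J^T f(p^k)$ with a small Gaussian elimination instead of inverting $J^T J$. The exponential model $a e^{bt}$, the synthetic data and the starting point are hypothetical; Gauss-Newton needs a start reasonably close to the solution.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [list(row) + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):  # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def gauss_newton(residuals, jacobian, p0, tol=1e-12, max_iters=50):
    """Minimize S(p) = sum_i f_i(p)^2 with Gauss-Newton steps."""
    p = list(p0)
    n = len(p)
    for _ in range(max_iters):
        f = residuals(p)  # [f_1(p), ..., f_m(p)]
        J = jacobian(p)   # m x n matrix, J[i][j] = d f_i / d p_j
        JtJ = [[sum(row[a] * row[b] for row in J) for b in range(n)] for a in range(n)]
        Jtf = [sum(row[a] * fi for row, fi in zip(J, f)) for a in range(n)]
        # Solve (J^T J) delta = -J^T f; the inverse is never formed.
        delta = solve(JtJ, [-v for v in Jtf])
        p = [pi + di for pi, di in zip(p, delta)]
        if sum(d * d for d in delta) < tol:
            break
    return p


# Hypothetical data from y = 2 exp(-0.5 t); the parameters are p = (a, b).
ts = [0.5 * k for k in range(9)]
ys = [2.0 * math.exp(-0.5 * t) for t in ts]


def exp_residuals(p):
    return [p[0] * math.exp(p[1] * t) - y for t, y in zip(ts, ys)]


def exp_jacobian(p):
    return [[math.exp(p[1] * t), p[0] * t * math.exp(p[1] * t)] for t in ts]


fitted = gauss_newton(exp_residuals, exp_jacobian, [1.8, -0.4])
print(fitted)
```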

45 Gauss-Newton algorithm

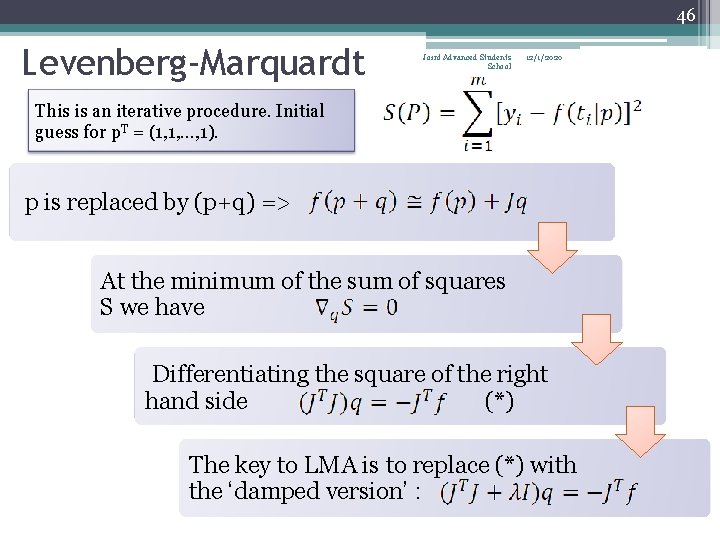

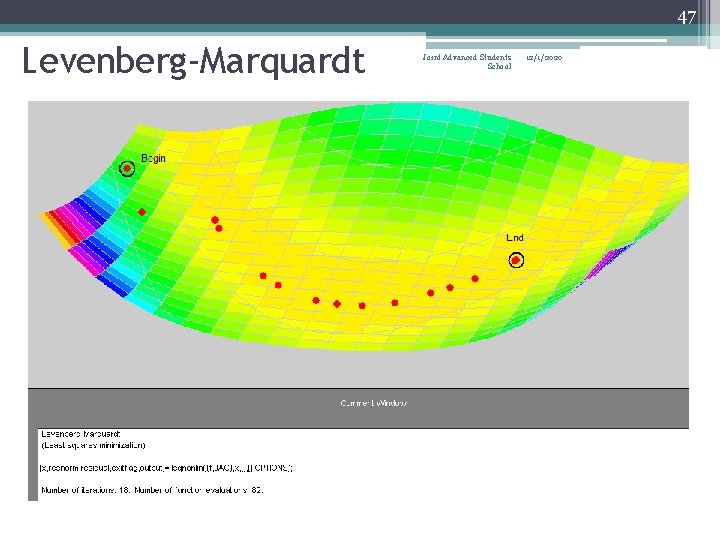

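46 Levenberg-Marquardt
This is an iterative procedure. A common uninformed initial guess for $p$ is $p^T = (1, 1, \dots, 1)$. At each step, $p$ is replaced by $p + q$, where $q$ is found from the linearization
$$f(p + q) \approx f(p) + J q.$$
At the minimum of the sum of squares $S$ we have $\nabla_q S = 0$. Differentiating the square of the right-hand side and setting the result to zero gives
$$(J^T J)\, q = -J^T f. \qquad (*)$$
The key to the Levenberg-Marquardt algorithm (LMA) is to replace (*) with the 'damped version'
$$(J^T J + \lambda I)\, q = -J^T f, \qquad \lambda \ge 0.$$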
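The damped equations can be sketched the same way. The rule for $\lambda$ (divide by 10 after a step that lowers $S$, multiply by 10 after a rejected step) is a common heuristic assumed here, not something the slide specifies; the exponential test problem is hypothetical and starts from $p = (1, 0)$. Small $\lambda$ gives a Gauss-Newton step, large $\lambda$ a short step along $-J^T f$, the gradient-descent direction.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [list(row) + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):  # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def levenberg_marquardt(residuals, jacobian, p0, lam=1e-3, tol=1e-12, max_iters=500):
    p = list(p0)
    f = residuals(p)
    S = sum(v * v for v in f)  # sum of squares
    n = len(p)
    for _ in range(max_iters):
        J = jacobian(p)
        JtJ = [[sum(row[a] * row[b] for row in J) for b in range(n)] for a in range(n)]
        Jtf = [sum(row[a] * fi for row, fi in zip(J, f)) for a in range(n)]
        # Damped normal equations: (J^T J + lam I) q = -J^T f.
        A = [[JtJ[a][b] + (lam if a == b else 0.0) for b in range(n)] for a in range(n)]
        q = solve(A, [-v for v in Jtf])
        p_new = [pi + qi for pi, qi in zip(p, q)]
        f_new = residuals(p_new)
        S_new = sum(v * v for v in f_new)
        if S_new < S:
            # Accepted: S decreased, so trust the Gauss-Newton model more.
            p, f, S = p_new, f_new, S_new
            lam /= 10
            if sum(qi * qi for qi in q) < tol:
                break
        else:
            lam *= 10  # rejected: more damping, shorter gradient-like step
            if lam > 1e12:
                break  # no further progress possible
    return p


# Hypothetical data from y = 2 exp(-0.5 t); the parameters are p = (a, b).
ts = [0.5 * k for k in range(9)]
ys = [2.0 * math.exp(-0.5 * t) for t in ts]


def exp_residuals(p):
    return [p[0] * math.exp(p[1] * t) - y for t, y in zip(ts, ys)]


def exp_jacobian(p):
    return [[math.exp(p[1] * t), p[0] * t * math.exp(p[1] * t)] for t in ts]


fitted = levenberg_marquardt(exp_residuals, exp_jacobian, [1.0, 0.0])
print(fitted)
```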

47 Levenberg-Marquardt

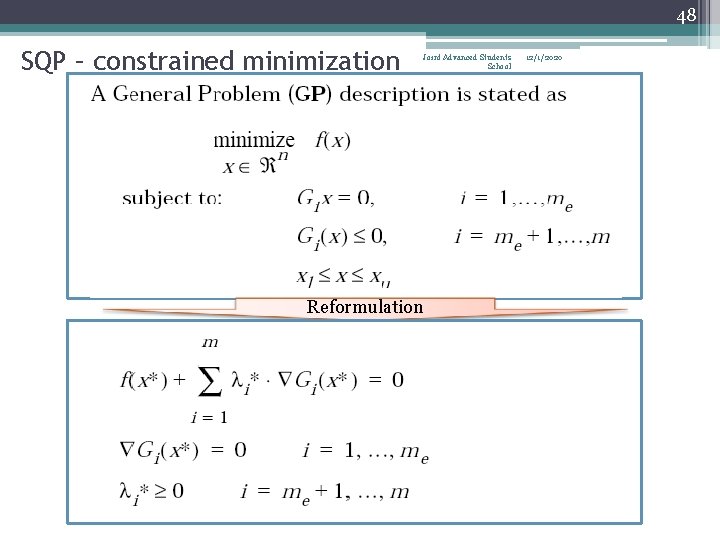

48 SQP – constrained minimization
Reformulation

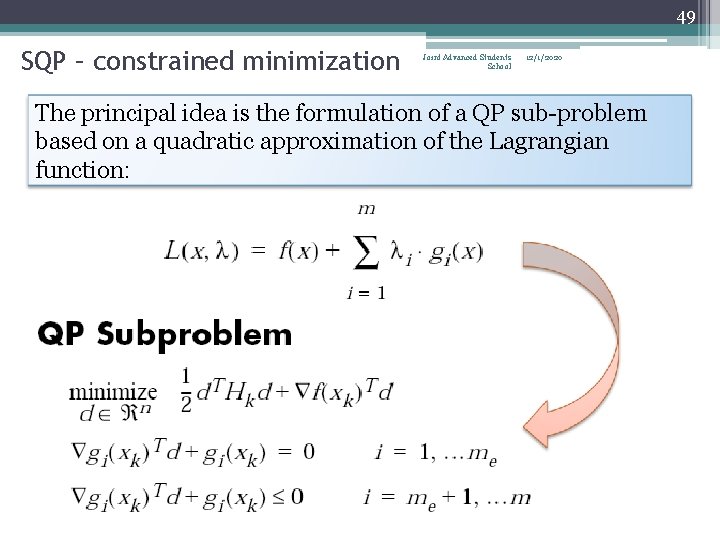

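49 SQP – constrained minimization
The principal idea is the formulation of a QP subproblem based on a quadratic approximation of the Lagrangian function
$$L(x, \lambda) = f(x) + \sum_{i=1}^{m} \lambda_i g_i(x),$$
where $g_i(x)$ are the constraint functions and $\lambda_i$ are the Lagrange multipliers.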

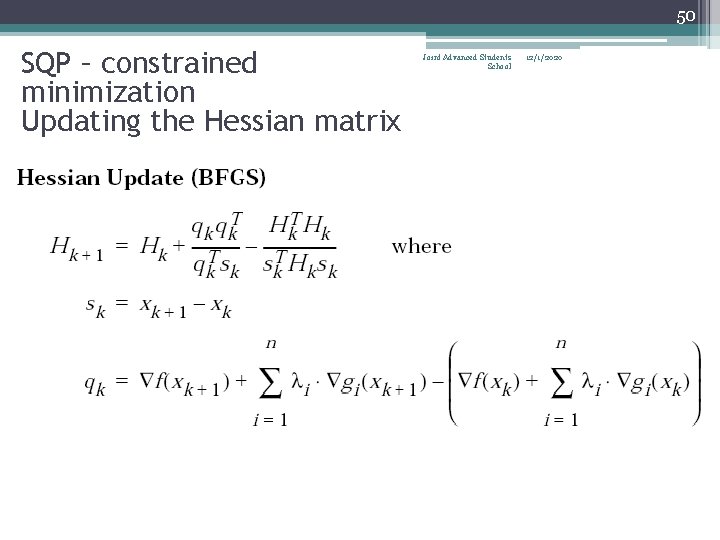

50 SQP – constrained minimization
Updating the Hessian matrix

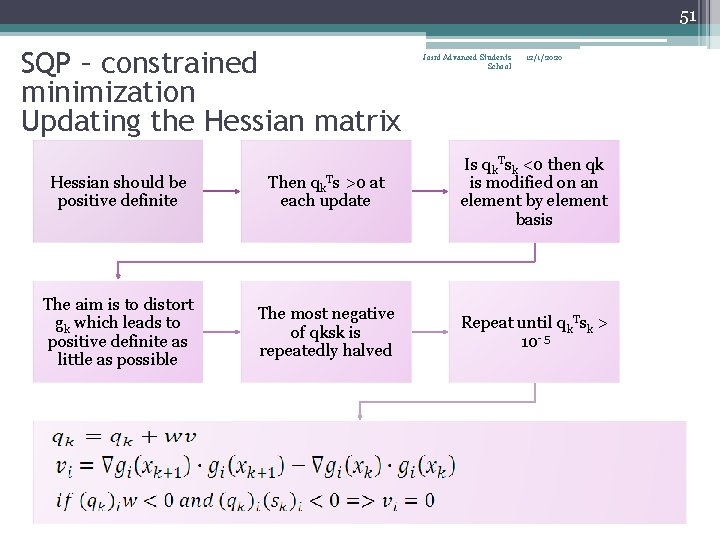

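51 SQP – constrained minimization
Updating the Hessian matrix
• The Hessian approximation stays positive definite if it is initialized as positive definite and $q_k^T s_k > 0$ at each update, where $s_k = x_{k+1} - x_k$ and $q_k$ is the corresponding change in the gradient of the Lagrangian.
• If $q_k^T s_k < 0$, then $q_k$ is modified on an element-by-element basis.
• The aim is to distort the elements of $q_k$ that contribute to a positive definite update as little as possible.
• The most negative element of the element-wise product $q_k \circ s_k$ is repeatedly halved.
• This is repeated until $q_k^T s_k > 10^{-5}$.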
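The element-wise safeguard can be sketched in a few lines. `modify_q` halves the most negative entry of $q_k \circ s_k$ until $q_k^T s_k > 10^{-5}$, and `bfgs_hessian_update` applies the standard BFGS formula $H_{k+1} = H_k + \frac{q_k q_k^T}{q_k^T s_k} - \frac{H_k s_k s_k^T H_k}{s_k^T H_k s_k}$. The function names, the iteration cap, stopping when no negative element is left, and the example vectors are assumptions of this sketch.

```python
def modify_q(q, s, threshold=1e-5, max_halvings=1000):
    """Halve the most negative element of q*s (element-wise) until q^T s > threshold."""
    q = list(q)
    for _ in range(max_halvings):
        products = [qi * si for qi, si in zip(q, s)]
        if sum(products) > threshold:
            break
        i = min(range(len(q)), key=products.__getitem__)
        if products[i] >= 0:
            break  # no negative element left to halve
        q[i] *= 0.5
    return q


def bfgs_hessian_update(H, q, s):
    """H_{k+1} = H + q q^T / (q^T s) - H s s^T H / (s^T H s)."""
    n = len(s)
    Hs = [sum(H[i][j] * s[j] for j in range(n)) for i in range(n)]
    sHs = sum(a * b for a, b in zip(s, Hs))
    qs = sum(a * b for a, b in zip(q, s))
    return [[H[i][j] + q[i] * q[j] / qs - Hs[i] * Hs[j] / sHs
             for j in range(n)] for i in range(n)]


s_k = [1.0, 1.0]   # x_{k+1} - x_k
q_k = [1.0, -3.0]  # change in the Lagrangian gradient: q^T s = -2 < 0
q_mod = modify_q(q_k, s_k)  # q^T s becomes 0.25
H_new = bfgs_hessian_update([[1.0, 0.0], [0.0, 1.0]], q_mod, s_k)
print(q_mod, H_new)  # prints [1.0, -0.75] [[4.5, -3.5], [-3.5, 2.75]]
```

The updated matrix is positive definite (determinant 0.125) and satisfies the secant condition $H_{k+1} s_k = q_k$ for the modified $q_k$.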

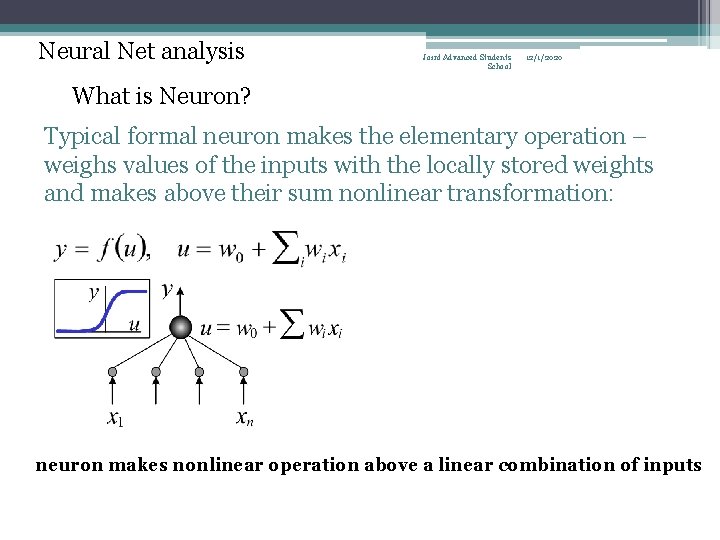

52 Neural net analysis
What is a neuron?
A typical formal neuron performs an elementary operation: it weighs the values of its inputs with locally stored weights and applies a nonlinear transformation to their sum. In other words, a neuron performs a nonlinear operation on a linear combination of its inputs:
$$y = \varphi\Big(\sum_i w_i x_i\Big).$$
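In code, a formal neuron is a weighted sum followed by a nonlinearity. The bias `b` and the choice of `tanh` as the default transfer function are assumptions of this sketch.

```python
import math


def neuron(x, w, b=0.0, phi=math.tanh):
    """Formal neuron: y = phi(sum_i w_i x_i + b)."""
    s = sum(wi * xi for wi, xi in zip(w, x)) + b  # linear combination of inputs
    return phi(s)                                 # nonlinear transformation


print(neuron([1.0, 2.0], [0.5, -0.25]))  # tanh(0.5 - 0.5) = tanh(0), prints 0.0
```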

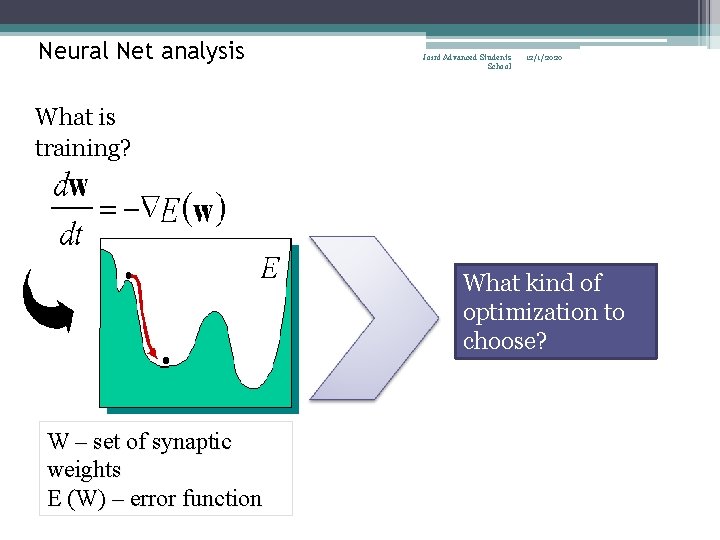

53 Neural net analysis
What is training? What kind of optimization should we choose?
• $W$: the set of synaptic weights
• $E(W)$: the error function
Training means finding the weights $W$ that minimize $E(W)$.

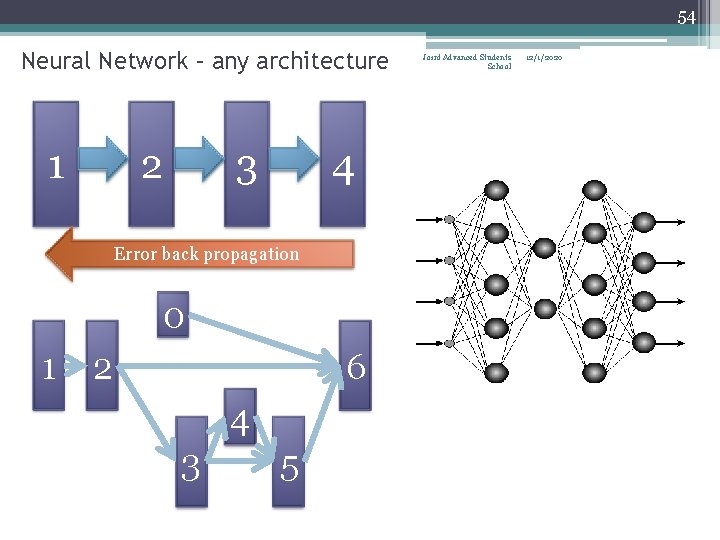

54 Neural network of any architecture: error backpropagation (figure: network with numbered neurons)

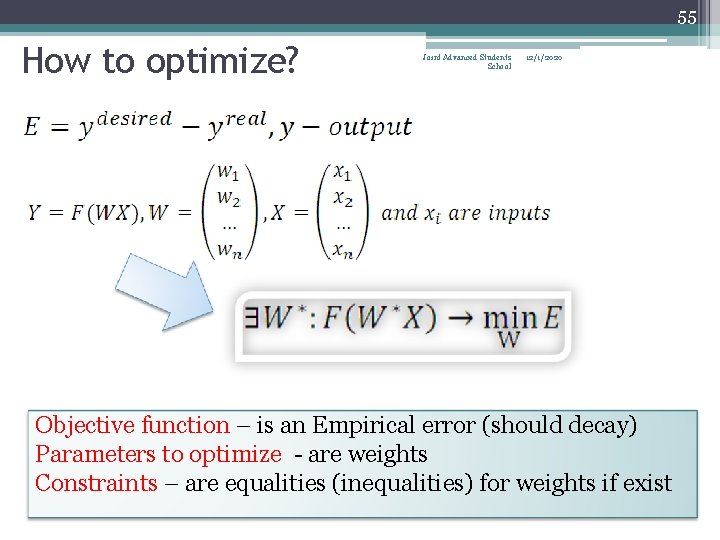

55 How to optimize?
• Objective function: the empirical error (it should decay)
• Parameters to optimize: the weights
• Constraints: equalities (or inequalities) on the weights, if any exist
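For a single tanh neuron, the three ingredients above become concrete: the objective is the empirical mean squared error $E(W)$ over a training set, the parameters are the weights, and with no constraints plain gradient descent can minimize it. This is a minimal sketch under those assumptions (the talk itself used Levenberg-Marquardt and SQP, not gradient descent); the teacher weights, the 3 × 3 input grid and all function names are hypothetical.

```python
import math


def predict(x, w, b):
    return math.tanh(sum(wi * xi for wi, xi in zip(w, x)) + b)


def empirical_error(data, w, b):
    """E(W): mean squared error of the neuron over the training set."""
    return sum((predict(x, w, b) - y) ** 2 for x, y in data) / len(data)


def train(data, n_inputs, lr=0.1, epochs=2000):
    """Unconstrained minimization of E(W) by batch gradient descent."""
    w, b = [0.0] * n_inputs, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * n_inputs, 0.0
        for x, y in data:
            out = predict(x, w, b)
            # dE/ds for one sample, using tanh'(s) = 1 - tanh(s)^2
            delta = 2.0 * (out - y) * (1.0 - out * out) / len(data)
            gw = [g + delta * xi for g, xi in zip(gw, x)]
            gb += delta
        w = [wi - lr * g for wi, g in zip(w, gw)]
        b -= lr * gb
    return w, b


# Hypothetical training set produced by a "teacher" neuron with w = (0.5, -0.3), b = 0.1.
grid = [(u, v) for u in (-1.0, 0.0, 1.0) for v in (-1.0, 0.0, 1.0)]
data = [(x, predict(x, [0.5, -0.3], 0.1)) for x in grid]
w_fit, b_fit = train(data, n_inputs=2)
print(w_fit, b_fit, empirical_error(data, w_fit, b_fit))
```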

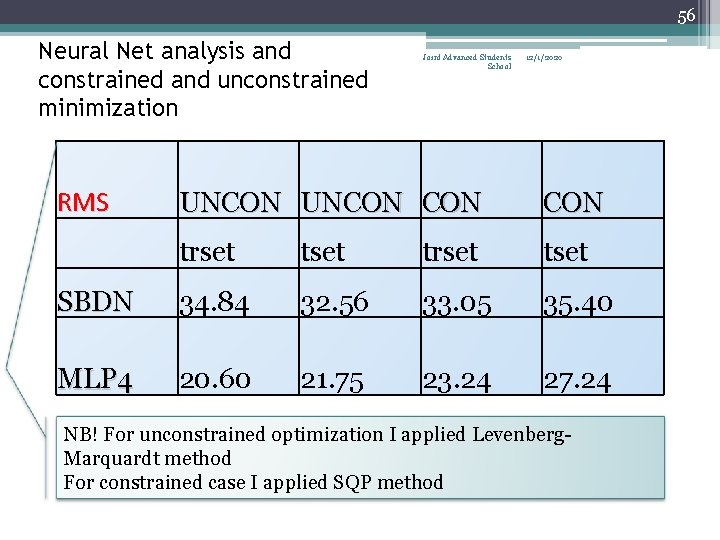

56 Neural net analysis: constrained and unconstrained minimization
RMS error:

| Model | UNCON, trset | UNCON, tset | CON, trset | CON, tset |
|---|---|---|---|---|
| SBDN | 34.84 | 32.56 | 33.05 | 35.40 |
| MLP 4 | 20.60 | 21.75 | 23.24 | 27.24 |

NB! For the unconstrained optimization I applied the Levenberg-Marquardt method; for the constrained case I applied the SQP method.

57 Thank you for your attention
