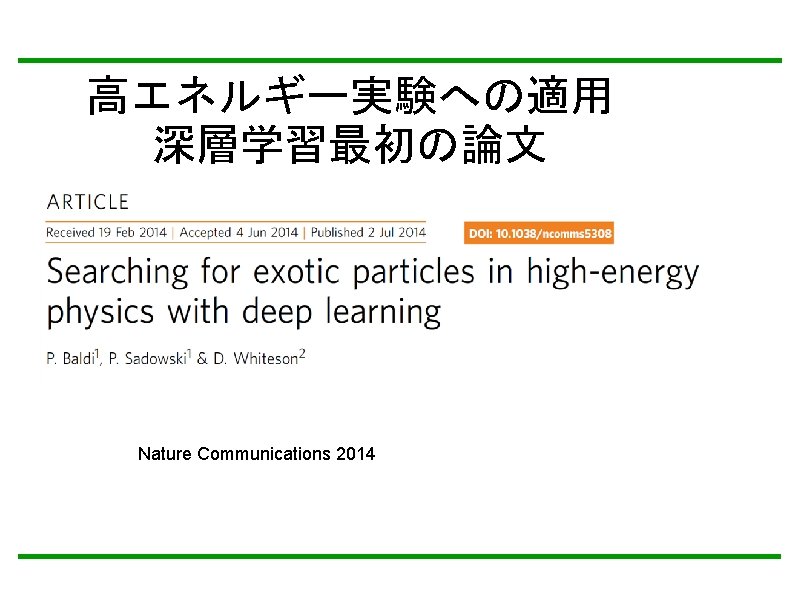


- Slides: 64

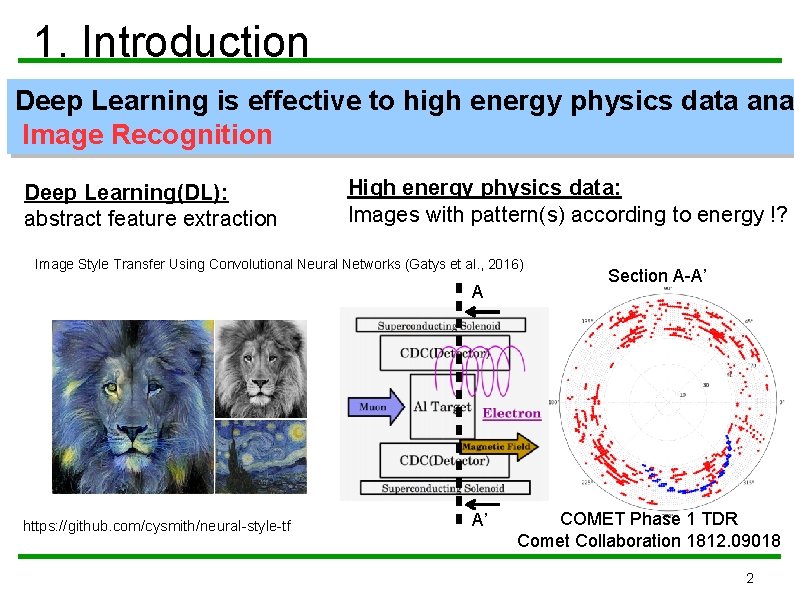

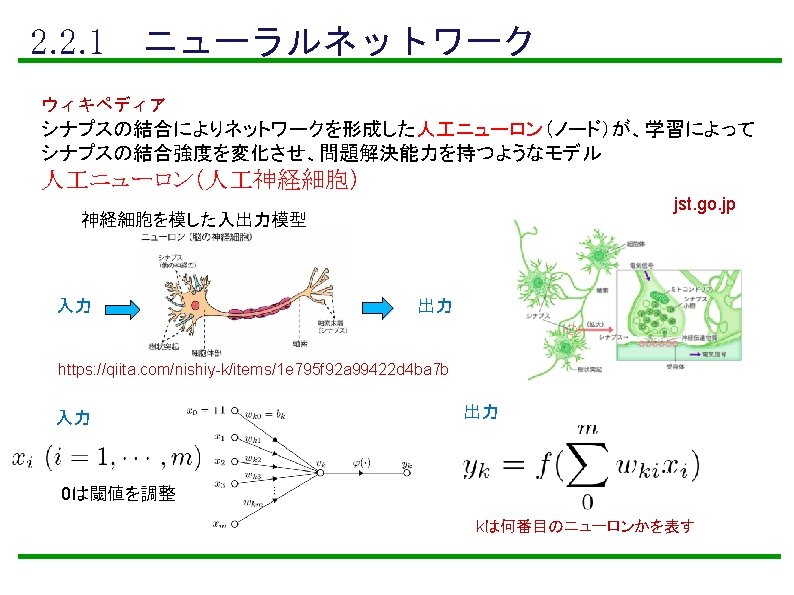

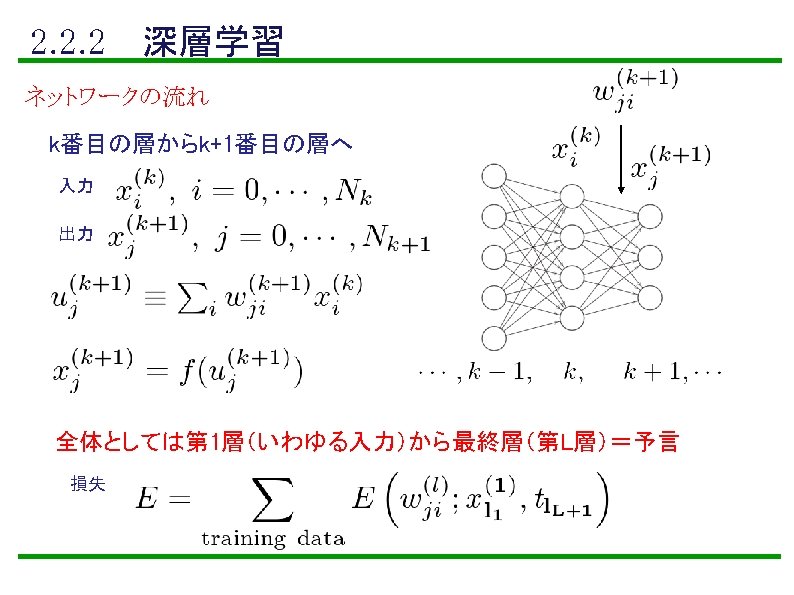

1. Introduction: Deep Learning is effective for high energy physics data analysis

- Image recognition with Deep Learning (DL): abstract feature extraction.
- High energy physics data: images with pattern(s) that depend on energy!?
- Example image: Image Style Transfer Using Convolutional Neural Networks (Gatys et al., 2016), https://github.com/cysmith/neural-style-tf
- Detector figure (section A-A'): COMET Phase I TDR, COMET Collaboration, arXiv:1812.09018

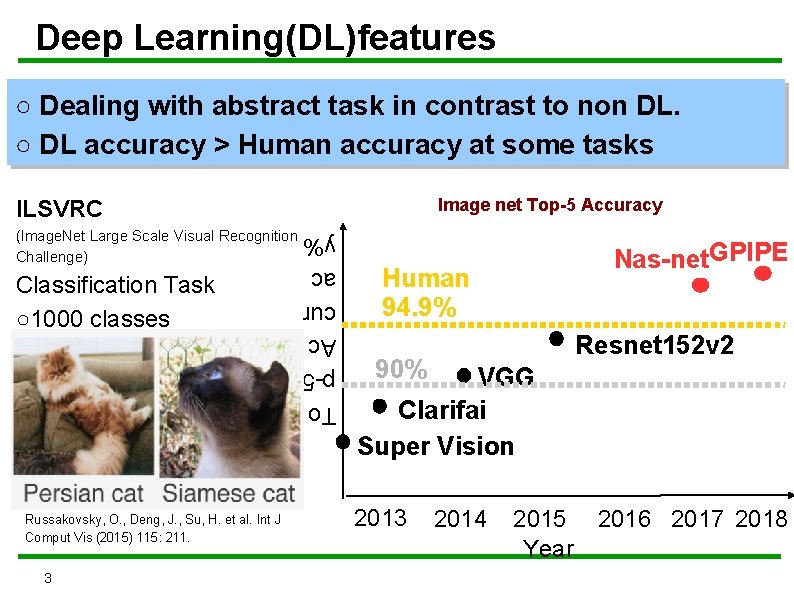

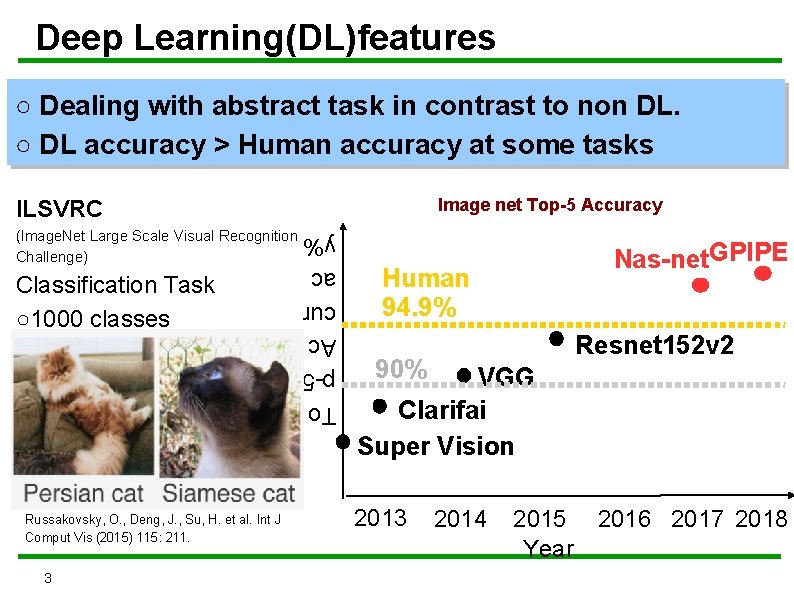

Deep Learning (DL) features

- DL deals with abstract tasks, in contrast to non-DL methods.
- DL accuracy > human accuracy at some tasks.

[Figure: ImageNet top-5 accuracy (%) by year, 2013 to 2018, for the ILSVRC classification task with 1000 classes. Labeled entries: SuperVision, Clarifai, VGG, ResNet-152 v2, NASNet, GPipe. Human: 94.9%.]

ILSVRC (ImageNet Large Scale Visual Recognition Challenge): Russakovsky, O., Deng, J., Su, H. et al., Int J Comput Vis (2015) 115: 211.

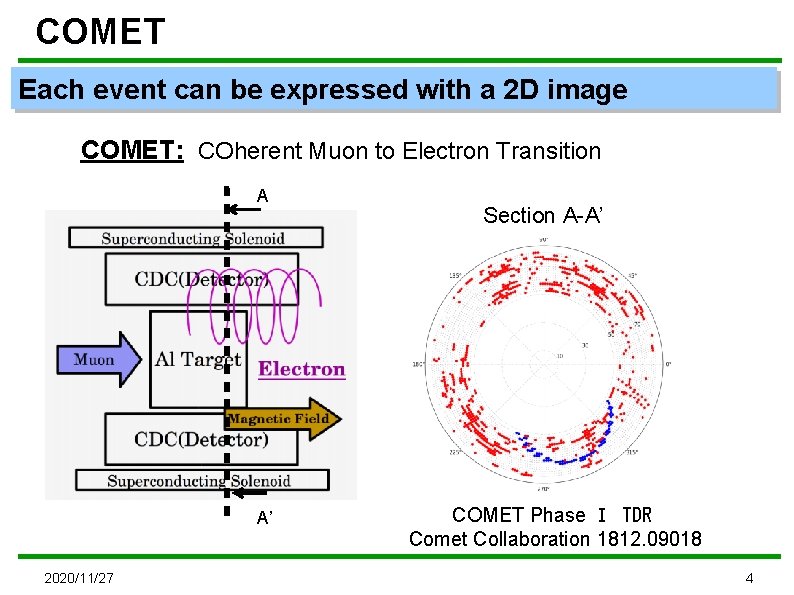

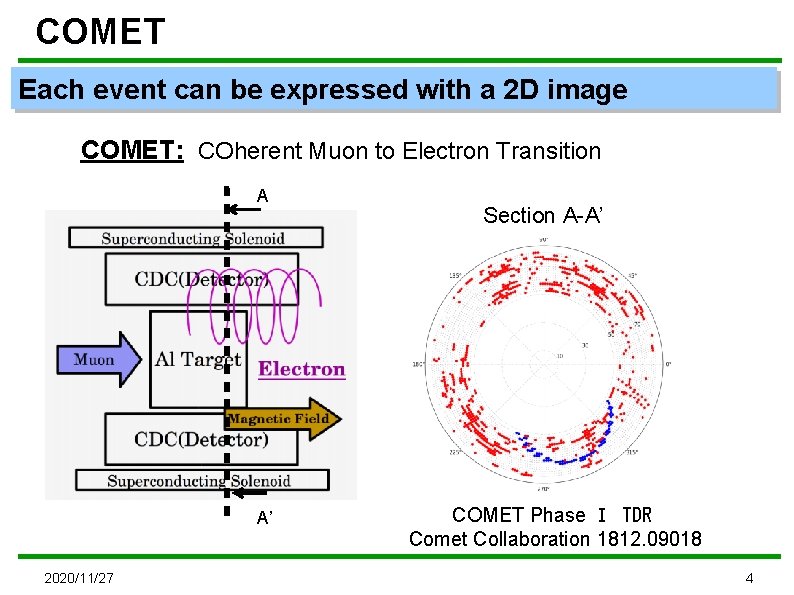

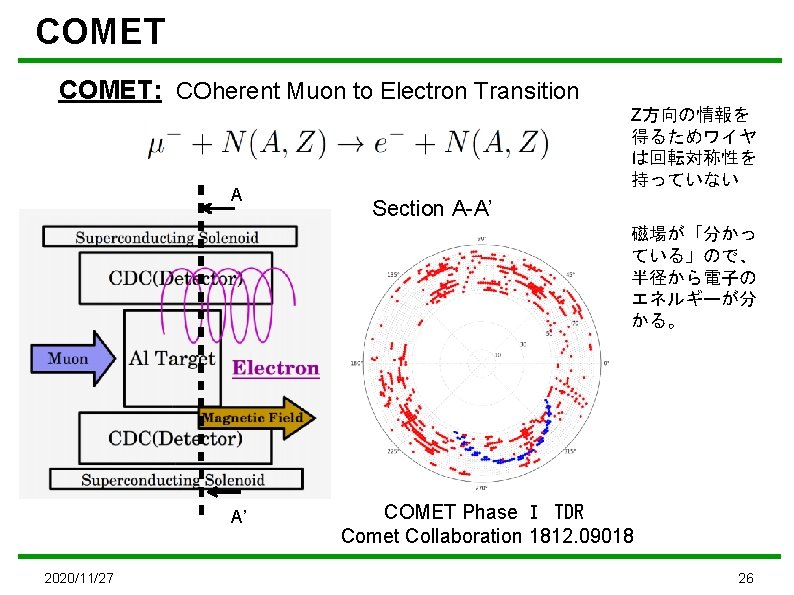

COMET: each event can be expressed as a 2D image

- COMET: COherent Muon to Electron Transition
- Detector figure (section A-A'): COMET Phase I TDR, COMET Collaboration, arXiv:1812.09018

2020/11/27

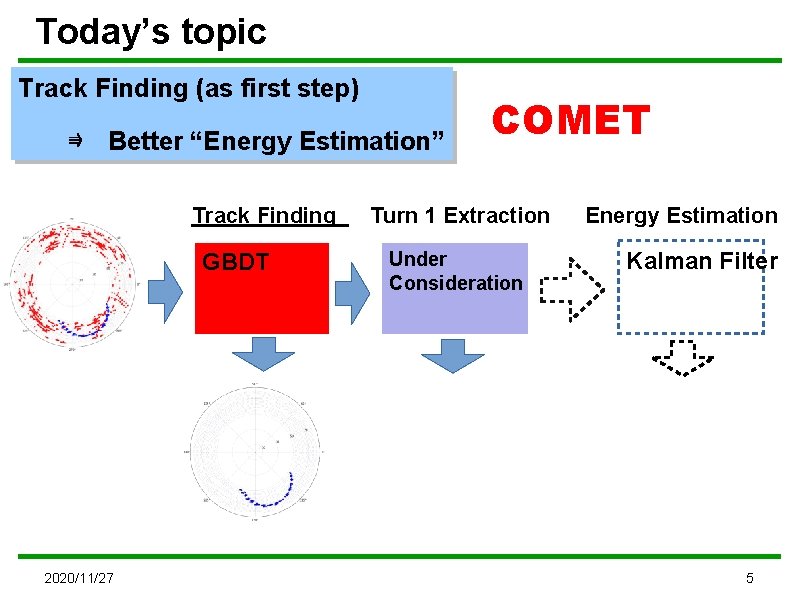

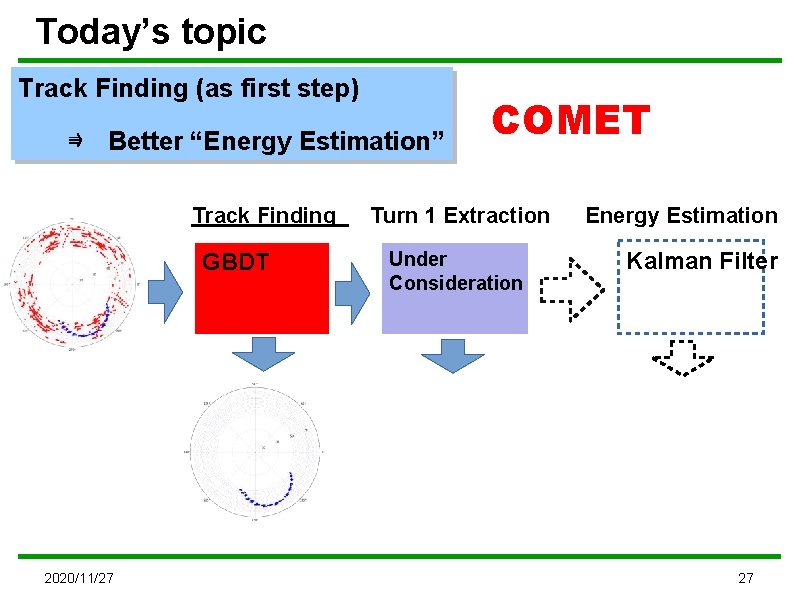

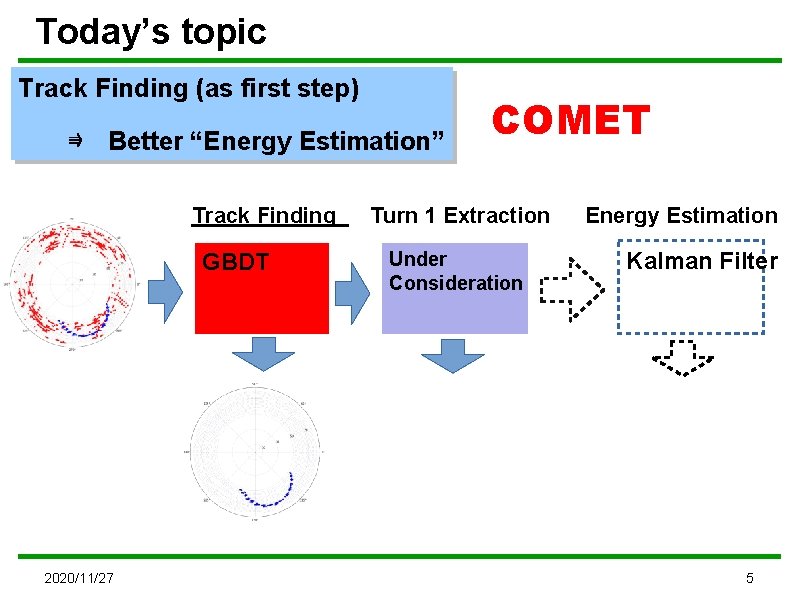

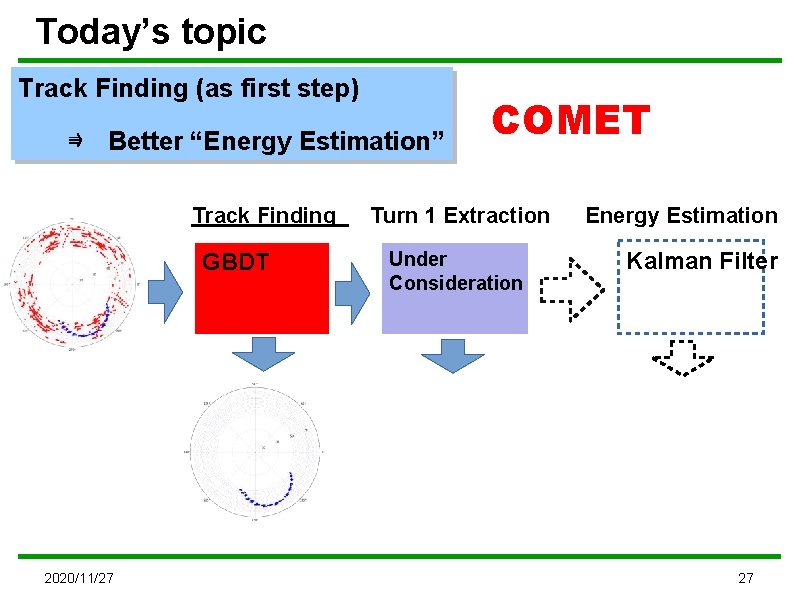

Today's topic: track finding (as a first step) ⇛ better "energy estimation"

COMET: Track Finding (GBDT) → Turn 1 Extraction (under consideration) → Energy Estimation (Kalman filter)

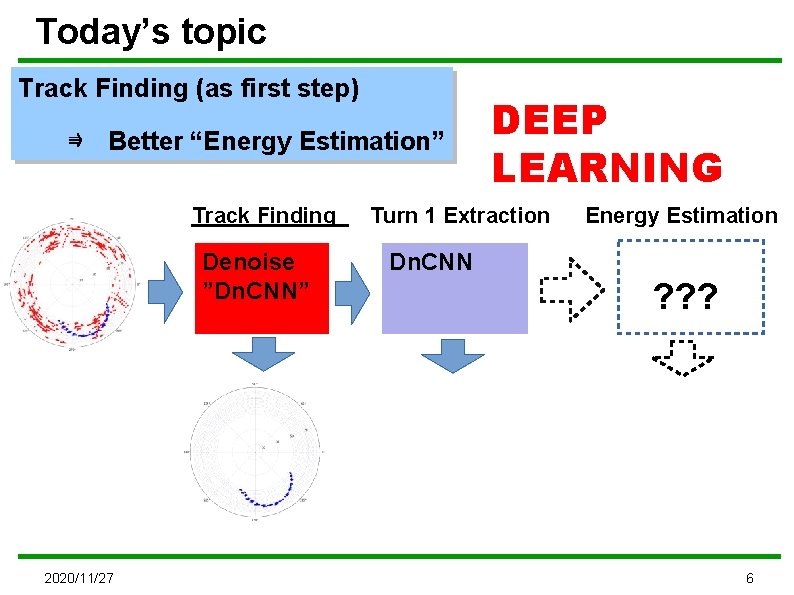

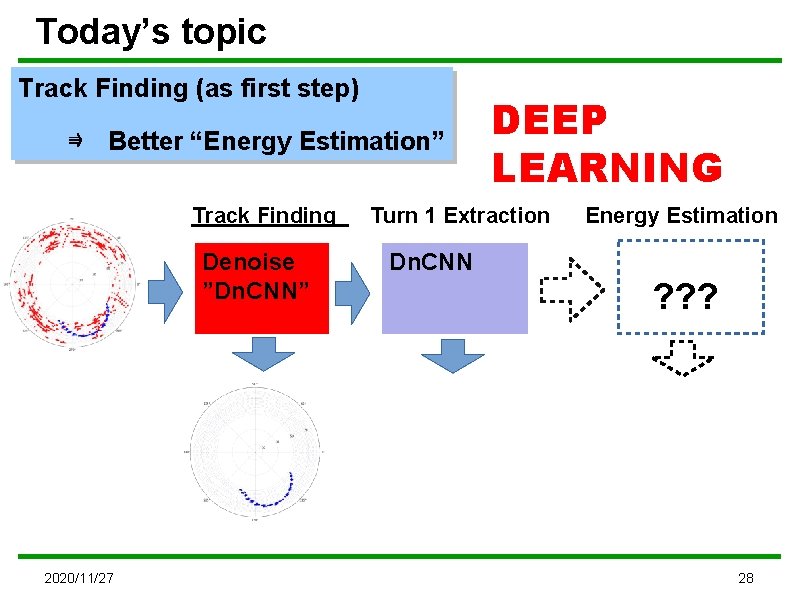

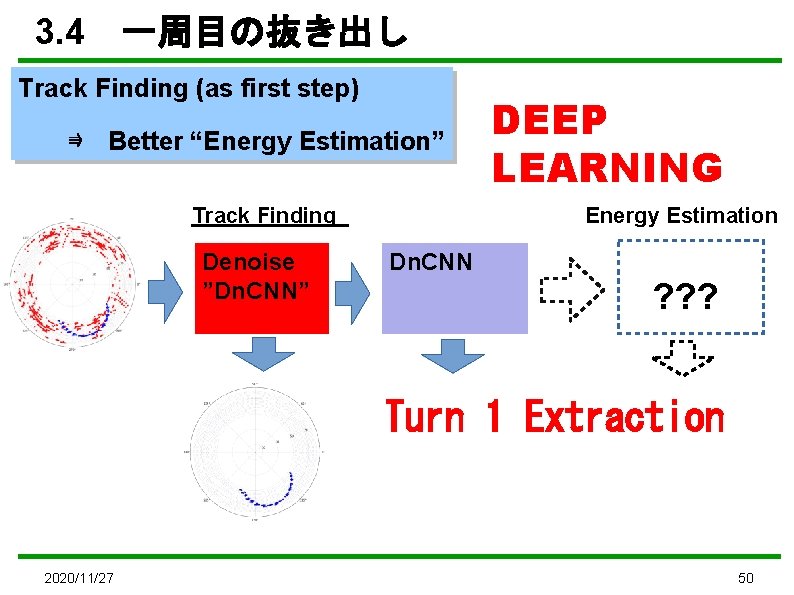

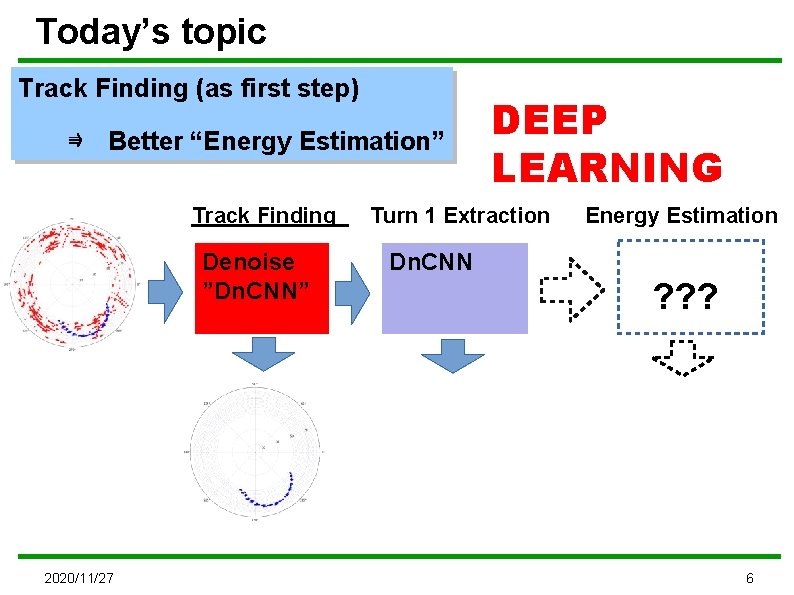

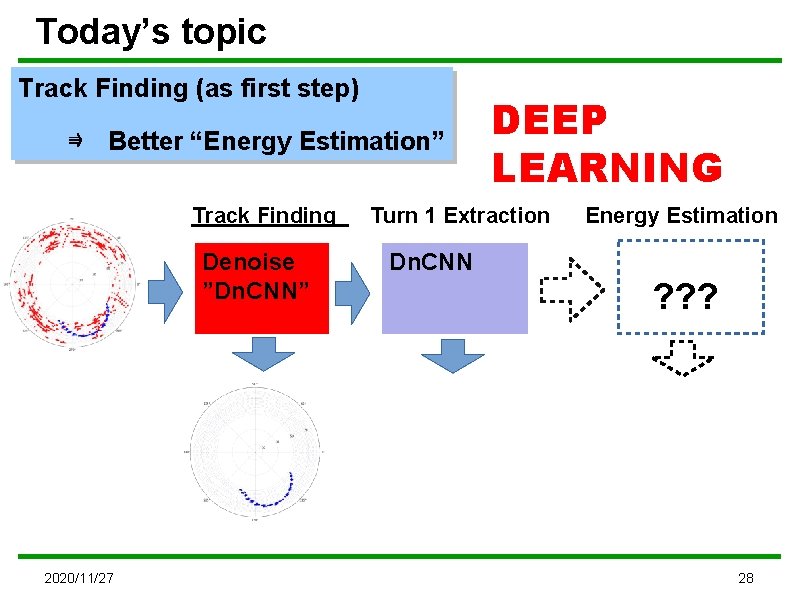

Today's topic: track finding (as a first step) ⇛ better "energy estimation"

DEEP LEARNING: Track Finding (denoising with "DnCNN") → Turn 1 Extraction (DnCNN) → Energy Estimation (???)

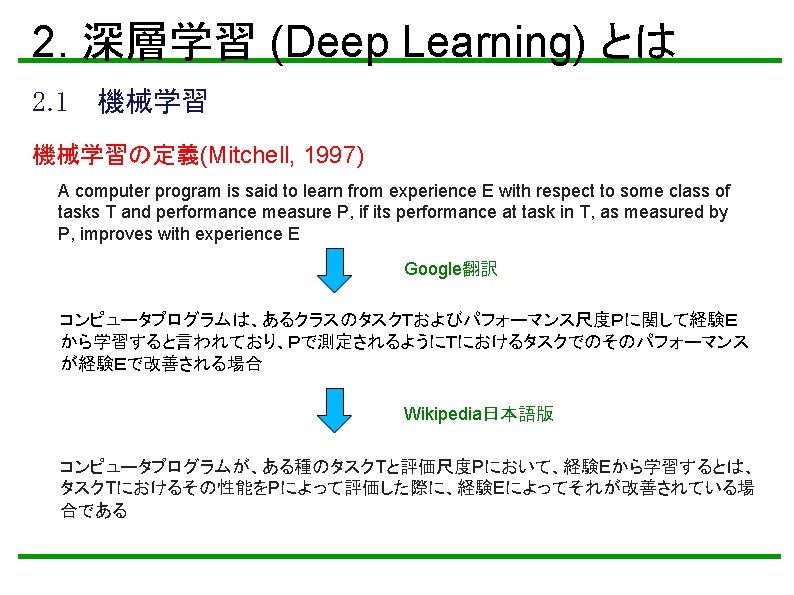

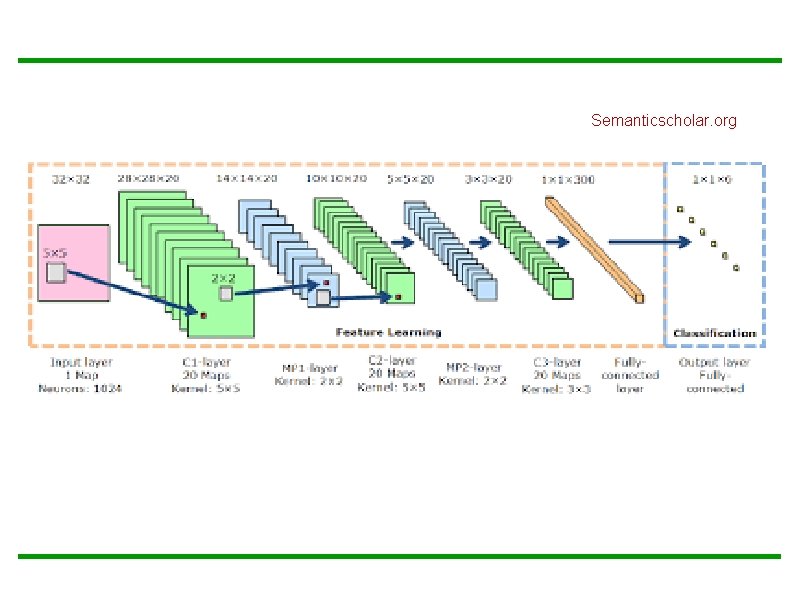

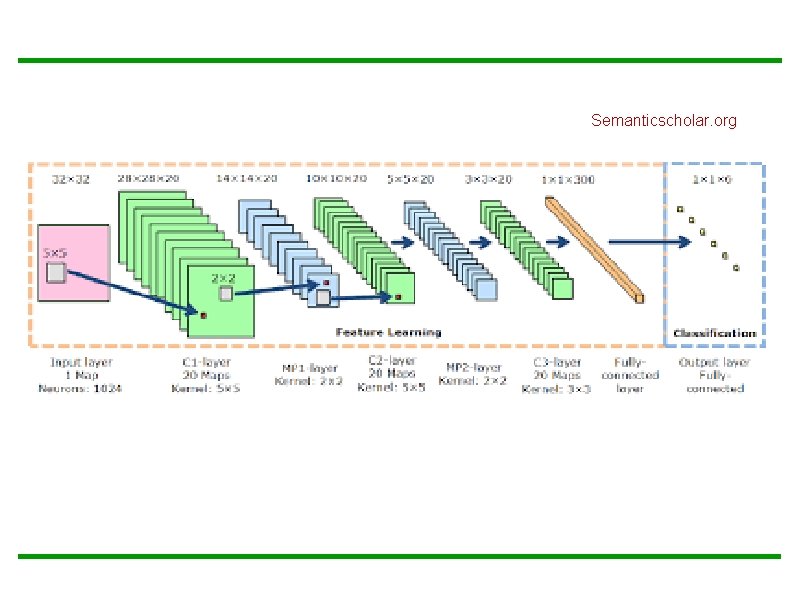

semanticscholar.org

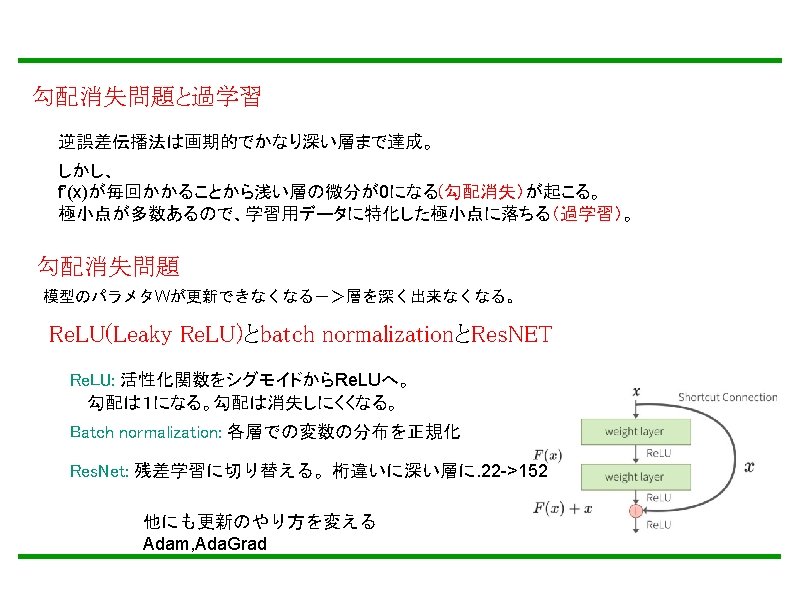

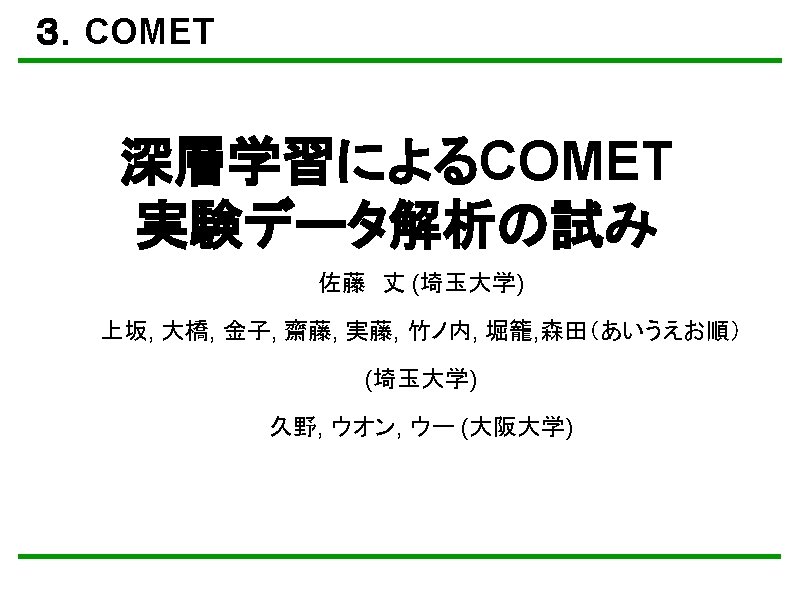

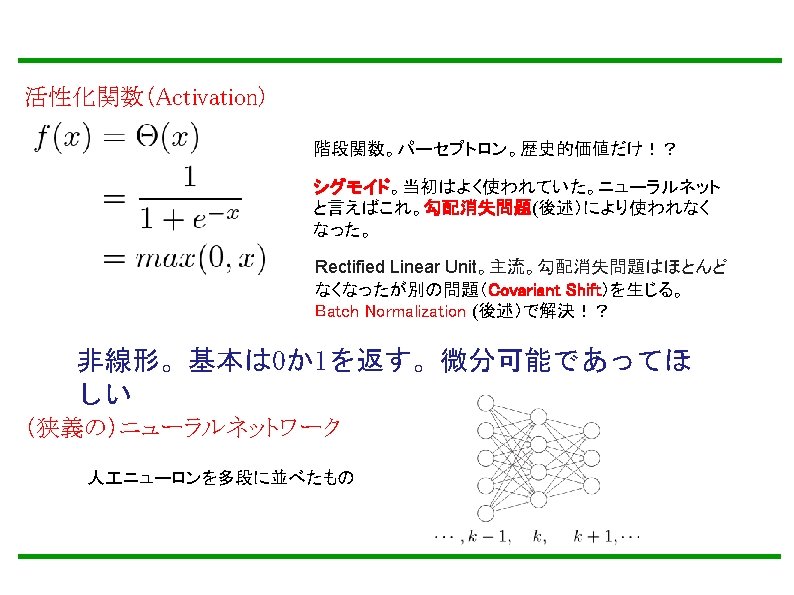

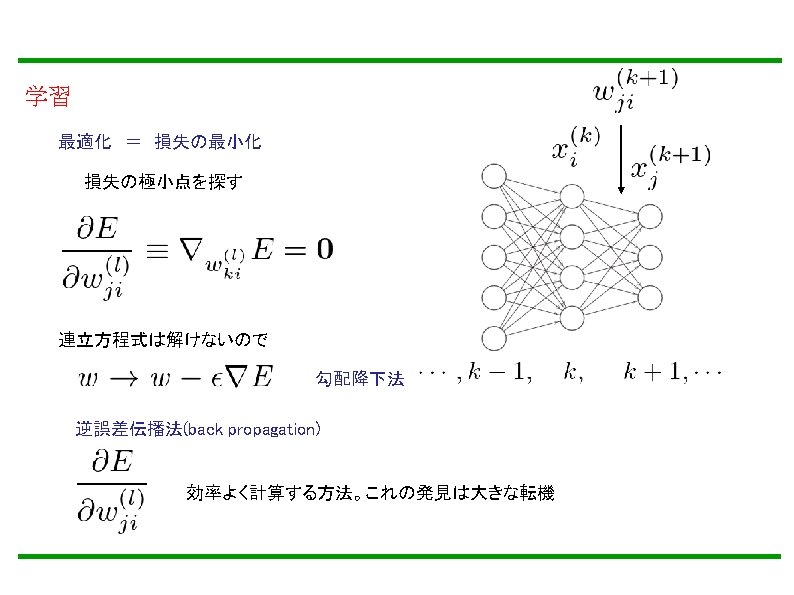

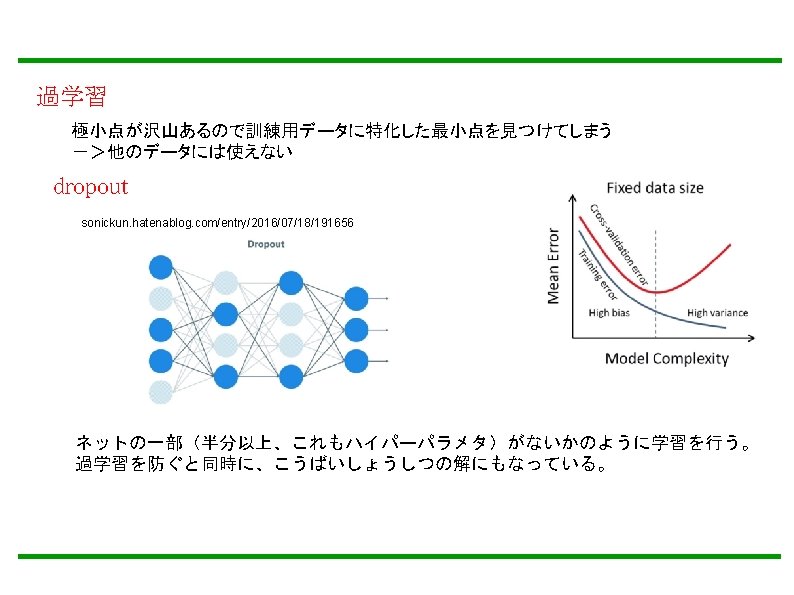

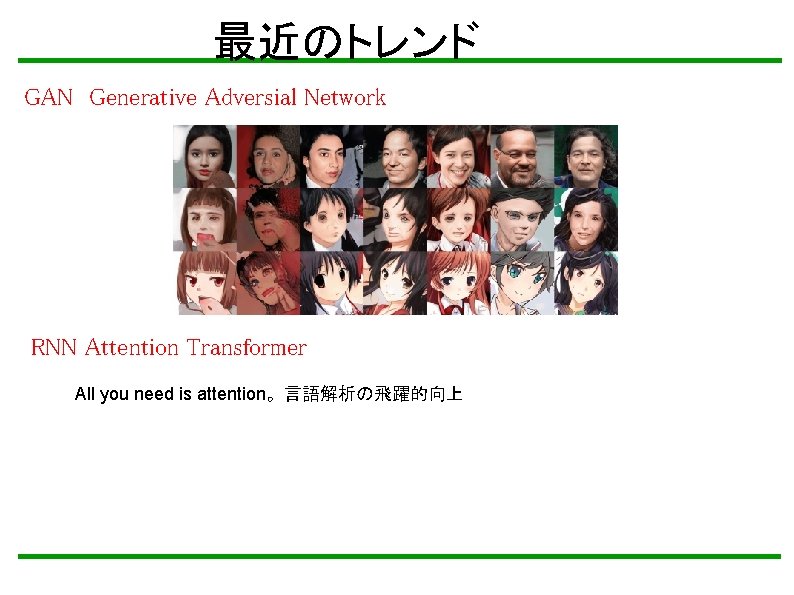

Recent trends

- GAN (Generative Adversarial Network)
- RNN
- Attention
- Transformer ("Attention Is All You Need"): a dramatic improvement in language analysis

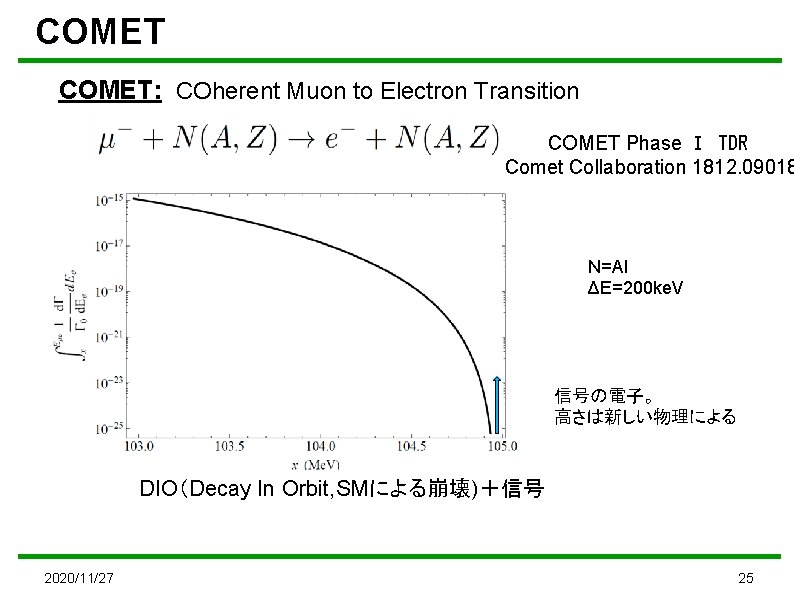

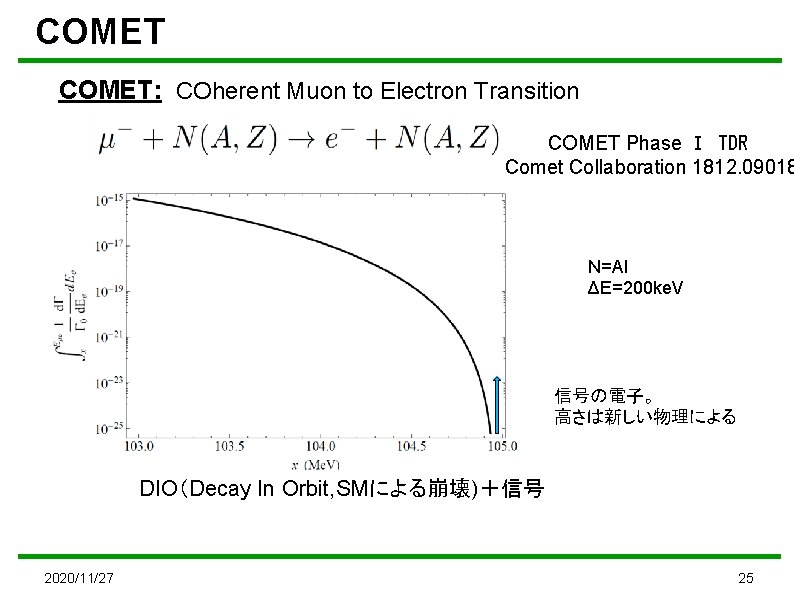

COMET: COherent Muon to Electron Transition

- Figure from the COMET Phase I TDR, COMET Collaboration, arXiv:1812.09018. N = Al, ΔE = 200 keV.
- Signal electrons: the height of the peak depends on new physics.
- DIO (Decay In Orbit, a decay within the SM) + signal.

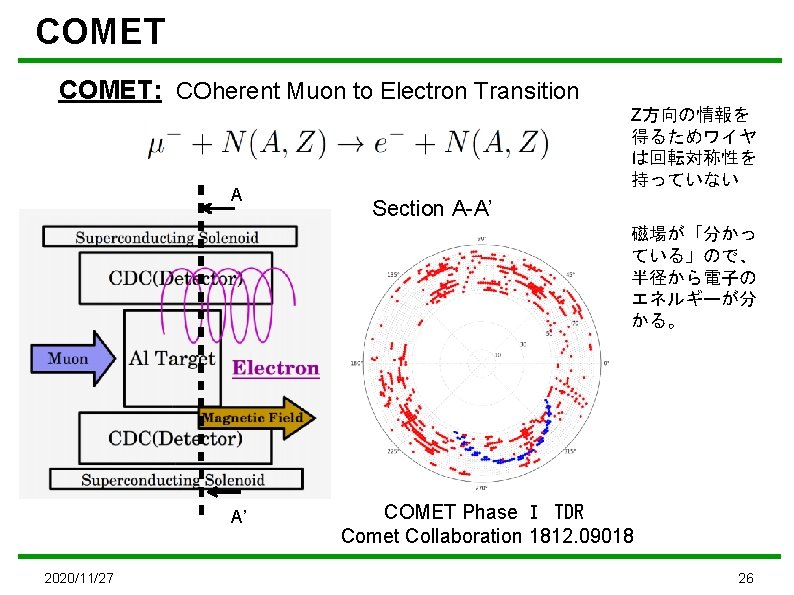

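COMET: COherent Muon to Electron Transition

- To obtain information along the Z direction, the wires are not rotationally symmetric.
- Because the magnetic field is "known", the electron energy can be determined from the radius.
- Detector figure (section A-A'): COMET Phase I TDR, COMET Collaboration, arXiv:1812.09018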


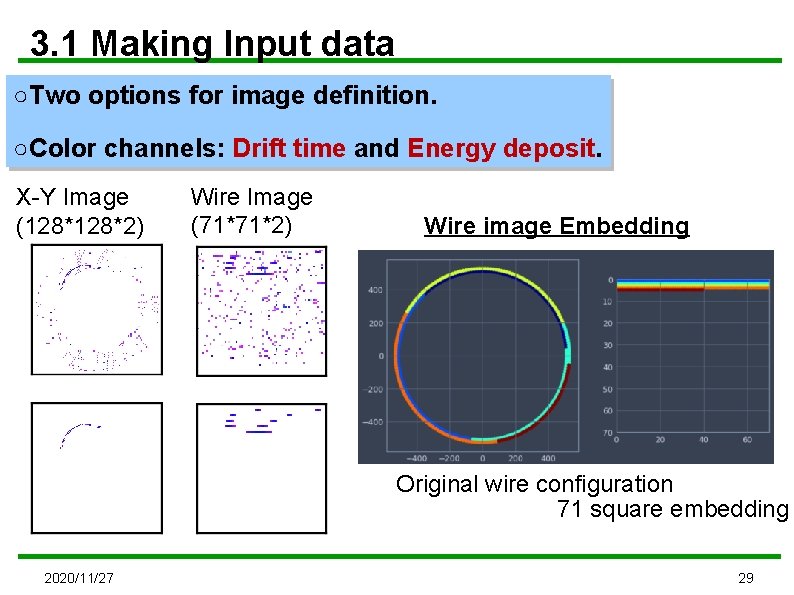

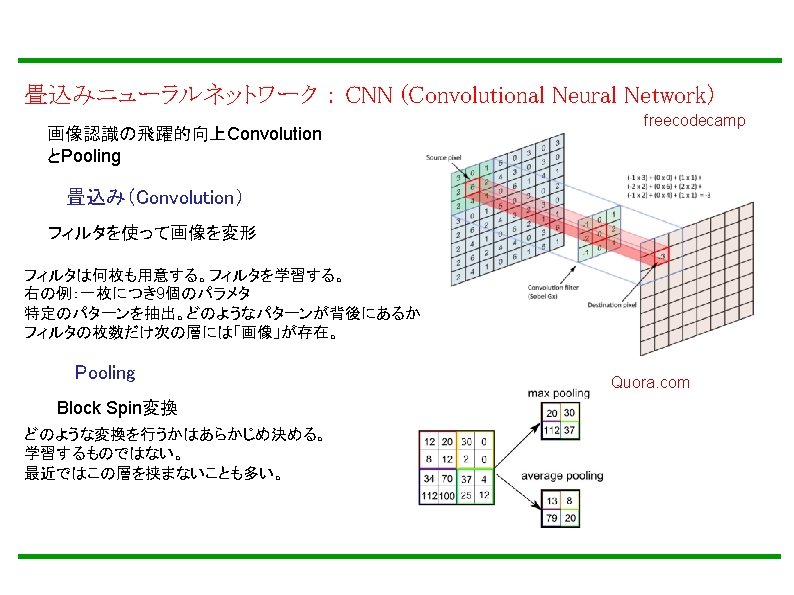

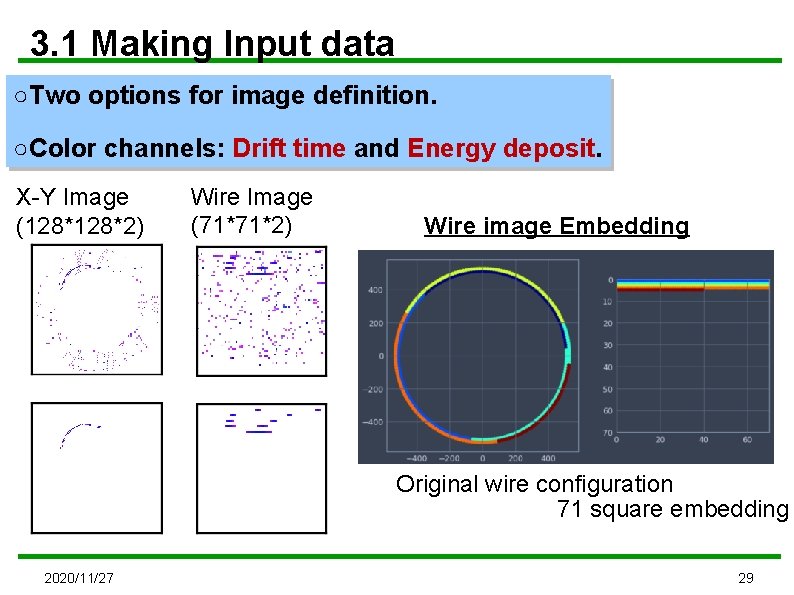

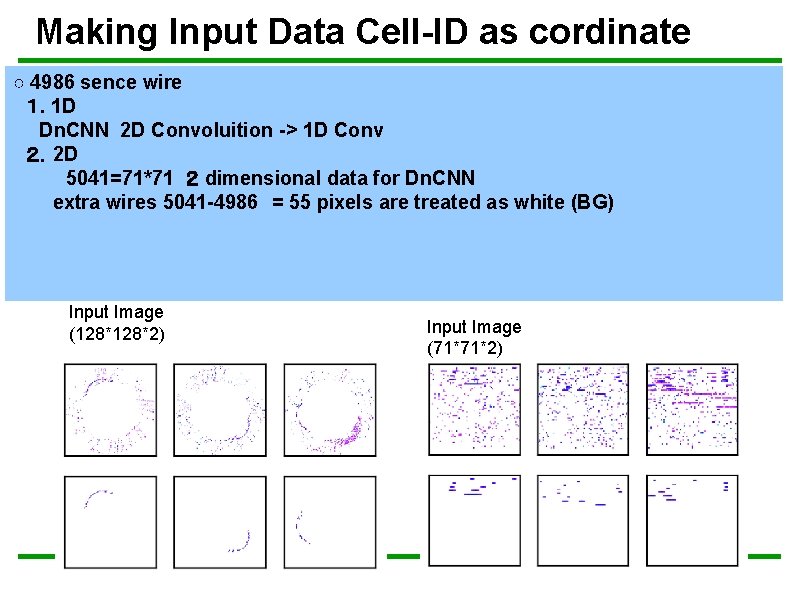

3.1 Making input data

- Two options for the image definition: X-Y image (128*2) or wire image (71*71*2).
- Color channels: drift time and energy deposit.
- Wire image embedding: the original wire configuration is embedded into a 71 × 71 square.

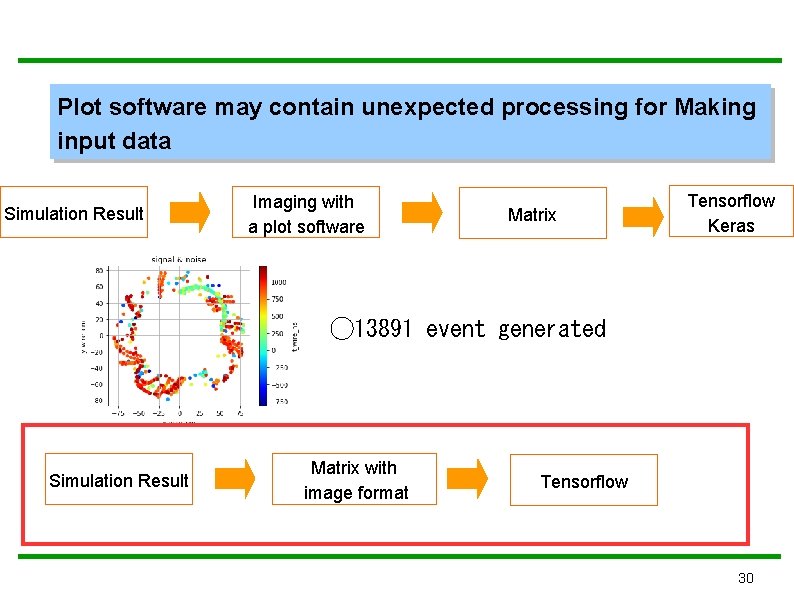

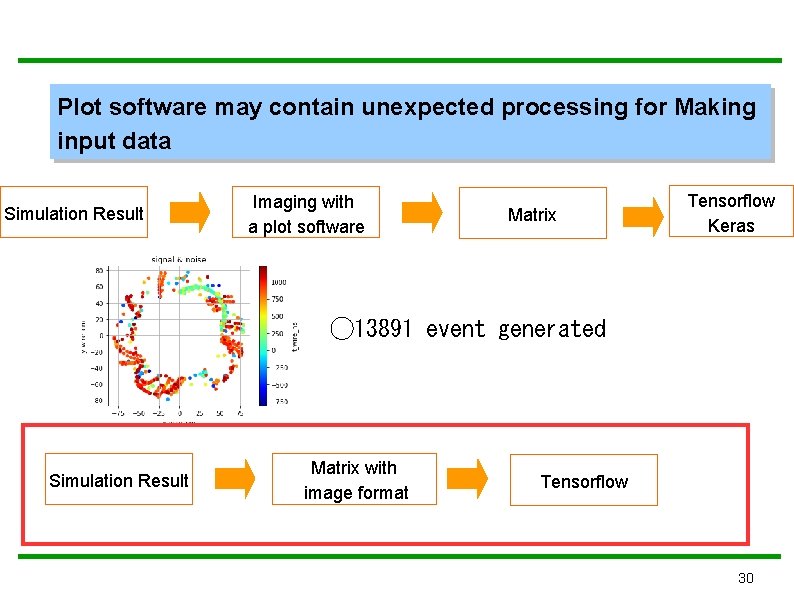

Making input data

- Via plot software: simulation result → imaging with a plot software → matrix → TensorFlow/Keras. Plot software may contain unexpected processing.
- Direct: simulation result → matrix in image format → TensorFlow.
- 13891 events were generated.

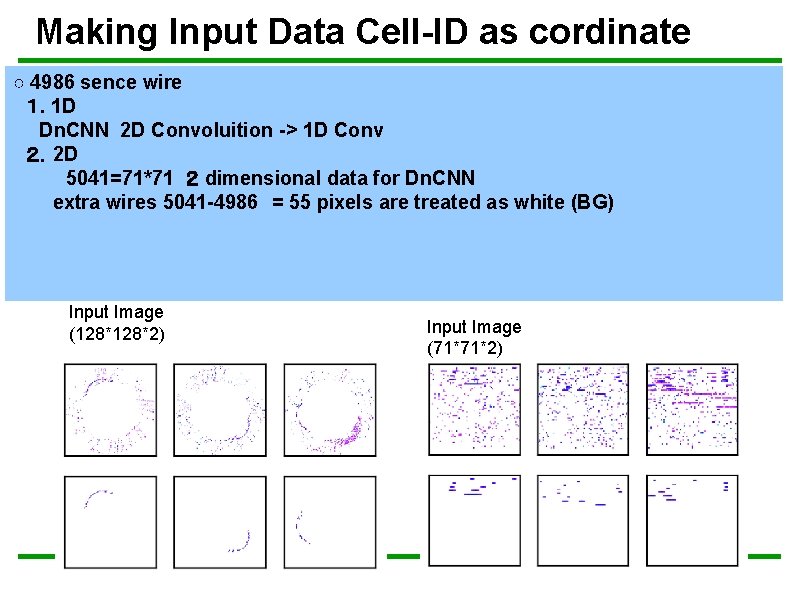

Making input data: cell ID as coordinate

- 4986 sense wires.

1. 1D: DnCNN with the 2D convolutions replaced by 1D convolutions.
2. 2D: 5041 = 71*71, two-dimensional data for DnCNN. The extra 5041 - 4986 = 55 pixels are treated as white (background).

[Figure: input image (128*2) and input image (71*71*2)]
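The cell-ID embedding can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes the 4986 sense wires are numbered 0 to 4985 and placed row by row into the 71 × 71 grid, and the names `embed_wires` and `fill` are hypothetical. The actual ordering of layers and cells is not given in the slides.

```python
N_WIRES = 4986          # number of sense wires quoted on the slide
SIDE = 71               # 71 * 71 = 5041 pixels


def embed_wires(values, fill=1.0):
    """Place one value per sense wire into a SIDE x SIDE grid.

    Assumption: wires are laid out row by row in index order; the
    leftover pixels are set to `fill` (the background value).
    """
    if len(values) != N_WIRES:
        raise ValueError(f"expected {N_WIRES} values, got {len(values)}")
    padded = list(values) + [fill] * (SIDE * SIDE - N_WIRES)
    return [padded[row * SIDE:(row + 1) * SIDE] for row in range(SIDE)]


# Toy input: every wire holds 0.0, so only padding pixels equal 1.0.
image = embed_wires([0.0] * N_WIRES)
n_padding = sum(row.count(1.0) for row in image)
print(len(image), len(image[0]), n_padding)
```

With this layout the padding pixels all sit at the end of the last row; a different layout would only change the index mapping, not the pixel count.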

![Grey Scale Image Only Energy Deposit is considered Energy Deposit Cut Ignore Energy Deposit [Grey Scale Image] Only Energy Deposit is considered. Energy Deposit Cut: Ignore Energy Deposit](https://slidetodoc.com/presentation_image_h/ad114186d4a4d453bad51e514c1fd3d8/image-32.jpg)

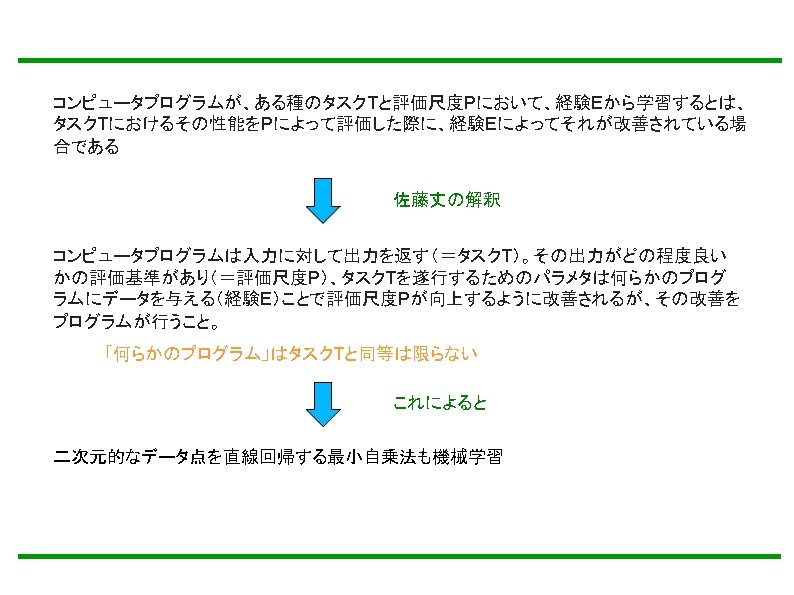

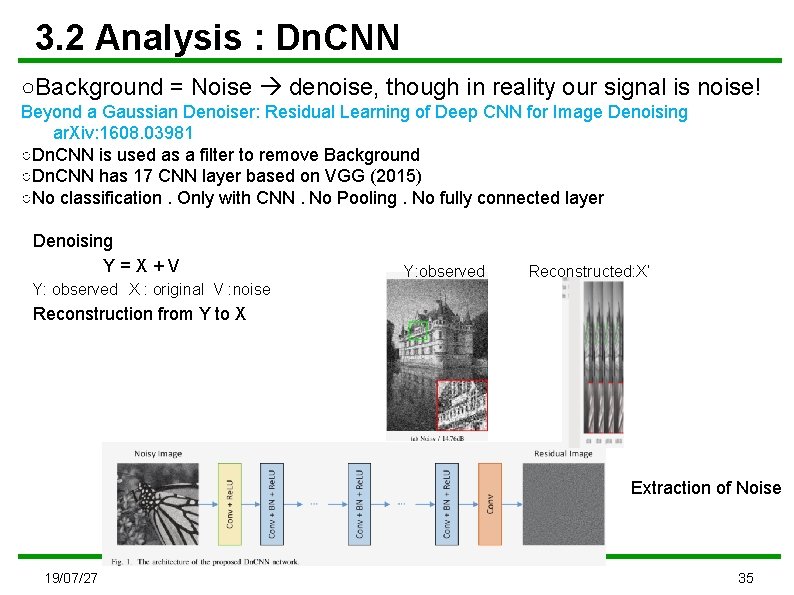

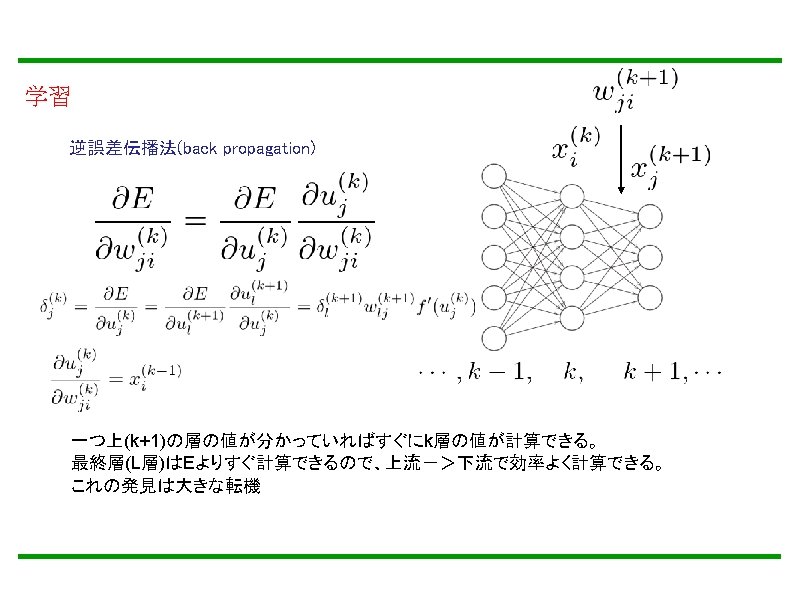

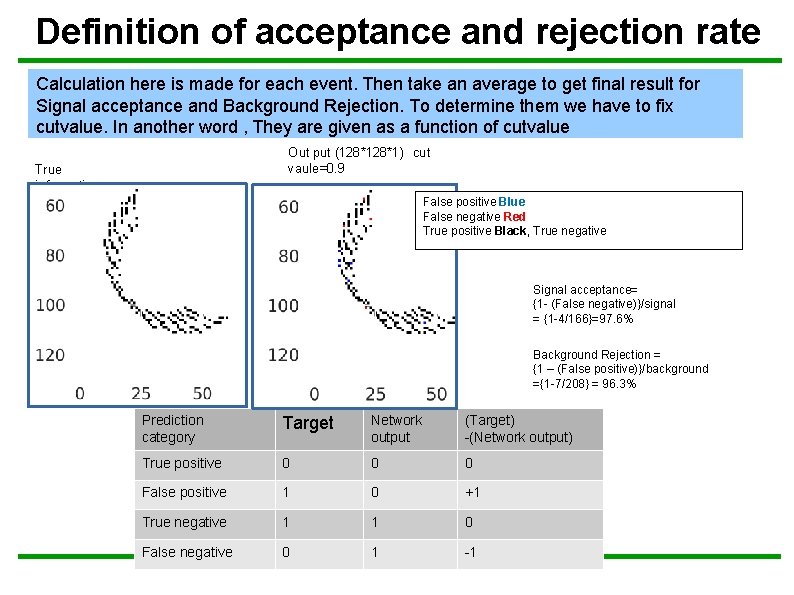

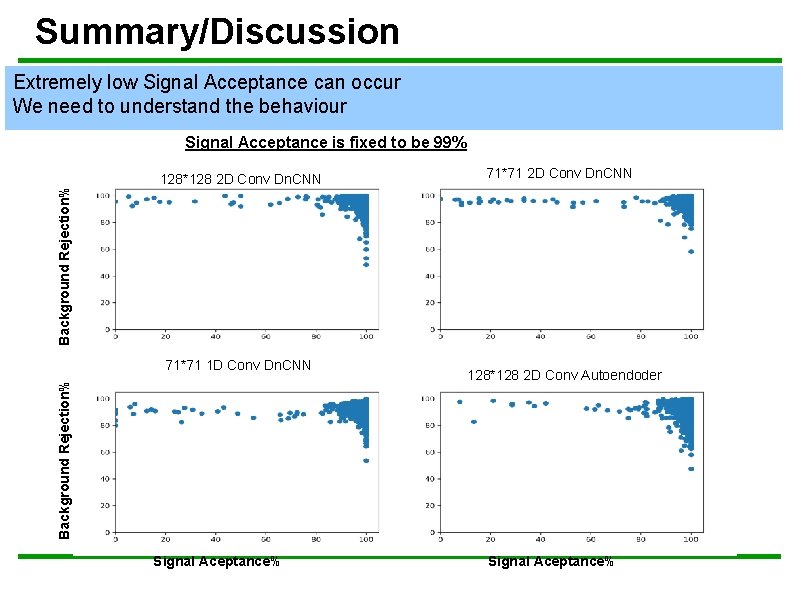

[Grey-scale image] Only the energy deposit is considered.

Energy deposit cut: ignore hits with energy deposit > 255.

[Table: pandas `hit_frame` with columns event_index, img_posi_y, energy_deposit, turn_index; rows 0 to 458, covering event_index 0 to 199.]

- Hits with energy deposit > 255 are ignored.
- To bring out the signal hits, the grey-scale definition for the energy deposit is tuned: 0 ⇔ black and 255 ⇔ white.
- The labels are generated for each event from the turn_index:
  - Only hits with turn index = 1 included → label 1
  - Hits with turn index > 1 included → label 2
  - Hits with turn index > 0 eliminated → label 0
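A minimal sketch of the grey-scale image construction with the energy deposit cut is shown here. It is only an illustration under stated assumptions: the function name `hits_to_grey_image`, the `img_posi_x` key, the value used for empty pixels, and the example hits are all hypothetical.

```python
def hits_to_grey_image(hits, size=128, max_deposit=255, empty=0):
    """Return (image, n_kept) for one event.

    `hits` is a list of dicts with keys img_posi_x, img_posi_y and
    energy_deposit. Hits above `max_deposit` are ignored (energy cut).
    Assumption: pixels without a hit take the value `empty`.
    """
    image = [[empty] * size for _ in range(size)]
    n_kept = 0
    for hit in hits:
        if hit["energy_deposit"] > max_deposit:
            continue  # energy deposit cut: ignore this hit
        image[hit["img_posi_y"]][hit["img_posi_x"]] = int(hit["energy_deposit"])
        n_kept += 1
    return image, n_kept


# Illustrative hits only; these are not taken from the simulation.
example_hits = [
    {"img_posi_x": 14, "img_posi_y": 86, "energy_deposit": 8.0},
    {"img_posi_x": 9, "img_posi_y": 64, "energy_deposit": 115.0},
    {"img_posi_x": 20, "img_posi_y": 30, "energy_deposit": 300.0},  # above the cut
]
image, n_kept = hits_to_grey_image(example_hits)
print(n_kept, image[86][14], image[64][9], image[30][20])
```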

![Making input data Grey Scale Image Only Energy Deposit is considered Energy Deposit Cut Making input data [Grey Scale Image] Only Energy Deposit is considered. Energy Deposit Cut:](https://slidetodoc.com/presentation_image_h/ad114186d4a4d453bad51e514c1fd3d8/image-33.jpg)

Making input data [grey-scale image]: only the energy deposit is considered.

Energy deposit cut: ignore hits with energy deposit > 255. Hit counts among 13891 events:

| | Background | Signal |
|---|---|---|
| Before the cut | 5583905 | 1062679 |
| After the cut | 4073941 (72.96%) | 1060872 (99.83%) |
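The surviving fractions follow directly from the hit counts quoted above. The short check below recomputes them; the helper name `surviving_fraction` is introduced only for illustration.

```python
def surviving_fraction(before, after):
    """Fraction of hits that remain after the energy deposit cut."""
    return after / before


background_kept = surviving_fraction(5583905, 4073941)
signal_kept = surviving_fraction(1062679, 1060872)
print(f"background kept: {background_kept:.2%}")
print(f"signal kept:     {signal_kept:.2%}")
```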

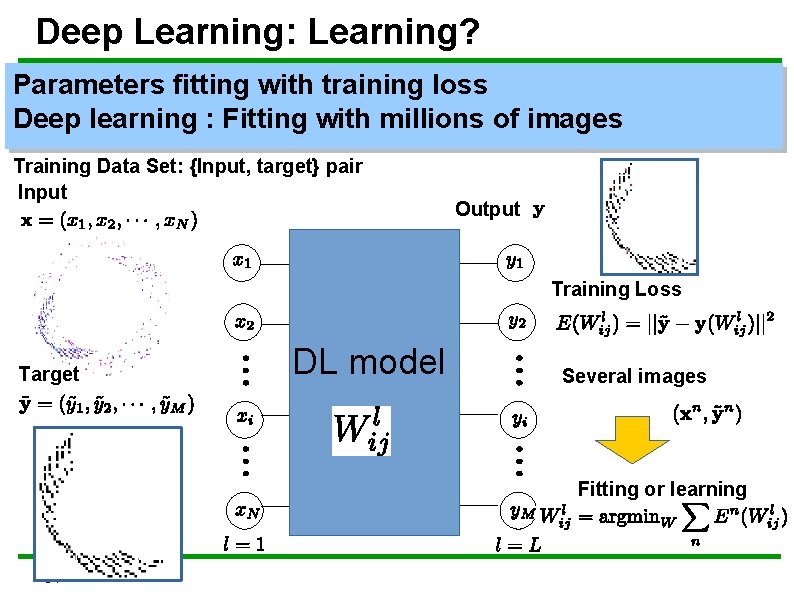

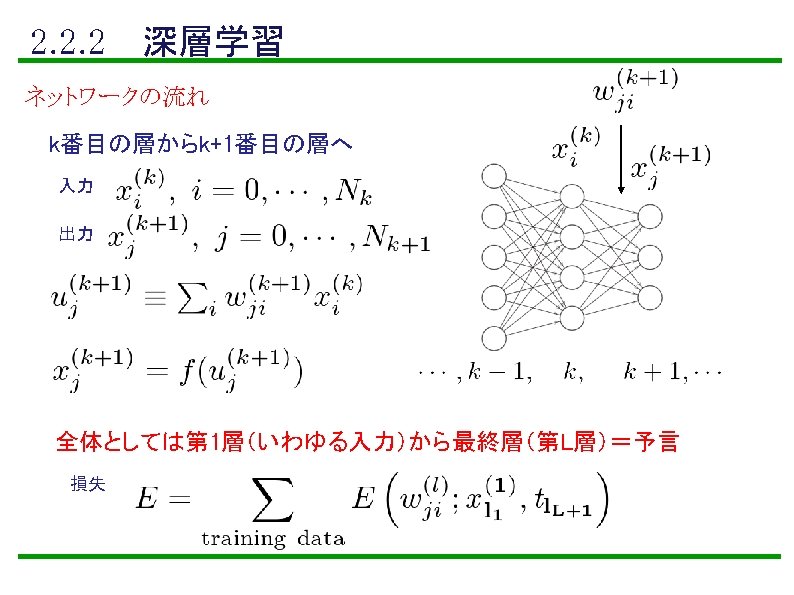

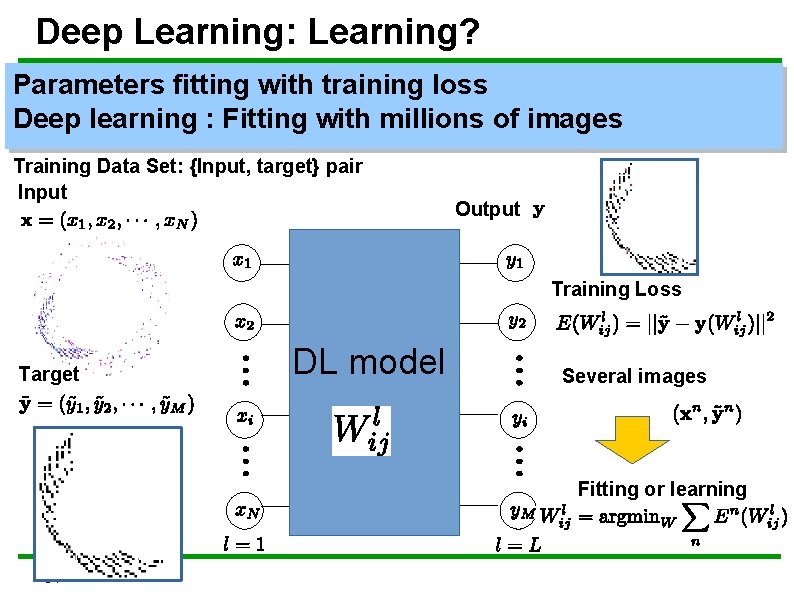

Deep Learning: what is "learning"?

- Learning = fitting parameters using a training loss.
- Deep learning: fitting with millions of images.
- Training data set: {input, target} pairs.

[Diagram: input → DL model → output; the training loss compares the output with the target. Several images are used for fitting, i.e. learning.]

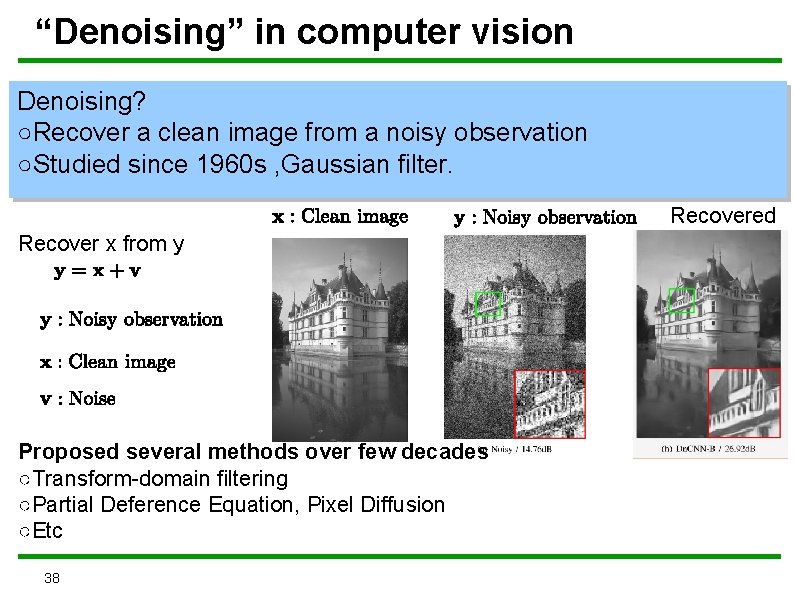

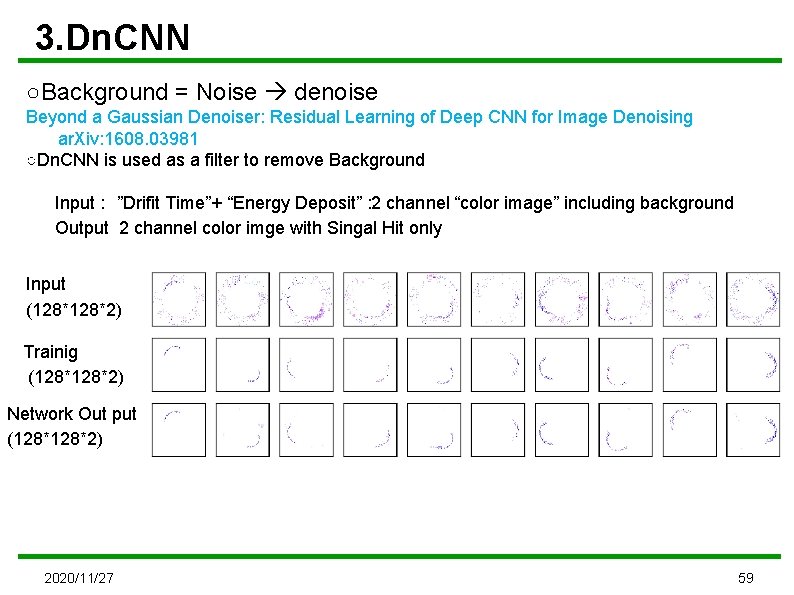

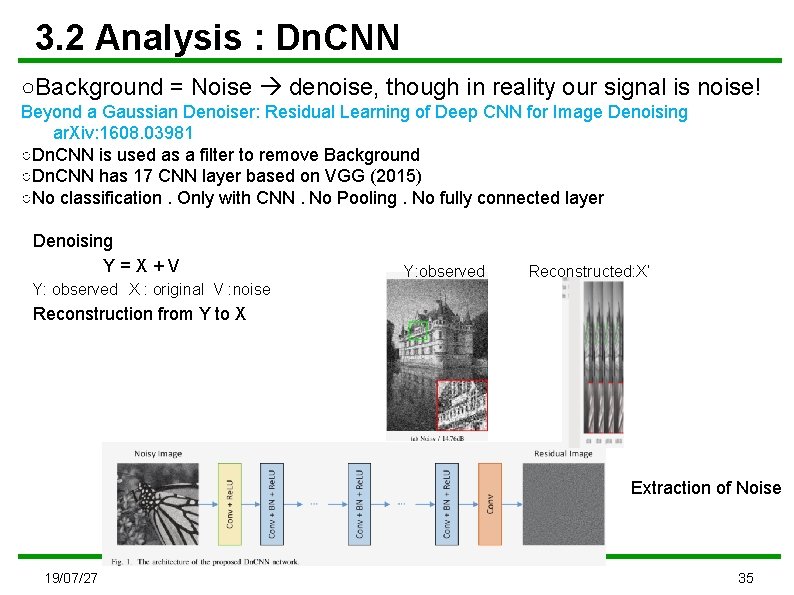

3.2 Analysis: DnCNN

- Background = noise, so background removal is treated as denoising, though in reality our signal is noise!
- Reference: "Beyond a Gaussian Denoiser: Residual Learning of Deep CNN for Image Denoising", arXiv:1608.03981.
- DnCNN is used as a filter to remove the background.
- DnCNN has 17 convolutional layers, based on VGG (2015).
- No classification: convolutional layers only, with no pooling and no fully connected layer.

Denoising: Y = X + V, where Y is the observed image, X the original image, and V the noise. The network extracts the noise from Y, and the reconstruction X' recovers X from Y.

19/07/27
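For reference, the residual-learning formulation of the cited DnCNN paper (arXiv:1608.03981) can be written in the slide's notation as follows. The symbols $R$, $\Theta$ and $N$ are introduced here for illustration, and whether this analysis uses exactly this loss is an assumption.

$$
R(Y;\Theta) \approx V, \qquad X' = Y - R(Y;\Theta), \qquad
\ell(\Theta) = \frac{1}{2N}\sum_{i=1}^{N} \left\lVert R(Y_i;\Theta) - (Y_i - X_i) \right\rVert_F^2
$$

The network predicts the noise $V$ rather than the clean image, and the reconstruction $X'$ is obtained by subtracting that prediction from the observation $Y$.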

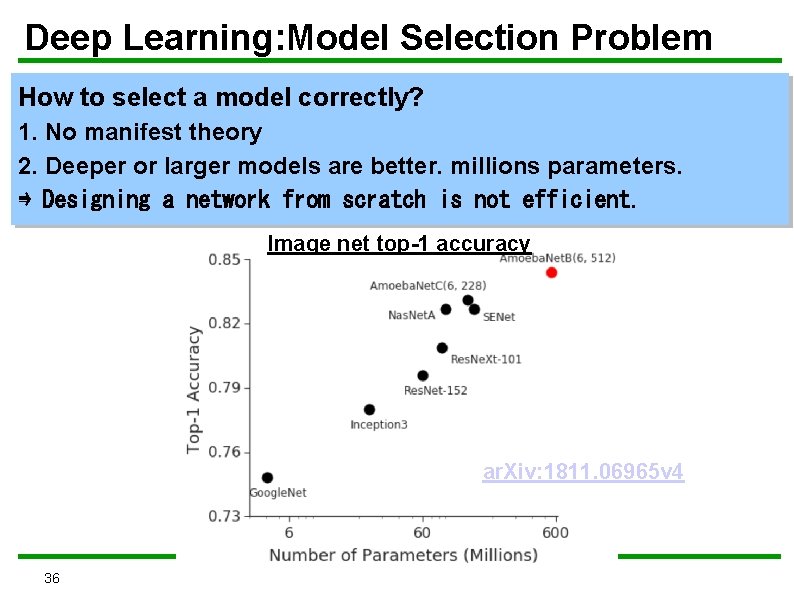

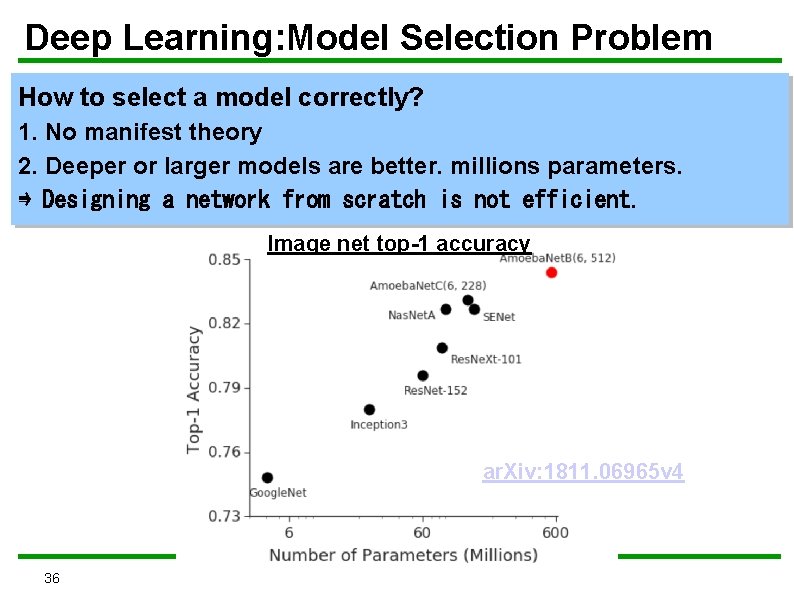

Deep Learning: model selection problem

How do we select a model correctly?

1. There is no manifest theory.
2. Deeper or larger models are better, with millions of parameters.

⇛ Designing a network from scratch is not efficient.

[Figure: ImageNet top-1 accuracy, from arXiv:1811.06965v4]

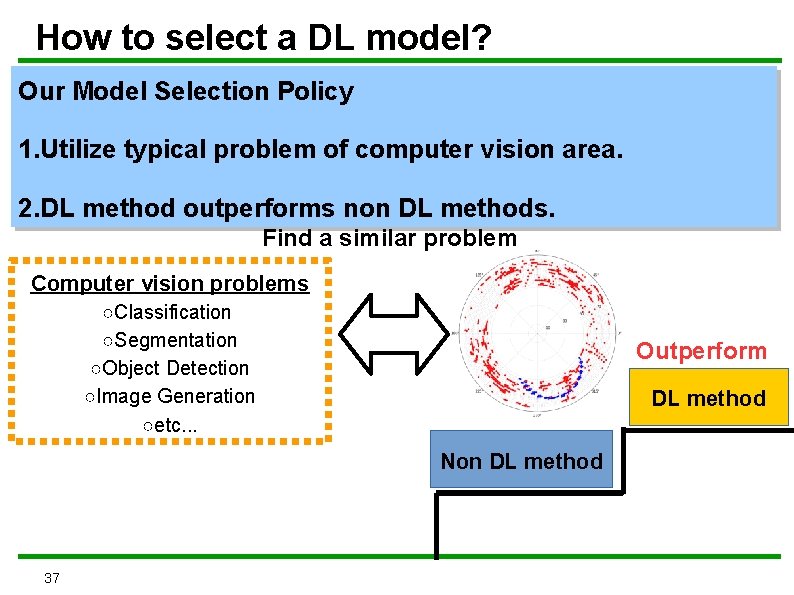

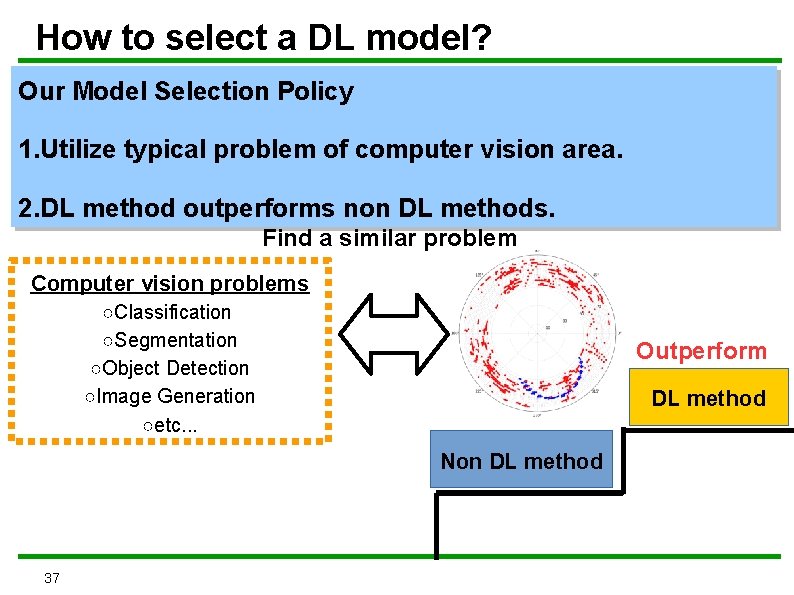

How to select a DL model? Our model selection policy

1. Use a typical problem from the computer vision area: find a similar problem.
2. The DL method outperforms non-DL methods.

Computer vision problems:

- Classification
- Segmentation
- Object detection
- Image generation
- etc.

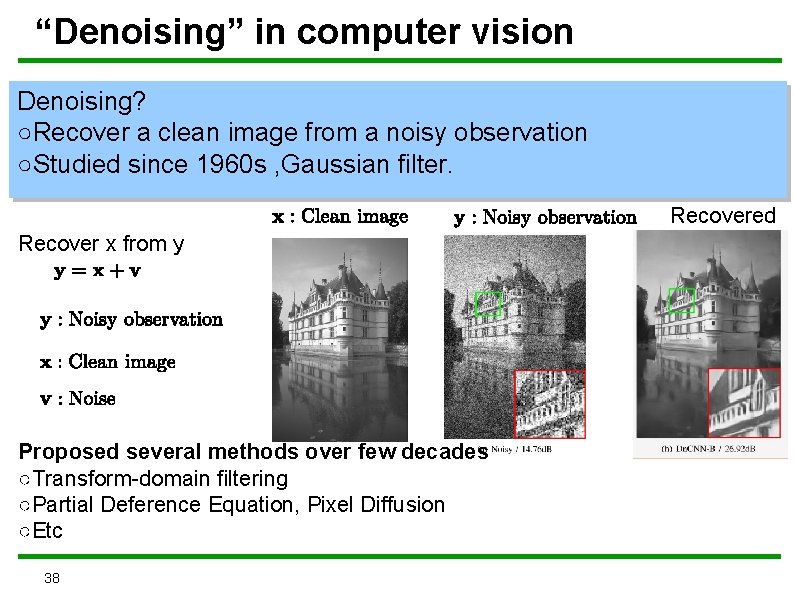

"Denoising" in computer vision

What is denoising?

- Recover a clean image from a noisy observation (recover x from y).
- Studied since the 1960s, e.g. the Gaussian filter.

Several methods have been proposed over the past few decades:

- Transform-domain filtering
- Partial differential equations, pixel diffusion
- etc.

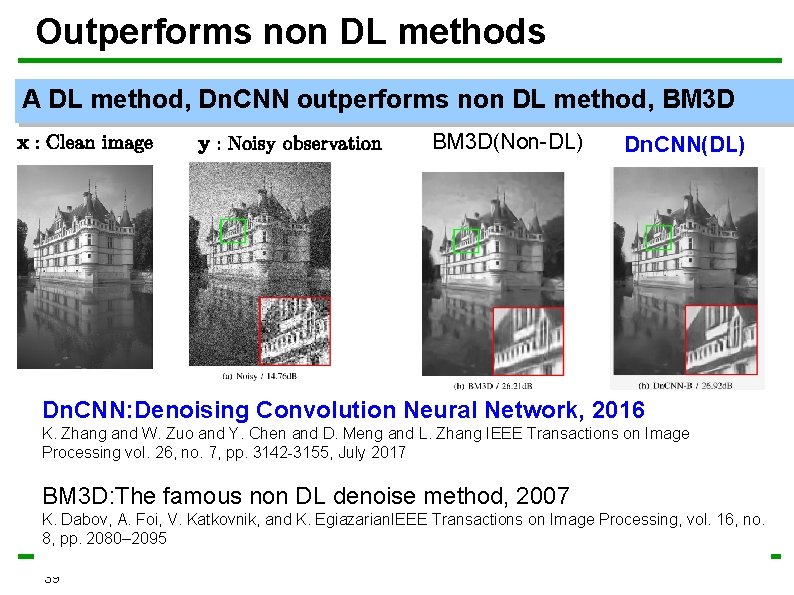

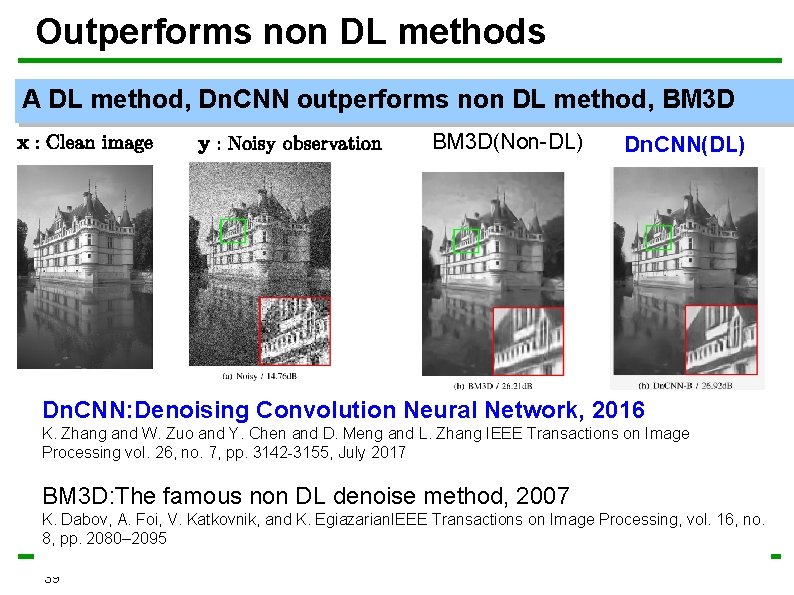

Outperforms non-DL methods

A DL method, DnCNN, outperforms a non-DL method, BM3D.

[Figure: denoising results, BM3D (non-DL) vs. DnCNN (DL)]

- DnCNN: Denoising Convolutional Neural Network, 2016. K. Zhang, W. Zuo, Y. Chen, D. Meng, and L. Zhang, IEEE Transactions on Image Processing, vol. 26, no. 7, pp. 3142-3155, July 2017.
- BM3D: the famous non-DL denoising method, 2007. K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian, IEEE Transactions on Image Processing, vol. 16, no. 8, pp. 2080-2095.

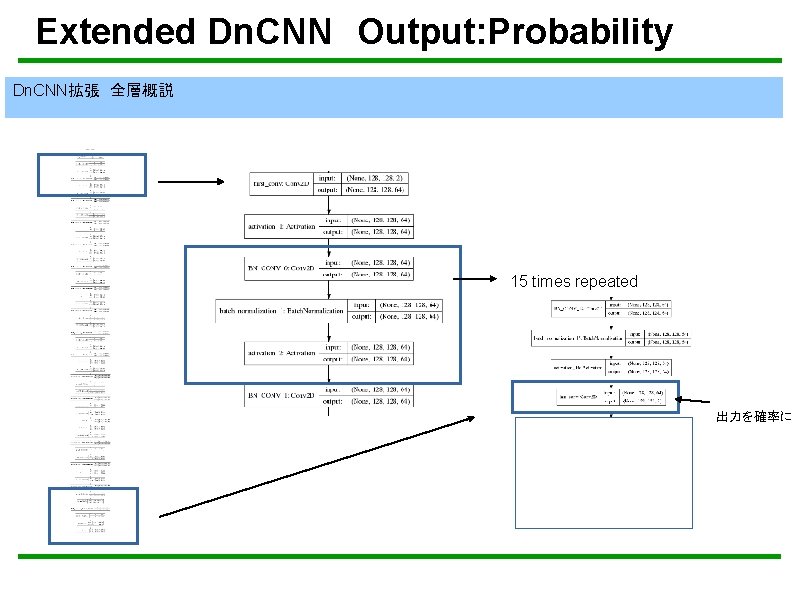

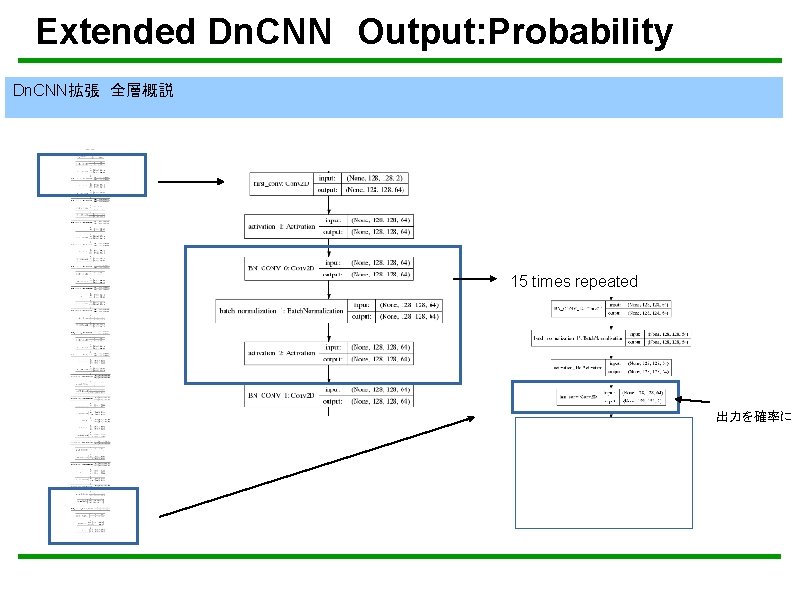

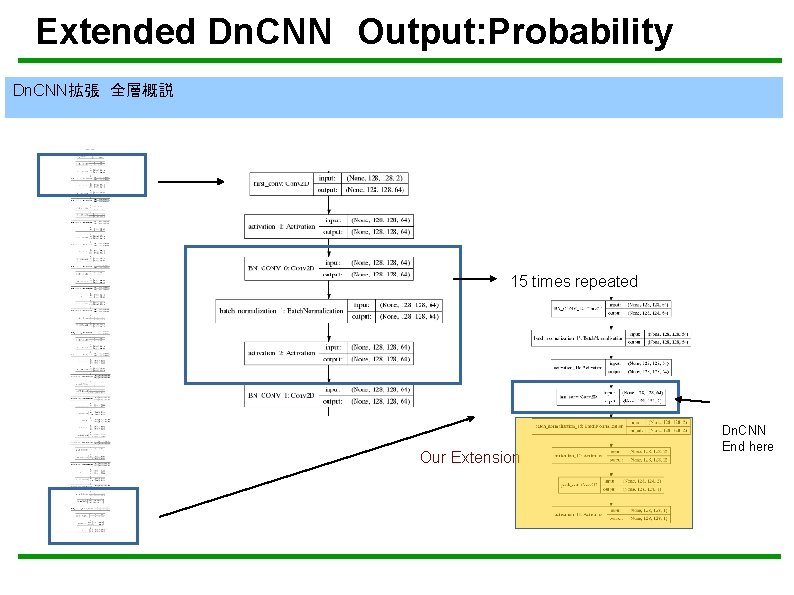

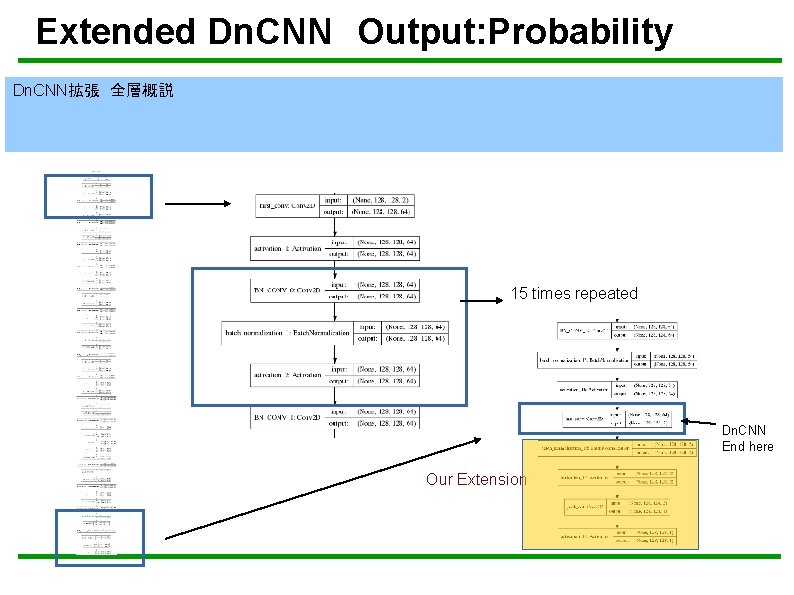

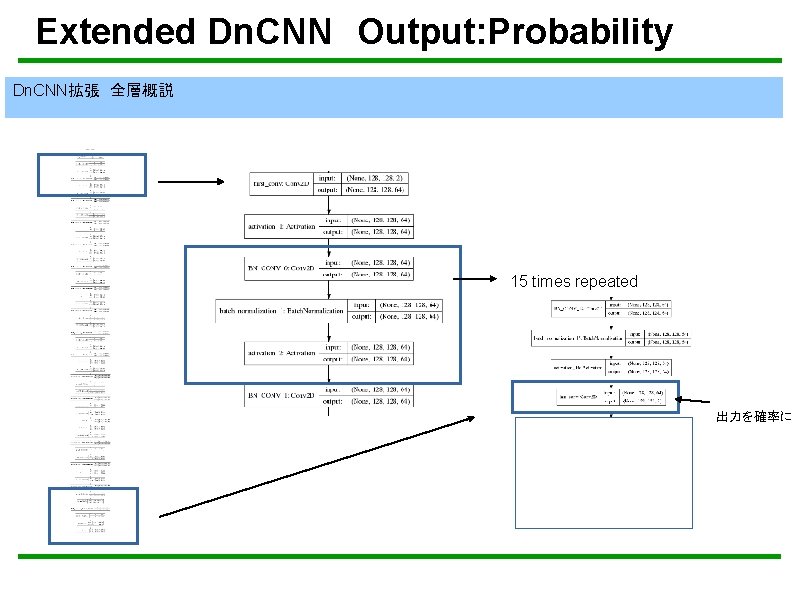

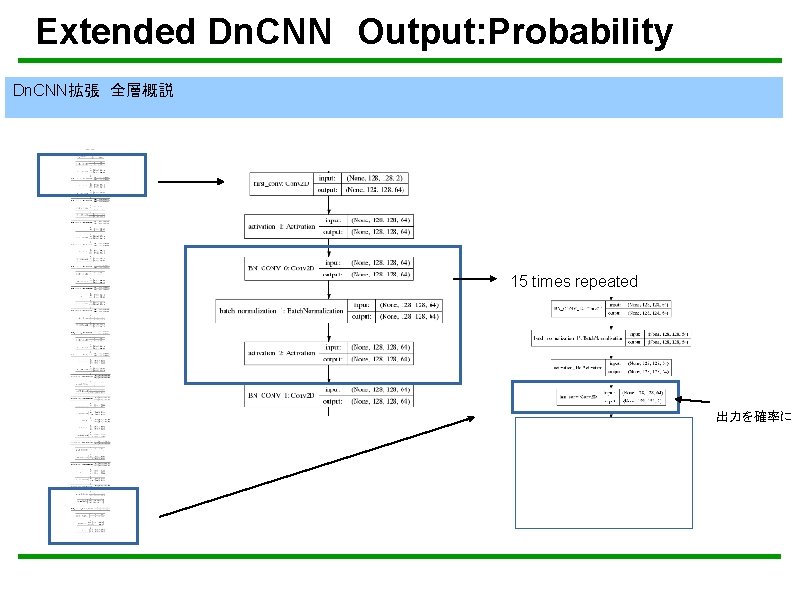

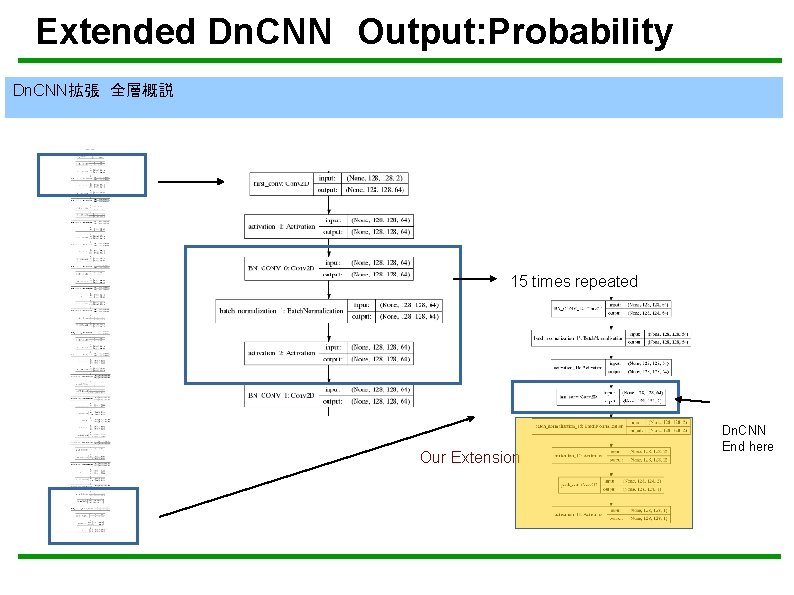

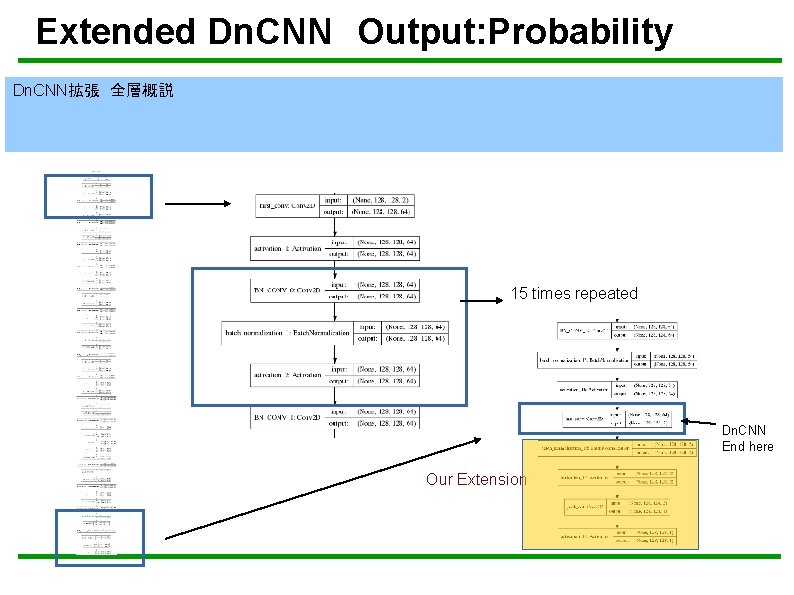

Extended DnCNN: the output is a probability

DnCNN extension, overview of all layers. [Diagram labels: "15 times repeated"; "convert the output to a probability"]

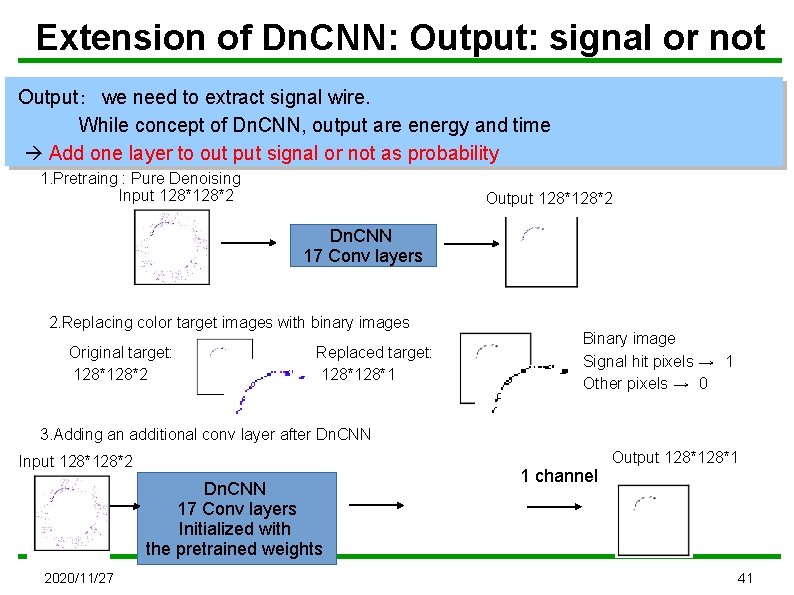

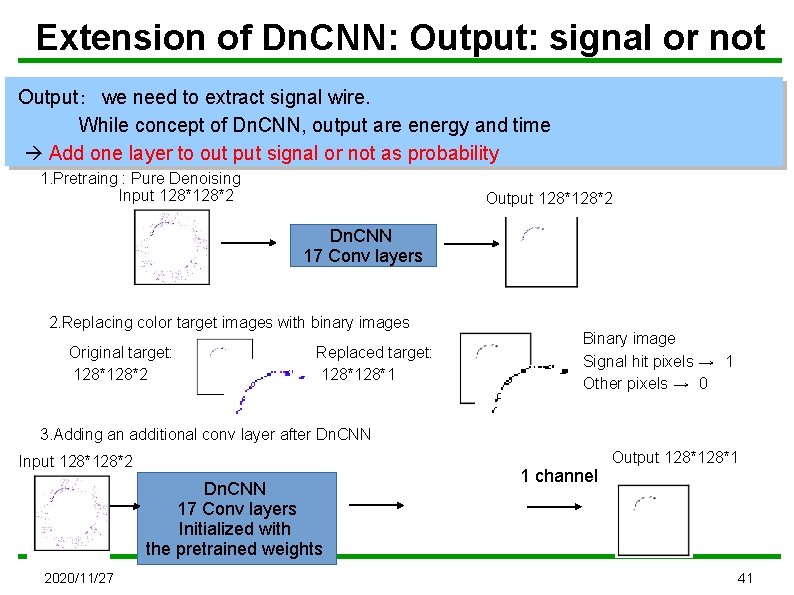

Extension of DnCNN: output is signal or not

We need to extract the signal wires, whereas in the DnCNN concept the outputs are energy and time. We add one layer to output "signal or not" as a probability.

1. Pretraining: pure denoising. Input 128*2 → DnCNN (17 conv layers) → output 128*2.
2. Replace the color target images with binary images. Original target: 128*2. Replaced target: 128*1 binary image, with signal-hit pixels → 1 and other pixels → 0.
3. Add an additional conv layer after DnCNN. Input 128*2 → DnCNN (17 conv layers, initialized with the pretrained weights) → additional conv layer with 1 channel → output 128*1.

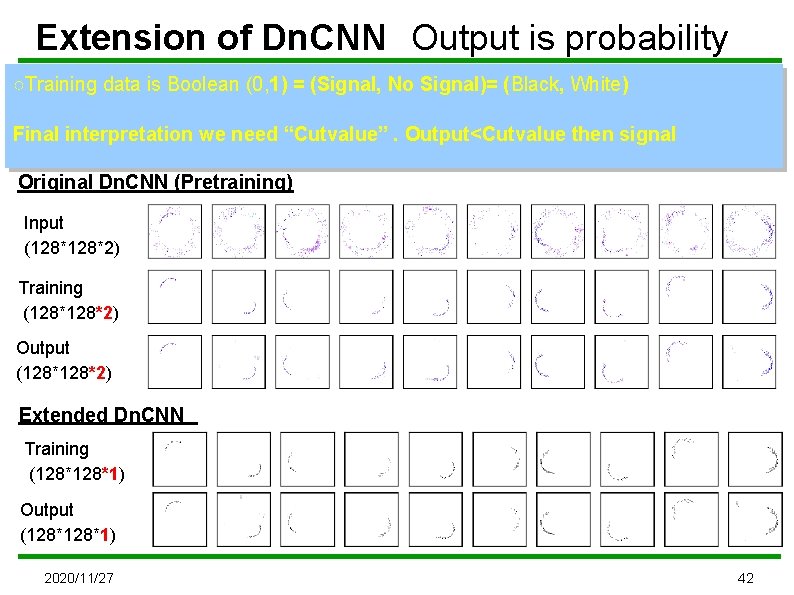

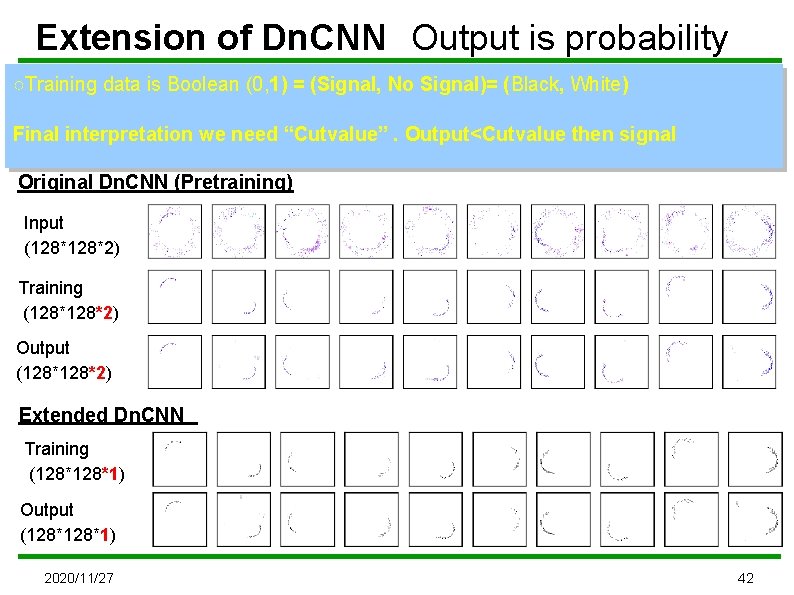

Extension of DnCNN: the output is a probability

- The training data are Boolean: (0, 1) = (signal, no signal) = (black, white).
- For the final interpretation we need a "cutvalue": if output < cutvalue, the pixel is signal.
- Original DnCNN (pretraining): input (128*2), training target (128*2), output (128*2).
- Extended DnCNN: training target (128*1), output (128*1).


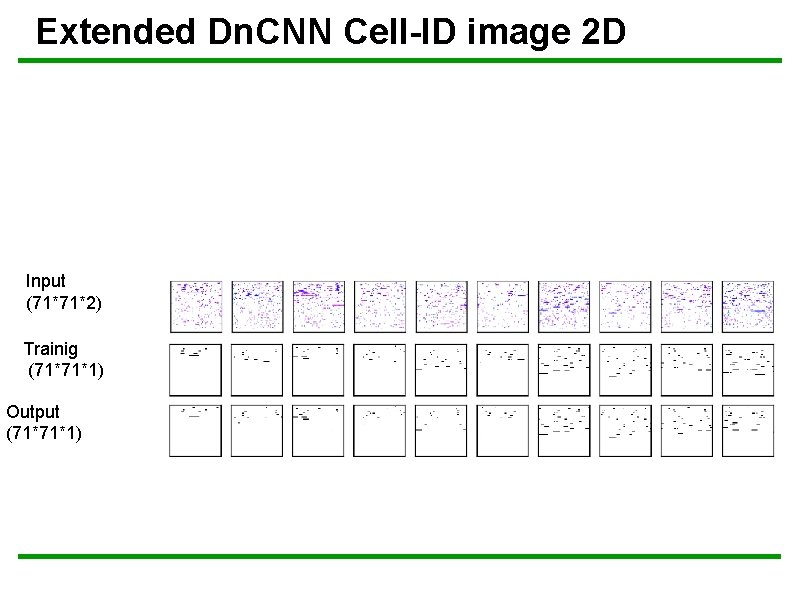

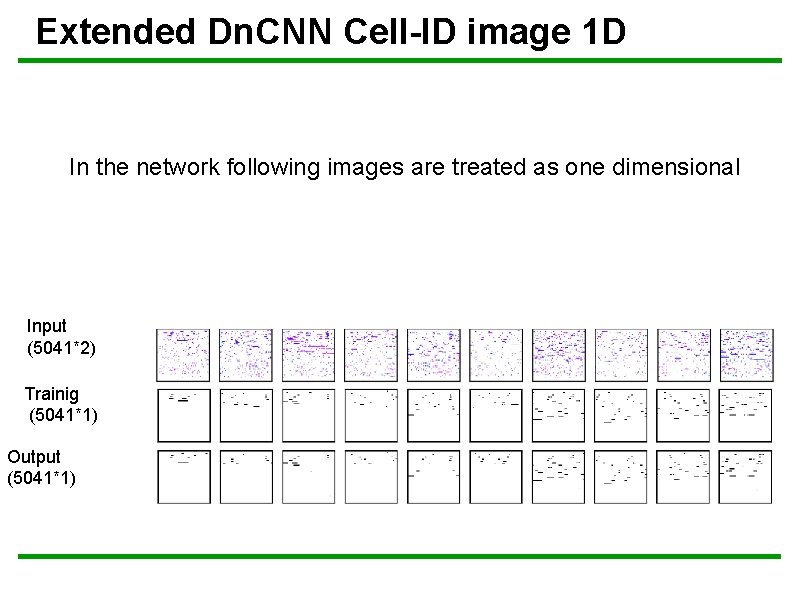

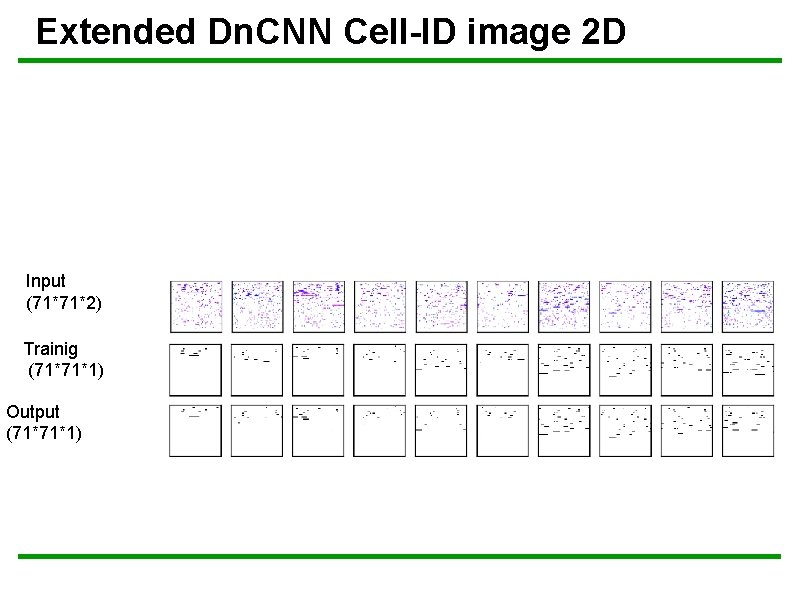

Extended DnCNN, cell-ID image (2D): input (71*71*2), training target (71*71*1), output (71*71*1).

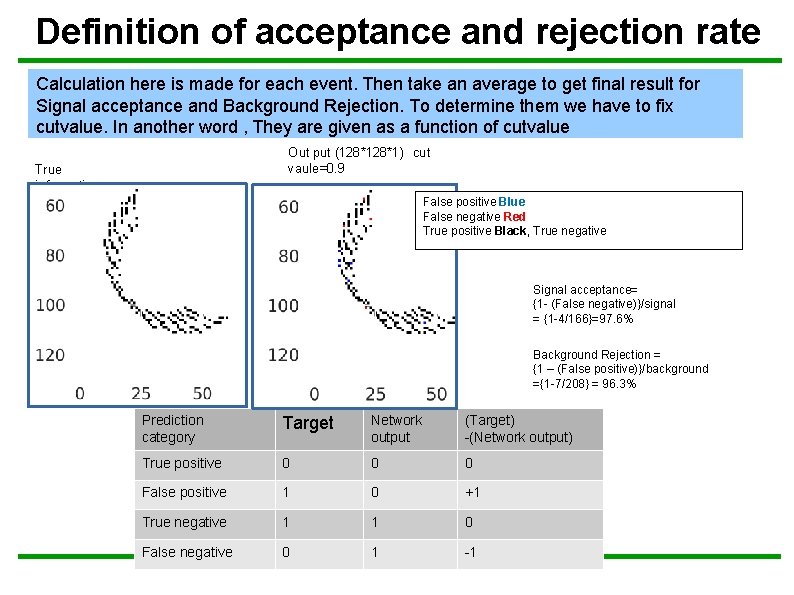

Definition of acceptance and rejection rate

The calculation is made for each event, and the per-event values are then averaged to get the final signal acceptance and background rejection. To determine them we have to fix the cutvalue; in other words, they are given as a function of the cutvalue.

[Figure: output (128*1) at cutvalue = 0.9 compared with the true information. Blue: false positive. Red: false negative. Black: true positive.]

- Signal acceptance = 1 - (false negatives)/(signal) = 1 - 4/166 = 97.6%
- Background rejection = 1 - (false positives)/(background) = 1 - 7/208 = 96.6%

| Prediction category | Target | Network output | (Target) - (Network output) |
|---|---|---|---|
| True positive | 0 | 0 | 0 |
| False positive | 1 | 0 | +1 |
| True negative | 1 | 1 | 0 |
| False negative | 0 | 1 | -1 |
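The per-event rates and their average can be sketched as below, using the convention of the table (0 = signal, 1 = non-signal). The function names are hypothetical, the toy event only reproduces the counts of the example (4 false negatives among 166 signal pixels, 7 false positives among 208 background pixels), and the sketch assumes every event contains at least one signal and one background pixel.

```python
def event_rates(target, output):
    """Return (signal acceptance, background rejection) for one event.

    Both arguments are flat sequences of 0 (signal) and 1 (non-signal);
    `output` is the network output after binarization.
    """
    n_signal = sum(1 for t in target if t == 0)
    n_background = len(target) - n_signal
    false_neg = sum(1 for t, o in zip(target, output) if t == 0 and o == 1)
    false_pos = sum(1 for t, o in zip(target, output) if t == 1 and o == 0)
    return 1 - false_neg / n_signal, 1 - false_pos / n_background


def mean_rates(events):
    """Average the per-event rates over a list of (target, output) pairs."""
    rates = [event_rates(t, o) for t, o in events]
    n = len(rates)
    return sum(a for a, _ in rates) / n, sum(r for _, r in rates) / n


# Toy event built to match the counts of the example.
target = [0] * 166 + [1] * 208
output = [1] * 4 + [0] * 162 + [0] * 7 + [1] * 201
acceptance, rejection = mean_rates([(target, output)])
print(f"{acceptance:.1%} {rejection:.1%}")
```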

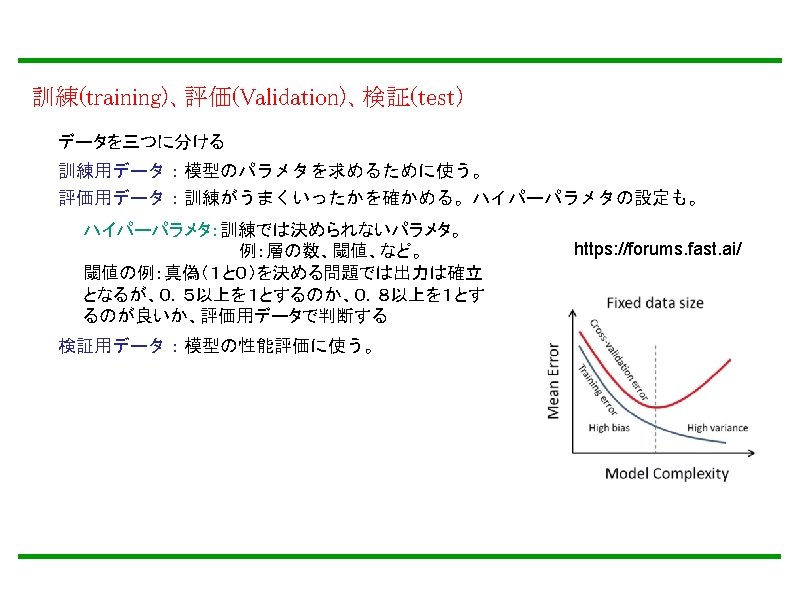

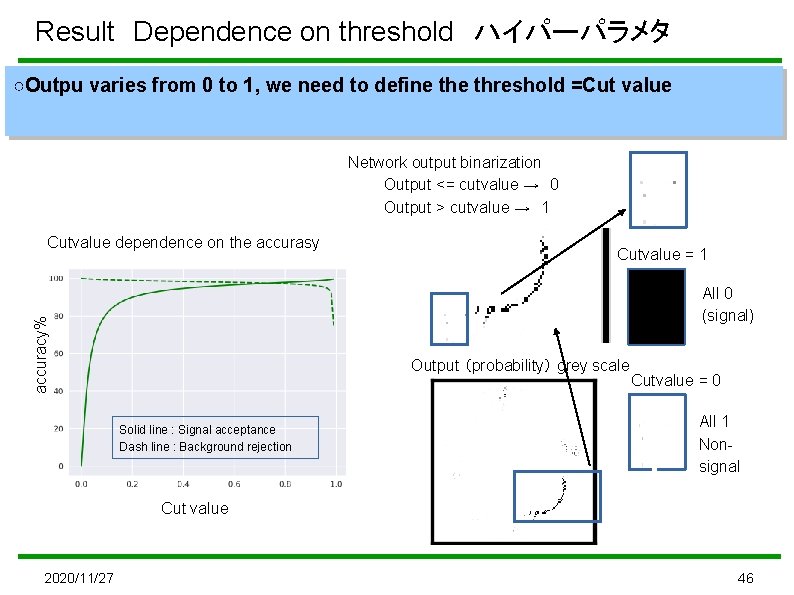

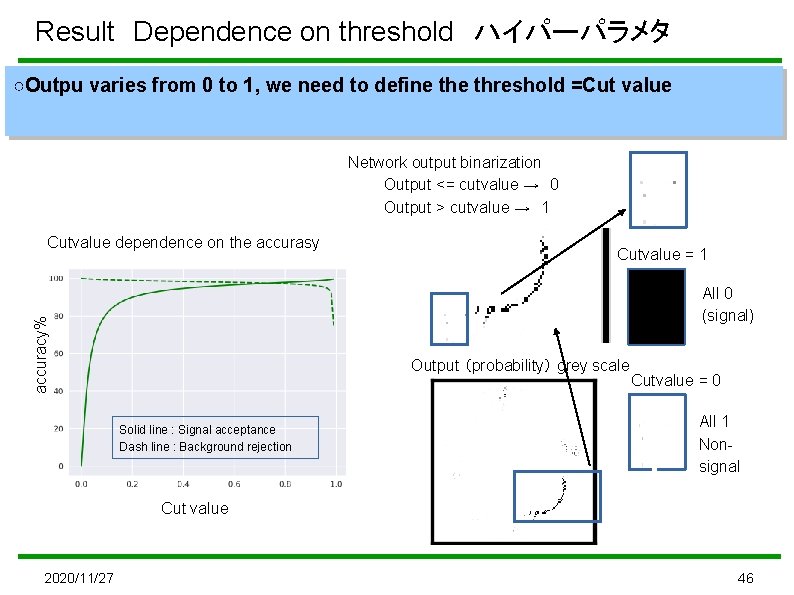

Result: dependence on the threshold (a hyperparameter)

- The output varies from 0 to 1, so we need to define a threshold, the cut value.
- Network output binarization: output <= cutvalue → 0; output > cutvalue → 1.
- Cutvalue = 1: all pixels become 0 (signal). Cutvalue = 0: all pixels become 1 (non-signal).

[Figure: dependence of the accuracy (%) on the cut value. Solid line: signal acceptance. Dashed line: background rejection. The output (probability) is shown in grey scale.]
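The binarization rule and the scan over cut values can be sketched as follows. The names `binarize` and `scan_cutvalues` and the four-pixel toy event are hypothetical and only illustrate the rule stated above.

```python
def binarize(output, cutvalue):
    """Map probabilities to 0 (signal) or 1 (non-signal)."""
    return [0 if p <= cutvalue else 1 for p in output]


def scan_cutvalues(target, output, cutvalues):
    """Return (cutvalue, signal acceptance, background rejection) rows."""
    n_signal = target.count(0)
    n_background = target.count(1)
    rows = []
    for cut in cutvalues:
        pred = binarize(output, cut)
        false_neg = sum(1 for t, p in zip(target, pred) if t == 0 and p == 1)
        false_pos = sum(1 for t, p in zip(target, pred) if t == 1 and p == 0)
        rows.append((cut, 1 - false_neg / n_signal, 1 - false_pos / n_background))
    return rows


target = [0, 0, 1, 1]              # toy event: two signal, two non-signal pixels
output = [0.2, 0.95, 0.8, 0.99]    # toy network probabilities
rows = scan_cutvalues(target, output, [0.5, 0.9, 1.0])
for cut, acceptance, rejection in rows:
    print(cut, acceptance, rejection)
```

Raising the cut value moves more pixels to 0 (signal), so the signal acceptance rises while the background rejection falls.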

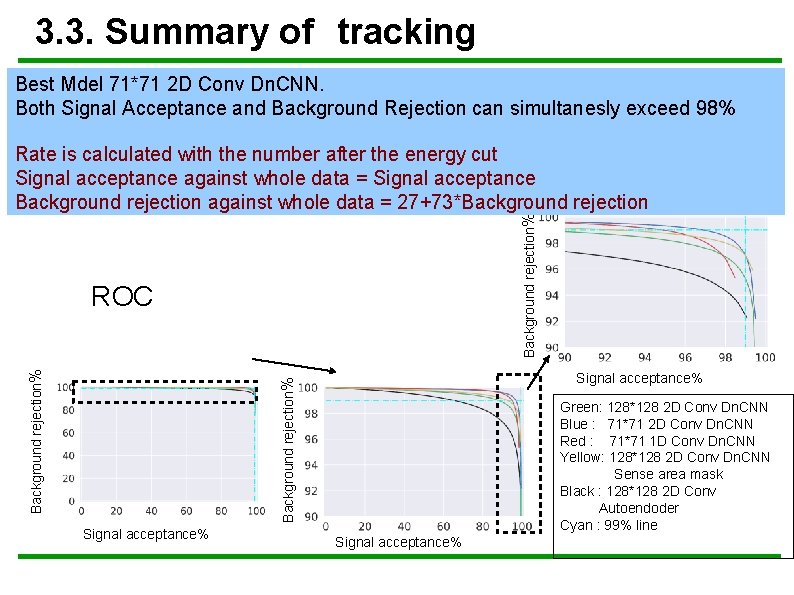

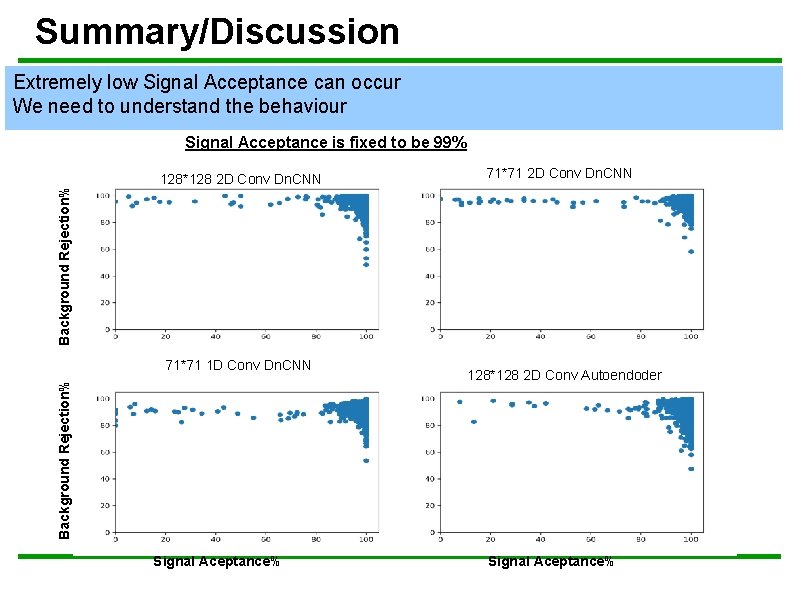

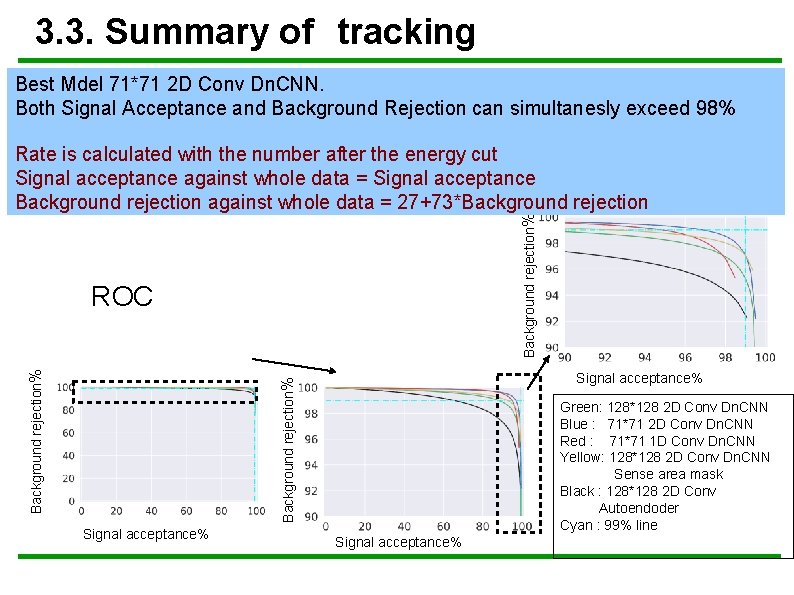

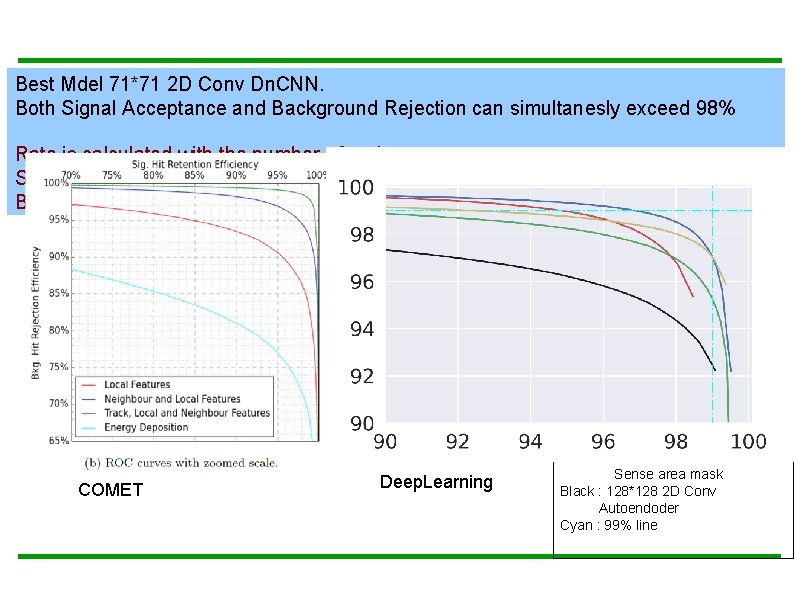

3.3 Summary of tracking

- Best model: 71*71 2D conv DnCNN. Both signal acceptance and background rejection can simultaneously exceed 98%.
- The rates are calculated with the hit counts after the energy cut.
- Signal acceptance against the whole data = signal acceptance.
- Background rejection against the whole data (%) = 27 + 73 × (background rejection), with the background rejection expressed as a fraction.

[Figure: ROC curves, background rejection (%) vs. signal acceptance (%). Green: 128*128 2D conv DnCNN. Blue: 71*71 2D conv DnCNN. Red: 71*71 1D conv DnCNN. Yellow: 128*128 2D conv DnCNN with sense-area mask. Black: 128*128 2D conv autoencoder. Cyan: 99% line.]
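The conversion to a rate against the whole data can be checked with a few lines. This sketch assumes that about 73% of the background survives the energy cut and that the network rejection is given as a fraction; the function and parameter names are hypothetical.

```python
def whole_data_rejection(nn_rejection, background_kept=0.73):
    """Background rejection relative to all hits, as a fraction.

    `nn_rejection` is the rejection measured after the energy cut;
    `background_kept` is the fraction of background surviving the cut.
    """
    rejected_by_cut = 1.0 - background_kept
    return rejected_by_cut + background_kept * nn_rejection


overall = whole_data_rejection(0.97)
print(f"{overall:.2%}")
```

For a network rejection of 97% this gives a value a little under one percentage point higher.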

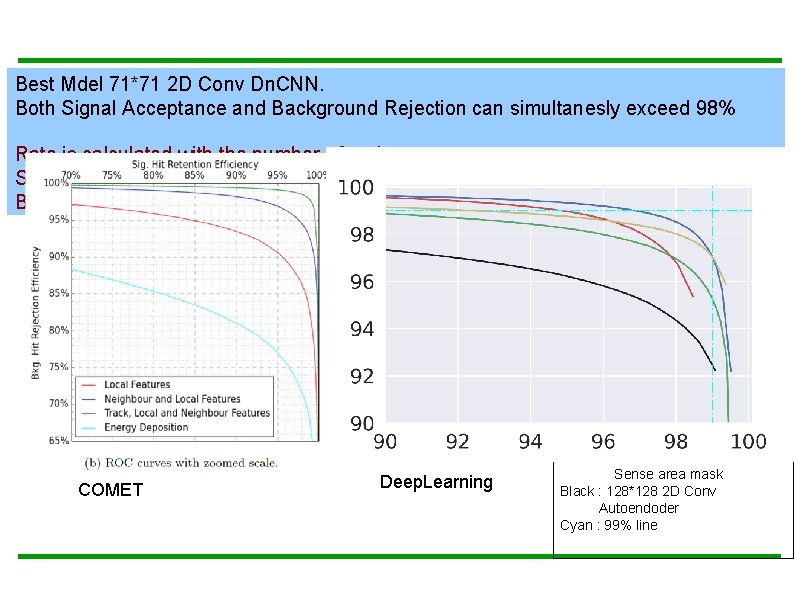


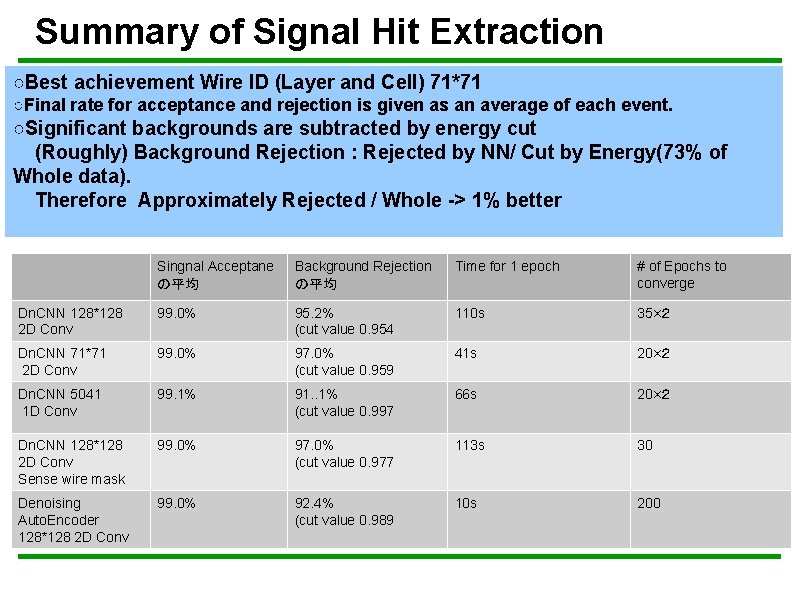

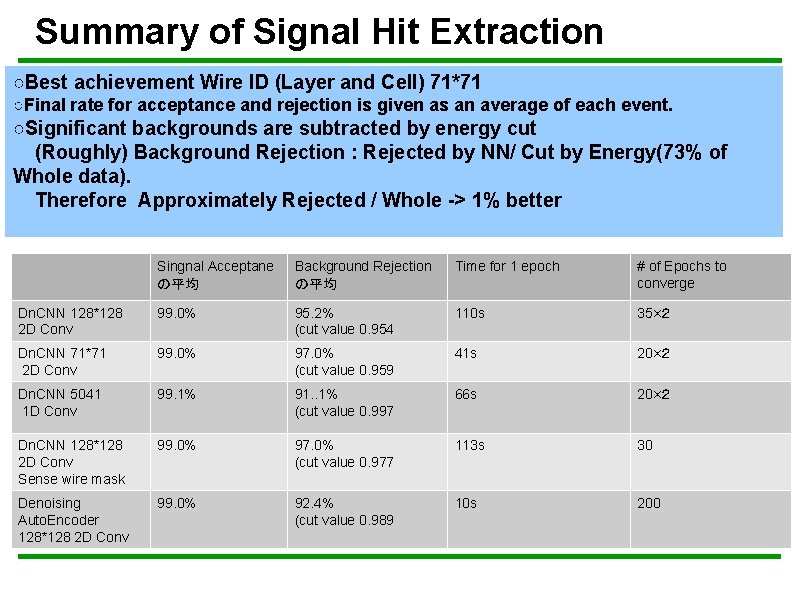

Summary of signal hit extraction

- Best result: wire ID (layer and cell) image, 71*71.
- The final rates for acceptance and rejection are given as an average over events.
- Significant backgrounds are removed by the energy cut. Roughly, background rejection = (rejected by the NN) / (background after the energy cut, 73% of the whole data). Therefore the rejection relative to the whole data is approximately 1% better.
- Batch Normalization layers added.
- Dropout layers added after each pooling layer and after the FC layer.
- Optimizer: Adam.
- Batch size: 45.

| Model | Signal acceptance | Background rejection | Time for 1 epoch | # of epochs to converge |
|---|---|---|---|---|
| DnCNN 128*128 2D conv | 99.0% | 95.2% (cut value 0.954) | 110 s | 35×2 |
| DnCNN 71*71 2D conv | 99.0% | 97.0% (cut value 0.959) | 41 s | 20×2 |
| DnCNN 5041 1D conv | 99.1% | 91.1% (cut value 0.997) | 66 s | 20×2 |
| DnCNN 128*128 2D conv, sense wire mask | 99.0% | 97.0% (cut value 0.977) | 113 s | 30 |
| Denoising AutoEncoder 128*128 2D conv | 99.0% | 92.4% (cut value 0.989) | 10 s | 200 |

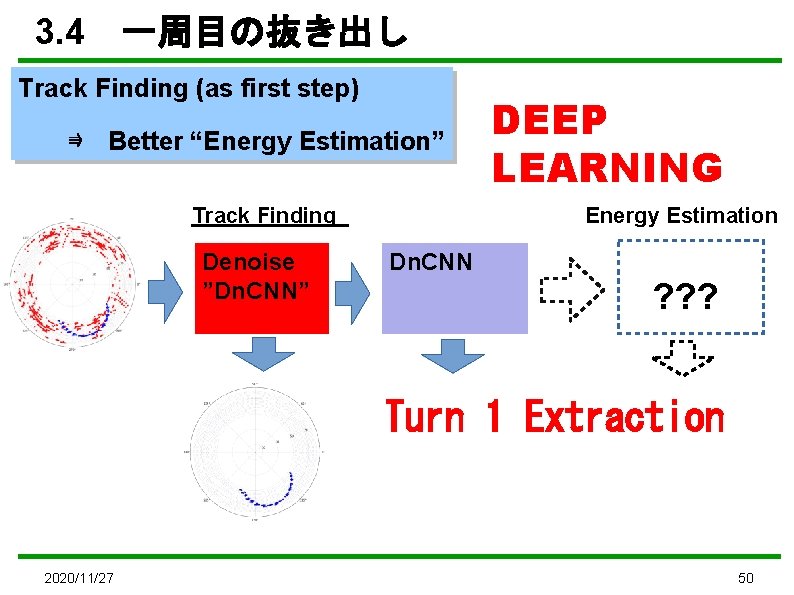

3.4 Turn 1 extraction

Track finding (as a first step) ⇛ better "energy estimation"

DEEP LEARNING: Track Finding (denoising with "DnCNN") → Turn 1 Extraction (DnCNN) → Energy Estimation (???)

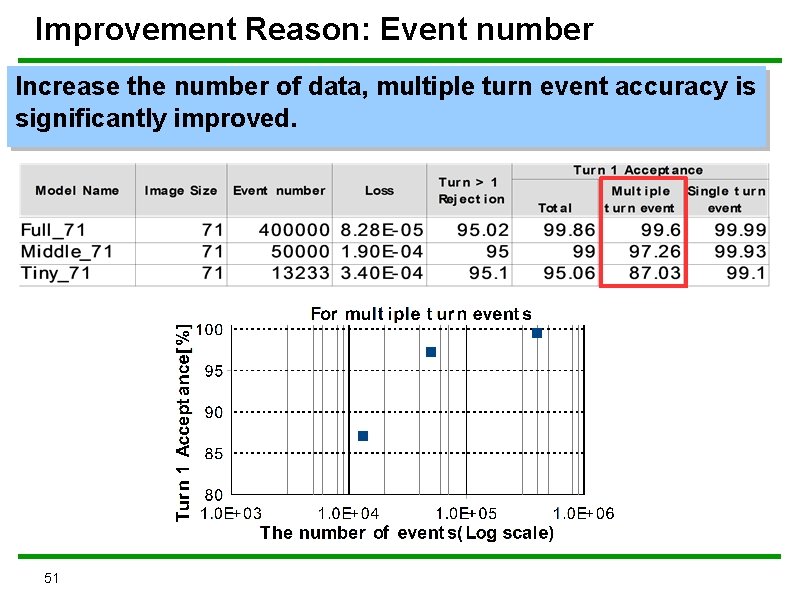

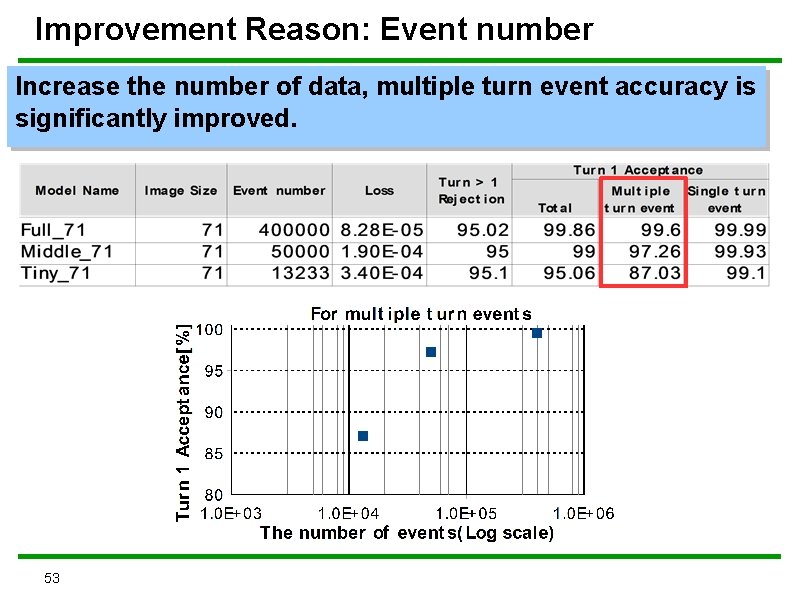

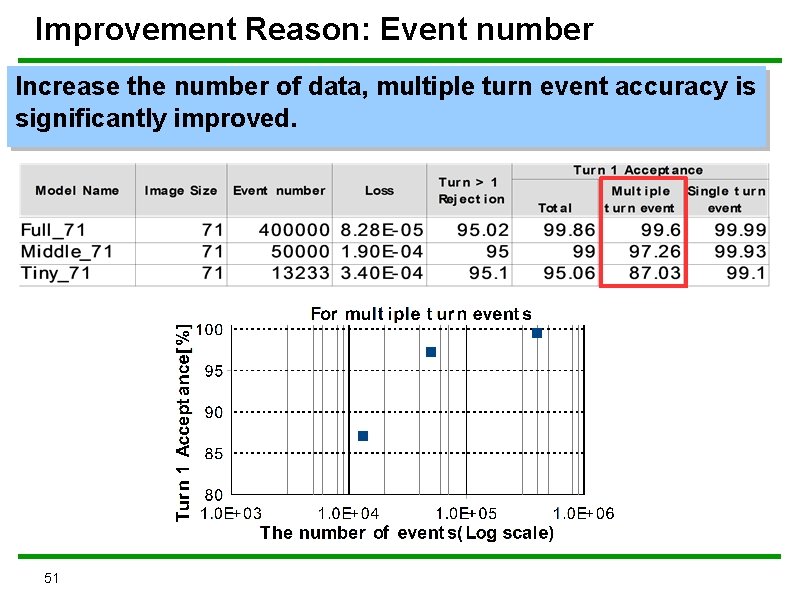

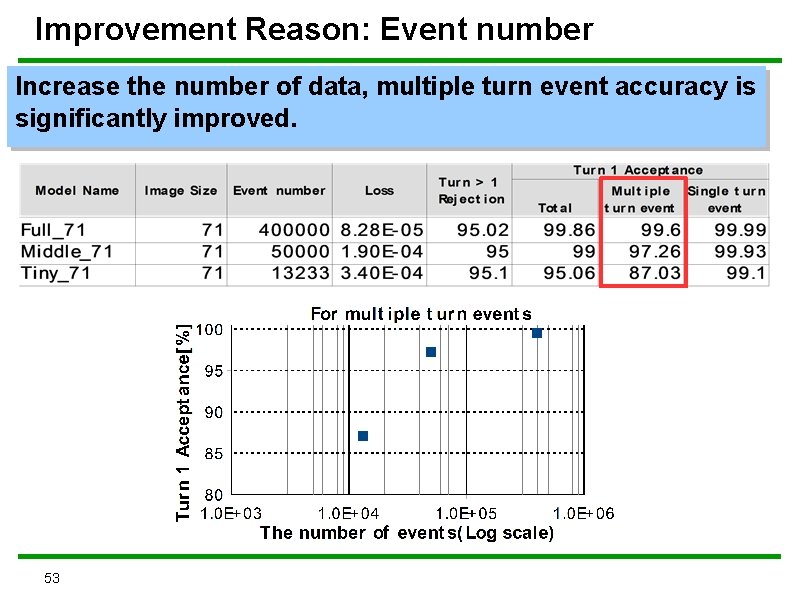

Improvement reason: number of events

By increasing the amount of data, the accuracy for multiple-turn events is significantly improved.

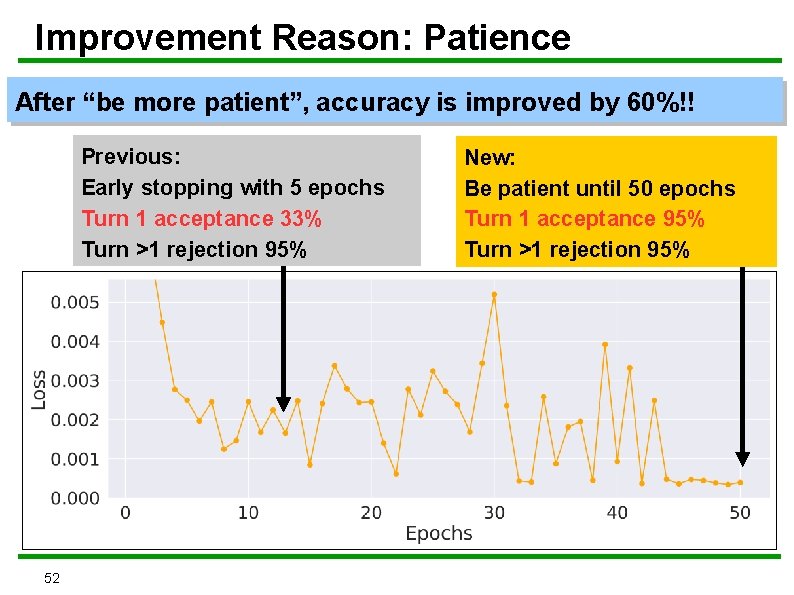

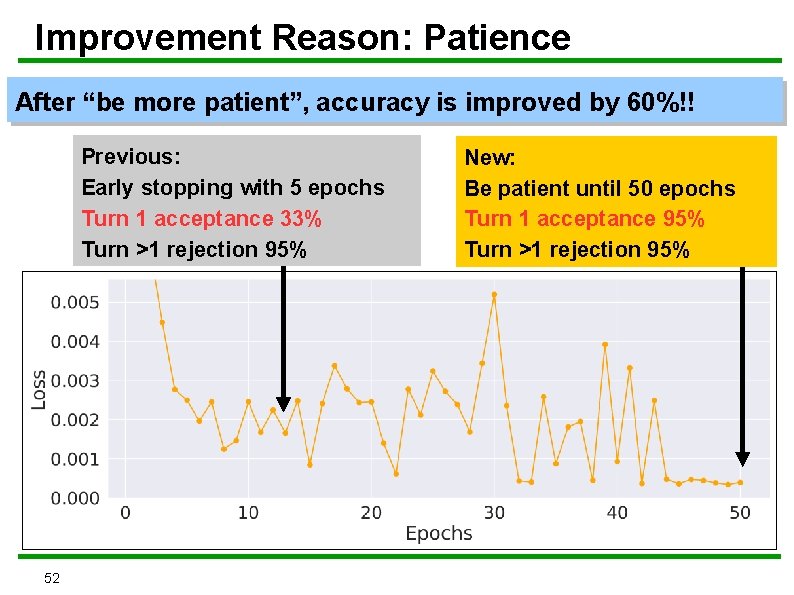

Improvement reason: patience

After "being more patient", the accuracy improved by about 60 percentage points!!

- Previous: early stopping with 5 epochs. Turn 1 acceptance 33%, turn > 1 rejection 95%.
- New: be patient until 50 epochs. Turn 1 acceptance 95%, turn > 1 rejection 95%.
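The effect of patience can be illustrated with a generic early-stopping loop. This is a sketch under assumptions, not the training code of this analysis: the function name, the stopping criterion (validation loss with a patience counter), and the loss curve are all hypothetical.

```python
def run_with_early_stopping(val_losses, patience):
    """Return (stop_epoch, best_loss) for a given validation-loss history.

    Training stops once the loss has failed to improve for `patience`
    consecutive epochs. Epochs are counted from 1.
    """
    best = float("inf")
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best = loss
            waited = 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best
    return len(val_losses), best


# Hypothetical loss curve: a long plateau followed by a late improvement.
losses = [1.0, 0.9] + [0.9] * 10 + [0.5, 0.4]
impatient = run_with_early_stopping(losses, patience=5)
patient = run_with_early_stopping(losses, patience=50)
print(impatient, patient)
```

With a small patience the run stops on the plateau and never reaches the later improvement, which is the kind of behaviour described above.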


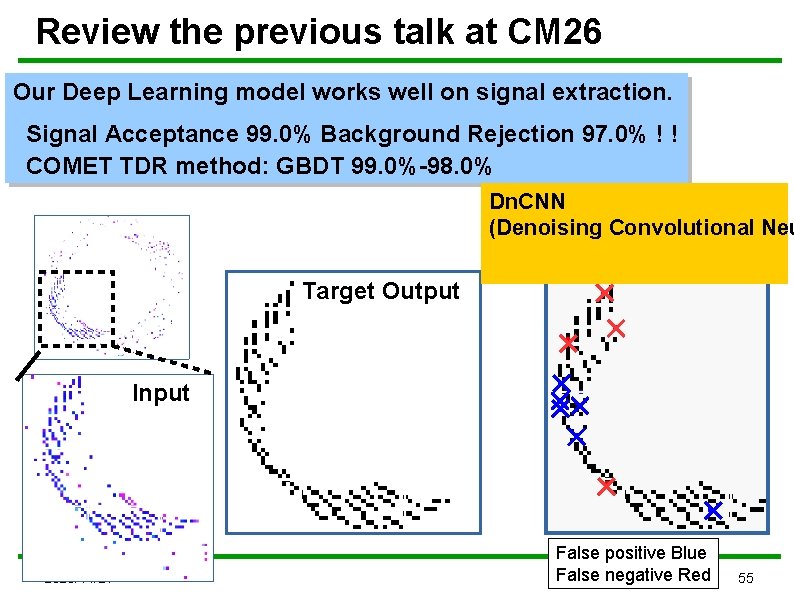

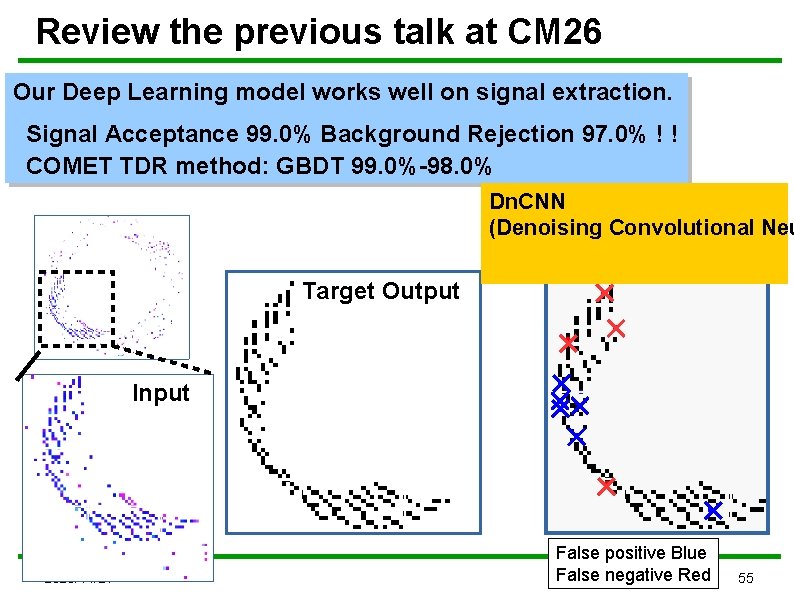

Review of the previous talk at CM26

Our Deep Learning model works well on signal extraction.

- DnCNN (Denoising Convolutional Neural Network): signal acceptance 99.0%, background rejection 97.0%!!
- COMET TDR method (GBDT): 99.0%-98.0%.

[Figure: input, target, and network output. Blue: false positive. Red: false negative.]

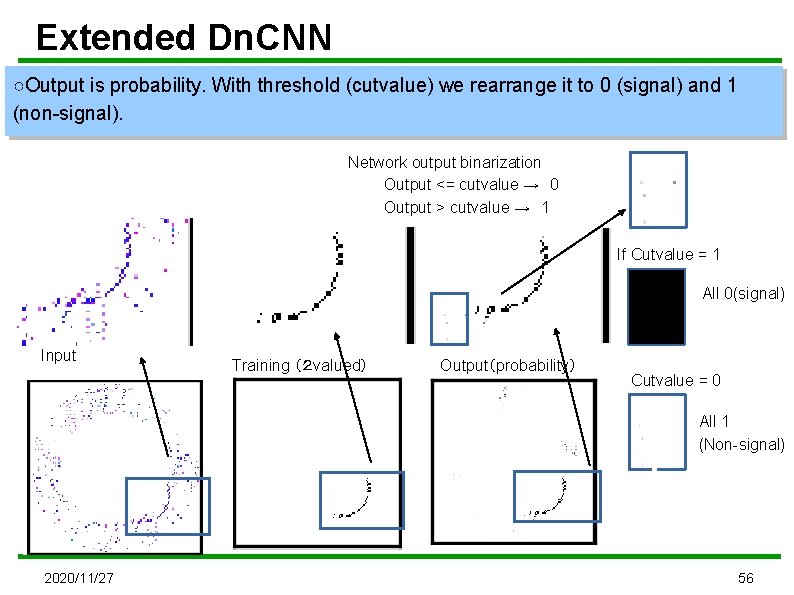

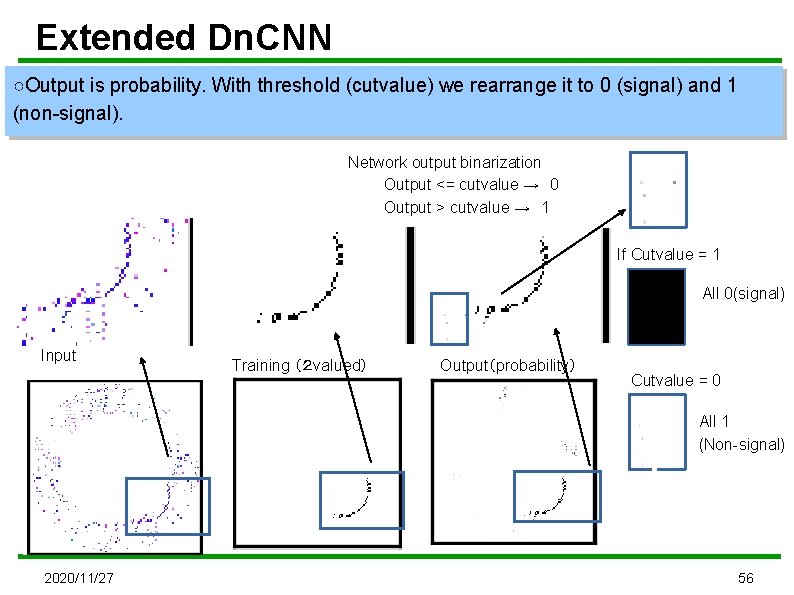

Extended DnCNN

- The output is a probability. With a threshold (cutvalue) we map it to 0 (signal) and 1 (non-signal).
- Network output binarization: output <= cutvalue → 0; output > cutvalue → 1.
- If cutvalue = 1, all pixels are 0 (signal); if cutvalue = 0, all pixels are 1 (non-signal).

[Figure: input, training target (two-valued), and output (probability).]

Summary/Discussion

- Extremely low signal acceptance can occur. We need to understand this behaviour.
- Signal acceptance is fixed to be 99%.

[Figure: panels of background rejection (%) and signal acceptance (%) for 128*128 2D conv DnCNN, 71*71 1D conv DnCNN, 71*71 2D conv DnCNN, and 128*128 2D conv autoencoder.]

Extended DnCNN: the output is a probability

DnCNN extension, overview of all layers. [Diagram labels: "15 times repeated"; "DnCNN ends here"; "our extension"]

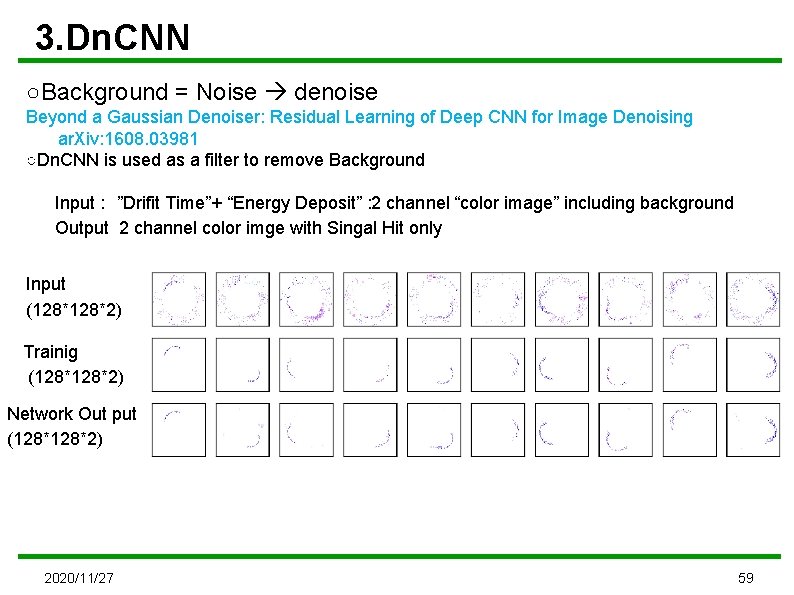

3. DnCNN

- Background = noise, so background removal is denoising. Reference: "Beyond a Gaussian Denoiser: Residual Learning of Deep CNN for Image Denoising", arXiv:1608.03981.
- DnCNN is used as a filter to remove the background.
- Input: "drift time" + "energy deposit", a 2-channel "color image" including the background.
- Output: a 2-channel color image with signal hits only.

[Figure: input (128*2), training target (128*2), network output (128*2).]

DnCNN code body: /home/comet/work/kaneko_code/denoising_test/main_batch_learning_02.py, around line 587


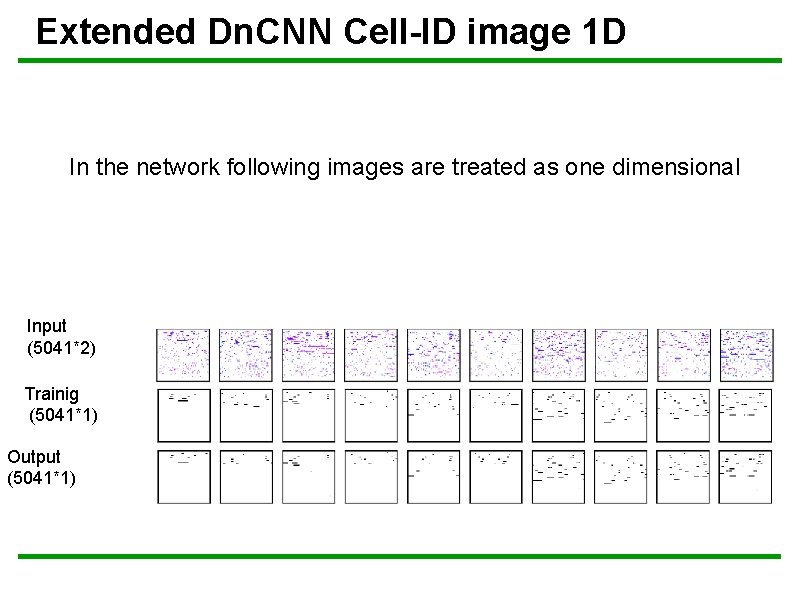

Extended DnCNN, cell-ID image (1D): in the network, the following images are treated as one-dimensional. Input (5041*2), training target (5041*1), output (5041*1).

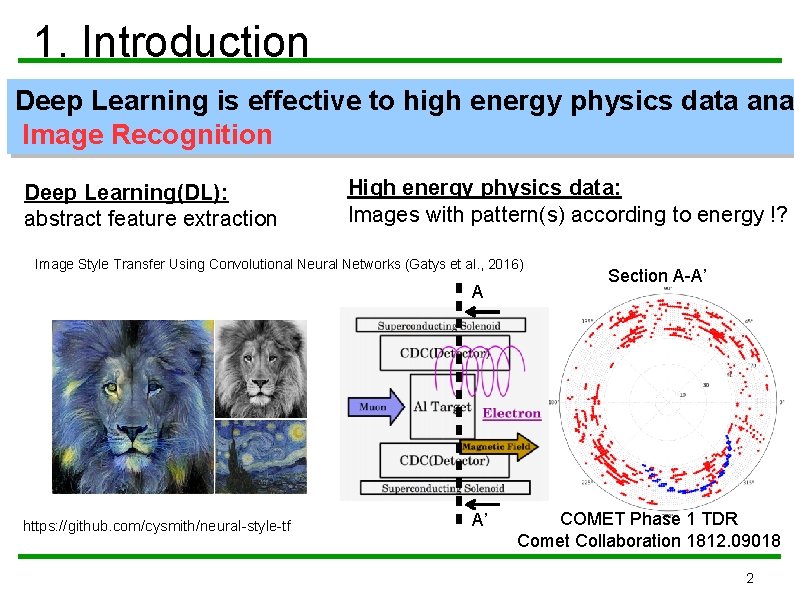

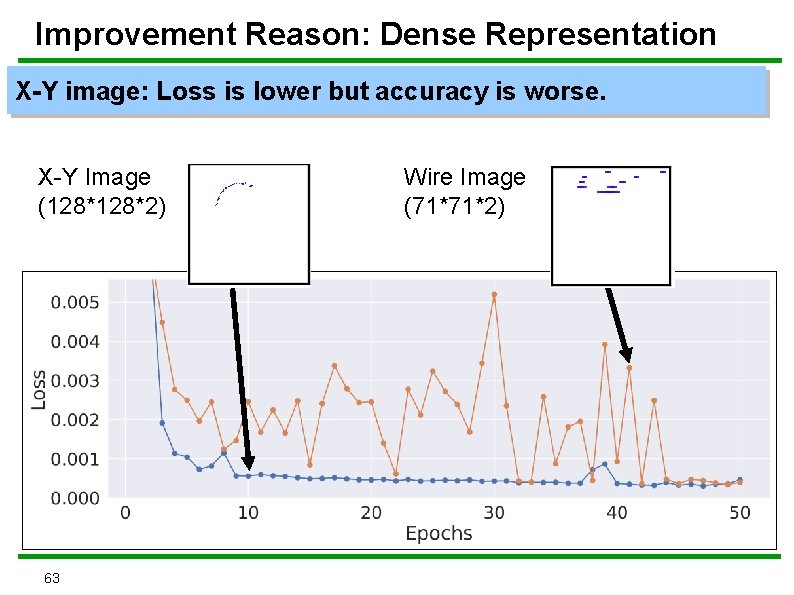

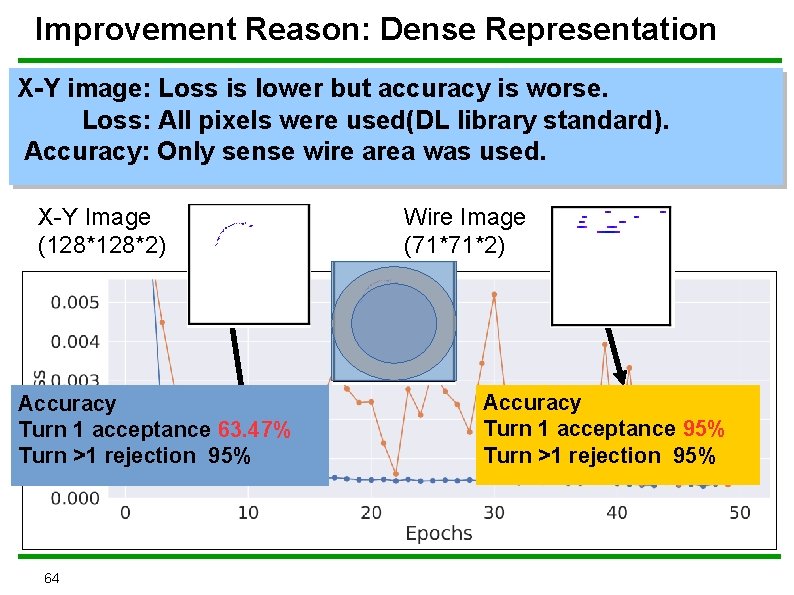


Improvement reason: dense representation

X-Y image: the loss is lower but the accuracy is worse.

- Loss: all pixels were used (DL library standard).
- Accuracy: only the sense-wire area was used.

| Image | Turn 1 acceptance | Turn > 1 rejection |
|---|---|---|
| X-Y image (128*2) | 63.47% | 95% |
| Wire image (71*71*2) | 95% | 95% |
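The difference between a quantity computed over all pixels and one computed only over the sense-wire area can be illustrated with a toy example. Everything here is hypothetical (the function name, the mask, and the ten-pixel image); it only shows how many trivially correct empty pixels can make an all-pixel figure look better than the masked one.

```python
def pixel_accuracy(target, pred, mask=None):
    """Fraction of pixels where pred equals target.

    If `mask` is given, only pixels with a truthy mask value are counted.
    """
    if mask is None:
        mask = [1] * len(target)
    used = [(t, p) for t, p, m in zip(target, pred, mask) if m]
    return sum(1 for t, p in used if t == p) / len(used)


# Toy image: 6 empty pixels outside the sense-wire area, 4 pixels inside it.
target = [1] * 6 + [0, 0, 1, 1]
pred = [1] * 6 + [0, 1, 1, 0]
mask = [0] * 6 + [1] * 4

all_pixels = pixel_accuracy(target, pred)
sense_area = pixel_accuracy(target, pred, mask)
print(all_pixels, sense_area)
```

A dense representation such as the wire image has few such empty pixels, so the two figures stay closer together.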