Information of sequential data - RNN

Chi Tsung, Chang

- Slides: 37

Outline

1. Recurrent neural network
2. Long-term dependency: LSTM & GRU
3. Paper review
4. Conclusion & Reference

Part 1: Recurrent neural network

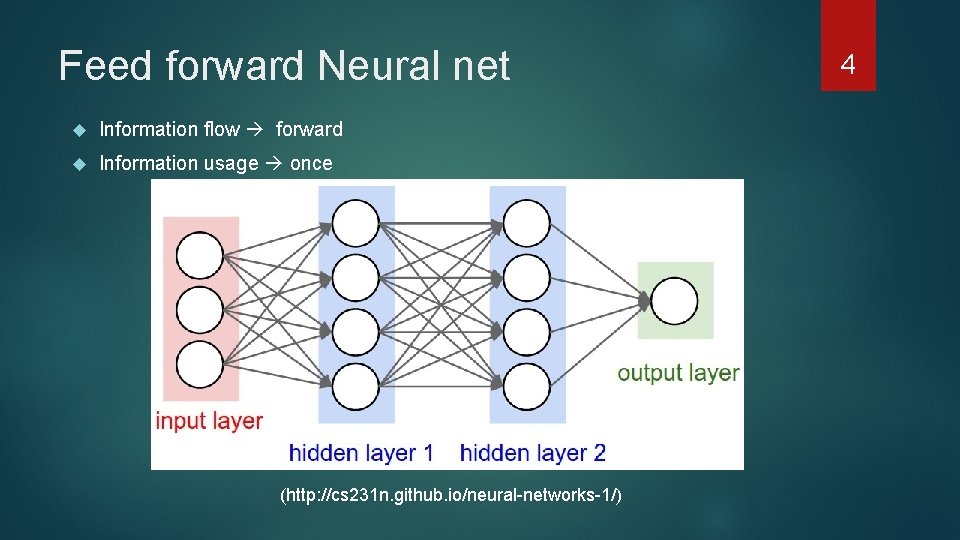

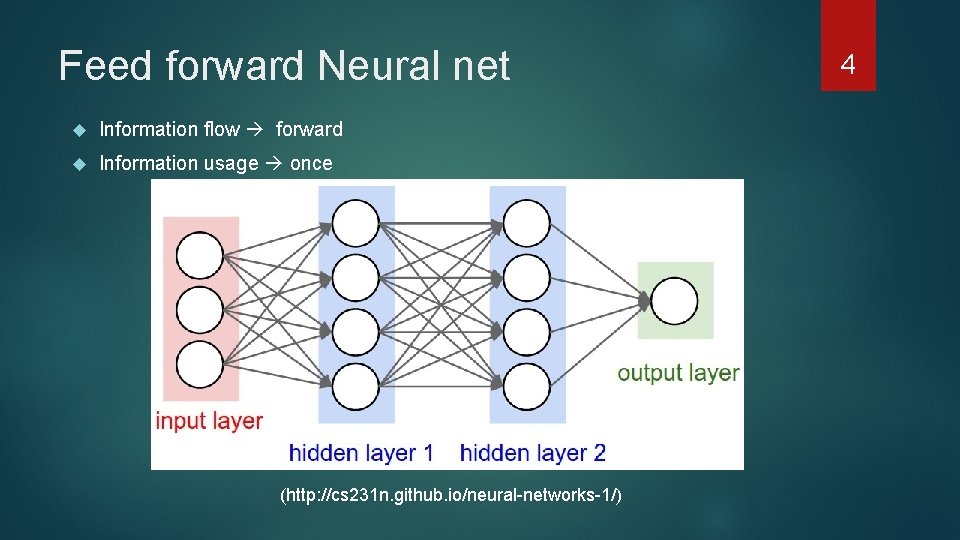

Feed-forward neural net

- Information flows forward only
- Each input is used once

(http://cs231n.github.io/neural-networks-1/)

Sometimes, temporal information matters

- Examples: motion, sentences, actions

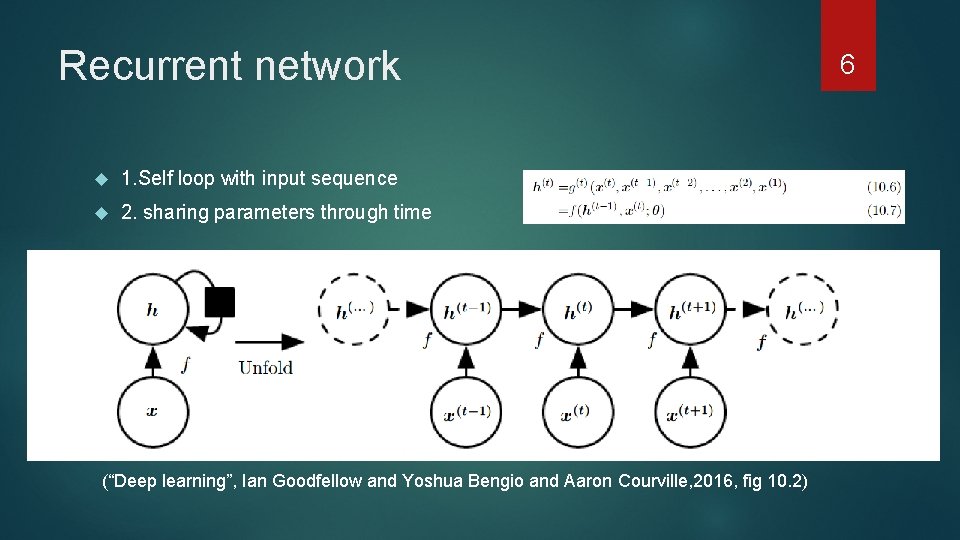

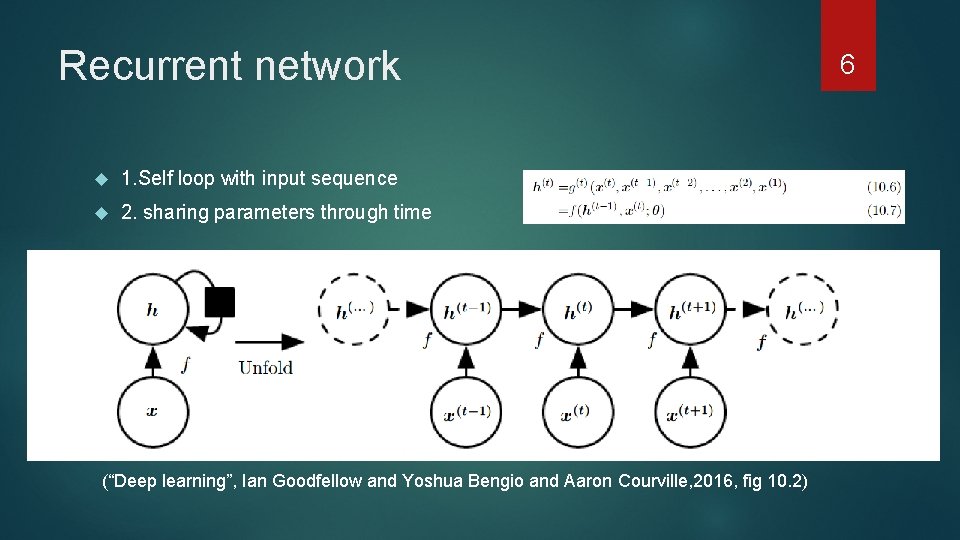

Recurrent network

1. Self-loop over the input sequence
2. Parameters shared through time

(“Deep Learning”, Ian Goodfellow, Yoshua Bengio and Aaron Courville, 2016, fig. 10.2)
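
The two points above (a self-loop and weights shared through time) can be sketched in a few lines of NumPy. This is a minimal illustration, not the book's code: the names `rnn_forward`, `W_xh`, `W_hh`, `b_h` and all sizes are assumptions chosen for the example.

```python
import numpy as np

def rnn_forward(xs, h0, W_xh, W_hh, b_h):
    """Run a tanh RNN over a sequence, reusing the same weights at every step."""
    h = h0
    hs = []
    for x in xs:  # the self-loop: h from this step feeds the next step
        h = np.tanh(W_xh @ x + W_hh @ h + b_h)
        hs.append(h)
    return np.stack(hs)  # shape (T, hidden_size)

# Illustrative sizes and random weights (assumptions, not from the slides).
rng = np.random.default_rng(0)
T, input_size, hidden_size = 6, 3, 4
xs = rng.normal(size=(T, input_size))
W_xh = rng.normal(scale=0.5, size=(hidden_size, input_size))
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)

hs = rnn_forward(xs, np.zeros(hidden_size), W_xh, W_hh, b_h)
print(hs.shape)  # (6, 4)
```

Because the weights do not depend on the time index, the same function handles a sequence of any length.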

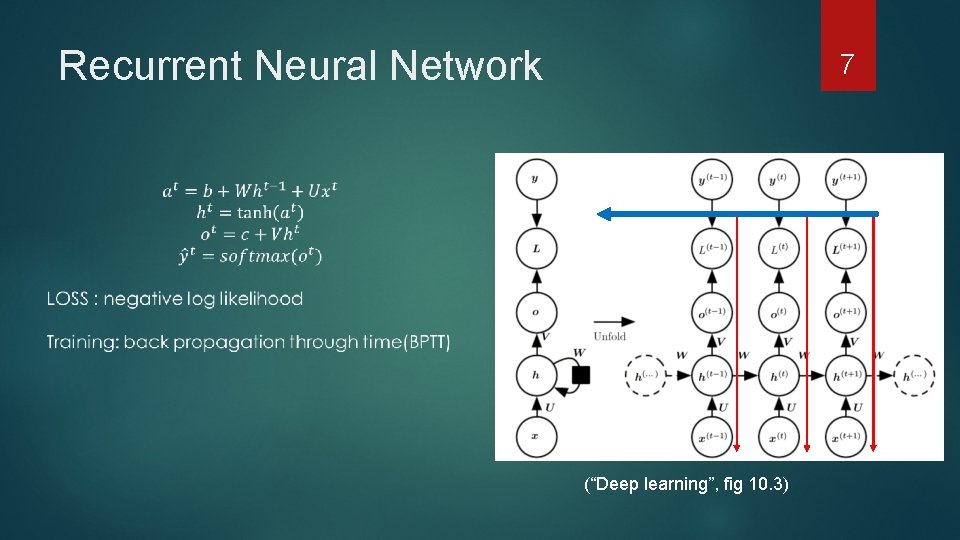

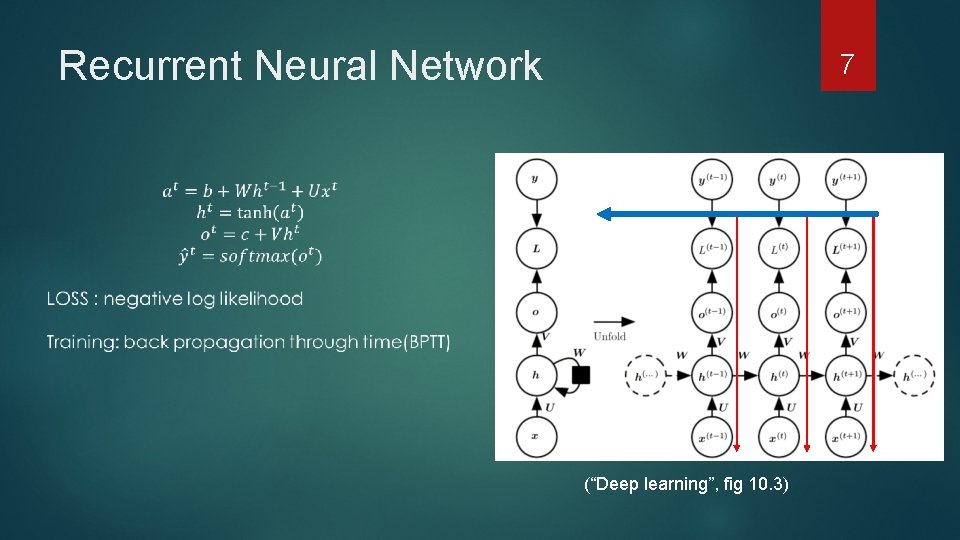

Recurrent Neural Network (“Deep Learning”, fig. 10.3)

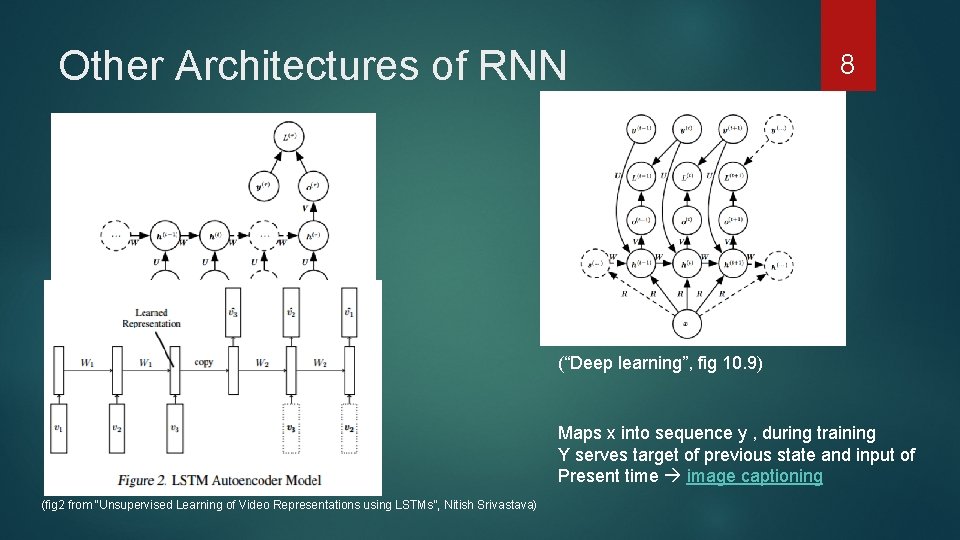

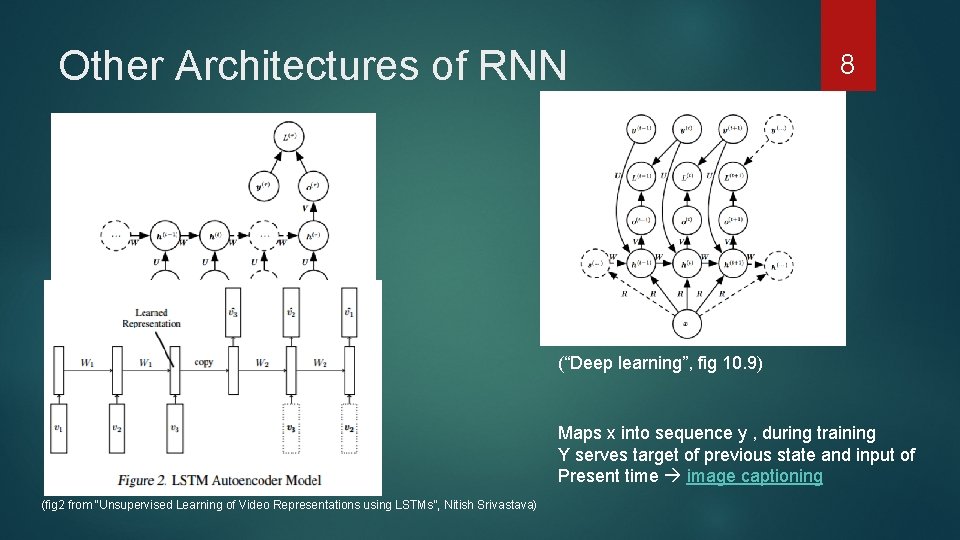

Other architectures of RNN

- Encoder: summarizes a sequence into a fixed vector representation (“Deep Learning”, fig. 10.5)
- Maps x into a sequence y; during training, each y serves as the target of the previous time step and as the input of the present one, e.g. image captioning (“Deep Learning”, fig. 10.9)

(fig. 2 from “Unsupervised Learning of Video Representations using LSTMs”, Nitish Srivastava)

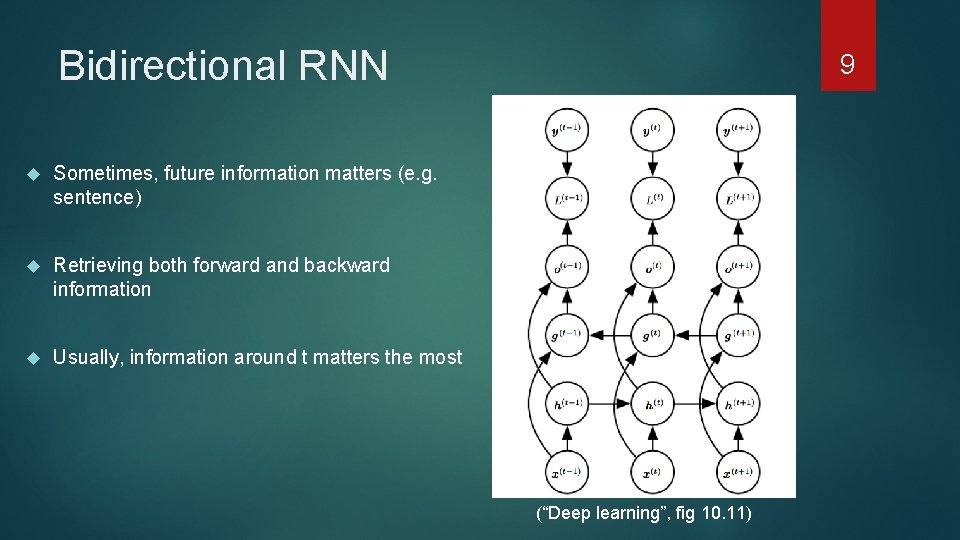

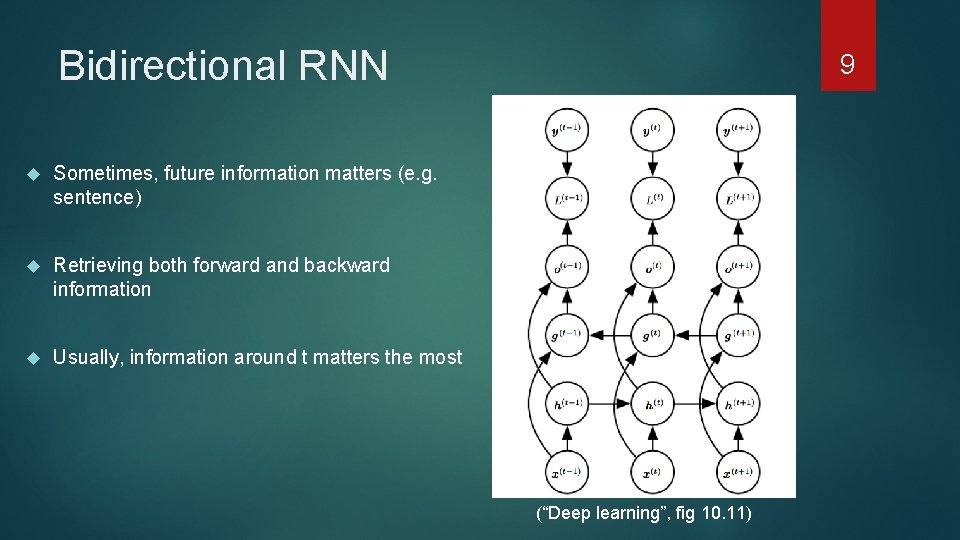

Bidirectional RNN

- Sometimes future information matters (e.g. in a sentence)
- Retrieves both forward and backward information
- Usually, the information around time t matters the most

(“Deep Learning”, fig. 10.11)
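
A minimal sketch of the bidirectional idea, assuming two independent tanh RNNs whose hidden states are concatenated at each time step. The helper names `rnn_pass` and `birnn`, the absence of biases, and all sizes are assumptions made for illustration.

```python
import numpy as np

def rnn_pass(xs, W_xh, W_hh):
    """Plain tanh RNN without biases; returns hidden states of shape (T, H)."""
    h = np.zeros(W_hh.shape[0])
    hs = []
    for x in xs:
        h = np.tanh(W_xh @ x + W_hh @ h)
        hs.append(h)
    return np.stack(hs)

def birnn(xs, fwd, bwd):
    """Concatenate a forward pass and a backward pass at every time step."""
    h_f = rnn_pass(xs, *fwd)              # reads x_1 .. x_T
    h_b = rnn_pass(xs[::-1], *bwd)[::-1]  # reads x_T .. x_1, re-aligned to time order
    return np.concatenate([h_f, h_b], axis=1)  # shape (T, 2H)

# Illustrative sizes and random weights (assumptions).
rng = np.random.default_rng(0)
T, D, H = 5, 3, 4
xs = rng.normal(size=(T, D))
fwd = (rng.normal(scale=0.5, size=(H, D)), rng.normal(scale=0.5, size=(H, H)))
bwd = (rng.normal(scale=0.5, size=(H, D)), rng.normal(scale=0.5, size=(H, H)))

out = birnn(xs, fwd, bwd)
print(out.shape)  # (5, 8)
```

The first `H` columns at time t depend only on inputs up to t, while the last `H` columns depend only on inputs from t onward, so each step sees both past and future context.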

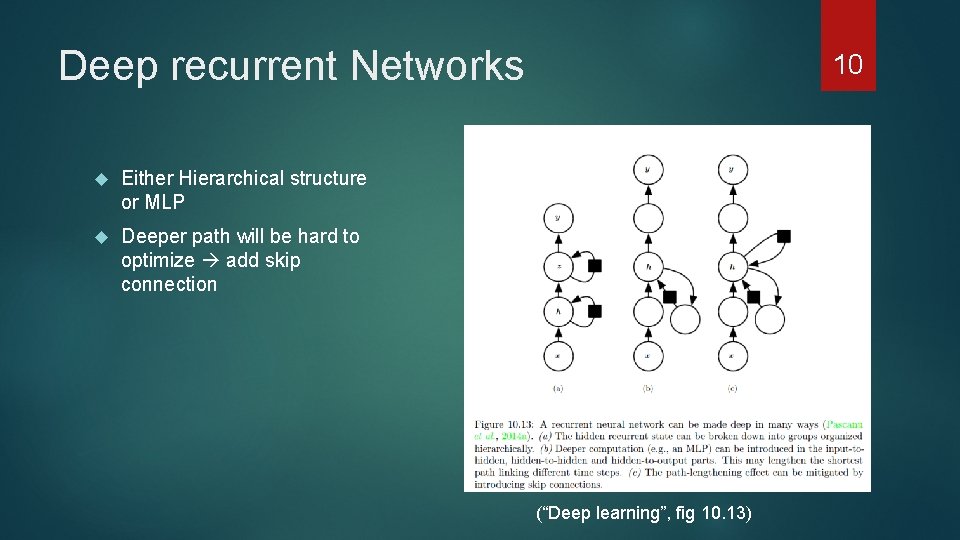

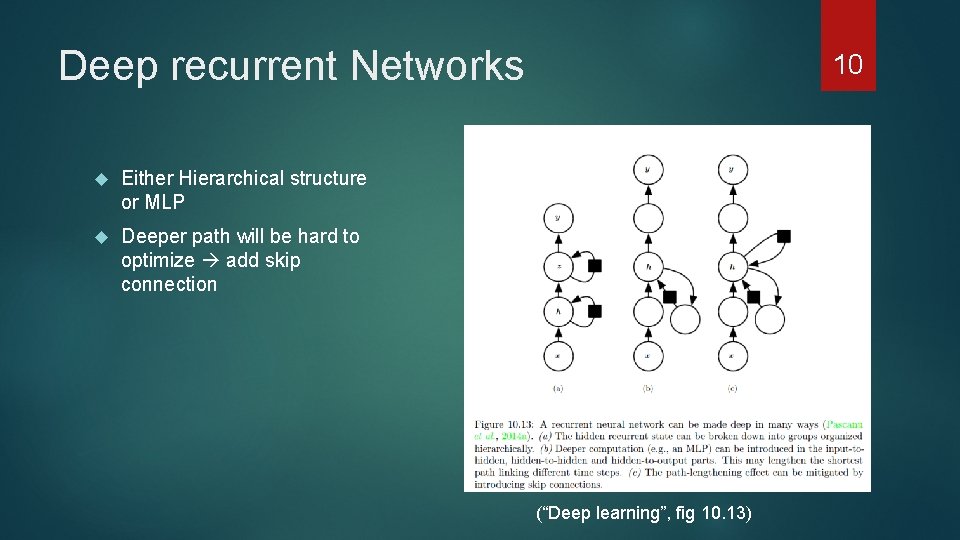

Deep recurrent networks

- Depth is added with either a hierarchical structure or an MLP
- A deeper path is harder to optimize; adding skip connections helps

(“Deep Learning”, fig. 10.13)

Part 2: Long-term dependency: LSTM & GRU

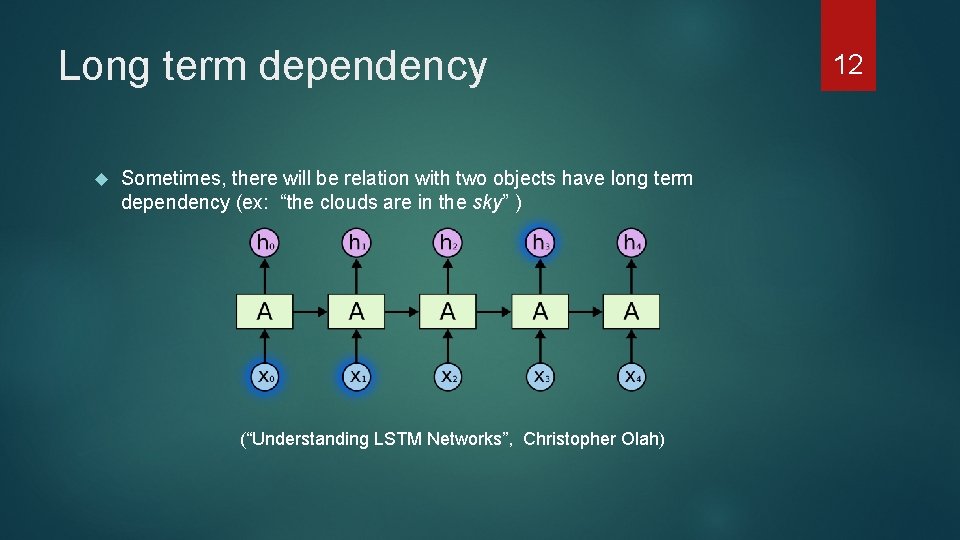

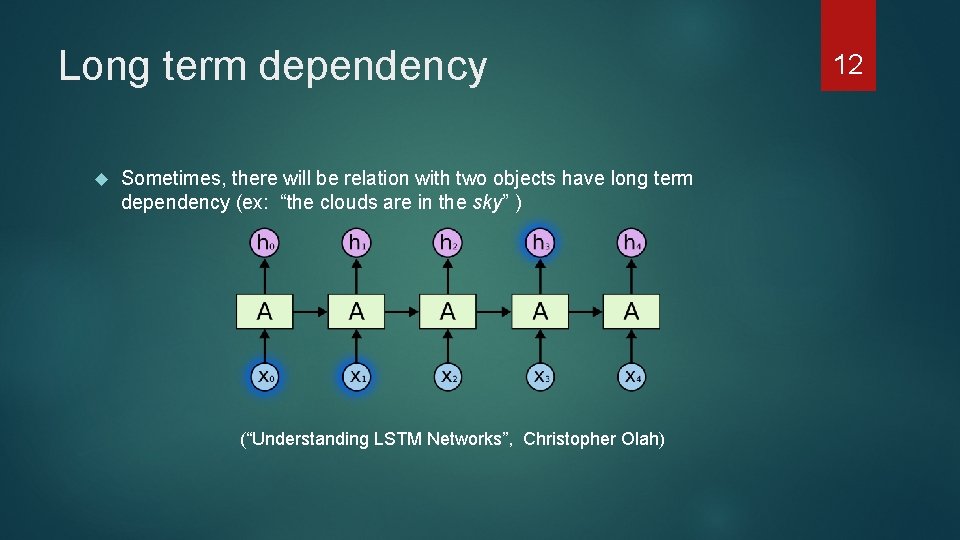

Long-term dependency

- Sometimes two elements of a sequence are related across a distance (e.g. predicting the last word of “the clouds are in the sky”; here the gap is small, but it can be much longer)

(“Understanding LSTM Networks”, Christopher Olah)

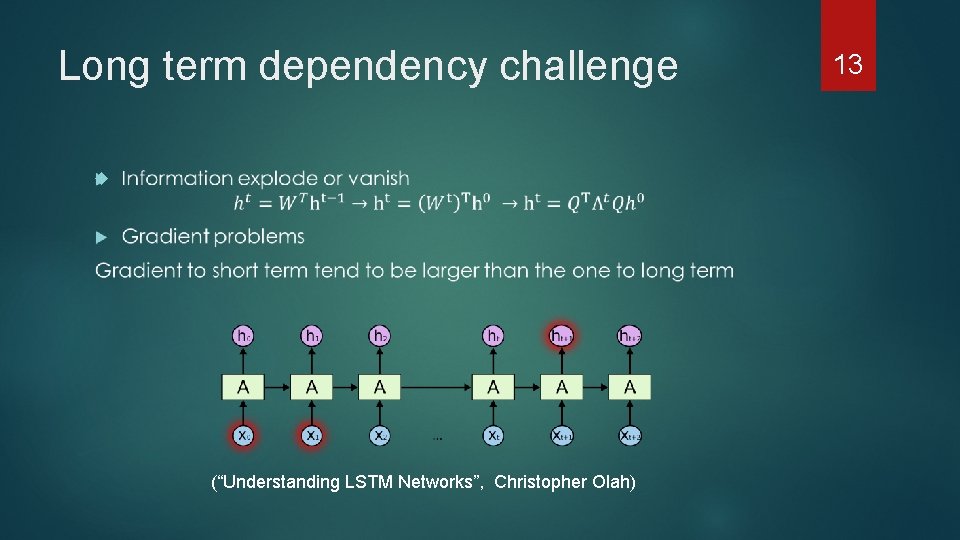

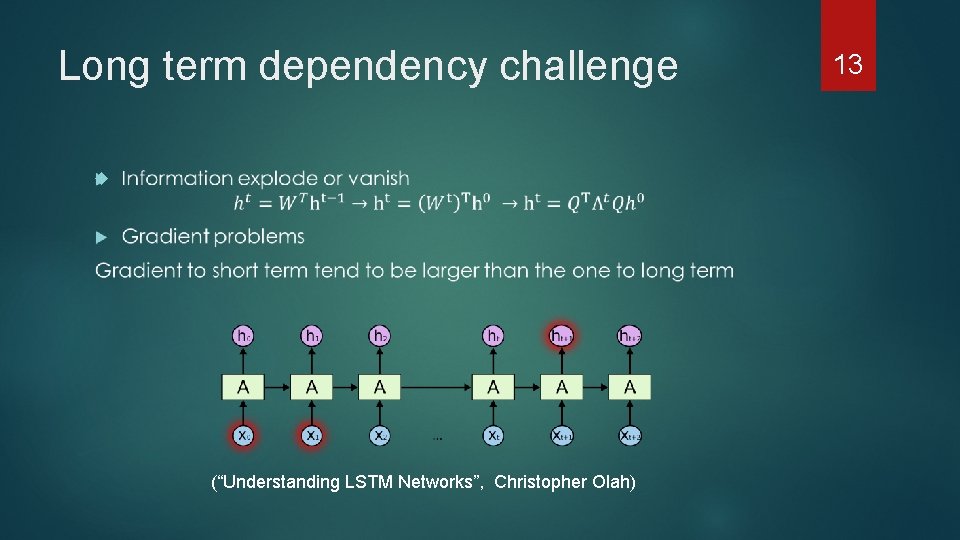

Long-term dependency challenge (“Understanding LSTM Networks”, Christopher Olah)
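
One way to see why long gaps are hard is to look at how a gradient is scaled when it is propagated back through many steps. The sketch below makes a strong simplifying assumption: a linear recurrence h_t = W h_{t-1} with no tanh and no inputs, and W chosen as a scaled orthogonal matrix so the effect is exact. The function name `jacobian_norms` and the scales 0.9 and 1.1 are illustrative.

```python
import numpy as np

def jacobian_norms(W, steps):
    """Spectral norm of d h_t / d h_0 for the linear recurrence h_t = W h_{t-1}."""
    J = np.eye(W.shape[0])
    norms = []
    for _ in range(steps):
        J = W @ J  # chain rule: one more factor of W per time step
        norms.append(np.linalg.norm(J, 2))
    return np.array(norms)

rng = np.random.default_rng(0)
Q, _ = np.linalg.qr(rng.normal(size=(8, 8)))  # orthogonal matrix, all singular values 1

shrinking = jacobian_norms(0.9 * Q, 50)  # norm behaves like 0.9**t: vanishing
growing = jacobian_norms(1.1 * Q, 50)    # norm behaves like 1.1**t: exploding
print(shrinking[-1], growing[-1])
```

With a tanh nonlinearity the derivative at each step is at most 1, which can only shrink these products further; that is the vanishing side of the challenge.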

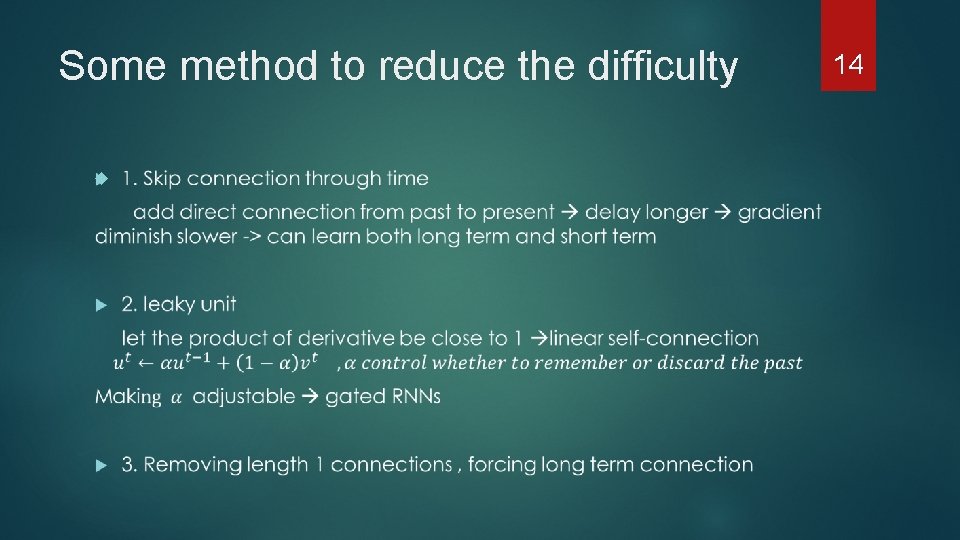

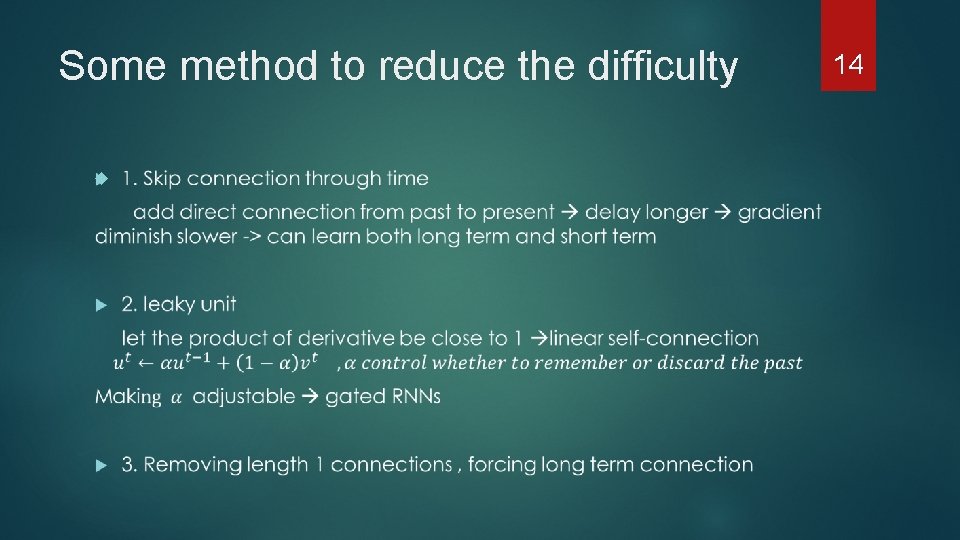

Some methods to reduce the difficulty

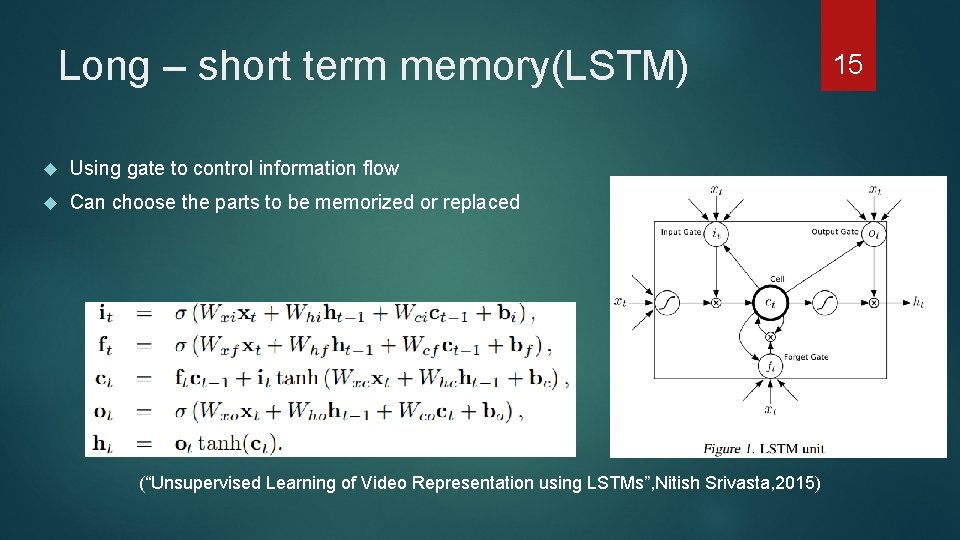

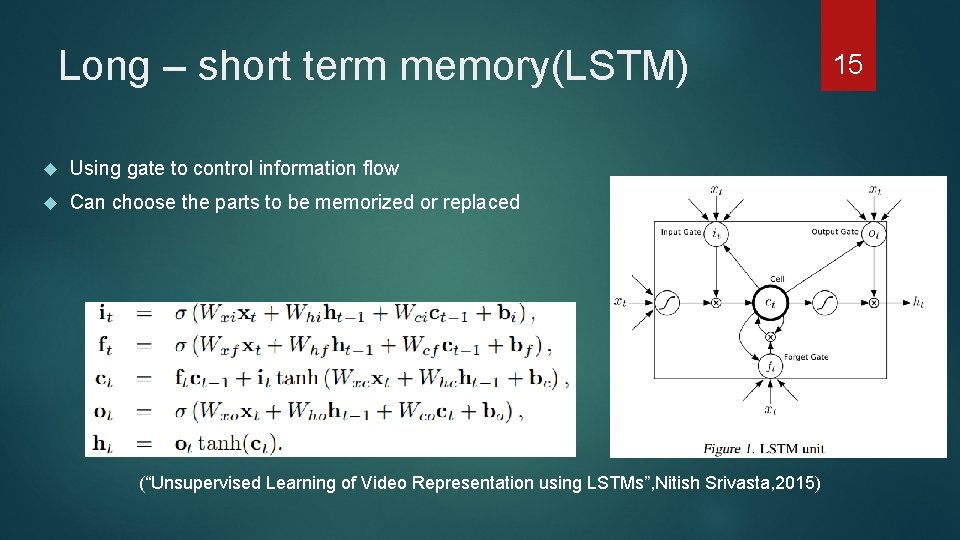

Long short-term memory (LSTM)

- Uses gates to control the information flow
- Can choose which parts to memorize or replace

(“Unsupervised Learning of Video Representations using LSTMs”, Nitish Srivastava, 2015)

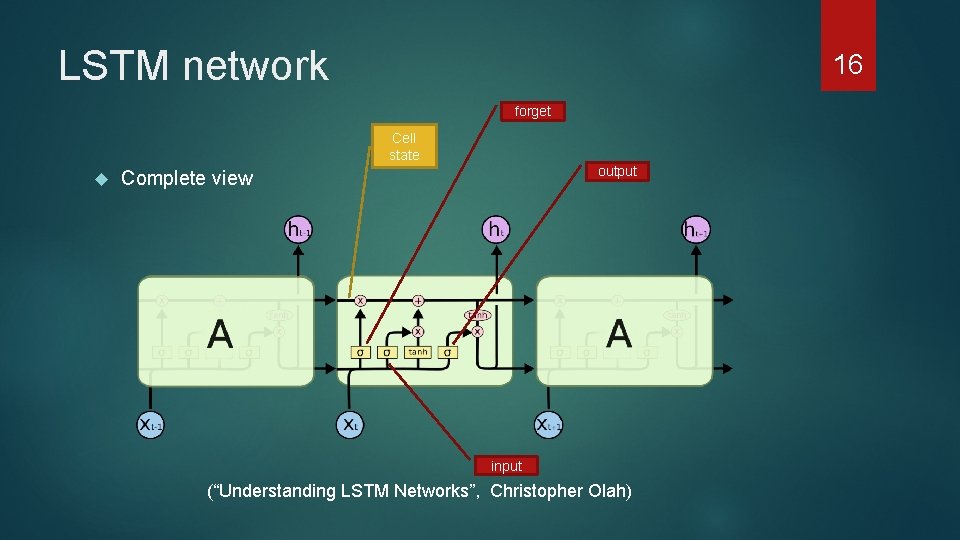

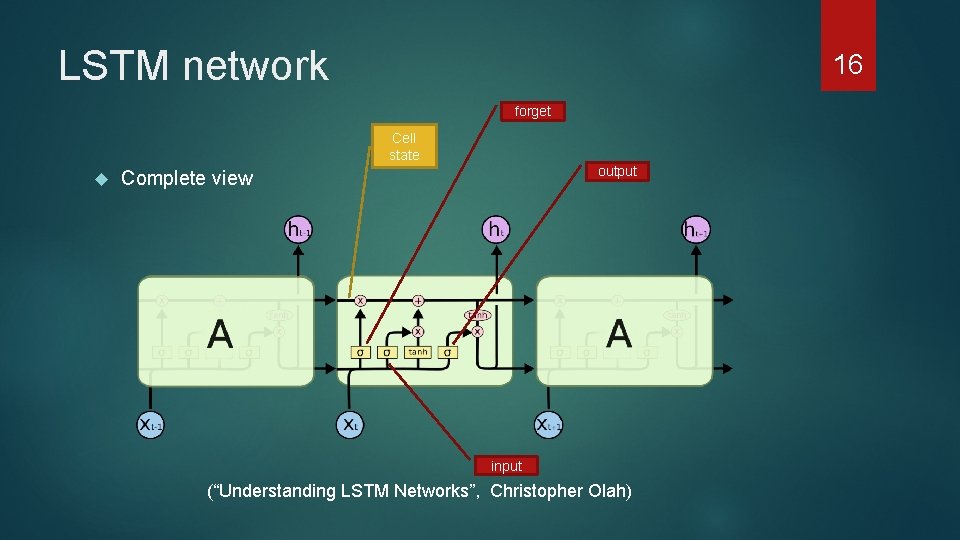

LSTM network

- Figure panels: cell state, forget gate, input gate, output gate, complete view

(“Understanding LSTM Networks”, Christopher Olah)
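
The four pieces named above (forget gate, input gate, cell state, output gate) fit together as in the following sketch of a single LSTM step, written in the standard formulation. Stacking the four weight blocks into one matrix `W`, and the names `lstm_step`, `W`, `b`, are choices made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x, h_prev, c_prev, W, b):
    """One LSTM step. W has shape (4*H, H + D) and b has shape (4*H,)."""
    H = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x]) + b
    f = sigmoid(z[0:H])          # forget gate: how much old cell state to keep
    i = sigmoid(z[H:2 * H])      # input gate: how much new candidate to write
    g = np.tanh(z[2 * H:3 * H])  # candidate cell content
    o = sigmoid(z[3 * H:4 * H])  # output gate: how much of the cell to expose
    c = f * c_prev + i * g       # updated cell state
    h = o * np.tanh(c)           # hidden state / output
    return h, c

# Illustrative sizes and random parameters (assumptions).
rng = np.random.default_rng(0)
D, H = 3, 4
W = rng.normal(scale=0.5, size=(4 * H, H + D))
b = np.zeros(4 * H)
h, c = lstm_step(rng.normal(size=D), np.zeros(H), np.zeros(H), W, b)
print(h.shape, c.shape)  # (4,) (4,)
```

When the forget gate saturates near 1 and the input gate near 0, the cell state is copied forward almost unchanged, which is how the cell can hold information over long gaps.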

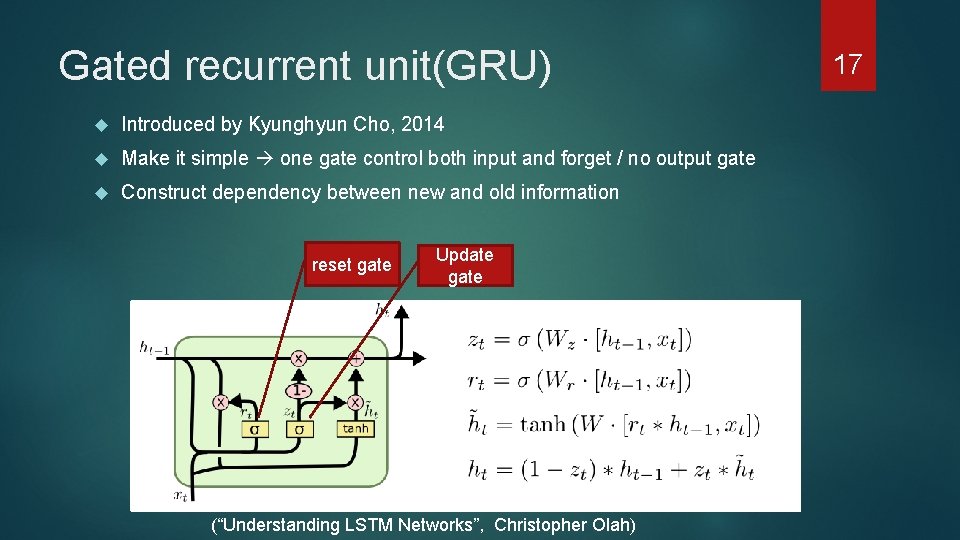

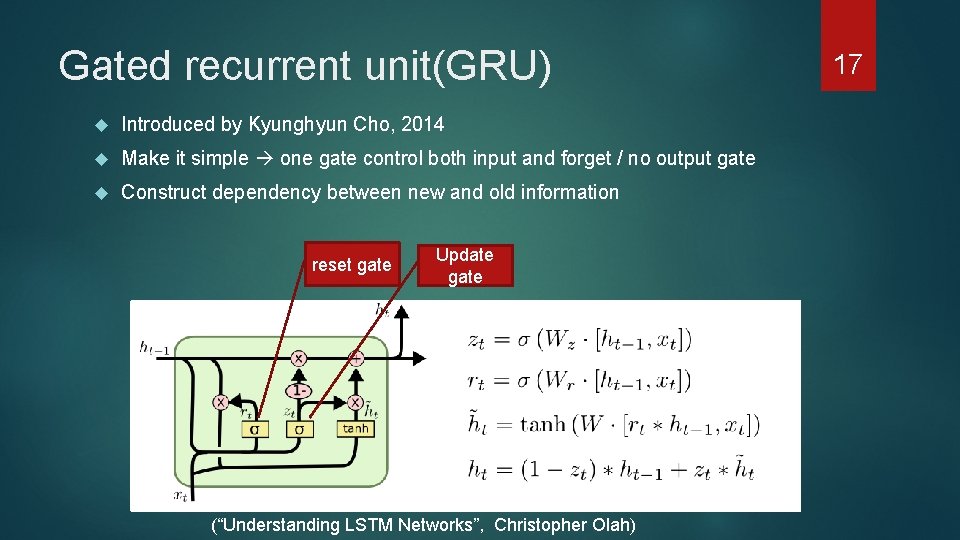

Gated recurrent unit (GRU)

- Introduced by Kyunghyun Cho, 2014
- Simplification: one gate (the update gate) controls both input and forgetting; there is no output gate
- The reset gate constructs the dependency between new and old information

(“Understanding LSTM Networks”, Christopher Olah)
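
A sketch of one GRU step, following the gate convention used in the cited “Understanding LSTM Networks” article (update gate `z` weights the new candidate). Biases are omitted for brevity, and the names `gru_step`, `W_z`, `W_r`, `W_h` and all sizes are assumptions for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(x, h_prev, W_z, W_r, W_h):
    """One GRU step without biases. Each weight matrix has shape (H, H + D)."""
    hx = np.concatenate([h_prev, x])
    z = sigmoid(W_z @ hx)  # update gate: plays the role of input and forget together
    r = sigmoid(W_r @ hx)  # reset gate: how much old state enters the candidate
    h_tilde = np.tanh(W_h @ np.concatenate([r * h_prev, x]))  # candidate state
    return (1.0 - z) * h_prev + z * h_tilde  # no output gate: h is exposed directly

# Illustrative sizes and random parameters (assumptions).
rng = np.random.default_rng(0)
D, H = 3, 4
W_z, W_r, W_h = (rng.normal(scale=0.5, size=(H, H + D)) for _ in range(3))
h = gru_step(rng.normal(size=D), np.zeros(H), W_z, W_r, W_h)
print(h.shape)  # (4,)
```

Since the new state is a convex combination of the old state and the candidate, keeping and overwriting are tied to a single gate, which is the simplification relative to the LSTM.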

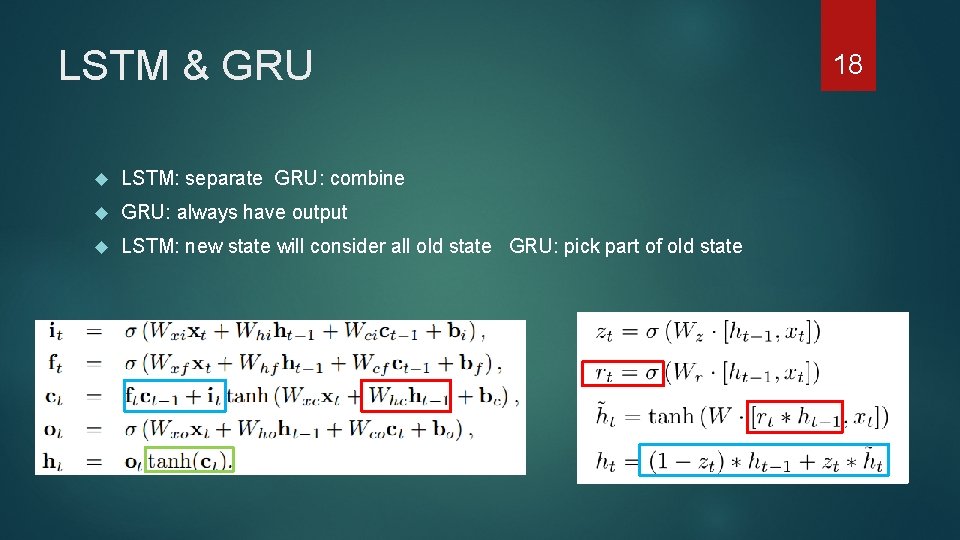

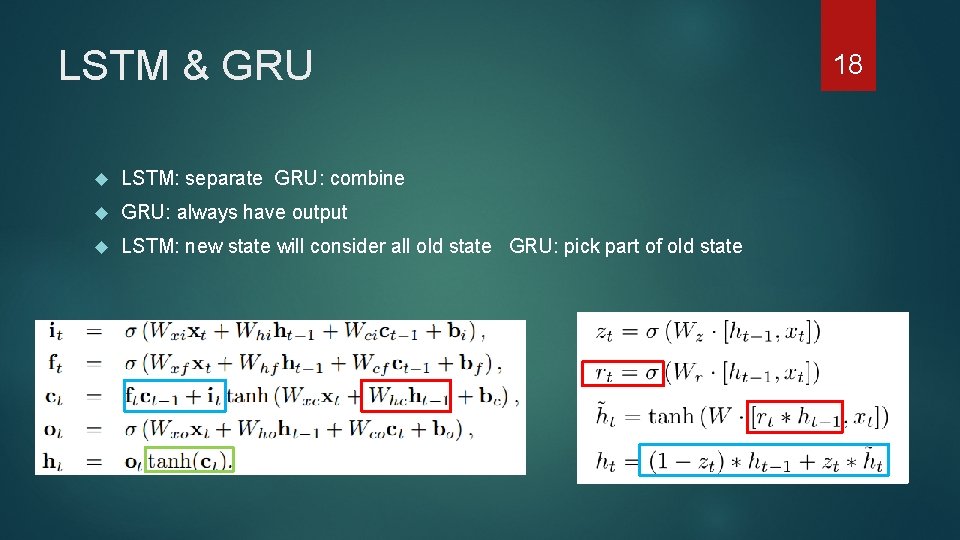

LSTM & GRU

- Input and forget gates: separate in LSTM, combined in GRU
- Output: GRU always exposes its state (no output gate)
- New state: LSTM considers the whole old state, GRU picks part of the old state

Part 3: Paper review

“Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling”, Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, Yoshua Bengio, 2014

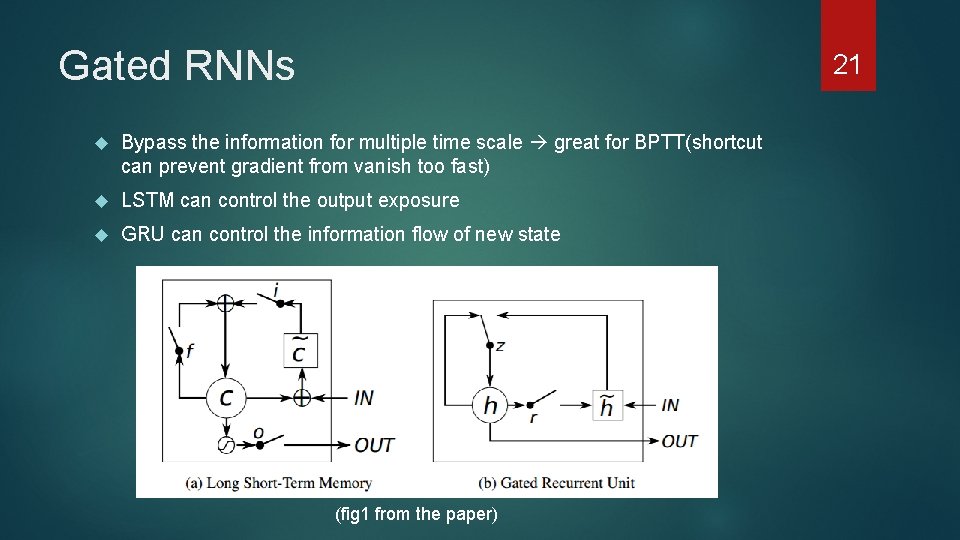

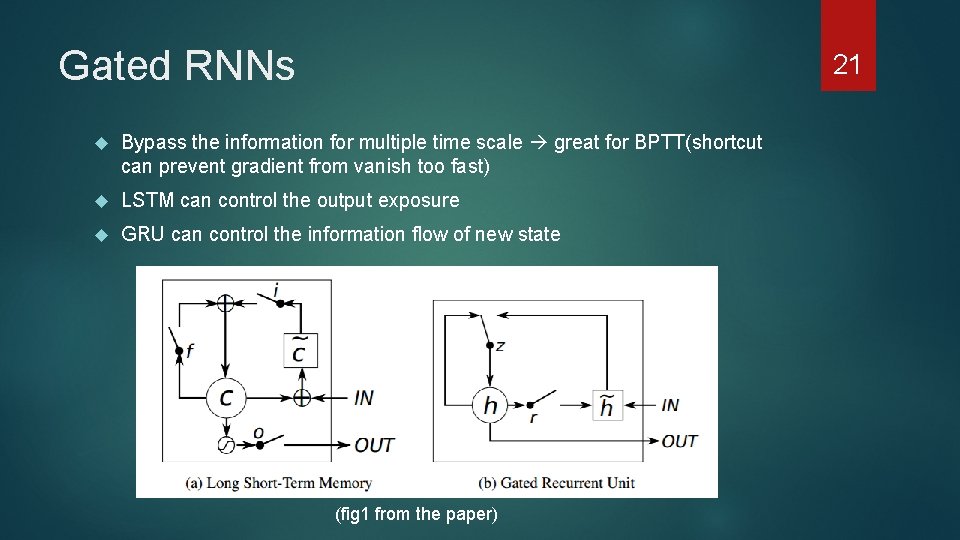

Gated RNNs

- Let information bypass multiple time steps
- Good for BPTT (the shortcut keeps the gradient from vanishing too fast)
- LSTM can control how much of its state is exposed at the output
- GRU can control the information flow from the old state into the new state

(fig. 1 from the paper)

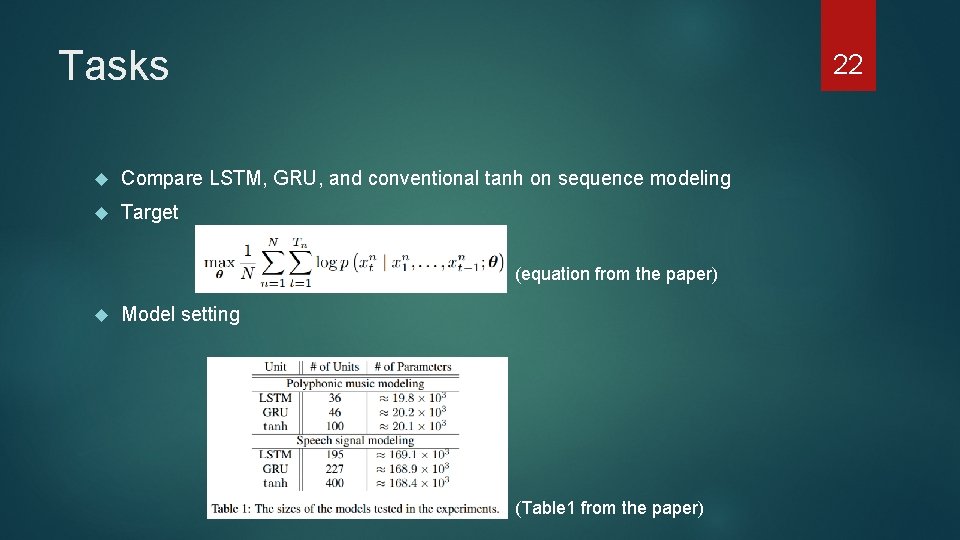

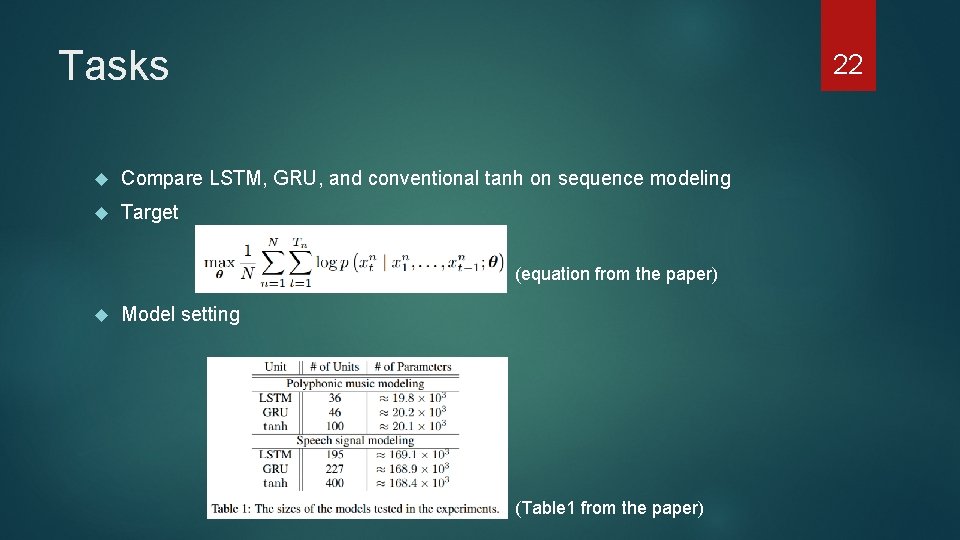

Tasks

- Compare LSTM, GRU, and conventional tanh units on sequence modeling
- Target: (equation from the paper)
- Model settings: (Table 1 from the paper)
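
The target equation itself is only present as an image in the slide. For orientation, a sequence-modeling objective of this kind is usually written as the average log-likelihood of the training sequences; the form below is a reconstruction under that assumption, and the symbols (N sequences, sequence n of length T_n, parameters θ) should be checked against the paper.

```latex
\max_{\theta} \; \frac{1}{N} \sum_{n=1}^{N} \sum_{t=1}^{T_n}
  \log p\left(x_t^{n} \mid x_1^{n}, \ldots, x_{t-1}^{n}; \theta\right)
```

Each term asks the model to predict the next element of a sequence given everything before it, which is what makes the task a test of how well the unit retains past information.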

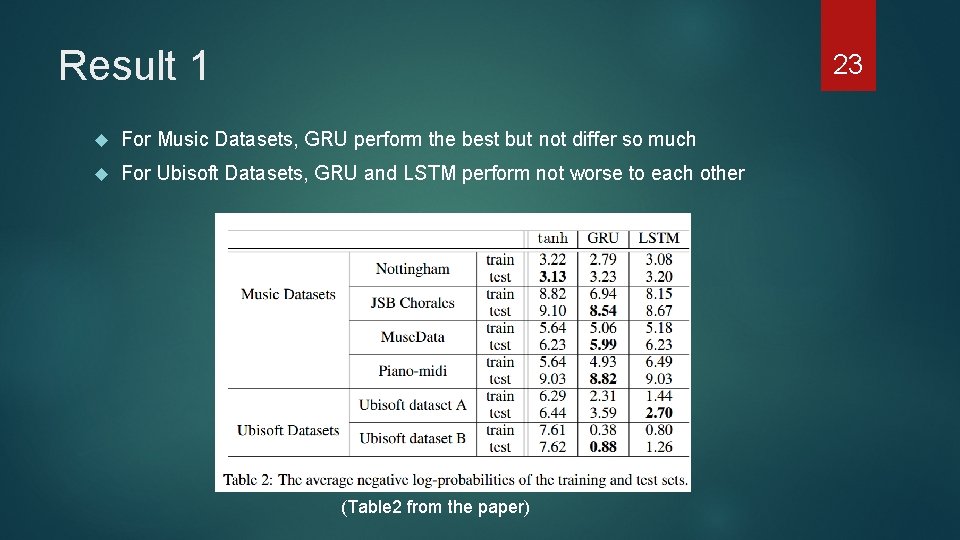

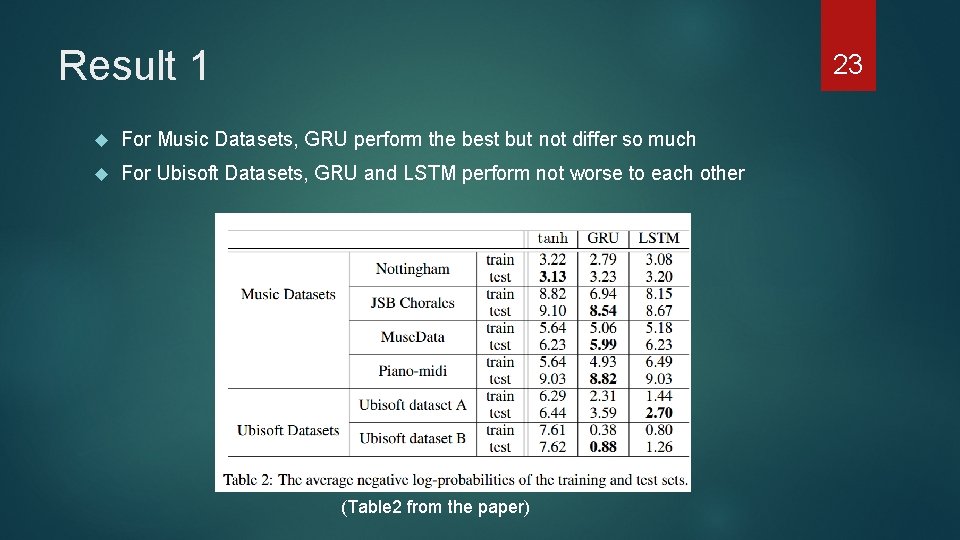

Result 1

- On the music datasets, GRU performs best, but the differences are small
- On the Ubisoft datasets, GRU and LSTM perform comparably

(Table 2 from the paper)

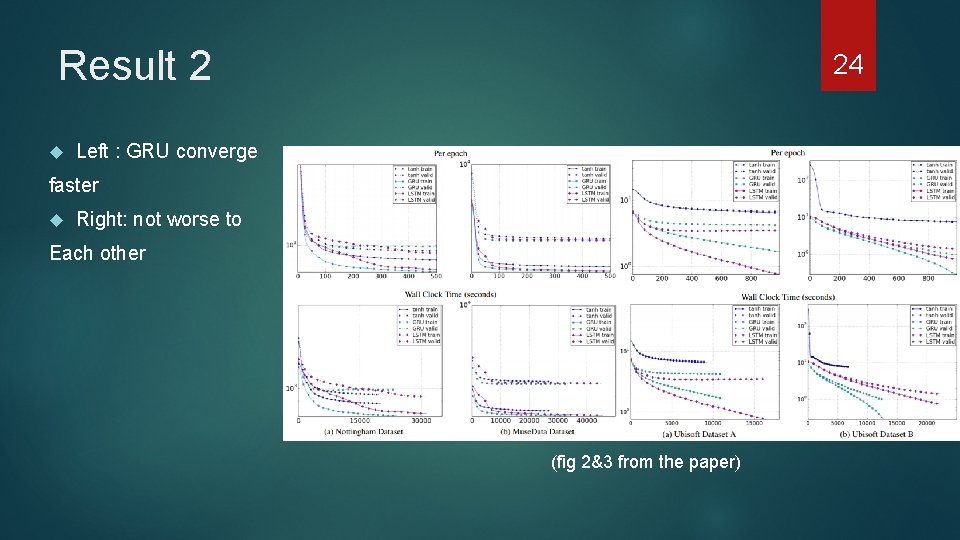

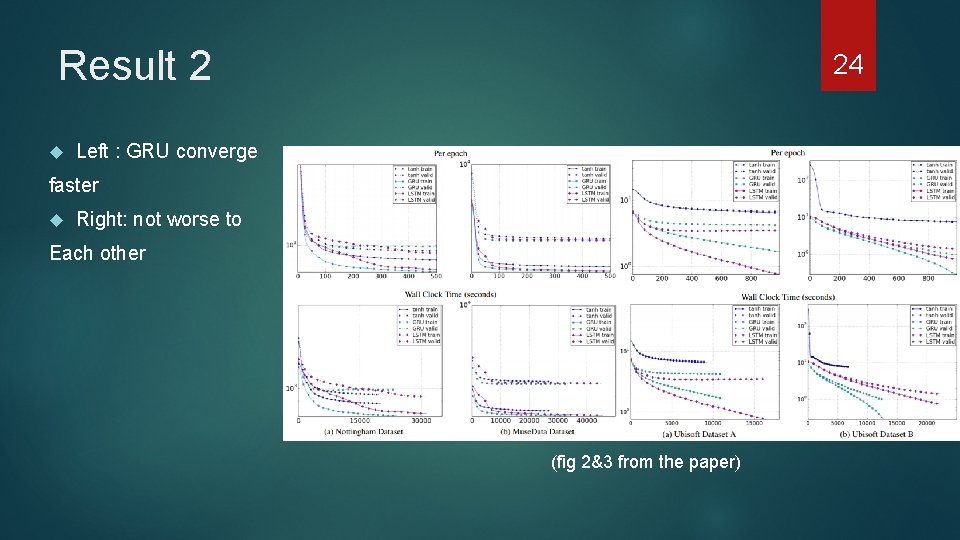

Result 2

- Left: GRU converges faster
- Right: the two perform comparably

(figs. 2 & 3 from the paper)

Conclusion

1. Both GRU and LSTM perform better than tanh
2. In some cases GRU converges faster, while in others LSTM performs better
3. Neither is better overall; it depends on the task

“Hierarchical Recurrent Neural Network for Skeleton Based Action Recognition”, Yong Du, Wei Wang, et al., CVPR 2015

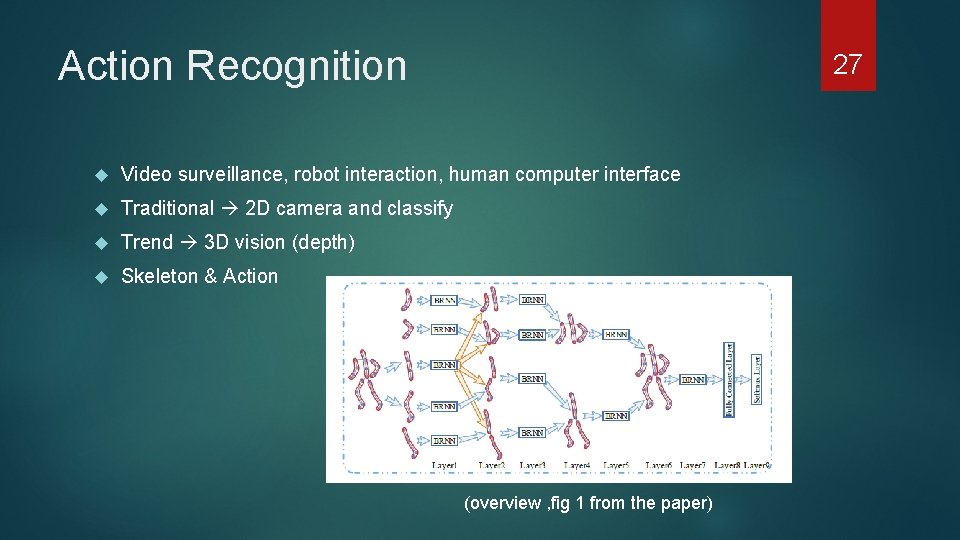

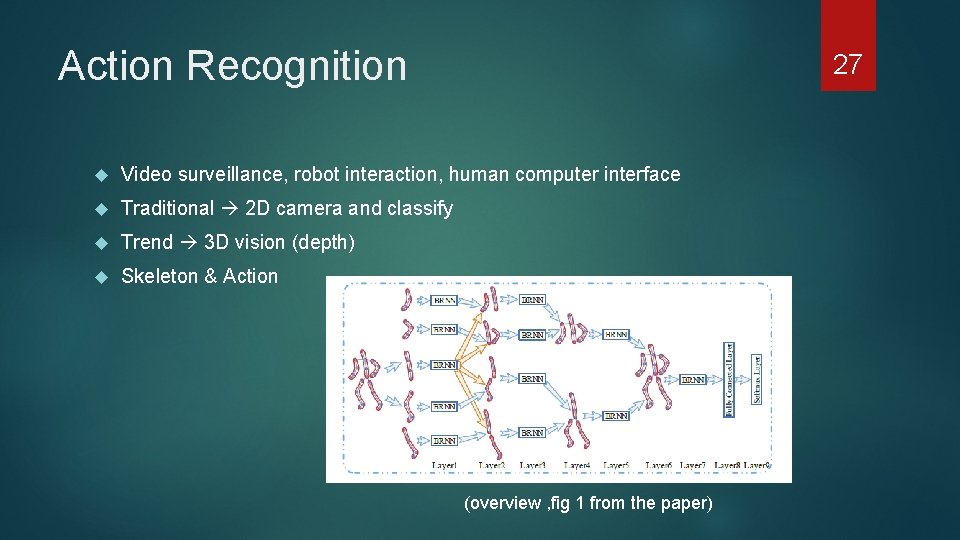

Action recognition

- Applications: video surveillance, robot interaction, human-computer interfaces
- Traditional: 2D camera images and classification
- Trend: 3D vision (depth), skeleton & action

(overview, fig. 1 from the paper)

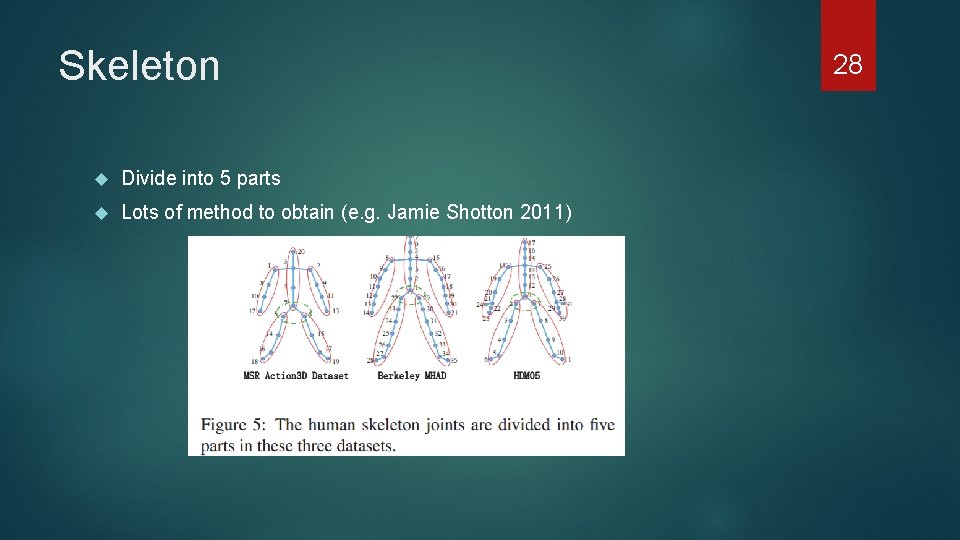

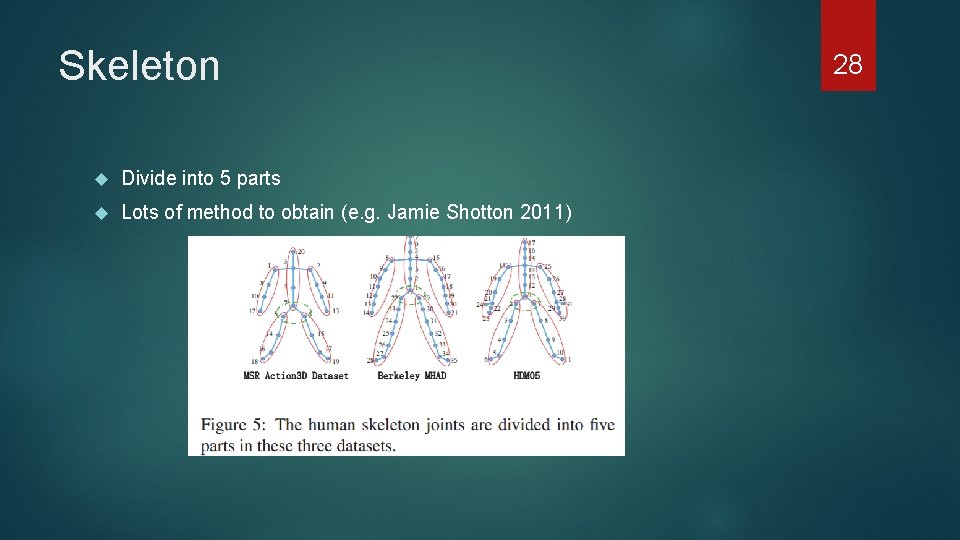

Skeleton

- Divided into 5 parts
- Many methods exist to obtain it (e.g. Jamie Shotton, 2011)

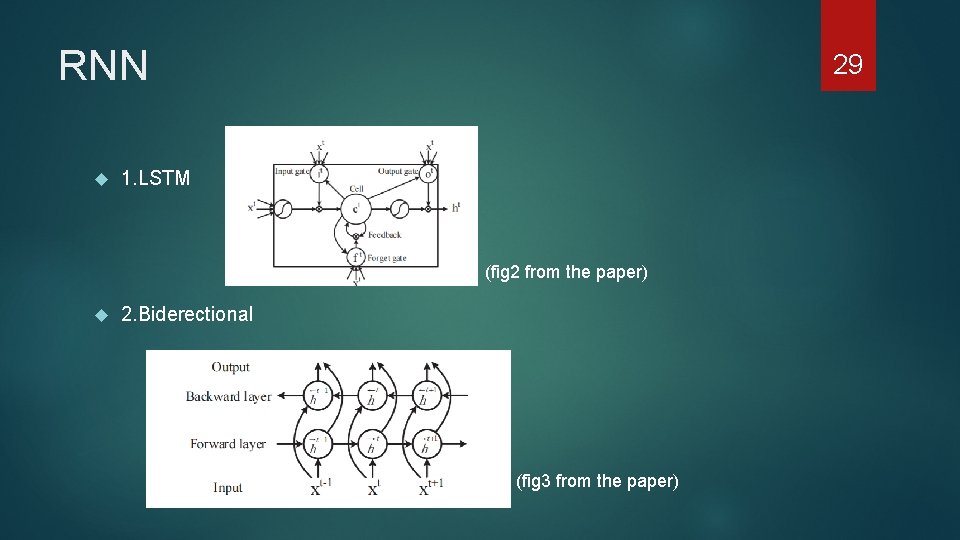

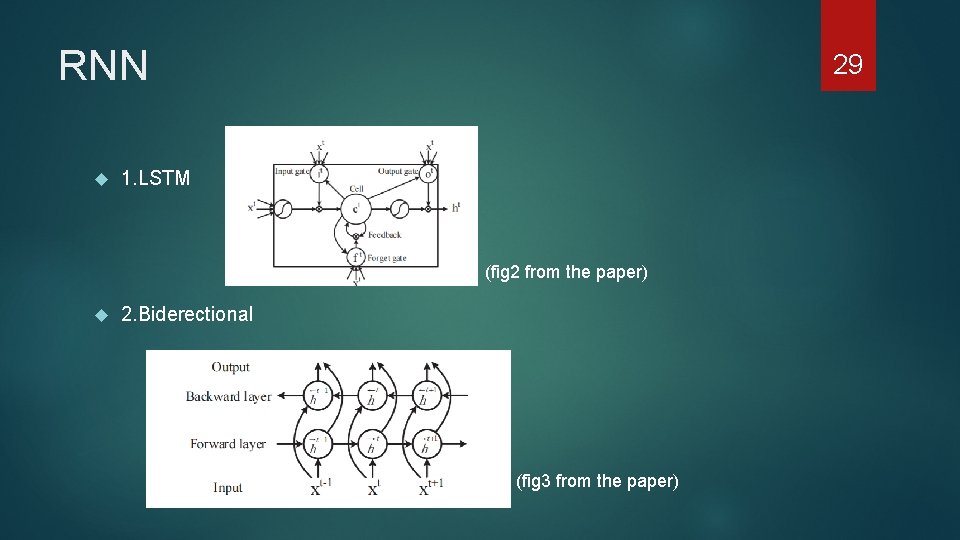

RNN

1. LSTM (fig. 2 from the paper)
2. Bidirectional (fig. 3 from the paper)

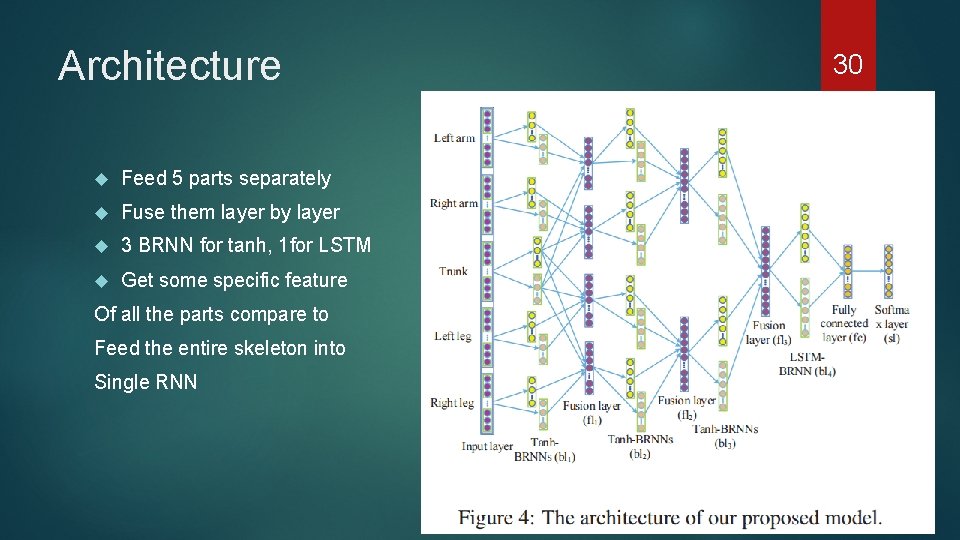

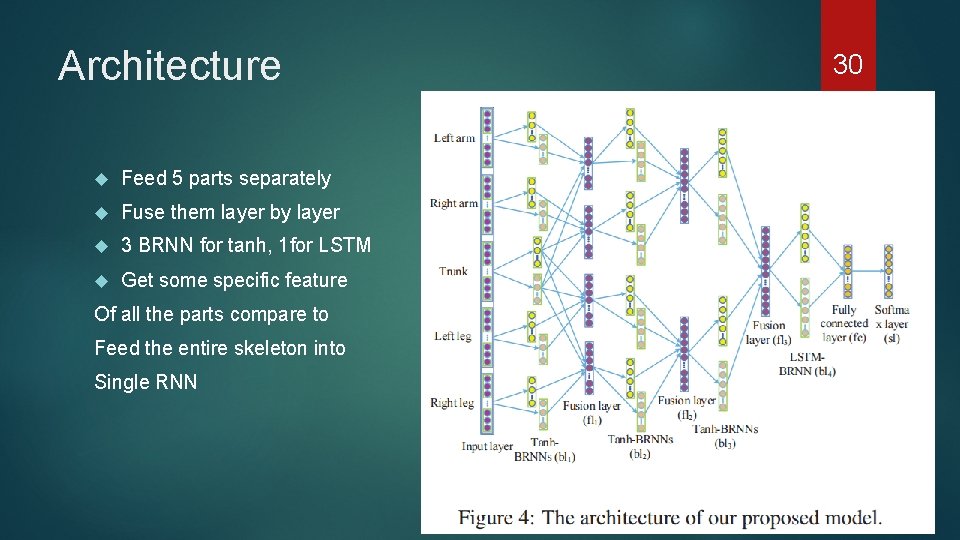

Architecture

- Feed the 5 parts separately
- Fuse them layer by layer
- 3 BRNN layers use tanh, 1 uses LSTM
- Extracts part-specific features, compared with feeding the entire skeleton into a single RNN

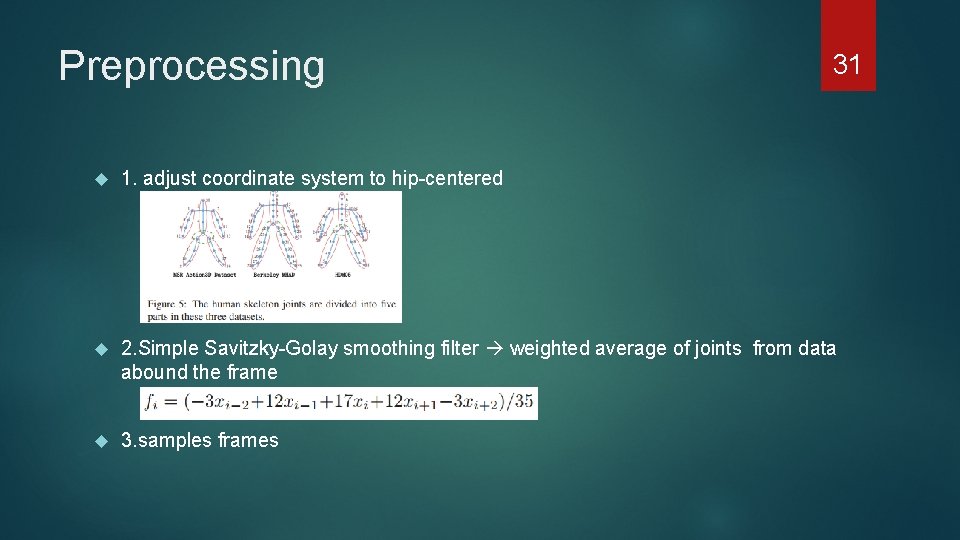

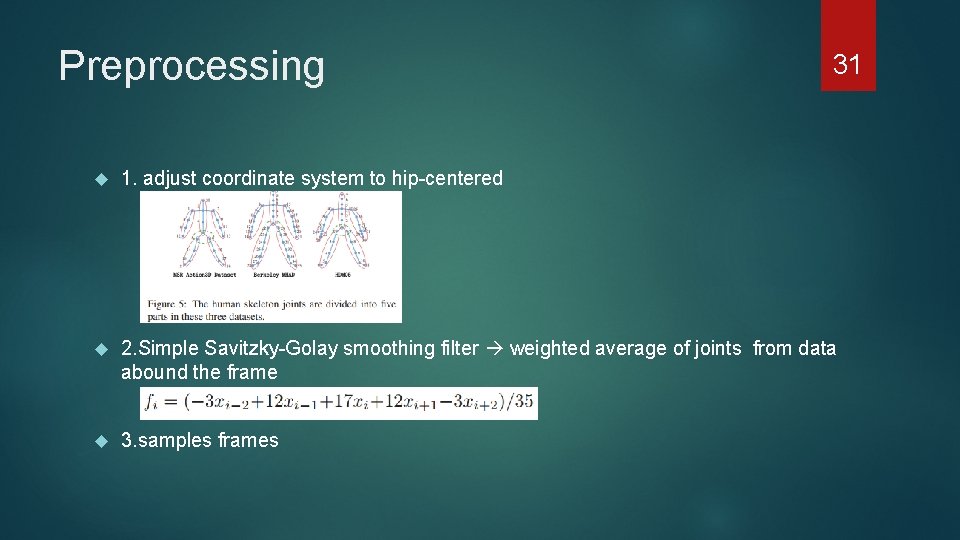

Preprocessing

1. Adjust the coordinate system to be hip-centered
2. Simple Savitzky-Golay smoothing filter: a weighted average of the joints over the frames around the current frame
3. Sample frames
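
The three preprocessing steps can be sketched as below. The details are assumptions made for illustration: skeleton data stored as a float array of shape (frames, joints, 3), the hip at joint index 0, the standard 5-point Savitzky-Golay weights (-3, 12, 17, 12, -3)/35, and a sampling step of 2. The function names are hypothetical.

```python
import numpy as np

# Standard 5-point quadratic Savitzky-Golay smoothing weights (they sum to 1).
SG_COEFFS = np.array([-3.0, 12.0, 17.0, 12.0, -3.0]) / 35.0

def hip_center(frames, hip_index=0):
    """frames: float array (T, J, 3). Subtract the hip joint from every joint, per frame."""
    return frames - frames[:, hip_index:hip_index + 1, :]

def savgol_smooth(frames):
    """Weighted average over the 5 frames around each frame; 2 frames at each end are kept as-is."""
    out = frames.copy()
    for t in range(2, frames.shape[0] - 2):
        window = frames[t - 2:t + 3]  # shape (5, J, 3)
        out[t] = np.tensordot(SG_COEFFS, window, axes=(0, 0))
    return out

def sample_frames(frames, step=2):
    """Keep every `step`-th frame (the step value is an assumption)."""
    return frames[::step]

# Illustrative random skeleton sequence: 20 frames, 15 joints.
rng = np.random.default_rng(0)
raw = rng.normal(size=(20, 15, 3))
processed = sample_frames(savgol_smooth(hip_center(raw)))
print(processed.shape)  # (10, 15, 3)
```

After centering, the hip joint sits at the origin in every frame, so the network sees body pose rather than the subject's position in the room.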

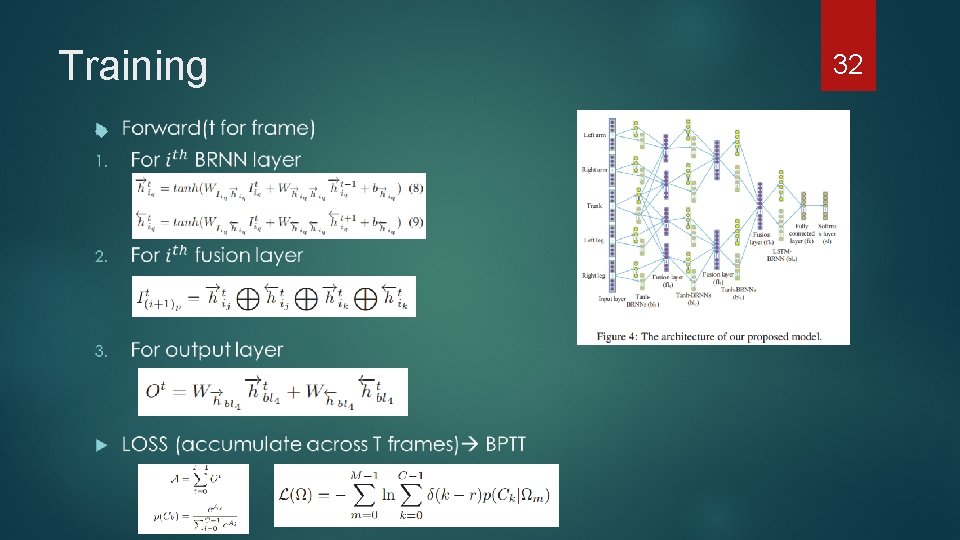

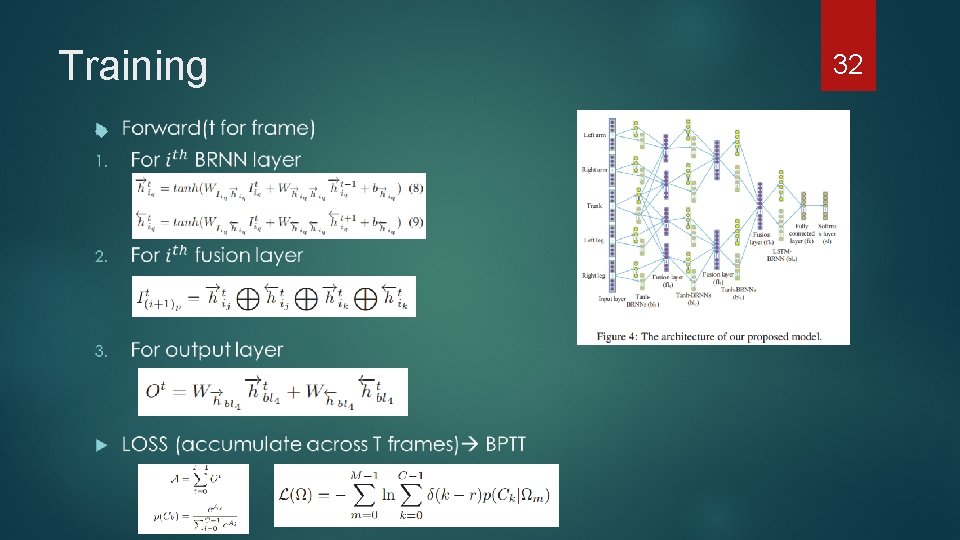

Training

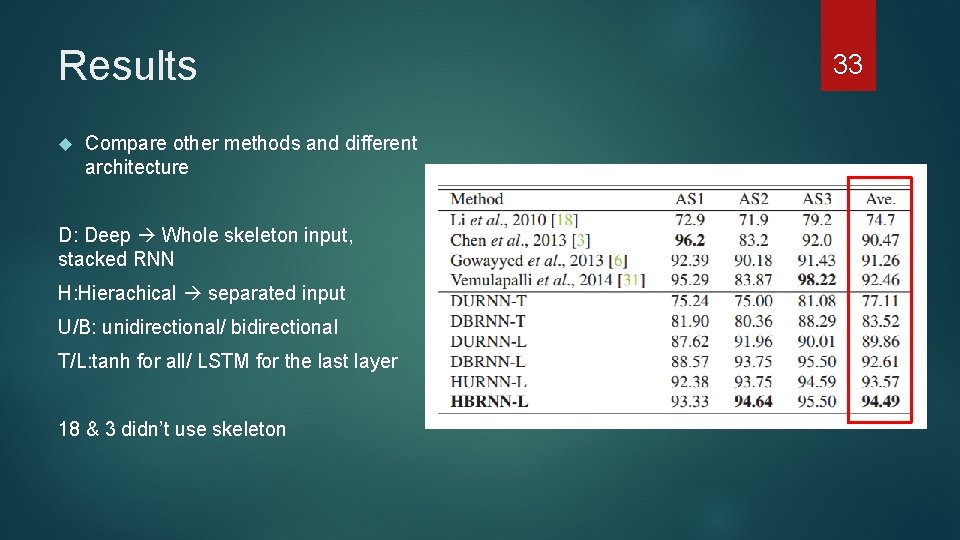

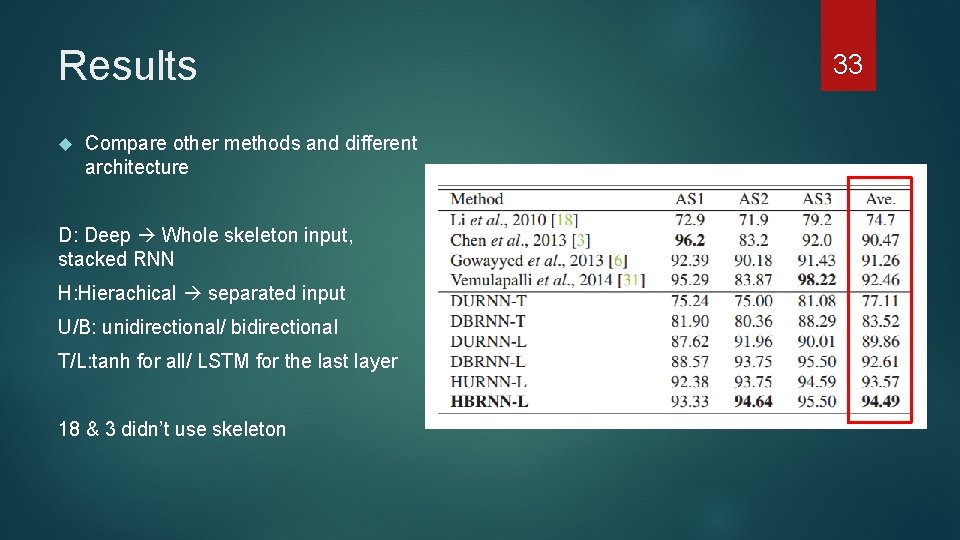

Results

- Comparison with other methods and between different architectures
- D: deep, whole-skeleton input, stacked RNN
- H: hierarchical, separated input
- U/B: unidirectional / bidirectional
- T/L: tanh for all layers / LSTM for the last layer
- Methods 18 & 3 didn’t use the skeleton

Part 4: Conclusion & Reference

Conclusion

- Information from the past, and even from the future, matters
- To overcome the long-term dependency challenge, we need to preserve important information while controlling the information flow, keeping only what is important
- LSTM, GRU, and other gated RNNs can perform better than the original RNN, but so far none of them beats the others on every task
- RNNs are increasingly valuable to research and use

References

- “Deep Learning”, Ian Goodfellow, Yoshua Bengio and Aaron Courville, 2016
- “Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation”, Kyunghyun Cho, Bart van Merrienboer, Caglar Gulcehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, Yoshua Bengio, 2014
- “Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling”, Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, Yoshua Bengio, 2014
- “Unsupervised Learning of Video Representations using LSTMs”, Nitish Srivastava, Elman Mansimov, Ruslan Salakhutdinov, 2015
- “Hierarchical Recurrent Neural Network for Skeleton Based Action Recognition”, Yong Du, Wei Wang, et al., CVPR 2015
- “Understanding LSTM Networks”, Christopher Olah