CSC 450 - AI: Intelligent Agents

Outline

- Agents and environments
- Rationality
- PEAS (Performance measure, Environment, Actuators, Sensors)
- Environment types
- Agent types

Agents

- An agent is anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators.
- Human agent: eyes, ears, and other organs for sensors; hands, legs, mouth, and other body parts for actuators.
- Robotic agent: cameras and infrared range finders for sensors; various motors for actuators.

Agents

- Percept: the agent's perceptual inputs at any given instant.
- Percept sequence: the complete history of everything the agent has ever perceived.
- An agent's choice of action at any given instant can depend on the entire percept sequence observed to date.

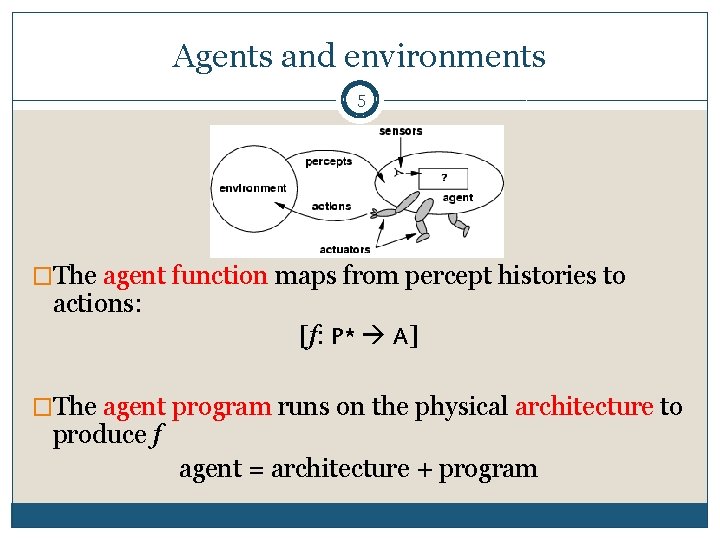

Agents and environments

- The agent function maps from percept histories to actions: f: P* → A
- The agent program runs on the physical architecture to produce f.
- agent = architecture + program

![Vacuum-cleaner world](https://slidetodoc.com/presentation_image_h/e57bc96ddc06827f97dd114b7f37fdbd/image-6.jpg)

Vacuum-cleaner world

- Percepts: location and contents, e.g., [A, Dirty], [B, Clean]
- Actions: Left, Right, Suck, NoOp

![A vacuum-cleaner agent](https://slidetodoc.com/presentation_image_h/e57bc96ddc06827f97dd114b7f37fdbd/image-7.jpg)

A vacuum-cleaner agent

| Percept sequence | Action |
|---|---|
| [A, Clean] | Right |
| [A, Dirty] | Suck |
| [B, Clean] | Left |
| [B, Dirty] | Suck |
| [A, Clean], [A, Clean] | Right |
| [A, Clean], [A, Dirty] | Suck |

Figure 2.3: Partial tabulation of a simple agent function for the vacuum-cleaner world.

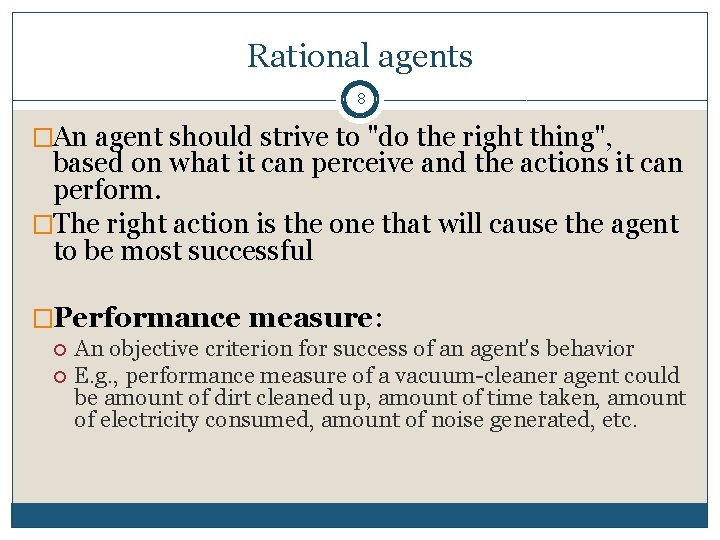

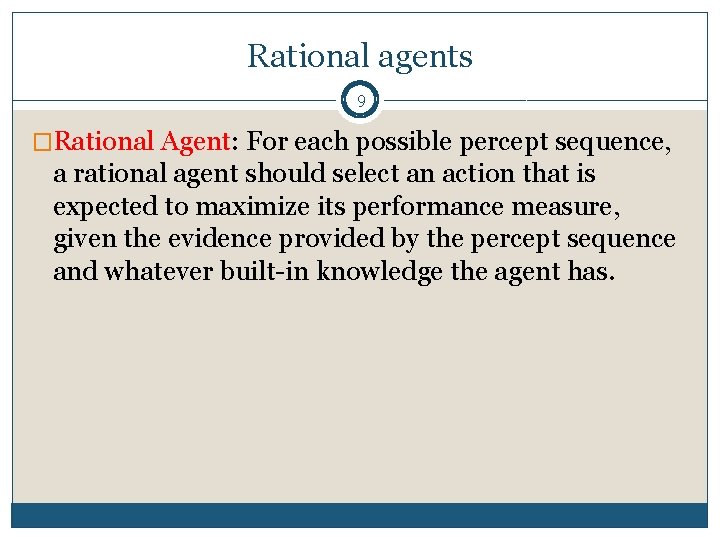

Rational agents

- An agent should strive to "do the right thing", based on what it can perceive and the actions it can perform.
- The right action is the one that will cause the agent to be most successful.
- Performance measure: an objective criterion for the success of an agent's behavior.
- E.g., the performance measure of a vacuum-cleaner agent could be the amount of dirt cleaned up, amount of time taken, amount of electricity consumed, amount of noise generated, etc.

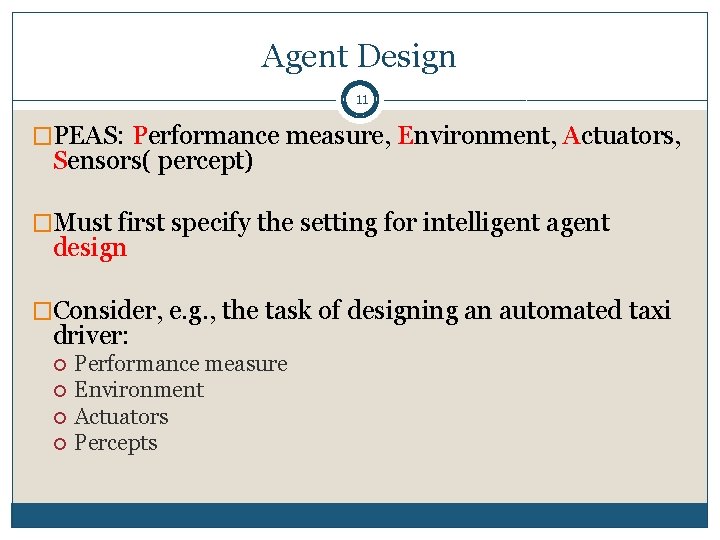

Rational agents

- Rational agent: for each possible percept sequence, a rational agent should select an action that is expected to maximize its performance measure, given the evidence provided by the percept sequence and whatever built-in knowledge the agent has.

Rational agents

- Agents can perform actions in order to modify future percepts so as to obtain useful information (information gathering, exploration).
- An agent is autonomous if its behavior is determined by its own experience (with the ability to learn and adapt).

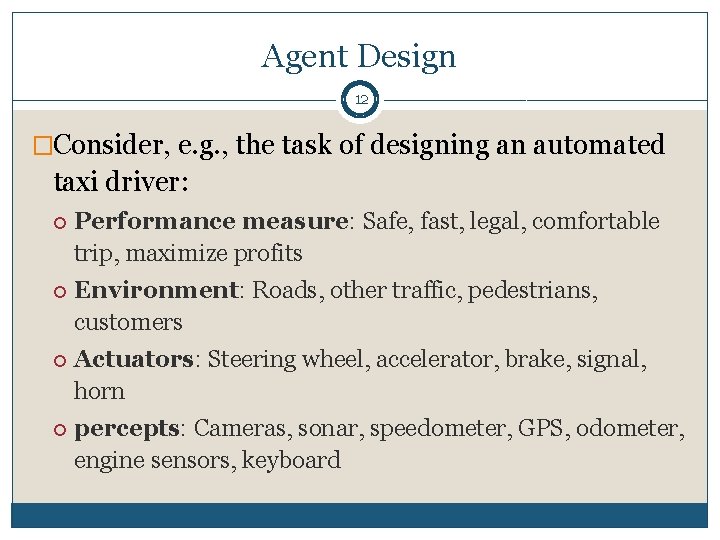

Agent Design

- PEAS: Performance measure, Environment, Actuators, Sensors (percepts)
- The setting must be specified first when designing an intelligent agent.
- Consider, e.g., the task of designing an automated taxi driver. Four things must be specified:
  - Performance measure
  - Environment
  - Actuators
  - Percepts

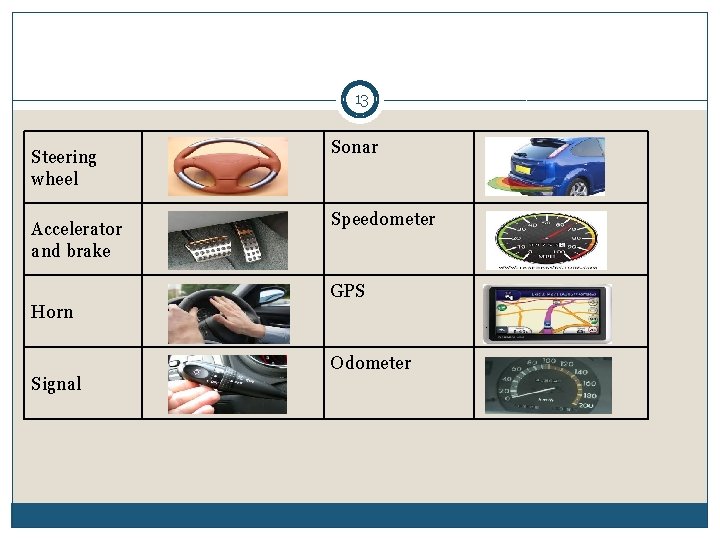

Agent Design

Consider, e.g., the task of designing an automated taxi driver:

- Performance measure: safe, fast, legal, comfortable trip, maximize profits
- Environment: roads, other traffic, pedestrians, customers
- Actuators: steering wheel, accelerator, brake, signal, horn
- Percepts (sensors): cameras, sonar, speedometer, GPS, odometer, engine sensors, keyboard
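A PEAS description is essentially a four-field record, so it can be written down as data before any agent logic exists. The sketch below is only one possible encoding: the `PEAS` class and its field names are hypothetical, while the values are the taxi-driver entries from the slide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PEAS:
    """Hypothetical container for a PEAS task description."""
    performance_measure: tuple[str, ...]
    environment: tuple[str, ...]
    actuators: tuple[str, ...]
    sensors: tuple[str, ...]


# Values copied from the automated taxi driver example.
taxi = PEAS(
    performance_measure=("safe", "fast", "legal", "comfortable trip", "maximize profits"),
    environment=("roads", "other traffic", "pedestrians", "customers"),
    actuators=("steering wheel", "accelerator", "brake", "signal", "horn"),
    sensors=("cameras", "sonar", "speedometer", "GPS", "odometer",
             "engine sensors", "keyboard"),
)

for field_name in ("performance_measure", "environment", "actuators", "sensors"):
    print(field_name, "->", ", ".join(getattr(taxi, field_name)))
```

Writing the description this way makes it easy to compare tasks side by side, for example the taxi driver against the medical diagnosis system.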

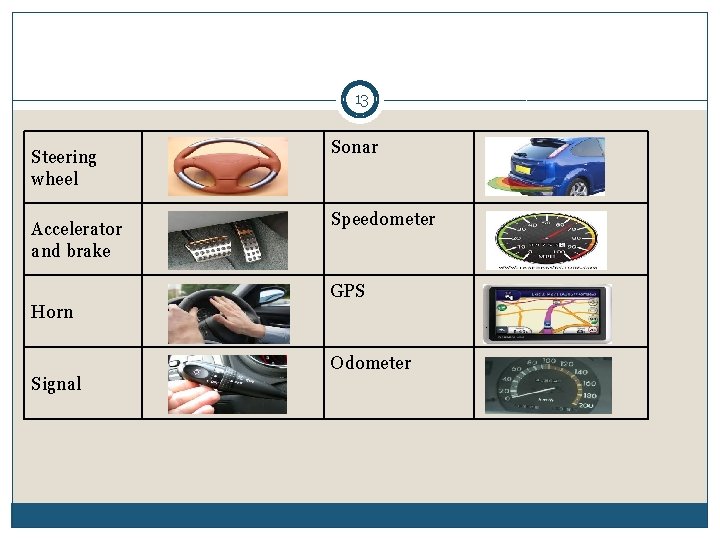

[Figure: automated taxi with labeled parts: steering wheel, accelerator and brake, sonar, speedometer, GPS, horn, odometer, signal]

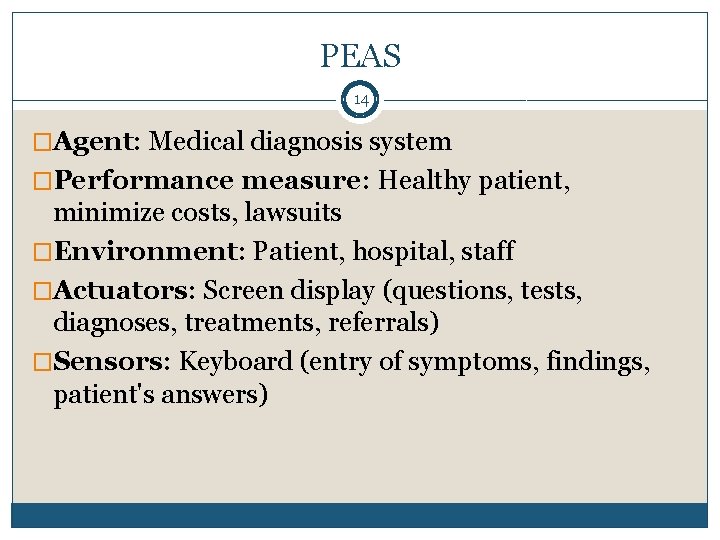

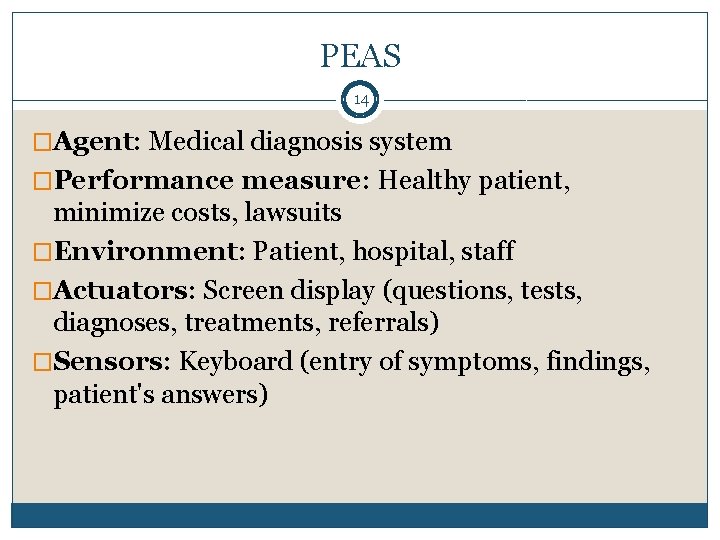

PEAS

- Agent: medical diagnosis system
- Performance measure: healthy patient, minimize costs, lawsuits
- Environment: patient, hospital, staff
- Actuators: screen display (questions, tests, diagnoses, treatments, referrals)
- Sensors: keyboard (entry of symptoms, findings, patient's answers)

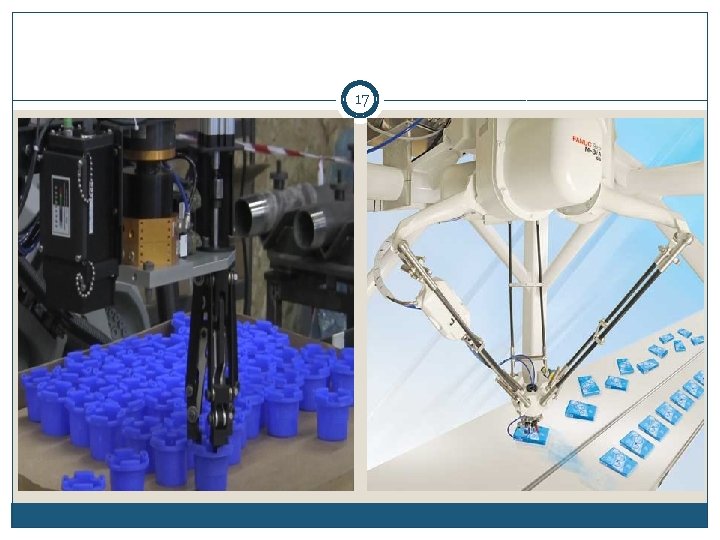

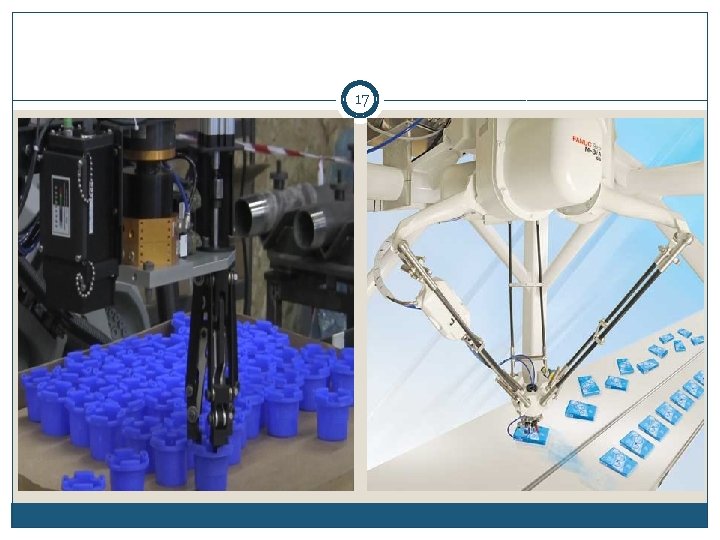

Agent Design

- Agent: part-picking robot
- Performance measure: percentage of parts in correct bins
- Environment: conveyor belt with parts, bins
- Actuators: jointed arm and hand
- Sensors: camera, joint angle sensors

Agent Design

- Agent: intelligent house
- Environment: occupants enter and leave the house, occupants enter and leave rooms; daily variation in outside light and temperature
- Goals: occupants warm, room lights on when the room is occupied, house energy efficient
- Percepts: signals from temperature sensor, movement sensor, clock, sound sensor
- Actions: room heaters on/off, lights on/off


PEAS

- Agent: interactive English tutor
- Performance measure: maximize student's score on test
- Environment: set of students
- Actuators: screen display (exercises, suggestions, corrections)
- Sensors: keyboard

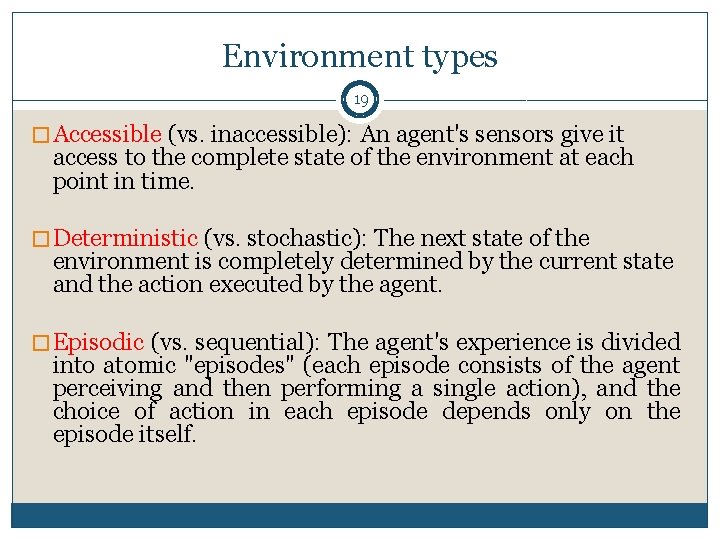

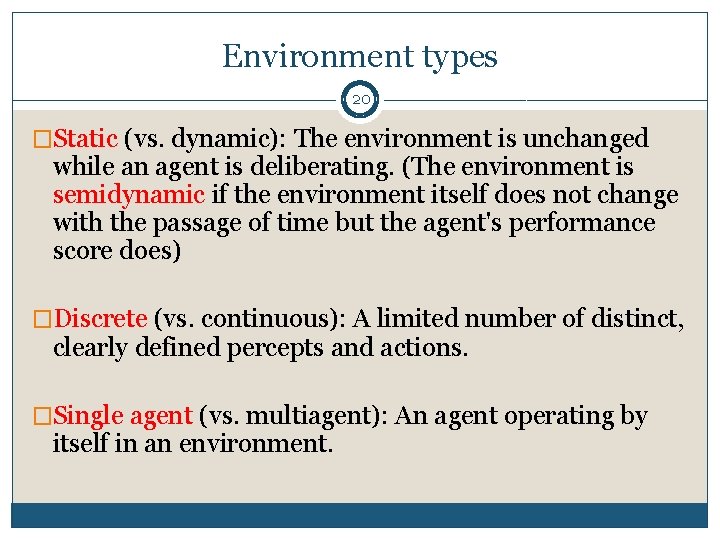

Environment types

- Accessible (vs. inaccessible): the agent's sensors give it access to the complete state of the environment at each point in time.
- Deterministic (vs. stochastic): the next state of the environment is completely determined by the current state and the action executed by the agent.
- Episodic (vs. sequential): the agent's experience is divided into atomic "episodes" (each episode consists of the agent perceiving and then performing a single action), and the choice of action in each episode depends only on the episode itself.
- Static (vs. dynamic): the environment is unchanged while the agent is deliberating. (The environment is semidynamic if the environment itself does not change with the passage of time but the agent's performance score does.)
- Discrete (vs. continuous): a limited number of distinct, clearly defined percepts and actions.
- Single agent (vs. multiagent): an agent operating by itself in an environment.

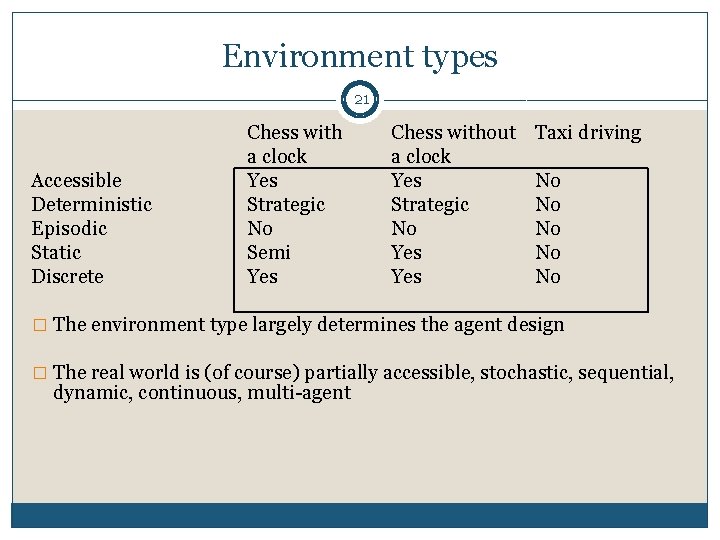

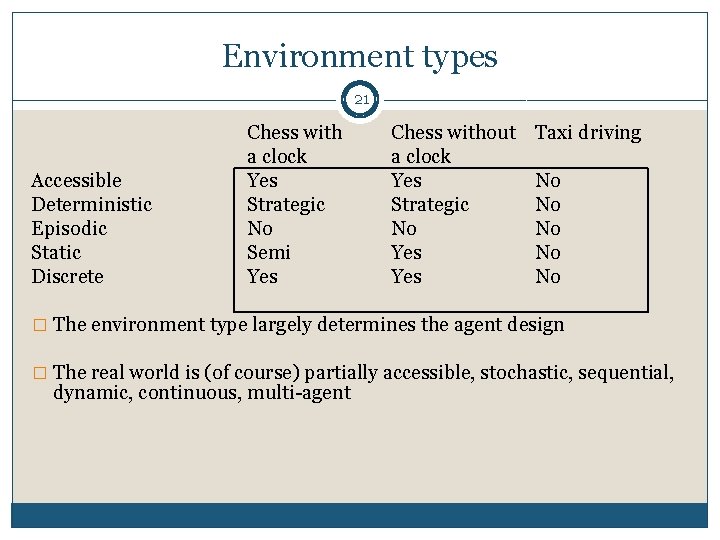

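Environment types

| Environment | Accessible | Deterministic | Episodic | Static | Discrete |
|---|---|---|---|---|---|
| Chess with a clock | Yes | Strategic | No | Semi | Yes |
| Chess without a clock | Yes | Strategic | No | Yes | Yes |
| Taxi driving | No | No | No | No | No |

("Strategic" means deterministic except for the actions of other agents.)

- The environment type largely determines the agent design.
- The real world is (of course) partially accessible, stochastic, sequential, dynamic, continuous, multi-agent.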

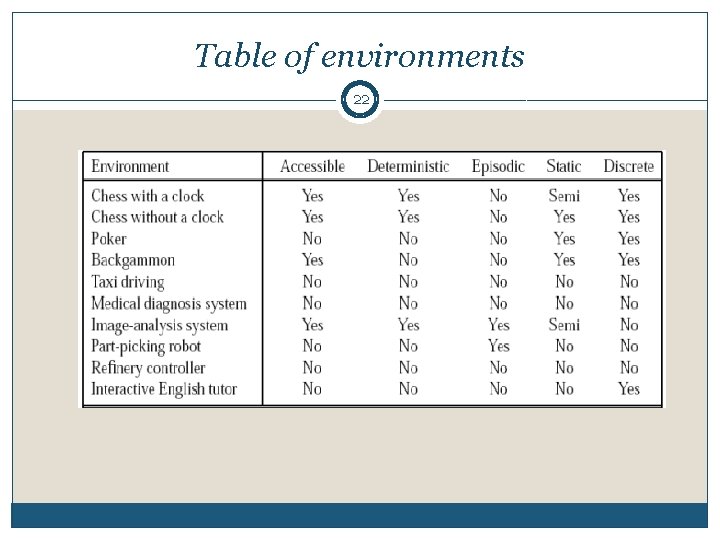

Table of environments

End of Lecture

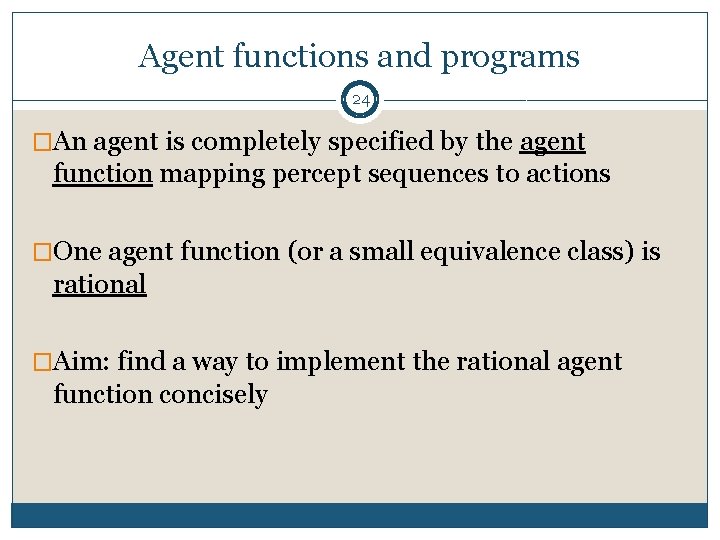

Agent functions and programs

- An agent is completely specified by the agent function mapping percept sequences to actions.
- One agent function (or a small equivalence class) is rational.
- Aim: find a way to implement the rational agent function concisely.

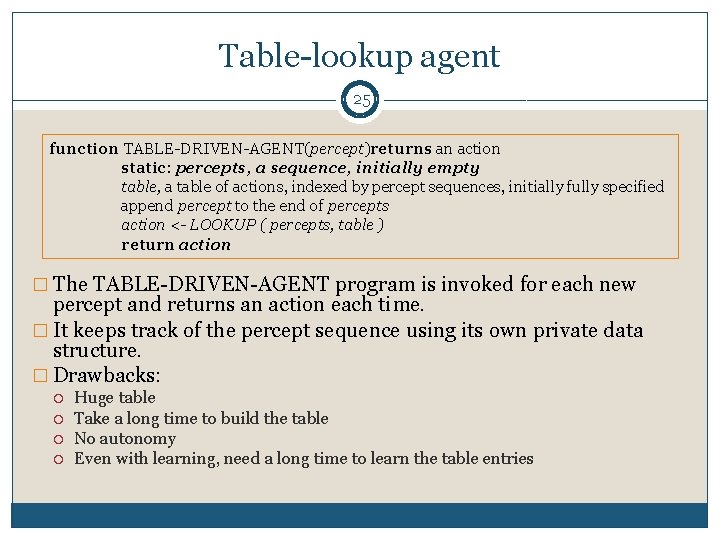

Table-lookup agent

    function TABLE-DRIVEN-AGENT(percept) returns an action
      static: percepts, a sequence, initially empty
              table, a table of actions, indexed by percept sequences, initially fully specified

      append percept to the end of percepts
      action <- LOOKUP(percepts, table)
      return action

- The TABLE-DRIVEN-AGENT program is invoked for each new percept and returns an action each time.
- It keeps track of the percept sequence using its own private data structure.
- Drawbacks:
  - Huge table
  - Takes a long time to build the table
  - No autonomy
  - Even with learning, it needs a long time to learn the table entries
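The pseudocode above translates almost line for line into Python. The following is a minimal sketch, not a reference implementation: the class name `TableDrivenAgent` and the `default` fallback action are assumptions added here (the pseudocode assumes a fully specified table, so it never needs a fallback). The table entries are the six rows of Figure 2.3.

```python
class TableDrivenAgent:
    """Looks up an action using the full percept sequence seen so far."""

    def __init__(self, table, default="NoOp"):
        self.table = table      # maps percept sequences (tuples) to actions
        self.percepts = []      # private percept history, initially empty
        self.default = default  # assumption: used when a sequence is not tabulated

    def __call__(self, percept):
        self.percepts.append(percept)
        return self.table.get(tuple(self.percepts), self.default)


# Partial table for the two-square vacuum world (rows of Figure 2.3).
table = {
    (("A", "Clean"),): "Right",
    (("A", "Dirty"),): "Suck",
    (("B", "Clean"),): "Left",
    (("B", "Dirty"),): "Suck",
    (("A", "Clean"), ("A", "Clean")): "Right",
    (("A", "Clean"), ("A", "Dirty")): "Suck",
}

agent = TableDrivenAgent(table)
first = agent(("A", "Clean"))   # looked up under a sequence of length 1
second = agent(("A", "Dirty"))  # looked up under a sequence of length 2
print(first, second)
```

Because the key is the whole history, the table needs one entry per possible percept sequence, which is why it grows so quickly with the length of the agent's lifetime.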

![Agent program for a vacuum-cleaner agent](https://slidetodoc.com/presentation_image_h/e57bc96ddc06827f97dd114b7f37fdbd/image-26.jpg)

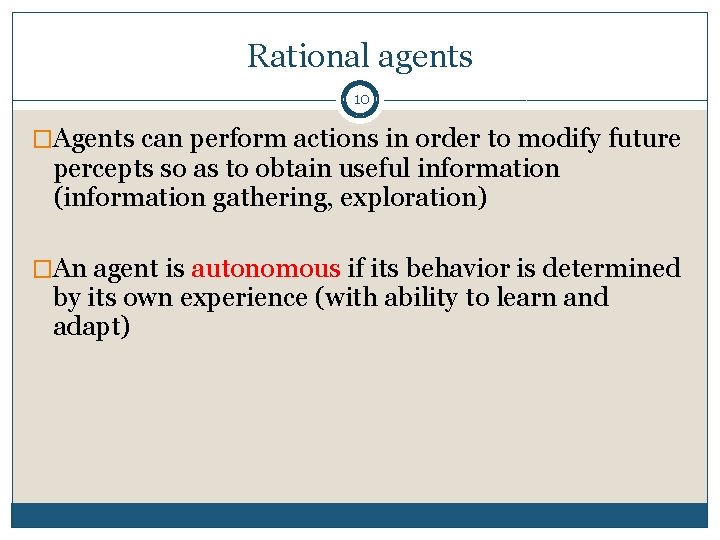

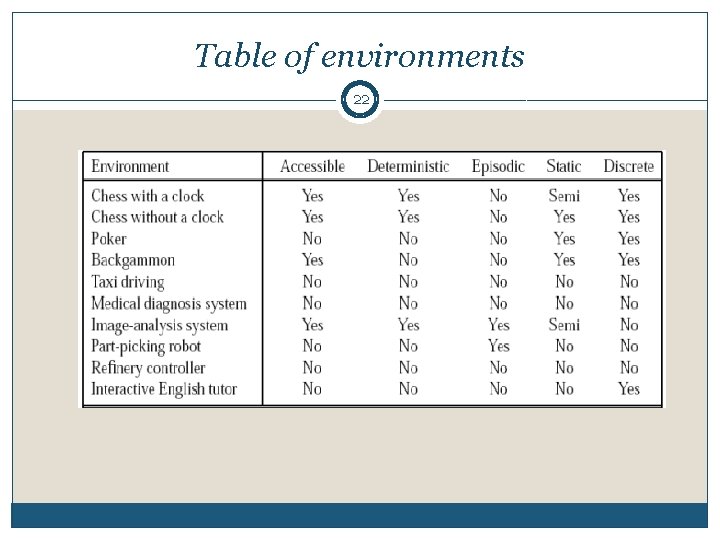

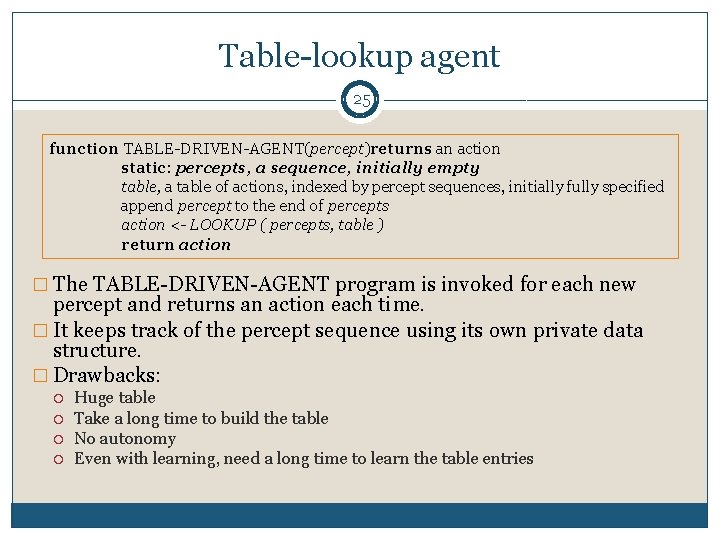

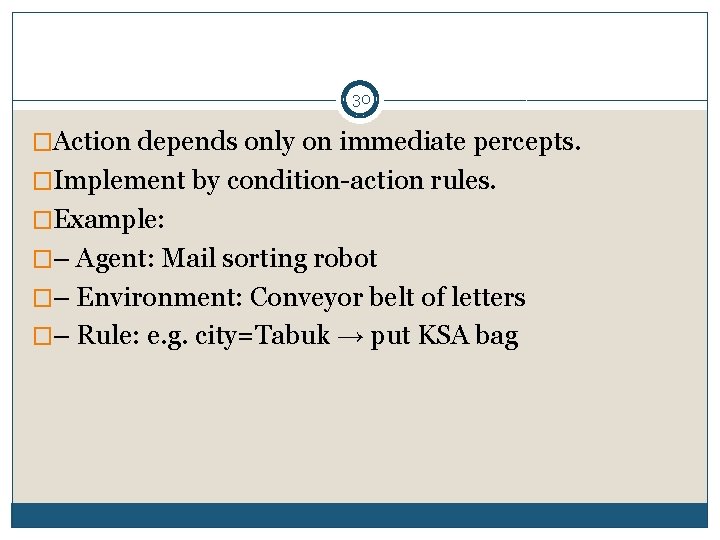

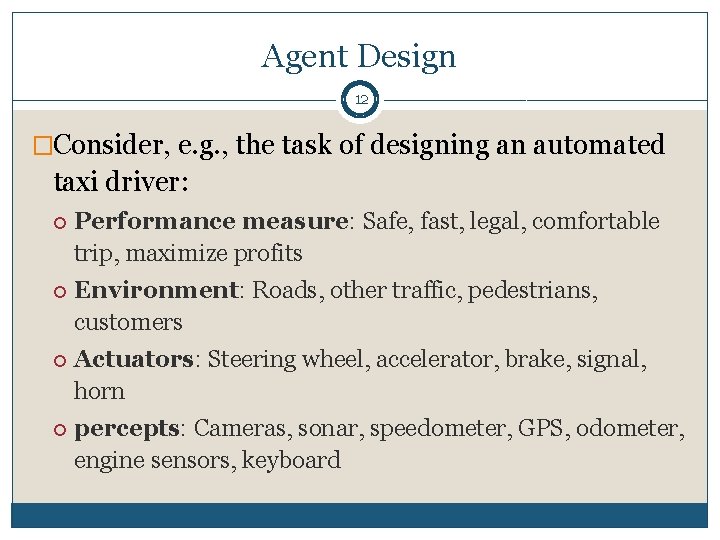

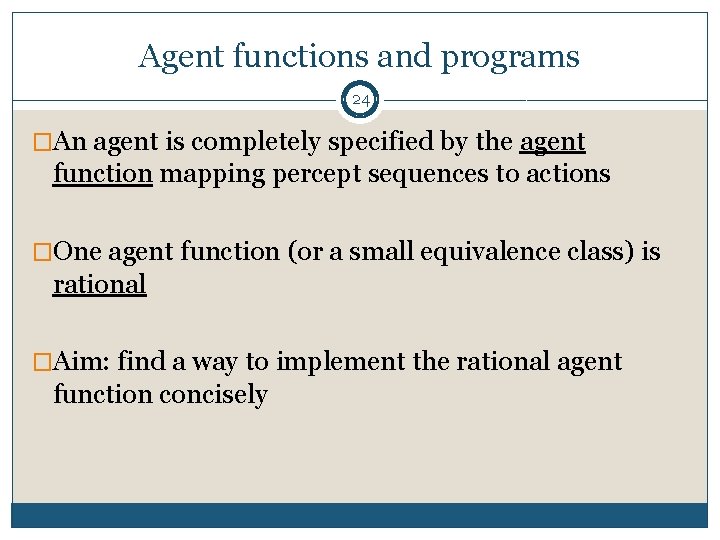

Agent program for a vacuum-cleaner agent

    function REFLEX-VACUUM-AGENT([location, status]) returns an action
      if status = Dirty then return Suck
      else if location = A then return Right
      else if location = B then return Left

- The agent program for a simple reflex agent in the two-state vacuum environment.
- This program implements the agent function tabulated in Figure 2.3.
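As a rough illustration, the reflex vacuum program can be run against a tiny simulated world. The `run` helper and the way the world is stored (a dict from square name to status) are assumptions made for this sketch; only `reflex_vacuum_agent` mirrors the pseudocode.

```python
def reflex_vacuum_agent(percept):
    """Choose an action from the current percept only."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    if location == "A":
        return "Right"
    if location == "B":
        return "Left"
    raise ValueError(f"unknown location: {location!r}")


def run(world, location, steps):
    """Hypothetical simulator: apply the agent's actions to a two-square world."""
    actions = []
    for _ in range(steps):
        action = reflex_vacuum_agent((location, world[location]))
        actions.append(action)
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
    return actions


actions = run({"A": "Dirty", "B": "Dirty"}, "A", steps=4)
print(actions)
```

Note that the agent never stops: once both squares are clean it keeps moving back and forth, because nothing in a single percept tells it that the other square is already clean.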

Agent types

Four basic types, in order of increasing generality:

- Simple reflex agents
- Model-based reflex agents
- Goal-based agents
- Utility-based agents

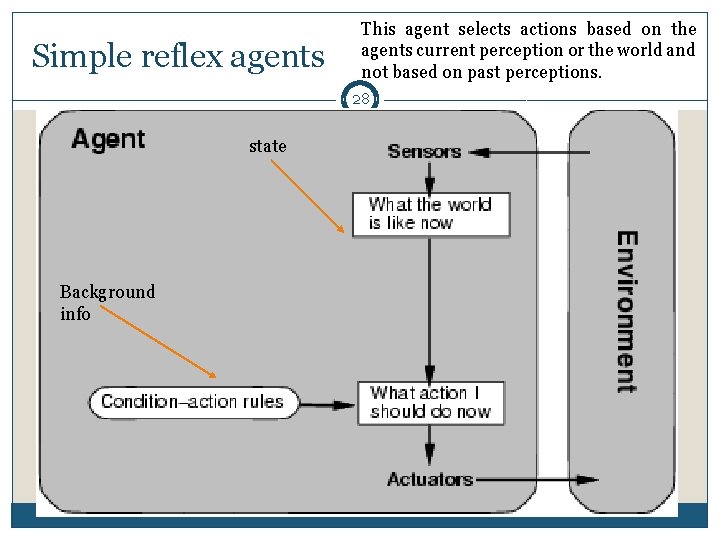

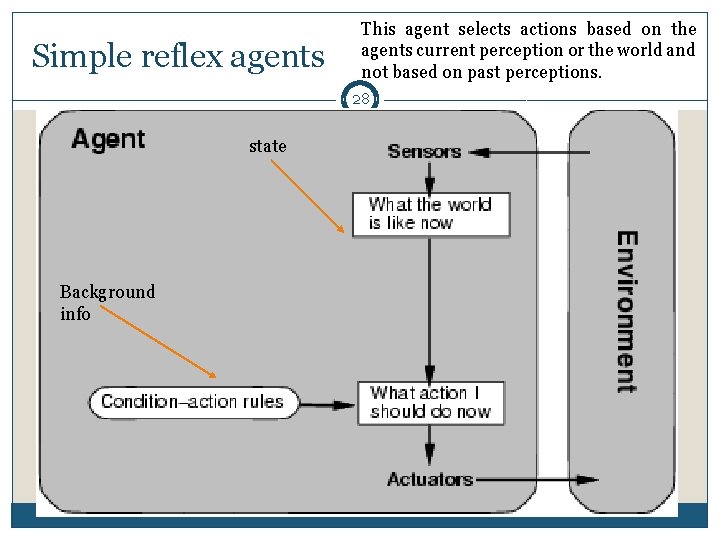

Simple reflex agents

This agent selects actions based on its current perception of the world, not on past perceptions.

[Diagram labels: state, background info]

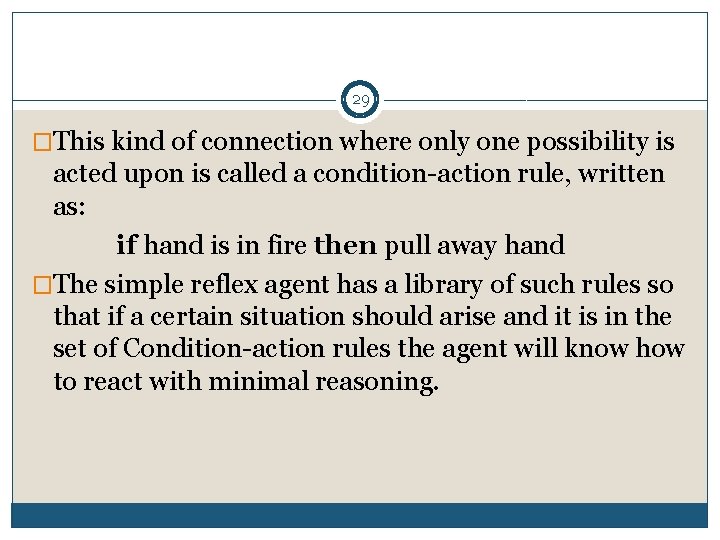

- This kind of connection, where only one possibility is acted upon, is called a condition-action rule, written as: if hand is in fire then pull away hand.
- The simple reflex agent has a library of such rules, so that if a certain situation arises and it is covered by the set of condition-action rules, the agent knows how to react with minimal reasoning.

- Action depends only on immediate percepts.
- Implement by condition-action rules.
- Example:
  - Agent: mail-sorting robot
  - Environment: conveyor belt of letters
  - Rule: e.g., city = Tabuk → put in KSA bag

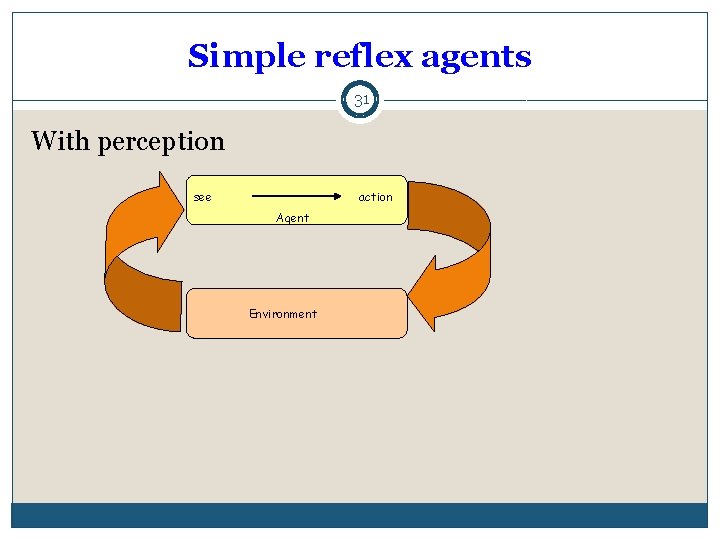

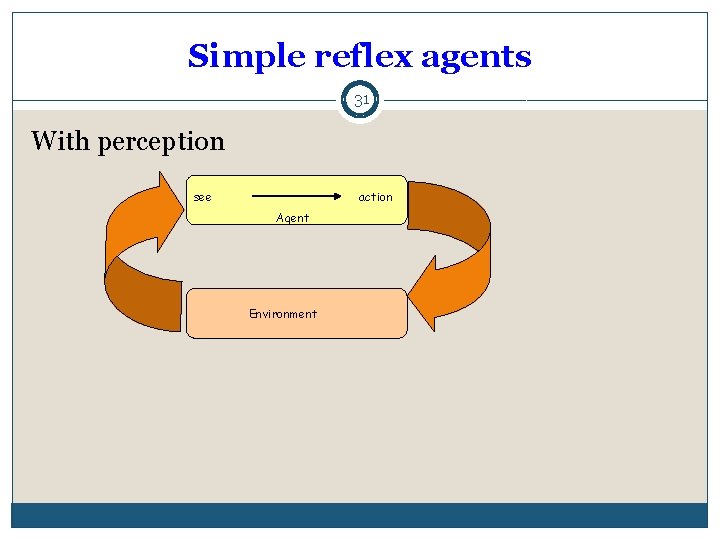

Simple reflex agents (with perception)

[Diagram: the agent perceives the environment ("see") and acts on it ("action")]

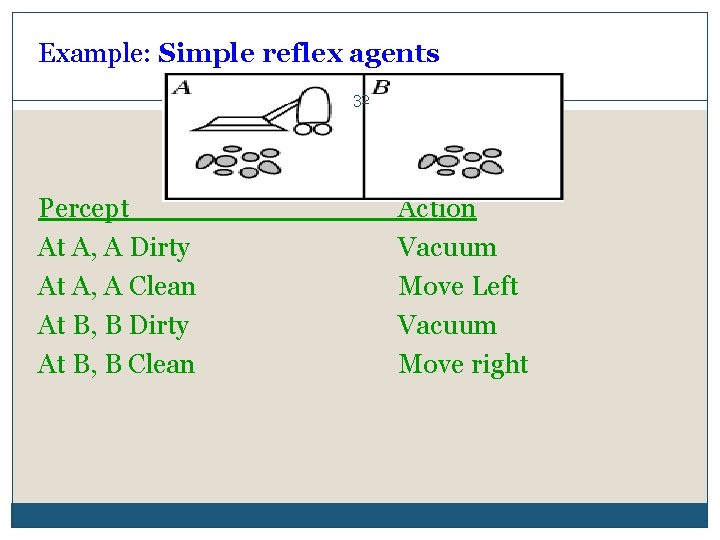

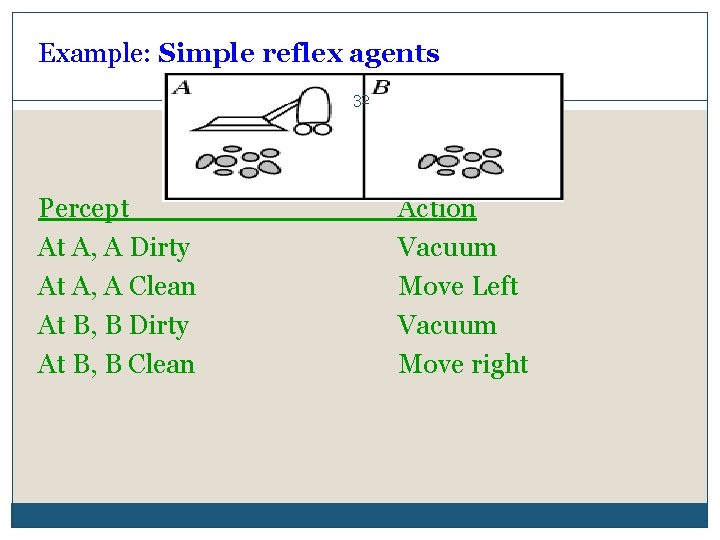

Example: Simple reflex agents

| Percept | Action |
|---|---|
| At A, A Dirty | Vacuum |
| At A, A Clean | Move right |
| At B, B Dirty | Vacuum |
| At B, B Clean | Move left |

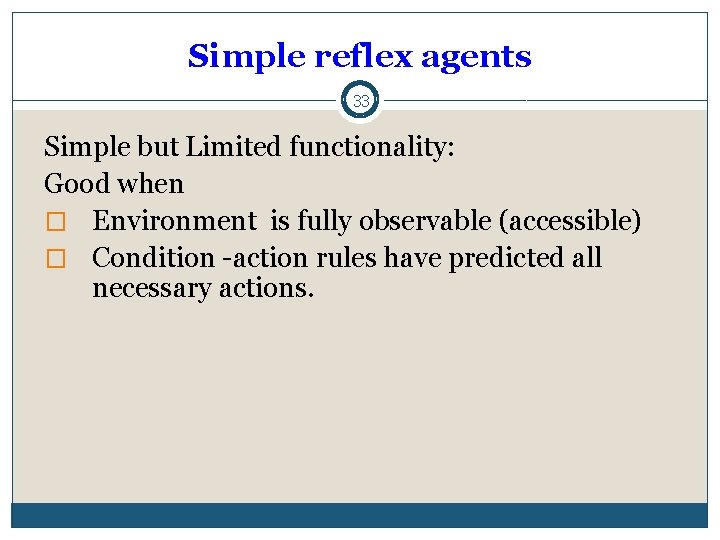

Simple reflex agents

Simple, but with limited functionality. Good when:

- The environment is fully observable (accessible).
- The condition-action rules have predicted all necessary actions.

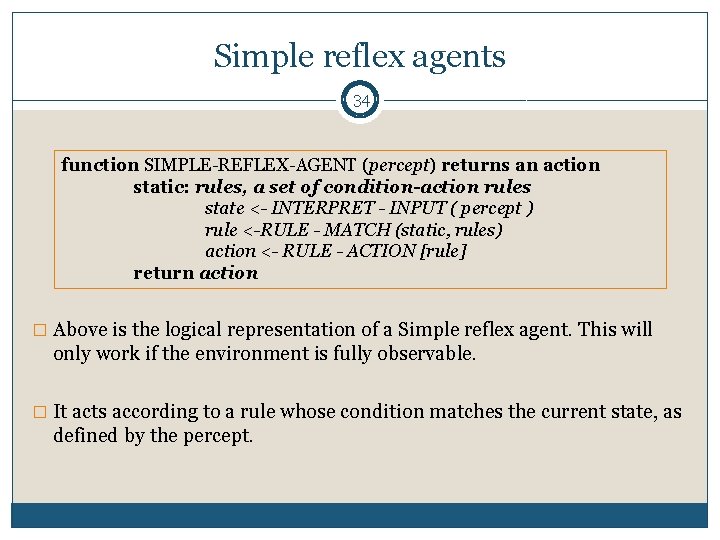

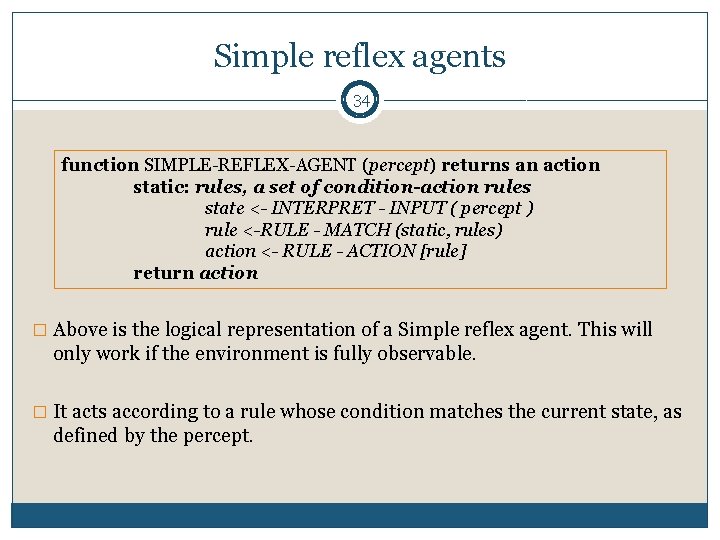

Simple reflex agents

    function SIMPLE-REFLEX-AGENT(percept) returns an action
      static: rules, a set of condition-action rules

      state <- INTERPRET-INPUT(percept)
      rule <- RULE-MATCH(state, rules)
      action <- RULE-ACTION[rule]
      return action

- Above is the logical representation of a simple reflex agent. It only works if the environment is fully observable.
- It acts according to a rule whose condition matches the current state, as defined by the percept.
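One way to make the SIMPLE-REFLEX-AGENT pseudocode concrete is with the mail-sorting example. This is a sketch under stated assumptions: the percept format (an address label whose last comma-separated field is the city), the `default` action, and the second rule are all hypothetical; only the Tabuk rule comes from the slides.

```python
def interpret_input(percept):
    """Assumed percept format: a label whose last comma-separated field is the city."""
    return {"city": percept.rsplit(",", 1)[-1].strip()}


def rule_match(state, rules):
    """Return the first (condition, action) rule whose condition holds, else None."""
    for rule in rules:
        condition, _action = rule
        if condition(state):
            return rule
    return None


def simple_reflex_agent(percept, rules, default="NoOp"):
    state = interpret_input(percept)
    rule = rule_match(state, rules)
    # RULE-ACTION[rule]: the action is the second element of the matched rule.
    return rule[1] if rule is not None else default


rules = [
    (lambda s: s["city"] == "Tabuk", "put in KSA bag"),    # rule from the slides
    (lambda s: s["city"] == "Cairo", "put in Egypt bag"),  # hypothetical extra rule
]

print(simple_reflex_agent("P.O. Box 1, Tabuk", rules))
```

The agent keeps no memory between letters, so each call depends only on the label currently in front of it.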


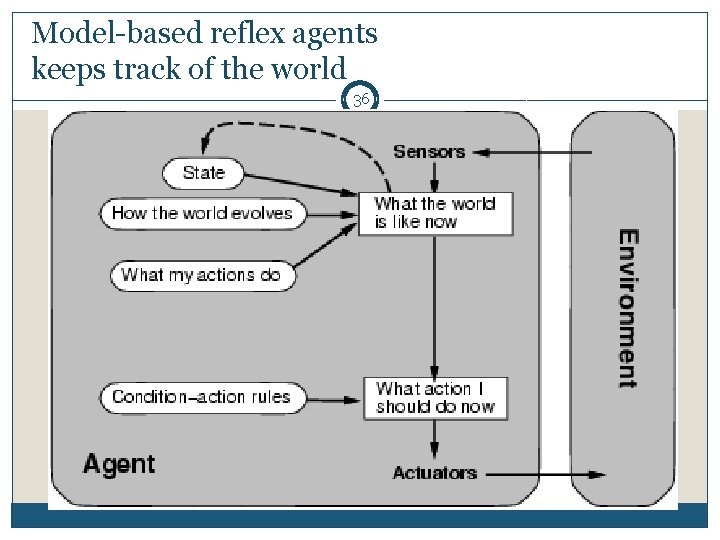

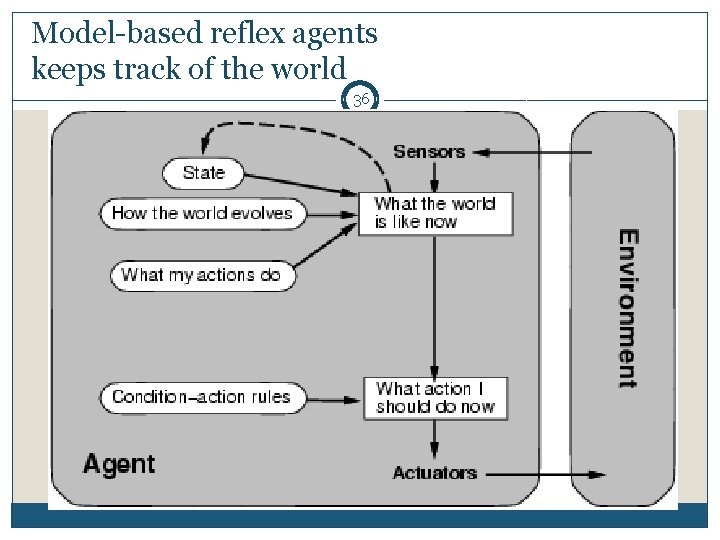

Model-based reflex agents: keeping track of the world

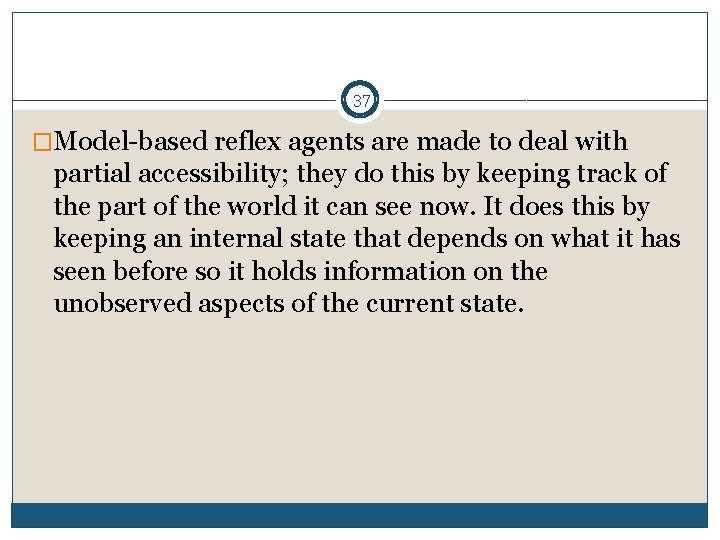

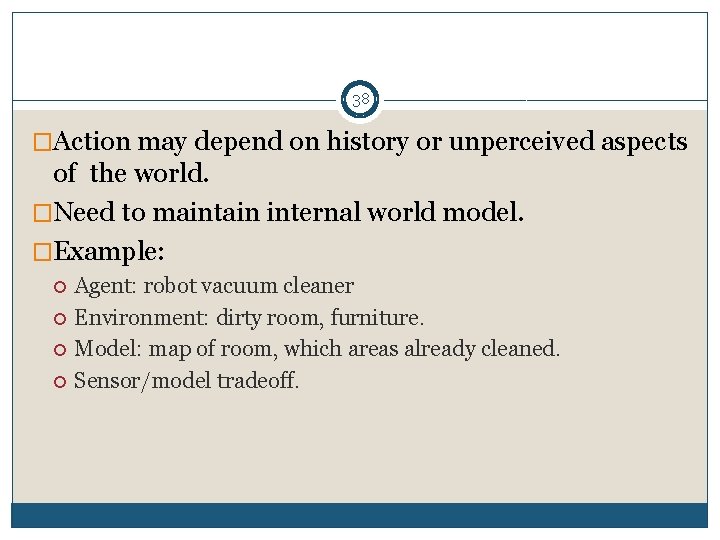

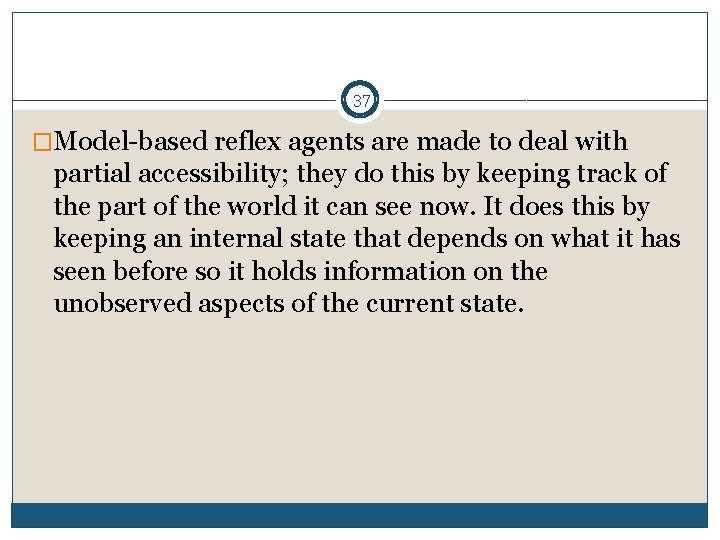

- Model-based reflex agents are made to deal with partial accessibility. They do this by keeping track of the part of the world they cannot currently see: they maintain an internal state that depends on what they have seen before, so it holds information on the unobserved aspects of the current state.

- Action may depend on history or unperceived aspects of the world.
- Need to maintain an internal world model.
- Example:
  - Agent: robot vacuum cleaner
  - Environment: dirty room, furniture
  - Model: map of the room, which areas are already cleaned
- Sensor/model tradeoff.

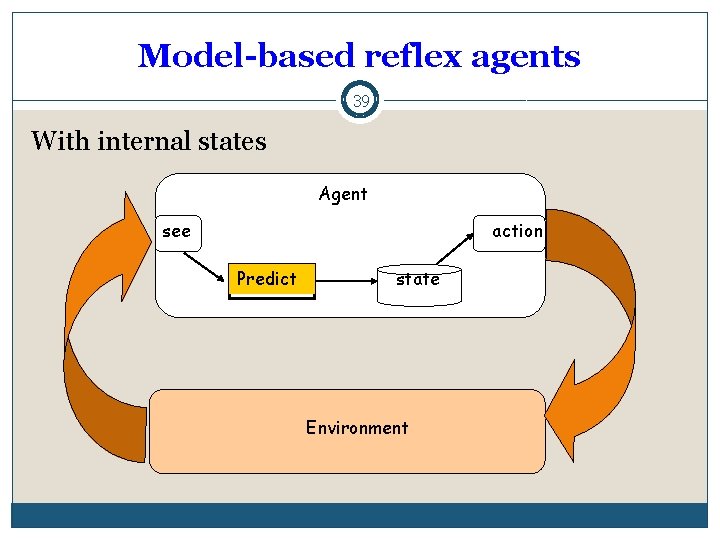

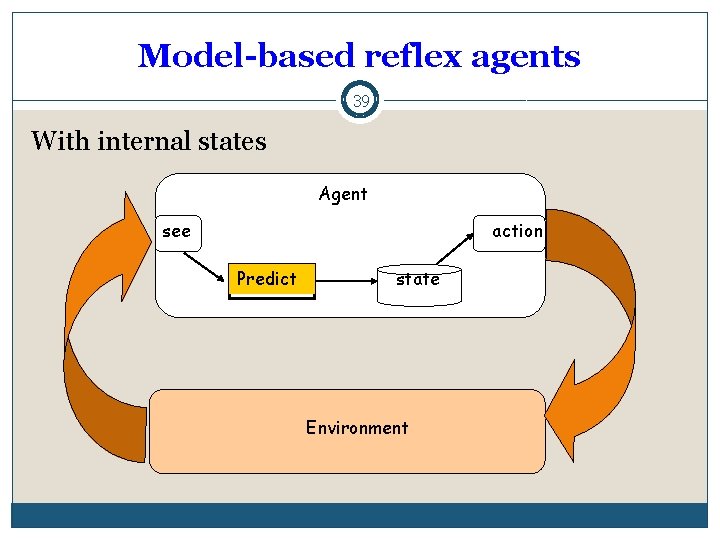

Model-based reflex agents (with internal states)

[Diagram: the agent perceives the environment ("see"), updates and predicts its internal state, and acts on the environment ("action")]

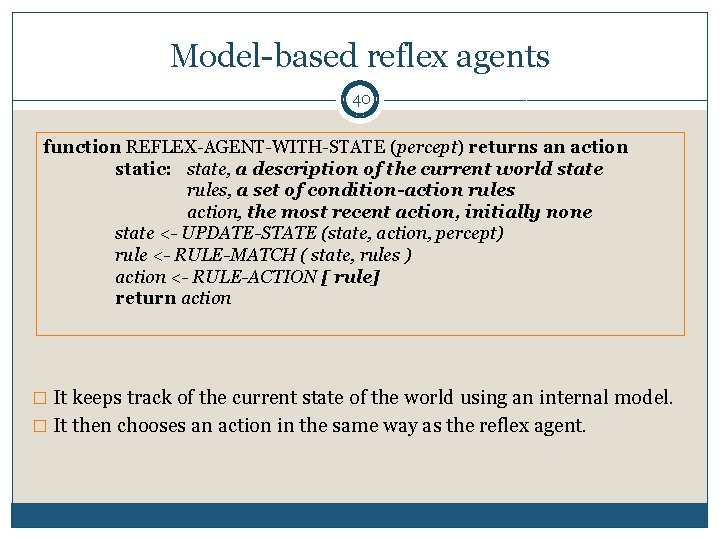

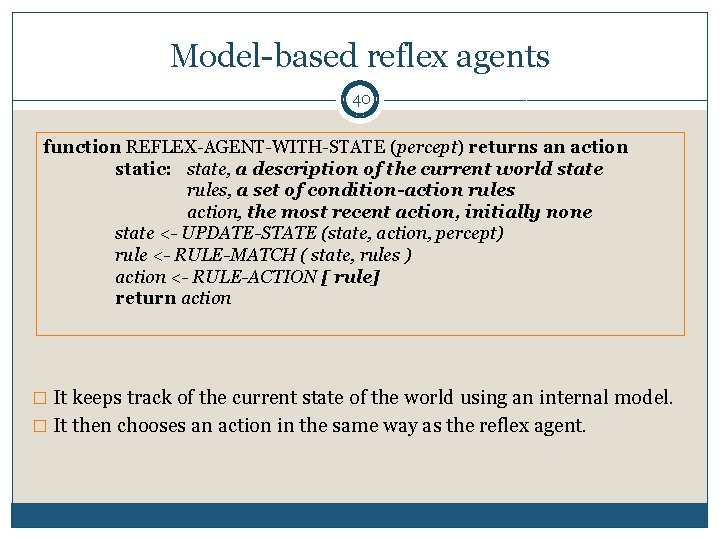

Model-based reflex agents

    function REFLEX-AGENT-WITH-STATE(percept) returns an action
      static: state, a description of the current world state
              rules, a set of condition-action rules
              action, the most recent action, initially none

      state <- UPDATE-STATE(state, action, percept)
      rule <- RULE-MATCH(state, rules)
      action <- RULE-ACTION[rule]
      return action

- It keeps track of the current state of the world using an internal model.
- It then chooses an action in the same way as the reflex agent.
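For the two-square vacuum world, an internal model can be as small as a record of the last known status of each square. The sketch below is illustrative only: the class name, the dict-based model, and the assumption that dirt never reappears are choices made here, not part of the slides. The most recent action is stored to mirror the pseudocode, although this tiny world does not need it to update the state.

```python
class ModelBasedVacuumAgent:
    """Reflex agent that remembers what it has seen of each square."""

    def __init__(self):
        self.state = {"A": None, "B": None}  # last known status; None = never observed
        self.action = None                   # most recent action, initially none

    def update_state(self, percept):
        location, status = percept
        self.state[location] = status        # the percept overrides the stored belief

    def __call__(self, percept):
        self.update_state(percept)
        location, status = percept
        if status == "Dirty":
            self.action = "Suck"
        elif all(value == "Clean" for value in self.state.values()):
            self.action = "NoOp"             # the model says everything is clean
        elif location == "A":
            self.action = "Right"
        else:
            self.action = "Left"
        return self.action


agent = ModelBasedVacuumAgent()
trace = [agent(p) for p in [("A", "Dirty"), ("A", "Clean"), ("B", "Clean")]]
print(trace)
```

Unlike the simple reflex agent, this one can stop once its model shows both squares clean, even though no single percept ever reports the status of both squares.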

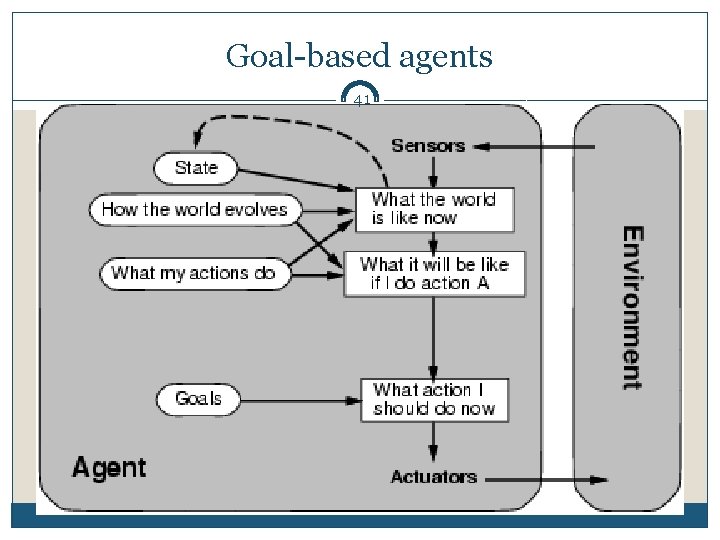

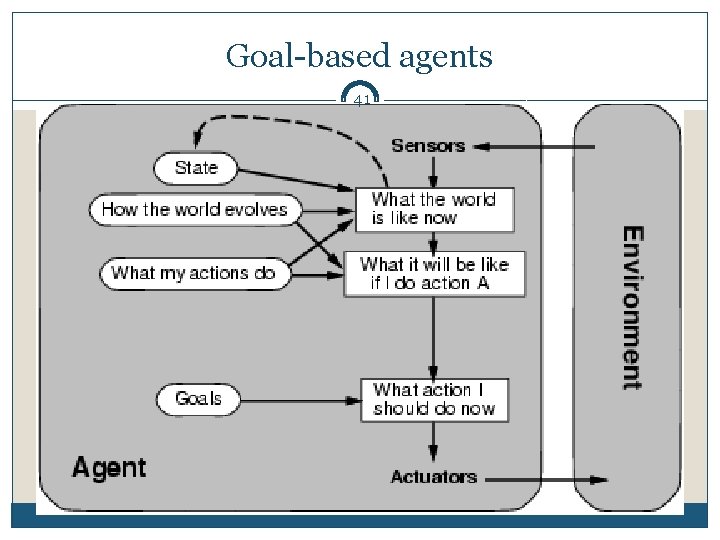

Goal-based agents

- In life, we set goals in order to get things done, and this pushes us to make the right decisions when we need to. A simple example is a shopping list: our goal is to pick up everything on the list. This makes it easier to decide between milk and orange juice when we can only afford one. Because milk is on the shopping list and orange juice is not, we choose the milk.

- Agents so far have fixed, implicit goals.
- We want agents with variable goals.
- Example:
  - Agent: robot maid
  - Environment: house and people
  - Goals: clean clothes, tidy room, table laid, etc.

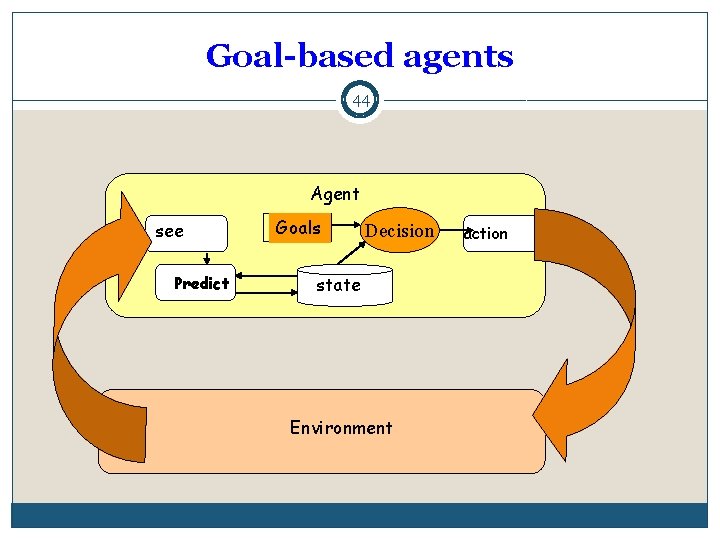

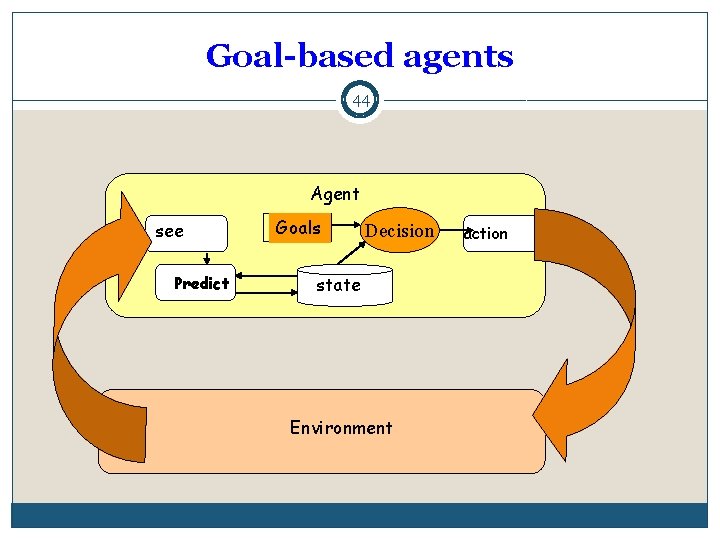

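Goal-based agents

[Diagram: the agent perceives the environment ("see"), updates its state, predicts the results of actions, compares them with its goals, and decides on an action]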
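The predict, goals, and decision steps can be sketched as a search for an action sequence that reaches a goal state. The example below reuses the two-square vacuum world; the transition model `predict`, the state encoding `(location, status_A, status_B)`, and the use of breadth-first search are all assumptions for illustration, since the slides do not prescribe a planning method.

```python
from collections import deque

ACTIONS = ("Suck", "Left", "Right")


def predict(state, action):
    """Hypothetical transition model; state is (location, status_A, status_B)."""
    location, a, b = state
    if action == "Suck":
        return (location, "Clean", b) if location == "A" else (location, a, "Clean")
    if action == "Left":
        return ("A", a, b)
    return ("B", a, b)  # "Right"


def plan(state, goal_test):
    """Breadth-first search for a shortest action sequence that reaches a goal state."""
    frontier = deque([(state, [])])
    seen = {state}
    while frontier:
        current, actions = frontier.popleft()
        if goal_test(current):
            return actions
        for action in ACTIONS:
            nxt = predict(current, action)
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, actions + [action]))
    return None  # no reachable state satisfies the goal


def all_clean(state):
    return state[1] == "Clean" and state[2] == "Clean"


steps = plan(("A", "Dirty", "Dirty"), all_clean)
print(steps)
```

Because the goal is passed in as `goal_test`, the same agent can be given a different goal (for example, "be in square B") without changing any rules, which is the sense in which goals are variable rather than fixed and implicit.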

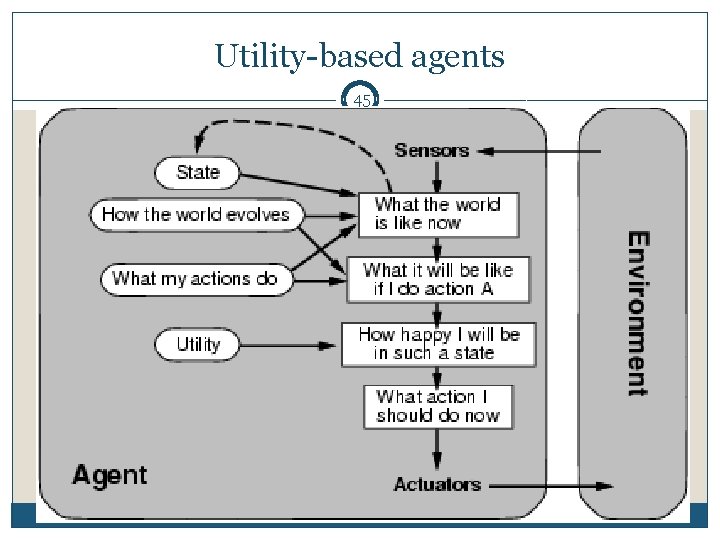

Utility-based agents

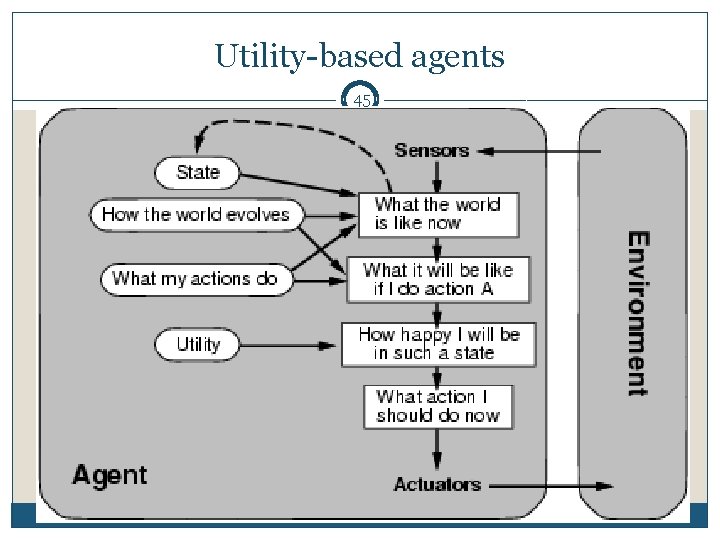

- Just having goals is not enough, because several actions may all satisfy the goal, so we need some way of working out the most efficient one. A utility function maps the state resulting from each action to a real number representing how efficiently that action achieves the goal. This is useful when many actions achieve the same goal, or when many goals can be satisfied and we need to choose which action to perform.

- Agents so far have had a single goal.
- Agents may have to juggle conflicting goals.
- Need to optimise utility over a range of goals.
- Utility: measure of goodness (a real number).
- Combine with probability of success to get expected utility.
- Example:
  - Agent: automatic car
  - Environment: roads, vehicles, signs, etc.
  - Goals: stay safe, reach destination, be quick, obey law, save fuel, etc.
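To illustrate how utility is combined with probability, the sketch below scores two routes for the automatic car. Everything numeric here is made up for illustration: the goal weights, the per-goal scores, and the outcome probabilities are hypothetical, and a weighted sum is only one possible way to fold several goals into a single utility.

```python
# Hypothetical weights expressing how much each goal matters.
WEIGHTS = {"safe": 0.6, "quick": 0.3, "save_fuel": 0.1}


def utility(scores):
    """Fold per-goal scores (each in [0, 1]) into one real number."""
    return sum(WEIGHTS[goal] * scores[goal] for goal in WEIGHTS)


def expected_utility(outcomes):
    """Probability-weighted utility over the possible outcomes of one action."""
    return sum(p * utility(scores) for p, scores in outcomes)


def best_action(actions):
    """Pick the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


# Illustrative numbers only: (probability, per-goal scores) for each outcome.
routes = {
    "highway": [
        (0.8, {"safe": 0.9, "quick": 1.0, "save_fuel": 0.5}),  # clear road
        (0.2, {"safe": 0.9, "quick": 0.2, "save_fuel": 0.3}),  # traffic jam
    ],
    "back_roads": [
        (1.0, {"safe": 0.8, "quick": 0.5, "save_fuel": 0.7}),
    ],
}

choice = best_action(routes)
print(choice)
```

Changing the weights changes the decision, which is how a utility-based agent trades off conflicting goals such as speed against fuel use.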

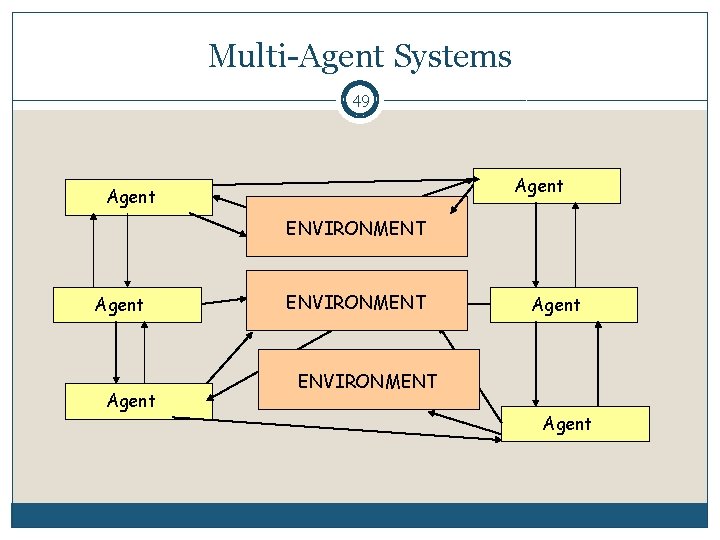

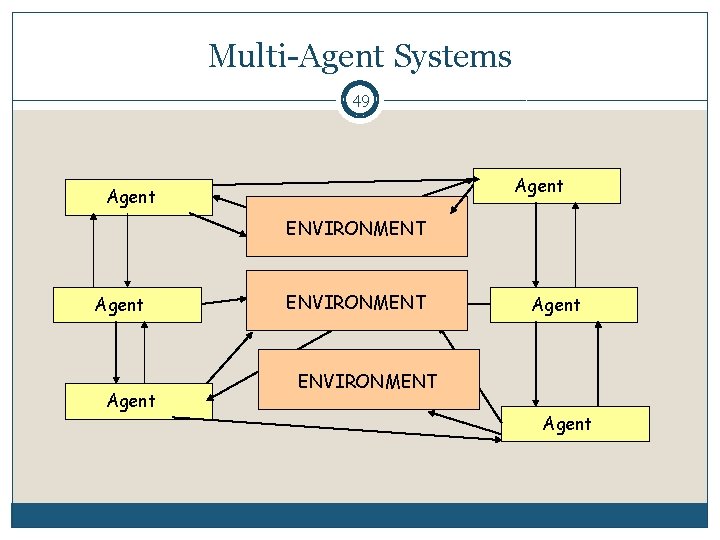

Multi-Agent Systems

[Diagram: several agents sharing one environment]

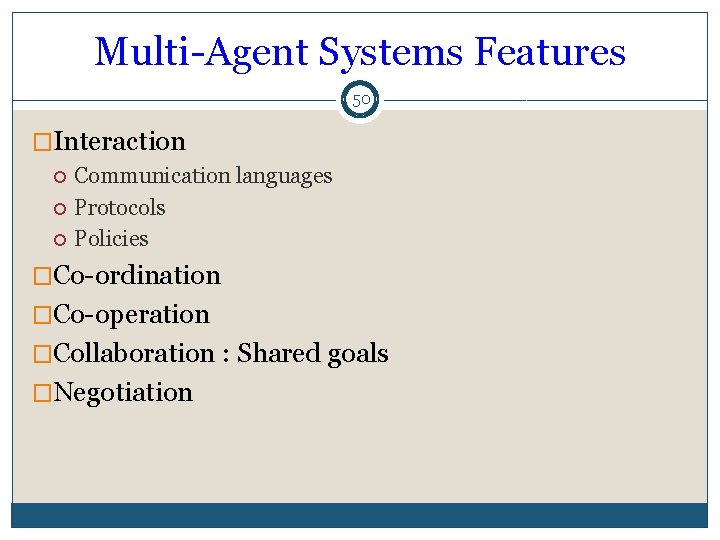

Multi-Agent Systems Features

- Interaction
  - Communication languages
  - Protocols
  - Policies
- Co-ordination
- Co-operation
- Collaboration: shared goals
- Negotiation