Assessing The Factual Accuracy of Generated Text

- Slides: 31

- Advisor: Jia-Ling Koh
- Source: KDD '19
- Speaker: Kuan-Yu Sheng
- Date: 2020/08/13

OUTLINE

- Introduction
- Method
- Experiment
- Conclusion

INTRODUCTION

Accurate or not?

Text generation tasks:

- Text summarization
- Machine translation
- Dialogue response generation
- …

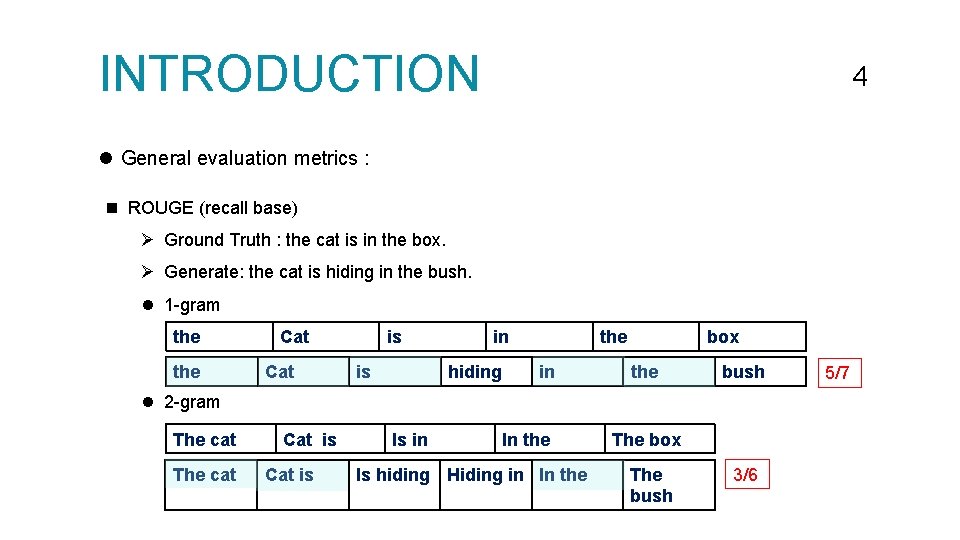

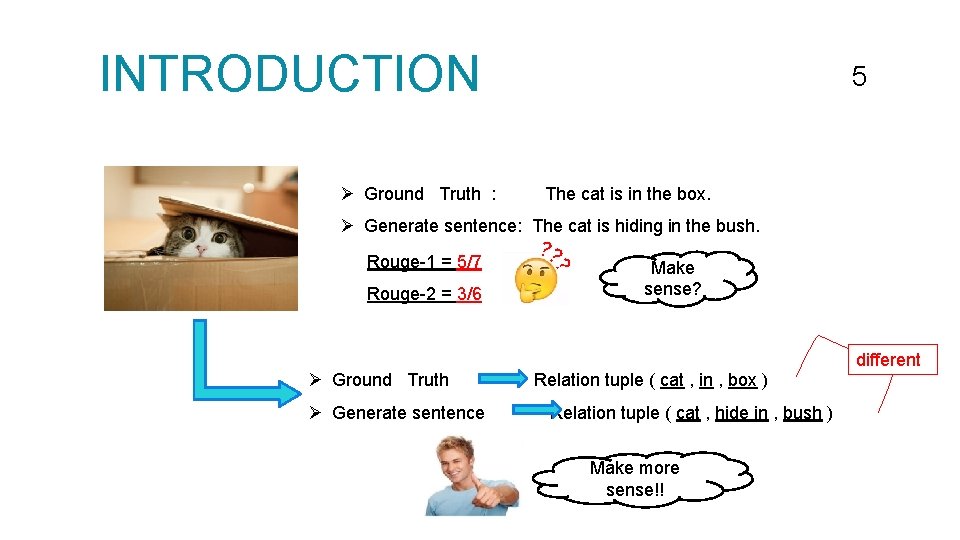

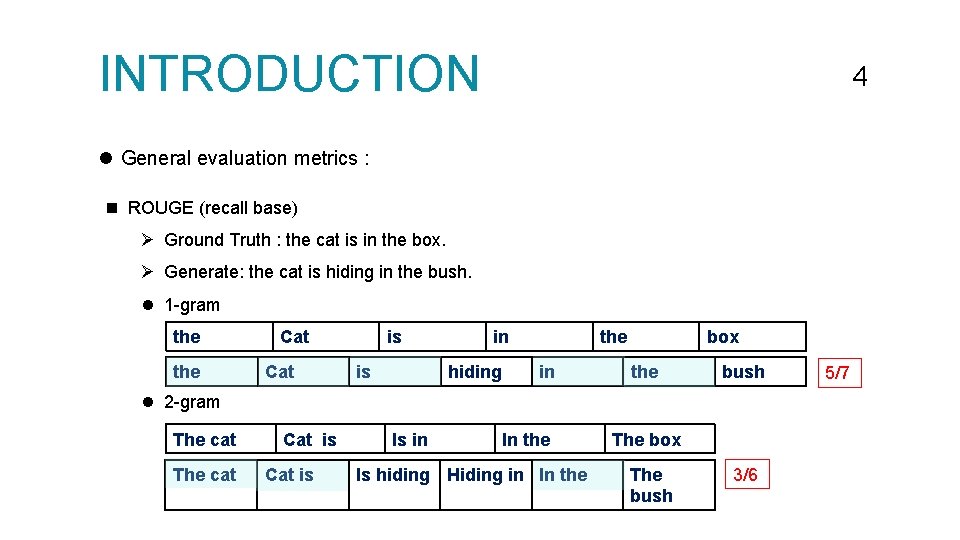

INTRODUCTION

General evaluation metrics:

- ROUGE (recall-based)
  - Ground truth: the cat is in the box.
  - Generated: the cat is hiding in the bush.
- 1-grams
  - Ground truth: the, cat, is, in, the, box
  - Generated: the, cat, is, hiding, in, the, bush
  - Overlap: 5/7
- 2-grams
  - Ground truth: the cat, cat is, is in, in the, the box
  - Generated: the cat, cat is, is hiding, hiding in, in the, the bush
  - Overlap: 3/6
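The n-gram counting behind these scores can be sketched in a few lines of Python. This is a minimal illustration, not a full ROUGE implementation; the helper names `ngrams` and `overlap_counts` are made up for this example. It reports the overlap against both denominators: the slide's 5/7 and 3/6 divide by the number of n-grams in the generated sentence, while a strictly recall-based score would divide by the number in the ground truth (5/6 and 3/5 here).

```python
from collections import Counter


def ngrams(tokens, n):
    """Return the list of n-grams (as tuples) in a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def overlap_counts(reference, generated, n):
    """Clipped n-gram overlap plus the n-gram totals of both sentences."""
    ref = Counter(ngrams(reference.lower().split(), n))
    gen = Counter(ngrams(generated.lower().split(), n))
    overlap = sum((ref & gen).values())  # multiset intersection clips repeats
    return overlap, sum(ref.values()), sum(gen.values())


reference = "the cat is in the box"
generated = "the cat is hiding in the bush"

for n in (1, 2):
    overlap, ref_total, gen_total = overlap_counts(reference, generated, n)
    print(f"{n}-gram: overlap={overlap}, "
          f"over reference={overlap}/{ref_total}, "
          f"over generated={overlap}/{gen_total}")
```

Either way, the two sentences share most of their n-grams even though they place the cat somewhere else.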

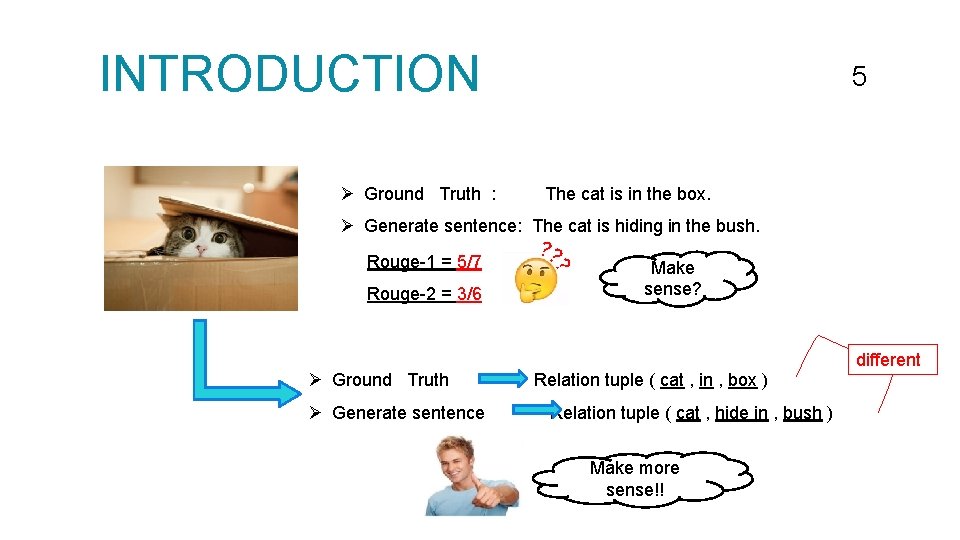

INTRODUCTION

- Ground truth: The cat is in the box.
- Generated sentence: The cat is hiding in the bush.
- ROUGE-1 = 5/7 and ROUGE-2 = 3/6. Do these scores make sense?
- The relation tuples of the two sentences are different:
  - Ground truth: (cat, in, box)
  - Generated sentence: (cat, hide in, bush)
- Comparing relation tuples makes more sense.

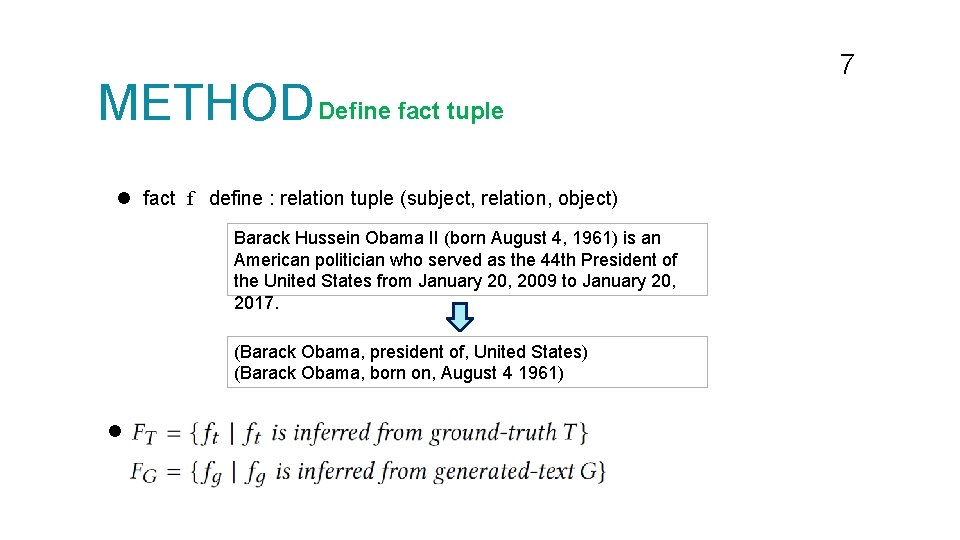

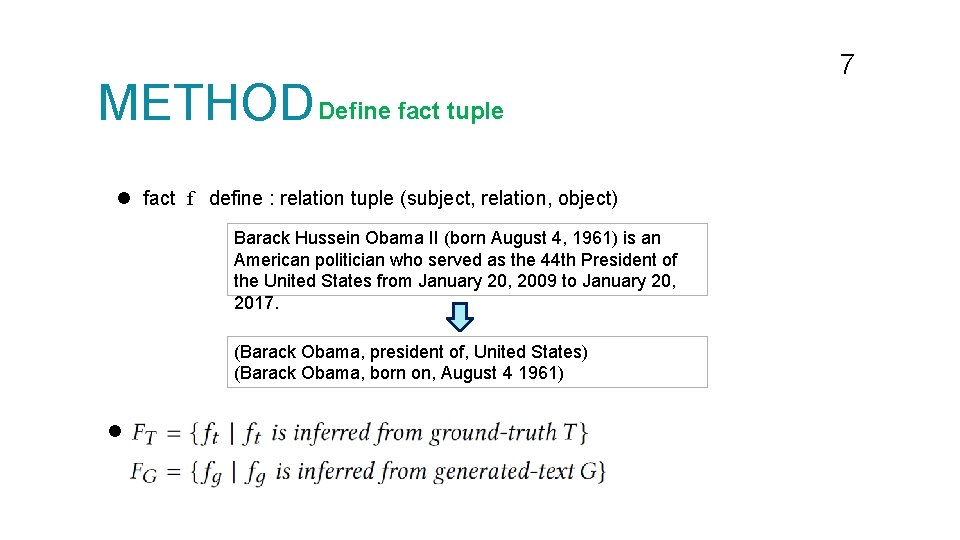

METHOD Define fact tuple

- A fact f is defined as a relation tuple (subject, relation, object).
- Example text: "Barack Hussein Obama II (born August 4, 1961) is an American politician who served as the 44th President of the United States from January 20, 2009 to January 20, 2017."
- Extracted facts:
  - (Barack Obama, president of, United States)
  - (Barack Obama, born on, August 4 1961)

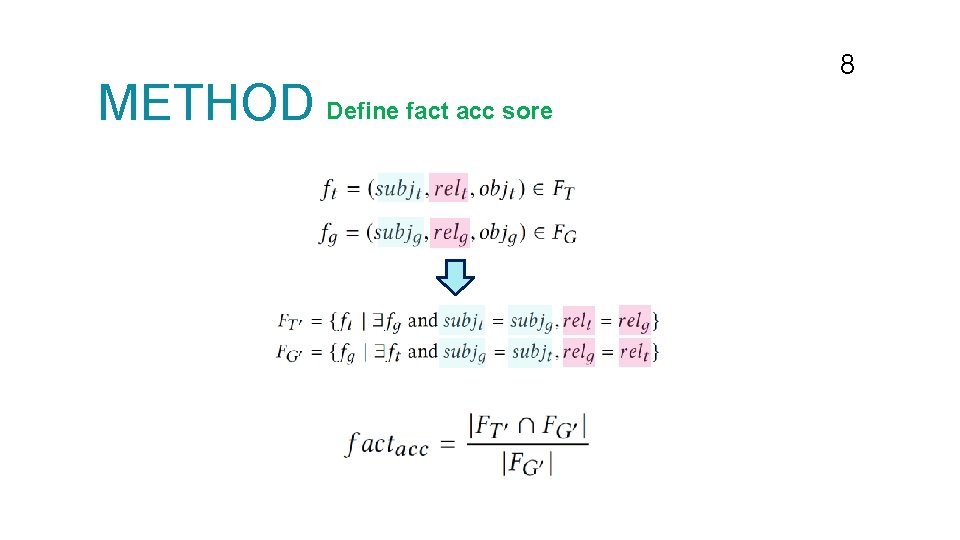

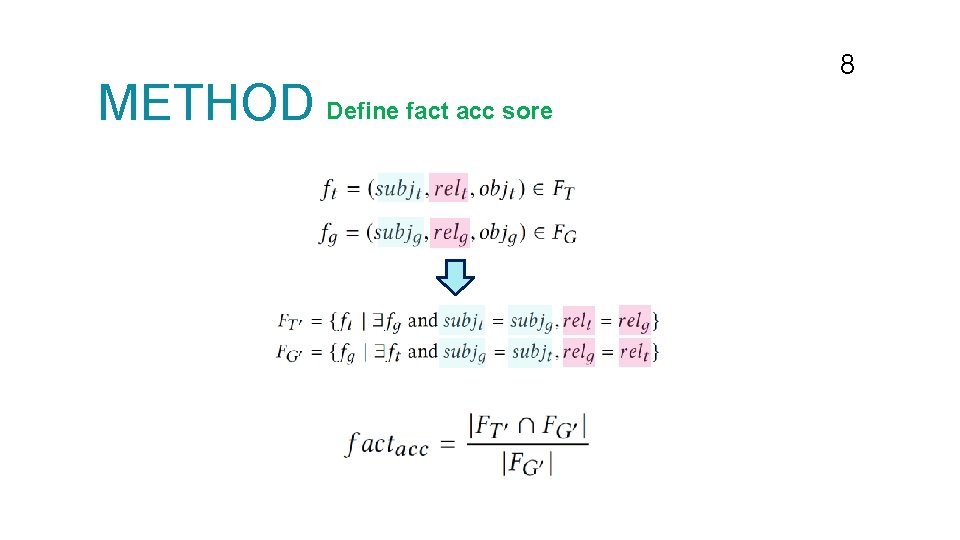

METHOD Define fact accuracy score
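The formula for this score is on the slide image and is not reproduced in the text. The sketch below therefore assumes a precision-style reading: the share of facts extracted from the generated text that also appear among the facts extracted from the ground truth. The names `Fact` and `fact_accuracy`, and the incorrect birth date, are invented for illustration.

```python
from typing import NamedTuple, Set


class Fact(NamedTuple):
    subject: str
    relation: str
    obj: str


def fact_accuracy(reference_facts: Set[Fact], generated_facts: Set[Fact]) -> float:
    """Assumed definition: share of generated facts also found in the reference."""
    if not generated_facts:
        return 0.0  # convention chosen for this sketch when nothing was extracted
    return len(reference_facts & generated_facts) / len(generated_facts)


reference_facts = {
    Fact("Barack Obama", "president of", "United States"),
    Fact("Barack Obama", "born on", "August 4 1961"),
}
generated_facts = {
    Fact("Barack Obama", "president of", "United States"),
    Fact("Barack Obama", "born on", "August 4 1962"),  # made-up wrong fact
}

print(fact_accuracy(reference_facts, generated_facts))  # 0.5
```

Exact tuple matching is the simplest possible comparison; a real pipeline would likely need to normalize entity and relation names first.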

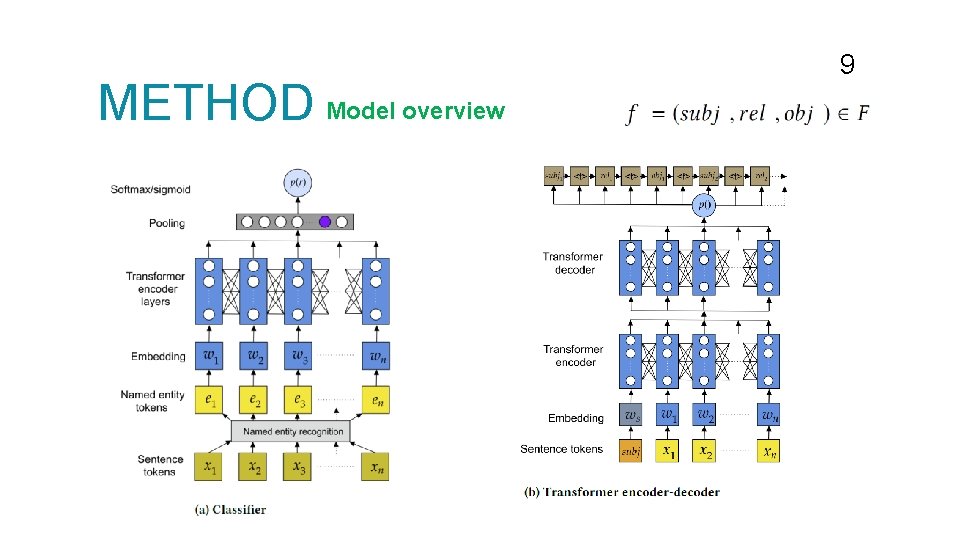

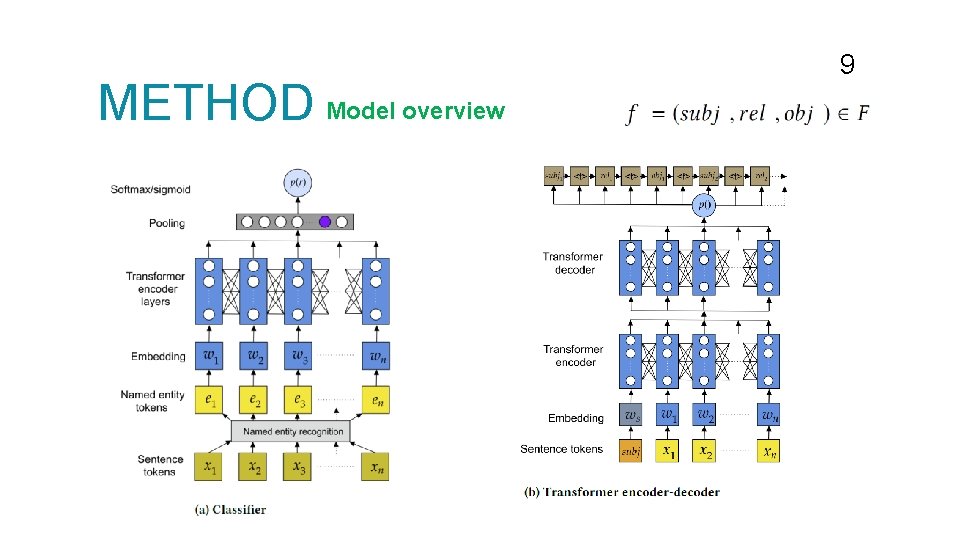

METHOD Model overview

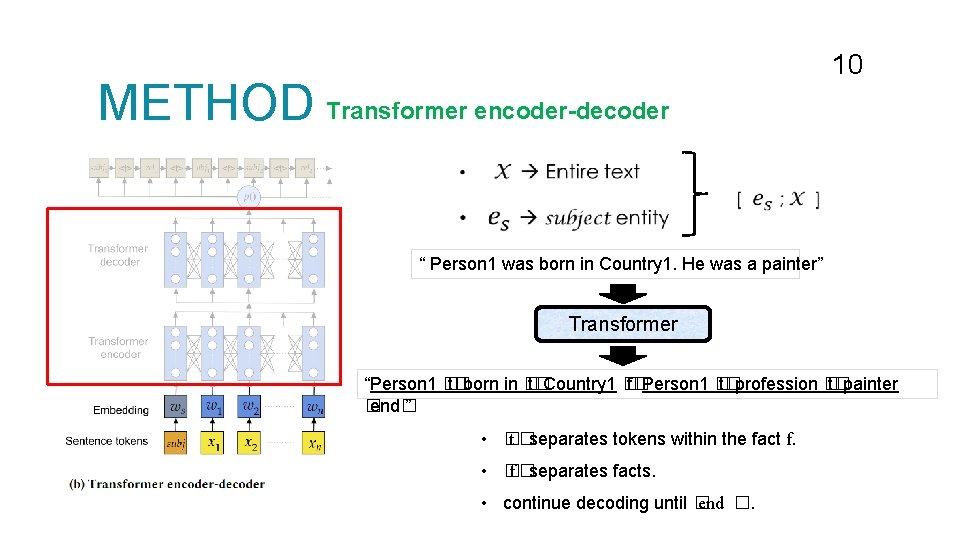

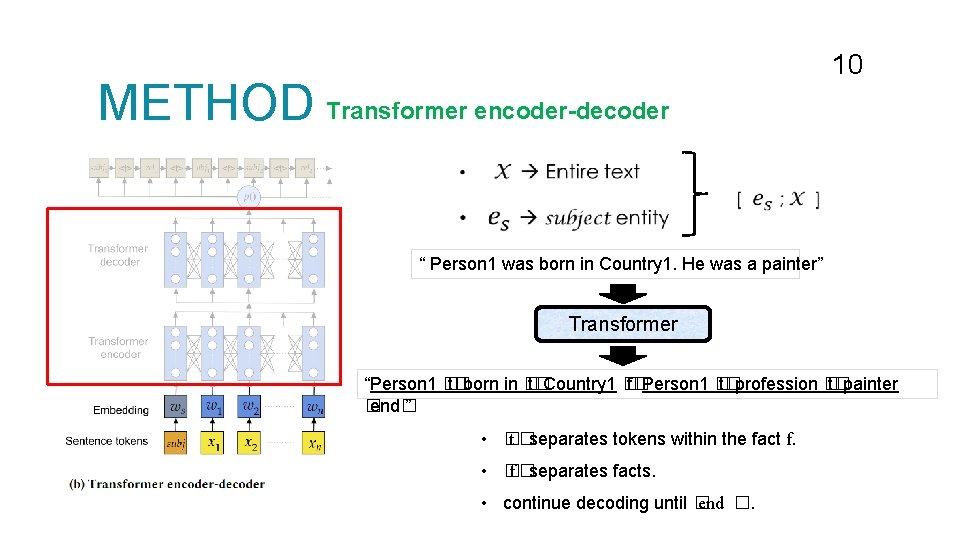

METHOD Transformer encoder-decoder

- Input: "Person 1 was born in Country 1. He was a painter"
- Transformer output: `Person 1 <t> born in <t> Country 1 <f> Person 1 <t> profession <t> painter <end>`
- `<t>` separates tokens within the fact f.
- `<f>` separates facts.
- Decoding continues until `<end>`.
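Turning the decoded string back into fact tuples is a matter of splitting on the separators. The following is a minimal sketch; the separator tokens are written as `<t>`, `<f>`, and `<end>`, which is an assumed spelling, and `parse_facts` is a hypothetical helper name.

```python
def parse_facts(decoded: str):
    """Split a decoded string into (subject, relation, object) tuples."""
    body = decoded.split("<end>", 1)[0]  # ignore anything after the end marker
    facts = []
    for chunk in body.split("<f>"):
        parts = [p.strip() for p in chunk.split("<t>")]
        if len(parts) == 3 and all(parts):  # skip malformed or empty facts
            facts.append(tuple(parts))
    return facts


decoded = "Person 1 <t> born in <t> Country 1 <f> Person 1 <t> profession <t> painter <end>"
print(parse_facts(decoded))
# [('Person 1', 'born in', 'Country 1'), ('Person 1', 'profession', 'painter')]
```

Dropping chunks that do not have exactly three parts is a choice made for this sketch; a decoder can emit malformed sequences, and how to treat them is left open here.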

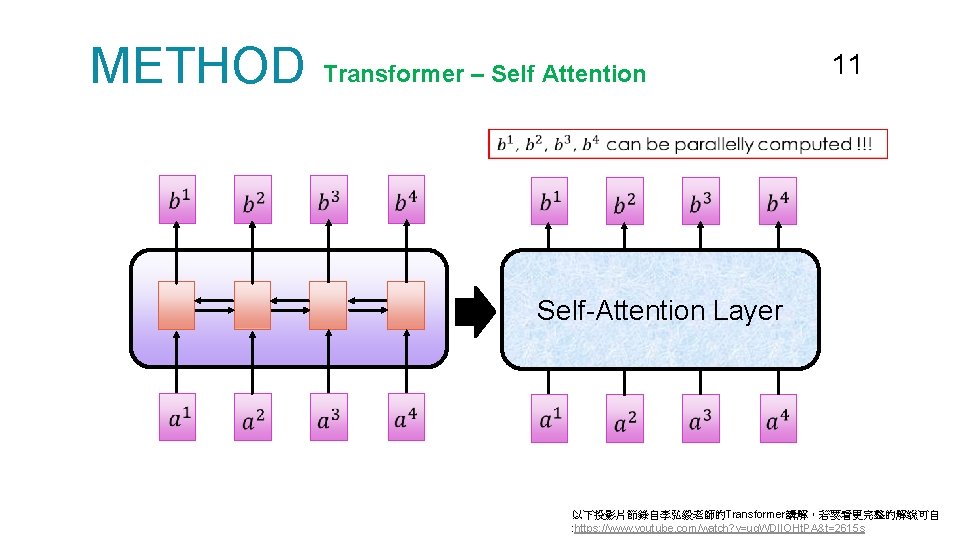

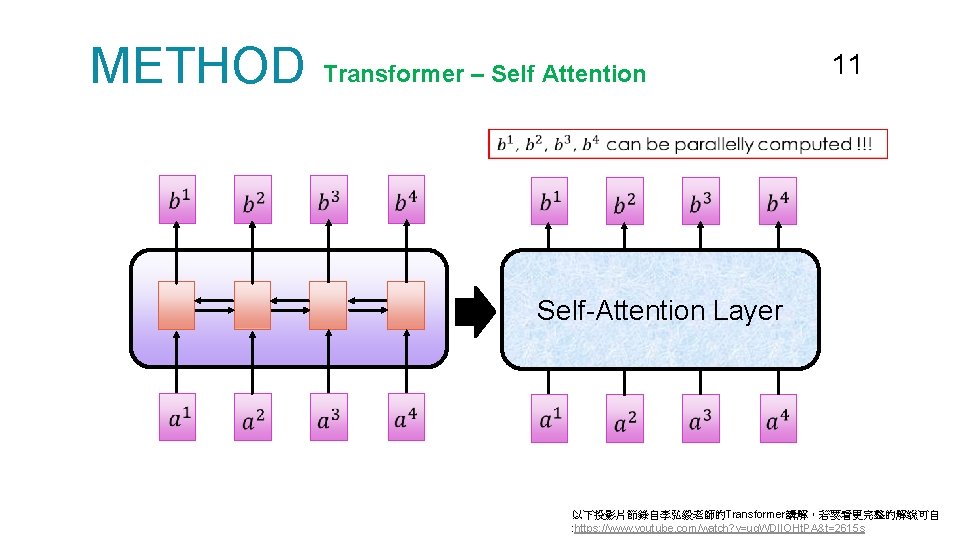

METHOD Transformer – Self Attention

Self-Attention Layer

The following slides are excerpted from Prof. Hung-yi Lee's Transformer lecture; for a more complete explanation, see: https://www.youtube.com/watch?v=ugWDIIOHtPA&t=2615s

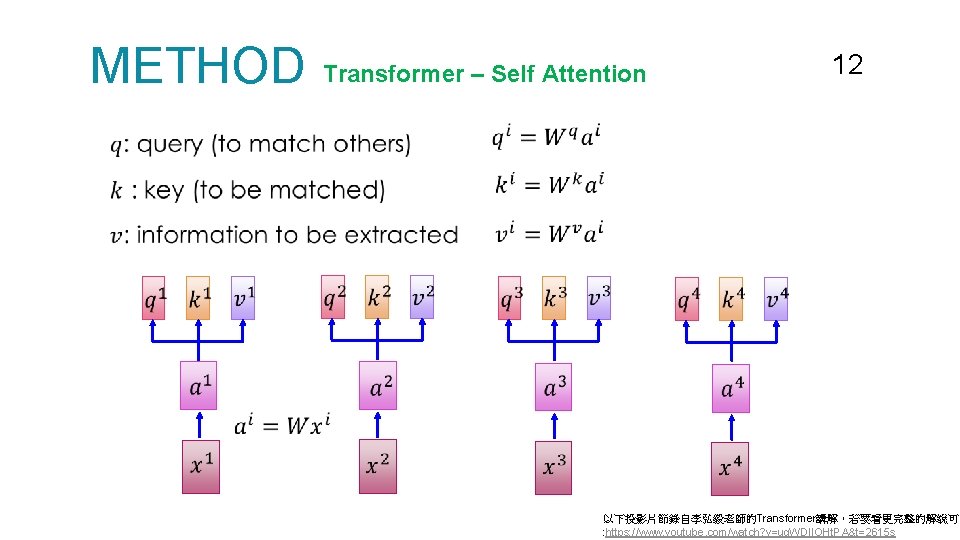

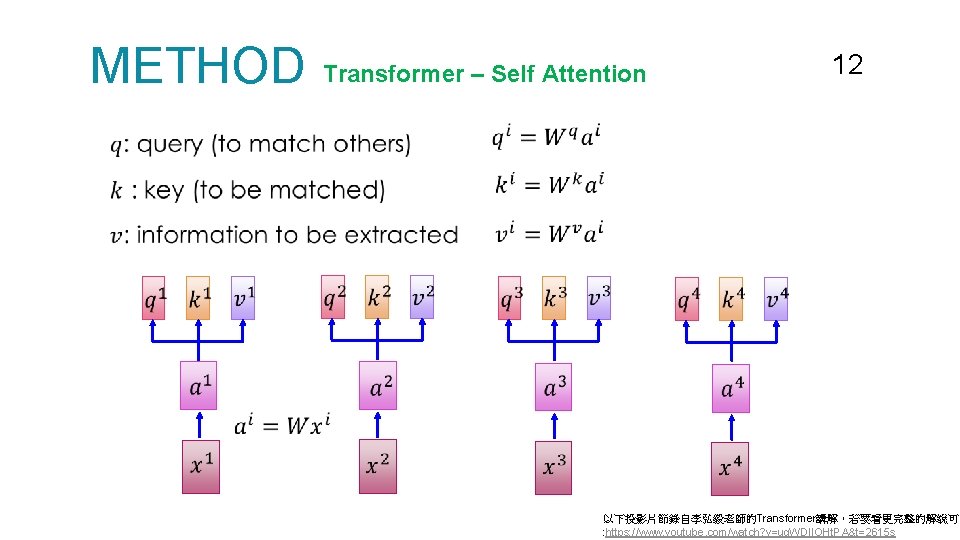

METHOD Transformer – Self Attention

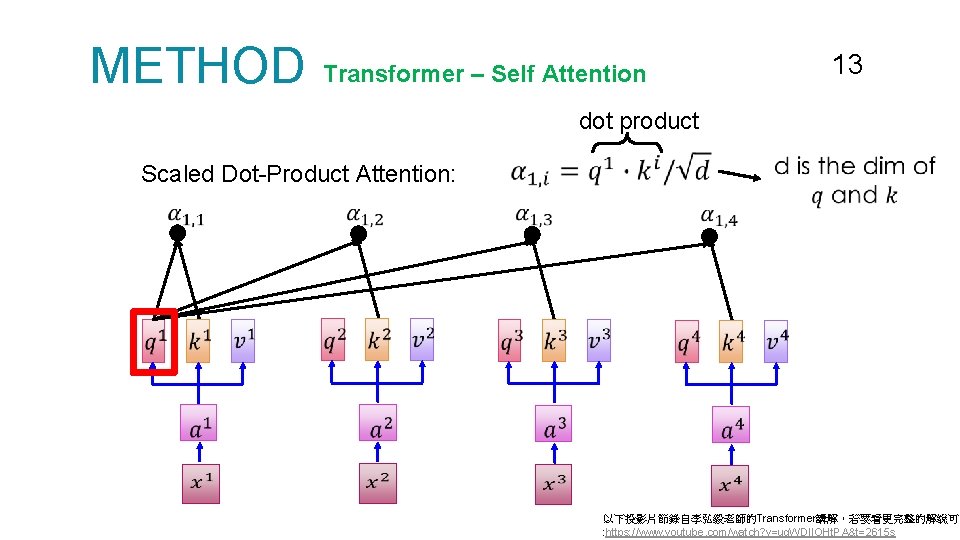

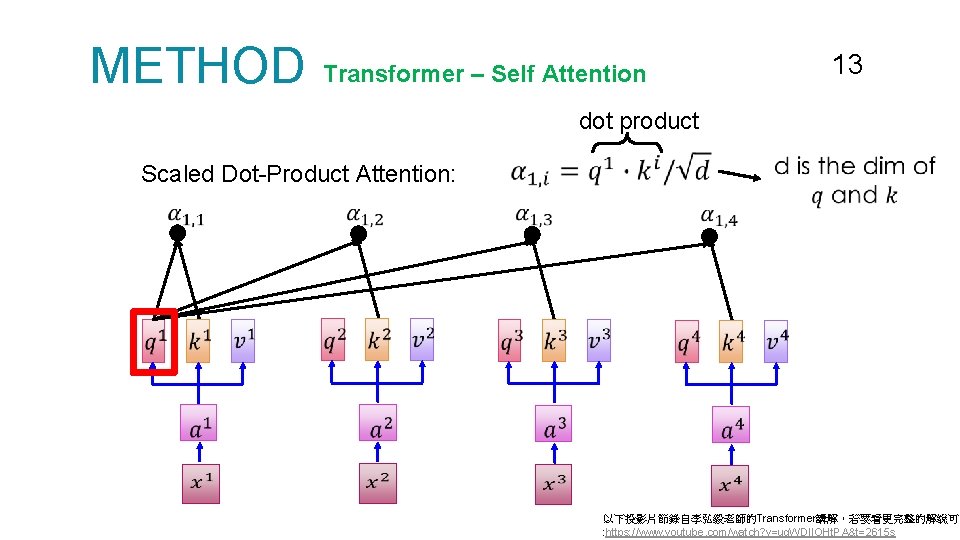

METHOD Transformer – Self Attention

Scaled Dot-Product Attention: dot product

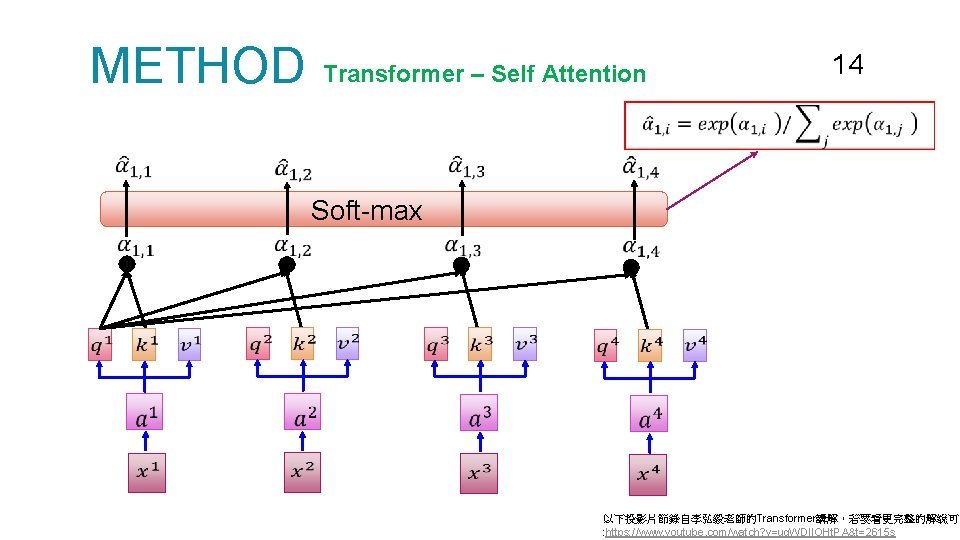

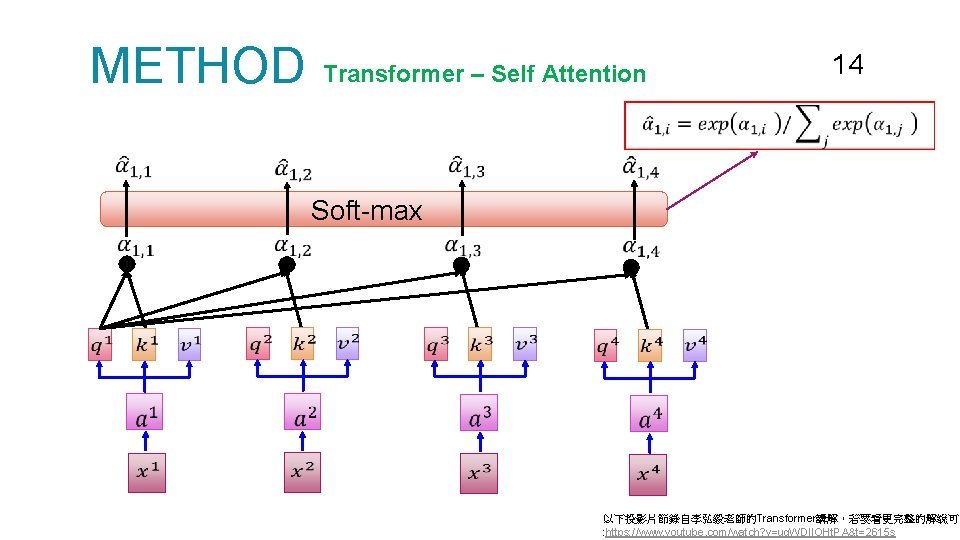

METHOD Transformer – Self Attention

Soft-max
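The dot-product and soft-max steps can be put together in a small pure-Python sketch of scaled dot-product attention for a single query. It follows the standard formulation, softmax(q·k / sqrt(d_k)) applied to the values; the toy vectors and the function names are made up for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d_k = len(query)
    # dot product of the query with every key, scaled by sqrt(d_k)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
              for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # weighted sum of the value vectors
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
weights, output = attention([1.0, 0.0], keys, values)
print(weights, output)
```

The first and third keys have the same dot product with the query, so they receive equal weight, and the second key receives less.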

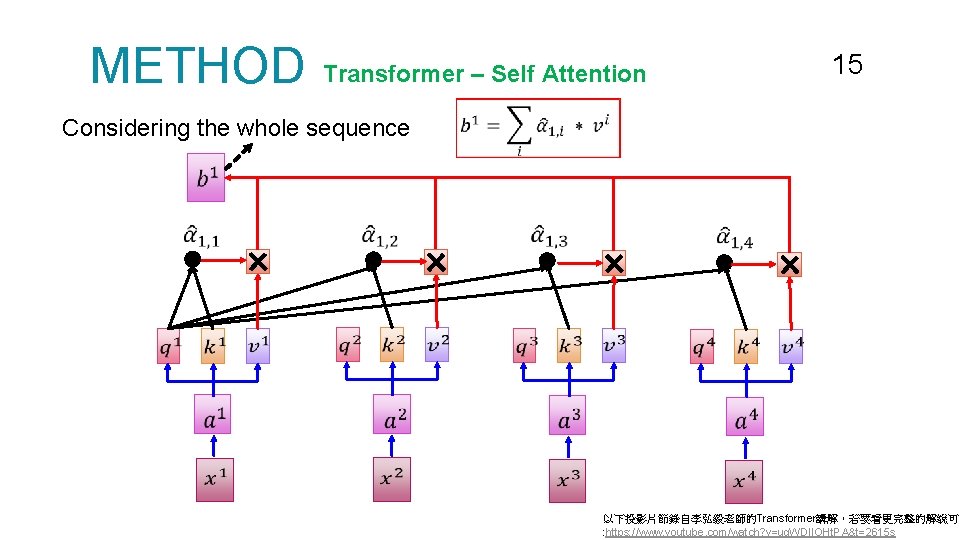

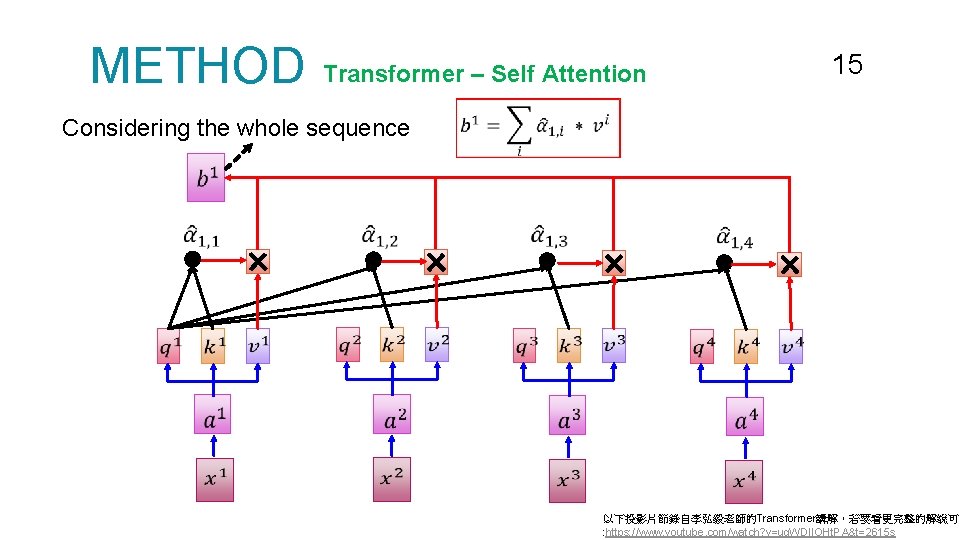

METHOD Transformer – Self Attention

Considering the whole sequence

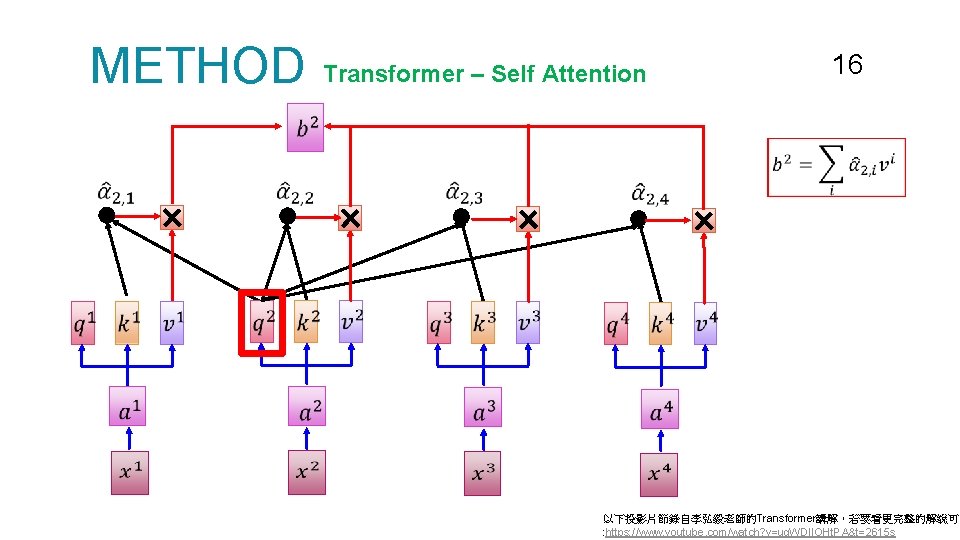

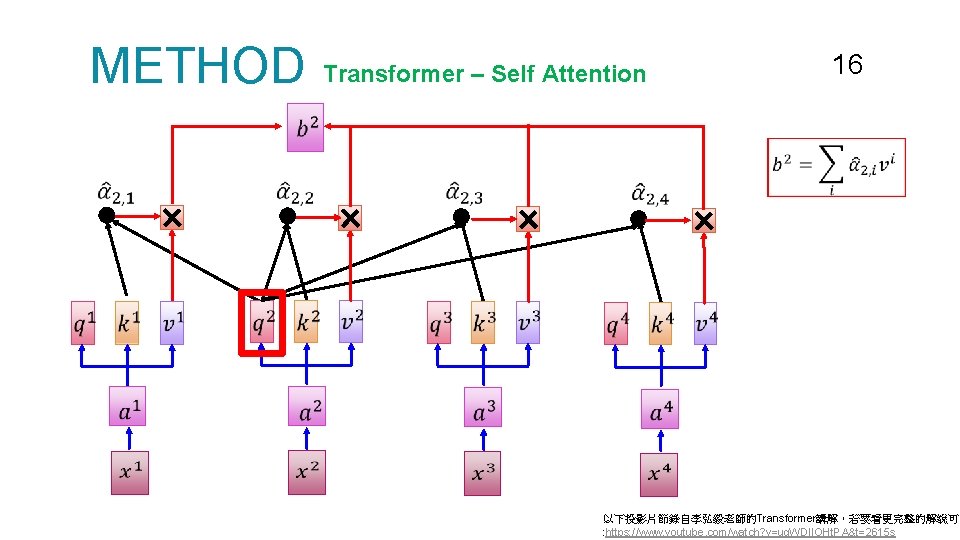

METHOD Transformer – Self Attention

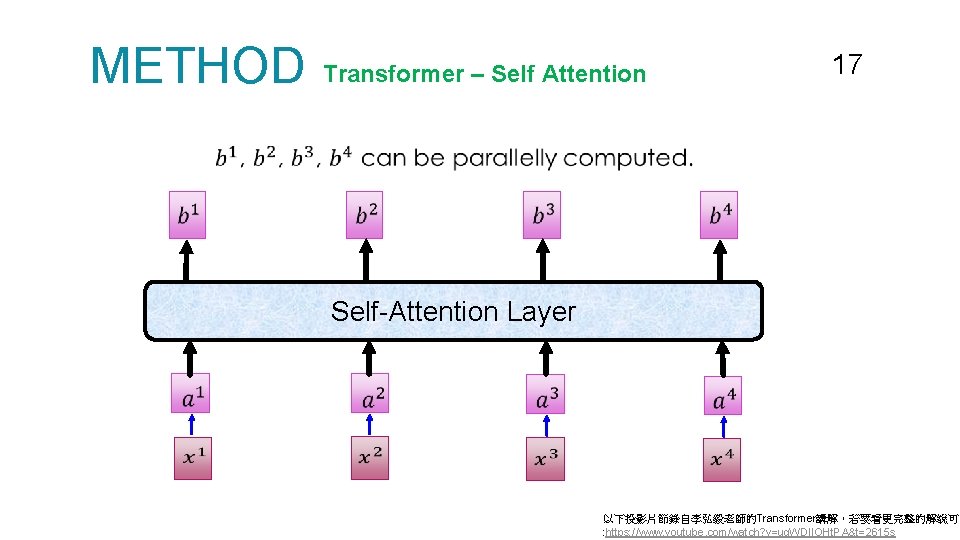

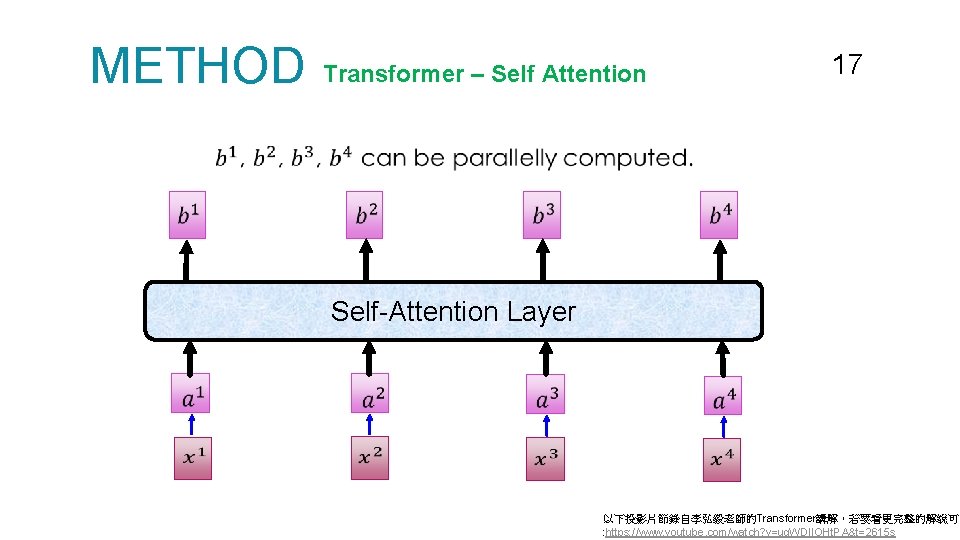

METHOD Transformer – Self Attention

Self-Attention Layer

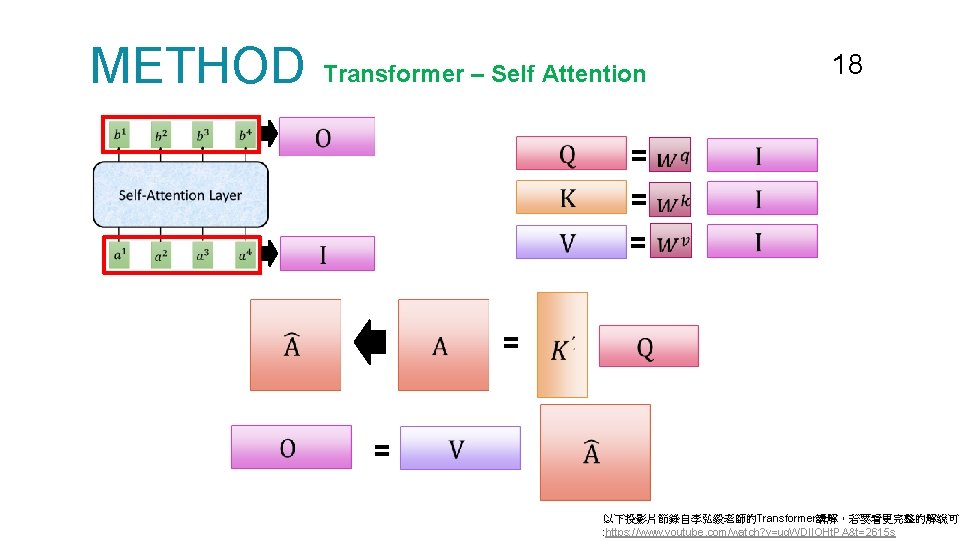

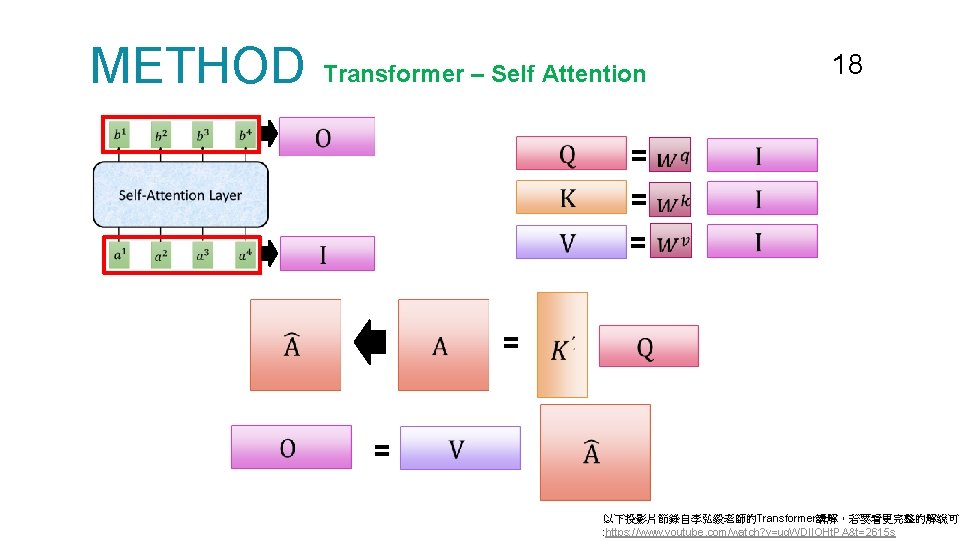

METHOD Transformer – Self Attention

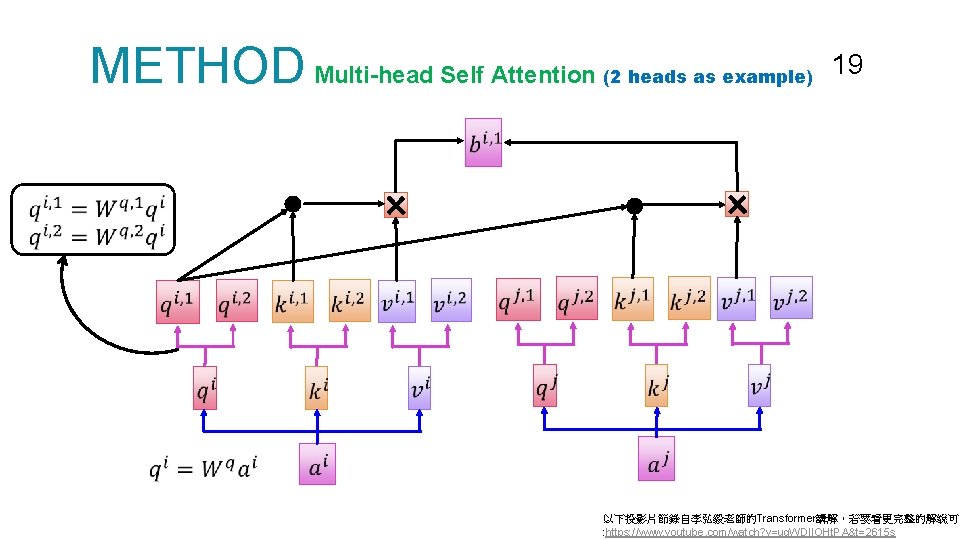

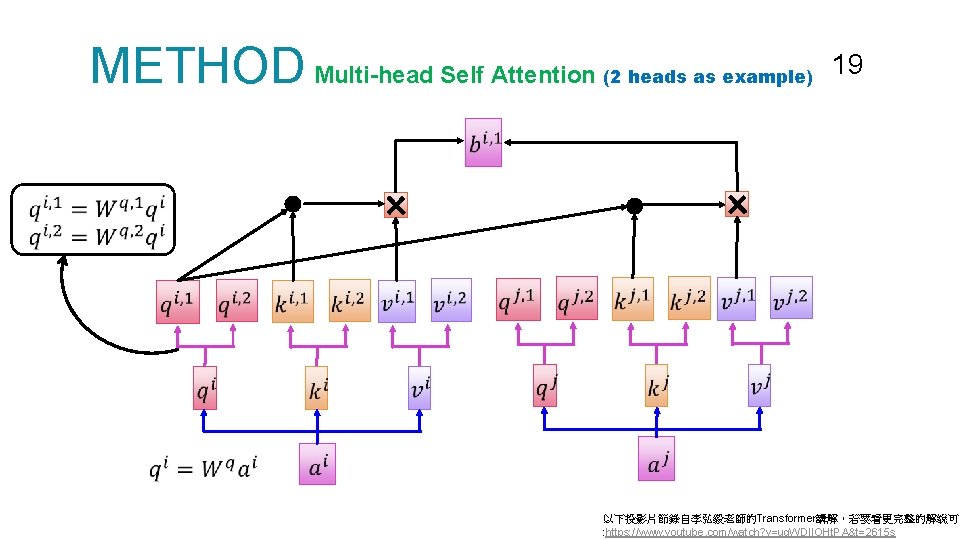

METHOD Multi-head Self Attention (2 heads as example)

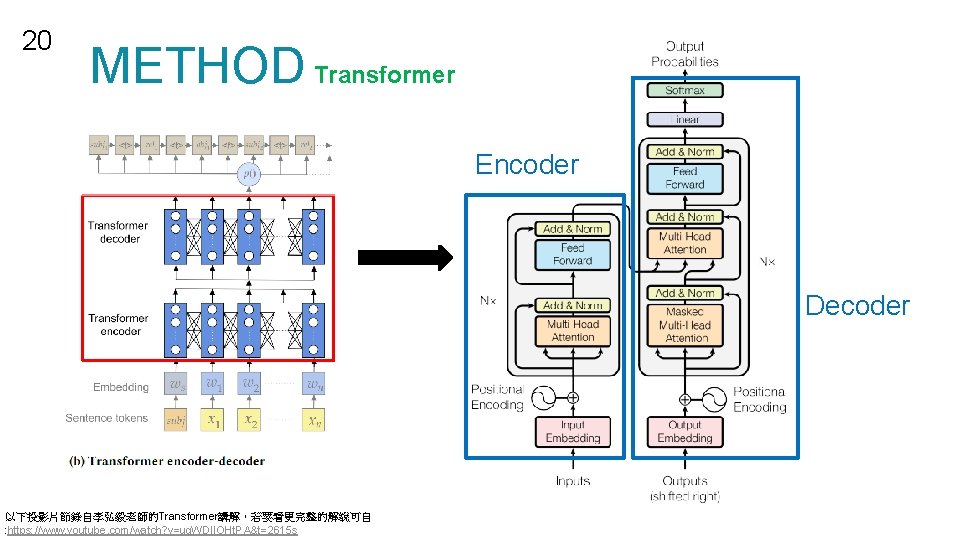

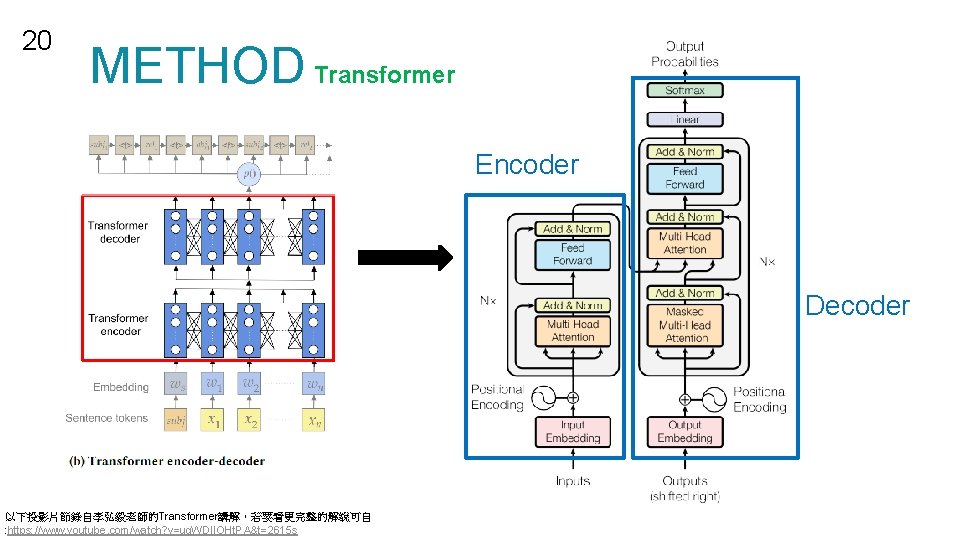

METHOD Transformer Encoder Decoder

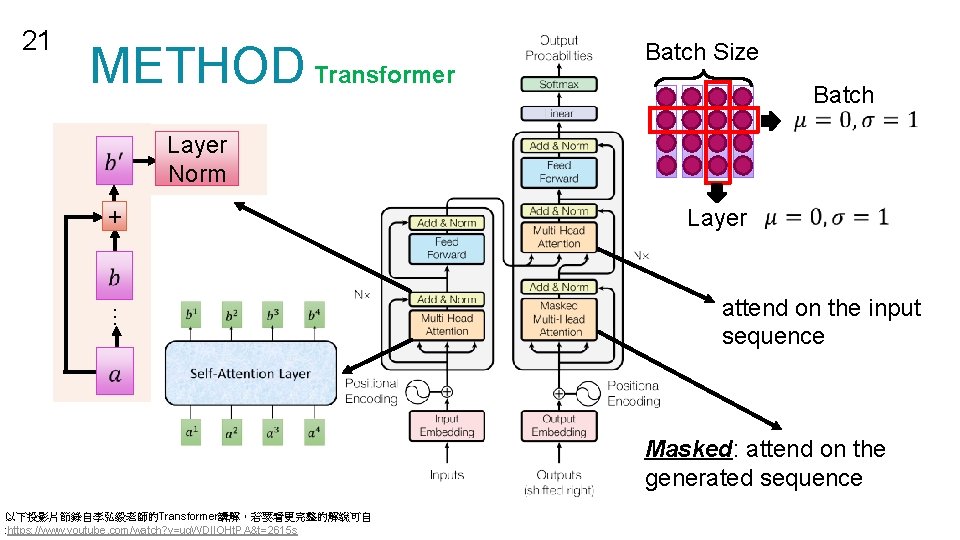

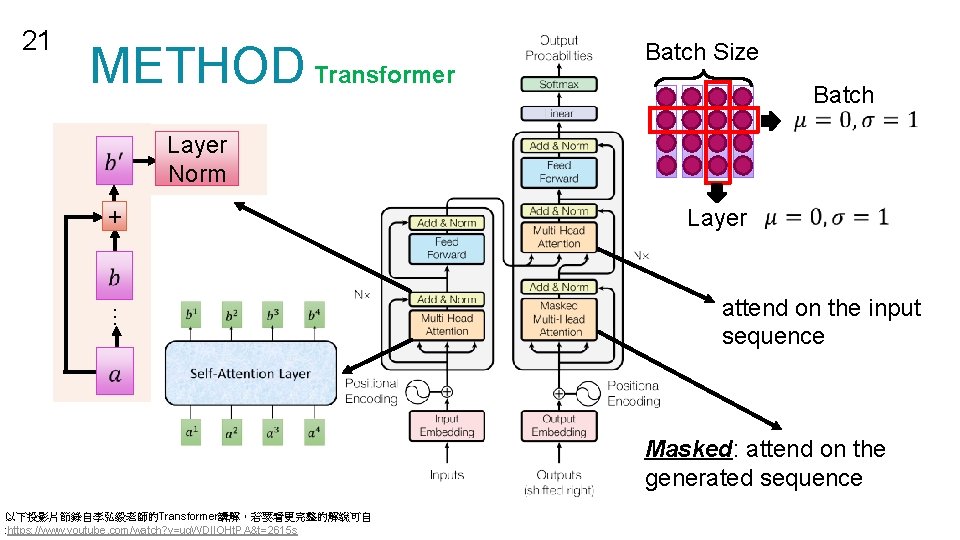

METHOD Transformer

- Figure labels: Batch Size, Batch Norm, Layer Norm
- … attend on the input sequence
- Masked: attend on the generated sequence
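The "masked" attention over the generated sequence can be illustrated with a causal mask: position i may only attend to positions at or before i, so the decoder never looks at tokens it has not produced yet. This is a minimal pure-Python sketch on made-up scores, and `masked_attention_weights` is a hypothetical helper name.

```python
import math


def masked_attention_weights(scores):
    """Row-wise softmax where position i may only attend to positions j <= i."""
    weights = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]  # drop scores for future positions
        m = max(visible)
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # future positions get exactly zero weight
        weights.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return weights


scores = [[0.0, 1.0, 2.0],
          [1.0, 1.0, 5.0],
          [0.5, 0.5, 0.5]]
for row in masked_attention_weights(scores):
    print([round(w, 3) for w in row])
# [1.0, 0.0, 0.0]
# [0.5, 0.5, 0.0]
# [0.333, 0.333, 0.333]
```

Implementations usually reach the same result by adding a large negative number to the masked scores before the softmax; slicing is used here only to keep the sketch short.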

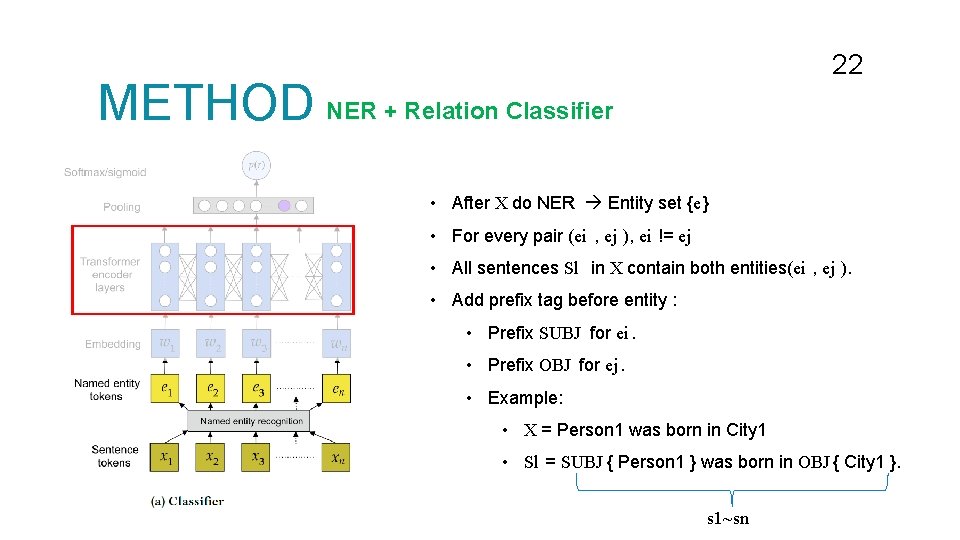

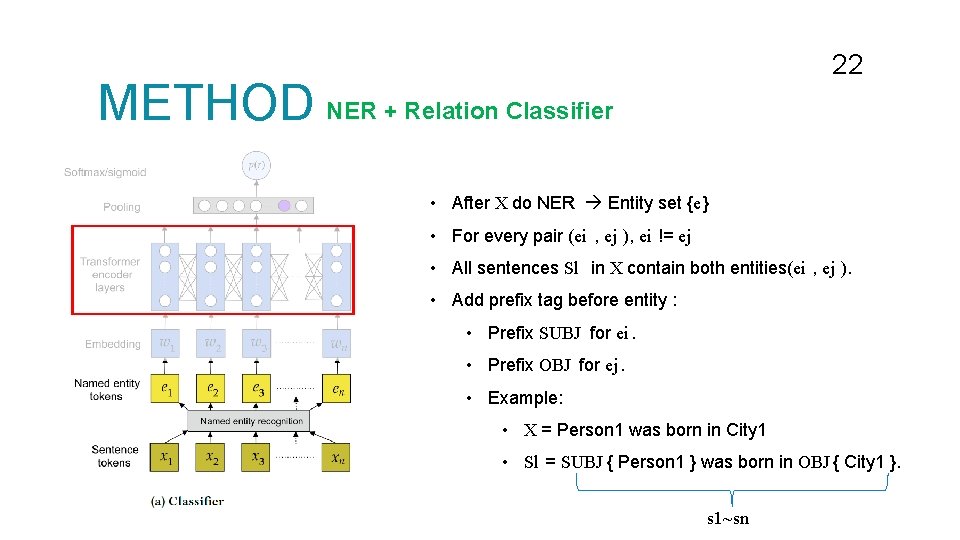

METHOD NER + Relation Classifier

- Run NER on the input text X to obtain the entity set {e}.
- For every pair (ei, ej) with ei != ej:
  - Collect all sentences s1~sn in X that contain both entities (ei, ej).
  - Add a prefix tag before each entity:
    - Prefix SUBJ for ei.
    - Prefix OBJ for ej.
- Example:
  - X = Person 1 was born in City 1
  - Sl = SUBJ { Person 1 } was born in OBJ { City 1 }.
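The input construction described above can be sketched as follows. The sketch assumes the entity list has already been produced by some NER step (not shown) and uses plain substring matching to locate entities, which is a simplification; `tagged_inputs` is a hypothetical helper name.

```python
from itertools import permutations


def tagged_inputs(sentences, entities):
    """Yield (subject, object, tagged sentence) for every ordered entity pair."""
    for subj, obj in permutations(entities, 2):  # all pairs with e_i != e_j
        for sentence in sentences:
            if subj in sentence and obj in sentence:  # keep sentences with both
                tagged = sentence.replace(subj, "SUBJ { " + subj + " }")
                tagged = tagged.replace(obj, "OBJ { " + obj + " }")
                yield subj, obj, tagged


sentences = ["Person 1 was born in City 1"]
entities = ["Person 1", "City 1"]  # assumed output of an NER step
for subj, obj, tagged in tagged_inputs(sentences, entities):
    print(tagged)
# SUBJ { Person 1 } was born in OBJ { City 1 }
# OBJ { Person 1 } was born in SUBJ { City 1 }
```

Both orderings of each pair are generated, so the classifier sees every entity once as subject and once as object.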

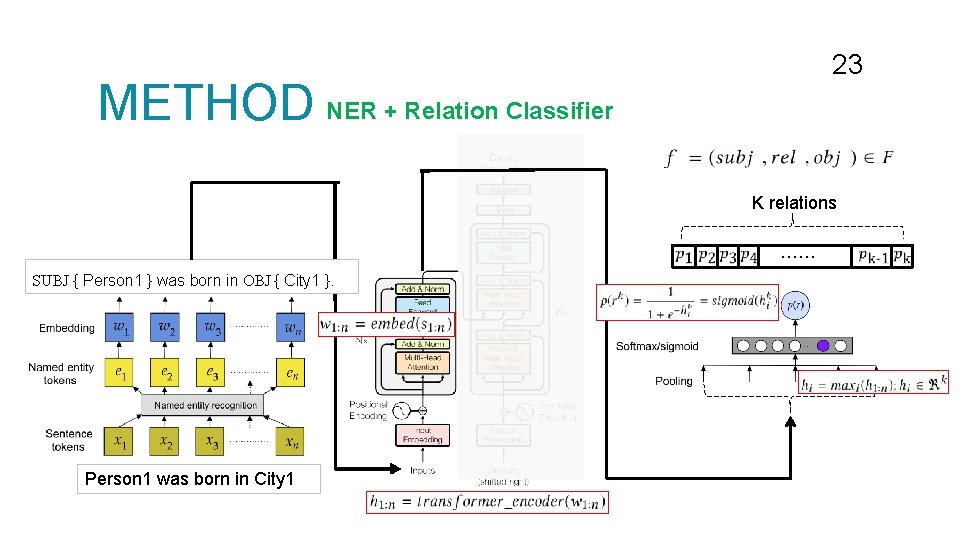

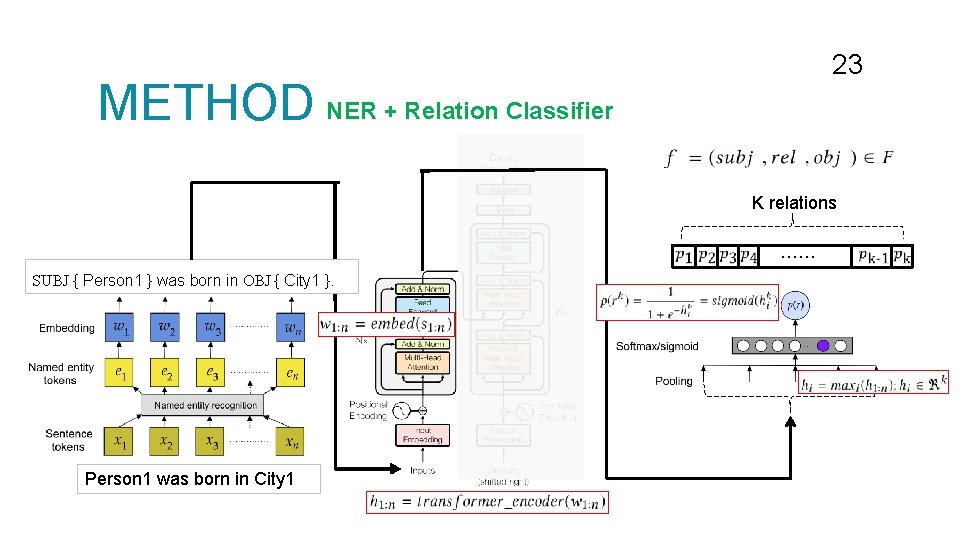

METHOD NER + Relation Classifier

- Sentence: Person 1 was born in City 1
- Classifier input: SUBJ { Person 1 } was born in OBJ { City 1 }.
- Classifier output: K relations ……

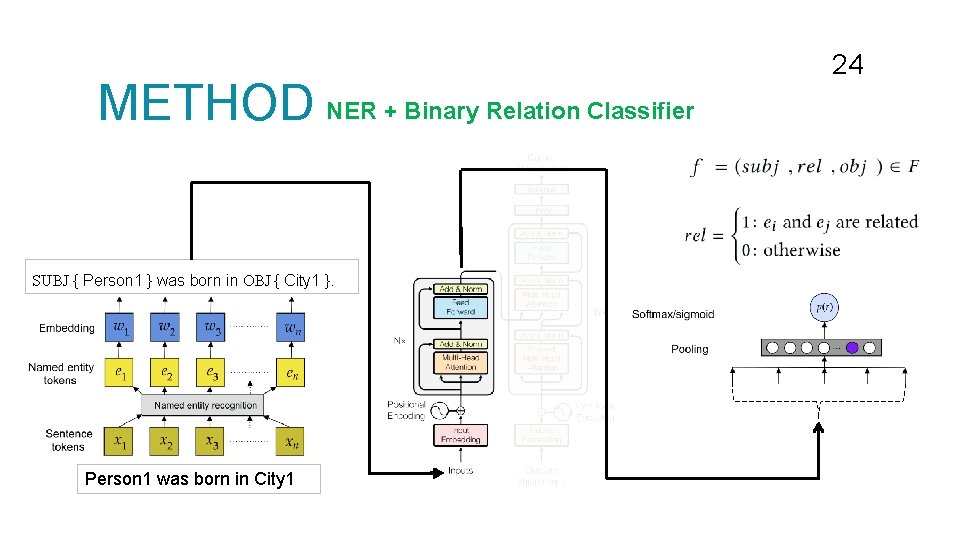

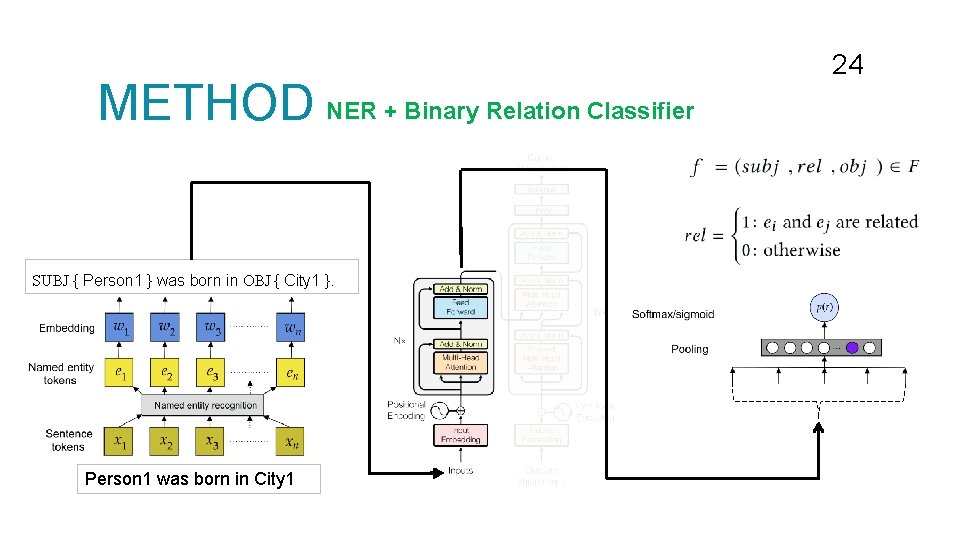

METHOD NER + Binary Relation Classifier

- Sentence: Person 1 was born in City 1
- Classifier input: SUBJ { Person 1 } was born in OBJ { City 1 }.

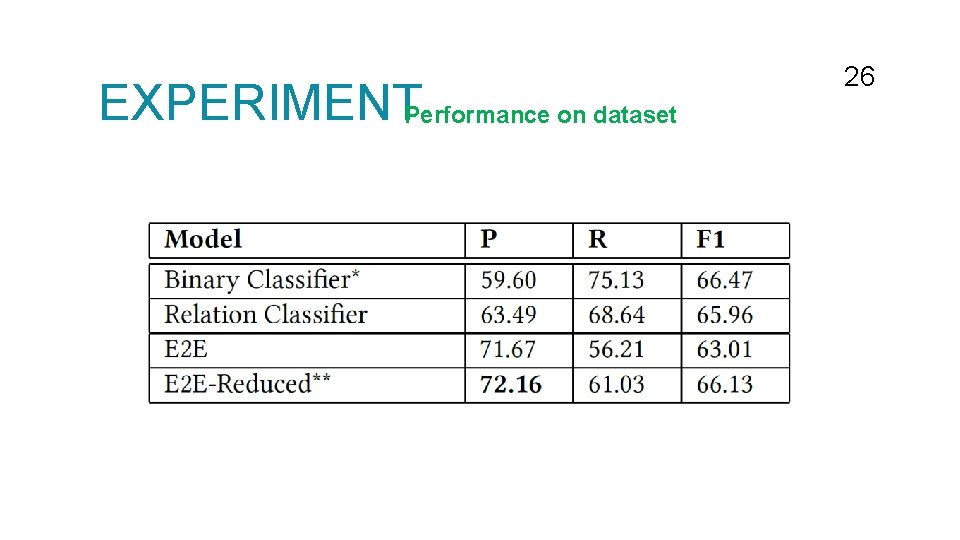

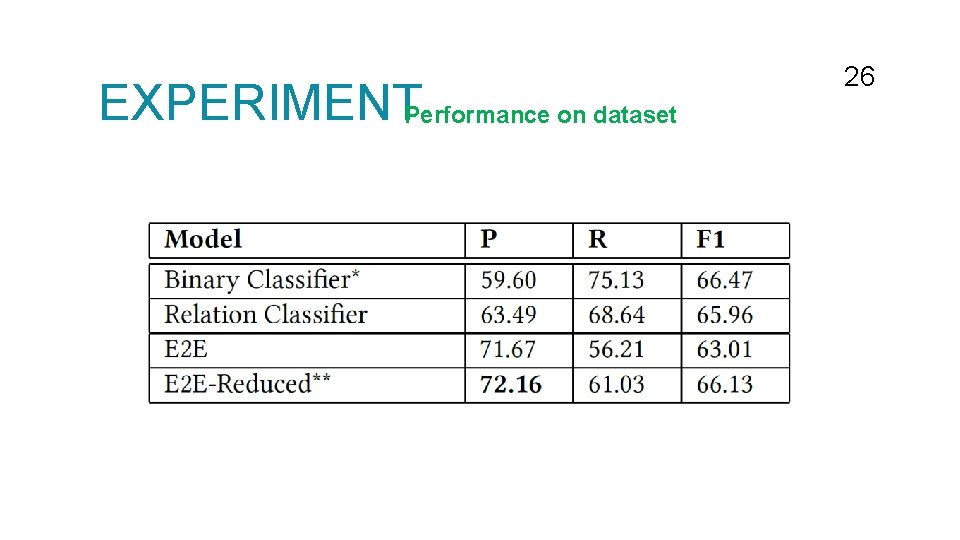

EXPERIMENT Performance on dataset

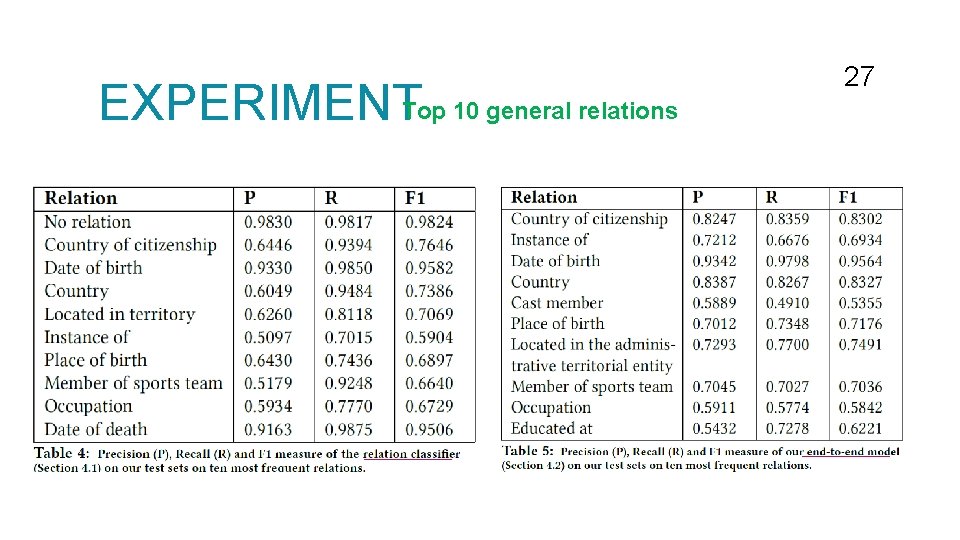

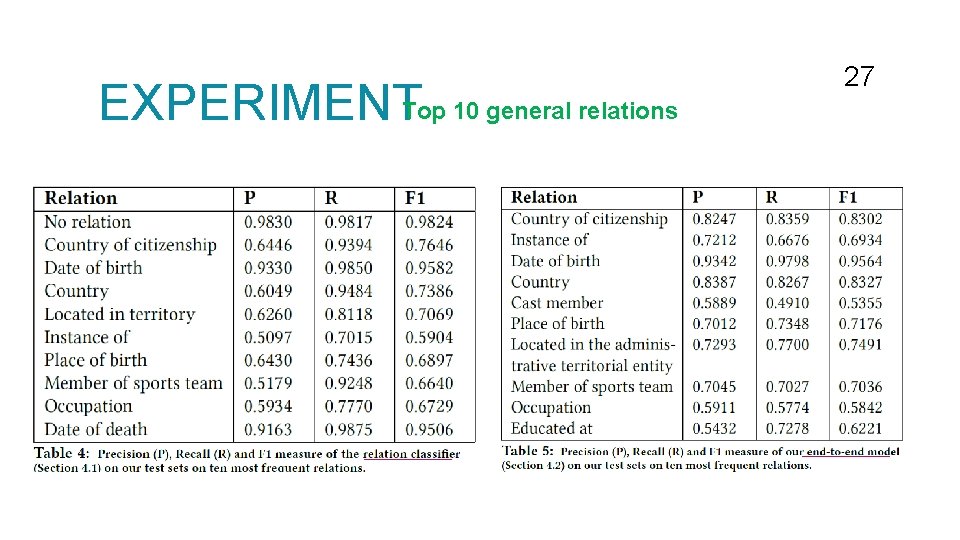

EXPERIMENT Top 10 general relations

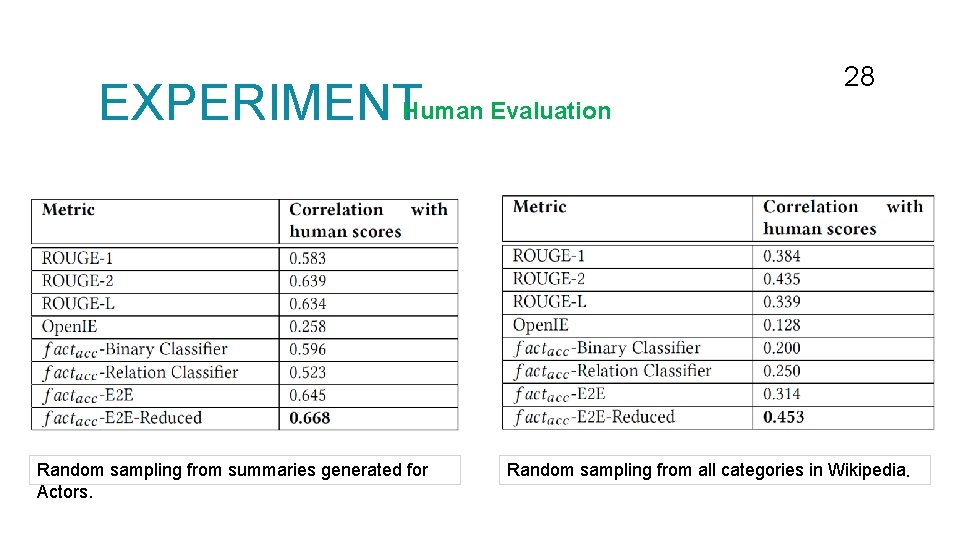

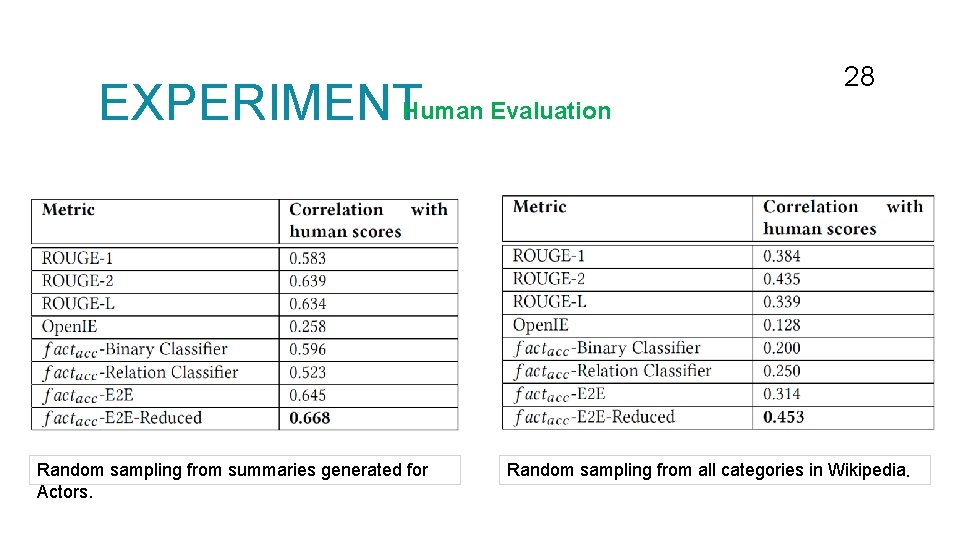

EXPERIMENT Human Evaluation

- Random sampling from summaries generated for Actors.
- Random sampling from all categories in Wikipedia.

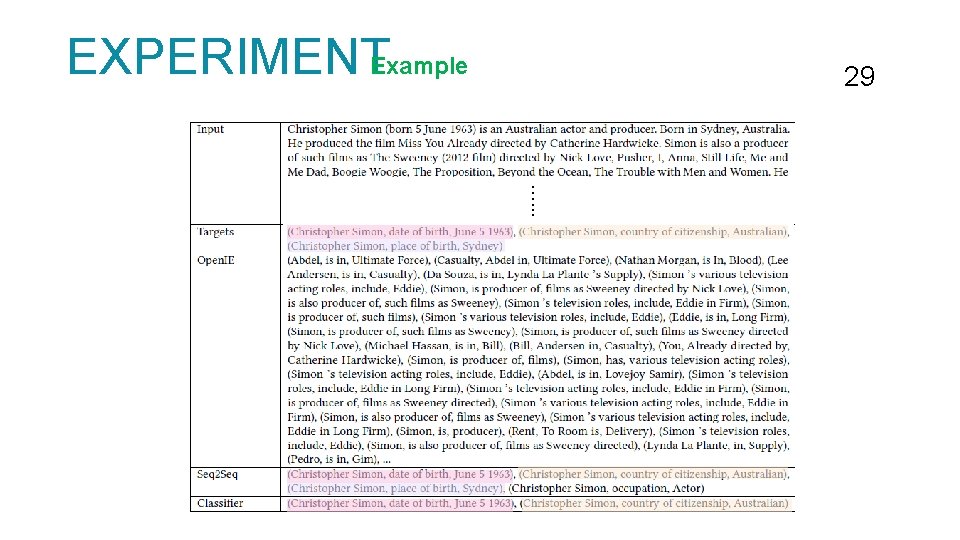

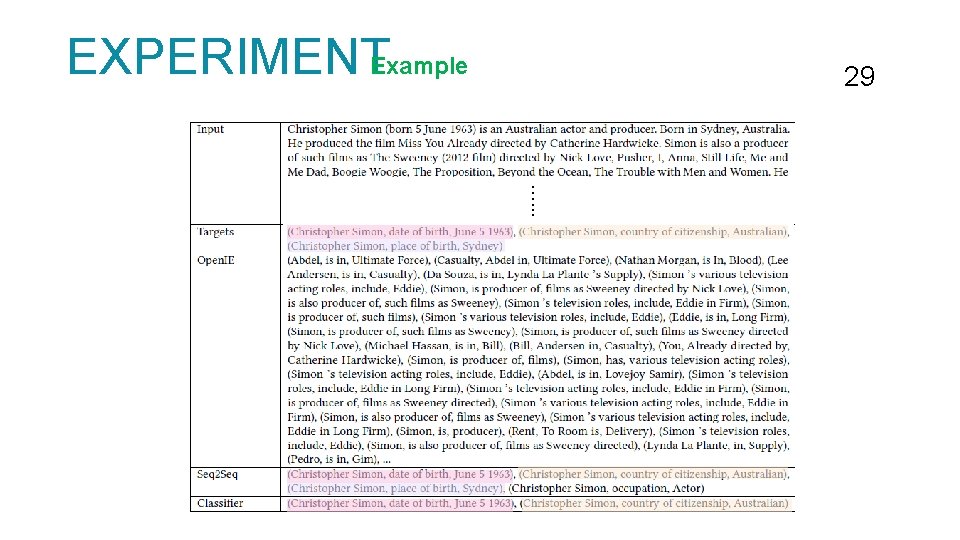

EXPERIMENT Example …

CONCLUSION

- Limitation:
  - Our models are biased toward sentences structured in the neutral tone set by Wikipedia.
- Discussion:
  - Our proposed metric is able to indicate the factual accuracy of generated text, and agrees with human judgment.
  - Classifiers and end-to-end models with straightforward architectures are able to perform competitive fact extraction.